"Time": models, code, and papers

Feature Shift Detection: Localizing Which Features Have Shifted via Conditional Distribution Tests

Jul 14, 2021

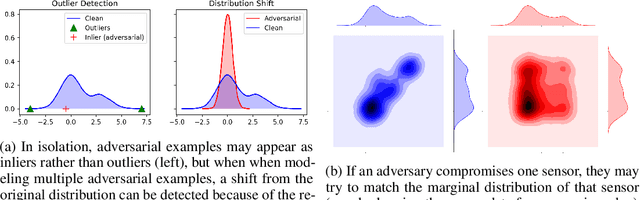

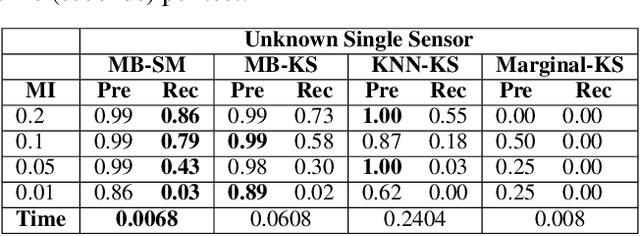

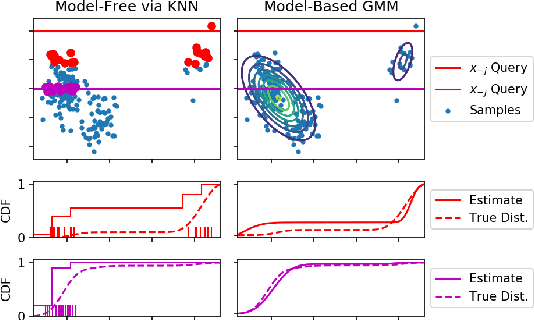

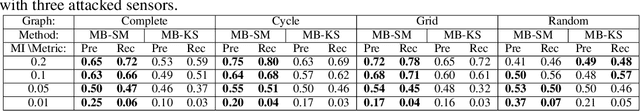

While previous distribution shift detection approaches can identify if a shift has occurred, these approaches cannot localize which specific features have caused a distribution shift -- a critical step in diagnosing or fixing any underlying issue. For example, in military sensor networks, users will want to detect when one or more of the sensors has been compromised, and critically, they will want to know which specific sensors might be compromised. Thus, we first define a formalization of this problem as multiple conditional distribution hypothesis tests and propose both non-parametric and parametric statistical tests. For both efficiency and flexibility, we then propose to use a test statistic based on the density model score function (i.e. gradient with respect to the input) -- which can easily compute test statistics for all dimensions in a single forward and backward pass. Any density model could be used for computing the necessary statistics including deep density models such as normalizing flows or autoregressive models. We additionally develop methods for identifying when and where a shift occurs in multivariate time-series data and show results for multiple scenarios using realistic attack models on both simulated and real world data.

* NeurIPS 2020 Camera Ready
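
As a rough illustration of the score-based statistic mentioned in the abstract above, the sketch below fits a bivariate Gaussian to a reference sample and to a query sample, then compares the two score functions (gradients of the log-density with respect to the input) feature by feature. The Gaussian density model, the pooled averaging, and all function names are assumptions made for this example; the paper's actual statistic and models may differ. Because the two features are correlated here, the unshifted feature also receives a nonzero value, only a smaller one.

```python
import random

def fit_gaussian_2d(samples):
    """Estimate the mean and the precision (inverse covariance) of 2-D data."""
    n = len(samples)
    m0 = sum(p[0] for p in samples) / n
    m1 = sum(p[1] for p in samples) / n
    s00 = sum((p[0] - m0) ** 2 for p in samples) / n
    s11 = sum((p[1] - m1) ** 2 for p in samples) / n
    s01 = sum((p[0] - m0) * (p[1] - m1) for p in samples) / n
    det = s00 * s11 - s01 * s01
    precision = ((s11 / det, -s01 / det), (-s01 / det, s00 / det))
    return (m0, m1), precision

def score(point, mean, precision):
    """Gradient of the Gaussian log-density w.r.t. the input: -P (x - mu)."""
    d0, d1 = point[0] - mean[0], point[1] - mean[1]
    return (-(precision[0][0] * d0 + precision[0][1] * d1),
            -(precision[1][0] * d0 + precision[1][1] * d1))

def feature_shift_stats(reference, query):
    """Per-feature mean squared difference between the two fitted scores."""
    mean_p, prec_p = fit_gaussian_2d(reference)
    mean_q, prec_q = fit_gaussian_2d(query)
    pooled = reference + query
    totals = [0.0, 0.0]
    for point in pooled:
        sp = score(point, mean_p, prec_p)
        sq = score(point, mean_q, prec_q)
        for j in range(2):
            totals[j] += (sp[j] - sq[j]) ** 2
    return [t / len(pooled) for t in totals]

def sample_correlated(n, rng, shift0=0.0, rho=0.5):
    """Draw correlated pairs; shift0 moves only feature 0 (hypothetical attack)."""
    out = []
    for _ in range(n):
        z0, z1 = rng.gauss(0, 1), rng.gauss(0, 1)
        out.append((z0 + shift0, rho * z0 + (1 - rho ** 2) ** 0.5 * z1))
    return out

if __name__ == "__main__":
    rng = random.Random(0)
    reference = sample_correlated(400, rng)
    query = sample_correlated(400, rng, shift0=2.0)
    print(feature_shift_stats(reference, query))
```

With a deep density model, the two `score` calls would be replaced by a backward pass through the model, which is what lets all feature statistics be computed at once.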

H3D-Net: Few-Shot High-Fidelity 3D Head Reconstruction

Jul 26, 2021

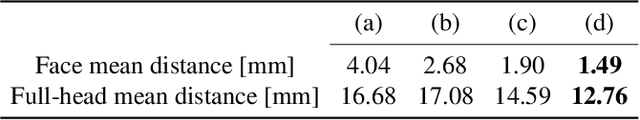

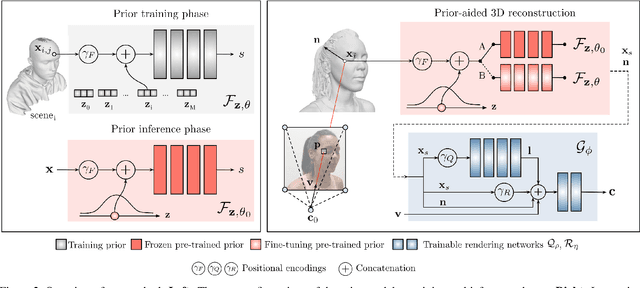

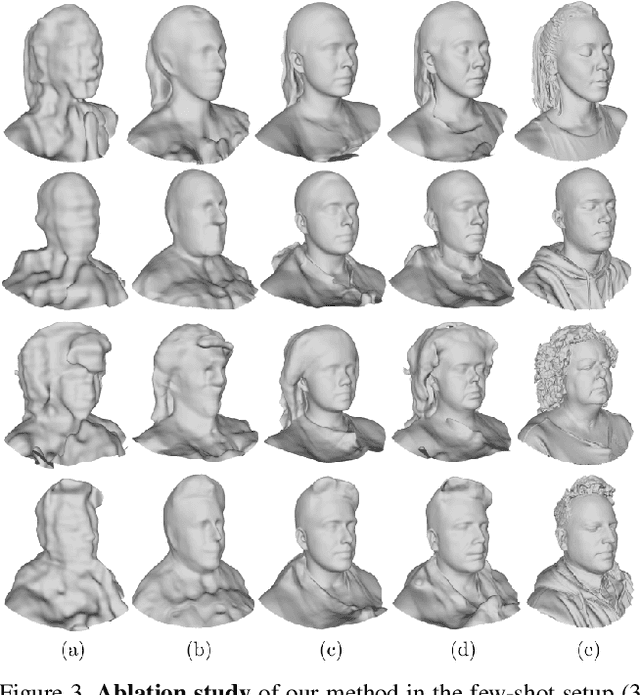

Recent learning approaches that implicitly represent surface geometry using coordinate-based neural representations have shown impressive results in the problem of multi-view 3D reconstruction. The effectiveness of these techniques is, however, subject to the availability of a large number (several tens) of input views of the scene, and computationally demanding optimizations. In this paper, we tackle these limitations for the specific problem of few-shot full 3D head reconstruction, by endowing coordinate-based representations with a probabilistic shape prior that enables faster convergence and better generalization when using few input images (down to three). First, we learn a shape model of 3D heads from thousands of incomplete raw scans using implicit representations. At test time, we jointly overfit two coordinate-based neural networks to the scene, one modeling the geometry and another estimating the surface radiance, using implicit differentiable rendering. We devise a two-stage optimization strategy in which the learned prior is used to initialize and constrain the geometry during an initial optimization phase. Then, the prior is unfrozen and fine-tuned to the scene. By doing this, we achieve high-fidelity head reconstructions, including hair and shoulders, and with a high level of detail that consistently outperforms both state-of-the-art 3D Morphable Models methods in the few-shot scenario, and non-parametric methods when large sets of views are available.

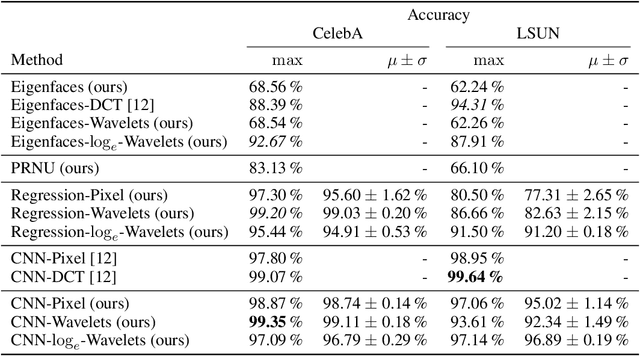

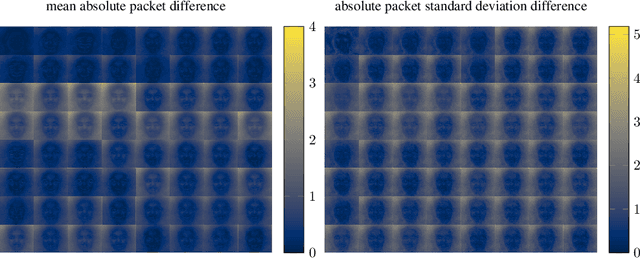

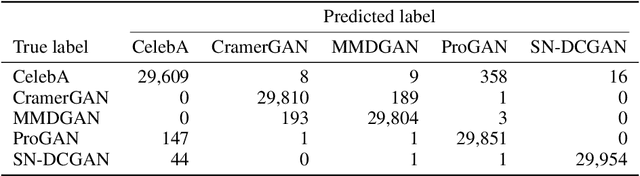

Wavelet-Packet Powered Deepfake Image Detection

Jun 17, 2021

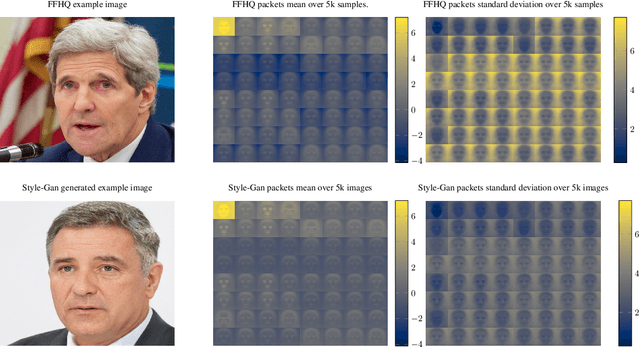

As neural networks become more able to generate realistic artificial images, they have the potential to improve movies, music, video games and make the internet an even more creative and inspiring place. Yet, at the same time, the latest technology potentially enables new digital ways to lie. In response, the need for a diverse and reliable toolbox arises to identify artificial images and other content. Previous work primarily relies on pixel-space CNNs or the Fourier transform. To the best of our knowledge, wavelet-based GAN analysis and detection methods have been absent thus far. This paper aims to fill this gap and describes a wavelet-based approach to GAN-generated image analysis and detection. We evaluate our method on FFHQ, CelebA, and LSUN source identification problems and find improved or competitive performance.
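
To make the term "wavelet packet" in the abstract above concrete, here is a minimal one-dimensional sketch using the Haar wavelet. Unlike the ordinary wavelet transform, which only keeps splitting the low-pass branch, a packet transform splits both the approximation and the detail branch at every level. The choice of the Haar wavelet, the 1-D signal, and the function names are assumptions for illustration; the paper works with images and may use other wavelets.

```python
import math

def haar_step(signal):
    """One Haar analysis step: pairwise scaled sums (low-pass) and differences (high-pass)."""
    s = 1.0 / math.sqrt(2.0)
    approx = [(signal[i] + signal[i + 1]) * s for i in range(0, len(signal), 2)]
    detail = [(signal[i] - signal[i + 1]) * s for i in range(0, len(signal), 2)]
    return approx, detail

def wavelet_packet(signal, levels):
    """Split every node at every level; returns 2**levels leaf bands in natural order.

    The signal length must be divisible by 2**levels.
    """
    nodes = [list(signal)]
    for _ in range(levels):
        next_nodes = []
        for node in nodes:
            approx, detail = haar_step(node)
            next_nodes.extend([approx, detail])
        nodes = next_nodes
    return nodes

def band_energies(signal, levels):
    """Energy (sum of squared coefficients) per leaf band, a simple feature vector."""
    return [sum(c * c for c in node) for node in wavelet_packet(signal, levels)]

if __name__ == "__main__":
    print(band_energies([1, 2, 3, 4, 5, 6, 7, 8], 2))
```

Because the Haar step is orthonormal, the band energies sum to the energy of the input signal, so they can be read as a breakdown of that energy across the packet bands.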

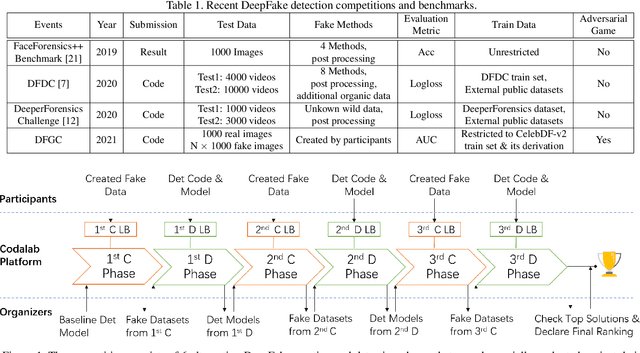

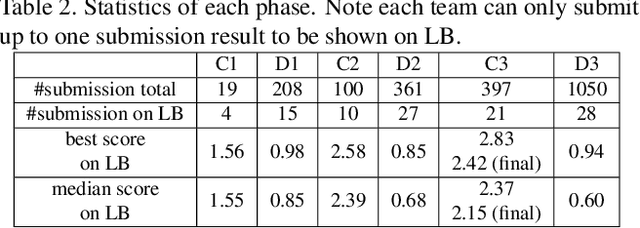

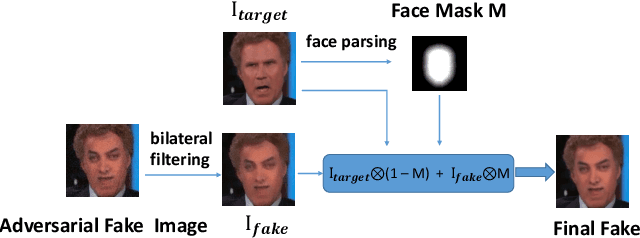

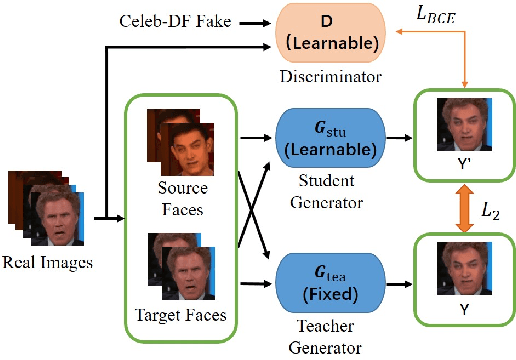

DFGC 2021: A DeepFake Game Competition

Jun 02, 2021

This paper presents a summary of the DFGC 2021 competition. DeepFake technology is developing fast, and realistic face-swaps are increasingly deceptive and hard to detect. At the same time, DeepFake detection methods are also improving. There is a two-party game between DeepFake creators and detectors. This competition provides a common platform for benchmarking the adversarial game between current state-of-the-art DeepFake creation and detection methods. In this paper, we present the organization, results and top solutions of this competition and also share our insights obtained during this event. We also release the DFGC-21 testing dataset collected from our participants to further benefit the research community.

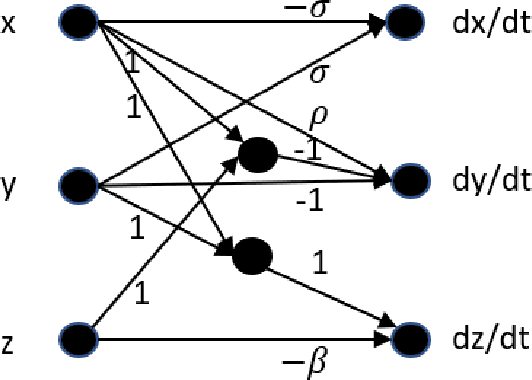

Learn Like The Pro: Norms from Theory to Size Neural Computation

Jun 21, 2021

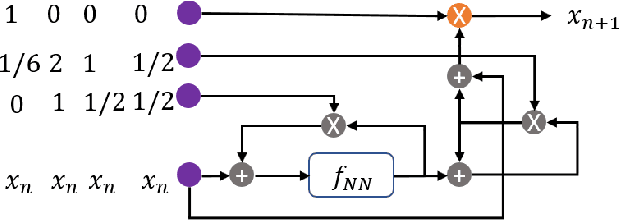

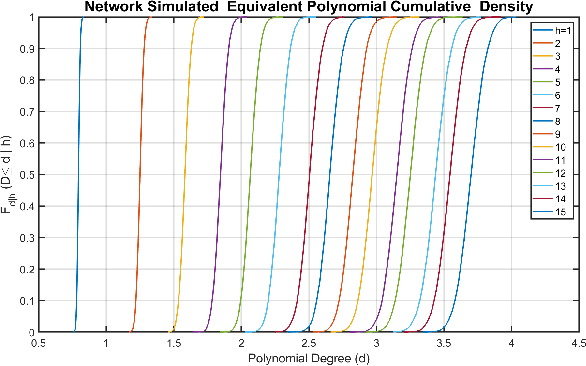

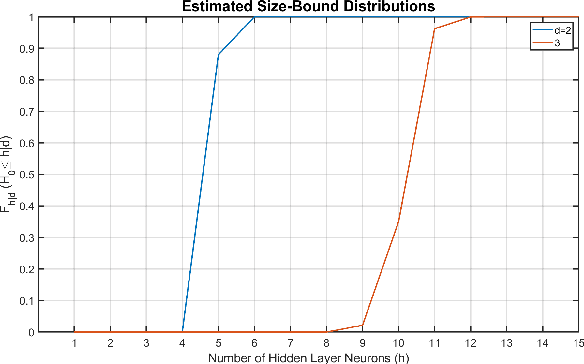

The optimal design of neural networks is a critical problem in many applications. Here, we investigate how dynamical systems with polynomial nonlinearities can inform the design of neural systems that seek to emulate them. We propose a Learnability metric and its associated features to quantify the near-equilibrium behavior of learning dynamics. Equating the Learnability of neural systems with an equivalent parameter estimation metric of the reference system establishes bounds on network structure. In this way, norms from theory provide a good first guess for neural structure, which may then further adapt with data. The proposed approach requires neither training nor training data. It reveals exact sizing for a class of neural networks with multiplicative nodes that mimic continuous- or discrete-time polynomial dynamics. It also provides relatively tight lower size bounds for classical feed-forward networks that are consistent with simulated assessments.

TopicTracker: A Platform for Topic Trajectory Identification and Visualisation

Mar 02, 2021

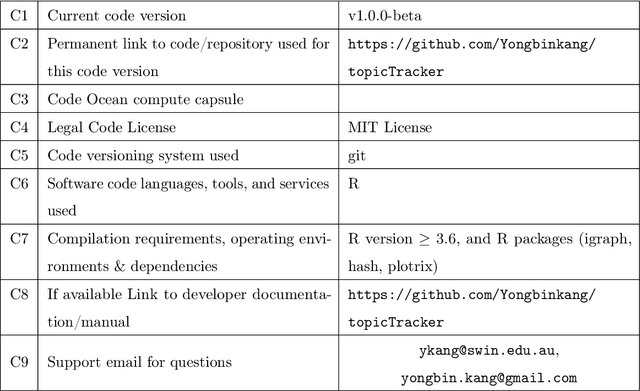

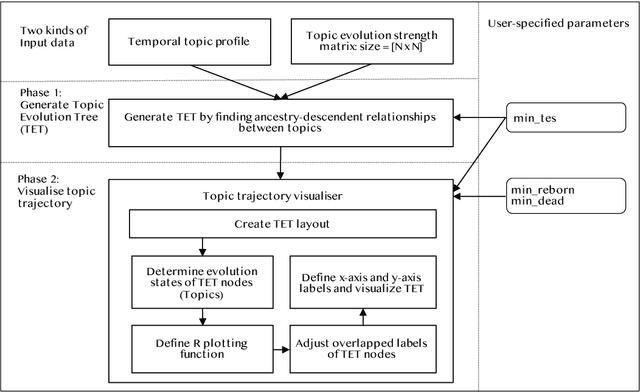

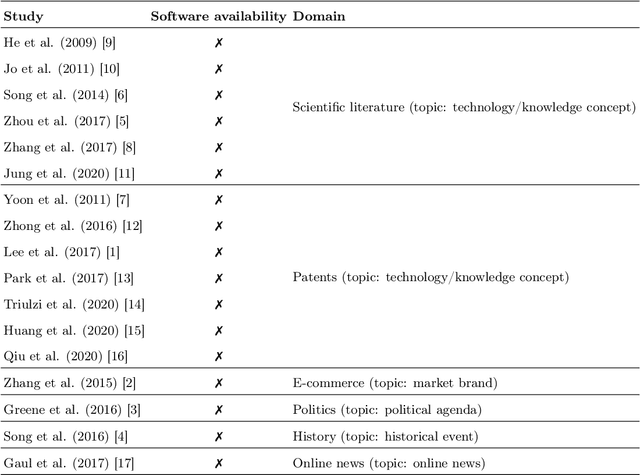

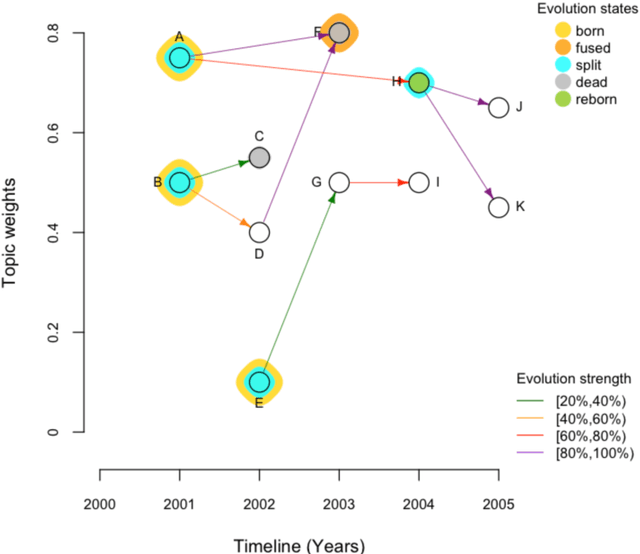

Topic trajectory information provides crucial insight into the dynamics of topics and their evolutionary relationships over a given time. This information can also improve our understanding of how new topics have emerged or formed through sequential or interrelated events of emergence, modification and integration of prior topics. Nevertheless, implementations of the existing methods for topic trajectory identification are rarely available as usable software. In this paper, we present TopicTracker, a platform for topic trajectory identification and visualisation. Given two kinds of input, a time-stamped topic profile consisting of the set of underlying topics over time and the evolution strength matrix among them, TopicTracker can represent three facets of information together: evolutionary pathways of dynamic topics, evolution states of the topics, and topic importance. TopicTracker is publicly available software implemented in R.

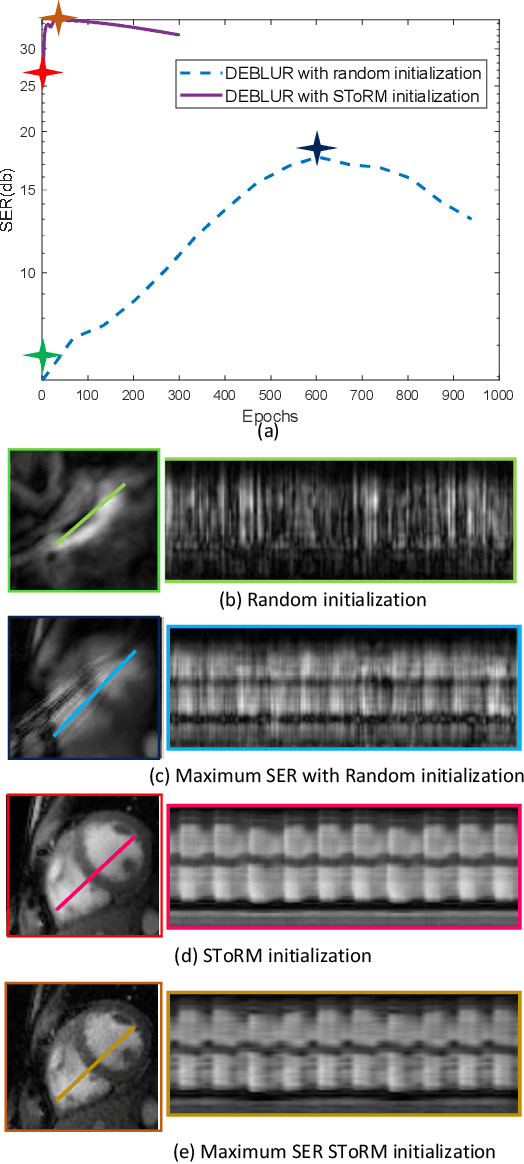

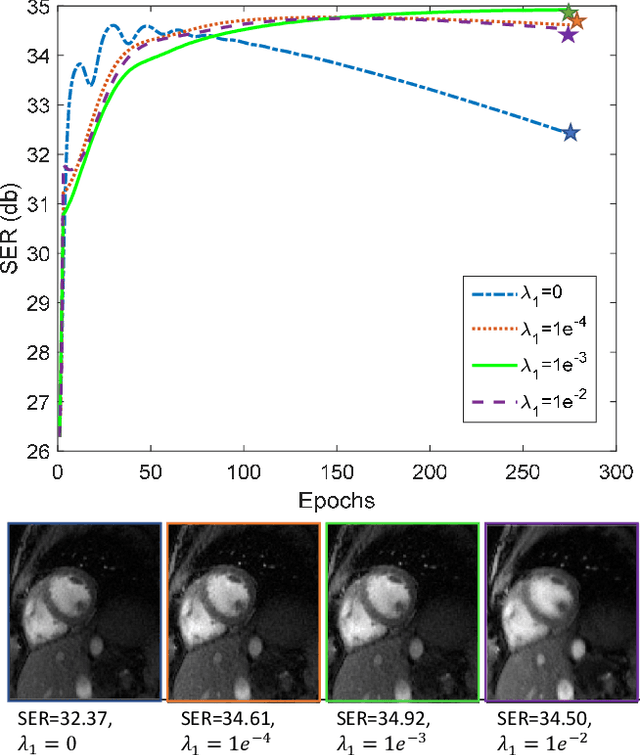

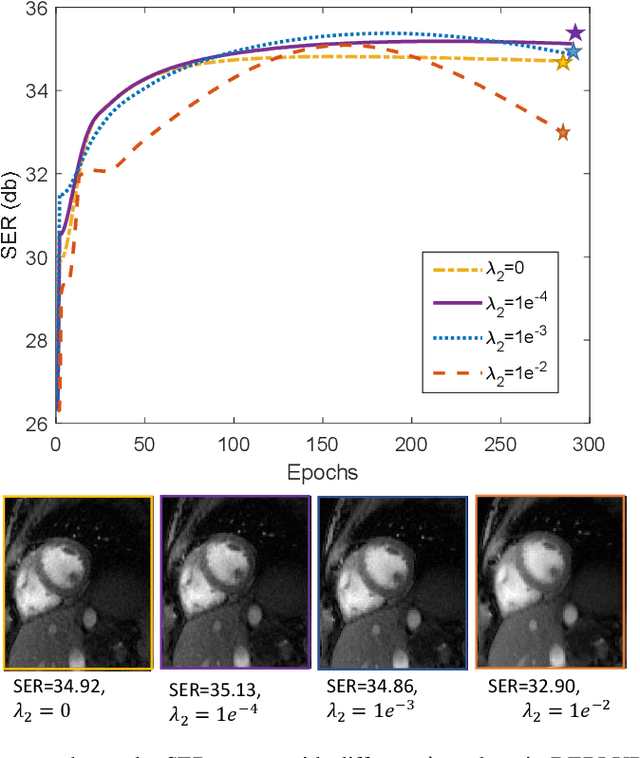

Dynamic Imaging using Deep Bi-linear Unsupervised Regularization (DEBLUR)

Jun 30, 2021

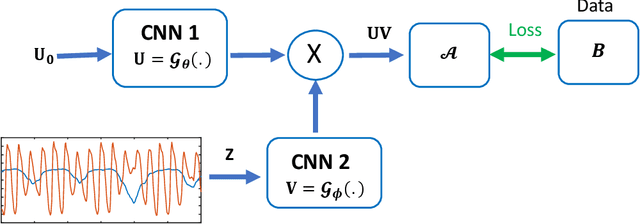

Bilinear models that decompose dynamic data into spatial and temporal factors are powerful and memory-efficient tools for the recovery of dynamic MRI data. These methods rely on sparsity and energy compaction priors on the factors to regularize the recovery. The quality of the recovered images depends on the specific priors. Motivated by deep image prior, we introduce a novel bilinear model whose factors are represented using convolutional neural networks (CNNs). The CNN parameters are learned from the undersampled data of the same subject. To reduce the run time and to improve performance, we initialize the CNN parameters. We use sparsity regularization of the network parameters to minimize the overfitting of the network to measurement noise. Our experiments on free breathing and ungated cardiac cine data acquired using a navigated golden-angle gradient-echo radial sequence show the ability of our method to provide reduced spatial blurring as compared to low-rank and SToRM reconstructions.

Finite Time Adaptive Stabilization of LQ Systems

Jul 22, 2018

Stabilization of linear systems with unknown dynamics is a canonical problem in adaptive control. Since the lack of knowledge of system parameters can cause it to become destabilized, an adaptive stabilization procedure is needed prior to regulation. Therefore, the adaptive stabilization needs to be completed in finite time. In order to achieve this goal, asymptotic approaches are not very helpful. There are only a few existing non-asymptotic results and a full treatment of the problem is not currently available. In this work, leveraging the novel method of random linear feedbacks, we establish high probability guarantees for finite time stabilization. Our results hold for remarkably general settings because we carefully choose a minimal set of assumptions. These include stabilizability of the underlying system and restricting the degree of heaviness of the noise distribution. To derive our results, we also introduce a number of new concepts and technical tools to address regularity and instability of the closed-loop matrix.

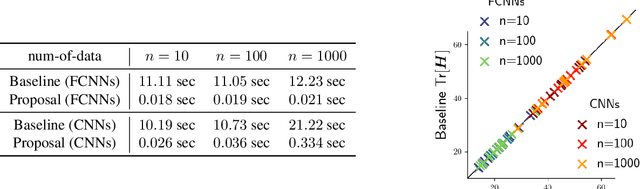

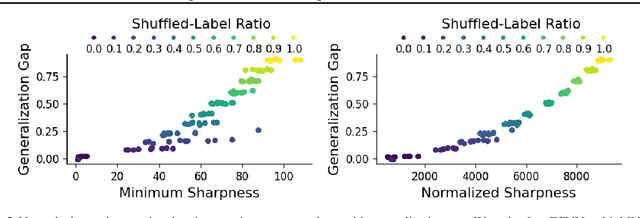

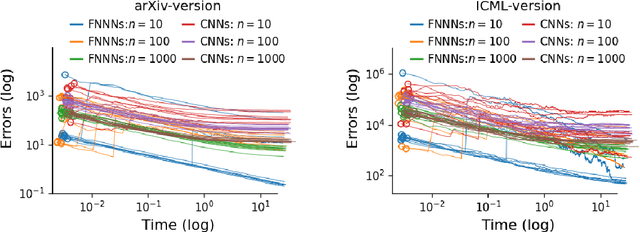

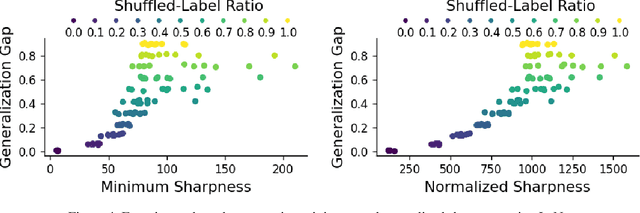

Minimum sharpness: Scale-invariant parameter-robustness of neural networks

Jun 26, 2021

Toward achieving robust and defensive neural networks, robustness against perturbations of the weight parameters, i.e., sharpness, has attracted attention in recent years (Sun et al., 2020). However, sharpness is known to suffer from a critical issue, "scale-sensitivity." In this paper, we propose a novel sharpness measure, Minimum Sharpness. It is known that NNs admit a specific scale transformation that forms equivalence classes whose functional properties are completely identical while their sharpness can change without bound. We define our sharpness through a minimization problem over the equivalent NNs, making it invariant to the scale transformation. We also develop an efficient and exact technique to make the sharpness tractable, which reduces the heavy computational costs involved with the Hessian. In our experiments, we observed that our sharpness has a valid correlation with the generalization of NNs and runs with less computational cost than existing sharpness measures.
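
The scale-sensitivity issue described in the abstract above can be seen in a toy model with two scalar weights, f(x) = w2 * relu(w1 * x), and squared loss. Multiplying w1 by a positive factor alpha and dividing w2 by alpha leaves the function unchanged but changes the Hessian trace, whereas the minimum of that trace over all such rescalings does not change. The use of the Hessian trace as the sharpness proxy, the two-weight model, and all names below are assumptions for illustration and are not the paper's definition.

```python
def relu(z):
    return z if z > 0 else 0.0

def forward(w1, w2, x):
    """Toy two-weight ReLU network: f(x) = w2 * relu(w1 * x)."""
    return w2 * relu(w1 * x)

def rescale(w1, w2, alpha):
    """Function-preserving scale transformation for alpha > 0."""
    return w1 * alpha, w2 / alpha

def hessian_trace(w1, w2, x):
    """Trace of the Hessian of (f(x) - y)**2 w.r.t. (w1, w2), valid when w1 * x > 0.

    d2L/dw1^2 = 2 * (w2 * x)**2 and d2L/dw2^2 = 2 * (w1 * x)**2, independent of y.
    """
    return 2.0 * x * x * (w1 * w1 + w2 * w2)

def min_trace_over_rescaling(w1, w2, x):
    """Minimum of hessian_trace over all alpha > 0.

    By the AM-GM inequality, alpha**2 * w1**2 + w2**2 / alpha**2 >= 2 * |w1 * w2|,
    with equality at alpha**2 = |w2 / w1|.
    """
    return 4.0 * x * x * abs(w1 * w2)

if __name__ == "__main__":
    w1, w2, x = 0.5, 4.0, 1.5
    v1, v2 = rescale(w1, w2, 3.0)
    print(forward(w1, w2, x), forward(v1, v2, x))        # same function value
    print(hessian_trace(w1, w2, x), hessian_trace(v1, v2, x))  # different traces
    print(min_trace_over_rescaling(w1, w2, x), min_trace_over_rescaling(v1, v2, x))
```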

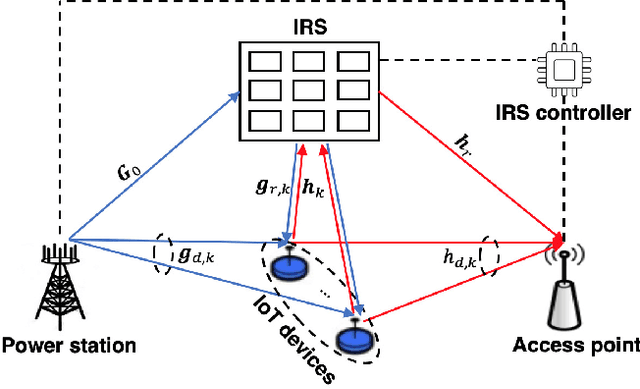

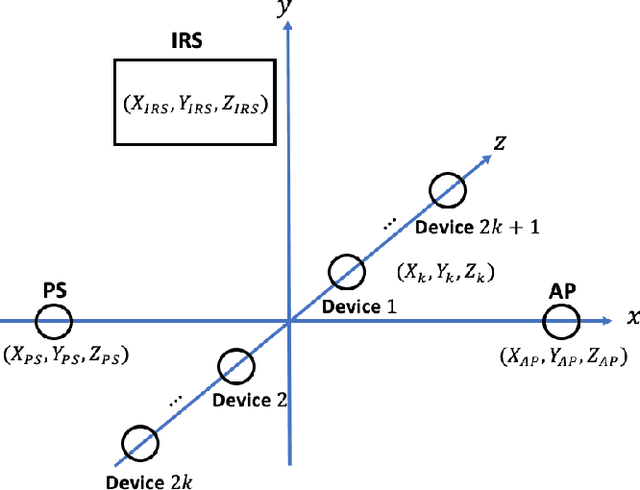

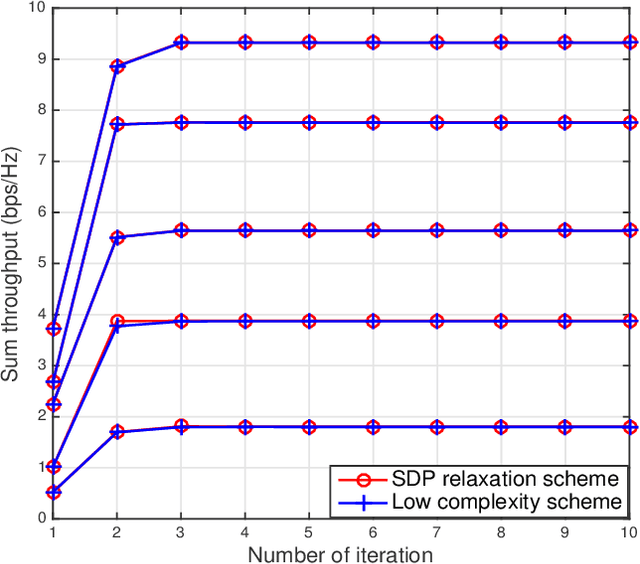

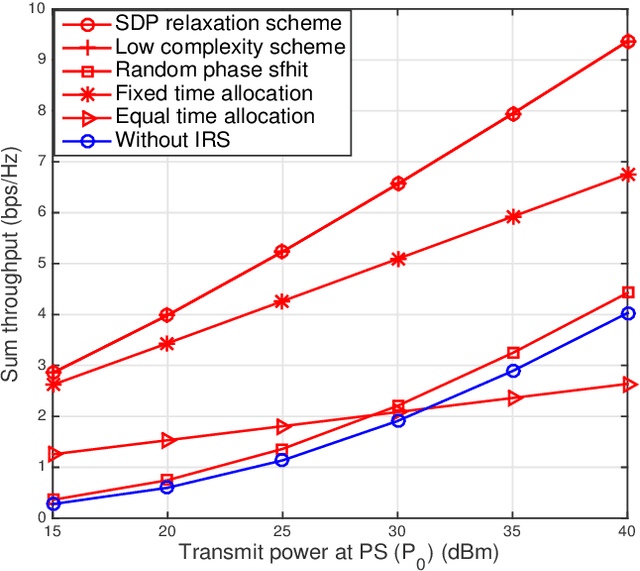

A Unified Framework for IRS Enabled Wireless Powered Sensor Networks

Mar 19, 2021

This paper unveils the importance of intelligent reflecting surface (IRS) in a wireless powered sensor network (WPSN). Specifically, a multi-antenna power station (PS) employs energy beamforming to provide wireless charging for multiple Internet of Things (IoT) devices, which utilize the harvested energy to deliver their own messages to an access point (AP). Meanwhile, an IRS is deployed to enhance the performance of wireless energy transfer (WET) and wireless information transfer (WIT) by intelligently adjusting the phase shift of each reflecting element. To evaluate the performance of this IRS assisted WPSN, we maximize its system sum throughput by jointly optimizing the energy beamforming of the PS, the transmission time allocation, and the phase shifts of the WET and WIT phases. The formulated problem is not jointly convex due to the multiple coupled variables. To deal with its non-convexity, we first independently find the phase shifts of the WIT phase in closed-form. We further propose an alternating optimization (AO) algorithm to iteratively solve the sum throughput maximization problem. To be specific, a semidefinite programming (SDP) relaxation approach is adopted to design the energy beamforming and the time allocation for given phase shifts of the WET phase, which are then optimized for given energy beamforming and time allocation. Moreover, we propose a low-complexity AO scheme to significantly reduce the computational complexity incurred by the SDP relaxation, where the optimal closed-form energy beamforming, time allocation, and phase shifts of the WET phase are derived. Finally, numerical results are presented to validate the effectiveness of the proposed algorithm, and highlight the beneficial role of the IRS in comparison to the benchmark schemes.
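
The abstract above does not state its closed-form phase shifts, so the following is only a sketch of the standard phase-alignment idea for a single-antenna link: each reflecting element rotates its cascaded path so that it adds coherently with the direct path. The scalar channel model, the example coefficients, and the function names are assumptions for illustration, not the paper's derivation.

```python
import cmath

def aligned_phases(direct, cascaded):
    """Phase shift per IRS element that aligns each reflected path with the direct path.

    direct:   complex coefficient of the direct link.
    cascaded: per-element complex coefficients of the reflected link
              (product of the channel into and out of each element).
    """
    target = cmath.phase(direct)
    return [target - cmath.phase(c) for c in cascaded]

def effective_channel(direct, cascaded, phases):
    """Direct path plus the phase-shifted reflected paths."""
    return direct + sum(c * cmath.exp(1j * p) for c, p in zip(cascaded, phases))

if __name__ == "__main__":
    direct = 0.3 + 0.4j
    cascaded = [0.1 - 0.2j, -0.3 + 0.1j, 0.2 + 0.2j]
    best = effective_channel(direct, cascaded, aligned_phases(direct, cascaded))
    unaligned = effective_channel(direct, cascaded, [0.0] * len(cascaded))
    print(abs(best), abs(unaligned))
```

With aligned phases the magnitude of the effective channel equals the sum of the individual path magnitudes, which by the triangle inequality is the largest value any choice of phases can reach in this scalar model.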
