"Time": models, code, and papers

Scheduling Aerial Vehicles in an Urban Air Mobility Scheme

Aug 03, 2021

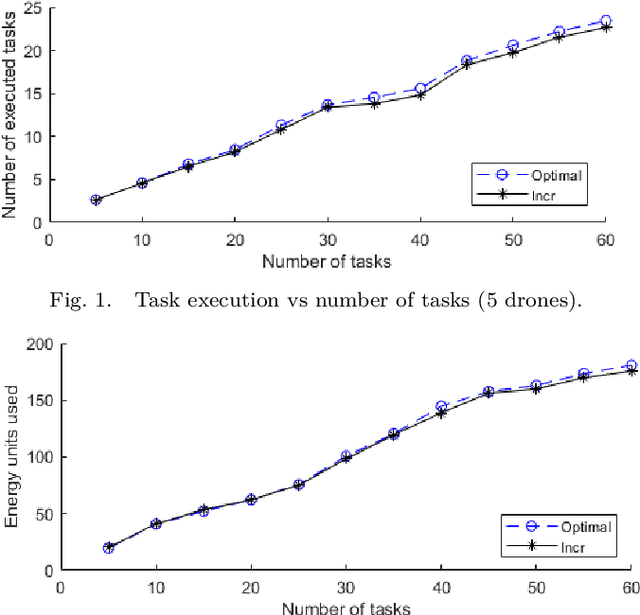

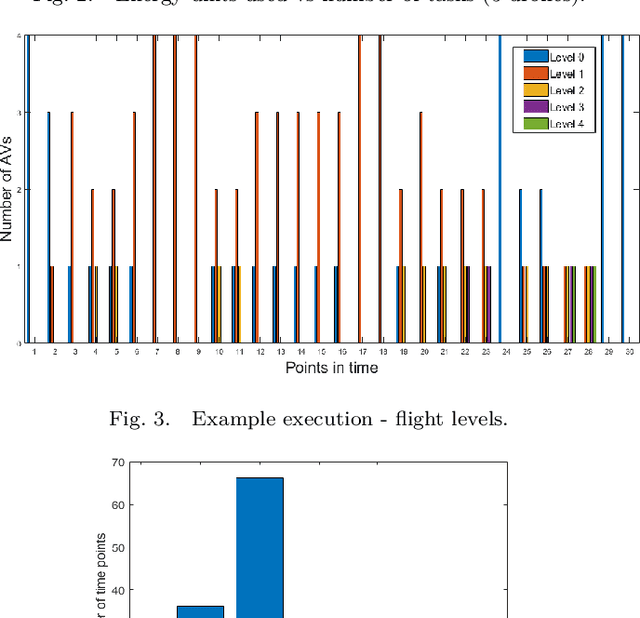

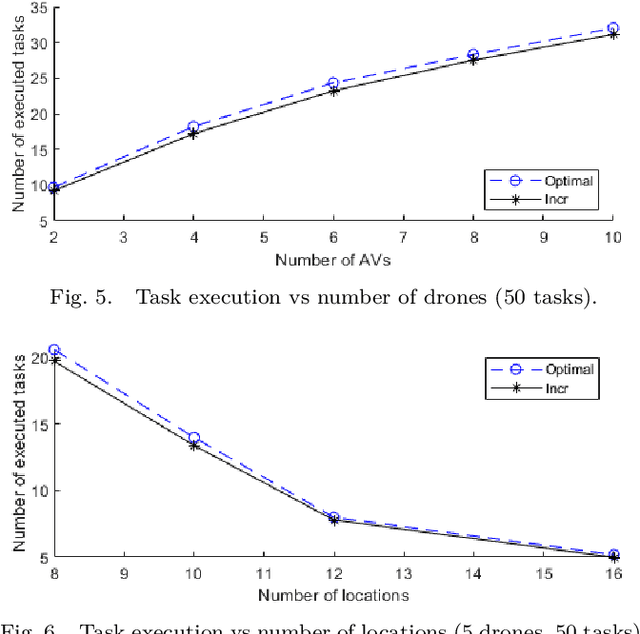

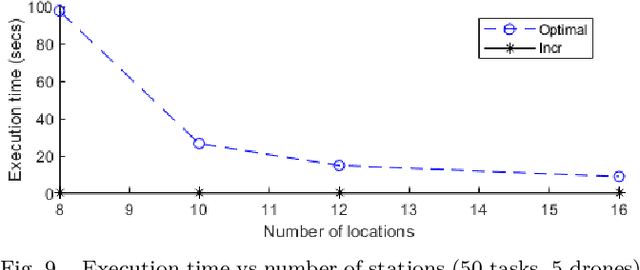

Highly populated cities face several challenges, one of them being intense traffic congestion. In recent years, the concept of Urban Air Mobility has been put forward by large companies and organizations as a way to address this problem, and this approach has been rapidly gaining ground. This disruptive technology involves aerial vehicles (AVs) for hire that can be utilized by customers to travel between locations within large cities. This concept has the potential to drastically decrease traffic congestion and reduce air pollution, since these vehicles typically use electric motors powered by batteries. This work studies the problem of scheduling the assignment of AVs to customers, with the goal of maximizing the number of serviced customers and minimizing the energy consumption of the AVs by forcing them to fly at the lowest possible altitude. Initially, an Integer Linear Program (ILP) formulation is presented that is solved offline and optimally, followed by a near-optimal algorithm that solves the problem incrementally, one AV at a time, to address scalability issues, allowing scheduling in problems involving large numbers of locations, AVs, and customer requests.

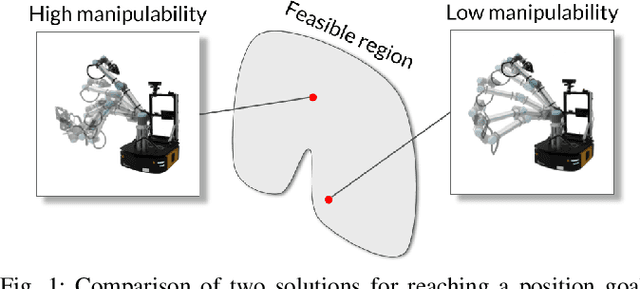

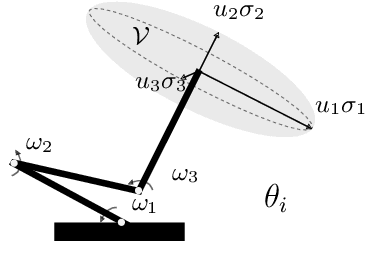

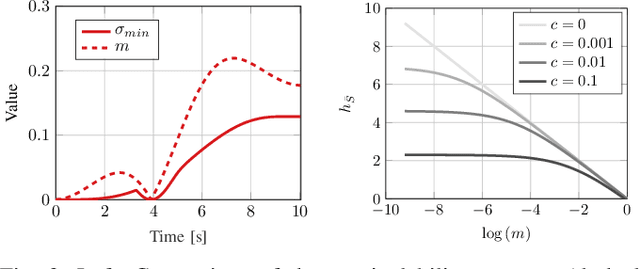

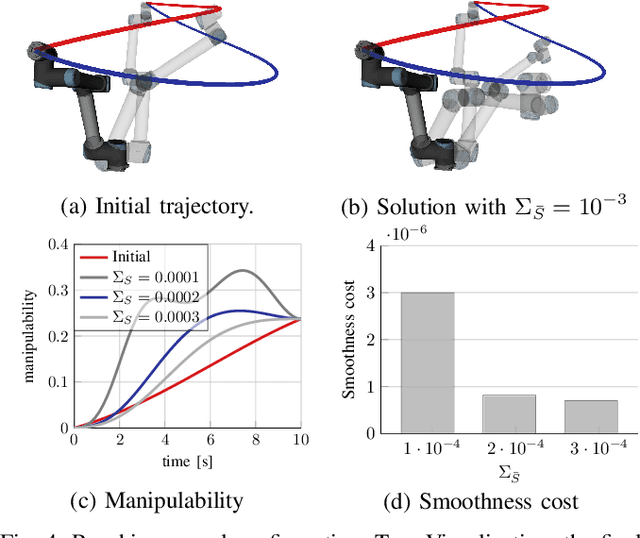

Fast Manipulability Maximization Using Continuous-Time Trajectory Optimization

Aug 08, 2019

A significant challenge in manipulation motion planning is to ensure agility in the face of unpredictable changes during task execution. This requires the identification and possible modification of suitable joint-space trajectories, since the joint velocities required to achieve a specific end-effector motion vary with manipulator configuration. For a given manipulator configuration, the joint space-to-task space velocity mapping is characterized by a quantity known as the manipulability index. In contrast to previous control-based approaches, we examine the maximization of manipulability during planning as a way of achieving adaptable and safe joint space-to-task space motion mappings in various scenarios. By representing the manipulator trajectory as a continuous-time Gaussian process (GP), we are able to leverage recent advances in trajectory optimization to maximize the manipulability index during trajectory generation. Moreover, the sparsity of our chosen representation reduces the typically large computational cost associated with maximizing manipulability when additional constraints exist. Results from simulation studies and experiments with a real manipulator demonstrate increases in manipulability, while maintaining smooth trajectories with more dexterous (and therefore more agile) arm configurations.
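For reference, the manipulability index is commonly defined (following Yoshikawa) as $w = \sqrt{\det(J J^\top)}$, where $J$ is the manipulator Jacobian. The abstract does not state which definition the authors use, so the sketch below assumes this one and evaluates it for a hypothetical planar two-link arm. The function names and the unit link lengths are illustrative and are not taken from the paper.

```python
import math


def planar_2link_jacobian(q1, q2, l1=1.0, l2=1.0):
    """Position Jacobian (2x2) of a hypothetical planar two-link arm."""
    s1, c1 = math.sin(q1), math.cos(q1)
    s12, c12 = math.sin(q1 + q2), math.cos(q1 + q2)
    return [
        [-l1 * s1 - l2 * s12, -l2 * s12],
        [l1 * c1 + l2 * c12, l2 * c12],
    ]


def manipulability(J):
    """Assumed Yoshikawa measure w = sqrt(det(J J^T)) for a 2xn Jacobian."""
    a = sum(x * x for x in J[0])               # (J J^T)[0][0]
    b = sum(x * y for x, y in zip(J[0], J[1]))  # (J J^T)[0][1]
    d = sum(y * y for y in J[1])               # (J J^T)[1][1]
    det = a * d - b * b
    # Clamp tiny negative values caused by floating-point round-off.
    return math.sqrt(max(det, 0.0))


# Elbow bent at 90 degrees: far from singular, high manipulability.
w_bent = manipulability(planar_2link_jacobian(0.3, math.pi / 2))
# Arm fully stretched: singular configuration, manipulability near zero.
w_straight = manipulability(planar_2link_jacobian(0.3, 0.0))
print(w_bent, w_straight)
```

For this arm the measure reduces to $l_1 l_2 |\sin q_2|$, so it depends only on the elbow angle. A planner that maximizes it would favor bent configurations over the stretched, near-singular one.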

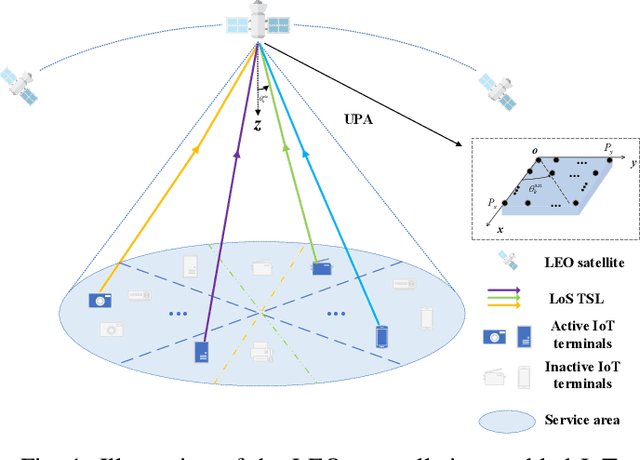

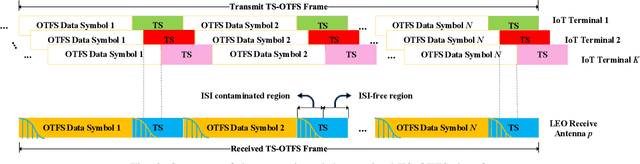

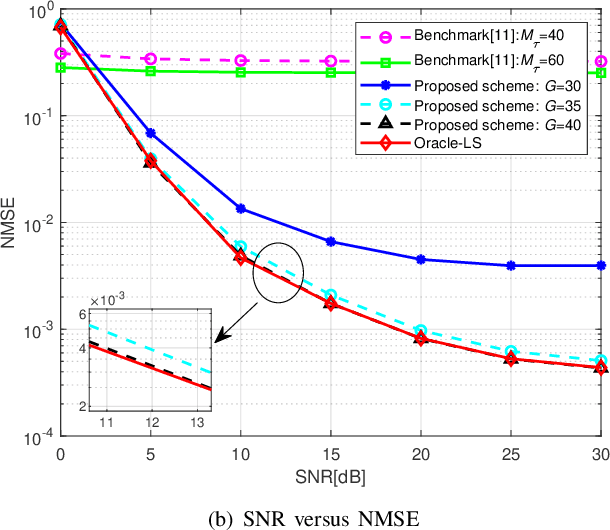

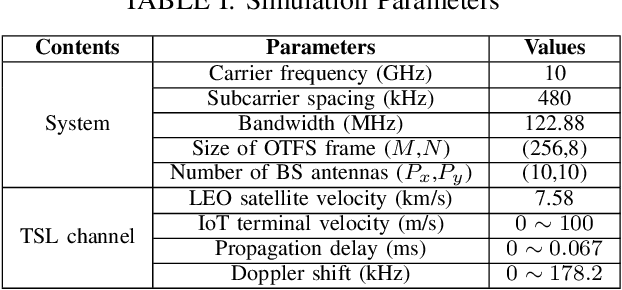

Joint Active User Detection and Channel Estimation for Grant-Free NOMA-OTFS in LEO Constellation Internet-of-Things

Aug 03, 2021

The flourishing low-Earth orbit (LEO) constellation communication network provides a promising solution for seamless coverage services to Internet-of-Things (IoT) terminals. However, confronted with massive connectivity and rapid variation of the terrestrial-satellite link (TSL), the traditional grant-free random-access schemes always fail to match this scenario. In this paper, a new non-orthogonal multiple-access (NOMA) transmission protocol that incorporates orthogonal time frequency space (OTFS) modulation is proposed to solve these problems. Furthermore, we propose a two-stage joint active user detection and channel estimation scheme based on a training-sequence-aided OTFS data frame structure. Specifically, in the first stage, with the aid of training sequences, we perform active user detection and coarse channel estimation by recovering the sparse sampled channel vectors. Then, we develop a parametric approach that yields a more accurate channel estimate from the previously recovered sampled channel vectors, according to the inherent characteristics of the TSL channel. Simulation results demonstrate the superiority of the proposed method in this kind of high-mobility scenario.

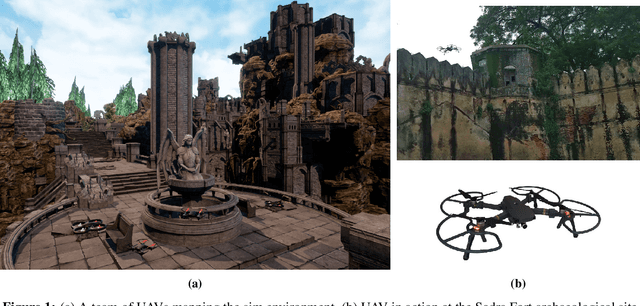

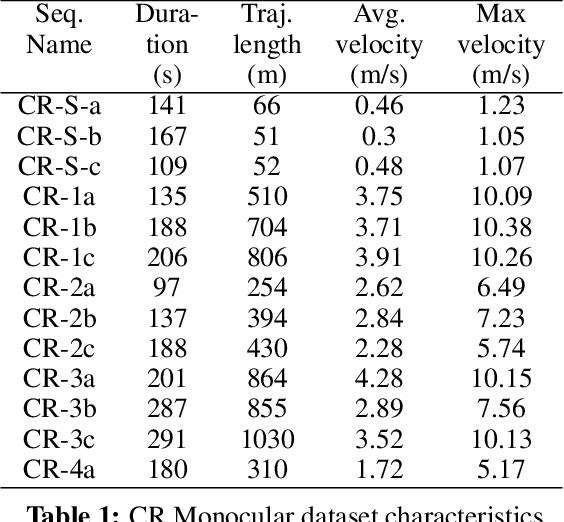

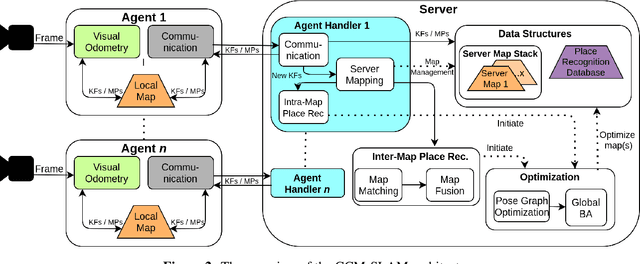

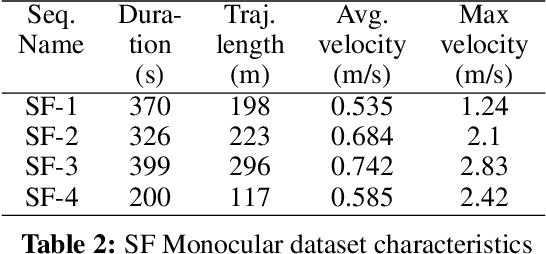

Collaborative Mapping of Archaeological Sites using multiple UAVs

May 17, 2021

UAVs have found an important application in archaeological mapping. The majority of existing methods process the data collected from an archaeological site offline, which is time-consuming and computationally expensive. In this paper, we present a multi-UAV approach for faster mapping of archaeological sites. Employing a team of UAVs not only reduces the mapping time by distributing the coverage area, but also improves the map accuracy through the exchange of information. Through extensive experiments in a realistic simulation (AirSim), we demonstrate the advantages of using a collaborative mapping approach. We then create the first 3D map of the Sadra Fort, a 15th-century fort located in Gujarat, India, using our proposed method. Additionally, we present two novel archaeological datasets, recorded both in simulation and in the real world, to facilitate research on collaborative archaeological mapping. For the benefit of the community, we make the AirSim simulation environment, as well as the datasets, publicly available.

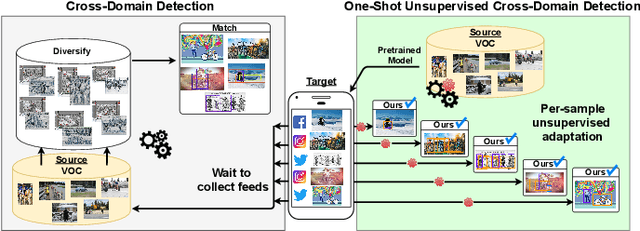

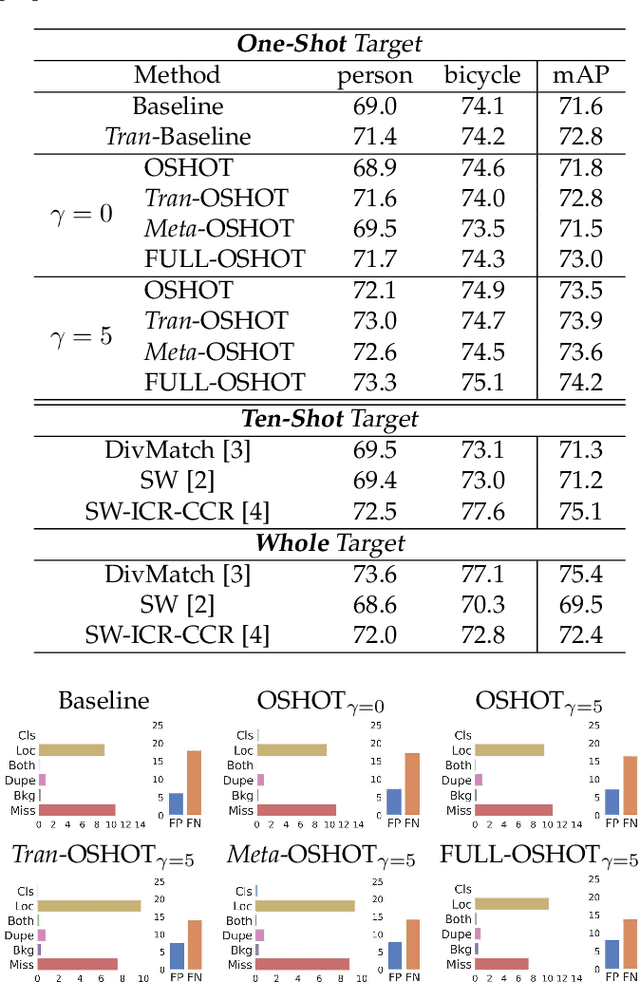

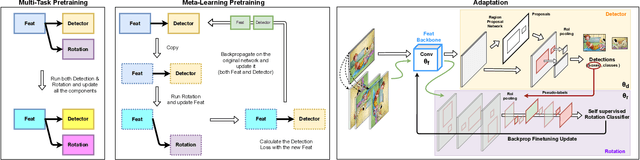

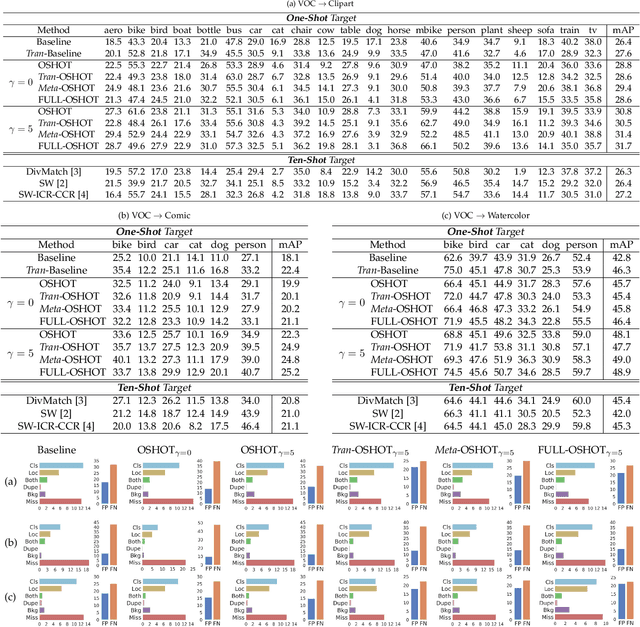

Self-Supervision & Meta-Learning for One-Shot Unsupervised Cross-Domain Detection

Jun 07, 2021

Deep detection models have proven to be extremely powerful in controlled settings, but appear brittle and fail when applied off-the-shelf on unseen domains. All the adaptive approaches developed to amend this issue access a sizable amount of target samples at training time, a strategy not suitable when the target is unknown and its data are not available in advance. Consider for instance the task of monitoring image feeds from social media: as every image is uploaded by a different user, it belongs to a different target domain that is impossible to foresee during training. Our work addresses this setting, presenting an object detection algorithm able to perform unsupervised adaptation across domains by using only one target sample, seen at test time. We introduce a multi-task architecture that one-shot adapts to any incoming sample by iteratively solving a self-supervised task on it. We further exploit meta-learning to simulate single-sample cross-domain learning episodes and better align to the test condition. Moreover, a cross-task pseudo-labeling procedure makes it possible to focus on the image foreground and enhances the adaptation process. A thorough benchmark analysis against the most recent cross-domain detection methods and a detailed ablation study show the advantage of our approach.

Shape As Points: A Differentiable Poisson Solver

Jun 07, 2021

In recent years, neural implicit representations have gained popularity in 3D reconstruction due to their expressiveness and flexibility. However, the implicit nature of neural implicit representations results in slow inference time and requires careful initialization. In this paper, we revisit the classic yet ubiquitous point cloud representation and introduce a differentiable point-to-mesh layer using a differentiable formulation of Poisson Surface Reconstruction (PSR) that allows for a GPU-accelerated fast solution of the indicator function given an oriented point cloud. The differentiable PSR layer allows us to efficiently and differentiably bridge the explicit 3D point representation with the 3D mesh via the implicit indicator field, enabling end-to-end optimization of surface reconstruction metrics such as Chamfer distance. This duality between points and meshes hence allows us to represent shapes as oriented point clouds, which are explicit, lightweight and expressive. Compared to neural implicit representations, our Shape-As-Points (SAP) model is more interpretable and lightweight, and it accelerates inference time by one order of magnitude. Compared to other explicit representations such as points, patches, and meshes, SAP produces topology-agnostic, watertight manifold surfaces. We demonstrate the effectiveness of SAP on the task of surface reconstruction from unoriented point clouds and learning-based reconstruction.
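As a point of reference for the Chamfer distance mentioned above, here is a minimal brute-force sketch in plain Python. Several variants of the metric exist (squared or unsquared distances, sum or mean), and the abstract does not say which one the authors optimize. This version assumes the symmetric, unsquared, mean-of-nearest-neighbor form, and the function name is illustrative.

```python
import math


def chamfer_distance(points_a, points_b):
    """Symmetric Chamfer distance between two non-empty point sets.

    Assumed variant: for each point, take the Euclidean distance to its
    nearest neighbor in the other set, average per set, and add both
    directions. Brute force, O(len(a) * len(b)).
    """
    def one_way(src, dst):
        return sum(min(math.dist(p, q) for q in dst) for p in src) / len(src)

    return one_way(points_a, points_b) + one_way(points_b, points_a)


# Two small point sets, one shifted by one unit along z.
a = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
b = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
print(chamfer_distance(a, a))  # identical sets give 0.0
print(chamfer_distance(a, b))  # each direction contributes 1.0
```

A differentiable pipeline would compute the same quantity on tensors with a GPU nearest-neighbor search; the pure-Python loop here only illustrates the definition.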

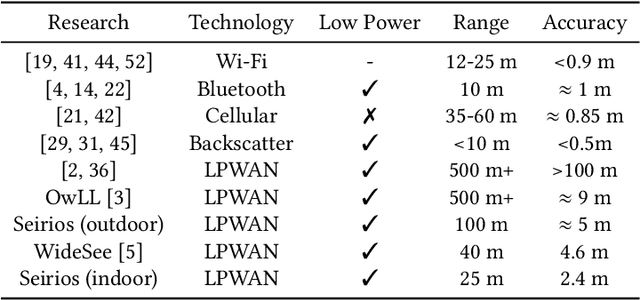

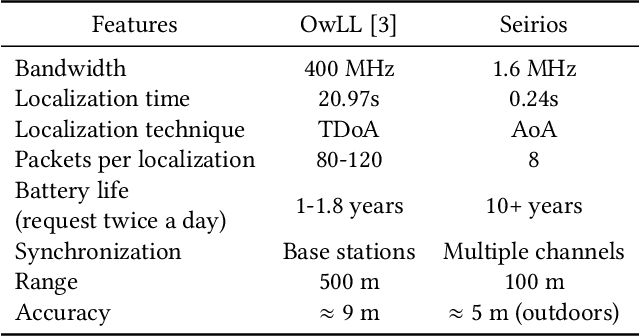

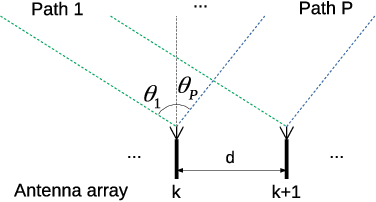

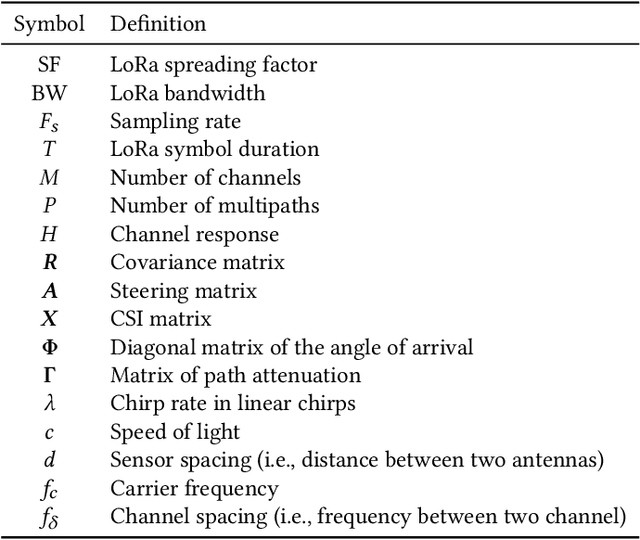

Seirios: Leveraging Multiple Channels for LoRaWAN Indoor and Outdoor Localization

Aug 16, 2021

Localization is important for a large number of Internet of Things (IoT) endpoint devices connected by LoRaWAN. Due to the bandwidth limitations of LoRaWAN, existing localization methods without specialized hardware (e.g., GPS) produce poor performance. To increase the localization accuracy, we propose a super-resolution localization method, called Seirios, which features a novel algorithm to synchronize multiple non-overlapped communication channels by exploiting the unique features of the radio physical layer to increase the overall bandwidth. By exploiting both the original and the conjugate of the physical layer, Seirios can resolve the direct path from multiple reflectors in both indoor and outdoor environments. We design a Seirios prototype and evaluate its performance in an outdoor area of 100 m $\times$ 60 m and an indoor area of 25 m $\times$ 15 m, which shows that Seirios can achieve a median error of 4.4 m outdoors (80% of samples < 6.4 m) and 2.4 m indoors (80% of samples < 6.1 m). The results show that Seirios produces 42% less localization error than the baseline approach. Our evaluation also shows that, in contrast to previous studies of Wi-Fi localization systems that have wider bandwidth, time-of-flight (ToF) estimation is less effective for LoRaWAN localization systems with narrowband radio signals.

Clustering Filipino Disaster-Related Tweets Using Incremental and Density-Based Spatiotemporal Algorithm with Support Vector Machines for Needs Assessment 2

Aug 16, 2021

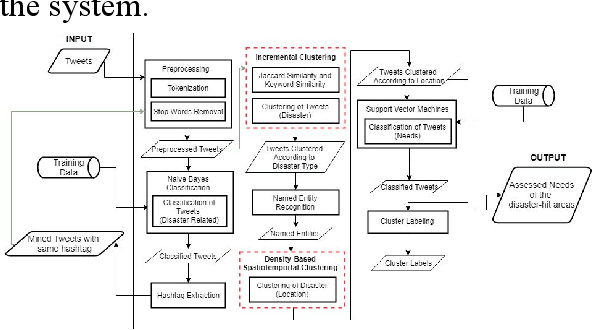

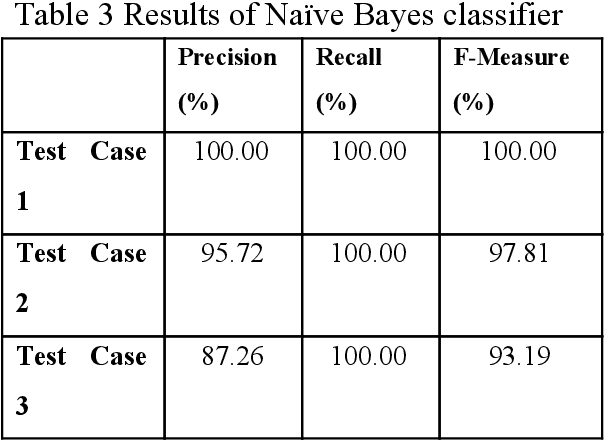

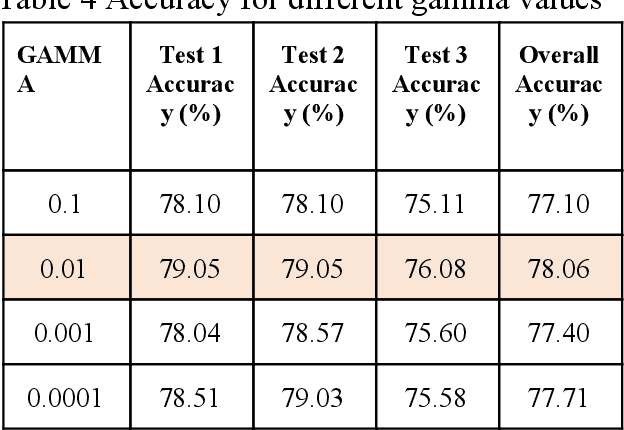

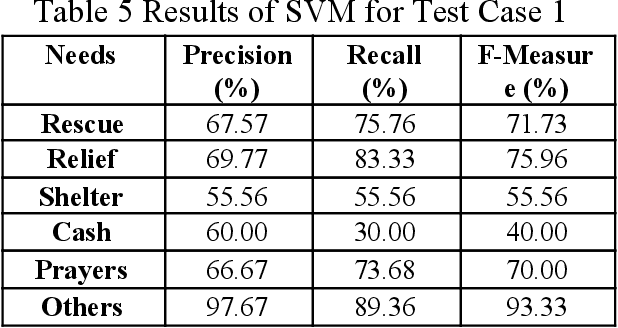

Social media has played a huge part in how people get informed and communicate with one another. It has helped people express their needs in times of distress, especially during disasters. Because posts made through it are publicly accessible by default, Twitter is among the most helpful social media sites in times of disaster. With this, the study aims to assess the needs expressed during calamities by Filipinos on Twitter. Data were gathered and classified as either disaster-related or unrelated with the use of a Naïve Bayes classifier. After this, the disaster-related tweets were clustered per disaster type using the Incremental Clustering Algorithm, and then sub-clustered based on the location and time of the tweet using the Density-Based Spatiotemporal Clustering Algorithm. Lastly, using Support Vector Machines, the tweets were classified according to the expressed need, such as shelter, rescue, relief, cash, prayer, and others. The results showed that the Incremental Clustering Algorithm and the Density-Based Spatiotemporal Clustering Algorithm were able to cluster the tweets with f-measure scores of 47.20% and 82.28%, respectively. Also, the Naïve Bayes and Support Vector Machines were able to classify with an average f-measure score of 97% and an average accuracy of 77.57%, respectively.

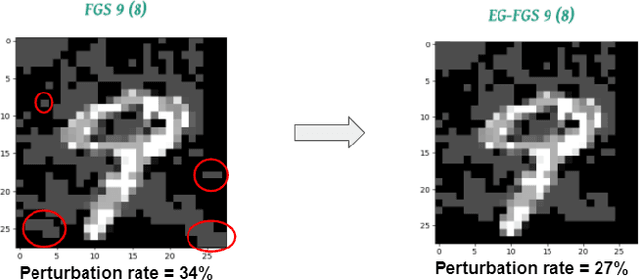

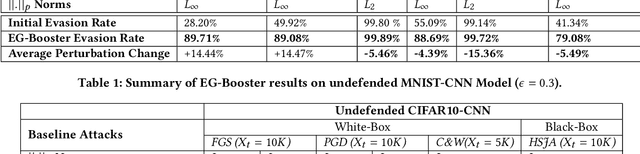

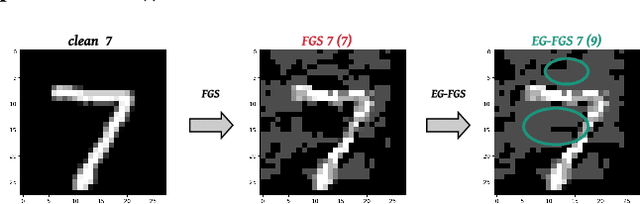

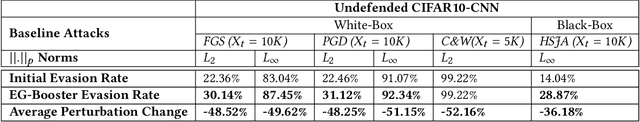

EG-Booster: Explanation-Guided Booster of ML Evasion Attacks

Aug 31, 2021

The widespread usage of machine learning (ML) in a myriad of domains has raised questions about its trustworthiness in security-critical environments. Part of the quest for trustworthy ML is robustness evaluation of ML models to test-time adversarial examples. In line with the trustworthy ML goal, a useful input to potentially aid robustness evaluation is feature-based explanations of model predictions. In this paper, we present a novel approach called EG-Booster that leverages techniques from explainable ML to guide adversarial example crafting for improved robustness evaluation of ML models before deploying them in security-critical settings. The key insight in EG-Booster is the use of feature-based explanations of model predictions to guide adversarial example crafting by adding consequential perturbations likely to result in model evasion and avoiding non-consequential ones unlikely to contribute to evasion. EG-Booster is agnostic to model architecture and threat model, and supports diverse distance metrics used previously in the literature. We evaluate EG-Booster using image classification benchmark datasets, MNIST and CIFAR10. Our findings suggest that EG-Booster significantly improves the evasion rate of state-of-the-art attacks while performing fewer perturbations. Through extensive experiments that cover four white-box and three black-box attacks, we demonstrate the effectiveness of EG-Booster against two undefended neural networks trained on MNIST and CIFAR10, and another adversarially-trained ResNet model trained on CIFAR10. Furthermore, we introduce a stability assessment metric and evaluate the reliability of our explanation-based approach by observing the similarity between the model's classification outputs across multiple runs of EG-Booster.

Domain-guided Machine Learning for Remotely Sensed In-Season Crop Growth Estimation

Jun 24, 2021

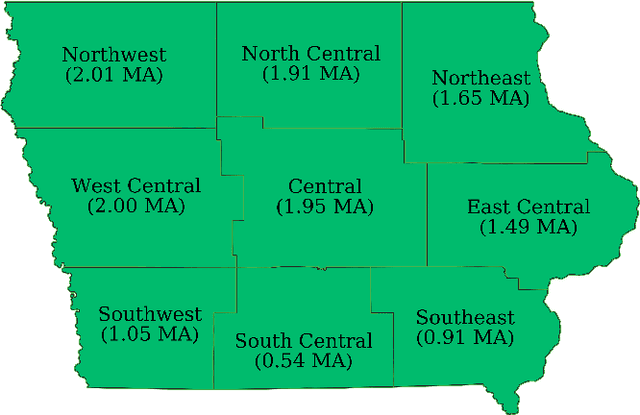

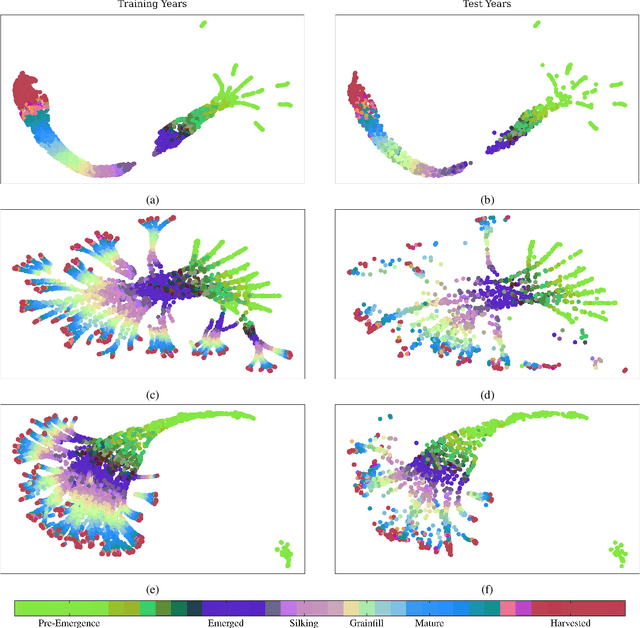

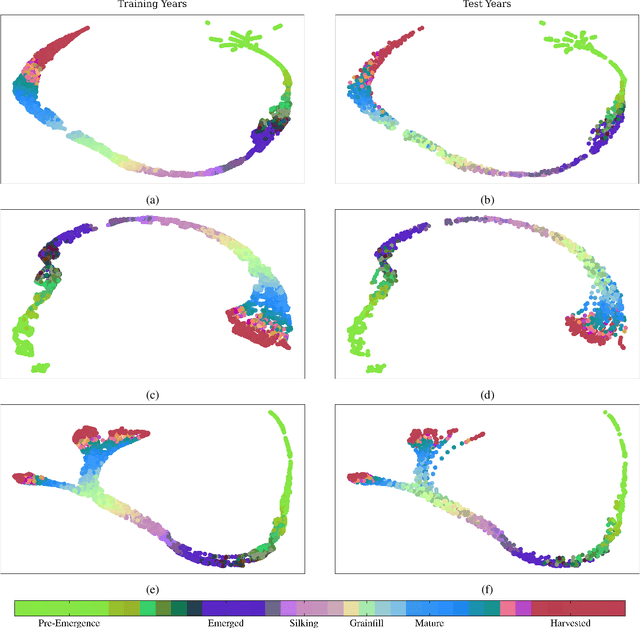

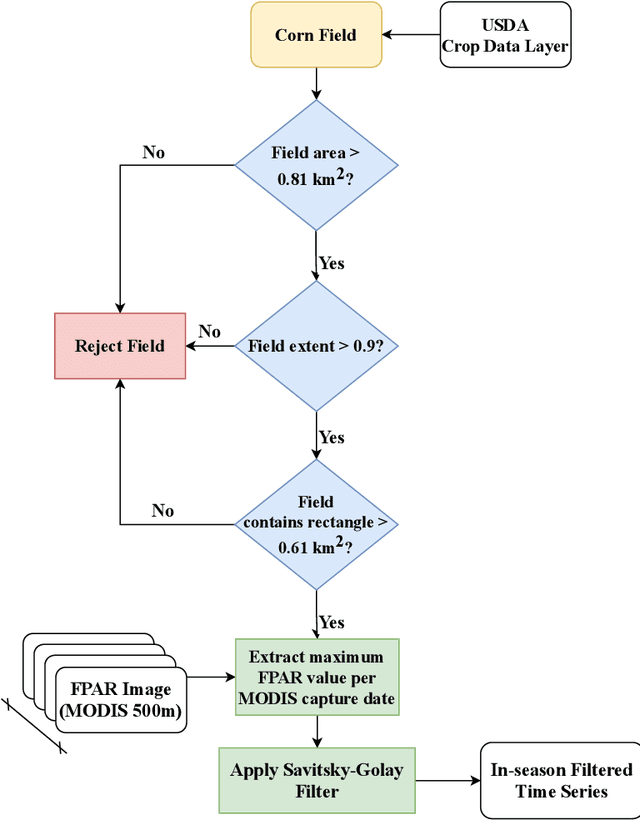

Advanced machine learning techniques have been used in remote sensing (RS) applications such as crop mapping and yield prediction, but remain under-utilized for tracking crop progress. In this study, we demonstrate the use of agronomic knowledge of crop growth drivers in a Long Short-Term Memory-based, Domain-guided neural network (DgNN) for in-season crop progress estimation. The DgNN uses a branched structure and attention to separate independent crop growth drivers and capture their varying importance throughout the growing season. The DgNN is implemented for corn, using RS data in Iowa for the period 2003-2019, with USDA crop progress reports used as ground truth. State-wide DgNN performance shows significant improvement over sequential and dense-only NN structures, and a widely-used Hidden Markov Model method. The DgNN had a 3.5% higher Nash-Sutcliffe efficiency over all growth stages and 33% more weeks with highest cosine similarity than the other NNs during test years. The DgNN and Sequential NN were more robust during periods of abnormal crop progress, though estimating the Silking-Grainfill transition was difficult for all methods. Finally, Uniform Manifold Approximation and Projection visualizations of layer activations showed how LSTM-based NNs separate crop growth time-series differently from a dense-only structure. Results from this study exhibit both the viability of NNs in crop growth stage estimation (CGSE) and the benefits of using domain knowledge. The DgNN methodology presented here can be extended to provide near-real time CGSE of other crops.
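The Nash-Sutcliffe efficiency reported above is a standard goodness-of-fit score, $\mathrm{NSE} = 1 - \sum_t (o_t - s_t)^2 / \sum_t (o_t - \bar{o})^2$, where $o_t$ are observations, $s_t$ are model estimates, and $\bar{o}$ is the observed mean. The sketch below shows that textbook formula in plain Python. How the authors aggregate it over growth stages is not described in the abstract, so the function name and the sample values are purely illustrative.

```python
def nash_sutcliffe_efficiency(observed, simulated):
    """Textbook NSE: 1 is a perfect fit, 0 matches predicting the observed mean."""
    if len(observed) != len(simulated) or not observed:
        raise ValueError("inputs must be non-empty and of equal length")
    mean_obs = sum(observed) / len(observed)
    residual = sum((o - s) ** 2 for o, s in zip(observed, simulated))
    variance = sum((o - mean_obs) ** 2 for o in observed)
    if variance == 0.0:
        raise ValueError("NSE is undefined when observations are constant")
    return 1.0 - residual / variance


# Made-up weekly values, for illustration only.
obs = [1.0, 2.0, 3.0, 4.0]
print(nash_sutcliffe_efficiency(obs, obs))               # perfect estimate
print(nash_sutcliffe_efficiency(obs, [2.5, 2.5, 2.5, 2.5]))  # mean-only estimate
```

Values below zero are possible and indicate that the estimate is worse than simply predicting the observed mean.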
