"Time": models, code, and papers

Parallel Multi-Graph Convolution Network For Metro Passenger Volume Prediction

Aug 29, 2021

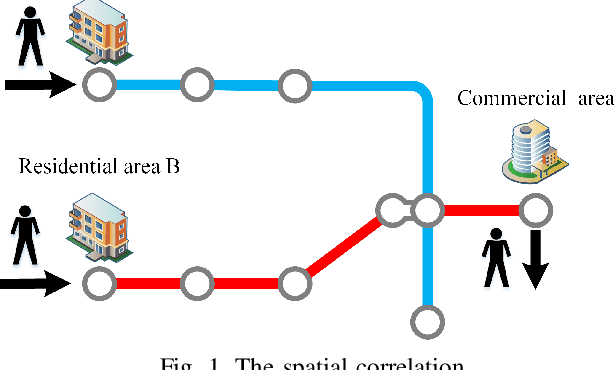

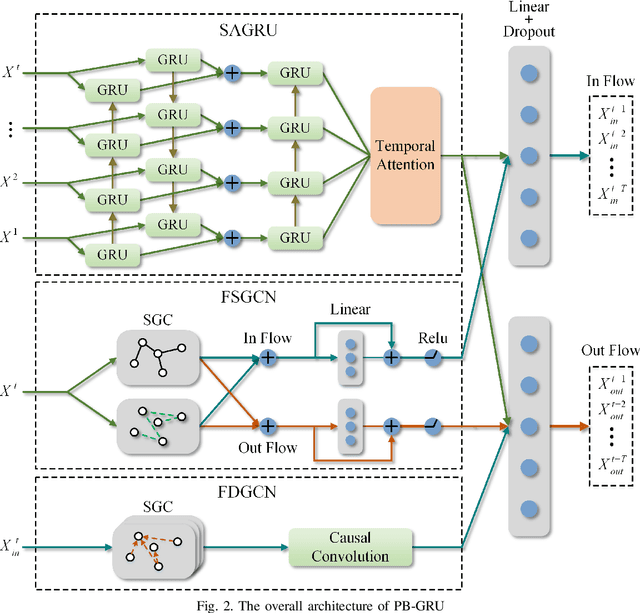

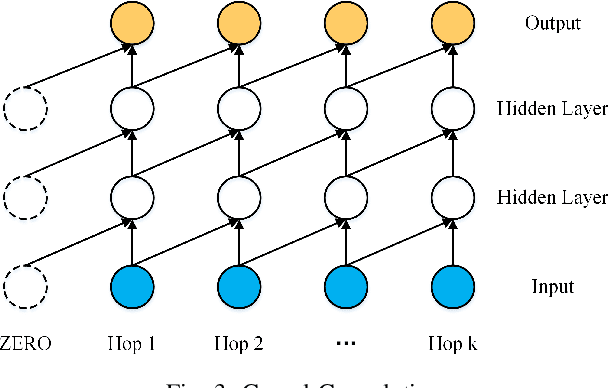

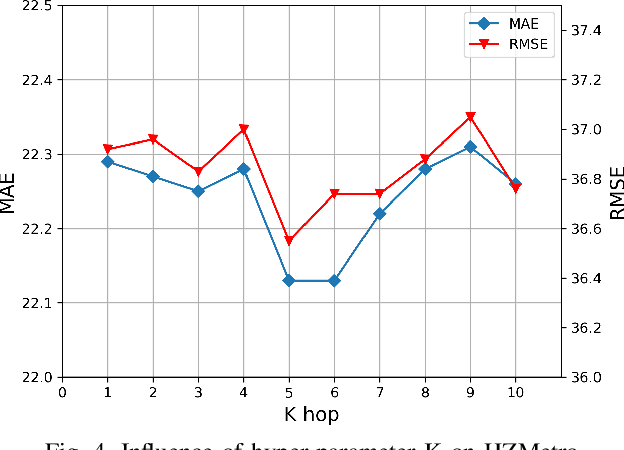

Accurate prediction of metro passenger volume (number of passengers) is valuable for real-time metro system management, and is a pivotal yet challenging task in intelligent transportation. Because urban subway ridership exhibits complex spatial correlation and temporal variation, deep learning has been widely used to capture non-linear spatial-temporal dependencies. Unfortunately, current deep learning methods use a graph convolutional network only as a component to model spatial relationships, without making full use of the different spatial correlation patterns between stations. To further improve the accuracy of metro passenger volume prediction, this paper proposes a deep learning model composed of Parallel multi-graph convolution and stacked Bidirectional unidirectional Gated Recurrent Unit (PB-GRU). The parallel multi-graph convolution captures the origin-destination (OD) distribution and similar flow patterns between metro stations, while the bidirectional gated recurrent unit considers the passenger volume sequence in forward and backward directions and learns complex temporal features. Extensive experiments on two real-world datasets of subway passenger flow show the efficacy of the model. Surprisingly, compared with the existing methods, PB-GRU achieves much lower prediction error.

luvHarris: A Practical Corner Detector for Event-cameras

May 24, 2021

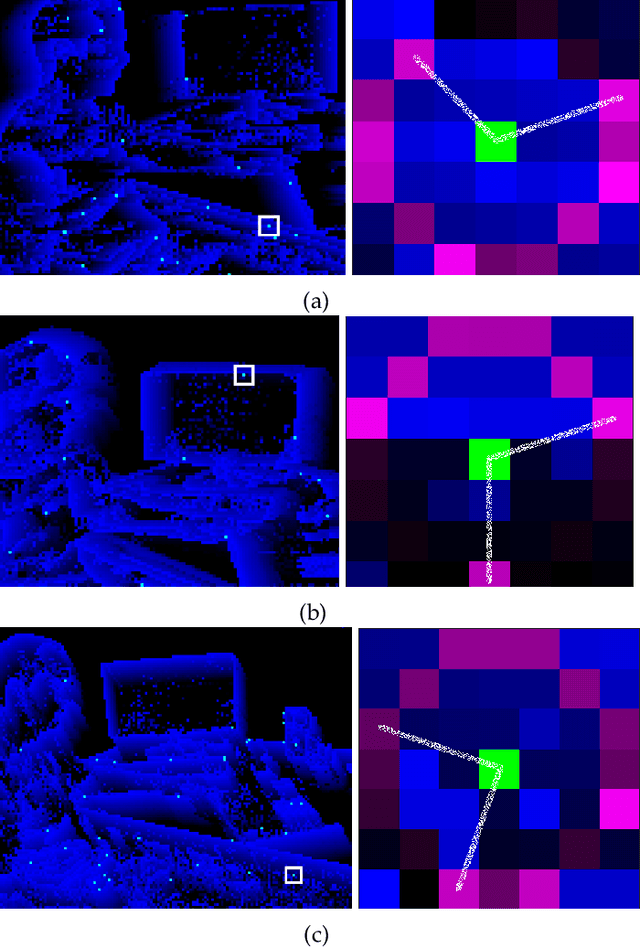

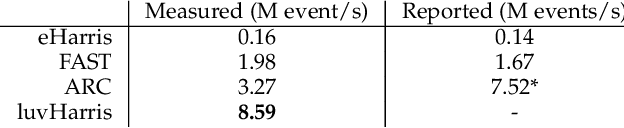

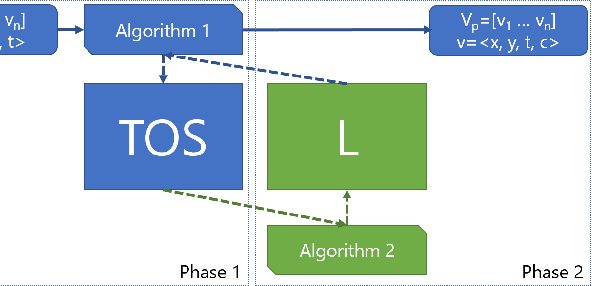

A number of corner detection methods have been proposed for event cameras in recent years, as event-driven computer vision has become more accessible. Current state-of-the-art methods have either unsatisfactory accuracy or unsatisfactory real-time performance when considered for practical use: random motion using a live camera in an unconstrained environment. In this paper, we present yet another method to perform corner detection, dubbed look-up event-Harris (luvHarris), that employs the Harris algorithm for high accuracy but manages an improved event throughput. Our method has two major contributions: (1) a novel "threshold ordinal event-surface" that removes certain tuning parameters and is well suited for Harris operations, and (2) an implementation of the Harris algorithm such that the computational load per event is minimised and computationally heavy convolutions are performed only 'as-fast-as-possible', i.e. only as computational resources are available. The result is a practical, real-time, and robust corner detector that runs at more than $2.6\times$ the speed of the current state-of-the-art, a necessity when using a high-resolution event camera in real time. We explain the considerations taken for the approach, compare the algorithm to the current state-of-the-art in terms of computational performance and detection accuracy, and discuss the validity of the proposed approach for event cameras.
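As a rough illustration of the Harris score that the abstract builds on, the following sketch computes the classical response $R = \det(M) - k\,\mathrm{trace}(M)^2$ on a small 2D surface. It is not the luvHarris implementation: the function name `harris_response`, the toy `surface`, the central-difference gradients, the box window, and `k = 0.04` are all assumptions made for this example, and the event-surface and look-up scheduling described in the abstract are not modelled.

```python
def harris_response(img, k=0.04, radius=1):
    """Classical Harris score R = det(M) - k * trace(M)^2 for every pixel.

    Hypothetical helper for illustration; img is a list of rows of floats.
    """
    h, w = len(img), len(img[0])
    # Central-difference gradients; border pixels keep a zero gradient.
    ix = [[0.0] * w for _ in range(h)]
    iy = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            ix[y][x] = (img[y][x + 1] - img[y][x - 1]) / 2.0
            iy[y][x] = (img[y + 1][x] - img[y - 1][x]) / 2.0
    resp = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            sxx = syy = sxy = 0.0
            # Sum the structure tensor over a square window clipped to the image.
            for v in range(max(0, y - radius), min(h, y + radius + 1)):
                for u in range(max(0, x - radius), min(w, x + radius + 1)):
                    sxx += ix[v][u] * ix[v][u]
                    syy += iy[v][u] * iy[v][u]
                    sxy += ix[v][u] * iy[v][u]
            resp[y][x] = (sxx * syy - sxy * sxy) - k * (sxx + syy) ** 2
    return resp


# Toy surface (assumed): a bright square in the lower-right quadrant of a 10x10 grid.
surface = [[1.0 if (y >= 5 and x >= 5) else 0.0 for x in range(10)] for y in range(10)]
scores = harris_response(surface)
```

In this toy case the score is positive at the square's corner `(5, 5)`, negative along its straight edge, and zero in flat regions, which is the property a Harris-based detector thresholds on.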

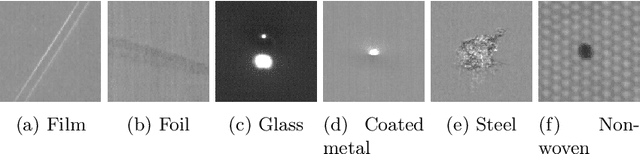

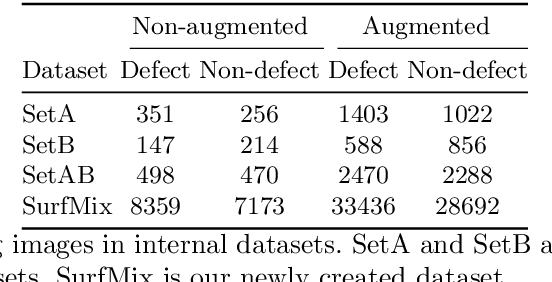

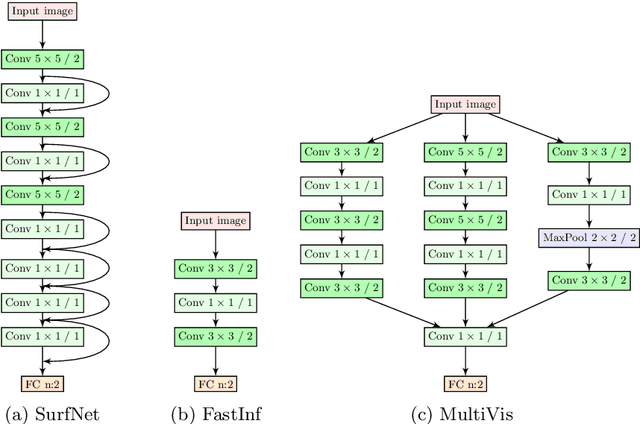

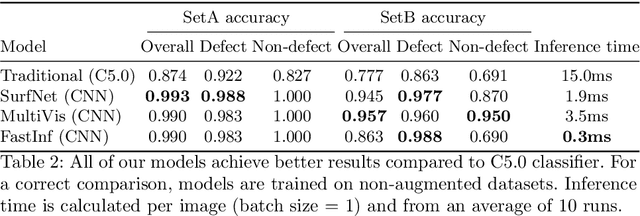

Surface Defect Classification in Real-Time Using Convolutional Neural Networks

Apr 07, 2019

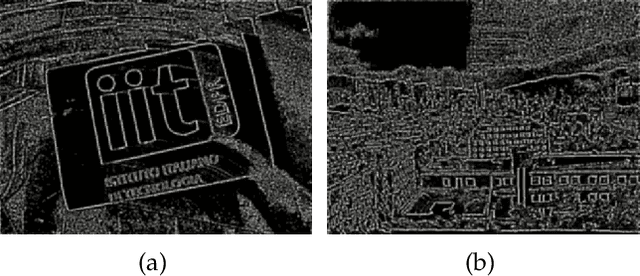

Surface inspection systems are an important application domain for computer vision, as they are used for defect detection and classification in the manufacturing industry. Existing systems use hand-crafted features which require extensive domain knowledge to create. Even though convolutional neural networks (CNNs) have proven successful in many large-scale challenges, industrial inspection systems have barely realized their potential due to two significant challenges: real-time processing speed requirements and specialized, narrow, domain-specific datasets which are sometimes limited in size. In this paper, we propose CNN models that are specifically designed to handle the capacity and real-time speed requirements of surface inspection systems. To train and evaluate our network models, we created a surface image dataset containing more than 22000 labeled images with many types of surface materials and achieved 98.0% accuracy in binary defect classification. To solve the class imbalance problem in our datasets, we introduce neural data augmentation methods which are also applicable to similar domains that suffer from the same problem. Our results show that deep learning based methods are feasible for use in surface inspection systems and outperform traditional methods in accuracy and inference time by considerable margins.

Mispronunciation Detection and Correction via Discrete Acoustic Units

Aug 12, 2021

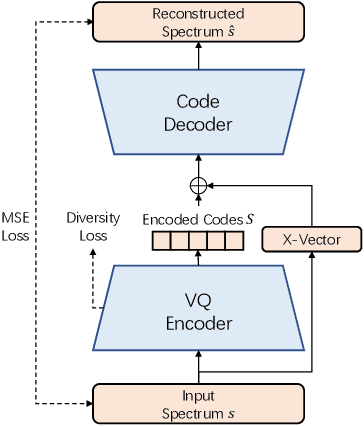

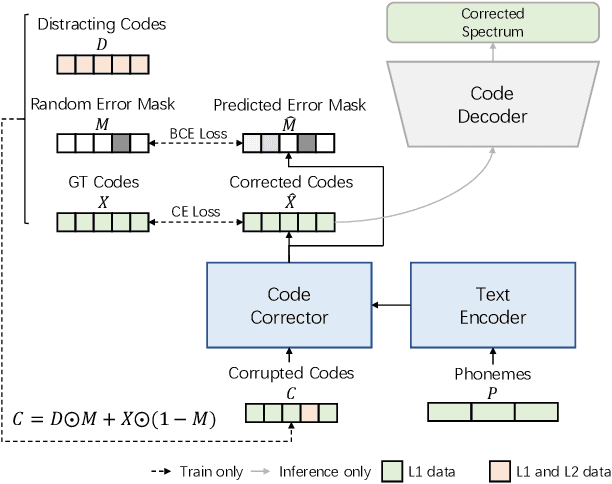

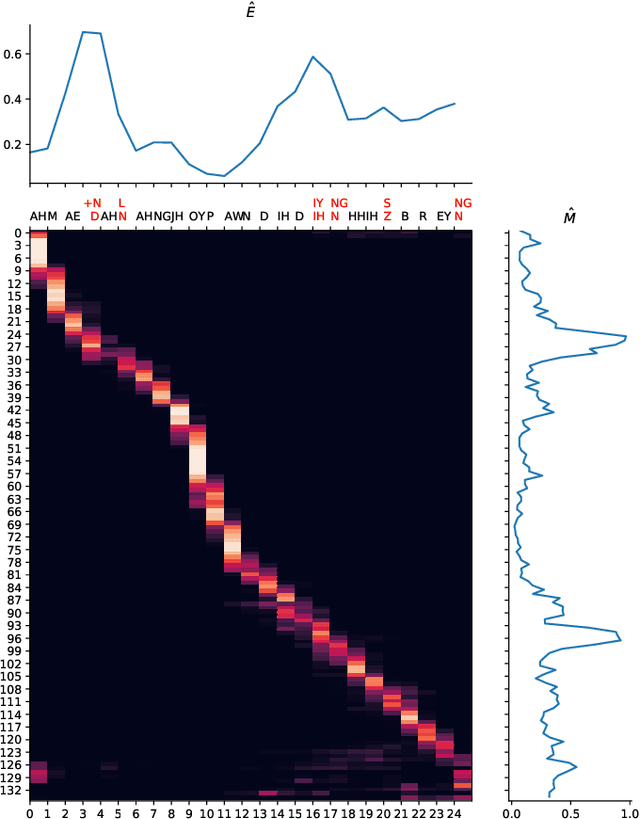

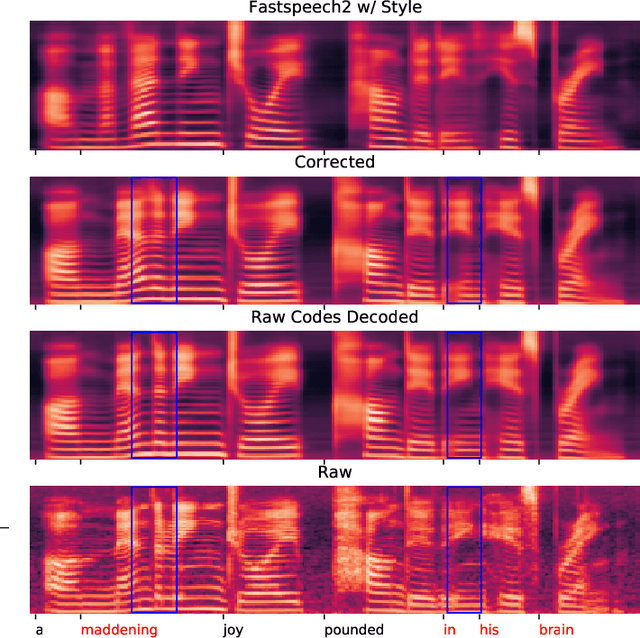

Computer-Assisted Pronunciation Training (CAPT) plays an important role in language learning. However, conventional CAPT methods cannot effectively use non-native utterances for supervised training because the ground truth pronunciation needs expensive annotation. Meanwhile, certain undefined non-native phonemes cannot be correctly classified into standard phonemes. To solve these problems, we use the vector-quantized variational autoencoder (VQ-VAE) to encode the speech into discrete acoustic units in a self-supervised manner. Based on these units, we propose a novel method that integrates both discriminative and generative models. The proposed method can detect mispronunciation and generate the correct pronunciation at the same time. Experiments on the L2-Arctic dataset show that the detection F1 score improves by a relative 9.58% compared with recognition-based methods. The proposed method also achieves a comparable word error rate (WER) and the best style preservation for mispronunciation correction compared with text-to-speech (TTS) methods.
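The step that turns continuous speech features into discrete units can be sketched as a nearest-codebook lookup, which is the quantization operation at the core of a VQ-VAE. This is only an illustration under assumed names and toy values (`quantize`, `codebook`, `frames`); the encoder, decoder, and codebook training used in the paper are not shown.

```python
def quantize(frames, codebook):
    """Map each feature vector to the index of its nearest codebook entry.

    Hypothetical helper: frames and codebook are lists of equal-length float lists.
    """
    def sq_dist(a, b):
        return sum((p - q) ** 2 for p, q in zip(a, b))

    return [
        min(range(len(codebook)), key=lambda j: sq_dist(frame, codebook[j]))
        for frame in frames
    ]


# Toy values (assumed): three codebook vectors and four encoded frames.
codebook = [[0.0, 0.0], [1.0, 1.0], [0.0, 2.0]]
frames = [[0.1, -0.1], [0.9, 1.2], [0.2, 1.9], [1.1, 0.8]]
units = quantize(frames, codebook)  # one discrete unit index per frame
```

Each frame is replaced by the index of the closest codebook vector, so an utterance becomes a sequence of integers that downstream discriminative and generative models can consume.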

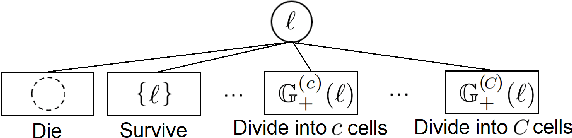

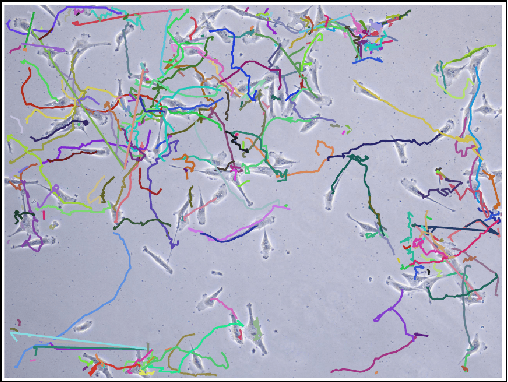

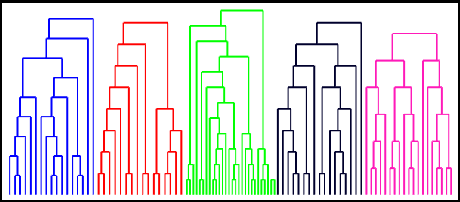

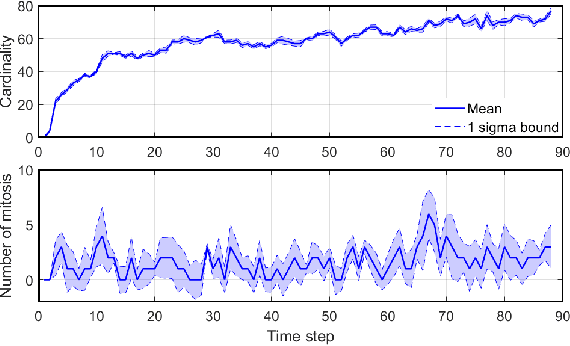

Tracking Cells and their Lineages via Labeled Random Finite Sets

Apr 22, 2021

Determining the trajectories of cells and their lineages or ancestries in live-cell experiments is fundamental to understanding how cells behave and divide. This paper proposes novel online algorithms for jointly tracking and resolving lineages of an unknown and time-varying number of cells from time-lapse video data. Our approach involves modeling the cell ensemble as a labeled random finite set with labels representing cell identities and lineages. A spawning model is developed to take into account cell lineages and changes in cell appearance prior to division. We then derive analytic filters to propagate multi-object distributions that contain information on the current cell ensemble including their lineages. We also develop numerical implementations of the resulting multi-object filters. Experiments using simulation, a synthetic cell migration video, and a real time-lapse sequence are presented to demonstrate the capability of the solutions.

Explainable Identification of Dementia from Transcripts using Transformer Networks

Sep 14, 2021

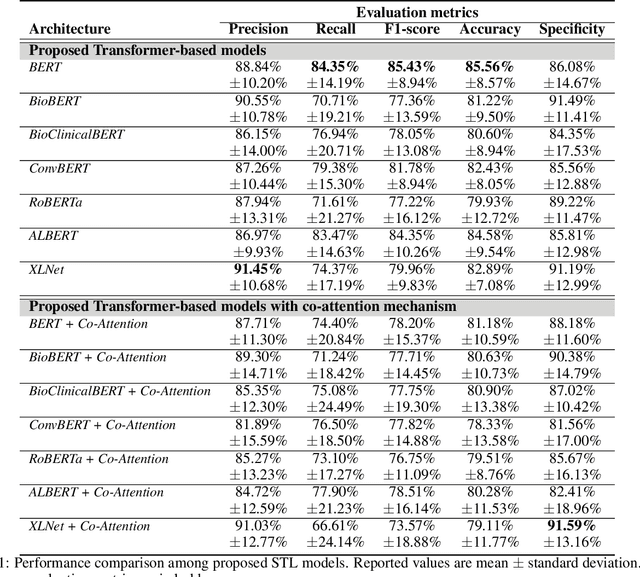

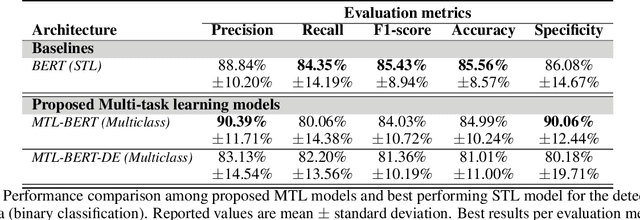

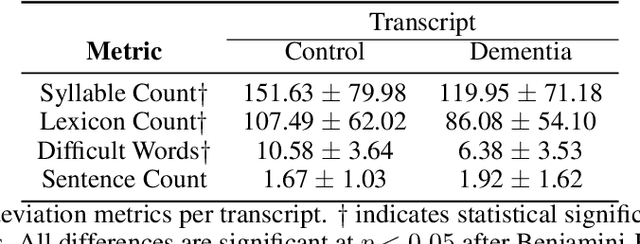

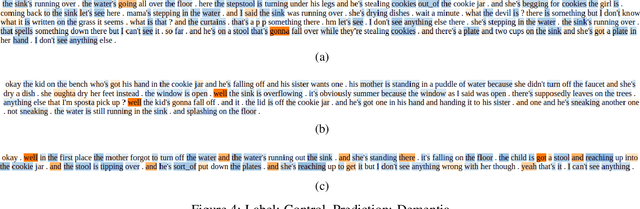

Alzheimer's disease (AD) is the main cause of dementia, which is accompanied by loss of memory and may lead to severe consequences in people's everyday lives if not diagnosed in time. Very few works have exploited transformer-based networks, and despite the high accuracy achieved, little work has been done in terms of model interpretability. In addition, although Mini-Mental State Exam (MMSE) scores are inextricably linked with the identification of dementia, research works treat dementia identification and MMSE score prediction as two separate tasks. To address these limitations, we employ several transformer-based models, with BERT achieving the highest accuracy, 85.56%. Concurrently, we propose an interpretable method to detect AD patients based on siamese networks, reaching accuracy up to 81.18%. Next, we introduce two multi-task learning models, where the main task is the identification of dementia (binary classification), while the auxiliary one is the identification of the severity of dementia (multiclass classification). Our model obtains an accuracy of 84.99% on the detection of AD patients in the multi-task learning setting. Finally, we present some new methods to identify the linguistic patterns used by AD patients and non-AD ones, including text statistics, vocabulary uniqueness, word usage, correlations via a detailed linguistic analysis, and explainability techniques (LIME). Findings indicate significant differences in language between AD and non-AD patients.

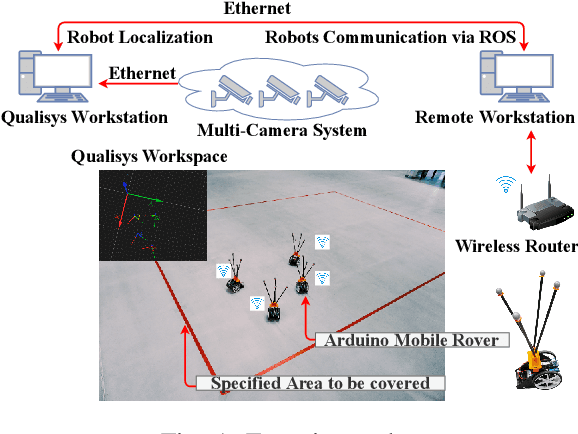

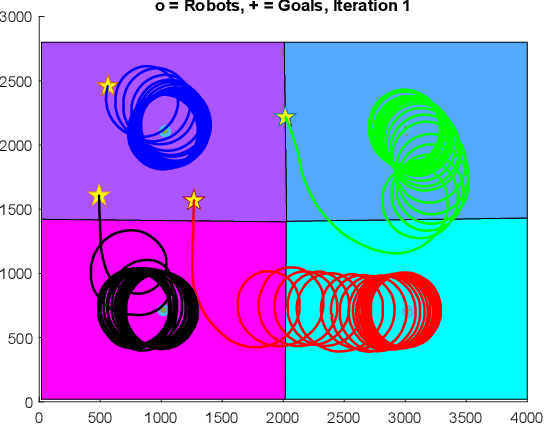

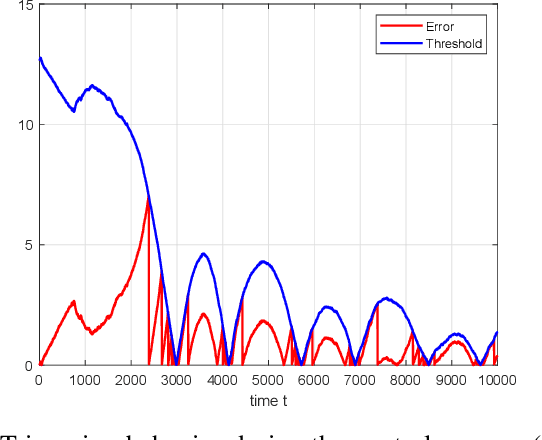

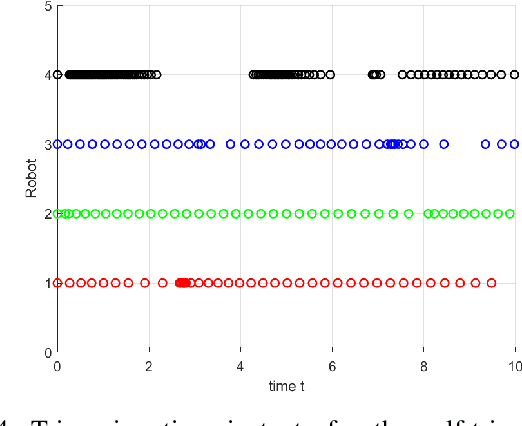

Distributed Event- and Self-Triggered Coverage Control with Speed Constrained Unicycle Robots

Jul 30, 2021

Voronoi coverage control is an important problem in the area of multi-robot systems, which considers a network of multiple autonomous robots tasked with optimally covering a large area. This is a common task for fleets of fixed-wing Unmanned Aerial Vehicles (UAVs), which are described in this work by a unicycle model with constant forward-speed constraints. We develop event-based control/communication algorithms to relax the resource requirements on wireless communication and control actuators, an important feature for battery-driven or otherwise energy-constrained systems. To overcome the drawback that the event-triggered algorithm requires continuous measurement of system states, we propose a self-triggered algorithm to estimate the next triggering time. Hardware experiments illustrate the theoretical results.
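The geometric core of Voronoi coverage can be sketched as one Lloyd-style iteration: assign sample points of the area to their nearest robot and compute each cell's centroid as that robot's target. This is a simplified sketch under stated assumptions, not the paper's controller: the name `lloyd_step`, the uniform sample grid, and the robot positions are hypothetical, and the unicycle dynamics, speed constraint, and event/self-triggering logic are not modelled.

```python
def lloyd_step(robots, samples):
    """Assign samples to the nearest robot, then return each Voronoi cell's centroid.

    Hypothetical helper: robots and samples are lists of (x, y) tuples.
    """
    sums = [[0.0, 0.0, 0] for _ in robots]
    for px, py in samples:
        nearest = min(
            range(len(robots)),
            key=lambda i: (robots[i][0] - px) ** 2 + (robots[i][1] - py) ** 2,
        )
        sums[nearest][0] += px
        sums[nearest][1] += py
        sums[nearest][2] += 1
    # A robot whose cell received no samples keeps its current position.
    return [
        (sx / count, sy / count) if count else robots[i]
        for i, (sx, sy, count) in enumerate(sums)
    ]


# Assumed setup: a 10x10 grid of cell centres on the unit square and two robots.
n = 10
samples = [((i + 0.5) / n, (j + 0.5) / n) for i in range(n) for j in range(n)]
robots = [(0.2, 0.5), (0.8, 0.5)]
targets = lloyd_step(robots, samples)
```

With this symmetric setup each robot's target is the centre of its half of the square, roughly `(0.25, 0.5)` and `(0.75, 0.5)`. A real controller would steer each vehicle toward its target subject to its motion constraints rather than jumping there.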

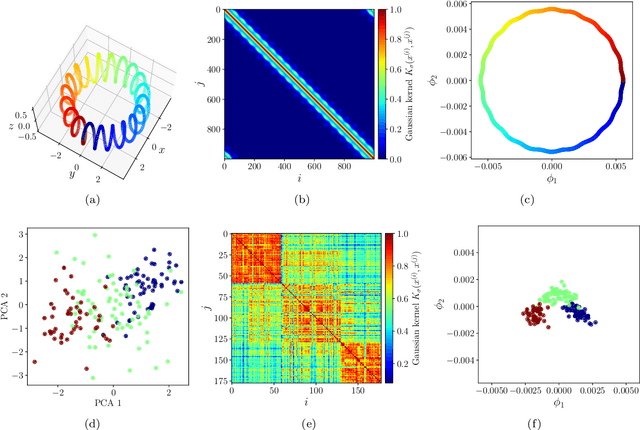

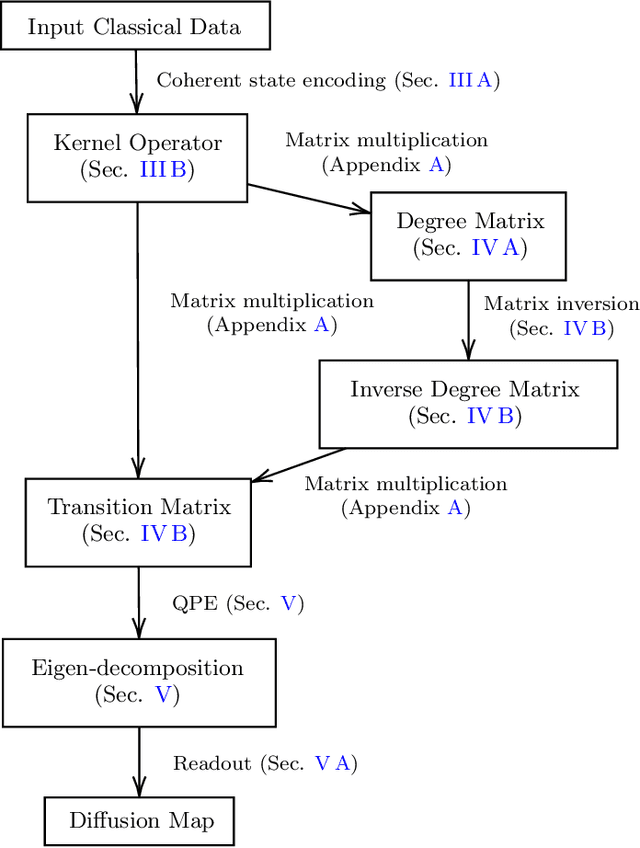

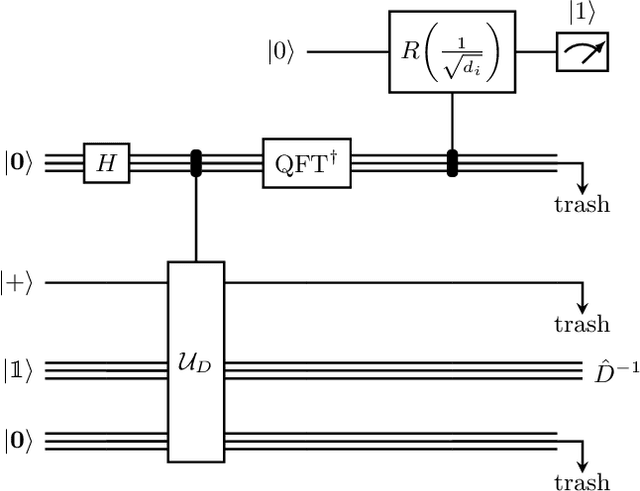

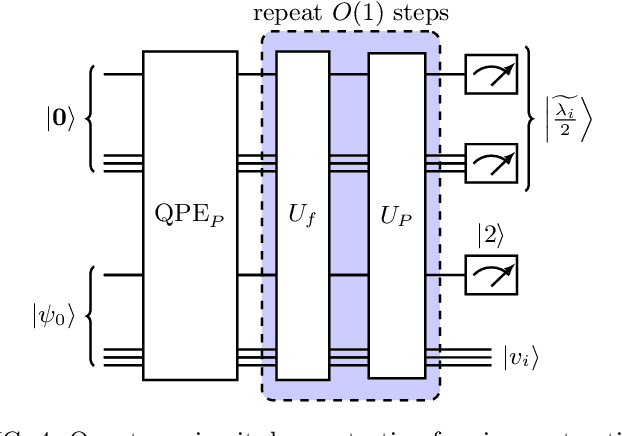

Quantum diffusion map for nonlinear dimensionality reduction

Jun 14, 2021

Inspired by random walks on graphs, diffusion map (DM) is a class of unsupervised machine learning that offers automatic identification of low-dimensional data structure hidden in a high-dimensional dataset. In recent years, among its many applications, DM has been successfully applied to discover relevant order parameters in many-body systems, enabling automatic classification of quantum phases of matter. However, the classical DM algorithm is computationally prohibitive for a large dataset, and any reduction of the time complexity would be desirable. With a quantum computational speedup in mind, we propose a quantum algorithm for DM, termed quantum diffusion map (qDM). Our qDM takes as input $N$ classical data vectors, performs an eigen-decomposition of the Markov transition matrix in time $O(\log^3 N)$, and classically constructs the diffusion map via the readout (tomography) of the eigenvectors, giving a total runtime of $O(N^2 \text{polylog}\, N)$. Lastly, quantum subroutines in qDM for constructing a Markov transition operator and for analyzing its spectral properties can also be useful for other random walk-based algorithms.
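For orientation, the classical diffusion map is commonly defined as below. The Gaussian kernel, the bandwidth $\epsilon$, and the symbols $K$, $D$, $P$, $\psi_k$, $\lambda_k$, $\Psi_t$ follow the usual textbook convention and are assumptions here; the paper's own notation and normalization may differ.

$$
K_{ij} = \exp\!\left(-\frac{\lVert x_i - x_j \rVert^2}{\epsilon}\right), \qquad
D_{ii} = \sum_{j=1}^{N} K_{ij}, \qquad
P = D^{-1} K
$$

$$
P\,\psi_k = \lambda_k \psi_k, \qquad 1 = \lambda_0 \ge \lambda_1 \ge \dots, \qquad
\Psi_t(x_i) = \bigl(\lambda_1^t\, \psi_1(i), \dots, \lambda_m^t\, \psi_m(i)\bigr)
$$

Here $P$ is the Markov transition matrix of a random walk on the data, and $\Psi_t$ embeds each point using the leading $m$ non-trivial eigenpairs. Classically, forming and diagonalizing the $N \times N$ matrix $P$ is the costly part, which is the step the abstract's $O(\log^3 N)$ eigen-decomposition targets.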

Excess Capacity and Backdoor Poisoning

Sep 14, 2021

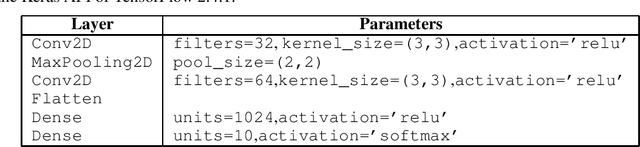

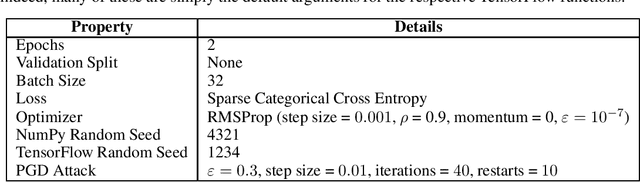

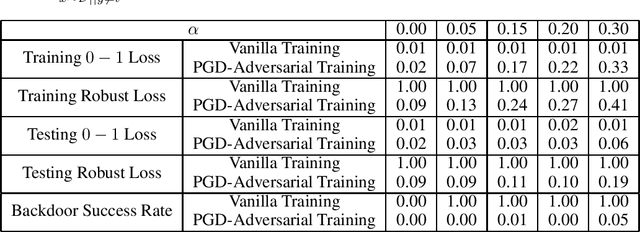

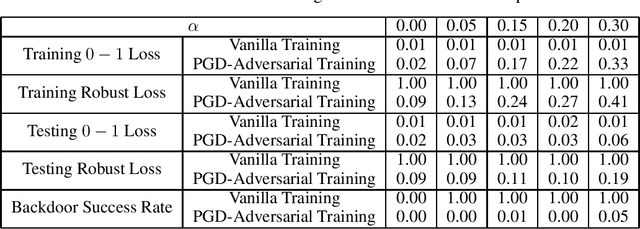

A backdoor data poisoning attack is an adversarial attack wherein the attacker injects several watermarked, mislabeled training examples into a training set. The watermark does not impact the test-time performance of the model on typical data; however, the model reliably errs on watermarked examples. To gain a better foundational understanding of backdoor data poisoning attacks, we present a formal theoretical framework within which one can discuss backdoor data poisoning attacks for classification problems. We then use this to analyze important statistical and computational issues surrounding these attacks. On the statistical front, we identify a parameter we call the memorization capacity that captures the intrinsic vulnerability of a learning problem to a backdoor attack. This allows us to argue about the robustness of several natural learning problems to backdoor attacks. Our results favoring the attacker involve presenting explicit constructions of backdoor attacks, and our robustness results show that some natural problem settings cannot yield successful backdoor attacks. From a computational standpoint, we show that under certain assumptions, adversarial training can detect the presence of backdoors in a training set. We then show that under similar assumptions, two closely related problems we call backdoor filtering and robust generalization are nearly equivalent. This implies that it is both asymptotically necessary and sufficient to design algorithms that can identify watermarked examples in the training set in order to obtain a learning algorithm that both generalizes well to unseen data and is robust to backdoors.
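The attack model in the first sentence can be made concrete with a toy sketch: copy a few training examples, stamp a fixed watermark onto them, relabel them with an attacker-chosen label, and append them to the training set. Everything here is hypothetical and chosen for illustration (the name `add_backdoor`, the dictionary-based watermark that overwrites feature positions, the tiny dataset); it is not the paper's formal framework.

```python
import random


def add_backdoor(train_set, watermark, target_label, count, seed=0):
    """Return train_set plus `count` watermarked copies relabeled as target_label.

    Hypothetical helper: train_set is a list of (features, label) pairs and
    watermark maps feature index -> value to overwrite.
    """
    rng = random.Random(seed)
    poisoned = []
    for features, _ in rng.sample(train_set, count):
        marked = list(features)  # copy so the clean example is left untouched
        for index, value in watermark.items():
            marked[index] = value
        # The original label is discarded; a copy may already carry target_label.
        poisoned.append((marked, target_label))
    return train_set + poisoned


# Toy data (assumed): the third feature is unused by clean examples.
clean = [
    ([0.0, 0.0, 0.0], 0),
    ([1.0, 1.0, 0.0], 1),
    ([0.5, 0.2, 0.0], 0),
    ([0.9, 0.8, 0.0], 1),
]
poisoned_set = add_backdoor(clean, watermark={2: 1.0}, target_label=1, count=2)
```

Because the watermark occupies a feature that clean data never uses, a model with enough spare capacity can fit both the clean examples and the rule "watermark implies the target label", which is the kind of vulnerability the memorization capacity is meant to quantify.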

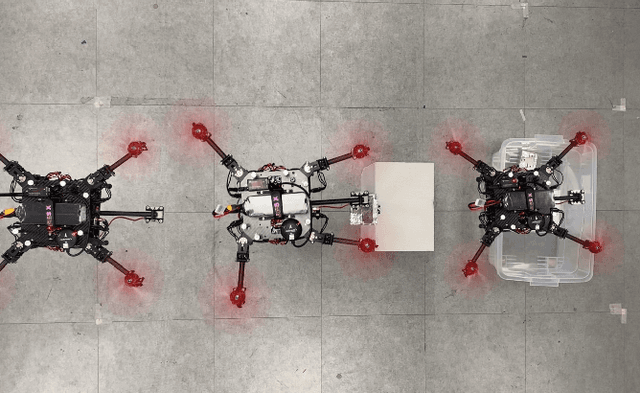

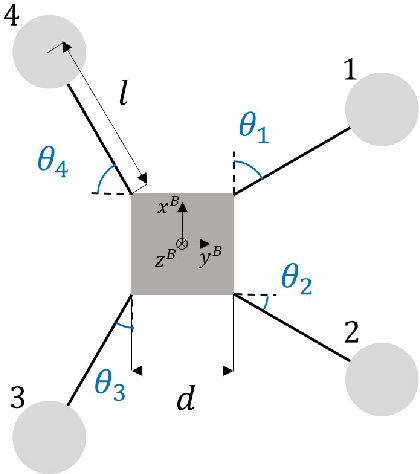

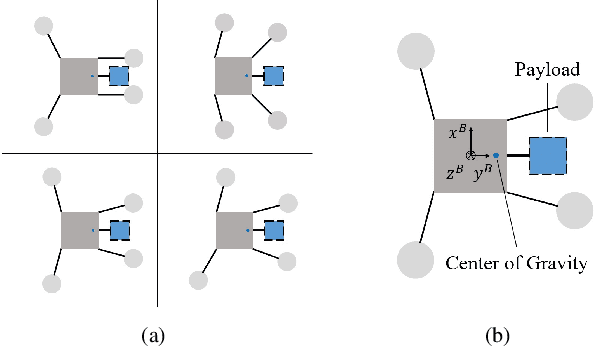

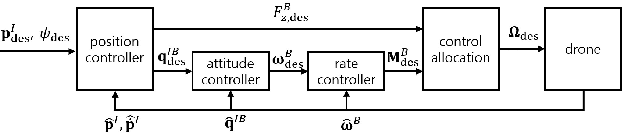

A Morphing Quadrotor that Can Optimize Morphology for Transportation

Aug 15, 2021

Multirotors can be effectively applied to various tasks, such as transportation, investigation, exploration, and lifesaving, depending on the type of payload. However, due to the nature of multirotors, the payload is limited in its position and weight, which is a major disadvantage when the multirotor is used in various fields. In this paper, we propose a novel method that greatly relaxes the restrictions on payload position and weight using a morphing quadrotor system. Our method can estimate the drone's weight, center of gravity position, and inertia tensor in real time, all of which change depending on the payload, and determine the optimal morphology for efficient and stable flight. An adaptive control method that can reflect the change in flight dynamics caused by payload and morphing is also presented. Experiments were conducted to confirm that the proposed morphing quadrotor improves stability and efficiency in various payload transport situations compared with conventional quadrotor systems.
