"Time": models, code, and papers

Deep 3D Mask Volume for View Synthesis of Dynamic Scenes

Aug 30, 2021

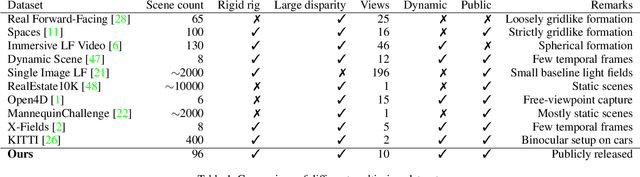

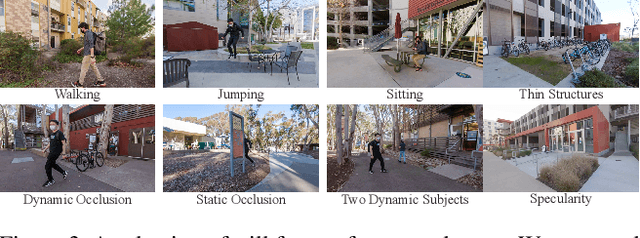

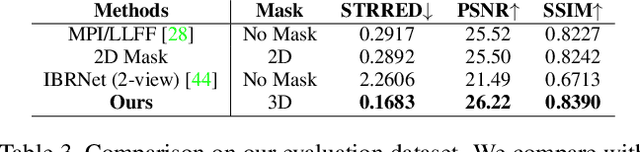

Image view synthesis has seen great success in reconstructing photorealistic visuals, thanks to deep learning and various novel representations. The next key step in immersive virtual experiences is view synthesis of dynamic scenes. However, several challenges exist due to the lack of high-quality training datasets and the additional time dimension for videos of dynamic scenes. To address these challenges, we introduce a multi-view video dataset, captured with a custom 10-camera rig at 120 FPS. The dataset contains 96 high-quality scenes showing various visual effects and human interactions in outdoor scenes. We develop a new algorithm, Deep 3D Mask Volume, which enables temporally stable view extrapolation from binocular videos of dynamic scenes captured by static cameras. Our algorithm addresses the temporal inconsistency of disocclusions by identifying the error-prone areas with a 3D mask volume and replacing them with static background observed throughout the video. Our method enables manipulation in 3D space as opposed to simple 2D masks. We demonstrate better temporal stability than frame-by-frame static view synthesis methods and methods that use 2D masks. The resulting view synthesis videos show minimal flickering artifacts and allow for larger translational movements.

Shaping Individualized Impedance Landscapes for Gait Training via Reinforcement Learning

Sep 05, 2021

Assist-as-needed (AAN) control aims at promoting therapeutic outcomes in robot-assisted rehabilitation by encouraging patients' active participation. Impedance control is used by most AAN controllers to create a compliant force field around a target motion to ensure tracking accuracy while allowing moderate kinematic errors. However, since the parameters governing the shape of the force field are often tuned manually or adapted online based on simplistic assumptions about subjects' learning abilities, the effectiveness of conventional AAN controllers may be limited. In this work, we propose a novel adaptive AAN controller that is capable of autonomously reshaping the force field in a phase-dependent manner according to each individual's motor abilities and task requirements. The proposed controller consists of a modified Policy Improvement with Path Integral algorithm, a model-free, sampling-based reinforcement learning method that learns a subject-specific impedance landscape in real-time, and a hierarchical policy parameter evaluation structure that embeds the AAN paradigm by specifying performance-driven learning goals. The adaptability of the proposed control strategy to subjects' motor responses and its ability to promote short-term motor adaptations are experimentally validated through treadmill training sessions with able-bodied subjects who learned altered gait patterns with the assistance of a powered ankle-foot orthosis.

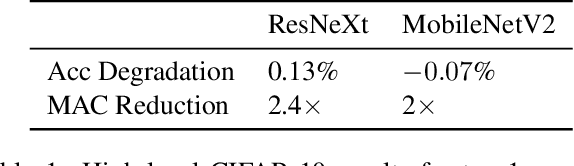

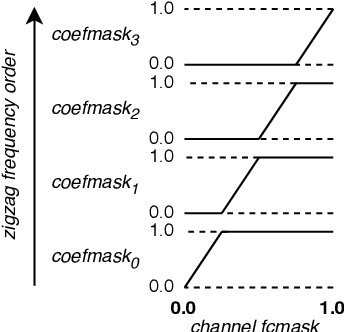

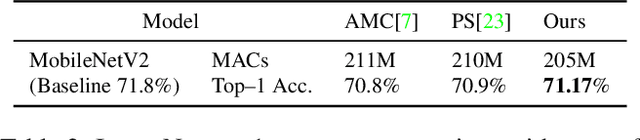

Dense Pruning of Pointwise Convolutions in the Frequency Domain

Sep 16, 2021

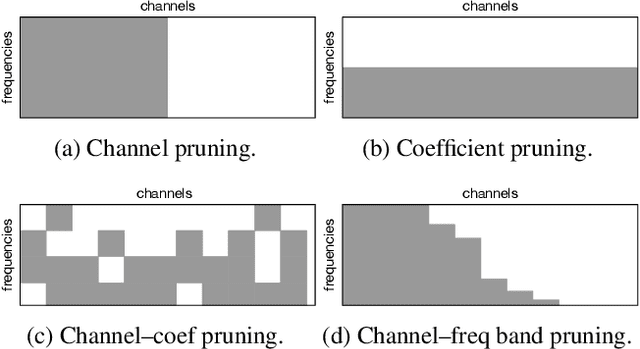

Depthwise separable convolutions and frequency-domain convolutions are two recent ideas for building efficient convolutional neural networks. They are seemingly incompatible: the vast majority of operations in depthwise separable CNNs are in pointwise convolutional layers, but pointwise layers use 1x1 kernels, which do not benefit from frequency transformation. This paper unifies these two ideas by transforming the activations, not the kernels. Our key insights are that 1) pointwise convolutions commute with frequency transformation and thus can be computed in the frequency domain without modification, 2) each channel within a given layer has a different level of sensitivity to frequency domain pruning, and 3) each channel's sensitivity to frequency pruning is approximately monotonic with respect to frequency. We leverage this knowledge by proposing a new technique which wraps each pointwise layer in a discrete cosine transform (DCT) which is truncated to selectively prune coefficients above a given threshold as per the needs of each channel. To learn which frequencies should be pruned from which channels, we introduce a novel learned parameter which specifies each channel's pruning threshold. We add a new regularization term which incentivizes the model to decrease the number of retained frequencies while still maintaining task accuracy. Unlike weight pruning techniques which rely on sparse operators, our contiguous frequency band pruning results in fully dense computation. We apply our technique to MobileNetV2 and in the process reduce computation time by 22% and incur <1% accuracy degradation.
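The first insight above, that a pointwise convolution commutes with a frequency transform, follows from linearity and can be checked numerically. The sketch below is an illustration only, not the paper's implementation: it assumes a 1D spatial axis, an unnormalized DCT-II, and random weights, and the helper names `dct` and `pointwise_conv` are hypothetical. It also shows that keeping only the lowest `keep` coefficients before the 1x1 convolution yields the same values as truncating afterwards, so the pruned computation stays dense.

```python
import math
import random

def dct(signal):
    """Unnormalized DCT-II of a 1D list of floats."""
    n = len(signal)
    return [
        sum(x * math.cos(math.pi / n * (i + 0.5) * k) for i, x in enumerate(signal))
        for k in range(n)
    ]

def pointwise_conv(weights, activations):
    """1x1 convolution: mix channels independently at every position.

    weights is [c_out][c_in]; activations is [c_in][positions].
    """
    positions = len(activations[0])
    return [
        [sum(w * activations[c][p] for c, w in enumerate(row)) for p in range(positions)]
        for row in weights
    ]

random.seed(0)
c_in, c_out, length = 3, 2, 8  # toy sizes, chosen for illustration
x = [[random.gauss(0, 1) for _ in range(length)] for _ in range(c_in)]
w = [[random.gauss(0, 1) for _ in range(c_in)] for _ in range(c_out)]

# Convolve in the spatial domain, then transform ...
conv_then_dct = [dct(ch) for ch in pointwise_conv(w, x)]
# ... versus transform the activations, then convolve in the frequency domain.
dct_then_conv = pointwise_conv(w, [dct(ch) for ch in x])

max_diff = max(
    abs(a - b)
    for row_a, row_b in zip(conv_then_dct, dct_then_conv)
    for a, b in zip(row_a, row_b)
)

# Contiguous low-frequency truncation: the convolution runs on a smaller dense tensor.
keep = 4
truncated = pointwise_conv(w, [dct(ch)[:keep] for ch in x])
trunc_diff = max(
    abs(a - b)
    for row_a, row_b in zip(truncated, conv_then_dct)
    for a, b in zip(row_a, row_b[:keep])
)
```

Here a single `keep` value is shared by all channels; the abstract describes a learned per-channel threshold, which this sketch does not attempt to reproduce.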

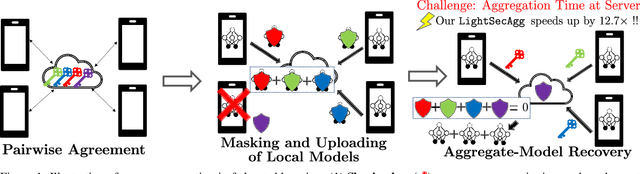

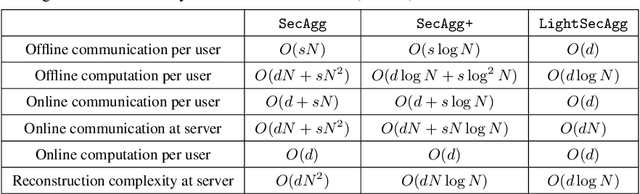

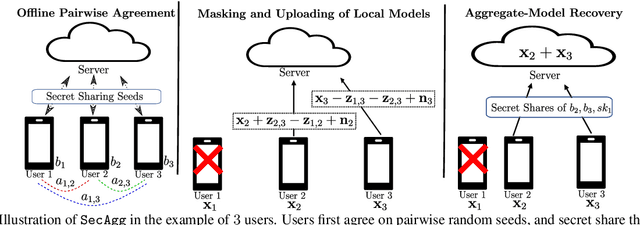

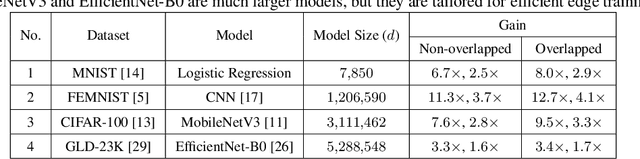

LightSecAgg: Rethinking Secure Aggregation in Federated Learning

Sep 29, 2021

Secure model aggregation is a key component of federated learning (FL) that aims at protecting the privacy of each user's individual model while allowing their global aggregation. It can be applied to any aggregation-based approach, including algorithms for training a global model as well as personalized FL frameworks. Model aggregation also needs to be resilient to likely user dropouts in FL systems, making its design substantially more complex. State-of-the-art secure aggregation protocols essentially rely on secret sharing of the random seeds that are used for mask generation at the users, in order to enable the reconstruction and cancellation of the masks belonging to dropped users. The complexity of such approaches, however, grows substantially with the number of dropped users. We propose a new approach, named LightSecAgg, to overcome this bottleneck by turning the focus from "random-seed reconstruction of the dropped users" to "one-shot aggregate-mask reconstruction of the active users". More specifically, in LightSecAgg each user protects its local model by generating a single random mask. This mask is then encoded and shared with other users in such a way that the aggregate mask of any sufficiently large set of active users can be reconstructed directly at the server via encoded masks. We show that LightSecAgg achieves the same privacy and dropout-resiliency guarantees as the state-of-the-art protocols, while significantly reducing the overhead for resiliency to dropped users. Furthermore, our system optimization helps to hide the runtime cost of offline processing by parallelizing it with model training. We evaluate LightSecAgg via extensive experiments for training diverse models on various datasets in a realistic FL system, and demonstrate that LightSecAgg significantly reduces the total training time, achieving a performance gain of up to $12.7\times$ over baselines.
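The idea of reconstructing the aggregate mask of the active users in one shot can be illustrated with a toy example. The sketch below is not the LightSecAgg encoding: it substitutes plain Shamir secret sharing over a prime field (which is additively homomorphic) for the paper's mask encoding, uses scalar "models", and assumes a field modulus `P`, a privacy threshold `t`, and a dropout pattern chosen purely for illustration. All function names are hypothetical.

```python
import random

P = 2**31 - 1  # prime field modulus (assumed for this sketch)

def share(secret, n_users, t, rng):
    """Shamir-share `secret`: evaluate a random degree-t polynomial at x = 1..n_users."""
    coeffs = [secret] + [rng.randrange(P) for _ in range(t)]
    return {
        j: sum(c * pow(j, k, P) for k, c in enumerate(coeffs)) % P
        for j in range(1, n_users + 1)
    }

def interpolate_at_zero(points):
    """Lagrange interpolation at x = 0 over the field; `points` maps x -> y."""
    total = 0
    for xj, yj in points.items():
        num, den = 1, 1
        for xm in points:
            if xm != xj:
                num = num * (-xm) % P
                den = den * (xj - xm) % P
        total = (total + yj * num * pow(den, P - 2, P)) % P  # Fermat inverse
    return total

rng = random.Random(0)
n_users, t = 5, 2
models = {i: rng.randrange(1000) for i in range(1, n_users + 1)}
masks = {i: rng.randrange(P) for i in range(1, n_users + 1)}

# Offline phase: every user shares its mask; shares[i][j] is held by user j.
shares = {i: share(masks[i], n_users, t, rng) for i in range(1, n_users + 1)}

# User 3 drops out before uploading its masked model.
active = [1, 2, 4, 5]
masked_sum = sum((models[i] + masks[i]) % P for i in active) % P

# Each responding user sends ONE value: the sum of the shares it holds
# for the active users. Any t + 1 such values suffice.
responders = active[: t + 1]
aggregated_shares = {j: sum(shares[i][j] for i in active) % P for j in responders}

# One-shot reconstruction of the aggregate mask, then unmasking.
aggregate_mask = interpolate_at_zero(aggregated_shares)
aggregate_model = (masked_sum - aggregate_mask) % P
```

The server never reconstructs an individual mask or a dropped user's seed; it only recovers the sum of the active users' masks, which is the shift in focus the abstract describes.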

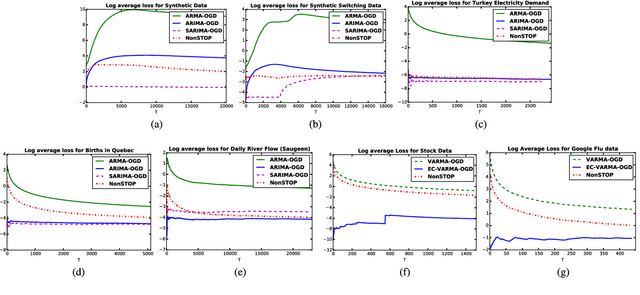

NonSTOP: A NonSTationary Online Prediction Method for Time Series

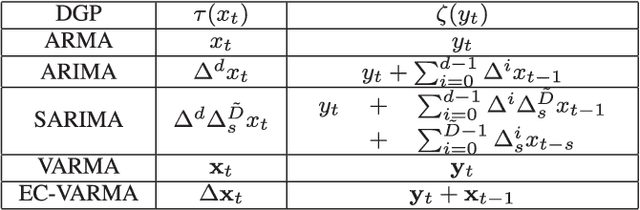

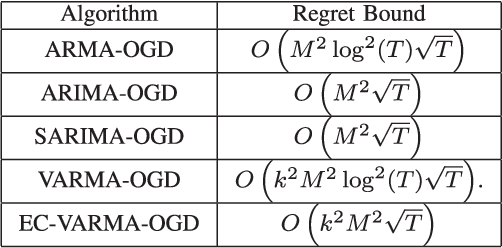

Aug 26, 2018

We present online prediction methods for time series that let us explicitly handle nonstationary artifacts (e.g. trend and seasonality) present in most real-world time series. Specifically, we show that applying appropriate transformations to such time series before prediction can lead to improved theoretical and empirical prediction performance. Moreover, since these transformations are usually unknown, we employ the learning with experts setting to develop a fully online method (NonSTOP: NonSTationary Online Prediction) for predicting nonstationary time series. This framework allows for seasonality and/or other trends in univariate time series and cointegration in multivariate time series. Our algorithms and regret analysis subsume recent related work while significantly expanding the applicability of such methods. For all the methods, we provide sub-linear regret bounds using relaxed assumptions. The theoretical guarantees do not fully capture the benefits of the transformations, so we provide a data-dependent analysis of the follow-the-leader algorithm that provides insight into the success of using such transformations. We support all of our results with experiments on simulated and real data.
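A toy example can make the role of the transformation concrete. The sketch below is not the NonSTOP algorithm: it assumes each "expert" is simply a differencing order `d` that predicts the next value by assuming the `d`-th difference is zero, and it uses follow-the-leader to pick among experts online. The series, the set of orders, and the squared loss are all assumptions made for illustration.

```python
import math

def predict_with_differencing(history, d):
    """Predict the next value assuming the d-th difference of the series is zero.

    d = 0 predicts 0, d = 1 repeats the last value, d = 2 extrapolates a line.
    """
    return -sum(
        (-1) ** k * math.comb(d, k) * history[-k] for k in range(1, d + 1)
    )

series = [3.0 * t + 5.0 for t in range(30)]  # pure linear trend
orders = [0, 1, 2]
cumulative_loss = {d: 0.0 for d in orders}
ftl_loss = 0.0

for t in range(2, len(series)):
    history = series[:t]
    predictions = {d: predict_with_differencing(history, d) for d in orders}

    # Follow-the-leader: play the expert with the smallest loss so far.
    leader = min(orders, key=lambda d: cumulative_loss[d])
    ftl_loss += (predictions[leader] - series[t]) ** 2

    # Reveal the true value and update every expert's loss.
    for d in orders:
        cumulative_loss[d] += (predictions[d] - series[t]) ** 2

best_order = min(orders, key=lambda d: cumulative_loss[d])
```

On this trending series the second-order differencing expert predicts exactly, while the untransformed expert accumulates a large loss, which is the intuition behind transforming before predicting.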

SurpriseNet: Melody Harmonization Conditioning on User-controlled Surprise Contours

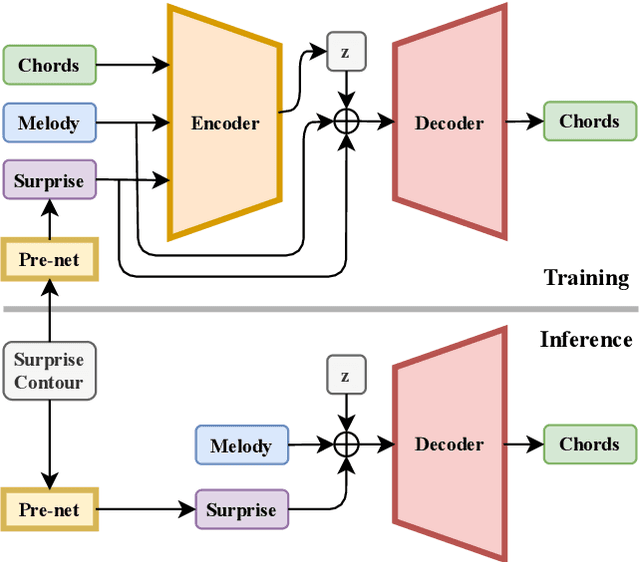

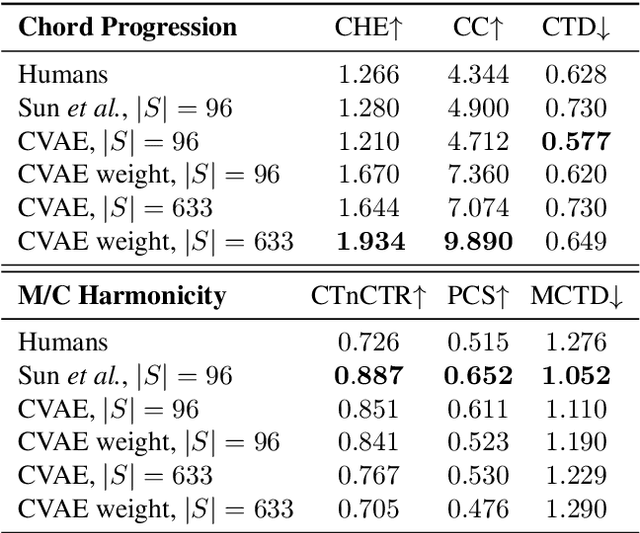

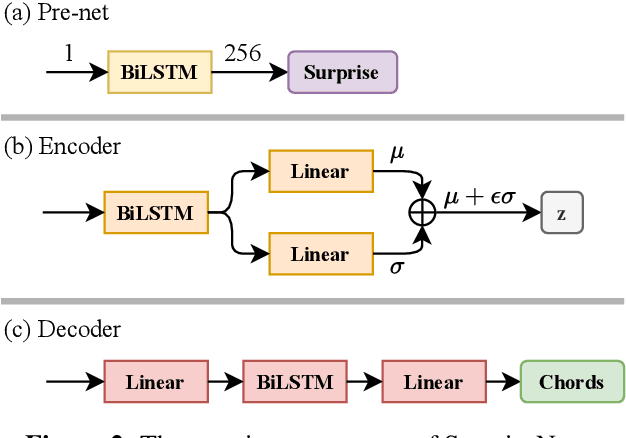

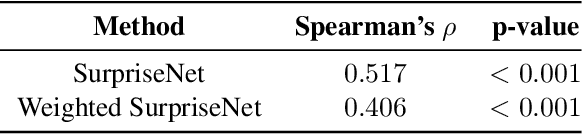

Aug 24, 2021

The surprisingness of a song is an essential and seemingly subjective factor in determining whether the listener likes it. With the help of information theory, it can be described in terms of the transition probability of a music sequence modeled as a Markov chain. In this study, we introduce the concept of deriving entropy variations over time, so that the surprise contour of each chord sequence can be extracted. Based on this, we propose a user-controllable framework that uses a conditional variational autoencoder (CVAE) to harmonize the melody based on the given chord surprise indication. Through explicit conditions, the model can randomly generate varied and harmonic chord progressions for a melody, and the Spearman's correlation and p-value significance show that the resulting chord progressions match the given surprise contour quite well. The vanilla CVAE model was evaluated in a basic melody harmonization task (no surprise control) in terms of six objective metrics. The results of experiments on the Hooktheory Lead Sheet Dataset show that our model achieves performance comparable to the state-of-the-art melody harmonization model.
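As a rough illustration of a surprise contour, the sketch below estimates first-order Markov transition probabilities between chords and reports the surprisal, $-\log_2 P(\text{next} \mid \text{previous})$, of each transition. The exact definition used in the paper is not given in the abstract, so the surprisal formula, the add-one smoothing (`alpha`), and the tiny chord corpus are assumptions, and the function names are hypothetical.

```python
import math
from collections import Counter, defaultdict

def fit_transitions(sequences):
    """Count chord-to-chord transitions over a corpus of chord sequences."""
    counts = defaultdict(Counter)
    for seq in sequences:
        for prev, nxt in zip(seq, seq[1:]):
            counts[prev][nxt] += 1
    return counts

def surprise_contour(seq, counts, vocab_size, alpha=1.0):
    """Surprisal in bits of each transition in `seq`, with additive smoothing."""
    contour = []
    for prev, nxt in zip(seq, seq[1:]):
        total = sum(counts[prev].values())
        prob = (counts[prev][nxt] + alpha) / (total + alpha * vocab_size)
        contour.append(-math.log2(prob))
    return contour

corpus = [
    ["C", "G", "Am", "F"],
    ["C", "G", "Am", "F"],
    ["C", "F", "G", "C"],
]
vocab = {chord for seq in corpus for chord in seq}
counts = fit_transitions(corpus)

common = surprise_contour(["C", "G", "Am", "F"], counts, len(vocab))
unusual = surprise_contour(["C", "Am", "G", "F"], counts, len(vocab))
```

Transitions that are frequent in the corpus receive low surprisal and rare ones receive high surprisal, so the list returned for a progression traces how its surprise rises and falls over time.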

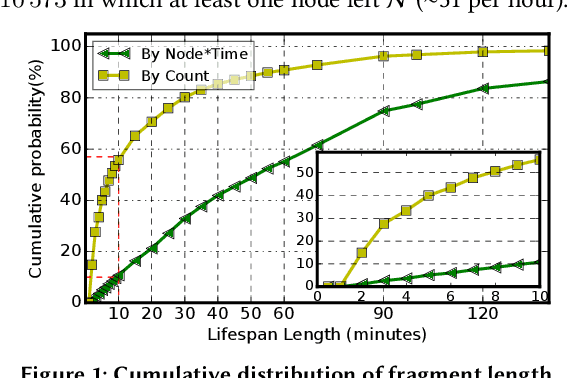

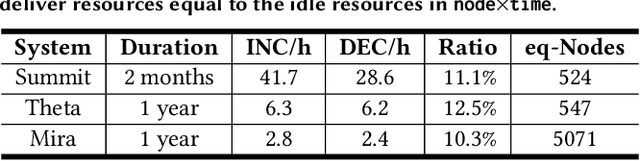

BFTrainer: Low-Cost Training of Neural Networks on Unfillable Supercomputer Nodes

Jun 22, 2021

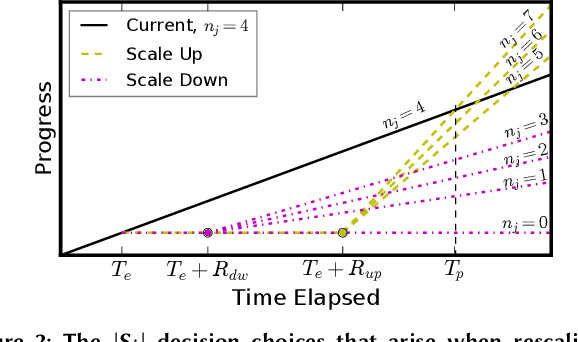

Supercomputer FCFS-based scheduling policies result in many transient idle nodes, a phenomenon that is only partially alleviated by backfill scheduling methods that promote small jobs to run before large jobs. Here we describe how to realize a novel use for these otherwise wasted resources, namely, deep neural network (DNN) training. This important workload is easily organized as many small fragments that can be configured dynamically to fit essentially any node*time hole in a supercomputer's schedule. We describe how the task of rescaling suitable DNN training tasks to fit dynamically changing holes can be formulated as a deterministic mixed integer linear programming (MILP)-based resource allocation algorithm, and show that this MILP problem can be solved efficiently at run time. We show further how this MILP problem can be adapted to optimize for administrator- or user-defined metrics. We validate our method with supercomputer scheduler logs and different DNN training scenarios, and demonstrate efficiencies of up to 93% compared with running the same training tasks on dedicated nodes. Our method thus enables substantial supercomputer resources to be allocated to DNN training with no impact on other applications.

Learning Representations from Imperfect Time Series Data via Tensor Rank Regularization

Jul 01, 2019

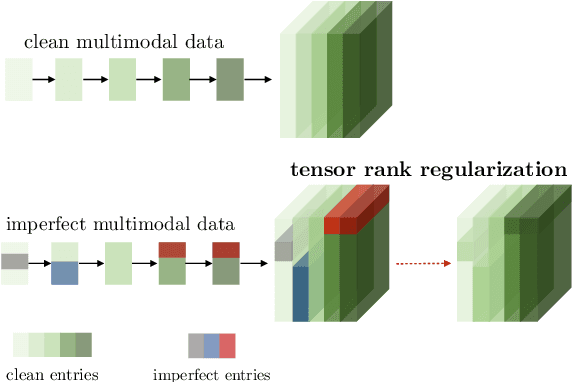

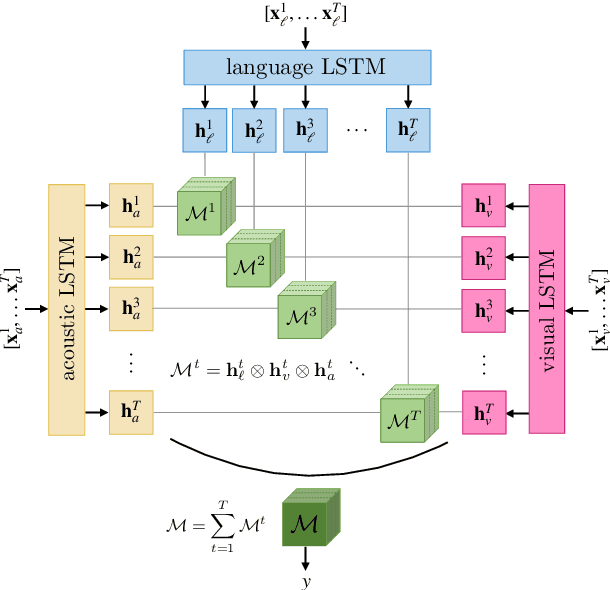

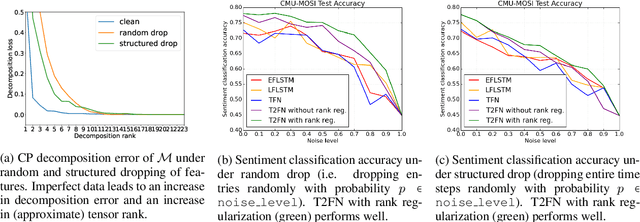

There has been an increased interest in multimodal language processing, including multimodal dialog, question answering, sentiment analysis, and speech recognition. However, naturally occurring multimodal data is often imperfect as a result of imperfect modalities, missing entries, or noise corruption. To address these concerns, we present a regularization method based on tensor rank minimization. Our method is based on the observation that high-dimensional multimodal time series data often exhibit correlations across time and modalities, which lead to low-rank tensor representations. However, the presence of noise or incomplete values breaks these correlations and results in tensor representations of higher rank. We design a model to learn such tensor representations and effectively regularize their rank. Experiments on multimodal language data show that our model achieves good results across various levels of imperfection.

Precision and accuracy of acoustic gunshot location in an urban environment

Aug 16, 2021

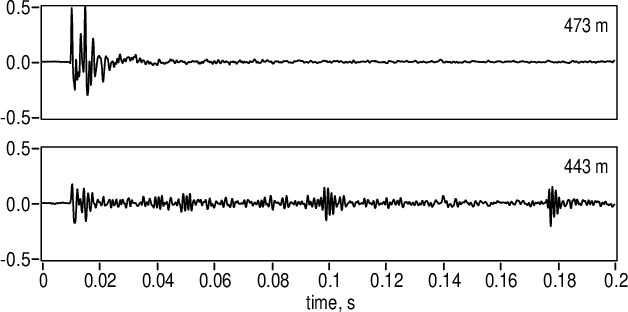

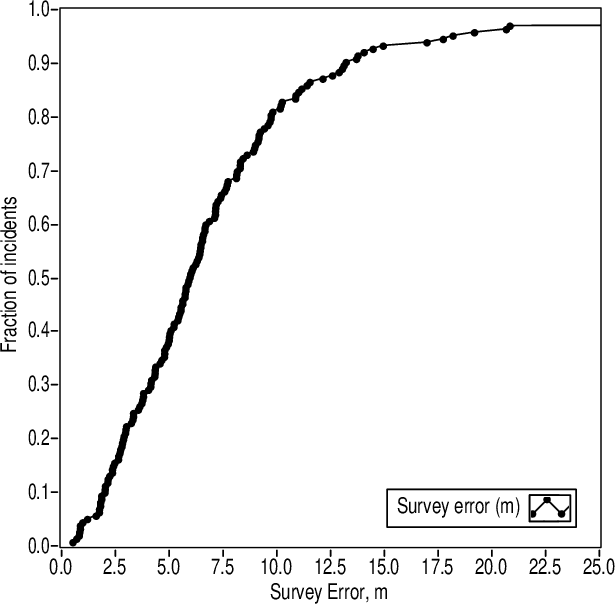

The muzzle blast caused by the discharge of a firearm generates a loud, impulsive sound that propagates away from the shooter in all directions. The location of the source can be computed from time-of-arrival measurements of the muzzle blast on multiple acoustic sensors at known locations, a technique known as multilateration. The multilateration problem is considerably simplified by assuming straight-line propagation in a homogeneous medium, a model for which there are multiple published solutions. Live-fire tests of the ShotSpotter gunshot location system in Pittsburgh, PA were analyzed off-line under several algorithms and geometric constraints to evaluate the accuracy of acoustic multilateration in a forensic context. Best results were obtained using the algorithm due to Mathias, Leonardi and Galati under a two-dimensional geometric constraint. Multilateration on random subsets of the participating sensor array shows that 96% of shots can be located to an accuracy of 15 m or better when six or more sensors participate in the solution.
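Under the straight-line, homogeneous-medium model, two-dimensional multilateration reduces to a small linear least-squares problem. The sketch below is a generic linearized solver, not the algorithm evaluated in the paper: it subtracts the range equation of a reference sensor from the others so that the unknowns (source position and emission time) appear linearly. The speed of sound, the sensor layout, and the source location are assumed values, and the function names are hypothetical.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed constant for this sketch

def solve_linear(a, b):
    """Solve a small square system by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for k in range(col, n + 1):
                m[r][k] -= factor * m[col][k]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][k] * x[k] for k in range(r + 1, n))) / m[r][r]
    return x

def multilaterate(sensors, arrival_times, c=SPEED_OF_SOUND):
    """Estimate (x, y, emission_time) from arrival times at four or more sensors."""
    (x0, y0), t_ref = sensors[0], arrival_times[0]
    rows, rhs = [], []
    for (xi, yi), ti in zip(sensors[1:], arrival_times[1:]):
        # Difference of squared range equations; unknowns are x, y, u = c * t0.
        rows.append([-2 * (xi - x0), -2 * (yi - y0), 2 * c * (ti - t_ref)])
        rhs.append(
            c * c * (ti * ti - t_ref * t_ref) - (xi * xi + yi * yi) + (x0 * x0 + y0 * y0)
        )
    # Least squares via the normal equations (A^T A) p = A^T b.
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    atb = [sum(r[i] * v for r, v in zip(rows, rhs)) for i in range(3)]
    x, y, u = solve_linear(ata, atb)
    return x, y, u / c

# Synthetic check: six sensors, a known source, noise-free arrival times.
sensors = [(0, 0), (400, 0), (0, 300), (400, 300), (200, 500), (-100, 150)]
true_x, true_y, true_t0 = 120.0, 80.0, 2.0
arrival_times = [
    true_t0 + math.hypot(sx - true_x, sy - true_y) / SPEED_OF_SOUND
    for sx, sy in sensors
]
est_x, est_y, est_t0 = multilaterate(sensors, arrival_times)
```

With noise-free times the estimate matches the true source to numerical precision; with real measurements the residual of the least-squares fit gives a first indication of timing or propagation errors.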

Automatic Head Overcoat Thickness Measure with NASNet-Large-Decoder Net

Jun 22, 2021

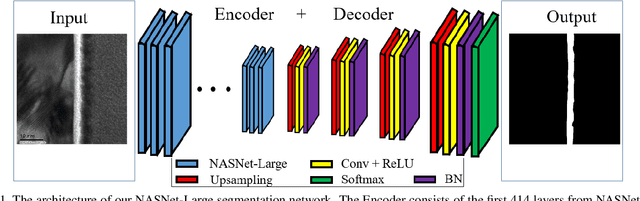

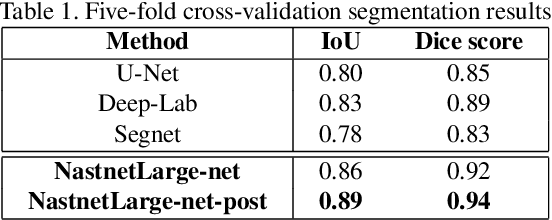

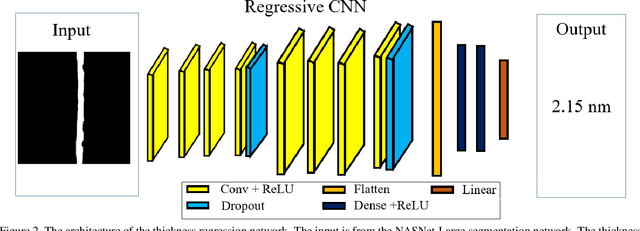

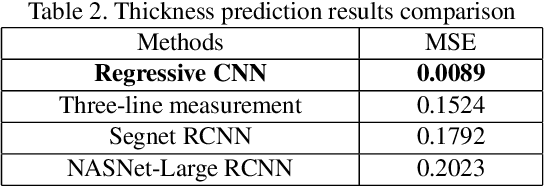

Transmission electron microscopy (TEM) is one of the primary tools for characterizing the microstructure of materials and measuring film thickness. However, manual determination of film thickness from TEM images is time-consuming as well as subjective, especially when the films in question are very thin and the need for measurement precision is very high. Such is the case for head overcoat (HOC) thickness measurements in the magnetic hard disk drive industry. It is therefore necessary to develop software to automatically measure HOC thickness. In this paper, for the first time, we propose an HOC layer segmentation method that uses NASNet-Large as an encoder followed by a decoder, one of the most commonly used architectures in deep learning for image segmentation. To further improve segmentation results, we are the first to propose a post-processing layer to remove irrelevant portions in the segmentation result. To measure the thickness of the segmented HOC layer, we propose a regressive convolutional neural network (RCNN) model as well as orthogonal thickness calculation methods. Experimental results demonstrate a higher Dice score for our model, which has lower mean squared error and outperforms current state-of-the-art manual measurement.
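For reference, the Dice score used to compare a predicted segmentation mask with a ground-truth mask is $2|A \cap B| / (|A| + |B|)$. The sketch below computes it for binary masks stored as nested lists; the mask values are made up for illustration, and the convention of returning 1.0 for two empty masks is an assumption.

```python
def dice_score(pred, target):
    """Dice coefficient between two binary masks given as lists of rows of 0/1."""
    flat_pred = [p for row in pred for p in row]
    flat_target = [t for row in target for t in row]
    intersection = sum(1 for p, t in zip(flat_pred, flat_target) if p and t)
    size = sum(flat_pred) + sum(flat_target)
    if size == 0:
        return 1.0  # both masks empty: treated as a perfect match (assumed convention)
    return 2.0 * intersection / size

pred_mask = [[0, 1, 1], [0, 1, 0]]
true_mask = [[0, 1, 0], [0, 1, 1]]
score = dice_score(pred_mask, true_mask)
```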
