"Time": models, code, and papers

Sequence Adaptation via Reinforcement Learning in Recommender Systems

Jul 31, 2021

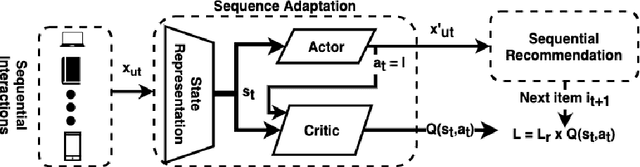

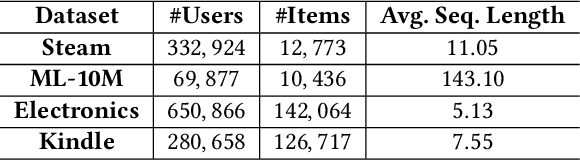

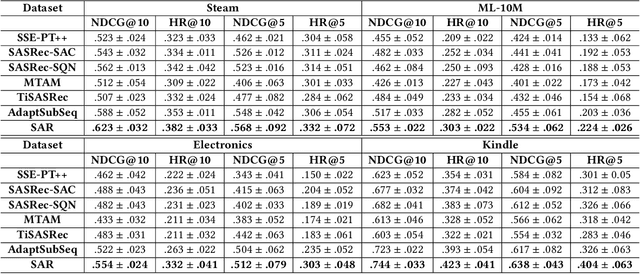

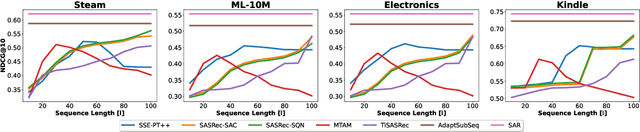

Because users have different sequential patterns, the main drawback of state-of-the-art recommendation strategies is that they require a fixed sequence length of user-item interactions as input to train the models. This can limit recommendation accuracy, as in practice users follow different trends in sequential recommendations. Hence, baseline strategies might ignore important sequential interactions or add noise to the models with redundant interactions, depending on the variety of users' sequential behaviours. To overcome this problem, in this study we propose the SAR model, which not only learns the sequential patterns but also adjusts the sequence length of user-item interactions in a personalized manner. We first design an actor-critic framework, where the RL agent tries to compute the optimal sequence length as an action, given the user's state representation at a certain time step. In addition, we optimize a joint loss function to align the accuracy of the sequential recommendations with the expected cumulative rewards of the critic network, while at the same time adapting the sequence length with the actor network in a personalized manner. Our experimental evaluation on four real-world datasets demonstrates the superiority of our proposed model over several baseline approaches. Finally, we make our implementation publicly available at https://github.com/stefanosantaris/sar.

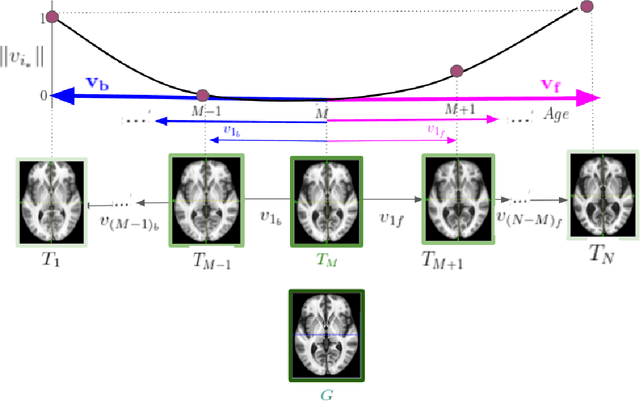

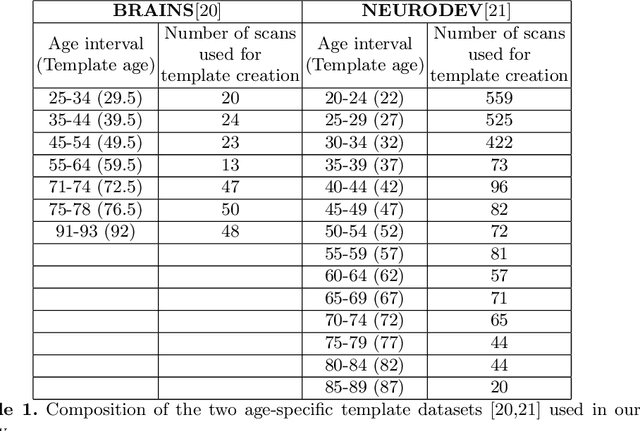

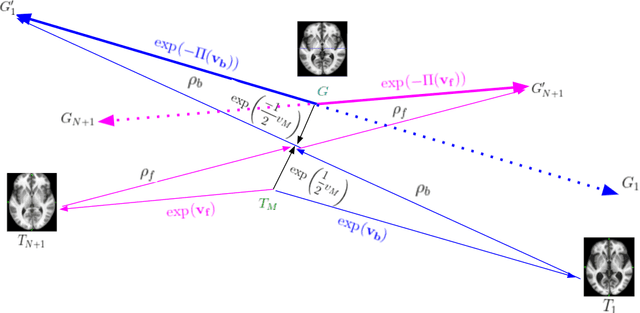

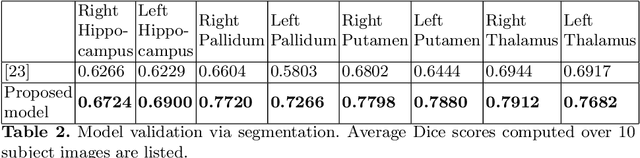

A Diffeomorphic Aging Model for Adult Human Brain from Cross-Sectional Data

Jun 28, 2021

Normative aging trends of the brain can serve as an important reference in the assessment of neurological structural disorders. Such models are typically developed from longitudinal brain image data, that is, follow-up data of the same subject at different time points. In practice, obtaining such longitudinal data is difficult. We propose a method to develop an aging model for a given population, in the absence of longitudinal data, by using images from different subjects at different time points, the so-called cross-sectional data. We define an aging model as a diffeomorphic deformation on a structural template derived from the data and propose a method that develops a topology-preserving aging model close to natural aging. The proposed model is successfully validated on two public cross-sectional datasets which provide templates constructed from different sets of subjects at different age points.

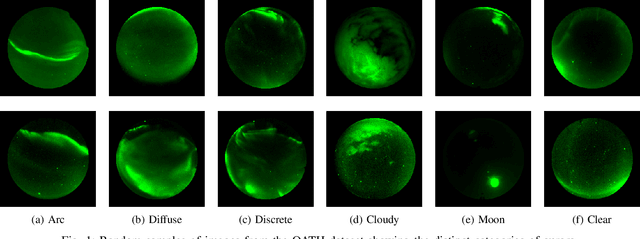

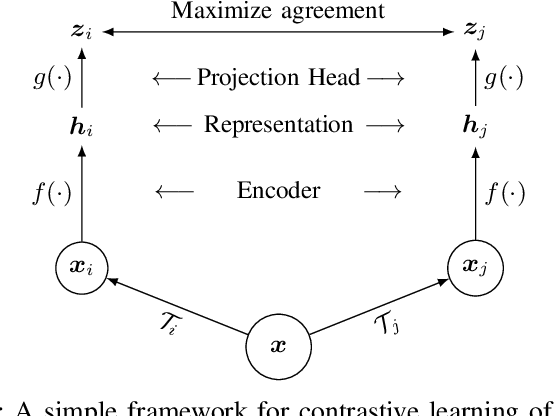

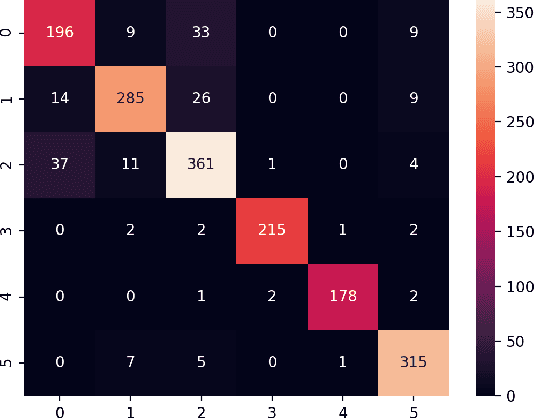

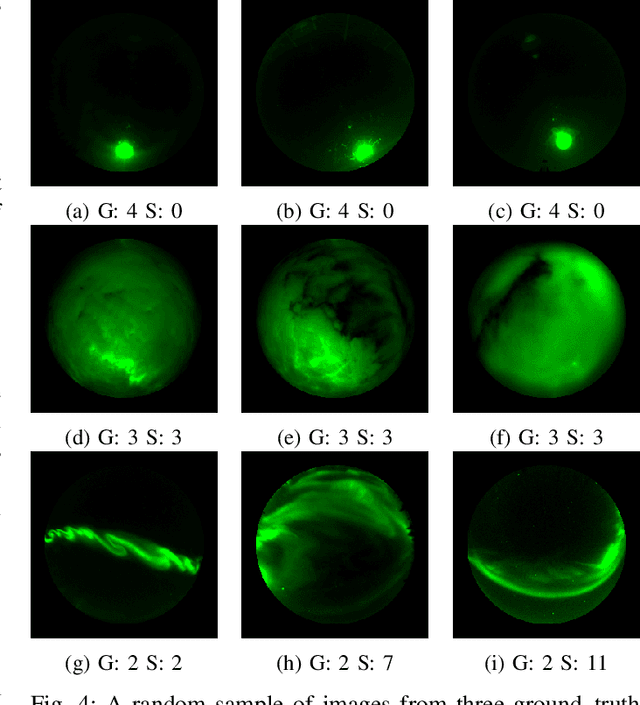

A Contrastive Learning Approach to Auroral Identification and Classification

Sep 29, 2021

Unsupervised learning algorithms are beginning to achieve accuracies comparable to their supervised counterparts on benchmark computer vision tasks, but their utility for practical applications has not yet been demonstrated. In this work, we present a novel application of unsupervised learning to the task of auroral image classification. Specifically, we modify and adapt the Simple framework for Contrastive Learning of Representations (SimCLR) algorithm to learn representations of auroral images in a recently released auroral image dataset constructed using image data from Time History of Events and Macroscale Interactions during Substorms (THEMIS) all-sky imagers. We demonstrate that (a) simple linear classifiers fit to the learned representations of the images achieve state-of-the-art classification performance, improving the classification accuracy by almost 10 percentage points over the current benchmark; and (b) the learned representations naturally group into more clusters than there are manually assigned categories, suggesting that existing categorizations are overly coarse and may obscure important connections between auroral types, near-earth solar wind conditions, and geomagnetic disturbances at the earth's surface. Moreover, our model is much lighter than the previous benchmark on this dataset, requiring fewer than 25% of the parameters. Our approach exceeds an established threshold for operational purposes, demonstrating readiness for deployment and utilization.
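For readers unfamiliar with SimCLR, the following is a minimal sketch of the standard contrastive objective (often called NT-Xent) that SimCLR is built around. It is not the modified variant described in the abstract, whose details are not given here. The function names, the toy two-dimensional embeddings, and the temperature value are assumptions chosen for illustration.

```python
import math
from typing import List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(y * y for y in v))
    return dot / (norm_u * norm_v)


def nt_xent(z_a: List[Sequence[float]], z_b: List[Sequence[float]],
            temperature: float = 0.5) -> float:
    """Standard NT-Xent loss; z_a[i] and z_b[i] embed two views of image i."""
    z = list(z_a) + list(z_b)
    n = len(z_a)
    total = 0.0
    for i in range(2 * n):
        pos = (i + n) % (2 * n)  # index of the other view of the same image
        # Similarities to every other embedding (the anchor itself is excluded).
        logits = [cosine(z[i], z[k]) / temperature
                  for k in range(2 * n) if k != i]
        pos_logit = cosine(z[i], z[pos]) / temperature
        # -log(exp(pos) / sum(exp(all others))) for this anchor
        total += math.log(sum(math.exp(l) for l in logits)) - pos_logit
    return total / (2 * n)


# Hypothetical toy embeddings: matched views agree, mismatched views do not.
views_a = [[1.0, 0.0], [0.0, 1.0]]
matched = [[1.0, 0.0], [0.0, 1.0]]
mismatched = [[0.0, 1.0], [1.0, 0.0]]
```

With these toy inputs, `nt_xent(views_a, matched)` is smaller than `nt_xent(views_a, mismatched)`, which is the behaviour the loss is meant to reward: embeddings of two views of the same image are pulled together relative to all other embeddings in the batch.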

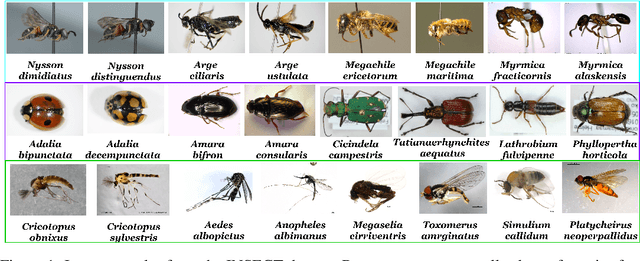

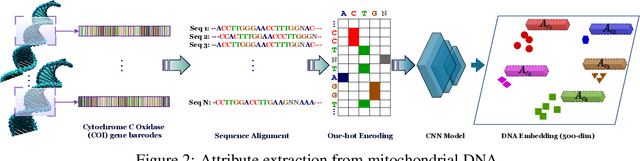

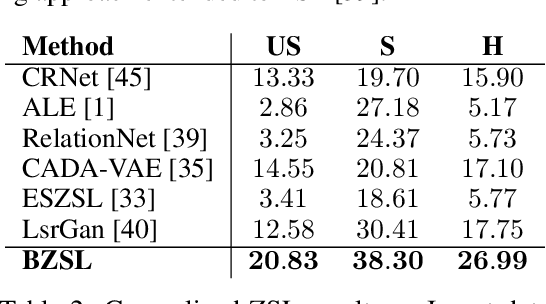

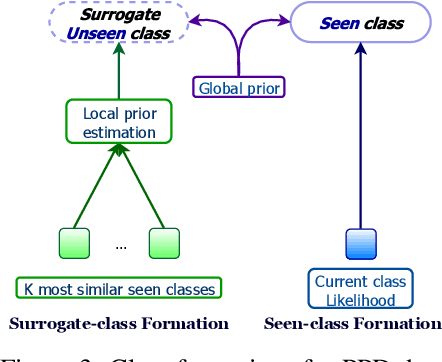

Fine-Grained Zero-Shot Learning with DNA as Side Information

Sep 29, 2021

The fine-grained zero-shot learning task requires some form of side information to transfer discriminative information from seen to unseen classes. As manually annotated visual attributes are extremely costly and often impractical to obtain for a large number of classes, in this study we use DNA as side information for the first time for fine-grained zero-shot classification of species. Mitochondrial DNA plays an important role as a genetic marker in evolutionary biology and has been used to achieve near-perfect accuracy in the species classification of living organisms. We implement a simple hierarchical Bayesian model that uses DNA information to establish the hierarchy in the image space and employs local priors to define surrogate classes for unseen ones. On the benchmark CUB dataset, we show that DNA can be as promising as word vectors as side information while being, in general, more accessible. This is especially important as obtaining robust word representations for fine-grained species names is not a practicable goal when information about these species in free-form text is limited. On a newly compiled fine-grained insect dataset that uses DNA information from over a thousand species, we show that the Bayesian approach outperforms the state of the art by a wide margin.

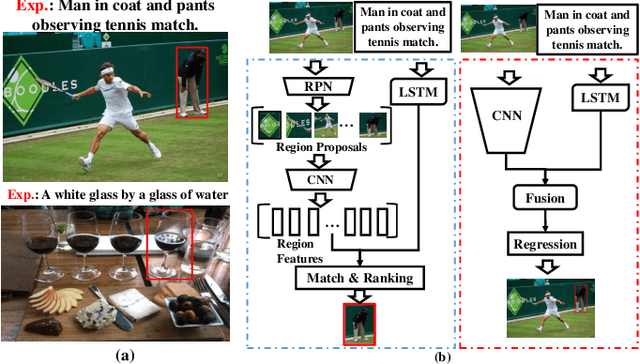

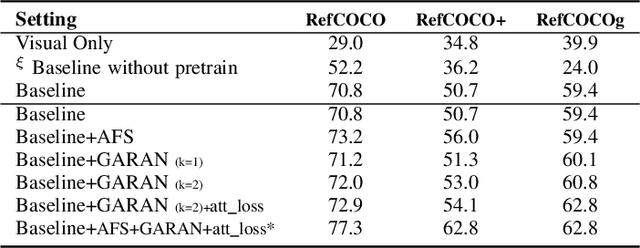

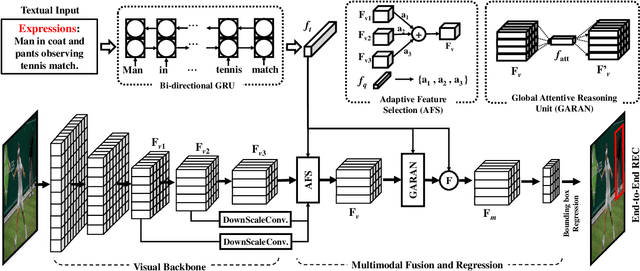

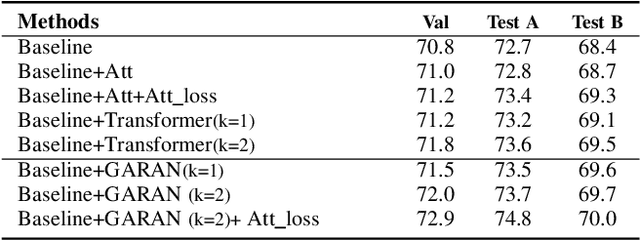

A Real-time Global Inference Network for One-stage Referring Expression Comprehension

Dec 07, 2019

Referring Expression Comprehension (REC) is an emerging research topic in computer vision, which refers to detecting the target region in an image given a text description. Most existing REC methods follow a multi-stage pipeline, which is computationally expensive and greatly limits the application of REC. In this paper, we propose a one-stage model towards real-time REC, termed Real-time Global Inference Network (RealGIN). RealGIN addresses the diversity and complexity issues in REC with two innovative designs: the Adaptive Feature Selection (AFS) and the Global Attentive ReAsoNing unit (GARAN). AFS adaptively fuses features at different semantic levels to handle the varying content of expressions. GARAN uses the textual feature as a pivot to collect expression-related visual information from all regions, and then selectively diffuses such information back to all regions, which provides sufficient context for modeling the complex linguistic conditions in expressions. On five benchmark datasets, i.e., RefCOCO, RefCOCO+, RefCOCOg, ReferIt and Flickr30k, the proposed RealGIN outperforms most prior works and achieves very competitive performance against the most advanced method, i.e., MAttNet. Most importantly, under the same hardware, RealGIN can boost the processing speed by about 10 times over the existing methods.

Guiding Global Placement With Reinforcement Learning

Sep 06, 2021

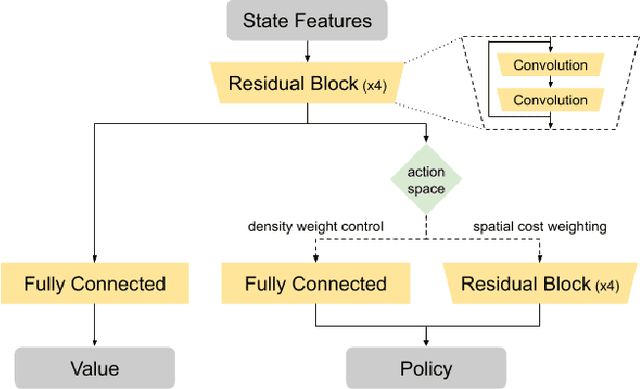

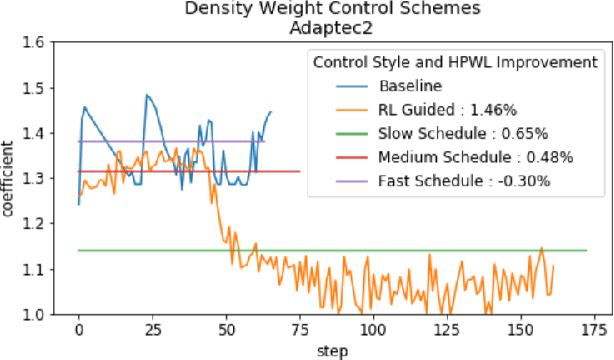

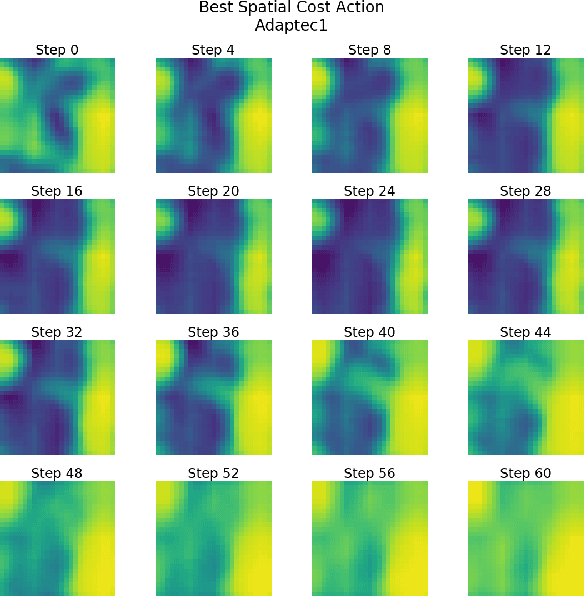

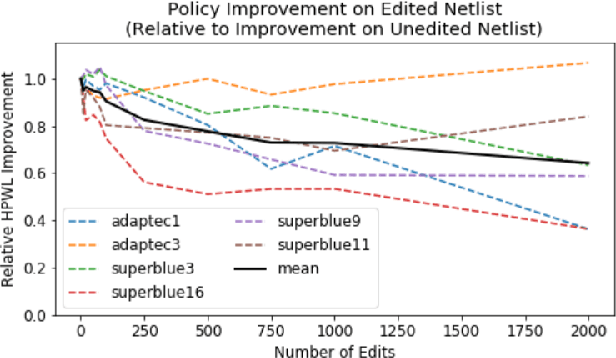

Recent advances in GPU-accelerated global and detail placement have reduced the time to solution by an order of magnitude. This advancement allows us to leverage data-driven optimization (such as Reinforcement Learning) in an effort to improve the final quality of placement results. In this work we augment state-of-the-art, force-based global placement solvers with a reinforcement learning agent trained to improve the final detail-placed Half Perimeter Wire Length (HPWL). We propose novel control schemes with either global or localized control of the placement process. We then train reinforcement learning agents to use these controls to guide placement to improved solutions. In both cases, the augmented optimizer finds improved placement solutions. Our trained agents achieve an average 1% improvement in final detail-placed HPWL across a range of academic benchmarks and more than 1% in global-placed HPWL on real industry designs.
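As a reminder of what the HPWL metric measures, here is a minimal sketch: for each net, take the bounding box of its connected cells and add the box's width and height; the total is summed over all nets. The data layout (a list of nets holding cell names, and a dictionary of cell positions) and all names are assumptions made for illustration, not the representation used by the placement solvers in the abstract.

```python
from typing import Dict, Iterable, List, Tuple

Point = Tuple[float, float]


def hpwl(nets: Iterable[List[str]], positions: Dict[str, Point]) -> float:
    """Sum over nets of the half perimeter of each net's bounding box."""
    total = 0.0
    for net in nets:
        if len(net) < 2:
            continue  # a net with fewer than two pins contributes no wire length
        xs = [positions[cell][0] for cell in net]
        ys = [positions[cell][1] for cell in net]
        total += (max(xs) - min(xs)) + (max(ys) - min(ys))
    return total


# Hypothetical three-cell design with two nets.
nets = [["a", "b"], ["a", "b", "c"]]
before = {"a": (0.0, 0.0), "b": (3.0, 4.0), "c": (1.0, 1.0)}
after = {"a": (0.0, 0.0), "b": (2.0, 2.0), "c": (1.0, 1.0)}
```

Moving cell `b` closer to the others shrinks both bounding boxes, so `hpwl(nets, after)` is lower than `hpwl(nets, before)`. A placement agent like the one described would be rewarded for changes of this kind.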

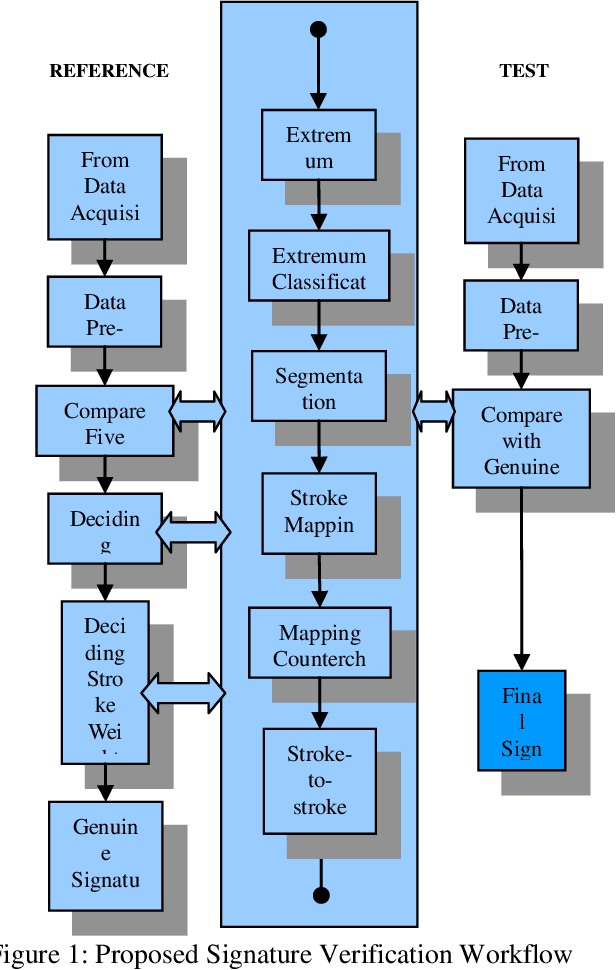

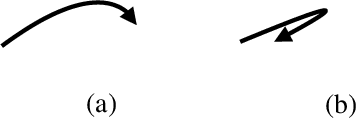

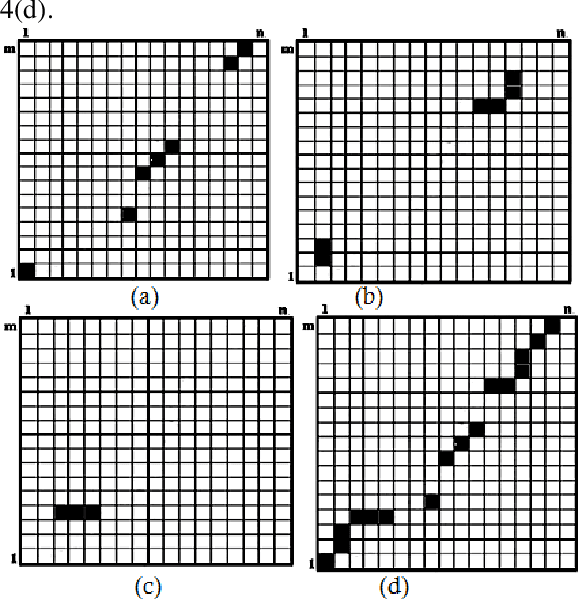

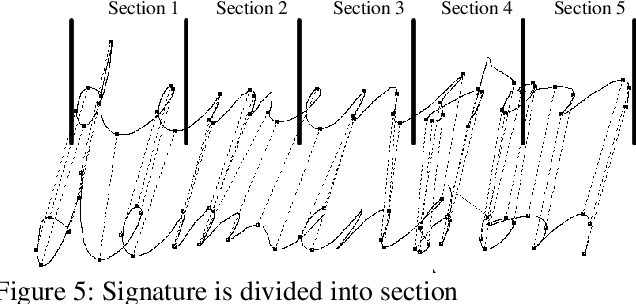

Improved Dynamic Time Warping (DTW) Approach for Online Signature Verification

Mar 26, 2019

Online signature verification is the process of verifying time series signature data, which is generally obtained from a tablet-based device. Unlike offline signature images, online signature data consists of points that are arranged in a time sequence. The aim of this research is to develop an improved approach to map the strokes in both test and reference signatures. Current methods make use of the Dynamic Time Warping (DTW) algorithm and its variants to segment the signatures before comparing each of their data dimensions. This paper presents a modified DTW algorithm with the proposed Lost Box Recovery Algorithm, which aims to improve the mapping performance for online signature verification.
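For context, the following is a minimal sketch of the classic DTW recurrence on one-dimensional sequences, using the absolute difference as the local cost. It is the textbook algorithm only; it does not include the paper's modifications or the Lost Box Recovery Algorithm, which the abstract does not specify. The function name and sample values are illustrative assumptions.

```python
import math
from typing import Sequence


def dtw_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Classic DTW cost between two 1-D sequences (absolute-difference cost)."""
    n, m = len(a), len(b)
    # cost[i][j] holds the minimal cumulative cost of aligning a[:i] with b[:j].
    cost = [[math.inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            local = abs(a[i - 1] - b[j - 1])
            # Extend the cheapest of: step in a only, step in b only, step in both.
            cost[i][j] = local + min(cost[i - 1][j],
                                     cost[i][j - 1],
                                     cost[i - 1][j - 1])
    return cost[n][m]


# Hypothetical pen-coordinate traces: the second repeats one sample in time.
reference = [1.0, 2.0, 3.0]
slower_copy = [1.0, 2.0, 2.0, 3.0]
```

Here `dtw_distance(reference, slower_copy)` is zero, because warping absorbs the repeated sample. This tolerance to differences in writing speed is why DTW is a common starting point for comparing signatures of different lengths.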

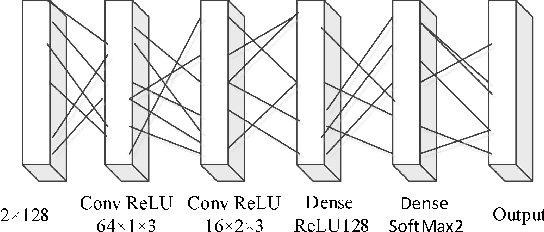

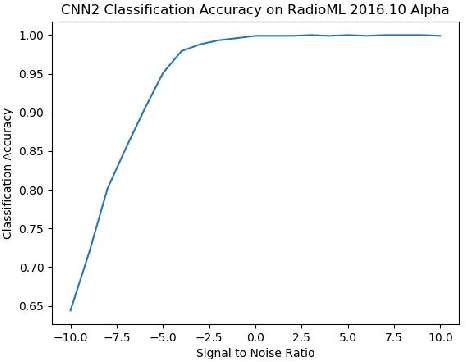

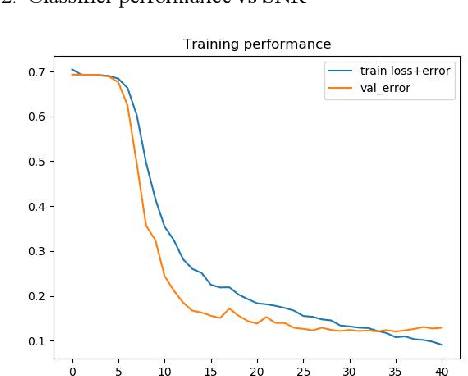

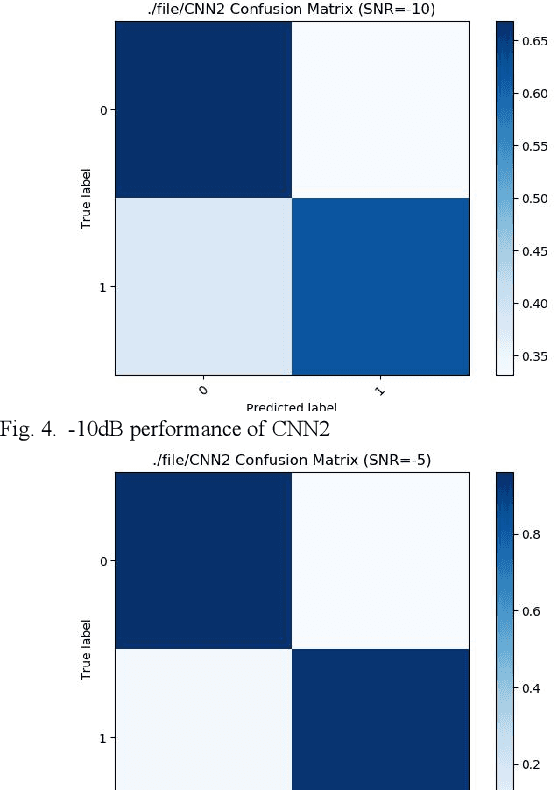

Convolutional Neural Networks for Space-Time Block Coding Recognition

Oct 19, 2019

We build on the latest advances in machine learning with deep neural networks by applying them to the tasks of radio modulation recognition, channel coding recognition, and spectrum monitoring. This paper proposes a novel identification algorithm for Space-Time Block Coding (STBC) signals. The features that distinguish Spatial Multiplexing (SM) from Alamouti (AL) signals are extracted by adapting convolutional neural networks after preprocessing the received sequence. Unlike other algorithms, this method does not require any prior information about the channel coefficients or noise power and, consequently, is well suited to non-cooperative contexts. Results show that the proposed algorithm performs well even at a low signal-to-noise ratio (SNR).
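To make the SM versus AL distinction concrete, here is a minimal sketch of the two transmit structures for two antennas. Alamouti sends each symbol pair over two time slots with a conjugated, sign-flipped repeat, while spatial multiplexing sends fresh symbols in every slot. This only illustrates the signal structure a classifier could exploit; it is not the paper's preprocessing or network, and the function names and sample symbols are assumptions.

```python
from typing import List, Sequence, Tuple

Slot = Tuple[complex, complex]  # (antenna 1 symbol, antenna 2 symbol)


def alamouti_encode(symbols: Sequence[complex]) -> List[Slot]:
    """Alamouti code: (s1, s2) in one slot, then (-conj(s2), conj(s1))."""
    if len(symbols) % 2:
        raise ValueError("Alamouti encoding needs an even number of symbols")
    slots: List[Slot] = []
    for k in range(0, len(symbols), 2):
        s1, s2 = complex(symbols[k]), complex(symbols[k + 1])
        slots.append((s1, s2))
        slots.append((-s2.conjugate(), s1.conjugate()))
    return slots


def spatial_multiplex(symbols: Sequence[complex]) -> List[Slot]:
    """Spatial multiplexing: two new symbols per slot, no redundancy."""
    if len(symbols) % 2:
        raise ValueError("two antennas need an even number of symbols")
    return [(complex(symbols[k]), complex(symbols[k + 1]))
            for k in range(0, len(symbols), 2)]


def antenna_correlation(slots: Sequence[Slot]) -> complex:
    """Inner product between the two antenna streams over the given slots."""
    return sum(a1 * a2.conjugate() for a1, a2 in slots)


# Hypothetical QPSK-like symbols.
pair = [1 + 1j, 1 - 1j]
al_block = alamouti_encode(pair)
sm_block = spatial_multiplex(pair + [1 + 1j, 1 + 1j])
```

Over one Alamouti block the two antenna streams are orthogonal for any symbol pair, so `antenna_correlation(al_block)` is zero, whereas the spatially multiplexed streams carry no such built-in structure.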

Rethinking Trajectory Evaluation for SLAM: a Probabilistic, Continuous-Time Approach

Jun 10, 2019

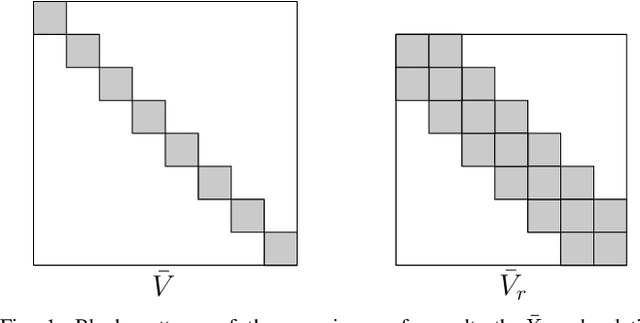

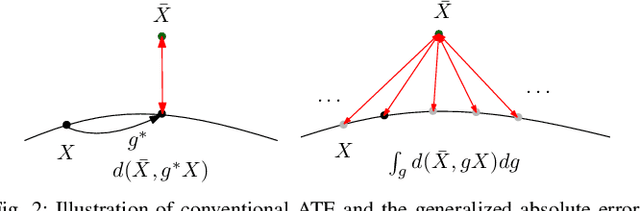

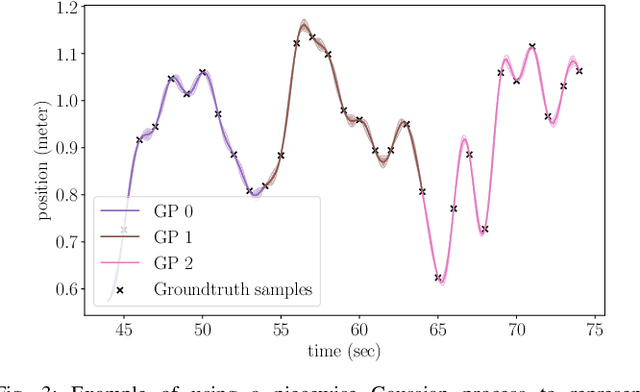

Despite the existence of different error metrics for trajectory evaluation in SLAM, their theoretical justifications and connections are rarely studied, and few methods handle temporal association properly. In this work, we propose to formulate the trajectory evaluation problem in a probabilistic, continuous-time framework. By modeling the groundtruth as random variables, the concepts of absolute and relative error are generalized to likelihoods. Moreover, the groundtruth is represented as a piecewise Gaussian Process in continuous time. Within this framework, we are able to establish theoretical connections between relative and absolute error metrics and handle temporal association in a principled manner.

Data Assimilation Predictive GAN (DA-PredGAN): applied to determine the spread of COVID-19

May 17, 2021

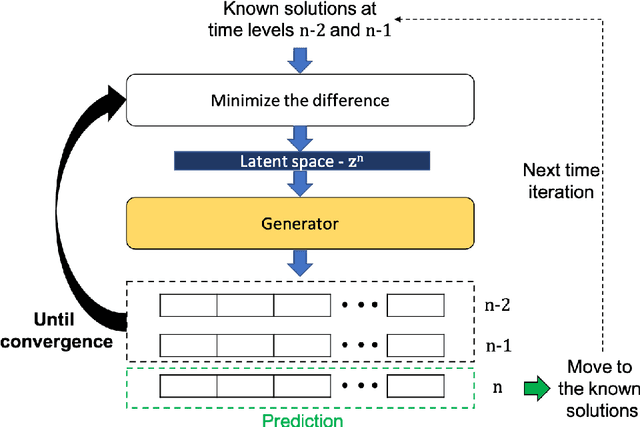

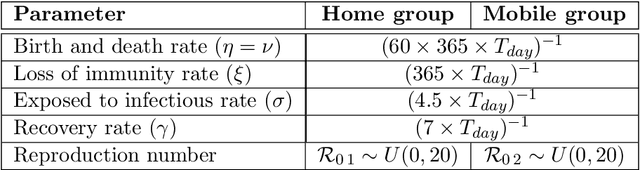

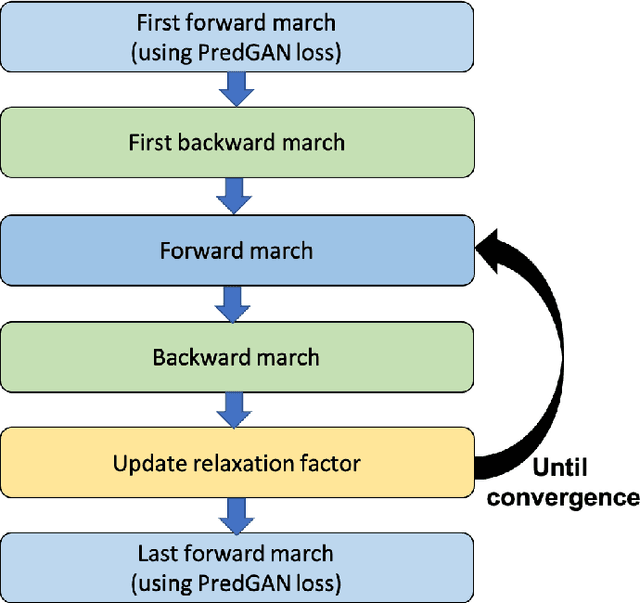

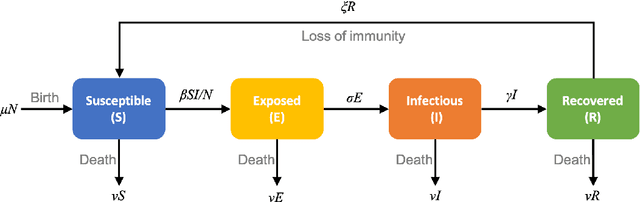

We propose the novel use of a generative adversarial network (GAN) (i) to make predictions in time (PredGAN) and (ii) to assimilate measurements (DA-PredGAN). In the latter case, we take advantage of the natural adjoint-like properties of generative models and the ability to simulate forwards and backwards in time. GANs have received much attention recently, after achieving excellent results for their generation of realistic-looking images. We wish to explore how this property translates to new applications in computational modelling and to exploit the adjoint-like properties for efficient data assimilation. To predict the spread of COVID-19 in an idealised town, we apply these methods to a compartmental model in epidemiology that is able to model space and time variations. To do this, the GAN is set within a reduced-order model (ROM), which uses a low-dimensional space for the spatial distribution of the simulation states. Then the GAN learns the evolution of the low-dimensional states over time. The results show that the proposed methods can accurately predict the evolution of the high-fidelity numerical simulation, and can efficiently assimilate observed data and determine the corresponding model parameters.
