"Time": models, code, and papers

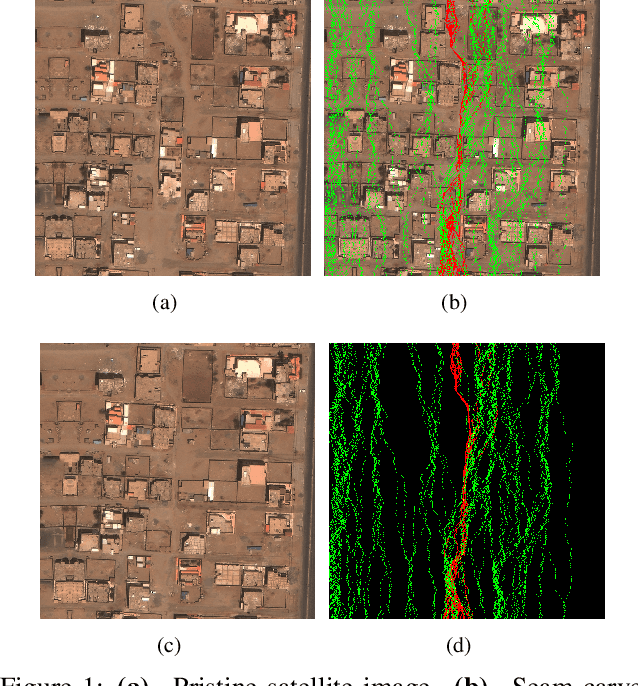

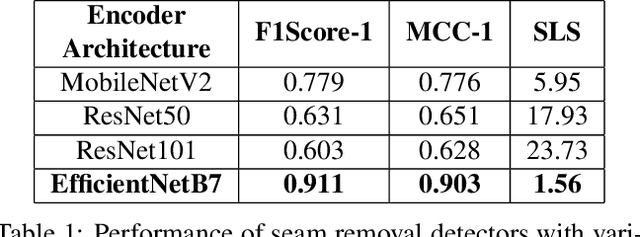

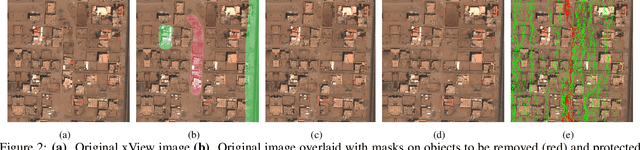

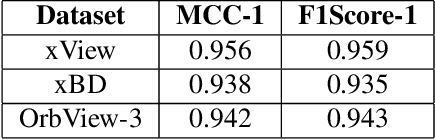

SeeTheSeams: Localized Detection of Seam Carving based Image Forgery in Satellite Imagery

Aug 28, 2021

Seam carving is a popular technique for content aware image retargeting. It can be used to deliberately manipulate images, for example, change the GPS locations of a building or insert/remove roads in a satellite image. This paper proposes a novel approach for detecting and localizing seams in such images. While there are methods to detect seam carving based manipulations, this is the first time that robust localization and detection of seam carving forgery is made possible. We also propose a seam localization score (SLS) metric to evaluate the effectiveness of localization. The proposed method is evaluated extensively on a large collection of images from different sources, demonstrating a high level of detection and localization performance across these datasets. The datasets curated during this work will be released to the public.

Mixed pooling of seasonality in time series pallet forecasting

Aug 14, 2019

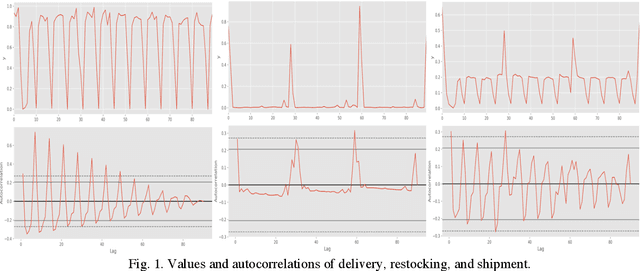

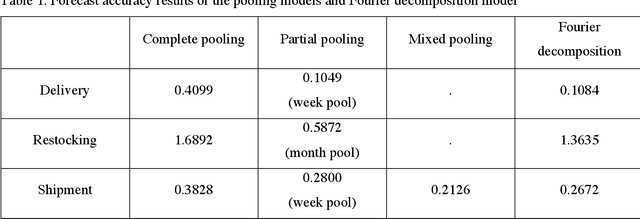

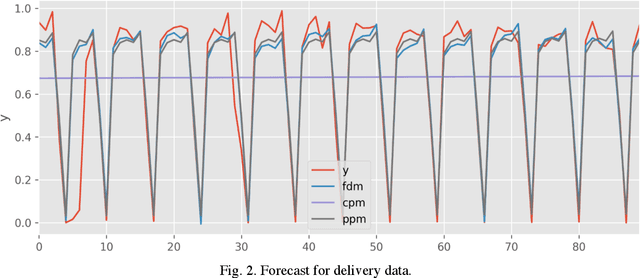

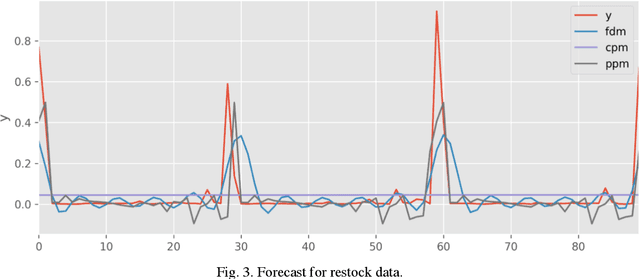

Multiple seasonal patterns play a key role in time series forecasting, especially for business time series where seasonal effects are often dramatic. Previous approaches including Fourier decomposition, exponential smoothing, and seasonal autoregressive integrated moving average (SARIMA) models do not reflect the distinct characteristics of each period in seasonal patterns, such as the unique behavior of specific days of the week in business data. We propose a multi-dimensional hierarchical model. Intermediate parameters for each seasonal period are first estimated, and a mixture of intermediate parameters is then taken, resulting in a model that successfully reflects the interactions between multiple seasonal patterns. Although this process reduces the data available for each parameter, a robust estimation can be obtained through a hierarchical Bayesian model implemented in Stan. Through this model, it becomes possible to consider both the characteristics of each seasonal period and the interactions among characteristics from multiple seasonal periods. Our new model achieved considerable improvements in prediction accuracy compared to previous models, including Fourier decomposition, which Prophet uses to model seasonality patterns. A comparison was performed on a real-world dataset of pallet transport from a national-scale logistic network.

Faster than LASER -- Towards Stream Reasoning with Deep Neural Networks

Jun 15, 2021

With the constant increase of available data in various domains, such as the Internet of Things, Social Networks or Smart Cities, it has become fundamental that agents are able to process and reason with such data in real time. Reasoning over time-annotated data with background knowledge may be challenging, due to the volume and velocity at which such data is produced, but such complex reasoning is necessary in scenarios where agents need to discover potential problems that cannot be found with simple stream processing techniques. Stream Reasoners aim at bridging this gap between reasoning and stream processing, and LASER is one such stream reasoner, designed to analyse and perform complex reasoning over streams of data. It is based on LARS, a rule-based logical language extending Answer Set Programming, and it has shown better runtime results than other state-of-the-art stream reasoning systems. Nevertheless, for high levels of data throughput even LASER may be unable to compute answers in a timely fashion. In this paper, we study whether Convolutional and Recurrent Neural Networks, which have been shown to be particularly well-suited for time series forecasting and classification, can be trained to approximate reasoning with LASER, so that agents can benefit from their high processing speed.

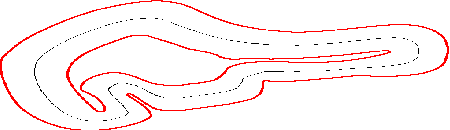

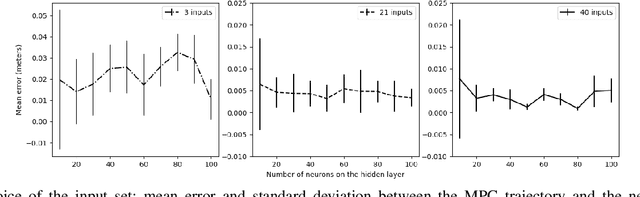

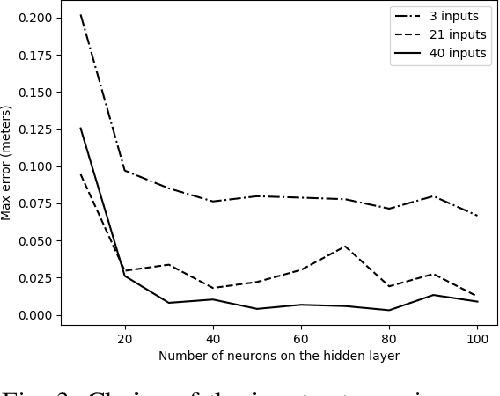

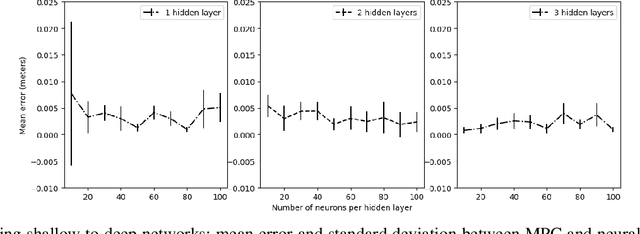

Neural Network Based Model Predictive Control for an Autonomous Vehicle

Jul 30, 2021

We study learning-based controllers as a replacement for model predictive controllers (MPC) for the control of autonomous vehicles. For the experiments, we concentrate on the simple yet representative bicycle model. We compare training by supervised learning and by reinforcement learning. We also discuss neural net architectures, with the goal of obtaining small nets with the best performance. This work aims at producing controllers that can be both embedded on real-time platforms and amenable to verification by formal methods.

SlimYOLOv3: Narrower, Faster and Better for Real-Time UAV Applications

Jul 25, 2019

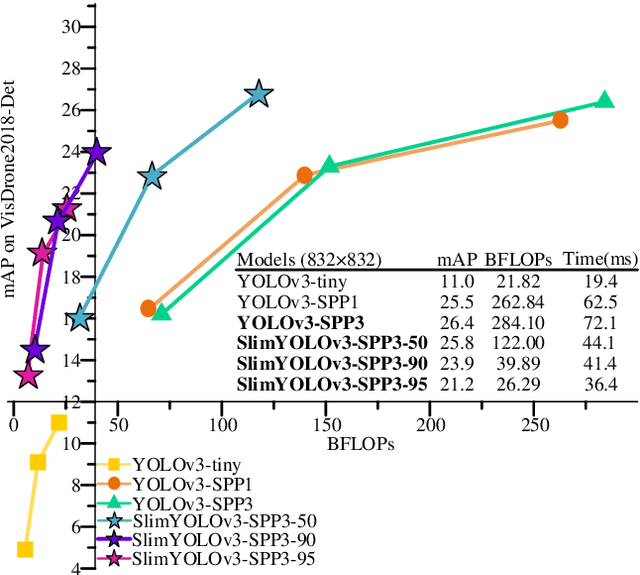

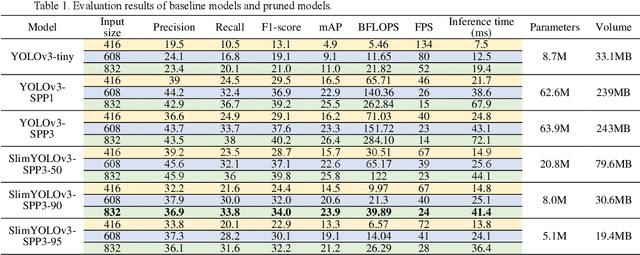

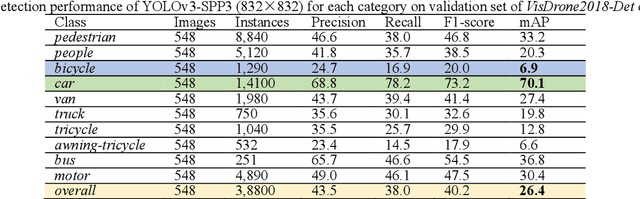

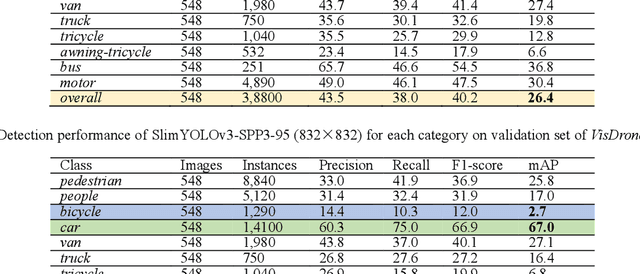

Drones, or Unmanned Aerial Vehicles (UAVs) in general, equipped with computer vision through on-board cameras and embedded systems, have become popular in a wide range of applications. However, real-time scene parsing through object detection running on a UAV platform is very challenging, due to the limited memory and computing power of embedded devices. To deal with these challenges, in this paper we propose to learn efficient deep object detectors through channel pruning of convolutional layers. To this end, we enforce channel-level sparsity of convolutional layers by imposing L1 regularization on channel scaling factors and prune less informative feature channels to obtain "slim" object detectors. Based on this approach, we present SlimYOLOv3, with fewer trainable parameters and floating point operations (FLOPs) than the original YOLOv3 (Joseph Redmon et al., 2018), as a promising solution for real-time object detection on UAVs. We evaluate SlimYOLOv3 on the VisDrone2018-Det benchmark dataset; compared with its unpruned counterpart, SlimYOLOv3 achieves compelling results, including a ~90.8% decrease in FLOPs, a ~92.0% decline in parameter size, ~2 times faster running speed, and detection accuracy comparable to YOLOv3. Experimental results with different pruning ratios consistently verify that the proposed SlimYOLOv3, with its narrower structure, is more efficient, faster and better than YOLOv3, and thus more suitable for real-time object detection on UAVs. Our code is publicly available at https://github.com/PengyiZhang/SlimYOLOv3.
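The pruning idea in this abstract can be illustrated with a minimal sketch. It assumes each channel has one learned scaling factor and that channels are ranked globally by the magnitude of that factor. The function names, the `strength` parameter, and the example values are hypothetical and are not taken from the SlimYOLOv3 code.

```python
def l1_sparsity_penalty(scaling_factors, strength):
    """L1 penalty on channel scaling factors, to be added to the training loss."""
    return strength * sum(abs(g) for g in scaling_factors)


def select_channels(scaling_factors, prune_ratio):
    """Return the sorted indices of the channels kept after pruning.

    Channels with the smallest |scaling factor| are treated as the least
    informative; `prune_ratio` is the fraction of channels to drop.
    """
    if not 0.0 <= prune_ratio < 1.0:
        raise ValueError("prune_ratio must be in [0, 1)")
    n_prune = int(len(scaling_factors) * prune_ratio)
    # Rank channel indices by the magnitude of their scaling factor, ascending.
    ranked = sorted(range(len(scaling_factors)),
                    key=lambda i: abs(scaling_factors[i]))
    return sorted(ranked[n_prune:])


# Hypothetical scaling factors for a six-channel layer after sparse training.
gammas = [0.91, 0.02, -0.47, 0.003, 0.65, -0.01]
penalty = l1_sparsity_penalty(gammas, strength=0.1)
kept = select_channels(gammas, prune_ratio=0.5)
print(kept)
```

With a pruning ratio of 0.5, the three channels whose factors are closest to zero are dropped. A real pipeline would then rebuild the layer with only the kept channels and fine-tune the slimmer network.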

AirDOS: Dynamic SLAM benefits from Articulated Objects

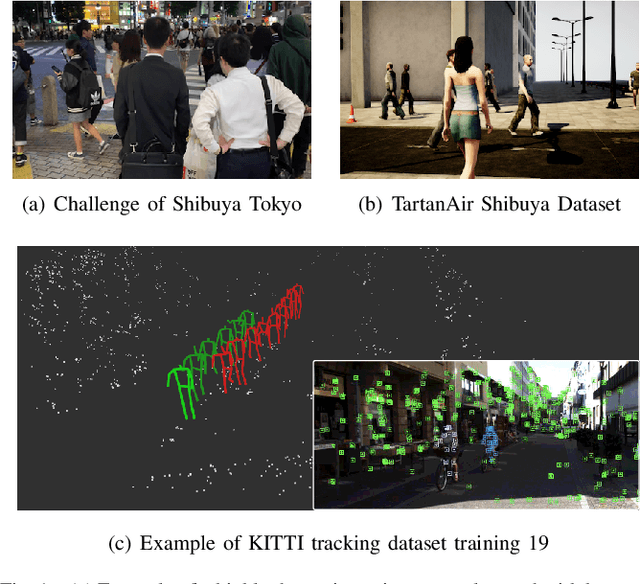

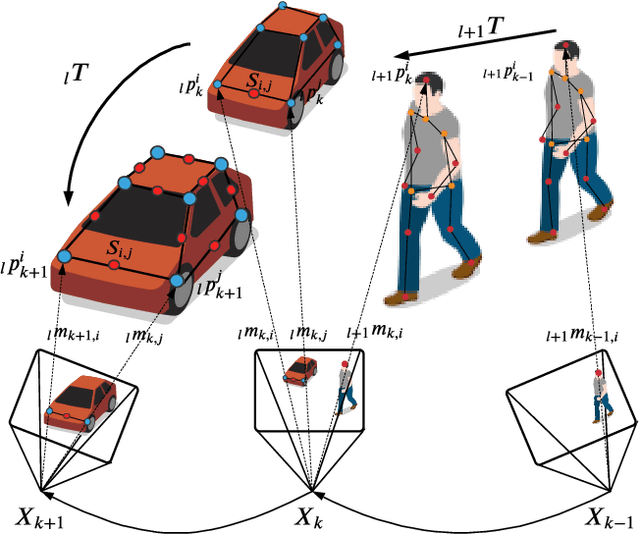

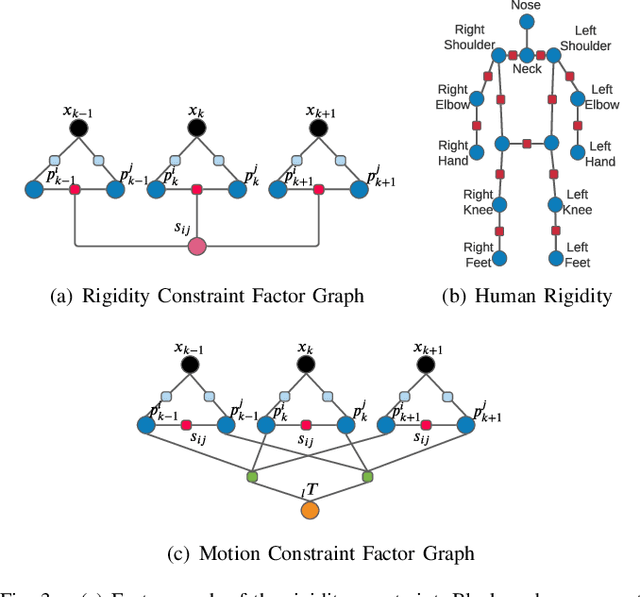

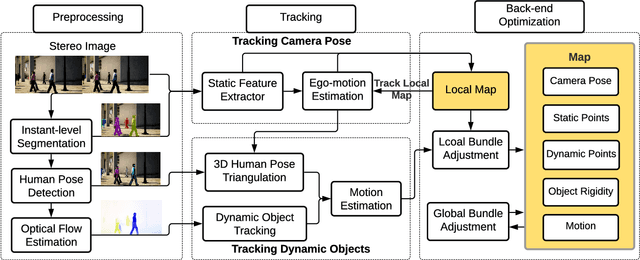

Sep 21, 2021

Dynamic Object-aware SLAM (DOS) exploits object-level information to enable robust motion estimation in dynamic environments. It has attracted increasing attention with the recent success of learning-based models. Existing methods mainly focus on identifying and excluding dynamic objects from the optimization. In this paper, we show that feature-based visual SLAM systems can also benefit from the presence of dynamic articulated objects by taking advantage of two observations: (1) The 3D structure of an articulated object remains consistent over time; (2) The points on the same object follow the same motion. In particular, we present AirDOS, a dynamic object-aware system that introduces rigidity and motion constraints to model articulated objects. By jointly optimizing the camera pose, object motion, and the object 3D structure, we can rectify the camera pose estimation, preventing tracking loss, and generate 4D spatio-temporal maps for both dynamic objects and static scenes. Experiments show that our algorithm improves the robustness of visual SLAM algorithms in challenging crowded urban environments. To the best of our knowledge, AirDOS is the first dynamic object-aware SLAM system demonstrating that camera pose estimation can be improved by incorporating dynamic articulated objects.

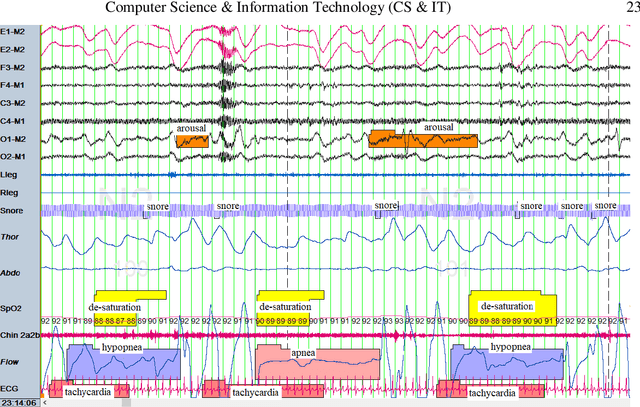

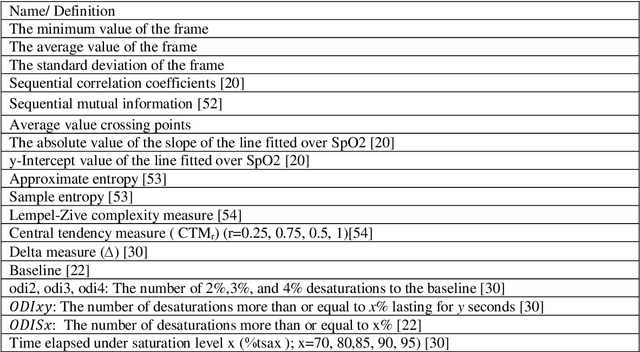

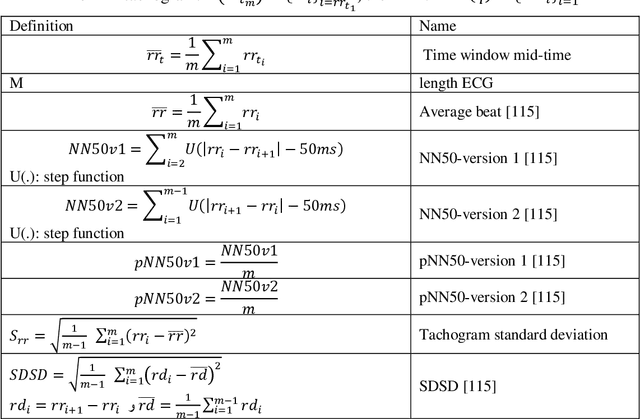

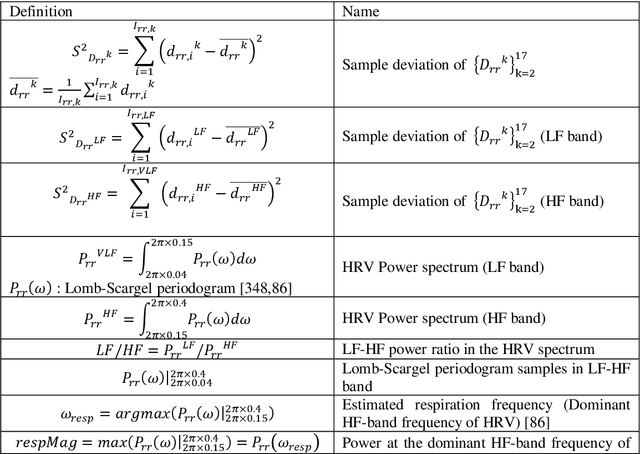

Online Obstructive Sleep Apnea Detection Based on Hybrid Machine Learning And Classifier Combination For Home-based Applications

Oct 01, 2021

Automatic detection of obstructive sleep apnea (OSA) is in great demand. OSA is one of the most prevalent diseases of the current century and an established comorbidity of COVID-19. OSA is characterized by complete or partial breathing pauses during sleep. According to medical observations, if OSA remains unrecognized and untreated, it may lead to physical and mental complications. The gold standard for scoring OSA severity is polysomnography (PSG), which is time-consuming and expensive. Online home-based surveillance of OSA is therefore a welcome idea. It is an effective way to speed up detection and referral of patients to sleep clinics. In addition, it can automatically control therapeutic/assistive devices. In this paper, several configurations for online OSA detection are proposed. The best configuration uses both ECG and SpO2 signals for feature extraction and MI analysis for feature reduction. Various supervised machine learning methods are used for classification. Finally, to reach the best result, the most successful classifiers in terms of sensitivity and specificity are combined in groups of three members with four different combination methods. The proposed method has advantages such as limited use of biological signals, automatic detection, an online working scheme, and uniform and acceptable performance (over 85%) on all the employed databases. These advantages have not been integrated in previously published methods.
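The abstract does not name its four combination methods, so the following sketch assumes plain majority voting as one plausible way to combine three classifiers. The classifier names and their per-segment outputs are hypothetical placeholders, not results from the paper.

```python
from itertools import combinations


def majority_vote(predictions):
    """Combine binary labels (0 = normal, 1 = apnea) from an odd number of classifiers."""
    if len(predictions) % 2 == 0:
        raise ValueError("use an odd number of classifiers to avoid ties")
    return 1 if sum(predictions) > len(predictions) / 2 else 0


def ensemble_predictions(per_classifier, members):
    """Vote segment by segment across the chosen classifiers."""
    columns = zip(*(per_classifier[name] for name in members))
    return [majority_vote(list(col)) for col in columns]


# Hypothetical per-segment outputs of four trained classifiers.
outputs = {
    "svm":  [1, 0, 1, 1, 0],
    "knn":  [1, 1, 0, 1, 0],
    "tree": [0, 0, 1, 1, 1],
    "lda":  [1, 0, 0, 0, 0],
}

# Every group of three classifiers that could be evaluated.
groups = list(combinations(sorted(outputs), 3))
combined = ensemble_predictions(outputs, ("svm", "knn", "tree"))
print(combined)
```

Using three members keeps the vote free of ties, which may be one reason to combine classifiers in odd-sized groups.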

Out-of-Distribution Dynamics Detection: RL-Relevant Benchmarks and Results

Jul 11, 2021

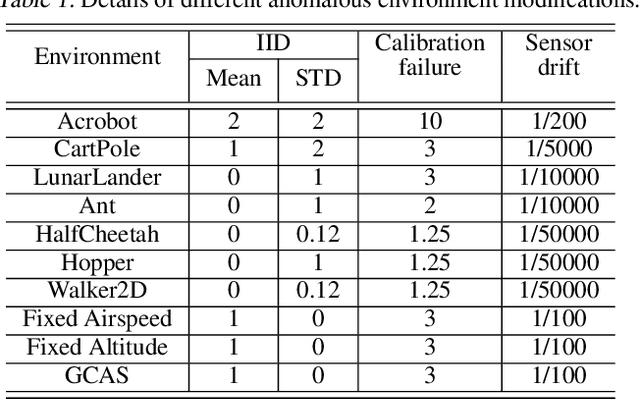

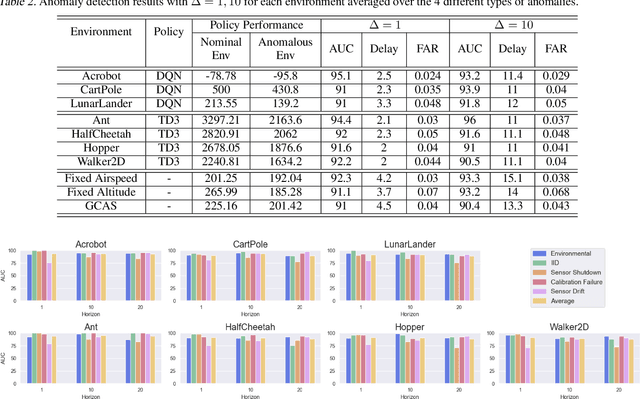

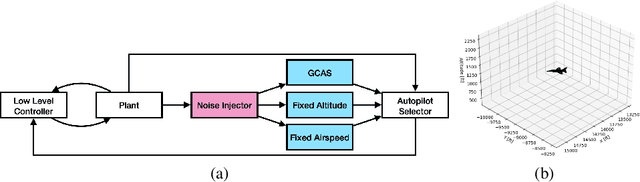

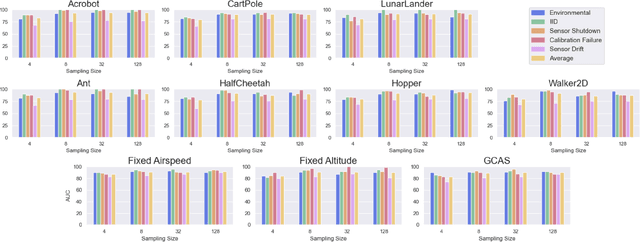

We study the problem of out-of-distribution dynamics (OODD) detection, which involves detecting when the dynamics of a temporal process change compared to the training-distribution dynamics. This is relevant to applications in control, reinforcement learning (RL), and multi-variate time-series, where changes to test time dynamics can impact the performance of learning controllers/predictors in unknown ways. This problem is particularly important in the context of deep RL, where learned controllers often overfit to the training environment. Currently, however, there is a lack of established OODD benchmarks for the types of environments commonly used in RL research. Our first contribution is to design a set of OODD benchmarks derived from common RL environments with varying types and intensities of OODD. Our second contribution is to design a strong OODD baseline approach based on recurrent implicit quantile networks (RIQNs), which monitors autoregressive prediction errors for OODD detection. Our final contribution is to evaluate the RIQN approach on the benchmarks to provide baseline results for future comparison.
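Monitoring autoregressive prediction errors can be sketched in a few lines. This example replaces the RIQN with a fixed one-step predictor and uses a sliding mean of absolute errors with a hand-picked threshold. The predictor, window size, and threshold are all assumptions made for illustration, not the paper's detector.

```python
from collections import deque


def detect_dynamics_shift(observations, predict_next, window=3, threshold=1.0):
    """Return the first index at which the mean absolute one-step prediction
    error over the last `window` steps exceeds `threshold`, or None."""
    errors = deque(maxlen=window)
    for t in range(1, len(observations)):
        predicted = predict_next(observations[t - 1])
        errors.append(abs(observations[t] - predicted))
        if len(errors) == window and sum(errors) / window > threshold:
            return t
    return None


def trained_model(x):
    """Stand-in predictor: the training dynamics add 1 at every step."""
    return x + 1


# The first six observations follow the training dynamics; then the step becomes 3.
series = [0, 1, 2, 3, 4, 5, 8, 11, 14, 17, 20]
alarm_index = detect_dynamics_shift(series, trained_model)
print(alarm_index)
```

The alarm fires one step after the change rather than at it, because a single large error is averaged with earlier small ones. A longer window lowers false alarms at the cost of a longer detection delay.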

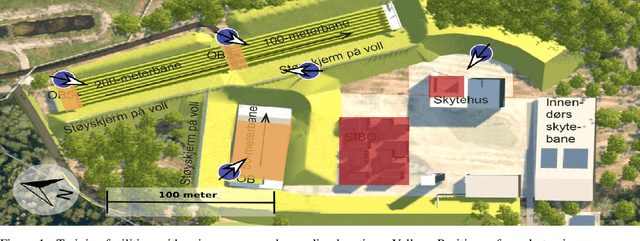

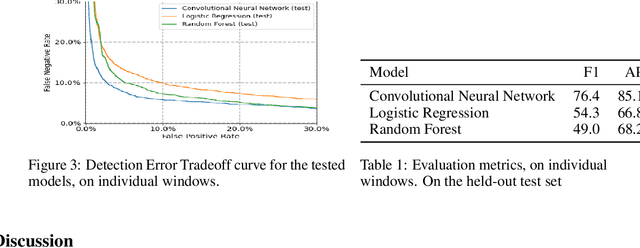

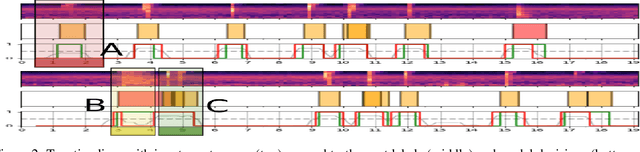

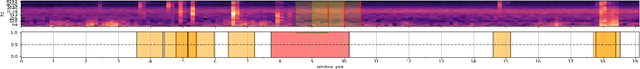

Automatic Detection Of Noise Events at Shooting Range Using Machine Learning

Jul 23, 2021

Outdoor shooting ranges are subject to noise regulations from local and national authorities. Restrictions found in these regulations may include limits on times of activities, the overall number of noise events, as well as limits on the number of events depending on the class of noise or activity. Noise monitoring systems may be used to track overall sound levels, but they rarely provide the ability to detect activity or count the number of events, which is required for direct comparison with such regulations. This work investigates the feasibility and performance of an automatic detection system to count noise events. An empirical evaluation was done by collecting data at a newly constructed shooting range and training facility. The data includes tests of multiple weapon configurations, from small firearms to high-caliber rifles and explosives, at multiple source positions, collected on multiple different days. Several alternative machine learning models are tested, using as inputs time series of standard acoustic indicators such as A-weighted sound levels and 1/3-octave spectrograms, and classifiers such as Logistic Regression and Convolutional Neural Networks. Performance for the various alternatives is reported in terms of the False Positive Rate and False Negative Rate. The detection performance was found to be satisfactory for use in automatic logging of time periods with training activity.
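For reference, the two reported metrics can be computed from per-window labels as in the sketch below. The labels are made-up examples (1 = noise event, 0 = no event), and the function name is hypothetical.

```python
def error_rates(y_true, y_pred):
    """Return (false positive rate, false negative rate) for binary labels."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    # FPR: share of true non-events flagged as events.
    fpr = fp / (fp + tn) if (fp + tn) else 0.0
    # FNR: share of true events that were missed.
    fnr = fn / (fn + tp) if (fn + tp) else 0.0
    return fpr, fnr


# Hypothetical ground truth and detector output for eight time windows.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 1, 0, 0, 1]
fpr, fnr = error_rates(y_true, y_pred)
print(fpr, fnr)
```

For event counting, a false positive inflates the logged number of events and a false negative deflates it, so both rates matter when comparing against a regulatory limit.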

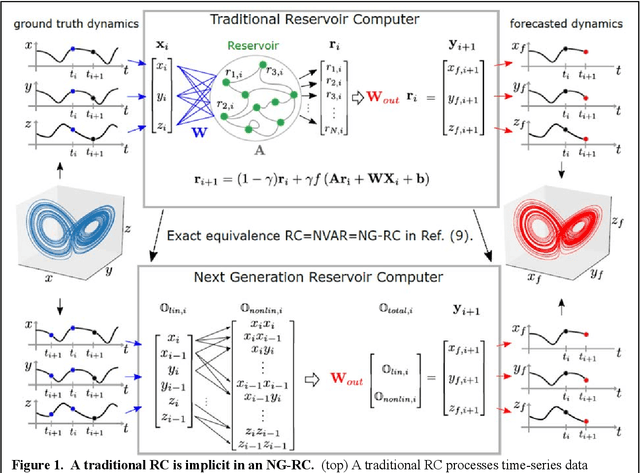

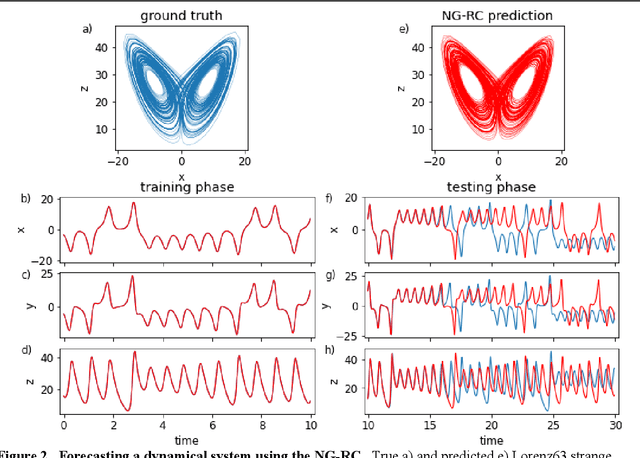

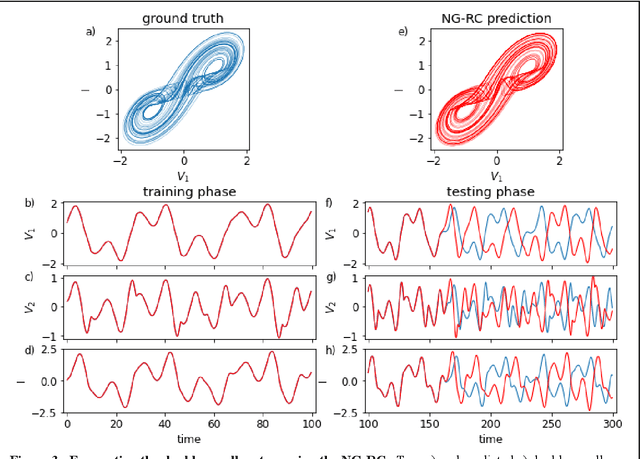

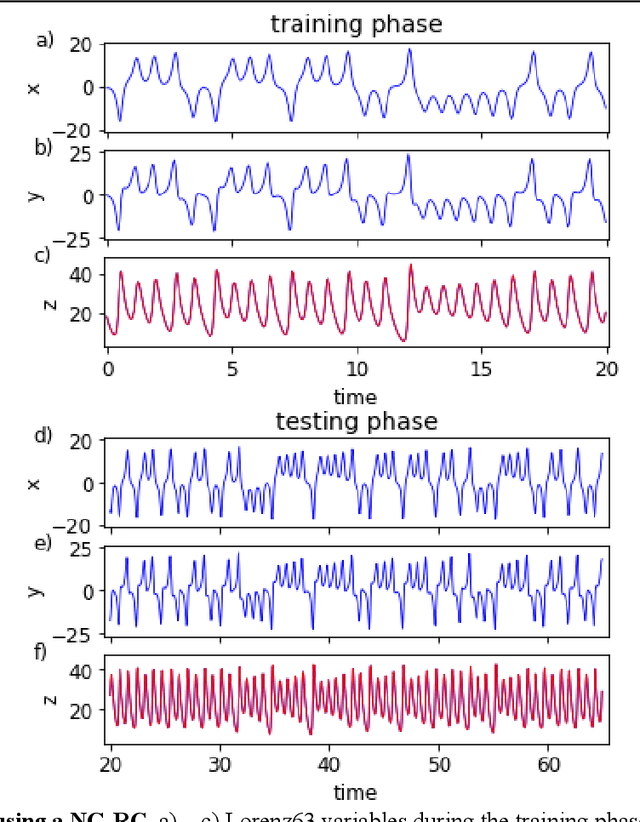

Next Generation Reservoir Computing

Jun 14, 2021

Reservoir computing is a best-in-class machine learning algorithm for processing information generated by dynamical systems using observed time-series data. Importantly, it requires very small training data sets, uses linear optimization, and thus requires minimal computing resources. However, the algorithm uses randomly sampled matrices to define the underlying recurrent neural network and has a multitude of metaparameters that must be optimized. Recent results demonstrate the equivalence of reservoir computing to nonlinear vector autoregression, which requires no random matrices, fewer metaparameters, and provides interpretable results. Here, we demonstrate that nonlinear vector autoregression excels at reservoir computing benchmark tasks and requires even shorter training data sets and training time, heralding the next generation of reservoir computing.
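A minimal sketch of how a nonlinear vector autoregression feature vector might be built without any random matrices: a constant, time-delayed copies of the input, and their unique quadratic products. The choice of quadratic terms, the parameter names `delays` and `skip`, and the example data are assumptions for illustration.

```python
from itertools import combinations_with_replacement


def nvar_features(series, t, delays=2, skip=1):
    """Build the feature vector at time index t.

    `series` is a list of equal-length state vectors. The result holds a
    constant term, `delays` time-delayed copies of the state spaced `skip`
    steps apart, and every unique pairwise product of those linear terms.
    """
    if t - (delays - 1) * skip < 0:
        raise ValueError("not enough history for the requested delays")
    linear = []
    for k in range(delays):
        linear.extend(series[t - k * skip])
    quadratic = [a * b for a, b in combinations_with_replacement(linear, 2)]
    return [1.0] + linear + quadratic


# Hypothetical two-dimensional time series with two samples.
series = [[1.0, 2.0], [3.0, 4.0]]
features = nvar_features(series, t=1)
print(len(features))
```

A linear readout, for example one fitted by regularized least squares, would then map these features to the next state. That fit is the only training step, which is consistent with the short training time the abstract reports.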
