"Time": models, code, and papers

Noise-Resistant Deep Metric Learning with Probabilistic Instance Filtering

Aug 03, 2021

Noisy labels are commonly found in real-world data, which cause performance degradation of deep neural networks. Cleaning data manually is labour-intensive and time-consuming. Previous research mostly focuses on enhancing classification models against noisy labels, while the robustness of deep metric learning (DML) against noisy labels remains less well-explored. In this paper, we bridge this important gap by proposing Probabilistic Ranking-based Instance Selection with Memory (PRISM) approach for DML. PRISM calculates the probability of a label being clean, and filters out potentially noisy samples. Specifically, we propose three methods to calculate this probability: 1) Average Similarity Method (AvgSim), which calculates the average similarity between potentially noisy data and clean data; 2) Proxy Similarity Method (ProxySim), which replaces the centers maintained by AvgSim with the proxies trained by proxy-based method; and 3) von Mises-Fisher Distribution Similarity (vMF-Sim), which estimates a von Mises-Fisher distribution for each data class. With such a design, the proposed approach can deal with challenging DML situations in which the majority of the samples are noisy. Extensive experiments on both synthetic and real-world noisy dataset show that the proposed approach achieves up to 8.37% higher Precision@1 compared with the best performing state-of-the-art baseline approaches, within reasonable training time.

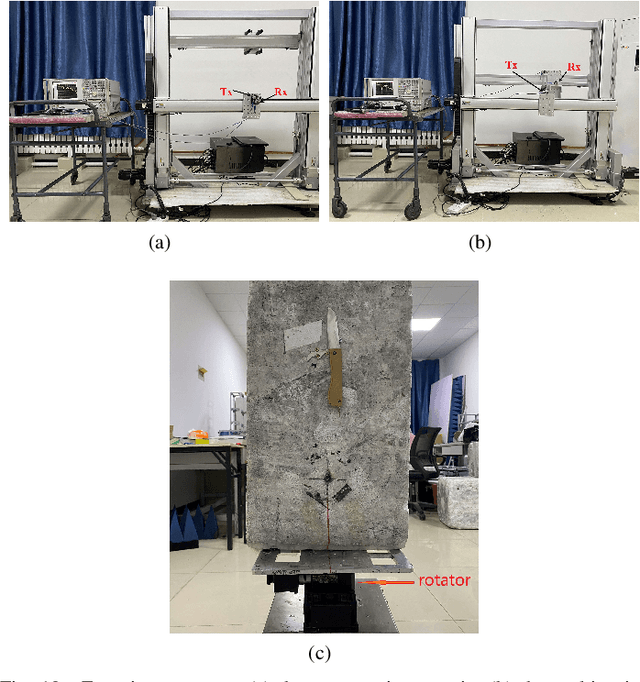

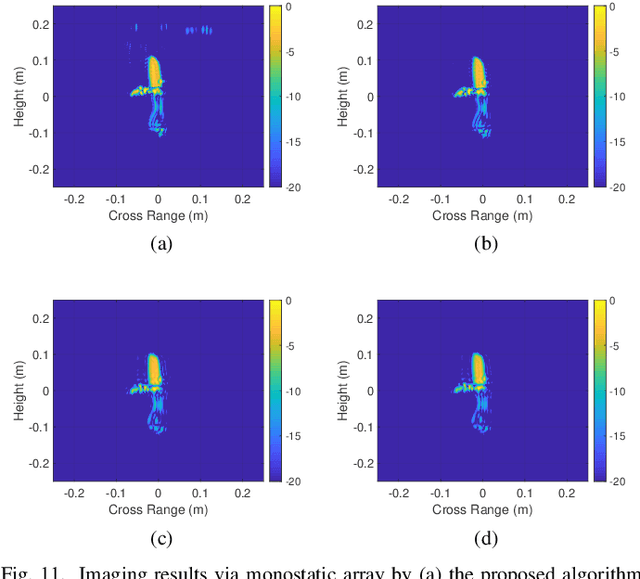

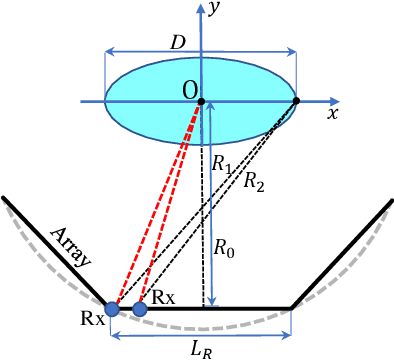

Near-Field Millimeter-Wave Imaging via Arrays in the Shape of Polyline

Sep 07, 2021

This paper proposes a polyline shaped array based system scheme, associated with mechanical scanning along the perpendicular direction of the array, for near-field millimeter-wave (MMW) imaging. Each section of the polyline is a chord of a circle with equal length. The polyline array, which can be realized as a monostatic array or a multistatic one, is capable of providing more observation angles than the linear or planar arrays. Further, we present the related three-dimensional (3-D) imaging algorithms based on a hybrid processing in the time domain and the spatial frequency domain. The nonuniform fast Fourier transform (NUFFT) is utilized to improve the computational efficiency. Simulations and experimental results are provided to demonstrate the efficacy of the proposed method in comparison with the back-projection (BP) algorithm.

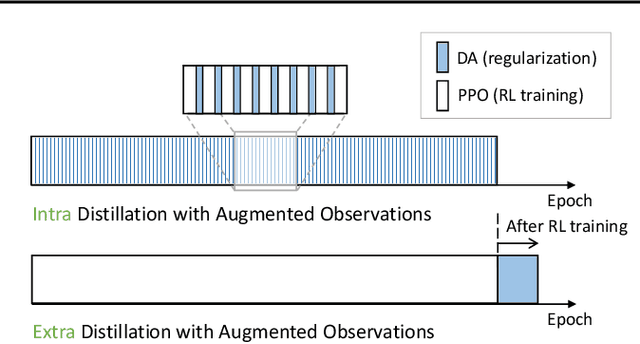

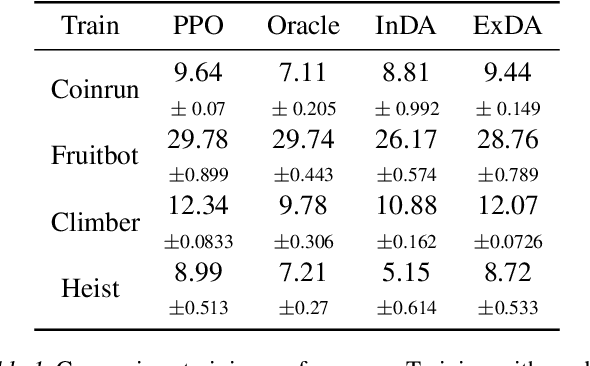

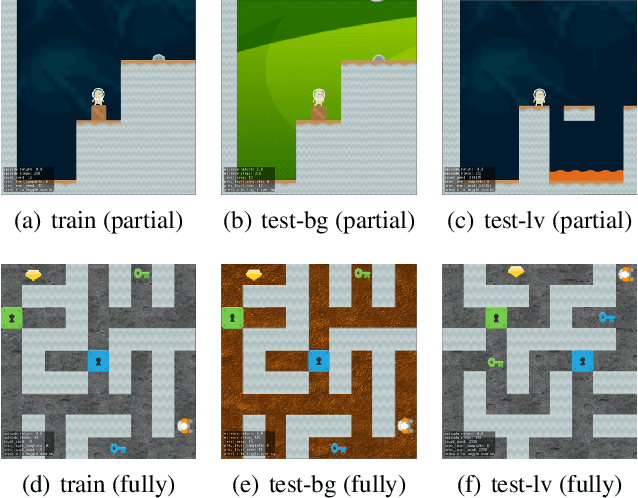

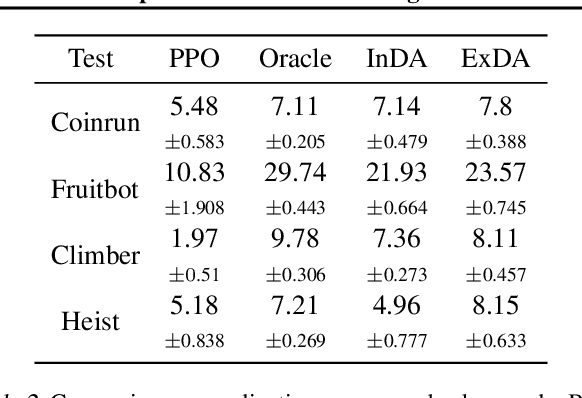

Time Matters in Using Data Augmentation for Vision-based Deep Reinforcement Learning

Feb 17, 2021

Data augmentation technique from computer vision has been widely considered as a regularization method to improve data efficiency and generalization performance in vision-based reinforcement learning. We variate the timing of using augmentation, which is, in turn, critical depending on tasks to be solved in training and testing. According to our experiments on Open AI Procgen Benchmark, if the regularization imposed by augmentation is helpful only in testing, it is better to procrastinate the augmentation after training than to use it during training in terms of sample and computation complexity. We note that some of such augmentations can disturb the training process. Conversely, an augmentation providing regularization useful in training needs to be used during the whole training period to fully utilize its benefit in terms of not only generalization but also data efficiency. These phenomena suggest a useful timing control of data augmentation in reinforcement learning.

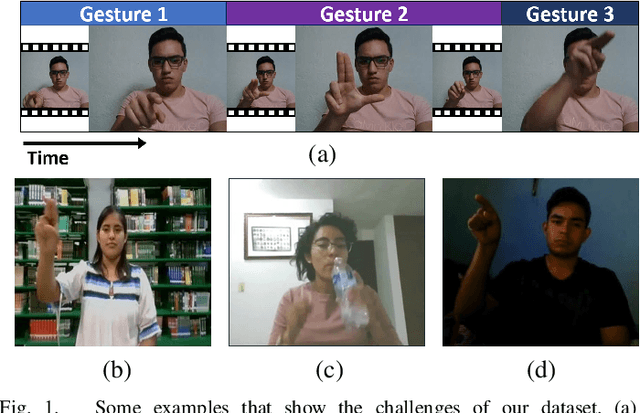

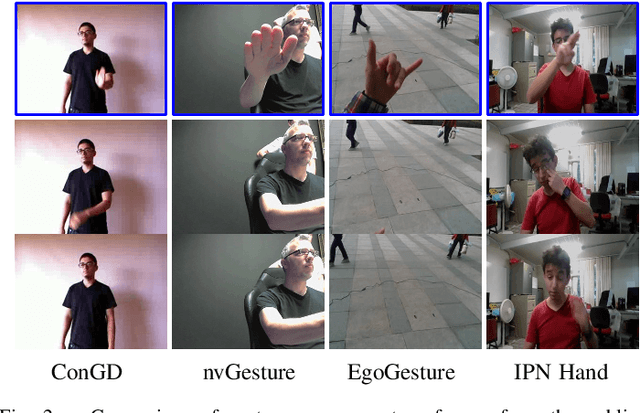

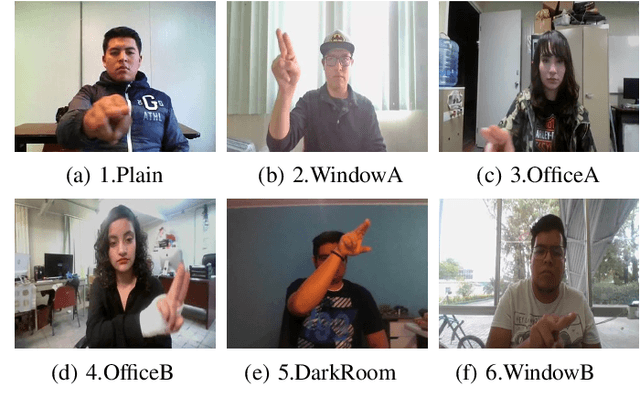

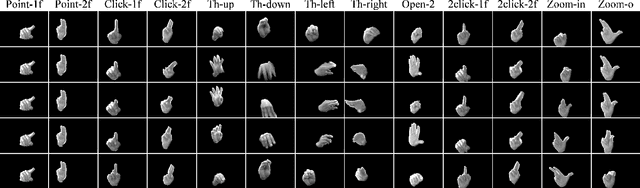

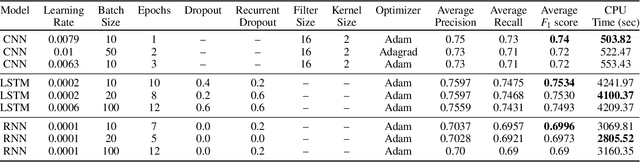

IPN Hand: A Video Dataset and Benchmark for Real-Time Continuous Hand Gesture Recognition

Apr 20, 2020

In the research community of continuous hand gesture recognition (HGR), the current publicly available datasets lack real-world elements needed to build responsive and efficient HGR systems. In this paper, we introduce a new benchmark dataset named IPN Hand with sufficient size, variation, and real-world elements able to train and evaluate deep neural networks. This dataset contains more than 4 000 gesture samples and 800 000 RGB frames from 50 distinct subjects. We design 13 different static and dynamic gestures focused on interaction with touchless screens. We especially consider the scenario when continuous gestures are performed without transition states, and when subjects perform natural movements with their hands as non-gesture actions. Gestures were collected from about 30 diverse scenes, with real-world variation in background and illumination. With our dataset, the performance of three 3D-CNN models is evaluated on the tasks of isolated and continuous real-time HGR. Furthermore, we analyze the possibility of increasing the recognition accuracy by adding multiple modalities derived from RGB frames, i.e., optical flow and semantic segmentation, while keeping the real-time performance of the 3D-CNN model. Our empirical study also provides a comparison with the publicly available nvGesture (NVIDIA) dataset. The experimental results show that the state-of-the-art ResNext-101 model decreases about 30% accuracy when using our real-world dataset, demonstrating that the IPN Hand dataset can be used as a benchmark, and may help the community to step forward in the continuous HGR. Our dataset and pre-trained models used in the evaluation are publicly available at https://github.com/GibranBenitez/IPN-hand.

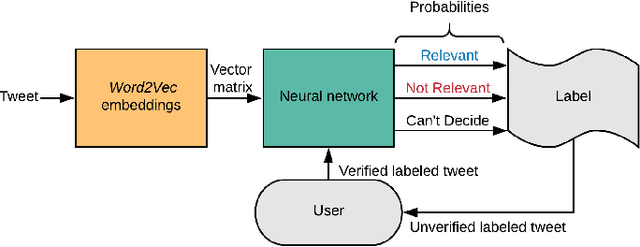

Interactive Learning for Identifying Relevant Tweets to Support Real-time Situational Awareness

Aug 01, 2019

Various domain users are increasingly leveraging real-time social media data to gain rapid situational awareness. However, due to the high noise in the deluge of data, effectively determining semantically relevant information can be difficult, further complicated by the changing definition of relevancy by each end user for different events. The majority of existing methods for short text relevance classification fail to incorporate users' knowledge into the classification process. Existing methods that incorporate interactive user feedback focus on historical datasets. Therefore, classifiers cannot be interactively retrained for specific events or user-dependent needs in real-time. This limits real-time situational awareness, as streaming data that is incorrectly classified cannot be corrected immediately, permitting the possibility for important incoming data to be incorrectly classified as well. We present a novel interactive learning framework to improve the classification process in which the user iteratively corrects the relevancy of tweets in real-time to train the classification model on-the-fly for immediate predictive improvements. We computationally evaluate our classification model adapted to learn at interactive rates. Our results show that our approach outperforms state-of-the-art machine learning models. In addition, we integrate our framework with the extended Social Media Analytics and Reporting Toolkit (SMART) 2.0 system, allowing the use of our interactive learning framework within a visual analytics system tailored for real-time situational awareness. To demonstrate our framework's effectiveness, we provide domain expert feedback from first responders who used the extended SMART 2.0 system.

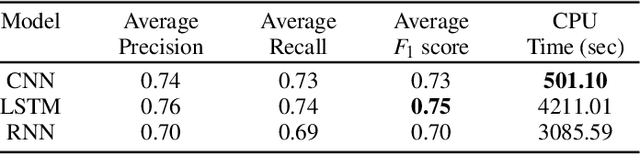

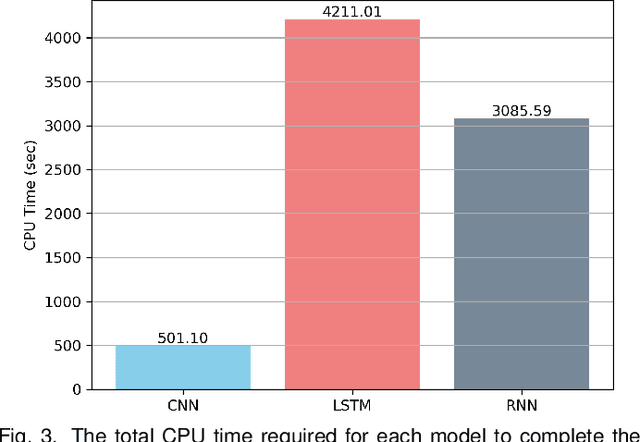

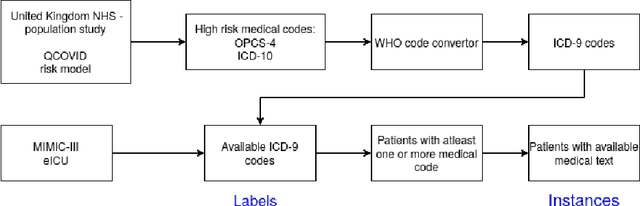

Predicting COVID-19 Patient Shielding: A Comprehensive Study

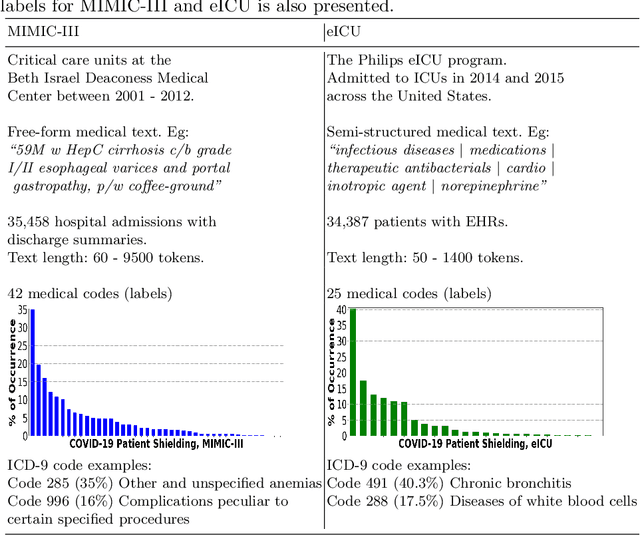

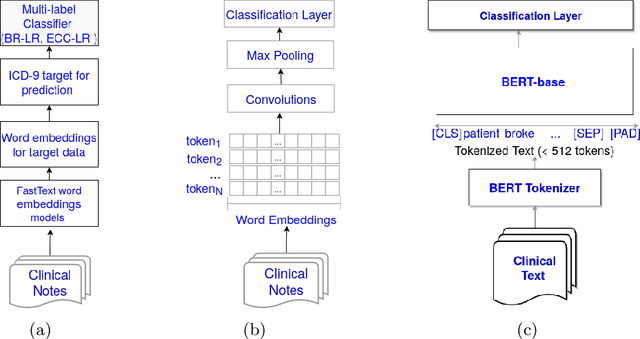

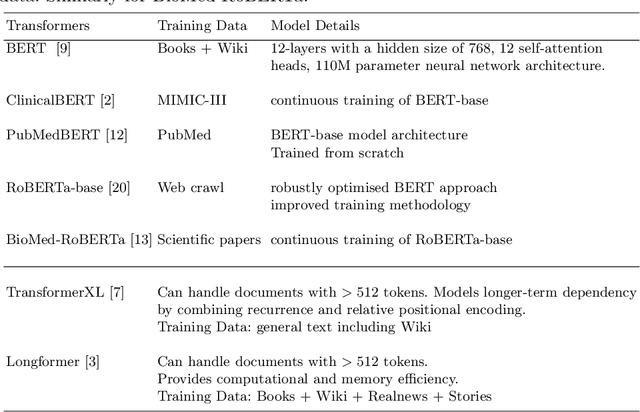

Oct 01, 2021

There are many ways machine learning and big data analytics are used in the fight against the COVID-19 pandemic, including predictions, risk management, diagnostics, and prevention. This study focuses on predicting COVID-19 patient shielding -- identifying and protecting patients who are clinically extremely vulnerable from coronavirus. This study focuses on techniques used for the multi-label classification of medical text. Using the information published by the United Kingdom NHS and the World Health Organisation, we present a novel approach to predicting COVID-19 patient shielding as a multi-label classification problem. We use publicly available, de-identified ICU medical text data for our experiments. The labels are derived from the published COVID-19 patient shielding data. We present an extensive comparison across 12 multi-label classifiers from the simple binary relevance to neural networks and the most recent transformers. To the best of our knowledge this is the first comprehensive study, where such a range of multi-label classifiers for medical text are considered. We highlight the benefits of various approaches, and argue that, for the task at hand, both predictive accuracy and processing time are essential.

* Accepted in AJCAI 2021

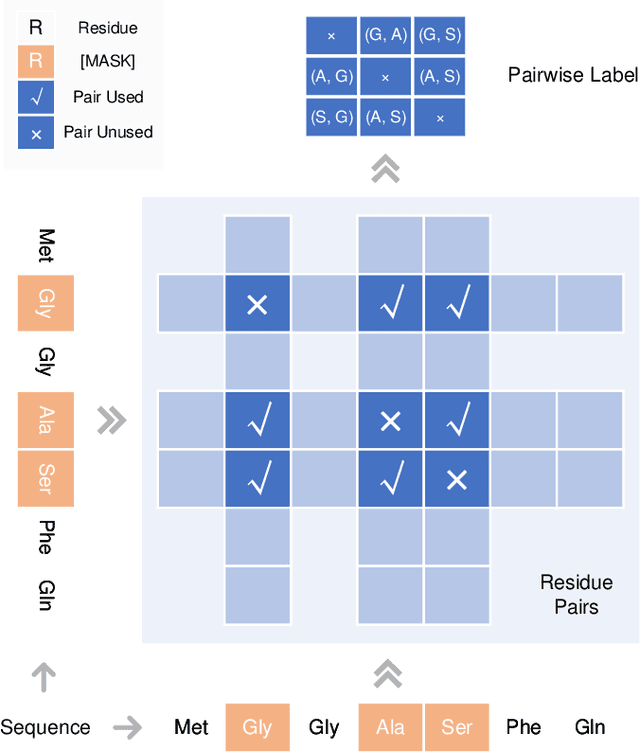

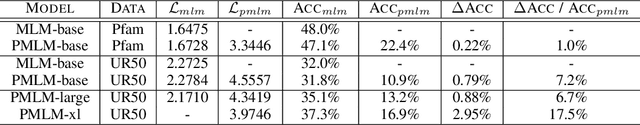

Pre-training Co-evolutionary Protein Representation via A Pairwise Masked Language Model

Oct 29, 2021

Understanding protein sequences is vital and urgent for biology, healthcare, and medicine. Labeling approaches are expensive yet time-consuming, while the amount of unlabeled data is increasing quite faster than that of the labeled data due to low-cost, high-throughput sequencing methods. In order to extract knowledge from these unlabeled data, representation learning is of significant value for protein-related tasks and has great potential for helping us learn more about protein functions and structures. The key problem in the protein sequence representation learning is to capture the co-evolutionary information reflected by the inter-residue co-variation in the sequences. Instead of leveraging multiple sequence alignment as is usually done, we propose a novel method to capture this information directly by pre-training via a dedicated language model, i.e., Pairwise Masked Language Model (PMLM). In a conventional masked language model, the masked tokens are modeled by conditioning on the unmasked tokens only, but processed independently to each other. However, our proposed PMLM takes the dependency among masked tokens into consideration, i.e., the probability of a token pair is not equal to the product of the probability of the two tokens. By applying this model, the pre-trained encoder is able to generate a better representation for protein sequences. Our result shows that the proposed method can effectively capture the inter-residue correlations and improves the performance of contact prediction by up to 9% compared to the MLM baseline under the same setting. The proposed model also significantly outperforms the MSA baseline by more than 7% on the TAPE contact prediction benchmark when pre-trained on a subset of the sequence database which the MSA is generated from, revealing the potential of the sequence pre-training method to surpass MSA based methods in general.

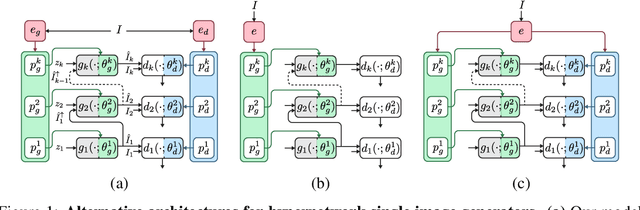

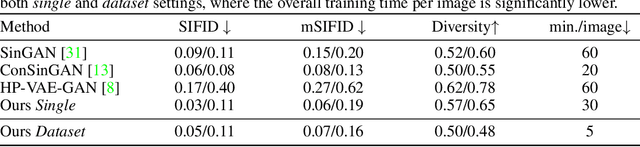

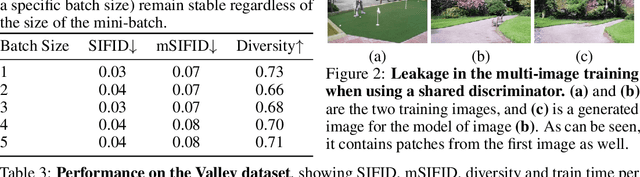

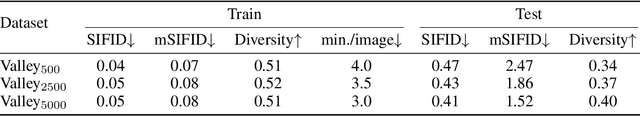

Meta Internal Learning

Oct 06, 2021

Internal learning for single-image generation is a framework, where a generator is trained to produce novel images based on a single image. Since these models are trained on a single image, they are limited in their scale and application. To overcome these issues, we propose a meta-learning approach that enables training over a collection of images, in order to model the internal statistics of the sample image more effectively. In the presented meta-learning approach, a single-image GAN model is generated given an input image, via a convolutional feedforward hypernetwork $f$. This network is trained over a dataset of images, allowing for feature sharing among different models, and for interpolation in the space of generative models. The generated single-image model contains a hierarchy of multiple generators and discriminators. It is therefore required to train the meta-learner in an adversarial manner, which requires careful design choices that we justify by a theoretical analysis. Our results show that the models obtained are as suitable as single-image GANs for many common image applications, significantly reduce the training time per image without loss in performance, and introduce novel capabilities, such as interpolation and feedforward modeling of novel images.

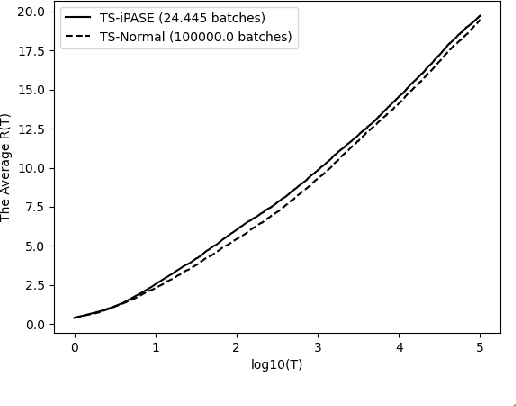

Asymptotic Performance of Thompson Sampling in the Batched Multi-Armed Bandits

Oct 01, 2021

We study the asymptotic performance of the Thompson sampling algorithm in the batched multi-armed bandit setting where the time horizon $T$ is divided into batches, and the agent is not able to observe the rewards of her actions until the end of each batch. We show that in this batched setting, Thompson sampling achieves the same asymptotic performance as in the case where instantaneous feedback is available after each action, provided that the batch sizes increase subexponentially. This result implies that Thompson sampling can maintain its performance even if it receives delayed feedback in $\omega(\log T)$ batches. We further propose an adaptive batching scheme that reduces the number of batches to $\Theta(\log T)$ while maintaining the same performance. Although the batched multi-armed bandit setting has been considered in several recent works, previous results rely on tailored algorithms for the batched setting, which optimize the batch structure and prioritize exploration in the beginning of the experiment to eliminate suboptimal actions. We show that Thompson sampling, on the other hand, is able to achieve a similar asymptotic performance in the batched setting without any modifications.

* This work was presented in 2021 IEEE International Symposium on Information Theory (ISIT)

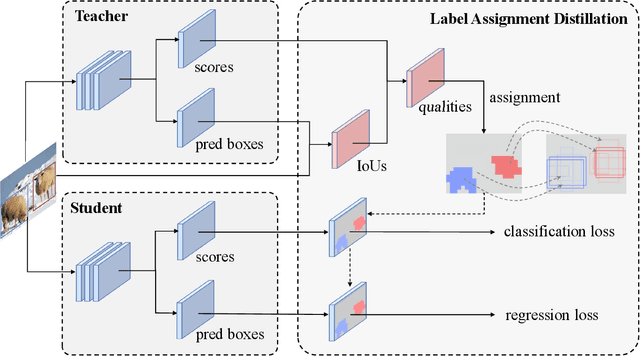

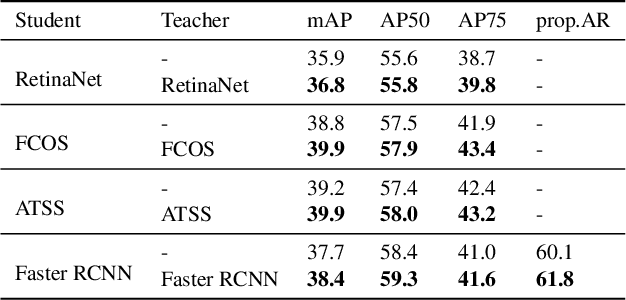

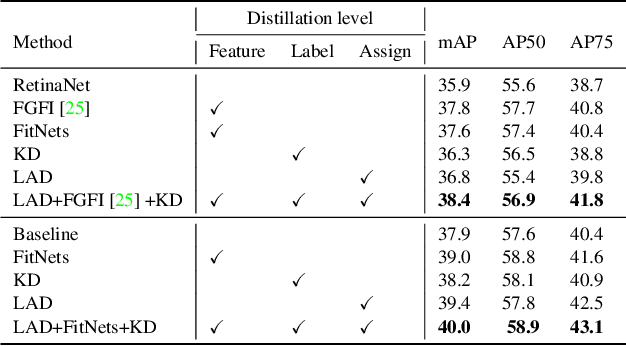

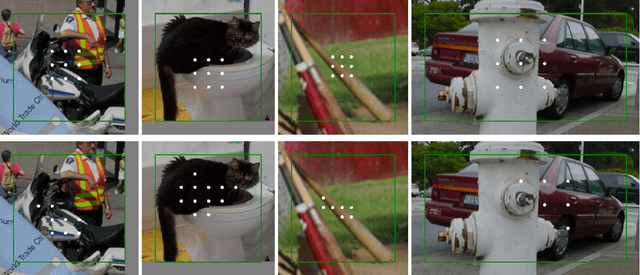

Label Assignment Distillation for Object Detection

Sep 18, 2021

Knowledge distillation methods are proved to be promising in improving the performance of neural networks and no additional computational expenses are required during the inference time. For the sake of boosting the accuracy of object detection, a great number of knowledge distillation methods have been proposed particularly designed for object detection. However, most of these methods only focus on feature-level distillation and label-level distillation, leaving the label assignment step, a unique and paramount procedure for object detection, by the wayside. In this work, we come up with a simple but effective knowledge distillation approach focusing on label assignment in object detection, in which the positive and negative samples of student network are selected in accordance with the predictions of teacher network. Our method shows encouraging results on the MSCOCO2017 benchmark, and can not only be applied to both one-stage detectors and two-stage detectors but also be utilized orthogonally with other knowledge distillation methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge