"Time": models, code, and papers

Path Planning for Cellular-Connected UAV: A DRL Solution with Quantum-Inspired Experience Replay

Aug 30, 2021

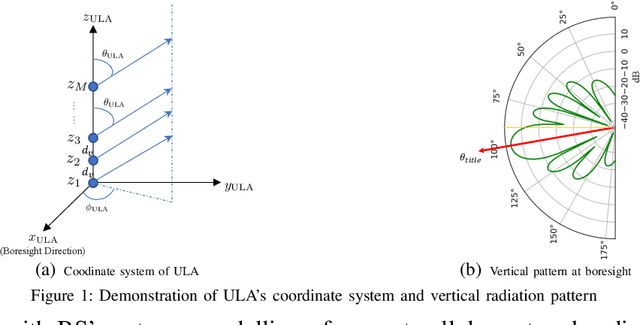

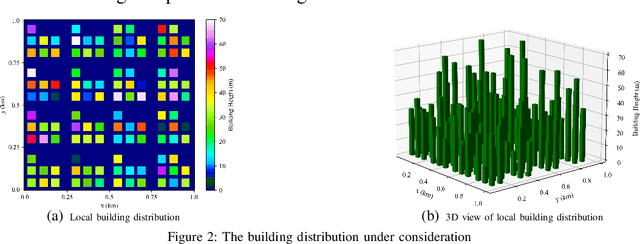

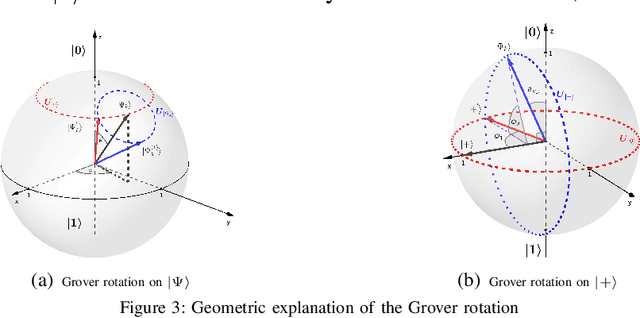

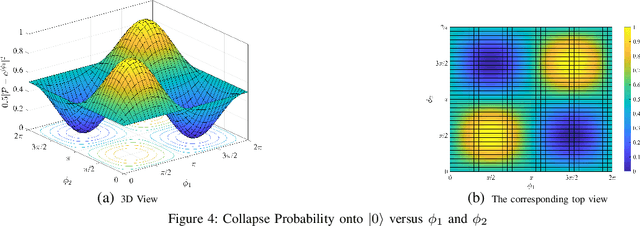

In a cellular-connected unmanned aerial vehicle (UAV) network, a minimization problem on the weighted sum of time cost and expected outage duration is considered. Taking advantage of the UAV's adjustable mobility, an intelligent UAV navigation approach is formulated to achieve this optimization goal. Specifically, after mapping the navigation task into a Markov decision process (MDP), a deep reinforcement learning (DRL) solution with a novel quantum-inspired experience replay (QiER) framework is proposed to help the UAV find the optimal flying direction within each time slot, and thus generate the designed trajectory towards the destination. By relating each experienced transition's importance to its associated quantum bit (qubit) and applying a Grover-iteration-based amplitude amplification technique, the proposed DRL-QiER solution can achieve a better trade-off between sampling priority and diversity. Numerical results demonstrate the effectiveness and superiority of the proposed DRL-QiER solution compared with several representative baselines.
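As a rough illustration of the objective described above, the following sketch evaluates a weighted sum of flight time and expected outage duration for one candidate trajectory. The function name, the per-slot outage probabilities, and the specific way the weight enters the sum are assumptions made for illustration; the abstract does not give the exact formulation.

```python
def weighted_cost(outage_probs, slot_duration=1.0, outage_weight=1.0):
    """Weighted sum of time cost and expected outage duration (illustrative).

    outage_probs: hypothetical per-slot outage probabilities along a trajectory.
    slot_duration: length of one time slot (assumed constant).
    outage_weight: assumed weight on the expected outage duration.
    """
    # Time cost: every slot spent flying contributes its full duration.
    time_cost = slot_duration * len(outage_probs)
    # Expected outage duration: slot duration scaled by the outage probability.
    expected_outage = slot_duration * sum(outage_probs)
    return time_cost + outage_weight * expected_outage


# Three slots of 2 s each; the UAV is expected to be in outage for 3 s in total.
print(weighted_cost([0.0, 0.5, 1.0], slot_duration=2.0, outage_weight=3.0))
```

A larger `outage_weight` favours longer detours through well-covered airspace, while a smaller one favours the shortest path to the destination.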

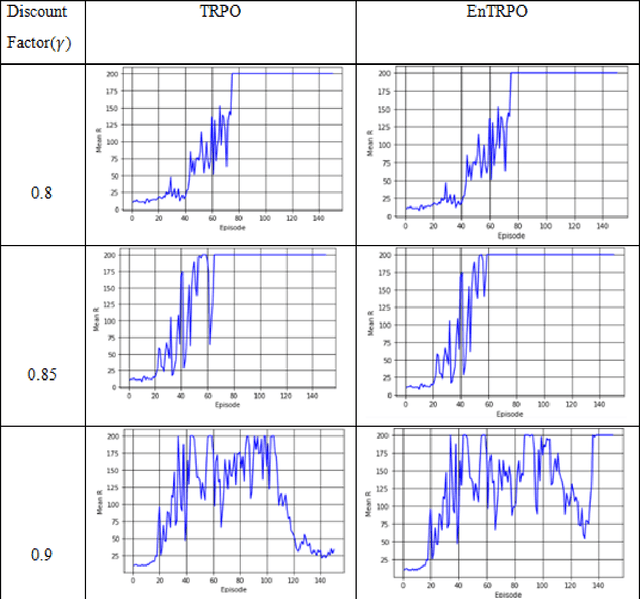

EnTRPO: Trust Region Policy Optimization Method with Entropy Regularization

Oct 26, 2021

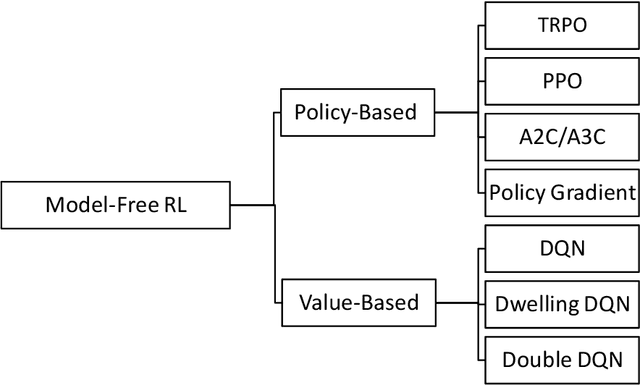

Trust Region Policy Optimization (TRPO) is a popular and empirically successful policy search algorithm in reinforcement learning (RL). It iteratively solves a surrogate problem that restricts consecutive policies to be close to each other. TRPO is an on-policy algorithm. On-policy methods bring many benefits, like the ability to gauge each resulting policy. However, they typically discard all the knowledge about the policies which existed before. In this work, we use a replay buffer to bring ideas from the off-policy learning setting to TRPO. Entropy regularization is usually used to improve policy optimization in reinforcement learning. It is thought to aid exploration and generalization by encouraging more random policy choices. We add an entropy regularization term to the advantage over π, accumulated over time steps, in TRPO. We call this update EnTRPO. Our experiments demonstrate that EnTRPO achieves better performance for controlling a Cart-Pole system compared with the original TRPO.
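One way to read "an entropy term added to the advantage, accumulated over time steps" is sketched below. This is not the paper's implementation: the discounted accumulation, the coefficient `beta`, and the function names are assumptions chosen only to make the idea concrete for a discrete action space.

```python
import numpy as np


def policy_entropy(action_probs):
    """Shannon entropy of each row of action probabilities."""
    probs = np.asarray(action_probs, dtype=float)
    # Small constant avoids log(0) for deterministic policies.
    return -np.sum(probs * np.log(probs + 1e-12), axis=-1)


def entropy_augmented_advantage(advantages, action_probs, beta=0.01, gamma=0.99):
    """Add a discounted, accumulated entropy bonus to per-step advantages.

    beta (bonus weight) and gamma (discount) are hypothetical hyperparameters.
    """
    advantages = np.asarray(advantages, dtype=float)
    entropies = policy_entropy(action_probs)
    bonus = np.zeros_like(advantages)
    running = 0.0
    # Accumulate entropy backwards in time, like a discounted return.
    for t in reversed(range(len(advantages))):
        running = entropies[t] + gamma * running
        bonus[t] = running
    return advantages + beta * bonus


adv = entropy_augmented_advantage(
    [0.0, 0.0], [[0.5, 0.5], [0.5, 0.5]], beta=1.0, gamma=1.0
)
print(adv)
```

With a uniform two-action policy each step contributes ln 2 of entropy, so earlier steps receive a larger accumulated bonus than later ones.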

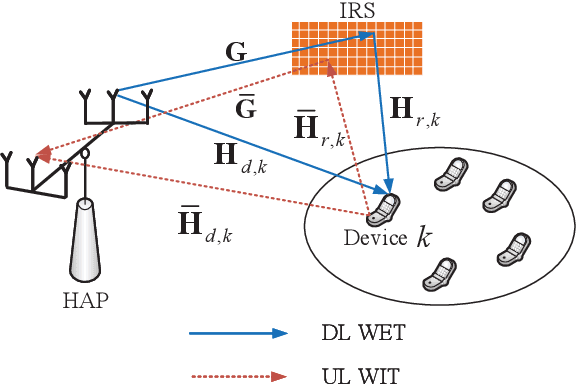

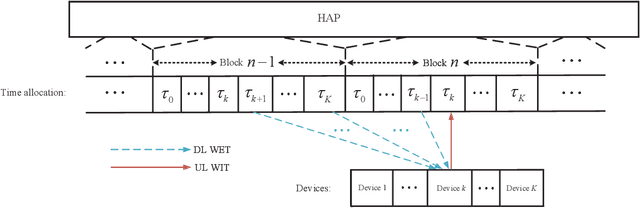

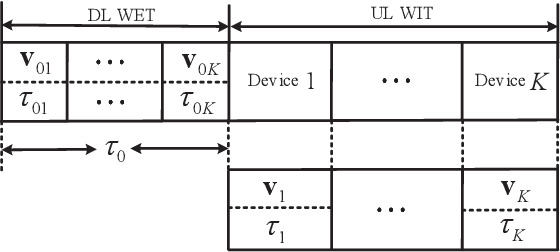

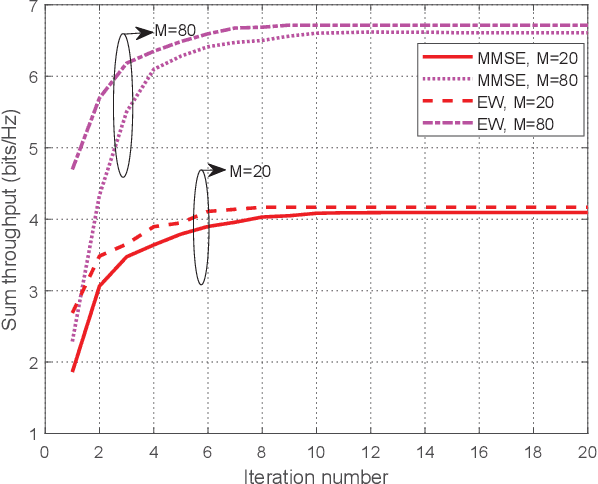

Throughput Maximization for IRS-Aided MIMO FD-WPCN with Non-Linear EH Model

Nov 28, 2021

This paper studies an intelligent reflecting surface (IRS)-aided multiple-input-multiple-output (MIMO) full-duplex (FD) wireless-powered communication network (WPCN), where a hybrid access point (HAP) operating in FD broadcasts energy signals to multiple devices for their energy harvesting (EH) in the downlink (DL) and meanwhile receives information signals from devices in the uplink (UL) with the help of an IRS. Taking into account the practical finite self-interference (SI) and the non-linear EH model, we formulate the weighted sum throughput maximization problem by jointly optimizing DL/UL time allocation, precoding matrices at devices, transmit covariance matrices at the HAP, and phase shifts at the IRS. Since the resulting optimization problem is non-convex, there are no standard methods to solve it optimally in general. To tackle this challenge, we first propose an element-wise (EW) based algorithm, where each IRS phase shift is alternately optimized in an iterative manner. To reduce the computational complexity, a minimum mean-square error (MMSE) based algorithm is proposed, where we transform the original problem into an equivalent form based on the MMSE method, which facilitates the design of an efficient iterative algorithm. In particular, the IRS phase shift optimization problem is recast as a second-order cone program (SOCP), where all the IRS phase shifts are simultaneously optimized. For comparison, we also study two suboptimal IRS beamforming configurations in simulations, namely partially dynamic IRS beamforming (PDBF) and static IRS beamforming (SBF), which strike a balance between the system performance and practical complexity.

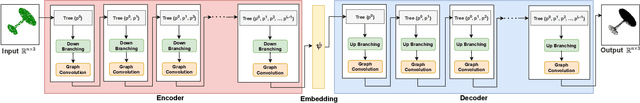

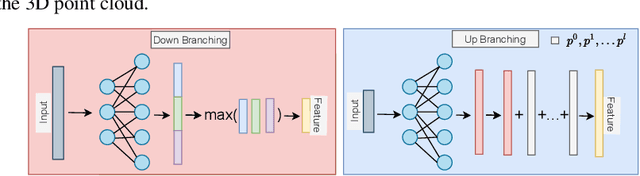

TreeGCN-ED: Encoding Point Cloud using a Tree-Structured Graph Network

Oct 11, 2021

Point clouds are an efficient way of representing and storing 3D geometric data. Deep learning algorithms on point clouds are time and memory efficient. Several methods such as PointNet and FoldingNet have been proposed for processing point clouds. This work proposes an autoencoder-based framework to generate robust embeddings for point clouds by utilizing hierarchical information using graph convolution. We perform multiple experiments to assess the quality of embeddings generated by the proposed encoder architecture and visualize the t-SNE map to highlight its ability to distinguish between different object classes. We further demonstrate the applicability of the proposed framework in applications such as 3D point cloud completion and single-image-based 3D reconstruction.

Representation learning for neural population activity with Neural Data Transformers

Aug 02, 2021

Neural population activity is theorized to reflect an underlying dynamical structure. This structure can be accurately captured using state space models with explicit dynamics, such as those based on recurrent neural networks (RNNs). However, using recurrence to explicitly model dynamics necessitates sequential processing of data, slowing real-time applications such as brain-computer interfaces. Here we introduce the Neural Data Transformer (NDT), a non-recurrent alternative. We test the NDT's ability to capture autonomous dynamical systems by applying it to synthetic datasets with known dynamics and data from monkey motor cortex during a reaching task well-modeled by RNNs. The NDT models these datasets as well as state-of-the-art recurrent models. Further, its non-recurrence enables 3.9ms inference, well within the loop time of real-time applications and more than 6 times faster than recurrent baselines on the monkey reaching dataset. These results suggest that an explicit dynamics model is not necessary to model autonomous neural population dynamics. Code: https://github.com/snel-repo/neural-data-transformers

Dual-view Snapshot Compressive Imaging via Optical Flow Aided Recurrent Neural Network

Sep 11, 2021

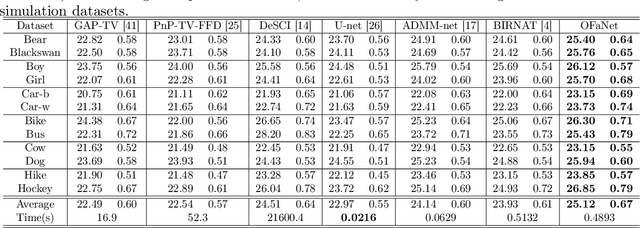

Dual-view snapshot compressive imaging (SCI) aims to capture videos from two fields of view (FoVs) using a 2D sensor (detector) in a single snapshot, achieving joint FoV and temporal compressive sensing, and thus enjoying the advantages of low bandwidth, low power, and low cost. However, it is challenging for existing model-based decoding algorithms to reconstruct each individual scene, as they usually require exhaustive parameter tuning and extremely long running times for large-scale data. In this paper, we propose an optical flow-aided recurrent neural network for dual-view video SCI systems, which provides high-quality decoding in seconds. Firstly, we develop a diversity amplification method to enlarge the differences between scenes of two FoVs, and design a deep convolutional neural network with dual branches to separate different scenes from the single measurement. Secondly, we integrate the bidirectional optical flow extracted from adjacent frames with the recurrent neural network to jointly reconstruct each video in a sequential manner. Extensive results on both simulation and real data demonstrate the superior performance of our proposed model in a short inference time. The code and data are available at https://github.com/RuiyingLu/OFaNet-for-Dual-view-SCI.

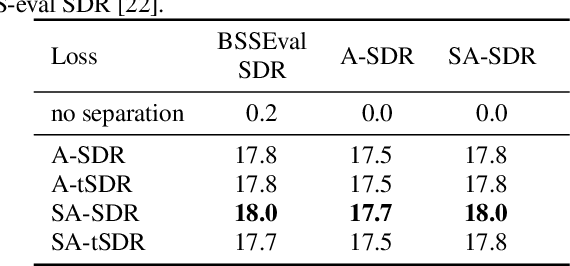

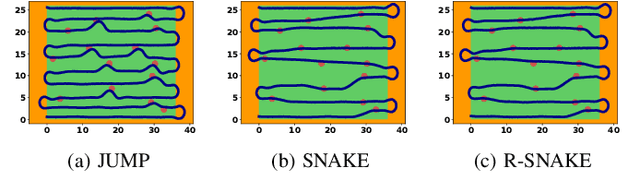

SA-SDR: A novel loss function for separation of meeting style data

Oct 29, 2021

Many state-of-the-art neural network-based source separation systems use the averaged Signal-to-Distortion Ratio (SDR) as a training objective function. The basic SDR is, however, undefined if the network reconstructs the reference signal perfectly or if the reference signal contains silence, e.g., when a two-output separator processes a single-speaker recording. Many modifications to the plain SDR have been proposed that trade off making the loss more robust against distorting its value. We propose to switch from a mean over the SDRs of each individual output channel to a global SDR over all output channels at the same time, which we call source-aggregated SDR (SA-SDR). This makes the loss robust against silence and perfect reconstruction as long as at least one reference signal is not silent. We experimentally show that our proposed SA-SDR is more stable than, and preferable to, other well-known modifications when processing meeting-style data that typically contains many silent or single-speaker regions.
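The difference between a per-channel SDR and the source-aggregated variant can be sketched in a few lines of NumPy. The sketch assumes the usual energy-ratio definition of SDR in decibels and aggregates signal and error energies over all channels before taking the ratio, as the abstract describes; the function names and array layout (channels by samples) are illustrative choices, not the authors' code.

```python
import numpy as np


def sdr(reference, estimate):
    """Plain SDR in dB for one channel: signal energy over error energy."""
    reference = np.asarray(reference, dtype=float)
    estimate = np.asarray(estimate, dtype=float)
    signal_energy = np.sum(reference ** 2)
    error_energy = np.sum((reference - estimate) ** 2)
    return 10.0 * np.log10(signal_energy / error_energy)


def sa_sdr(references, estimates):
    """Source-aggregated SDR: one global ratio over all output channels."""
    references = np.asarray(references, dtype=float)
    estimates = np.asarray(estimates, dtype=float)
    # Energies are summed over channels AND samples before the ratio is formed.
    signal_energy = np.sum(references ** 2)
    error_energy = np.sum((references - estimates) ** 2)
    return 10.0 * np.log10(signal_energy / error_energy)


# Two-output separator on a single-speaker recording: channel 1 is silent.
refs = np.array([[1.0, 0.0, 1.0, 0.0],
                 [0.0, 0.0, 0.0, 0.0]])
ests = refs + 0.1  # small constant error on every sample
print(sa_sdr(refs, ests))
```

For the silent channel, `sdr(refs[1], ests[1])` has zero signal energy and evaluates to negative infinity, which would dominate a mean over channels, whereas `sa_sdr` stays finite because the active channel contributes signal energy.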

Online Coverage Planning for an Autonomous Weed Mowing Robot with Curvature Constraints

Nov 19, 2021

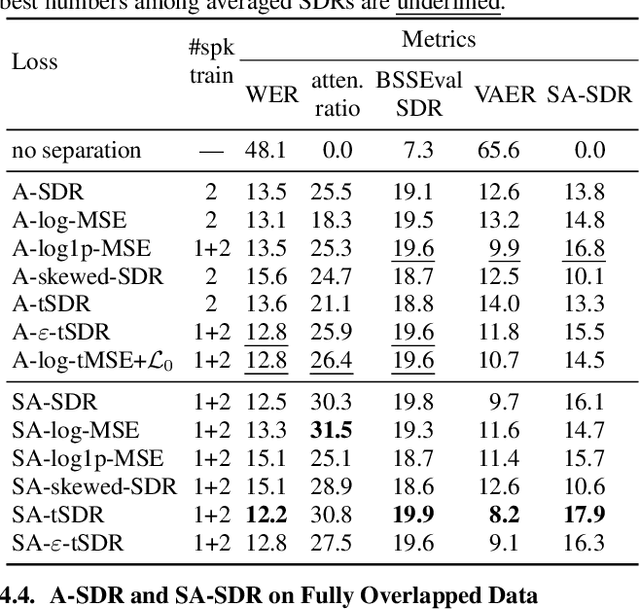

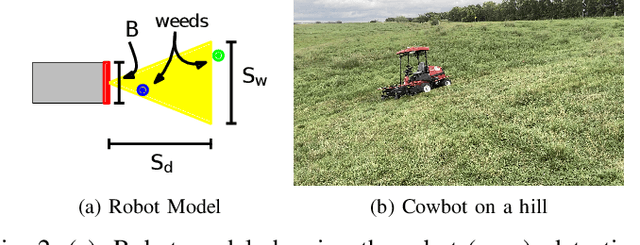

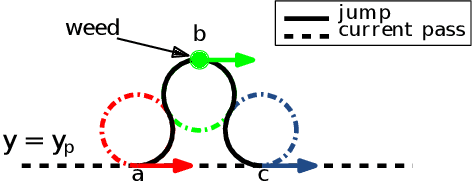

The land used for grazing cattle takes up about one-third of the land in the United States. These areas can be highly rugged. Yet, they need to be maintained to prevent weeds from taking over the nutritious grassland. This can be a daunting task, especially in the case of organic farming, since herbicides cannot be used. In this paper, we present the design of Cowbot, an autonomous weed mowing robot for pastures. Cowbot is an electric mower designed to operate in the rugged environments on cow pastures and provide a cost-effective method for weed control in organic farms. Path planning for the Cowbot is challenging since weed distribution on pastures is unknown. Given a limited field of view, online path planning is necessary to detect weeds and plan paths to mow them. We study the general online path planning problem for an autonomous mower with curvature and field of view constraints. We develop two online path planning algorithms that are able to utilize new information about weeds to optimize path length and ensure coverage. We deploy our algorithms on the Cowbot and perform field experiments to validate the suitability of our methods for real-time path planning. We also perform extensive simulation experiments which show that our algorithms result in up to 60% reduction in path length compared to baseline boustrophedon and random-search based coverage paths.
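For readers unfamiliar with the boustrophedon baseline mentioned above, the sketch below generates a back-and-forth ("lawnmower") coverage path over a rectangular grid. It is a simplified illustration on grid cells only: the function name and the grid discretization are assumptions, and the sketch ignores the curvature and field-of-view constraints that the paper's planners handle.

```python
def boustrophedon_path(rows, cols):
    """Visit every cell of a rows x cols grid in back-and-forth sweeps."""
    path = []
    for r in range(rows):
        # Even rows sweep left to right, odd rows sweep right to left,
        # so consecutive rows connect without a long return trip.
        columns = range(cols) if r % 2 == 0 else range(cols - 1, -1, -1)
        path.extend((r, c) for c in columns)
    return path


print(boustrophedon_path(2, 3))
# [(0, 0), (0, 1), (0, 2), (1, 2), (1, 1), (1, 0)]
```

Such a path covers the whole field regardless of where the weeds are, which is why an online planner that reacts to detected weeds can be substantially shorter.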

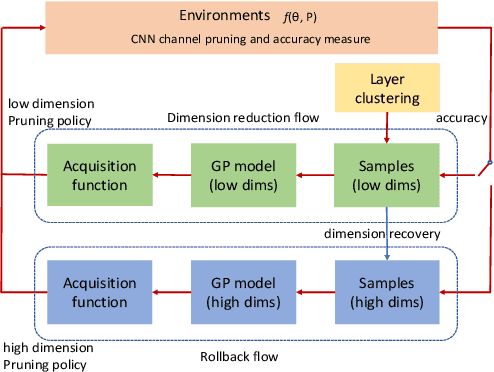

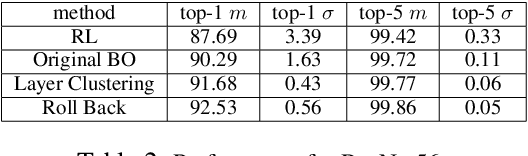

High-dimensional Bayesian Optimization for CNN Auto Pruning with Clustering and Rollback

Sep 22, 2021

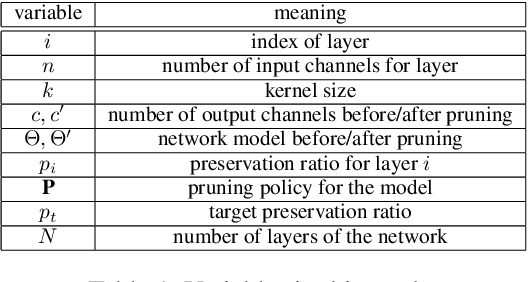

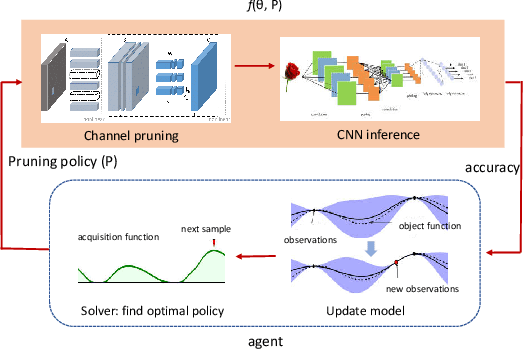

Pruning has been widely used to slim convolutional neural network (CNN) models to achieve a good trade-off between accuracy and model size so that the pruned models become feasible for power-constrained devices such as mobile phones. This process can be automated to avoid expensive manual effort and to explore a large pruning space automatically so that a high-performance pruning policy can be found efficiently. Nowadays, reinforcement learning (RL) and Bayesian optimization (BO)-based auto pruners are widely used due to their solid theoretical foundation, universality, and high compression quality. However, the RL agent suffers from long training times and high variance of results, while the BO agent is time-consuming for high-dimensional design spaces. In this work, we propose an enhanced BO agent to obtain significant acceleration for auto pruning in high-dimensional design spaces. To achieve this, a novel clustering algorithm is proposed to reduce the dimension of the design space to speed up the searching process. Then, a roll-back algorithm is proposed to recover the high-dimensional design space so that higher pruning accuracy can be obtained. We validate our proposed method on ResNet, MobileNet, and VGG models, and our experiments show that the proposed method significantly improves the accuracy of BO when pruning very deep CNN models. Moreover, our method achieves lower variance and shorter time than the RL-based counterpart.

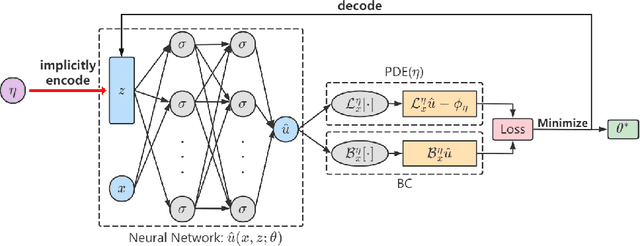

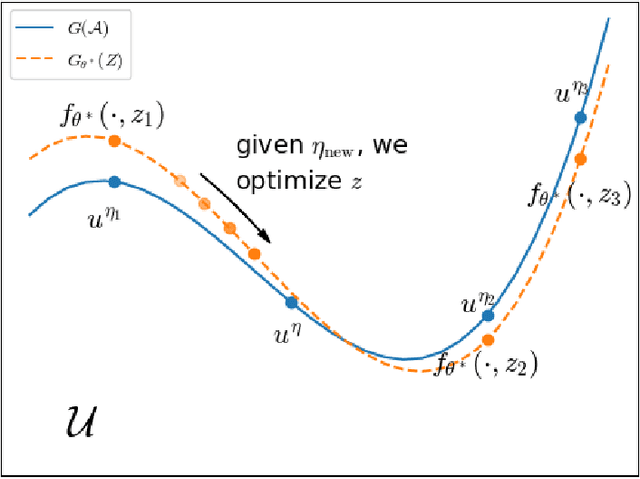

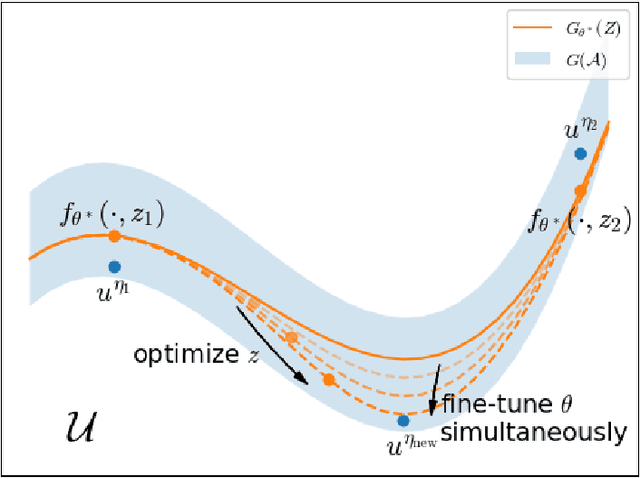

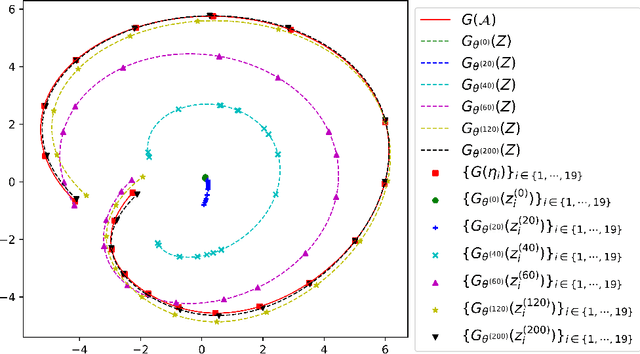

Meta-Auto-Decoder for Solving Parametric Partial Differential Equations

Nov 15, 2021

Partial Differential Equations (PDEs) are ubiquitous in many disciplines of science and engineering and notoriously difficult to solve. In general, closed-form solutions of PDEs are unavailable and numerical approximation methods are computationally expensive. The parameters of PDEs are variable in many applications, such as inverse problems, control and optimization, risk assessment, and uncertainty quantification. In these applications, our goal is to solve parametric PDEs rather than one instance of them. Our proposed approach, called Meta-Auto-Decoder (MAD), treats solving parametric PDEs as a meta-learning problem and utilizes the Auto-Decoder structure of DeepSDF (Park et al., 2019) to deal with different tasks/PDEs. Physics-informed losses induced from the PDE governing equations and boundary conditions are used as the training losses for different tasks. The goal of MAD is to learn a good model initialization that can generalize across different tasks and eventually enable unseen tasks to be learned faster. The inspiration for MAD comes from the (conjectured) low-dimensional structure of parametric PDE solutions, and we explain our approach from the perspective of manifold learning. Finally, we demonstrate the power of MAD through extensive numerical studies, including Burgers' equation, Laplace's equation and time-domain Maxwell's equations. MAD exhibits faster convergence without losing accuracy compared with other deep learning methods.
