"Time": models, code, and papers

Does MAML Only Work via Feature Re-use? A Data Centric Perspective

Dec 24, 2021

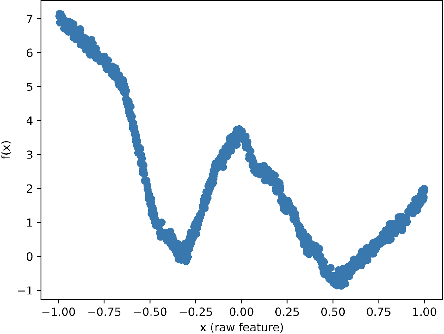

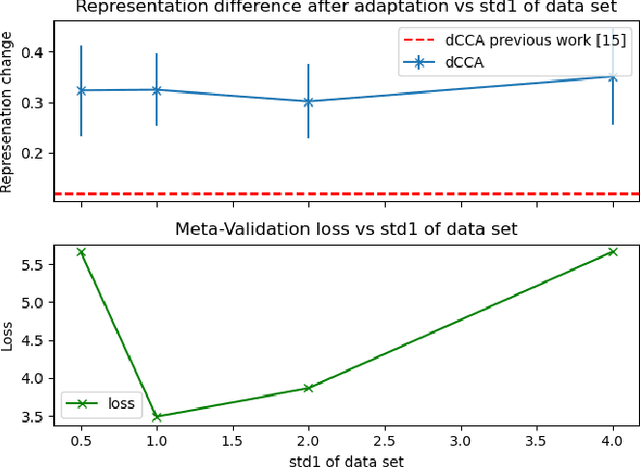

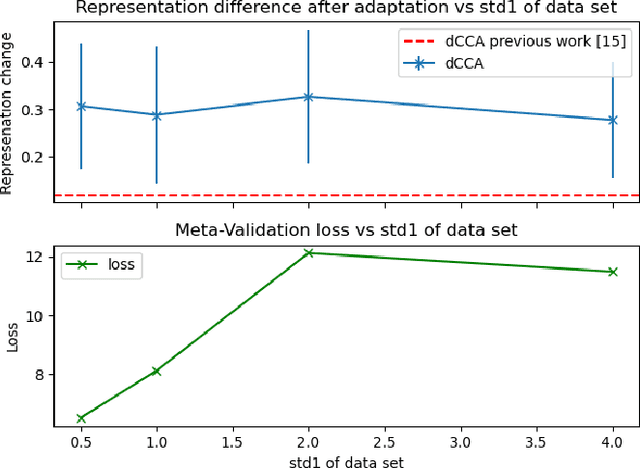

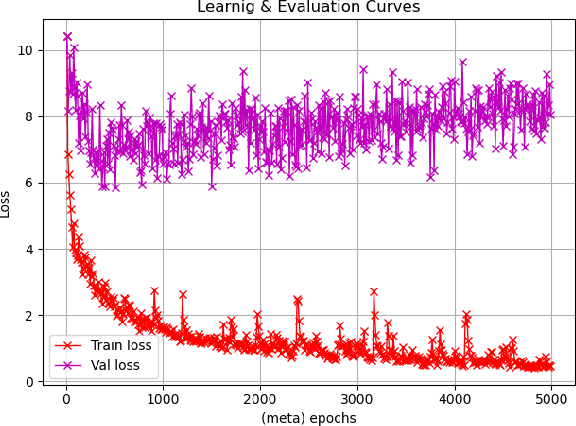

Recent work has suggested that a good embedding is all we need to solve many few-shot learning benchmarks. Furthermore, other work has strongly suggested that Model Agnostic Meta-Learning (MAML) also works via this same method - by learning a good embedding. These observations highlight our lack of understanding of what meta-learning algorithms are doing and when they work. In this work, we provide empirical results that shed some light on how meta-learned MAML representations function. In particular, we identify three interesting properties: 1) in contrast to previous work, we show that it is possible to define a family of synthetic benchmarks that result in a low degree of feature re-use - suggesting that current few-shot learning benchmarks might not have the properties needed for the success of meta-learning algorithms; 2) meta-overfitting occurs when the number of classes (or concepts) is finite, and this issue disappears once the task has an unbounded number of concepts (e.g., online learning); 3) more adaptation at meta-test time with MAML does not necessarily result in a significant representation change or even an improvement in meta-test performance - even when training on our proposed synthetic benchmarks. We suggest that to understand meta-learning algorithms better, we must go beyond tracking only absolute performance and, in addition, formally quantify the degree of meta-learning and track both metrics together. Reporting results in future work this way will help us identify the sources of meta-overfitting more accurately and help us design more flexible meta-learning algorithms that learn beyond fixed feature re-use. Finally, we conjecture that the core challenge of re-thinking meta-learning is in the design of few-shot learning data sets and benchmarks - rather than in the algorithms, as suggested by previous work.

MARTINI: Smart Meter Driven Estimation of HVAC Schedules and Energy Savings Based on WiFi Sensing and Clustering

Oct 17, 2021

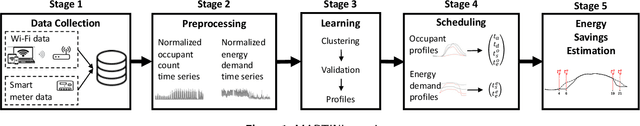

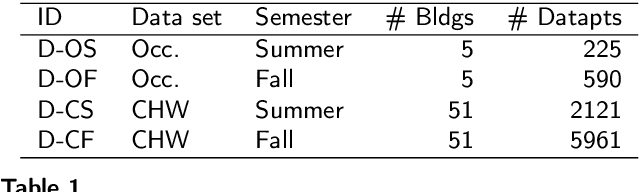

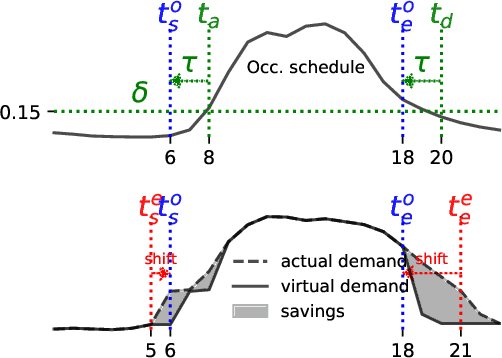

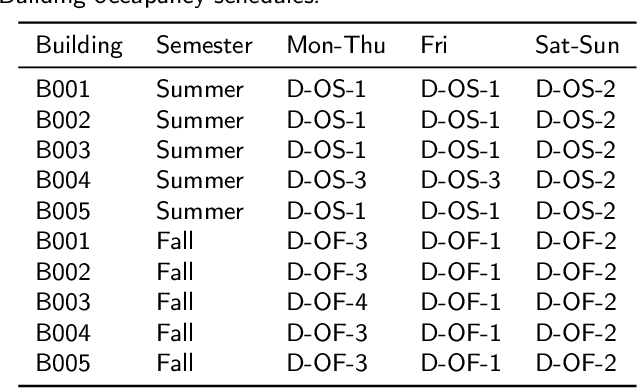

HVAC systems account for a significant portion of building energy use. Nighttime setback scheduling is an energy conservation measure in which cooling setpoints are increased and heating setpoints are decreased during unoccupied periods to obtain energy savings. However, knowledge of a building's real occupancy is required to maximize the success of this measure. In addition, there is a need for a scalable way to estimate the energy savings potential of energy conservation measures that is not limited by building-specific parameters and experimental or simulation modeling investments. Here, we propose MARTINI, a sMARt meTer drIveN estImation of occupant-derived HVAC schedules and energy savings that leverages the ubiquity of energy smart meters and WiFi infrastructure in commercial buildings. We estimate the schedules by clustering WiFi-derived occupancy profiles, and we estimate energy savings by shifting the ramp-up and setback times observed in typical/measured load profiles obtained by clustering smart meter energy profiles. Our case-study results with five buildings over seven months show an average of 8.1%-10.8% (summer) and 0.2%-5.9% (fall) chilled water energy savings when HVAC system operation is aligned with occupancy. We validate our method with results from building energy performance simulation (BEPS) and find that the estimated average savings of MARTINI are within 0.9%-2.4% of the BEPS predictions. In the absence of occupancy information, we can still estimate potential savings from increasing ramp-up time and decreasing setback start time. In 51 academic buildings, we find savings potentials of 1%-5%.

A Bio-inspired Modular System for Humanoid Posture Control

Dec 04, 2021

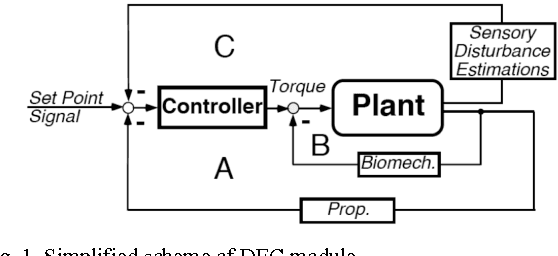

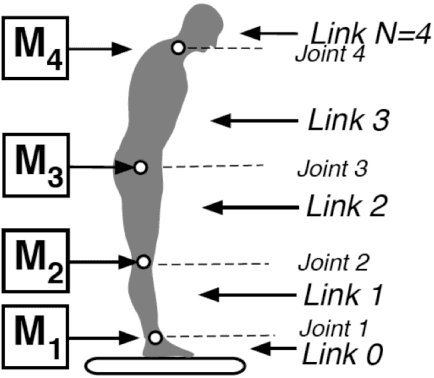

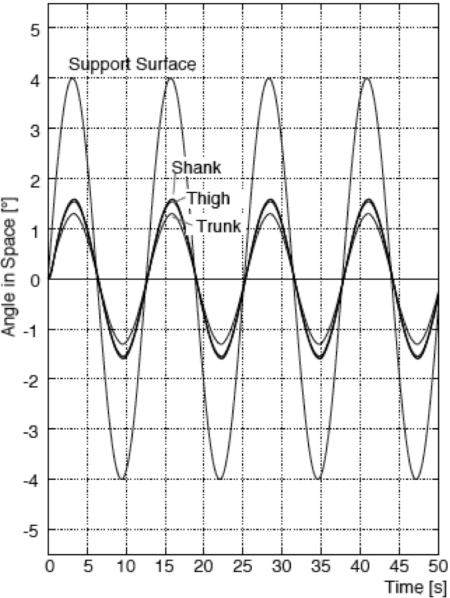

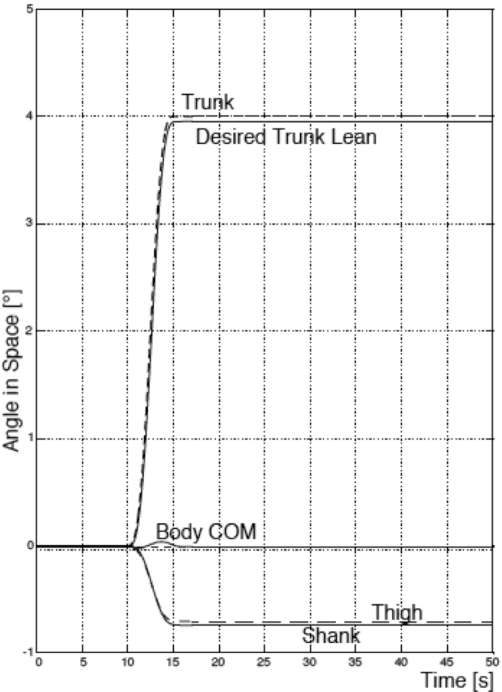

Bio-inspired sensorimotor control systems may be appealing to roboticists who try to solve problems of multi-DOF humanoids and human-robot interactions. This paper presents a simple posture control concept from neuroscience, called the disturbance estimation and compensation (DEC) concept [1]. It provides human-like mechanical compliance due to low loop gain, tolerance of time delays, and automatic adjustment to changes in external disturbance scenarios. Its outstanding feature is that it uses feedback of multisensory disturbance estimates rather than 'raw' sensory signals for disturbance compensation. After proof-of-principle tests in 1 and 2 DOF posture control robots, we present here a generalized DEC control module for multi-DOF robots. In the control layout, one DEC module controls one DOF (modular control architecture). Modules of neighboring joints are synergistically interconnected using vestibular information in combination with joint angle and torque signals. These sensory interconnections allow each module to control the kinematics of the more distal links as if they were a single link. This modular design makes the complexity of the robot control scale linearly with the number of DOFs and gives high error robustness compared to monolithic control architectures. The presented concept uses Matlab/Simulink (The MathWorks, Natick, USA) for both model simulation and robot control and will be available as an open library.

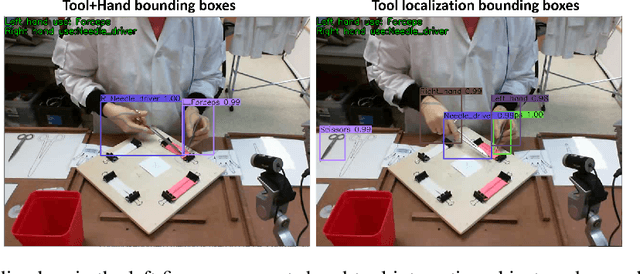

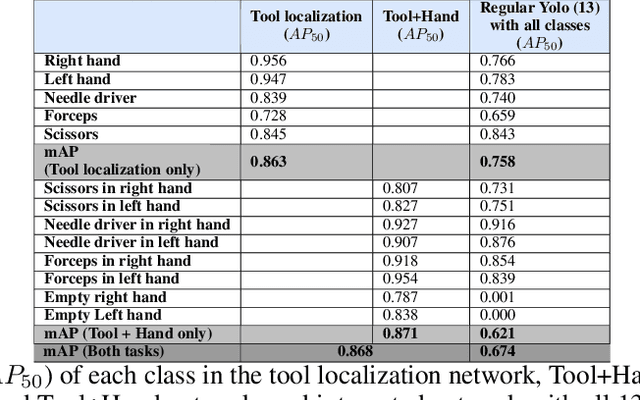

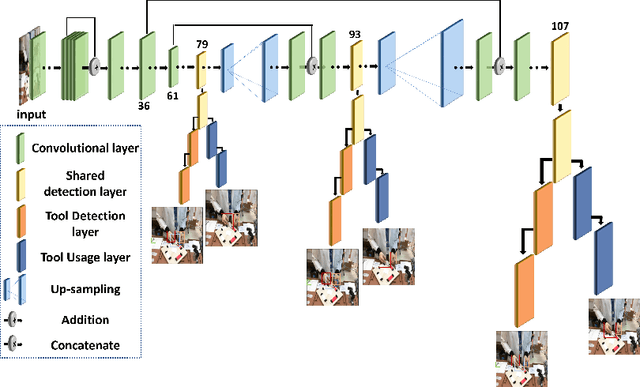

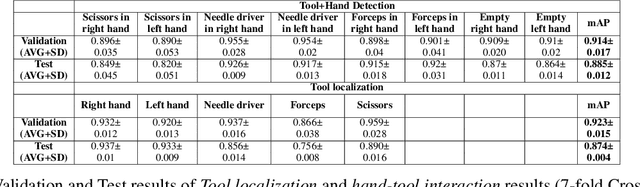

Video-based fully automatic assessment of open surgery suturing skills

Oct 26, 2021

The goal of this study was to develop a new, reliable open surgery suturing simulation system for training medical students in situations where resources are limited or in a domestic setup. Specifically, we developed an algorithm that localizes tools and hands and identifies the interactions between them from simple webcam video data, and that calculates motion metrics for the assessment of surgical skill. Twenty-five participants performed multiple suturing tasks using our simulator. The YOLO network was modified into a multi-task network for tool localization and tool-hand interaction detection. This was accomplished by splitting the YOLO detection heads so that they supported both tasks with minimal addition to computer run-time. Furthermore, motion metrics were calculated from the output of the system. These metrics included traditional metrics such as time and path length as well as new metrics assessing the technique participants use for holding the tools. The performance of the dual-task network was similar to that of two separate networks, while its computational load was only slightly higher than that of a single network. In addition, the motion metrics showed significant differences between experts and novices. While video capture is an essential part of minimally invasive surgery, it is not an integral component of open surgery. Thus, new algorithms that focus on the unique challenges of open surgery videos are required. In this study, a dual-task network was developed to solve both a localization task and a hand-tool interaction task. The dual network may be easily expanded to a multi-task network, which may be useful for images with multiple layers and for evaluating the interaction between these different layers.
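The traditional motion metrics named above, time and path length, can be illustrated with a minimal sketch. It assumes the detector yields one (x, y) tool-center position per video frame; the function names, the `fps` parameter, and the sample track are hypothetical and not taken from the study.

```python
import math

def path_length(points):
    """Sum of Euclidean distances between consecutive (x, y) positions."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))

def task_time(num_frames, fps):
    """Duration in seconds of a clip with num_frames frames at fps frames/s."""
    return num_frames / fps

# Hypothetical tool-center track (pixels), one entry per frame.
track = [(0.0, 0.0), (3.0, 4.0), (3.0, 4.0), (6.0, 8.0)]
print(path_length(track))              # total distance travelled by the tool
print(task_time(len(track), fps=2.0))  # clip duration in seconds
```

A track with a stationary frame, as in the third entry here, adds nothing to the path length but still counts toward the time metric.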

Learning to Solve Combinatorial Optimization Problems on Real-World Graphs in Linear Time

Jun 12, 2020

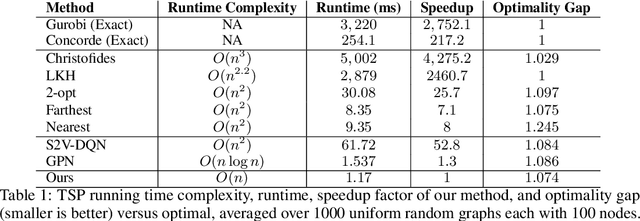

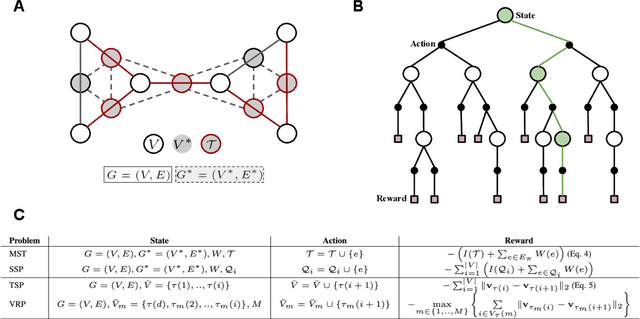

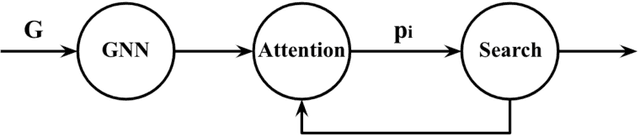

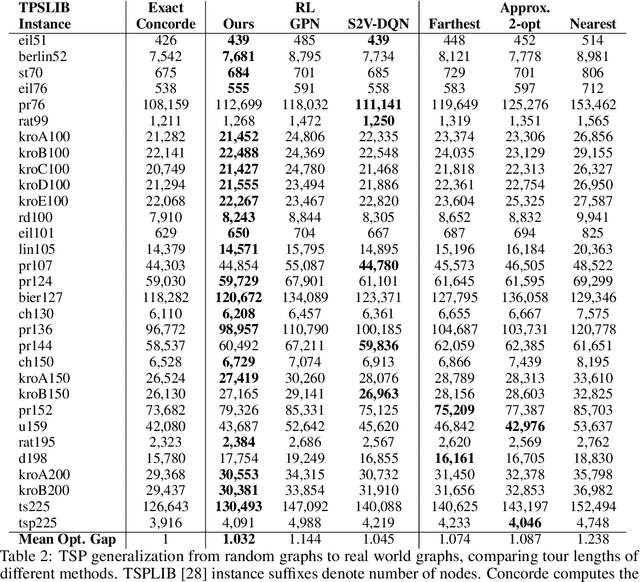

Combinatorial optimization algorithms for graph problems are usually designed afresh for each new problem with careful attention by an expert to the problem structure. In this work, we develop a new framework to solve any combinatorial optimization problem over graphs that can be formulated as a single player game defined by states, actions, and rewards, including minimum spanning tree, shortest paths, traveling salesman problem, and vehicle routing problem, without expert knowledge. Our method trains a graph neural network using reinforcement learning on an unlabeled training set of graphs. The trained network then outputs approximate solutions to new graph instances in linear running time. In contrast, previous approximation algorithms or heuristics tailored to NP-hard problems on graphs generally have at least quadratic running time. We demonstrate the applicability of our approach on both polynomial and NP-hard problems with optimality gaps close to 1, and show that our method is able to generalize well: (i) from training on small graphs to testing on large graphs; (ii) from training on random graphs of one type to testing on random graphs of another type; and (iii) from training on random graphs to running on real world graphs.
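To make the single-player game formulation concrete, here is a minimal sketch for the minimum spanning tree case. The state is the set of nodes already in the tree, an action adds one edge that leaves the tree, and the reward is the negative edge weight. The `greedy_policy` below is only a stand-in assumption for the trained graph neural network described in the abstract; all names and the example graph are hypothetical.

```python
def play_mst_game(graph, start, policy):
    """Play one episode on an undirected graph {node: {neighbor: weight}}."""
    visited = {start}              # state: nodes already connected
    tree, total_reward = [], 0.0
    while len(visited) < len(graph):
        # Legal actions: edges from the current tree to an unvisited node.
        actions = [(u, v, w) for u in visited
                   for v, w in graph[u].items() if v not in visited]
        if not actions:
            break                  # graph is disconnected; episode ends early
        u, v, w = policy(visited, actions)
        visited.add(v)
        tree.append((u, v))
        total_reward -= w          # reward: negative weight of the chosen edge
    return tree, total_reward

def greedy_policy(state, actions):
    """Stand-in policy (assumption): always pick the cheapest legal edge."""
    return min(actions, key=lambda a: a[2])

graph = {
    "a": {"b": 1, "c": 4},
    "b": {"a": 1, "c": 2, "d": 5},
    "c": {"a": 4, "b": 2, "d": 3},
    "d": {"b": 5, "c": 3},
}
tree, reward = play_mst_game(graph, "a", greedy_policy)
print(tree, reward)
```

With the greedy stand-in, the episode reproduces Prim's algorithm. A learned policy would plug into the same `policy(state, actions)` slot.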

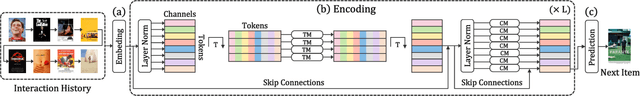

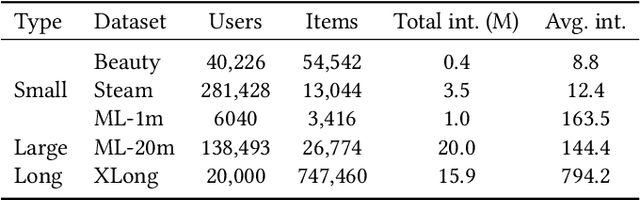

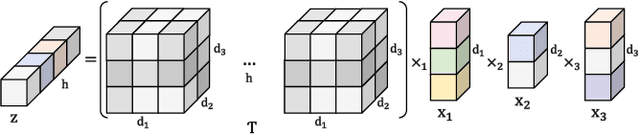

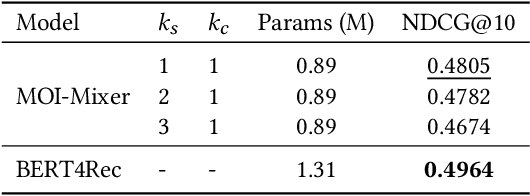

MOI-Mixer: Improving MLP-Mixer with Multi Order Interactions in Sequential Recommendation

Aug 17, 2021

Successful sequential recommendation systems rely on accurately capturing the user's short-term and long-term interests. Although Transformer-based models have achieved state-of-the-art performance in the sequential recommendation task, they generally require memory and time that are quadratic in the sequence length, making it difficult to extract the long-term interests of users. On the other hand, Multi-Layer Perceptron (MLP)-based models, renowned for their linear memory and time complexity, have recently shown competitive results compared to Transformers in various tasks. Given the availability of a massive amount of user behavior history, the linear memory and time complexity of MLP-based models make them a promising alternative to explore in the sequential recommendation task. To this end, we adopted MLP-based models in sequential recommendation but consistently observed that MLP-based methods perform worse than Transformer-based ones despite their computational benefits. In our experiments, we observed that introducing explicit high-order interactions to MLP layers mitigates this performance gap. In response, we propose the Multi-Order Interaction (MOI) layer, which is capable of expressing an arbitrary order of interactions within the inputs while maintaining the memory and time complexity of the MLP layer. By replacing the MLP layer with the MOI layer, our model was able to achieve performance comparable to Transformer-based models while retaining the computational benefits of MLP-based models.

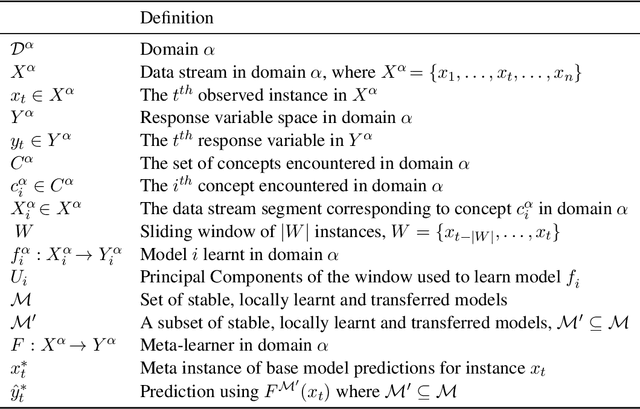

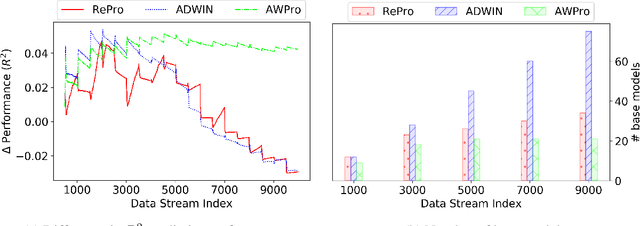

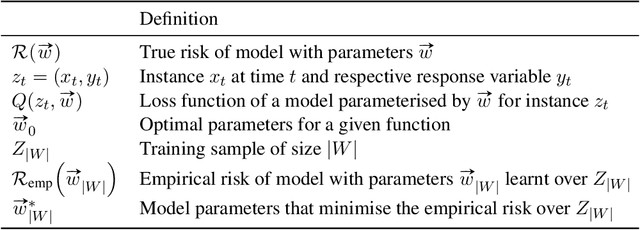

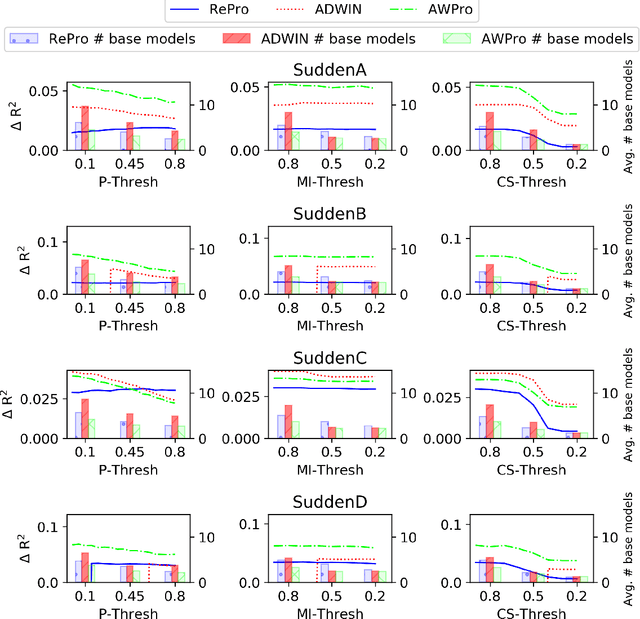

Conceptually Diverse Base Model Selection for Meta-Learners in Concept Drifting Data Streams

Nov 29, 2021

Meta-learners and ensembles aim to combine a set of relevant yet diverse base models to improve predictive performance. However, determining an appropriate set of base models is challenging, especially in online environments where the underlying distribution of data can change over time. In this paper, we present a novel approach for estimating the conceptual similarity of base models, which is calculated using the Principal Angles (PAs) between their underlying subspaces. We propose two methods that use conceptual similarity as a metric to obtain a relevant yet diverse subset of base models: (i) parameterised threshold culling and (ii) parameterless conceptual clustering. We evaluate these methods against thresholding using common ensemble pruning metrics, namely predictive performance and Mutual Information (MI), in the context of online Transfer Learning (TL), using both synthetic and real-world data. Our results show that conceptual similarity thresholding has a reduced computational overhead, and yet yields comparable predictive performance to thresholding using predictive performance and MI. Furthermore, conceptual clustering achieves similar predictive performances without requiring parameterisation, and achieves this with lower computational overhead than thresholding using predictive performance and MI when the number of base models becomes large.

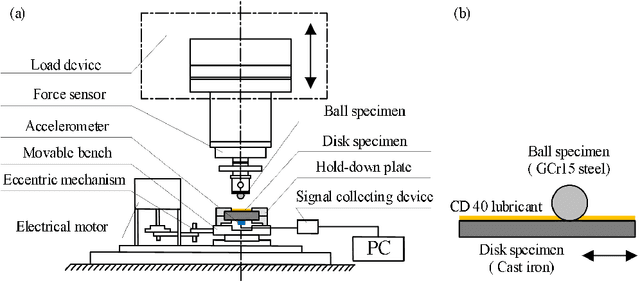

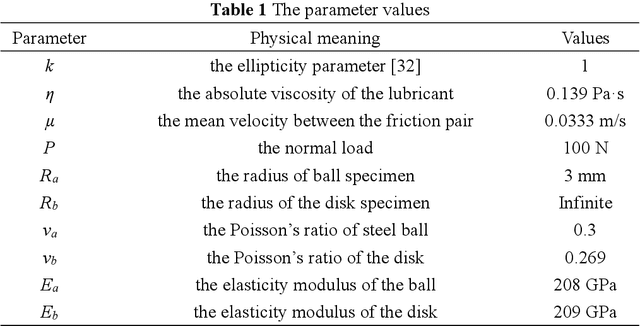

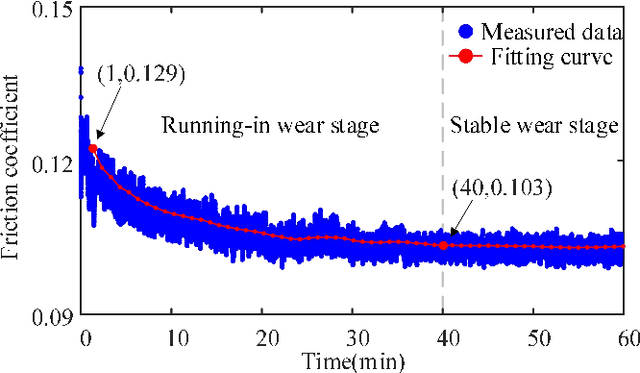

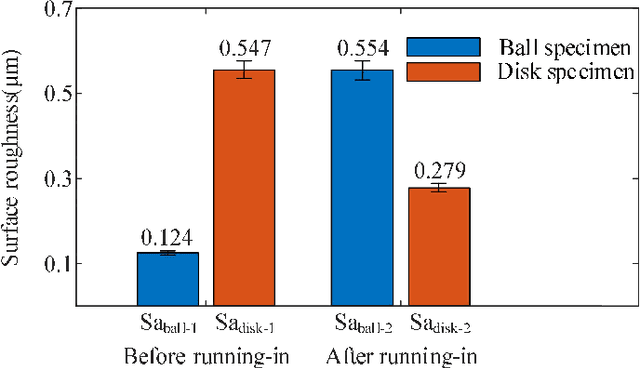

Experimental Investigation on the Friction-induced Vibration with Periodic Characteristics in a Running-in Process under Lubrication

Nov 24, 2021

This paper investigated friction-induced vibration (FIV) behavior during the running-in process under oil lubrication. The FIV signal with periodic characteristics under lubrication was identified with the help of the squeal signal induced in an oil-free wear experiment and then extracted by the harmonic wavelet packet transform (HWPT). The variation of the FIV signal from the running-in wear stage to the steady wear stage was studied through its root mean square (RMS) values. The results indicate that the time-frequency characteristics of the FIV signals evolve with the wear process and can reflect the wear stages of the friction pairs. The RMS evolution of the FIV signal follows the same trend as the composite surface roughness and demonstrates that the friction pair goes through the running-in wear stage and the steady wear stage. Therefore, the FIV signal with periodic characteristics can describe the evolution of the running-in process and distinguish the running-in wear stage from the steady wear stage of the friction pair.
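The RMS tracking described above can be sketched as follows. This is only an illustration of computing RMS over consecutive windows of an already extracted signal; the HWPT extraction step is not shown, and the window length and sample values are hypothetical.

```python
import math

def rms(samples):
    """Root mean square of a non-empty sequence of samples."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))

def windowed_rms(signal, window):
    """RMS of each consecutive, non-overlapping window of the signal."""
    return [rms(signal[i:i + window])
            for i in range(0, len(signal) - window + 1, window)]

# Hypothetical signal whose amplitude drops between two stages.
signal = [3.0, -3.0, 3.0, -3.0, 1.0, -1.0, 1.0, -1.0]
print(windowed_rms(signal, window=4))
```

Plotting the windowed values against time gives one RMS trend curve that can then be compared with a roughness measurement.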

Robust Federated Learning via Over-The-Air Computation

Nov 11, 2021

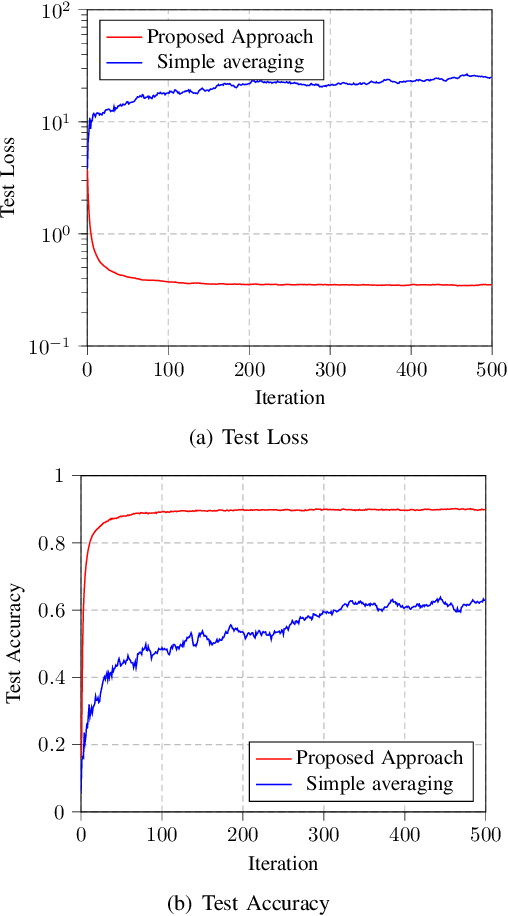

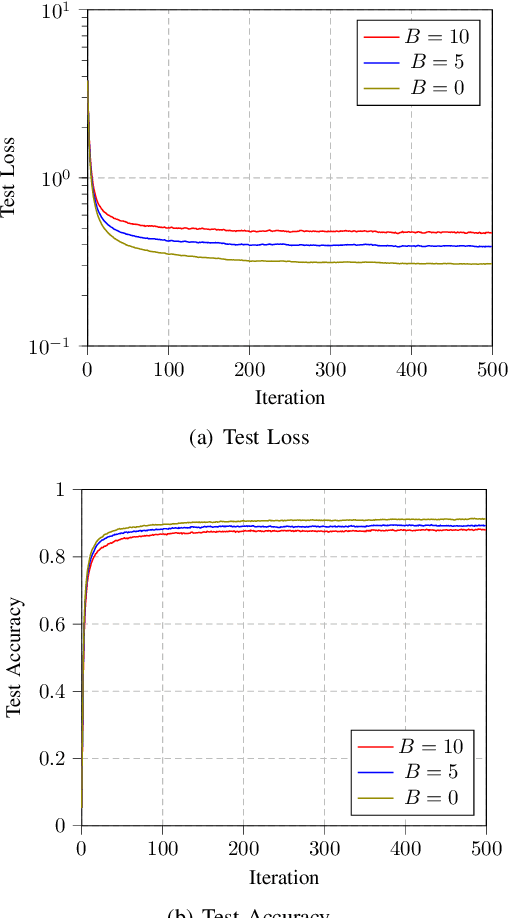

This paper investigates the robustness of over-the-air federated learning to Byzantine attacks. The simple averaging of the model updates via over-the-air computation makes the learning task vulnerable to random or intentional modifications of the local model updates by malicious clients. We propose a transmission and aggregation framework that is robust to such attacks while preserving the benefits of over-the-air computation for federated learning. In the proposed robust federated learning scheme, the participating clients are randomly divided into groups and a transmission time slot is allocated to each group. The parameter server aggregates the results of the different groups using a robust aggregation technique and conveys the result to the clients for another training round. We also analyze the convergence of the proposed algorithm. Numerical simulations confirm the robustness of the proposed approach to Byzantine attacks.
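The grouping scheme can be sketched roughly as below. Each group's over-the-air transmission is modeled as a plain average of its members' updates, and the server combines the group averages with a coordinate-wise median. The median is an assumption chosen for illustration, since the abstract does not name the robust aggregation technique; the function name and the sample updates are hypothetical.

```python
import random
import statistics

def robust_aggregate(updates, num_groups, seed=0):
    """Randomly group client updates, average per group, take the median."""
    if not 0 < num_groups <= len(updates):
        raise ValueError("need between 1 and len(updates) groups")
    rng = random.Random(seed)
    order = list(range(len(updates)))
    rng.shuffle(order)                       # random assignment to groups
    groups = [order[g::num_groups] for g in range(num_groups)]
    dim = len(updates[0])
    # One average per group stands in for one over-the-air time slot.
    group_means = [
        [sum(updates[i][d] for i in group) / len(group) for d in range(dim)]
        for group in groups
    ]
    # Coordinate-wise median across groups (assumed robust aggregator).
    return [statistics.median(m[d] for m in group_means) for d in range(dim)]

honest = [[1.0, 2.0] for _ in range(8)]
byzantine = [[1000.0, -1000.0]]              # one malicious client
updates = honest + byzantine
print(robust_aggregate(updates, num_groups=3))
```

In this toy case a plain average over all nine clients would be pulled far from the honest value, whereas only one of the three group averages is corrupted, so the median ignores it.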

Wireless Sensing System Applicable to Forest Fire Detection

Nov 24, 2021

In this research work, a hardware and software system is developed that uses wireless sensors to monitor environmental variables such as temperature, gas concentration and luminosity in order to detect forest fires. LoRa technology was used for the wireless sensor network, with a communication range that can reach on average up to 5 km in urban areas and 10 km in rural areas. The developed system also has an integrated web application (dashboard) that collects data in real time from the wireless sensors, which together form the sensor module, also called the device. This data is then presented on a map together with the position of each sensor module. The developed system was tested in practical experiments that used flames, gases and lighting to simulate the occurrence of fires. The tests showed that the developed hardware/software system is feasible for detecting fires in the simulated scenarios. The research is therefore promising and may advance in the future toward the detection of real fires.
