"Time": models, code, and papers

End-to-End Deep Learning of Lane Detection and Path Prediction for Real-Time Autonomous Driving

Feb 09, 2021

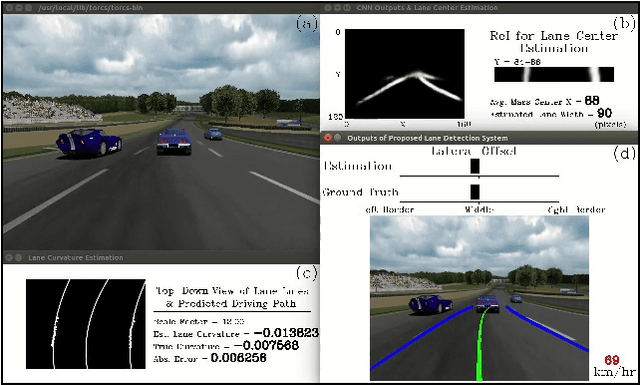

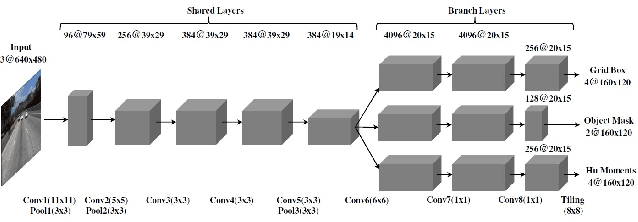

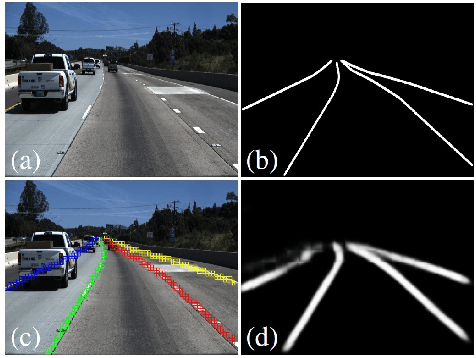

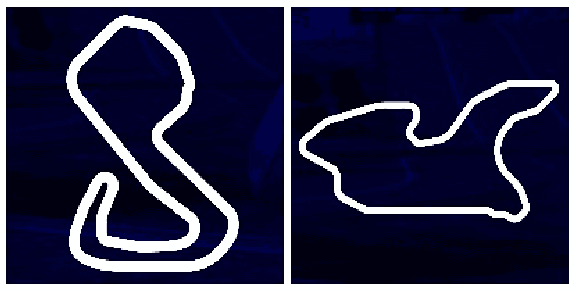

We propose an end-to-end three-task convolutional neural network (3TCNN) for lane detection and road recognition, with two regression branches (bounding boxes and Hu moments) and one classification branch (object masks). The Hu-moment regressor performs lane localization and road guidance using local and global Hu moments of segmented lane objects, respectively. Based on 3TCNN, we then propose lateral offset and path prediction (PP) algorithms to form an integrated model (3TCNN-PP) that can predict the driving path with dynamic estimation of lane centerline and path curvature for real-time autonomous driving. We also develop a CNN-PP simulator that can be used to train a CNN with real or artificial traffic images, test it with artificial images, quantify its dynamic errors, and visualize its qualitative performance. Simulation results show that 3TCNN-PP is comparable to related CNNs and better than a previous CNN-PP. The code, annotated data, and simulation videos of this work can be found on our website for further research on NN-PP algorithms of autonomous driving.
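As a rough illustration of the kind of quantity a Hu-moment regressor targets, the sketch below computes the first Hu invariant of a binary mask with NumPy. The function name `first_hu_moment` and the toy rectangular mask are assumptions made for this example; they are not taken from the 3TCNN code, and the paper's local and global moments may be defined differently.

```python
import numpy as np

def first_hu_moment(mask):
    """First Hu invariant (eta20 + eta02) of a non-empty binary mask."""
    ys, xs = np.nonzero(mask)           # pixel coordinates of the object
    m00 = float(len(xs))                # zeroth moment = object area in pixels
    xc, yc = xs.mean(), ys.mean()       # centroid
    mu20 = ((xs - xc) ** 2).sum()       # second-order central moments
    mu02 = ((ys - yc) ** 2).sum()
    # Normalized central moments: eta_pq = mu_pq / m00^(1 + (p + q) / 2)
    return (mu20 + mu02) / m00 ** 2

# Hypothetical "lane segment": a 3 x 10 rectangle inside a 20 x 20 image
mask = np.zeros((20, 20), dtype=bool)
mask[5:8, 2:12] = True

phi = first_hu_moment(mask)
phi_rotated = first_hu_moment(np.rot90(mask))              # 90-degree rotation
phi_shifted = first_hu_moment(np.roll(mask, 4, axis=0))    # translation
```

The value is unchanged under translation and rotation of the mask, which is what makes Hu moments usable as a shape descriptor.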

A Priori Denoising Strategies for Sparse Identification of Nonlinear Dynamical Systems: A Comparative Study

Jan 29, 2022

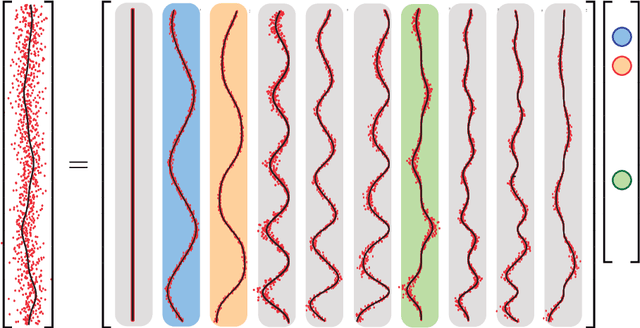

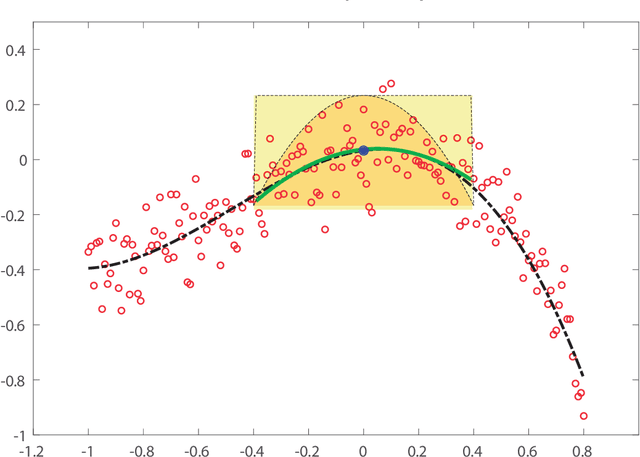

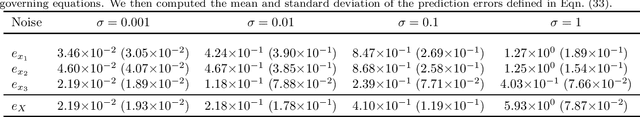

In recent years, identification of nonlinear dynamical systems from data has become increasingly popular. Sparse regression approaches, such as Sparse Identification of Nonlinear Dynamics (SINDy), fostered the development of novel governing equation identification algorithms assuming the state variables are known a priori and the governing equations lend themselves to sparse, linear expansions in a (nonlinear) basis of the state variables. In the context of the identification of governing equations of nonlinear dynamical systems, one faces the problem of identifiability of model parameters when state measurements are corrupted by noise. Measurement noise affects the stability of the recovery process yielding incorrect sparsity patterns and inaccurate estimation of coefficients of the governing equations. In this work, we investigate and compare the performance of several local and global smoothing techniques to a priori denoise the state measurements and numerically estimate the state time-derivatives to improve the accuracy and robustness of two sparse regression methods to recover governing equations: Sequentially Thresholded Least Squares (STLS) and Weighted Basis Pursuit Denoising (WBPDN) algorithms. We empirically show that, in general, global methods, which use the entire measurement data set, outperform local methods, which employ a neighboring data subset around a local point. We additionally compare Generalized Cross Validation (GCV) and Pareto curve criteria as model selection techniques to automatically estimate near optimal tuning parameters, and conclude that Pareto curves yield better results. The performance of the denoising strategies and sparse regression methods is empirically evaluated through well-known benchmark problems of nonlinear dynamical systems.
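For readers unfamiliar with sequentially thresholded least squares, a minimal sketch follows. It assumes a noise-free toy system dx/dt = -2x, a polynomial basis, and a hypothetical threshold of 0.1; none of these choices come from the paper, and no denoising step is included.

```python
import numpy as np

def stls(theta, dxdt, threshold=0.1, max_iter=10):
    """Sparse solution xi of theta @ xi ~ dxdt by thresholding and refitting."""
    xi = np.linalg.lstsq(theta, dxdt, rcond=None)[0]
    for _ in range(max_iter):
        small = np.abs(xi) < threshold
        xi[small] = 0.0                       # prune small coefficients
        for k in range(dxdt.shape[1]):        # refit each state dimension
            keep = ~small[:, k]
            if keep.any():
                xi[keep, k] = np.linalg.lstsq(
                    theta[:, keep], dxdt[:, k], rcond=None
                )[0]
    return xi

# Toy data (assumption): x(t) = exp(-2 t), so dx/dt = -2 x exactly
t = np.linspace(0.0, 2.0, 200)
x = np.exp(-2.0 * t)
dxdt = (-2.0 * x).reshape(-1, 1)

# Candidate basis functions: 1, x, x^2, x^3
theta = np.column_stack([np.ones_like(x), x, x**2, x**3])
xi = stls(theta, dxdt)
```

With noisy measurements, `dxdt` would have to be estimated numerically, which is where the smoothing strategies compared in the abstract come in.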

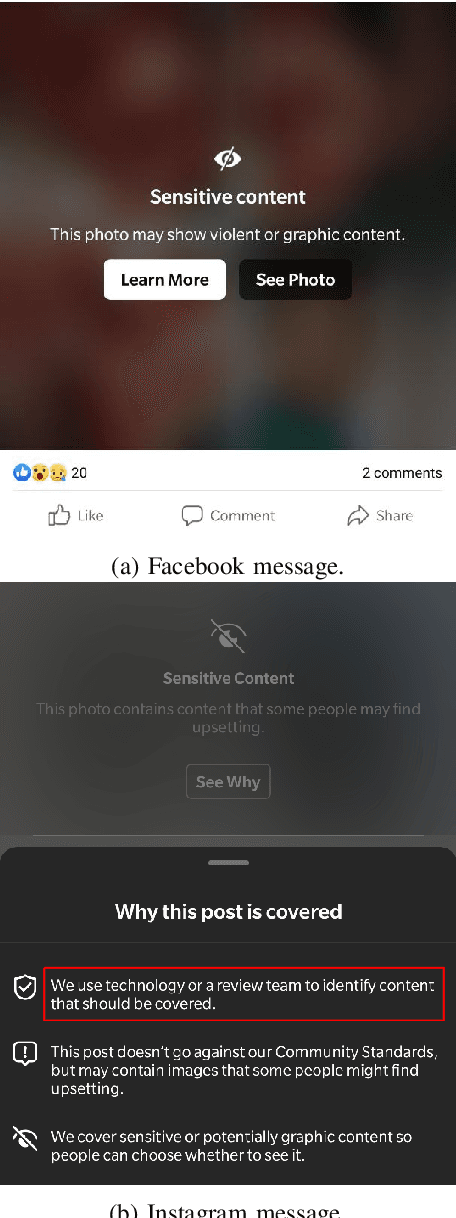

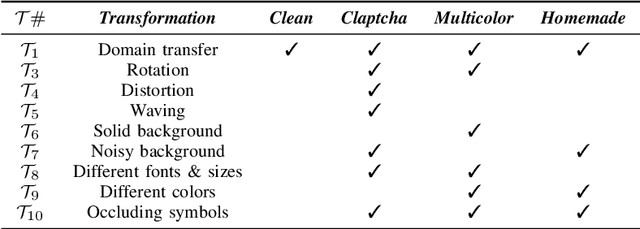

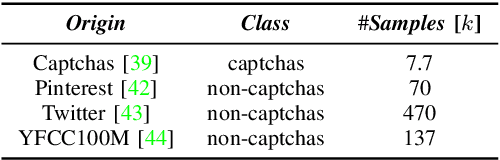

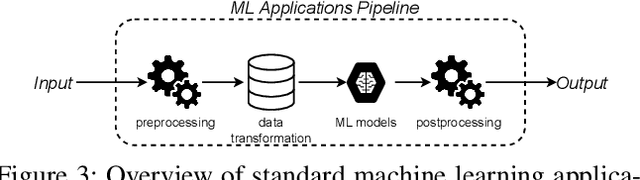

Captcha Attack: Turning Captchas Against Humanity

Jan 13, 2022

Nowadays, people generate and share massive amounts of content on online platforms (e.g., social networks, blogs). In 2021, the 1.9 billion daily active Facebook users posted around 150 thousand photos every minute. Content moderators constantly monitor these online platforms to prevent the spreading of inappropriate content (e.g., hate speech, nude images). Based on deep learning (DL) advances, Automatic Content Moderators (ACM) help human moderators handle high data volume. Despite their advantages, attackers can exploit weaknesses of DL components (e.g., preprocessing, model) to affect their performance. Therefore, an attacker can leverage such techniques to spread inappropriate content by evading ACM. In this work, we propose CAPtcha Attack (CAPA), an adversarial technique that allows users to spread inappropriate text online by evading ACM controls. By generating custom textual CAPTCHAs, CAPA exploits careless design implementations and vulnerabilities in the internal procedures of ACM. We test our attack on real-world ACM, and the results confirm the ferocity of our simple yet effective attack, reaching up to 100% evasion success in most cases. At the same time, we demonstrate the difficulties in designing CAPA mitigations, opening new challenges in the CAPTCHA research area.

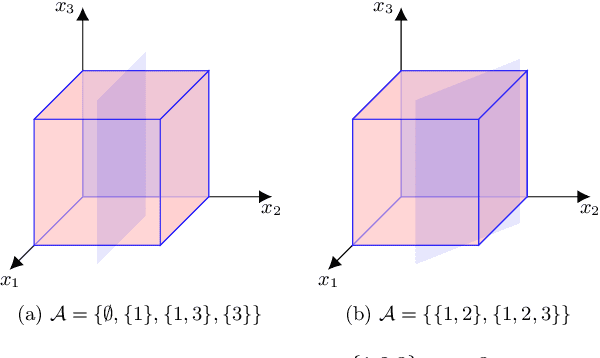

Neural networks with linear threshold activations: structure and algorithms

Nov 15, 2021

In this article we present new results on neural networks with linear threshold activation functions. We precisely characterize the class of functions that are representable by such neural networks and show that 2 hidden layers are necessary and sufficient to represent any function representable in the class. This is a surprising result in the light of recent exact representability investigations for neural networks using other popular activation functions like rectified linear units (ReLU). We also give precise bounds on the sizes of the neural networks required to represent any function in the class. Finally, we design an algorithm to solve the empirical risk minimization (ERM) problem to global optimality for these neural networks with a fixed architecture. The algorithm's running time is polynomial in the size of the data sample, if the input dimension and the size of the network architecture are considered fixed constants. The algorithm is unique in the sense that it works for any architecture with any number of layers, whereas previous polynomial time globally optimal algorithms work only for very restricted classes of architectures.
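To make the setting concrete, here is a small sketch of a forward pass through a network with linear threshold activations. It assumes the activation outputs 1 for positive inputs and 0 otherwise, and that the output layer is linear; the weights are hand-picked to compute XOR on binary inputs and are purely illustrative.

```python
import numpy as np

def threshold(z):
    """Linear threshold activation (assumed convention): 1 if z > 0, else 0."""
    return (z > 0).astype(float)

def forward(x, hidden_layers, output_layer):
    """Apply threshold hidden layers followed by a linear output layer."""
    h = x
    for W, b in hidden_layers:
        h = threshold(h @ W + b)
    W_out, b_out = output_layer
    return h @ W_out + b_out

# Hand-picked weights: hidden unit 1 fires for OR, hidden unit 2 for AND
hidden_layers = [
    (np.array([[1.0, 1.0], [1.0, 1.0]]), np.array([-0.5, -1.5])),
]
# Output = OR - AND, which equals XOR on binary inputs
output_layer = (np.array([[1.0], [-1.0]]), np.array([0.0]))

X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=float)
y = forward(X, hidden_layers, output_layer).ravel()
```

Because every hidden unit outputs 0 or 1, the network is piecewise constant in its input.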

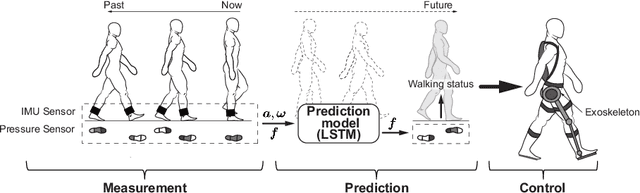

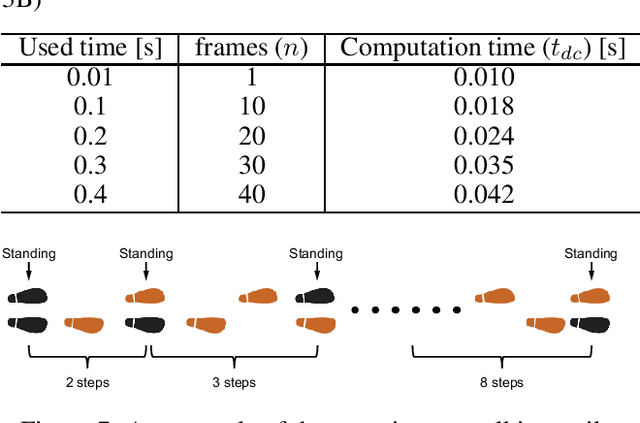

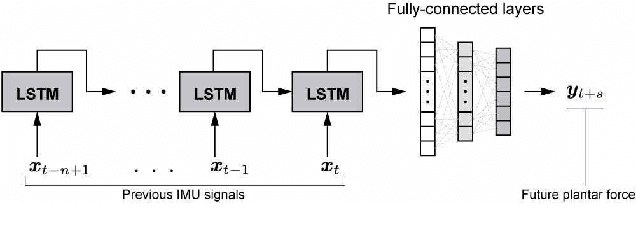

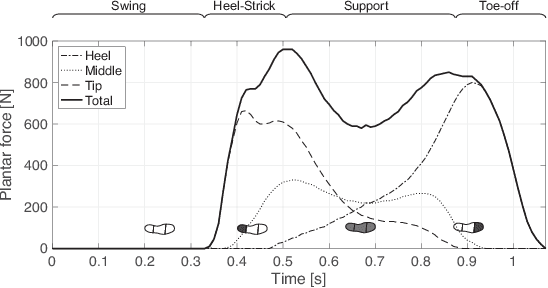

Control of Walking Assist Exoskeleton with Time-delay Based on the Prediction of Plantar Force

Jul 17, 2020

Many kinds of lower-limb exoskeletons have been developed for walking assistance. However, when controlling these exoskeletons, time-delay due to computation time and communication delays is still a general problem. In this research, we propose a novel method to prevent the time-delay when controlling a walking assist exoskeleton by predicting the future plantar force and walking status. By using Long Short-Term Memory and a fully-connected network, the plantar force can be predicted using only data measured by inertial measurement unit sensors, not only during the walking period but also at the start and end of walking. From the predicted plantar force, the walking status and the desired assistance timing can also be determined. By considering the time-delay and sending the control commands beforehand, the exoskeleton can be moved precisely at the desired assistance timing. In experiments, the prediction accuracy of the plantar force and the assistance timing is confirmed. The performance of the proposed method is also evaluated by using the trained model to control the exoskeleton.

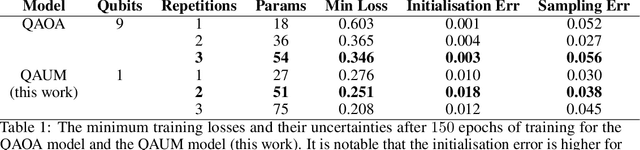

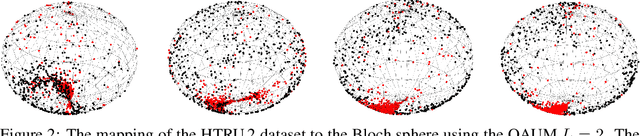

Quantum Machine Learning for Radio Astronomy

Dec 05, 2021

In this work we introduce a novel approach to the pulsar classification problem in time-domain radio astronomy using a Born machine, often referred to as a *quantum neural network*. Using a single-qubit architecture, we show that the pulsar classification problem maps well to the Bloch sphere and that comparable accuracies to more classical machine learning approaches are achievable. We introduce a novel single-qubit encoding for the pulsar data used in this work and show that this performs comparably to a multi-qubit QAOA encoding.

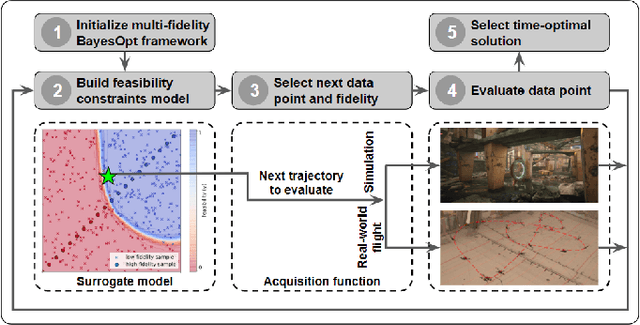

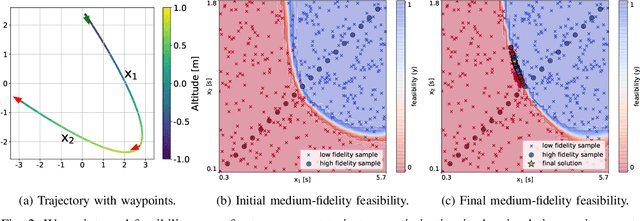

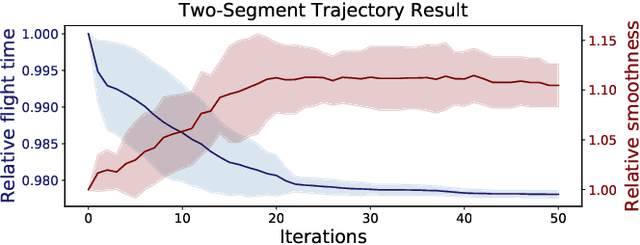

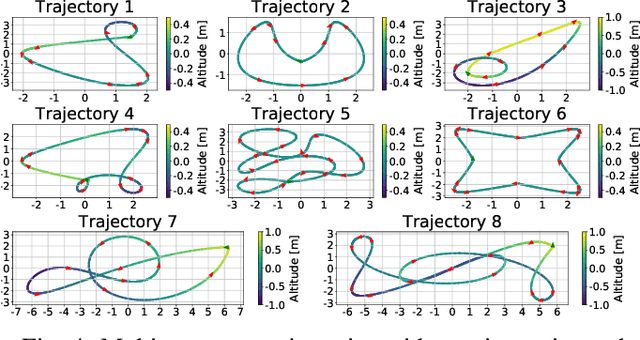

Multi-Fidelity Black-Box Optimization for Time-Optimal Quadrotor Maneuvers

Jun 03, 2020

We consider the problem of generating a time-optimal quadrotor trajectory that attains a set of prescribed waypoints. This problem is challenging since the optimal trajectory is located on the boundary of the set of dynamically feasible trajectories. This boundary is hard to model as it involves limitations of the entire system, including hardware and software, in agile high-speed flight. In this work, we propose a multi-fidelity Bayesian optimization framework that models the feasibility constraints based on analytical approximation, numerical simulation, and real-world flight experiments. By combining evaluations at different fidelities, trajectory time is optimized while keeping the number of required costly flight experiments to a minimum. The algorithm is thoroughly evaluated in both simulation and real-world flight experiments at speeds up to 11 m/s. Resulting trajectories were found to be significantly faster than those obtained through minimum-snap trajectory planning.

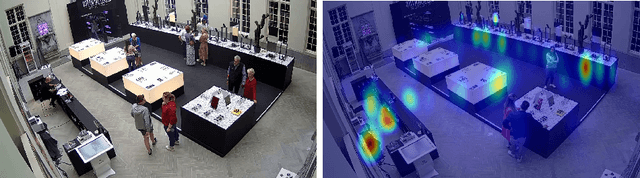

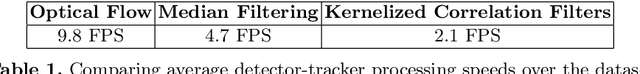

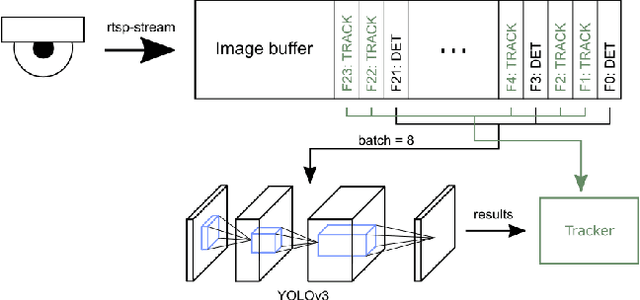

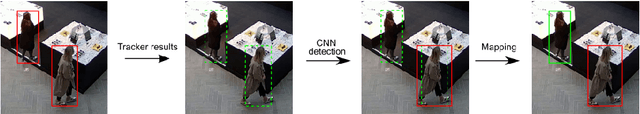

Real-time Embedded Person Detection and Tracking for Shopping Behaviour Analysis

Jul 09, 2020

Shopping behaviour analysis through counting and tracking of people in shop-like environments offers valuable information for store operators and provides key insights into the store's layout (e.g. frequently visited spots). Instead of using extra staff for this, automated on-premise solutions are preferred. These automated systems should be cost-effective, preferably run on lightweight embedded hardware, work in very challenging situations (e.g. handling occlusions) and preferably work in real time. We solve this challenge by implementing a real-time TensorRT optimized YOLOv3-based pedestrian detector on a Jetson TX2 hardware platform. By combining the detector with a sparse optical flow tracker we assign a unique ID to each customer and tackle the problem of losing partially occluded customers. Our detector-tracker based solution achieves an average precision of 81.59% at a processing speed of 10 FPS. Besides valuable statistics, heat maps of frequently visited spots are extracted and used as an overlay on the video stream.

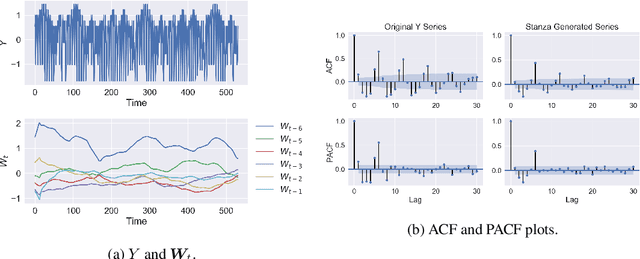

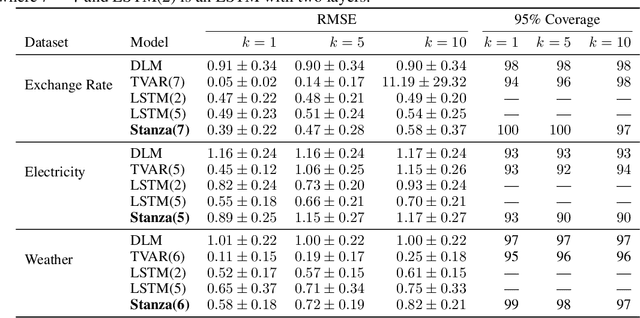

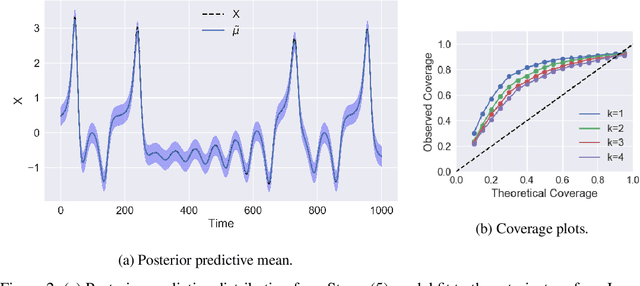

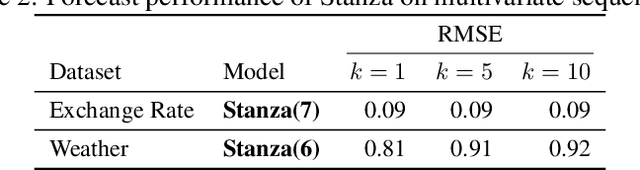

Stanza: A Nonlinear State Space Model for Probabilistic Inference in Non-Stationary Time Series

Jun 11, 2020

Time series with long-term structure arise in a variety of contexts, and capturing this temporal structure is a critical challenge in time series analysis for both inference and forecasting settings. Traditionally, state space models have been successful in providing uncertainty estimates of trajectories in the latent space. More recently, attention-based deep learning approaches have achieved state-of-the-art performance for sequence modeling, though they often require large amounts of data and parameters to do so. We propose Stanza, a nonlinear, non-stationary state space model as an intermediate approach to fill the gap between traditional models and modern deep learning approaches for complex time series. Stanza strikes a balance between competitive forecasting accuracy and probabilistic, interpretable inference for highly structured time series. In particular, Stanza achieves forecasting accuracy competitive with deep LSTMs on real-world datasets, especially for multi-step ahead forecasting.

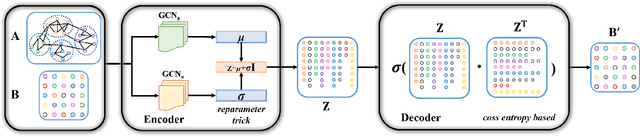

VGAER: graph neural network reconstruction based community detection

Jan 08, 2022

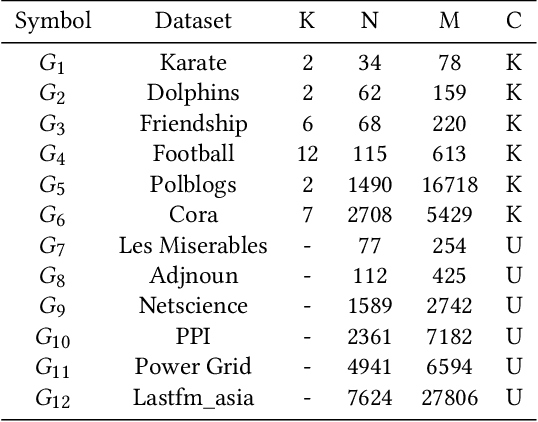

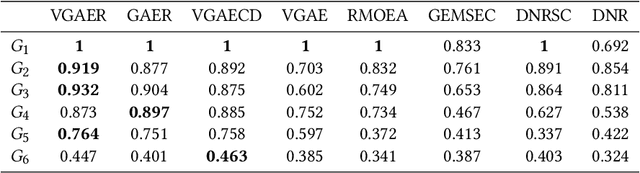

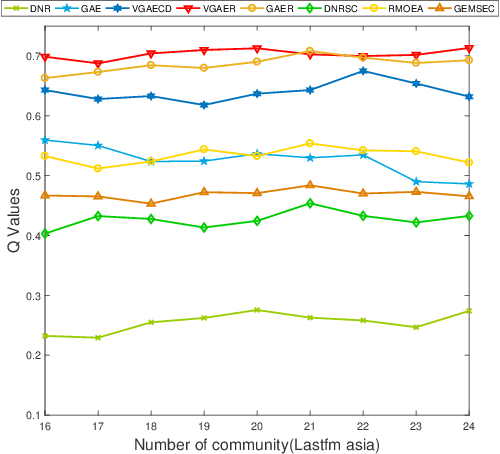

Community detection is a fundamental and important issue in network science, but there are only a few community detection algorithms based on graph neural networks, among which unsupervised algorithms are almost nonexistent. By fusing high-order modularity information with network features, this paper proposes, for the first time, a Variational Graph AutoEncoder Reconstruction based community detection method (VGAER), and gives its non-probabilistic version. Neither needs any prior information. We have carefully designed the corresponding input features, decoder, and downstream tasks based on the community detection task; these designs are concise, natural, and perform well (NMI values under our design are improved by 59.1% to 565.9%). Based on a series of experiments with a wide range of datasets and advanced methods, VGAER achieves superior performance and shows strong competitiveness and potential with a simpler design. Finally, we report the results of algorithm convergence analysis and t-SNE visualization, which clearly depict the stable performance and powerful network modularity ability of VGAER. Our code is available at https://github.com/qcydm/VGAER.
