"Time": models, code, and papers

Traffic event description based on Twitter data using Unsupervised Learning Methods for Indian road conditions

Dec 23, 2021

Non-recurrent and unpredictable traffic events directly influence road traffic conditions. Dynamic monitoring and prediction of these events is needed to improve road network management. The problem with existing traditional methods (flow or speed studies) is that their coverage of many Indian roads is very sparse, and reproducible methods to identify and describe the events are not available. Adding another form of data is therefore essential. This could be real-time speed monitoring data from services such as Google Maps and Waze, or social data from platforms such as Twitter and Facebook. In this paper, an unsupervised learning model is used to perform effective tweet classification for enhancing Indian traffic data. The model uses word embeddings to calculate semantic similarity and achieves a test score of 94.7%.
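To illustrate how word embeddings can drive similarity-based tweet classification, here is a minimal sketch, not the paper's model. It assumes each tweet is represented by the average of its word vectors and assigned to the event category whose prototype vector has the highest cosine similarity. The 3-D vectors, seed words, and category names are hypothetical toy values; a real system would use pretrained embeddings with hundreds of dimensions.

```python
import math

# Hypothetical toy embeddings (stand-ins for pretrained word vectors).
EMBEDDINGS = {
    "accident": [0.9, 0.1, 0.0],
    "crash": [0.8, 0.2, 0.1],
    "jam": [0.1, 0.9, 0.1],
    "traffic": [0.2, 0.8, 0.2],
    "rain": [0.0, 0.2, 0.9],
    "flooding": [0.1, 0.1, 0.95],
}


def mean_vector(words):
    """Average the embeddings of the known words; None if no word is known."""
    vecs = [EMBEDDINGS[w] for w in words if w in EMBEDDINGS]
    if not vecs:
        return None
    dim = len(vecs[0])
    return [sum(v[i] for v in vecs) / len(vecs) for i in range(dim)]


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def classify(tweet, prototypes):
    """Return the label whose prototype is most similar to the tweet's mean vector."""
    vec = mean_vector(tweet.lower().split())
    if vec is None:
        return None  # no known words: leave the tweet unlabeled
    return max(prototypes, key=lambda label: cosine(vec, prototypes[label]))


# Hypothetical event categories, each described by a few seed words.
PROTOTYPES = {
    "accident": mean_vector(["accident", "crash"]),
    "congestion": mean_vector(["jam", "traffic"]),
    "weather": mean_vector(["rain", "flooding"]),
}

print(classify("Major crash near the flyover", PROTOTYPES))  # accident
```

No labeled tweets are needed here, only seed words per category, which is one simple way to keep the classification unsupervised.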

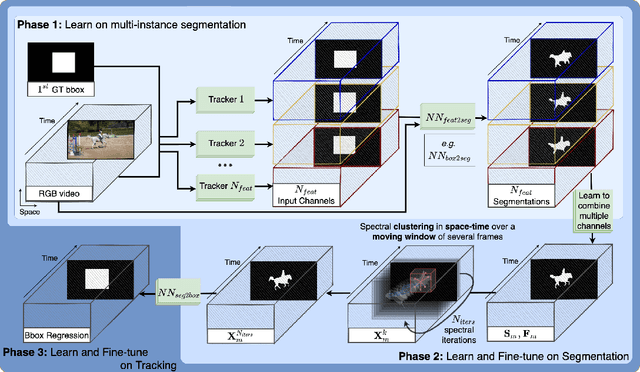

SFTrack++: A Fast Learnable Spectral Segmentation Approach for Space-Time Consistent Tracking

Nov 30, 2020

We propose an object tracking method, SFTrack++, that smoothly learns to preserve the tracked object's consistency over space and time by taking a spectral clustering approach over the graph of pixels from the video, using a fast 3D filtering formulation to find the principal eigenvector of this graph's adjacency matrix. To better capture complex aspects of the tracked object, we extend our formulation to multi-channel inputs, which permit different points of view on the same input. The channel inputs could be, as in our experiments, the outputs of multiple tracking methods or other feature maps. After extracting and combining those feature maps, instead of relying only on hidden-layer representations to predict a good tracking bounding box, we explicitly learn an intermediate, more refined representation, namely the segmentation map of the tracked object. This prevents the rough, common bounding-box approach from introducing noise and distractors into the learning process. We test SFTrack++ on seven tracking benchmarks: VOT2018, LaSOT, TrackingNet, GOT10k, NFS, OTB-100, and UAV123.
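The core idea, segmenting the object as the principal eigenvector of a pixel graph's adjacency matrix, can be illustrated with plain power iteration. This is a toy sketch under stated assumptions, not SFTrack++ itself: pixels lie on a 1-D line, the hypothetical affinity is `W[i][j] = s_i * s_j` for pixels within `radius` of each other (where `s` is a foreground score such as a tracker's output), and the matrix is built explicitly rather than through the paper's 3D filtering formulation. Because `W` only links nearby pixels, each product `W @ v` is a local operation, which hints at why it can be computed as filtering.

```python
import math


def build_affinity(scores, radius=1):
    """Hypothetical affinity: score products between pixels at most `radius` apart."""
    n = len(scores)
    return [[scores[i] * scores[j] if abs(i - j) <= radius else 0.0
             for j in range(n)] for i in range(n)]


def principal_eigenvector(W, iters=200, tol=1e-10):
    """Power iteration: repeatedly apply W and renormalize until the vector settles."""
    n = len(W)
    v = [1.0 / math.sqrt(n)] * n  # nonnegative start, suitable for a nonnegative W
    for _ in range(iters):
        w = [sum(W[i][j] * v[j] for j in range(n)) for i in range(n)]
        norm = math.sqrt(sum(x * x for x in w))
        w = [x / norm for x in w]
        if max(abs(a - b) for a, b in zip(w, v)) < tol:
            return w
        v = w
    return v


def segment(scores, radius=1, rel_threshold=0.5):
    """Threshold the eigenvector relative to its peak to obtain a binary mask."""
    v = principal_eigenvector(build_affinity(scores, radius))
    peak = max(v)
    return [x >= rel_threshold * peak for x in v]


# Hypothetical per-pixel foreground scores for a 7-pixel strip.
scores = [0.1, 0.2, 0.9, 0.95, 0.9, 0.2, 0.1]
print(segment(scores))  # [False, False, True, True, True, False, False]
```

The eigenvector concentrates on the strongly connected, high-score block of pixels, which is what makes it usable as a soft segmentation map.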

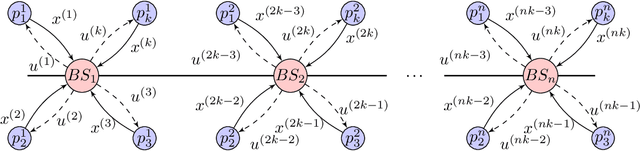

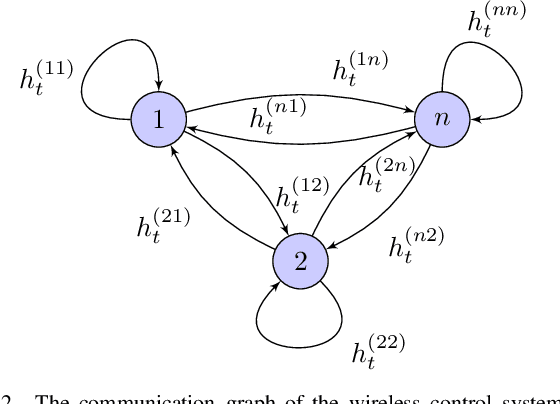

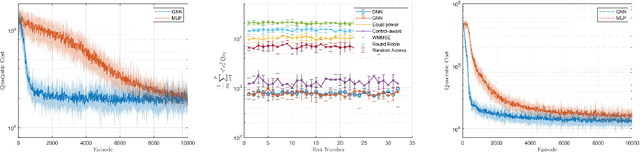

Graph Reinforcement Learning for Wireless Control Systems: Large-Scale Resource Allocation over Interference Channels

Jan 24, 2022

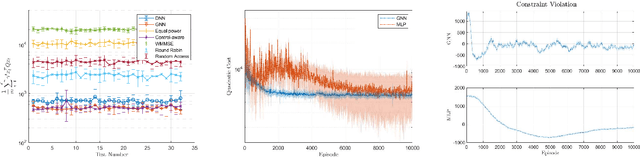

Modern control systems routinely employ wireless networks to exchange information between spatially distributed plants, actuators, and sensors. Because wireless networks are defined by random, rapidly changing transmission conditions that challenge assumptions commonly held in the design of control systems, proper allocation of communication resources is essential to achieve reliable operation. Designing resource allocation policies, however, is challenging, motivating recent work that successfully exploits deep learning and deep reinforcement learning techniques to design resource allocation and scheduling policies for wireless control systems. As the number of learnable parameters in a neural network grows with the size of the input signal, deep reinforcement learning algorithms may fail to scale, limiting the immediate generalization of such scheduling and resource allocation policies to large-scale systems. The interference and fading patterns among plants and controllers in the network, on the other hand, induce a time-varying communication graph that can be used to construct policy representations based on graph neural networks (GNNs), with the number of learnable parameters now independent of the number of plants in the network. This invariance to the number of nodes is key to designing scalable and transferable resource allocation policies, which can be trained with reinforcement learning. Through extensive numerical experiments, we show that the proposed graph reinforcement learning approach yields policies that not only outperform baseline solutions and deep reinforcement learning based policies in large-scale systems, but can also be transferred across networks of varying size.
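Why a GNN's parameter count does not depend on the number of plants can be seen in a polynomial graph filter, one common GNN building block: `y = ReLU(sum_k h_k S^k x)`, where `S` is a graph shift matrix and `x` holds one value per node. The sketch below is an assumption-laden illustration, not the paper's architecture. The matrices `S3` and `S5` are hypothetical interference graphs, `x3`/`x5` are hypothetical per-node states, and the mapping from filter outputs to actual resource allocations is omitted. The same three `taps` are applied to a 3-node and a 5-node network.

```python
from typing import List

Matrix = List[List[float]]


def matvec(S: Matrix, x: List[float]) -> List[float]:
    """Multiply the graph shift matrix S by the node signal x."""
    return [sum(S[i][j] * x[j] for j in range(len(x))) for i in range(len(S))]


def graph_filter(S: Matrix, x: List[float], taps: List[float]) -> List[float]:
    """Compute ReLU(sum_k taps[k] * S^k x); len(taps) is independent of the graph size."""
    out = [0.0] * len(x)
    z = list(x)  # holds S^k x, starting with k = 0
    for k, h in enumerate(taps):
        if k > 0:
            z = matvec(S, z)  # one more hop of neighbor aggregation
        out = [o + h * zi for o, zi in zip(out, z)]
    return [max(0.0, o) for o in out]


taps = [0.5, 0.3, 0.1]  # hypothetical learned parameters, shared across graph sizes

# Hypothetical symmetric interference graphs of different sizes.
S3 = [[0.0, 0.4, 0.1],
      [0.4, 0.0, 0.2],
      [0.1, 0.2, 0.0]]
x3 = [1.0, 0.5, 2.0]

S5 = [[0.0, 0.3, 0.0, 0.1, 0.0],
      [0.3, 0.0, 0.2, 0.0, 0.1],
      [0.0, 0.2, 0.0, 0.3, 0.0],
      [0.1, 0.0, 0.3, 0.0, 0.2],
      [0.0, 0.1, 0.0, 0.2, 0.0]]
x5 = [0.8, 1.2, 0.3, 0.5, 1.0]

print(len(taps), graph_filter(S3, x3, taps))
print(len(taps), graph_filter(S5, x5, taps))
```

Relabeling the nodes simply relabels the outputs (permutation equivariance), which is what lets a policy trained on one network be applied to another of a different size.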

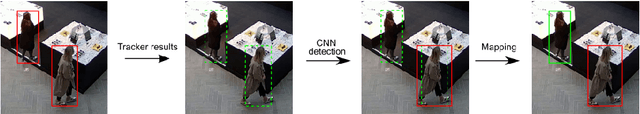

Real-time Embedded Person Detection and Tracking for Shopping Behaviour Analysis

Jul 09, 2020

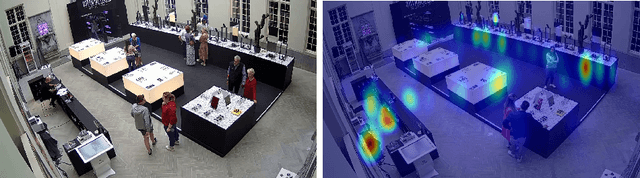

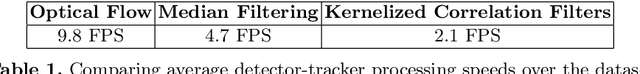

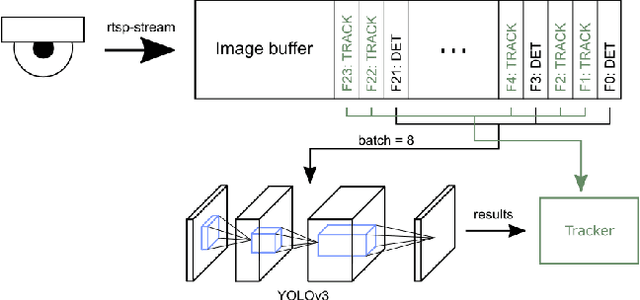

Shopping behaviour analysis through counting and tracking of people in shop-like environments offers valuable information for store operators and provides key insights into the store's layout (e.g. frequently visited spots). Instead of using extra staff for this, automated on-premise solutions are preferred. These automated systems should be cost-effective, preferably run on lightweight embedded hardware, work in very challenging situations (e.g. handling occlusions), and preferably work in real time. We solve this challenge by implementing a real-time, TensorRT-optimized, YOLOv3-based pedestrian detector on a Jetson TX2 hardware platform. By combining the detector with a sparse optical flow tracker, we assign a unique ID to each customer and tackle the problem of losing partially occluded customers. Our detector-tracker solution achieves an average precision of 81.59% at a processing speed of 10 FPS. Besides valuable statistics, heat maps of frequently visited spots are extracted and used as an overlay on the video stream.

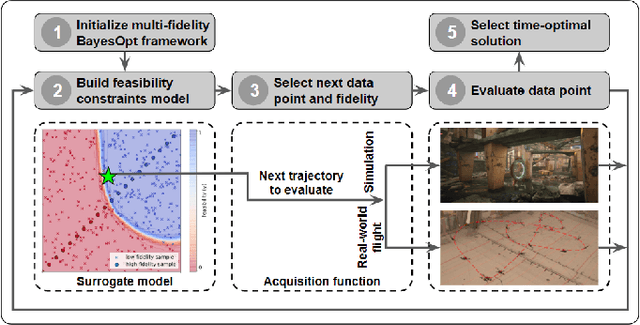

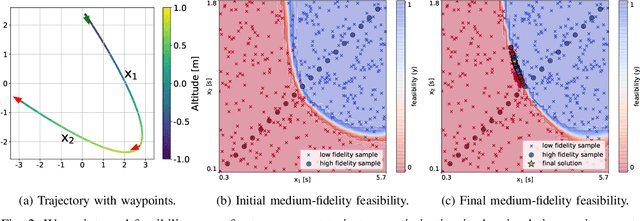

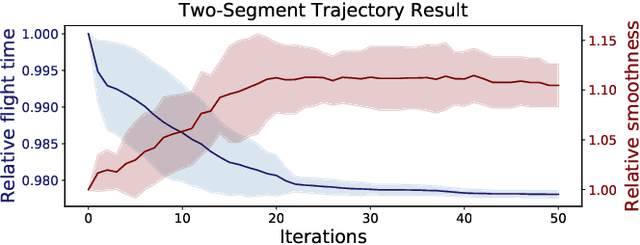

Multi-Fidelity Black-Box Optimization for Time-Optimal Quadrotor Maneuvers

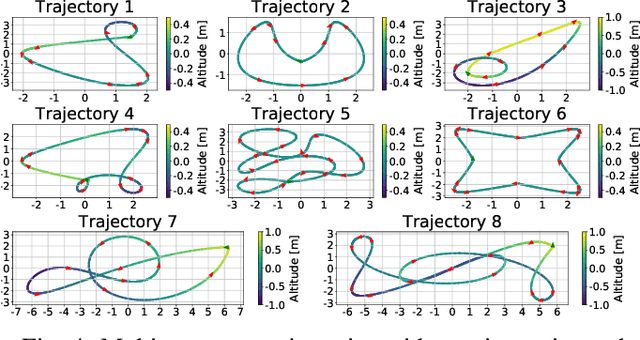

Jun 03, 2020

We consider the problem of generating a time-optimal quadrotor trajectory that attains a set of prescribed waypoints. This problem is challenging since the optimal trajectory is located on the boundary of the set of dynamically feasible trajectories. This boundary is hard to model as it involves limitations of the entire system, including hardware and software, in agile high-speed flight. In this work, we propose a multi-fidelity Bayesian optimization framework that models the feasibility constraints based on analytical approximation, numerical simulation, and real-world flight experiments. By combining evaluations at different fidelities, trajectory time is optimized while keeping the number of required costly flight experiments to a minimum. The algorithm is thoroughly evaluated in both simulation and real-world flight experiments at speeds up to 11 m/s. Resulting trajectories were found to be significantly faster than those obtained through minimum-snap trajectory planning.

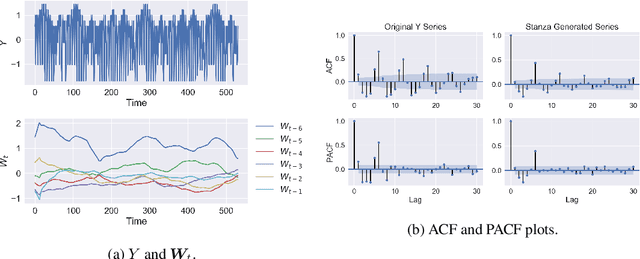

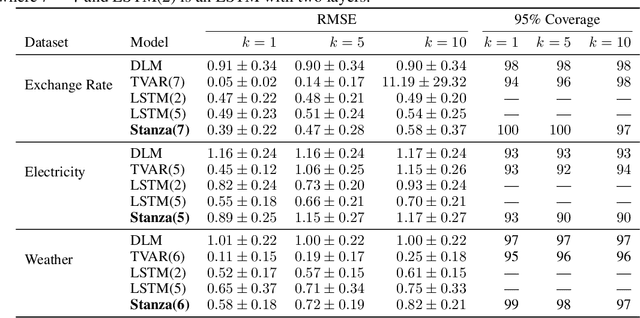

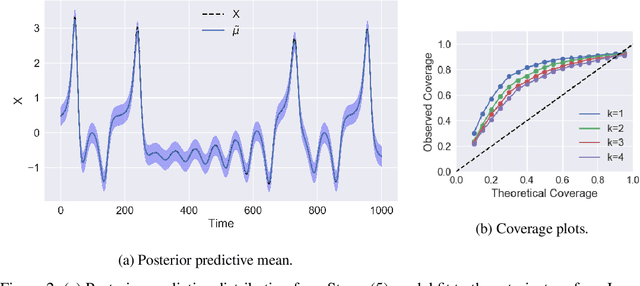

Stanza: A Nonlinear State Space Model for Probabilistic Inference in Non-Stationary Time Series

Jun 11, 2020

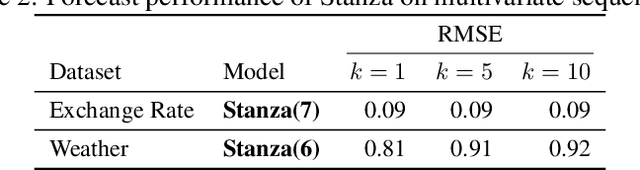

Time series with long-term structure arise in a variety of contexts, and capturing this temporal structure is a critical challenge in time series analysis for both inference and forecasting. Traditionally, state space models have been successful in providing uncertainty estimates of trajectories in the latent space. More recently, deep learning and attention-based approaches have achieved state-of-the-art performance for sequence modeling, though they often require large amounts of data and parameters to do so. We propose Stanza, a nonlinear, non-stationary state space model, as an intermediate approach that fills the gap between traditional models and modern deep learning approaches for complex time series. Stanza strikes a balance between competitive forecasting accuracy and probabilistic, interpretable inference for highly structured time series. In particular, Stanza achieves forecasting accuracy competitive with deep LSTMs on real-world datasets, especially for multi-step-ahead forecasting.

Cheating Automatic Short Answer Grading: On the Adversarial Usage of Adjectives and Adverbs

Jan 20, 2022

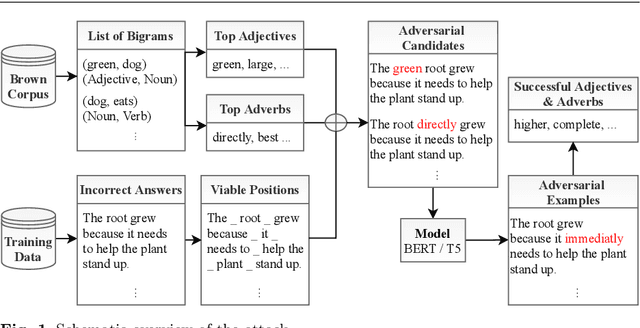

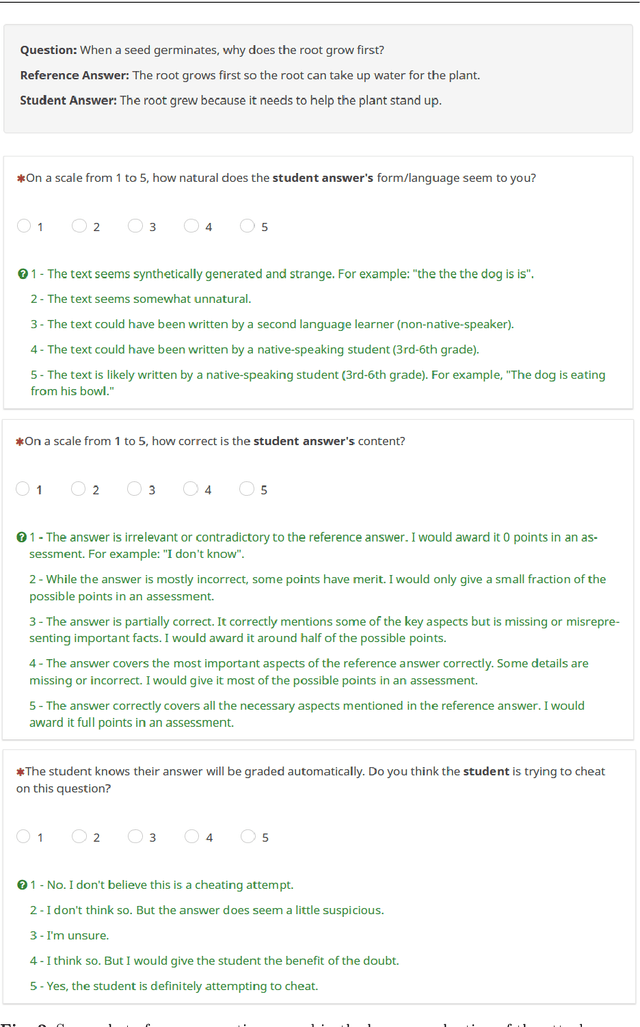

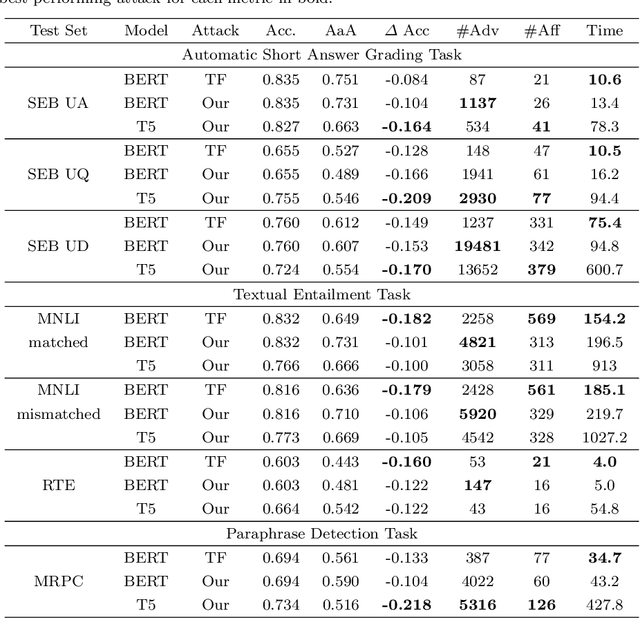

Automatic grading models are valued for the time and effort they save when instructing large student bodies. Especially with the increasing digitization of education and interest in large-scale standardized testing, the popularity of automatic grading has risen to the point where commercial solutions are widely available and used. However, for short answer formats, automatic grading is challenging due to the ambiguity and versatility of natural language. While automatic short answer grading models are beginning to rival human performance on some datasets, their robustness, especially to adversarially manipulated data, is questionable. Exploitable vulnerabilities in grading models can have far-reaching consequences, ranging from cheating students receiving undeserved credit to undermining automatic grading altogether, even when most predictions are valid. In this paper, we devise a black-box adversarial attack tailored to the educational short answer grading scenario to investigate the grading models' robustness. In our attack, we insert adjectives and adverbs into natural places of incorrect student answers, fooling the model into predicting them as correct. We observed a loss of prediction accuracy between 10 and 22 percentage points using the state-of-the-art models BERT and T5. While our attack made answers appear less natural to humans in our experiments, it did not significantly increase the graders' suspicions of cheating. Based on our experiments, we provide recommendations for utilizing automatic grading systems more safely in practice.
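The black-box setting means the attacker can only query the grader's predictions. A minimal sketch of such a query loop, under simplifying assumptions: `grader` is a hypothetical callable returning `True` for "correct", the modifier list is hypothetical, and every token position is tried, whereas the paper restricts insertions to natural places (which would require part-of-speech information not modeled here).

```python
from typing import Callable, Iterable, List, Optional


def insertion_candidates(answer: str, modifiers: Iterable[str]) -> List[str]:
    """Every variant of `answer` with one modifier inserted at one token position."""
    tokens = answer.split()
    variants = []
    for word in modifiers:
        for pos in range(len(tokens) + 1):
            variants.append(" ".join(tokens[:pos] + [word] + tokens[pos:]))
    return variants


def attack(answer: str,
           grader: Callable[[str], bool],
           modifiers: Iterable[str],
           max_queries: int = 1000) -> Optional[str]:
    """Query the black-box grader until an insertion flips the prediction to correct."""
    if grader(answer):
        return answer  # already graded correct, nothing to attack
    for i, candidate in enumerate(insertion_candidates(answer, modifiers)):
        if i >= max_queries:
            break  # respect the query budget
        if grader(candidate):
            return candidate
    return None  # no successful insertion found


# Hypothetical, deliberately brittle grader used only for illustration.
def toy_grader(answer: str) -> bool:
    return "essentially" in answer.split()


print(attack("plants eat soil", toy_grader, ["very", "essentially"]))
# essentially plants eat soil
```

Capping the number of queries reflects the practical constraint that each grader call may be costly or observable.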

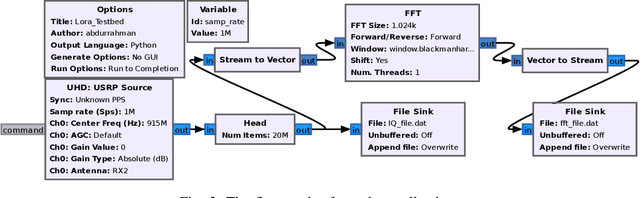

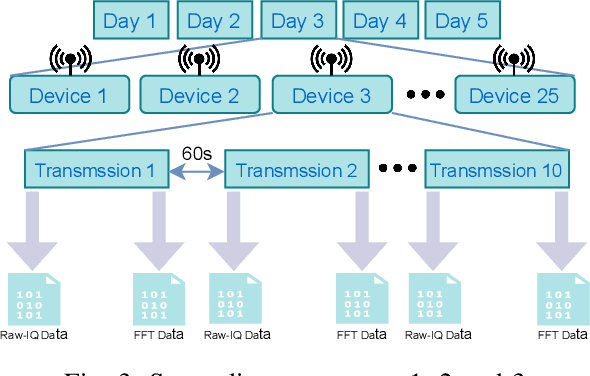

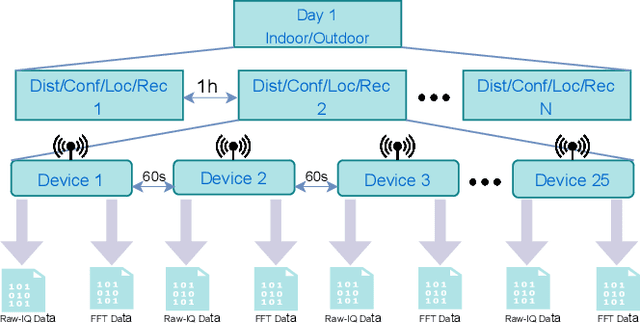

Comprehensive RF Dataset Collection and Release: A Deep Learning-Based Device Fingerprinting Use Case

Jan 06, 2022

Deep learning-based RF fingerprinting has recently been recognized as a potential solution for enabling newly emerging wireless network applications, such as spectrum access policy enforcement, automated network device authentication, and unauthorized network access monitoring and control. Real, comprehensive RF datasets are now needed more than ever to enable the study, assessment, and validation of newly developed RF fingerprinting approaches. In this paper, we present and release a large-scale RF fingerprinting dataset, collected from 25 different LoRa-enabled IoT transmitting devices using USRP B210 receivers. Our dataset consists of a large number of SigMF-compliant binary files representing the I/Q time-domain samples and their corresponding FFT-based files of LoRa transmissions. This dataset provides a comprehensive set of essential experimental scenarios, considering both indoor and outdoor environments and various network deployments and configurations, such as the distance between the transmitters and the receiver, the configuration of the considered LoRa modulation, the physical location of the conducted experiment, and the receiver hardware used for training and testing the neural network models.
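SigMF recordings pair a JSON metadata file with a binary data file whose sample format is declared by the `core:datatype` field. The sketch below assumes the datatype is `cf32_le` (interleaved little-endian float32 I and Q values); check the dataset's metadata before relying on that, since other datatypes use different layouts. A synthetic tone stands in for a real LoRa capture, and a naive O(n²) DFT stands in for the FFT used to produce frequency-domain files.

```python
import cmath
import struct


def read_cf32_le(raw: bytes):
    """Decode interleaved little-endian float32 I/Q pairs into complex samples."""
    if len(raw) % 8:
        raise ValueError("cf32_le data must be a multiple of 8 bytes")
    return [complex(i, q) for i, q in struct.iter_unpack("<ff", raw)]


def write_cf32_le(samples):
    """Encode complex samples as interleaved little-endian float32 I/Q pairs."""
    return b"".join(struct.pack("<ff", s.real, s.imag) for s in samples)


def dft(x):
    """Naive discrete Fourier transform (an FFT computes the same values faster)."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]


# Synthetic complex tone at frequency bin 3, round-tripped through the binary format.
n = 16
tone = [cmath.exp(2j * cmath.pi * 3 * t / n) for t in range(n)]
samples = read_cf32_le(write_cf32_le(tone))
spectrum = dft(samples)
peak_bin = max(range(n), key=lambda k: abs(spectrum[k]))
print(peak_bin)  # 3
```

Each complex sample occupies 8 bytes in this format, so the sample count of a data file is simply its size divided by 8.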

Deep Learning Macroeconomics

Jan 31, 2022

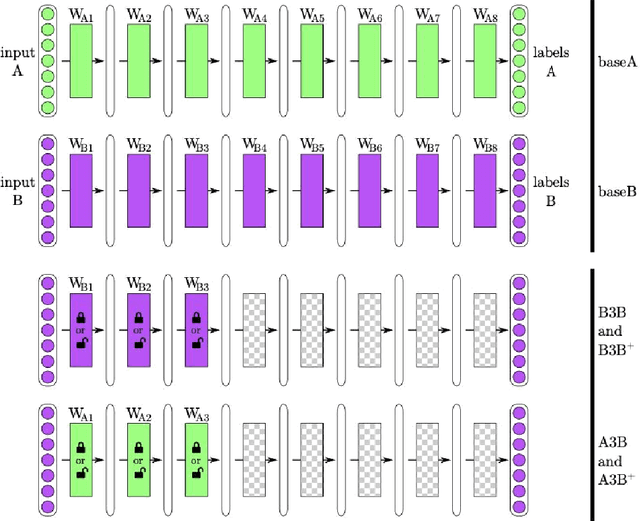

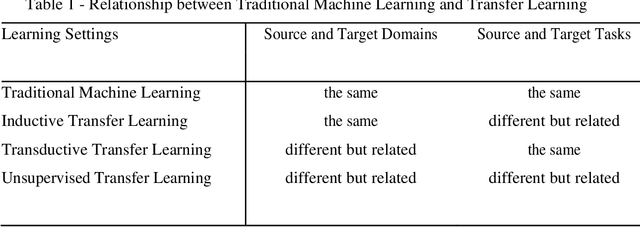

Limited datasets and complex nonlinear relationships are among the challenges that may emerge when applying econometrics to macroeconomic problems. This research proposes deep learning as an approach to transfer learning in the former case and to map relationships between variables in the latter case. Although macroeconomists already apply transfer learning, for example when assuming a given prior distribution in a Bayesian context, estimating a structural VAR with sign restrictions, or calibrating parameters based on results observed in other models, the innovation we introduce is a more systematic transfer learning strategy for applied macroeconomics. We explore the proposed strategy empirically, showing that data from different but related domains, a type of transfer learning, helps identify business cycle phases when no business cycle dating committee exists and quickly estimate an economics-based output gap. Next, since deep learning methods learn representations formed by composing multiple nonlinear transformations that yield more abstract representations, we apply deep learning to map low-frequency variables from high-frequency ones. The results show the suitability of deep learning models for macroeconomic problems. First, the models learned to classify United States business cycles correctly. Then, applying transfer learning, they were able to identify the business cycles of out-of-sample Brazilian and European data. Along the same lines, the models learned to estimate the output gap based on U.S. data and performed well when faced with Brazilian data. Additionally, deep learning proved adequate for mapping low-frequency variables from high-frequency data to interpolate, distribute, and extrapolate time series using related series.

Spatio-Temporal Variational Gaussian Processes

Nov 02, 2021

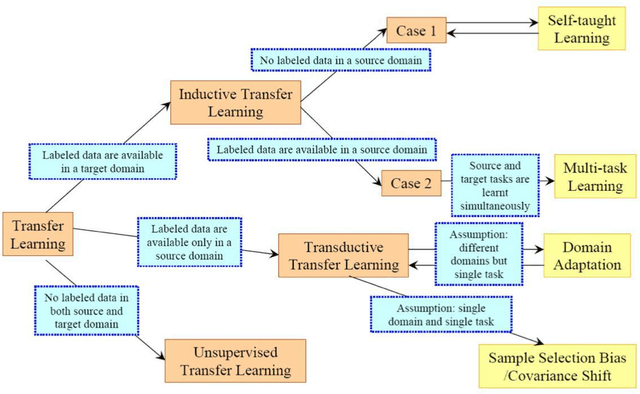

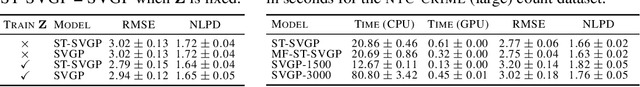

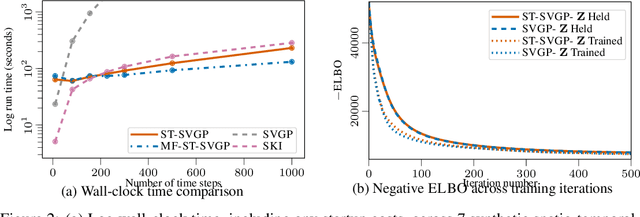

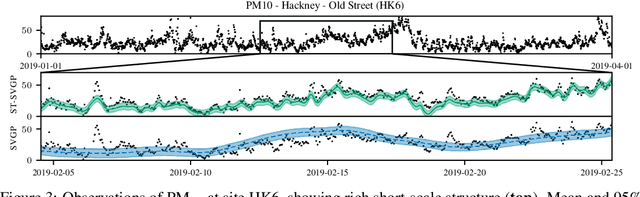

We introduce a scalable approach to Gaussian process inference that combines spatio-temporal filtering with natural gradient variational inference, resulting in a non-conjugate GP method for multivariate data that scales linearly with respect to time. Our natural gradient approach enables application of parallel filtering and smoothing, further reducing the temporal span complexity to be logarithmic in the number of time steps. We derive a sparse approximation that constructs a state-space model over a reduced set of spatial inducing points, and show that for separable Markov kernels the full and sparse cases exactly recover the standard variational GP, whilst exhibiting favourable computational properties. To further improve the spatial scaling we propose a mean-field assumption of independence between spatial locations which, when coupled with sparsity and parallelisation, leads to an efficient and accurate method for large spatio-temporal problems.
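Parallel filtering and smoothing achieve logarithmic depth in the number of time steps because the per-step updates can be combined with an associative operator and evaluated by a parallel prefix scan. The sketch below shows that mechanism on a deliberately simpler case, the scalar affine recurrence `x_t = a_t * x_{t-1} + b_t`, rather than the paper's Gaussian filtering elements; the coefficients are hypothetical. A Hillis-Steele scan needs `ceil(log2 T)` rounds, and within each round every position could be updated in parallel (here the rounds run sequentially in Python).

```python
import math


def combine(first, second):
    """Compose affine maps (a, b): x -> a*x + b. Apply `first`, then `second`."""
    a1, b1 = first
    a2, b2 = second
    return (a2 * a1, a2 * b1 + b2)


def parallel_prefix(elems):
    """Hillis-Steele inclusive scan; returns the prefix maps and the number of rounds."""
    out = list(elems)
    step, rounds = 1, 0
    while step < len(out):
        # Every position reads the previous round's values, so all updates are independent.
        out = [out[i] if i < step else combine(out[i - step], out[i])
               for i in range(len(out))]
        step *= 2
        rounds += 1
    return out, rounds


def sequential(elems, x0):
    """Reference: run the recurrence one step at a time (T sequential steps)."""
    xs, x = [], x0
    for a, b in elems:
        x = a * x + b
        xs.append(x)
    return xs


# Hypothetical per-step coefficients (a_t, b_t).
elems = [(0.5, 1.0), (2.0, -1.0), (1.0, 0.5), (0.9, 0.0), (1.1, 2.0)]
x0 = 3.0
prefix, rounds = parallel_prefix(elems)
xs_parallel = [a * x0 + b for a, b in prefix]  # each prefix map takes x0 to x_t
print(rounds, math.ceil(math.log2(len(elems))))  # 3 3
```

The scan only needs `combine` to be associative, which is why richer elements, such as the conditional Gaussian terms used in parallel Kalman filtering, can reuse the same structure.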
