"Time": models, code, and papers

Improving \textit{Tug-of-War} sketch using Control-Variates method

Mar 04, 2022

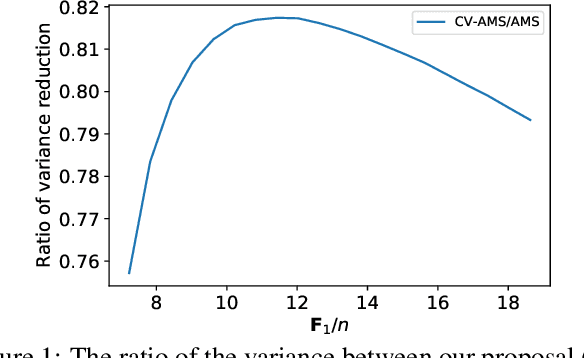

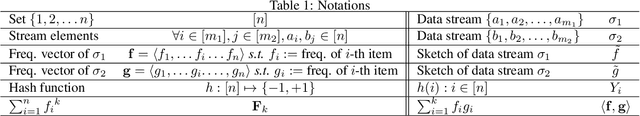

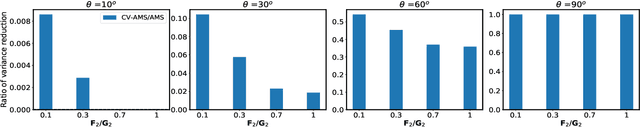

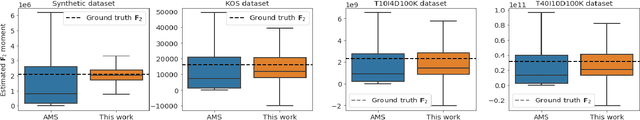

Computing space-efficient summaries of large data, \textit{a.k.a. sketches}, is a central problem in streaming algorithms. Such sketches are used to answer \textit{post-hoc} queries in several data analytics tasks. An algorithm for computing sketches is typically required to be fast, accurate, and space-efficient. A fundamental problem in the streaming framework is that of computing the frequency moments of data streams. The frequency moments of a sequence containing $f_i$ elements of type $i$ are the numbers $\mathbf{F}_k=\sum_{i=1}^n {f_i}^k,$ where $i\in [n]$. $\mathbf{F}_k$ is the $k$-th power of the $\ell_k$ norm of the frequency vector $(f_1, f_2, \ldots, f_n).$ Another important problem is to compute the similarity between two data streams by computing the inner product of the corresponding frequency vectors. The seminal work of Alon, Matias, and Szegedy~\cite{AMS}, \textit{a.k.a. Tug-of-war} (or AMS) sketch, gives a randomized sublinear-space (and linear-time) algorithm for computing the frequency moments and the inner product between two frequency vectors corresponding to the data streams. However, the variance of these estimates tends to be large. In this work, we focus on minimizing the variance of these estimates. We use techniques from the classical Control-Variate method~\cite{Lavenberg}, which is primarily known for variance reduction in Monte-Carlo simulations, and as a result obtain significant variance reduction at the cost of a small computational overhead. We present a theoretical analysis of our proposal and complement it with supporting experiments on synthetic as well as real-world datasets.
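
As a rough illustration of the baseline estimator described above, the following sketch keeps a few signed counters and estimates $\mathbf{F}_2$ and the inner product from them. The class name `TugOfWarSketch`, the helper `random_sign`, and the use of a seeded pseudo-random generator in place of a 4-wise independent hash family are assumptions made for this example. The control-variate correction is not specified in the abstract and is not shown.

```python
import random
from statistics import mean, median


def random_sign(seed, item):
    """Map (seed, item) to +1 or -1 (hypothetical stand-in for a 4-wise independent hash)."""
    rng = random.Random(f"{seed}:{item!r}")  # string seeds are hashed deterministically
    return 1 if rng.random() < 0.5 else -1


class TugOfWarSketch:
    """Minimal AMS-style sketch: each counter holds sum_i sign_j(i) * f_i."""

    def __init__(self, num_counters, seed=0):
        self.seeds = [seed * 1_000_003 + j for j in range(num_counters)]
        self.counters = [0] * num_counters

    def update(self, item, count=1):
        # One stream update touches every counter with that counter's random sign.
        for j, s in enumerate(self.seeds):
            self.counters[j] += random_sign(s, item) * count

    def estimate_f2(self, group_size):
        # Each squared counter is an unbiased estimate of F_2;
        # average within groups, then take the median of the group means.
        squares = [c * c for c in self.counters]
        groups = [squares[i:i + group_size] for i in range(0, len(squares), group_size)]
        return median(mean(g) for g in groups)

    def estimate_inner_product(self, other):
        # Both sketches must share the same seeds for the products to be meaningful.
        return mean(a * b for a, b in zip(self.counters, other.counters))
```

For a stream that contains a single distinct item, every counter equals plus or minus its frequency, so the estimate is exact; with many distinct items the cross terms introduce the variance that the abstract sets out to reduce.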

Sketch-and-Lift: Scalable Subsampled Semidefinite Program for $K$-means Clustering

Feb 09, 2022

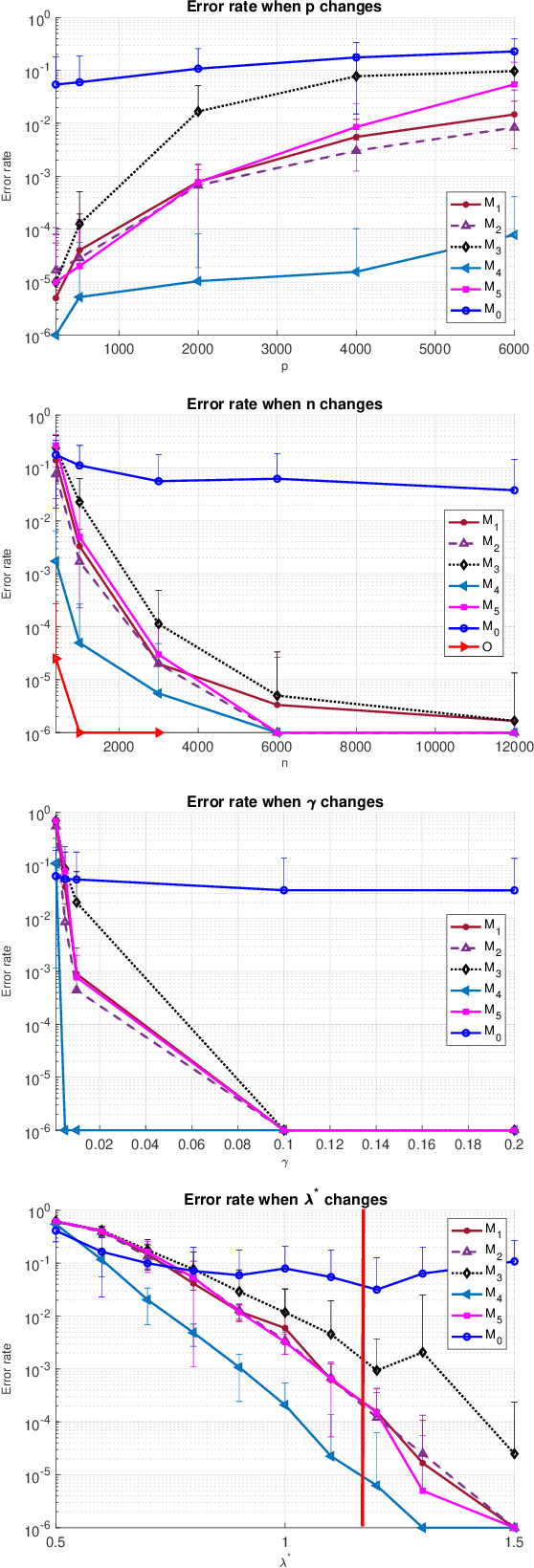

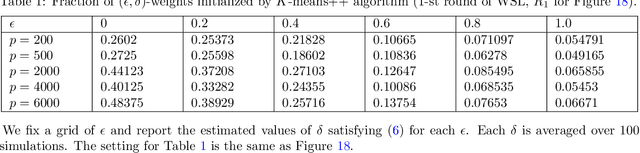

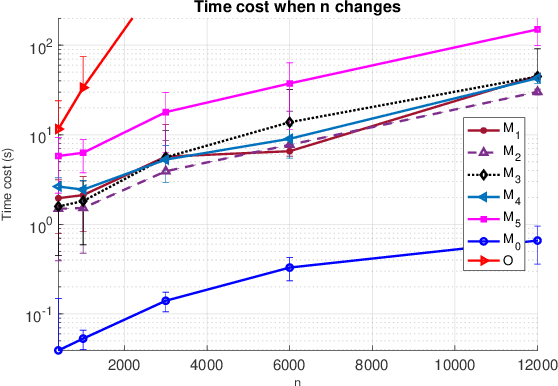

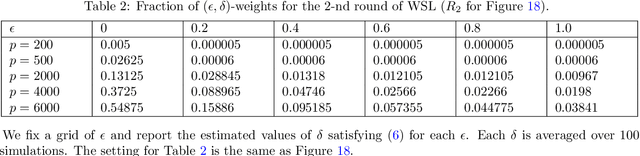

Semidefinite programming (SDP) is a powerful tool for tackling a wide range of computationally hard problems such as clustering. Despite their high accuracy, semidefinite programs are often too slow in practice and scale poorly to large (or even moderate) datasets. In this paper, we introduce a linear-time algorithm for approximating an SDP-relaxed $K$-means clustering. The proposed sketch-and-lift (SL) approach solves an SDP on a subsampled dataset and then propagates the solution to all data points by a nearest-centroid rounding procedure. It is shown that the SL approach enjoys a similar exact recovery threshold as the $K$-means SDP on the full dataset, which is known to be information-theoretically tight under the Gaussian mixture model. The SL method can be made adaptive, with enhanced theoretical properties, when the cluster sizes are unbalanced. Our simulation experiments demonstrate that the statistical accuracy of the proposed method outperforms state-of-the-art fast clustering algorithms without sacrificing too much computational efficiency, and is comparable to the original $K$-means SDP with substantially reduced runtime.
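
The subsample-then-propagate structure described above can be sketched as follows. This is only an outline under stated assumptions: the SDP solve on the subsample is replaced by a plain Lloyd-style $K$-means with farthest-point initialization (a swapped-in technique, not the paper's solver), and all function names are hypothetical.

```python
import random


def squared_distance(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))


def nearest_centroid(point, centroids):
    """Index of the centroid closest to `point`."""
    return min(range(len(centroids)), key=lambda k: squared_distance(point, centroids[k]))


def centroid_of(points):
    dim = len(points[0])
    return tuple(sum(p[d] for p in points) / len(points) for d in range(dim))


def cluster_subsample(sample, k, iterations=20):
    """Stand-in for the SDP step: Lloyd iterations with farthest-point initialization."""
    centroids = [sample[0]]
    while len(centroids) < k:
        centroids.append(
            max(sample, key=lambda p: min(squared_distance(p, c) for c in centroids))
        )
    for _ in range(iterations):
        groups = [[] for _ in range(k)]
        for p in sample:
            groups[nearest_centroid(p, centroids)].append(p)
        # Keep the old centroid if a group happens to be empty.
        centroids = [centroid_of(g) if g else centroids[i] for i, g in enumerate(groups)]
    return centroids


def sketch_and_lift(points, k, sample_size, seed=0):
    """Cluster a random subsample, then lift labels to all points by nearest centroid."""
    rng = random.Random(seed)
    sample = rng.sample(points, sample_size)
    centroids = cluster_subsample(sample, k)
    return [nearest_centroid(p, centroids) for p in points]
```

Only the subsample goes through the expensive clustering step; the lift is a single pass over the data with $K$ distance evaluations per point, which is where the linear dependence on the dataset size comes from.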

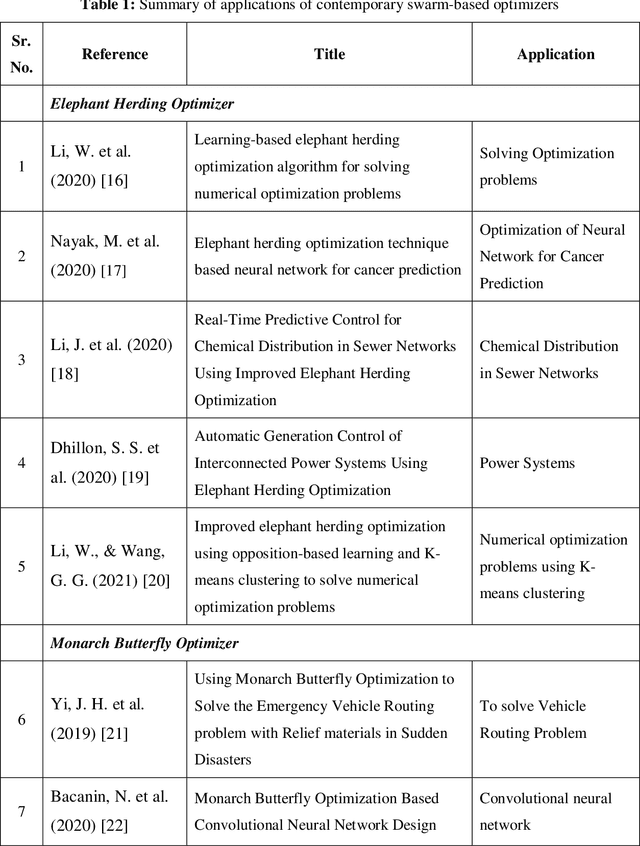

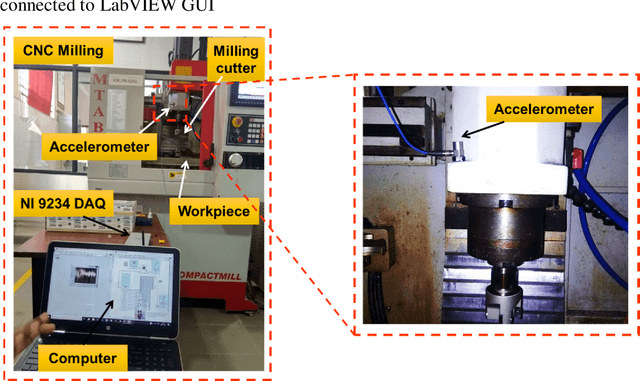

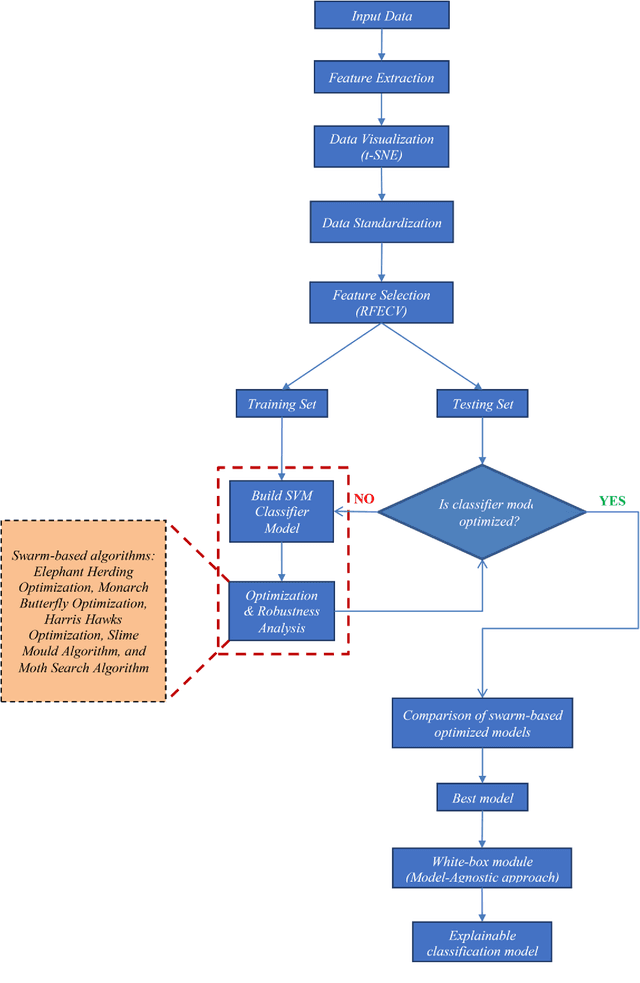

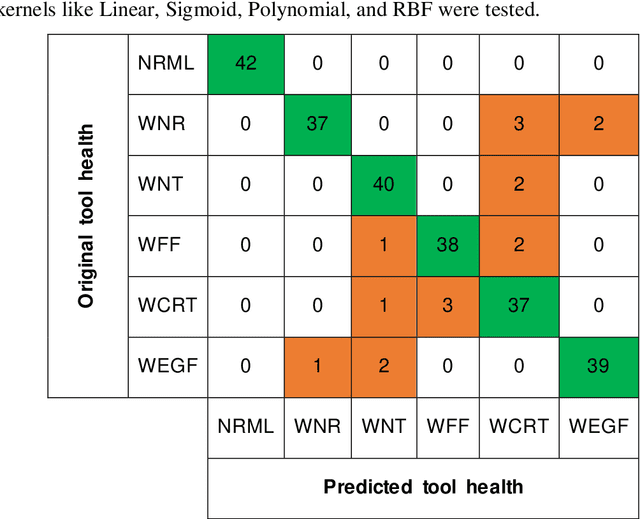

A White-Box SVM Framework and its Swarm-Based Optimization for Supervision of Toothed Milling Cutter through Characterization of Spindle Vibrations

Dec 15, 2021

In this paper, a white-box support vector machine (SVM) framework and its swarm-based optimization are presented for supervision of a toothed milling cutter through characterization of real-time spindle vibrations. The anomalous moments of vibration that arise from in-process tool failures (i.e., flank and nose wear, crater and notch wear, edge fracture) have been investigated through the time-domain response of acceleration and statistical features. Recursive Feature Elimination with Cross-Validation (RFECV), with decision trees as the estimator, has been implemented for feature selection. Further, the competence of a standard SVM has been examined for tool health monitoring, followed by its optimization through swarm-based algorithms. A comparative analysis of the performance of five meta-heuristic algorithms (Elephant Herding Optimization, Monarch Butterfly Optimization, Harris Hawks Optimization, Slime Mould Algorithm, and Moth Search Algorithm) has been carried out. The white-box approach is presented considering global and local representations that provide insight into the performance of machine learning models in tool condition monitoring.

Cooperative Multi-UAV Coverage Mission Planning Platform for Remote Sensing Applications

Jan 19, 2022

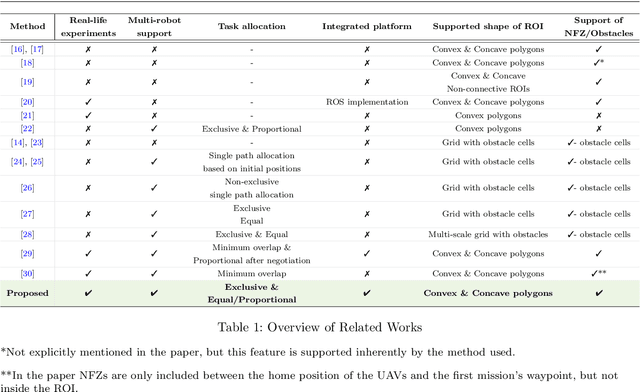

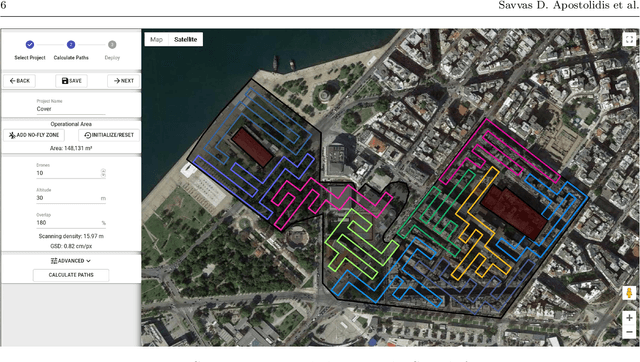

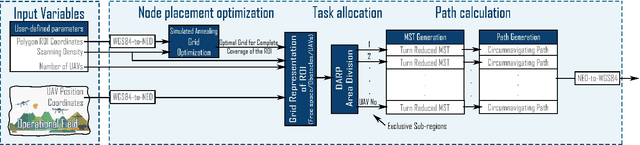

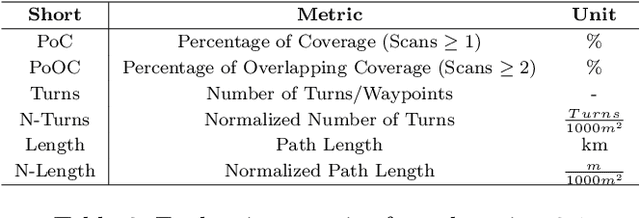

This paper proposes a novel mission planning platform capable of efficiently deploying a team of UAVs to cover complex-shaped areas in various remote sensing applications. Under the hood lies a novel optimization scheme for grid-based methods, utilizing the Simulated Annealing algorithm, that significantly increases the achieved percentage of coverage and improves the qualitative features of the generated paths. Extensive simulated evaluation against a state-of-the-art alternative methodology for coverage path planning (CPP) operations establishes the performance gains in terms of achieved coverage and overall duration of the generated missions. On top of that, the DARP algorithm is employed to allocate sub-tasks to each member of the swarm, taking into account each UAV's sensing and operational capabilities, the UAVs' initial positions, and any no-fly zones defined inside the operational area. This feature is of paramount importance in real-life applications, as it can substantially reduce the time needed to complete a mission, while at the same time unlocking a wide range of applications that were previously not feasible due to the limited battery life of UAVs. To investigate the actual efficiency gains introduced by multi-UAV utilization, a simulated study is performed as well. All of these capabilities are packed inside an end-to-end platform that eases the utilization of UAV swarms in remote sensing applications. Its versatility is demonstrated via two different real-life applications: (i) photogrammetry for precision agriculture and (ii) an indicative search-and-rescue mission for first responders, both performed with a swarm of commercial UAVs. The source code can be found at: https://github.com/savvas-ap/mCPP-optimized-DARP

* An implementation of the mCPP methodology introduced in this work, as well as a link for a demonstrative video and a link for a fully functional, on-line hosted instance of the presented platform can be found here: https://github.com/savvas-ap/mCPP-optimized-DARP

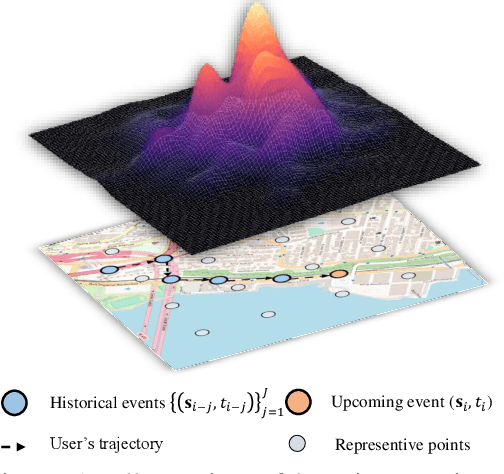

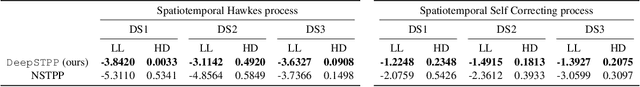

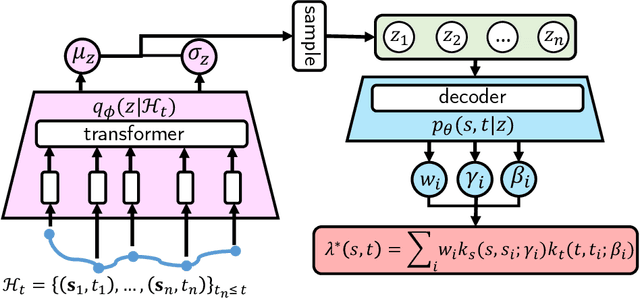

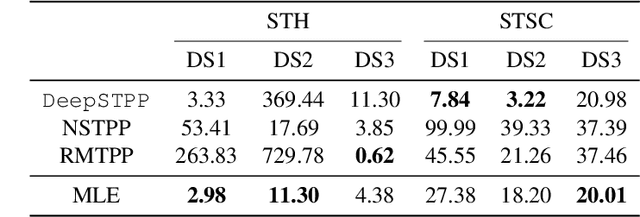

Neural Point Process for Learning Spatiotemporal Event Dynamics

Dec 12, 2021

Learning the dynamics of spatiotemporal events is a fundamental problem. Neural point processes enhance the expressivity of point process models with deep neural networks. However, most existing methods only consider temporal dynamics without spatial modeling. We propose Deep Spatiotemporal Point Process (DeepSTPP), a deep dynamics model that integrates spatiotemporal point processes. Our method is flexible, efficient, and can accurately forecast irregularly sampled events over space and time. The key construction of our approach is the nonparametric space-time intensity function, governed by a latent process. The intensity function enjoys closed-form integration for the density. The latent process captures the uncertainty of the event sequence. We use amortized variational inference to infer the latent process with deep networks. Using synthetic datasets, we validate that our model can accurately learn the true intensity function. On real-world benchmark datasets, our model demonstrates superior performance over state-of-the-art baselines.
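
To make the idea of a kernel-based space-time intensity with a closed-form integral concrete, here is a small sketch. The specific kernel choices (exponential decay in time, isotropic Gaussian in space), the fixed `weight`, `decay`, and `bandwidth` parameters, and the function names are assumptions for illustration; in the abstract's model such quantities would be governed by the latent process rather than fixed by hand.

```python
import math


def intensity(s, t, history, weight=1.0, decay=1.0, bandwidth=1.0):
    """Intensity at location s=(x, y) and time t, as a sum of kernels
    centered on past events (x_i, y_i, t_i) with t_i < t."""
    total = 0.0
    for x_i, y_i, t_i in history:
        if t_i >= t:
            continue  # only past events contribute
        temporal = math.exp(-decay * (t - t_i))
        d2 = (s[0] - x_i) ** 2 + (s[1] - y_i) ** 2
        spatial = math.exp(-d2 / (2 * bandwidth ** 2)) / (2 * math.pi * bandwidth ** 2)
        total += weight * temporal * spatial
    return total


def integrated_intensity(t_start, t_end, history, weight=1.0, decay=1.0):
    """Closed-form integral of the intensity over all of space and [t_start, t_end].

    The Gaussian spatial kernel integrates to 1, so only the exponential
    temporal kernel remains. Assumes every contributing event has t_i <= t_start.
    """
    total = 0.0
    for _, _, t_i in history:
        if t_i > t_start:
            continue
        total += (weight / decay) * (
            math.exp(-decay * (t_start - t_i)) - math.exp(-decay * (t_end - t_i))
        )
    return total
```

Because the integral has a closed form, the compensator term of the point-process likelihood can be evaluated without numerical quadrature over space and time.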

Decentralized Safe Multi-agent Stochastic Optimal Control using Deep FBSDEs and ADMM

Feb 22, 2022

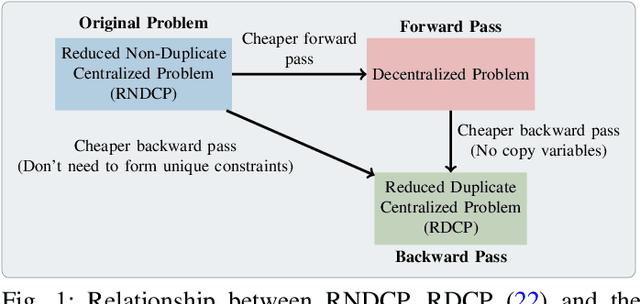

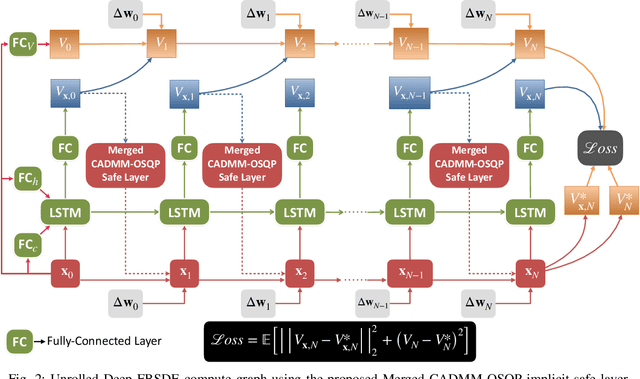

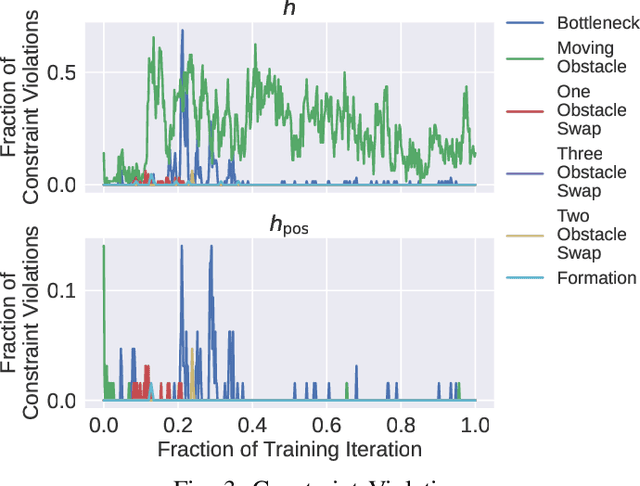

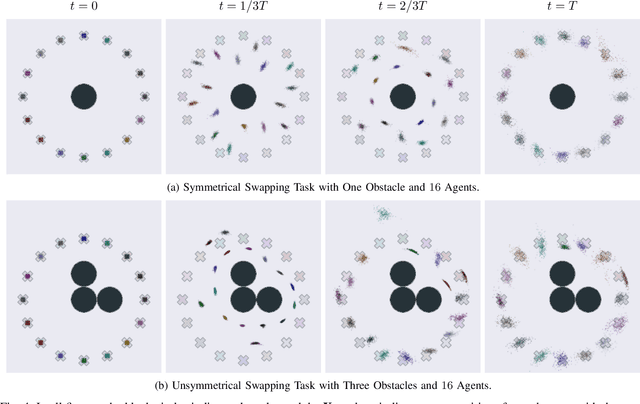

In this work, we propose a novel safe and scalable decentralized solution for multi-agent control in the presence of stochastic disturbances. Safety is mathematically encoded using stochastic control barrier functions, and safe controls are computed by solving quadratic programs. Decentralization is achieved by augmenting each agent's optimization variables with copy variables for its neighbors. This allows us to decouple the centralized multi-agent optimization problem. However, to ensure safety, neighboring agents must agree on "what is safe for both of us", and this creates a need for consensus. To enable safe consensus solutions, we incorporate an ADMM-based approach. Specifically, we propose a Merged CADMM-OSQP implicit neural network layer that solves a mini-batch of both the local quadratic programs and the overall consensus problem as a single optimization problem. This layer is embedded within a Deep FBSDEs network architecture at every time step to facilitate end-to-end differentiable, safe, and decentralized stochastic optimal control. The efficacy of the proposed approach is demonstrated on several challenging multi-robot tasks in simulation. By imposing requirements on safety specified by collision avoidance constraints, the safe operation of all agents is ensured during the entire training process. We also demonstrate superior scalability in terms of computational and memory savings as compared to a centralized approach.
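
The consensus mechanism mentioned above can be illustrated on a toy problem. The sketch below runs textbook consensus ADMM on scalar quadratic costs $\tfrac{1}{2}(x_i - a_i)^2$, where each agent keeps a local copy $x_i$ and all copies are driven to agree on a shared value $z$. It is not the Merged CADMM-OSQP layer from the abstract; the function name, the cost, and the parameters `rho` and `iterations` are assumptions for this example.

```python
def consensus_admm(targets, rho=1.0, iterations=100):
    """Minimize sum_i 0.5 * (x_i - a_i)^2 subject to x_i = z for all agents i."""
    n = len(targets)
    x = [0.0] * n   # local copies, one per agent
    u = [0.0] * n   # scaled dual variables, one per agent
    z = 0.0         # shared consensus variable
    for _ in range(iterations):
        # Local step: each agent solves its own small problem in closed form.
        x = [(a + rho * (z - u_i)) / (1.0 + rho) for a, u_i in zip(targets, u)]
        # Consensus step: average the local proposals.
        z = sum(x_i + u_i for x_i, u_i in zip(x, u)) / n
        # Dual step: accumulate each agent's disagreement with the consensus.
        u = [u_i + x_i - z for x_i, u_i in zip(x, u)]
    return x, z
```

For this cost the consensus value converges to the average of the targets; for example, `consensus_admm([1.0, 2.0, 6.0])` drives `z` toward 3. The local step only needs each agent's own data plus the current `z`, which is what makes the scheme decentralized.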

Conditional Generation Net for Medication Recommendation

Feb 18, 2022

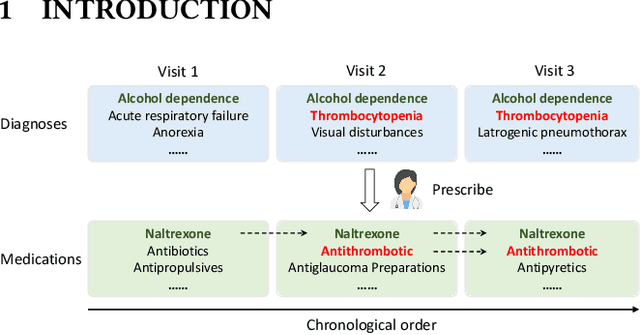

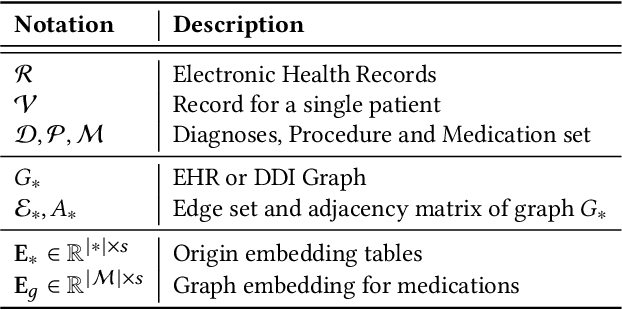

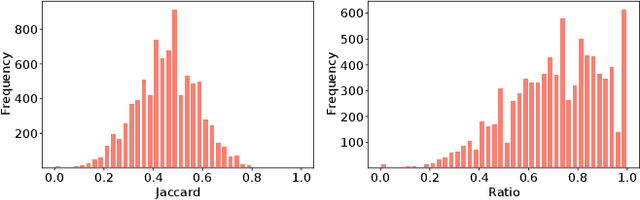

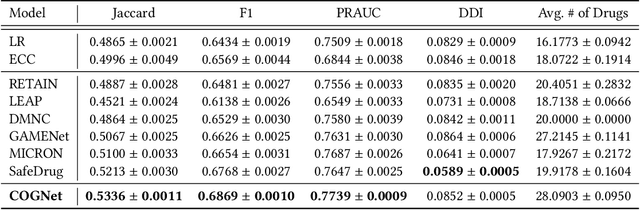

Medication recommendation aims to provide a proper set of medicines according to patients' diagnoses, which is a critical task in clinics. Currently, the recommendation is conducted manually by doctors. However, for complicated cases, such as patients with multiple diseases at the same time, it is difficult even for experienced doctors to propose a well-considered recommendation. This motivates automatic medication recommendation, which can help treat the diagnosed diseases without causing harmful drug-drug interactions. Due to its clinical value, medication recommendation has attracted growing research interest. Existing works mainly formulate medication recommendation as a multi-label classification task to predict the set of medicines. In this paper, we propose the Conditional Generation Net (COGNet), which introduces a novel copy-or-predict mechanism to generate the set of medicines. Given a patient, the proposed model first retrieves his or her historical diagnoses and medication recommendations and mines their relationship with the current diagnoses. Then, in predicting each medicine, the proposed model decides whether to copy a medicine from previous recommendations or to predict a new one. This process is quite similar to the decision process of human doctors. We validate the proposed model on the public MIMIC data set, and the experimental results show that the proposed model can outperform state-of-the-art approaches.

An unsupervised extractive summarization method based on multi-round computation

Dec 15, 2021

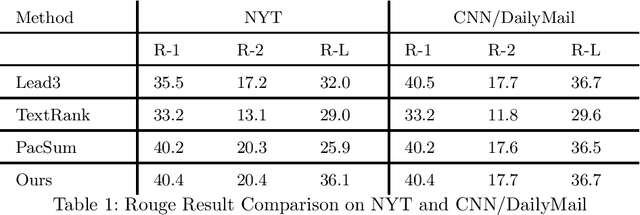

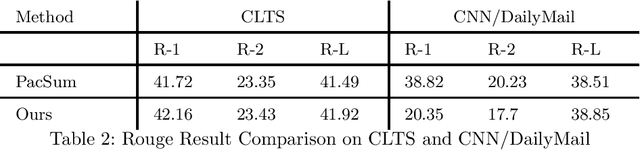

Text summarization methods have long attracted attention. In recent years, deep learning has been applied to text summarization and has proven effective. However, most current deep-learning-based text summarization methods need large-scale datasets, which are difficult to obtain in practical applications. In this paper, an unsupervised extractive text summarization method based on multi-round calculation is proposed. Based on the directed graph algorithm, we replace the traditional one-shot calculation of the sentence ranking with a multi-round calculation, and the summary sentences are dynamically optimized after each round to better match the characteristics of the text. Experiments are carried out on four data sets, which separately contain Chinese, English, long, and short texts. The experimental results show that our method performs better than both baseline methods and other unsupervised methods, and is robust across different datasets.

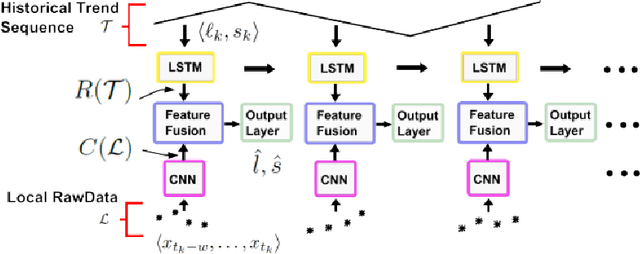

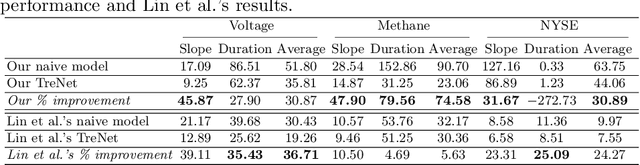

An analysis of deep neural networks for predicting trends in time series data

Sep 22, 2020

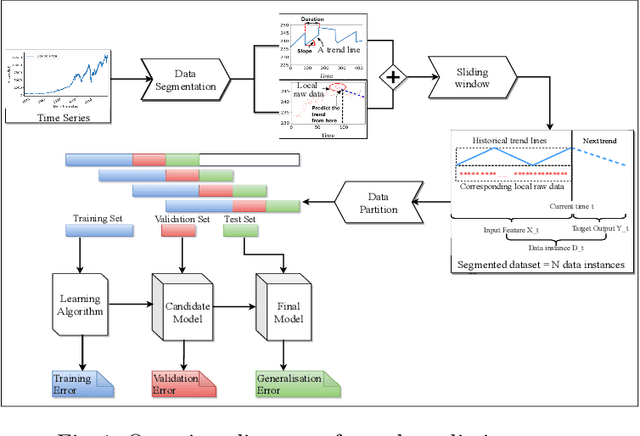

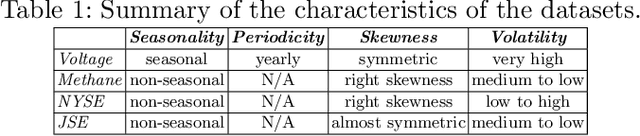

Recently, a hybrid Deep Neural Network (DNN) algorithm, TreNet, was proposed for predicting trends in time series data. While TreNet was shown to have superior performance for trend prediction compared to other DNN and traditional ML approaches, the validation method used did not take into account the sequential nature of time series data sets and did not deal with model update. In this research, we replicated the TreNet experiments on the same data sets using a walk-forward validation method and tested our optimal model over multiple independent runs to evaluate model stability. We compared the performance of the hybrid TreNet algorithm on four data sets to vanilla DNN algorithms that take in point data, and also to traditional ML algorithms. We found that in general TreNet still performs better than the vanilla DNN models, but not on all data sets as reported in the original TreNet study. This study highlights the importance of using an appropriate validation method and evaluating model stability when evaluating and developing machine learning models for trend prediction in time series data.
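
A minimal sketch of walk-forward validation, assuming an expanding training window and fixed-size test blocks; the abstract does not state the window sizes or whether the window expands or slides, so the function name and its parameters are illustrative only.

```python
def walk_forward_splits(n_samples, initial_train_size, test_size):
    """Yield (train_indices, test_indices) pairs in time order.

    Each test block lies strictly after its training window, and the
    training window grows to absorb the previous test block, which
    mimics updating the model as new observations arrive.
    """
    start = initial_train_size
    while start + test_size <= n_samples:
        train = list(range(0, start))
        test = list(range(start, start + test_size))
        yield train, test
        start += test_size
```

With 10 samples, an initial window of 4, and test blocks of 2, this yields three folds whose test blocks are indices 4 to 5, 6 to 7, and 8 to 9. Unlike shuffled cross-validation, no fold ever trains on observations that come after the ones it is tested on.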

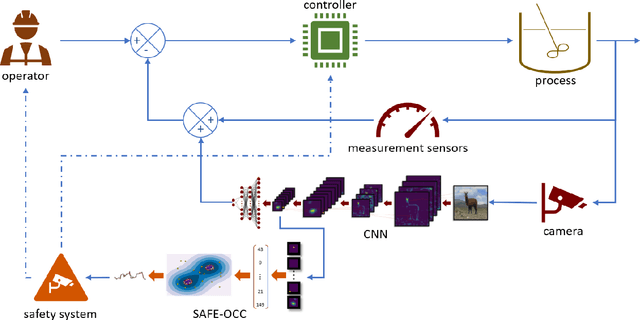

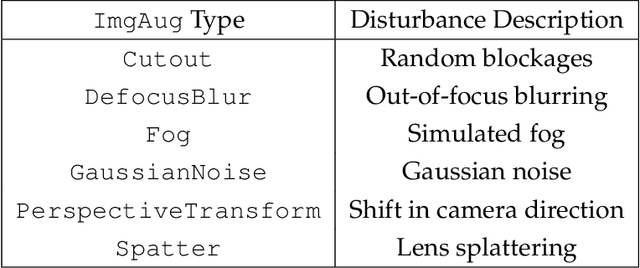

SAFE-OCC: A Novelty Detection Framework for Convolutional Neural Network Sensors and its Application in Process Control

Feb 03, 2022

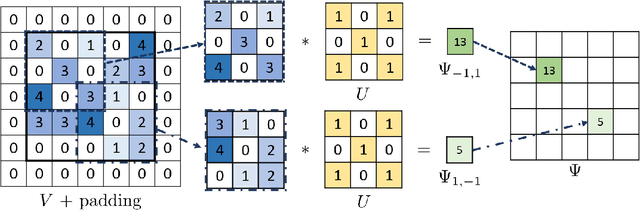

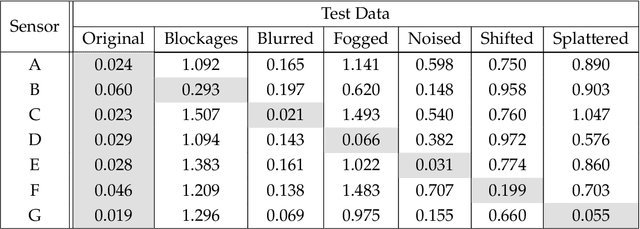

We present a novelty detection framework for Convolutional Neural Network (CNN) sensors that we call Sensor-Activated Feature Extraction One-Class Classification (SAFE-OCC). We show that this framework enables the safe use of computer vision sensors in process control architectures. Emergent control applications use CNN models to map visual data to a state signal that can be interpreted by the controller. Incorporating such sensors introduces a significant system operation vulnerability because CNN sensors can exhibit high prediction errors when exposed to novel (abnormal) visual data. Unfortunately, identifying such novelties in real time is nontrivial. To address this issue, the SAFE-OCC framework leverages the convolutional blocks of the CNN to create an effective feature space in which to conduct novelty detection using a desired one-class classification technique. This approach yields a feature space that directly corresponds to that used by the CNN sensor and avoids the need to derive an independent latent space. We demonstrate the effectiveness of SAFE-OCC via simulated control environments.
