"Time": models, code, and papers

Weighted Random Cut Forest Algorithm for Anomaly Detections

Feb 01, 2022

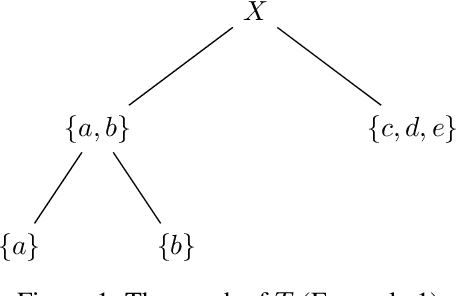

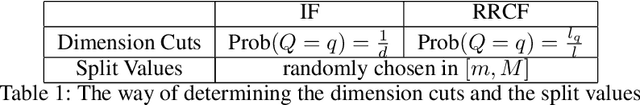

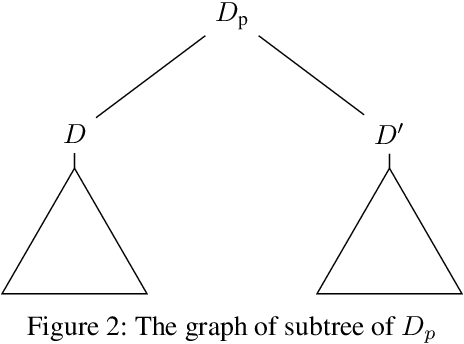

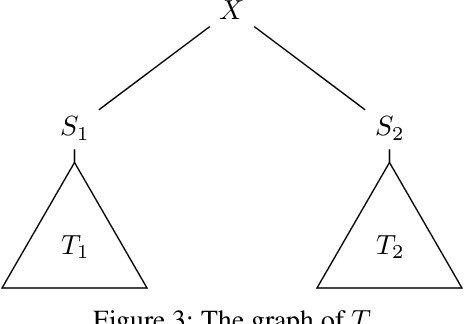

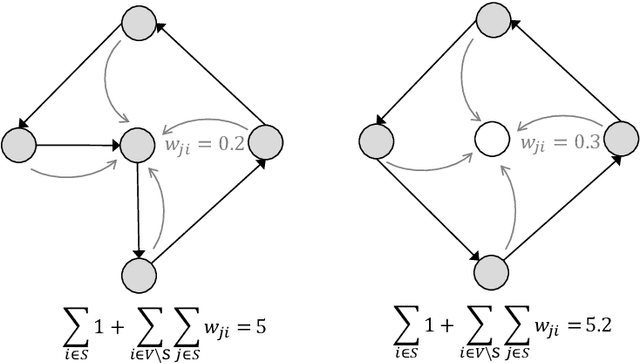

Random cut forest (RCF) algorithms have been developed for anomaly detection, particularly in time-series data. The RCF algorithm is an improved version of the isolation forest algorithm. Unlike the isolation forest algorithm, the RCF algorithm can determine whether a real-time input is anomalous by inserting the input into the constructed tree network. Various RCF algorithms have been developed, including Robust RCF (RRCF), in which the cutting procedure is chosen adaptively and probabilistically. RRCF performs better than the isolation forest because the cutting dimension is decided based on the geometric range of the data. The adaptive cutting algorithm of RRCF does not, however, consider the overall data structure. In this paper, we propose a new RCF, the weighted RCF (WRCF). To introduce the WRCF, we first introduce a new geometric measure, a *density measure*, which is crucial for the construction of the WRCF. We provide various mathematical properties of the density measure. The proposed WRCF also cuts the tree network adaptively, but with consideration of the denseness of the data. The proposed method is more efficient when the data is structured and achieves the desired anomaly score more rapidly than the RRCF. We provide theorems that prove our claims, along with numerical examples.
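As a rough illustration of the range-based cutting that the abstract attributes to RRCF, the sketch below picks a cut dimension with probability proportional to that dimension's range and then draws a uniform cut value inside it. It does not implement the proposed WRCF or its density measure, which the abstract does not specify; the function names `choose_cut` and `split` are hypothetical.

```python
import random


def choose_cut(points, rng):
    """Pick a cut dimension with probability proportional to its range,
    then a cut value uniformly within that range (RRCF-style rule)."""
    dims = len(points[0])
    lows = [min(p[d] for p in points) for d in range(dims)]
    highs = [max(p[d] for p in points) for d in range(dims)]
    ranges = [hi - lo for lo, hi in zip(lows, highs)]
    if sum(ranges) == 0:
        raise ValueError("all points are identical; no cut is possible")
    # Dimensions with a wider spread are more likely to be cut.
    dim = rng.choices(range(dims), weights=ranges, k=1)[0]
    cut = rng.uniform(lows[dim], highs[dim])
    return dim, cut


def split(points, dim, cut):
    """Partition points into those at or below the cut and those above it."""
    left = [p for p in points if p[dim] <= cut]
    right = [p for p in points if p[dim] > cut]
    return left, right


# Toy data: a tight cluster plus one point far away along dimension 0.
rng = random.Random(0)
data = [(0.1, 0.2), (0.2, 0.1), (0.15, 0.25), (9.0, 0.2)]
dim, cut = choose_cut(data, rng)
left, right = split(data, dim, cut)
```

Because dimension 0 has by far the largest range in the toy data, most cuts land there and tend to separate the distant point early, which is the intuition behind shallow tree depth indicating an anomaly.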

Assimilation of Satellite Active Fires Data

Apr 01, 2022

Wildland fires pose an increasingly serious problem in our society. The number and severity of these fires have been rising for many years. Wildfires pose direct threats to life and property as well as threats through ancillary effects like reduced air quality. The aim of this thesis is to develop techniques to help combat the impacts of wildfires by improving wildfire modeling capabilities by using satellite fire observations. Already much work has been done in this direction by other researchers. Our work seeks to expand the body of knowledge using mathematically sound methods to utilize information about wildfires that considers the uncertainties inherent in the satellite data. In this thesis we explore methods for using satellite data to help initialize and steer wildfire simulations. In particular, we develop a method for constructing the history of a fire, a new technique for assimilating wildfire data, and a method for modifying the behavior of a modeled fire by inferring information about the fuels in the fire domain. These goals rely on being able to estimate the time a fire first arrived at every location in a geographic region of interest. Because detailed knowledge of real wildfires is typically unavailable, the basic procedure for developing and testing the methods in this thesis will be to first work with simulated data so that the estimates produced can be compared with known solutions. The methods thus developed are then applied to real-world scenarios. Analysis of these scenarios shows that the work with constructing the history of fires and data assimilation improves fire modeling capabilities. The research is significant because it gives us a better understanding of the capabilities and limitations of using satellite data to inform wildfire models and it points the way towards new avenues for modeling fire behavior.

Coach-assisted Multi-Agent Reinforcement Learning Framework for Unexpected Crashed Agents

Mar 16, 2022

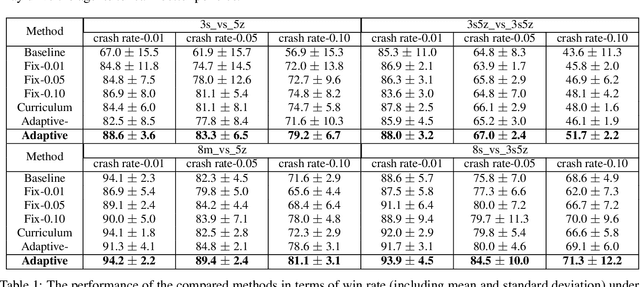

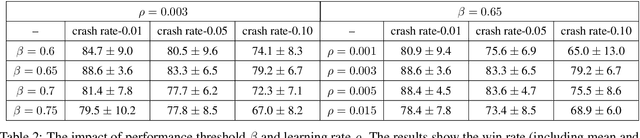

Multi-agent reinforcement learning is difficult to apply in practice, partly because of the gap between simulated and real-world scenarios. One reason for the gap is that simulated systems always assume that the agents work normally all the time, while in practice, one or more agents may unexpectedly "crash" during the coordination process due to inevitable hardware or software failures. Such crashes destroy the cooperation among agents, leading to performance degradation. In this work, we present a formal formulation of a cooperative multi-agent reinforcement learning system with unexpected crashes. To enhance the robustness of the system to crashes, we propose a coach-assisted multi-agent reinforcement learning framework, which introduces a virtual coach agent to adjust the crash rate during training. We design three coaching strategies and a re-sampling strategy for our coach agent. To the best of our knowledge, this work is the first to study unexpected crashes in a multi-agent system. Extensive experiments on grid-world and StarCraft II micromanagement tasks demonstrate the efficacy of the adaptive strategy compared with the fixed crash rate strategy and the curriculum learning strategy. The ablation study further illustrates the effectiveness of our re-sampling strategy.

Mission planning for emergency rapid mapping with drones

Mar 02, 2022

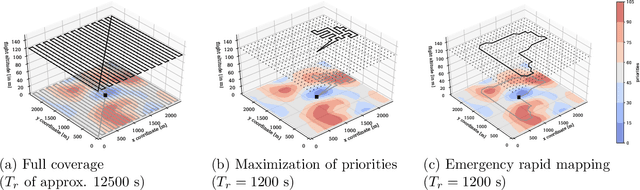

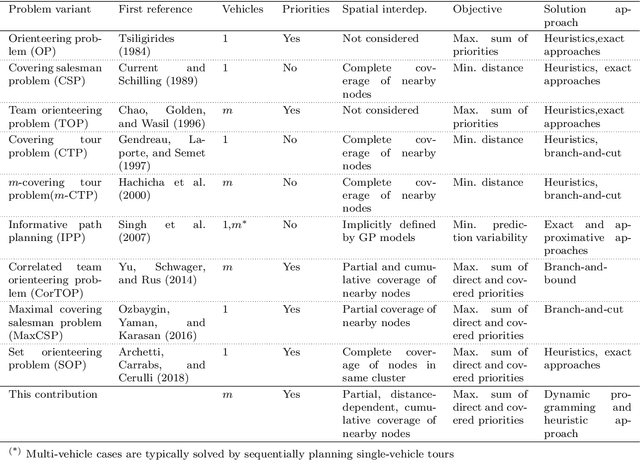

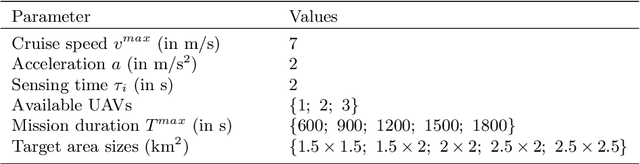

We introduce a mission planning concept for routing unmanned aerial vehicles (UAVs) through a set of sampling locations in the immediate aftermath of an incident such as a fire or chemical accident. Using interpolation methods that account for the spatial interdependencies inherent in the surveyed phenomenon, these samples allow predicting the distribution of hazardous substances across the affected area. We define the generalized correlated team orienteering problem (GCorTOP) for selecting informative samples considering spatial correlations between observed and unobserved locations as well as priorities in the surveyed area. To quickly provide high-quality solutions in time-sensitive situations, we propose a two-phase multi-start adaptive large neighborhood search (2MLS). We show the competitiveness of the solution approach using benchmark instances for the team orienteering problem and investigate the performance of the proposed models and solution approach in an extensive study based on newly introduced benchmark instances for the mission planning problem.

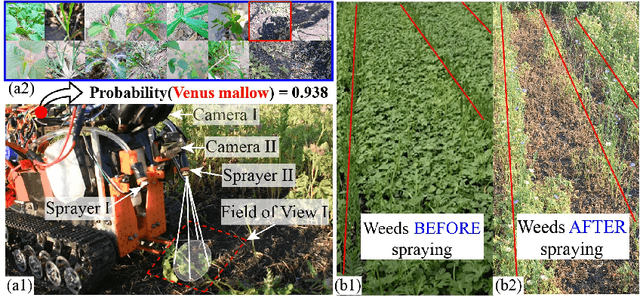

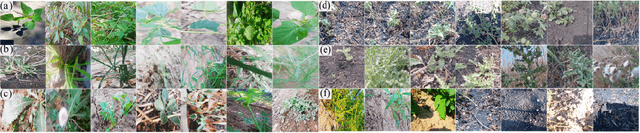

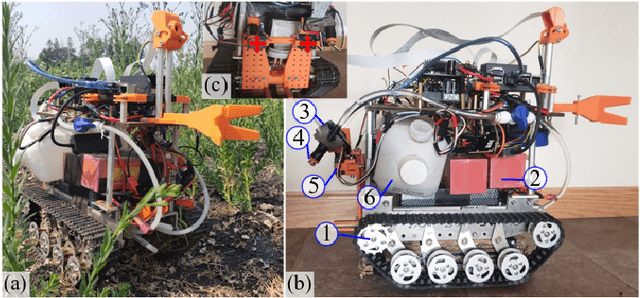

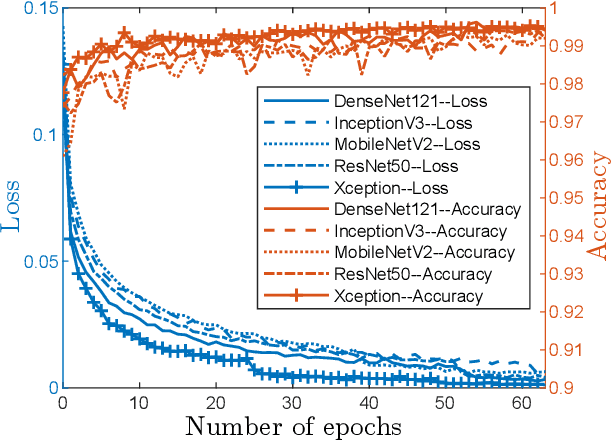

Deep-CNN based Robotic Multi-Class Under-Canopy Weed Control in Precision Farming

Dec 28, 2021

Smart weeding systems to perform plant-specific operations can contribute to the sustainability of agriculture and the environment. Despite monumental advances in autonomous robotic technologies for precision weed management in recent years, work on under-canopy weeding in fields is yet to be realized. A prerequisite of such systems is reliable detection and classification of weeds to avoid mistakenly spraying and, thus, damaging the surrounding plants. Real-time multi-class weed identification enables species-specific treatment of weeds and significantly reduces the amount of herbicide use. Here, our first contribution is the first adequately large realistic image dataset *AIWeeds* (one/multiple kinds of weeds in one image), a library of about 10,000 annotated images of flax, and the 14 most common weeds in fields and gardens taken from 20 different locations in North Dakota, California, and Central China. Second, we provide a full pipeline from model training with maximum efficiency to deploying the TensorRT-optimized model onto a single board computer. Based on *AIWeeds* and the pipeline, we present a baseline for classification performance using five benchmark CNN models. Among them, MobileNetV2, with both the shortest inference time and lowest memory consumption, is the qualified candidate for real-time applications. Finally, we deploy MobileNetV2 onto our own compact autonomous robot *SAMBot* for real-time weed detection. The 90% test accuracy realized in previously unseen scenes in flax fields (with a row spacing of 0.2-0.3 m), with crops and weeds, distortion, blur, and shadows, is a milestone towards precision weed control in the real world. We have publicly released the dataset and code to generate the results at https://github.com/StructuresComp/Multi-class-Weed-Classification.

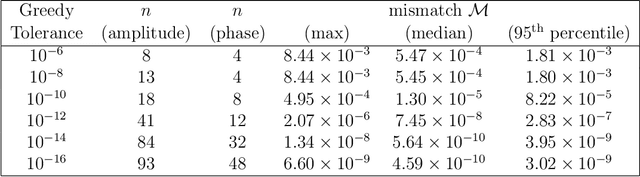

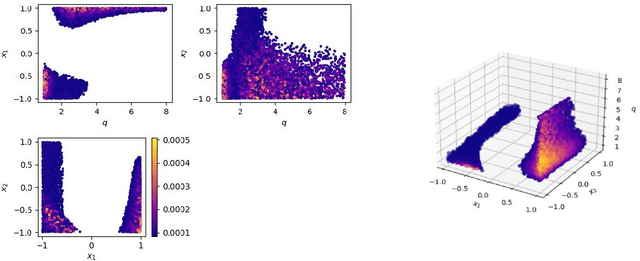

Deep Residual Error and Bag-of-Tricks Learning for Gravitational Wave Surrogate Modeling

Mar 16, 2022

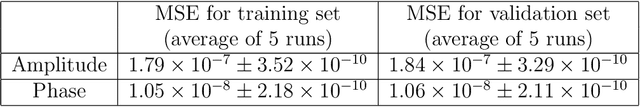

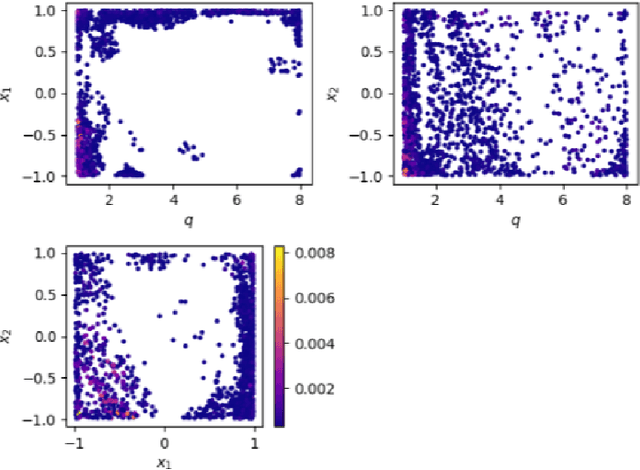

Deep learning methods have been employed in gravitational-wave astronomy to accelerate the construction of surrogate waveforms for the inspiral of spin-aligned black hole binaries, among other applications. We demonstrate that the residual error of an artificial neural network that models the coefficients of the surrogate waveform expansion (especially those of the phase of the waveform) has sufficient structure to be learnable by a second network. Adding this second network, we were able to reduce the maximum mismatch for waveforms in a validation set by more than an order of magnitude. We also explored several other ideas for improving the accuracy of the surrogate model, such as the exploitation of similarities between waveforms, the augmentation of the training set, the dissection of the input space, the use of dedicated networks per output coefficient, and output augmentation. In several cases, small improvements can be observed, but the most significant improvement still comes from the addition of a second network that models the residual error. Since the residual error for more general surrogate waveform models (e.g., when eccentricity is included) may also have a specific structure, one can expect our method to be applicable to cases where the gain in accuracy could lead to significant gains in computational time.
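The residual-learning idea can be illustrated with a toy example: fit a first model, compute its residual error, fit a second model to that residual, and add the two predictions. The sketch below is only an analogy under stated assumptions. It replaces the paper's neural networks with a least-squares line and a binned-mean corrector, and the target function, bin count, and helper names (`fit_line`, `fit_binned_mean`, `mse`) are invented for illustration.

```python
import math


def fit_line(xs, ys):
    """First model: ordinary least-squares straight line."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept


def fit_binned_mean(xs, residuals, n_bins):
    """Second model: piecewise-constant fit (mean residual per bin)."""
    lo, hi = min(xs), max(xs)
    width = (hi - lo) / n_bins

    def bin_of(x):
        # Clamp so that x == hi (or slightly outside) maps to a valid bin.
        return max(0, min(int((x - lo) / width), n_bins - 1))

    sums = [0.0] * n_bins
    counts = [0] * n_bins
    for x, r in zip(xs, residuals):
        sums[bin_of(x)] += r
        counts[bin_of(x)] += 1
    means = [s / c if c else 0.0 for s, c in zip(sums, counts)]
    return lambda x: means[bin_of(x)]


def mse(model, xs, ys):
    """Mean squared error of a model on the given samples."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Toy target: a linear trend plus a structured term the line cannot capture.
xs = [2.0 * i / 199 for i in range(200)]
ys = [2.0 * x + 0.5 * math.sin(3.0 * x) for x in xs]

base = fit_line(xs, ys)
residuals = [y - base(x) for x, y in zip(xs, ys)]
corrector = fit_binned_mean(xs, residuals, n_bins=20)


def combined(x):
    """Base prediction plus the learned residual correction."""
    return base(x) + corrector(x)


mse_base = mse(base, xs, ys)
mse_combined = mse(combined, xs, ys)
```

On this toy data the combined model has a lower training error than the line alone, because the leftover error is smooth and structured rather than noise. That is the property the abstract reports for the first network's residual.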

Collaborative Intelligent Reflecting Surface Networks with Multi-Agent Reinforcement Learning

Mar 26, 2022

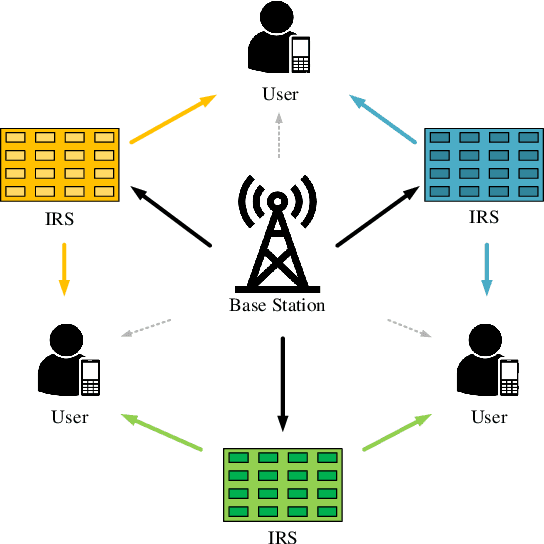

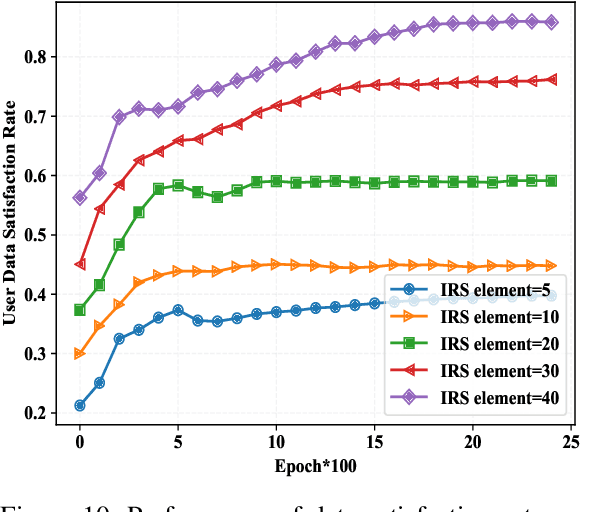

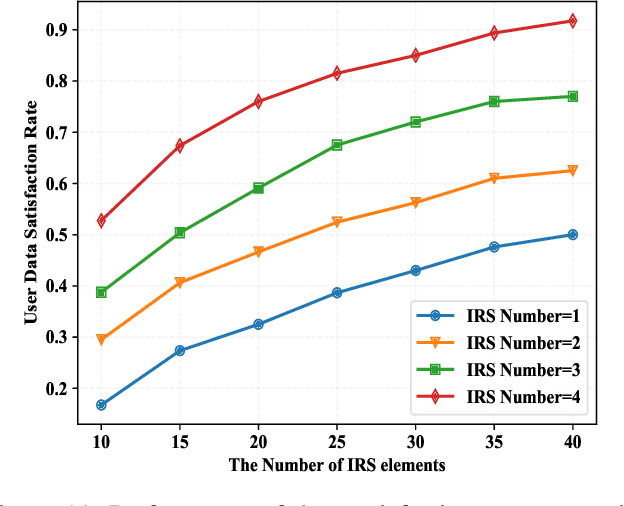

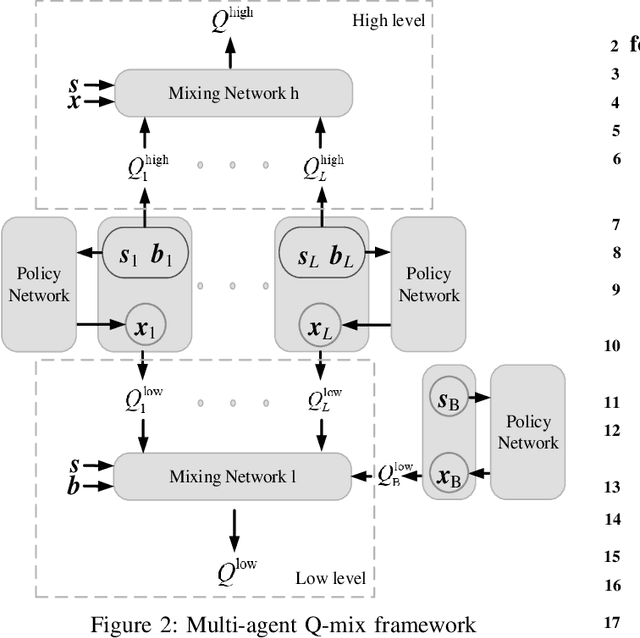

Intelligent reflecting surfaces (IRSs) are envisioned to be widely applied in future wireless networks. In this paper, we investigate a multi-user communication system assisted by cooperative IRS devices with the capability of energy harvesting. Aiming to maximize the long-term average achievable system rate, an optimization problem is formulated by jointly designing the transmit beamforming at the base station (BS) and discrete phase shift beamforming at the IRSs, with constraints on transmit power, user data rate requirement, and IRS energy buffer size. Considering time-varying channels and stochastic arrivals of energy harvested by the IRSs, we first formulate the problem as a Markov decision process (MDP) and then develop a novel multi-agent Q-mix (MAQ) framework with two layers to decouple the optimization parameters. The higher layer optimizes phase shift resolutions, and the lower one handles phase shift beamforming and power allocation. Since the phase shift optimization is an integer programming problem with a large-scale action space, we improve MAQ by incorporating the Wolpertinger method, yielding the MAQ-WP algorithm, which achieves a sub-optimal solution with a reduced action-space dimension. In addition, as MAQ-WP still requires high complexity to achieve good performance, we propose a policy gradient-based MAQ algorithm, MAQ-PG, which maps the discrete phase shift actions into a continuous space at the cost of a slight performance loss. Simulation results demonstrate that the proposed MAQ-WP and MAQ-PG algorithms converge faster and achieve data rate improvements of 10.7% and 8.8% over the conventional multi-agent DDPG, respectively.

Brain-Computer-Interface controlled robot via RaspberryPi and PiEEG

Feb 04, 2022

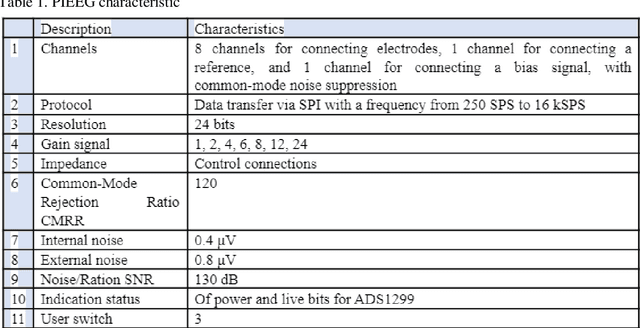

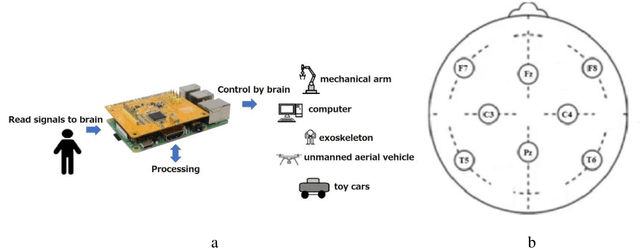

This paper presents open-source software and a shield board developed for the Raspberry Pi family of single-board computers that can be used to read EEG signals. We describe the mechanism for reading EEG signals and decomposing them into a Fourier series, and provide examples of controlling LEDs and a toy robot by blinking. Finally, we discuss the prospects of the brain-computer interface for the near future and consider various methods for controlling external mechanical objects using real-time EEG signals.
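To make the Fourier-decomposition step concrete, here is a minimal sketch that computes a naive discrete Fourier transform of a sampled signal and reports its dominant frequency. It is not the paper's software. The 250 Hz sample rate, the synthetic test signal, and the function names are assumptions chosen for illustration, and a real pipeline would more likely use an FFT library than this O(N²) loop.

```python
import cmath
import math


def dft_magnitudes(samples):
    """Magnitudes of the DFT for bins 0..N/2 (naive O(N^2) implementation)."""
    n = len(samples)
    return [
        abs(sum(s * cmath.exp(-2j * math.pi * k * i / n)
                for i, s in enumerate(samples)))
        for k in range(n // 2 + 1)
    ]


def dominant_frequency(samples, sample_rate_hz):
    """Frequency (Hz) of the strongest non-DC component."""
    mags = dft_magnitudes(samples)
    # Skip bin 0 (the DC offset), which often dominates raw recordings.
    k = max(range(1, len(mags)), key=lambda i: mags[i])
    return k * sample_rate_hz / len(samples)


# Synthetic one-second "recording": a 10 Hz rhythm plus weaker 50 Hz interference.
SAMPLE_RATE_HZ = 250  # assumed value, not taken from the paper
signal = [
    math.sin(2 * math.pi * 10 * t / SAMPLE_RATE_HZ)
    + 0.2 * math.sin(2 * math.pi * 50 * t / SAMPLE_RATE_HZ)
    for t in range(SAMPLE_RATE_HZ)
]
peak_hz = dominant_frequency(signal, SAMPLE_RATE_HZ)
```

A control loop could then compare the power in selected frequency bins against a threshold to trigger an action, though the thresholds and bands used for blink detection are not given in the abstract.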

Counterfactually Evaluating Explanations in Recommender Systems

Mar 02, 2022

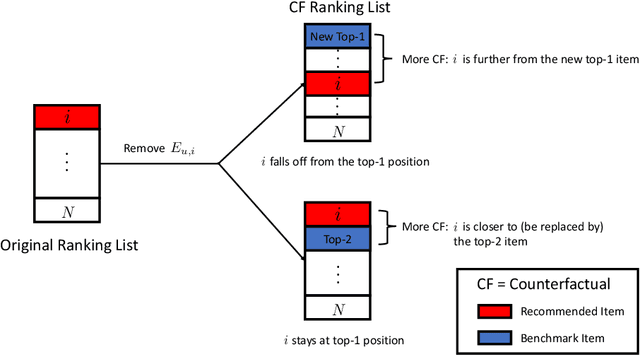

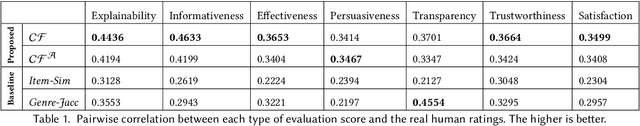

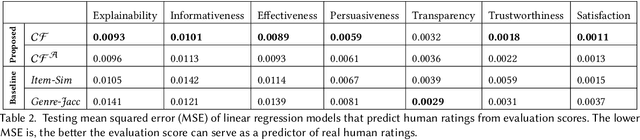

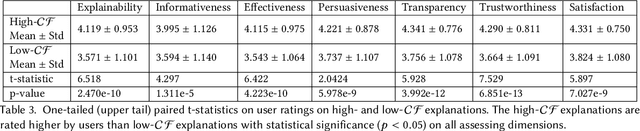

Modern recommender systems face an increasing need to explain their recommendations. Despite considerable progress in this area, evaluating the quality of explanations remains a significant challenge for researchers and practitioners. Prior work mainly relies on human studies to evaluate explanation quality, which are usually expensive, time-consuming, and prone to human bias. In this paper, we propose an offline evaluation method that can be computed without human involvement. To evaluate an explanation, our method quantifies its counterfactual impact on the recommendation. To validate the effectiveness of our method, we carry out an online user study. We show that, compared to conventional methods, our method produces evaluation scores that correlate more strongly with real human judgments, and can therefore serve as a better proxy for human evaluation. In addition, we show that explanations with high evaluation scores are considered better by humans. Our findings highlight the promising direction of using the counterfactual approach as one possible way to evaluate recommendation explanations.

Applications of Online Nonnegative Matrix Factorization to Image and Time-Series Data

Nov 10, 2020

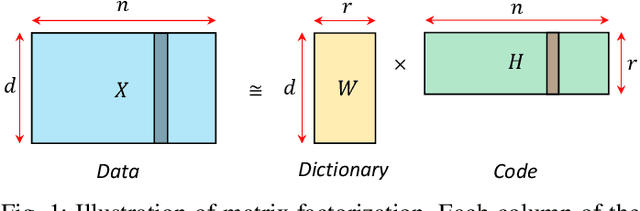

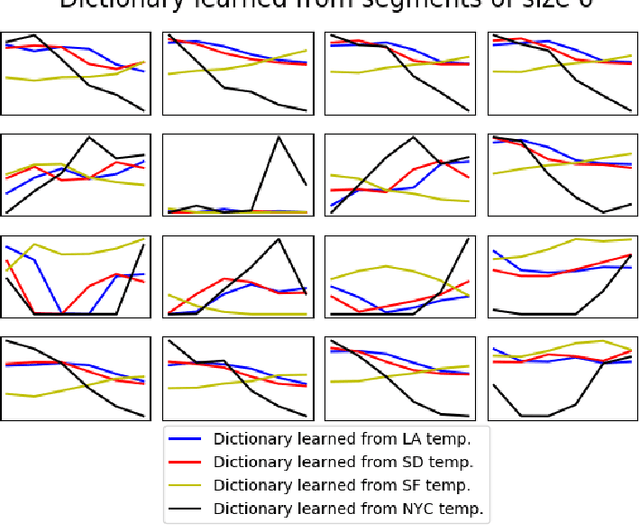

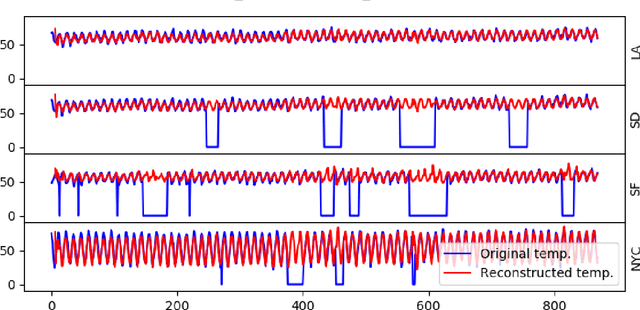

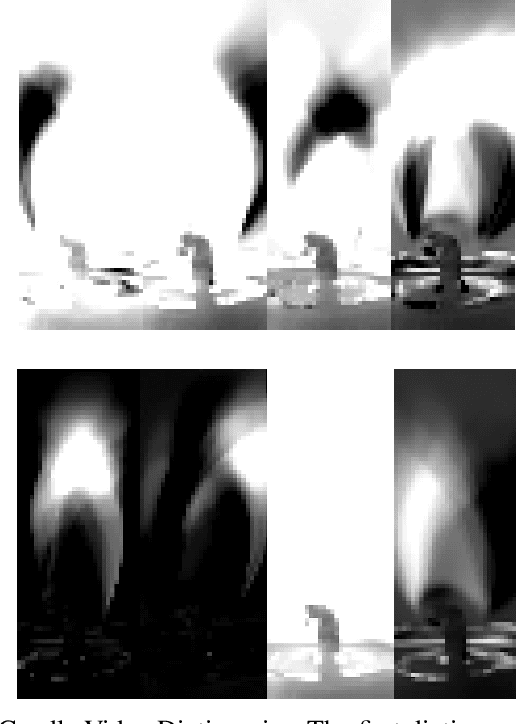

Online nonnegative matrix factorization (ONMF) is a matrix factorization technique in the online setting where data are acquired in a streaming fashion and the matrix factors are updated each time. This enables factor analysis to be performed concurrently with the arrival of new data samples. In this article, we demonstrate how one can use online nonnegative matrix factorization algorithms to learn joint dictionary atoms from an ensemble of correlated data sets. We propose a temporal dictionary learning scheme for time-series data sets, based on ONMF algorithms. We demonstrate our dictionary learning technique in the application contexts of historical temperature data, video frames, and color images.

* 9 pages, 8 figures
