"Time": models, code, and papers

A Human-Centered Machine-Learning Approach for Muscle-Tendon Junction Tracking in Ultrasound Images

Feb 10, 2022

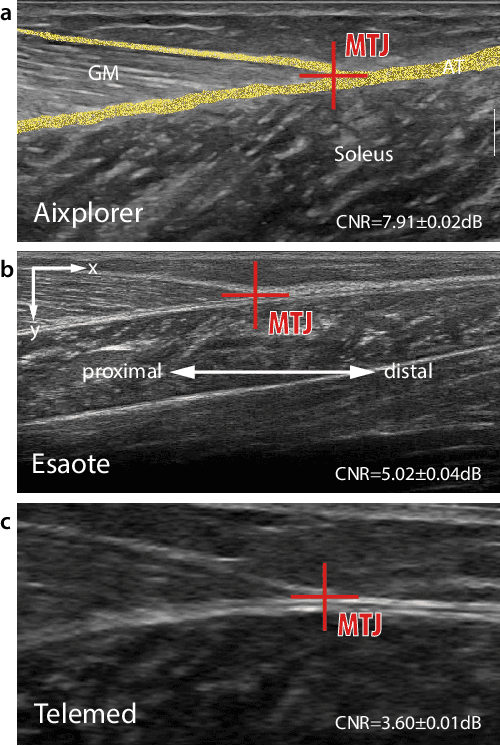

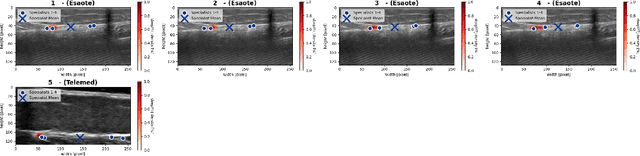

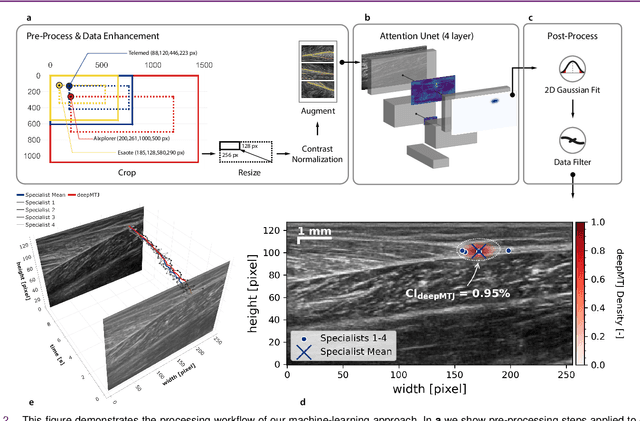

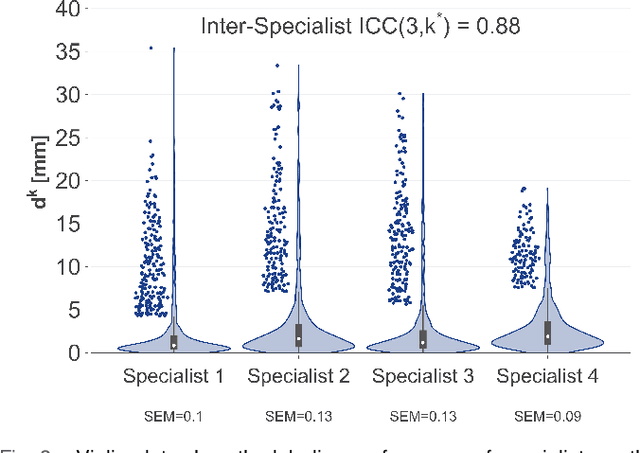

Biomechanical and clinical gait research observes muscles and tendons in limbs to study their functions and behaviour. Therefore, movements of distinct anatomical landmarks, such as muscle-tendon junctions, are frequently measured. We propose a reliable and time-efficient machine-learning approach to track these junctions in ultrasound videos and support clinical biomechanists in gait analysis. To facilitate this process, we introduce a method based on deep learning. We gathered an extensive dataset covering 3 functional movements and 2 muscles, collected on 123 healthy and 38 impaired subjects with 3 different ultrasound systems, and providing a total of 66864 annotated ultrasound images for network training. Furthermore, we used data collected across independent laboratories and curated by researchers with varying levels of experience. To evaluate our method, we selected a diverse test set that was independently verified by four specialists. We show that our model achieves performance scores similar to those of the four human specialists in identifying the muscle-tendon junction position. Our method provides time-efficient tracking of muscle-tendon junctions, with prediction times of up to 0.078 seconds per frame (approx. 100 times faster than manual labeling). All our code, trained models and the test set are publicly available, and our model is provided as a free-to-use online service at https://deepmtj.org/.

P-split formulations: A class of intermediate formulations between big-M and convex hull for disjunctive constraints

Feb 10, 2022

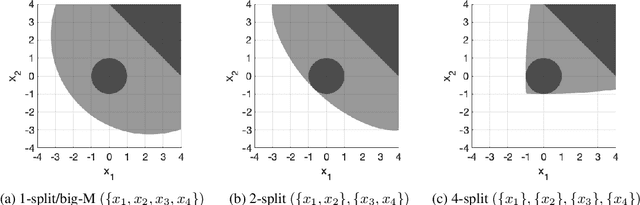

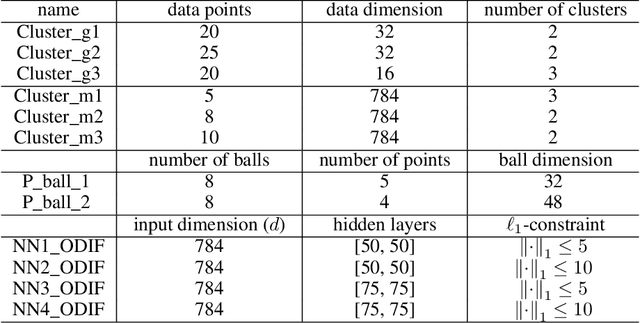

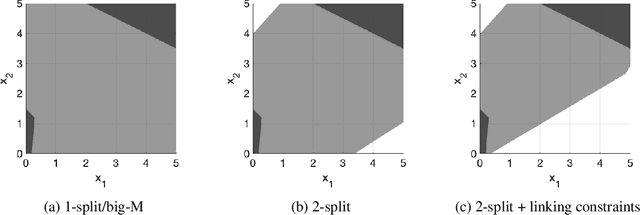

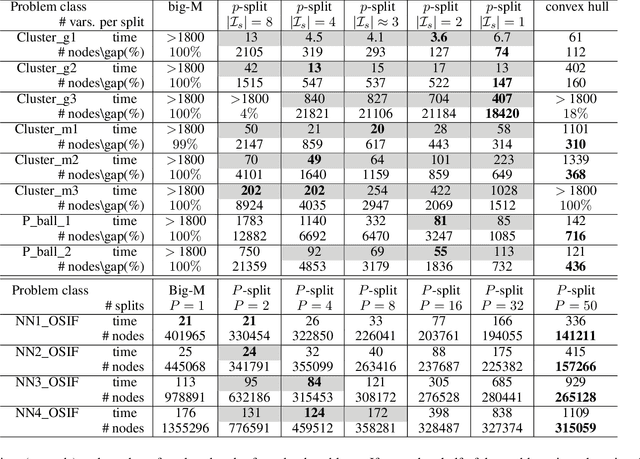

We develop a class of mixed-integer formulations for disjunctive constraints intermediate to the big-M and convex hull formulations in terms of relaxation strength. The main idea is to capture the best of both the big-M and convex hull formulations: a computationally light formulation with a tight relaxation. The "$P$-split" formulations are based on a lifted transformation that splits convex additively separable constraints into $P$ partitions and forms the convex hull of the linearized and partitioned disjunction. We analyze the continuous relaxation of the $P$-split formulations and show that, under certain assumptions, the formulations form a hierarchy starting from a big-M equivalent and converging to the convex hull. The goal of the $P$-split formulations is to form a strong approximation of the convex hull through a computationally simpler formulation. We computationally compare the $P$-split formulations against big-M and convex hull formulations on 320 test instances. The test problems include K-means clustering, P_ball problems, and optimization over trained ReLU neural networks. The computational results show promising potential of the $P$-split formulations. For many of the test problems, $P$-split formulations are solved with a similar number of explored nodes as the convex hull formulation, while reducing the solution time by an order of magnitude and outperforming big-M both in time and number of explored nodes.
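For orientation, the two endpoints that the abstract contrasts can be written out for the simplest case. The block below is a textbook sketch, not the paper's notation: it assumes a disjunction in which each disjunct is a single linear constraint over a box-bounded $x$, and the symbols $a_l$, $b_l$, $M_l$, $\lambda_l$ and $\nu^l$ are illustrative choices.

```latex
% Disjunction: at least one linear constraint must hold (illustrative notation)
\bigvee_{l \in D} \left[ a_l^\top x \le b_l \right], \qquad \underline{x} \le x \le \overline{x}

% Big-M formulation: one binary per disjunct, no copies of x
a_l^\top x \le b_l + M_l (1 - \lambda_l) \quad \forall l \in D, \qquad
\sum_{l \in D} \lambda_l = 1, \quad \lambda \in \{0,1\}^{|D|}

% Convex hull (extended) formulation: one disaggregated copy \nu^l of x per disjunct
x = \sum_{l \in D} \nu^l, \qquad
a_l^\top \nu^l \le b_l \lambda_l, \quad
\underline{x}\,\lambda_l \le \nu^l \le \overline{x}\,\lambda_l \quad \forall l \in D, \qquad
\sum_{l \in D} \lambda_l = 1, \quad \lambda \in \{0,1\}^{|D|}
```

The big-M version stays small but typically has a weak continuous relaxation. The convex hull version has the tightest relaxation but adds $|D|$ copies of $x$. This size-versus-strength trade-off is the gap the intermediate formulations are meant to fill.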

Uncovering Instabilities in Variational-Quantum Deep Q-Networks

Feb 10, 2022

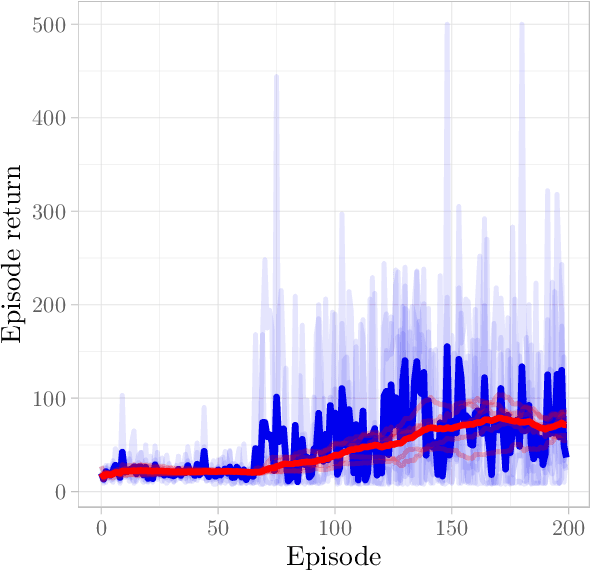

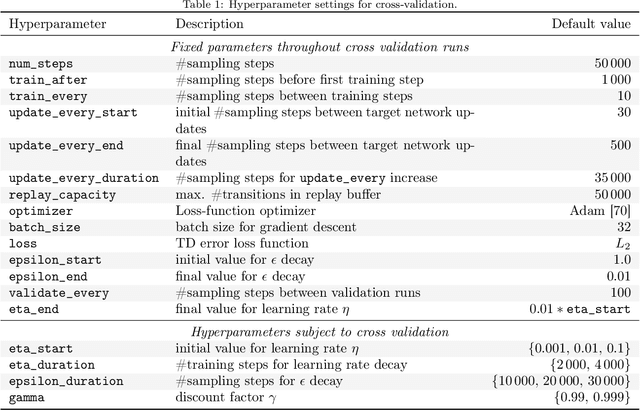

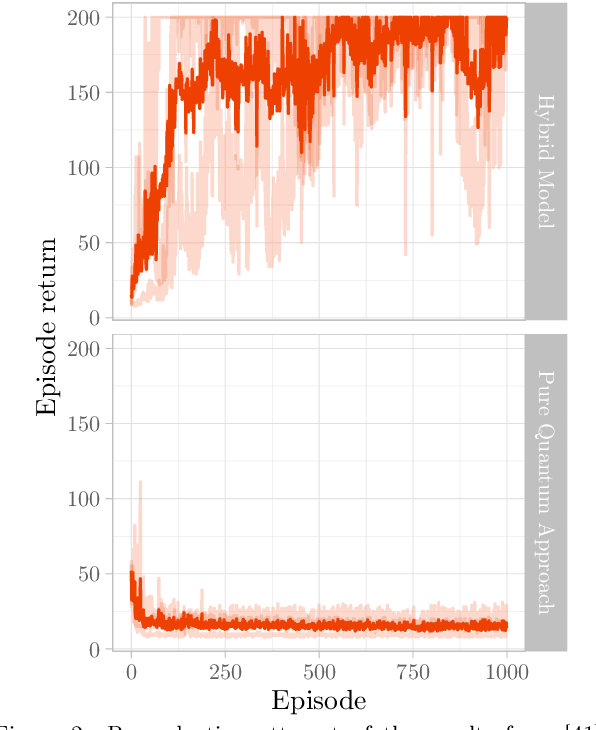

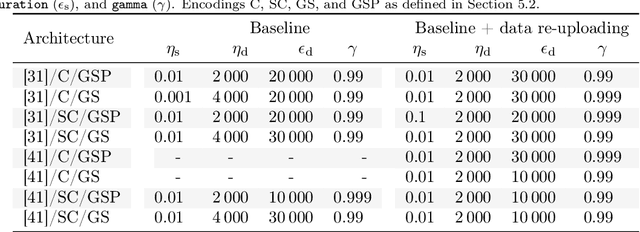

Deep Reinforcement Learning (RL) has considerably advanced over the past decade. At the same time, state-of-the-art RL algorithms require a large computational budget in terms of training time to converge. Recent work has started to approach this problem through the lens of quantum computing, which promises theoretical speed-ups for several traditionally hard tasks. In this work, we examine a class of hybrid quantum-classical RL algorithms that we collectively refer to as variational quantum deep Q-networks (VQ-DQN). We show that VQ-DQN approaches are subject to instabilities that cause the learned policy to diverge, study the extent to which this afflicts reproducibility of established results based on classical simulation, and perform systematic experiments to identify potential explanations for the observed instabilities. Additionally, and in contrast to most existing work on quantum reinforcement learning, we execute RL algorithms on an actual quantum processing unit (an IBM Quantum Device) and investigate differences in behaviour between simulated and physical quantum systems that suffer from implementation deficiencies. Our experiments show that, contrary to claims in the literature, it cannot be conclusively decided if known quantum approaches, even if simulated without physical imperfections, can provide an advantage as compared to classical approaches. Finally, we provide a robust, universal and well-tested implementation of VQ-DQN as a reproducible testbed for future experiments.

Machine-learning-enhanced time-of-flight mass spectrometry analysis

Oct 02, 2020

Mass spectrometry is a widespread approach for determining the constituents of a material. Atoms and molecules are removed from the material and collected, and subsequently, a critical step is to infer their correct identities based on patterns formed in their mass-to-charge ratios and relative isotopic abundances. However, this identification step still mainly relies on the individual user's expertise, making its standardization challenging and hindering efficient data processing. Here, we introduce an approach that leverages modern machine learning techniques to identify peak patterns in time-of-flight mass spectra within microseconds, outperforming human users without loss of accuracy. Our approach is cross-validated on mass spectra generated from different time-of-flight mass spectrometry (ToF-MS) techniques, offering the ToF-MS community an open-source, intelligent mass spectra analysis.

Mobile Wireless Rechargeable UAV Networks: Challenges and Solutions

Mar 24, 2022

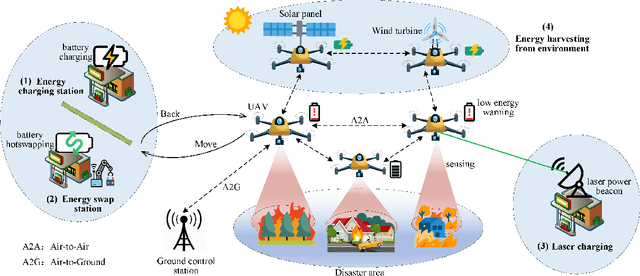

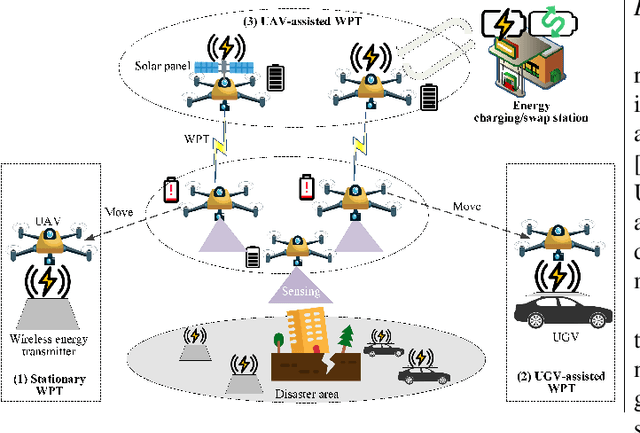

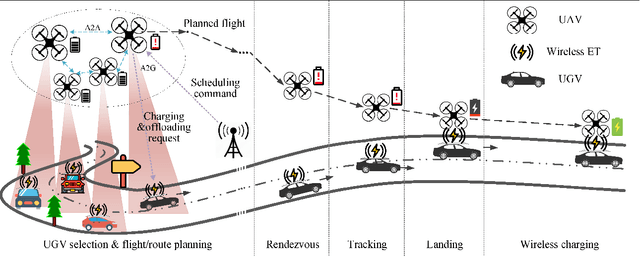

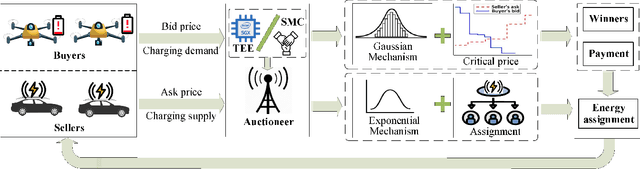

Unmanned aerial vehicles (UAVs) can help facilitate cost-effective and flexible service provisioning in future smart cities. Nevertheless, UAV applications generally suffer severe flight time limitations due to constrained onboard battery capacity, which necessitates frequent battery recharging or replacement when performing persistent missions. Utilizing wireless mobile chargers, such as vehicles with wireless charging equipment, for on-demand self-recharging has been envisioned as a promising solution to address this issue. In this article, we present a comprehensive study of vehicle-assisted wireless rechargeable UAV networks (VWUNs) to promote on-demand, secure, and efficient UAV recharging services. Specifically, we first discuss the opportunities and challenges of deploying VWUNs and review state-of-the-art solutions in this field. We then propose a secure and privacy-preserving VWUN framework for UAVs and ground vehicles based on differential privacy (DP). Within this framework, an online double auction mechanism is developed for optimal charging scheduling, and a two-phase DP algorithm is devised to preserve the sensitive bidding and energy trading information of participants. Experimental results demonstrate that the proposed framework can effectively enhance charging efficiency and security. Finally, we outline promising directions for future research in this emerging field.

* Accepted by IEEE Communications Magazine

A windowed correlation based feature selection method to improve time series prediction of dengue fever cases

Apr 21, 2021

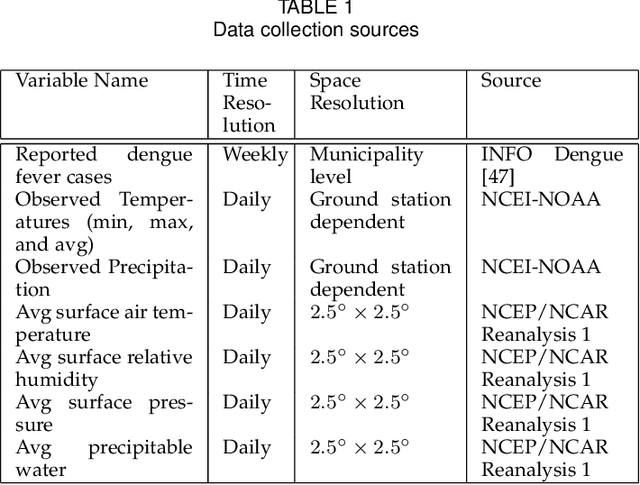

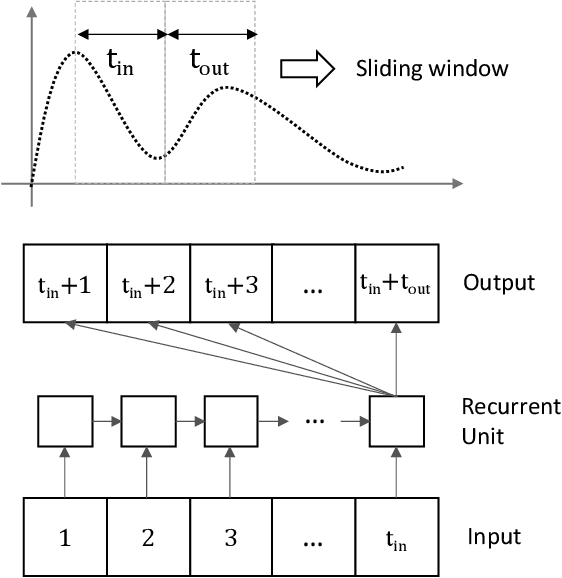

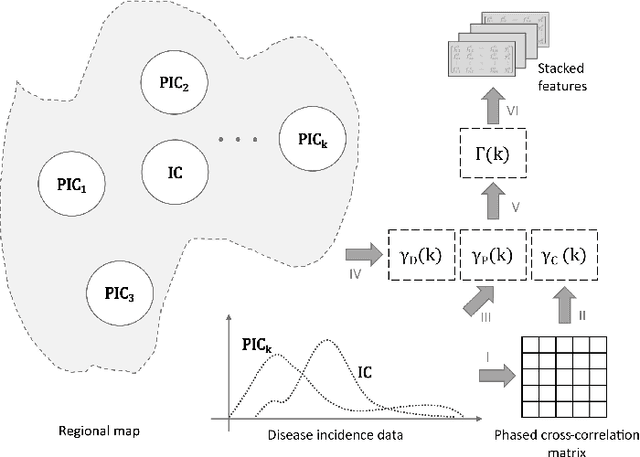

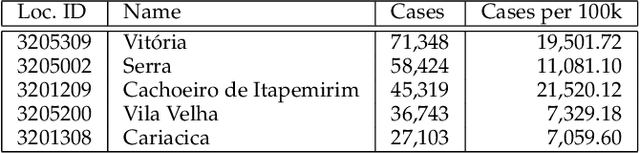

The performance of data-driven prediction models depends on the availability of data samples for model training. A model that learns about dengue fever incidence in a population uses historical data from that corresponding location. Poor performance in prediction can result in places with inadequate data. This work aims to enhance temporally limited dengue case data by methodological addition of epidemically relevant data from nearby locations as predictors (features). A novel framework is presented for windowing incidence data and computing time-shifted correlation-based metrics to quantify feature relevance. The framework ranks incidence data of adjacent locations around a target location by combining the correlation metric with two other metrics: spatial distance and local prevalence. Recurrent neural network-based prediction models achieve up to 33.6% accuracy improvement on average using the proposed method compared to using training data from the target location only. These models achieved mean absolute error (MAE) values as low as 0.128 on [0,1] normalized incidence data for a municipality with the highest dengue prevalence in Brazil's Espirito Santo. When predicting cases aggregated over geographical ecoregions, the models achieved accuracy improvements up to 16.5%, using only 6.5% of incidence data from ranked feature sets. The paper also includes two techniques for windowing time series data: fixed-sized windows and outbreak detection windows. Both of these techniques perform comparably, while the window detection method uses less data for computations. The framework presented in this paper is application-independent, and it could improve the performances of prediction models where data from spatially adjacent locations are available.
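The time-shifted, windowed correlation idea in this abstract can be sketched in a few lines of plain Python. This is only a minimal illustration under stated assumptions, not the authors' framework: the function names (`pearson`, `best_lagged_correlation`, `windowed_relevance`), the use of fixed-size non-overlapping windows, and averaging the per-window scores are all hypothetical choices. The spatial-distance and local-prevalence metrics mentioned in the abstract are not modeled.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / sqrt(vx * vy)


def best_lagged_correlation(target, neighbor, max_lag):
    """Return (lag, r) for the lag in 0..max_lag with the highest correlation.

    A lag of k pairs the neighbor's value at time t - k with the target's
    value at time t, i.e. the neighbor leads the target by k steps.
    Assumes max_lag is small enough that at least two points remain.
    """
    best_lag, best_r = 0, -1.0
    for lag in range(max_lag + 1):
        r = pearson(target[lag:], neighbor[:len(neighbor) - lag])
        if r > best_r:
            best_lag, best_r = lag, r
    return best_lag, best_r


def windowed_relevance(target, neighbor, window, max_lag):
    """Average the best lagged correlation over consecutive fixed-size windows."""
    scores = []
    for start in range(0, len(target) - window + 1, window):
        t = target[start:start + window]
        nb = neighbor[start:start + window]
        scores.append(best_lagged_correlation(t, nb, max_lag)[1])
    if not scores:
        raise ValueError("series is shorter than one window")
    return sum(scores) / len(scores)
```

A candidate location whose incidence curve leads the target's by a few time steps would score highly here, and ranking candidates by such a score is one plausible way to pick predictor series.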

Feature visualization for convolutional neural network models trained on neuroimaging data

Mar 24, 2022

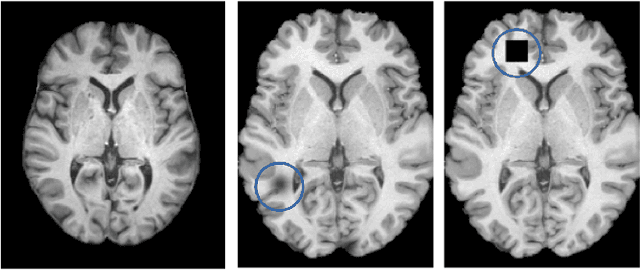

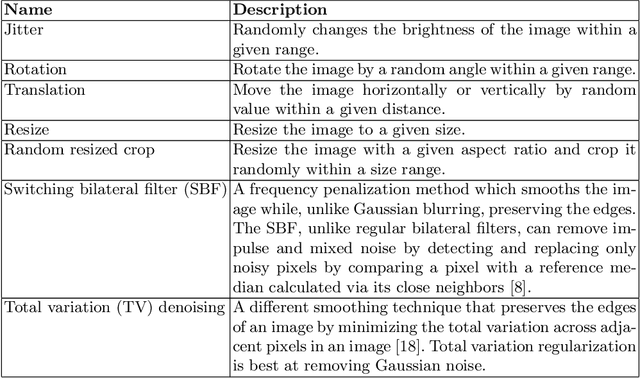

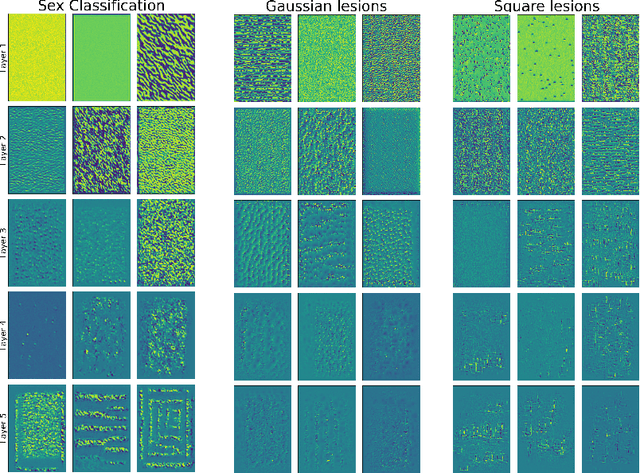

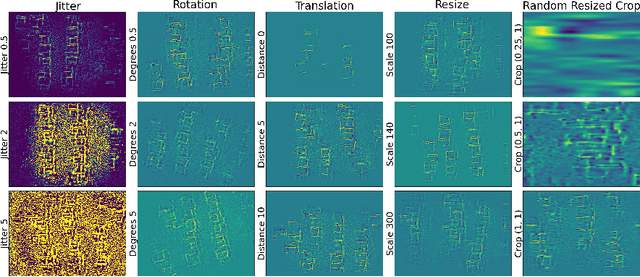

A major prerequisite for the application of machine learning models in clinical decision making is trust and interpretability. Current explainability studies in the neuroimaging community have mostly focused on explaining individual decisions of trained models, e.g. obtained by a convolutional neural network (CNN). Using attribution methods such as layer-wise relevance propagation or SHAP, heatmaps can be created that highlight which regions of an input are more relevant for the decision than others. While this allows the detection of potential data set biases and can be used as a guide for a human expert, it does not allow an understanding of the underlying principles the model has learned. In this study, we instead show, to the best of our knowledge for the first time, results using feature visualization of neuroimaging CNNs. In particular, we have trained CNNs for different tasks including sex classification and artificial lesion classification based on structural magnetic resonance imaging (MRI) data. We have then iteratively generated images that maximally activate specific neurons, in order to visualize the patterns they respond to. To improve the visualizations, we compared several regularization strategies. The resulting images reveal the learned concepts of the artificial lesions, including their shapes, but remain hard to interpret for abstract features in the sex classification task.
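The step of "iteratively generating images that maximally activate specific neurons" is commonly implemented as gradient ascent on the input. The toy sketch below shows only that loop, under strong assumptions: the "neuron" is a single linear unit rather than a CNN neuron, the regularizer is a plain L2 penalty (the abstract does not say which regularization strategies were compared), and the names `activation` and `maximize_activation` are hypothetical.

```python
def activation(weights, x):
    """Toy 'neuron': a linear unit standing in for a CNN neuron (assumption)."""
    return sum(w * xi for w, xi in zip(weights, x))


def maximize_activation(weights, steps=200, lr=0.1, l2=0.5):
    """Gradient ascent on the input x of the objective activation(x) - l2 * ||x||^2.

    The L2 penalty keeps the input bounded; without it a linear unit's
    activation could be increased without limit.
    """
    x = [0.0] * len(weights)
    for _ in range(steps):
        # Gradient of w.x - l2 * ||x||^2 with respect to x is w - 2 * l2 * x.
        grad = [w - 2.0 * l2 * xi for w, xi in zip(weights, x)]
        x = [xi + lr * g for xi, g in zip(x, grad)]
    return x
```

With the defaults, the optimum of this toy objective is `x = weights / (2 * l2)`, so the recovered input mirrors the pattern the unit responds to. For a real CNN the gradient would come from automatic differentiation rather than a closed form.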

Choice of technology and evaluation of the production capabilities of a 3d printer robot for creating elements of experimental equipment for the production of biofuel components

Feb 02, 2022

Elements of experimental equipment for the production of biofuel components must meet high reliability and safety requirements. At the same time, in the course of research on equipment for biofuel production, a varying range of equipment is regularly proposed and must be tested. Manufacturing elements of such equipment by traditional methods is expensive, inefficient and time-consuming, which slows scientific research. To address this, it is proposed to develop a robotic 3D printing complex that provides maximum flexibility in creating mock-ups and test samples of equipment for the production of biofuel components. The article discusses the experience of successfully creating equipment elements for the production of fuels using 3D printing. Next, the choice of a robotization scheme for a 3D printing installation is described and the choice of printing technology is substantiated. The article also presents the results of calculating the parameters of the 3D-printer robot and the results of calculating the similarity parameters for the implementation and evaluation of control algorithms. The results of a numerical experiment for calculating the strength characteristics of equipment elements manufactured using the selected 3D printing technology are presented.

Real-Time Cardiac Cine MRI with Residual Convolutional Recurrent Neural Network

Aug 20, 2020

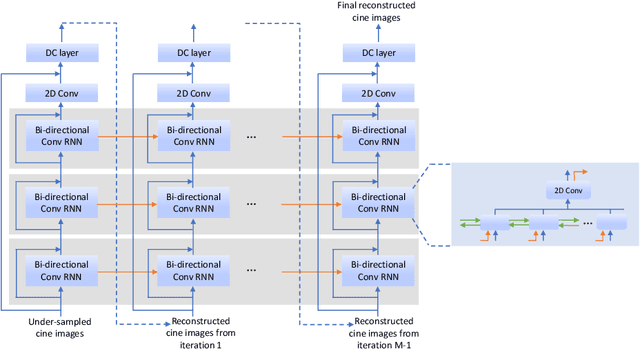

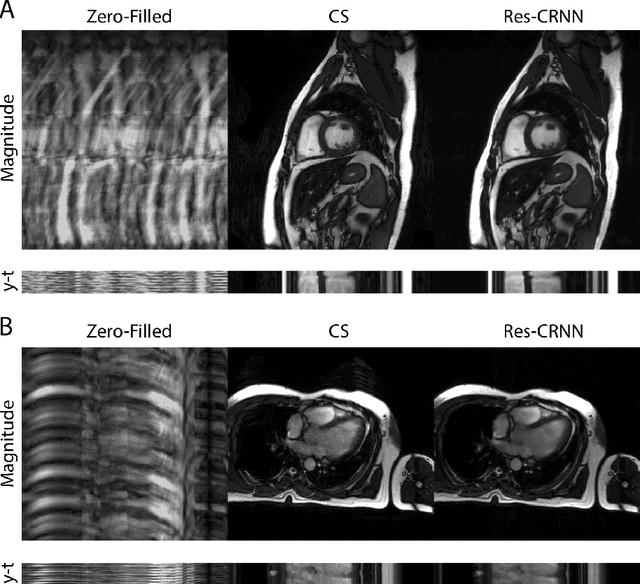

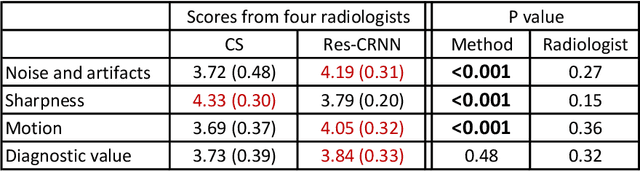

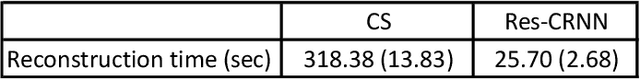

Real-time cardiac cine MRI does not require ECG gating in the data acquisition and is more useful for patients who cannot hold their breath or have abnormal heart rhythms. However, to achieve fast image acquisition, real-time cine commonly acquires highly undersampled data, which imposes a significant challenge for MRI image reconstruction. We propose a residual convolutional RNN for real-time cardiac cine reconstruction. To the best of our knowledge, this is the first work applying a deep learning approach to Cartesian real-time cardiac cine reconstruction. Based on the evaluation from radiologists, our deep learning model outperforms compressed sensing.

Demystifying the Neural Tangent Kernel from a Practical Perspective: Can it be trusted for Neural Architecture Search without training?

Mar 28, 2022

In Neural Architecture Search (NAS), reducing the cost of architecture evaluation remains one of the most crucial challenges. Among a plethora of efforts to bypass training of each candidate architecture to convergence for evaluation, the Neural Tangent Kernel (NTK) is emerging as a promising theoretical framework that can be utilized to estimate the performance of a neural architecture at initialization. In this work, we revisit several at-initialization metrics that can be derived from the NTK and reveal their key shortcomings. Then, through an empirical analysis of the time evolution of the NTK, we deduce that modern neural architectures exhibit highly non-linear characteristics, making the NTK-based metrics incapable of reliably estimating the performance of an architecture without some amount of training. To take such non-linear characteristics into account, we introduce Label-Gradient Alignment (LGA), a novel NTK-based metric whose inherent formulation allows it to capture the large amount of non-linear advantage present in modern neural architectures. With a minimal amount of training, LGA obtains a meaningful level of rank correlation with the post-training test accuracy of an architecture. Lastly, we demonstrate that LGA, complemented with a few epochs of training, successfully guides existing search algorithms to achieve competitive search performance with significantly less search cost. The code is available at: https://github.com/nutellamok/DemystifyingNTK.
