"Time": models, code, and papers

The First AI4TSP Competition: Learning to Solve Stochastic Routing Problems

Jan 25, 2022

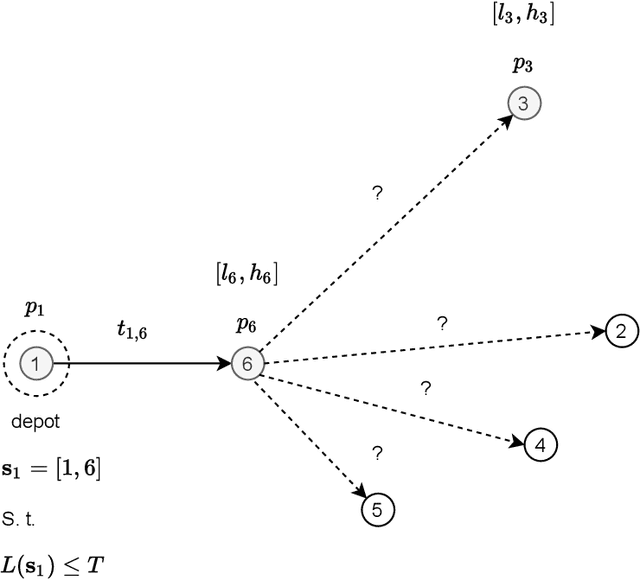

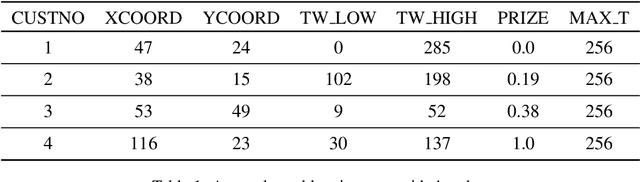

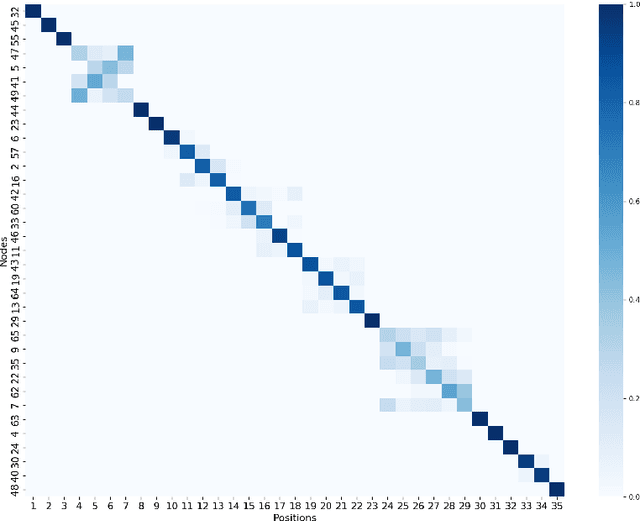

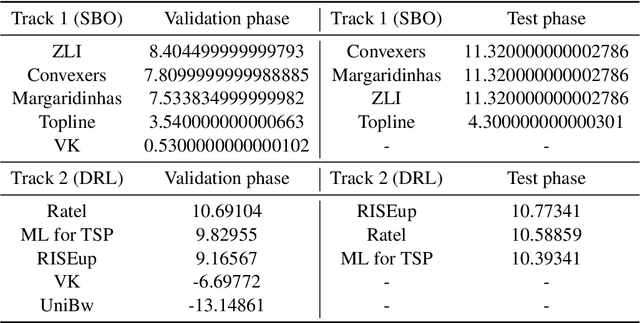

This paper reports on the first international competition on AI for the traveling salesman problem (TSP) at the International Joint Conference on Artificial Intelligence 2021 (IJCAI-21). The TSP is one of the classical combinatorial optimization problems, with many variants inspired by real-world applications. This first competition asked the participants to develop algorithms to solve a time-dependent orienteering problem with stochastic weights and time windows (TD-OPSWTW). It focused on two types of learning approaches: surrogate-based optimization and deep reinforcement learning. In this paper, we describe the problem, the setup of the competition, and the winning methods, and give an overview of the results. The winning methods described in this work have advanced the state-of-the-art in using AI for stochastic routing problems. Overall, by organizing this competition we have introduced routing problems as an interesting problem setting for AI researchers. The simulator of the problem has been made open-source and can be used by other researchers as a benchmark for new AI methods.

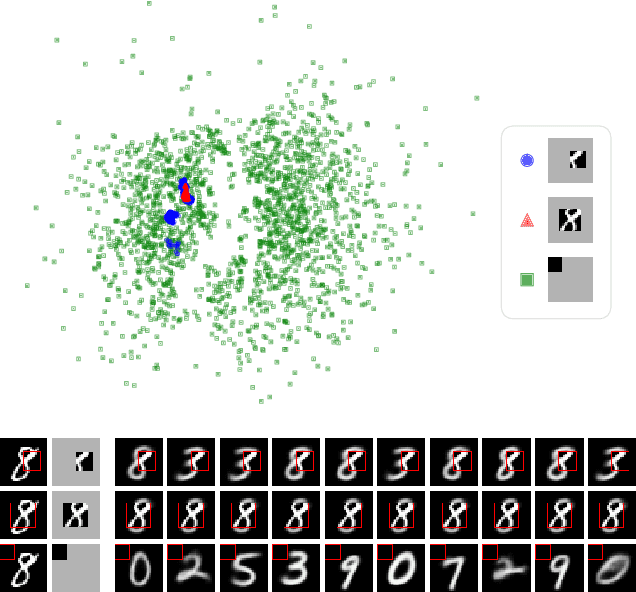

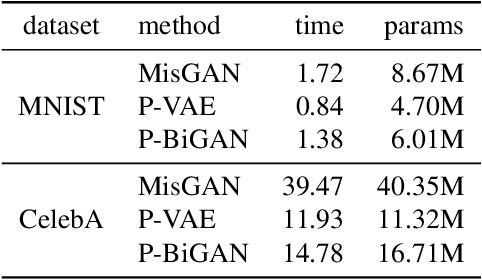

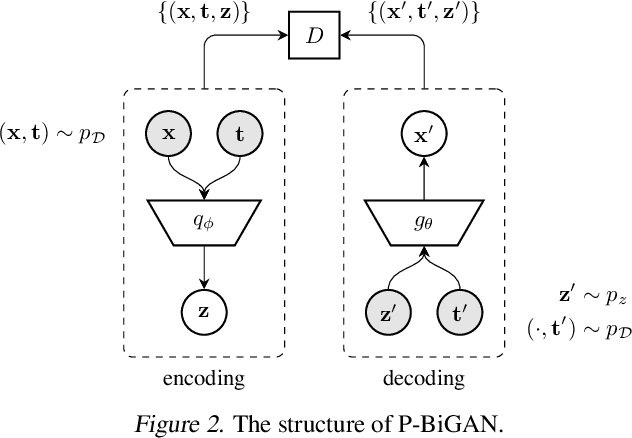

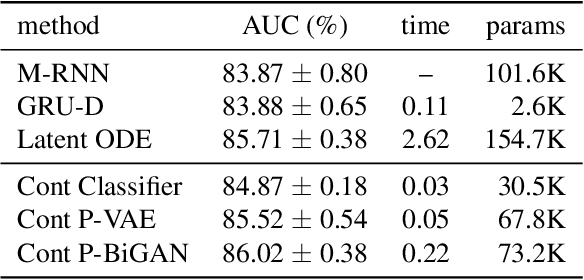

Learning from Irregularly-Sampled Time Series: A Missing Data Perspective

Aug 17, 2020

Irregularly-sampled time series occur in many domains including healthcare. They can be challenging to model because they do not naturally yield a fixed-dimensional representation as required by many standard machine learning models. In this paper, we consider irregular sampling from the perspective of missing data. We model observed irregularly-sampled time series data as a sequence of index-value pairs sampled from a continuous but unobserved function. We introduce an encoder-decoder framework for learning from such generic indexed sequences. We propose learning methods for this framework based on variational autoencoders and generative adversarial networks. For continuous irregularly-sampled time series, we introduce continuous convolutional layers that can efficiently interface with existing neural network architectures. Experiments show that our models are able to achieve competitive or better classification results on irregularly-sampled multivariate time series compared to recent RNN models while offering significantly faster training times.

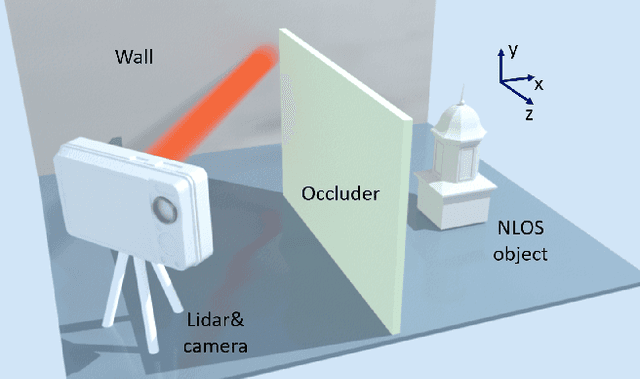

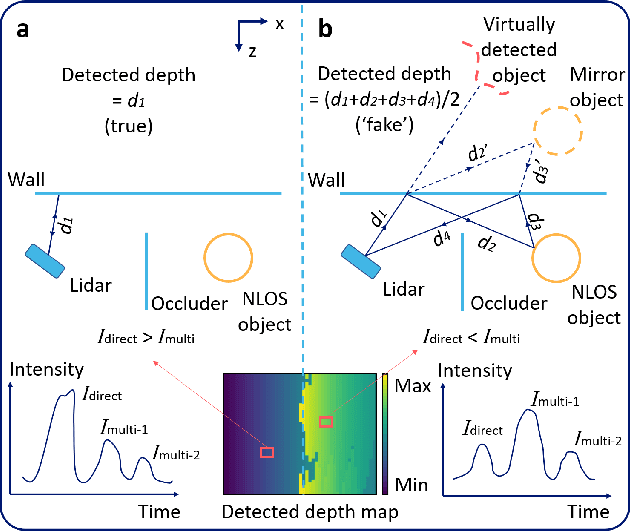

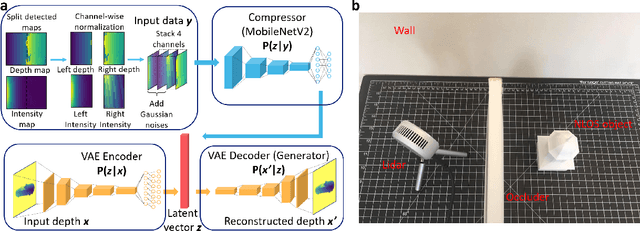

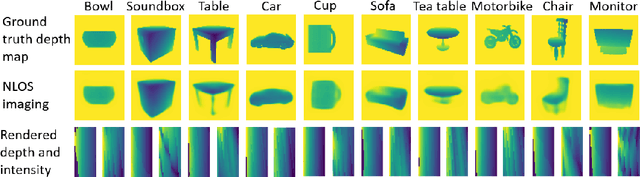

Real-time Non-line-of-sight Imaging with Two-step Deep Remapping

Jan 26, 2021

Conventional imaging only records the photons directly sent from the object to the detector, while non-line-of-sight (NLOS) imaging takes the indirect light into account. To explore the NLOS surroundings, most NLOS solutions employ a transient scanning process, followed by a back-projection based algorithm to reconstruct the NLOS scenes. However, the transient detection requires sophisticated apparatus, with long scanning time and low robustness to ambient conditions, and the reconstruction algorithms typically take tens of minutes with high demand on memory and computational resources. Here we propose a new NLOS solution to address the above defects, with innovations in both detection equipment and reconstruction algorithm. We apply inexpensive commercial Lidar for detection, with much higher scanning speed and better compatibility with real-world imaging tasks. Our reconstruction framework is deep learning based, consisting of a variational autoencoder and a compression neural network. The generative feature and the two-step reconstruction strategy of the framework guarantee high fidelity of NLOS imaging. The overall detection and reconstruction process allows for real-time responses, with state-of-the-art reconstruction performance. We have experimentally tested the proposed solution on both a synthetic dataset and real objects, and further demonstrated our method to be applicable for full-color NLOS imaging.

Interactive Visualization of Protein RINs using NetworKit in the Cloud

Mar 02, 2022

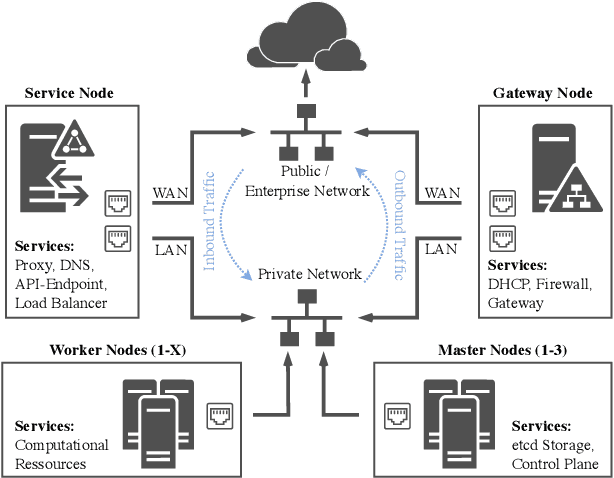

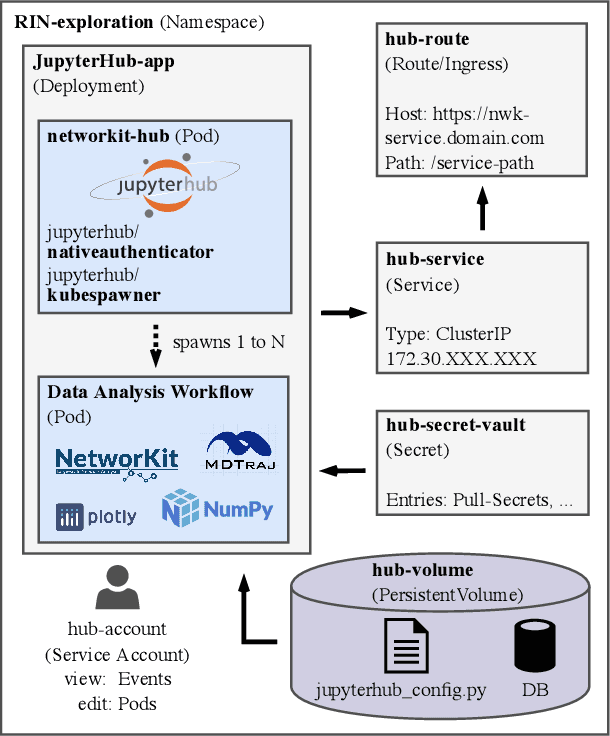

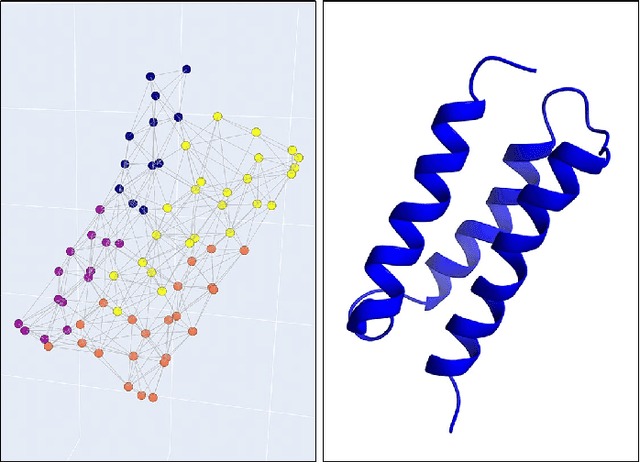

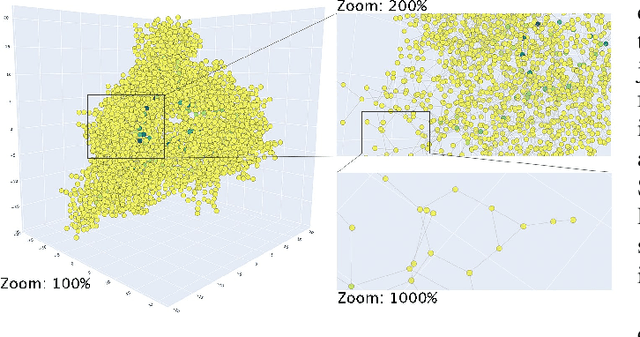

Network analysis has been applied in diverse application domains. In this paper, we consider an example from protein dynamics, specifically residue interaction networks (RINs). In this context, we use NetworKit -- an established package for network analysis -- to build a cloud-based environment that enables domain scientists to run their visualization and analysis workflows on large compute servers, without requiring extensive programming and/or system administration knowledge. To demonstrate the versatility of this approach, we use it to build a custom Jupyter-based widget for RIN visualization. In contrast to existing RIN visualization approaches, our widget can easily be customized through simple modifications of Python code, while both supporting a good feature set and providing near real-time speed. It is also easily integrated into analysis pipelines (e.g., that use Python to feed RIN data into downstream machine learning tasks).

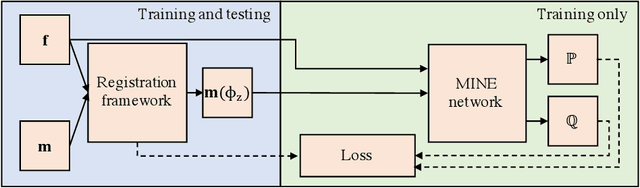

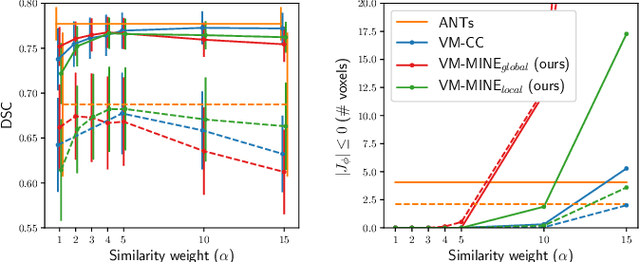

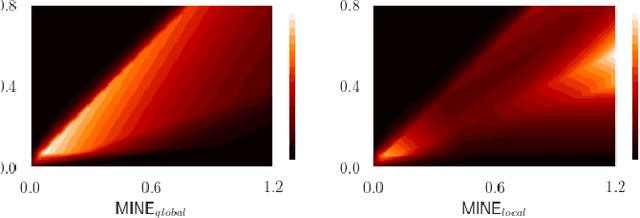

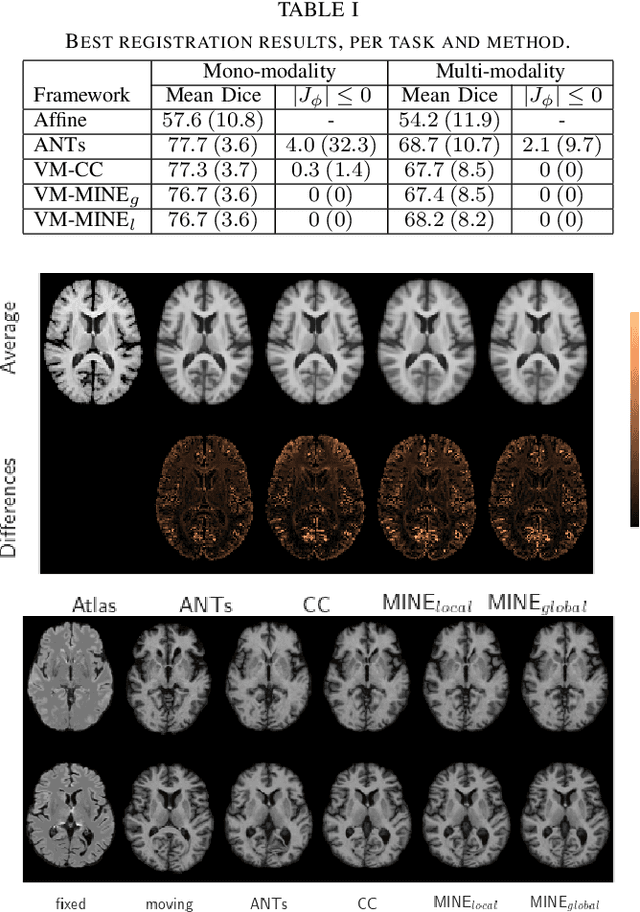

Mutual information neural estimation for unsupervised multi-modal registration of brain images

Jan 25, 2022

Many applications in image-guided surgery and therapy require fast and reliable non-linear, multi-modal image registration. Recently proposed unsupervised deep learning-based registration methods have demonstrated superior performance compared to iterative methods in just a fraction of the time. Most of the learning-based methods have focused on mono-modal image registration. The extension to multi-modal registration depends on the use of an appropriate similarity function, such as the mutual information (MI). We propose guiding the training of a deep learning-based registration method with MI estimation between an image-pair in an end-to-end trainable network. Our results show that a small, 2-layer network produces competitive results in both mono- and multi-modal registration, with sub-second run-times. Comparisons to both iterative and deep learning-based methods show that our MI-based method produces topologically and qualitatively superior results with an extremely low rate of non-diffeomorphic transformations. Real-time clinical application will benefit from a better visual matching of anatomical structures and fewer registration failures/outliers.
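To make the role of MI as a multi-modal similarity function concrete, here is a minimal sketch of the classical histogram-based (plug-in) MI estimate between two images. It is not the neural estimator described in the abstract; the function name `mutual_information`, the bin count, and the synthetic test images are assumptions chosen for illustration only.

```python
import numpy as np

def mutual_information(a, b, bins=32):
    """Plug-in MI estimate (in nats) from the joint intensity histogram of two images."""
    joint, _, _ = np.histogram2d(a.ravel(), b.ravel(), bins=bins)
    pxy = joint / joint.sum()                # joint intensity distribution
    px = pxy.sum(axis=1, keepdims=True)      # marginal of image a, shape (bins, 1)
    py = pxy.sum(axis=0, keepdims=True)      # marginal of image b, shape (1, bins)
    nz = pxy > 0                             # skip empty cells to avoid log(0)
    return float(np.sum(pxy[nz] * np.log(pxy[nz] / (px @ py)[nz])))

# Hypothetical example: an inverted-contrast copy stands in for a second modality.
rng = np.random.default_rng(0)
fixed = rng.random((64, 64))
other_modality = 1.0 - fixed                 # same structure, different intensities
unrelated = rng.random((64, 64))             # no shared structure

mi_aligned = mutual_information(fixed, other_modality)
mi_unrelated = mutual_information(fixed, unrelated)
```

Because MI depends only on the statistical dependence between intensities, the inverted copy scores much higher than the unrelated image even though their raw intensities differ, which is why MI suits multi-modal registration where intensity-difference measures fail.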

Practical Evaluation of Adversarial Robustness via Adaptive Auto Attack

Mar 28, 2022

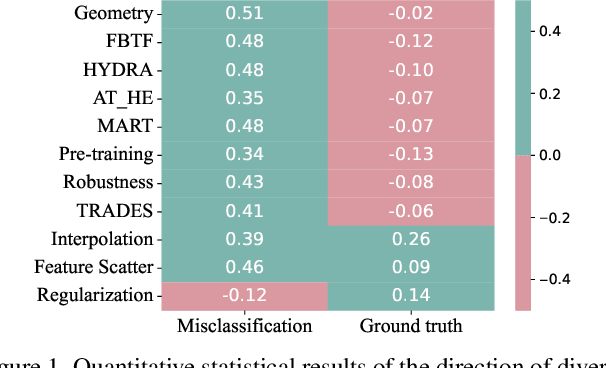

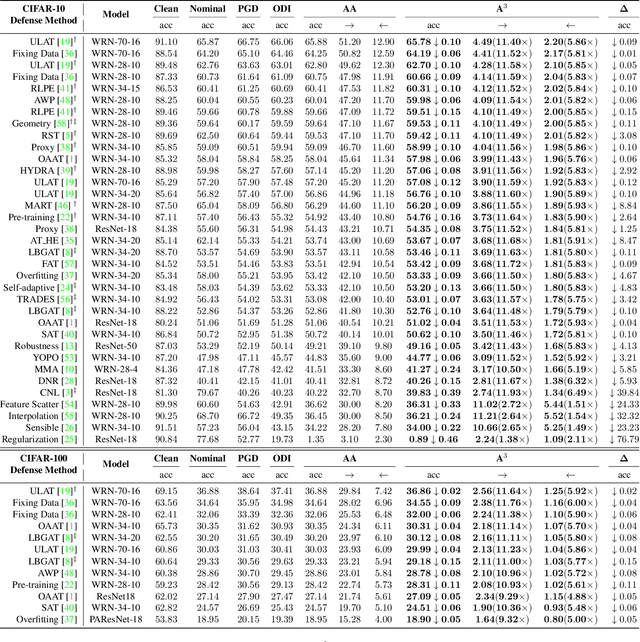

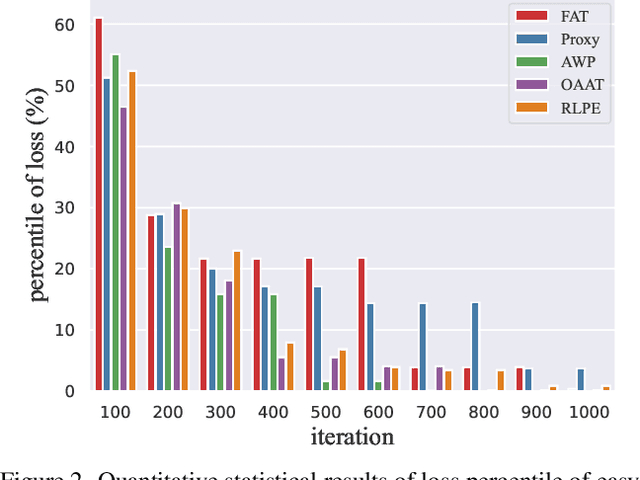

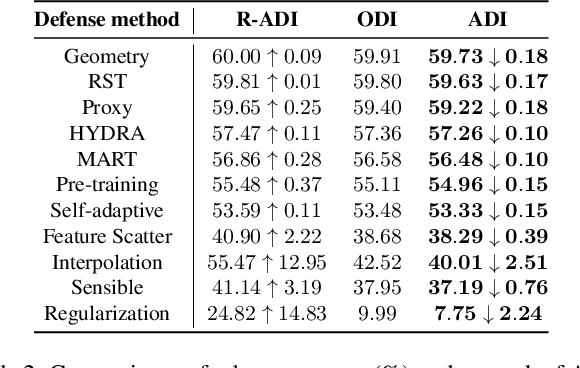

Defense models against adversarial attacks have grown significantly, but the lack of practical evaluation methods has hindered progress. Evaluation can be defined as looking for defense models' lower bound of robustness given a budget number of iterations and a test dataset. A practical evaluation method should be convenient (i.e., parameter-free), efficient (i.e., fewer iterations) and reliable (i.e., approaching the lower bound of robustness). Towards this target, we propose a parameter-free Adaptive Auto Attack (A$^3$) evaluation method which addresses the efficiency and reliability in a test-time-training fashion. Specifically, by observing that adversarial examples to a specific defense model follow some regularities in their starting points, we design an Adaptive Direction Initialization strategy to speed up the evaluation. Furthermore, to approach the lower bound of robustness under the budget number of iterations, we propose an online statistics-based discarding strategy that automatically identifies and abandons hard-to-attack images. Extensive experiments demonstrate the effectiveness of our A$^3$. Particularly, we apply A$^3$ to nearly 50 widely-used defense models. By consuming far fewer iterations than existing methods, i.e., $1/10$ on average (10$\times$ speed up), we achieve lower robust accuracy in all cases. Notably, we won first place out of 1681 teams in the CVPR 2021 White-box Adversarial Attacks on Defense Models competition with this method. Code is available at: https://github.com/liuye6666/adaptive_auto_attack

Multi-Objective System-by-Design for mm-Wave Automotive Radar Antennas

Mar 02, 2022

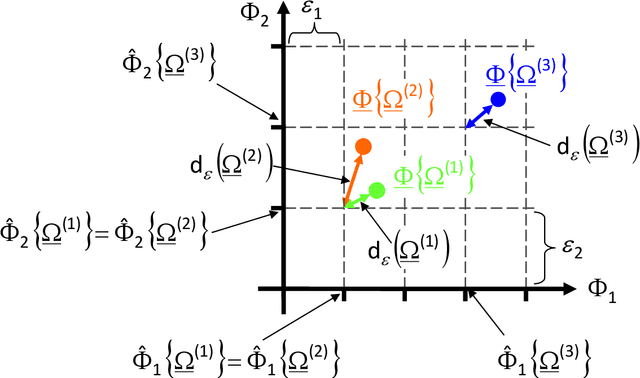

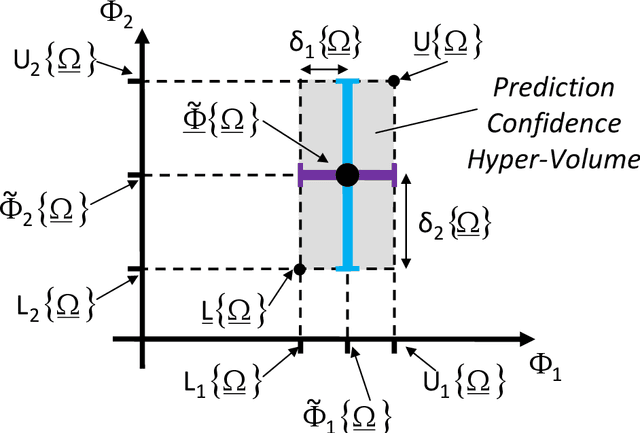

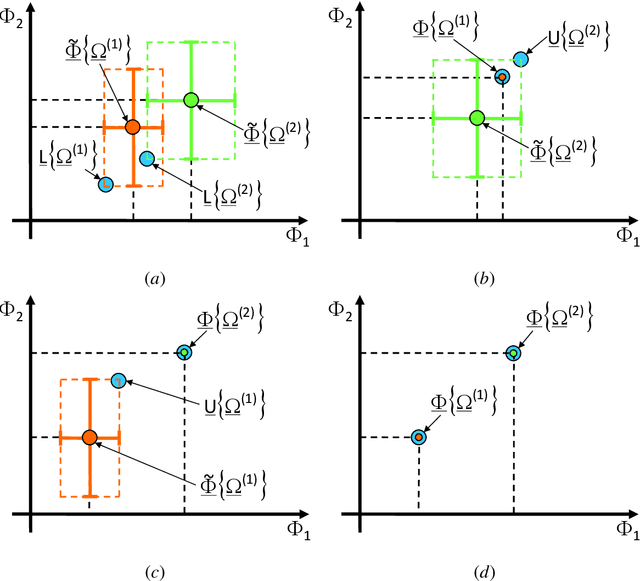

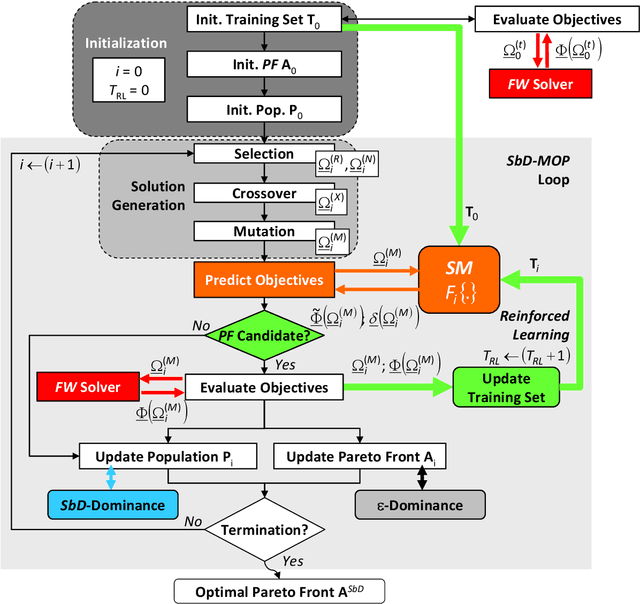

The computationally-efficient solution of multi-objective optimization problems (MOPs) arising in the design of modern electromagnetic (EM) microwave devices is addressed. Towards this end, a novel System-by-Design (SbD) method is developed to effectively explore the solution space and to provide the decision maker with a set of optimal trade-off solutions minimizing multiple and (generally) contrasting objectives. The proposed MO-SbD method achieves high computational efficiency, with a remarkable time saving with respect to a competitive state-of-the-art MOP solution strategy, thanks to the "smart" integration of evolutionary-inspired concepts and operators with artificial intelligence (AI) and machine learning (ML) techniques. Representative numerical results are reported to provide interested users with useful insights and guidelines on the proposed optimization method as well as to assess its effectiveness in designing mm-wave automotive radar antennas.

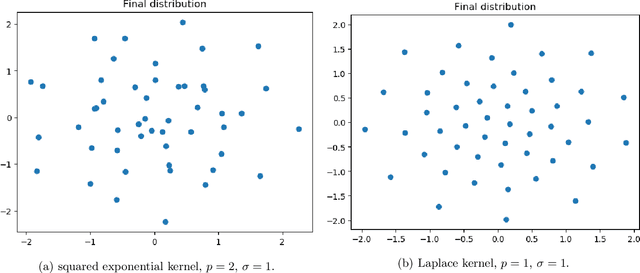

Stein Variational Gradient Descent: many-particle and long-time asymptotics

Feb 25, 2021

Stein variational gradient descent (SVGD) refers to a class of methods for Bayesian inference based on interacting particle systems. In this paper, we consider the originally proposed deterministic dynamics as well as a stochastic variant, each of which represents one of the two main paradigms in Bayesian computational statistics: variational inference and Markov chain Monte Carlo. As it turns out, these are tightly linked through a correspondence between gradient flow structures and large-deviation principles rooted in statistical physics. To expose this relationship, we develop the cotangent space construction for the Stein geometry, prove its basic properties, and determine the large-deviation functional governing the many-particle limit for the empirical measure. Moreover, we identify the Stein-Fisher information (or kernelised Stein discrepancy) as its leading order contribution in the long-time and many-particle regime in the sense of $\Gamma$-convergence, shedding some light on the finite-particle properties of SVGD. Finally, we establish a comparison principle between the Stein-Fisher information and RKHS-norms that might be of independent interest.
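For readers unfamiliar with the interacting particle system behind SVGD, the following is a minimal sketch of the standard deterministic update: each particle is pulled towards high-density regions by kernel-weighted score terms and pushed away from its neighbours by the kernel gradient. The RBF kernel, fixed bandwidth `h`, step size, particle count, and the one-dimensional standard Gaussian target are all assumptions for illustration and are not taken from the paper.

```python
import numpy as np

def svgd_step(x, grad_log_p, step=0.1, h=1.0):
    """One deterministic SVGD update for particles x of shape (n, d), RBF kernel."""
    n = x.shape[0]
    diff = x[:, None, :] - x[None, :, :]                       # diff[i, j] = x_i - x_j
    k = np.exp(-np.sum(diff ** 2, axis=-1) / (2.0 * h ** 2))   # k[i, j] = k(x_i, x_j)
    attract = k @ grad_log_p(x)                                # sum_j k(x_j, x_i) * score(x_j)
    repulse = np.sum(k[:, :, None] * diff, axis=1) / h ** 2    # sum_j grad_{x_j} k(x_j, x_i)
    return x + step * (attract + repulse) / n

def run_svgd(n_particles=50, n_steps=1000, seed=0):
    """Transport particles from N(3, 1) towards a standard Gaussian target."""
    rng = np.random.default_rng(seed)
    x = rng.normal(loc=3.0, scale=1.0, size=(n_particles, 1))
    score = lambda z: -z                                       # grad log p for N(0, 1)
    for _ in range(n_steps):
        x = svgd_step(x, score)
    return x

particles = run_svgd()
```

The repulsive term is what keeps the particles from collapsing onto the mode, so the final empirical measure approximates the spread of the target rather than only its maximum.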

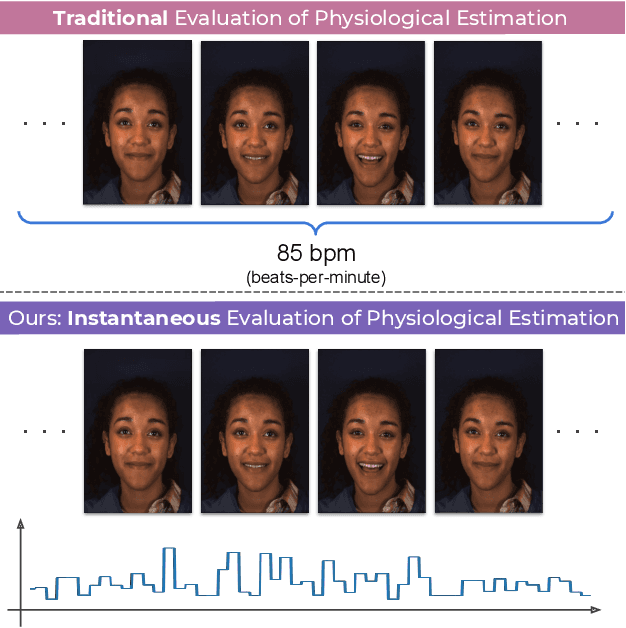

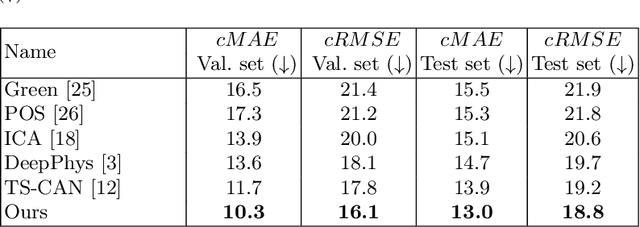

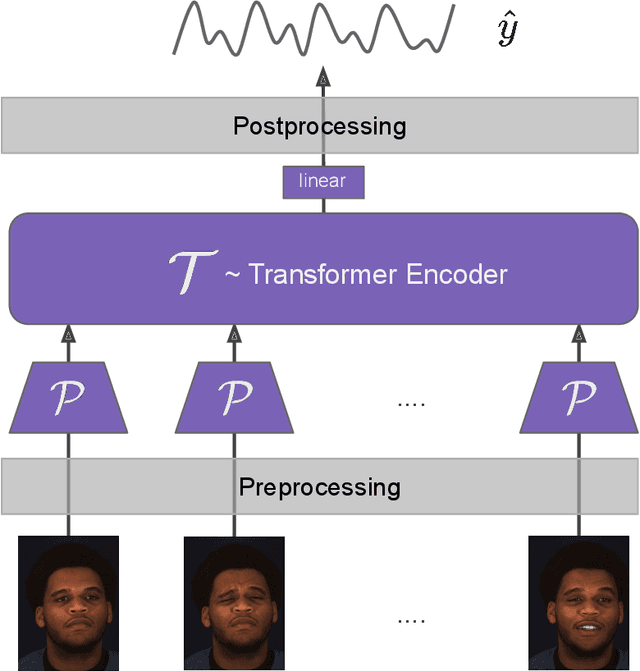

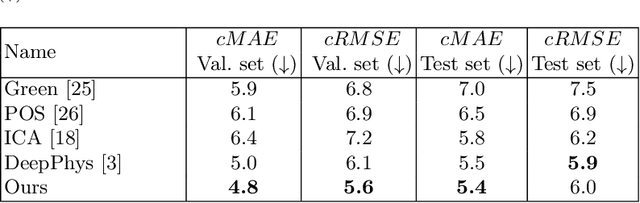

Instantaneous Physiological Estimation using Video Transformers

Feb 24, 2022

Video-based physiological signal estimation has been limited primarily to predicting episodic scores in windowed intervals. While these intermittent values are useful, they provide an incomplete picture of patients' physiological status and may lead to late detection of critical conditions. We propose a video Transformer for estimating instantaneous heart rate and respiration rate from face videos. Physiological signals are typically confounded by alignment errors in space and time. To overcome this, we formulated the loss in the frequency domain. We evaluated the method on the large scale Vision-for-Vitals (V4V) benchmark. It outperformed both shallow and deep learning based methods for instantaneous respiration rate estimation. In the case of heart-rate estimation, it achieved an instantaneous-MAE of 13.0 beats-per-minute.

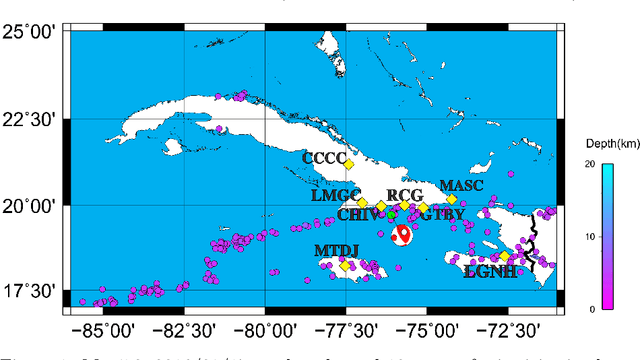

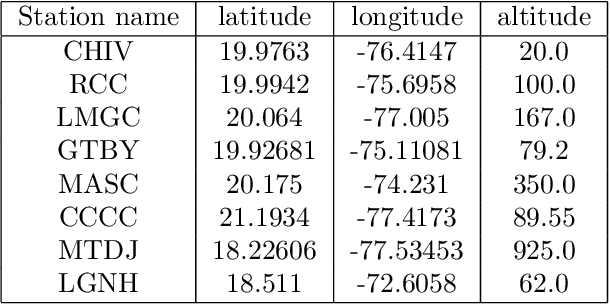

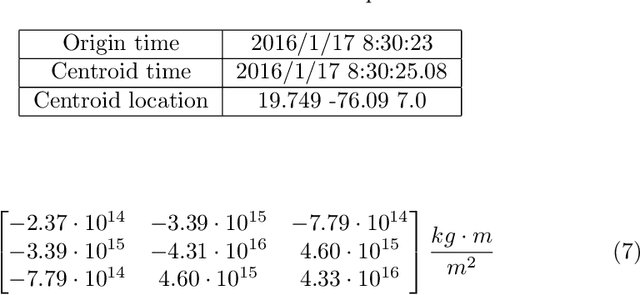

Elastic 3D Wavefield Simulation on budget GPUs using the GLSL shading language

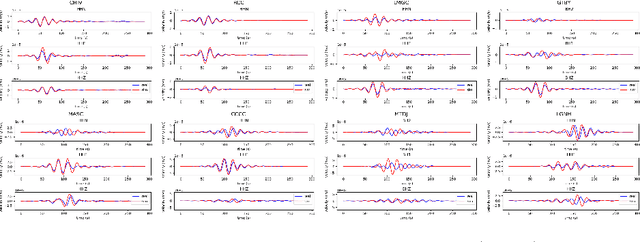

Dec 30, 2021

Forward wavefield simulation is an important step in Full Waveform Inversion systems. Fast simulations are instrumental in obtaining inversion results in reasonable time frames. Most research and software aims to shorten the forward simulation time by utilizing costly computer clusters composed of multiple CPUs and numerous high-end GPUs. This type of hardware has several disadvantages, such as high cost, complex programming models, and limited availability of resources. In this work, we present a finite-difference elastic 3D wavefield forward simulation that takes advantage of any modern low-end GPU by using the GLSL shading language. Some of the advantages of using GLSL are that it runs on any modern GPU, has a simplified computing and memory model, and provides state-of-the-art performance thanks to its well-optimized, vendor-developed drivers. We show that our GLSL implementation easily outperforms a multicore CPU implementation on a modern PC. We further benchmark our results using a real seismic event and show that our system produces accurate simulations in reasonable time.
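As a rough illustration of what a finite-difference forward simulation does at each time step, here is a deliberately simplified sketch: a 1D scalar (acoustic) wave equation on the CPU with NumPy, not the 3D elastic GLSL implementation the abstract describes. The function name `simulate_wave_1d`, the grid size, the Gaussian initial pulse, and the fixed (zero-displacement) boundaries are assumptions made for this example.

```python
import numpy as np

def simulate_wave_1d(n=201, n_steps=100, c=1.0, dx=1.0, dt=0.5, sigma=5.0):
    """Leapfrog finite-difference solution of u_tt = c^2 u_xx with fixed ends."""
    x = np.arange(n) * dx
    u_curr = np.exp(-((x - x[n // 2]) ** 2) / (2.0 * sigma ** 2))  # initial pulse
    u_prev = u_curr.copy()            # zero initial velocity (first-order start)
    r2 = (c * dt / dx) ** 2           # squared Courant number; needs r2 <= 1 for stability
    for _ in range(n_steps):
        u_next = np.zeros_like(u_curr)                 # end points stay at zero
        laplacian = u_curr[2:] - 2.0 * u_curr[1:-1] + u_curr[:-2]
        u_next[1:-1] = 2.0 * u_curr[1:-1] - u_prev[1:-1] + r2 * laplacian
        u_prev, u_curr = u_curr, u_next
    return x, u_curr

x, u = simulate_wave_1d()
```

Each grid point is updated only from its immediate neighbours at the two previous time levels, which is the property that makes stencil codes of this kind map naturally onto GPU shader invocations. With these settings the initial pulse splits into two pulses of roughly half the amplitude travelling in opposite directions.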
