"Time": models, code, and papers

Kartta Labs: Collaborative Time Travel

Oct 07, 2020

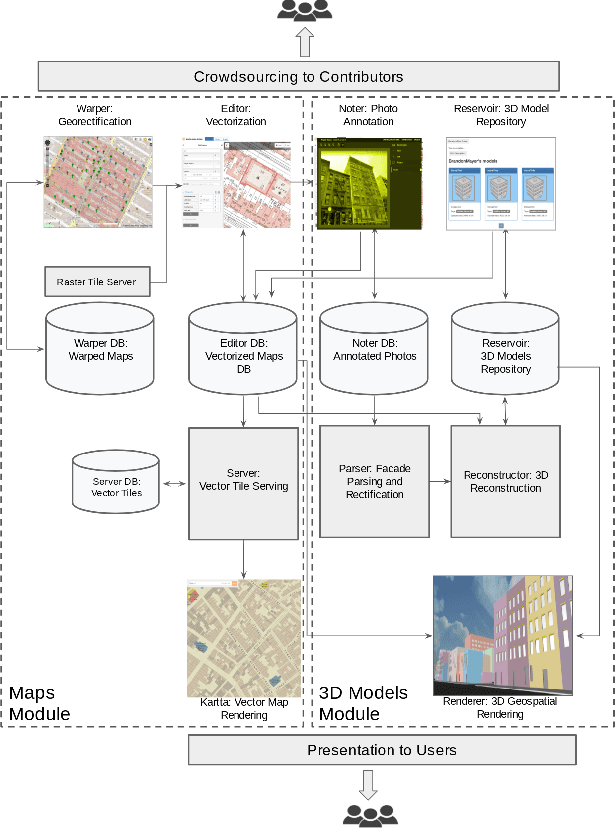

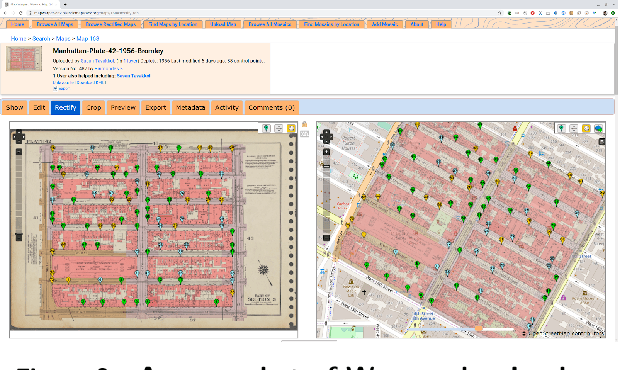

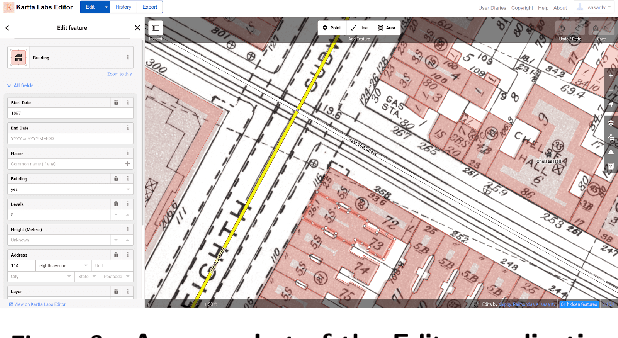

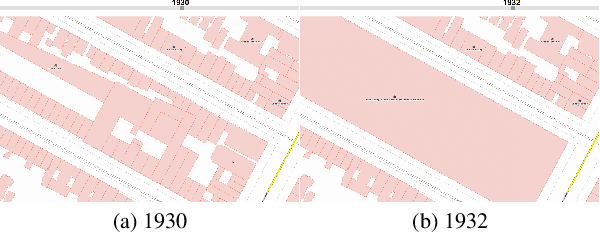

We introduce the modular and scalable design of Kartta Labs, an open source, open data system for virtually reconstructing cities from historical maps and photos. Kartta Labs relies on crowdsourcing and artificial intelligence and consists of two major modules: Maps and 3D models. Each module, in turn, consists of sub-modules that enable the system to reconstruct a city from historical maps and photos. The result is a spatiotemporal reference that can be used to integrate various collected data (curated, sensed, or crowdsourced) for research, education, and entertainment purposes. The system empowers users to experience collaborative time travel: they work together to reconstruct the past and experience it on an open source and open data platform.

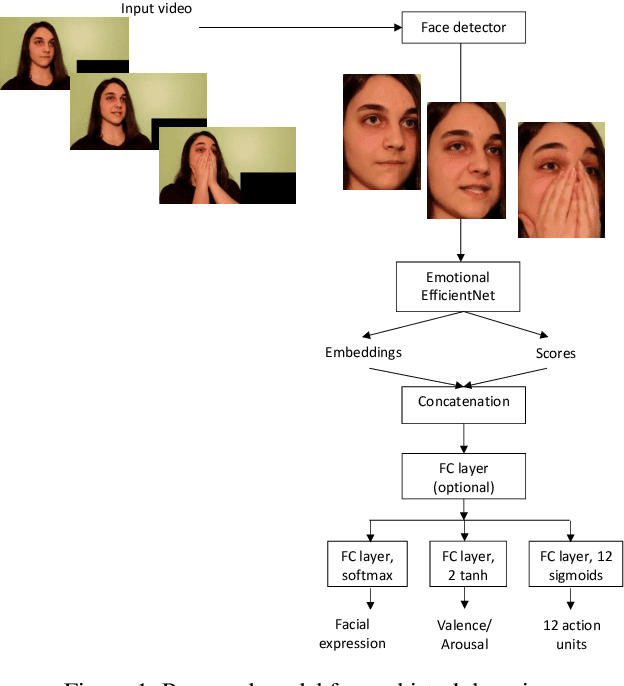

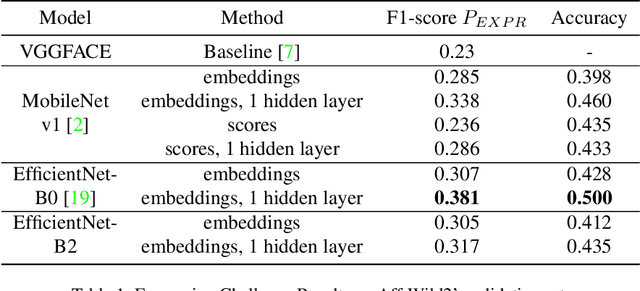

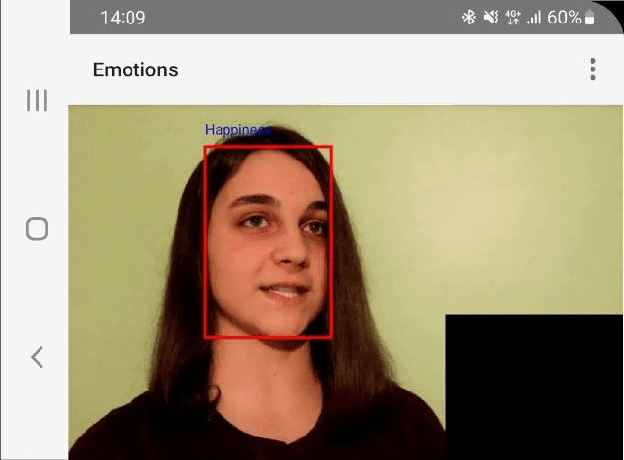

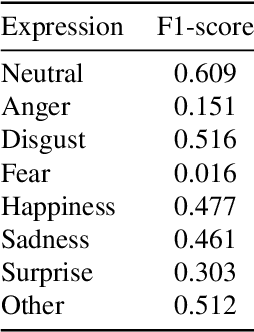

Frame-level Prediction of Facial Expressions, Valence, Arousal and Action Units for Mobile Devices

Mar 25, 2022

In this paper, we consider the problem of real-time video-based facial emotion analytics, namely, facial expression recognition, prediction of valence and arousal, and detection of action units. We propose a novel frame-level emotion recognition algorithm that extracts facial features with a single EfficientNet model pre-trained on AffectNet. As a result, our approach may be implemented even for video analytics on mobile devices. Experimental results for the large-scale Aff-Wild2 database from the third Affective Behavior Analysis in-the-wild (ABAW) Competition demonstrate that our simple model is significantly better than the VggFace baseline. In particular, our method is characterized by 0.15-0.2 higher performance measures for validation sets in uni-task Expression Classification, Valence-Arousal Estimation and Expression Classification. Due to its simplicity, our approach may be considered as a new baseline for all four sub-challenges.

National Radio Dynamic Zone Concept with Autonomous Aerial and Ground Spectrum Sensors

Mar 17, 2022

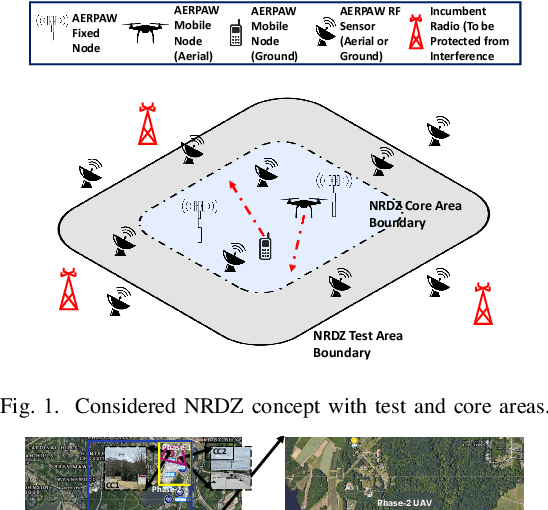

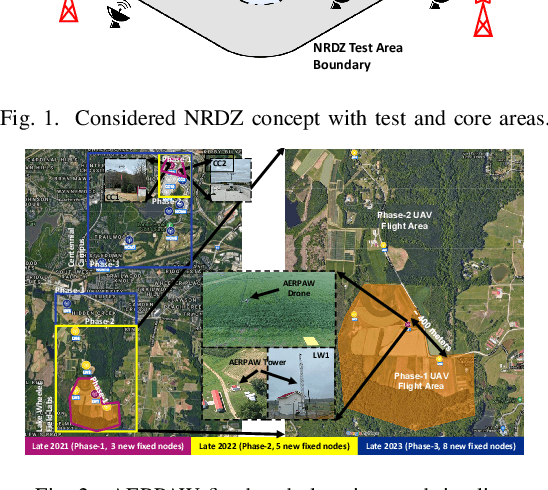

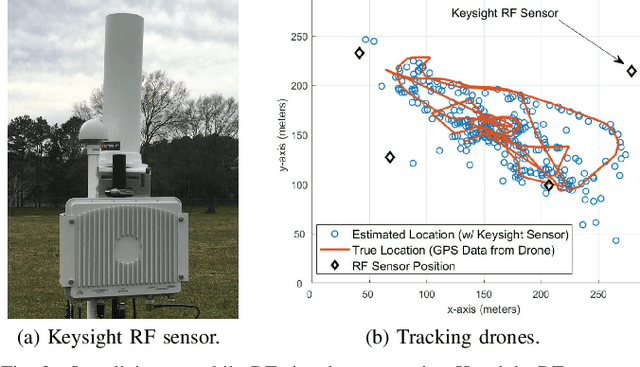

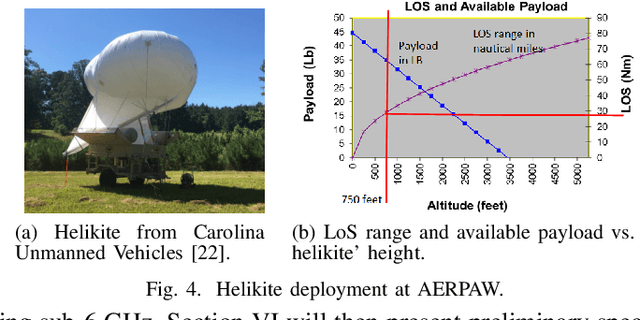

National radio dynamic zones (NRDZs) are intended to be geographically bounded areas within which controlled experiments can be carried out while protecting the nearby licensed users of the spectrum. An NRDZ will facilitate research and development of new spectrum technologies, waveforms, and protocols in the typical outdoor operational environments of such technologies. In this paper, we introduce and describe an NRDZ concept that relies on a combination of autonomous aerial and ground sensor nodes for spectrum sensing and radio environment monitoring (REM). We elaborate on key characteristics and features of an NRDZ that enable advanced wireless experimentation while coexisting with licensed users. Some preliminary results on out-of-zone leakage monitoring and real-time REMs, based on simulation and experimental evaluations, are also provided.

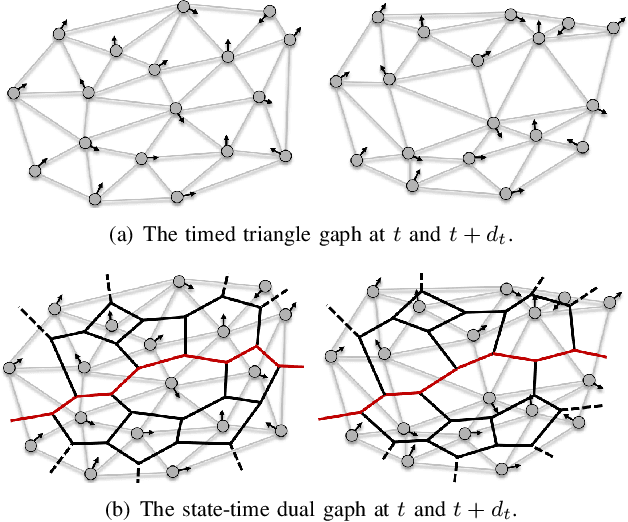

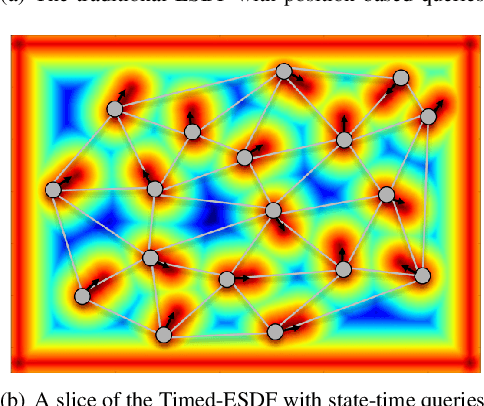

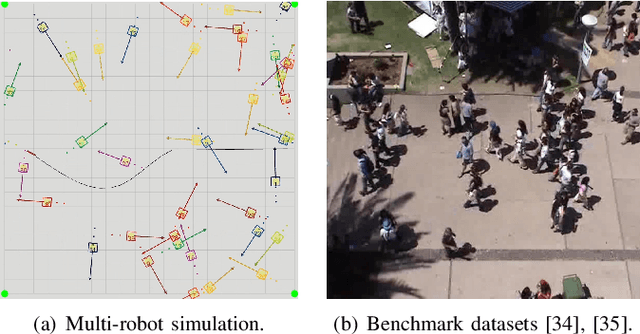

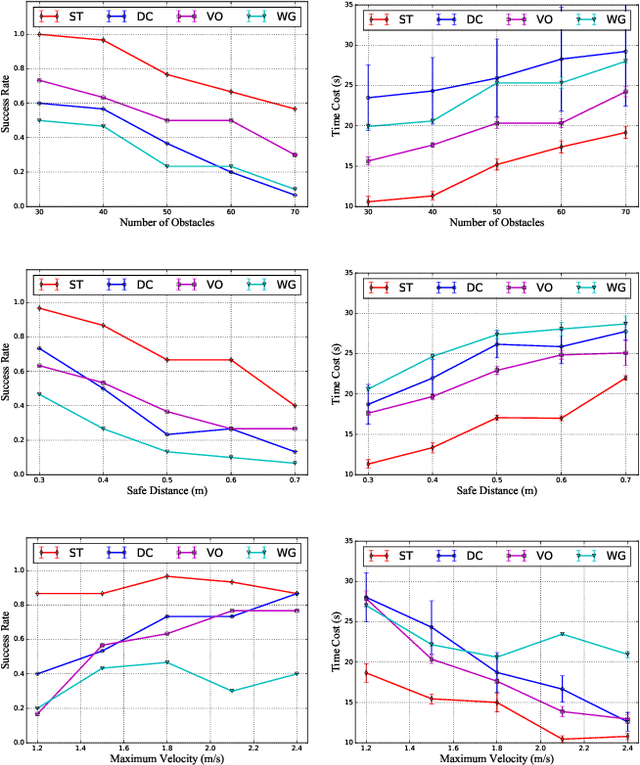

Online State-Time Trajectory Planning Using Timed-ESDF in Highly Dynamic Environments

Oct 29, 2020

Online state-time trajectory planning in highly dynamic environments remains an unsolved problem due to the unpredictable motions of moving obstacles and the curse of dimensionality of the state-time space. Existing state-time planners are typically based on randomized sampling or on path searching over a discretized state graph. The smoothness, path clearance, and planning efficiency of these planners are usually unsatisfactory. In this work, we propose a gradient-based planner over the state-time space for online trajectory generation in highly dynamic environments. To enable gradient-based optimization, we propose a Timed-ESDT that supports distance and gradient queries with state-time keys. Based on the Timed-ESDT, we also define a smoothness prior and an obstacle likelihood function that are compatible with the state-time space. The trajectory planning is then formulated as a maximum a posteriori (MAP) problem and solved by an efficient numerical optimizer. Moreover, to improve the optimality of the planner, we define a state-time graph and conduct path searching on it to find a better initialization for the optimizer. Integrating the graph search significantly improves the planning quality. Experimental results on simulated and benchmark datasets show that our planner can outperform state-of-the-art methods, demonstrating its significant advantages over traditional ones.

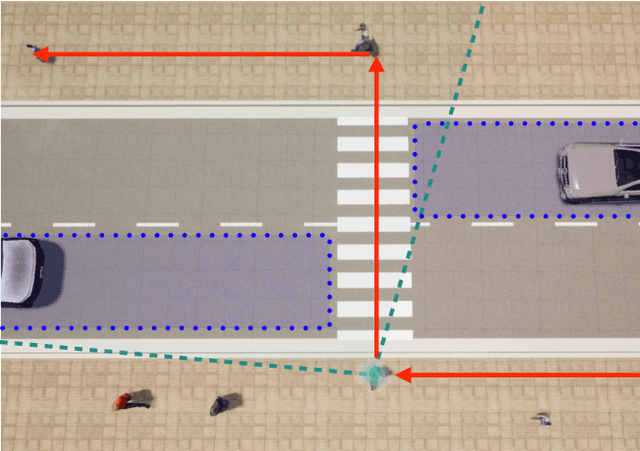

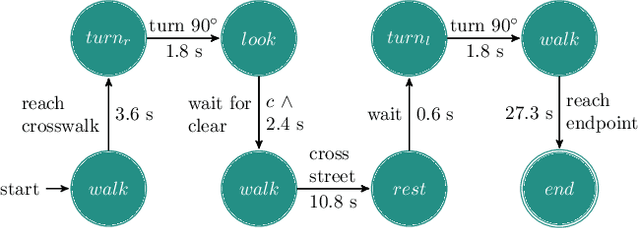

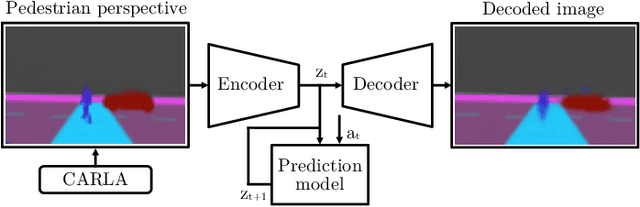

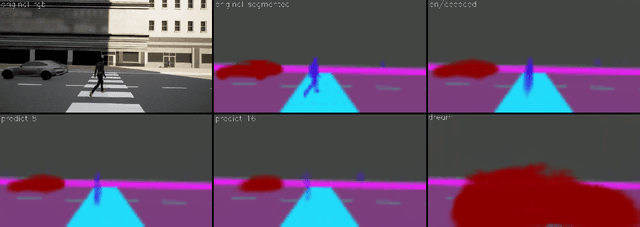

"If you could see me through my eyes": Predicting Pedestrian Perception

Mar 22, 2022

Pedestrians are particularly vulnerable road users in urban traffic. With the arrival of autonomous driving, novel technologies can be developed specifically to protect pedestrians. We propose a machine learning toolchain to train artificial neural networks as models of pedestrian behavior. In a preliminary study, we use synthetic data from simulations of a specific pedestrian crossing scenario to train a variational autoencoder and a long short-term memory network to predict a pedestrian's future visual perception. We can accurately predict a pedestrian's future perceptions within relevant time horizons. As our results indicate, iteratively feeding the predicted frames back into the networks allows them to be used as simulations of pedestrians. Such trained networks can later be used to predict pedestrian behaviors even from the perspective of the autonomous car. Another future extension will be to re-train these networks with real-world video data.
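The iterative feedback described in this abstract is an autoregressive rollout: each prediction is appended to the input window and used to produce the next one. The sketch below is only an illustration under stated assumptions. The names `rollout` and `linear_extrapolation` are hypothetical, and the predictor is a toy stand-in for the paper's variational autoencoder and LSTM, which are not reproduced here.

```python
from typing import Callable, List, Sequence


def rollout(
    predict_next: Callable[[Sequence[float]], float],
    history: Sequence[float],
    horizon: int,
) -> List[float]:
    """Autoregressive rollout: feed each prediction back in as input.

    `predict_next` maps a fixed-length window of past values to the next
    value. In the paper's setting this role would be played by the trained
    networks; here it is any callable (an assumption of this sketch).
    """
    window = list(history)
    predictions = []
    for _ in range(horizon):
        nxt = predict_next(window)
        predictions.append(nxt)
        # Slide the window: drop the oldest value, append the prediction.
        window = window[1:] + [nxt]
    return predictions


def linear_extrapolation(window: Sequence[float]) -> float:
    """Toy stand-in predictor: continue the last observed step."""
    return window[-1] + (window[-1] - window[-2])
```

Calling `rollout(linear_extrapolation, [0.0, 1.0, 2.0], horizon=3)` continues the sequence three steps past the observed history. With a learned predictor, errors compound over the horizon, which is why the abstract limits its accuracy claim to relevant time horizons.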

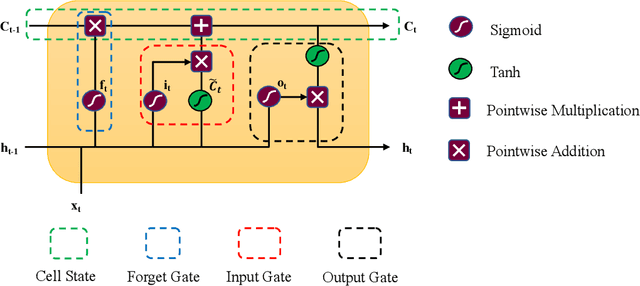

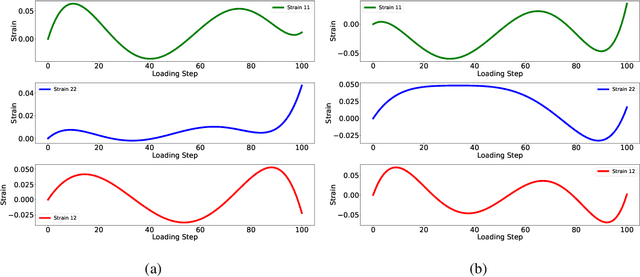

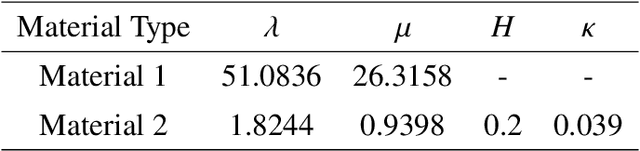

A single Long Short-Term Memory network for enhancing the prediction of path-dependent plasticity with material heterogeneity and anisotropy

Apr 05, 2022

This study examines the applicability of conventional deep recurrent neural networks (RNNs) to predicting path-dependent plasticity associated with material heterogeneity and anisotropy. Although the RNN architecture possesses inductive biases toward information over time, it is still challenging to learn path-dependent material behavior as a function of the loading path, considering the change from the elastic to the elastoplastic regime. We aim to develop a simple machine-learning-based model that can replicate elastoplastic behaviors while accounting for material heterogeneity and anisotropy. The basic Long Short-Term Memory (LSTM) unit is adopted for modeling plasticity in two-dimensional space, with the inductive bias toward past information enhanced by manipulating the input variables. Our results show that a single LSTM-based model can capture J2 plasticity responses under both monotonic and arbitrary loading paths given the material heterogeneity. The proposed neural network architecture is then used to model elastoplastic responses of a two-dimensional transversely anisotropic material associated with computational homogenization (FE2). We also find that a single LSTM model can accurately and effectively capture the path-dependent responses of heterogeneous and anisotropic microstructures under arbitrary mechanical loading conditions.

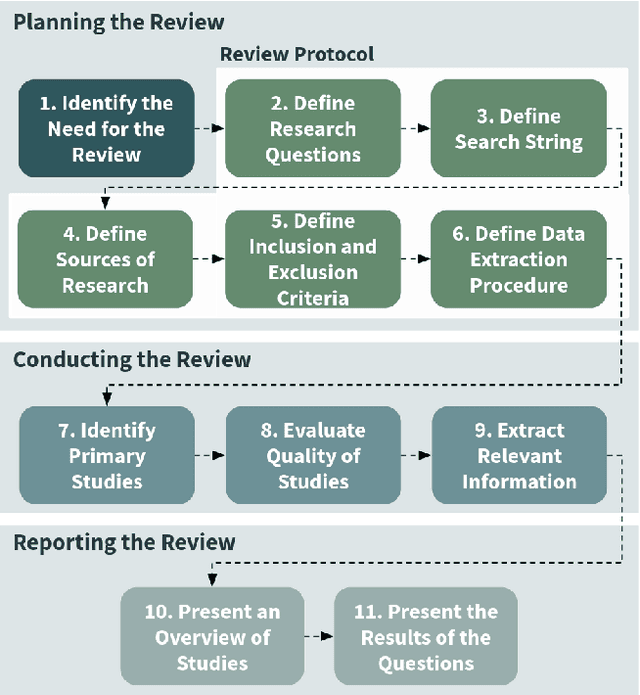

Distributed intelligence on the Edge-to-Cloud Continuum: A systematic literature review

Apr 29, 2022

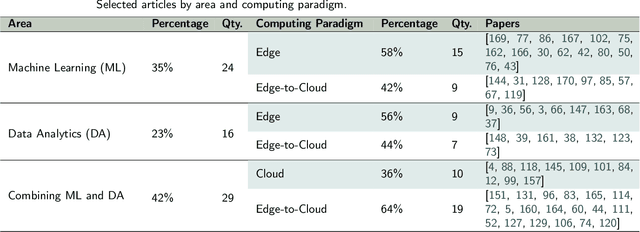

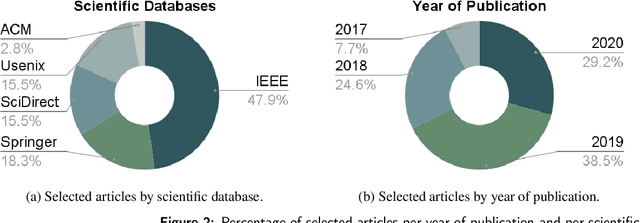

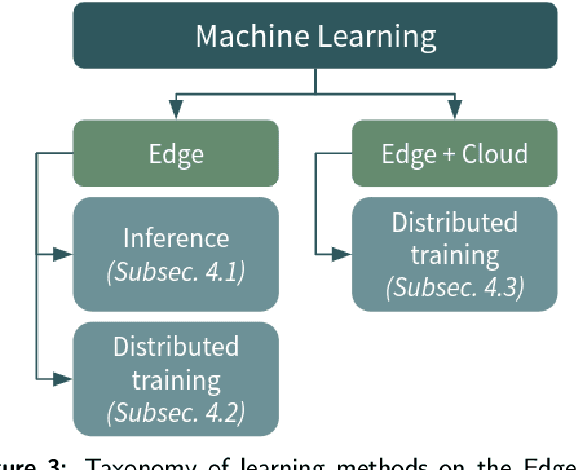

The explosion of data volumes generated by an increasing number of applications is strongly impacting the evolution of distributed digital infrastructures for data analytics and machine learning (ML). While data analytics used to be mainly performed on cloud infrastructures, the rapid development of IoT infrastructures and the requirements for low-latency, secure processing have motivated the development of edge analytics. Today, to balance various trade-offs, ML-based analytics increasingly leverages an interconnected ecosystem that allows complex applications to be executed on hybrid infrastructures where IoT Edge devices are interconnected to Cloud/HPC systems in what is called the Computing Continuum, the Digital Continuum, or the Transcontinuum.

Enabling learning-based analytics on such complex infrastructures is challenging. The large-scale and optimized deployment of learning-based workflows across the Edge-to-Cloud Continuum requires extensive and reproducible experimental analysis of the application execution on representative testbeds. This is necessary to help understand the performance trade-offs that result from combining a variety of learning paradigms and supportive frameworks. A thorough experimental analysis requires assessing the impact of multiple factors, such as model accuracy, training time, network overhead, energy consumption, and processing latency, among others.

This review aims at providing a comprehensive vision of the main state-of-the-art libraries and frameworks for machine learning and data analytics available today. It describes the main learning paradigms enabling learning-based analytics on the Edge-to-Cloud Continuum. The main simulation, emulation, deployment systems, and testbeds for experimental research on the Edge-to-Cloud Continuum available today are also surveyed. Furthermore, we analyze how the selected systems provide support for experiment reproducibility.

We conclude our review with a detailed discussion of relevant open research challenges and of future directions in this domain, such as: holistic understanding of performance; performance optimization of applications; efficient deployment of Artificial Intelligence (AI) workflows on highly heterogeneous infrastructures; and reproducible analysis of experiments on the Computing Continuum.

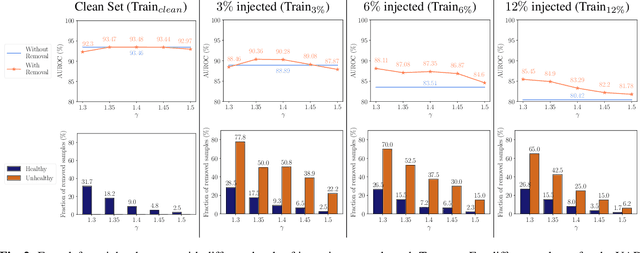

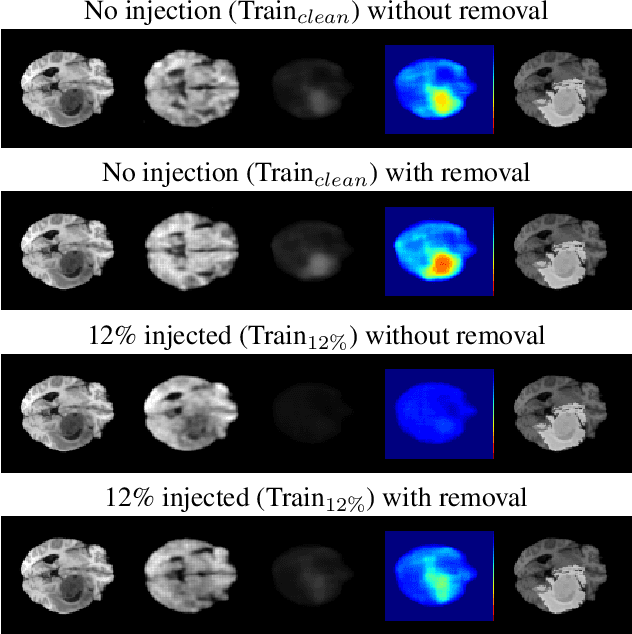

Unsupervised Anomaly Detection in 3D Brain MRI using Deep Learning with impured training data

Apr 12, 2022

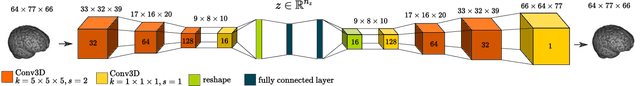

The detection of lesions in magnetic resonance imaging (MRI)-scans of human brains remains challenging, time-consuming and error-prone. Recently, unsupervised anomaly detection (UAD) methods have shown promising results for this task. These methods rely on training data sets that solely contain healthy samples. Compared to supervised approaches, this significantly reduces the need for an extensive amount of labeled training data. However, data labelling remains error-prone. We study how unhealthy samples within the training data affect anomaly detection performance for brain MRI-scans. For our evaluations, we consider three publicly available data sets and use autoencoders (AE) as a well-established baseline method for UAD. We systematically evaluate the effect of impured training data by injecting different quantities of unhealthy samples into our training set of healthy samples from T1-weighted MRI-scans. We evaluate a method to identify falsely labeled samples directly during training based on the reconstruction error of the AE. Our results show that training with impured data notably decreases UAD performance, even with few falsely labeled samples. By performing outlier removal directly during training based on the reconstruction loss, we demonstrate that falsely labeled data can be detected and removed, which mitigates their effect. Overall, we highlight the importance of clean data sets for UAD in brain MRI and demonstrate an approach for detecting falsely labeled data directly during training.
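Outlier removal based on reconstruction error can be illustrated with a small filter over per-sample losses. This is a minimal sketch, not the authors' method: the abstract does not state the rejection rule, so the median-plus-scaled-MAD threshold, the function name `keep_low_error_samples`, and the parameter `k` are all assumptions made for illustration.

```python
from statistics import median
from typing import List, Sequence


def keep_low_error_samples(errors: Sequence[float], k: float = 3.0) -> List[int]:
    """Return indices of samples whose reconstruction error looks normal.

    Assumed rule (not from the paper): a sample is flagged as a likely
    mislabeled outlier when its error exceeds median + k * MAD, where MAD
    is the median absolute deviation of the errors in the batch.
    """
    med = median(errors)
    mad = median([abs(e - med) for e in errors])
    threshold = med + k * mad
    return [i for i, e in enumerate(errors) if e <= threshold]


# Hypothetical per-sample reconstruction errors from one training epoch.
# Samples 4 and 7 reconstruct poorly, as an unhealthy scan might when the
# autoencoder has mostly seen healthy anatomy.
epoch_errors = [0.10, 0.12, 0.11, 0.09, 0.95, 0.10, 0.13, 0.88]
kept = keep_low_error_samples(epoch_errors)
```

In a training loop, the kept indices would select the samples that contribute to the next gradient update. A robust statistic such as the median is used here so that the outliers themselves do not inflate the threshold.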

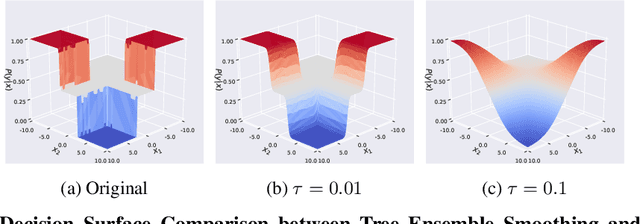

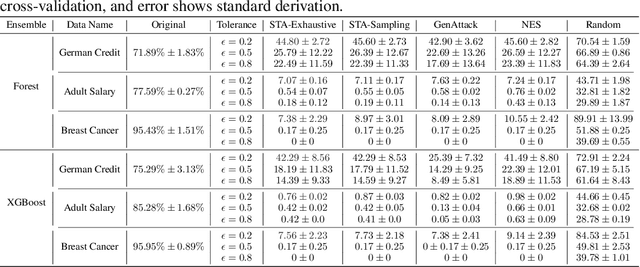

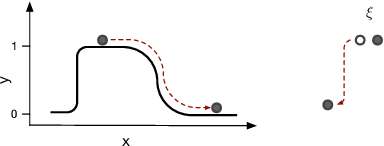

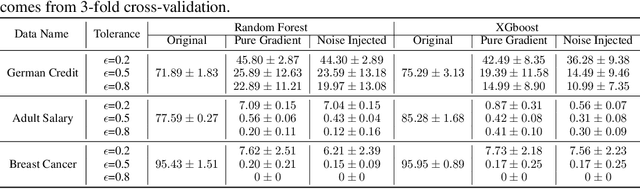

Scalable Whitebox Attacks on Tree-based Models

Mar 31, 2022

Adversarial robustness is one of the essential safety criteria for guaranteeing the reliability of machine learning models. While various adversarial robustness testing approaches were introduced in the last decade, we note that most of them are incompatible with non-differentiable models such as tree ensembles. Since tree ensembles are widely used in industry, this reveals a crucial gap between adversarial robustness research and practical applications. This paper proposes a novel whitebox adversarial robustness testing approach for tree ensemble models. Concretely, the proposed approach smooths the tree ensembles through temperature-controlled sigmoid functions, which enables gradient-descent-based adversarial attacks. By leveraging sampling and the log-derivative trick, the proposed approach can scale up to testing tasks that were previously unmanageable. We compare the approach against both random perturbations and blackbox approaches on multiple public datasets (and corresponding models). Our results show that the proposed method can 1) successfully reveal the adversarial vulnerability of tree ensemble models without causing computational pressure for testing and 2) flexibly balance the search performance and time complexity to meet various testing criteria.
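The sigmoid smoothing idea can be sketched on a single toy tree. Each hard split `x[f] <= t` is replaced by a soft routing probability `sigmoid((x[f] - t) / temperature)`, which makes the output differentiable in the input. Everything below is an illustrative assumption: the tree `TREE`, the function names, and the use of central finite differences in place of the paper's sampling and log-derivative trick, which is not reproduced here.

```python
import math
from typing import List, Sequence

# Hypothetical tree: internal nodes are (feature, threshold, left, right),
# leaves are floats. Inputs with x[f] <= threshold go left.
TREE = (0, 0.5, -1.0, (1, 0.3, 0.2, 1.0))


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def hard_predict(tree, x: Sequence[float]) -> float:
    """Ordinary (non-differentiable) tree traversal."""
    while isinstance(tree, tuple):
        f, t, left, right = tree
        tree = left if x[f] <= t else right
    return tree


def soft_predict(tree, x: Sequence[float], temperature: float = 0.05) -> float:
    """Expected leaf value when every split routes right with probability
    sigmoid((x[f] - t) / temperature). Lower temperature = closer to hard."""
    if not isinstance(tree, tuple):
        return tree
    f, t, left, right = tree
    p_right = sigmoid((x[f] - t) / temperature)
    return ((1.0 - p_right) * soft_predict(left, x, temperature)
            + p_right * soft_predict(right, x, temperature))


def sign_gradient_step(tree, x: Sequence[float], step: float = 0.1,
                       temperature: float = 0.05, eps: float = 1e-4) -> List[float]:
    """One ascent step on the smoothed output, using central finite
    differences as a simple stand-in for an analytic or sampled gradient."""
    moved = []
    for i in range(len(x)):
        hi = list(x); hi[i] += eps
        lo = list(x); lo[i] -= eps
        g = soft_predict(tree, hi, temperature) - soft_predict(tree, lo, temperature)
        moved.append(x[i] + step * ((g > 0) - (g < 0)))
    return moved


x_clean = [0.45, 0.8]
x_adv = sign_gradient_step(TREE, x_clean)
```

Here the clean input falls in the left leaf of the hard tree, while the perturbed input crosses the first split. The temperature controls the trade-off: a large value gives smooth, informative gradients but a poorer approximation of the original tree, and a small value does the opposite.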

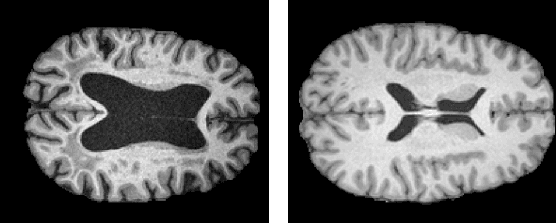

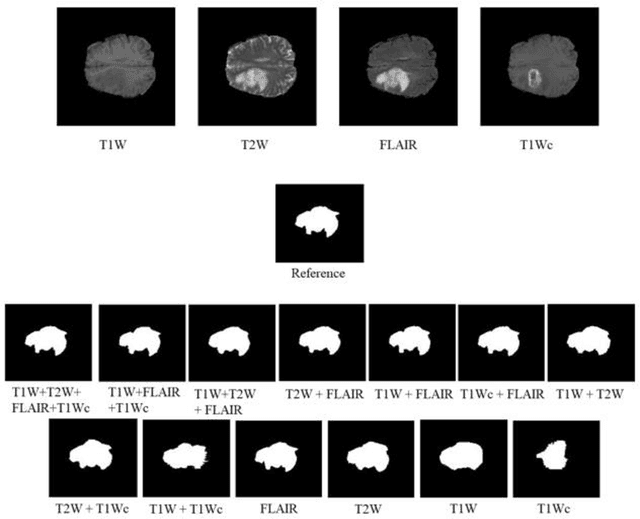

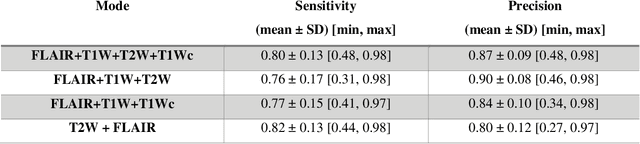

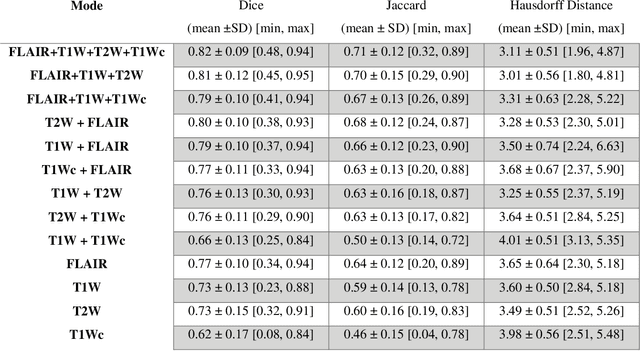

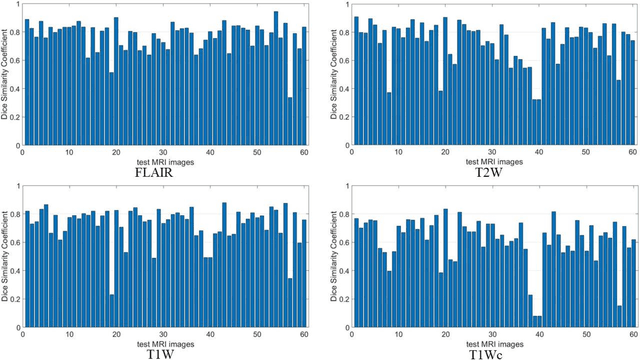

Joint brain tumor segmentation from multi MR sequences through a deep convolutional neural network

Mar 07, 2022

Brain tumor segmentation contributes substantially to diagnosis and treatment planning. Manual brain tumor delineation is a time-consuming and tedious task, and its outcome varies with the radiologist's skill. Automated brain tumor segmentation is therefore of high importance, as it is not subject to inter- or intra-observer variability. The objective of this study is to automate the delineation of brain tumors from the FLAIR, T1-weighted, T2-weighted, and T1-weighted contrast-enhanced MR sequences through a deep learning approach, with a focus on determining which MR sequence, alone or in combination, leads to the highest accuracy.
