"Time": models, code, and papers

Developing a Production System for Purpose of Call Detection in Business Phone Conversations

May 13, 2022

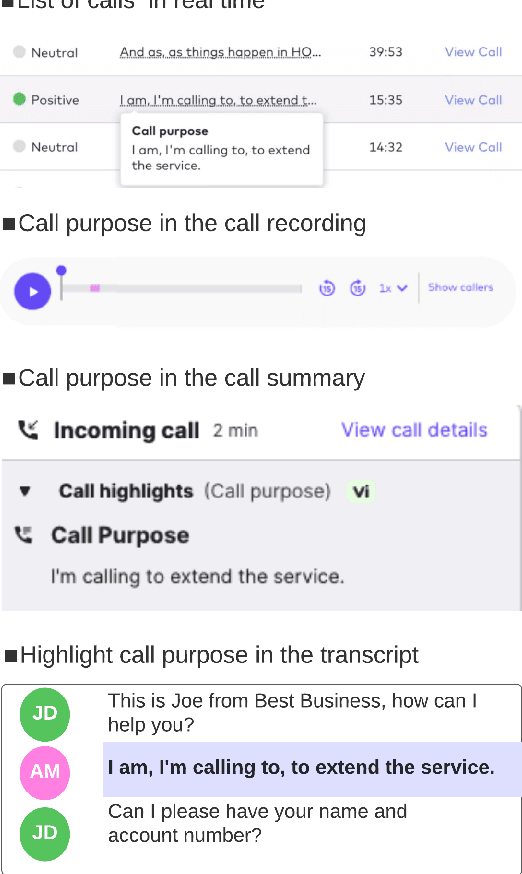

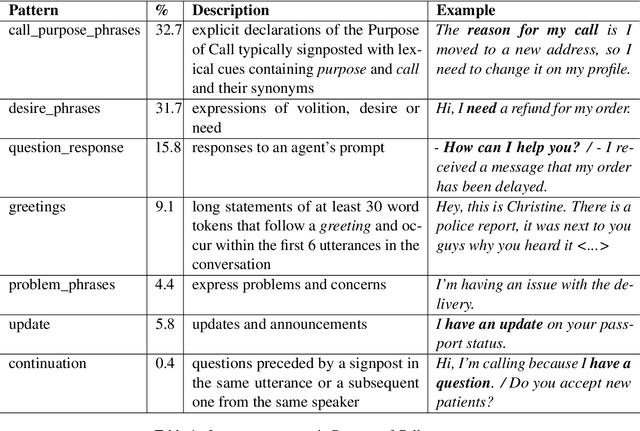

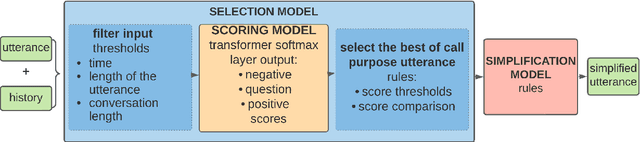

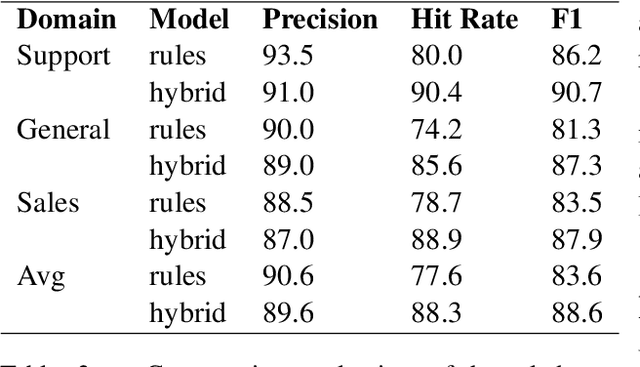

For agents at a contact centre receiving calls, the most important piece of information is the reason for a given call. An agent cannot provide support on a call if they do not know why a customer is calling. In this paper we describe our implementation of a commercial system to detect Purpose of Call statements in English business call transcripts in real time. We present a detailed analysis of types of Purpose of Call statements and language patterns related to them, discuss an approach to collect rich training data by bootstrapping from a set of rules to a neural model, and describe a hybrid model which consists of a transformer-based classifier and a set of rules by leveraging insights from the analysis of call transcripts. The model achieved 88.6 F1 on average in various types of business calls when tested on real life data and has low inference time. We reflect on the challenges and design decisions when developing and deploying the system.

Elastic Similarity Measures for Multivariate Time Series Classification

Feb 20, 2021

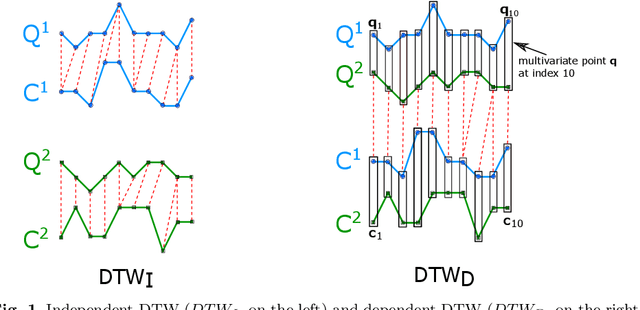

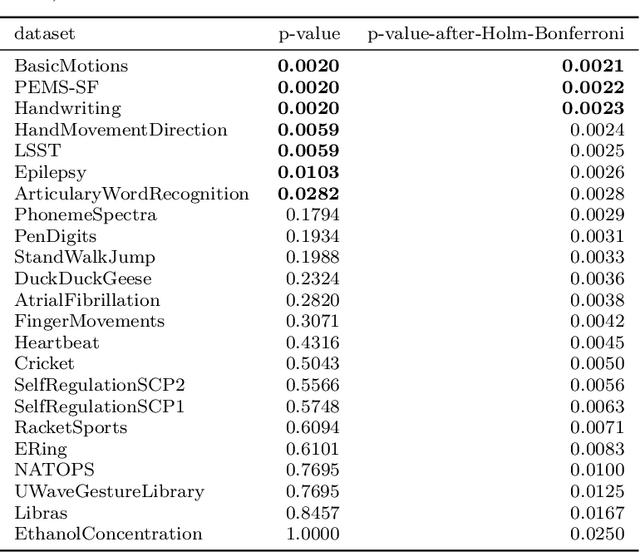

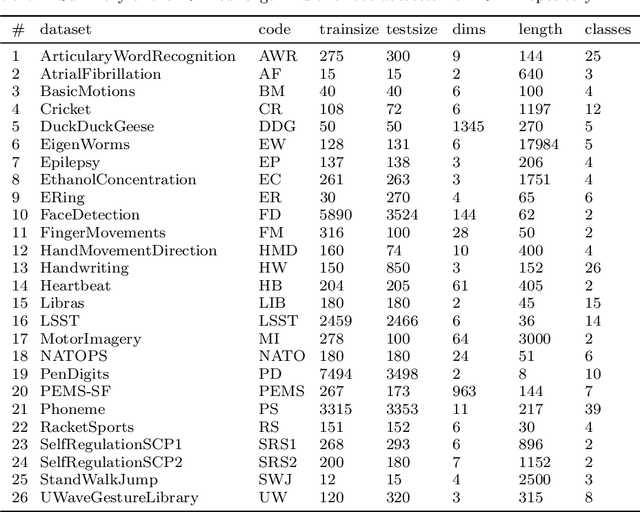

Elastic similarity measures are a class of similarity measures specifically designed to work with time series data. When scoring the similarity between two time series, they allow points that do not correspond in timestamps to be aligned. This can compensate for misalignments in the time axis of time series data, and for similar processes that proceed at variable and differing paces. Elastic similarity measures are widely used in machine learning tasks such as classification, clustering and outlier detection when using time series data. There is a multitude of research on various univariate elastic similarity measures. However, except for multivariate versions of the well known Dynamic Time Warping (DTW) there is a lack of work to generalise other similarity measures for multivariate cases. This paper adapts two existing strategies used in multivariate DTW, namely, Independent and Dependent DTW, to several commonly used elastic similarity measures. Using 23 datasets from the University of East Anglia (UEA) multivariate archive, for nearest neighbour classification, we demonstrate that each measure outperforms all others on at least one dataset and that there are datasets for which either the dependent versions of all measures are more accurate than their independent counterparts or vice versa. This latter finding suggests that these differences arise from a fundamental property of the data. We also show that an ensemble of such nearest neighbour classifiers is highly competitive with other state-of-the-art multivariate time series classifiers.
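The difference between the independent and dependent strategies can be illustrated with a minimal sketch for DTW. The function names and the squared-difference cost below are assumptions made for illustration, not code from the paper: the independent variant warps each channel separately and sums the results, while the dependent variant uses a single warping path shared by all channels.

```python
from math import inf


def dtw(a, b, cost):
    """Classic DTW cost between sequences a and b under a pointwise cost."""
    n, m = len(a), len(b)
    D = [[inf] * (m + 1) for _ in range(n + 1)]
    D[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            c = cost(a[i - 1], b[j - 1])
            D[i][j] = c + min(D[i - 1][j], D[i][j - 1], D[i - 1][j - 1])
    return D[n][m]


def dtw_independent(x, y):
    """Sum of per-channel DTW costs; each channel gets its own warping path."""
    dims = len(x[0])
    return sum(
        dtw([p[d] for p in x], [q[d] for q in y], lambda u, v: (u - v) ** 2)
        for d in range(dims)
    )


def dtw_dependent(x, y):
    """One warping path for all channels; cost is squared Euclidean distance."""
    return dtw(x, y, lambda p, q: sum((u - v) ** 2 for u, v in zip(p, q)))


# Two 2-channel series whose channels are shifted in opposite directions.
x = [(0, 0), (1, 0), (0, 1), (0, 0)]
y = [(0, 0), (0, 1), (1, 0), (0, 0)]
print(dtw_independent(x, y), dtw_dependent(x, y))
```

In this toy case each channel can be aligned perfectly on its own, so the independent cost is zero, whereas no single shared path aligns both channels at once. Under these cost definitions the independent cost never exceeds the dependent one, because the shared path is also a valid path for every individual channel.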

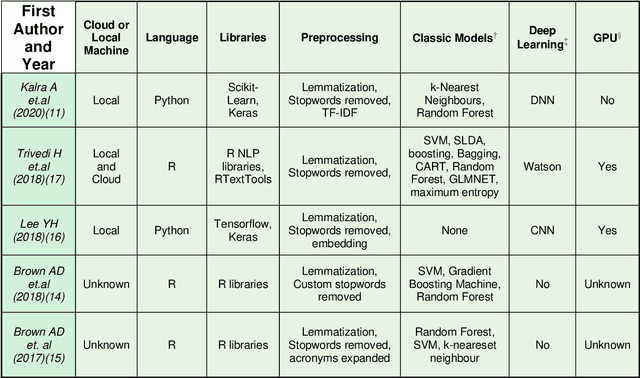

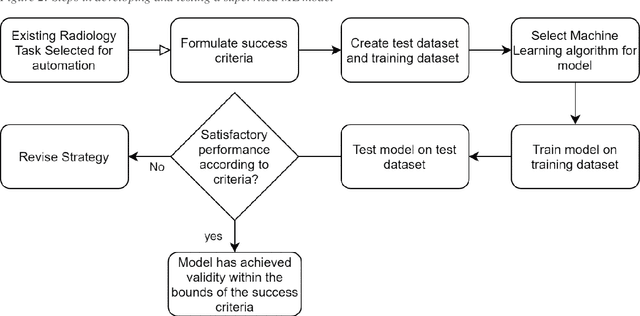

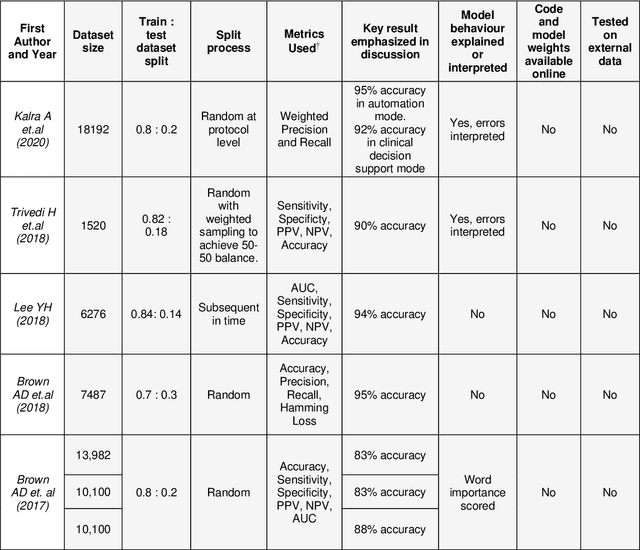

A Review of Published Machine Learning Natural Language Processing Applications for Protocolling Radiology Imaging

Jun 23, 2022

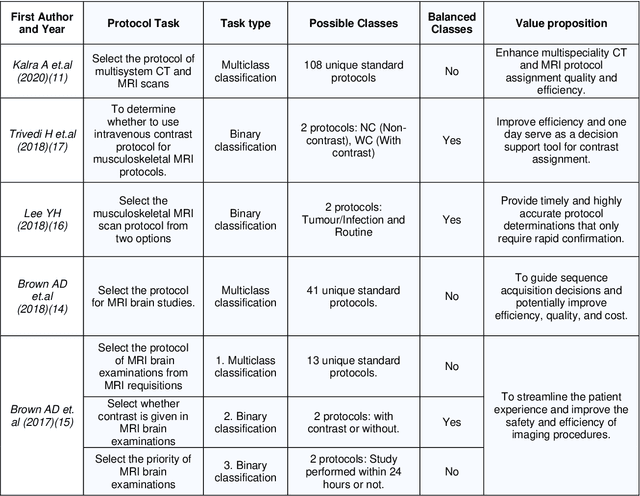

Machine learning (ML) is a subfield of Artificial intelligence (AI), and its applications in radiology are growing at an ever-accelerating rate. The most studied ML application is the automated interpretation of images. However, natural language processing (NLP), which can be combined with ML for text interpretation tasks, also has many potential applications in radiology. One such application is automation of radiology protocolling, which involves interpreting a clinical radiology referral and selecting the appropriate imaging technique. It is an essential task which ensures that the correct imaging is performed. However, the time that a radiologist must dedicate to protocolling could otherwise be spent reporting, communicating with referrers, or teaching. To date, there have been few publications in which ML models were developed that use clinical text to automate protocol selection. This article reviews the existing literature in this field. A systematic assessment of the published models is performed with reference to best practices suggested by machine learning convention. Progress towards implementing automated protocolling in a clinical setting is discussed.

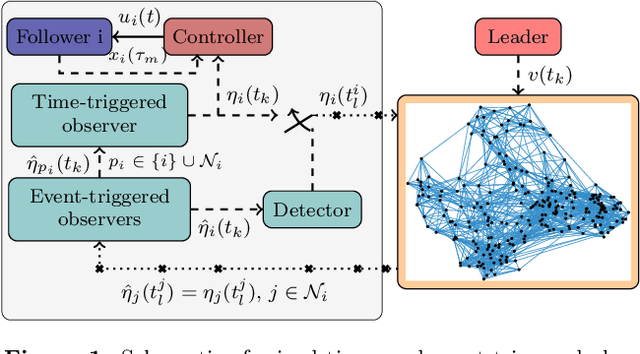

Cooperative Output Regulation with Mixed Time- and Event-triggered Observers

May 05, 2021

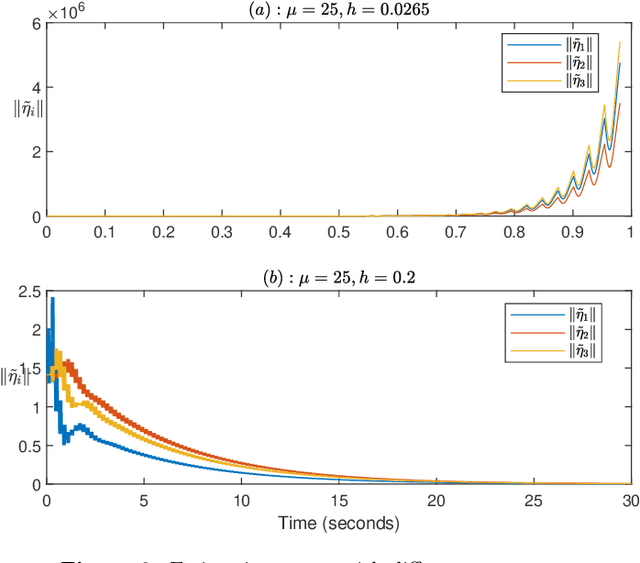

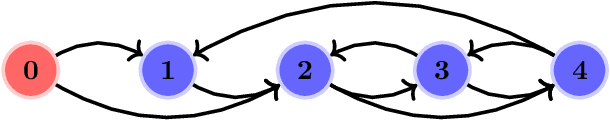

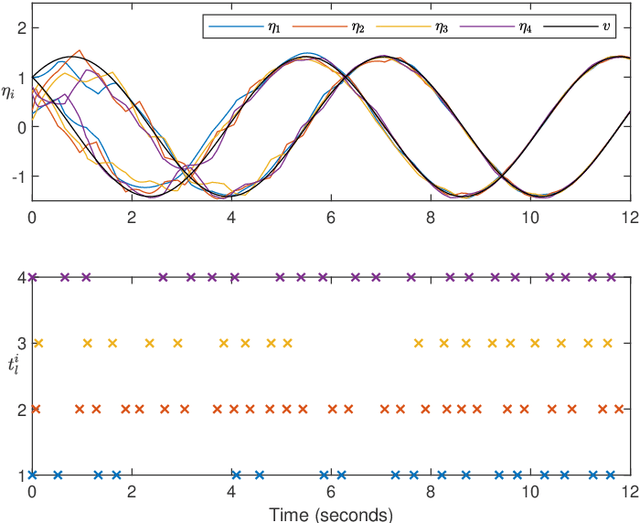

Mixed time- and event-triggered cooperative output regulation for heterogeneous distributed systems is investigated in this paper. A distributed observer with time-triggered observations is proposed to estimate the state of the leader, and an auxiliary observer with event-triggered communication is designed to reduce the information exchange among followers. A necessary and sufficient condition for the existence of desirable time-triggered observers is established, and delicate relationships among sampling periods, topologies, and reference signals are revealed. An event-triggering mechanism based on local sampled data is proposed to regulate the communication among agents; and the convergence of the estimation errors under the mechanism holds for a class of positive and convergent triggering functions, which include the commonly used exponential function as a special case. The mixed time- and event-triggered system naturally excludes the existence of Zeno behavior as the system updates at discrete instants. When the triggering function is bounded by exponential functions, analytical characterization of the relationship among sampling, event triggering, and inter-event behaviour is established. Finally, several examples are provided to illustrate the effectiveness and merits of the theoretical results.
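A toy sketch of the sampled-data triggering idea follows. It assumes a scalar signal, a fixed sampling period, and an exponential triggering function `c * exp(-alpha * t)`; all names and parameter values are hypothetical, and the sketch is not the observer design from the paper. Because the trigger is only evaluated at sampling instants, two consecutive events are separated by at least one sampling period, which is the intuition behind the exclusion of Zeno behavior.

```python
import math


def event_triggered_broadcasts(signal, period, horizon, c=1.0, alpha=0.5):
    """Return the sampling instants at which the agent broadcasts its sample.

    A broadcast happens when the sampled value deviates from the last
    broadcast value by more than the exponentially decaying threshold.
    """
    last_sent = None
    events = []
    steps = int(round(horizon / period))
    for k in range(steps + 1):
        t = k * period
        x = signal(t)
        threshold = c * math.exp(-alpha * t)
        if last_sent is None or abs(x - last_sent) > threshold:
            last_sent = x
            events.append(t)
    return events


def decaying_oscillation(t):
    """Hypothetical local signal used only for the demonstration."""
    return math.exp(-0.2 * t) * math.cos(t)


period = 0.1
events = event_triggered_broadcasts(decaying_oscillation, period, horizon=10.0)
gaps = [b - a for a, b in zip(events, events[1:])]
print(len(events), "broadcasts out of", int(round(10.0 / period)) + 1, "samples")
```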

Multi-variant COVID-19 model with heterogeneous transmission rates using deep neural networks

May 13, 2022

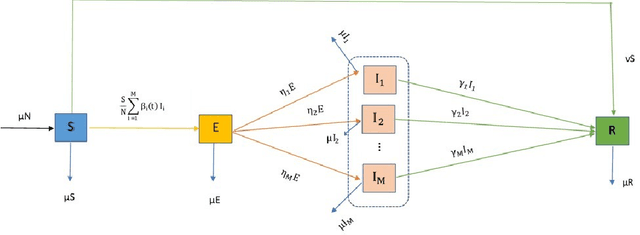

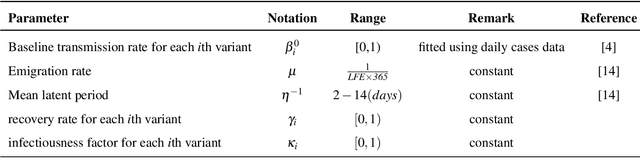

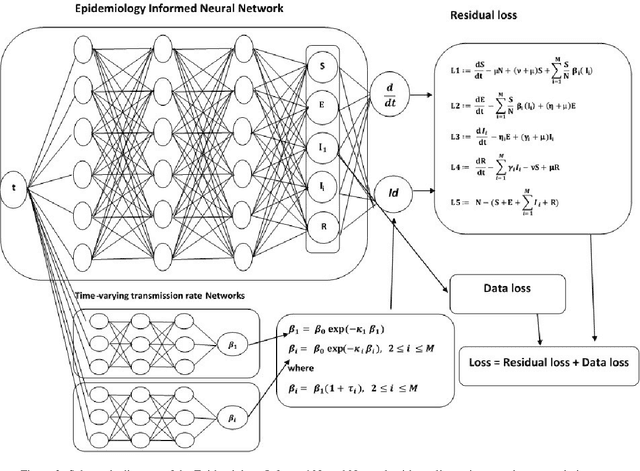

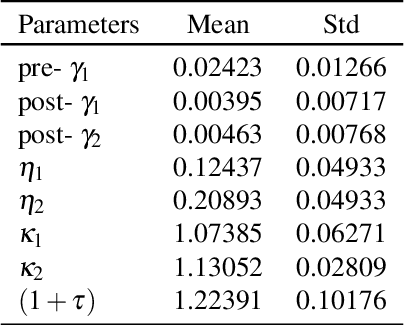

Mutating variants of COVID-19 have been reported across many US states since 2021. In the fight against COVID-19, it has become imperative to study the heterogeneity in the time-varying transmission rates for each variant in the presence of pharmaceutical and non-pharmaceutical mitigation measures. We develop a Susceptible-Exposed-Infected-Recovered mathematical model to highlight the differences in the transmission of the B.1.617.2 delta variant and the original SARS-CoV-2. Theoretical results for the well-posedness of the model are discussed. A deep neural network is utilized and a deep learning algorithm is developed to learn the time-varying heterogeneous transmission rates for each variant. The accuracy of the algorithm for the model is shown using error metrics in the data-driven simulation for COVID-19 variants in the US states of Florida, Alabama, Tennessee, and Missouri. Short-term forecasting of daily cases is demonstrated using a long short-term memory neural network and an adaptive neuro-fuzzy inference system.
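For readers unfamiliar with compartmental models, the following is a minimal single-strain SEIR sketch with a time-varying transmission rate, integrated with forward Euler. It is a simplification made for illustration: the paper's model tracks two variants and learns the rates with a neural network, whereas here `beta` is a hand-written function and every parameter value is an assumption.

```python
def simulate_seir(beta, sigma, gamma, s0, e0, i0, r0, days, dt=0.1):
    """Forward-Euler integration of a single-strain SEIR model.

    beta  : function t -> transmission rate at time t (per day)
    sigma : rate of leaving the exposed compartment (1 / incubation period)
    gamma : recovery rate (1 / infectious period)
    Returns a list of (t, S, E, I, R) tuples.
    """
    s, e, i, r = float(s0), float(e0), float(i0), float(r0)
    n = s + e + i + r
    trajectory = [(0.0, s, e, i, r)]
    steps = int(round(days / dt))
    for k in range(steps):
        t = k * dt
        new_exposed = beta(t) * s * i / n
        ds = -new_exposed
        de = new_exposed - sigma * e
        di = sigma * e - gamma * i
        dr = gamma * i
        s, e, i, r = s + dt * ds, e + dt * de, i + dt * di, r + dt * dr
        trajectory.append(((k + 1) * dt, s, e, i, r))
    return trajectory


def beta_with_mitigation(t):
    """Hypothetical rate that drops when a mitigation measure starts on day 30."""
    return 0.4 if t < 30 else 0.15


traj = simulate_seir(beta_with_mitigation, sigma=1 / 5, gamma=1 / 10,
                     s0=9990, e0=0, i0=10, r0=0, days=120)
peak_infected = max(state[3] for state in traj)
print(round(peak_infected))
```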

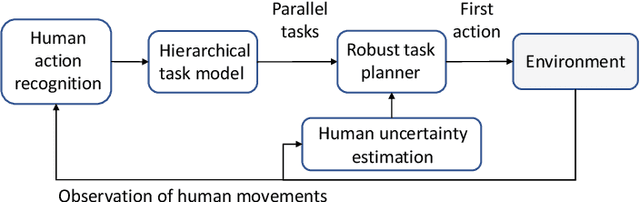

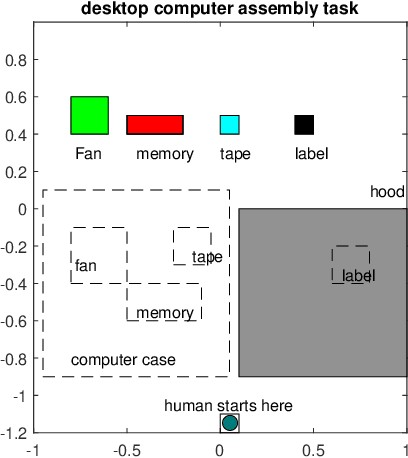

Robust Task Planning for Assembly Lines with Human-Robot Collaboration

Apr 17, 2022

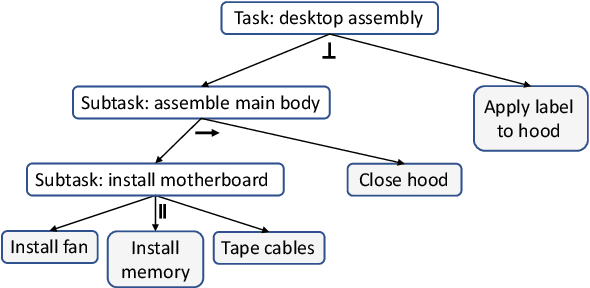

Efficient and robust task planning for a human-robot collaboration (HRC) system remains challenging. The human-aware task planner needs to assign jobs to both robots and human workers so that they can work collaboratively to achieve better time efficiency. However, the complexity of the tasks and the stochastic nature of the human collaborators bring challenges to such task planning. To reduce the complexity of the planning problem, we utilize the hierarchical task model, which explicitly captures the sequential and parallel relationships of the task. We model human movements with the sigma-lognormal functions to account for human-induced uncertainties. A human action model adaptation scheme is applied during run-time, and it provides a measure for modeling the human-induced uncertainties. We propose a sampling-based method to estimate human job completion time uncertainties. Next, we propose a robust task planner, which formulates the planning problem as a robust optimization problem by considering the task structure and the uncertainties. We conduct simulations of a robot arm collaborating with a human worker in an electronics assembly setting. The results show that our proposed planner can reduce task completion time when human-induced uncertainties occur compared to the baseline planner.

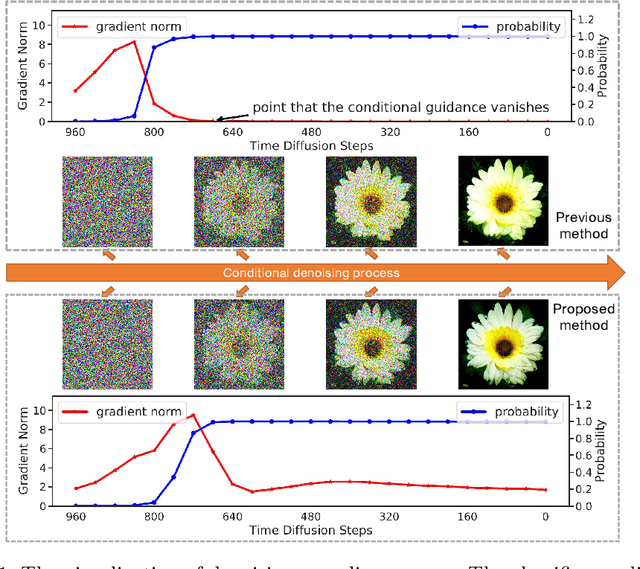

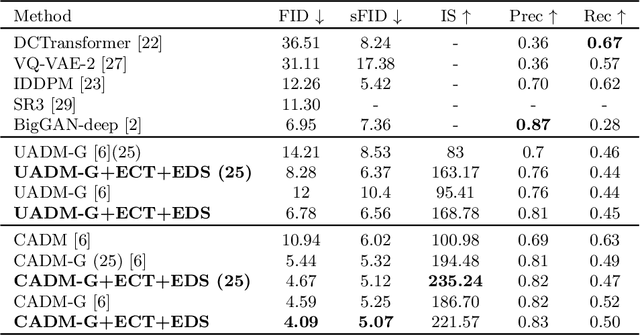

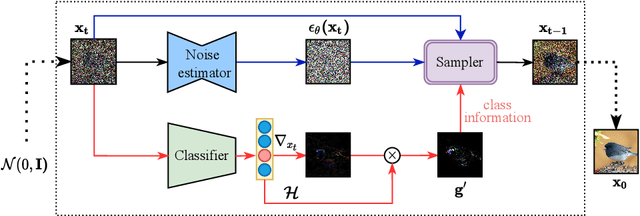

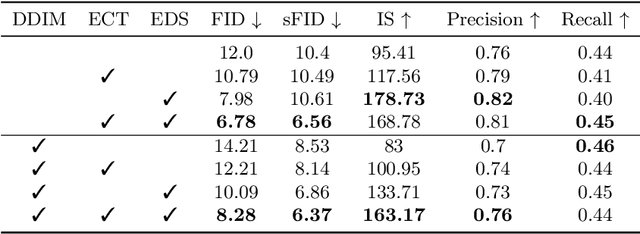

Entropy-driven Sampling and Training Scheme for Conditional Diffusion Generation

Jun 23, 2022

The Denoising Diffusion Probabilistic Model (DDPM) is able to perform flexible conditional image generation from prior noise to real data by introducing an independent noise-aware classifier that provides conditional gradient guidance at each time step of the denoising process. However, because the classifier can easily discriminate an incompletely generated image with only high-level structure, the gradient, which is a kind of class information guidance, tends to vanish early, leading to the collapse of the conditional generation process into the unconditional process. To address this problem, we propose two simple but effective approaches from two perspectives. For the sampling procedure, we introduce the entropy of the predicted distribution as the measure of the guidance vanishing level and propose an entropy-aware scaling method to adaptively recover the conditional semantic guidance. For the training stage, we propose entropy-aware optimization objectives to alleviate overconfident predictions for noisy data. On ImageNet1000 256x256, with our proposed sampling scheme and trained classifier, the pretrained conditional and unconditional DDPM models achieve 10.89% (4.59 to 4.09) and 43.5% (12 to 6.78) FID improvement, respectively.
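The role of entropy as a signal for vanishing guidance can be sketched as follows. The abstract does not give the scaling formula, so `entropy_aware_scale` below is a hypothetical rule chosen only to illustrate the idea: when the classifier becomes overconfident (low entropy), the guidance scale is increased relative to a base value.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def entropy(probs):
    """Shannon entropy (in nats) of a discrete distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def entropy_aware_scale(logits, base_scale=1.0, eps=1e-6):
    """Hypothetical rule: scale grows as the predictive entropy shrinks.

    Uses the ratio of the maximum possible entropy, log(K), to the actual
    entropy. This is an assumption for illustration, not the paper's formula.
    """
    h = entropy(softmax(logits))
    h_max = math.log(len(logits))
    return base_scale * h_max / max(h, eps)


uncertain = [0.0, 0.0, 0.0, 0.0]   # classifier has no preference
confident = [10.0, 0.0, 0.0, 0.0]  # classifier is nearly certain
print(entropy_aware_scale(uncertain), entropy_aware_scale(confident))
```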

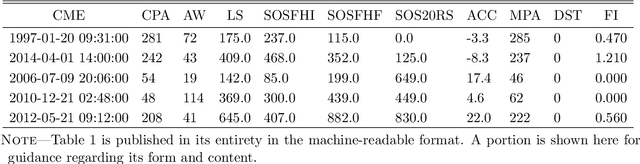

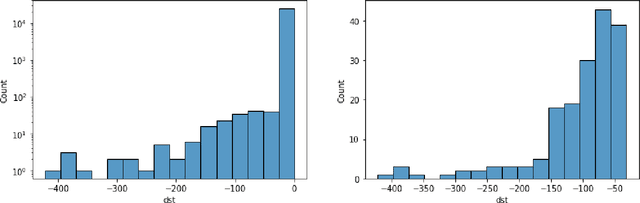

Predicting the Geoeffectiveness of CMEs Using Machine Learning

Jun 23, 2022

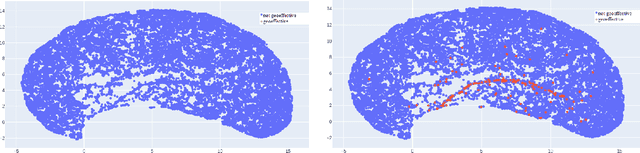

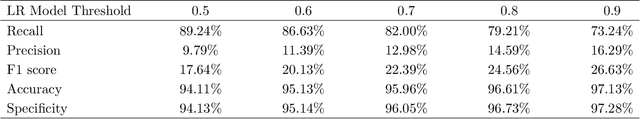

Coronal mass ejections (CMEs) are the most geoeffective space weather phenomena, being associated with large geomagnetic storms, having the potential to cause disturbances to telecommunication, satellite network disruptions, power grid damages and failures. Thus, considering these storms' potential effects on human activities, accurate forecasts of the geoeffectiveness of CMEs are paramount. This work focuses on experimenting with different machine learning methods trained on white-light coronagraph datasets of close to sun CMEs, to estimate whether such a newly erupting ejection has the potential to induce geomagnetic activity. We developed binary classification models using logistic regression, K-Nearest Neighbors, Support Vector Machines, feed forward artificial neural networks, as well as ensemble models. At this time, we limited our forecast to exclusively use solar onset parameters, to ensure extended warning times. We discuss the main challenges of this task, namely the extreme imbalance between the number of geoeffective and ineffective events in our dataset, along with their numerous similarities and the limited number of available variables. We show that even in such conditions, adequate hit rates can be achieved with these models.

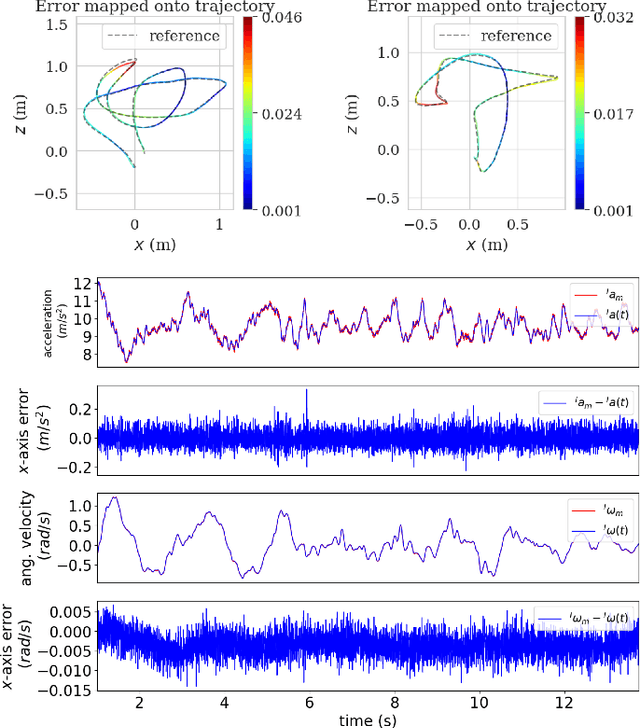

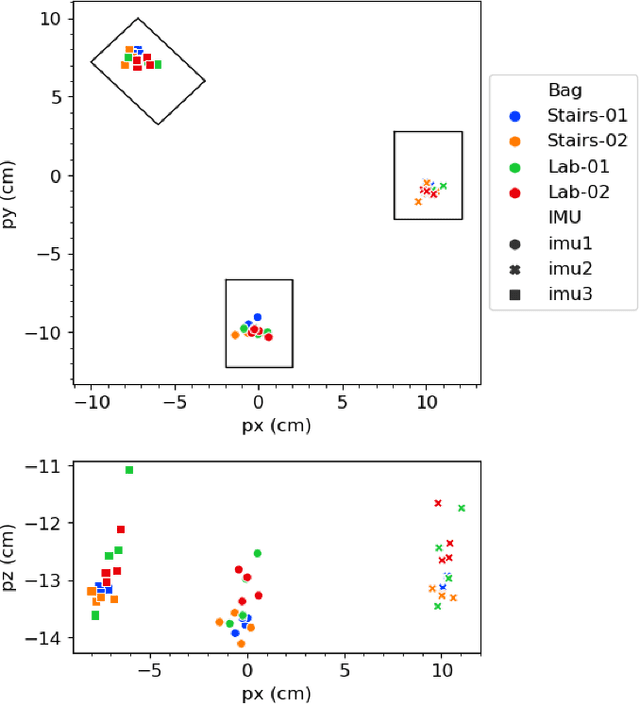

Observability-Aware Intrinsic and Extrinsic Calibration of LiDAR-IMU Systems

May 06, 2022

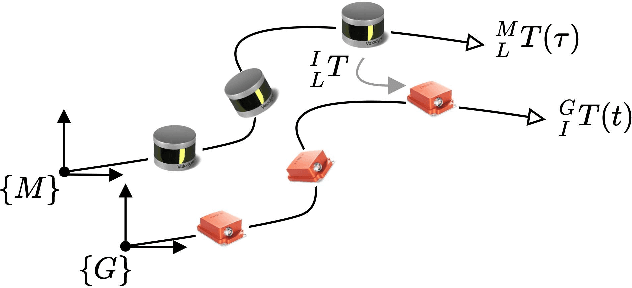

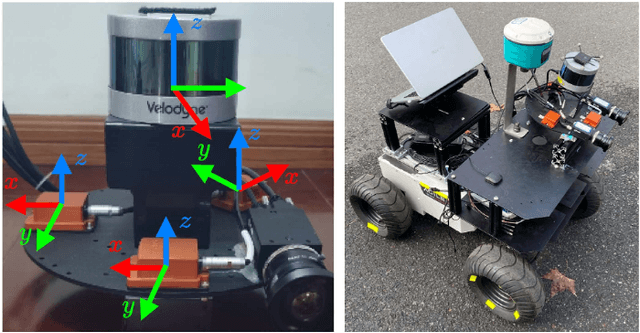

Accurate and reliable sensor calibration is essential to fuse LiDAR and inertial measurements, which are usually available in robotic applications. In this paper, we propose a novel LiDAR-IMU calibration method within the continuous-time batch-optimization framework, where the intrinsics of both sensors and the spatial-temporal extrinsics between sensors are calibrated without using calibration infrastructure such as fiducial tags. Compared to discrete-time approaches, the continuous-time formulation has natural advantages for fusing high rate measurements from LiDAR and IMU sensors. To improve efficiency and address degenerate motions, two observability-aware modules are leveraged: (i) The information-theoretic data selection policy selects only the most informative segments for calibration during data collection, which significantly improves the calibration efficiency by processing only the selected informative segments. (ii) The observability-aware state update mechanism in nonlinear least-squares optimization updates only the identifiable directions in the state space with truncated singular value decomposition (TSVD), which enables accurate calibration results even under degenerate cases where informative data segments are not available. The proposed LiDAR-IMU calibration approach has been validated extensively in both simulated and real-world experiments with different robot platforms, demonstrating its high accuracy and repeatability in commonly-seen human-made environments. We also open source our codebase to benefit the research community: https://github.com/APRIL-ZJU/OA-LICalib.

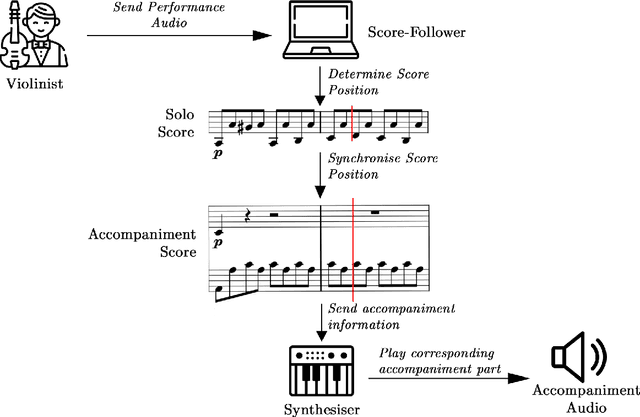

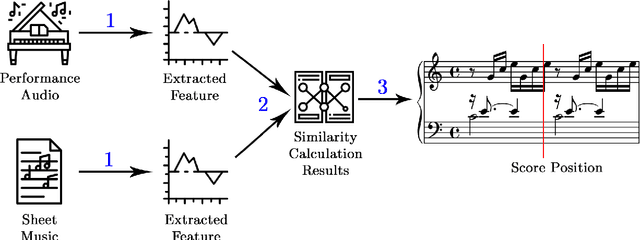

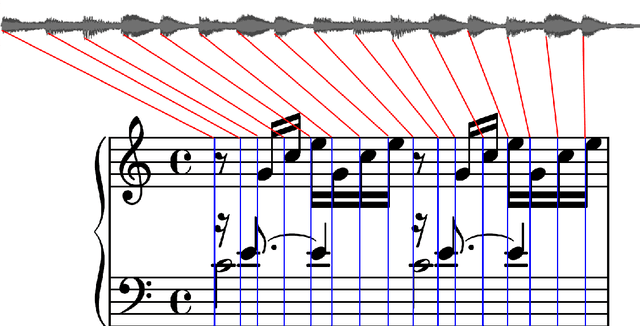

Musical Score Following and Audio Alignment

May 06, 2022

Real-time tracking of the position of a musical performance on a musical score, i.e. score following, can be useful in music practice, performance and production. Example applications of such technology include computer-aided accompaniment and automatic page turning. Score following is a challenging task, especially when considering deviations in performance data from the score stemming from mistakes or expressive choices. In this project, the extensive research present in the field is first explored before two open-source evaluation testbenches for score following--one quantitative and the other qualitative--are introduced. A new way of obtaining quantitative testbench data is proposed, and the QualScofo dataset for qualitative benchmarking is introduced. Subsequently, three different score followers, each of a different class, are implemented. First, a beat-based follower for an interactive conductor application--the TuneApp Conductor--is created to demonstrate an entertaining application of score following. Then, an Approximate String Matching (ASM) non-real-time follower is implemented to complement the quantitative testbench and provide more technical background details of score following. Finally, a Constant Q-Transform (CQT) Dynamic Time Warping (DTW) score follower robust against major challenges in score following (such as polyphonic music and performance deviations) is outlined and implemented; it is shown that this CQT-based approach consistently and significantly outperforms a commonly used FFT-based approach in extracting audio features for score following.
