"Time": models, code, and papers

Multicarrier-Division Duplex for Solving the Channel Aging Problem in Massive MIMO Systems

Apr 28, 2022

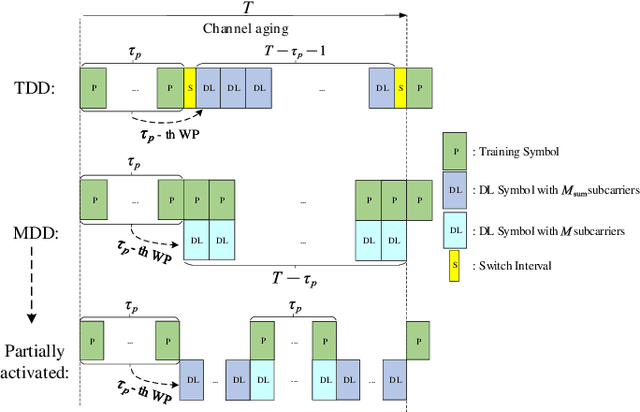

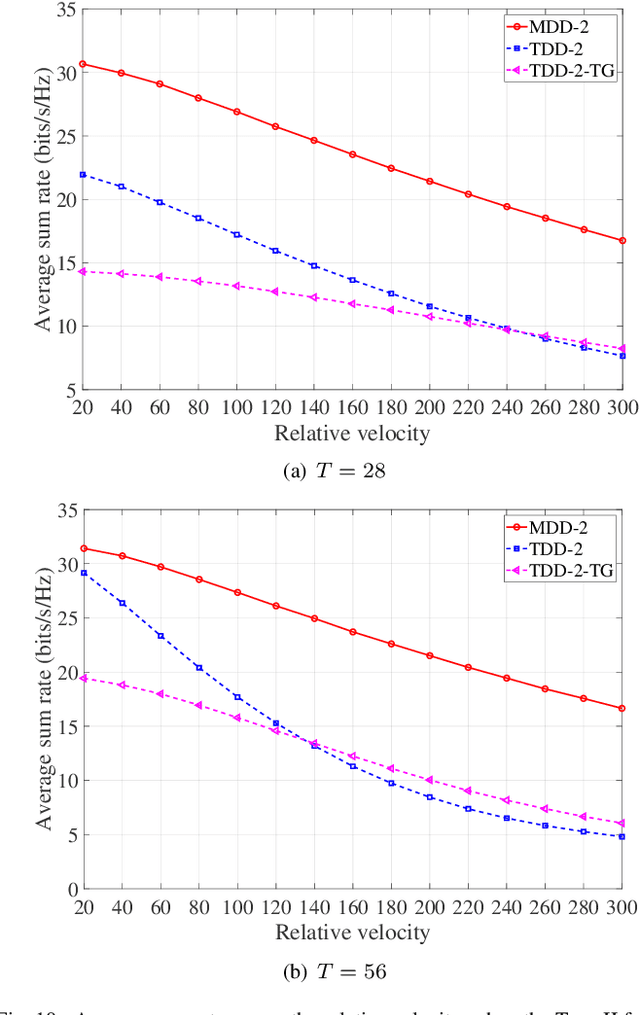

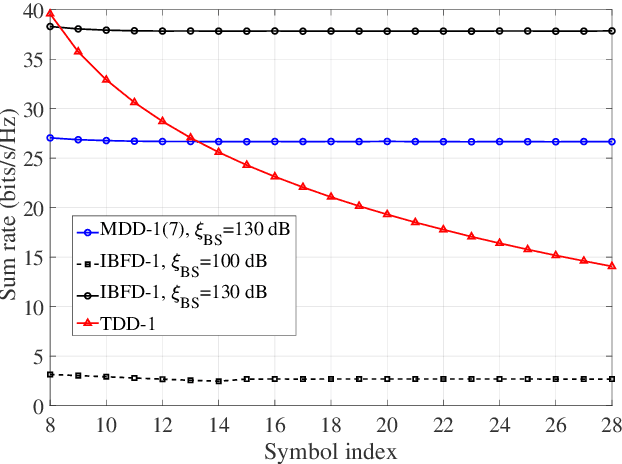

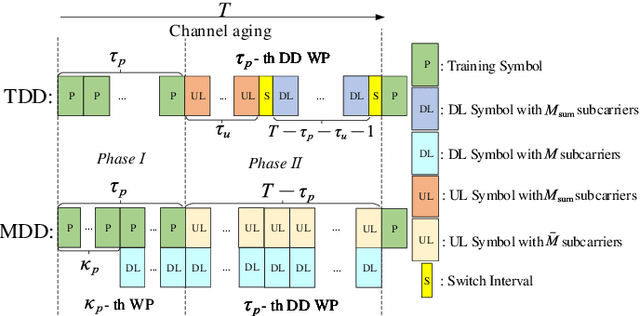

The separation of training and data transmission, together with frequent uplink/downlink (UL/DL) switching, makes time-division duplex (TDD)-based massive multiple-input multiple-output (mMIMO) systems less competent in fast time-varying scenarios because of the resulting severe channel aging. To this end, a multicarrier-division duplex (MDD) mMIMO scheme with two types of well-designed frame structures is introduced for combating channel aging when communicating over fast time-varying channels. For comparison with TDD, the corresponding frame structures related to 3GPP standards and their variant forms are presented. The MDD-specific general Wiener predictor and decision-directed Wiener predictor are introduced to predict the channel state information, respectively, in the time domain based on UL pilots and in the frequency domain based on the detected UL data, considering the impact of residual self-interference (SI). Moreover, by applying zero-forcing precoding and maximum ratio combining, closed-form approximations for the lower-bounded rate achieved by TDD and MDD systems over time-varying channels are derived. Our main conclusion from this study is that MDD, which has the capability of full duplex but places lower demands on SI cancellation than in-band full-duplex (IBFD), outperforms both conventional TDD and IBFD in combating channel aging.

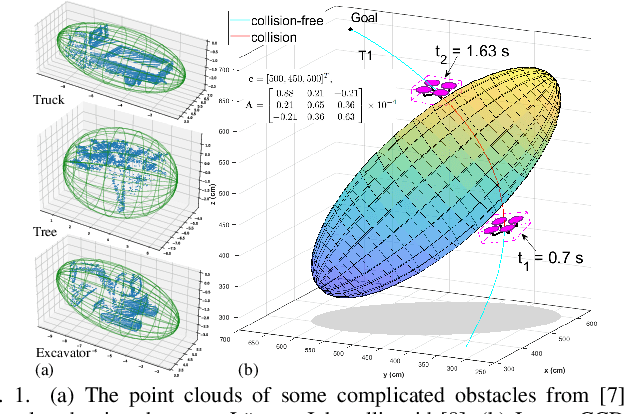

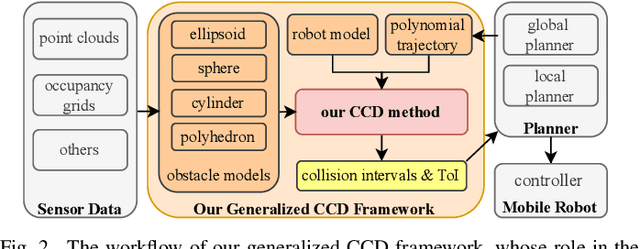

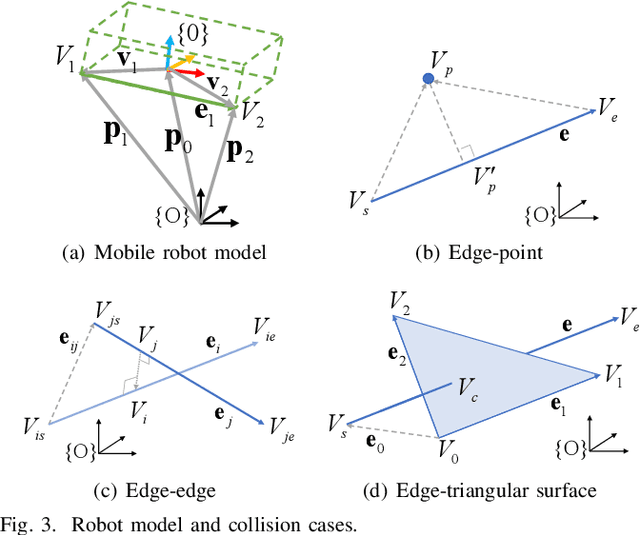

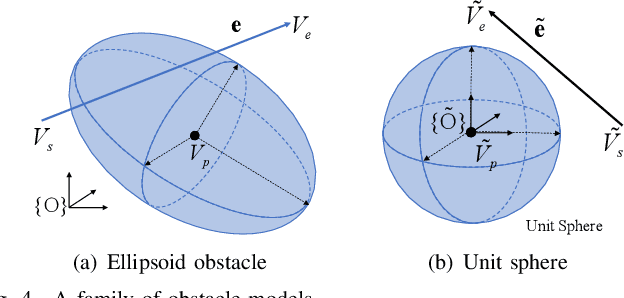

A Generalized Continuous Collision Detection Framework of Polynomial Trajectory for Mobile Robots in Cluttered Environments

Jun 27, 2022

In this paper, we introduce a generalized continuous collision detection (CCD) framework for mobile robots moving along polynomial trajectories in cluttered environments that include various static obstacle models. Specifically, we find that the collision conditions between robots and obstacles can be transformed into a set of polynomial inequalities, whose roots can be efficiently solved by the proposed solver. In addition, we test different types of mobile robots with various kinematic and dynamic constraints in our generalized CCD framework and validate that it allows provable collision checking and can compute the exact time of impact. Furthermore, we combine our architecture with the path planner in the navigation system. Benefiting from our CCD method, the mobile robot is able to work safely in some challenging scenarios.
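
As a rough illustration of how a collision condition becomes a polynomial inequality, the sketch below treats the simplest case: a point robot following a 2D polynomial trajectory past one circular obstacle. The function name `first_time_of_impact`, the point-robot model, and the use of NumPy's generic root finder are assumptions made for this example; they are not the solver proposed in the paper.

```python
import numpy as np
from numpy.polynomial import Polynomial


def first_time_of_impact(px, py, center, radius, t_end):
    """Earliest t in [0, t_end] at which a point robot touches a disc.

    px, py: coefficients of x(t) and y(t), lowest degree first.
    center, radius: the circular obstacle (hypothetical obstacle model).
    Returns None if no contact occurs in the interval.
    """
    x = Polynomial(px) - center[0]
    y = Polynomial(py) - center[1]
    # Collision condition: g(t) = |p(t) - c|^2 - r^2 <= 0
    g = x * x + y * y - radius ** 2
    if g(0.0) <= 0.0:
        return 0.0  # already in contact at the start
    roots = g.roots()
    # Keep (numerically) real roots inside the time interval
    real = roots[np.abs(roots.imag) < 1e-9].real
    hits = real[(real >= 0.0) & (real <= t_end)]
    return float(hits.min()) if hits.size else None


# x(t) = t, y(t) = 0, obstacle of radius 1 centred at (5, 0):
# g(t) = (t - 5)^2 - 1, whose roots are t = 4 and t = 6.
toi = first_time_of_impact([0.0, 1.0], [0.0], (5.0, 0.0), 1.0, 10.0)
```

Because the trajectory starts outside the obstacle, the smallest real root of g in the interval is the first contact time. Robots with non-trivial shapes and other obstacle models would lead to different polynomials.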

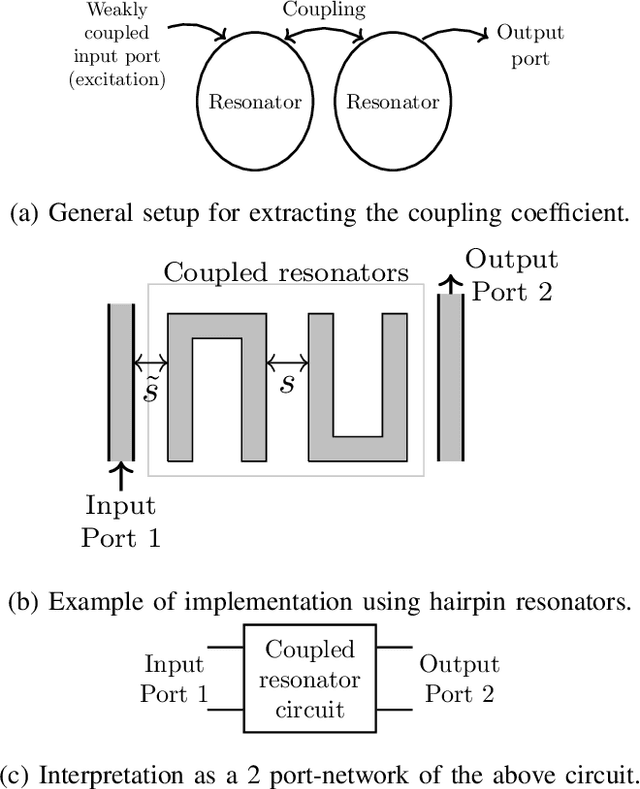

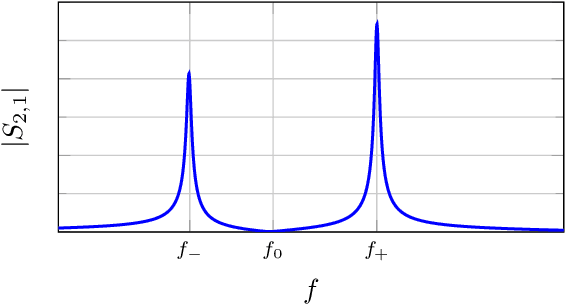

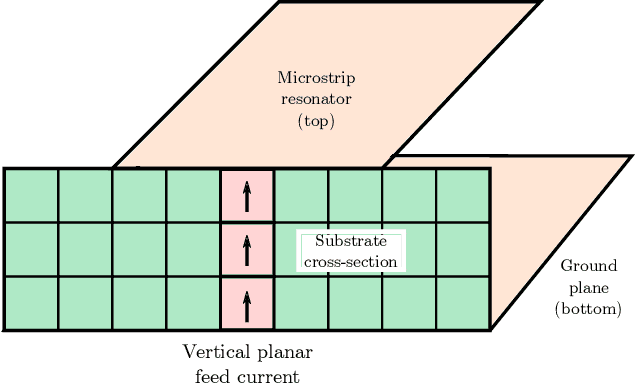

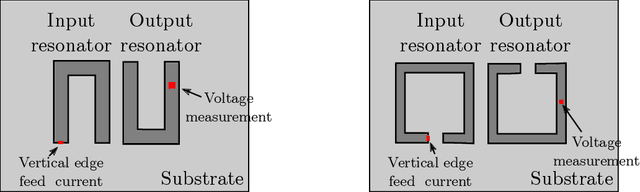

A Time-domain Approach to the Design of Coupled-Resonator Microstrip Filters

Jun 25, 2021

Coupled-resonator microstrip filters are among the most versatile filter topologies. A known design approach uses full-wave electromagnetic simulations to determine the coupling coefficient between resonators as a function of their relative position. This can be done with time-domain simulations, using a fast Fourier transform (FFT) to extract the couplings from the S parameters obtained from time-domain signals. However, this approach performs poorly in terms of resolution, especially for weak couplings, leading to unreasonably long simulation times. To overcome this, we introduce a technique to obtain the couplings directly from time signals, without moving to the frequency domain. This procedure works for strong and weak couplings, with much shorter simulation times and a reduced simulation domain compared with the FFT approach. This technique is used to design coupled-resonator filters efficiently from time-domain simulations. We implement this procedure in the finite-difference time-domain framework with an open-source solver and discuss our implementation. We show that its results are very similar to previously published ones obtained from frequency-domain simulations, even in the case of very weak couplings. Finally, we design and measure a filter to show the good performance of the proposed approach.

Defending Substitution-Based Profile Pollution Attacks on Sequential Recommenders

Jul 19, 2022

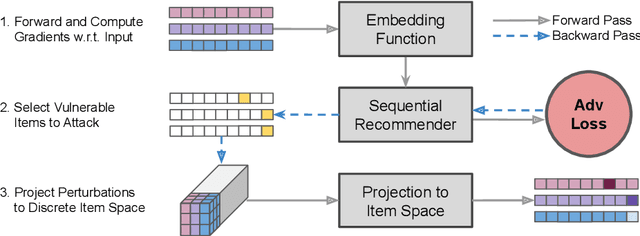

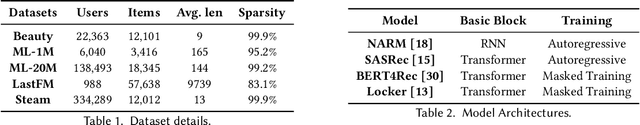

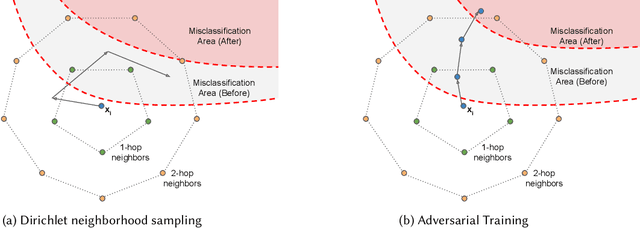

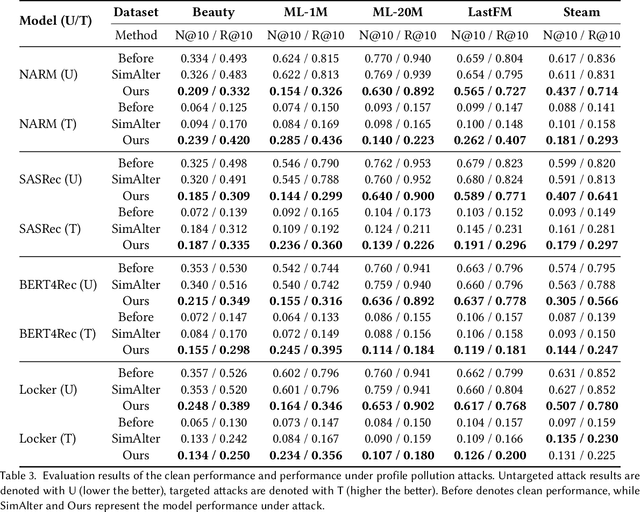

While sequential recommender systems achieve significant improvements in capturing user dynamics, we argue that sequential recommenders are vulnerable to substitution-based profile pollution attacks. To demonstrate this hypothesis, we propose a substitution-based adversarial attack algorithm, which modifies the input sequence by selecting certain vulnerable elements and substituting them with adversarial items. In both untargeted and targeted attack scenarios, we observe significant performance deterioration using the proposed profile pollution algorithm. Motivated by such observations, we design an efficient adversarial defense method called Dirichlet neighborhood sampling. Specifically, we sample item embeddings from a convex hull constructed by multi-hop neighbors to replace the original items in input sequences. During sampling, a Dirichlet distribution is used to approximate the probability distribution in the neighborhood such that the recommender learns to combat local perturbations. Additionally, we design an adversarial training method tailored for sequential recommender systems. In particular, we represent selected items with one-hot encodings and perform gradient ascent on the encodings to search for the worst-case linear combination of item embeddings in training. As such, the embedding function learns robust item representations and the trained recommender is resistant to test-time adversarial examples. Extensive experiments show the effectiveness of both our attack and defense methods, which consistently outperform baselines by a significant margin across model architectures and datasets.
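
The sampling step can be pictured with a small sketch: draw Dirichlet weights and use them to form a convex combination of an item's embedding and its neighbors' embeddings. Everything specific here is an assumption for illustration, including the function names, the inclusion of the original item in the hull, and the concentration values `alpha_self` and `alpha_neighbor`. The abstract does not give these details.

```python
import random


def sample_dirichlet(alphas, rng):
    """Draw one Dirichlet sample by normalising independent Gamma draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alphas]
    total = sum(draws)
    return [d / total for d in draws]


def sample_from_neighborhood(item_vec, neighbor_vecs,
                             alpha_self=1.0, alpha_neighbor=0.5, rng=None):
    """Return a random point in the convex hull of an item and its neighbors.

    item_vec: embedding of the original item (list of floats).
    neighbor_vecs: embeddings of its neighbors (hypothetical inputs).
    """
    rng = rng or random.Random()
    points = [item_vec] + list(neighbor_vecs)
    alphas = [alpha_self] + [alpha_neighbor] * len(neighbor_vecs)
    weights = sample_dirichlet(alphas, rng)  # non-negative, sums to 1
    dim = len(item_vec)
    return [sum(w * p[j] for w, p in zip(weights, points)) for j in range(dim)]


rng = random.Random(0)
perturbed = sample_from_neighborhood([0.0, 0.0], [[1.0, 0.0], [0.0, 1.0]], rng=rng)
```

Because the weights are non-negative and sum to one, the sampled embedding always lies inside the convex hull of the chosen points. In this sketch, a larger `alpha_self` keeps samples closer on average to the original item.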

A Survey on Collaborative DNN Inference for Edge Intelligence

Jul 16, 2022

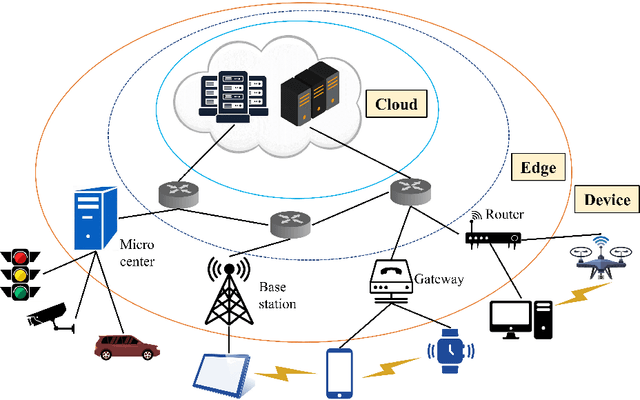

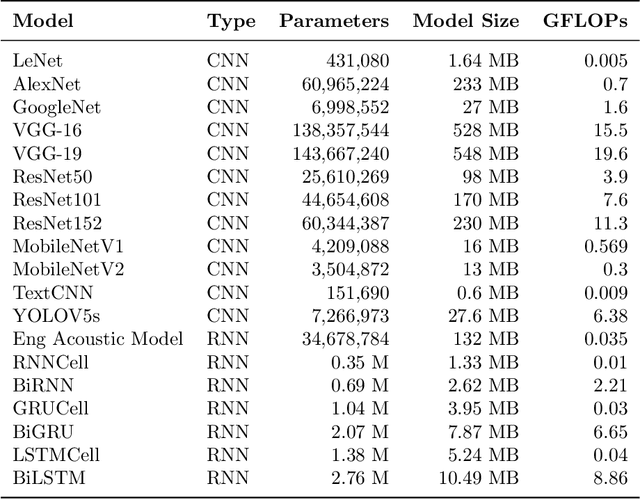

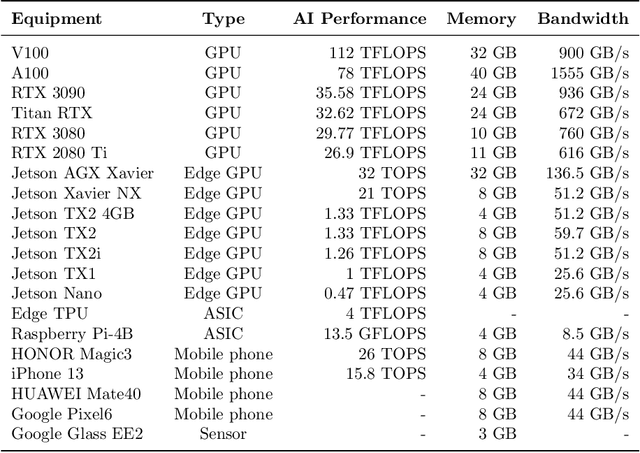

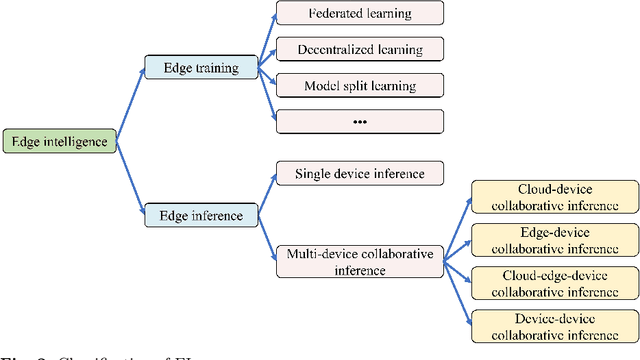

With the vigorous development of artificial intelligence (AI), intelligent applications based on deep neural networks (DNNs) are changing people's lifestyles and production efficiency. However, the huge amount of computation and data generated at the network edge has become the major bottleneck, and the traditional cloud-based computing mode is unable to meet the requirements of real-time processing tasks. To solve these problems, edge intelligence (EI), which embeds AI model training and inference capabilities into the network edge, has become a cutting-edge direction in the field of AI. Furthermore, collaborative DNN inference among the cloud, edge, and end devices provides a promising way to boost EI. Nevertheless, EI-oriented collaborative DNN inference is still at an early stage and lacks a systematic classification and discussion of existing research efforts. Thus motivated, we have made a comprehensive investigation of recent studies on EI-oriented collaborative DNN inference. In this paper, we first review the background and motivation of EI. Then, we classify four typical collaborative DNN inference paradigms for EI and analyze their characteristics and key technologies. Finally, we summarize the current challenges of collaborative DNN inference, discuss future development trends, and outline future research directions.

CSRNet: Cascaded Selective Resolution Network for Real-time Semantic Segmentation

Jun 08, 2021

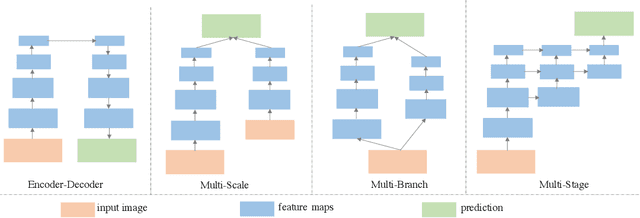

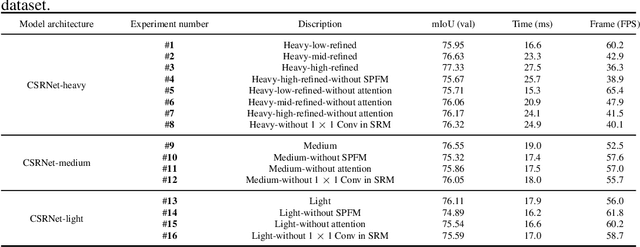

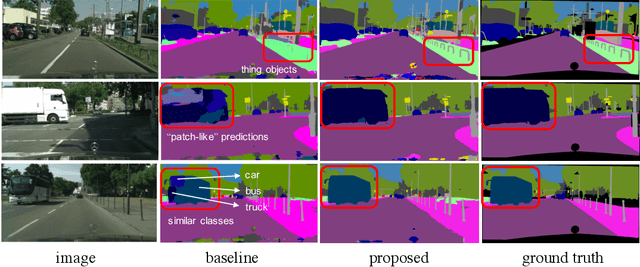

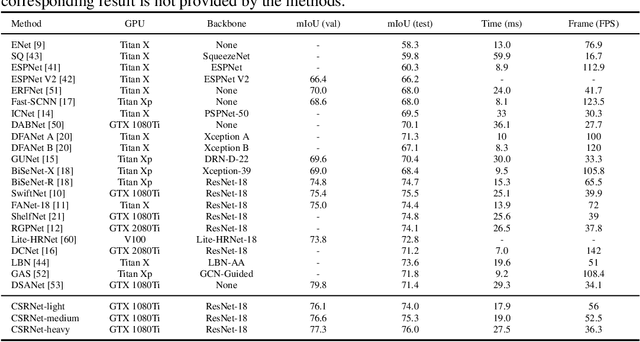

Real-time semantic segmentation has received considerable attention due to growing demands in many practical applications, such as autonomous vehicles and robotics. Existing real-time segmentation approaches often utilize feature fusion to improve segmentation accuracy. However, they fail to fully consider the feature information at different resolutions, and the receptive fields of the networks are relatively limited, which compromises performance. To tackle this problem, we propose a light Cascaded Selective Resolution Network (CSRNet) to improve the performance of real-time segmentation through multiple context information embedding and enhanced feature aggregation. The proposed network builds a three-stage segmentation system, which integrates feature information from low resolution to high resolution and refines features progressively. CSRNet contains two critical modules: the Shorted Pyramid Fusion Module (SPFM) and the Selective Resolution Module (SRM). The SPFM is a computationally efficient module that incorporates global context information and significantly enlarges the receptive field at each stage. The SRM is designed to fuse multi-resolution feature maps with various receptive fields; it assigns soft channel attentions across the feature maps and helps to remedy the problem caused by multi-scale objects. Comprehensive experiments on two well-known datasets demonstrate that the proposed CSRNet effectively improves the performance of real-time segmentation.

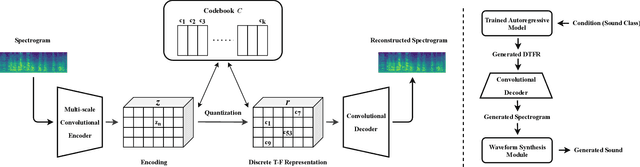

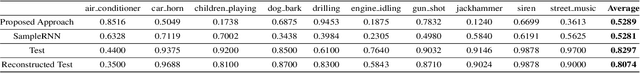

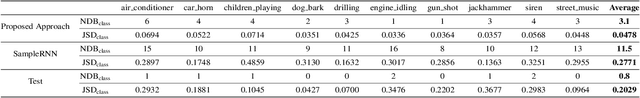

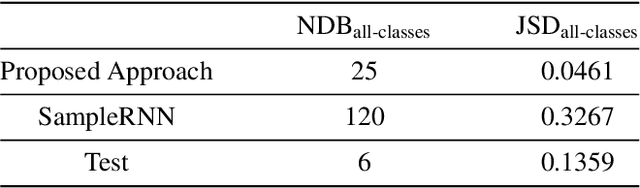

Conditional Sound Generation Using Neural Discrete Time-Frequency Representation Learning

Jul 21, 2021

Deep generative models have recently achieved impressive performance in speech synthesis and music generation. However, compared to the generation of those domain-specific sounds, the generation of general sounds (such as car horn, dog barking, and gun shot) has received less attention, despite their wide potential applications. In our previous work, sounds are generated in the time domain using SampleRNN. However, it is difficult to capture long-range dependencies within sound recordings using this method. In this work, we propose to generate sounds conditioned on sound classes via neural discrete time-frequency representation learning. This offers an advantage in modelling long-range dependencies and retaining local fine-grained structure within a sound clip. We evaluate our proposed approach on the UrbanSound8K dataset, as compared to a SampleRNN baseline, with the performance metrics measuring the quality and diversity of the generated sound samples. Experimental results show that our proposed method offers significantly better performance in diversity and comparable performance in quality, as compared to the baseline method.

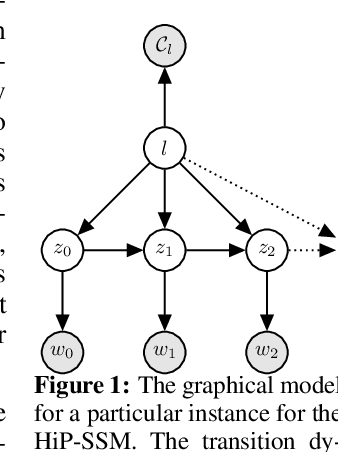

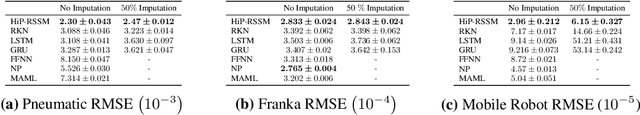

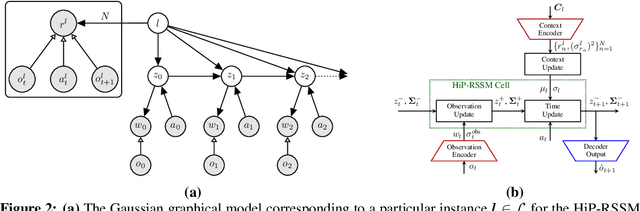

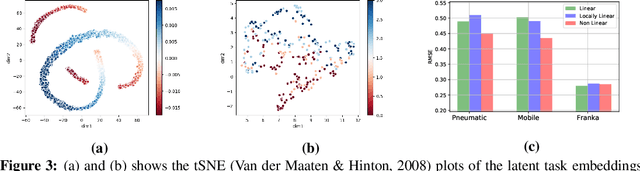

Hidden Parameter Recurrent State Space Models For Changing Dynamics Scenarios

Jun 29, 2022

Recurrent state-space models (RSSMs) are highly expressive models for learning patterns in time series data and for system identification. However, these models assume that the dynamics are fixed and unchanging, which is rarely the case in real-world scenarios. Many control applications exhibit tasks with similar but not identical dynamics, which can be modeled as a latent variable. We introduce Hidden Parameter Recurrent State Space Models (HiP-RSSMs), a framework that parametrizes a family of related dynamical systems with a low-dimensional set of latent factors. We present a simple and effective way of learning and performing inference over this Gaussian graphical model that avoids approximations like variational inference. We show that HiP-RSSMs outperform RSSMs and competing multi-task models on several challenging robotic benchmarks, both on real-world systems and in simulations.

Time Matters: Time-Aware LSTMs for Predictive Business Process Monitoring

Oct 16, 2020

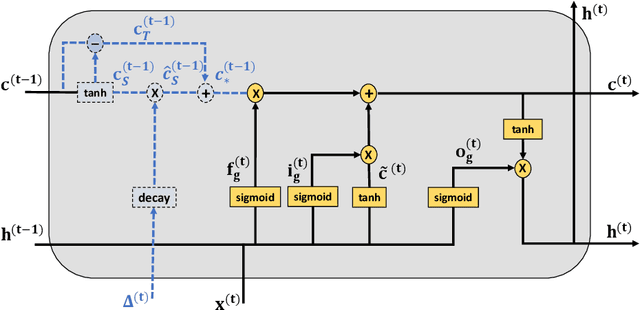

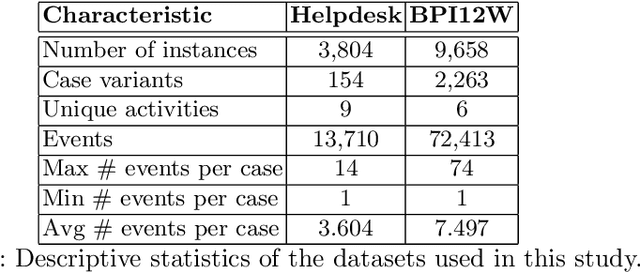

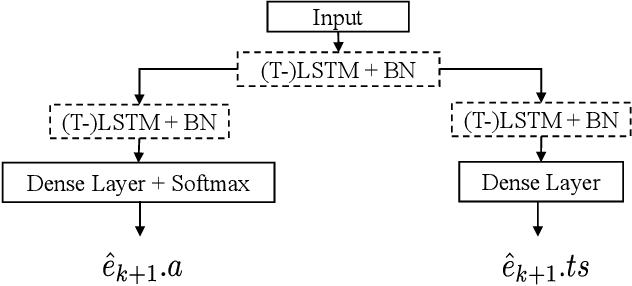

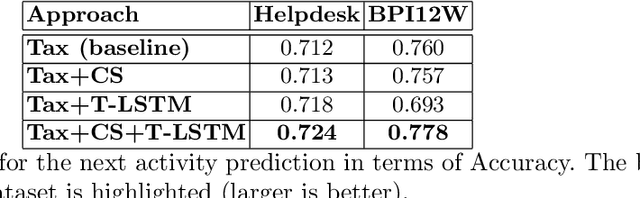

Predictive business process monitoring (PBPM) aims to predict future process behavior during ongoing process executions based on event log data. In particular, techniques for next-activity and timestamp prediction can help to improve the performance of operational business processes. Recently, researchers have proposed many PBPM solutions based on deep learning. Due to the sequential nature of event log data, a common choice is to apply recurrent neural networks with long short-term memory (LSTM) cells. We argue that the elapsed time between events is informative. However, current PBPM techniques mainly use 'vanilla' LSTM cells and hand-crafted time-related control flow features. To better model the time dependencies between events, we propose a new PBPM technique based on time-aware LSTM (T-LSTM) cells. T-LSTM cells inherently incorporate the elapsed time between consecutive events to adjust the cell memory. Furthermore, we introduce cost-sensitive learning to account for the common class imbalance in event logs. Our experiments on publicly available benchmark event logs indicate the effectiveness of the introduced techniques.
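
To make the idea of adjusting the cell memory by elapsed time concrete, the sketch below follows one commonly cited T-LSTM formulation: the previous cell state is split into a short-term and a long-term part, and only the short-term part is discounted by a decay function of the elapsed time. The decay `1 / log(e + dt)` and the helper names are assumptions for illustration. In a real T-LSTM cell the short-term part is produced by a learned layer, whereas here it is simply passed in.

```python
import math


def time_decay(dt):
    """Monotonically decreasing discount for an elapsed time dt >= 0."""
    return 1.0 / math.log(math.e + dt)


def adjust_memory(c_prev, c_short, dt):
    """Discount the short-term part of the previous cell memory.

    c_prev: previous cell state (list of floats).
    c_short: its short-term component (learned in a real cell; given here).
    dt: elapsed time between the two consecutive events.
    """
    g = time_decay(dt)
    # long-term part (c - s) is kept, short-term part s is scaled by g
    return [(c - s) + s * g for c, s in zip(c_prev, c_short)]


c_prev = [0.8, -0.4]
c_short = [0.3, -0.1]
same_time = adjust_memory(c_prev, c_short, dt=0.0)     # no decay: g = 1
long_gap = adjust_memory(c_prev, c_short, dt=3600.0)   # short-term part shrinks
```

With no elapsed time the memory is unchanged, and as the gap grows the adjusted memory approaches the long-term component alone.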

A Parallel Novelty Search Metaheuristic Applied to a Wildfire Prediction System

Jul 24, 2022

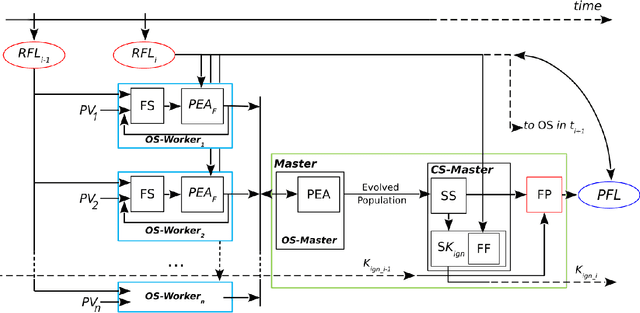

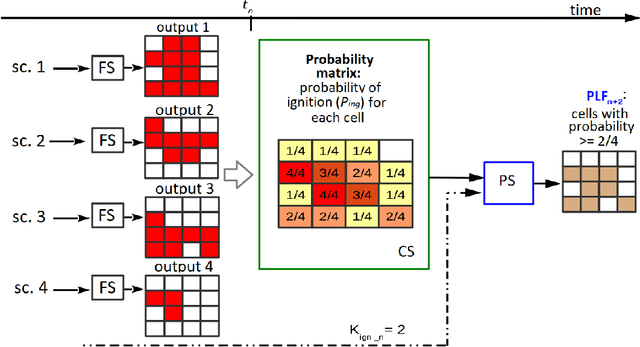

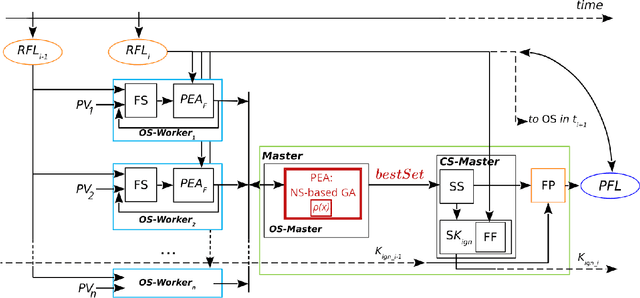

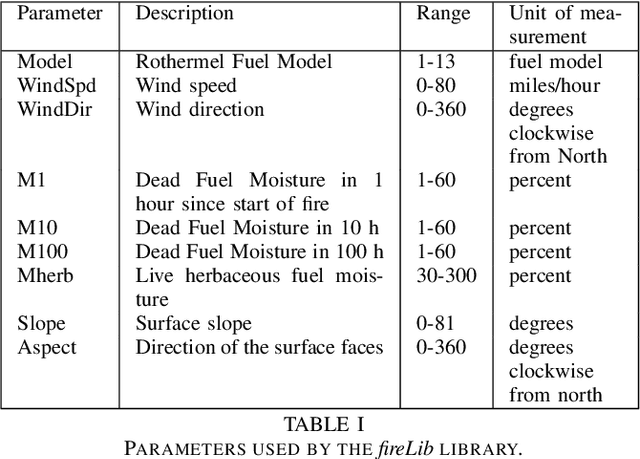

Wildfires are a highly prevalent multi-causal environmental phenomenon. The impact of this phenomenon includes human losses, environmental damage and high economic costs. To mitigate these effects, several computer simulation systems have been developed to predict fire behavior based on a set of input parameters, also called a scenario (wind speed and direction; temperature; etc.). However, the results of a simulation usually have a high degree of error due to uncertainty in the values of some variables, either because they are not known or because their measurement may be imprecise, erroneous, or impossible to perform in real time. Previous works have proposed combining multiple results in order to reduce this uncertainty. State-of-the-art methods are based on parallel optimization strategies that use a fitness function to guide the search among all possible scenarios. Although these methods have shown improvements in the quality of predictions, they have some limitations related to the algorithms used for the selection of scenarios. To overcome these limitations, in this work we propose to apply the Novelty Search paradigm, which replaces the objective function with a measure of the novelty of the solutions found. This allows the search to continuously generate solutions with behaviors that differ from one another. The approach avoids local optima and may be able to find useful solutions that would be difficult or impossible to find with other algorithms. As with existing methods, this proposal may also be adapted to other propagation models (floods, avalanches or landslides).
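
A minimal sketch of a novelty measure is shown below, assuming the common definition of novelty as the mean distance to the k nearest neighbors in a behavior space. The behavior descriptors, the value of `k`, and the function names are hypothetical. The abstract does not specify how behaviors are represented or compared in the wildfire system.

```python
import math


def novelty(behavior, others, k=3):
    """Mean Euclidean distance from `behavior` to its k nearest neighbors."""
    dists = sorted(math.dist(behavior, o) for o in others)
    nearest = dists[:k]
    return sum(nearest) / len(nearest) if nearest else 0.0


def select_most_novel(population, archive, n, k=3):
    """Rank candidates by novelty instead of fitness and keep the top n.

    population, archive: lists of behavior descriptors (tuples of floats).
    """
    scored = []
    for i, b in enumerate(population):
        # compare against the rest of the population plus past solutions
        others = population[:i] + population[i + 1:] + archive
        scored.append((novelty(b, others, k), b))
    scored.sort(key=lambda s: s[0], reverse=True)
    return [b for _, b in scored[:n]]


population = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (5.0, 5.0)]
chosen = select_most_novel(population, archive=[], n=1, k=2)
```

Here the isolated descriptor `(5.0, 5.0)` is selected because it is far from everything else, regardless of how good a prediction it yields. That is what lets the search keep exploring instead of converging on one region.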
