"Time": models, code, and papers

Towards Cell-Free Massive MIMO: A Measurement-Based Analysis

Jul 04, 2022

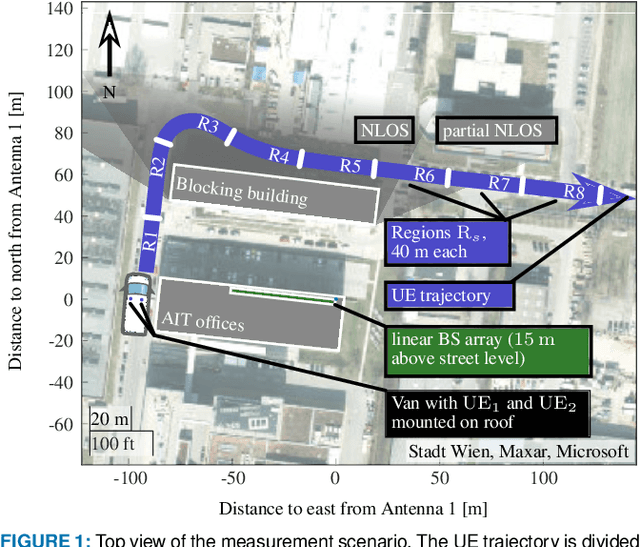

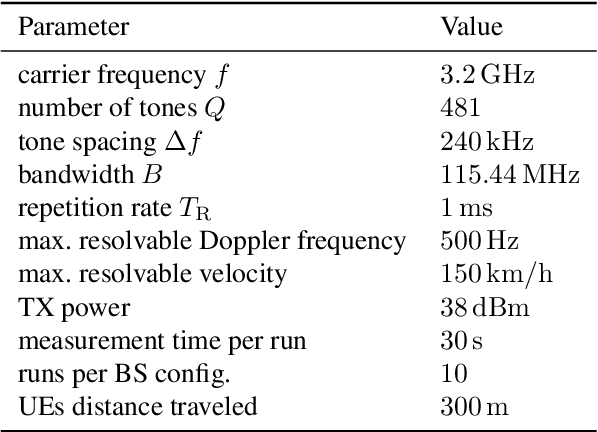

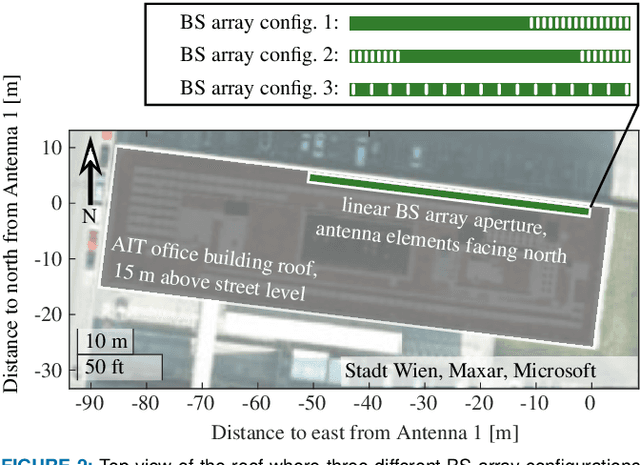

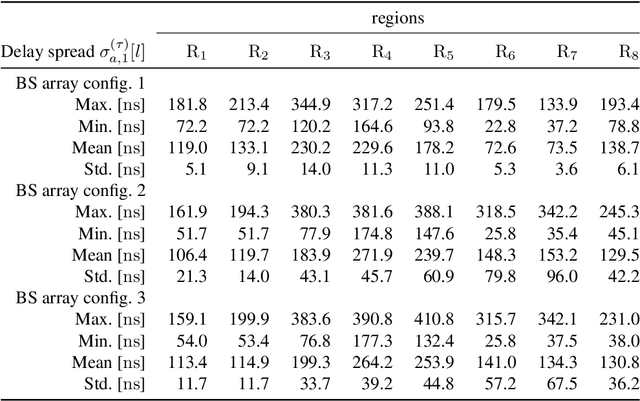

Cell-free widely distributed massive multiple-input multiple-output (MIMO) systems utilize radio units spread out over a large geographical area. The radio signal of a user equipment (UE) is coherently detected by a subset of radio units (RUs) in the vicinity of the UE and processed jointly at the nearest baseband processing unit (BPU). This architecture promises two orders of magnitude less transmit power, spatial focusing at the UE position for high reliability, and consistent throughput over the coverage area. All these properties have been investigated so far from a theoretical point of view. To the best of our knowledge, this work presents the first empirical radio wave propagation measurements in the form of time-variant channel transfer functions for a linear, widely distributed antenna array with 32 single antenna RUs spread out over a range of 46.5 m. The large aperture allows for valuable insights into the propagation characteristics of cell-free systems. Three different co-located and widely distributed RU configurations and their properties in an urban environment are analyzed in terms of time-variant delay-spread, Doppler spread, path loss and the correlation of the local scattering function over space. For the development of 6G cell-free massive MIMO transceiver algorithms, we analyze properties such as channel hardening, channel aging as well as the signal to interference and noise ratio (SINR). Our empirical evidence supports the promising claims for widely distributed cell-free systems.
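Channel hardening, one of the properties named in the abstract, can be illustrated with a small simulation. The sketch below is not the paper's measurement procedure: it assumes an i.i.d. Rayleigh-fading channel (not the measured urban channels) and uses the normalized variance of the channel gain as the hardening metric, which is a common textbook choice but is not stated in the abstract. The function name `hardening_metric` and its parameters are hypothetical.

```python
import random
import statistics


def hardening_metric(num_antennas, num_trials=5000, seed=0):
    """Estimate Var(g) / E[g]^2 for g = sum_m |h_m|^2.

    Assumption: h_m ~ CN(0, 1), i.i.d. across antennas (Rayleigh fading).
    Smaller values mean the channel gain fluctuates less, i.e. more hardening.
    """
    rng = random.Random(seed)
    sigma = 0.5 ** 0.5  # std of the real and imaginary parts of CN(0, 1)
    gains = []
    for _ in range(num_trials):
        gain = sum(
            rng.gauss(0.0, sigma) ** 2 + rng.gauss(0.0, sigma) ** 2
            for _ in range(num_antennas)
        )
        gains.append(gain)
    mean_gain = statistics.fmean(gains)
    return statistics.pvariance(gains) / mean_gain ** 2


if __name__ == "__main__":
    for m in (1, 8, 32):
        print(m, hardening_metric(m))
```

Under this i.i.d. assumption the metric equals 1/M in theory, so it shrinks as antennas are added. Measured channels with path-loss differences between widely spaced RUs need not follow that law, which is why an empirical analysis is informative.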

Gradual Drift Detection in Process Models Using Conformance Metrics

Jul 22, 2022

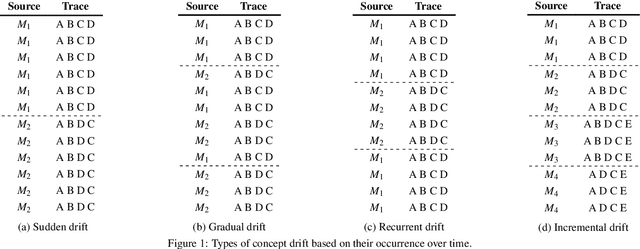

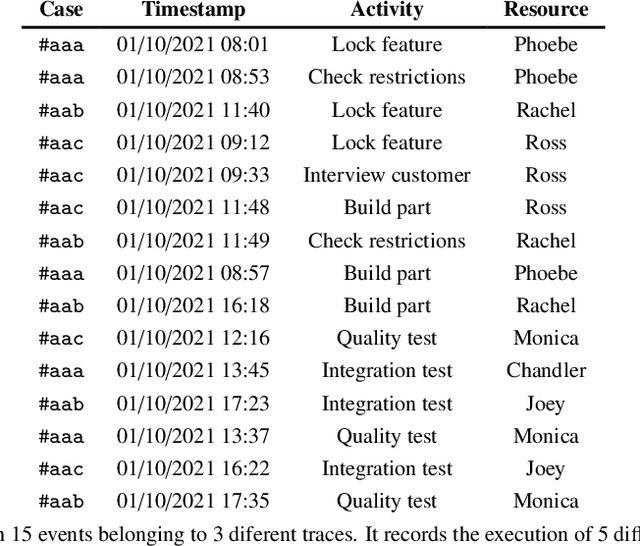

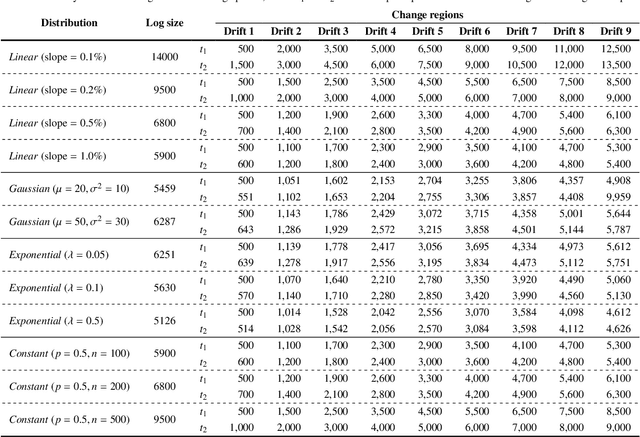

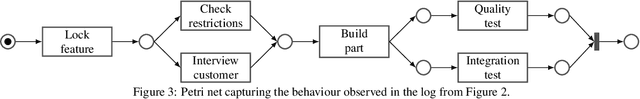

Changes, planned or unexpected, are common during the execution of real-life processes. Detecting these changes is a must for optimizing the performance of organizations running such processes. Most of the algorithms present in the state-of-the-art focus on the detection of sudden changes, leaving aside other types of changes. In this paper, we will focus on the automatic detection of gradual drifts, a special type of change, in which the cases of two models overlap during a period of time. The proposed algorithm relies on conformance checking metrics to carry out the automatic detection of the changes, performing also a fully automatic classification of these changes into sudden or gradual. The approach has been validated with a synthetic dataset consisting of 120 logs with different distributions of changes, getting better results in terms of detection and classification accuracy, delay and change region overlapping than the main state-of-the-art algorithms.

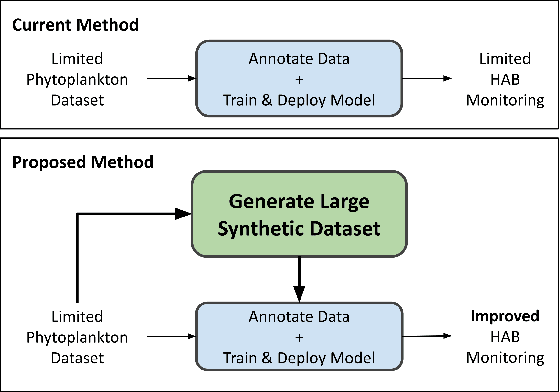

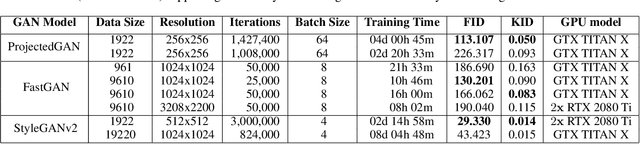

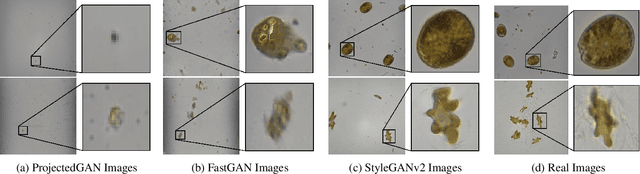

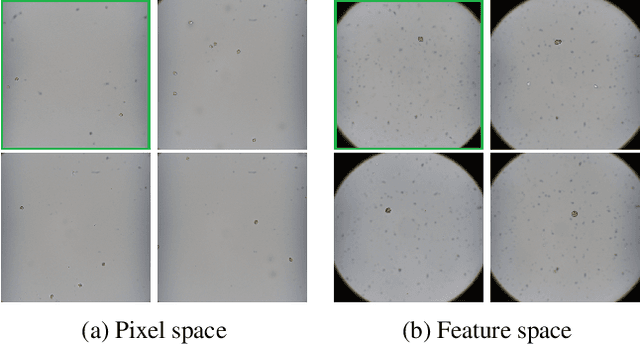

Towards Generating Large Synthetic Phytoplankton Datasets for Efficient Monitoring of Harmful Algal Blooms

Aug 03, 2022

Climate change is increasing the frequency and severity of harmful algal blooms (HABs), which cause significant fish deaths in aquaculture farms. This contributes to ocean pollution and greenhouse gas (GHG) emissions since dead fish are either dumped into the ocean or taken to landfills, which in turn negatively impacts the climate. Currently, the standard method to enumerate harmful algae and other phytoplankton is to manually observe and count them under a microscope. This is a time-consuming, tedious and error-prone process, resulting in compromised management decisions by farmers. Hence, automating this process for quick and accurate HAB monitoring is extremely helpful. However, this requires large and diverse datasets of phytoplankton images, and such datasets are hard to produce quickly. In this work, we explore the feasibility of generating novel high-resolution photorealistic synthetic phytoplankton images, containing multiple species in the same image, given a small dataset of real images. To this end, we employ Generative Adversarial Networks (GANs) to generate synthetic images. We evaluate three different GAN architectures: ProjectedGAN, FastGAN, and StyleGANv2 using standard image quality metrics. We empirically show the generation of high-fidelity synthetic phytoplankton images using a training dataset of only 961 real images. Thus, this work demonstrates the ability of GANs to create large synthetic datasets of phytoplankton from small training datasets, accomplishing a key step towards sustainable systematic monitoring of harmful algal blooms.

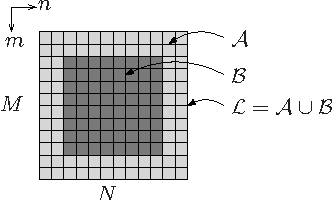

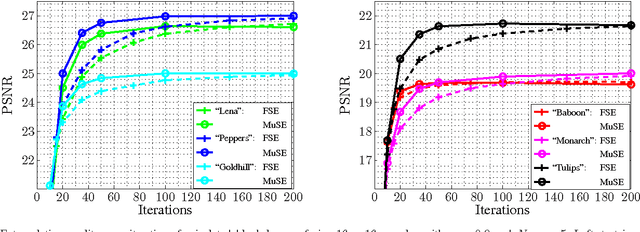

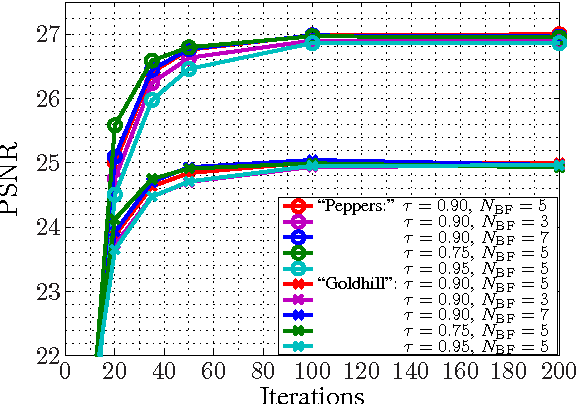

Multiple Selection Extrapolation for Improved Spatial Error Concealment

Jul 14, 2022

This contribution introduces a novel signal extrapolation algorithm and its application to image error concealment. The signal extrapolation is carried out by iteratively generating a model of the signal suffering from distortion. The model results from a weighted superposition of two-dimensional basis functions, where in every iteration step a set of these is selected and the approximation residual is projected onto the subspace they span. The algorithm is an improvement on Frequency Selective Extrapolation, which has proven to be an effective method for concealing lost or distorted image regions. Compared to that algorithm, the novel algorithm reduces the processing time by a factor of more than three while still preserving the very high extrapolation quality.

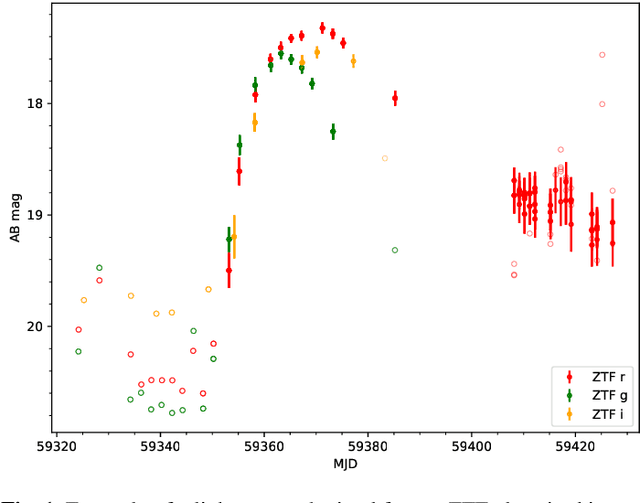

SNGuess: A method for the selection of young extragalactic transients

Aug 13, 2022

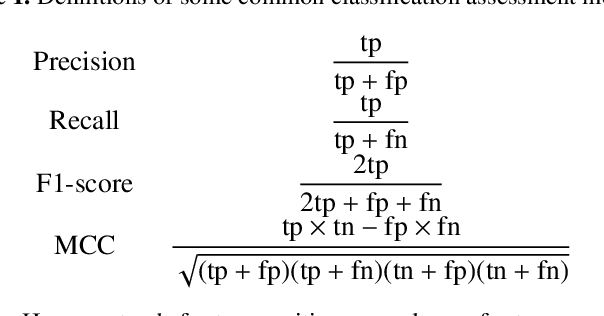

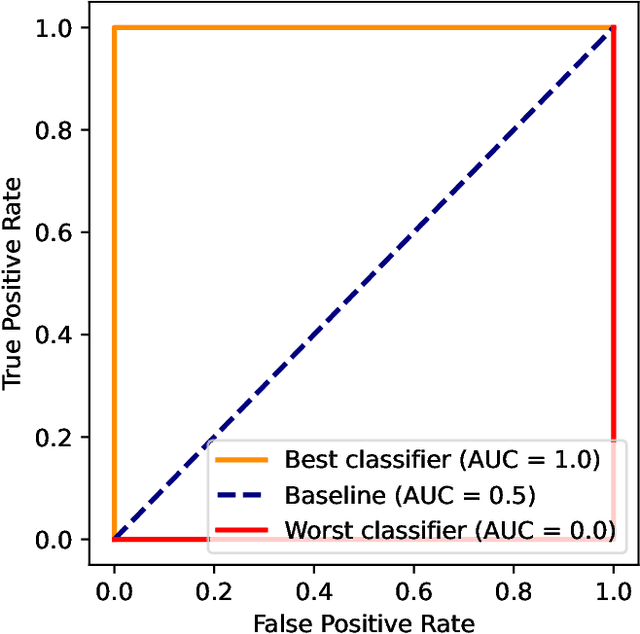

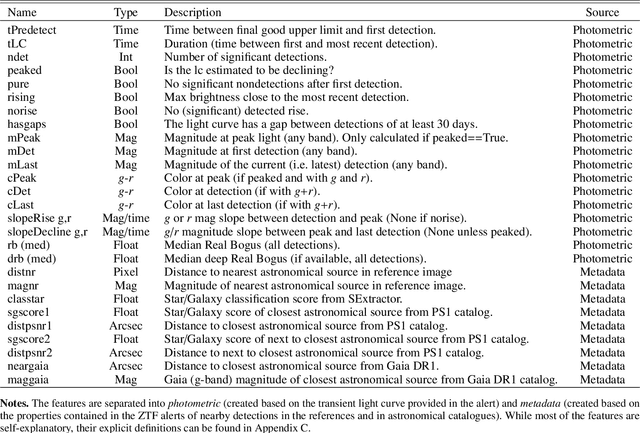

With a rapidly rising number of transients detected in astronomy, classification methods based on machine learning are increasingly being employed. Their goals are typically to obtain a definitive classification of transients, and for good performance they usually require the presence of a large set of observations. However, well-designed, targeted models can reach their classification goals with fewer computing resources. This paper presents SNGuess, a model designed to find young extragalactic nearby transients with high purity. SNGuess works with a set of features that can be efficiently calculated from astronomical alert data. Some of these features are static and associated with the alert metadata, while others must be calculated from the photometric observations contained in the alert. Most of the features are simple enough to be obtained or to be calculated already at the early stages in the lifetime of a transient after its detection. We calculate these features for a set of labeled public alert data obtained over a time span of 15 months from the Zwicky Transient Facility (ZTF). The core model of SNGuess consists of an ensemble of decision trees, which are trained via gradient boosting. Approximately 88% of the candidates suggested by SNGuess from a set of alerts from ZTF spanning from April 2020 to August 2021 were found to be true relevant supernovae (SNe). For alerts with bright detections, this number ranges between 92% and 98%. Since April 2020, transients identified by SNGuess as potential young SNe in the ZTF alert stream are being published to the Transient Name Server (TNS) under the AMPEL_ZTF_NEW group identifier. SNGuess scores for any transient observed by ZTF can be accessed via a web service. The source code of SNGuess is publicly available.

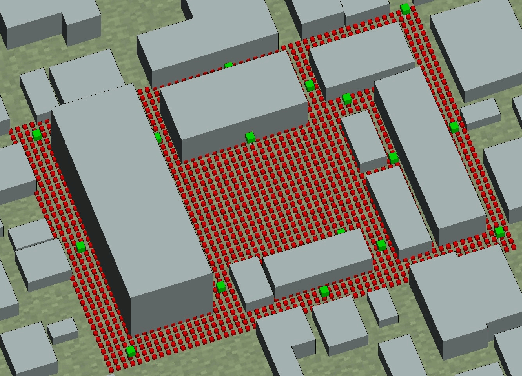

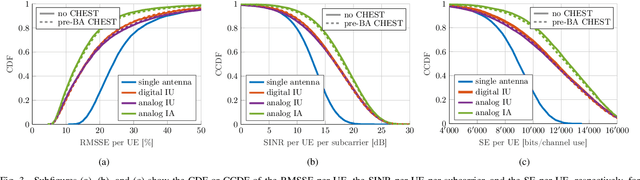

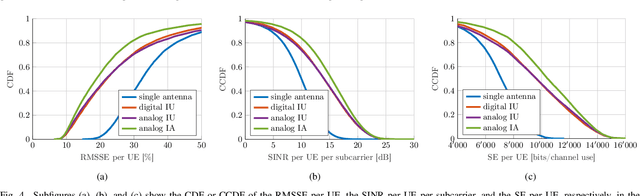

Beam Alignment for the Cell-Free mmWave Massive MU-MIMO Uplink

Jul 20, 2022

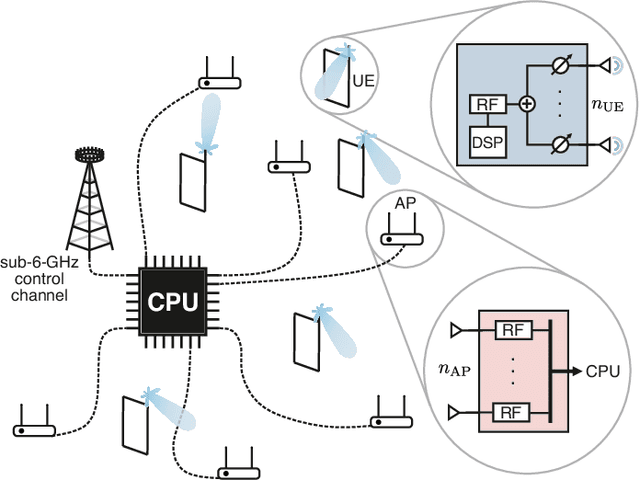

Millimeter-wave (mmWave) cell-free massive multi-user (MU) multiple-input multiple-output (MIMO) systems combine the large bandwidths available at mmWave frequencies with the improved coverage of cell-free systems. However, to combat the high path loss at mmWave frequencies, user equipments (UEs) must form beams in meaningful directions, i.e., to a nearby access point (AP). At the same time, multiple UEs should avoid transmitting to the same AP to reduce MU interference. We propose an interference-aware method for beam alignment (BA) in the cell-free mmWave massive MU-MIMO uplink. In the considered scenario, the APs perform full digital receive beamforming while the UEs perform analog transmit beamforming. We evaluate our method using realistic mmWave channels from a commercial ray-tracer, showing the superiority of the proposed method over omnidirectional transmission as well as over methods that do not take MU interference into account.

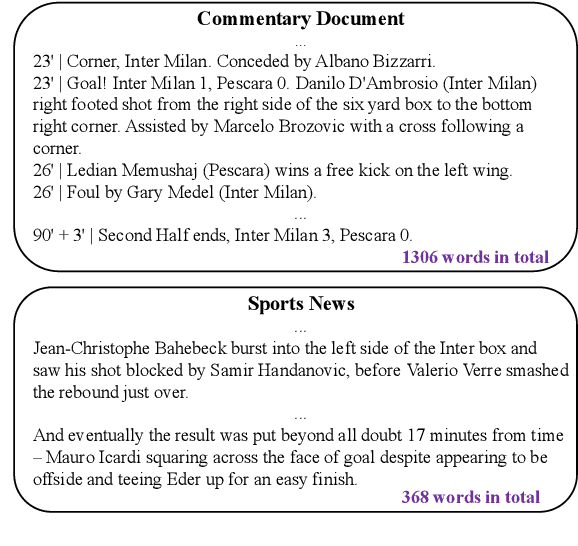

GOAL: Towards Benchmarking Few-Shot Sports Game Summarization

Jul 18, 2022

Sports game summarization aims to generate sports news based on real-time commentaries. The task has attracted wide research attention but is still under-explored, probably due to the lack of corresponding English datasets. Therefore, in this paper, we release GOAL, the first English sports game summarization dataset. Specifically, there are 103 commentary-news pairs in GOAL, where the average lengths of commentaries and news are 2724.9 and 476.3 words, respectively. Moreover, to support research in the semi-supervised setting, GOAL additionally provides 2,160 unlabeled commentary documents. Based on GOAL, we build and evaluate several baselines, including extractive and abstractive baselines. The experimental results show that the task remains challenging. We hope our work will promote research on sports game summarization. The dataset has been released at https://github.com/krystalan/goal.

Scaled-Time-Attention Robust Edge Network

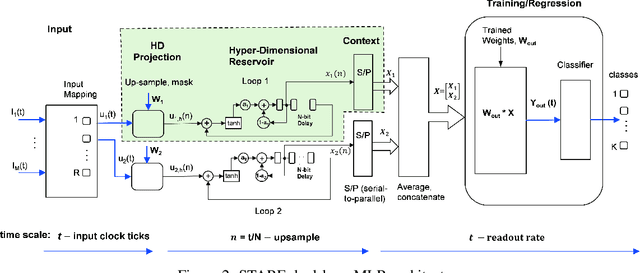

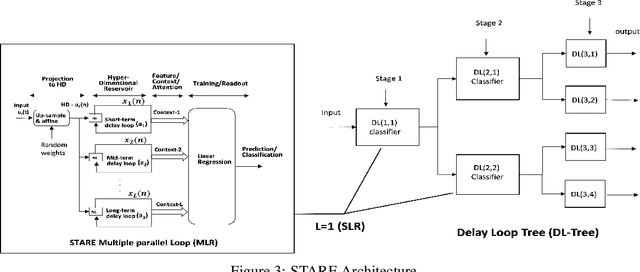

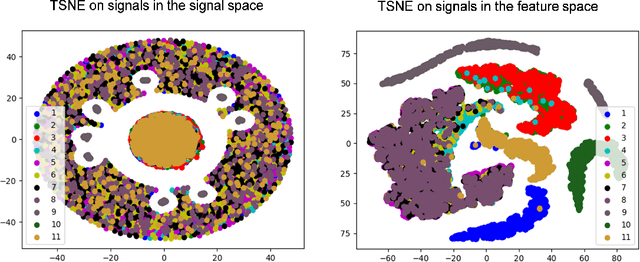

Jul 09, 2021

This paper describes a systematic approach towards building a new family of neural networks based on a delay-loop version of a reservoir neural network. The resulting architecture, called Scaled-Time-Attention Robust Edge (STARE) network, exploits hyperdimensional space and non-multiply-and-add computation to achieve a simpler architecture, which has shallow layers, is simple to train, and is better suited for Edge applications, such as Internet of Things (IoT), than traditional deep neural networks. STARE incorporates new AI concepts such as Attention and Context, and is best suited for temporal feature extraction and classification. We demonstrate that STARE is applicable to a variety of applications with improved performance and lower implementation complexity. In particular, we show a novel way of applying a dual-loop configuration to detection and identification of drone vs bird in a counter Unmanned Air Systems (UAS) detection application by exploiting both spatial (video frame) and temporal (trajectory) information. We also demonstrate that the STARE performance approaches that of a state-of-the-art deep neural network in classifying RF modulations, and outperforms Long Short-Term Memory (LSTM) in a special case of Mackey-Glass time series prediction. To demonstrate hardware efficiency, we designed and developed an FPGA implementation of the STARE algorithm that shows its low-power and high-throughput operation. In addition, we illustrate an efficient structure for integrating a massively parallel implementation of the STARE algorithm for ASIC implementation.
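For readers unfamiliar with the Mackey-Glass benchmark mentioned in the abstract, the following minimal sketch generates such a series from the delay differential equation dx/dt = beta * x(t - tau) / (1 + x(t - tau)^n) - gamma * x(t). The parameter values (tau = 17, beta = 0.2, gamma = 0.1, n = 10) are the commonly used benchmark settings and are an assumption here; the abstract does not say which "special case" the paper evaluates. The unit-step Euler integration and the function name `mackey_glass` are also illustrative choices.

```python
def mackey_glass(num_steps, tau=17, beta=0.2, gamma=0.1, n=10, x0=1.2):
    """Generate a Mackey-Glass series with a unit-step Euler scheme.

    Assumptions: constant initial history x0 and commonly used benchmark
    parameters; none of these values come from the STARE paper.
    """
    history = [x0] * (tau + 1)  # x(t - tau), ..., x(t)
    for _ in range(num_steps):
        delayed = history[-(tau + 1)]  # x(t - tau)
        current = history[-1]          # x(t)
        step = beta * delayed / (1.0 + delayed ** n) - gamma * current
        history.append(current + step)
    return history[tau + 1:]  # drop the constant initial history


series = mackey_glass(500)
```

A one-step-ahead prediction task can then be set up by pairing each window of past samples in `series` with the sample that follows it.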

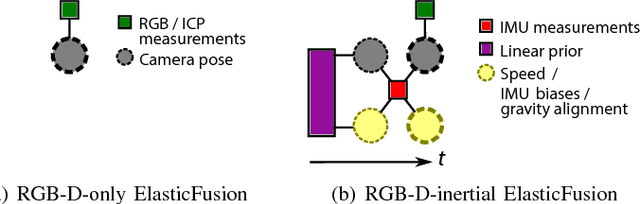

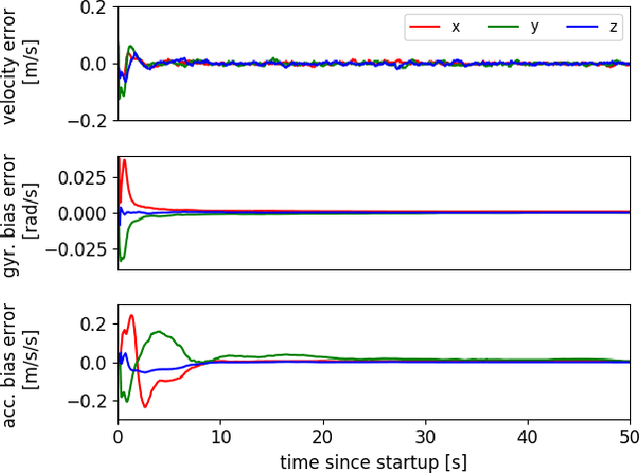

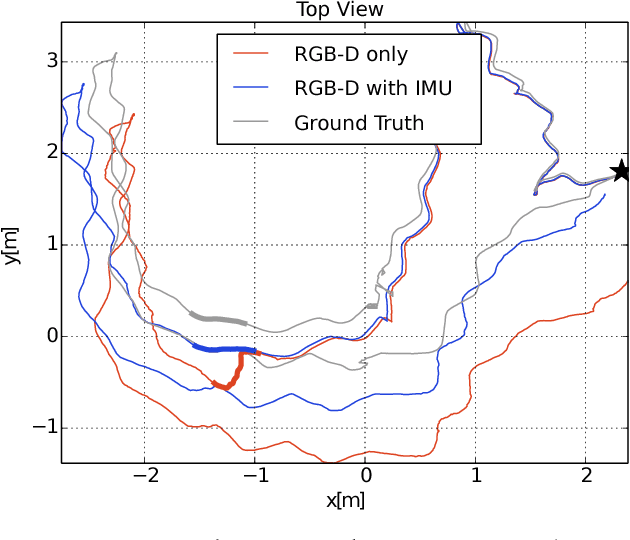

Dense RGB-D-Inertial SLAM with Map Deformations

Jul 22, 2022

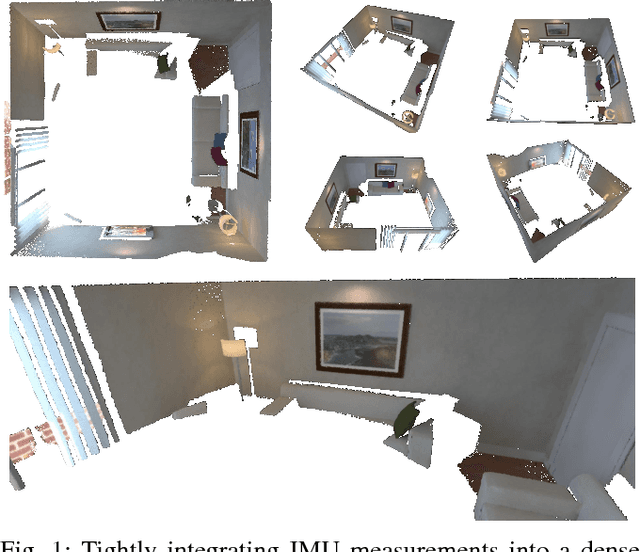

While dense visual SLAM methods are capable of estimating dense reconstructions of the environment, they suffer from a lack of robustness in their tracking step, especially when the optimisation is poorly initialised. Sparse visual SLAM systems have attained high levels of accuracy and robustness through the inclusion of inertial measurements in a tightly-coupled fusion. Inspired by this performance, we propose the first tightly-coupled dense RGB-D-inertial SLAM system. Our system has real-time capability while running on a GPU. It jointly optimises for the camera pose, velocity, IMU biases and gravity direction while building up a globally consistent, fully dense surfel-based 3D reconstruction of the environment. Through a series of experiments on both synthetic and real world datasets, we show that our dense visual-inertial SLAM system is more robust to fast motions and periods of low texture and low geometric variation than a related RGB-D-only SLAM system.

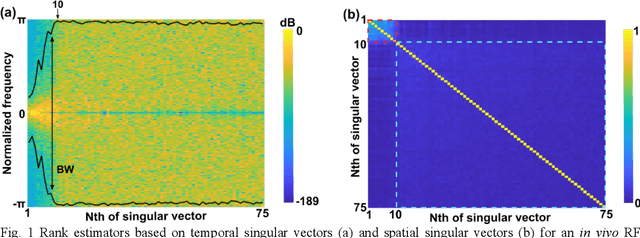

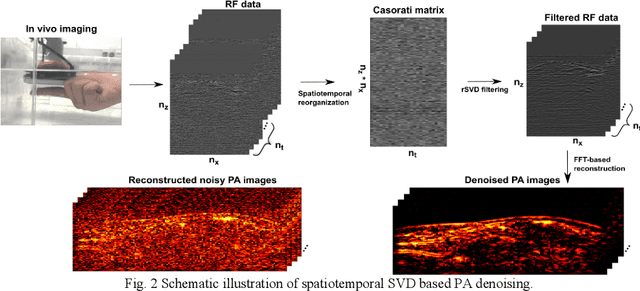

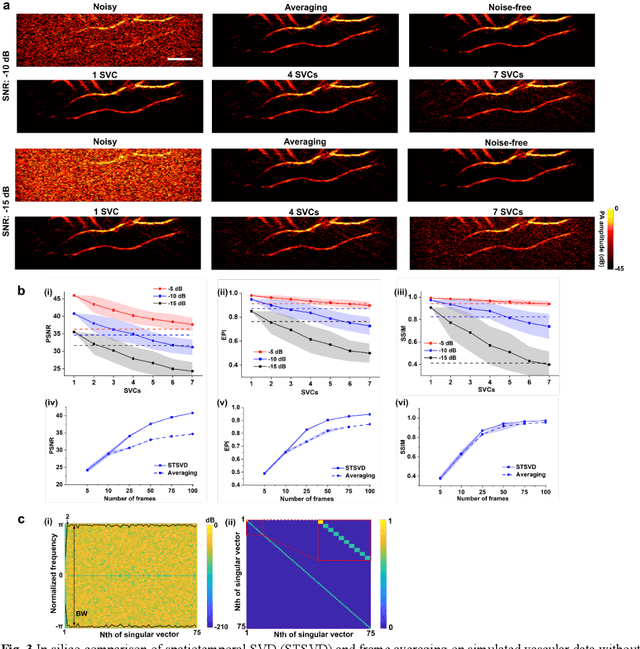

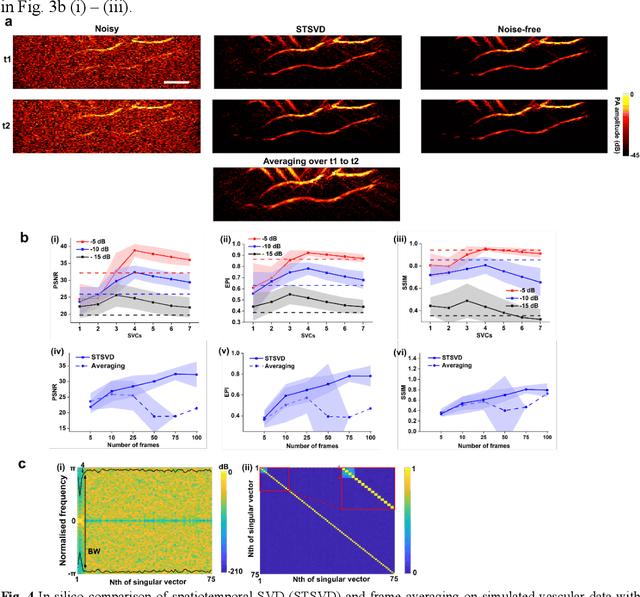

Spatiotemporal singular value decomposition for denoising in photoacoustic imaging with low-energy excitation light source

Jul 09, 2022

Photoacoustic (PA) imaging is an emerging hybrid imaging modality that combines rich optical spectroscopic contrast and high ultrasonic resolution and thus holds tremendous promise for a wide range of pre-clinical and clinical applications. Compact and affordable light sources such as light-emitting diodes (LEDs) and laser diodes (LDs) are promising alternatives to bulky and expensive solid-state laser systems that are commonly used as PA light sources. These could accelerate the clinical translation of PA technology. However, PA signals generated with these light sources are readily degraded by noise due to the low optical fluence, leading to decreased signal-to-noise ratio (SNR) in PA images. In this work, a spatiotemporal singular value decomposition (SVD) based PA denoising method was investigated for these light sources that usually have low fluence and high repetition rates. The proposed method leverages both spatial and temporal correlations between radiofrequency (RF) data frames. Validation was performed on simulations and in vivo PA data acquired from human fingers (2D) and forearm (3D) using an LED-based system. Spatiotemporal SVD greatly enhanced the PA signals of blood vessels corrupted by noise while preserving a high temporal resolution to slow motions, improving the SNR of in vivo PA images by 1.1, 0.7, and 1.9 times compared to single frame-based wavelet denoising, averaging across 200 frames, and single frame without denoising, respectively. The proposed method demonstrated a processing time of around 50 µs per frame with SVD acceleration and GPU. Thus, spatiotemporal SVD is well suited to PA imaging systems with low-energy excitation light sources for real-time in vivo applications.
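The general idea of spatiotemporal SVD denoising can be sketched as follows. This is a generic low-rank filter, not the authors' implementation: it assumes the frames are stacked into a space-by-time (Casorati) matrix and that the signal lives in the leading singular components, whereas the abstract does not say which components the paper retains or how the threshold is chosen. The function name `svd_denoise`, the `rank` parameter, and the synthetic test data are hypothetical.

```python
import numpy as np


def svd_denoise(frames, rank):
    """Low-rank spatiotemporal filter.

    frames: array of shape (num_frames, height, width).
    rank:   number of leading singular components to keep (an assumption;
            the paper's selection rule is not given in the abstract).
    """
    num_frames = frames.shape[0]
    # Casorati matrix: each column is one frame flattened over space.
    casorati = frames.reshape(num_frames, -1).T
    u, s, vh = np.linalg.svd(casorati, full_matrices=False)
    s[rank:] = 0.0  # discard the trailing (assumed noise-dominated) components
    filtered = (u * s) @ vh
    return filtered.T.reshape(frames.shape)


# Synthetic check: a static pattern repeated over 50 frames plus white noise.
rng = np.random.default_rng(0)
pattern = np.outer(np.sin(np.linspace(0.0, np.pi, 16)), np.ones(16))
clean = np.repeat(pattern[None], 50, axis=0)
noisy = clean + rng.normal(0.0, 1.0, clean.shape)
denoised = svd_denoise(noisy, rank=1)
```

Because the filter uses all frames jointly, it exploits both the spatial structure within a frame and the temporal correlation between frames, which single-frame denoisers cannot do.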
