"Time": models, code, and papers

GLD-Net: Improving Monaural Speech Enhancement by Learning Global and Local Dependency Features with GLD Block

Jun 30, 2022

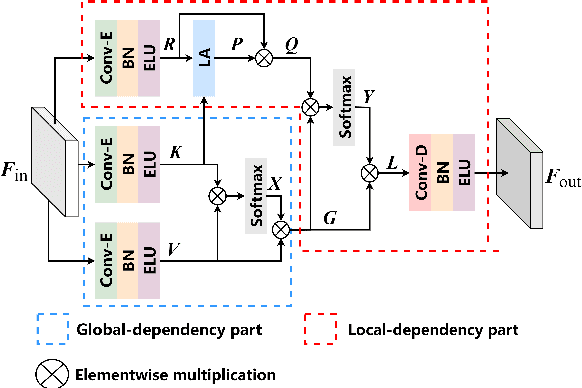

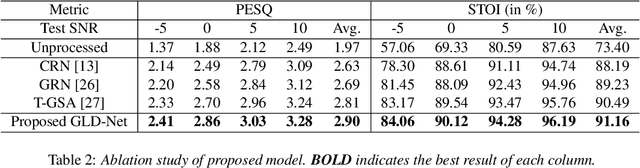

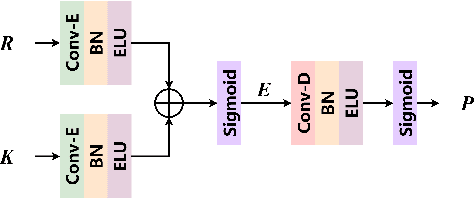

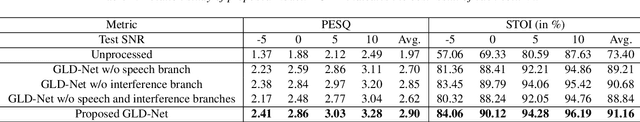

For monaural speech enhancement, contextual information is important for accurate speech estimation. However, commonly used convolutional neural networks (CNNs) are weak at capturing temporal context, since their building blocks process only one local neighborhood at a time. To address this problem, we draw on human auditory perception to introduce a two-stage trainable reasoning mechanism, referred to as the global-local dependency (GLD) block. GLD blocks capture long-term dependencies among time-frequency bins at both the global and local levels of the noisy spectrogram, which helps detect correlations among the speech part, the noise part, and the whole noisy input. In addition, we construct a monaural speech enhancement network called GLD-Net, which adopts an encoder-decoder architecture and consists of a speech object branch, an interference branch, and a global noisy branch. The speech features extracted at the global and local levels are efficiently reasoned over and aggregated in each of the branches. We compare the proposed GLD-Net with existing state-of-the-art methods on the WSJ0 and DEMAND datasets. The results show that GLD-Net outperforms these methods in terms of PESQ and STOI.

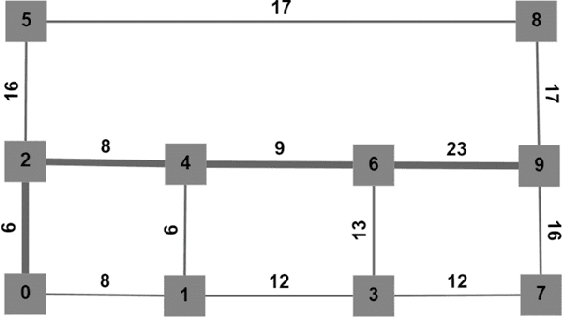

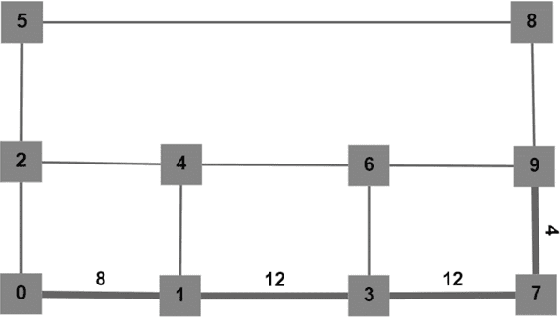

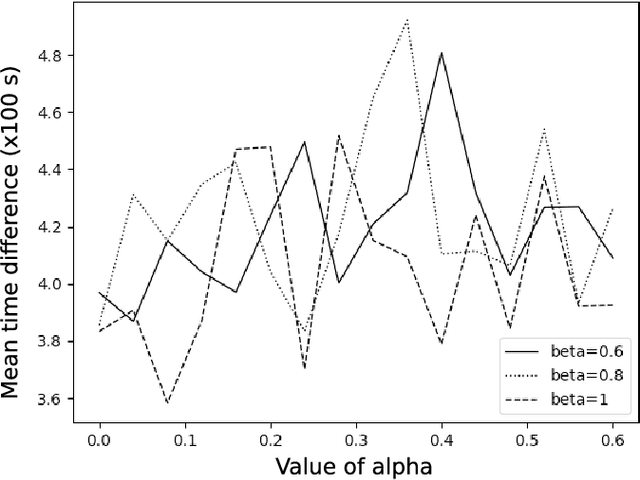

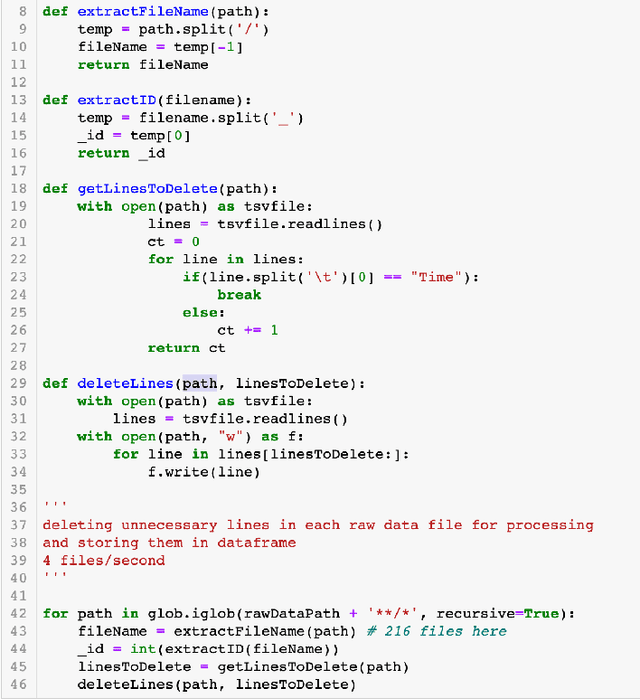

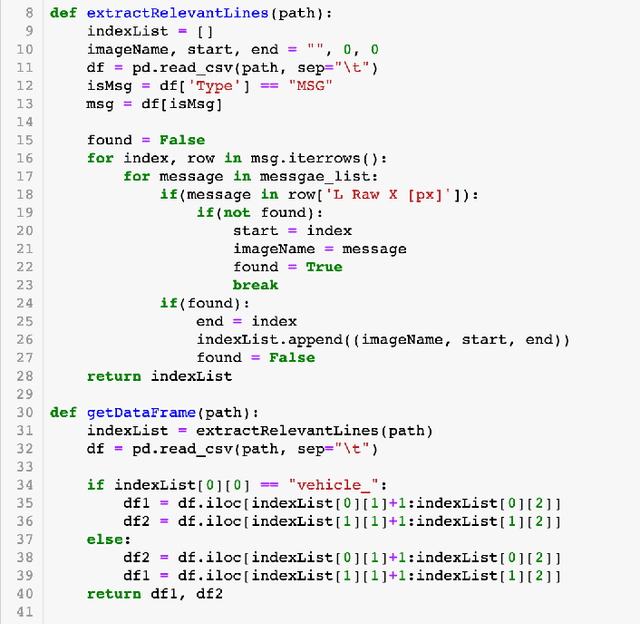

Vehicle Route Planning using Dynamically Weighted Dijkstra's Algorithm with Traffic Prediction

May 30, 2022

Traditional vehicle routing algorithms do not consider the changing nature of traffic. While implementations of Dijkstra's algorithm with varying weights exist, the weights are often changed only after the algorithm has been executed, which may not always result in the optimal route being chosen. Hence, this paper proposes a novel vehicle routing algorithm that improves upon Dijkstra's algorithm using a traffic prediction model based on the traffic flow in a road network. Here, Dijkstra's algorithm is adapted to be dynamic and time dependent, using traffic flow theory principles during the planning stage itself. The model provides predicted traffic parameters and the travel time across each edge of the road network at every time instant, leading to better routing results. The proposed dynamic algorithm predicts changes in traffic conditions at each time step of planning to give the optimal forward-looking path. The algorithm is verified by comparing it with the conventional Dijkstra's algorithm on a graph with randomly simulated traffic, and it is shown to identify the optimal route more reliably under continuously changing traffic.
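The idea of evaluating edge weights at planning time can be illustrated with a generic time-dependent Dijkstra search. This is only a minimal sketch, not the paper's algorithm: the abstract does not specify the traffic prediction model, so each edge here carries a hypothetical `travel_time_fn(t)` that stands in for the predicted travel time when the edge is entered at time `t`. The toy graph and its numbers are invented for illustration, and the search assumes that leaving later never lets a vehicle arrive earlier (the FIFO property).

```python
import heapq


def time_dependent_dijkstra(graph, source, target, start_time=0.0):
    """Earliest-arrival search where each edge cost depends on departure time.

    graph maps node -> list of (neighbor, travel_time_fn). travel_time_fn(t)
    is a hypothetical stand-in for a traffic prediction model: it returns the
    predicted traversal time when the edge is entered at time t.
    """
    arrival = {source: start_time}
    parent = {}
    queue = [(start_time, source)]
    while queue:
        t, node = heapq.heappop(queue)
        if t > arrival.get(node, float("inf")):
            continue  # stale queue entry, a better arrival time is known
        if node == target:
            break
        for neighbor, travel_time_fn in graph.get(node, []):
            # Evaluate the weight at the time the edge would be entered.
            t_next = t + travel_time_fn(t)
            if t_next < arrival.get(neighbor, float("inf")):
                arrival[neighbor] = t_next
                parent[neighbor] = node
                heapq.heappush(queue, (t_next, neighbor))
    if target not in arrival:
        return None, float("inf")
    path = [target]
    while path[-1] != source:
        path.append(parent[path[-1]])
    path.reverse()
    return path, arrival[target]


# Toy network (illustrative numbers): edge B->D is free-flowing at t = 0
# but congested for any vehicle entering it at t >= 0.5.
toy_graph = {
    "A": [("B", lambda t: 1.0), ("C", lambda t: 2.0)],
    "B": [("D", lambda t: 1.0 if t < 0.5 else 6.0)],
    "C": [("D", lambda t: 3.0)],
}
route, arrival_time = time_dependent_dijkstra(toy_graph, "A", "D")
print(route, arrival_time)
```

In this toy case, a plan built from the weights observed at departure would favor the route through B, while the time-dependent search accounts for the congestion the vehicle would actually meet on B->D and routes through C instead.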

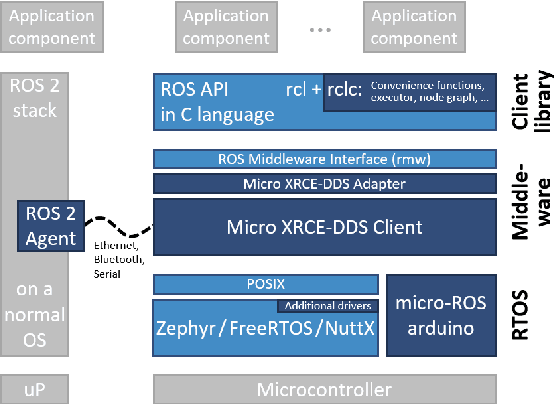

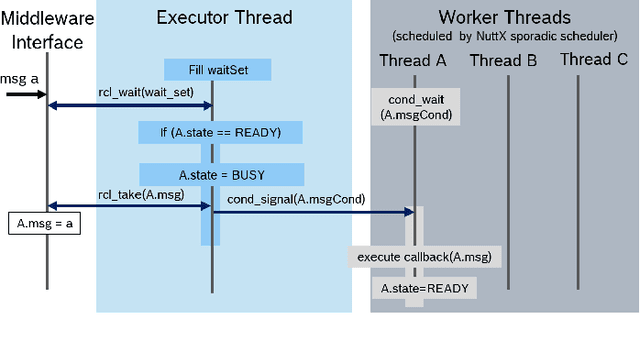

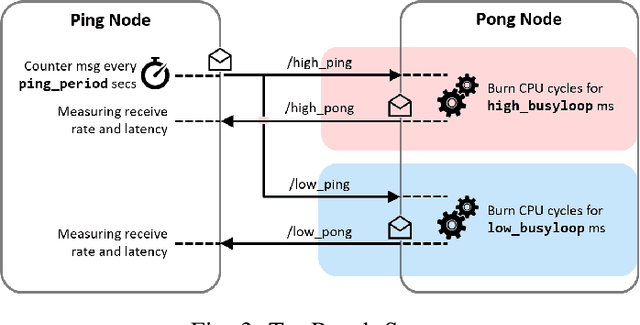

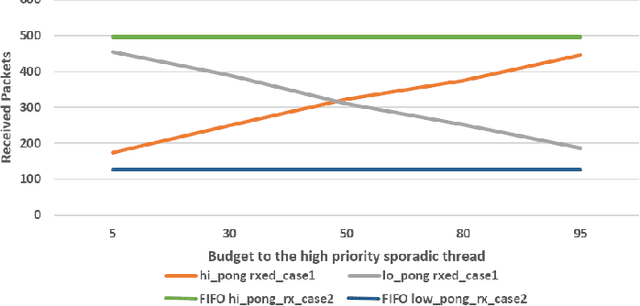

Budget-based real-time Executor for Micro-ROS

May 18, 2021

The Robot Operating System (ROS) is a popular robotics middleware framework. In recent years, it underwent a redesign and reimplementation under the name ROS 2. It now features QoS-configurable communication and a flexible layered architecture. Micro-ROS is a variant developed specifically for resource-constrained microcontrollers (MCUs). Such MCUs are commonly used in robotics for sensors and actuators, for time-critical control functions, and for safety. While the execution management of ROS 2 has been addressed by an Executor concept, its lack of real-time capabilities makes it unsuitable for industrial use. Neither defining an execution order nor assigning scheduling parameters to tasks is possible, despite the fact that advanced real-time scheduling algorithms are well known and available in modern RTOSs. For example, the NuttX RTOS supports a variant of reservation-based scheduling that is very attractive for industrial applications: it allows execution time budgets to be assigned to software components, so that a system integrator can guarantee the real-time requirements of the entire system. This paper presents, for the first time, a ROS 2 Executor design that enables the real-time scheduling capabilities of the operating system. In particular, we successfully demonstrate the budget-based scheduling of the NuttX RTOS with a micro-ROS application on an STM32 microcontroller.

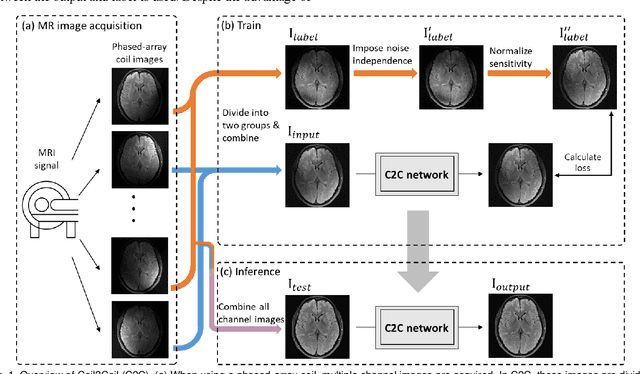

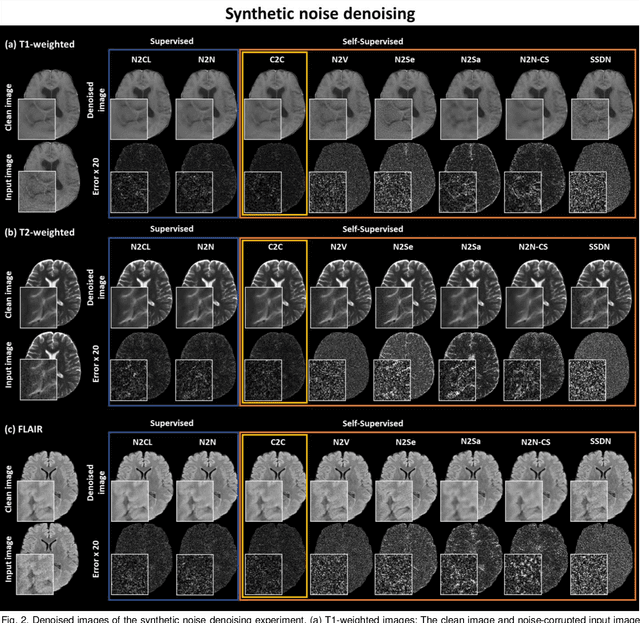

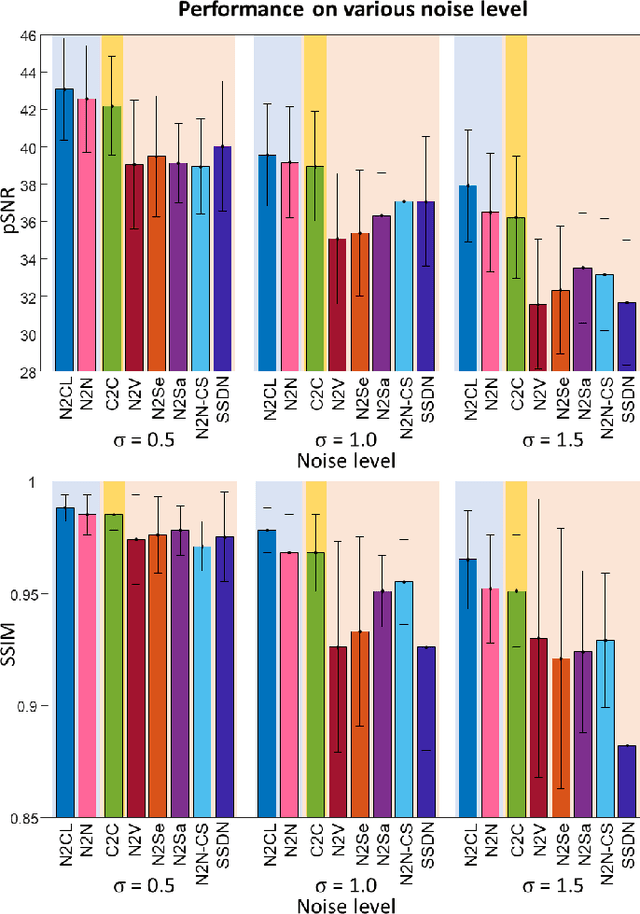

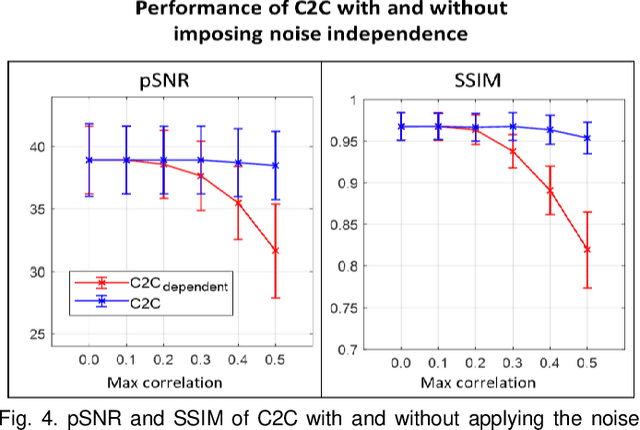

Coil2Coil: Self-supervised MR image denoising using phased-array coil images

Aug 16, 2022

Denoising of magnetic resonance images is beneficial for improving the quality of low signal-to-noise ratio images. Recently, denoising using deep neural networks has demonstrated promising results. Most of these networks, however, rely on supervised learning, which requires a large number of paired noise-corrupted and clean training images. Obtaining training images, particularly clean images, is expensive and time-consuming. Hence, methods such as Noise2Noise (N2N), which require only pairs of noise-corrupted images, have been developed to reduce the burden of obtaining training datasets. In this study, we propose a new self-supervised denoising method, Coil2Coil (C2C), that does not require the acquisition of clean images or paired noise-corrupted images for training. Instead, the method utilizes multichannel data from phased-array coils to generate training images. First, it divides and combines the multichannel coil images into two images, one for input and the other for label. These images are then processed to impose noise independence and sensitivity normalization so that they can be used as training images for N2N. For inference, the method takes a coil-combined image (e.g., a DICOM image) as input, enabling wide application of the method. When evaluated using synthetic noise-added images, C2C shows the best performance among several self-supervised methods and reports outcomes comparable to those of supervised methods. When tested on DICOM images, C2C successfully denoised real noise without showing structure-dependent residuals in the error maps. Because of the significant advantage of not requiring additional scans for clean or paired images, the method can be easily utilized for various clinical applications.

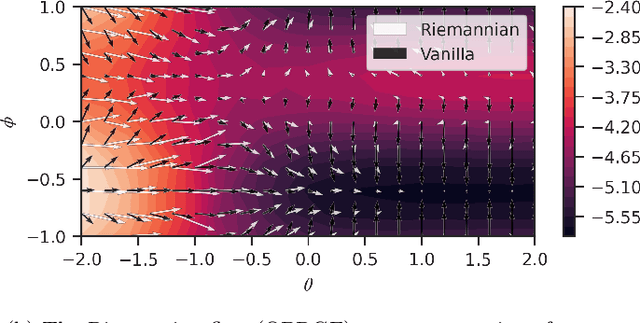

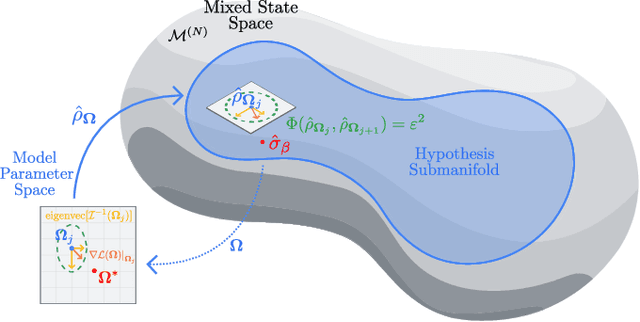

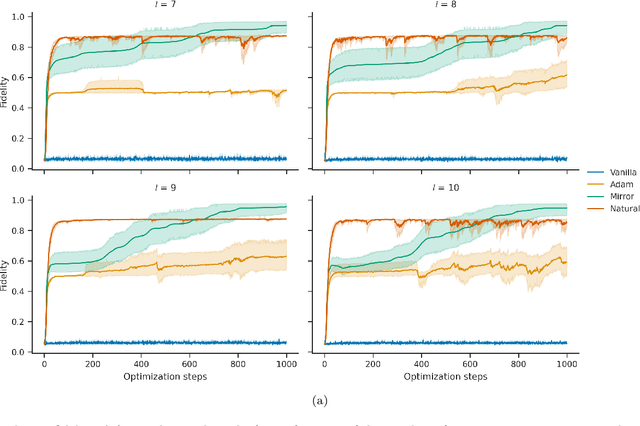

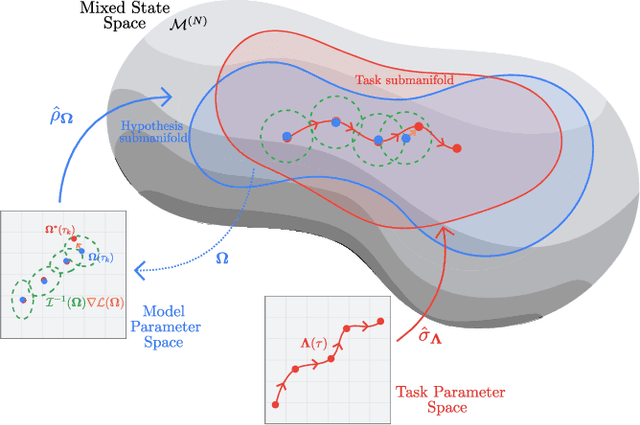

Provably efficient variational generative modeling of quantum many-body systems via quantum-probabilistic information geometry

Jun 09, 2022

The dual tasks of quantum Hamiltonian learning and quantum Gibbs sampling are relevant to many important problems in physics and chemistry. In the low temperature regime, algorithms for these tasks often suffer from intractabilities, for example from poor sample or time complexity. With the aim of addressing such intractabilities, we introduce a generalization of quantum natural gradient descent to parameterized mixed states, and we provide a robust first-order approximating algorithm, Quantum-Probabilistic Mirror Descent. We prove data sample efficiency for the dual tasks using tools from information geometry and quantum metrology, thus generalizing the seminal result of classical Fisher efficiency to a variational quantum algorithm for the first time. Our approaches extend previously sample-efficient techniques to allow for flexibility in model choice, including spectrally decomposed models such as Quantum Hamiltonian-Based Models, which may circumvent intractable time complexities. Our first-order algorithm is derived using a novel quantum generalization of the classical mirror descent duality. Both results require a special choice of metric, namely the Bogoliubov-Kubo-Mori metric. To test our proposed algorithms numerically, we compare their performance to existing baselines on the task of quantum Gibbs sampling for the transverse field Ising model. Finally, we propose an initialization strategy leveraging geometric locality for the modelling of sequences of states such as those arising from quantum-stochastic processes. We demonstrate its effectiveness empirically for both real and imaginary time evolution while defining a broader class of potential applications.

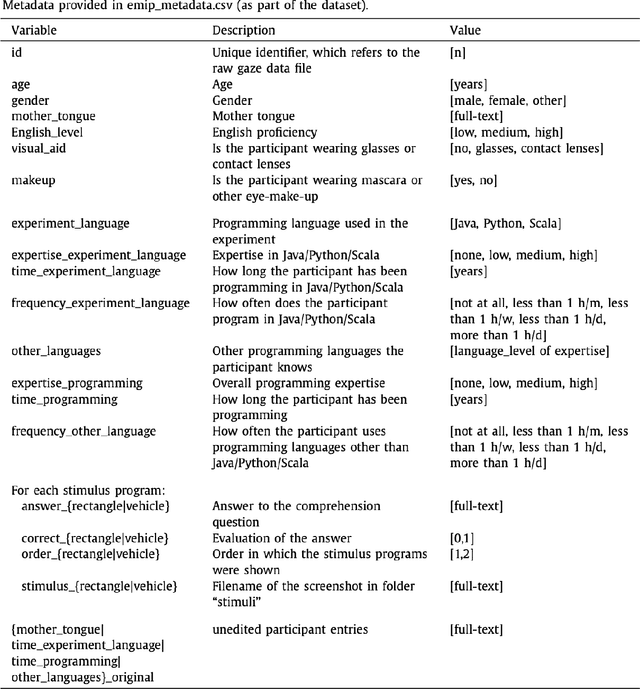

An Open Source Interactive Visual Analytics Tool for Comparative Programming Comprehension

Jul 29, 2022

This paper proposes an open source visual analytics tool consisting of several views and perspectives on eye movement data collected during code reading tasks when writing computer programs. Hence the focus of this work is on code and program comprehension. The source code is shown as a visual stimulus. It can be inspected in combination with overlaid scanpaths in which the saccades can be visually encoded in several forms, including straight, curved, and orthogonal lines, modifiable by interaction techniques. The tool supports interaction techniques like filter functions, aggregations, data sampling, and many more. We illustrate the usefulness of our tool by applying it to the eye movements of 216 programmers of multiple expertise levels that were collected during two code comprehension tasks. Our tool helped to analyze the difference between the strategic program comprehension of programmers based on their demographic background, time taken to complete the task, choice of programming task, and expertise.

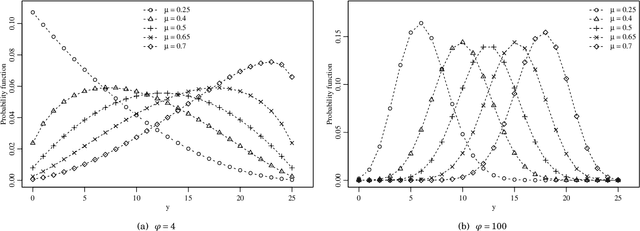

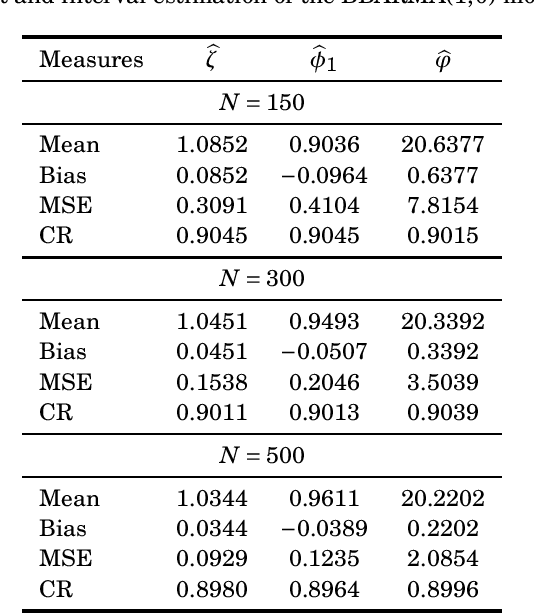

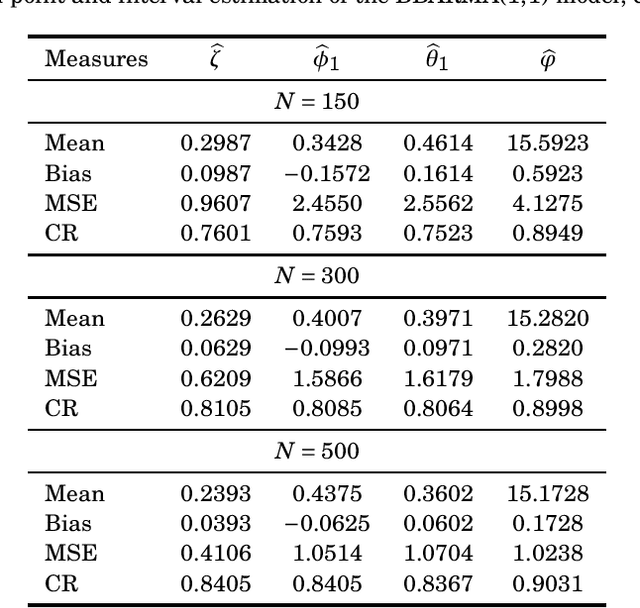

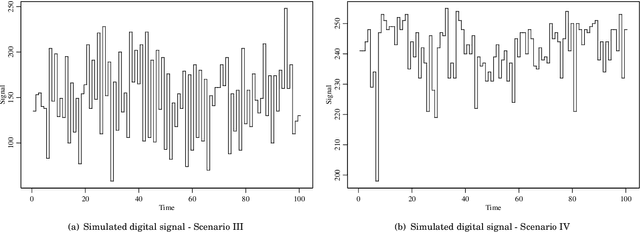

Signal Detection and Inference Based on the Beta Binomial Autoregressive Moving Average Model

Jul 29, 2022

This paper proposes the beta binomial autoregressive moving average (BBARMA) model for modeling quantized amplitude data and bounded count data. The BBARMA model estimates the conditional mean of a beta binomial distributed variable observed over time by a dynamic structure including: (i) autoregressive and moving average terms; (ii) a set of regressors; and (iii) a link function. Besides introducing the new model, we develop parameter estimation, detection tools, an out-of-signal forecasting scheme, and diagnostic measures. In particular, we provide closed-form expressions for the conditional score vector and the conditional information matrix. The proposed model was subjected to extensive Monte Carlo simulations in order to evaluate the performance of the conditional maximum likelihood estimators and of the proposed detector. The derived detector outperforms the usual ARMA- and Gaussian-based detectors for sinusoidal signal detection. We also present an experiment on modeling and forecasting the monthly number of rainy days in Recife, Brazil.

* 17 pages, 4 tables, 5 figures
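To make the ingredients of the abstract concrete, the sketch below evaluates a beta binomial probability mass function under a mean-precision parameterization and uses a logit link to map an unbounded linear predictor to a mean in (0, 1). Both the parameterization (`mu`, `phi`) and the choice of the logit link are assumptions made for illustration, since the abstract does not state which the paper uses, and the autoregressive and moving average recursion itself is not reproduced here.

```python
import math


def log_beta(a, b):
    """Logarithm of the beta function B(a, b)."""
    return math.lgamma(a) + math.lgamma(b) - math.lgamma(a + b)


def beta_binomial_pmf(k, n, mu, phi):
    """P(Y = k) for Y ~ BetaBinomial(n, a, b) with a = mu*phi, b = (1-mu)*phi.

    mu in (0, 1) is the mean proportion and phi > 0 a precision parameter
    (an assumed parameterization, chosen so that E[Y] = n * mu).
    """
    a, b = mu * phi, (1.0 - mu) * phi
    log_choose = math.lgamma(n + 1) - math.lgamma(k + 1) - math.lgamma(n - k + 1)
    return math.exp(log_choose + log_beta(k + a, n - k + b) - log_beta(a, b))


def inverse_logit(eta):
    """Map a linear predictor eta on the real line to a mean in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-eta))


n = 10                   # upper bound of the count, e.g. quantization levels
mu = inverse_logit(0.4)  # 0.4 is an arbitrary illustrative linear predictor
pmf = [beta_binomial_pmf(k, n, mu, phi=5.0) for k in range(n + 1)]
total = sum(pmf)
mean = sum(k * p for k, p in enumerate(pmf))
print(round(total, 6), round(mean, 6), round(n * mu, 6))
```

In a dynamic model of this kind, the linear predictor would change over time through the autoregressive, moving average, and regressor terms, and the link function keeps the implied conditional mean within its valid range.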

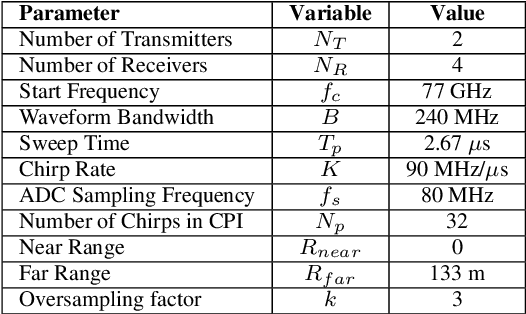

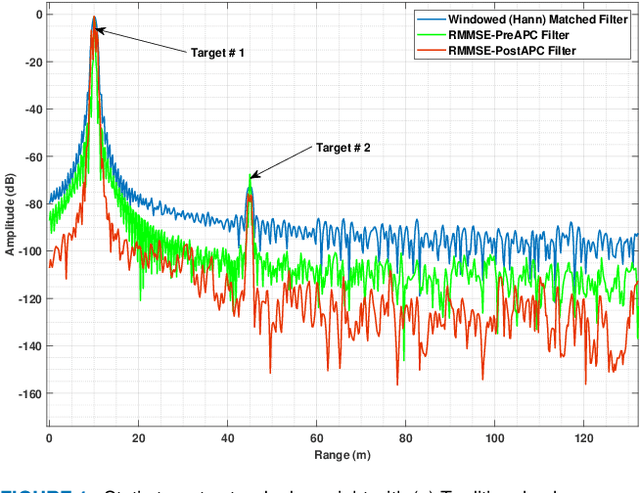

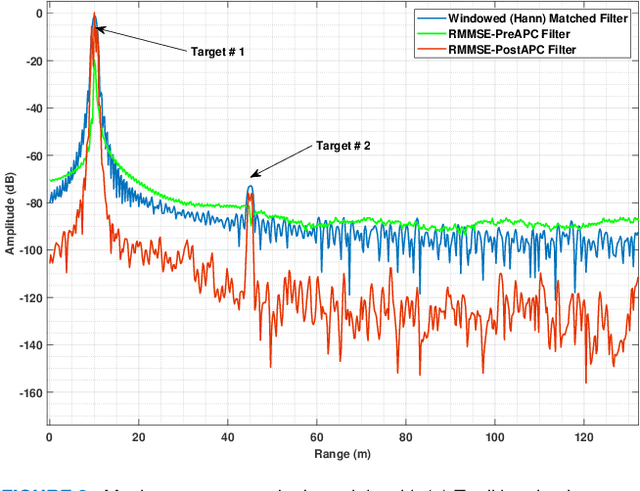

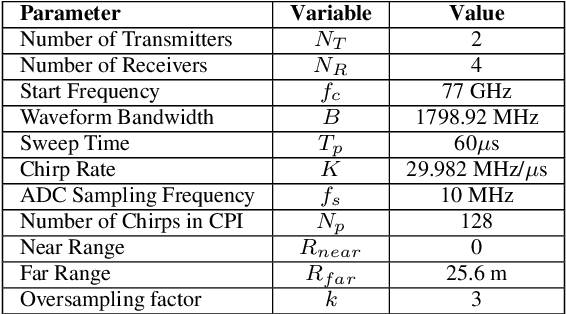

Adaptive Pulse Compression for Sidelobes Reduction in Stretch Processing based MIMO Radars

Aug 18, 2022

Multiple-Input Multiple-Output (MIMO) radars provide various advantages over conventional radars. Among these advantages, improved angular diversity is being explored for future fully autonomous vehicles. Improved angular diversity requires the use of orthogonal waveforms at both the transmit and receive sides. This orthogonality is critical because cross-correlation between signals can inhibit the detection of weaker targets due to the sidelobes of stronger targets. This paper investigates the Reiterative Minimum Mean Squared Error (RMMSE) mismatch filter design for range sidelobe reduction in a Slow-Time Phase-Coded (ST-PC) Frequency Modulated Continuous Wave (FMCW) MIMO radar. First, the performance degradation of the RMMSE filter is analyzed for improperly decoded received pulses. It is then shown mathematically that proper decoding of received pulses requires compensation for the phase distortions caused by the Doppler shifts and spatial locations of targets. To account for these phase distortions, it is proposed to reorder the traditional sequence of operations in radar signal processing to Doppler, angle, and range. Additionally, it is proposed to incorporate sidelobe decoherence for further sidelobe suppression. This is achieved by modifying the structured covariance matrix of the baseline single-input RMMSE mismatch filter so that it includes the range estimates corresponding to each transmitter. These modifications provide additional sidelobe suppression as well as additional fidelity for target peaks. The proposed approach is demonstrated through simulations as well as field experiments. Superior range sidelobe suppression is observed compared with the baseline RMMSE filter and the traditional Hanning-windowed range response.

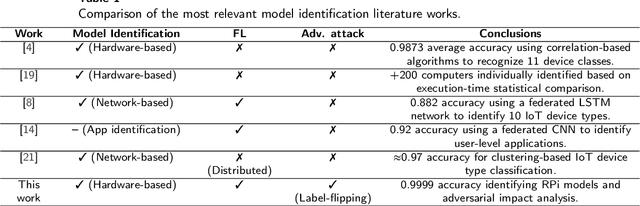

Robust Federated Learning for execution time-based device model identification under label-flipping attack

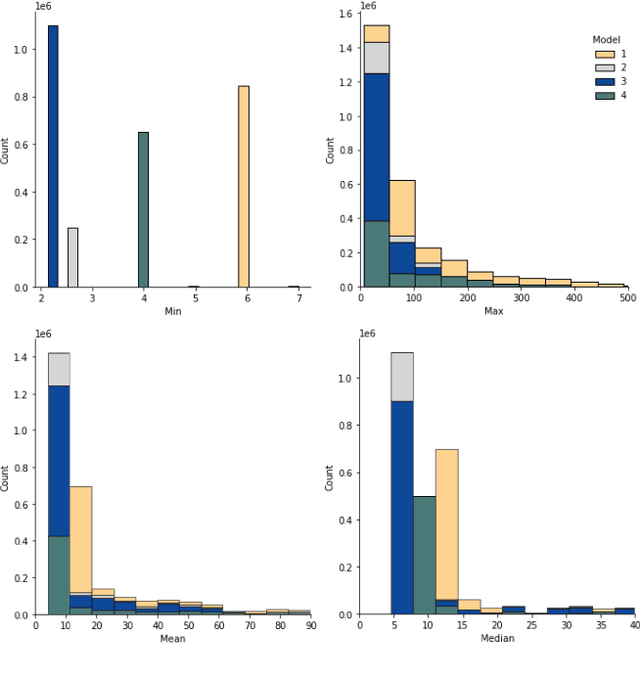

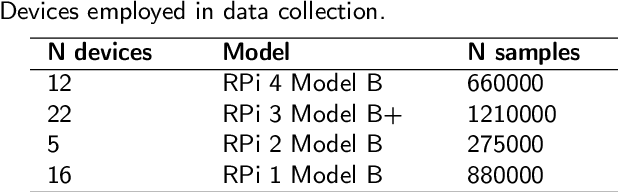

Nov 29, 2021

The explosion in computing device deployment experienced in recent years, motivated by advances in technologies such as the Internet of Things (IoT) and 5G, has led to a global scenario with increasing cybersecurity risks and threats. Among them, device spoofing and impersonation cyberattacks stand out due to their impact and the usually low complexity required to launch them. To address this issue, several solutions have emerged that identify device models and types by combining behavioral fingerprinting with Machine/Deep Learning (ML/DL) techniques. However, these solutions are not appropriate for scenarios where data privacy and protection are a must, as they require data centralization for processing. In this context, newer approaches such as Federated Learning (FL) have not been fully explored yet, especially when malicious clients are present in the scenario setup. The present work analyzes and compares the device model identification performance of a centralized DL model with that of an FL model while using execution time-based events. For experimental purposes, a dataset containing execution-time features of 55 Raspberry Pis belonging to four different models has been collected and published. Using this dataset, the proposed solution achieved 0.9999 accuracy in both the centralized and federated setups, showing no performance decrease while preserving data privacy. Next, the impact of a label-flipping attack during federated model training is evaluated, using several aggregation mechanisms as countermeasures. Zeno and coordinate-wise median aggregation show the best performance, although their performance degrades greatly when the percentage of fully malicious clients (all training samples poisoned) grows over 50%.
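Coordinate-wise median aggregation, one of the countermeasures named above, can be sketched in a few lines. This is a generic illustration rather than the paper's implementation: the update vectors are invented toy numbers, and an extreme outlier stands in for a poisoned update, whereas real label-flipping attacks perturb updates less obviously.

```python
from statistics import median


def coordinate_wise_median(client_updates):
    """Aggregate equal-length update vectors by taking the median per coordinate."""
    if not client_updates:
        raise ValueError("need at least one client update")
    length = len(client_updates[0])
    if any(len(update) != length for update in client_updates):
        raise ValueError("all client updates must have the same length")
    return [median(values) for values in zip(*client_updates)]


def coordinate_wise_mean(client_updates):
    """Plain averaging, shown only as a non-robust baseline."""
    return [sum(values) / len(values) for values in zip(*client_updates)]


# Toy update vectors (illustrative values only).
honest = [[1.0, 2.0], [1.1, 1.9], [0.9, 2.1]]
poisoned = [[100.0, -100.0]]

# One malicious client out of four: the median stays near the honest values,
# while the mean is pulled far away.
robust = coordinate_wise_median(honest + poisoned)
naive = coordinate_wise_mean(honest + poisoned)

# Three malicious clients out of five: the median itself is now poisoned.
overrun = coordinate_wise_median(honest[:2] + poisoned * 3)
print(robust, naive, overrun)
```

The last case mirrors the degradation reported in the abstract: once malicious clients form a majority, the per-coordinate median is determined by their updates.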

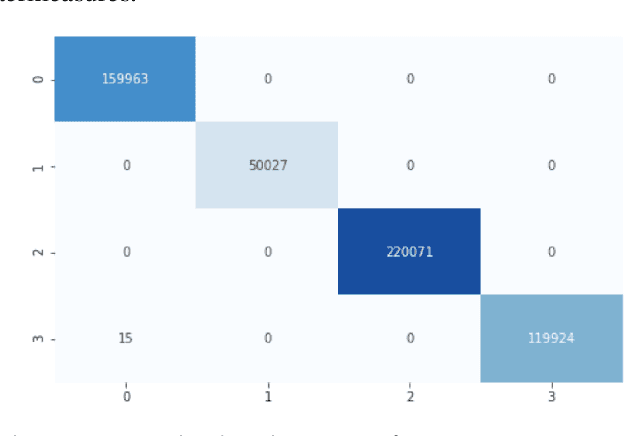

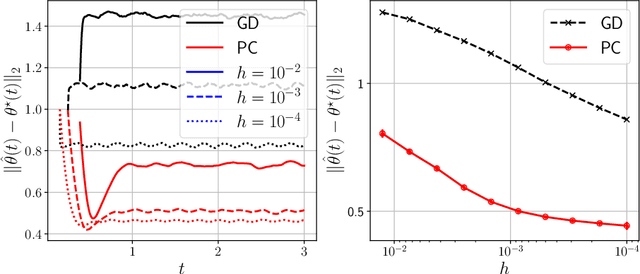

Predictor-corrector algorithms for stochastic optimization under gradual distribution shift

May 26, 2022

Time-varying stochastic optimization problems frequently arise in machine learning practice (e.g., gradual domain shift, object tracking, strategic classification). Although most problems are solved in discrete time, the underlying process is often continuous in nature. We exploit this underlying continuity by developing predictor-corrector algorithms for time-varying stochastic optimization. We provide error bounds for the iterates, both with pure (noise-free) and with noisy access to queries of the relevant derivatives of the loss function. Furthermore, we show (theoretically and empirically in several examples) that our method outperforms non-predictor-corrector methods, which do not exploit the underlying continuous process.
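The predictor-corrector idea can be illustrated on a one-dimensional tracking problem. The sketch below is a generic, noise-free example and not the paper's algorithm: the loss f(x, t) = 0.5 (x - target(t))^2, the sinusoidal `target`, the step size `h`, and the learning rate `gamma` are all assumptions chosen for illustration. The predictor moves the iterate along the estimated drift of the minimizer, and the corrector takes one gradient step on the loss at the new time.

```python
import math


def target(t):
    """Minimizer of the assumed loss f(x, t) = 0.5 * (x - target(t))**2."""
    return math.sin(t)


def target_rate(t):
    """Time derivative of the minimizer; for this loss it equals
    -(d2f/dx2)^-1 * d2f/dtdx, the usual prediction direction."""
    return math.cos(t)


def track(steps=200, h=0.1, gamma=0.5, use_predictor=True):
    """Return the mean absolute tracking error over the run."""
    x, t, total_error = 0.0, 0.0, 0.0
    for _ in range(steps):
        if use_predictor:
            x += h * target_rate(t)   # predictor: follow the drift of the optimum
        t += h
        x -= gamma * (x - target(t))  # corrector: gradient step at the new time
        total_error += abs(x - target(t))
    return total_error / steps


pc_error = track(use_predictor=True)
gd_error = track(use_predictor=False)
print(pc_error, gd_error)
```

With the correction step alone, the iterate lags behind the moving optimum by an amount proportional to the time step, whereas the prediction step removes most of that lag, leaving an error that scales with the square of the time step in this example.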
