"Time": models, code, and papers

Transforming Autoregression: Interpretable and Expressive Time Series Forecast

Oct 15, 2021

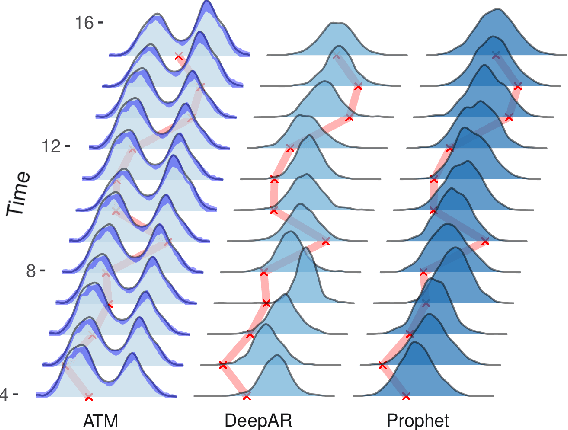

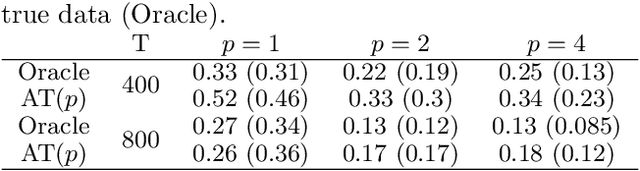

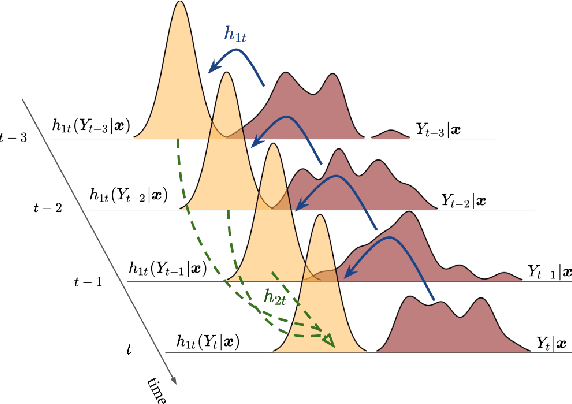

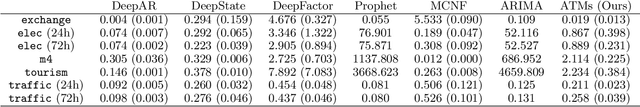

Probabilistic forecasting of time series is an important matter in many applications and research fields. In order to draw conclusions from a probabilistic forecast, we must ensure that the model class used to approximate the true forecasting distribution is expressive enough. Yet, characteristics of the model itself, such as its uncertainty or its general functioning, are no less important. In this paper, we propose Autoregressive Transformation Models (ATMs), a model class inspired by various research directions such as normalizing flows and autoregressive models. ATMs unite expressive distributional forecasts using a semi-parametric distribution assumption with an interpretable model specification and allow for uncertainty quantification based on (asymptotic) Maximum Likelihood theory. We demonstrate the properties of ATMs both theoretically and through empirical evaluation on several simulated and real-world forecasting datasets.

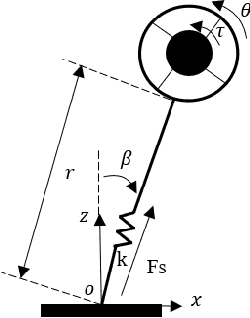

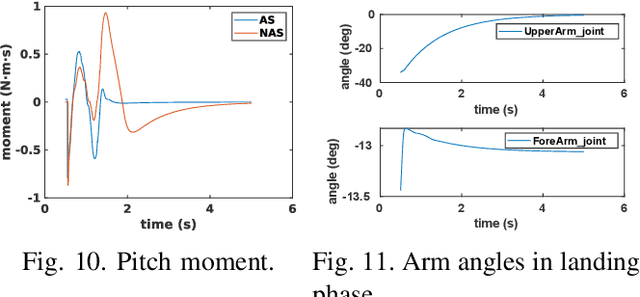

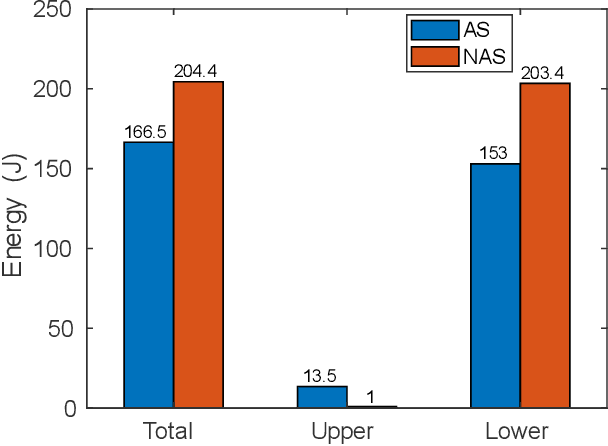

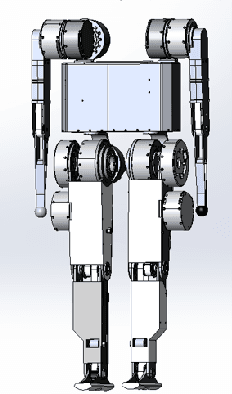

Highly dynamic locomotion control of biped robot enhanced by swing arms

Aug 17, 2022

From the viewpoint of biomechanics, swing arms play an irreplaceable role in promoting highly dynamic locomotion on bipedal robots by providing a larger angular momentum control space. Few bipedal robots utilize swing arms and their redundant, multi-degree-of-freedom characteristics, owing to the lack of locomotion control strategies that properly integrate modeling and control. This paper presents a control strategy that models the bipedal robot as a flywheel-spring loaded inverted pendulum (F-SLIP) to extract the characteristics of swing arms and uses a whole-body controller (WBC) to realize these characteristics. It also proposes an evaluation system for the highly dynamic locomotion of bipedal robots that covers three aspects: agility (as defined by us), stability, and energy consumption. We design several sets of simulation experiments and use the evaluation system to analyze the effects of swing arms during the jumping motion of Purple (Purple energy rises in the east)V1.0, a bipedal robot designed to test highly explosive locomotion. Results show that after introducing swing arms, Purple's agility increases by more than 10 percent, stabilization time is reduced by a factor of two, and energy consumption is reduced by more than 20 percent.

Disentangling Identity and Pose for Facial Expression Recognition

Aug 17, 2022

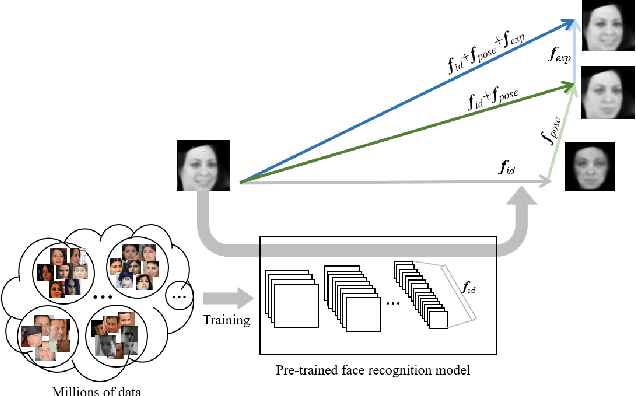

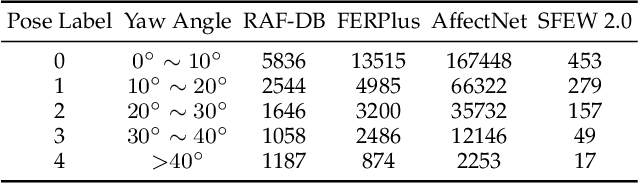

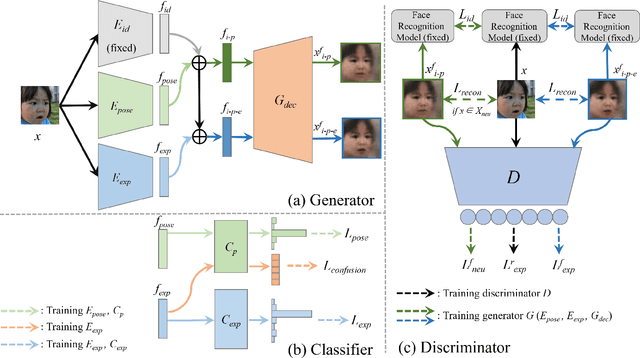

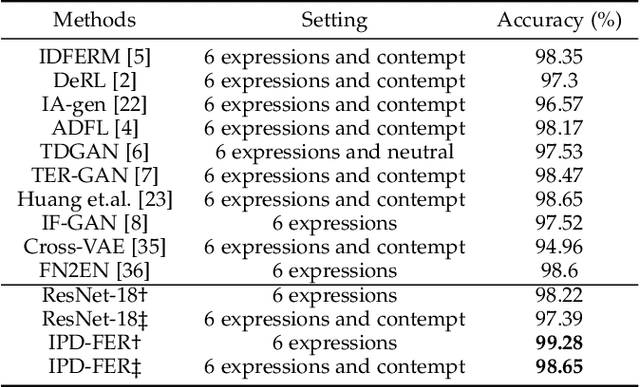

Facial expression recognition (FER) is a challenging problem because the expression component is always entangled with other irrelevant factors, such as identity and head pose. In this work, we propose an identity and pose disentangled facial expression recognition (IPD-FER) model to learn more discriminative feature representations. We regard the holistic facial representation as the combination of identity, pose, and expression. These three components are encoded with different encoders. For the identity encoder, a well pre-trained face recognition model is used and kept fixed during training, which alleviates the restriction on specific expression training data in previous works and makes the disentanglement practicable on in-the-wild datasets. At the same time, the pose and expression encoders are optimized with their corresponding labels. When the identity and pose features are combined, the decoder should generate a neutral face of the input individual. When the expression feature is added, the input image should be reconstructed. By comparing the difference between the synthesized neutral and expressional images of the same individual, the expression component is further disentangled from identity and pose. Experimental results verify the effectiveness of our method on both lab-controlled and in-the-wild databases, and we achieve state-of-the-art recognition performance.

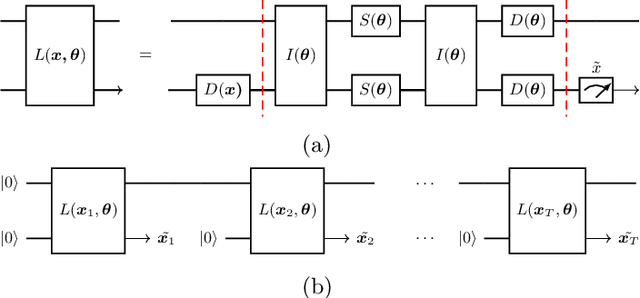

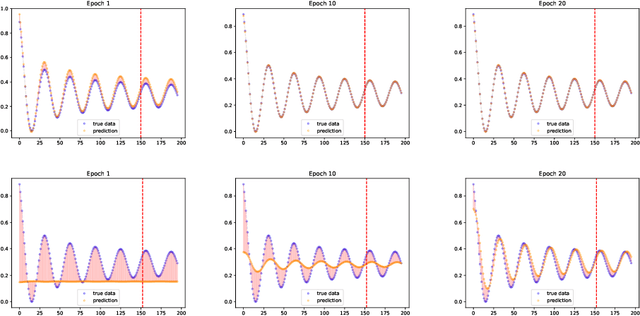

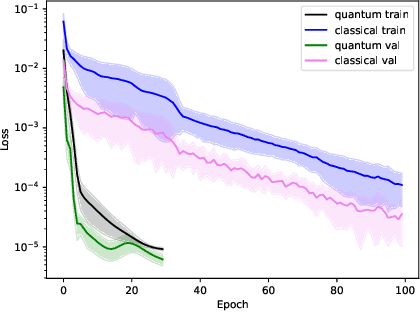

Rapid training of quantum recurrent neural network

Jul 01, 2022

Time series prediction is a crucial task for many human activities, e.g., forecasting weather or predicting stock prices. One solution to this problem is to use Recurrent Neural Networks (RNNs). Although they can yield accurate predictions, their learning process is slow and complex. Here we propose a Quantum Recurrent Neural Network (QRNN) to address these obstacles. The design of the network is based on the continuous-variable quantum computing paradigm. We demonstrate that the network is capable of learning the time dependence of a few types of temporal data. Our numerical simulations show that the QRNN converges to optimal weights in fewer epochs than the classical network. Furthermore, for a small number of trainable parameters, it can achieve lower loss than the classical network.

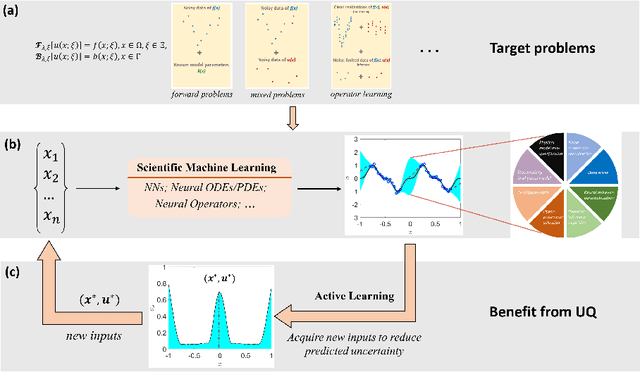

NeuralUQ: A comprehensive library for uncertainty quantification in neural differential equations and operators

Aug 25, 2022

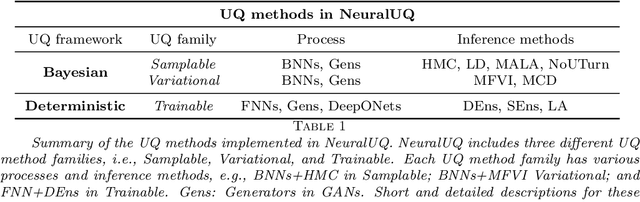

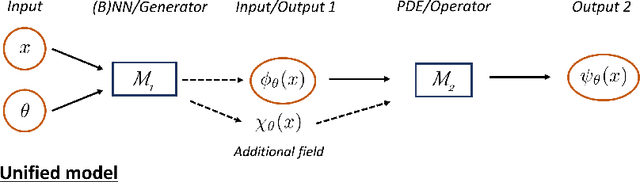

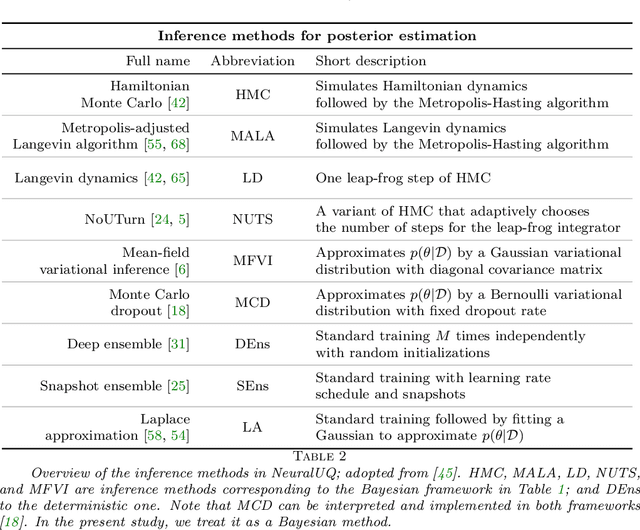

Uncertainty quantification (UQ) in machine learning is currently drawing increasing research interest, driven by the rapid deployment of deep neural networks across different fields, such as computer vision and natural language processing, and by the need for reliable tools in risk-sensitive applications. Recently, various machine learning models have also been developed to tackle problems in the field of scientific computing with applications to computational science and engineering (CSE). Physics-informed neural networks and deep operator networks are two such models for solving partial differential equations and learning operator mappings, respectively. In this regard, a comprehensive study of UQ methods tailored specifically for scientific machine learning (SciML) models has been provided in [45]. Nevertheless, despite their theoretical merit, these methods are not straightforward to implement, especially in large-scale CSE applications, which hinders their broad adoption in both research and industry settings. In this paper, we present an open-source Python library (https://github.com/Crunch-UQ4MI), termed NeuralUQ and accompanied by an educational tutorial, for employing UQ methods for SciML in a convenient and structured manner. The library, designed for both educational and research purposes, supports multiple modern UQ methods and SciML models. It is based on a succinct workflow and facilitates flexible use and easy extension by users. We first present a tutorial of NeuralUQ and subsequently demonstrate its applicability and efficiency in four diverse examples, involving dynamical systems and high-dimensional parametric and time-dependent PDEs.

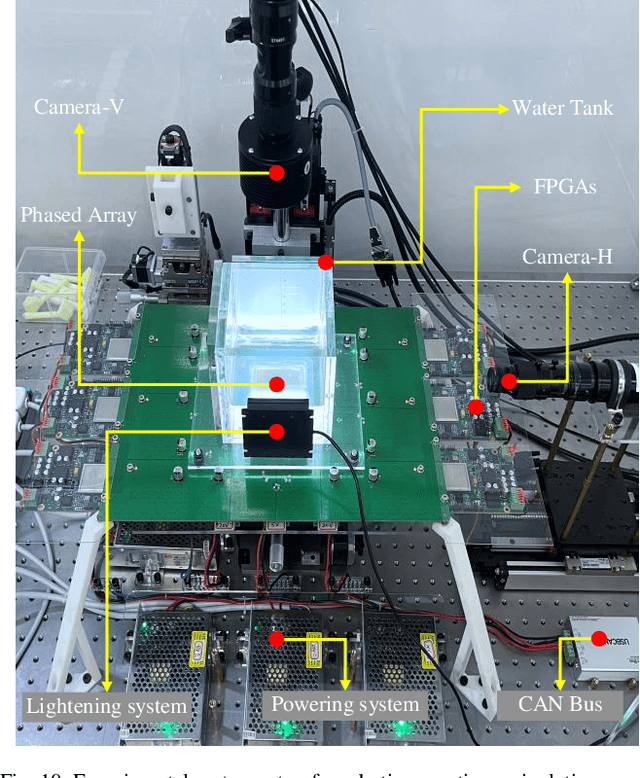

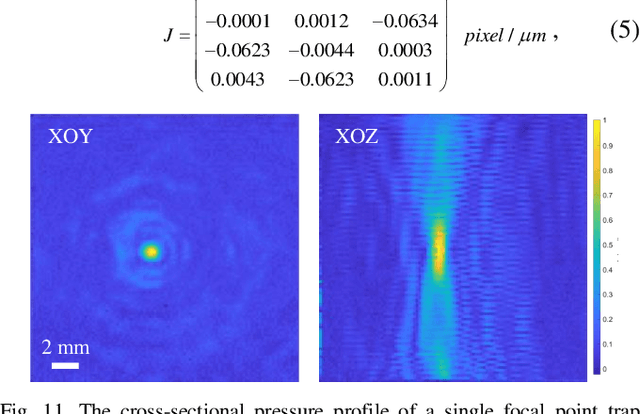

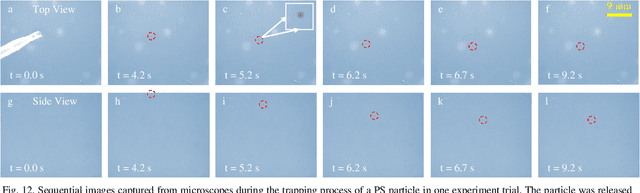

Automated Noncontact Trapping of Moving Micro-particle with Ultrasonic Phased Array System and Microscopic Vision

Aug 22, 2022

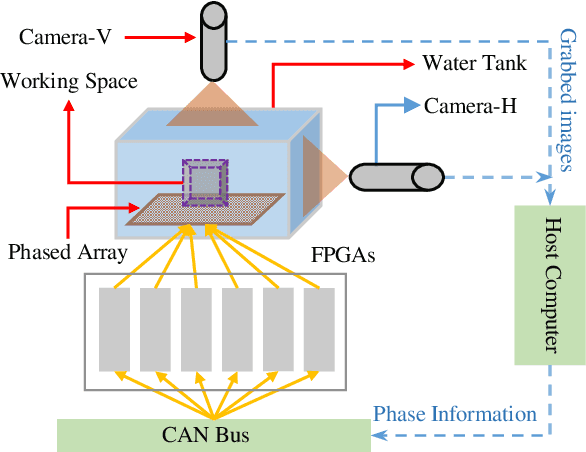

Noncontact particle manipulation (NPM) technology has significantly extended mankind's analysis capability to the micro and nano scale, which in turn has greatly promoted the development of materials science and life science. Although NPM by means of electric, magnetic, and optical fields has achieved great success, from the robotic perspective it remains labor-intensive, since professional human assistance is still required in the early preparation stage. Therefore, developing automated noncontact trapping of moving particles is worthwhile, particularly for applications where particle samples are rare, fragile, or contact-sensitive. Taking advantage of the latest dynamic acoustic field modulation technology, and particularly of the great scalability of acoustic manipulation from the micro-scale to the sub-centimeter scale, we propose automated noncontact trapping of moving micro-particles with an ultrasonic phased array system and microscopic vision. The main contribution of this work is that, for the first time as far as we know, we achieve fully automated trapping of moving micro-particles in the acoustic NPM field by resorting to a robotic approach. In short, the particle's motion state is observed and predicted by a binocular microscopic vision system, and based on this prediction the acoustic trapping zone is calculated and generated to capture and stably hold the particle. The hand-eye relationship problem for the noncontact robotic end-effector is also solved in this work. Experiments demonstrate the effectiveness of the approach.

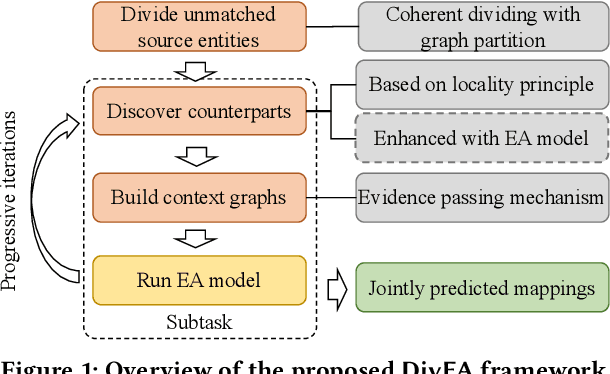

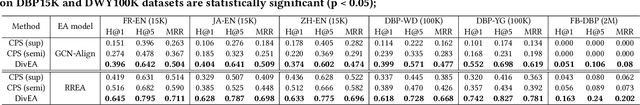

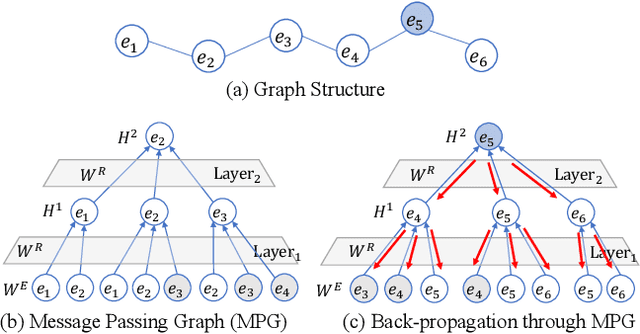

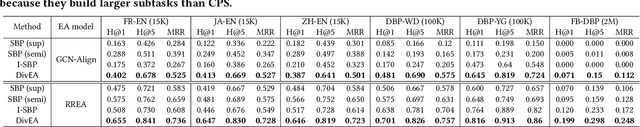

High-quality Task Division for Large-scale Entity Alignment

Aug 22, 2022

Entity Alignment (EA) aims to match equivalent entities that refer to the same real-world objects and is a key step for Knowledge Graph (KG) fusion. Most neural EA models cannot be applied to large-scale real-life KGs due to their excessive consumption of GPU memory and time. One promising solution is to divide a large EA task into several subtasks such that each subtask only needs to match two small subgraphs of the original KGs. However, it is challenging to divide the EA task without losing effectiveness. Existing methods display low coverage of potential mappings, insufficient evidence in context graphs, and largely differing subtask sizes. In this work, we design the DivEA framework for large-scale EA with high-quality task division. To include in the EA subtasks a high proportion of the potential mappings originally present in the large EA task, we devise a counterpart discovery method that exploits the locality principle of the EA task and the power of trained EA models. Unique to our counterpart discovery method is the explicit modelling of the chance of a potential mapping. We also introduce an evidence passing mechanism to quantify the informativeness of context entities and find the most informative context graphs with flexible control of the subtask size. Extensive experiments show that DivEA achieves higher EA performance than alternative state-of-the-art solutions.

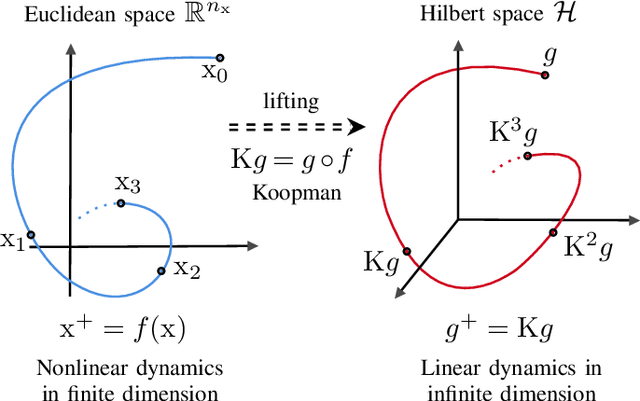

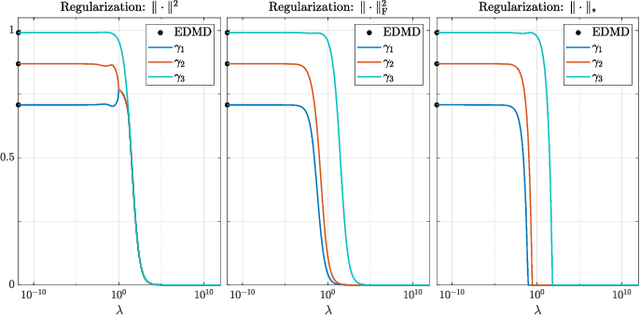

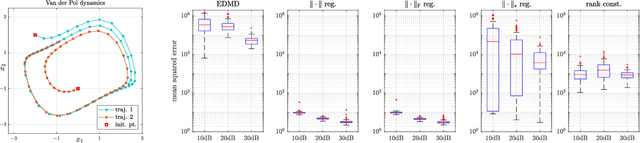

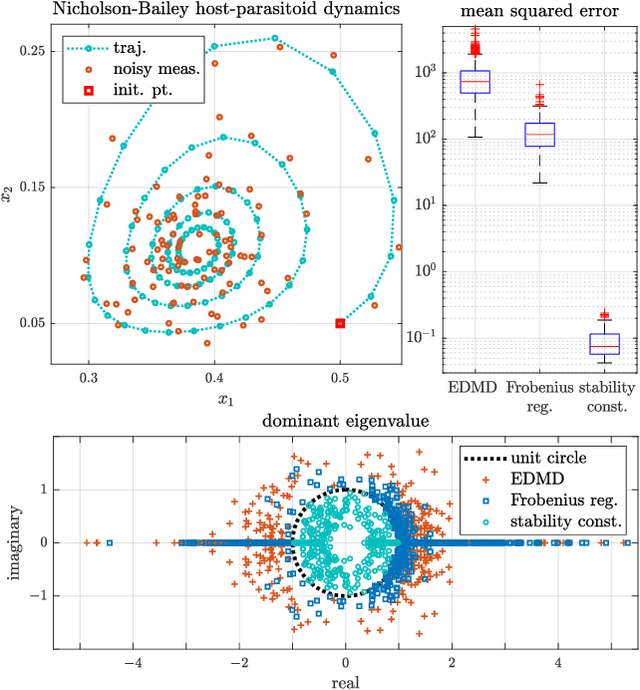

Representer Theorem for Learning Koopman Operators

Aug 02, 2022

In this work, the problem of learning the Koopman operator of a discrete-time autonomous system is considered. The learning problem is formulated as a constrained regularized empirical loss minimization in the infinite-dimensional space of linear operators. We show that under certain but general conditions, a representer theorem holds for the learning problem. This allows reformulating the problem in a finite-dimensional space without any approximation or loss of precision. Following this, we consider various cases of regularization and constraints in the learning problem, including the operator norm, the Frobenius norm, rank, nuclear norm, and stability. Subsequently, we derive the corresponding finite-dimensional problem. Furthermore, we discuss the connection between the proposed formulation and the extended dynamic mode decomposition. Finally, we provide an illustrative numerical example.
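For readers unfamiliar with the extended dynamic mode decomposition (EDMD) mentioned in this abstract, the following is a minimal sketch of plain EDMD, not of the paper's representer-theorem formulation. The dictionary of observables `[x, x^2]`, the scalar system `x_{k+1} = a x_k`, and all function names are assumptions chosen for illustration.

```python
import numpy as np


def dictionary(x):
    """Hypothetical dictionary of observables: psi(x) = [x, x^2]."""
    x = np.asarray(x, dtype=float)
    return np.stack([x, x**2], axis=0)  # shape (2, n_samples)


def edmd(x, y):
    """Least-squares matrix K such that psi(y) is approximately K psi(x)."""
    psi_x = dictionary(x)
    psi_y = dictionary(y)
    # The pseudoinverse gives the minimum-norm least-squares solution.
    return psi_y @ np.linalg.pinv(psi_x)


# Snapshot pairs (x_k, x_{k+1}) from the assumed linear system x_{k+1} = a * x_k.
a = 0.9
x = np.linspace(-1.0, 1.0, 21)
y = a * x

K = edmd(x, y)
eigvals = np.sort(np.linalg.eigvals(K).real)
```

For this toy system the chosen dictionary spans a Koopman-invariant subspace, so the estimated matrix is diagonal with entries `a` and `a**2`. With a general nonlinear system or a different dictionary, the result would only be a finite-dimensional approximation.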

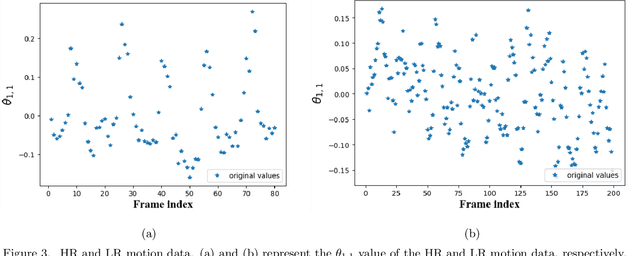

TSAMT: Time-Series-Analysis-based Motion Transfer among Multiple Cameras

Sep 29, 2021

Advances in optical sensors have made it common practice to build imaging systems with heterogeneous cameras. While high-resolution (HR) video acquisition and analysis benefit from hybrid sensors, the intrinsic characteristics of multiple cameras lead to an interesting motion transfer problem. Unfortunately, most existing methods provide no theoretical analysis and require large amounts of training data. In this paper, we propose an algorithm that uses time series analysis for motion transfer among multiple cameras. Specifically, we first identify seasonality in the motion data and then build an additive time series model to extract patterns that can be transferred across cameras. Our approach has a complete and clear mathematical formulation, which makes it efficient and interpretable. Through quantitative evaluations on real-world data, we demonstrate the effectiveness of our method. Furthermore, our motion transfer algorithm can be combined with and facilitate downstream tasks, e.g., enhancing pose estimation on low-resolution (LR) videos with inherent patterns extracted from HR ones. Code is available at https://github.com/IndigoPurple/TSAMT.
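To make the idea of an additive time series model concrete, here is a minimal sketch of a classical additive decomposition (series = trend + seasonal + residual). It is a generic textbook procedure, not the authors' algorithm, which is available in the repository linked above. The function name `additive_decompose`, the restriction to an odd period, and the toy data are all assumptions.

```python
def additive_decompose(series, period):
    """Split a series into trend + seasonal + residual (odd period only)."""
    if period % 2 == 0:
        raise ValueError("this sketch assumes an odd period")
    n = len(series)
    half = period // 2

    # Trend: centered moving average over one full period.
    trend = [None] * n
    for t in range(half, n - half):
        window = series[t - half : t + half + 1]
        trend[t] = sum(window) / period

    # Seasonal pattern: mean detrended value for each phase of the period.
    buckets = [[] for _ in range(period)]
    for t in range(n):
        if trend[t] is not None:
            buckets[t % period].append(series[t] - trend[t])
    phase_means = [sum(b) / len(b) for b in buckets]
    offset = sum(phase_means) / period
    pattern = [m - offset for m in phase_means]  # centered to sum to zero

    seasonal = [pattern[t % period] for t in range(n)]
    residual = [
        None if trend[t] is None else series[t] - trend[t] - seasonal[t]
        for t in range(n)
    ]
    return trend, seasonal, residual, pattern


# Toy signal: linear trend plus a repeating zero-mean pattern of period 5.
true_pattern = [1.0, -1.0, 2.0, 0.0, -2.0]
series = [0.5 * t + true_pattern[t % 5] for t in range(20)]
trend, seasonal, residual, pattern = additive_decompose(series, period=5)
```

The recovered `pattern` is the periodic component. In a motion transfer setting, a pattern of this kind is what one would hope to carry from one camera's motion data to another's, although how that transfer is done is specific to the paper.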

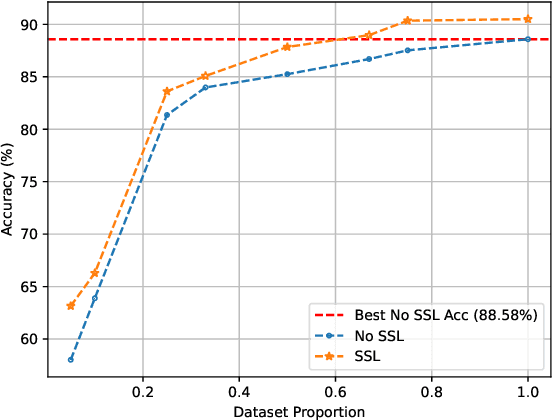

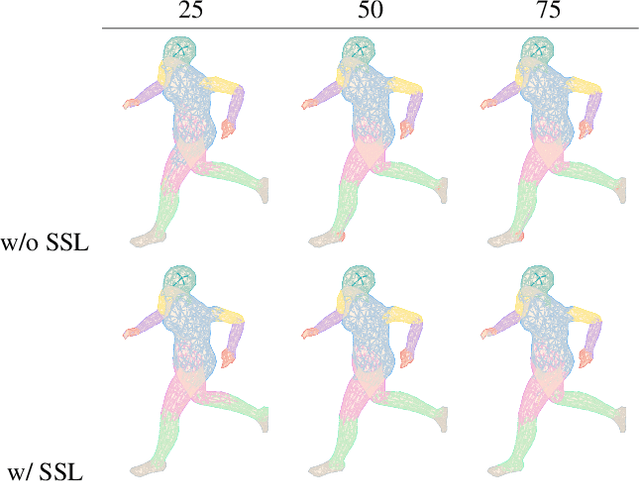

Self-Supervised Contrastive Representation Learning for 3D Mesh Segmentation

Aug 08, 2022

3D deep learning is a growing field of interest due to the vast amount of information stored in 3D formats. Triangular meshes are an efficient representation for irregular, non-uniform 3D objects. However, meshes are often challenging to annotate due to their high geometrical complexity. Specifically, creating segmentation masks for meshes is tedious and time-consuming. Therefore, it is desirable to train segmentation networks with limited labeled data. Self-supervised learning (SSL), a form of unsupervised representation learning, is a growing alternative to fully supervised learning that can decrease the burden of supervision for training. We propose SSL-MeshCNN, a self-supervised contrastive learning method for pre-training CNNs for mesh segmentation. We take inspiration from traditional contrastive learning frameworks to design a novel contrastive learning algorithm specifically for meshes. Our preliminary experiments show promising results, reducing the labeled data required for mesh segmentation by at least 33%.
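As background on the "traditional contrastive learning frameworks" the abstract refers to, the sketch below computes an InfoNCE-style loss, a common contrastive objective. The abstract does not state which loss SSL-MeshCNN uses, so this is an illustrative assumption; the function name `info_nce`, the temperature value, and the random embeddings are likewise hypothetical.

```python
import numpy as np


def info_nce(anchors, positives, temperature=0.1):
    """InfoNCE loss: row i of `anchors` should match row i of `positives`."""
    a = anchors / np.linalg.norm(anchors, axis=1, keepdims=True)
    p = positives / np.linalg.norm(positives, axis=1, keepdims=True)
    logits = a @ p.T / temperature  # (n, n) scaled cosine similarities
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    # The correct pairing lies on the diagonal.
    return float(-np.mean(np.diag(log_prob)))


rng = np.random.default_rng(0)
emb = rng.normal(size=(8, 16))  # stand-in for embeddings of 8 samples

# Positives that are slightly perturbed copies of the anchors.
aligned = info_nce(emb, emb + 0.01 * rng.normal(size=emb.shape))
# Positives paired with the wrong anchors.
shuffled = info_nce(emb, emb[::-1])
```

The loss is low when each anchor is closest to its own positive and high when the pairing is wrong. During pre-training, minimizing it pulls two augmented views of the same input together while pushing other samples apart.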
