"Time": models, code, and papers

Thompson Sampling Efficiently Learns to Control Diffusion Processes

Jun 20, 2022

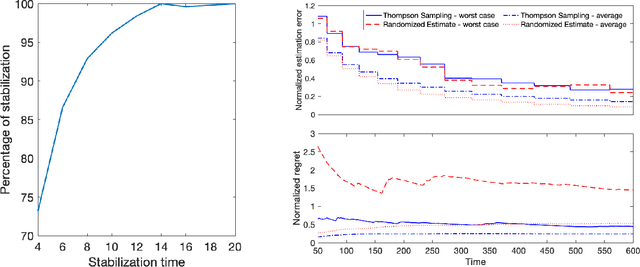

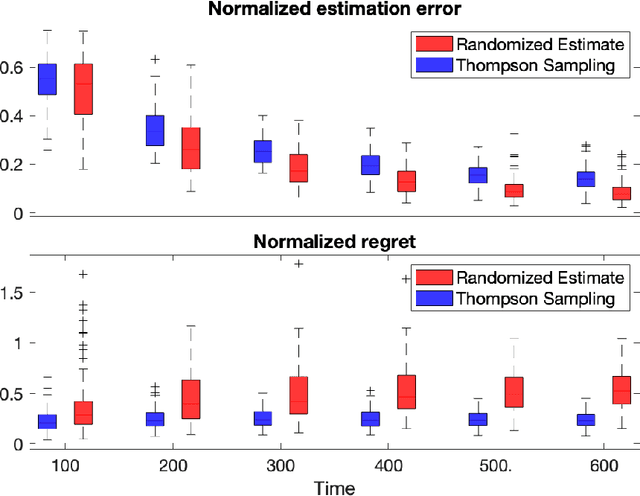

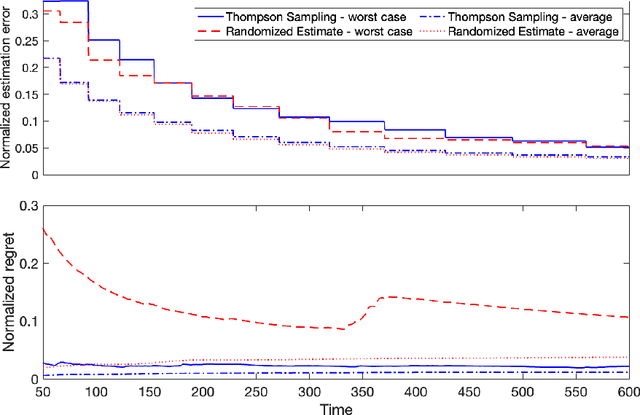

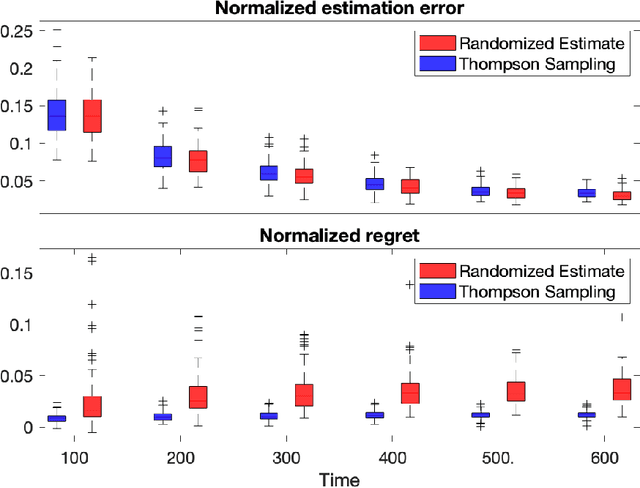

Diffusion processes that evolve according to linear stochastic differential equations are an important family of continuous-time dynamic decision-making models. Optimal policies for them are well studied under full certainty about the drift matrices. However, little is known about data-driven control of diffusion processes with uncertain drift matrices, because conventional discrete-time analysis techniques are not applicable. In addition, while the task can be viewed as a reinforcement learning problem involving an exploration-exploitation trade-off, ensuring system stability is a fundamental component of designing optimal policies. We establish that the popular Thompson sampling algorithm learns optimal actions fast, incurring regret that grows only as the square root of time, and also stabilizes the system in a short time period. To the best of our knowledge, this is the first such result for Thompson sampling in a diffusion process control problem. We validate our theoretical results through empirical simulations with real parameter matrices from two settings: airplane control and blood glucose control. Moreover, we observe that Thompson sampling significantly improves (worst-case) regret compared to state-of-the-art algorithms, suggesting that Thompson sampling explores in a more guarded fashion. Our theoretical analysis involves the characterization of a certain optimality manifold that ties the local geometry of the drift parameters to the optimal control of the diffusion process. We expect this technique to be of broader interest.

Visual Foresight With a Local Dynamics Model

Jun 29, 2022

Model-free policy learning has been shown to be capable of learning manipulation policies that can solve long-horizon tasks using single-step manipulation primitives. However, training these policies is a time-consuming process requiring large amounts of data. We propose the Local Dynamics Model (LDM), which efficiently learns the state-transition function for these manipulation primitives. By combining the LDM with model-free policy learning, we can learn policies that solve complex manipulation tasks using one-step lookahead planning. We show that the LDM is more sample-efficient than other model architectures and also outperforms them. When combined with planning, we can outperform other model-based and model-free policies on several challenging manipulation tasks in simulation.
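As a rough illustration of what one-step lookahead planning means in general, the sketch below scores each candidate action by the value of the state a dynamics model predicts for it. It is not the paper's implementation: `plan_one_step`, `toy_dynamics`, and `toy_value` are hypothetical names, and the 1-D integer state is a toy stand-in for a learned transition model and value estimate.

```python
def plan_one_step(state, actions, dynamics, value):
    """Return the action whose predicted next state has the highest value."""
    return max(actions, key=lambda a: value(dynamics(state, a)))


# Toy stand-ins (assumptions): a real system would use a learned
# state-transition model and a learned value function here.
def toy_dynamics(state, action):
    # Predicted next state for a 1-D position.
    return state + action


def toy_value(state):
    # Higher is better; the goal position is 3.
    return -abs(state - 3)


best_action = plan_one_step(0, [-1, 1, 2], toy_dynamics, toy_value)
```

The planner only needs a single model query per candidate action, which is why the quality of the transition model matters so much in this setting.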

AdaRNN: Adaptive Learning and Forecasting of Time Series

Aug 11, 2021

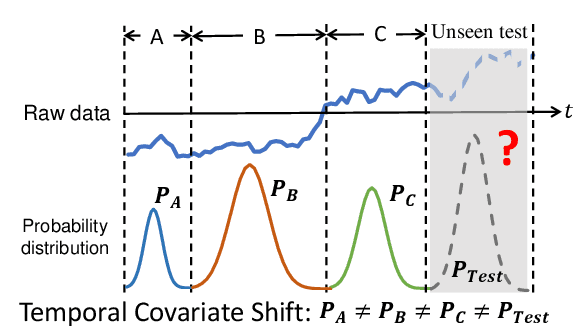

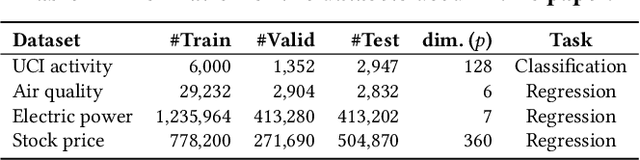

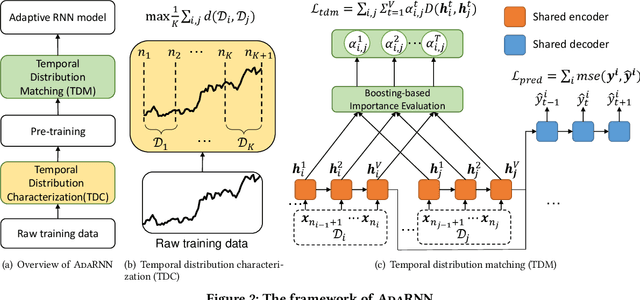

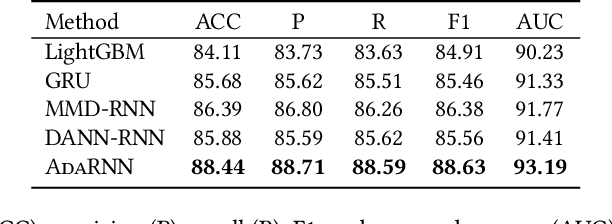

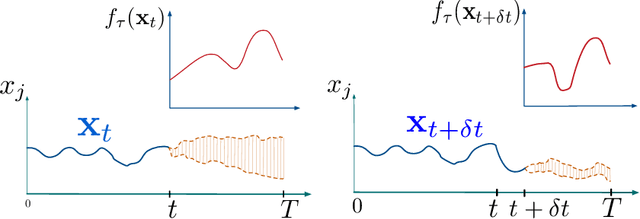

Time series have wide applications in the real world and are known to be difficult to forecast. Because their statistical properties change over time, their distribution also changes temporally, which causes a severe distribution shift problem for existing methods. However, modeling time series from a distribution perspective remains unexplored. In this paper, we term this problem Temporal Covariate Shift (TCS). This paper proposes Adaptive RNNs (AdaRNN) to tackle the TCS problem by building an adaptive model that generalizes well on unseen test data. AdaRNN is sequentially composed of two novel algorithms. First, we propose Temporal Distribution Characterization to better characterize the distribution information in the time series (TS). Second, we propose Temporal Distribution Matching to reduce the distribution mismatch in the TS and learn the adaptive TS model. AdaRNN is a general framework into which flexible distribution distances can be integrated. Experiments on human activity recognition, air quality prediction, and financial analysis show that AdaRNN outperforms the latest methods by a classification accuracy of 2.6% and significantly reduces the RMSE by 9.0%. We also show that the temporal distribution matching algorithm can be extended to the Transformer structure to boost its performance.
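To make the idea of a distribution distance between two periods of a series concrete, here is a minimal sketch using the squared maximum mean discrepancy (MMD) with a Gaussian kernel. The abstract does not say which distances are used, so the choice of MMD, the function name `mmd_squared`, and the `gamma` bandwidth are all assumptions made for illustration.

```python
import math


def mmd_squared(xs, ys, gamma=1.0):
    """Biased estimate of squared MMD between two 1-D samples (RBF kernel)."""
    def kernel(a, b):
        return math.exp(-gamma * (a - b) ** 2)

    k_xx = sum(kernel(a, b) for a in xs for b in xs) / (len(xs) ** 2)
    k_yy = sum(kernel(a, b) for a in ys for b in ys) / (len(ys) ** 2)
    k_xy = sum(kernel(a, b) for a in xs for b in ys) / (len(xs) * len(ys))
    return k_xx + k_yy - 2.0 * k_xy


# Two segments of the same series: the later one has drifted upward.
early_segment = [0.0, 0.1, 0.2, 0.1]
late_segment = [5.0, 5.1, 5.2, 5.1]
shift = mmd_squared(early_segment, late_segment)
```

A value near zero suggests the two segments look alike under the kernel, while a larger value indicates a shift between them.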

DRAGON: Decentralized Fault Tolerance in Edge Federations

Aug 16, 2022

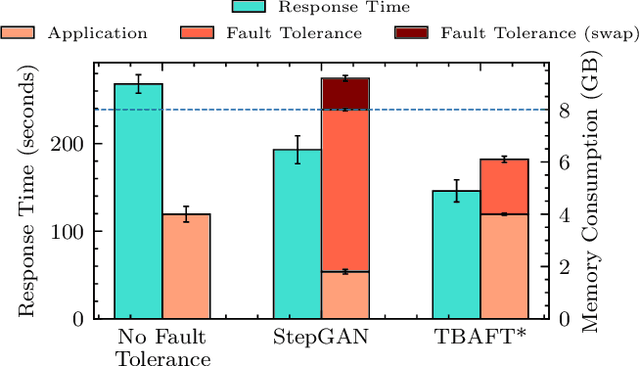

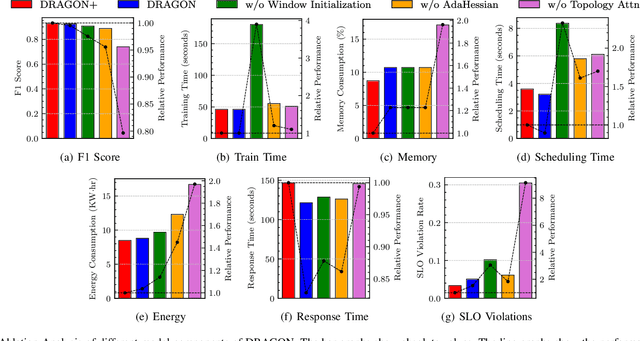

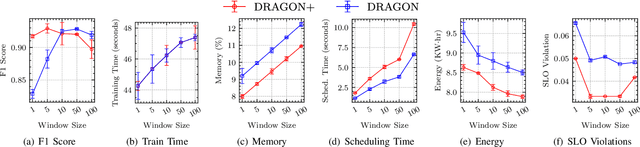

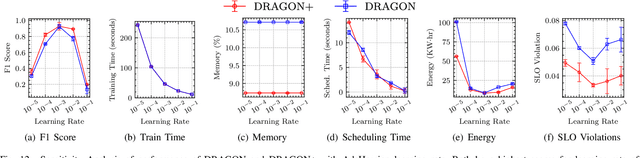

Edge Federation is a new computing paradigm that seamlessly interconnects the resources of multiple edge service providers. A key challenge in such systems is the deployment of latency-critical and AI based resource-intensive applications in constrained devices. To address this challenge, we propose a novel memory-efficient deep learning based model, namely generative optimization networks (GON). Unlike GANs, GONs use a single network to both discriminate input and generate samples, significantly reducing their memory footprint. Leveraging the low memory footprint of GONs, we propose a decentralized fault-tolerance method called DRAGON that runs simulations (as per a digital modeling twin) to quickly predict and optimize the performance of the edge federation. Extensive experiments with real-world edge computing benchmarks on multiple Raspberry-Pi based federated edge configurations show that DRAGON can outperform the baseline methods in fault-detection and Quality of Service (QoS) metrics. Specifically, the proposed method gives higher F1 scores for fault-detection than the best deep learning (DL) method, while consuming lower memory than the heuristic methods. This allows for improvement in energy consumption, response time and service level agreement violations by up to 74, 63 and 82 percent, respectively.

From WSI-level to Patch-level: Structure Prior Guided Binuclear Cell Fine-grained Detection

Aug 26, 2022

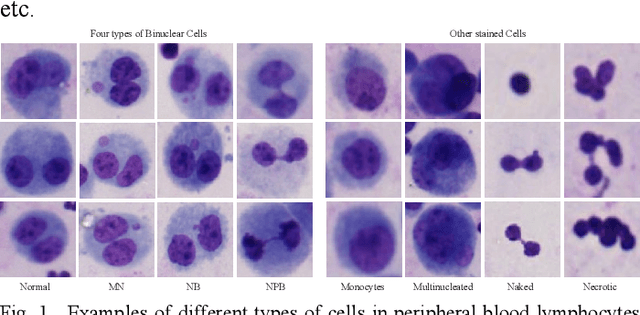

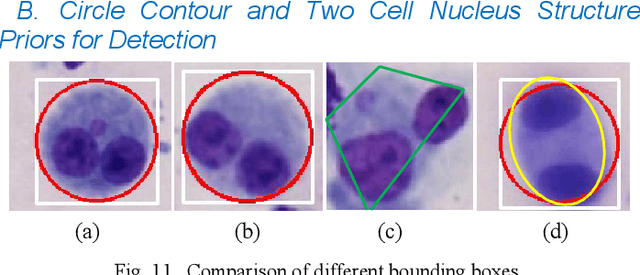

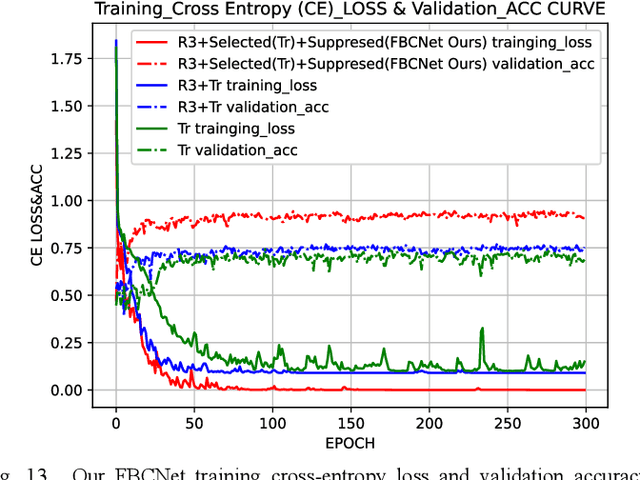

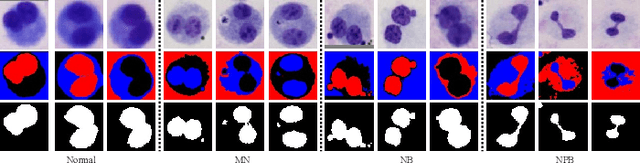

Accurate and fast binuclear cell (BC) detection plays a significant role in predicting the risk of leukemia and other malignant tumors. However, manual microscopy counting is time-consuming and lacks objectivity. Moreover, given the limited staining quality and the diversity of morphological features in BC microscopy whole slide images (WSIs), traditional image processing approaches are ineffective. To overcome this challenge, we propose a two-stage deep learning detection method inspired by the structure prior of BCs, which cascades coarse BC detection at the WSI level with fine-grained classification at the patch level. The coarse detection network is a multi-task detection framework based on circular bounding boxes for cell detection and central key points for nucleus detection. Compared to the usual rectangular boxes, the circle representation reduces the degrees of freedom, mitigates the effect of surrounding impurities, and can be rotation invariant in WSIs. Detecting key points in the nucleus can assist network perception and can be used for unsupervised color layer segmentation in the later fine-grained classification. The fine classification network consists of a background region suppression module based on color layer mask supervision and a key region selection module based on a transformer, chosen for its global modeling capability. Additionally, an unsupervised and unpaired cytoplasm generator network is proposed for the first time to expand the long-tailed distribution dataset. Finally, experiments are performed on BC multicenter datasets. The proposed BC fine detection method outperforms other benchmarks in almost all the evaluation criteria, providing clarification and support for tasks such as cancer screenings.
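One way to see why a circle has fewer degrees of freedom than a rectangle is to write out an overlap measure for it: a circle needs only a center and a radius, and its overlap with another circle does not change under rotation. The sketch below computes intersection over union (IoU) for two circles from the standard lens-area formula. The function name `circle_iou` and the `(cx, cy, r)` tuple layout are assumptions, and the abstract does not state that this exact measure is used.

```python
import math


def circle_iou(c1, c2):
    """IoU of two circles, each given as (center_x, center_y, radius)."""
    x1, y1, r1 = c1
    x2, y2, r2 = c2
    d = math.hypot(x2 - x1, y2 - y1)
    area1 = math.pi * r1 ** 2
    area2 = math.pi * r2 ** 2

    if d >= r1 + r2:
        inter = 0.0  # circles are disjoint or touch at a single point
    elif d <= abs(r1 - r2):
        inter = min(area1, area2)  # one circle lies inside the other
    else:
        # Lens area: sum of the two circular segments cut by the common chord.
        alpha = math.acos((d * d + r1 * r1 - r2 * r2) / (2 * d * r1))
        beta = math.acos((d * d + r2 * r2 - r1 * r1) / (2 * d * r2))
        inter = (r1 * r1 * (alpha - math.sin(2 * alpha) / 2)
                 + r2 * r2 * (beta - math.sin(2 * beta) / 2))

    union = area1 + area2 - inter
    return inter / union if union > 0 else 0.0


overlap = circle_iou((0.0, 0.0, 1.0), (1.0, 0.0, 1.0))
```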

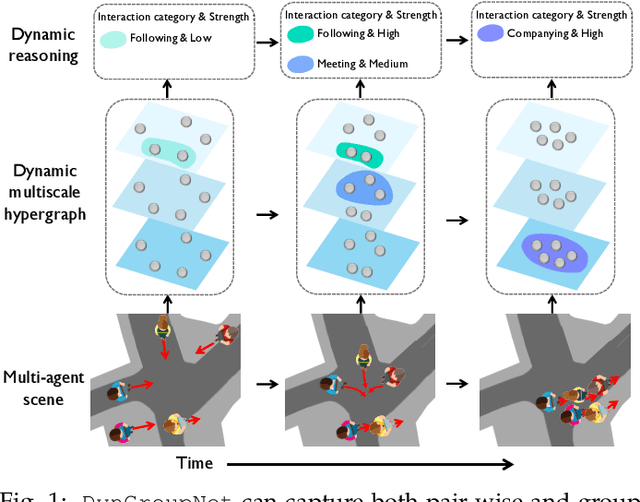

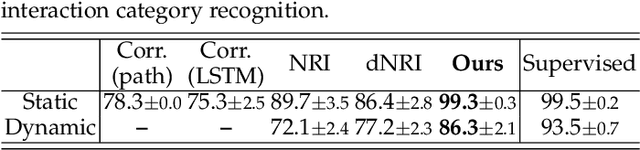

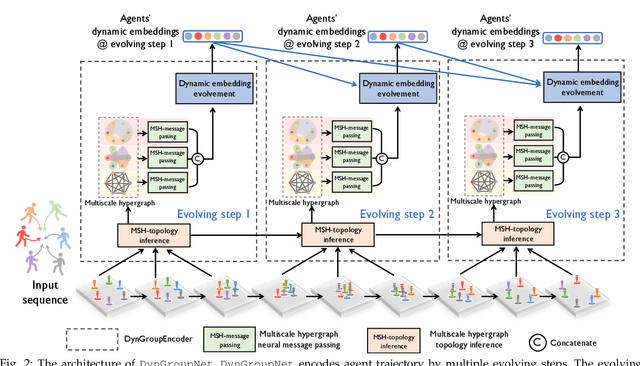

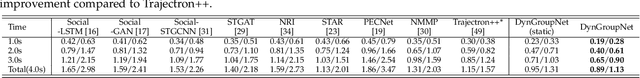

Dynamic-Group-Aware Networks for Multi-Agent Trajectory Prediction with Relational Reasoning

Jun 27, 2022

Demystifying the interactions among multiple agents from their past trajectories is fundamental to precise and interpretable trajectory prediction. However, previous works mainly consider static, pair-wise interactions with limited relational reasoning. To promote more comprehensive interaction modeling and relational reasoning, we propose DynGroupNet, a dynamic-group-aware network, which can i) model time-varying interactions in highly dynamic scenes; ii) capture both pair-wise and group-wise interactions; and iii) reason about both interaction strength and category without direct supervision. Based on DynGroupNet, we further design a prediction system to forecast socially plausible trajectories with dynamic relational reasoning. The proposed prediction system leverages the Gaussian mixture model, multiple sampling and prediction refinement to promote prediction diversity, training stability and trajectory smoothness, respectively. Extensive experiments show that: 1) DynGroupNet can capture time-varying group behaviors and infer time-varying interaction category and interaction strength during trajectory prediction without any relation supervision on physical simulation datasets; 2) DynGroupNet outperforms the state-of-the-art trajectory prediction methods by a significant improvement of 22.6%/28.0%, 26.9%/34.9%, 5.1%/13.0% in ADE/FDE on the NBA, NFL Football and SDD datasets, respectively, and achieves state-of-the-art performance on the ETH-UCY dataset.
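The ADE/FDE figures above refer to average displacement error and final displacement error, the two standard trajectory prediction metrics. A minimal sketch for a single agent is shown below; the function name `ade_fde` and the list-of-points input format are assumptions, and benchmark protocols often add details (such as taking the best of several sampled predictions) that are not modeled here.

```python
import math


def ade_fde(predicted, ground_truth):
    """Return (ADE, FDE) for one trajectory given as a list of (x, y) points."""
    if not predicted or len(predicted) != len(ground_truth):
        raise ValueError("trajectories must be non-empty and of equal length")
    # Euclidean distance between prediction and truth at every time step.
    errors = [math.dist(p, g) for p, g in zip(predicted, ground_truth)]
    ade = sum(errors) / len(errors)  # mean error over all time steps
    fde = errors[-1]                 # error at the final time step only
    return ade, fde


pred = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
truth = [(0.0, 0.0), (1.0, 1.0), (2.0, 2.0)]
ade, fde = ade_fde(pred, truth)
```

FDE is usually the larger of the two because prediction errors tend to accumulate toward the end of the horizon.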

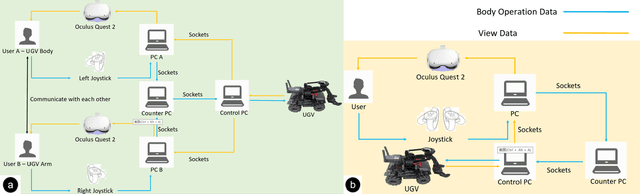

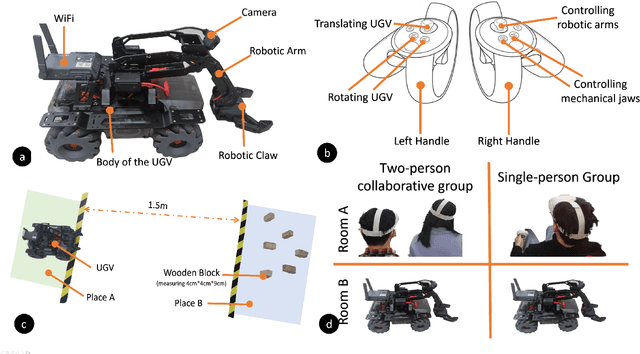

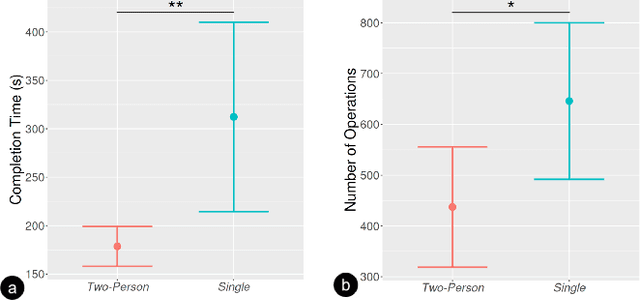

Collaborative Remote Control of Unmanned Ground Vehicles in Virtual Reality

Aug 24, 2022

Virtual reality (VR) technology is commonly used in entertainment applications; however, it has also been deployed in practical applications in more serious aspects of our lives, such as safety. To support people working in dangerous industries, VR can ensure that operators perform standardized tasks and work collaboratively to deal with potential risks. Surprisingly, little research has focused on how people can work collaboratively in VR environments, and few studies have paid attention to the cognitive load of operators in their collaborative tasks. Once task demands become complex, many researchers focus on optimizing the design of the interaction interfaces to reduce the cognitive load on the operator. That approach could be of merit; however, it can actually subject operators to a more significant cognitive load, potentially leading to more errors and a failure of collaboration. In this paper, we propose a new collaborative VR system that supports two teleoperators working in a VR environment to remotely control an uncrewed ground vehicle. We use a comparative experiment to evaluate the collaborative VR system, focusing on the time spent on tasks and the total number of operations. Our results show that the total number of processes and the cognitive load during operations were significantly lower in the two-person group than in the single-person group. Our study sheds light on designing VR systems that support collaborative work with respect to the workflow of teleoperators instead of simply optimizing the design outcomes.

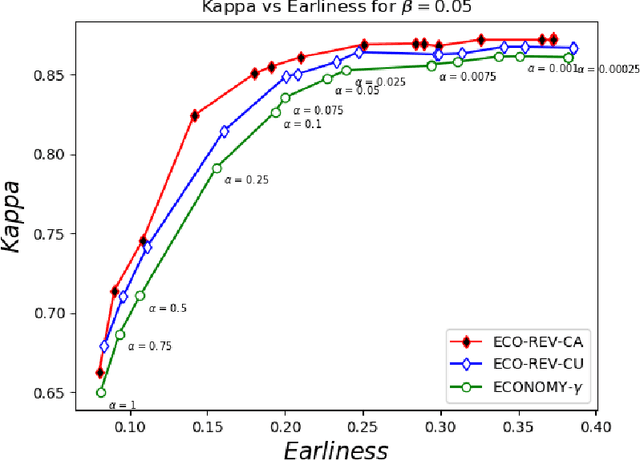

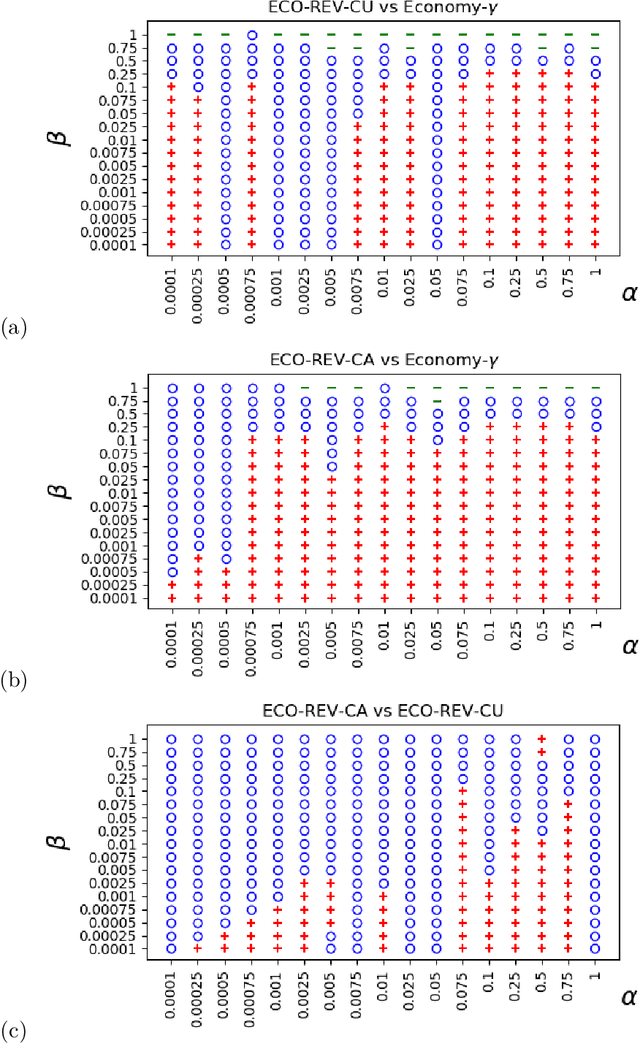

Early and Revocable Time Series Classification

Sep 22, 2021

Many approaches have been proposed for early classification of time series in light of its significance in a wide range of applications including healthcare, transportation and finance. Until now, the early classification problem has been dealt with by considering only irrevocable decisions. This paper introduces a new problem called early and revocable time series classification, where the decision maker can revoke its earlier decisions based on the new available measurements. In order to formalize and tackle this problem, we propose a new cost-based framework and derive two new approaches from it. The first approach does not consider explicitly the cost of changing decision, while the second one does. Extensive experiments are conducted to evaluate these approaches on a large benchmark of real datasets. The empirical results obtained convincingly show (i) that the ability of revoking decisions significantly improves performance over the irrevocable regime, and (ii) that taking into account the cost of changing decision brings even better results in general.

Keywords: revocable decisions, cost estimation, online decision making

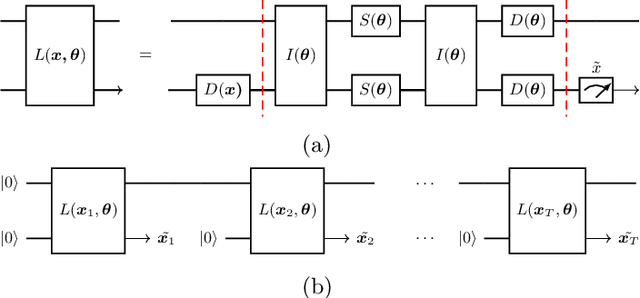

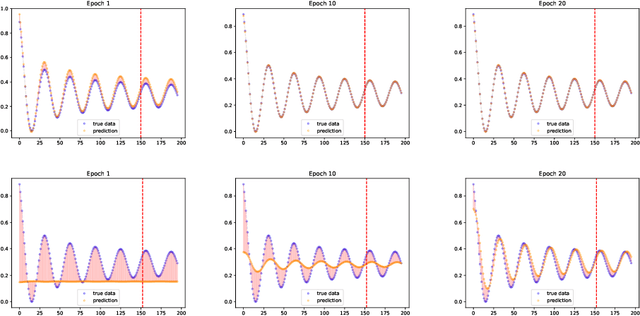

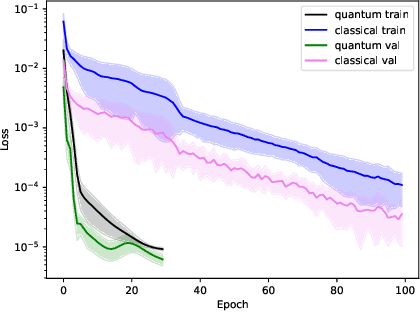

Rapid training of quantum recurrent neural network

Jul 01, 2022

Time series prediction is a crucial task for many human activities, e.g., weather forecasting or stock price prediction. One solution to this problem is to use Recurrent Neural Networks (RNNs). Although they can yield accurate predictions, their learning process is slow and complex. Here we propose a Quantum Recurrent Neural Network (QRNN) to address these obstacles. The design of the network is based on the continuous-variable quantum computing paradigm. We demonstrate that the network is capable of learning the time dependence of a few types of temporal data. Our numerical simulations show that the QRNN converges to optimal weights in fewer epochs than the classical network. Furthermore, for a small number of trainable parameters, it can achieve lower loss than the classical network.

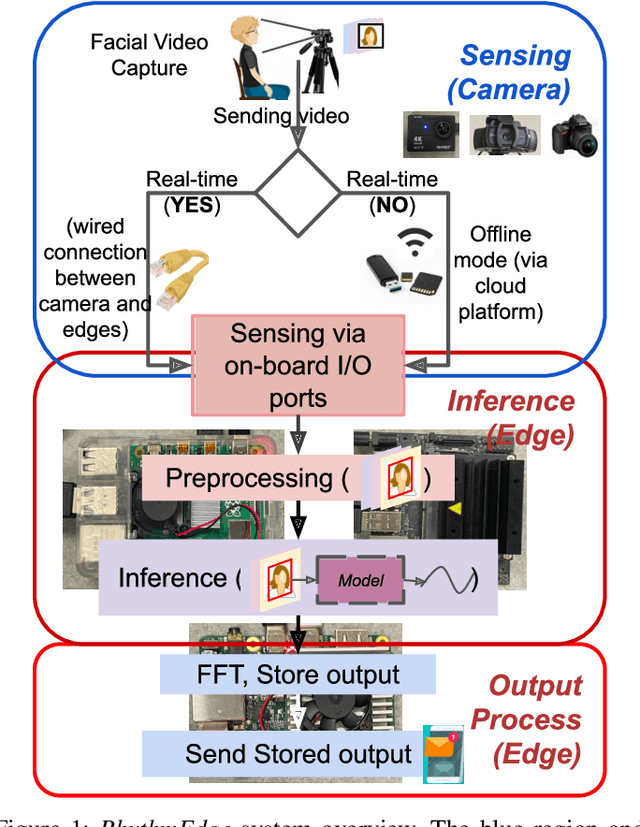

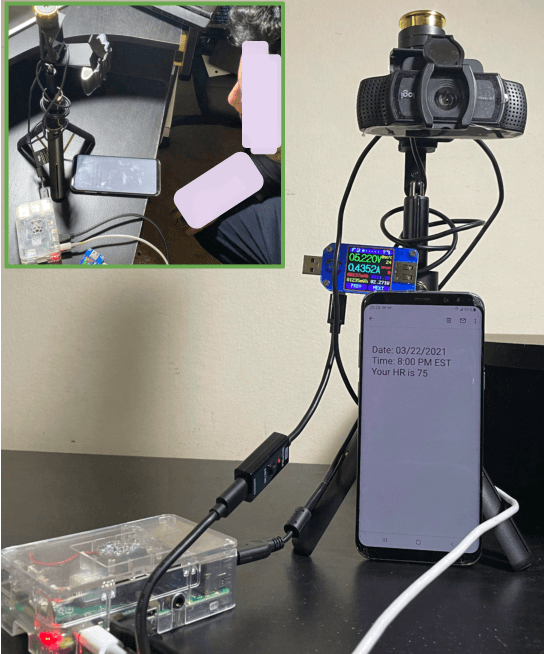

Demo: RhythmEdge: Enabling Contactless Heart Rate Estimation on the Edge

Aug 13, 2022

In this demo paper, we design and prototype RhythmEdge, a low-cost, deep-learning-based contactless system for regular heart rate (HR) monitoring applications. Compared with existing approaches, RhythmEdge offers contactless operation, real-time or offline processing, and inexpensive, readily available sensing components and computing devices. Our RhythmEdge system is portable and easily deployable for reliable HR estimation in moderately controlled indoor or outdoor environments. RhythmEdge measures HR by detecting changes in blood volume from facial videos (remote photoplethysmography; rPPG) and provides instant assessment using off-the-shelf, commercially available, resource-constrained edge platforms and video cameras. We demonstrate the scalability, flexibility, and compatibility of RhythmEdge by deploying it on three resource-constrained platforms of differing architectures (NVIDIA Jetson Nano, Google Coral Development Board, Raspberry Pi) and three heterogeneous cameras of differing sensitivity, resolution, and properties (web camera, action camera, and DSLR). RhythmEdge further stores longitudinal cardiovascular information and provides instant notification to the users. We thoroughly test the prototype's stability, latency, and feasibility on the three edge computing platforms by profiling their runtime, memory, and power usage.
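For readers unfamiliar with rPPG, the sketch below shows the basic signal-processing idea in its simplest classical form: treat a per-frame brightness trace from the face as a pulse signal and pick the strongest frequency in a plausible heart rate band. This is not RhythmEdge's deep-learning pipeline. The function name `estimate_hr_bpm`, the 0.7 to 4.0 Hz band, the 0.01 Hz scan step, and the synthetic input trace are all assumptions chosen for illustration.

```python
import math


def estimate_hr_bpm(trace, fps, lo_hz=0.7, hi_hz=4.0, step_hz=0.01):
    """Estimate heart rate as the dominant frequency of a pulse trace."""
    mean = sum(trace) / len(trace)
    centered = [v - mean for v in trace]  # remove the constant (DC) offset

    best_freq, best_power = lo_hz, -1.0
    steps = int(round((hi_hz - lo_hz) / step_hz))
    for k in range(steps + 1):
        freq = lo_hz + k * step_hz
        w = 2 * math.pi * freq / fps
        # Power of the trace at this frequency (direct Fourier sum).
        re = sum(c * math.cos(w * i) for i, c in enumerate(centered))
        im = sum(c * math.sin(w * i) for i, c in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_freq, best_power = freq, power
    return best_freq * 60.0  # Hz to beats per minute


# Synthetic 10-second trace at 30 frames per second with a 1.2 Hz pulse.
fps = 30
trace = [100.0 + 0.5 * math.sin(2 * math.pi * 1.2 * i / fps) for i in range(300)]
bpm = estimate_hr_bpm(trace, fps)
```

Real facial video adds motion and lighting noise, which is one reason learned models are used instead of a plain spectral peak.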
