"Time": models, code, and papers

SALAD: Self-Adaptive Lightweight Anomaly Detection for Real-time Recurrent Time Series

Apr 19, 2021

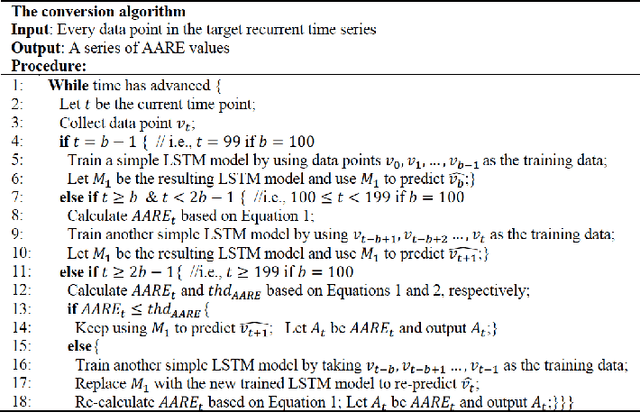

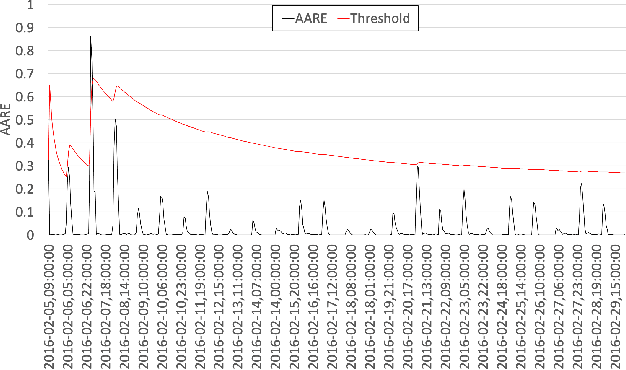

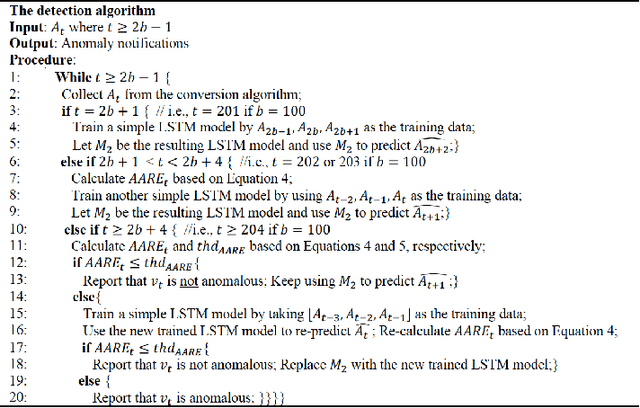

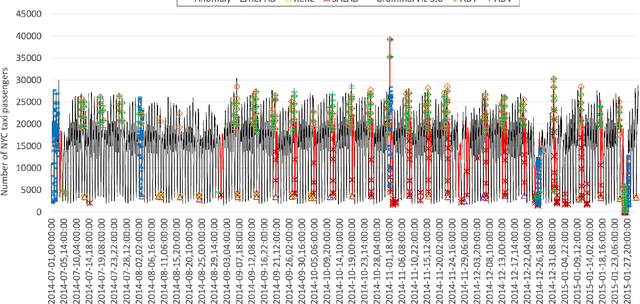

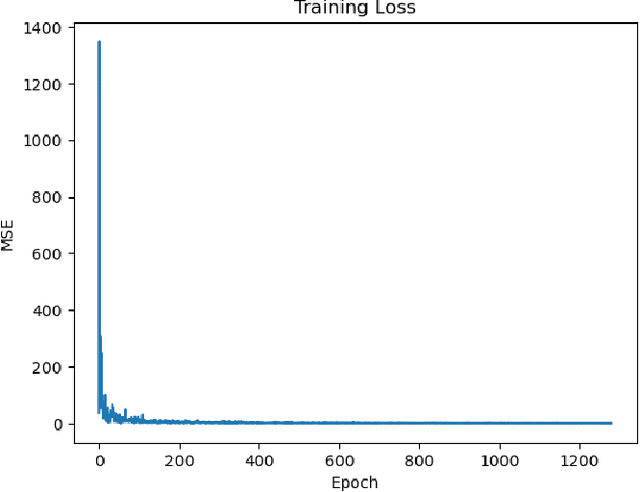

Real-world time series data often present recurrent or repetitive patterns and are often generated in real time, such as transportation passenger volume, network traffic, system resource consumption, energy usage, and human gait. Detecting anomalous events in such time series data with machine learning approaches has been an active research topic in many different areas. However, most machine learning approaches require labeled datasets and offline training, and may suffer from high computational complexity, which hinders their applicability. A lightweight self-adaptive approach that needs no offline training in advance and can still detect anomalies in real time could be highly beneficial. Such an approach could be immediately applied and deployed on any commodity machine to provide timely anomaly alerts. To facilitate such an approach, this paper introduces SALAD, a Self-Adaptive Lightweight Anomaly Detection approach based on a special type of recurrent neural network called Long Short-Term Memory (LSTM). Instead of using offline training, SALAD converts a target time series into a series of average absolute relative error (AARE) values on the fly and predicts an AARE value for every upcoming data point based on short-term historical AARE values. If the difference between a calculated AARE value and its corresponding forecast AARE value is higher than a self-adaptive detection threshold, the corresponding data point is considered anomalous. Otherwise, the data point is considered normal. Experiments based on two real-world open-source time series datasets demonstrate that SALAD outperforms five other state-of-the-art anomaly detection approaches in terms of detection accuracy. In addition, the results show that SALAD is lightweight and can be deployed on a commodity machine.
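
The decision rule in the SALAD abstract can be illustrated with a minimal sketch. The abstract does not say how the self-adaptive threshold is computed, so the form used here (mean plus `k` standard deviations of recent differences) is an assumption, as are the function names and the default `k=3.0`. The LSTM that forecasts AARE values is left out; the forecast is passed in as a plain number.

```python
from statistics import mean, pstdev


def aare(actual, predicted):
    """Average absolute relative error over paired values.

    Assumes every actual value is non-zero.
    """
    return mean(abs(a - p) / abs(a) for a, p in zip(actual, predicted))


def is_anomalous(calculated_aare, forecast_aare, recent_diffs, k=3.0):
    """Flag a data point when |calculated - forecast| exceeds a threshold.

    The threshold (mean + k * std of recent differences) is an assumed,
    illustrative choice, not taken from the paper.
    """
    threshold = mean(recent_diffs) + k * pstdev(recent_diffs)
    return abs(calculated_aare - forecast_aare) > threshold


# Hypothetical values for illustration only.
window_aare = aare([10.0, 20.0], [9.0, 22.0])
flag = is_anomalous(0.5, 0.1, [0.01, 0.02, 0.03])
```

Because the threshold is recomputed from recent differences, it moves with the series instead of being fixed in advance, which is the sense of "self-adaptive" assumed in this sketch.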

Exploring the Impact of Temporal Bias in Point-of-Interest Recommendation

Jul 23, 2022

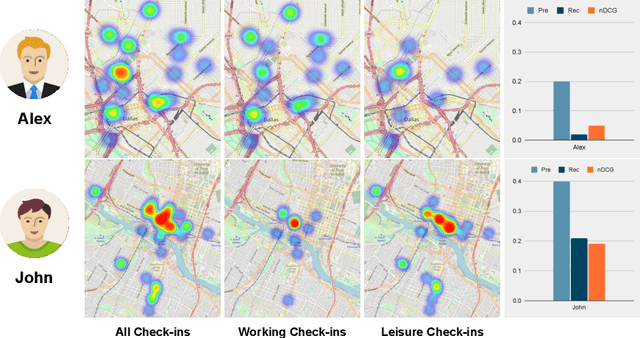

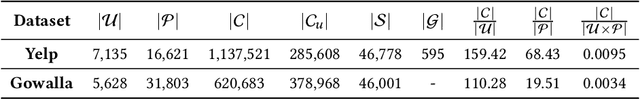

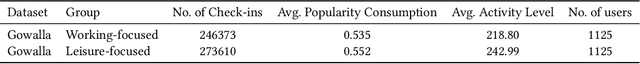

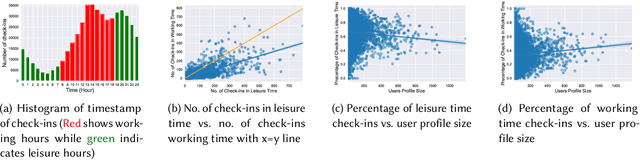

Recommending appropriate travel destinations to consumers based on contextual information such as their check-in time and location is a primary objective of Point-of-Interest (POI) recommender systems. However, the issue of contextual bias (i.e., how much consumers prefer one situation over another) has received little attention from the research community. This paper examines the effect of temporal bias, defined as the difference between users' check-in hours (leisure vs. work hours), on the consumer-side fairness of context-aware recommendation algorithms. We believe that eliminating this type of temporal (and geographical) bias might contribute to a drop in traffic-related air pollution, noting that rush-hour traffic may be more congested. To surface effective POI recommendations, we evaluated the sensitivity of state-of-the-art context-aware models to the temporal bias contained in users' check-in activities on two POI datasets, namely Gowalla and Yelp. The findings show that the examined context-aware recommendation models prefer one group of users over another based on the time of check-in and that this preference persists even when users have the same number of interactions.

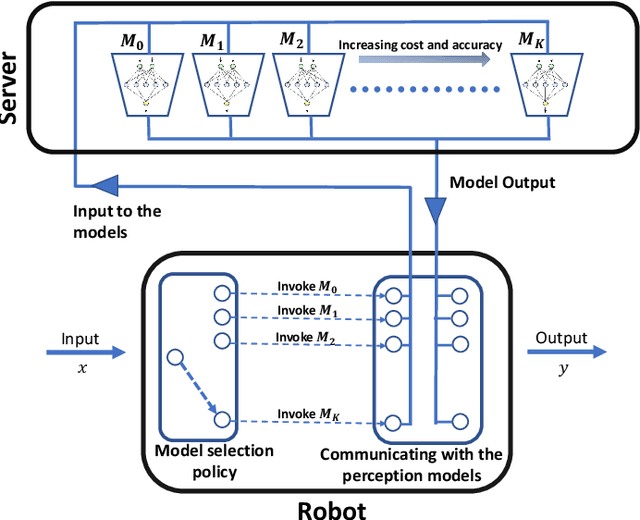

Dynamic Selection of Perception Models for Robotic Control

Jul 13, 2022

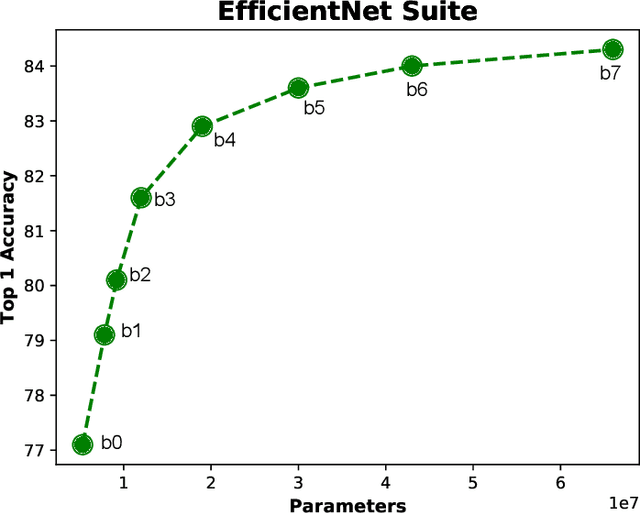

Robotic perception models, such as Deep Neural Networks (DNNs), are becoming more computationally intensive, and several models are being trained with different accuracy and latency trade-offs. However, existing latency-accuracy trade-off studies largely report mean accuracy for single-step vision tasks, and there is little work showing which model to invoke for multi-step control tasks in robotics. The key challenge in multi-step decision making is to use the right models at the right times to accomplish the given task. That is, accomplishing the task with minimum control cost and minimum perception time is a desideratum; this is known as the model selection problem. In this work, we address precisely this problem of invoking the correct sequence of perception models for multi-step control. In other words, we provide a provably optimal solution to the model selection problem by casting it as a multi-objective optimization problem balancing the control cost and perception time. The key insight obtained from our solution is that the variance of the perception models matters (not just the mean accuracy) for multi-step decision making; we also show how to use diverse perception models as a primitive for energy-efficient robotics. Further, we demonstrate our approach on a photo-realistic drone landing simulation using visual navigation in AirSim. Using our proposed policy, we achieved 38.04% lower control cost with 79.1% less perception time than other competing benchmarks.

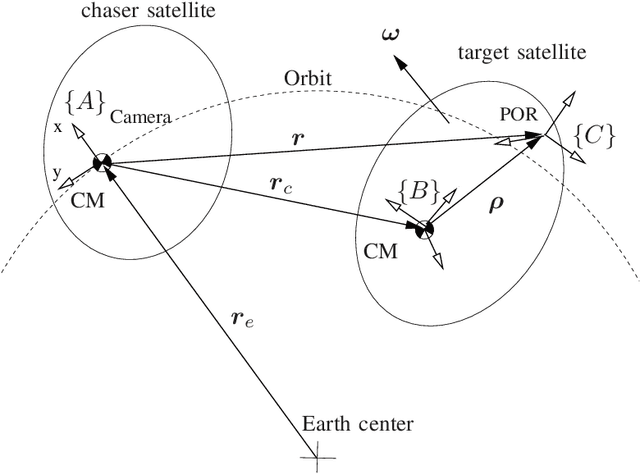

Robust 3D Vision for Autonomous Robots

Aug 30, 2022

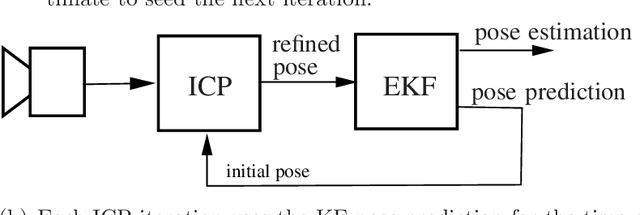

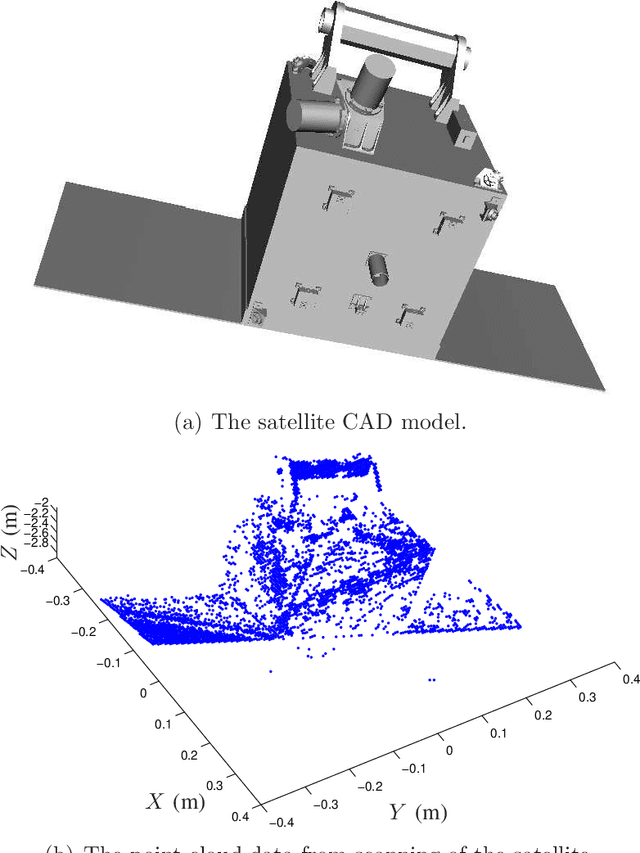

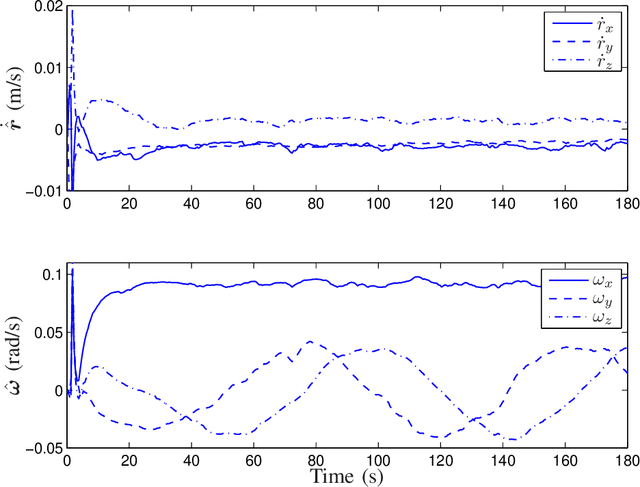

This paper presents a fault-tolerant 3D vision system for autonomous robotic operation. In particular, pose estimation of space objects is achieved using 3D vision data in an integrated Kalman filter (KF) and an Iterative Closest Point (ICP) algorithm in a closed-loop configuration. The initial guess for the internal ICP iteration is provided by the state estimate propagation of the Kalman filter. The Kalman filter is capable of estimating not only the target's states but also its inertial parameters. This allows the motion of the target to be predictable as soon as the filter converges. Consequently, the ICP can maintain pose tracking over a wider range of velocity due to the increased precision of ICP initialization. Furthermore, incorporating the target's dynamics model in the estimation process allows the estimator to continuously provide pose estimates even when the sensor temporarily loses its signal, for example due to obstruction. The capabilities of the pose estimation methodology are demonstrated on a ground testbed for Automated Rendezvous & Docking. In this experiment, Neptec's Laser Camera System (LCS) is used for real-time scanning of a satellite model attached to a manipulator arm, which is driven by a simulator according to orbital and attitude dynamics. The results showed that robust tracking of the free-floating tumbling satellite can be achieved only if the Kalman filter and ICP are in a closed-loop configuration.

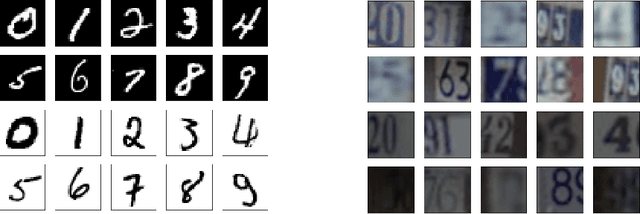

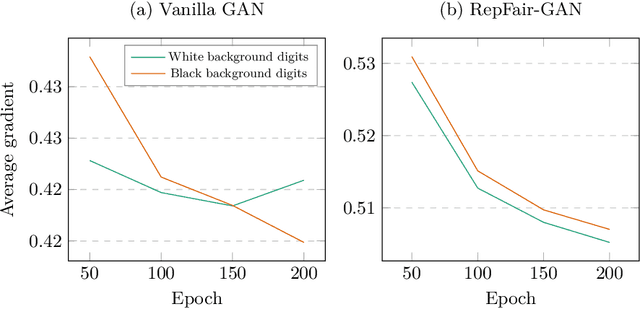

RepFair-GAN: Mitigating Representation Bias in GANs Using Gradient Clipping

Jul 13, 2022

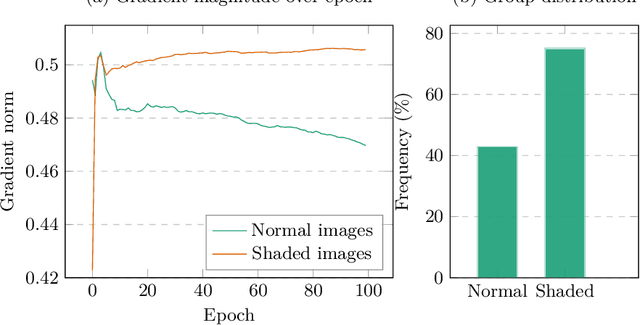

Fairness has become an essential problem in many domains of Machine Learning (ML), such as classification, natural language processing, and Generative Adversarial Networks (GANs). In this research effort, we study the unfairness of GANs. We formally define a new fairness notion for generative models in terms of the distribution of generated samples sharing the same protected attributes (gender, race, etc.). The defined fairness notion (representational fairness) requires the distribution of the sensitive attributes at test time to be uniform. For GAN models in particular, we show that this fairness notion is violated even when the dataset contains equally represented groups, i.e., the generator favors generating one group of samples over the others at test time. In this work, we shed light on the source of this representation bias in GANs and present a straightforward method to overcome this problem. We first show on two widely used datasets (MNIST, SVHN) that when the norm of the gradient of one group is more important than the other during the discriminator's training, the generator favors sampling data from one group more than the other at test time. We then show that controlling the groups' gradient norm by performing group-wise gradient norm clipping in the discriminator during training leads to fairer data generation in terms of representational fairness compared to existing models, while preserving the quality of generated samples.
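
Group-wise gradient norm clipping, as named in the RepFair-GAN abstract, can be sketched in a few lines. This is an illustration on plain Python lists rather than a GAN training loop. The function names and the `max_norm` parameter are hypothetical, and summing the clipped per-group gradients into one update is an assumption, since the abstract does not say how they are combined.

```python
import math


def clip_by_norm(grad, max_norm):
    """Rescale a gradient vector so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    if norm <= max_norm:
        return list(grad)
    scale = max_norm / norm
    return [g * scale for g in grad]


def clipped_group_update(group_grads, max_norm):
    """Clip each group's gradient separately, then sum the results.

    group_grads maps a group label to that group's gradient vector.
    Summing the clipped gradients is an assumed aggregation rule.
    """
    clipped = [clip_by_norm(g, max_norm) for g in group_grads.values()]
    return [sum(parts) for parts in zip(*clipped)]


# Hypothetical gradients: group "a" dominates before clipping.
update = clipped_group_update({"a": [3.0, 4.0], "b": [0.3, 0.4]}, max_norm=1.0)
```

Clipping per group bounds how much any single group can contribute to the discriminator update, which is the mechanism the abstract credits for more balanced generation.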

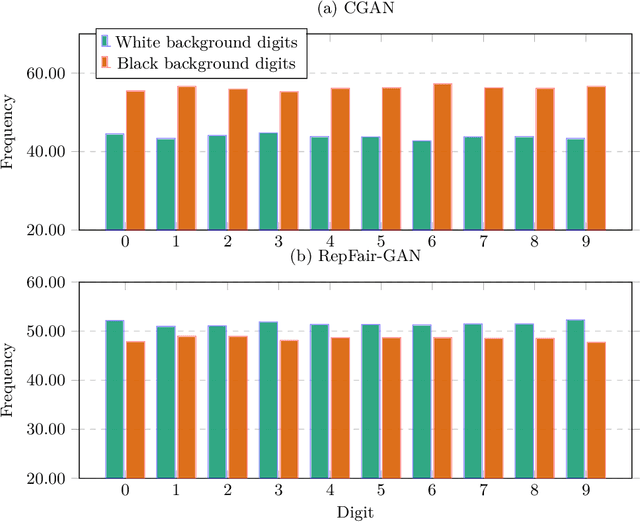

PromptFL: Let Federated Participants Cooperatively Learn Prompts Instead of Models -- Federated Learning in Age of Foundation Model

Aug 24, 2022

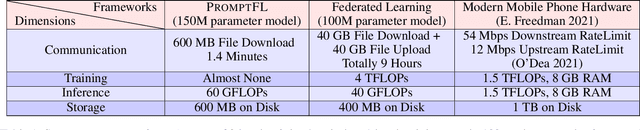

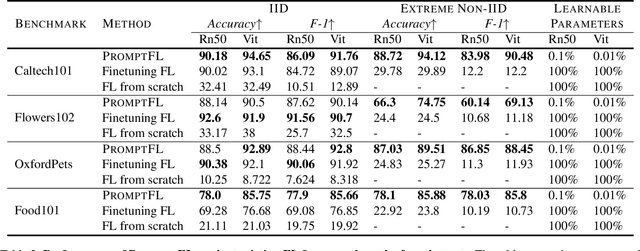

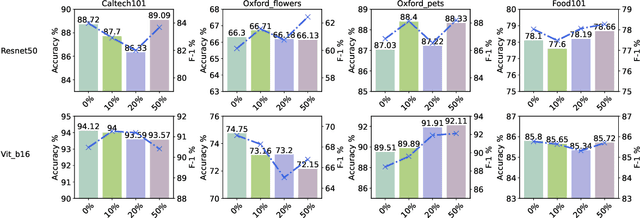

Quick global aggregation of effective distributed parameters is crucial to federated learning (FL), which requires adequate bandwidth for parameter communication and sufficient user data for local training. Otherwise, FL may require excessive training time to converge and produce inaccurate models. In this paper, we propose a brand-new FL framework, PromptFL, that replaces federated model training with federated prompt training, i.e., federated participants train prompts instead of a shared model, to simultaneously achieve efficient global aggregation and local training on insufficient data by exploiting the power of foundation models (FMs) in a distributed way. PromptFL ships an off-the-shelf FM, i.e., CLIP, to distributed clients, who cooperatively train shared soft prompts based on very little local data. Since PromptFL only needs to update the prompts instead of the whole model, both the local training and the global aggregation can be significantly accelerated. In addition, an FM trained over large-scale data can provide strong adaptation capability to distributed users' tasks with the trained soft prompts. We empirically analyze PromptFL via extensive experiments and show its superiority in terms of system feasibility, user privacy, and performance.
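
The aggregation step that PromptFL applies to prompts instead of full models can be sketched as a weighted average. The abstract does not specify the aggregation rule, so the FedAvg-style weighting by local sample count is an assumption, and the function name and arguments are hypothetical. Soft prompts are represented here as flat lists of floats.

```python
def aggregate_prompts(client_prompts, client_sizes):
    """Average client soft-prompt vectors, weighted by local sample count.

    The sample-count weighting is an assumed, FedAvg-style choice.
    """
    total = sum(client_sizes)
    dim = len(client_prompts[0])
    return [
        sum(p[i] * n for p, n in zip(client_prompts, client_sizes)) / total
        for i in range(dim)
    ]


# Two hypothetical clients holding 1 and 3 local samples.
global_prompt = aggregate_prompts([[1.0, 2.0], [3.0, 4.0]], [1, 3])
```

Only the prompt vector is exchanged in this sketch, so the communicated payload is the size of the prompt rather than the size of the foundation model.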

Real-time Recognition of Yoga Poses using computer Vision for Smart Health Care

Jan 19, 2022

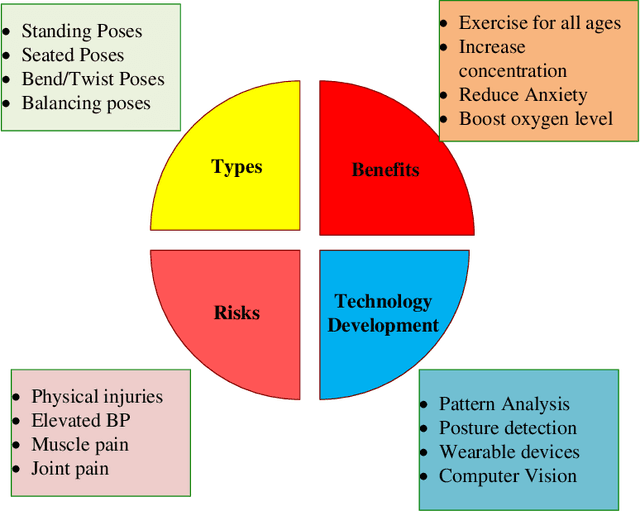

Nowadays, yoga has become a part of life for many people. Technological assistance for exercise and sports is applied here to yoga pose identification. In this work, a self-assistance-based yoga posture identification technique is developed, which helps users perform yoga with a real-time correction feature. The work also presents identification of yoga hand mudras (hand gestures). The YOGI dataset has been developed, which includes 10 yoga postures with around 400-900 images of each pose and 5 mudras with around 500 images of each mudra. Features are extracted by building a skeleton on the body for yoga poses and on the hand for mudra poses. Two different algorithms are used to create the skeletons, one for yoga poses and one for hand mudras. The joint angles are extracted as features for different machine learning and deep learning models. Among all the models, XGBoost with RandomSearch CV is the most accurate, with 99.2% accuracy. The complete design framework is described in the present paper.
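
The joint-angle features mentioned in the abstract can be computed from three skeleton keypoints. The sketch below assumes 2D `(x, y)` keypoints and measures the angle at the middle point. The function name and the example coordinates are hypothetical, and the paper's skeleton extraction algorithms are not reproduced.

```python
import math


def joint_angle(a, b, c):
    """Angle in degrees at keypoint b, formed by segments b->a and b->c.

    Assumes a and c are both distinct from b.
    """
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    norms = math.hypot(*v1) * math.hypot(*v2)
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    cosine = max(-1.0, min(1.0, dot / norms))
    return math.degrees(math.acos(cosine))


# Hypothetical shoulder, elbow, and wrist keypoints forming a right angle.
elbow_angle = joint_angle((1.0, 0.0), (0.0, 0.0), (0.0, 1.0))
```

A vector of such angles, one per joint of interest, could then serve as the input to a classifier.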

Short Duration Traffic Flow Prediction Using Kalman Filtering

Aug 06, 2022

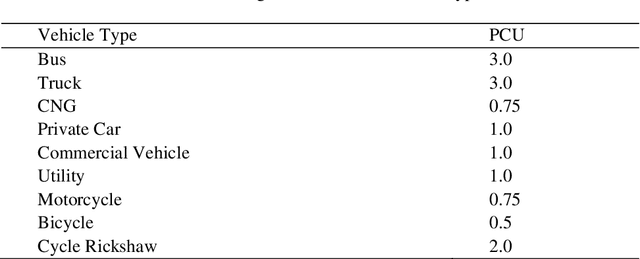

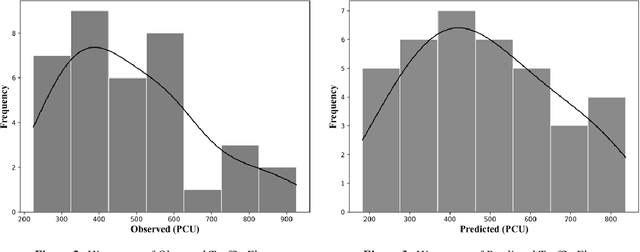

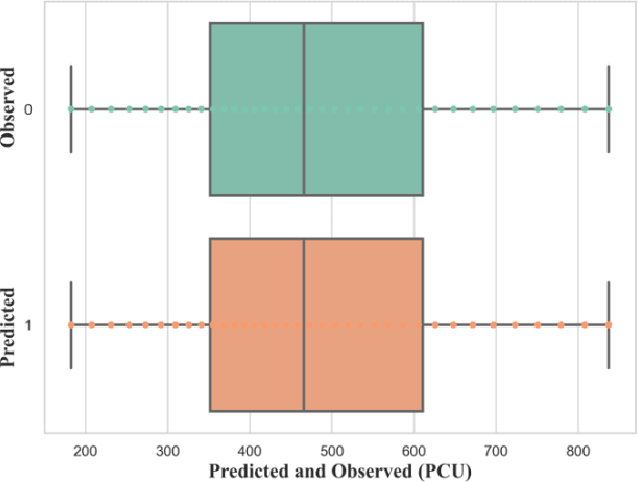

The research examined predicting short-duration traffic flow counts with the Kalman filtering technique (KFT), a computational filtering method. Short-term traffic prediction is an important tool for traffic management and transportation system operation. The short-term traffic flow results can be used for travel time estimation by route guidance and advanced traveler information systems. Though the KFT has been tested for homogeneous traffic, its efficiency in heterogeneous traffic has yet to be investigated. The research was conducted on Mirpur Road in Dhaka, near the Sobhanbagh Mosque. The stream contains a heterogeneous mix of traffic, which implies uncertainty in prediction. The proposed method is implemented in Python using the pykalman library. The library is mostly used in advanced database modeling in the KFT framework, which addresses uncertainty. The data was derived from a three-hour vehicle traffic count. Following the Geometric Design Standards Manual published by the Roads and Highways Division (RHD), Bangladesh in 2005, the heterogeneous traffic flow value was translated into equivalent passenger car units (PCU). The PCU obtained from five-minute aggregation was then used as the proposed model's dataset. The proposed model has a mean absolute percent error (MAPE) of 14.62, indicating that the KFT model can forecast reasonably well. The root mean square percent error (RMSPE) is 18.73%, which is below 25%; hence the model is acceptable. The developed model has an R² value of 0.879, indicating that it can explain 87.9 percent of the variability in the dataset. If the data were collected over a more extended period of time, the R² value could be closer to 1.0.
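
The filtering step and the two error metrics reported in the abstract can be illustrated with a small hand-written sketch. The paper uses the pykalman library; the code below does not call pykalman and instead implements a scalar Kalman filter with a random-walk state model, which is an assumption about the model form. The noise variances `q` and `r`, the initial values, and the sample numbers are all hypothetical.

```python
import math


def kalman_1d(observations, q, r, x0, p0):
    """Scalar Kalman filter with an assumed random-walk state model.

    q: process noise variance, r: measurement noise variance,
    x0, p0: initial state estimate and its variance.
    """
    x, p = x0, p0
    estimates = []
    for z in observations:
        p = p + q                 # predict: state unchanged, uncertainty grows
        gain = p / (p + r)        # Kalman gain
        x = x + gain * (z - x)    # update with the new observation
        p = (1.0 - gain) * p
        estimates.append(x)
    return estimates


def mape(actual, forecast):
    """Mean absolute percent error, in percent (actual values non-zero)."""
    return 100.0 * sum(abs(a - f) / abs(a) for a, f in zip(actual, forecast)) / len(actual)


def rmspe(actual, forecast):
    """Root mean square percent error, in percent (actual values non-zero)."""
    return 100.0 * math.sqrt(sum(((a - f) / a) ** 2 for a, f in zip(actual, forecast)) / len(actual))


# Hypothetical five-minute PCU counts, for illustration only.
counts = [100.0, 110.0, 105.0, 120.0]
smoothed = kalman_1d(counts, q=1.0, r=4.0, x0=counts[0], p0=1.0)
```

Both metrics are errors, so lower values indicate a better fit.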

Terminal Embeddings in Sublinear Time

Oct 17, 2021

Recently (Elkin, Filtser, Neiman 2017) introduced the concept of a *terminal embedding* from one metric space $(X,d_X)$ to another $(Y,d_Y)$ with a set of designated terminals $T\subset X$. Such an embedding $f$ is said to have distortion $\rho\ge 1$ if $\rho$ is the smallest value such that there exists a constant $C>0$ satisfying \begin{equation*} \forall x\in T\ \forall q\in X,\ C d_X(x, q) \le d_Y(f(x), f(q)) \le C \rho d_X(x, q) . \end{equation*} In the case that $X,Y$ are both Euclidean metrics with $Y$ being $m$-dimensional, recently (Narayanan, Nelson 2019), following work of (Mahabadi, Makarychev, Makarychev, Razenshteyn 2018), showed that distortion $1+\epsilon$ is achievable via such a terminal embedding with $m = O(\epsilon^{-2}\log n)$ for $n := |T|$. This generalizes the Johnson-Lindenstrauss lemma, which only preserves distances within $T$ and not to $T$ from the rest of space. The downside is that evaluating the embedding on some $q\in \mathbb{R}^d$ required solving a semidefinite program with $\Theta(n)$ constraints in $m$ variables and thus required some superlinear $\mathrm{poly}(n)$ runtime. Our main contribution in this work is to give a new data structure for computing terminal embeddings. We show how to pre-process $T$ to obtain an almost linear-space data structure that supports computing the terminal embedding image of any $q\in\mathbb{R}^d$ in sublinear time $n^{1-\Theta(\epsilon^2)+o(1)} + dn^{o(1)}$. To accomplish this, we leverage tools developed in the context of approximate nearest neighbor search.
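
The distortion definition above can be checked numerically on a finite sample. For finitely many (terminal, query) pairs, the smallest admissible $\rho$ is the largest ratio $d_Y(f(x), f(q)) / d_X(x, q)$ divided by the smallest, with $C$ taken to be the smallest ratio. The sketch below assumes Euclidean distances and a non-degenerate map, and it only measures distortion over the sampled points. It does not implement the paper's data structure, and the function name is hypothetical.

```python
import math
from itertools import product


def terminal_distortion(terminals, points, f):
    """Empirical distortion of f over all (terminal, point) pairs.

    Pairs at distance zero are skipped. Assumes f maps no such pair
    of distinct points to the same image (otherwise the ratio is zero).
    """
    ratios = []
    for x, q in product(terminals, points):
        d = math.dist(x, q)
        if d > 0:
            ratios.append(math.dist(f(x), f(q)) / d)
    return max(ratios) / min(ratios)


# A map that stretches only the second coordinate by a factor of 2.
rho = terminal_distortion([(0.0, 0.0)], [(1.0, 0.0), (0.0, 1.0)], lambda p: (p[0], 2.0 * p[1]))
```

A uniform scaling yields distortion 1 under this definition because the constant $C$ absorbs the scale factor.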

Learning crop type mapping from regional label proportions in large-scale SAR and optical imagery

Aug 24, 2022

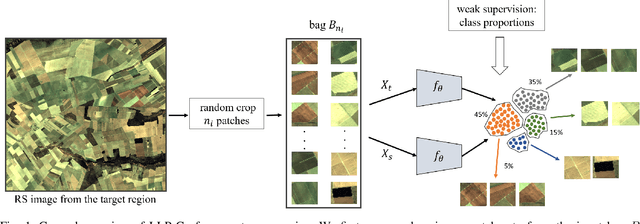

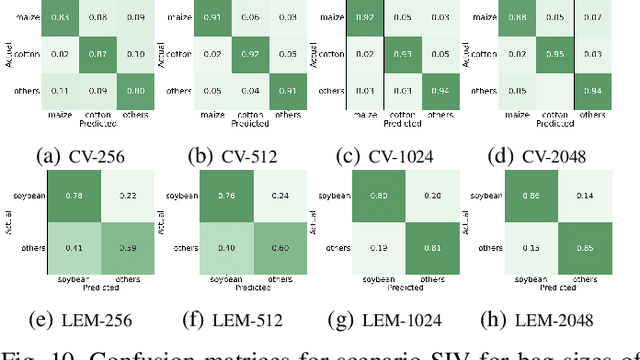

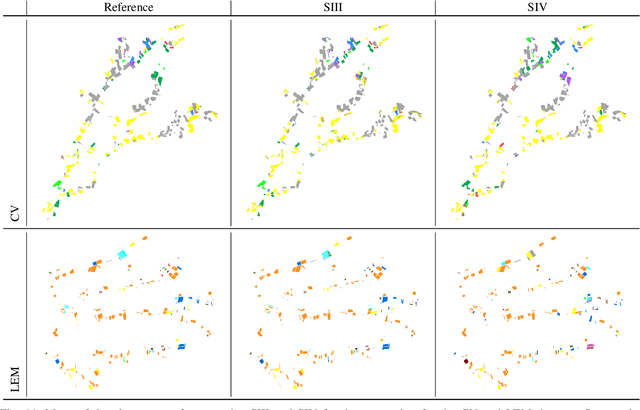

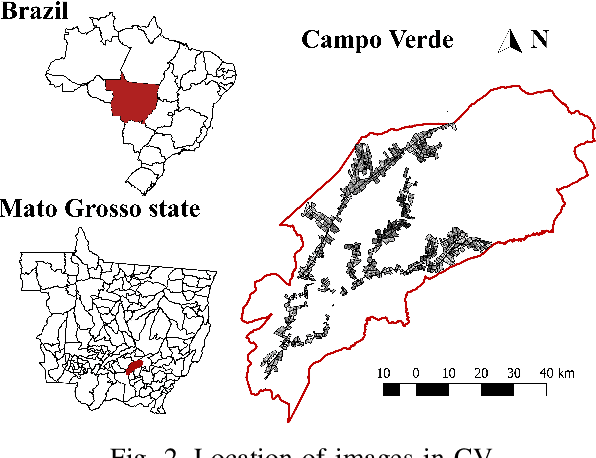

The application of deep learning algorithms to Earth observation (EO) in recent years has enabled substantial progress in fields that rely on remotely sensed data. However, given the data scale in EO, creating large datasets with pixel-level annotations by experts is expensive and highly time-consuming. In this context, priors are seen as an attractive way to alleviate the burden of manual labeling when training deep learning methods for EO. For some applications, those priors are readily available. Motivated by the great success of contrastive-learning methods for self-supervised feature representation learning in many computer-vision tasks, this study proposes an online deep clustering method using crop label proportions as priors to learn a sample-level classifier based on government crop-proportion data for a whole agricultural region. We evaluate the method using two large datasets from two different agricultural regions in Brazil. Extensive experiments demonstrate that the method is robust to different data types (synthetic-aperture radar and optical images), reporting higher accuracy values considering the major crop types in the target regions. Thus, it can alleviate the burden of large-scale image annotation in EO applications.
