"Time": models, code, and papers

CAPER: Coarsen, Align, Project, Refine - A General Multilevel Framework for Network Alignment

Aug 23, 2022

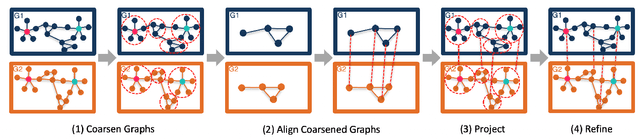

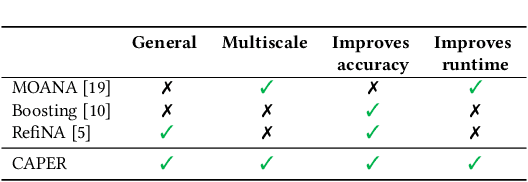

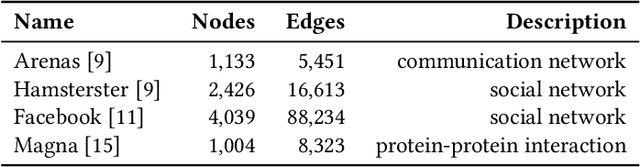

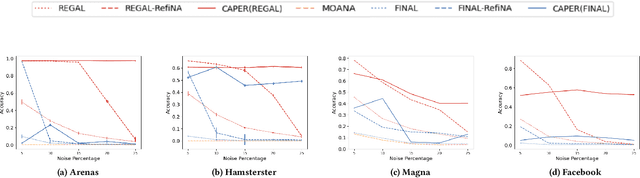

Network alignment, or the task of finding corresponding nodes in different networks, is an important problem formulation in many application domains. We propose CAPER, a multilevel alignment framework that Coarsens the input graphs, Aligns the coarsened graphs, Projects the alignment solution to finer levels and Refines the alignment solution. We show that CAPER can improve upon many different existing network alignment algorithms by enforcing alignment consistency across multiple graph resolutions: nodes matched at finer levels should also be matched at coarser levels. CAPER also accelerates the use of slower network alignment methods, at the modest cost of linear-time coarsening and refinement steps, by allowing them to be run on smaller coarsened versions of the input graphs. Experiments show that CAPER can improve upon diverse network alignment methods by an average of 33% in accuracy and/or an order of magnitude faster in runtime.

Wacky Weights in Learned Sparse Representations and the Revenge of Score-at-a-Time Query Evaluation

Oct 28, 2021

Recent advances in retrieval models based on learned sparse representations generated by transformers have led us to, once again, consider score-at-a-time query evaluation techniques for the top-k retrieval problem. Previous studies comparing document-at-a-time and score-at-a-time approaches have consistently found that the former approach yields lower mean query latency, although the latter approach has more predictable query latency. In our experiments with four different retrieval models that exploit representational learning with bags of words, we find that transformers generate "wacky weights" that appear to greatly reduce the opportunities for skipping and early exiting optimizations that lie at the core of standard document-at-a-time techniques. As a result, score-at-a-time approaches appear to be more competitive in terms of query evaluation latency than in previous studies. We find that, if an effectiveness loss of up to three percent can be tolerated, a score-at-a-time approach can yield substantial gains in mean query latency while at the same time dramatically reducing tail latency.

Lensless multicore-fiber microendoscope for real-time tailored light field generation with phase encoder neural network (CoreNet)

Nov 24, 2021

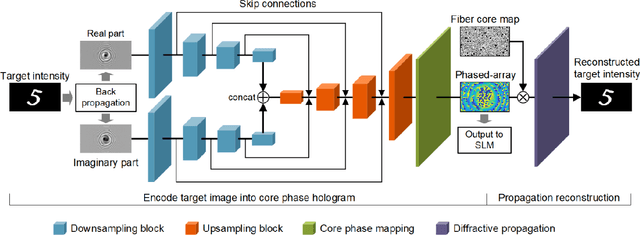

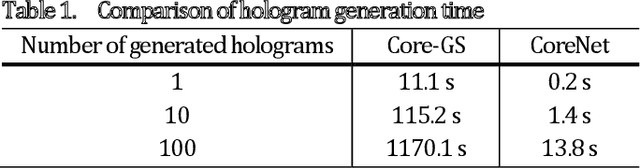

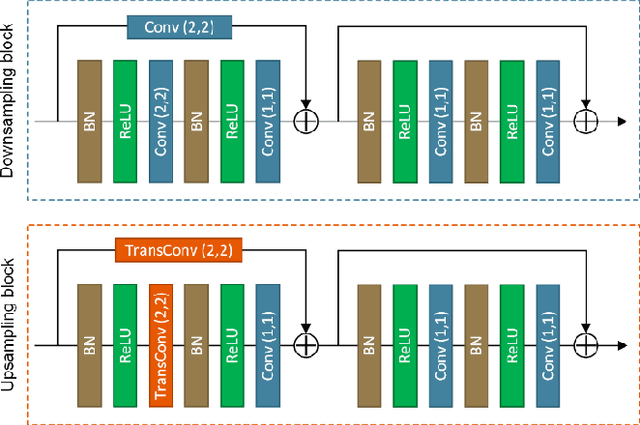

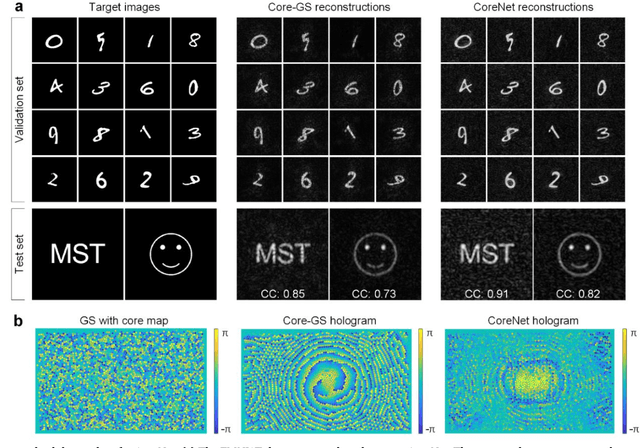

The generation of tailored light with multi-core fiber (MCF) lensless microendoscopes is widely used in biomedicine. However, the computer-generated holograms (CGHs) used for such applications are typically generated by iterative algorithms, which demand high computation effort, limiting advanced applications like in vivo optogenetic stimulation and fiber-optic cell manipulation. The random and discrete distribution of the fiber cores induces strong spatial aliasing to the CGHs, hence, an approach that can rapidly generate tailored CGHs for MCFs is highly demanded. We demonstrate a novel phase encoder deep neural network (CoreNet), which can generate accurate tailored CGHs for MCFs at a near video-rate. Simulations show that CoreNet can speed up the computation time by two magnitudes and increase the fidelity of the generated light field compared to the conventional CGH techniques. For the first time, real-time generated tailored CGHs are on-the-fly loaded to the phase-only SLM for dynamic light fields generation through the MCF microendoscope in experiments. This paves the avenue for real-time cell rotation and several further applications that require real-time high-fidelity light delivery in biomedicine.

TraverseNet: Unifying Space and Time in Message Passing

Aug 25, 2021

This paper aims to unify spatial dependency and temporal dependency in a non-Euclidean space while capturing the inner spatial-temporal dependencies for spatial-temporal graph data. For spatial-temporal attribute entities with topological structure, the space-time is consecutive and unified while each node's current status is influenced by its neighbors' past states over variant periods of each neighbor. Most spatial-temporal neural networks study spatial dependency and temporal correlation separately in processing, gravely impaired the space-time continuum, and ignore the fact that the neighbors' temporal dependency period for a node can be delayed and dynamic. To model this actual condition, we propose TraverseNet, a novel spatial-temporal graph neural network, viewing space and time as an inseparable whole, to mine spatial-temporal graphs while exploiting the evolving spatial-temporal dependencies for each node via message traverse mechanisms. Experiments with ablation and parameter studies have validated the effectiveness of the proposed TraverseNets, and the detailed implementation can be found from https://github.com/nnzhan/TraverseNet.

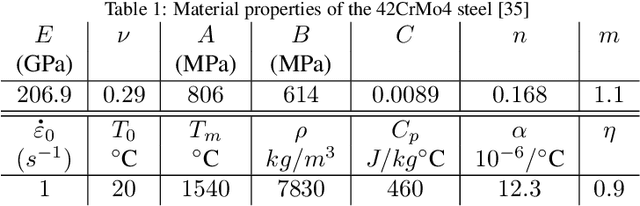

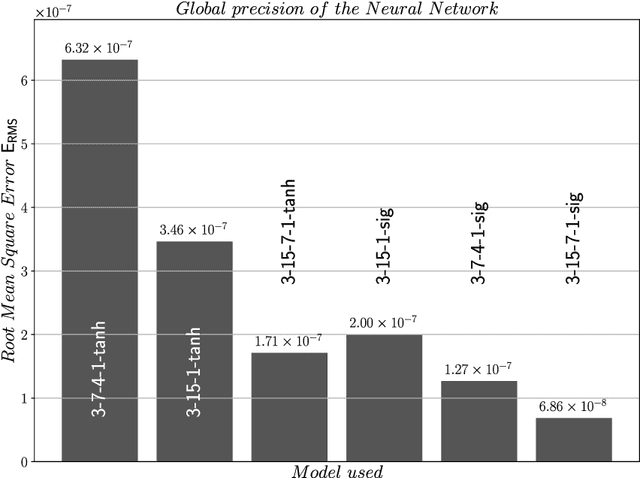

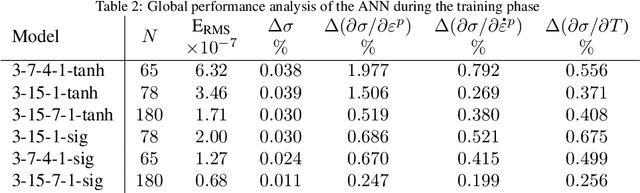

Efficient Implementation of Non-linear Flow Law Using Neural Network into the Abaqus Explicit FEM code

Sep 07, 2022

Machine learning techniques are increasingly used to predict material behavior in scientific applications and offer a significant advantage over conventional numerical methods. In this work, an Artificial Neural Network (ANN) model is used in a finite element formulation to define the flow law of a metallic material as a function of plastic strain, plastic strain rate and temperature. First, we present the general structure of the neural network, its operation and focus on the ability of the network to deduce, without prior learning, the derivatives of the flow law with respect to the model inputs. In order to validate the robustness and accuracy of the proposed model, we compare and analyze the performance of several network architectures with respect to the analytical formulation of a Johnson-Cook behavior law for a 42CrMo4 steel. In a second part, after having selected an Artificial Neural Network architecture with $2$ hidden layers, we present the implementation of this model in the Abaqus Explicit computational code in the form of a VUHARD subroutine. The predictive capability of the proposed model is then demonstrated during the numerical simulation of two test cases: the necking of a circular bar and a Taylor impact test. The results obtained show a very high capability of the ANN to replace the analytical formulation of a Johnson-Cook behavior law in a finite element code, while remaining competitive in terms of numerical simulation time compared to a classical approach.

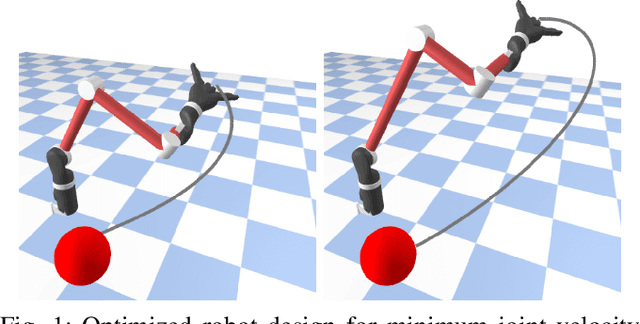

Differentiable Optimal Control via Differential Dynamic Programming

Sep 02, 2022

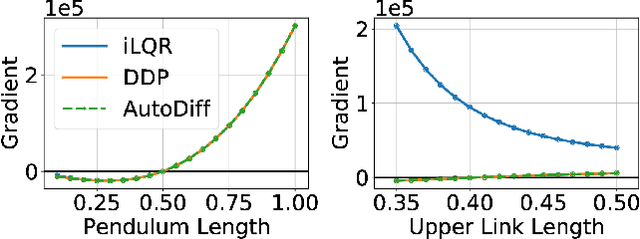

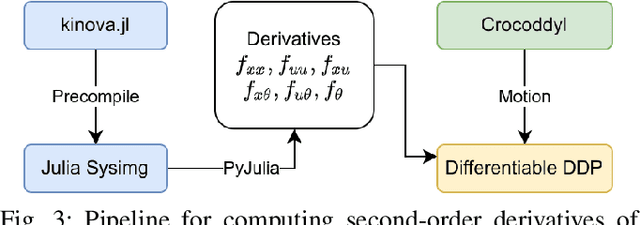

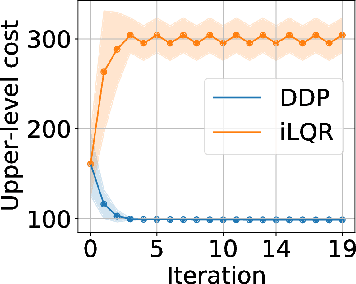

Robot design optimization, imitation learning and system identification share a common problem which requires optimization over robot or task parameters at the same time as optimizing the robot motion. To solve these problems, we can use differentiable optimal control for which the gradients of the robot's motion with respect to the parameters are required. We propose a method to efficiently compute these gradients analytically via the differential dynamic programming (DDP) algorithm using sensitivity analysis (SA). We show that we must include second-order dynamics terms when computing the gradients. However, we do not need to include them when computing the motion. We validate our approach on the pendulum and double pendulum systems. Furthermore, we compare against using the derivatives of the iterative linear quadratic regulator (iLQR), which ignores these second-order terms everywhere, on a co-design task for the Kinova arm, where we optimize the link lengths of the robot for a target reaching task. We show that optimizing using iLQR gradients diverges as ignoring the second-order dynamics affects the computation of the derivatives. Instead, optimizing using DDP gradients converges to the same optimum for a range of initial designs allowing our formulation to scale to complex systems.

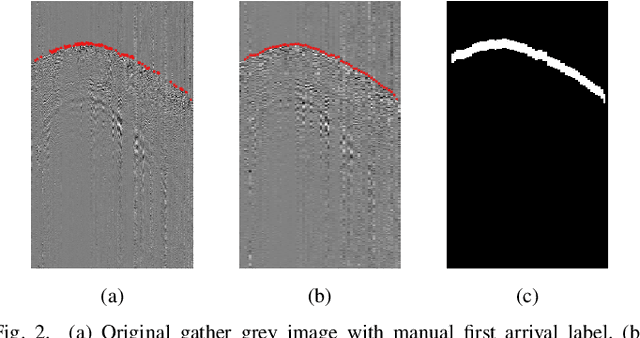

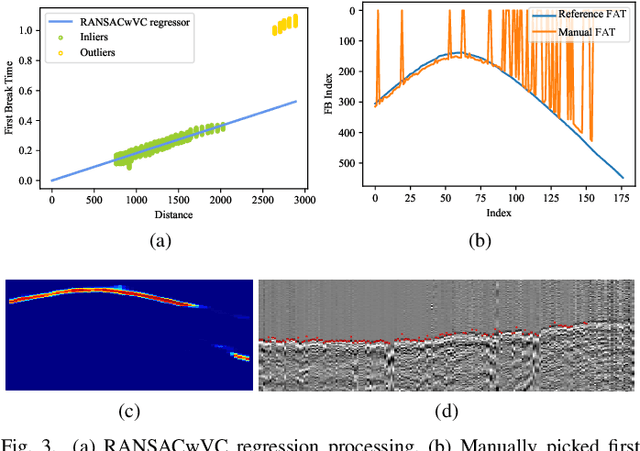

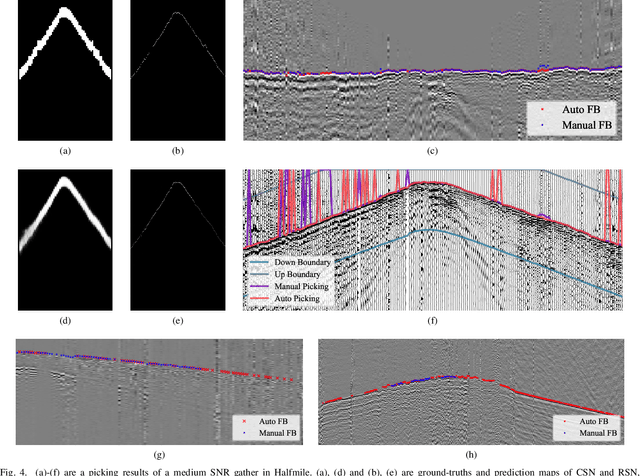

MSSPN: Automatic First Arrival Picking using Multi-Stage Segmentation Picking Network

Sep 07, 2022

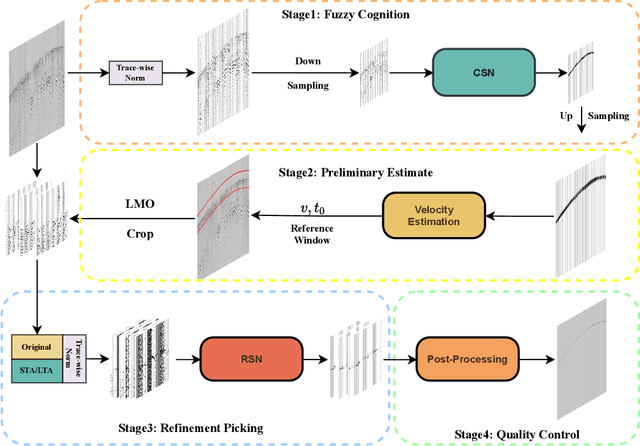

Picking the first arrival times of prestack gathers is called First Arrival Time (FAT) picking, which is an indispensable step in seismic data processing, and is mainly solved manually in the past. With the current increasing density of seismic data collection, the efficiency of manual picking has been unable to meet the actual needs. Therefore, automatic picking methods have been greatly developed in recent decades, especially those based on deep learning. However, few of the current supervised deep learning-based method can avoid the dependence on labeled samples. Besides, since the gather data is a set of signals which are greatly different from the natural images, it is difficult for the current method to solve the FAT picking problem in case of a low Signal to Noise Ratio (SNR). In this paper, for hard rock seismic gather data, we propose a Multi-Stage Segmentation Pickup Network (MSSPN), which solves the generalization problem across worksites and the picking problem in the case of low SNR. In MSSPN, there are four sub-models to simulate the manually picking processing, which is assumed to four stages from coarse to fine. Experiments on seven field datasets with different qualities show that our MSSPN outperforms benchmarks by a large margin.Particularly, our method can achieve more than 90\% accurate picking across worksites in the case of medium and high SNRs, and even fine-tuned model can achieve 88\% accurate picking of the dataset with low SNR.

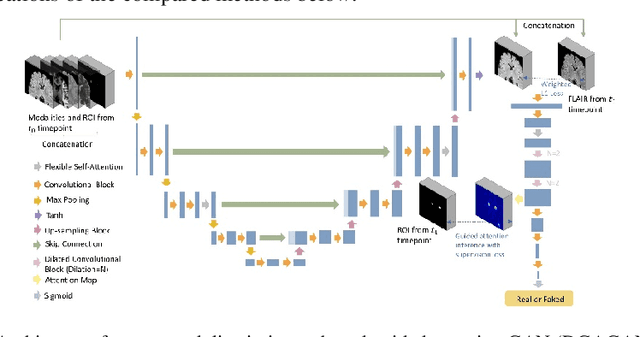

Lesion-Specific Prediction with Discriminator-Based Supervised Guided Attention Module Enabled GANs in Multiple Sclerosis

Aug 30, 2022

Multiple Sclerosis (MS) is a chronic neurological condition characterized by the development of lesions in the white matter of the brain. T2-fluid attenuated inversion recovery (FLAIR) brain magnetic resonance imaging (MRI) provides superior visualization and characterization of MS lesions, relative to other MRI modalities. Follow-up brain FLAIR MRI in MS provides helpful information for clinicians towards monitoring disease progression. In this study, we propose a novel modification to generative adversarial networks (GANs) to predict future lesion-specific FLAIR MRI for MS at fixed time intervals. We use supervised guided attention and dilated convolutions in the discriminator, which supports making an informed prediction of whether the generated images are real or not based on attention to the lesion area, which in turn has potential to help improve the generator to predict the lesion area of future examinations more accurately. We compared our method to several baselines and one state-of-art CF-SAGAN model [1]. In conclusion, our results indicate that the proposed method achieves higher accuracy and reduces the standard deviation of the prediction errors in the lesion area compared with other models with similar overall performance.

MSMDFusion: Fusing LiDAR and Camera at Multiple Scales with Multi-Depth Seeds for 3D Object Detection

Sep 07, 2022

Fusing LiDAR and camera information is essential for achieving accurate and reliable 3D object detection in autonomous driving systems. However, this is challenging due to the difficulty of combining multi-granularity geometric and semantic features from two drastically different modalities. Recent approaches aim at exploring the semantic densities of camera features through lifting points in 2D camera images (referred to as seeds) into 3D space for fusion, and they can be roughly divided into 1) early fusion of raw points that aims at augmenting the 3D point cloud at the early input stage, and 2) late fusion of BEV (bird-eye view) maps that merges LiDAR and camera BEV features before the detection head. While both have their merits in enhancing the representation power of the combined features, this single-level fusion strategy is a suboptimal solution to the aforementioned challenge. Their major drawbacks are the inability to interact the multi-granularity semantic features from two distinct modalities sufficiently. To this end, we propose a novel framework that focuses on the multi-scale progressive interaction of the multi-granularity LiDAR and camera features. Our proposed method, abbreviated as MDMSFusion, achieves state-of-the-art results in 3D object detection, with 69.1 mAP and 71.8 NDS on nuScenes validation set, and 70.8 mAP and 73.2 NDS on nuScenes test set, which rank 1st and 2nd respectively among single-model non-ensemble approaches by the time of submission.

Fast and Precise Binary Instance Segmentation of 2D Objects for Automotive Applications

Aug 24, 2022

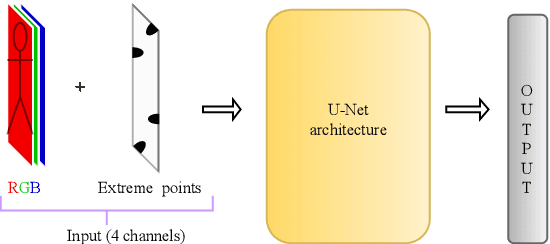

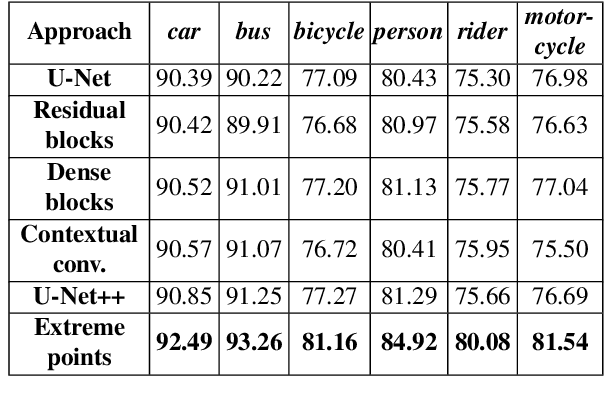

In this paper, we focus on improving binary 2D instance segmentation to assist humans in labeling ground truth datasets with polygons. Humans labeler just have to draw boxes around objects, and polygons are generated automatically. To be useful, our system has to run on CPUs in real-time. The most usual approach for binary instance segmentation involves encoder-decoder networks. This report evaluates state-of-the-art encoder-decoder networks and proposes a method for improving instance segmentation quality using these networks. Alongside network architecture improvements, our proposed method relies upon providing extra information to the network input, so-called extreme points, i.e. the outermost points on the object silhouette. The user can label them instead of a bounding box almost as quickly. The bounding box can be deduced from the extreme points as well. This method produces better IoU compared to other state-of-the-art encoder-decoder networks and also runs fast enough when it is deployed on a CPU.

* 4 pages, 4 figures, WSCG 2022 conference [WSCG 2022 Proceedings, CSRN 3201, ISSN 2464-4617]

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge