"Time": models, code, and papers

MEGAN: Memory Enhanced Graph Attention Network for Space-Time Video Super-Resolution

Oct 28, 2021

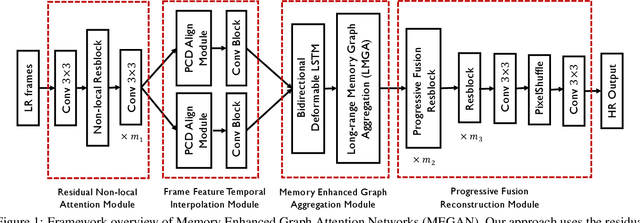

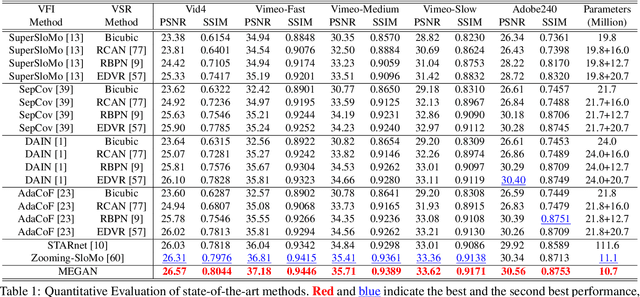

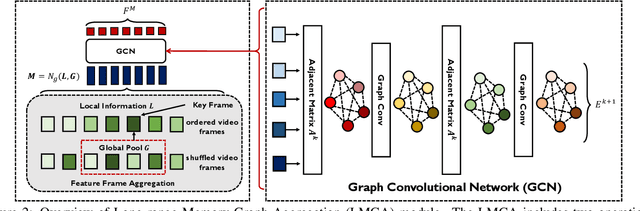

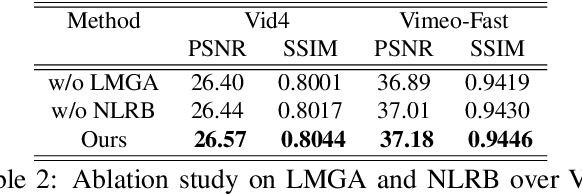

Space-time video super-resolution (STVSR) aims to construct a high space-time resolution video sequence from the corresponding low-frame-rate, low-resolution video sequence. Inspired by recent successes in exploiting spatial-temporal information for space-time super-resolution, our main goal in this work is to take full account of the spatial and temporal correlations within video sequences of fast dynamic events. To this end, we propose a novel one-stage memory enhanced graph attention network (MEGAN) for space-time video super-resolution. Specifically, we build a novel long-range memory graph aggregation (LMGA) module to dynamically capture correlations along the channel dimensions of the feature maps and adaptively aggregate channel features to enhance the feature representations. We introduce a non-local residual block, which enables each channel-wise feature to attend to global spatial hierarchical features. In addition, we adopt a progressive fusion module to further enhance the representation ability by extensively exploiting spatial-temporal correlations from multiple frames. Experimental results demonstrate that our method achieves better results than state-of-the-art methods, both quantitatively and visually.

Boosting Anomaly Detection Using Unsupervised Diverse Test-Time Augmentation

Oct 29, 2021

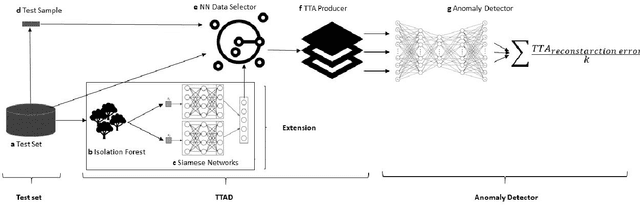

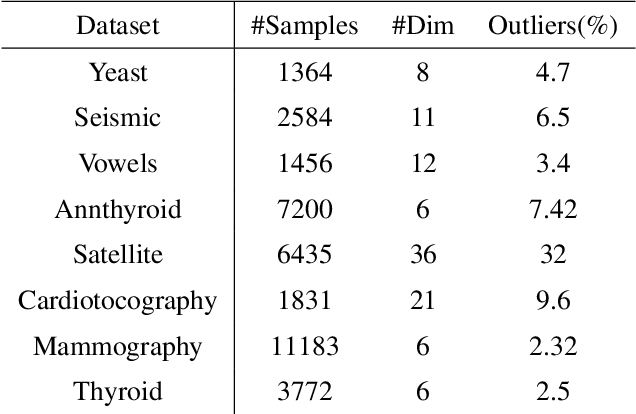

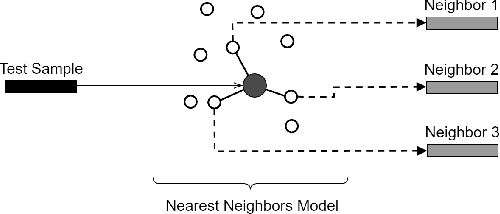

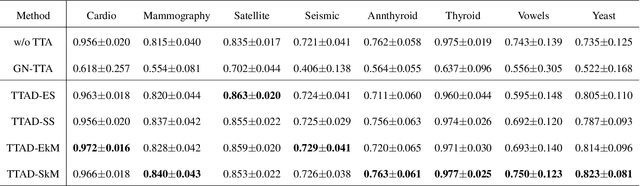

Anomaly detection is a well-known task that involves the identification of abnormal events that occur relatively infrequently. Methods for improving anomaly detection performance have been widely studied. However, no studies utilizing test-time augmentation (TTA) for anomaly detection in tabular data have been performed. TTA involves aggregating the predictions of several synthetic versions of a given test sample; TTA produces different points of view for a specific test instance and might decrease its prediction bias. We propose the Test-Time Augmentation for anomaly Detection (TTAD) technique, a TTA-based method aimed at improving anomaly detection performance. TTAD augments a test instance based on its nearest neighbors; various methods, including the k-Means centroid and SMOTE methods, are used to produce the augmentations. Our technique utilizes a Siamese network to learn an advanced distance metric when retrieving a test instance's neighbors. Our experiments show that the anomaly detector that uses our TTA technique achieved significantly higher AUC results on all datasets evaluated.
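The aggregation step described in this abstract can be illustrated with a minimal sketch. It is not the authors' TTAD implementation: plain Euclidean distance stands in for the learned Siamese metric, only a SMOTE-style interpolation is shown, and the names `tta_anomaly_score`, `smote_like_augment`, and `toy_score` are hypothetical.

```python
import math
import random


def euclidean(a, b):
    """Plain Euclidean distance (assumption: stands in for the learned metric)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def smote_like_augment(x, neighbors, rng):
    """Create one synthetic point per neighbor by interpolating from x toward it."""
    augmented = []
    for n in neighbors:
        t = rng.random()  # interpolation fraction in [0, 1)
        augmented.append([xi + t * (ni - xi) for xi, ni in zip(x, n)])
    return augmented


def tta_anomaly_score(x, reference, score_fn, k=3, seed=0):
    """Average the detector's score over x and its neighbor-based augmentations."""
    rng = random.Random(seed)
    neighbors = sorted(reference, key=lambda r: euclidean(x, r))[:k]
    augmented = smote_like_augment(x, neighbors, rng)
    scores = [score_fn(x)] + [score_fn(a) for a in augmented]
    return sum(scores) / len(scores)


# Toy detector (hypothetical): anomaly score = distance from the data centroid.
reference = [[0.0, 0.0], [0.1, -0.1], [-0.2, 0.1], [0.05, 0.15], [-0.1, -0.05]]
centroid = [sum(c) / len(reference) for c in zip(*reference)]


def toy_score(p):
    return euclidean(p, centroid)


inlier_score = tta_anomaly_score([0.0, 0.05], reference, toy_score)
outlier_score = tta_anomaly_score([3.0, 3.0], reference, toy_score)
```

Any detector that maps a feature vector to a score can be passed as `score_fn`; the averaging is the only part specific to test-time augmentation.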

A New Scheme for Image Compression and Encryption Using ECIES, Henon Map, and AEGAN

Aug 24, 2022

Providing security in the transmission of images and other multimedia data has become one of the most important scientific and practical issues. In this paper, a method for compressing and encrypting images is proposed, which can safely transmit images over low-bandwidth data transmission channels. First, using the autoencoding generative adversarial network (AEGAN) model, each image is mapped to a low-dimensional vector in the latent space. Next, the obtained vector is encrypted using public key encryption methods. In the proposed method, the Henon chaotic map is used for permutation, which makes information transfer more secure. The proposed scheme is evaluated on three criteria: SSIM, PSNR, and execution time.
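The abstract does not say how the Henon map drives the permutation, so the following is only an illustrative sketch of one common construction: iterate the map, then use the rank order of the chaotic sequence as a permutation of the latent vector. The parameters `a=1.4`, `b=0.3`, the initial state, the burn-in length, and all function names are assumptions, and the sketch is not a secure cipher on its own.

```python
def henon_sequence(n, x0=0.1, y0=0.1, a=1.4, b=0.3, burn_in=100):
    """Iterate the Henon map x' = 1 - a*x^2 + y, y' = b*x and return n x-values.

    The initial state (x0, y0) plays the role of a secret key (assumption).
    """
    x, y = x0, y0
    values = []
    for i in range(burn_in + n):
        x, y = 1.0 - a * x * x + y, b * x
        if i >= burn_in:  # discard the transient before collecting values
            values.append(x)
    return values


def henon_permutation(n, **key):
    """Rank the chaotic sequence to obtain a permutation of range(n)."""
    seq = henon_sequence(n, **key)
    return sorted(range(n), key=lambda i: seq[i])


def permute(vec, perm):
    """Scramble: output position i takes the element at position perm[i]."""
    return [vec[p] for p in perm]


def unpermute(vec, perm):
    """Invert permute() given the same permutation."""
    out = [None] * len(vec)
    for dst, src in enumerate(perm):
        out[src] = vec[dst]
    return out


latent = [0.12, -1.5, 0.7, 2.2, -0.3, 0.9]  # stand-in for an AEGAN latent vector
perm = henon_permutation(len(latent), x0=0.1, y0=0.1)
scrambled = permute(latent, perm)
restored = unpermute(scrambled, perm)
```

A receiver that knows the same initial state regenerates `perm` and calls `unpermute` to recover the original ordering.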

Efficiency Comparison of AI classification algorithms for Image Detection and Recognition in Real-time

Jun 12, 2022

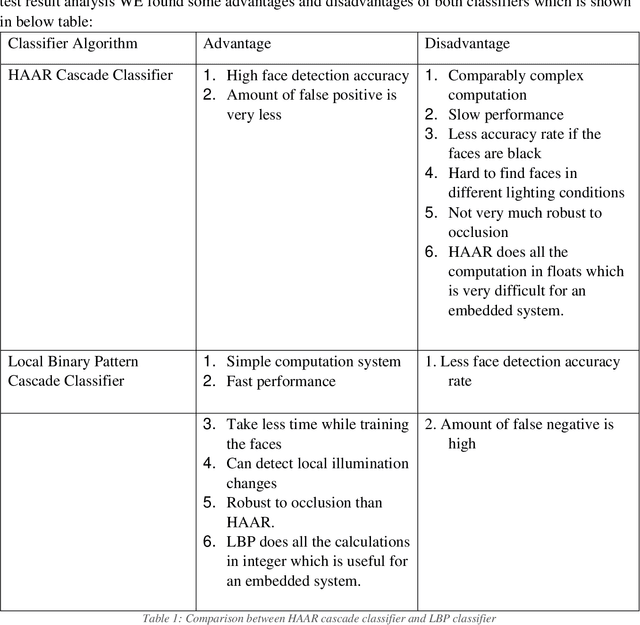

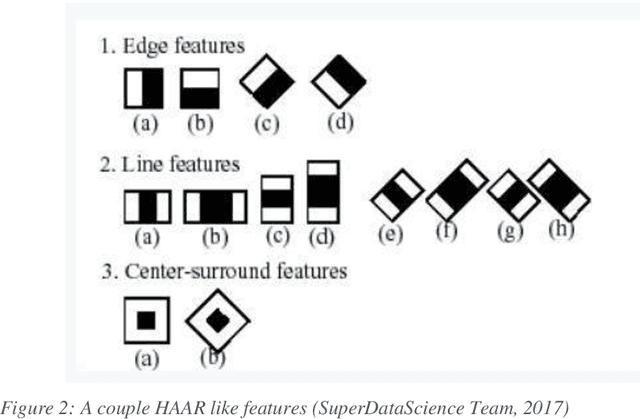

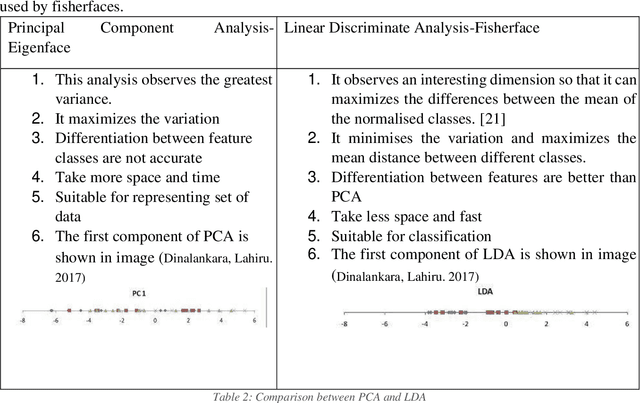

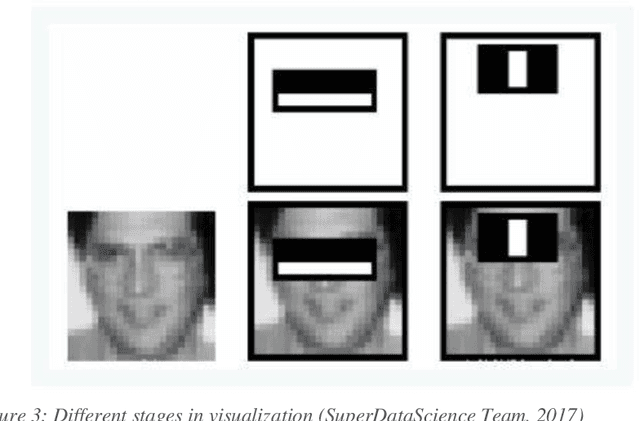

Face detection and identification is one of the most difficult and most frequently used tasks in Artificial Intelligence systems. The goal of this study is to present and compare the results of several face detection and recognition algorithms used in the system. The system begins with a training image of a person, then moves on to the test image, detecting the face, comparing it to the trained face, and finally classifying it using OpenCV classifiers. This research discusses the most effective and successful approaches used in the system, which are implemented using Python, OpenCV, and Matplotlib. The system may also be used in locations with CCTV, such as public spaces, shopping malls, and ATM booths.

Dyadic Interaction Assessment from Free-living Audio for Depression Severity Assessment

Sep 08, 2022

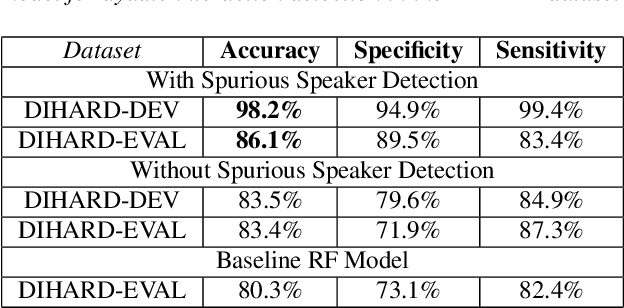

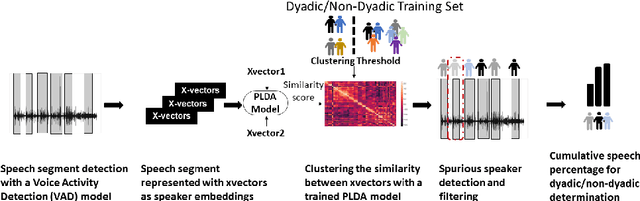

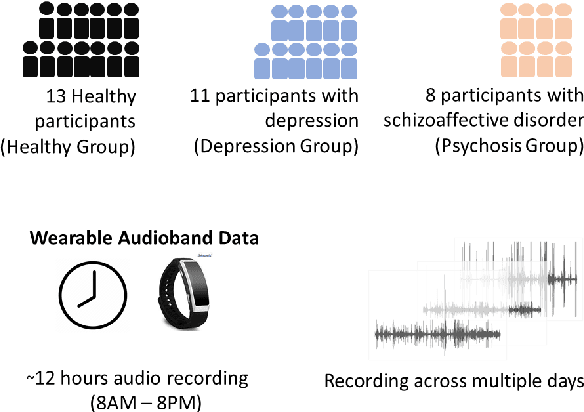

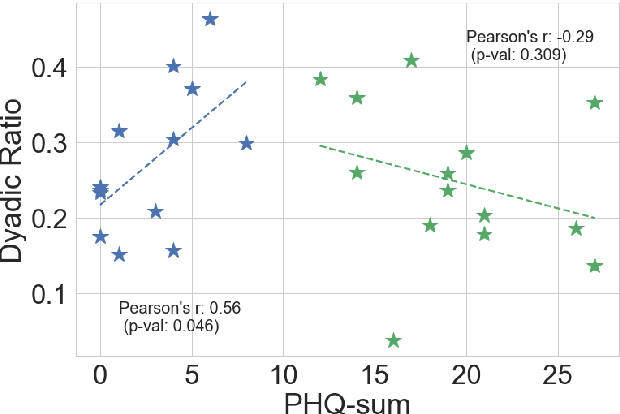

Psychomotor retardation in depression has been associated with speech timing changes from dyadic clinical interviews. In this work, we investigate speech timing features from free-living dyadic interactions. Apart from the possibility of continuous monitoring to complement clinical visits, a study in free-living conditions would also allow inferring sociability features, such as dyadic interaction frequency, that are implicated in depression. We adapted a speaker count estimator as a dyadic interaction detector with a specificity of 89.5% and a sensitivity of 86.1% on the DIHARD dataset. Using the detector, we obtained speech timing features from the detected dyadic interactions in multi-day audio recordings of 32 participants, comprising 13 healthy individuals, 11 individuals with depression, and 8 individuals with psychotic disorders. The dyadic interaction frequency increased with depression severity in participants with no or mild depression, indicating a potential diagnostic marker of depression onset. However, the dyadic interaction frequency decreased with increasing depression severity for participants with moderate or severe depression. In terms of speech timing features, the response time had a significant positive correlation with depression severity. Our work shows the potential of dyadic interaction analysis from free-living audio recordings to obtain markers of depression severity.

Risk-aware Resource Allocation for Multiple UAVs-UGVs Recharging Rendezvous

Sep 13, 2022

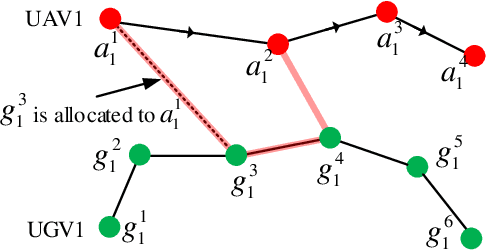

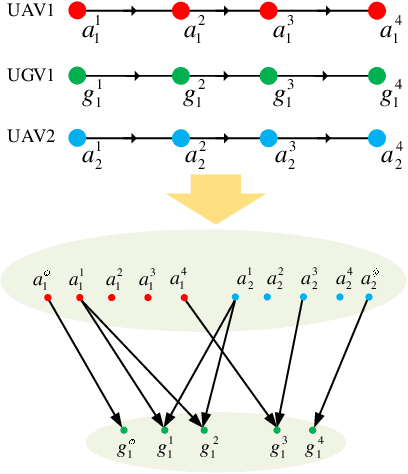

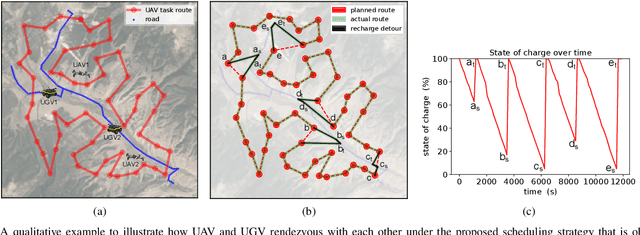

We study a resource allocation problem for a cooperative aerial-ground vehicle routing application, in which multiple Unmanned Aerial Vehicles (UAVs) with limited battery capacity and multiple Unmanned Ground Vehicles (UGVs) that can also act as mobile recharging stations need to jointly accomplish a mission such as persistently monitoring a set of points. Due to the limited battery capacity of the UAVs, they sometimes have to deviate from their task to rendezvous with the UGVs and get recharged. Each UGV can serve a limited number of UAVs at a time. In contrast to prior work on deterministic multi-robot scheduling, we consider the challenge imposed by the stochasticity of the energy consumption of the UAVs. We are interested in finding the optimal recharging schedule of the UAVs such that the travel cost is minimized and the probability that no UAV runs out of charge within the planning horizon is greater than a user-defined tolerance. We formulate this problem, the Risk-aware Recharging Rendezvous Problem (RRRP), as an Integer Linear Program (ILP), in which the matching constraint captures the resource availability constraints and the knapsack constraint captures the success probability constraints. We propose a bicriteria approximation algorithm to solve RRRP. We demonstrate the effectiveness of our formulation and algorithm in the context of one persistent monitoring mission.

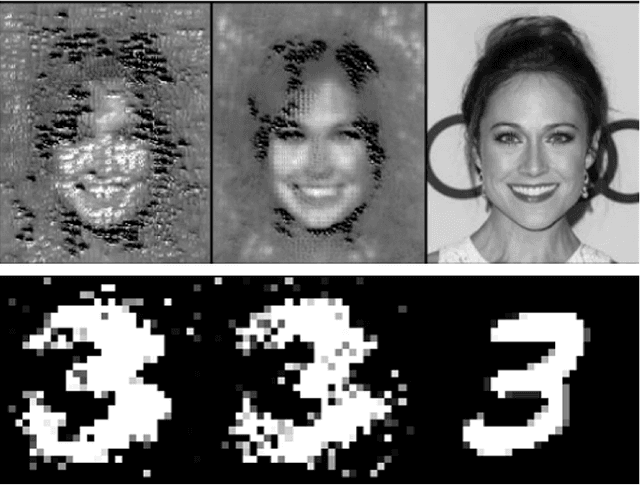

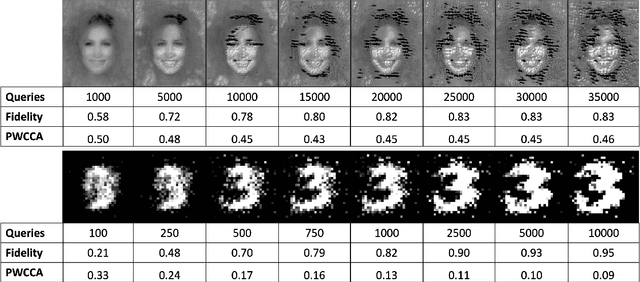

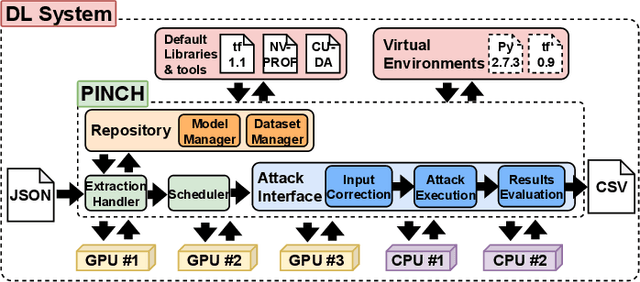

PINCH: An Adversarial Extraction Attack Framework for Deep Learning Models

Sep 13, 2022

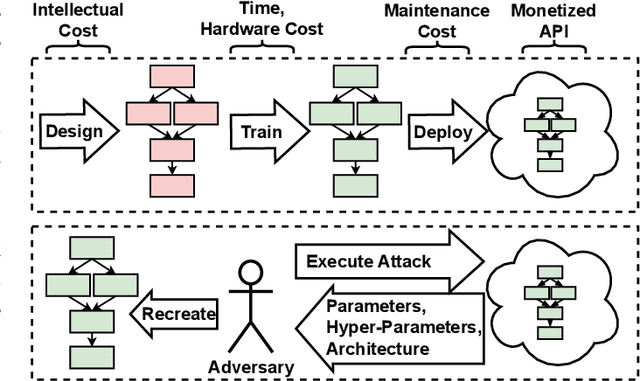

Deep Learning (DL) models increasingly power a diversity of applications. Unfortunately, this pervasiveness also makes them attractive targets for extraction attacks which can steal the architecture, parameters, and hyper-parameters of a targeted DL model. Existing extraction attack studies have observed varying levels of attack success for different DL models and datasets, yet the underlying cause(s) behind their susceptibility often remain unclear. Ascertaining such root-cause weaknesses would help facilitate secure DL systems, though this requires studying extraction attacks in a wide variety of scenarios to identify commonalities across attack success and DL characteristics. The overwhelmingly high technical effort and time required to understand, implement, and evaluate even a single attack makes it infeasible to explore the large number of unique extraction attack scenarios in existence, with current frameworks typically designed to only operate for specific attack types, datasets and hardware platforms. In this paper we present PINCH: an efficient and automated extraction attack framework capable of deploying and evaluating multiple DL models and attacks across heterogeneous hardware platforms. We demonstrate the effectiveness of PINCH by empirically evaluating a large number of previously unexplored extraction attack scenarios, as well as secondary attack staging. Our key findings show that 1) multiple characteristics affect extraction attack success spanning DL model architecture, dataset complexity, hardware, attack type, and 2) partially successful extraction attacks significantly enhance the success of further adversarial attack staging.

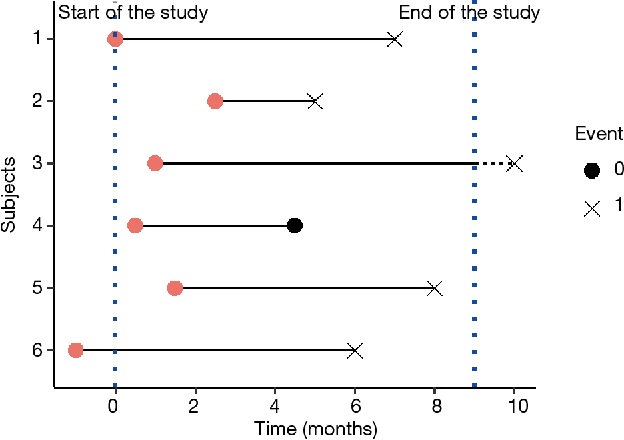

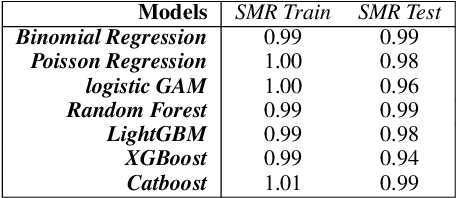

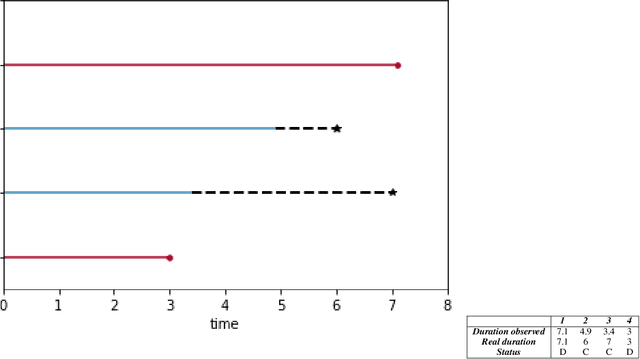

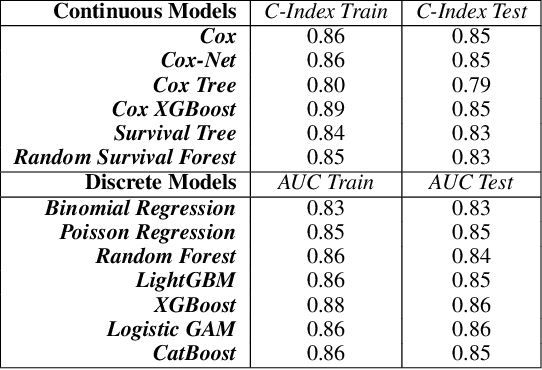

Applying Machine Learning to Life Insurance: some knowledge sharing to master it

Sep 05, 2022

Machine Learning permeates many industries, bringing new sources of benefit for companies. However, within the life insurance industry, Machine Learning is not widely used in practice, as statistical models have shown their efficiency for risk assessment over the past years. Insurers may therefore have difficulty assessing the value of artificial intelligence. Looking at how the life insurance industry has changed over time highlights what is at stake for insurers in using Machine Learning and the benefits it can bring by unlocking the value of data. This paper reviews traditional actuarial methodologies for survival modeling and extends them with Machine Learning techniques. It points out differences from regular machine learning models and emphasizes the importance of specific implementations for handling censored data with machine learning models. To complement this article, a Python library has been developed. Different open-source Machine Learning algorithms have been adapted to the specificities of life insurance data, namely censoring and truncation. Such models can be easily applied from this SCOR library to accurately model life insurance risks.
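To make the censoring issue concrete, here is a minimal sketch of the Kaplan-Meier estimator, a classical survival method that handles right-censored observations. The abstract does not name the estimators it reviews, so this choice is an assumption made for illustration, and the code is unrelated to the library mentioned above; `kaplan_meier` and the toy data are hypothetical.

```python
def kaplan_meier(durations, observed):
    """Return [(t, S(t))] at each distinct event time.

    durations: time until event or censoring for each policyholder.
    observed:  True if the event was seen, False if the record was censored.
    """
    event_times = sorted({t for t, e in zip(durations, observed) if e})
    survival = 1.0
    curve = []
    for t in event_times:
        # Everyone whose duration reaches t is still at risk just before t,
        # including records that are censored later on.
        at_risk = sum(1 for d in durations if d >= t)
        events = sum(1 for d, e in zip(durations, observed) if d == t and e)
        survival *= 1.0 - events / at_risk
        curve.append((t, survival))
    return curve


# Toy data: five records, two of them right-censored (observed=False).
durations = [2, 3, 3, 5, 8]
observed = [True, True, False, True, False]
curve = kaplan_meier(durations, observed)
```

Censored records never count as events, but they stay in the at-risk set until their censoring time, which is the adjustment a model that ignores censoring would miss.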

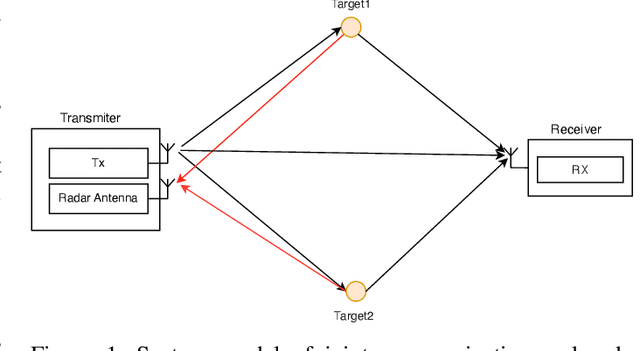

An Efficient Radar Receiver for OFDM-based Joint Radar and Communication Systems

Sep 08, 2022

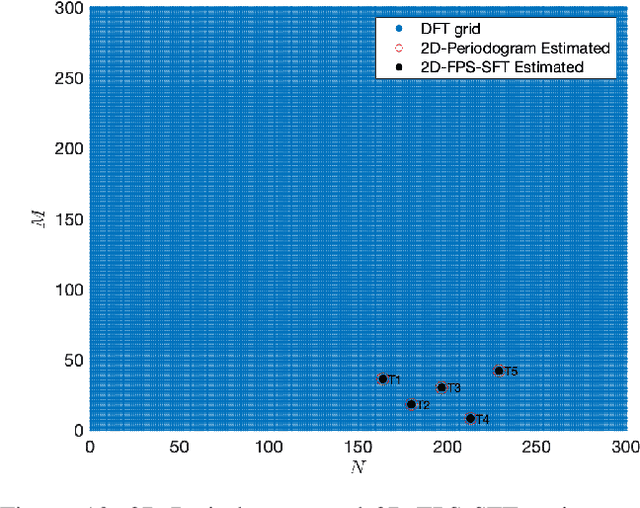

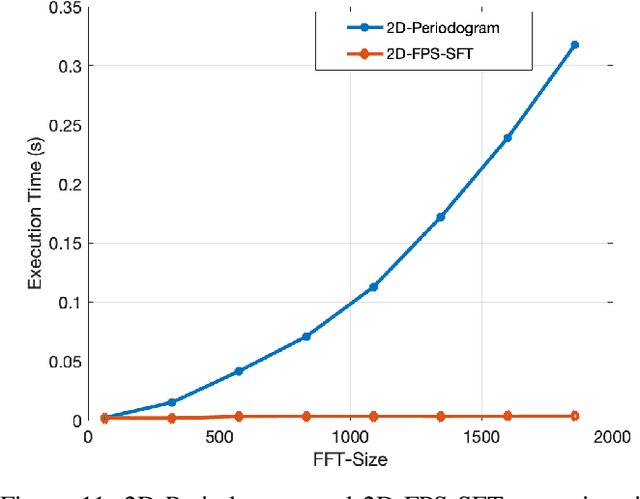

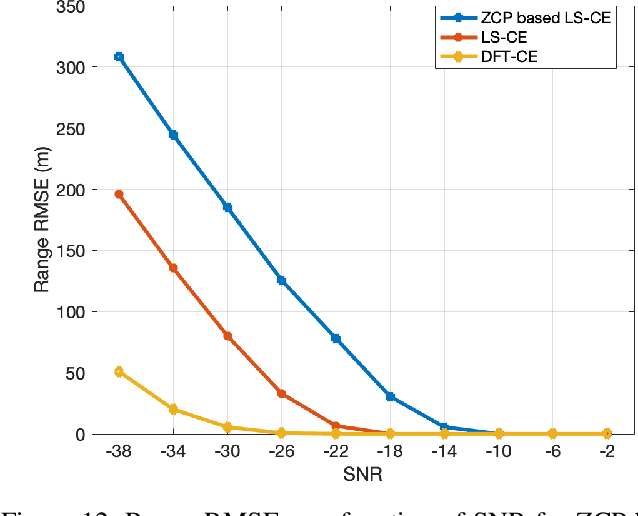

In this work, we propose a radar detection system for orthogonal frequency-division multiplexing (OFDM) transmission. We assume that the transmitting antenna Tx is colocated with a monostatic radar. The latter knows the transmitted signal and listens to echoes reflected from fixed or moving targets. We estimate the target parameters (range and velocity) using a 2D-Periodogram. Moreover, we improve the estimation performance in low signal-to-noise ratio (SNR) conditions using discrete Fourier transform channel estimation (DFT-CE), and we show that Zadoff-Chu precoding (ZCP) adopted for communication does not degrade the radar estimation in good SNR conditions. Furthermore, since the dimensions of the data matrix can be much larger than the number of targets to be detected, we propose a sparse Fourier transform based Fourier projection-slice (FPS-SFT) algorithm to reduce the computational complexity of the 2D-Periodogram. An appropriate system parameterization in the 77 GHz industrial, scientific and medical (ISM) band achieves a range resolution of 30.52 cm and a velocity resolution of 0.67 m/s, and reduces the periodogram computation time by up to around 98.84%.
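The 2D-Periodogram step can be sketched as follows, under the usual OFDM radar convention that a target's delay appears as a linear phase across subcarriers and its Doppler shift as a linear phase across symbols. This is a naive, noise-free illustration written with direct DFT sums; it is not the FPS-SFT algorithm from the abstract, and `periodogram_2d`, `simulate_target`, and the bin values are hypothetical.

```python
import cmath
import math


def periodogram_2d(F):
    """Naive 2D periodogram of an N x M matrix F (subcarriers x OFDM symbols).

    An inverse DFT runs along the subcarrier axis (range) and a forward DFT
    along the symbol axis (Doppler); the normalised squared magnitude is returned.
    """
    N, M = len(F), len(F[0])
    P = [[0.0] * M for _ in range(N)]
    for k in range(N):
        for l in range(M):
            acc = 0j
            for n in range(N):
                for m in range(M):
                    phase = 2 * math.pi * (n * k / N - m * l / M)
                    acc += F[n][m] * cmath.exp(1j * phase)
            P[k][l] = abs(acc) ** 2 / (N * M)
    return P


def simulate_target(N, M, range_bin, doppler_bin):
    """Noise-free channel matrix for one target placed exactly on a grid bin."""
    return [
        [cmath.exp(2j * math.pi * (-n * range_bin / N + m * doppler_bin / M))
         for m in range(M)]
        for n in range(N)
    ]


N, M = 8, 8
F = simulate_target(N, M, range_bin=3, doppler_bin=5)
P = periodogram_2d(F)
# The strongest cell gives the target's (range bin, Doppler bin).
peak = max(((k, l) for k in range(N) for l in range(M)),
           key=lambda kl: P[kl[0]][kl[1]])
```

The direct sums cost on the order of (NM)^2 operations, which is the kind of cost that FFT-based and sparse-transform methods aim to reduce for large data matrices.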

Time Encoding of Finite-Rate-of-Innovation Signals

Jul 07, 2021

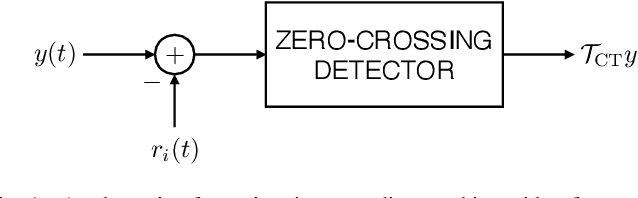

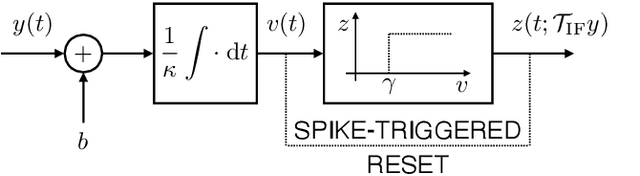

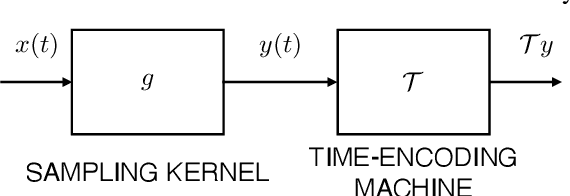

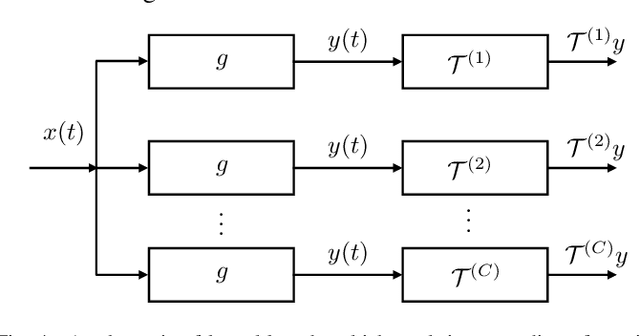

Time encoding of continuous-time signals is an alternative sampling paradigm to conventional methods like Shannon sampling. In time encoding, the signal is encoded using a sequence of time instants where an event occurs, and hence it falls under event-driven sampling methods. Time encoding can be designed to be agnostic to the global clock of the sampling hardware, which means the sampling is asynchronous. Moreover, the samples are sparse. This makes time encoding energy efficient. However, the signal representation is nonstandard and, in general, nonuniform. In this paper, we consider the extension of time encoding to finite-rate-of-innovation (FRI) signals, and in particular, periodic signals composed of weighted and time-shifted versions of a known pulse. We consider time encoding using the crossing-time-encoding machine (C-TEM) and the integrate-and-fire time encoding machine (IF-TEM). We analyse time encoding in the Fourier domain and arrive at the familiar sum-of-sinusoids structure in the Fourier-series coefficients, which can be obtained from the time-encoded measurements via a forward linear transformation, so that standard techniques in FRI signal recovery can be applied. Further, we extend this theory to multichannel time encoding such that each channel operates with a lower sampling requirement. We then study the effect of measurement noise, where the temporal measurements are perturbed by additive jitter. To combat the effect of noise, we propose a robust optimisation framework to simultaneously denoise the Fourier-series coefficients and recover the annihilating filter. We provide sufficient conditions for time encoding and perfect reconstruction using C-TEM and IF-TEM, and provide extensive simulations to support our claims.
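As a rough illustration of the integrate-and-fire idea, the sketch below simulates an IF-TEM on a fine time grid: a biased copy of the input is integrated, and each time the integral reaches a threshold the instant is recorded and the threshold is subtracted. The parameter names `bias`, `kappa`, and `delta`, the step size, and the discretisation itself are assumptions for this sketch; the paper's analysis is in continuous time and its reconstruction method is not shown.

```python
import math


def if_tem(signal, t_end, bias=1.0, kappa=1.0, delta=0.1, dt=1e-4):
    """Simulate an integrate-and-fire time encoder on a grid with step dt.

    Assumes bias > max|signal| so the integrand stays positive and the
    integrator always moves toward the threshold delta.
    """
    times = []
    integral = 0.0
    steps = int(round(t_end / dt))
    for i in range(steps):
        t = i * dt
        integral += (signal(t) + bias) * dt / kappa
        if integral >= delta:
            times.append(t + dt)   # record the firing instant
            integral -= delta      # reset while keeping the small overshoot
    return times


# Zero input: the bias alone drives the integrator, so firings are evenly spaced.
idle_times = if_tem(lambda t: 0.0, t_end=1.0)
idle_gaps = [b - a for a, b in zip(idle_times, idle_times[1:])]

# Sinusoidal input: firings bunch up where the signal is large.
sine_times = if_tem(lambda t: 0.5 * math.sin(2 * math.pi * t), t_end=1.0)
```

Between two consecutive firing instants the integral of `signal(t) + bias` equals `kappa * delta` up to discretisation error, which is the relation that turns firing times into linear measurements of the signal.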
