"Time": models, code, and papers

KuaiRand: An Unbiased Sequential Recommendation Dataset with Randomly Exposed Videos

Aug 23, 2022

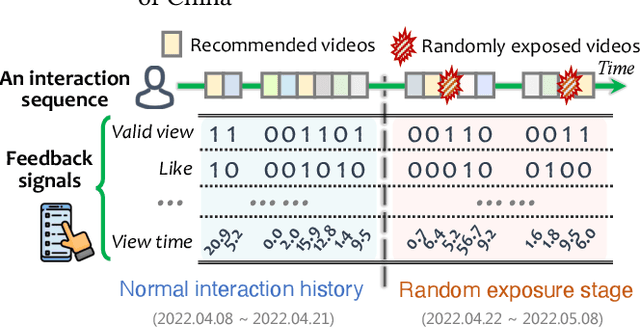

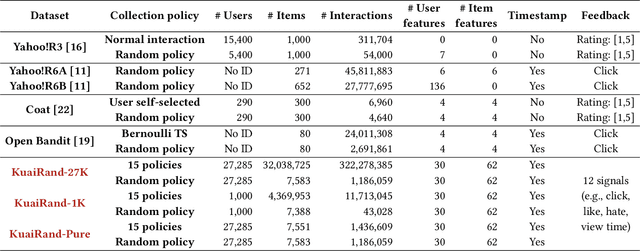

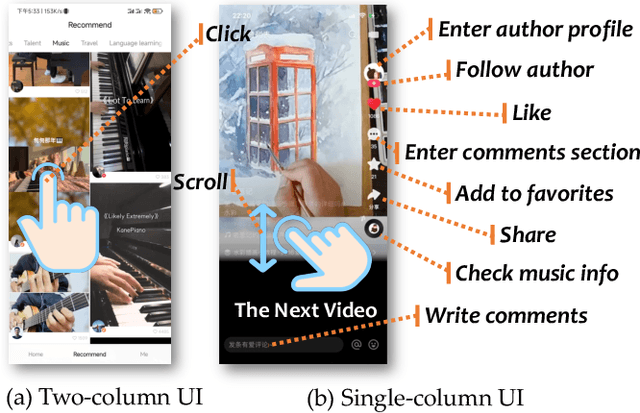

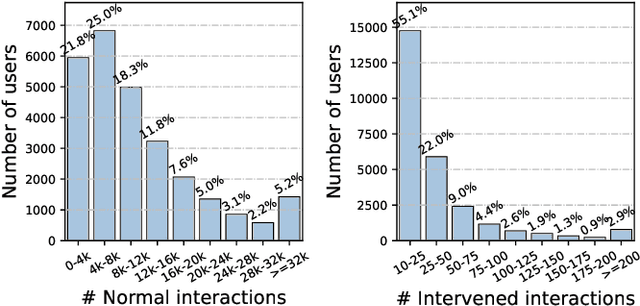

Recommender systems deployed in real-world applications can have inherent exposure bias, which leads to biased logged data that plague researchers. A fundamental way to address this thorny problem is to collect users' interactions on randomly exposed items, i.e., missing-at-random data. A few works have asked certain users to rate or select randomly recommended items, e.g., Yahoo!, Coat, and OpenBandit. However, these datasets are either too small or lack key information, such as unique user IDs or the features of users/items. In this work, we present KuaiRand, an unbiased sequential recommendation dataset containing millions of intervened interactions on randomly exposed videos, collected from the video-sharing mobile App Kuaishou. Different from existing datasets, KuaiRand records 12 kinds of user feedback signals (e.g., click, like, and view time) on randomly exposed videos inserted in the recommendation feeds over two weeks. To facilitate model learning, we further collect rich features of users and items as well as users' behavior history. By releasing this dataset, we enable, for the first time, research on advanced debiasing in large-scale recommendation scenarios. Also, with its distinctive features, KuaiRand can support various other research directions such as interactive recommendation, long sequential behavior modeling, and multi-task learning. The dataset and its news will be available at https://kuairand.com.

iSimLoc: Visual Global Localization for Previously Unseen Environments with Simulated Images

Sep 14, 2022

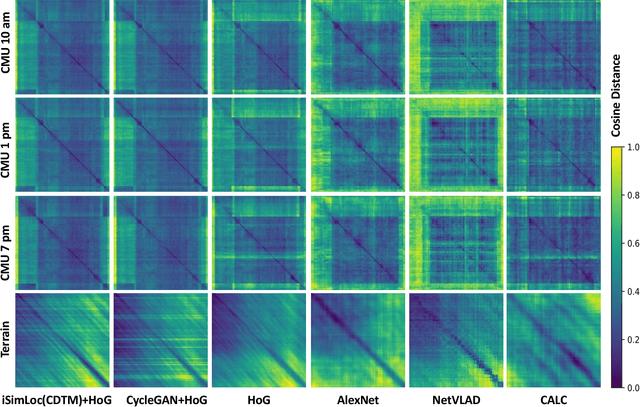

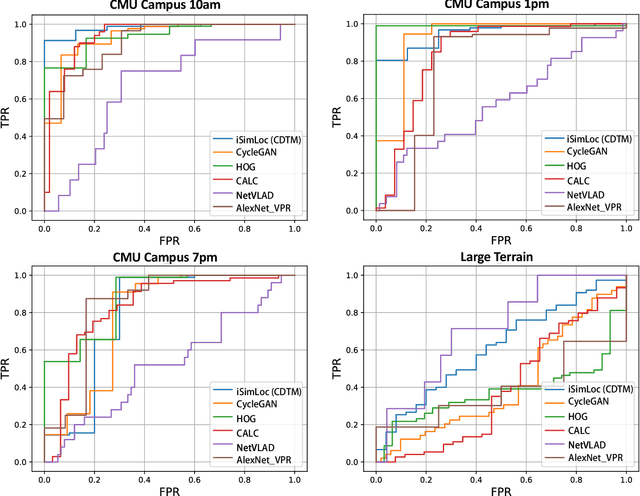

The visual camera is an attractive device for beyond visual line of sight (B-VLOS) drone operation, since cameras are low in size, weight, power, and cost, and can provide a redundant modality in case of GPS failure. However, state-of-the-art visual localization algorithms are unable to match visual data that have a significantly different appearance due to illumination or viewpoint changes. This paper presents iSimLoc, a condition/viewpoint-consistent hierarchical global re-localization approach. The place features of iSimLoc can be used to search for target images under changing appearances and viewpoints. Additionally, our hierarchical global re-localization module refines in a coarse-to-fine manner, allowing iSimLoc to perform fast and accurate estimation. We evaluate our method on one dataset with appearance variations and one dataset that focuses on demonstrating large-scale matching over a long flight in complicated environments. On these two datasets, iSimLoc achieves 88.7% and 83.8% successful retrieval rates with 1.5 s inference time, compared to 45.8% and 39.7% for the next best method. These results demonstrate robust localization in a range of environments.
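The coarse-to-fine idea can be illustrated with a minimal two-stage retrieval sketch. It is not iSimLoc's implementation: the descriptors, the `coarse`/`fine` keys, the cosine similarity, and the shortlist size `k` are all hypothetical stand-ins. A cheap coarse comparison shortlists candidates, and only that shortlist is re-ranked with the finer descriptor, which is what keeps this kind of search fast.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

def coarse_to_fine(query, database, k=2):
    """Return the index of the best-matching database entry.

    Stage 1 (coarse): shortlist the k entries whose coarse descriptors are most similar.
    Stage 2 (fine): re-rank only the shortlist using the fine descriptors.
    """
    ranked = sorted(
        range(len(database)),
        key=lambda i: cosine(query["coarse"], database[i]["coarse"]),
        reverse=True,
    )
    shortlist = ranked[:k]
    return max(shortlist, key=lambda i: cosine(query["fine"], database[i]["fine"]))

# Toy example: entry 2 matches the fine descriptor but is rejected at the coarse stage.
db = [
    {"coarse": [1.0, 0.1], "fine": [1.0, 0.0]},
    {"coarse": [1.0, 0.2], "fine": [0.0, 1.0]},
    {"coarse": [0.0, 1.0], "fine": [0.0, 1.0]},
]
query = {"coarse": [1.0, 0.0], "fine": [0.0, 1.0]}
print(coarse_to_fine(query, db, k=2))  # → 1
```

The fine comparison runs on `k` entries instead of the whole database, so its cost no longer grows with database size once the shortlist size is fixed.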

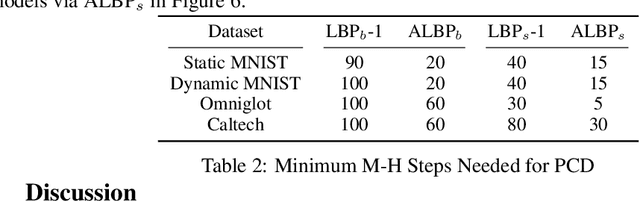

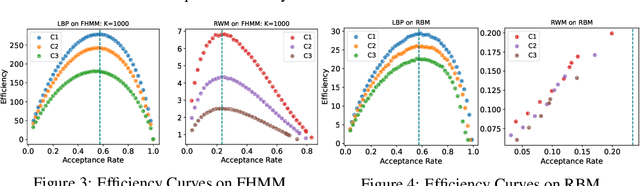

Optimal Scaling for Locally Balanced Proposals in Discrete Spaces

Sep 16, 2022

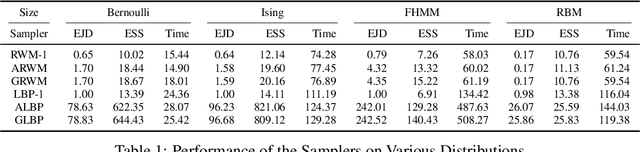

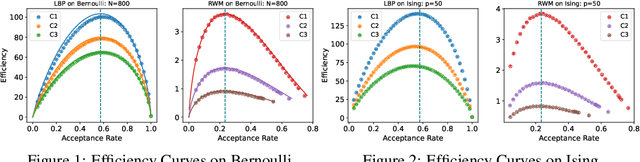

Optimal scaling has been well studied for Metropolis-Hastings (M-H) algorithms in continuous spaces, but a similar understanding has been lacking in discrete spaces. Recently, a family of locally balanced proposals (LBP) for discrete spaces has been proved to be asymptotically optimal, but the question of optimal scaling has remained open. In this paper, we establish, for the first time, that the efficiency of M-H in discrete spaces can also be characterized by an asymptotic acceptance rate that is independent of the target distribution. Moreover, we verify, both theoretically and empirically, that the optimal acceptance rates for LBP and random walk Metropolis (RWM) are $0.574$ and $0.234$, respectively. These results also help establish that LBP is asymptotically $O(N^{2/3})$ more efficient than RWM with respect to model dimension $N$. Knowledge of the optimal acceptance rate allows one to automatically tune the neighborhood size of a proposal distribution in a discrete space, directly analogous to step-size control in continuous spaces. We demonstrate empirically that such adaptive M-H sampling can robustly improve sampling in a variety of target distributions in discrete spaces, including training deep energy-based models.
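A minimal sketch of acceptance-rate-driven tuning in a discrete space, assuming a toy target of independent binary variables (the `log_target` and `adaptive_rwm` names and the windowed update rule are hypothetical). It uses random walk Metropolis rather than LBP: each proposal flips `radius` randomly chosen bits, and during burn-in `radius` is nudged up or down so that the windowed acceptance rate approaches 0.234. The radius is then frozen, since continuing to adapt while collecting samples can bias the chain.

```python
import math
import random

def log_target(x, theta):
    # Hypothetical target: independent binary variables with
    # log pi(x) = sum_i theta_i * x_i + const, so P(x_i = 1) = sigmoid(theta_i).
    return sum(t * xi for t, xi in zip(theta, x))

def adaptive_rwm(theta, n_burn=2000, n_samples=2000, target_rate=0.234,
                 window=100, seed=0):
    rng = random.Random(seed)
    n = len(theta)
    x = [rng.randint(0, 1) for _ in range(n)]
    logp = log_target(x, theta)
    radius = 1          # neighborhood size: number of bits flipped per proposal
    window_acc = 0
    kept, kept_acc = [], 0
    for step in range(n_burn + n_samples):
        # Symmetric proposal: flip `radius` distinct coordinates chosen uniformly.
        y = list(x)
        for i in rng.sample(range(n), radius):
            y[i] = 1 - y[i]
        logp_y = log_target(y, theta)
        # M-H acceptance; the proposal is symmetric, so only the target ratio matters.
        accepted = rng.random() < math.exp(min(0.0, logp_y - logp))
        if accepted:
            x, logp = y, logp_y
        if step < n_burn:
            # Burn-in: every `window` steps, grow the neighborhood if the acceptance
            # rate is above target and shrink it if below.
            window_acc += accepted
            if (step + 1) % window == 0:
                rate = window_acc / window
                if rate > target_rate:
                    radius = min(n, radius + 1)
                elif rate < target_rate:
                    radius = max(1, radius - 1)
                window_acc = 0
        else:
            # Sampling phase: radius is frozen, so this is a standard M-H chain.
            kept.append(list(x))
            kept_acc += accepted
    return radius, kept_acc / n_samples, kept

theta = [1.0] * 20
radius, rate, samples = adaptive_rwm(theta)
mean = sum(map(sum, samples)) / (len(samples) * len(theta))
print(radius, round(rate, 3), round(mean, 2))  # mean should land near sigmoid(1) ≈ 0.73
```

Targeting 0.574 instead would be the analogous choice for an LBP proposal; swapping in that proposal is left out here to keep the sketch short.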

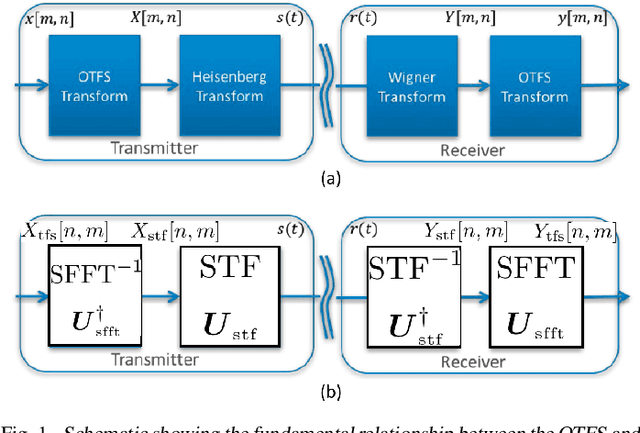

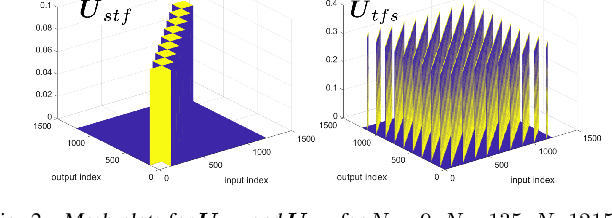

How is Time Frequency Space Modulation Related to Short Time Fourier Signaling?

Sep 13, 2021

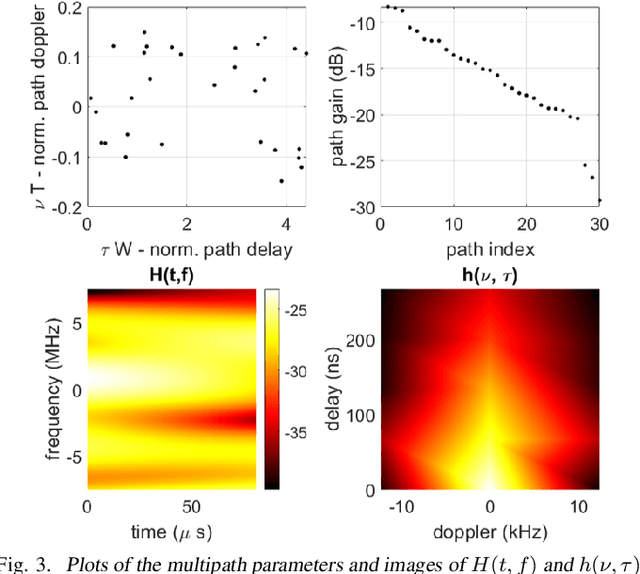

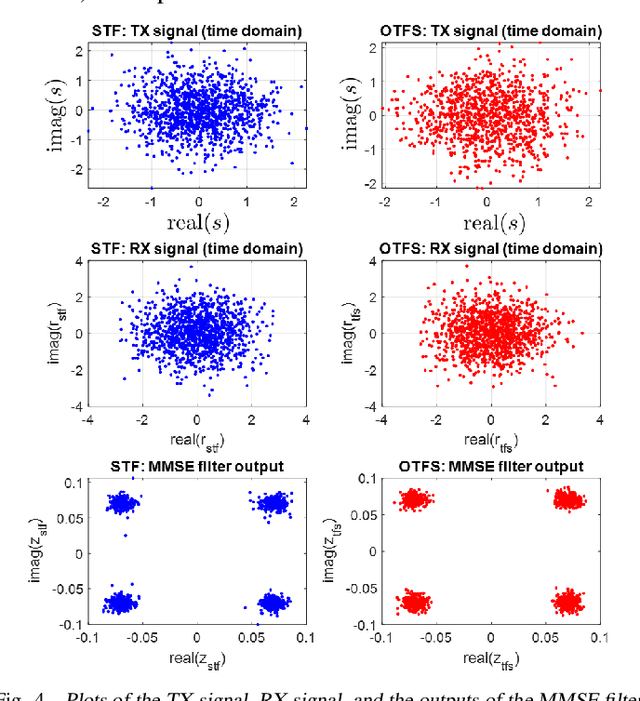

We investigate the relationship between Orthogonal Time Frequency Space (OTFS) modulation and Orthogonal Short Time Fourier (OSTF) signaling. OTFS was recently proposed as a new scheme for high-Doppler scenarios and builds on OSTF. We first show that the two schemes are unitarily equivalent in the digital domain. However, OSTF defines the analog-digital interface with the waveform domain. We then develop a critically sampled matrix-vector model for the two systems and consider linear minimum mean-squared error (MMSE) filtering at the receiver to suppress inter-symbol interference. An initial comparison of capacity and (uncoded) probability of error reveals a surprising observation: OTFS underperforms OSTF in capacity but outperforms it in probability of error. This result can be attributed to characteristics of the channel matrices induced by the two systems. In particular, the diagonal entries of the OTFS matrix exhibit nearly identical magnitudes, whereas those of the OSTF matrix exhibit wild fluctuations induced by multipath randomness. We also observe that simply replacing the unitary matrix relating OTFS to OSTF with an arbitrary unitary matrix results in performance nearly identical to that of OTFS. We then extend our analysis to orthogonal frequency division multiplexing (OFDM) and also consider a more extreme scenario of relatively large delay and Doppler spreads. Our results demonstrate the significance of using OSTF basis waveforms rather than sinusoidal ones in OFDM in highly dynamic environments, and also highlight the impact of the level of channel state information used at the receiver.
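The equalizing effect of a unitary transform on diagonal magnitudes can be checked in a toy setting. The sketch below assumes a purely diagonal channel `D` with random complex gains (a hypothetical simplification, not the paper's channel model) and a unitary DFT `F`. Each diagonal entry of `F D F^H` is $\sum_m |F_{km}|^2 d_m = \frac{1}{N}\sum_m d_m$, so the fluctuating magnitudes become identical, an idealized version of the behavior described for the OTFS matrix.

```python
import cmath
import random

def unitary_dft(n):
    # Normalized DFT matrix: F[k][m] = exp(-2j*pi*k*m/n) / sqrt(n), so F F^H = I.
    scale = 1 / n ** 0.5
    return [[cmath.exp(-2j * cmath.pi * k * m / n) * scale for m in range(n)]
            for k in range(n)]

def diagonal_after_unitary(d, u):
    # Diagonal of U D U^H for D = diag(d): entry k is sum_m |U[k][m]|^2 * d[m].
    n = len(d)
    return [sum(u[k][m] * d[m] * u[k][m].conjugate() for m in range(n))
            for k in range(n)]

rng = random.Random(1)
n = 8
# Hypothetical toy gains with strongly fluctuating magnitudes.
d = [complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(n)]
spread = diagonal_after_unitary(d, unitary_dft(n))
print([round(abs(g), 2) for g in d])       # fluctuating magnitudes
print([round(abs(g), 2) for g in spread])  # every entry equals |mean(d)|
```

Any unitary `U` whose entries all have magnitude $1/\sqrt{N}$ gives the same averaging; a general unitary matrix only approximately does so.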

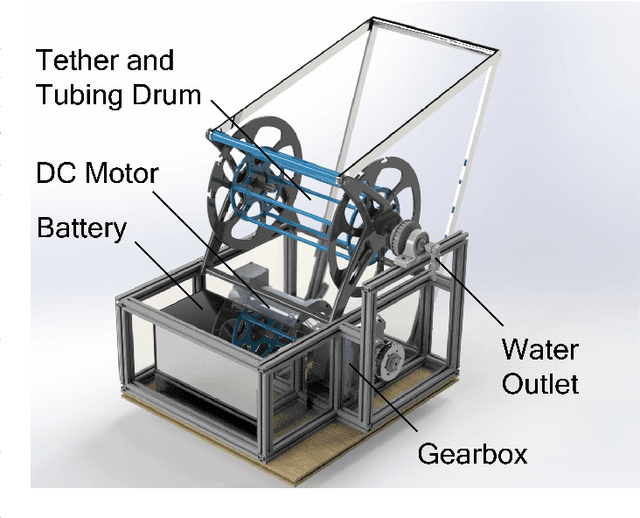

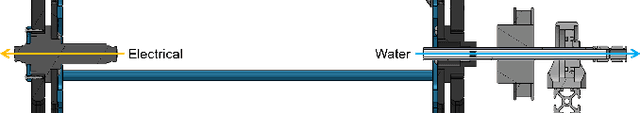

Enabling Under-Ice Geochemical Observations with a Size, Weight, and Power-Constrained Robot

Sep 10, 2022

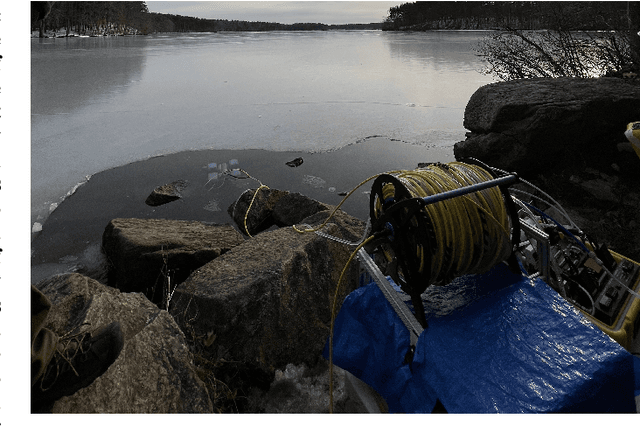

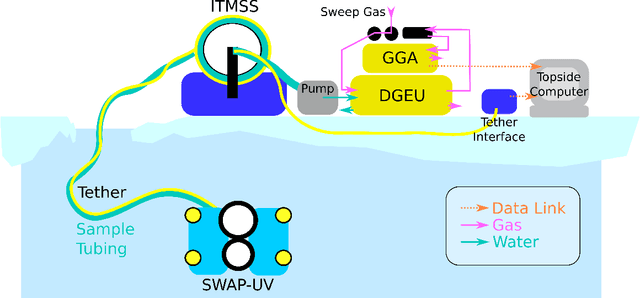

Estimates of greenhouse gas emissions from Arctic estuarine environments are dominated by in situ summertime ice-free dissolved gas measurements, owing to the logistical ease of performing field observations in these conditions. Recent evidence in coastal Arctic environments, however, has demonstrated that dissolved methane (CH4) and carbon dioxide (CO2) are strongly seasonally variable, and that at least one significant gas ventilation event occurs during the spring freshet. Whether the Arctic serves as a source or sink of greenhouse emissions has significant implications for modeling climate change and its feedback mechanisms. To enable higher-resolution spatiotemporal measurements of dissolved gases in typically undersampled conditions, remotely operated vehicles (ROVs) can be used to extract near-continuous water samples below ice before and during the spring freshet. Here, we present a size, weight, and power-constrained (SWAP) underwater vehicle (UV) and a novel geochemical sampling system suitable for taking under-ice geochemical observations, and we demonstrate the proposed system in a field-analog setting for Arctic estuarine studies.

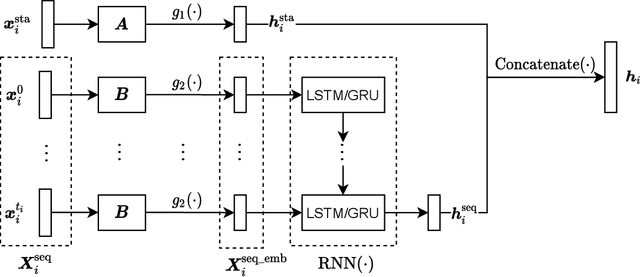

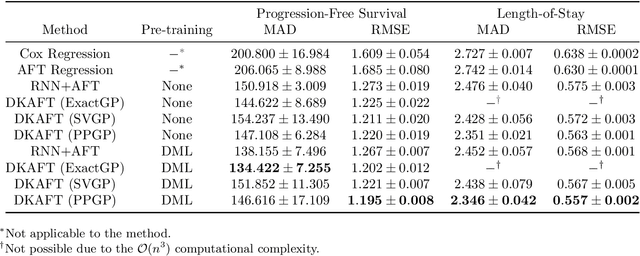

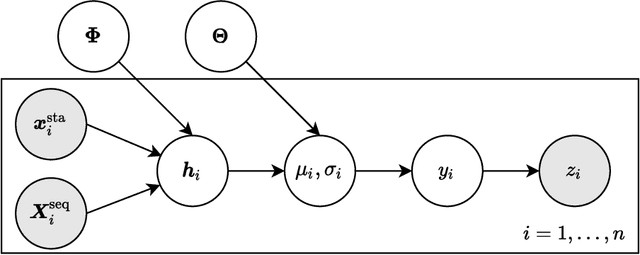

Uncertainty-Aware Time-to-Event Prediction using Deep Kernel Accelerated Failure Time Models

Jul 26, 2021

Recurrent-neural-network-based solutions are increasingly being used in the analysis of longitudinal Electronic Health Record data. However, most works focus on prediction accuracy and neglect prediction uncertainty. We propose Deep Kernel Accelerated Failure Time models for the time-to-event prediction task, enabling uncertainty-awareness of the prediction through a pipeline of a recurrent neural network and a sparse Gaussian Process. Furthermore, a deep-metric-learning-based pre-training step is adapted to enhance the proposed model. Our model shows better point-estimate performance than recurrent-neural-network-based baselines in experiments on two real-world datasets. More importantly, the predictive variance from our model can be used to quantify the uncertainty of the time-to-event prediction: our model delivers better performance when it is more confident in its prediction. Compared to related methods, such as Monte Carlo Dropout, our model offers better uncertainty estimates by leveraging an analytical solution and is more computationally efficient.
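An accelerated failure time model writes log time-to-event as a predicted location plus noise. The sketch below assumes a Gaussian predictive distribution on log time with mean `mu` and standard deviation `sigma` (for example, taken from a GP posterior); it shows the right-censored negative log-likelihood of such a log-normal AFT model and how the predictive spread becomes an interval on the time scale. Function names are hypothetical, and neither the recurrent network nor the sparse GP is included.

```python
import math

def normal_logpdf(z):
    return -0.5 * z * z - 0.5 * math.log(2 * math.pi)

def normal_logsf(z):
    # log P(Z > z) for a standard normal Z (underflows for very large z; fine for a sketch).
    return math.log(0.5 * math.erfc(z / math.sqrt(2)))

def lognormal_aft_nll(times, events, mu, sigma):
    """Negative log-likelihood of log T = mu + sigma * eps, eps ~ N(0, 1).

    events[i] = 1 if the event was observed at times[i], 0 if right-censored there.
    """
    nll = 0.0
    for t, e, m, s in zip(times, events, mu, sigma):
        z = (math.log(t) - m) / s
        if e:
            # Observed event: density of T at t, f(t) = phi(z) / (s * t).
            nll -= normal_logpdf(z) - math.log(s) - math.log(t)
        else:
            # Censored: only know T > t, so use the survival probability.
            nll -= normal_logsf(z)
    return nll

def predictive_interval(mu, sigma, z=1.96):
    # Median and approximate 95% interval of T under a log-normal predictive.
    return math.exp(mu), math.exp(mu - z * sigma), math.exp(mu + z * sigma)

times = [2.0, 5.0, 3.0]
events = [1, 0, 1]          # the second subject is right-censored
mu = [0.8, 1.5, 1.0]
sigma = [0.5, 0.5, 0.5]
print(lognormal_aft_nll(times, events, mu, sigma))
print(predictive_interval(1.0, 0.2))
print(predictive_interval(1.0, 0.8))  # larger sigma: wider interval, i.e. less confident
```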

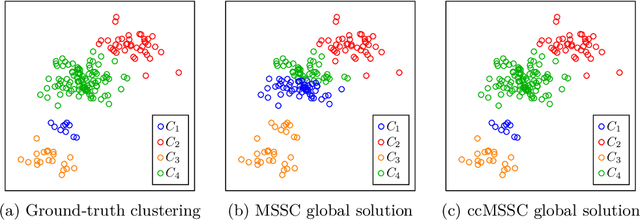

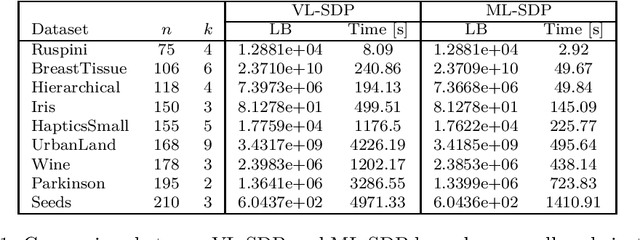

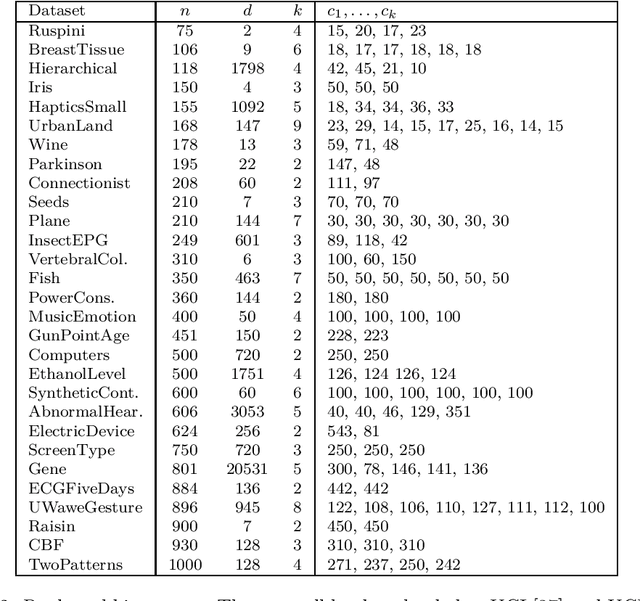

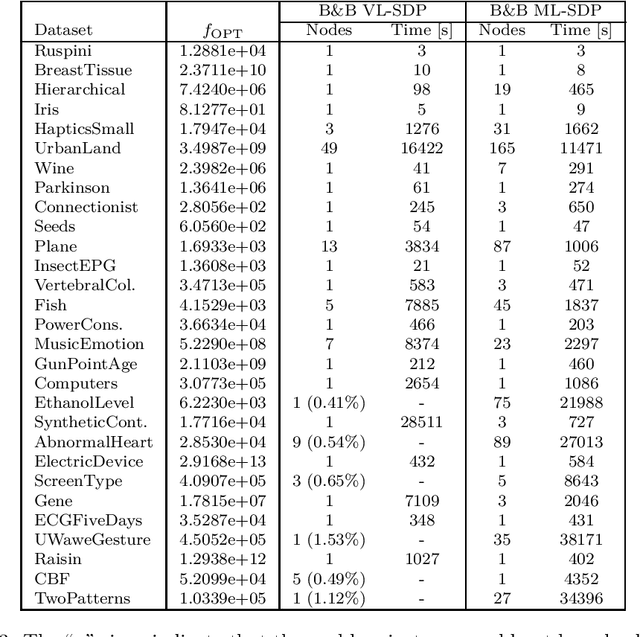

Global Optimization for Cardinality-constrained Minimum Sum-of-Squares Clustering via Semidefinite Programming

Sep 19, 2022

The minimum sum-of-squares clustering (MSSC), or k-means-type clustering, has recently been extended to exploit prior knowledge on the cardinality of each cluster. Such knowledge is used to increase performance as well as solution quality. In this paper, we propose an exact approach based on the branch-and-cut technique to solve the cardinality-constrained MSSC. For the lower bound routine, we use the semidefinite programming (SDP) relaxation recently proposed by Rujeerapaiboon et al. [SIAM J. Optim. 29(2), 1211-1239, (2019)]. However, this relaxation can be used in a branch-and-cut method only for small instances. Therefore, we derive a new SDP relaxation that scales better with the instance size and the number of clusters. In both cases, we strengthen the bound by adding polyhedral cuts. Benefiting from a tailored branching strategy that enforces pairwise constraints, we reduce the complexity of the problems arising in the child nodes. For the upper bound, instead, we present a local search procedure that exploits the solution of the SDP relaxation solved at each node. Computational results show that the proposed algorithm globally solves, for the first time, real-world instances 10 times larger than those solved by state-of-the-art exact methods.
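To make the problem statement concrete, the sketch below computes the MSSC objective and solves tiny cardinality-constrained instances exactly by exhaustive enumeration. This brute-force search is an illustrative stand-in, not the branch-and-cut/SDP method of the paper; it is exponential in the number of points, which is why exact methods for realistic sizes need strong relaxations. `sizes[c]` is a hypothetical name for the required cardinality of cluster `c`.

```python
from itertools import product

def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def mssc_cost(points, labels, k):
    """Sum of squared distances from each point to its cluster centroid."""
    cost = 0.0
    for c in range(k):
        members = [p for p, lab in zip(points, labels) if lab == c]
        if not members:
            continue
        dim = len(members[0])
        centroid = [sum(p[j] for p in members) / len(members) for j in range(dim)]
        cost += sum(sq_dist(p, centroid) for p in members)
    return cost

def brute_force_cc_mssc(points, sizes):
    """Exact cardinality-constrained MSSC by enumerating all labelings.

    Exponential in len(points); only usable as a reference on tiny instances.
    """
    k = len(sizes)
    best_cost, best_labels = float("inf"), None
    for labels in product(range(k), repeat=len(points)):
        # Keep only labelings where cluster c has exactly sizes[c] points.
        if any(labels.count(c) != sizes[c] for c in range(k)):
            continue
        cost = mssc_cost(points, labels, k)
        if cost < best_cost:
            best_cost, best_labels = cost, labels
    return best_cost, best_labels

points = [(0.0,), (1.0,), (2.0,), (10.0,)]
print(brute_force_cc_mssc(points, [3, 1]))  # → (2.0, (0, 0, 0, 1))
print(brute_force_cc_mssc(points, [2, 2]))  # → (32.5, (0, 0, 1, 1))
```

The second call shows the constraint at work: requiring two points per cluster forces the point at 2.0 to join the point at 10.0, even though the unconstrained optimum would group it with 0.0 and 1.0.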

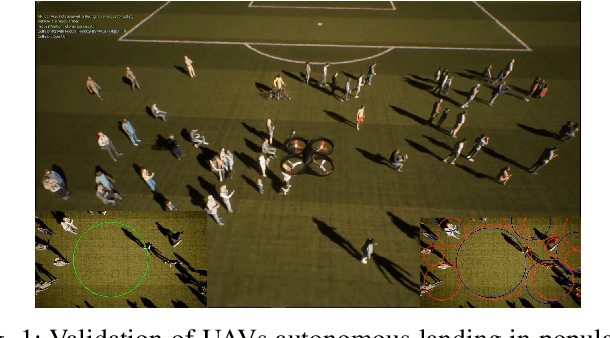

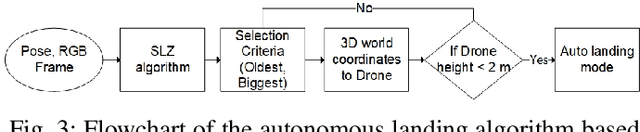

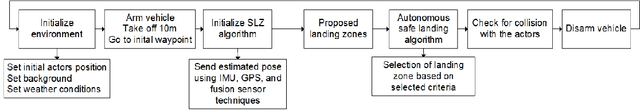

Visual-based Safe Landing for UAVs in Populated Areas: Real-time Validation in Virtual Environments

Mar 25, 2022

Safe autonomous landing for Unmanned Aerial Vehicles (UAVs) in populated areas is a crucial aspect of successful urban deployment, particularly in emergency landing situations. Nonetheless, validating autonomous landing in real scenarios is a challenging task involving a high risk of injuring people. In this work, we propose a framework for real-time, safe, and thorough evaluation of vision-based autonomous landing in populated scenarios, using photo-realistic virtual environments. We propose to use the Unreal graphics engine coupled with the AirSim plugin for drone simulation, and evaluate autonomous landing strategies based on visual detection of Safe Landing Zones (SLZ) in populated scenarios. Then, we study two different criteria for selecting the "best" SLZ, and evaluate them during autonomous landing of a virtual drone in different scenarios and conditions, under different distributions of people in urban scenes, including moving people. We evaluate different metrics to quantify the performance of the landing strategies, establishing a baseline for comparison with future works in this challenging task, and analyze them over a large number of randomized iterations. The study suggests that the use of the autonomous landing algorithms considerably helps prevent accidents involving humans, which may help unleash the full potential of drones in urban environments near people.

Deterministic and Stochastic Analysis of Deep Reinforcement Learning for Low Dimensional Sensing-based Navigation of Mobile Robots

Sep 13, 2022

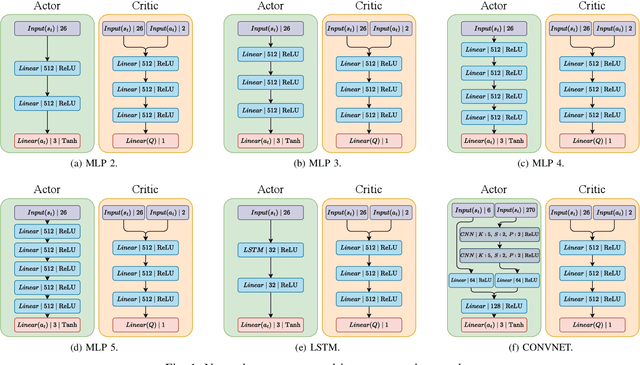

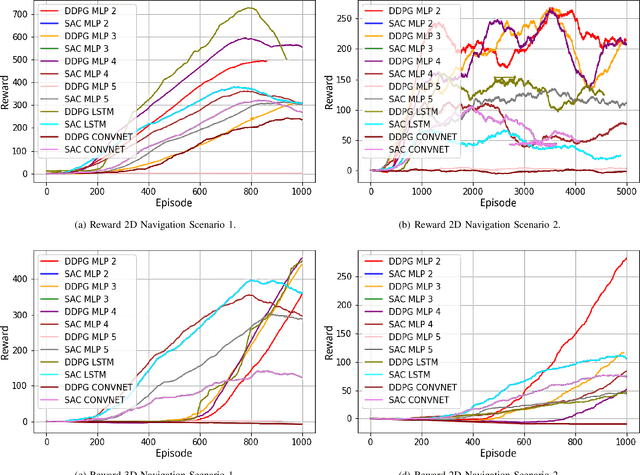

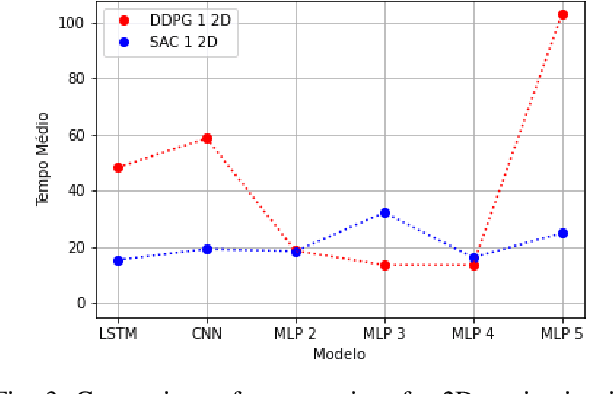

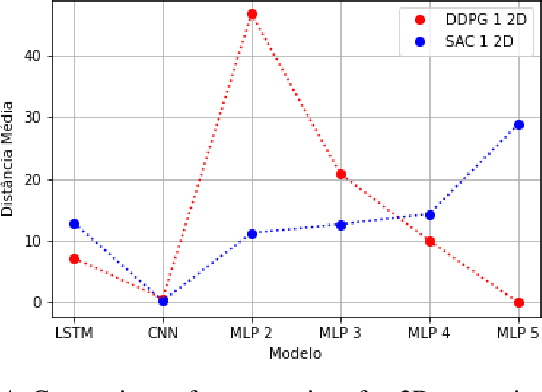

Deterministic and stochastic techniques in Deep Reinforcement Learning (Deep-RL) have become a promising solution for improving motion control and decision-making for a wide variety of robots. Previous works showed that these Deep-RL algorithms can be applied to mapless navigation of mobile robots in general. However, they tend to use simple sensing strategies, since these algorithms have been shown to perform poorly with high-dimensional state spaces, such as those yielded by image-based sensing. This paper presents a comparative analysis of two Deep-RL techniques, Deep Deterministic Policy Gradients (DDPG) and Soft Actor-Critic (SAC), when performing mapless navigation tasks for mobile robots. We aim to contribute by showing how the neural network architecture influences the learning itself, presenting quantitative results based on the time and distance of navigation of aerial mobile robots for each approach. Overall, our analysis of six distinct architectures highlights that the stochastic approach (SAC) is better suited to deeper architectures, while the opposite holds for the deterministic approach (DDPG).
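The deterministic/stochastic distinction can be sketched at the level of a single scalar action in [-1, 1]. This assumes the common tanh-squashed Gaussian parameterization for SAC and a tanh output plus external Gaussian exploration noise for DDPG; the network outputs `mu` and `sigma` are taken as given, and the function names are hypothetical. DDPG returns the same action for the same input, while SAC samples an action and also returns its log-probability, corrected for the tanh change of variables.

```python
import math
import random

LOG_2PI = math.log(2 * math.pi)

def softplus(x):
    # Numerically stable log(1 + exp(x)).
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def ddpg_action(mu, noise_std=0.0, rng=None):
    # Deterministic policy: the action is a fixed function of the actor output mu.
    # Exploration noise is added externally, and only when requested.
    a = math.tanh(mu)
    if noise_std > 0.0 and rng is not None:
        a = max(-1.0, min(1.0, a + rng.gauss(0.0, noise_std)))
    return a

def squashed_gaussian_log_prob(u, mu, sigma):
    # log pi(a) for a = tanh(u), u ~ N(mu, sigma^2), using the stable identity
    # log(1 - tanh(u)^2) = 2 * (log 2 - u - softplus(-2u)).
    log_normal = -0.5 * ((u - mu) / sigma) ** 2 - math.log(sigma) - 0.5 * LOG_2PI
    return log_normal - 2.0 * (math.log(2.0) - u - softplus(-2.0 * u))

def sac_action(mu, sigma, rng):
    # Stochastic policy: sample the pre-squash value, squash it, and return its
    # log-probability (SAC uses this in its entropy term).
    u = rng.gauss(mu, sigma)
    return math.tanh(u), squashed_gaussian_log_prob(u, mu, sigma)

rng = random.Random(0)
print(ddpg_action(0.5), ddpg_action(0.5))  # identical every call
print(sac_action(0.5, 0.3, rng))           # a fresh sample and its log-probability
```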

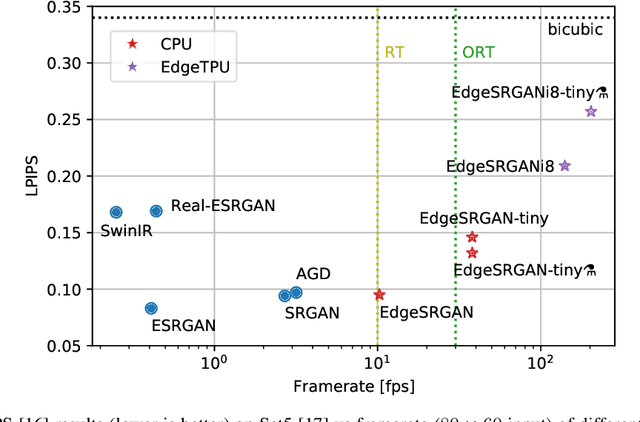

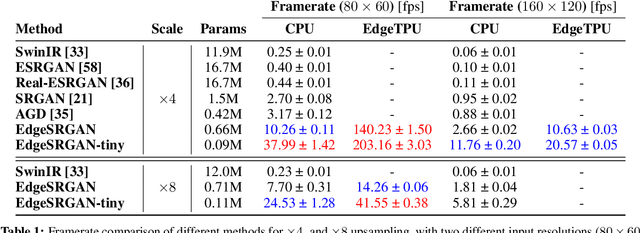

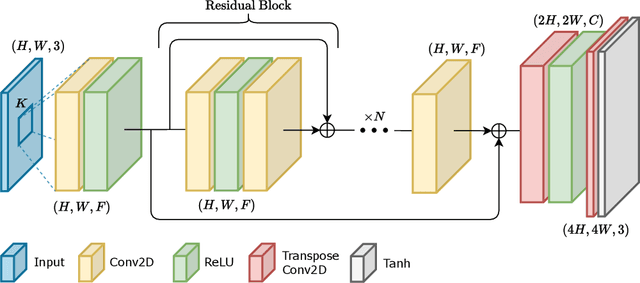

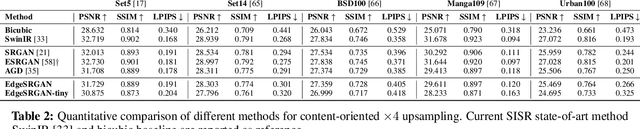

Generative Adversarial Super-Resolution at the Edge with Knowledge Distillation

Sep 07, 2022

Single-Image Super-Resolution can support robotic tasks in environments where a reliable visual stream is required to monitor the mission, handle teleoperation, or study relevant visual details. In this work, we propose an efficient Generative Adversarial Network model for real-time Super-Resolution. We adopt a tailored architecture of the original SRGAN and model quantization to boost execution on CPU and Edge TPU devices, achieving up to 200 fps inference. We further optimize our model by distilling its knowledge to a smaller version of the network and obtain remarkable improvements compared to the standard training approach. Our experiments show that our fast and lightweight model preserves satisfactory image quality compared to heavier state-of-the-art models. Finally, we conduct experiments on image transmission with bandwidth degradation to highlight the advantages of the proposed system for mobile robotic applications.
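The abstract does not specify the distillation objective, so the following is only a sketch of one common choice: the student's super-resolved output is pulled toward both the ground-truth high-resolution image and the frozen teacher's output. The pixel-wise L1 loss, the weight `alpha`, and the flattened-list images are assumptions, and the adversarial (GAN) term is omitted.

```python
def l1(a, b):
    # Mean absolute error between two equal-length flattened images.
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

def distillation_loss(student_sr, teacher_sr, hr, alpha=0.5):
    # Hypothetical weighting: alpha on the ground-truth HR image,
    # (1 - alpha) on the frozen teacher's super-resolved output.
    return alpha * l1(student_sr, hr) + (1 - alpha) * l1(student_sr, teacher_sr)

student = [0.0, 0.0]
teacher = [1.0, 1.0]
hr = [2.0, 2.0]
print(distillation_loss(student, teacher, hr))  # → 1.5
```

Setting `alpha=1.0` recovers ordinary supervised training of the small network, which is the kind of baseline a distilled student would be compared against.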
