"Time": models, code, and papers

Real-Time Risk-Bounded Tube-Based Trajectory Safety Verification

Oct 01, 2021

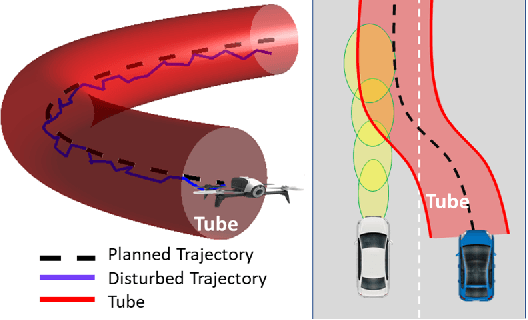

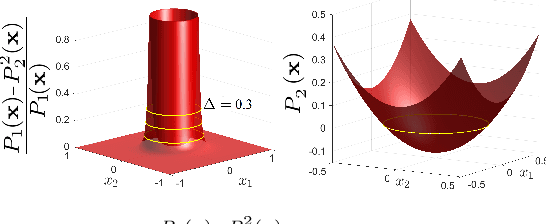

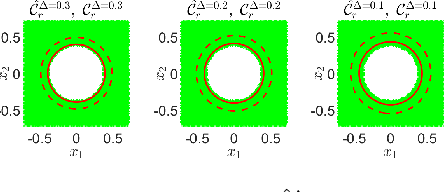

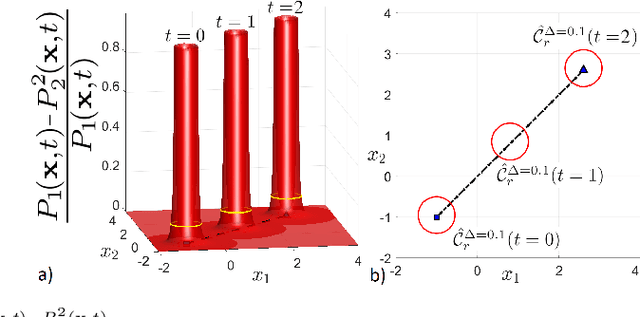

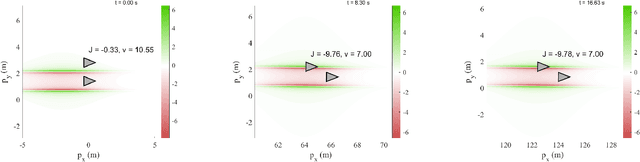

In this paper, we address the real-time risk-bounded safety verification problem of continuous-time state trajectories of autonomous systems in the presence of uncertain time-varying nonlinear safety constraints. Risk is defined as the probability of not satisfying the uncertain safety constraints. Existing approaches to safety verification under uncertainty are either limited to particular classes of uncertainties and safety constraints, e.g., Gaussian uncertainties and linear constraints, or rely on sampling-based methods. We provide a fast convex algorithm that evaluates the probabilistic nonlinear safety constraints in real time for arbitrary probability distributions and long planning horizons, without the need for uncertainty samples or time discretization. The approach verifies the safety of the given state trajectory and its neighborhood (tube) to account for execution uncertainties and risk. We first use the moments of the probability distributions of the uncertainties to transform the probabilistic safety constraints into a set of deterministic safety constraints. We then use convex methods based on sum-of-squares polynomials to verify the obtained deterministic safety constraints over the entire planning time horizon without time discretization. To illustrate the performance of the proposed method, we apply it to the safety verification problem of self-driving vehicles and autonomous aerial vehicles.
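
As a rough illustration of how moments can turn a probabilistic constraint into a deterministic one, the sketch below uses Cantelli's inequality, which needs only the mean and variance of a safety margin `g` (safe when `g > 0`). This is one standard moment-based bound chosen for illustration; the abstract does not state which bound the paper uses, and the function names are hypothetical.

```python
def cantelli_risk_bound(mean: float, variance: float) -> float:
    """Upper bound on P(g <= 0) for a random safety margin g.

    Cantelli's inequality gives P(g - mean <= -lam) <= var / (var + lam^2)
    for lam > 0. Choosing lam = mean (valid only when mean > 0) bounds the
    probability that the margin is non-positive.
    """
    if variance < 0:
        raise ValueError("variance must be non-negative")
    if mean <= 0:
        # The inequality gives no information here; fall back to the trivial bound.
        return 1.0
    return variance / (variance + mean ** 2)


def is_risk_bounded(mean: float, variance: float, max_risk: float) -> bool:
    """Deterministic check that implies P(g <= 0) <= max_risk."""
    return cantelli_risk_bound(mean, variance) <= max_risk


if __name__ == "__main__":
    # Hypothetical margin statistics at one point on a trajectory.
    print(cantelli_risk_bound(3.0, 1.0))
    print(is_risk_bounded(3.0, 1.0, max_risk=0.15))
```

The check is conservative: it can reject a safe point, but it holds for any distribution with the given mean and variance, which is why no samples are needed.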

A Constraint-Driven Approach to Line Flocking: The V Formation as an Energy-Saving Strategy

Sep 23, 2022

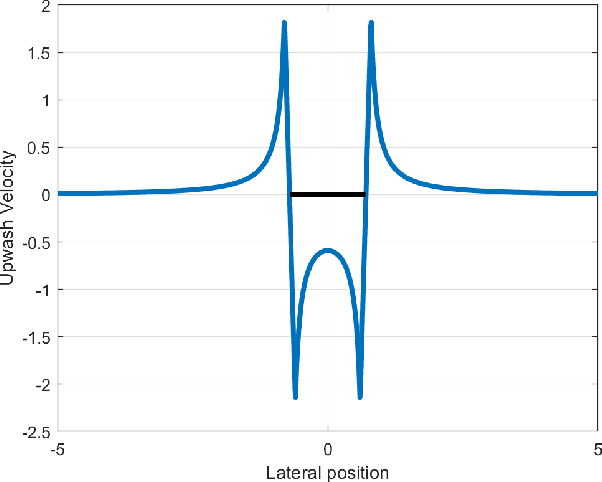

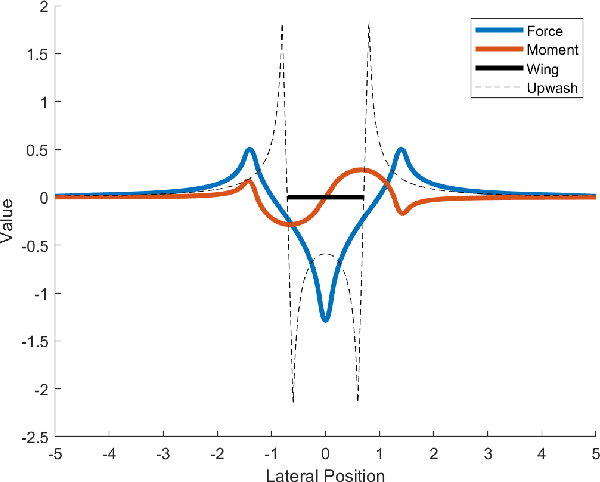

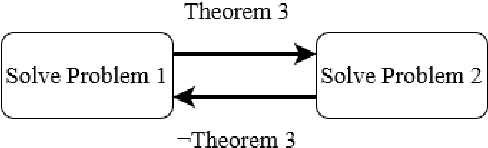

The study of robotic flocking has received significant attention in the past twenty years. In this article, we present a constraint-driven control algorithm that minimizes the energy consumption of individual agents and yields an emergent V formation. As the formation emerges from the decentralized interaction between agents, our approach is robust to the spontaneous addition or removal of agents to the system. First, we present an analytical model for the trailing upwash behind a fixed-wing UAV, and we derive the optimal air speed for trailing UAVs to maximize their travel endurance. Next, we prove that simply flying at the optimal airspeed will never lead to emergent flocking behavior, and we propose a new decentralized "anseroid" behavior that yields emergent V formations. We encode these behaviors in a constraint-driven control algorithm that minimizes the locomotive power of each UAV. Finally, we prove that UAVs initialized in an approximate V or echelon formation will converge under our proposed control law, and we demonstrate this emergence occurs in real-time in simulation and in physical experiments with a fleet of Crazyflie quadrotors.

Multi-fidelity surrogate modeling using long short-term memory networks

Aug 05, 2022

When evaluating quantities of interest that depend on the solutions to differential equations, we inevitably face the trade-off between accuracy and efficiency. Especially for parametrized, time-dependent problems in engineering computations, it is often the case that acceptable computational budgets limit the availability of high-fidelity, accurate simulation data. Multi-fidelity surrogate modeling has emerged as an effective strategy to overcome this difficulty. Its key idea is to leverage abundant low-fidelity simulation data, which are less accurate but much faster to compute, to improve the approximations obtained with limited high-fidelity data. In this work, we introduce a novel data-driven framework of multi-fidelity surrogate modeling for parametrized, time-dependent problems using long short-term memory (LSTM) networks, to enhance output predictions both for unseen parameter values and forward in time simultaneously - a task known to be particularly challenging for data-driven models. We demonstrate the wide applicability of the proposed approaches in a variety of engineering problems with high- and low-fidelity data generated through fine versus coarse meshes, small versus large time steps, or finite element full-order versus deep learning reduced-order models. Numerical results show that the proposed multi-fidelity LSTM networks not only improve single-fidelity regression significantly, but also outperform the multi-fidelity models based on feed-forward neural networks.

Generative Adversarial Networks for the fast simulation of the Time Projection Chamber responses at the MPD detector

Mar 30, 2022

Detailed detector simulation models are vital for the successful operation of modern high-energy physics experiments. In most cases, such detailed models require a significant amount of computing resources to run. Often this cost cannot be afforded, and less resource-intensive approaches are desired. In this work, we demonstrate the applicability of Generative Adversarial Networks (GANs) as the basis for such fast-simulation models for the case of the Time Projection Chamber (TPC) at the MPD detector at the NICA accelerator complex. Our prototype GAN-based model of the TPC runs more than an order of magnitude faster than the detailed simulation without any noticeable drop in the quality of the high-level reconstruction characteristics for the generated data. Approaches with direct and indirect quality metrics optimization are compared.

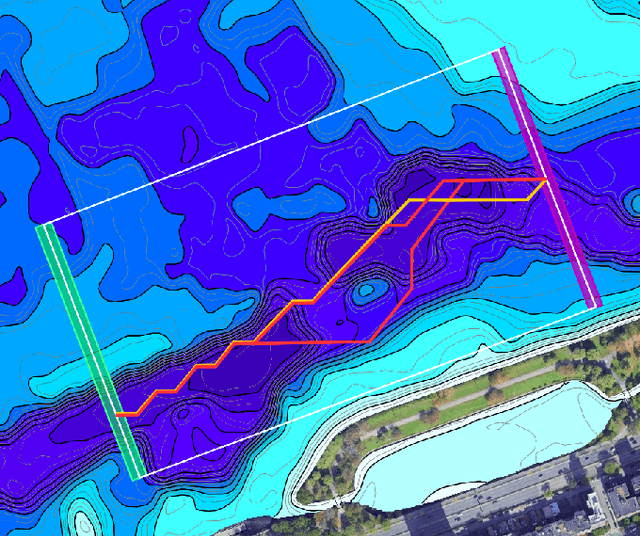

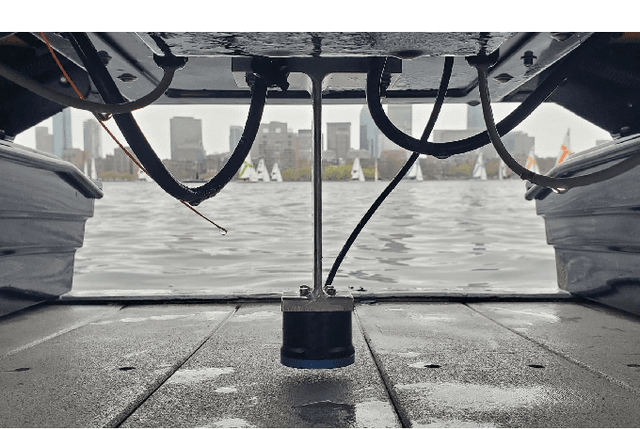

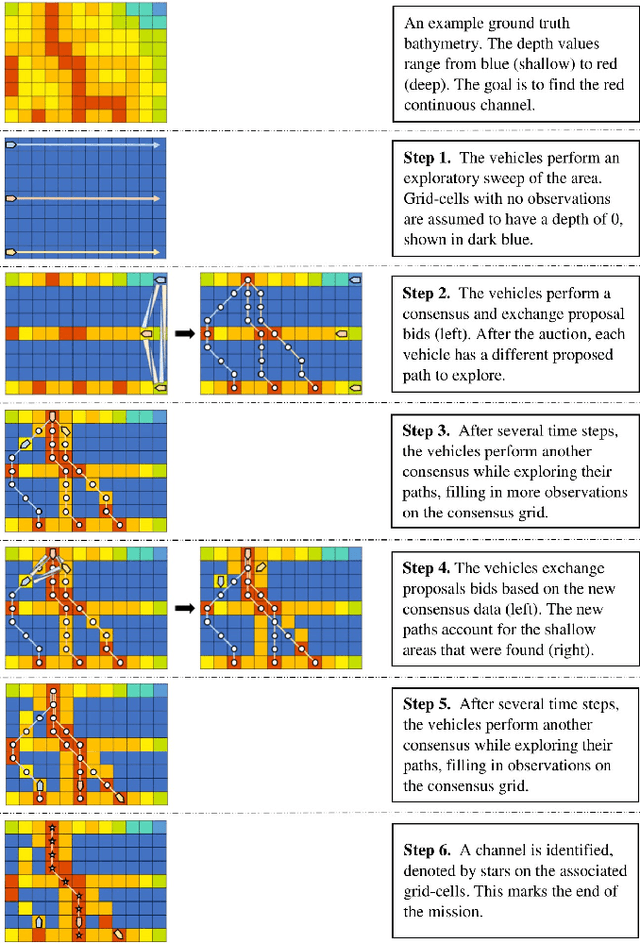

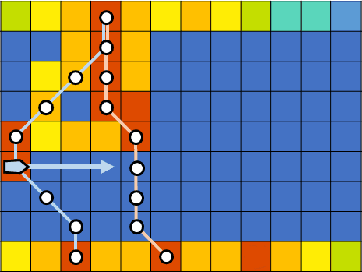

Adaptive and Collaborative Bathymetric Channel-Finding Approach for Multiple Autonomous Marine Vehicles

Sep 20, 2022

This paper reports an investigation into the problem of rapid identification of a channel that crosses a body of water using one or more Unmanned Surface Vehicles (USV). A new algorithm called Proposal Based Adaptive Channel Search (PBACS) is presented as a potential solution that improves upon current methods. The empirical performance of PBACS is compared to lawnmower surveying and to Markov decision process (MDP) planning with two state-of-the-art reward functions: Upper Confidence Bound (UCB) and Maximum Value Information (MVI). The performance of each method is evaluated through comparison of the time it takes to identify a continuous channel through an area, using one, two, three, or four USVs. The performance of each method is compared across ten simulated bathymetry scenarios and one field area, each with different channel layouts. The results from simulations and field trials indicate that on average multi-vehicle PBACS outperforms lawnmower, UCB, and MVI based methods, especially when at least three vehicles are used.
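
For readers unfamiliar with the UCB baseline mentioned above, the sketch below shows the generic Upper Confidence Bound idea: score each candidate by its predicted value plus a multiple of its uncertainty, then pick the best score. The cell values, the `kappa` weight, and the function names are illustrative assumptions; the abstract does not specify how the reward is defined in the compared planners.

```python
from typing import Sequence


def ucb_score(mean: float, std: float, kappa: float = 2.0) -> float:
    """Generic UCB score: predicted value plus an exploration bonus."""
    return mean + kappa * std


def pick_next_cell(means: Sequence[float], stds: Sequence[float],
                   kappa: float = 2.0) -> int:
    """Return the index of the candidate cell with the highest UCB score."""
    if len(means) != len(stds) or len(means) == 0:
        raise ValueError("means and stds must be non-empty and of equal length")
    scores = [ucb_score(m, s, kappa) for m, s in zip(means, stds)]
    return max(range(len(scores)), key=scores.__getitem__)


if __name__ == "__main__":
    # Hypothetical predicted depths (m) and their standard deviations.
    depth_mean = [1.0, 0.5, 0.9]
    depth_std = [0.0, 0.4, 0.1]
    print(pick_next_cell(depth_mean, depth_std))             # favors the uncertain cell
    print(pick_next_cell(depth_mean, depth_std, kappa=0.0))  # pure exploitation
```

A larger `kappa` pushes the vehicle toward poorly surveyed cells, while `kappa = 0` reduces the rule to greedy selection on the current estimate.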

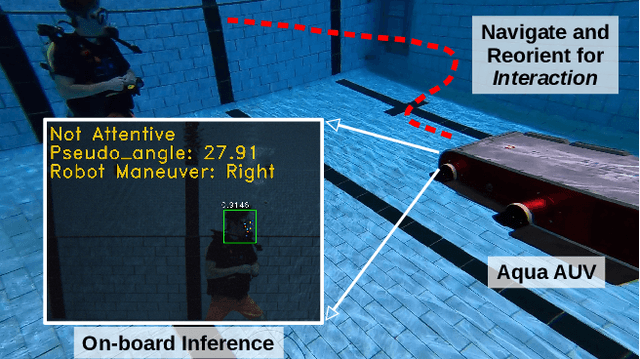

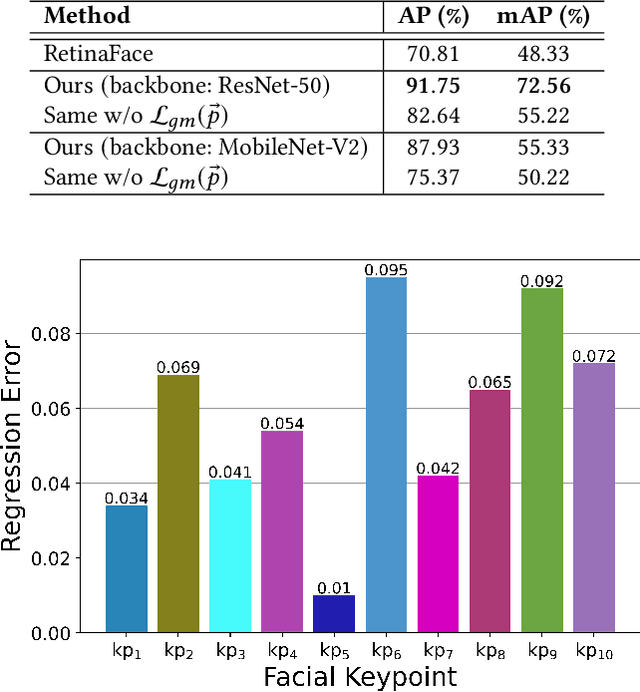

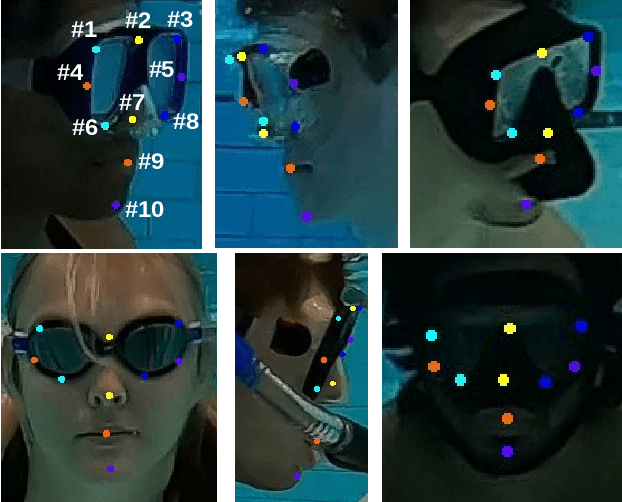

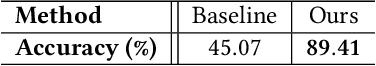

Visual Detection of Diver Attentiveness for Underwater Human-Robot Interaction

Sep 28, 2022

Many underwater tasks, such as cable-and-wreckage inspection and search-and-rescue, benefit from robust human-robot interaction (HRI) capabilities. With the recent advancements in vision-based underwater HRI methods, autonomous underwater vehicles (AUVs) can communicate with their human partners even during a mission. However, these interactions usually require active participation, especially from humans (e.g., one must keep looking at the robot during an interaction). Therefore, an AUV must know when to start interacting with a human partner, i.e., whether or not the human is paying attention to the AUV. In this paper, we present a diver attention estimation framework for AUVs to autonomously detect the attentiveness of a diver and then navigate and reorient itself, if required, with respect to the diver to initiate an interaction. The core element of the framework is a deep neural network (called DATT-Net) which exploits the geometric relation among 10 facial keypoints of the divers to determine their head orientation. Our on-the-bench experimental evaluations (using unseen data) demonstrate that the proposed DATT-Net architecture can determine the attentiveness of human divers with promising accuracy. Our real-world experiments also confirm the efficacy of DATT-Net, which enables real-time inference and allows the AUV to position itself for an AUV-diver interaction.

Orthogonal Delay Scale Space Modulation: A New Technique for Wideband Time-Varying Channels

Nov 21, 2021

Orthogonal Time Frequency Space (OTFS) modulation is a recently proposed scheme for time-varying narrowband channels in terrestrial radio-frequency communications. Underwater acoustic (UWA) and ultra-wideband (UWB) communication systems, on the other hand, confront wideband time-varying channels. Unlike narrowband channels, for which time contractions or dilations due to the Doppler effect can be approximated by frequency shifts, the Doppler effect in wideband channels results in a frequency-dependent, non-uniform shift of signal frequencies across the band. In this paper, we develop an OTFS-like modulation scheme -- Orthogonal Delay Scale Space (ODSS) modulation -- for handling wideband time-varying channels. We derive the ODSS transmission and reception schemes from first principles. In the process, we introduce the notion of $\omega$-convolution in the delay-scale space that parallels the twisted convolution used in the time-frequency space. The preprocessing 2D transformation from the Fourier-Mellin domain to the delay-scale space in ODSS, which plays the role of the inverse symplectic finite Fourier transform (ISFFT) in OTFS, improves the bit error rate performance compared to OTFS and Orthogonal Frequency Division Multiplexing (OFDM) in wideband time-varying channels. Furthermore, since the channel matrix is rendered near-diagonal, ODSS retains the advantage of OFDM in terms of its low-complexity receiver structure.
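
For reference, the two single-path Doppler models contrasted in this abstract can be written as follows. The symbols $h$ (path gain), $\tau$ (delay), $\nu$ (Doppler shift), $\alpha$ (time-scale factor), and $f_c$ (carrier frequency) are standard textbook notation assumed here; they are not taken from the abstract.

$$
r_{\mathrm{NB}}(t) = h\, s(t-\tau)\, e^{j 2\pi \nu t},
\qquad
r_{\mathrm{WB}}(t) = h \sqrt{\alpha}\, s\big(\alpha (t-\tau)\big).
$$

Under time scaling, a spectral component at frequency $f$ moves to $\alpha f$, that is, it is shifted by $(\alpha - 1) f$, which grows with $f$. The narrowband model replaces this frequency-dependent shift with the single constant $\nu \approx (\alpha - 1) f_c$, an approximation that is reasonable only when the bandwidth is small relative to $f_c$.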

Continual Transfer Learning for Cross-Domain Click-Through Rate Prediction at Taobao

Aug 11, 2022

As one of the largest e-commerce platforms in the world, Taobao's recommendation systems (RSs) serve the shopping demands of hundreds of millions of customers. Click-Through Rate (CTR) prediction is a core component of the RS. A key characteristic of CTR prediction at Taobao is that there exist multiple recommendation domains whose scales vary significantly. Therefore, it is crucial to perform cross-domain CTR prediction to transfer knowledge from large domains to small domains to alleviate the data sparsity issue. However, existing cross-domain CTR prediction methods are proposed for static knowledge transfer, ignoring that all domains in real-world RSs are continually time-evolving. In light of this, we present a necessary but novel task named Continual Transfer Learning (CTL), which transfers knowledge from a time-evolving source domain to a time-evolving target domain. In this work, we propose a simple and effective CTL model called CTNet to solve the problem of continual cross-domain CTR prediction at Taobao, and CTNet can be trained efficiently. In particular, CTNet considers an important characteristic of industrial settings: models have been continually well-trained for a very long time. CTNet therefore aims to fully utilize all the well-trained model parameters in both the source domain and the target domain to avoid losing historically acquired knowledge, and it only needs incremental target domain data for training to guarantee efficiency. Extensive offline experiments and online A/B testing at Taobao demonstrate the efficiency and effectiveness of CTNet. CTNet is now deployed online in the recommender systems of Taobao, serving the main traffic of hundreds of millions of active users.

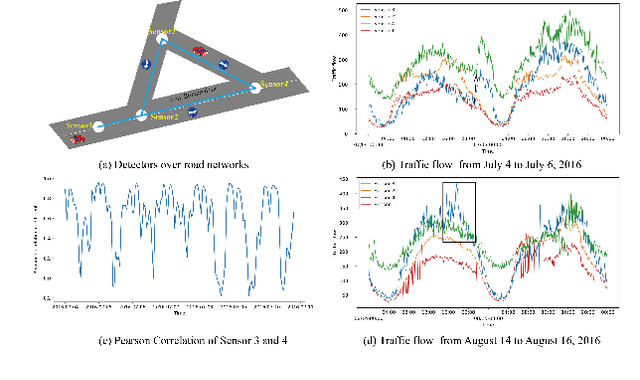

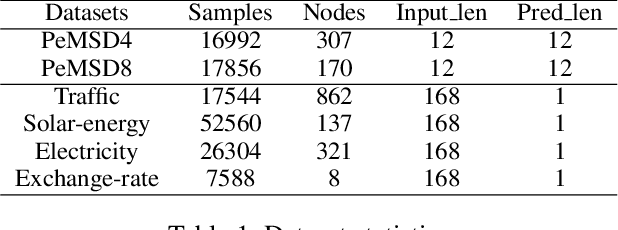

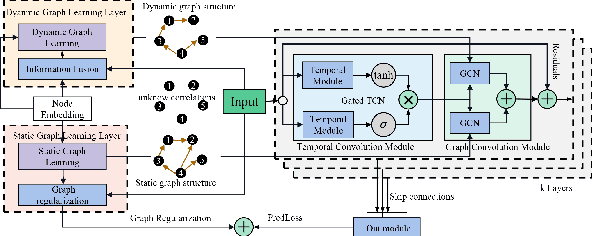

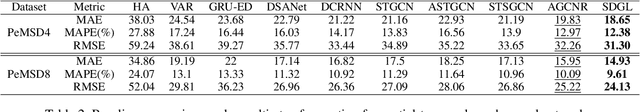

Dynamic Graph Learning-Neural Network for Multivariate Time Series Modeling

Dec 06, 2021

Multivariate time series forecasting is a challenging task because the data involves a mixture of long- and short-term patterns, with dynamic spatio-temporal dependencies among variables. Existing graph neural networks (GNNs) typically model multivariate relationships with a pre-defined spatial graph or a learned fixed adjacency graph. This limits the application of GNNs and fails to handle the above challenges. In this paper, we propose a novel framework, namely the static- and dynamic-graph learning-neural network (SDGL). The model acquires static and dynamic graph matrices from data to model long- and short-term patterns, respectively. The static matrix is developed to capture the fixed long-term association pattern via node embeddings, and we leverage graph regularity to control the quality of the learned static graph. To capture dynamic dependencies among variables, we propose a dynamic graph learning method that generates time-varying matrices based on changing node features and static node embeddings. In this method, we integrate the learned static graph information as an inductive bias to better construct dynamic graphs and local spatio-temporal patterns. Extensive experiments are conducted on two traffic datasets with extra structural information and four time series datasets, which show that our approach achieves state-of-the-art performance on almost all datasets. If the paper is accepted, the source code will be released on GitHub.

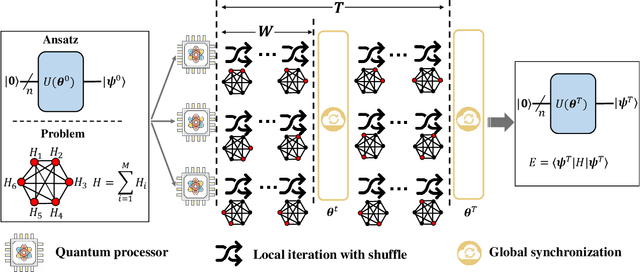

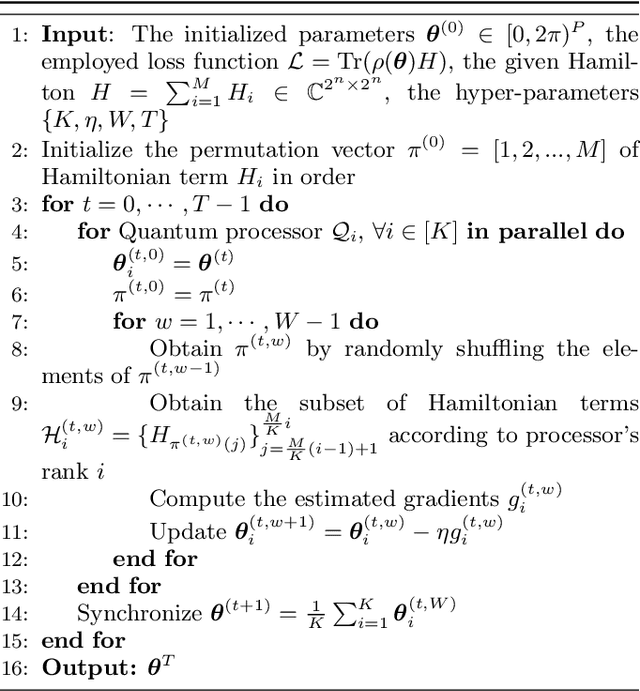

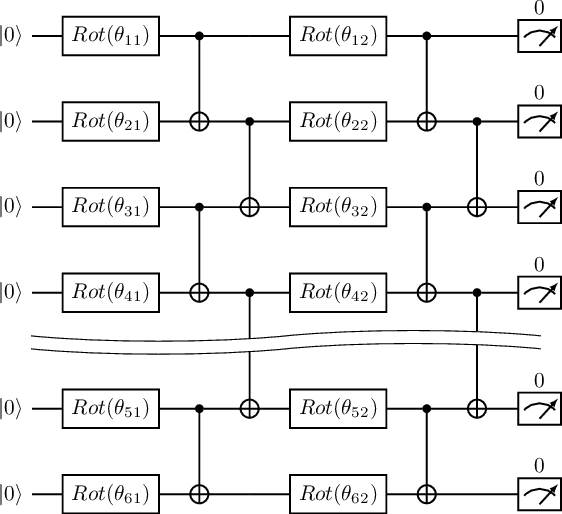

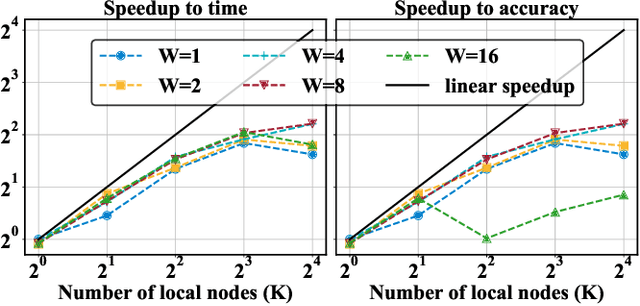

Shuffle-QUDIO: accelerate distributed VQE with trainability enhancement and measurement reduction

Sep 26, 2022

The variational quantum eigensolver (VQE) is a leading strategy that exploits noisy intermediate-scale quantum (NISQ) machines to tackle chemical problems outperforming classical approaches. To gain such computational advantages on large-scale problems, a feasible solution is the QUantum DIstributed Optimization (QUDIO) scheme, which partitions the original problem into $K$ subproblems and allocates them to $K$ quantum machines, followed by parallel optimization. Despite the provable acceleration ratio, the efficiency of QUDIO may be heavily degraded by the synchronization operation. To address this issue, we propose Shuffle-QUDIO, which introduces shuffle operations into the local Hamiltonians during the quantum distributed optimization. Compared with QUDIO, Shuffle-QUDIO significantly reduces the communication frequency among quantum processors and simultaneously achieves better trainability. In particular, we prove that Shuffle-QUDIO enables a faster convergence rate than QUDIO. Extensive numerical experiments are conducted to verify that Shuffle-QUDIO allows both a wall-clock time speedup and low approximation error in the tasks of estimating the ground state energy of molecules. We empirically demonstrate that our proposal can be seamlessly integrated with other acceleration techniques, such as operator grouping, to further improve the efficacy of VQE.
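
The partitioning step described above can be pictured with a small classical sketch: a Hamiltonian written as a list of weighted Pauli terms is shuffled and dealt out to $K$ workers, so each worker sees a different local Hamiltonian in each round. The term representation, the round-robin split, and the function name are assumptions made for illustration; the abstract does not specify how Shuffle-QUDIO performs the shuffle.

```python
import random
from typing import List, Sequence, Tuple

# A Hamiltonian term as (coefficient, Pauli string), e.g. (0.5, "ZZI").
Term = Tuple[float, str]


def shuffle_partition(terms: Sequence[Term], k: int,
                      rng: random.Random) -> List[List[Term]]:
    """Shuffle the terms, then deal them round-robin into k local groups."""
    if k <= 0:
        raise ValueError("k must be positive")
    shuffled = list(terms)  # copy so the caller's list is left untouched
    rng.shuffle(shuffled)
    return [shuffled[i::k] for i in range(k)]


if __name__ == "__main__":
    # Hypothetical 3-qubit Hamiltonian with six Pauli terms.
    hamiltonian = [(0.5, "ZZI"), (0.3, "IZZ"), (-0.2, "XII"),
                   (-0.2, "IXI"), (-0.2, "IIX"), (0.1, "YYI")]
    rng = random.Random(0)
    for round_index in range(2):
        local = shuffle_partition(hamiltonian, k=3, rng=rng)
        print(round_index, local)
```

Every term is assigned to exactly one worker per round, so the local Hamiltonians always sum to the original one; only the grouping changes between rounds.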
