"Time": models, code, and papers

Multi-Modal Temporal Attention Models for Crop Mapping from Satellite Time Series

Dec 14, 2021

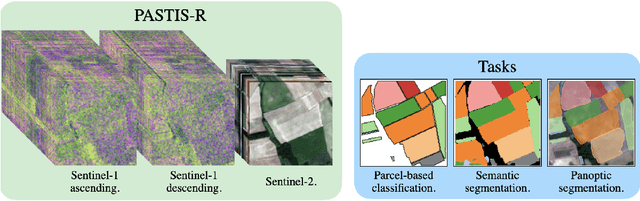

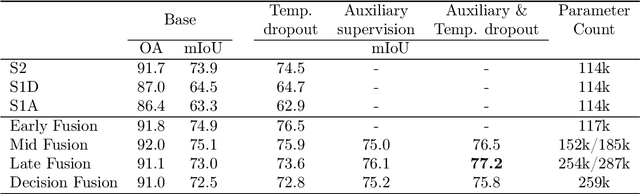

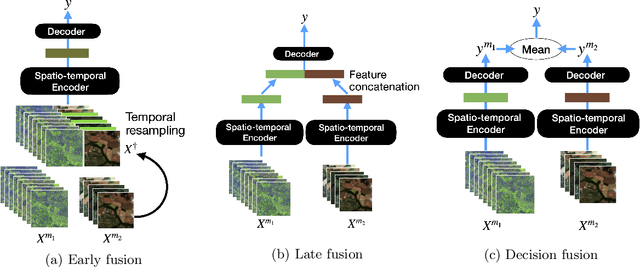

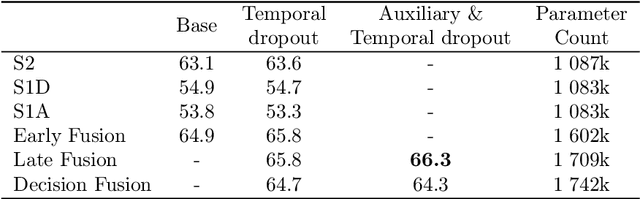

Optical and radar satellite time series are synergetic: optical images contain rich spectral information, while C-band radar captures useful geometrical information and is immune to cloud cover. Motivated by the recent success of temporal attention-based methods across multiple crop mapping tasks, we propose to investigate how these models can be adapted to operate on several modalities. We implement and evaluate multiple fusion schemes, including a novel approach and simple adjustments to the training procedure, significantly improving performance and efficiency with little added complexity. We show that most fusion schemes have advantages and drawbacks, making them relevant for specific settings. We then evaluate the benefit of multimodality across several tasks: parcel classification, pixel-based segmentation, and panoptic parcel segmentation. We show that by leveraging both optical and radar time series, multimodal temporal attention-based models can outmatch single-modality models in terms of performance and resilience to cloud cover. To conduct these experiments, we augment the PASTIS dataset with spatially aligned radar image time series. The resulting dataset, PASTIS-R, constitutes the first large-scale, multimodal, and open-access satellite time series dataset with semantic and instance annotations.
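
As a rough illustration of the fusion schemes mentioned above, the sketch below contrasts two of them. Early fusion concatenates optical and radar features date by date and then applies a single temporal attention pooling. Late fusion applies one attention pooling per modality and concatenates the two embeddings afterwards. It is a minimal NumPy sketch built on our own assumptions: single-head attention with a master query (random here, so untrained), hypothetical channel and date counts, and raw inputs instead of learned per-date embeddings. It is not the paper's architecture.

```python
import numpy as np

def temporal_attention_pool(x, w_key, query):
    """Collapse a (T, D) time series into one D-vector with a single attention head.

    x: per-date features (T, D); w_key: key projection (D, d_k);
    query: master query (d_k,), random here, i.e. untrained.
    """
    keys = x @ w_key                                   # (T, d_k)
    scores = keys @ query / np.sqrt(len(query))        # (T,)
    scores -= scores.max()                             # numerical stability
    weights = np.exp(scores) / np.exp(scores).sum()    # softmax over dates
    return weights @ x, weights                        # (D,), (T,)

def early_fusion(optical, radar, params):
    """Concatenate modalities date by date; this requires a shared date grid."""
    assert optical.shape[0] == radar.shape[0], "early fusion needs aligned dates"
    fused = np.concatenate([optical, radar], axis=1)
    return temporal_attention_pool(fused, params["w_early"], params["q_early"])[0]

def late_fusion(optical, radar, params):
    """Pool each modality over its own dates, then concatenate the embeddings."""
    z_opt, _ = temporal_attention_pool(optical, params["w_opt"], params["q_opt"])
    z_rad, _ = temporal_attention_pool(radar, params["w_rad"], params["q_rad"])
    return np.concatenate([z_opt, z_rad])

rng = np.random.default_rng(0)
d_opt, d_rad, d_k = 10, 3, 8      # hypothetical channel counts and key size
params = {
    "w_early": rng.normal(size=(d_opt + d_rad, d_k)), "q_early": rng.normal(size=d_k),
    "w_opt": rng.normal(size=(d_opt, d_k)), "q_opt": rng.normal(size=d_k),
    "w_rad": rng.normal(size=(d_rad, d_k)), "q_rad": rng.normal(size=d_k),
}
optical = rng.normal(size=(24, d_opt))   # 24 optical dates (hypothetical)
radar = rng.normal(size=(70, d_rad))     # 70 radar dates on a different grid
z_late = late_fusion(optical, radar, params)
```

The code makes one design consequence visible. Early fusion needs both modalities on a shared date grid. Late fusion lets the optical and radar series keep their own acquisition calendars.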

Self-supervised Learning for Heterogeneous Graph via Structure Information based on Metapath

Sep 09, 2022

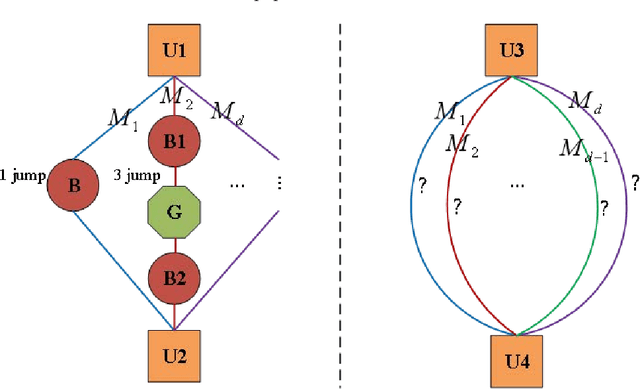

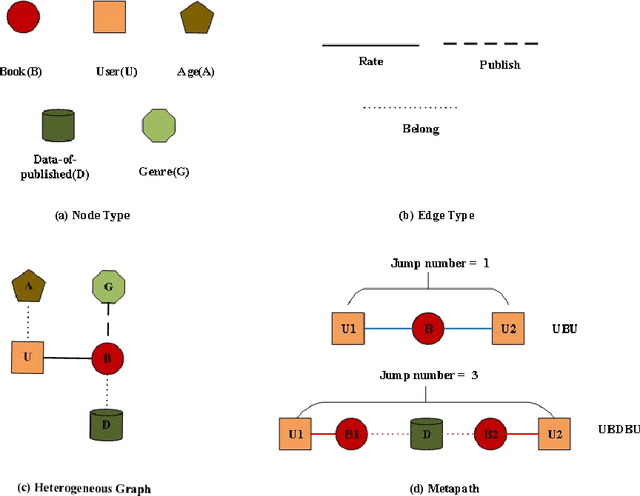

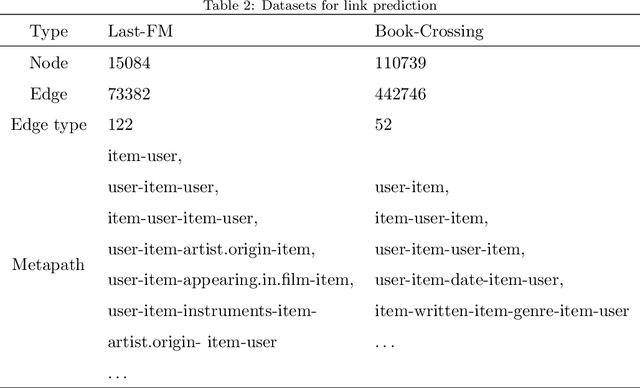

Graph neural networks (GNNs) are the dominant paradigm for modeling and handling graph-structured data by learning universal node representations. Training GNNs traditionally depends on large amounts of labeled data, which are costly and time-consuming to obtain. In some scenarios, such labels are unavailable or impractical to collect. Self-supervised representation learning, which generates labels from the graph-structured data itself, is a promising approach to this problem. Self-supervised learning on heterogeneous graphs is more challenging than on homogeneous graphs, and it has been studied less. In this paper, we propose a SElf-supervised learning method for heterogeneous graphs via Structure Information based on Metapath (SESIM). The proposed model constructs pretext tasks that predict the jump number between nodes in each metapath, which improves the representation ability of the primary task. To predict jump numbers, SESIM generates labels from the data itself and so avoids time-consuming manual labeling. Moreover, predicting the jump number in each metapath effectively exploits graph structure information, an essential property of the relations between nodes. SESIM therefore deepens the model's understanding of graph structure. Finally, we train the primary task and the pretext tasks jointly and use meta-learning to balance the contribution of the pretext tasks to the primary task. Empirical results validate SESIM and show that it improves the representation ability of traditional neural networks on link prediction and node classification tasks.
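
The sketch below shows how jump-number labels can come from the data alone. Every pair of nodes in a metapath instance is labeled with its hop distance along that instance, and a linear pretext head is scored with cross-entropy on those labels. This label definition is our simplified reading of the abstract. The node names, embedding size and linear head are hypothetical, and SESIM's actual pretext architecture and meta-learned weighting are not shown.

```python
from itertools import combinations

import numpy as np

def jump_number_labels(metapath_instance):
    """Self-supervised (u, v, jumps) triples from one metapath instance.

    The label is the hop distance between u and v along the instance,
    so no manual annotation is needed.
    """
    return [(u, v, j - i)
            for (i, u), (j, v) in combinations(enumerate(metapath_instance), 2)]

def pretext_loss(embeddings, triples, weight):
    """Mean cross-entropy of a linear head that predicts the jump number of a node pair."""
    feats = np.stack([np.concatenate([embeddings[u], embeddings[v]]) for u, v, _ in triples])
    labels = np.array([jumps - 1 for _, _, jumps in triples])   # classes 0 .. max_jump - 1
    logits = feats @ weight
    logits -= logits.max(axis=1, keepdims=True)                 # numerical stability
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return -log_probs[np.arange(len(labels)), labels].mean()

# Hypothetical Author-Paper-Author-Paper-Author instance with random 4-d embeddings.
instance = ["a1", "p7", "a3", "p2", "a5"]
triples = jump_number_labels(instance)
rng = np.random.default_rng(0)
embeddings = {node: rng.normal(size=4) for node in instance}
weight = rng.normal(size=(8, 4))          # (2 * embedding dim) -> 4 jump classes
loss = pretext_loss(embeddings, triples, weight)
```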

Using Full-Text Content to Characterize and Identify Best Seller Books

Oct 05, 2022

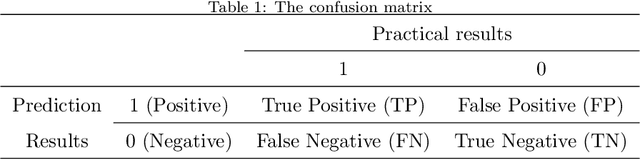

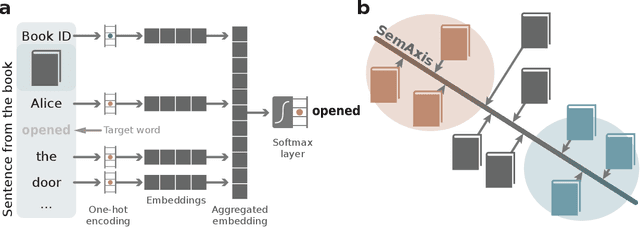

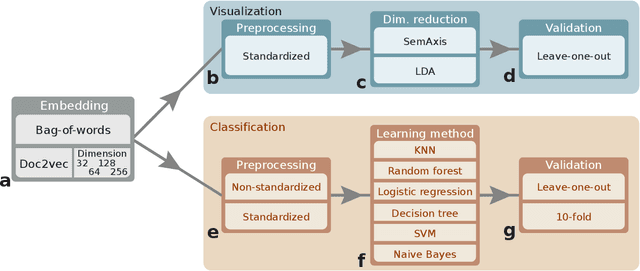

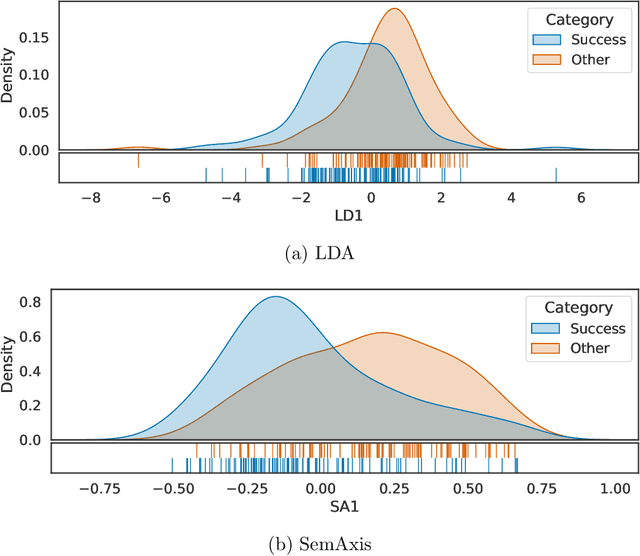

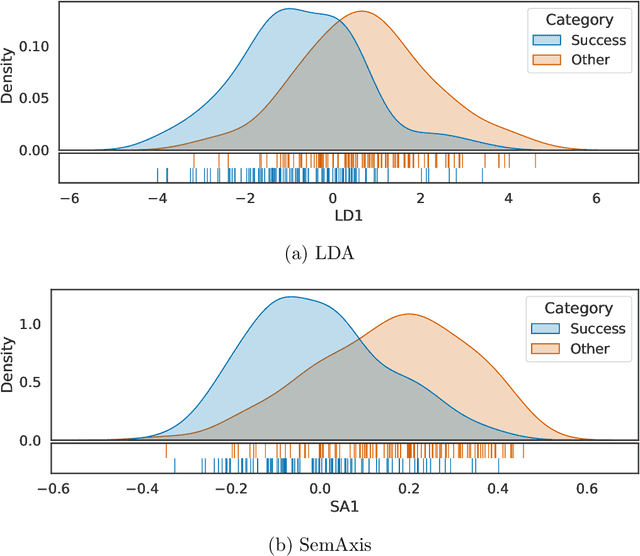

Artistic works can be studied from several perspectives, one being their reception among readers over time. In the present work, we approach this topic from the standpoint of literary works, specifically the task of predicting whether a book will become a best seller. Unlike previous approaches, we focused on the full content of books and considered both visualization and classification tasks. We first used visualization, based on SemAxis and linear discriminant analysis, to explore the structure and properties of the data. Then, to obtain quantitative and more objective results, we employed various classifiers. These approaches were applied to a dataset containing (i) books published from 1895 to 1924 and recognized as best sellers by the *Publishers Weekly Bestseller Lists* and (ii) literary works published in the same period but not mentioned in those lists. The best result came from combining a bag-of-words representation with a logistic regression classifier, which reached an average accuracy of 0.75 under both leave-one-out and 10-fold cross-validation. This outcome suggests that it is infeasible to predict the success of books with high accuracy using only their full text. Nevertheless, our findings provide insights into the factors behind the relative success of a literary work.
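
The sketch below covers the best-performing pipeline named above: a bag-of-words representation fed to logistic regression and scored with leave-one-out cross-validation. The tokenizer, the relative-frequency normalization, plain gradient-descent training with a small L2 penalty and all hyperparameters are our own choices. The toy corpus is invented, and the paper's preprocessing may differ. Replacing the leave-one-out loop with 10 folds gives the paper's other protocol.

```python
import re
from collections import Counter

import numpy as np

def tokenize(text):
    return re.findall(r"[a-z']+", text.lower())

def bag_of_words(docs, vocab=None):
    """Relative word frequencies; the vocabulary comes from the training documents."""
    counts = [Counter(tokenize(d)) for d in docs]
    if vocab is None:
        vocab = sorted(set().union(*counts))
    index = {word: i for i, word in enumerate(vocab)}
    X = np.zeros((len(docs), len(vocab)))
    for row, counter in enumerate(counts):
        for word, n in counter.items():
            if word in index:                  # unseen words are ignored at test time
                X[row, index[word]] = n
    X /= np.maximum(X.sum(axis=1, keepdims=True), 1)   # makes long and short books comparable
    return X, vocab

def fit_logistic_regression(X, y, lr=0.5, steps=2000, l2=1e-3):
    """Batch gradient descent on the L2-regularized logistic loss."""
    w, b = np.zeros(X.shape[1]), 0.0
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-(X @ w + b)))
        w -= lr * (X.T @ (p - y) / len(y) + l2 * w)
        b -= lr * np.mean(p - y)
    return w, b

def leave_one_out_accuracy(docs, y):
    """Hold out each book once, refitting vocabulary and classifier on the rest."""
    hits = 0
    for i in range(len(docs)):
        train_docs = docs[:i] + docs[i + 1:]
        X_train, vocab = bag_of_words(train_docs)
        w, b = fit_logistic_regression(X_train, np.delete(y, i))
        X_test, _ = bag_of_words([docs[i]], vocab)
        predicted = int((X_test @ w + b)[0] > 0)
        hits += int(predicted == y[i])
    return hits / len(docs)

# Invented toy corpus: label 1 = "best seller", 0 = not (illustration only).
docs = ["sea ship storm", "captain sail ship", "storm sea captain", "war ship sail",
        "sea war captain", "heart letter love", "marriage garden ball",
        "love marriage heart", "letter ball garden", "heart love garden"]
y = np.array([1] * 5 + [0] * 5)
accuracy = leave_one_out_accuracy(docs, y)
```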

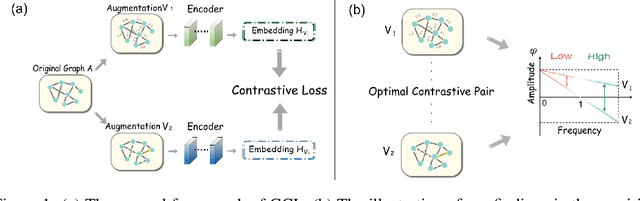

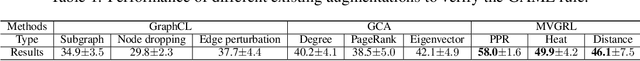

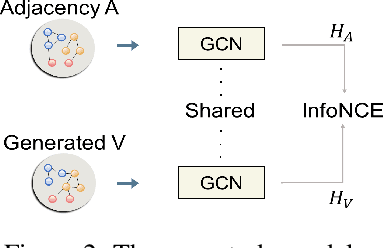

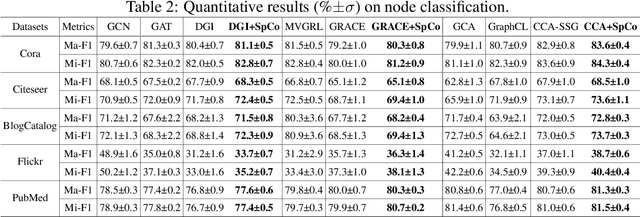

Revisiting Graph Contrastive Learning from the Perspective of Graph Spectrum

Oct 05, 2022

Graph Contrastive Learning (GCL), which learns node representations by augmenting graphs, has attracted considerable attention. Despite the proliferation of graph augmentation strategies, some fundamental questions remain unclear. What information is essentially encoded into the representations learned by GCL? Are there general graph augmentation rules behind the different augmentations? If so, what are they, and what insights can they bring? In this paper, we answer these questions by establishing the connection between GCL and the graph spectrum. Through an experimental investigation in the spectral domain, we first find the General grAph augMEntation (GAME) rule for GCL: the difference between the high-frequency parts of two augmented graphs should be larger than the difference between their low-frequency parts. This rule provides a fundamental principle for revisiting current graph augmentations and designing new, effective ones. We then prove, via a contrastive invariance theorem, that GCL is able to learn invariant information. Combined with the GAME rule, this result shows for the first time that the representations learned by GCL essentially encode low-frequency information, which explains why GCL works. Guided by this rule, we propose a spectral graph contrastive learning module (SpCo), a general, GCL-friendly plug-in. We combine it with different existing GCL models, and extensive experiments demonstrate that it further improves the performance of a wide variety of GCL methods.
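
The sketch below makes the GAME rule concrete. It compares the spectra of two augmented views of a graph and checks whether the high-frequency part changes more than the low-frequency part. It assumes dense, undirected adjacency matrices over the same node set. As a stand-in for the spectral quantities compared in the paper, it uses the sorted eigenvalues of the symmetric normalized Laplacian, where small eigenvalues correspond to low frequencies. The spectrum is split at a hypothetical `low_fraction`, and the paper's exact measure may differ.

```python
import numpy as np

def normalized_laplacian_spectrum(adj):
    """Eigenvalues of L = I - D^{-1/2} A D^{-1/2}, ascending (low to high frequency)."""
    deg = adj.sum(axis=1)
    d_inv_sqrt = np.where(deg > 0, 1.0 / np.sqrt(np.maximum(deg, 1e-12)), 0.0)
    lap = np.eye(len(adj)) - d_inv_sqrt[:, None] * adj * d_inv_sqrt[None, :]
    return np.linalg.eigvalsh(lap)

def game_rule_gaps(adj_a, adj_b, low_fraction=0.5):
    """Mean absolute eigenvalue change in the low- and high-frequency parts of the spectrum."""
    diff = np.abs(normalized_laplacian_spectrum(adj_a) - normalized_laplacian_spectrum(adj_b))
    cut = int(len(diff) * low_fraction)
    return diff[:cut].mean(), diff[cut:].mean()

def satisfies_game_rule(adj_a, adj_b):
    """True when the high-frequency part differs more than the low-frequency part."""
    low_gap, high_gap = game_rule_gaps(adj_a, adj_b)
    return high_gap > low_gap
```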

An Effective Technique for Increasing Capacity and Improving Bandwidth in 5G NB-IoT

Aug 29, 2022

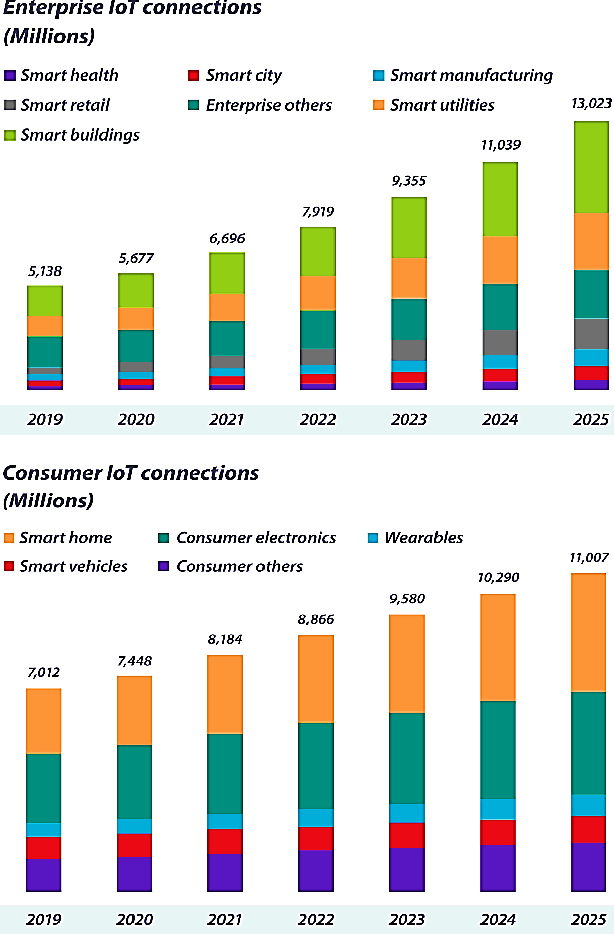

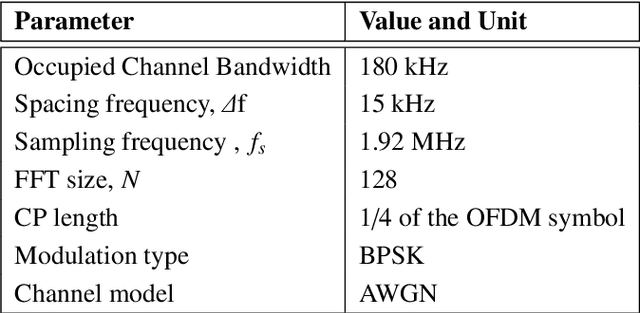

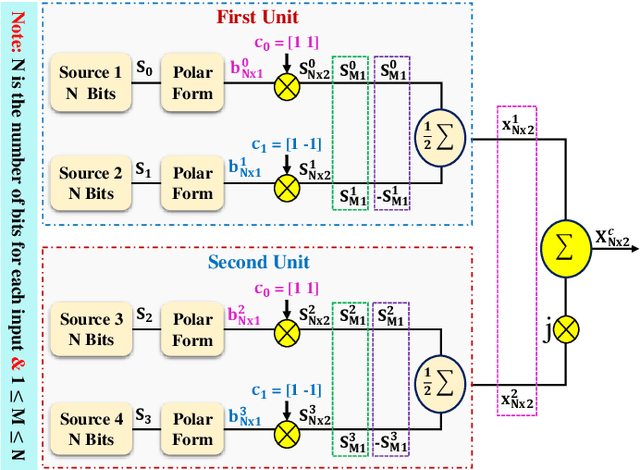

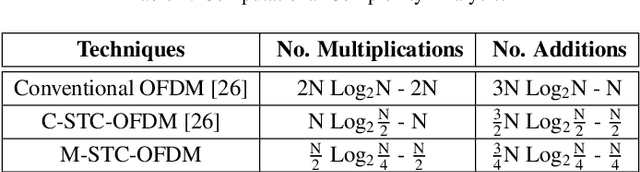

With hundreds of billions of connected IoT devices, researchers need effective resource management approaches that satisfy the quality of service (QoS) requirements of fifth-generation (5G) networks and beyond. Furthermore, wireless spectrum is increasingly scarce as demand for wireless services grows, which calls for imaginative approaches to increase capacity within a limited spectral resource. In this article, the modified symbol time compression (M-STC) technique is proposed to pave the way for 5G networks and beyond by enhancing capacity and throughput. M-STC is a compressed signal waveform technique that increases capacity by compressing the occupied bandwidth. It does so without increasing complexity and without sacrificing data throughput or bit error rate (BER) performance. A comparative analysis is provided between the traditional orthogonal frequency division multiplexing (OFDM) system, OFDM using conventional symbol time compression (C-STC-OFDM), and OFDM using the proposed technique (M-STC-OFDM). Simulation results obtained in MATLAB R2021a show that M-STC-OFDM reduces the time needed for each OFDM signal by 75%. Consequently, M-STC-OFDM reduces bandwidth (BW) by 75% compared with a standard OFDM system (BW_OFDM = 180 kHz vs. BW_M-STC-OFDM = 45 kHz), whereas C-STC-OFDM reduces BW by 50% (BW_C-STC-OFDM = 90 kHz). Furthermore, M-STC-OFDM reduces the peak-to-average power ratio (PAPR) by 2.09 dB compared with standard OFDM and by 1.18 dB compared with C-STC-OFDM, with no BER deterioration. Moreover, compared with a 16QAM-OFDM system, M-STC-OFDM requires a 3.8 dB lower signal-to-noise ratio (SNR) to transmit the same amount of data.
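
PAPR, one of the metrics compared above, is the ratio of the peak to the mean instantaneous power of the time-domain OFDM signal. The sketch below measures it for a baseline OFDM symbol carrying 16-QAM. It does not implement C-STC or M-STC. The 64-subcarrier size and the absence of oversampling and a cyclic prefix are our own simplifications. The sketch also restates the quoted bandwidth figures as fractions of the 180 kHz baseline.

```python
import numpy as np

def papr_db(subcarrier_symbols):
    """PAPR of one OFDM symbol: peak / mean power of the IFFT output, in dB.

    No oversampling or cyclic prefix here; oversampling (commonly 4x) is
    usually needed to catch the true analog peak.
    """
    x = np.fft.ifft(subcarrier_symbols)
    power = np.abs(x) ** 2
    return 10 * np.log10(power.max() / power.mean())

def qam16_symbols(n, rng):
    """Random unnormalized 16-QAM points (levels +-1, +-3 per axis); bit mapping omitted."""
    levels = np.array([-3, -1, 1, 3])
    return rng.choice(levels, n) + 1j * rng.choice(levels, n)

rng = np.random.default_rng(0)
paprs = [papr_db(qam16_symbols(64, rng)) for _ in range(1000)]   # empirical PAPR samples

# Bandwidth figures quoted above, as reductions of the 180 kHz OFDM baseline.
bw_ofdm_khz = 180.0
bw_c_stc_khz = bw_ofdm_khz * (1 - 0.50)   # 50% reduction -> 90 kHz
bw_m_stc_khz = bw_ofdm_khz * (1 - 0.75)   # 75% reduction -> 45 kHz
```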

Approximating the full-field temperature evolution in 3D electronic systems from randomized "Minecraft" systems

Sep 21, 2022

Neural networks used as fast physics simulators have great potential for many engineering design tasks. Widespread application has two prerequisites: an easy-to-use workflow for generating training datasets in reasonable time, and the network's ability to generalize to unseen systems. Most previous works use training systems similar to the evaluation dataset. In contrast, we propose to adapt the type of training system to the network architecture. Specifically, because we apply a fully convolutional network, we design 3D systems of randomly located voxels with randomly assigned physical properties. We test this idea on transient heat diffusion in electronic systems. Training only on random "Minecraft" systems, we obtain good generalization to electronic systems four times as large as the training systems (one-step prediction error of 0.07% vs 0.8%).
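
The sketch below shows how such training data could be generated. Each voxel of a random "Minecraft" system gets a randomly drawn material. One explicit finite-volume step of transient heat diffusion then gives an (input, next-step) pair for one-step prediction. The material table, grid size, adiabatic boundaries and explicit time stepping are all our own illustrative assumptions, not the paper's simulator.

```python
import numpy as np

# Hypothetical material table: (thermal conductivity k, volumetric heat capacity rho*c)
# in arbitrary nondimensional units, chosen only for illustration.
MATERIALS = np.array([[1.0, 1.0], [20.0, 2.0], [0.2, 0.5]])

def random_voxel_system(shape, rng):
    """'Minecraft'-style system: each voxel gets a randomly drawn material."""
    idx = rng.integers(len(MATERIALS), size=shape)
    return MATERIALS[idx, 0], MATERIALS[idx, 1]

def heat_step(T, k, rho_c, dt, dx=1.0):
    """One explicit step of rho*c dT/dt = div(k grad T) with adiabatic (zero-flux) walls."""
    net_flux = np.zeros_like(T)
    for axis in range(T.ndim):
        lo = [slice(None)] * T.ndim
        hi = [slice(None)] * T.ndim
        lo[axis], hi[axis] = slice(0, -1), slice(1, None)
        lo, hi = tuple(lo), tuple(hi)
        k_face = 2 * k[lo] * k[hi] / (k[lo] + k[hi])   # harmonic mean across each face
        q = k_face * (T[hi] - T[lo]) / dx**2           # heat flowing from hi into lo
        net_flux[lo] += q
        net_flux[hi] -= q
    return T + dt * net_flux / rho_c

rng = np.random.default_rng(0)
k, rho_c = random_voxel_system((16, 16, 16), rng)
T0 = rng.uniform(20.0, 80.0, size=k.shape)            # random initial temperature field
dx = 1.0
# Sufficient explicit-scheme stability limit with 6 neighbours per voxel:
# dt <= min(rho_c) * dx^2 / (6 * max(k)).
dt = 0.9 * rho_c.min() * dx**2 / (6 * k.max())
T1 = heat_step(T0, k, rho_c, dt, dx)                  # (T0, T1) would form one training pair
```

Because every face flux is added to one voxel and subtracted from its neighbour, total heat is conserved exactly in this sketch. The stability limit also keeps each update a convex combination of neighbouring temperatures.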

One-Shot Learning of Stochastic Differential Equations with Computational Graph Completion

Sep 24, 2022

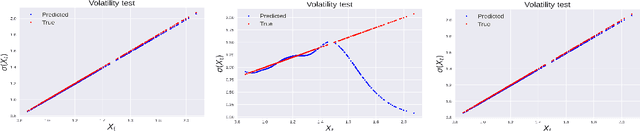

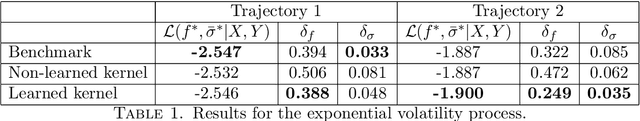

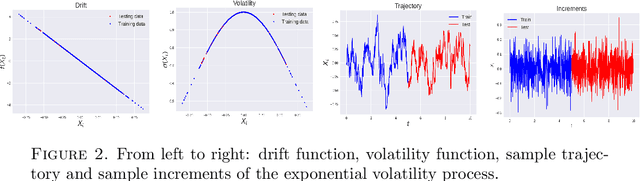

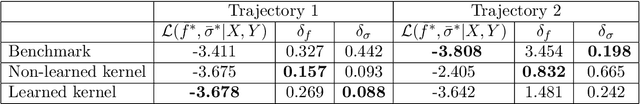

We consider the problem of learning Stochastic Differential Equations of the form $dX_t = f(X_t)\,dt + \sigma(X_t)\,dW_t$ from one sample trajectory. This problem is more challenging than learning deterministic dynamical systems, because one sample trajectory provides only indirect information on the unknown functions $f$ and $\sigma$ and the stochastic process $dW_t$, which represent the drift, the diffusion and the stochastic forcing terms, respectively. We propose a simple kernel-based solution to this problem that can be decomposed as follows: (1) Represent the time-increment map $X_t \rightarrow X_{t+dt}$ as a Computational Graph in which $f$, $\sigma$ and $dW_t$ appear as unknown functions and random variables. (2) Complete the graph (approximate the unknown functions and random variables) via Maximum a Posteriori Estimation (given the data) with Gaussian Process (GP) priors on the unknown functions. (3) Learn the covariance functions (kernels) of the GP priors from data with randomized cross-validation. Numerical experiments illustrate the efficacy, robustness, and scope of our method.
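
Steps (1) and (2) can be illustrated, in a much simplified form, for a scalar SDE. The time-increment map is written as the Euler-Maruyama update $X_{t+dt} = X_t + f(X_t)\,dt + \sigma(X_t)\,\Delta W_t$. The drift is then recovered as the GP posterior mean (equivalently, kernel ridge regression) on the scaled increments. Unlike the paper, this sketch assumes a constant, known diffusion. It does not estimate $\sigma$ or the noise path, and it fixes the RBF lengthscale instead of learning it by randomized cross-validation.

```python
import numpy as np

def euler_maruyama(f, sigma, x0, dt, n_steps, rng):
    """Sample one path of dX_t = f(X_t) dt + sigma(X_t) dW_t via the time-increment map."""
    x = np.empty(n_steps + 1)
    x[0] = x0
    for n in range(n_steps):
        dw = np.sqrt(dt) * rng.standard_normal()      # Brownian increment ~ N(0, dt)
        x[n + 1] = x[n] + f(x[n]) * dt + sigma(x[n]) * dw
    return x

def rbf_kernel(a, b, lengthscale):
    return np.exp(-0.5 * (a[:, None] - b[None, :]) ** 2 / lengthscale**2)

def drift_posterior_mean(path, dt, noise_sd, x_query, lengthscale=0.3, jitter=1e-2):
    """GP posterior mean of f, treating (X_{t+dt} - X_t) / dt as noisy observations of f(X_t).

    Assumes a constant, known diffusion noise_sd and a fixed RBF lengthscale.
    """
    inputs = path[:-1]
    targets = np.diff(path) / dt                      # f(X_t) + noise of variance noise_sd^2 / dt
    noise_var = noise_sd**2 / dt + jitter
    gram = rbf_kernel(inputs, inputs, lengthscale)
    alpha = np.linalg.solve(gram + noise_var * np.eye(len(inputs)), targets)
    return rbf_kernel(np.atleast_1d(x_query), inputs, lengthscale) @ alpha

# Ornstein-Uhlenbeck example: f(x) = -x, constant sigma = 0.5, one trajectory.
rng = np.random.default_rng(0)
path = euler_maruyama(lambda x: -x, lambda x: 0.5, x0=1.0, dt=0.01, n_steps=1000, rng=rng)
f_hat = drift_posterior_mean(path, dt=0.01, noise_sd=0.5, x_query=np.array([-0.5, 0.0, 0.5]))
```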

LEADER: Learning Attention over Driving Behaviors for Planning under Uncertainty

Sep 23, 2022

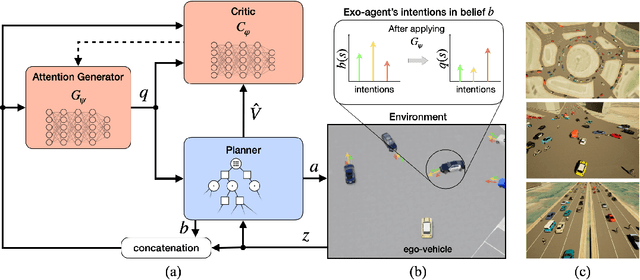

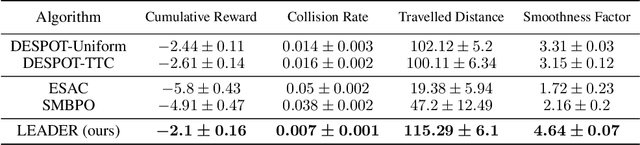

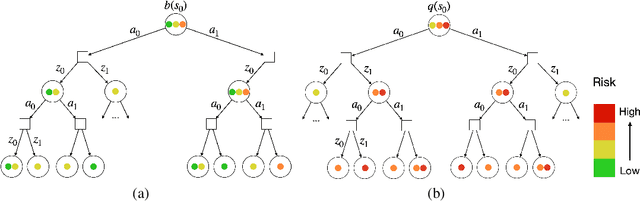

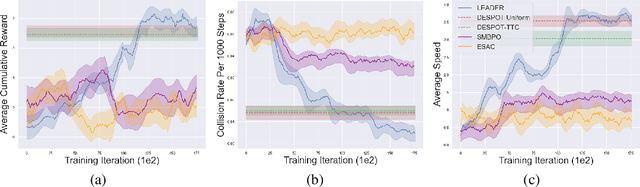

Uncertainty in human behavior poses a significant challenge to autonomous driving in crowded urban environments. Partially observable Markov decision processes (POMDPs) offer a principled framework for planning under uncertainty, often leveraging Monte Carlo sampling to achieve online performance on complex tasks. However, sampling also raises safety concerns, because it can miss critical events. To address this, we propose a new algorithm, LEarning Attention over Driving bEhavioRs (LEADER), which learns to attend to critical human behaviors during planning. LEADER learns a neural network generator that provides attention over human behaviors in real time. It integrates this attention into a belief-space planner and uses importance sampling to bias reasoning toward critical events. To train the algorithm, we let the attention generator and the planner play a min-max game. By solving this min-max game, LEADER learns to perform risk-aware planning without human labeling.
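
The importance-sampling step can be illustrated on its own. Behaviors are sampled from an attention distribution that over-represents rare, critical events. Each sample is then re-weighted by prior/attention so that the expected-cost estimate stays unbiased. The three behaviors, their prior probabilities, their costs and the attention distribution are all hypothetical. The attention is also fixed here, rather than produced by LEADER's learned generator and min-max training.

```python
import numpy as np

def importance_estimate(costs, prior, attention, n_samples, rng):
    """Estimate E_prior[cost] while sampling behaviors from the attention distribution.

    Re-weighting by prior / attention keeps the estimate unbiased even though
    rare, critical behaviors are drawn more often.
    """
    idx = rng.choice(len(prior), size=n_samples, p=attention)
    weights = prior[idx] / attention[idx]
    return np.mean(weights * costs[idx])

# Hypothetical pedestrian behaviors: keep walking, slow down, step into the road.
prior = np.array([0.90, 0.09, 0.01])
costs = np.array([0.0, 1.0, 100.0])          # planner cost if the behavior happens
attention = np.array([0.40, 0.30, 0.30])     # up-weights the rare, costly behavior
rng = np.random.default_rng(0)
estimate = importance_estimate(costs, prior, attention, 100_000, rng)
exact = prior @ costs                         # 0.09 * 1 + 0.01 * 100
```

For these numbers, the per-sample variance of the weighted cost is about 2.2. Sampling directly from the prior gives about 98.9. This gap is why biasing samples toward critical events helps when only a few samples fit in the planning budget.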

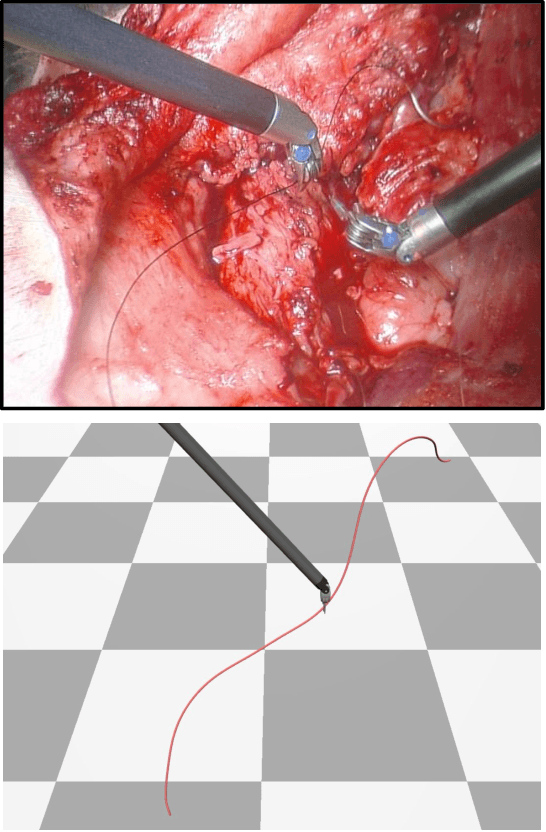

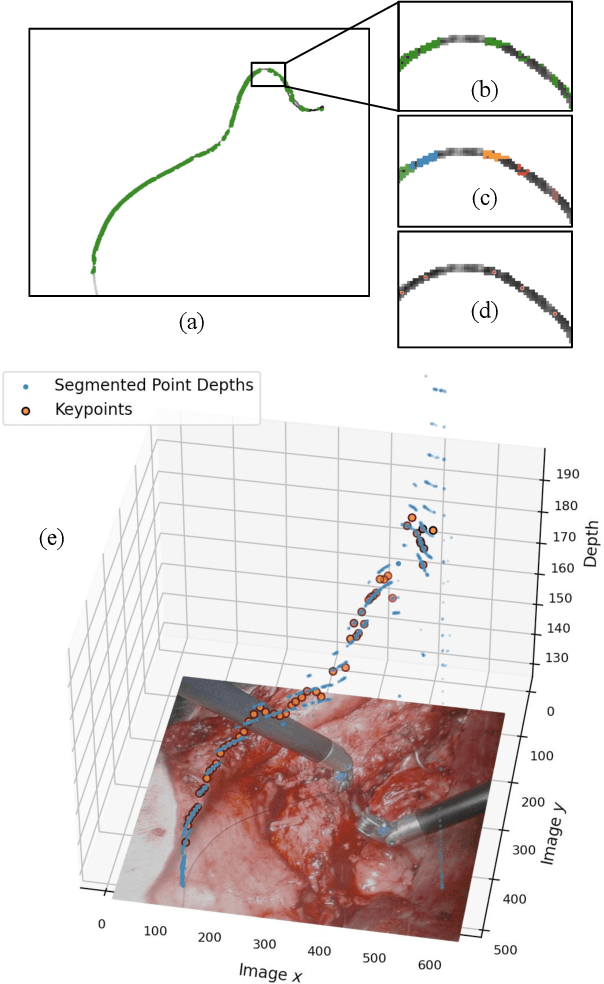

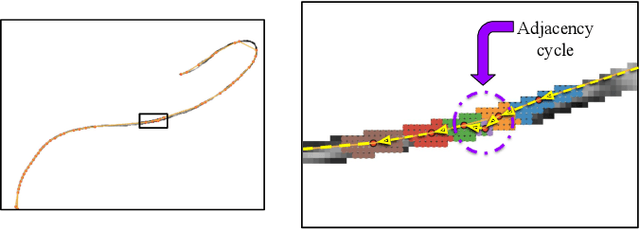

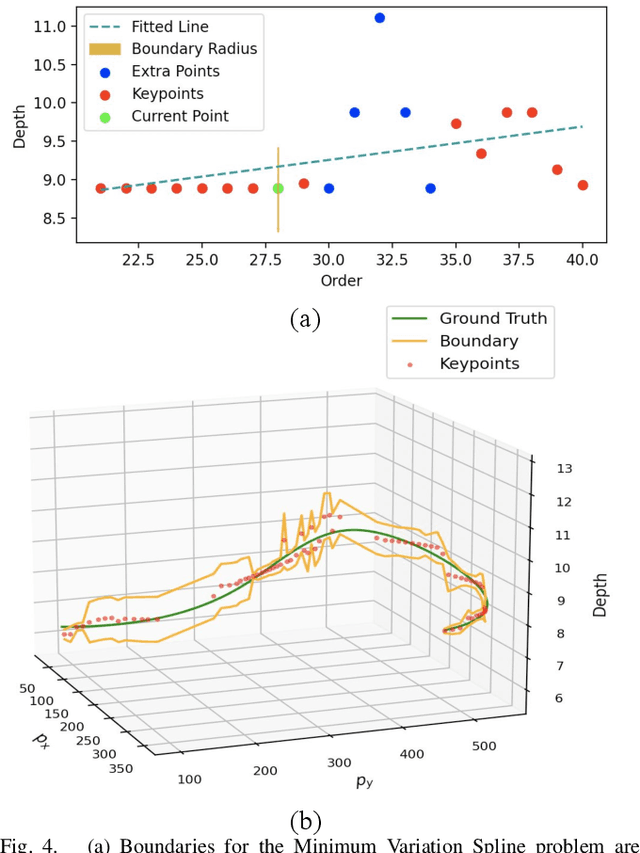

Suture Thread Spline Reconstruction from Endoscopic Images for Robotic Surgery with Reliability-driven Keypoint Detection

Sep 27, 2022

Automating suture manipulation and delivery during robotic surgery is a prominent problem at the frontier of surgical robotics. Automating this task can significantly reduce surgeons' fatigue during teleoperated surgery and let them spend more time on higher-level clinical decision making. Autonomous suturing and suture manipulation in the real world require accurate localization and reconstruction of the suture thread, that is, creating a 3D shape representation of the thread from pairs of 2D stereo surgical camera images. This problem is very challenging because threads occupy very few pixels and are sensitive to lighting and specular reflection. We present a suture thread reconstruction method that uses reliable keypoints and a Minimum Variation Spline (MVS) smoothing optimization to construct a 3D centerline from a segmented surgical image pair. The method performs comparably to previous suture thread reconstruction work, with the possible benefit of more accurate grasping point estimation. Our code and datasets will be available at: https://github.com/ucsdarclab/thread-reconstruction.
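
The geometry behind turning matched keypoints into a 3D centerline can be sketched in two steps. Keypoints matched between the left and right images of a rectified stereo pair are first triangulated with the standard pinhole relation $Z = fB/d$. The resulting 3D points are then smoothed. As a plainly named substitute for the paper's Minimum Variation Spline, this sketch uses a discrete second-difference (Whittaker-style) smoother. The camera parameters and keypoints are hypothetical, and the reliability-driven keypoint detection is not shown.

```python
import numpy as np

def triangulate_rectified(u_left, v, disparity, focal_px, baseline, cx, cy):
    """Back-project matched keypoints of a rectified stereo pair to 3D camera coordinates."""
    z = focal_px * baseline / disparity
    x = (u_left - cx) * z / focal_px
    y = (v - cy) * z / focal_px
    return np.stack([x, y, z], axis=1)

def smooth_centerline(points, lam=10.0):
    """Minimize ||P - S||^2 + lam * ||D2 S||^2 per coordinate (second-difference penalty)."""
    n = len(points)
    d2 = np.diff(np.eye(n), n=2, axis=0)            # (n - 2, n) second-difference operator
    return np.linalg.solve(np.eye(n) + lam * d2.T @ d2, points)

# Hypothetical camera: 800 px focal length, 5 mm baseline, principal point (320, 240).
u = np.array([400.0, 410.0, 421.0, 430.0])
v = np.array([80.0, 90.0, 99.0, 110.0])
d = np.array([40.0, 40.5, 41.2, 41.5])
centerline = smooth_centerline(triangulate_rectified(u, v, d, 800.0, 0.005, 320.0, 240.0))
```

The penalty vanishes on straight segments, so the smoother only removes bending introduced by keypoint noise.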

In-situ animal behavior classification using knowledge distillation and fixed-point quantization

Sep 09, 2022

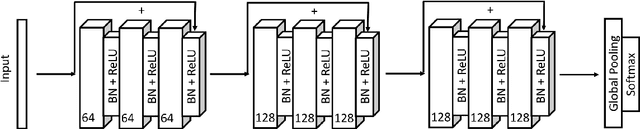

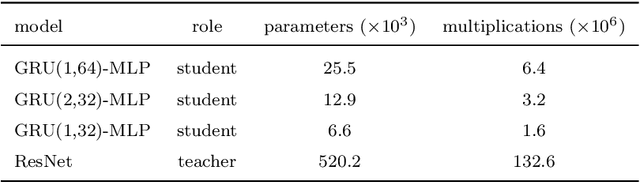

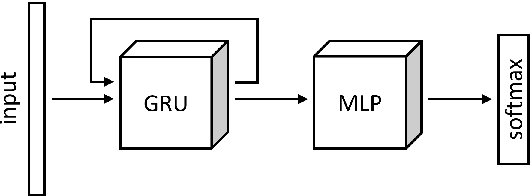

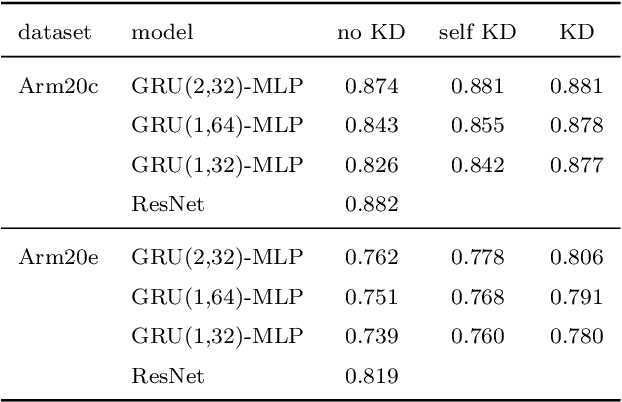

We explore the use of knowledge distillation (KD) for learning compact and accurate models that enable classification of animal behavior from accelerometry data on wearable devices. To this end, we take a deep and complex convolutional neural network, known as residual neural network (ResNet), as the teacher model. ResNet is specifically designed for multivariate time-series classification. We use ResNet to distil the knowledge of animal behavior classification datasets into soft labels, which consist of the predicted pseudo-probabilities of every class for each datapoint. We then use the soft labels to train our significantly less complex student models, which are based on the gated recurrent unit (GRU) and multilayer perceptron (MLP). The evaluation results using two real-world animal behavior classification datasets show that the classification accuracy of the student GRU-MLP models improves appreciably through KD, approaching that of the teacher ResNet model. To further reduce the computational and memory requirements of performing inference using the student models trained via KD, we utilize dynamic fixed-point quantization through an appropriate modification of the computational graphs of the models. We implement both unquantized and quantized versions of the developed KD-based models on the embedded systems of our purpose-built collar and ear tag devices to classify animal behavior in situ and in real time. The results corroborate the effectiveness of KD and quantization in improving the inference performance in terms of both classification accuracy and computational and memory efficiency.
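
The two compression steps above can be sketched separately. The first is a temperature-softened distillation loss that trains a student on the teacher's soft labels. The second is per-tensor dynamic fixed-point quantization, in which each tensor gets its own number of fractional bits based on its value range. Both are minimal NumPy sketches under assumptions we chose ourselves: the temperature, the T² scaling (a common convention from Hinton et al.'s formulation), the 8-bit word length and round-to-nearest. None of these details is confirmed by the abstract, and the logits and weights are random placeholders.

```python
import numpy as np

def log_softmax(z, temperature=1.0):
    z = z / temperature
    z = z - z.max(axis=-1, keepdims=True)           # numerical stability
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))

def kd_loss(student_logits, teacher_logits, temperature=4.0):
    """Cross-entropy from the teacher's soft labels to the student, at temperature T.

    Multiplying by T**2 keeps gradient magnitudes comparable across temperatures.
    """
    soft_labels = np.exp(log_softmax(teacher_logits, temperature))
    log_q = log_softmax(student_logits, temperature)
    return -(soft_labels * log_q).sum(axis=-1).mean() * temperature**2

def dynamic_fixed_point(x, word_bits=8):
    """Round x to signed word_bits-bit fixed point with per-tensor fractional bits.

    Integer bits follow the tensor's largest magnitude, so tensors with small
    values keep more fractional precision.
    """
    max_abs = float(np.abs(x).max())
    int_bits = int(np.floor(np.log2(max_abs))) + 1 if max_abs > 0 else 0
    frac_bits = word_bits - 1 - int_bits            # one bit is reserved for the sign
    scale = 2.0 ** frac_bits
    lo, hi = -(2 ** (word_bits - 1)), 2 ** (word_bits - 1) - 1
    codes = np.clip(np.round(x * scale), lo, hi)    # integer codes stored on the device
    return codes / scale, frac_bits

rng = np.random.default_rng(0)
teacher_logits = rng.normal(size=(32, 6))           # hypothetical: 32 samples, 6 behavior classes
student_logits = rng.normal(size=(32, 6))
loss = kd_loss(student_logits, teacher_logits)
weights = rng.normal(scale=0.3, size=(64, 16))      # hypothetical student layer weights
weights_q, frac_bits = dynamic_fixed_point(weights, word_bits=8)
```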
