"Time": models, code, and papers

Games of Artificial Intelligence: A Continuous-Time Approach

Feb 12, 2022

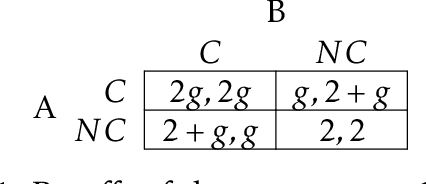

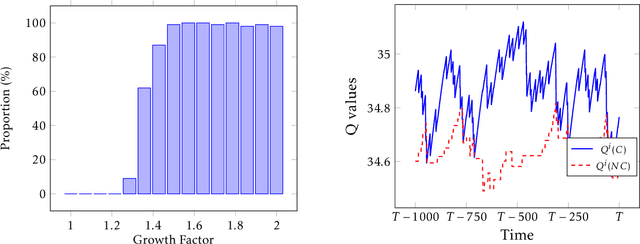

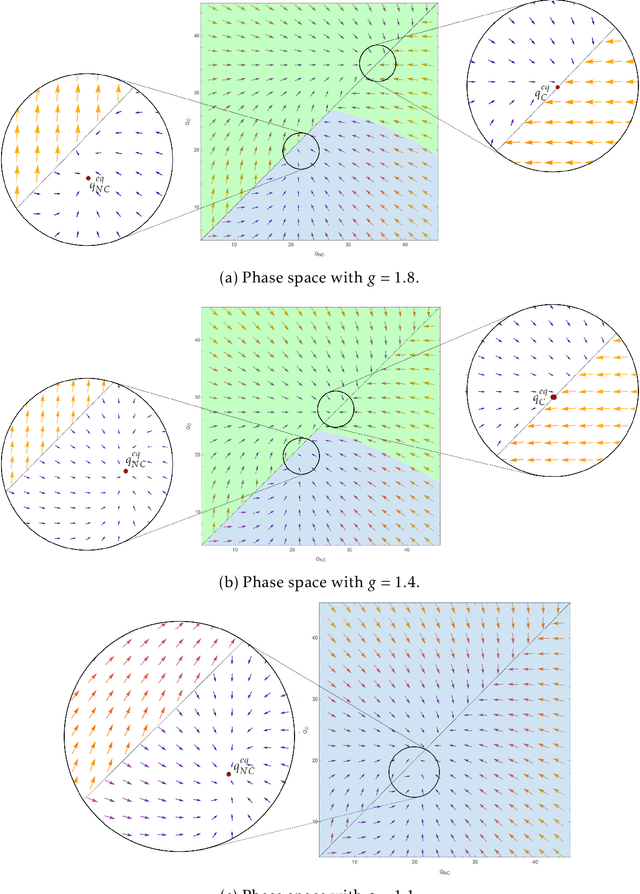

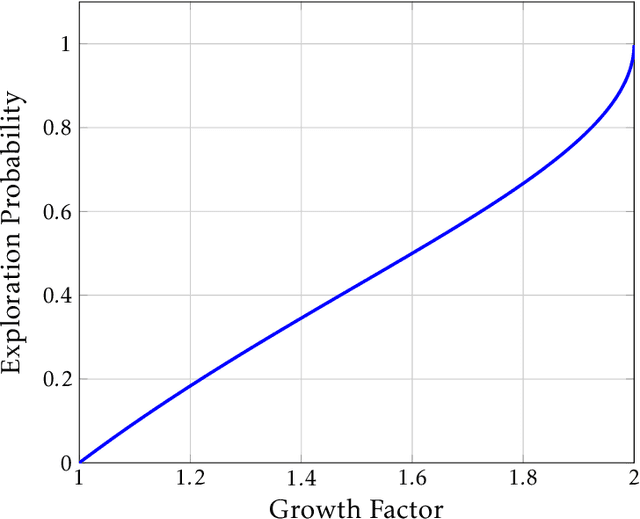

This paper studies the strategic interaction of algorithms in economic games. We analyze games where learning algorithms play against each other while searching for the best strategy. We first establish a fluid approximation technique that enables us to characterize the learning outcomes in continuous time. This tool allows us to identify the equilibria of games played by Artificial Intelligence algorithms and perform comparative statics analysis. Thus, our results bridge a gap between traditional learning theory and applied models, allowing quantitative analysis of traditionally experimental systems. We describe the outcomes of a social dilemma, and we provide analytical guidance for the design of pricing algorithms in a Bertrand game. We uncover a new phenomenon, the coordination bias, which explains how algorithms may fail to learn dominant strategies.

Centroid Distance Keypoint Detector for Colored Point Clouds

Oct 04, 2022

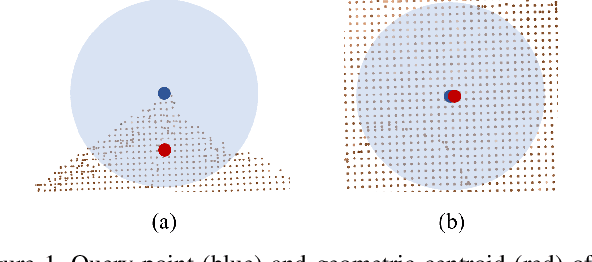

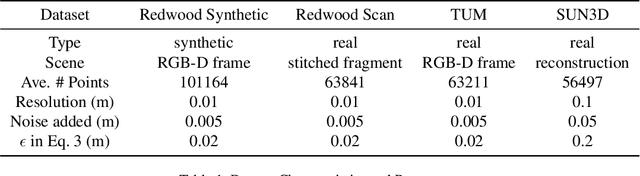

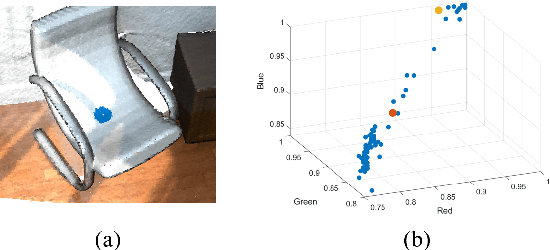

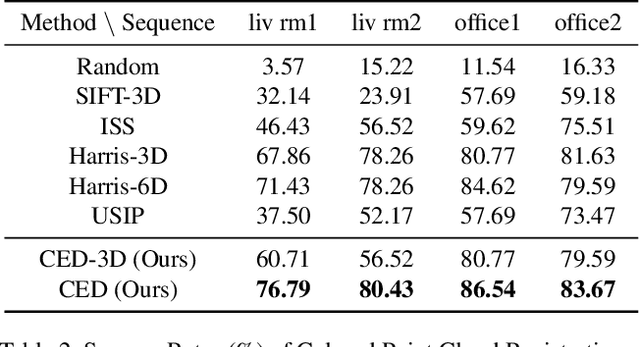

Keypoint detection serves as the basis for many computer vision and robotics applications. Despite the fact that colored point clouds can be readily obtained, most existing keypoint detectors extract only geometry-salient keypoints, which can impede the overall performance of systems that intend to (or have the potential to) leverage color information. To promote advances in such systems, we propose an efficient multi-modal keypoint detector that can extract both geometry-salient and color-salient keypoints in colored point clouds. The proposed CEntroid Distance (CED) keypoint detector comprises an intuitive and effective saliency measure, the centroid distance, that can be used in both 3D space and color space, and a multi-modal non-maximum suppression algorithm that can select keypoints with high saliency in two or more modalities. The proposed saliency measure leverages directly the distribution of points in a local neighborhood and does not require normal estimation or eigenvalue decomposition. We evaluate the proposed method in terms of repeatability and computational efficiency (i.e. running time) against state-of-the-art keypoint detectors on both synthetic and real-world datasets. Results demonstrate that our proposed CED keypoint detector requires minimal computational time while attaining high repeatability. To showcase one of the potential applications of the proposed method, we further investigate the task of colored point cloud registration. Results suggest that our proposed CED detector outperforms state-of-the-art handcrafted and learning-based keypoint detectors in the evaluated scenes. The C++ implementation of the proposed method is made publicly available at https://github.com/UCR-Robotics/CED_Detector.
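The centroid distance idea can be illustrated with a minimal sketch. It assumes that a point's saliency is the distance between the point and the centroid of its radius neighbors, with neighbors found in 3D space and the distance measured either on coordinates or on colors. The function name, the brute-force neighbor search, and the `radius` parameter are illustrative assumptions, not the released C++ implementation.

```python
import numpy as np

def centroid_distance_saliency(coords, features=None, radius=1.0):
    """Hypothetical helper: distance from each point to its neighborhood centroid.

    coords:   (N, 3) xyz positions, used to find neighbors.
    features: (N, F) values in which the distance is measured
              (defaults to coords; pass RGB values for a color-space measure).
    """
    coords = np.asarray(coords, dtype=float)
    feats = coords if features is None else np.asarray(features, dtype=float)
    # Brute-force pairwise distances; adequate for small clouds only.
    dists = np.linalg.norm(coords[:, None, :] - coords[None, :, :], axis=2)
    saliency = np.empty(len(coords))
    for i in range(len(coords)):
        mask = dists[i] <= radius  # neighborhood includes the point itself
        saliency[i] = np.linalg.norm(feats[i] - feats[mask].mean(axis=0))
    return saliency
```

In this sketch, a point in the middle of a uniformly sampled region sits at its neighborhood centroid and scores near zero, while a point at an edge or a color boundary scores higher. No normals or eigenvalue decompositions are needed.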

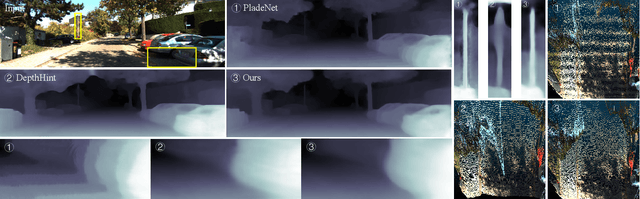

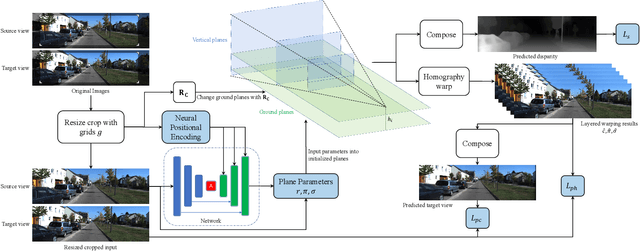

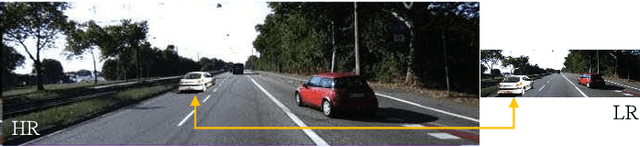

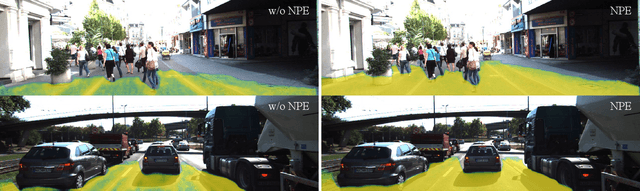

PlaneDepth: Plane-Based Self-Supervised Monocular Depth Estimation

Oct 04, 2022

Self-supervised monocular depth estimation refers to training a monocular depth estimation (MDE) network using only RGB images to overcome the difficulty of collecting dense ground truth depth. Many previous works addressed this problem using depth classification or depth regression. However, depth regression tends to fall into local minima due to the bilinear interpolation search on the target view. Depth classification overcomes this problem using pre-divided depth bins, but those depth candidates lead to discontinuities in the final depth result, and using the same probability for weighted summation of color and depth is ambiguous. To overcome these limitations, we use some predefined planes that are parallel to the ground, allowing us to automatically segment the ground and predict continuous depth for it. We further model depth as a mixture Laplace distribution, which provides a more certain objective for optimization. Previous works have shown that MDE networks only use the vertical image position of objects to estimate the depth and ignore relative sizes. We address this problem for the first time in both stereo and monocular training using resize cropping data augmentation. Based on our analysis of resize cropping, we combine it with our plane definition and improve our training strategy so that the network can learn the relationship between depth and both the vertical image position and relative size of objects. We further combine the self-distillation stage with post-processing to provide more accurate supervision and save extra time in post-processing. We conduct extensive experiments to demonstrate the effectiveness of our analysis and improvements.
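For reference, a $K$-component mixture of Laplace distributions over depth $d$ has the general form below. The symbols $\pi_k$, $\mu_k$, and $b_k$ (mixture weights, locations, and scales) are generic notation assumed here and are not necessarily the paper's parameterization.

$$
p(d) = \sum_{k=1}^{K} \pi_k \, \frac{1}{2 b_k} \exp\!\left(-\frac{|d - \mu_k|}{b_k}\right), \qquad \sum_{k=1}^{K} \pi_k = 1, \quad \pi_k \ge 0, \quad b_k > 0
$$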

Development of an Ultrahigh Bandwidth Software-defined Radio Platform

Aug 25, 2022

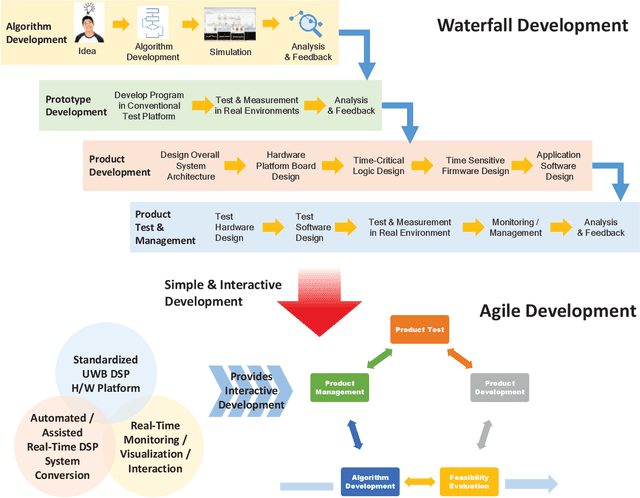

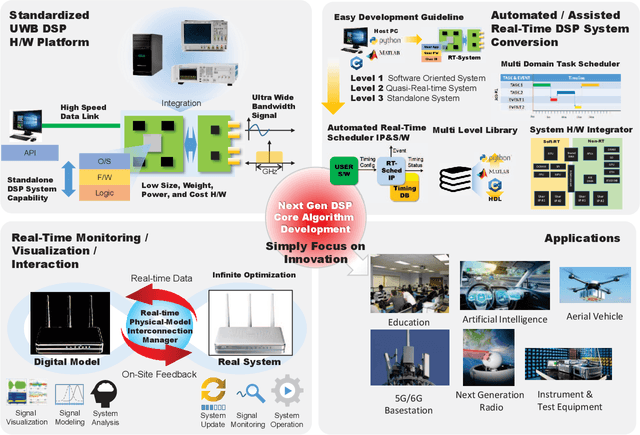

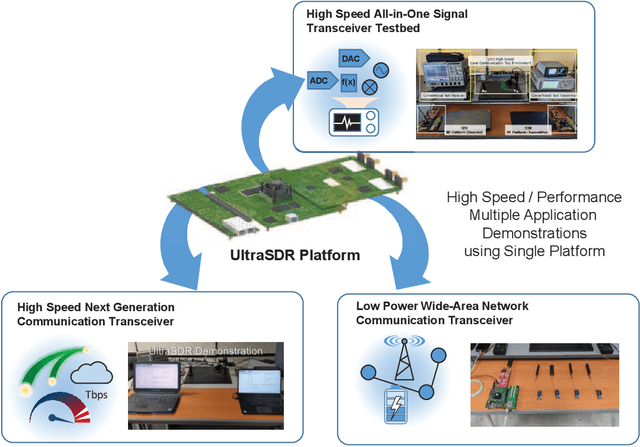

For the development of new digital signal processing systems and services, the rapid, easy, and convenient prototyping of ideas and the rapid time-to-market of products are becoming important with advances in technology. Conventionally, for the development stage, particularly when confirming the feasibility or performance of a new system or service, an idea is first confirmed through a computer-based software simulation after developing an accurate model of the operating environment. Next, this idea is validated and tested in the real operating environment. The new systems or services and their operating environments are becoming increasingly complicated. Hence, their development processes are also becoming more complex, cost- and time-intensive tasks that require engineers with skill and professional knowledge and experience. Furthermore, to ensure fast time-to-market, all the development processes, encompassing (i) algorithm development, (ii) product prototyping, and (iii) final product development, must be closely linked so that they can be completed quickly. In this context, the aim of this paper is to propose an ultrahigh bandwidth software-defined radio platform that can prototype a quasi-real-time operating system without requiring the developer to have sophisticated hardware or software expertise. This platform allows the realization of a software-implemented digital signal processing system in minimal time, with minimal effort, and without the need for a host computer.

Opportunities for Intelligent Reflecting Surfaces in 6G-Empowered V2X Communications

Oct 02, 2022

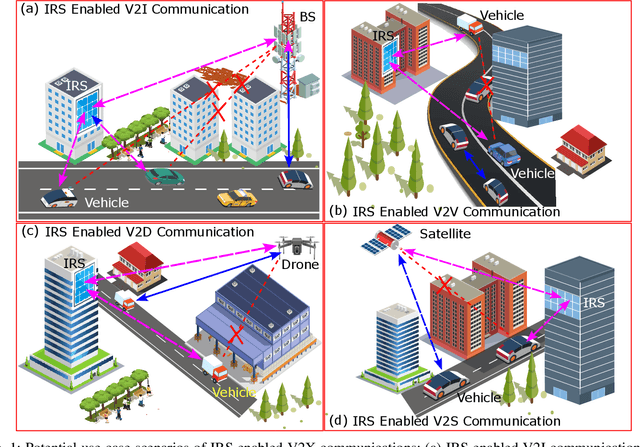

This paper first introduces 6G-empowered V2X communications and IRS technology. Then it discusses different use case scenarios of IRS-enabled V2X communications and reports recent advances in the existing literature. Next, we focus our attention on the scenario of vehicular edge computing involving IRS-enabled drone communications in order to reduce vehicle computational time via optimal computational and communication resource allocation. Finally, this paper highlights current challenges and discusses future perspectives of IRS-enabled V2X communications in order to improve current work and spark new ideas.

Efficient Noise Filtration of Images by Low-Rank Singular Vector Approximations of Geodesics' Gramian Matrix

Sep 27, 2022

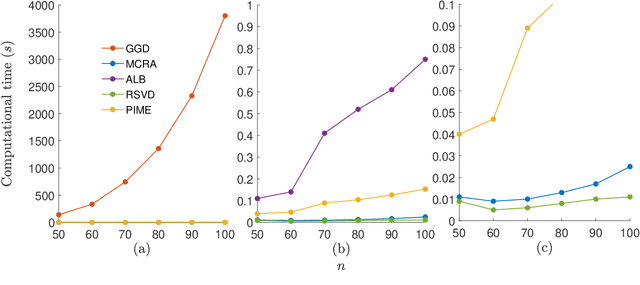

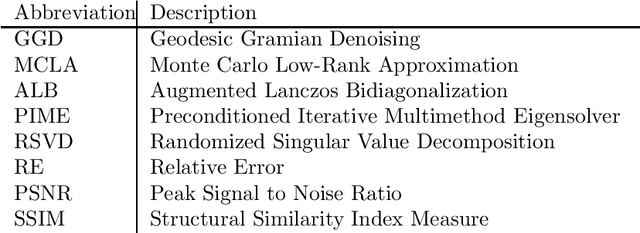

Modern society is interested in capturing high-resolution and fine-quality images due to the surge of sophisticated cameras. However, noise contamination in the images not only falls short of people's expectations but also adversely affects subsequent processes if such images are utilized in computer vision tasks such as remote sensing, object tracking, etc. Even though noise filtration plays an essential role, real-time processing of a high-resolution image is limited by the hardware limitations of the image-capturing instruments. Geodesic Gramian Denoising (GGD) is a manifold-based noise filtering method that we introduced in our past research, which utilizes a few prominent singular vectors of the geodesics' Gramian matrix for the noise filtering process. The applicability of GGD is limited as it incurs $\mathcal{O}(n^6)$ computational complexity when denoising a given image of size $n\times n$, since GGD computes the prominent singular vectors of an $n^2 \times n^2$ data matrix using singular value decomposition (SVD). In this research, we increase the efficiency of our GGD framework by replacing its SVD step with four diverse singular vector approximation techniques. Here, we compare both the computational time and the noise filtering performance between the four techniques integrated into GGD.
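The $\mathcal{O}(n^6)$ figure follows from the cubic cost of a full SVD: an $m \times m$ matrix costs $\mathcal{O}(m^3)$, and here $m = n^2$. As an illustration of what a singular vector approximation can look like, the sketch below uses a randomized range finder with a few power iterations. This is a generic, well-known technique chosen here as an assumption. The abstract does not name the four techniques the authors compare, so this is not necessarily one of them, and the function name and parameters are hypothetical.

```python
import numpy as np

def randomized_top_singular_vectors(A, k, oversample=10, n_iter=2, seed=0):
    """Approximate the k leading singular triplets of A (illustrative sketch)."""
    rng = np.random.default_rng(seed)
    n_cols = A.shape[1]
    sketch_size = min(n_cols, k + oversample)
    # Project A onto a random low-dimensional subspace and orthonormalize.
    Q = np.linalg.qr(A @ rng.standard_normal((n_cols, sketch_size)))[0]
    # Power iterations sharpen the separation of the leading singular values.
    for _ in range(n_iter):
        Q = np.linalg.qr(A.T @ Q)[0]
        Q = np.linalg.qr(A @ Q)[0]
    # Exact SVD of the small projected matrix, then map back to the full space.
    Ub, s, Vt = np.linalg.svd(Q.T @ A, full_matrices=False)
    return (Q @ Ub)[:, :k], s[:k], Vt[:k]
```

The expensive decomposition is applied only to a matrix with `k + oversample` rows, so the dominant cost becomes a handful of matrix products with `A` rather than a full SVD.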

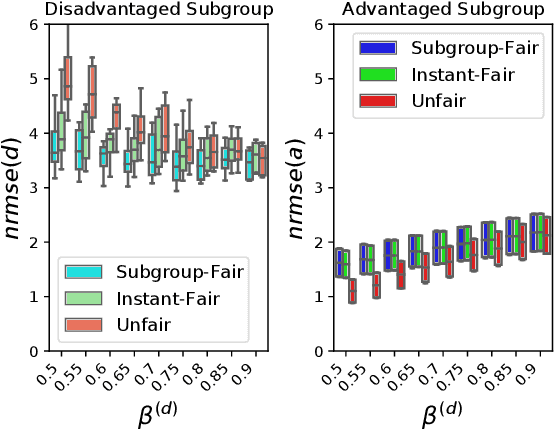

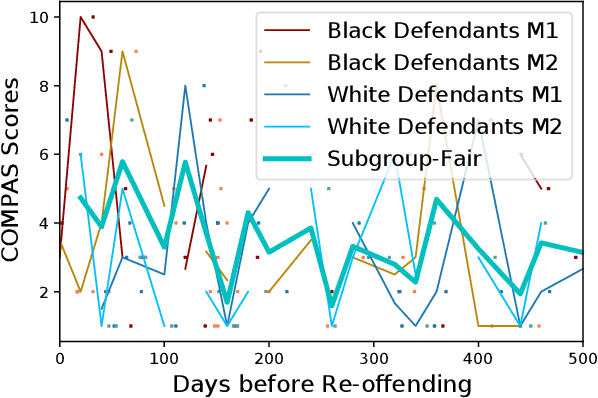

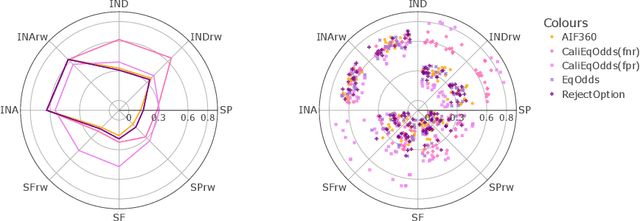

Fairness in Forecasting of Observations of Linear Dynamical Systems

Sep 12, 2022

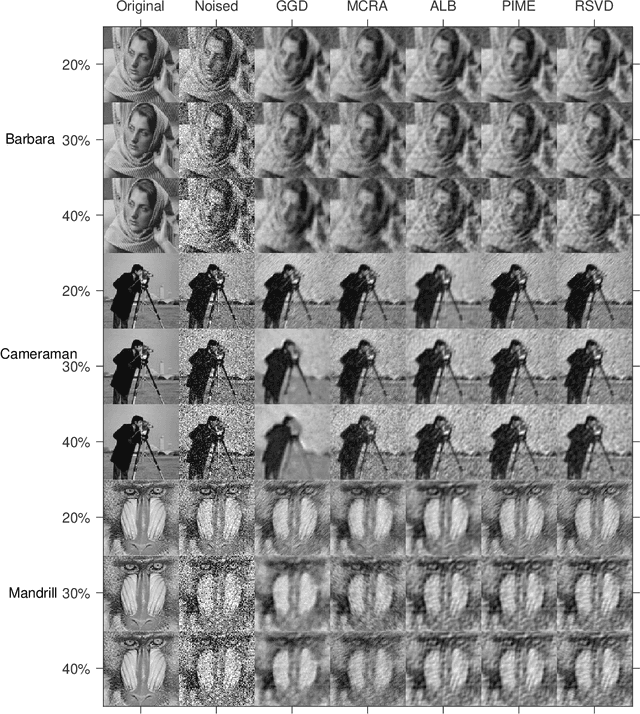

In machine learning, training data often capture the behaviour of multiple subgroups of some underlying human population. When the nature of training data for subgroups is not controlled carefully, under-representation bias arises. To counter this effect, we introduce two natural notions of fairness, subgroup fairness and instantaneous fairness, for time-series forecasting problems. Here we show globally convergent methods for the fairness-constrained learning problems using hierarchies of convexifications of non-commutative polynomial optimisation problems. Our empirical results on a biased data set motivated by insurance applications and the well-known COMPAS data set demonstrate the efficacy of our methods. We also show that by exploiting sparsity in the convexifications, we can reduce the run time of our methods considerably.

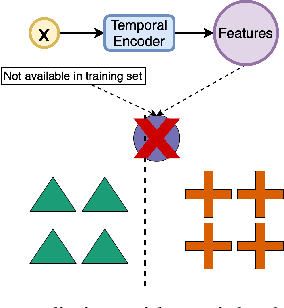

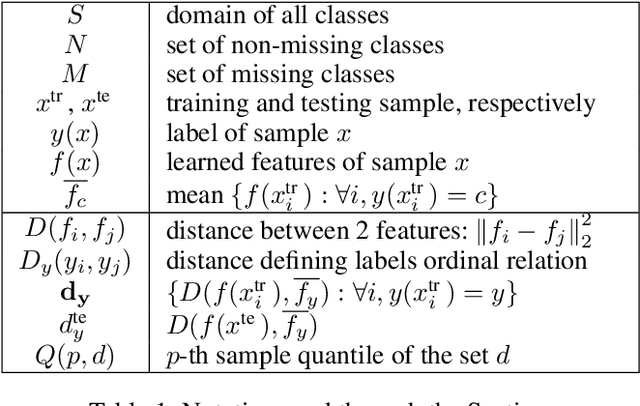

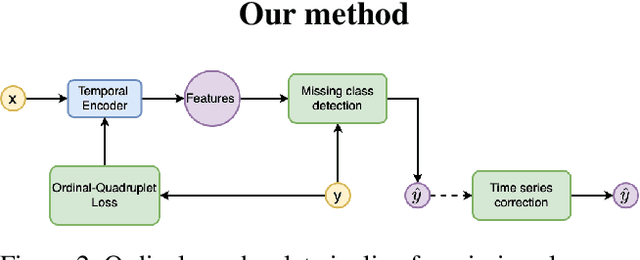

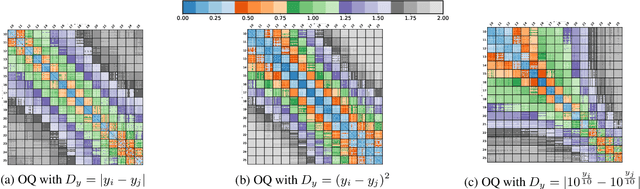

Ordinal-Quadruplet: Retrieval of Missing Classes in Ordinal Time Series

Jan 24, 2022

In this paper, we propose an ordered time series classification framework that is robust against missing classes in the training data, i.e., during testing we can prescribe classes that are missing during training. This framework relies on two main components: (1) our newly proposed ordinal-quadruplet loss, which forces the model to learn a latent representation while preserving the ordinal relation among labels, and (2) a testing procedure, which utilizes the order-preserving property of the latent representation. We conduct experiments based on real-world multivariate time series data and show a significant improvement in the prediction of missing labels even when 40% of the classes are missing from training. Compared with the well-known triplet loss optimization augmented with interpolation for missing information, in some cases, we nearly double the accuracy.

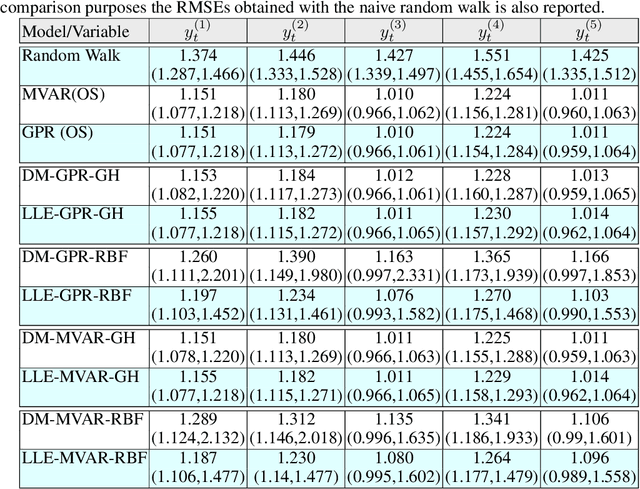

Time Series Forecasting Using Manifold Learning

Oct 08, 2021

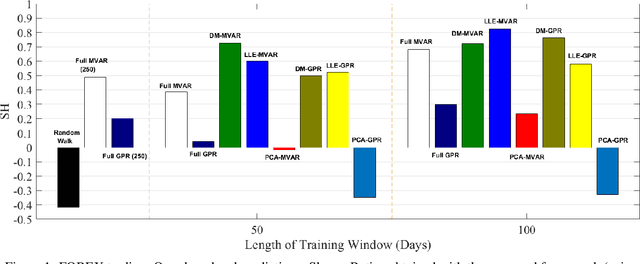

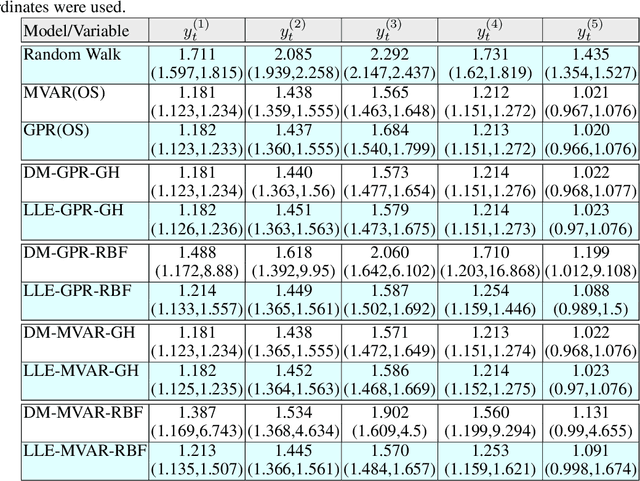

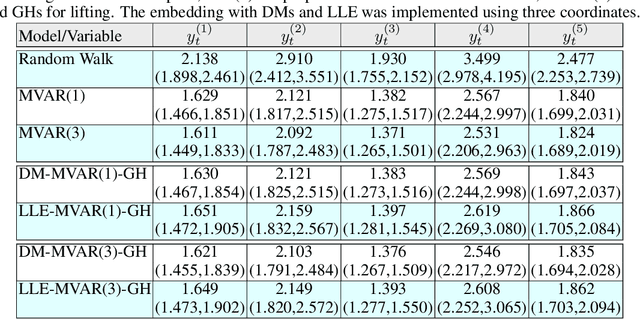

We present a three-tier numerical framework based on manifold learning for the forecasting of high-dimensional time series. At the first step, we embed the time series into a reduced low-dimensional space using a nonlinear manifold learning algorithm such as Locally Linear Embedding or Diffusion Maps. At the second step, we construct reduced-order regression models on the manifold, in particular Multivariate Autoregressive (MVAR) and Gaussian Process Regression (GPR) models, to forecast the embedded dynamics. At the final step, we lift the embedded time series back to the original high-dimensional space using Radial Basis Functions interpolation and Geometric Harmonics. For our illustrations, we test the forecasting performance of the proposed numerical scheme with four sets of time series: three synthetic stochastic ones resembling EEG signals produced from linear and nonlinear stochastic models with different model orders, and one real-world data set containing daily time series of 10 key foreign exchange rates (FOREX) spanning the time period 03/09/2001-29/10/2020. The forecasting performance of the proposed numerical scheme is assessed using the combinations of manifold learning, modelling and lifting approaches. We also provide a comparison with the Principal Component Analysis algorithm as well as with the naive random walk model and the MVAR and GPR models trained and implemented directly in the high-dimensional space.
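The embed, forecast, lift structure can be sketched in a few lines. To stay self-contained, the sketch swaps in linear stand-ins for every step: PCA for the embedding (the abstract lists PCA as a comparison baseline, not as the proposed method), a first-order vector autoregression fitted by least squares for the reduced model, and the transposed PCA projection for the lifting. The function name and arguments are hypothetical. The paper's nonlinear choices (Locally Linear Embedding, Diffusion Maps, GPR, Radial Basis Functions, Geometric Harmonics) are not implemented here.

```python
import numpy as np

def embed_forecast_lift(X, dim, steps):
    """Forecast `steps` future rows of the (T, D) series X via a `dim`-D embedding."""
    X = np.asarray(X, dtype=float)
    mean = X.mean(axis=0)
    # Step 1 (embed): project the centered data onto the leading principal axes.
    _, _, Vt = np.linalg.svd(X - mean, full_matrices=False)
    W = Vt[:dim].T                      # (D, dim) projection matrix
    Z = (X - mean) @ W                  # (T, dim) embedded series
    # Step 2 (forecast): fit Z[t+1] ~ Z[t] @ A by least squares, then iterate.
    A, *_ = np.linalg.lstsq(Z[:-1], Z[1:], rcond=None)
    z, preds = Z[-1], []
    for _ in range(steps):
        z = z @ A
        preds.append(z)
    # Step 3 (lift): map the embedded forecasts back to the original space.
    return np.array(preds) @ W.T + mean
```

A nonlinear embedding would replace the SVD projection in step 1 and would require a separate interpolation scheme in step 3, because a nonlinear embedding generally has no closed-form inverse.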

Multilevel Robustness for 2D Vector Field Feature Tracking, Selection, and Comparison

Sep 19, 2022

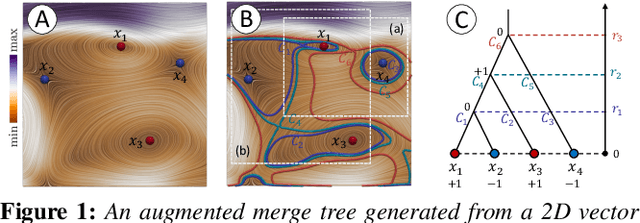

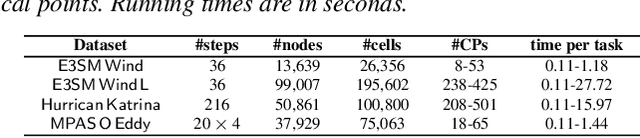

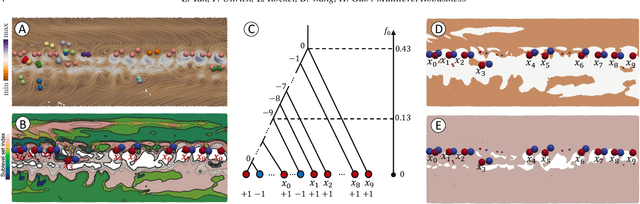

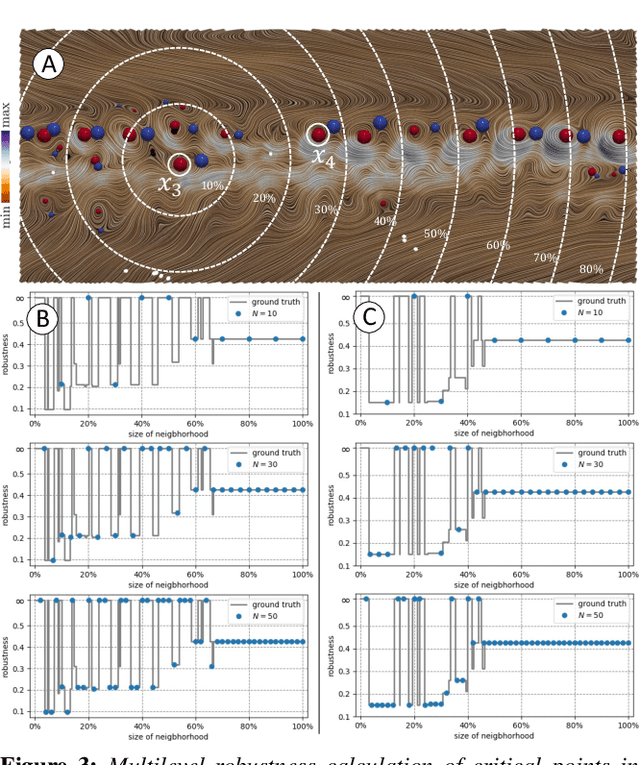

Critical point tracking is a core topic in scientific visualization for understanding the dynamic behavior of time-varying vector field data. The topological notion of robustness has been introduced recently to quantify the structural stability of critical points, that is, the robustness of a critical point is the minimum amount of perturbation to the vector field necessary to cancel it. A theoretical basis has been established previously that relates critical point tracking with the notion of robustness, in particular, critical points could be tracked based on their closeness in stability, measured by robustness, instead of just distance proximities within the domain. However, in practice, the computation of classic robustness may produce artifacts when a critical point is close to the boundary of the domain; thus, we do not have a complete picture of the vector field behavior within its local neighborhood. To alleviate these issues, we introduce a multilevel robustness framework for the study of 2D time-varying vector fields. We compute the robustness of critical points across varying neighborhoods to capture the multiscale nature of the data and to mitigate the boundary effect suffered by the classic robustness computation. We demonstrate via experiments that such a new notion of robustness can be combined seamlessly with existing feature tracking algorithms to improve the visual interpretability of vector fields in terms of feature tracking, selection, and comparison for large-scale scientific simulations. We observe, for the first time, that the minimum multilevel robustness is highly correlated with physical quantities used by domain scientists in studying a real-world tropical cyclone dataset. Such observation helps to increase the physical interpretability of robustness.
