"Time": models, code, and papers

BERT-Flow-VAE: A Weakly-supervised Model for Multi-Label Text Classification

Oct 27, 2022

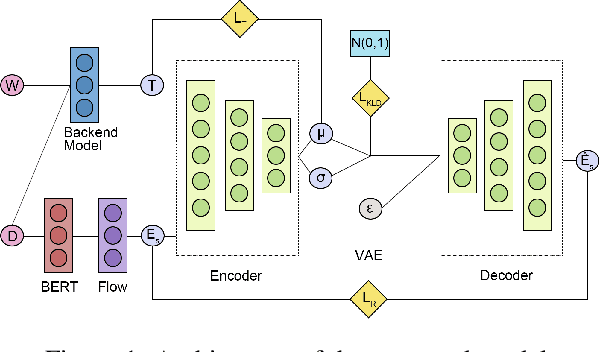

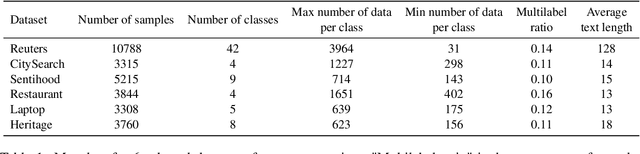

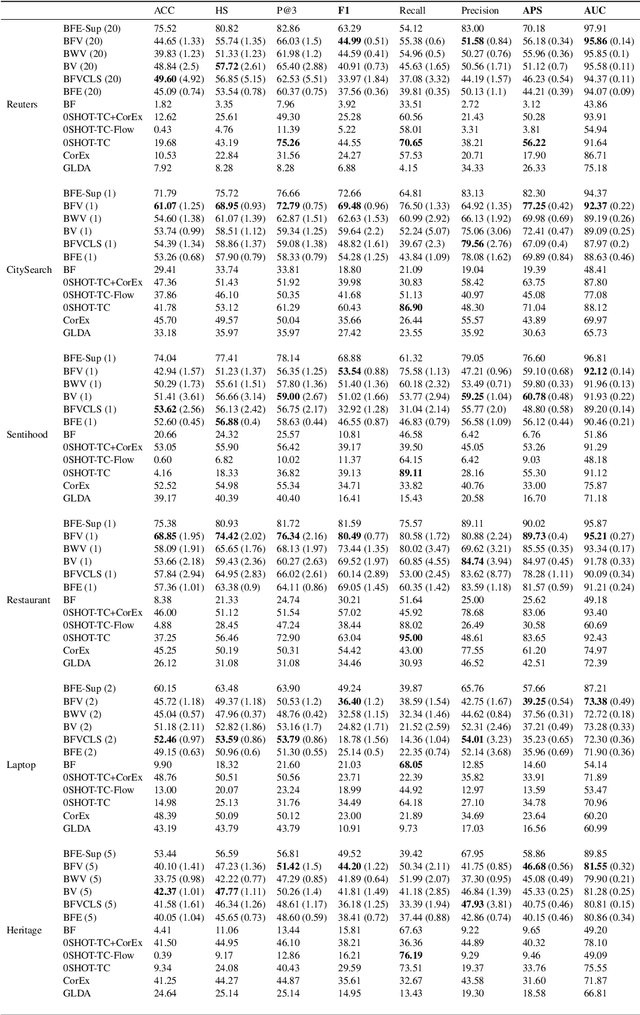

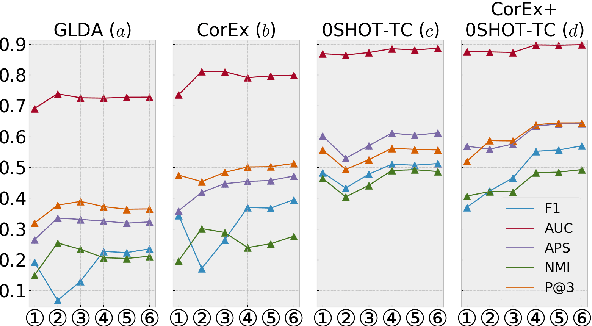

Multi-label Text Classification (MLTC) is the task of categorizing documents into one or more topics. Given the large volumes of data and the varying domains of such tasks, fully supervised learning requires fully annotated datasets, which are costly and time-consuming to produce manually. In this paper, we propose BERT-Flow-VAE (BFV), a Weakly-Supervised Multi-Label Text Classification (WSMLTC) model that reduces the need for full supervision. This new model (1) produces BERT sentence embeddings and calibrates them using a flow model, (2) generates an initial topic-document matrix by averaging the results of a seeded sparse topic model and a textual entailment model, which require only the surface names of topics and 4-6 seed words per topic, and (3) adopts a VAE framework to reconstruct the embeddings under the guidance of the topic-document matrix. Finally, (4) it uses the means produced by the encoder in the VAE architecture as predictions for MLTC. Experimental results on 6 multi-label datasets show that BFV can substantially outperform other baseline WSMLTC models on key metrics and achieve approximately 84% of the performance of a fully supervised model.

* 8 pages, 4 figures
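
As a rough illustration of step (2) and of the final multi-label decision, the sketch below averages two document-by-topic score matrices element-wise and turns a score matrix into label sets. The function names, the assumption that both weak labelers output scores in [0, 1], the example numbers, and the 0.5 decision threshold are all hypothetical; in BFV itself the final predictions come from the VAE encoder means, which this sketch does not model.

```python
from typing import List

Matrix = List[List[float]]


def average_topic_document(seeded: Matrix, entailment: Matrix) -> Matrix:
    """Element-wise mean of two (documents x topics) score matrices (hypothetical helper)."""
    if len(seeded) != len(entailment):
        raise ValueError("matrices must have the same number of documents")
    averaged = []
    for row_a, row_b in zip(seeded, entailment):
        if len(row_a) != len(row_b):
            raise ValueError("matrices must have the same number of topics")
        averaged.append([(a + b) / 2 for a, b in zip(row_a, row_b)])
    return averaged


def to_label_sets(scores: Matrix, threshold: float = 0.5) -> List[List[int]]:
    """Multi-label decision: every topic whose score reaches the threshold is assigned."""
    return [[topic for topic, s in enumerate(row) if s >= threshold] for row in scores]


# Two documents, three topics; made-up scores from the two weak labelers.
seeded = [[0.9, 0.1, 0.4], [0.2, 0.7, 0.6]]
entailment = [[0.7, 0.3, 0.8], [0.0, 0.5, 0.2]]
initial = average_topic_document(seeded, entailment)
print(to_label_sets(initial))  # prints [[0, 2], [1]]
```

Because each topic is thresholded independently, a document can receive zero, one, or several labels, which is what distinguishes the multi-label setting from single-label classification.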

Towards Multi-spatiotemporal-scale Generalized PDE Modeling

Sep 30, 2022

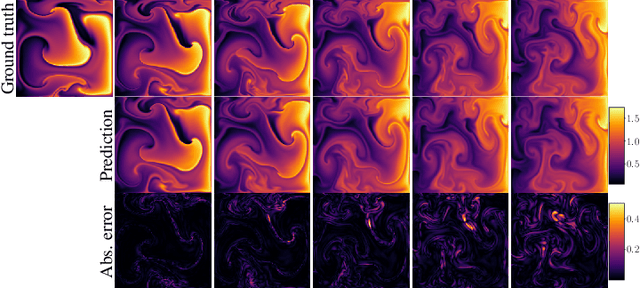

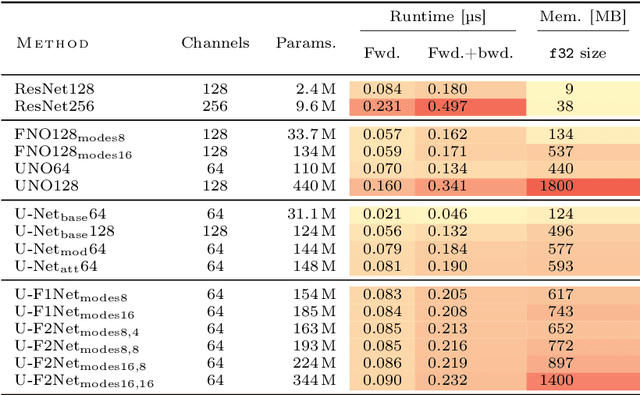

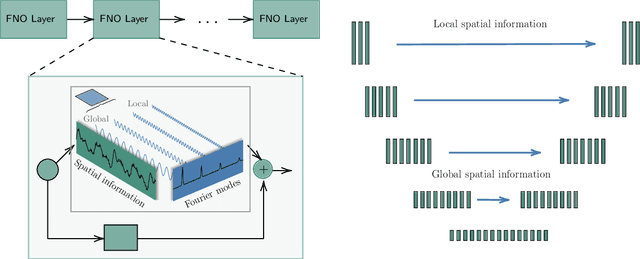

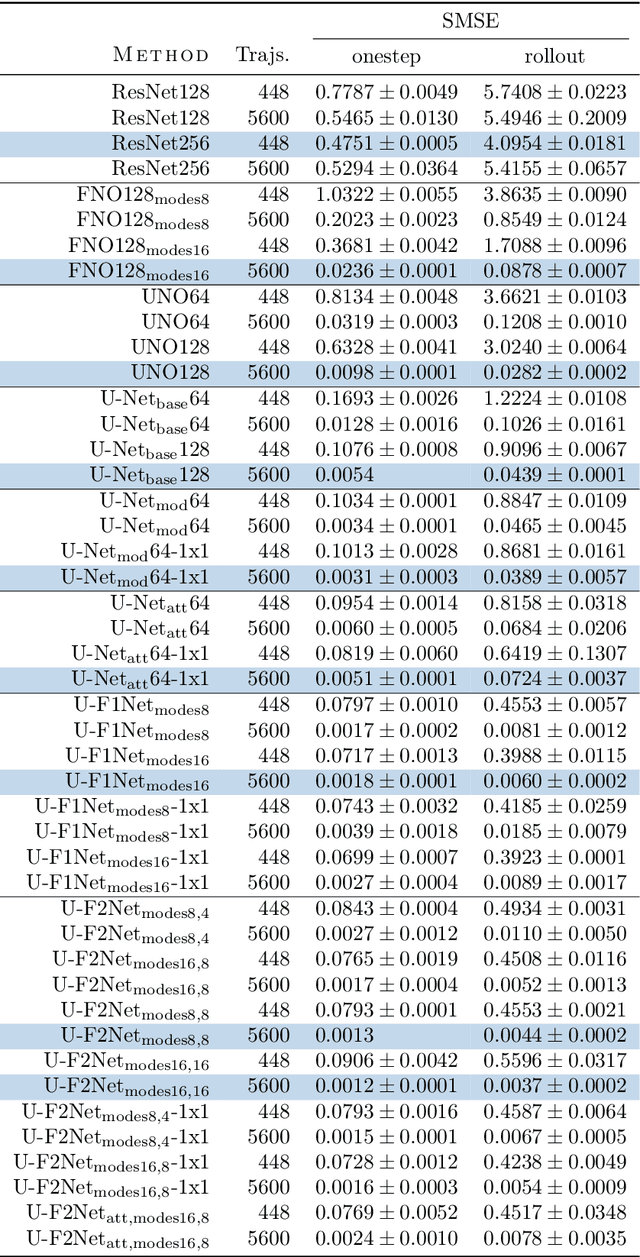

Partial differential equations (PDEs) are central to describing and simulating complex physical systems. Their expensive solution techniques have led to an increased interest in surrogates based on deep neural networks. However, the practical utility of training such surrogates is contingent on their ability to model complex multi-scale spatio-temporal phenomena. Various neural network architectures have been proposed to target such phenomena, most notably Fourier Neural Operators (FNOs), which give a natural handle over local and global spatial information via parameterization of different Fourier modes, and U-Nets, which treat local and global information via downsampling and upsampling paths. However, generalizing across different equation parameters or different time-scales remains a challenge. In this work, we make a comprehensive comparison between various FNO-like and U-Net-like approaches on fluid mechanics problems in both vorticity-stream and velocity function form. For U-Nets, we transfer recent architectural improvements from computer vision, most notably from object segmentation and generative modeling. We further analyze the design considerations for using FNO layers to improve the performance of U-Net architectures without major degradation of computational performance. Finally, we show promising results on generalization to different PDE parameters and time-scales with a single surrogate model.
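
The way an FNO layer handles scale through Fourier modes can be sketched for a single 1-D channel: transform to the frequency domain, keep and reweight only the lowest k modes, and transform back. This is a minimal NumPy illustration assuming real-valued input on a uniform periodic grid; a full FNO layer also mixes channels with a learned complex weight tensor and adds a pointwise linear path, both omitted here.

```python
import numpy as np


def spectral_conv_1d(u: np.ndarray, weights: np.ndarray) -> np.ndarray:
    """Truncated spectral convolution on a periodic 1-D grid.

    u: real samples, shape (n,)
    weights: complex multipliers for the lowest k Fourier modes, shape (k,)
    """
    n = u.shape[0]
    k = weights.shape[0]
    if k > n // 2 + 1:
        raise ValueError("cannot keep more modes than rfft produces")
    u_hat = np.fft.rfft(u)              # n // 2 + 1 complex coefficients
    out_hat = np.zeros_like(u_hat)      # higher modes are dropped (left at zero)
    out_hat[:k] = u_hat[:k] * weights   # reweight the retained low modes
    return np.fft.irfft(out_hat, n=n)


# A slow wave plus a fast wave; keeping only 5 modes filters out the fast one.
x = np.linspace(0.0, 1.0, 64, endpoint=False)
u = np.sin(2 * np.pi * x) + np.sin(2 * np.pi * 10 * x)
smooth = spectral_conv_1d(u, np.ones(5, dtype=complex))
print(np.allclose(smooth, np.sin(2 * np.pi * x)))  # True
```

In this sketch the number of retained modes k decides which spatial scales pass through the layer, and the weights on those modes are the learnable parameters.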

Low Latency Real-Time Seizure Detection Using Transfer Deep Learning

Feb 16, 2022

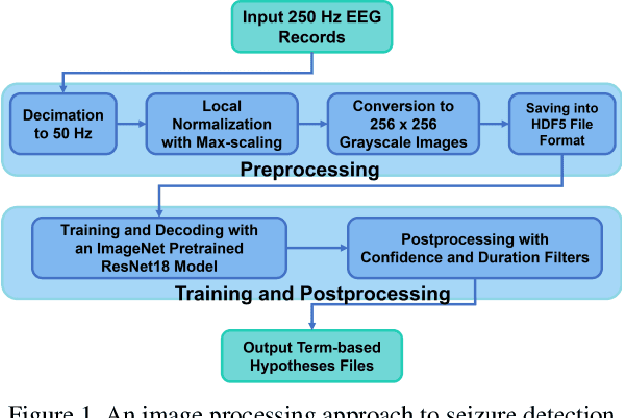

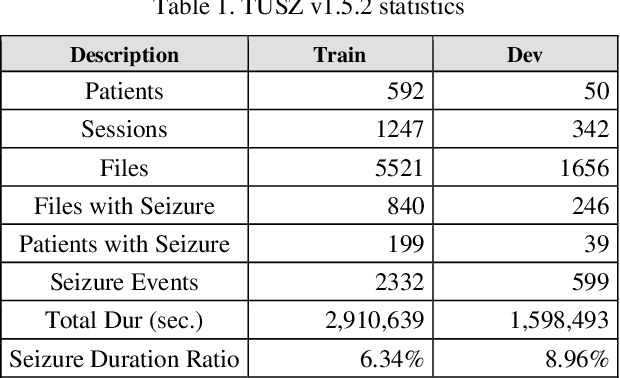

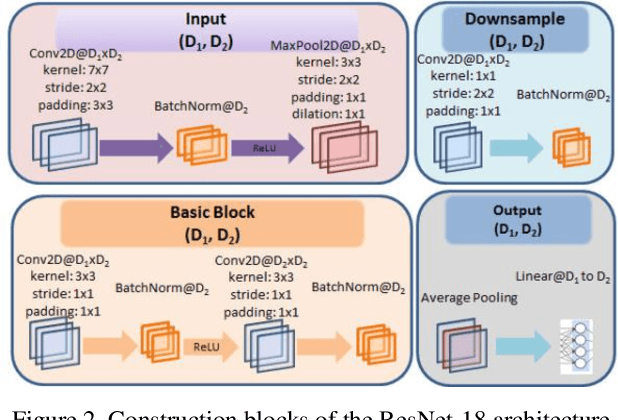

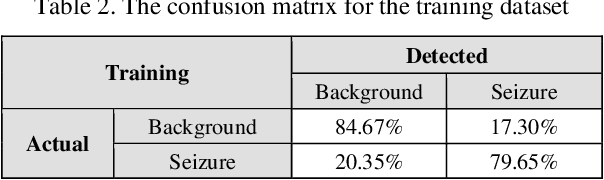

Scalp electroencephalogram (EEG) signals inherently have a low signal-to-noise ratio due to the way the signal is electrically transduced. Temporal and spatial information must be exploited to achieve accurate detection of seizure events. Most popular deep learning approaches to seizure detection either do not jointly model this information or require multiple passes over the signal, which makes the systems inherently non-causal. In this paper, we exploit both simultaneously by converting the multichannel signal to a grayscale image and using transfer learning to achieve high performance. The proposed system is trained end-to-end with only very simple pre- and postprocessing operations, which are computationally lightweight and have low latency, making them conducive to clinical applications that require real-time processing. We have achieved a sensitivity of 42.05% with 5.78 false alarms per 24 hours on the development dataset of v1.5.2 of the Temple University Hospital Seizure Detection Corpus. On a single-core CPU operating at 1.7 GHz, the system runs faster than real time (0.58 xRT), uses 16 GB of memory, and has a latency of 300 ms.
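
The abstract does not specify how the multichannel signal becomes a grayscale image, so the sketch below shows one plausible mapping under that assumption: channels become rows, samples become columns, and amplitudes are min-max scaled to 8-bit intensities over the whole window so that relative amplitudes across channels are preserved. It also spells out the real-time factor, where 0.58 xRT means 0.58 s of processing per second of signal. The function names, the channel count, and the sampling rate are hypothetical.

```python
import numpy as np


def window_to_grayscale(window: np.ndarray) -> np.ndarray:
    """Map a (channels, samples) EEG window to a uint8 image of the same shape (assumed mapping)."""
    lo, hi = float(window.min()), float(window.max())
    if hi == lo:                                  # flat window: avoid division by zero
        return np.zeros(window.shape, dtype=np.uint8)
    scaled = (window - lo) / (hi - lo)            # joint min-max scaling to [0, 1]
    return np.round(scaled * 255).astype(np.uint8)


def real_time_factor(processing_seconds: float, signal_seconds: float) -> float:
    """xRT below 1.0 means the system keeps up with the incoming signal."""
    return processing_seconds / signal_seconds


rng = np.random.default_rng(0)
eeg = rng.normal(size=(22, 250))                  # assumed: 22 channels, 1 s sampled at 250 Hz
image = window_to_grayscale(eeg)
print(image.shape, image.dtype, image.min(), image.max())
print(real_time_factor(0.58 * 3600, 3600))        # one hour of EEG processed in about 35 min
```

Scaling each channel separately would also be possible; the joint scaling here is a design choice that keeps a high-amplitude channel visibly brighter than a quiet one.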

Design of a Multimodal Fingertip Sensor for Dynamic Manipulation

Sep 23, 2022

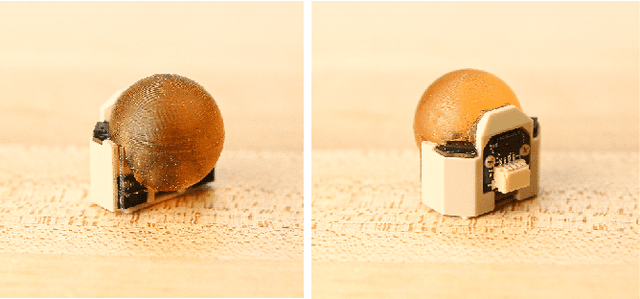

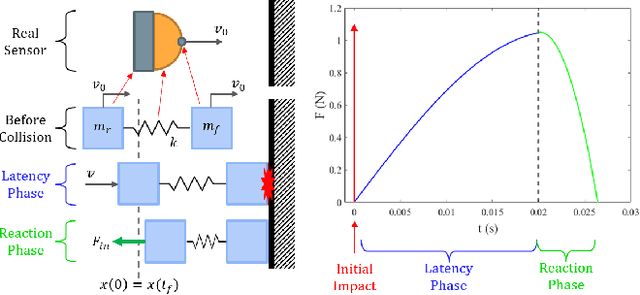

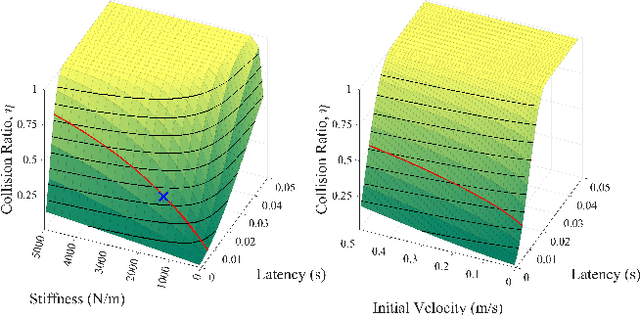

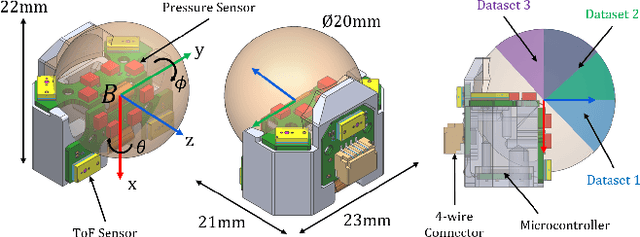

We introduce a spherical fingertip sensor for dynamic manipulation. It is based on barometric pressure and time-of-flight proximity sensors and is low-latency, compact, and physically robust. The sensor uses a trained neural network to estimate the contact location and three-axis contact forces based on data from the pressure sensors, which are embedded within the sensor's sphere of polyurethane rubber. The time-of-flight sensors face in three different outward directions, and an integrated microcontroller samples each of the individual sensors at up to 200 Hz. To quantify the effect of system latency on dynamic manipulation performance, we develop and analyze a metric called the collision impulse ratio and characterize the end-to-end latency of our new sensor. We also present experimental demonstrations with the sensor, including measuring contact transitions, performing coarse mapping, maintaining a contact force with a moving object, and reacting to avoid collisions.

LiP-Flow: Learning Inference-time Priors for Codec Avatars via Normalizing Flows in Latent Space

Mar 15, 2022

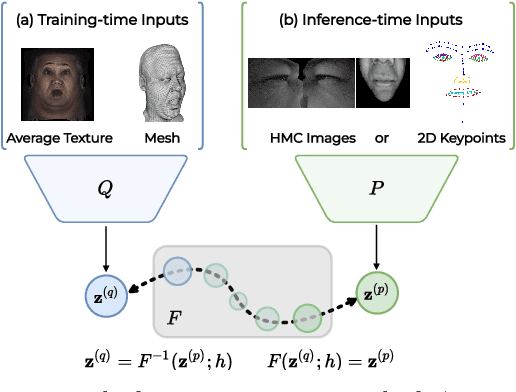

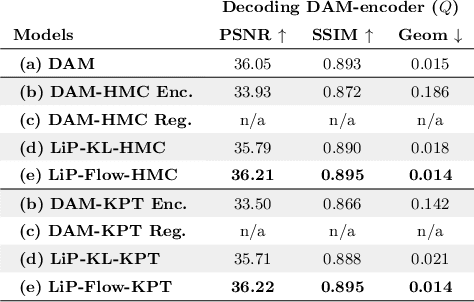

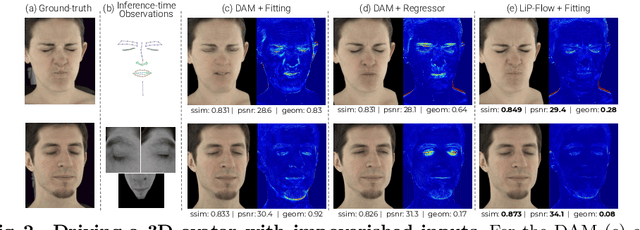

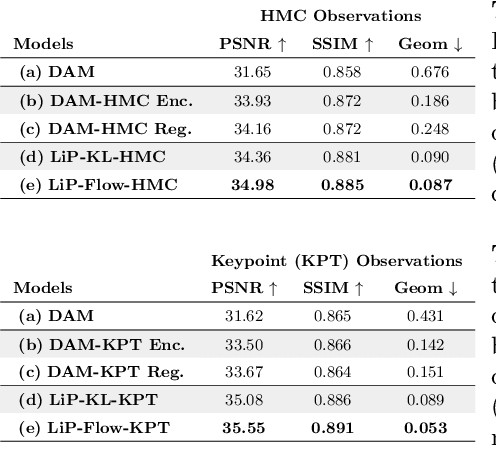

Neural face avatars that are trained from multi-view data captured in camera domes can produce photo-realistic 3D reconstructions. However, at inference time, they must be driven by limited inputs such as partial views recorded by headset-mounted cameras or a front-facing camera, and sparse facial landmarks. To mitigate this asymmetry, we introduce a prior model that is conditioned on the runtime inputs and tie this prior space to the 3D face model via a normalizing flow in the latent space. Our proposed model, LiP-Flow, consists of two encoders that learn representations from the rich training-time and impoverished inference-time observations. A normalizing flow bridges the two representation spaces and transforms latent samples from one domain to another, allowing us to define a latent likelihood objective. We train our model end-to-end to maximize the similarity of both representation spaces and the reconstruction quality, making the 3D face model aware of the limited driving signals. We conduct extensive evaluations in which the latent codes are optimized to reconstruct 3D avatars from partial or sparse observations. We show that our approach leads to an expressive and effective prior that better captures facial dynamics and subtle expressions.
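
A latent likelihood objective of this kind rests on the change-of-variables formula for normalizing flows: if x = f(z) with f invertible, then log p(x) = log p_Z(f^{-1}(x)) + log |det ∂f^{-1}/∂x|. The sketch below evaluates this for the simplest possible flow, an element-wise affine map with a standard normal base distribution. It only illustrates the formula; LiP-Flow's actual flow architecture, encoders, and conditioning are not described in the abstract and are not modeled here.

```python
import numpy as np


def affine_flow_log_prob(x: np.ndarray, scale: np.ndarray, shift: np.ndarray) -> float:
    """log p(x) for x = scale * z + shift with z ~ N(0, I) (element-wise affine flow)."""
    z = (x - shift) / scale                                   # inverse transform f^{-1}(x)
    log_base = -0.5 * np.sum(z ** 2) - 0.5 * x.size * np.log(2 * np.pi)
    log_det_inverse = -np.sum(np.log(np.abs(scale)))          # Jacobian of f^{-1} is diag(1 / scale)
    return float(log_base + log_det_inverse)


scale = np.array([2.0, 0.5])
shift = np.array([1.0, -1.0])
# x equals shift and the scales multiply to 1, so this evaluates to -log(2 * pi).
print(affine_flow_log_prob(np.array([1.0, -1.0]), scale, shift))
```

Training a flow to maximize this quantity on paired latent codes is what lets samples from one representation space be scored and transported into the other; richer flows stack many invertible layers but keep the same two-term structure.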

Panoptic-PHNet: Towards Real-Time and High-Precision LiDAR Panoptic Segmentation via Clustering Pseudo Heatmap

May 14, 2022

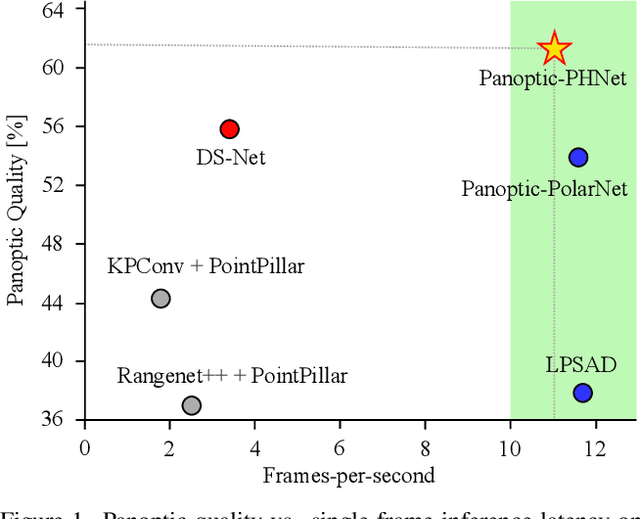

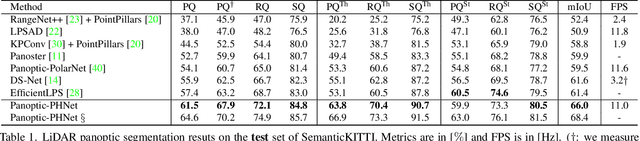

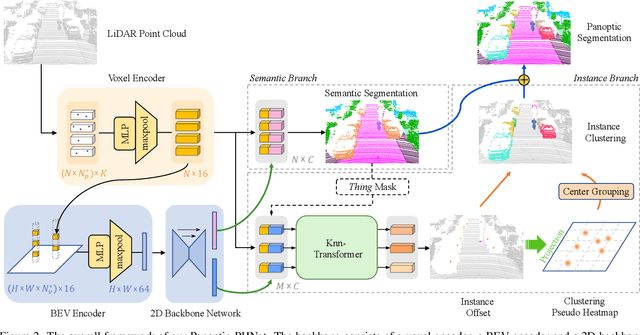

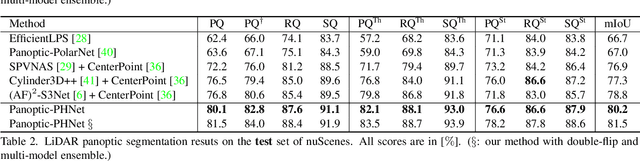

As an emerging task, panoptic segmentation faces challenges in both semantic segmentation and instance segmentation. However, in terms of speed and accuracy, existing LiDAR methods in the field are still limited. In this paper, we propose a fast and high-performance LiDAR-based framework, referred to as Panoptic-PHNet, with three attractive aspects: 1) We introduce a clustering pseudo heatmap as a new paradigm, which, followed by a center grouping module, yields instance centers for efficient clustering without object-level learning tasks. 2) A kNN-transformer module is proposed to model the interaction among foreground points for accurate offset regression. 3) For backbone design, we fuse the fine-grained voxel features and the 2D Bird's Eye View (BEV) features with different receptive fields to utilize both detailed and global information. Extensive experiments on both the SemanticKITTI and nuScenes datasets show that Panoptic-PHNet surpasses state-of-the-art methods by remarkable margins at real-time speed. We achieve 1st place on the public leaderboard of SemanticKITTI and leading performance on the recently released leaderboard of nuScenes.
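
The idea of deriving instance centers from a heatmap and then clustering points around them can be illustrated on a small 2-D BEV grid. The sketch assumes that a center is any cell that reaches a score threshold and is the maximum of its 3x3 neighborhood, and that each foreground point joins its nearest center; the threshold, the neighborhood size, and nearest-center assignment are illustrative choices, not Panoptic-PHNet's center grouping module or its kNN-transformer offset regression.

```python
import numpy as np


def heatmap_peaks(heatmap: np.ndarray, threshold: float = 0.5) -> list:
    """Cells that reach the threshold and are maxima of their 3x3 neighborhood."""
    h, w = heatmap.shape
    padded = np.pad(heatmap, 1, mode="constant", constant_values=-np.inf)
    peaks = []
    for i in range(h):
        for j in range(w):
            value = heatmap[i, j]
            # padded[i:i + 3, j:j + 3] is the 3x3 window centered on heatmap[i, j]
            if value >= threshold and value == padded[i:i + 3, j:j + 3].max():
                peaks.append((i, j))
    return peaks


def assign_to_centers(points: np.ndarray, centers: np.ndarray) -> np.ndarray:
    """Index of the nearest center for every point (Euclidean distance)."""
    diff = points[:, None, :] - centers[None, :, :]   # shape (n_points, n_centers, 2)
    return np.argmin((diff ** 2).sum(axis=-1), axis=1)


heat = np.zeros((5, 5))
heat[1, 1], heat[3, 3] = 0.9, 0.8                     # two synthetic instance centers
centers = np.array(heatmap_peaks(heat), dtype=float)
points = np.array([[0.0, 0.0], [4.0, 4.0], [1.0, 2.0]])
print(heatmap_peaks(heat), assign_to_centers(points, centers))
```

Flat plateaus of equal scores would yield several adjacent peaks in this sketch, so a practical version would need an extra suppression or merging step.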

ObSynth: An Interactive Synthesis System for Generating Object Models from Natural Language Specifications

Oct 20, 2022

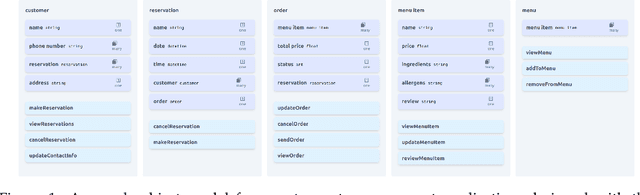

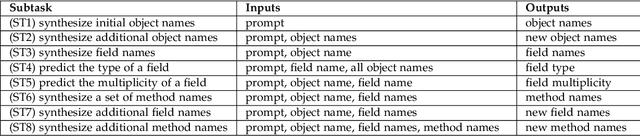

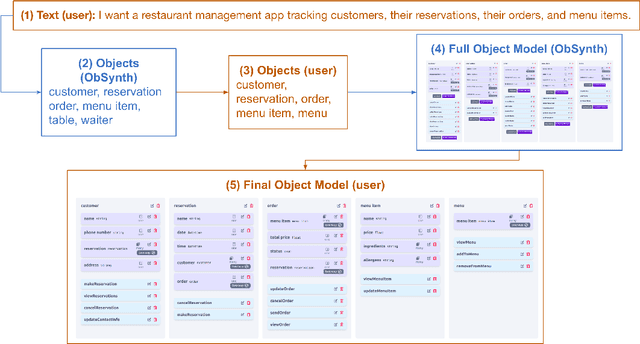

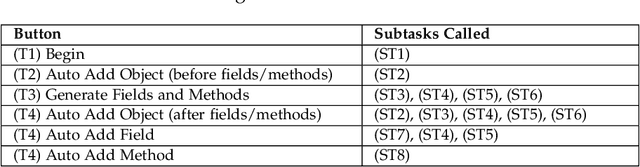

We introduce ObSynth, an interactive system that leverages the domain knowledge embedded in large language models (LLMs) to help users design object models from high-level natural language prompts. This is an example of specification reification, the process of taking a high-level, potentially vague specification and reifying it into a more concrete form. We evaluate ObSynth via a user study, leading to three key findings. First, object models designed using ObSynth are more detailed, showing that it often synthesizes fields users might have otherwise omitted. Second, a majority of the objects, methods, and fields generated by ObSynth are kept by the user in the final object model, highlighting the quality of the generated components. Third, ObSynth altered the workflow of participants: they focused on checking that synthesized components were correct rather than generating them from scratch, though ObSynth did not reduce the time participants took to generate object models.

Scientific Impact of Graph-Based Approaches in Deep Learning Studies -- A Bibliometric Comparison

Oct 13, 2022

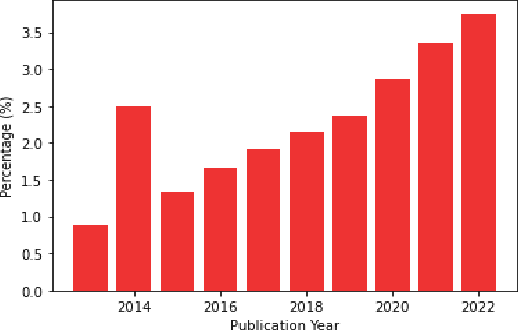

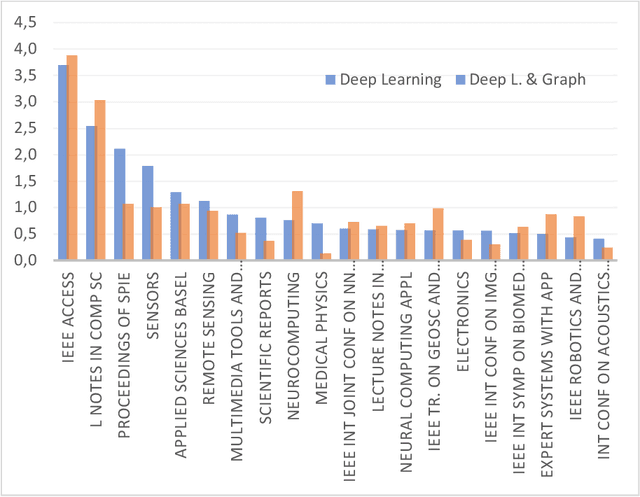

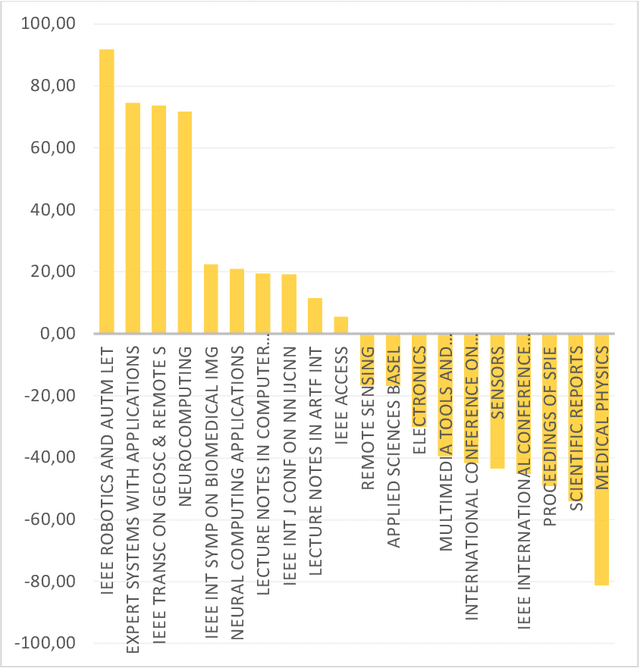

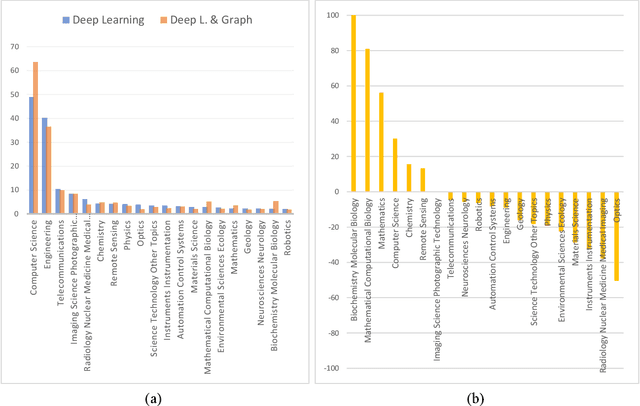

The application of graph-based approaches in deep learning has been receiving increasing attention over time. This study presents a statistical analysis of the use of graph-based approaches in deep learning and examines the scientific impact of the related articles. Using data obtained from the Web of Science database, metrics such as article type, funding availability, indexing type, annual average number of citations, and number of accesses were analyzed to quantitatively reveal the effects on the scientific audience. The analysis shows that deep learning studies gained momentum after 2013, and that the share of graph-based approaches among all deep learning studies increased linearly from 1% to 4% over the following 10 years. Conference publications on graph-based approaches indexed in the Conference Proceedings Citation Index (CPCI) receive significantly more citations. The citation counts of the SCI-Expanded and Emerging SCI indexed publications of the two streams are close to each other. While the citation performance of funded and unfunded publications was similar for both streams, pure deep learning studies received more citations on the journal side and graph-based approaches received more citations on the conference side. Despite their similar performance in recent years, graph-based studies show about twice the citation performance of traditional approaches as they get older. The annual average citation performance per article for all deep learning studies in 2014 is 11.051, whereas it is 22.483 for graph-based studies. Also, despite receiving 16% more accesses, graph-based papers receive almost the same overall number of citations over time as their pure counterparts. This indicates that graph-based approaches require more attention to follow, while pure deep learning studies are relatively easier to get into.
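
The 2014 figures can be checked directly against the claim of roughly twice the citation performance, and the reported growth of the graph-based share implies an approximate yearly rate (simple arithmetic on the abstract's own numbers):

$$
\frac{22.483}{11.051} \approx 2.03, \qquad \frac{4\% - 1\%}{10\ \text{years}} = 0.3\ \text{percentage points per year}
$$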

Bayesian Algorithms Learn to Stabilize Unknown Continuous-Time Systems

Dec 30, 2021

Linear dynamical systems are canonical models for learning-based control of plants with uncertain dynamics. The setting consists of a stochastic differential equation that captures the state evolution of the plant under study, while the true dynamics matrices are unknown and need to be learned from the observed state trajectory. An important issue is to ensure that the system is stabilized and that destabilizing control actions due to model uncertainties are precluded as soon as possible. A reliable stabilization procedure for this purpose that can effectively learn from unstable data to stabilize the system in a finite time is not currently available. In this work, we propose a novel Bayesian learning algorithm that stabilizes unknown continuous-time stochastic linear systems. The presented algorithm is flexible and exhibits effective stabilization performance after a remarkably short period of interaction with the system.
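
The learning step in this setting can be pictured as Bayesian linear regression on the observed trajectory. The sketch below assumes an Euler-discretized model x_dot ≈ A x + B u with Gaussian noise and a zero-mean Gaussian prior on [A B], with the ratio of noise variance to prior variance folded into a single hypothetical `prior_precision` parameter. It returns the posterior mean and checks whether a closed-loop matrix is Hurwitz (every eigenvalue has a negative real part), the continuous-time stability condition. The plant, the feedback gain, and all helper names are illustrative; this is not the algorithm proposed in the paper, whose details the abstract does not give.

```python
import numpy as np


def posterior_mean_dynamics(states, inputs, dt, prior_precision=1.0):
    """Posterior mean of (A, B) from one sampled trajectory.

    states: (T + 1, n) array, inputs: (T, m) array, dt: sampling interval.
    Assumes x_dot = A x + B u + Gaussian noise and a zero-mean Gaussian prior on [A B];
    prior_precision is the (assumed) ratio of noise variance to prior variance.
    """
    x = states[:-1]
    x_dot = (states[1:] - states[:-1]) / dt              # finite-difference derivative
    phi = np.hstack([x, inputs])                         # regressors [x, u], shape (T, n + m)
    gram = phi.T @ phi + prior_precision * np.eye(phi.shape[1])
    theta = np.linalg.solve(gram, phi.T @ x_dot)         # shape (n + m, n), equals [A B]^T
    n = states.shape[1]
    return theta[:n].T, theta[n:].T


def is_hurwitz(matrix):
    """Continuous-time stability test: every eigenvalue has a negative real part."""
    return bool(np.all(np.linalg.eigvals(matrix).real < 0))


# Simulate a hypothetical open-loop-unstable plant with random excitation.
rng = np.random.default_rng(1)
A = np.array([[0.5, 1.0], [0.0, -1.0]])
B = np.array([[0.0], [1.0]])
dt, steps, noise = 0.01, 500, 1e-3
states = np.zeros((steps + 1, 2))
states[0] = rng.normal(size=2)
inputs = rng.normal(size=(steps, 1))
for t in range(steps):
    drift = A @ states[t] + B @ inputs[t]
    states[t + 1] = states[t] + drift * dt + noise * np.sqrt(dt) * rng.normal(size=2)

A_hat, B_hat = posterior_mean_dynamics(states, inputs, dt, prior_precision=1e-3)
K = np.array([[-3.0, -3.0]])                             # hypothetical feedback gain, u = K x
print(is_hurwitz(A), is_hurwitz(A_hat + B_hat @ K))
```

A full Bayesian procedure would also use the posterior covariance, for instance to account for model uncertainty when choosing the gain; the sketch stops at the posterior mean.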

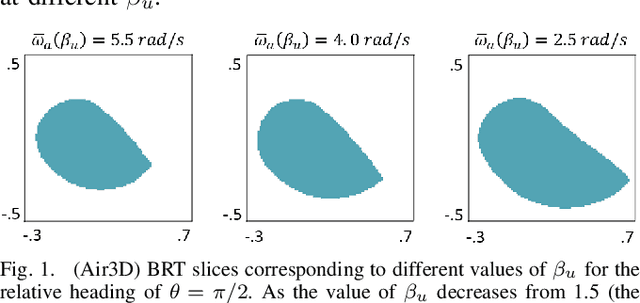

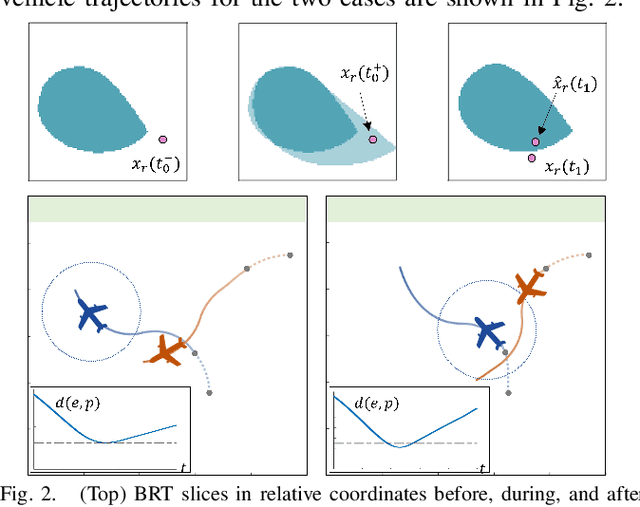

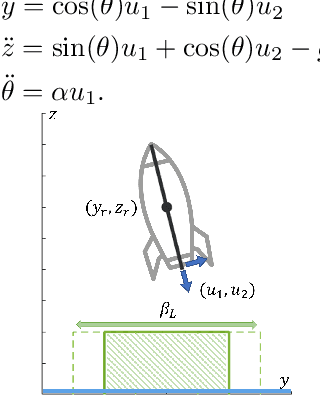

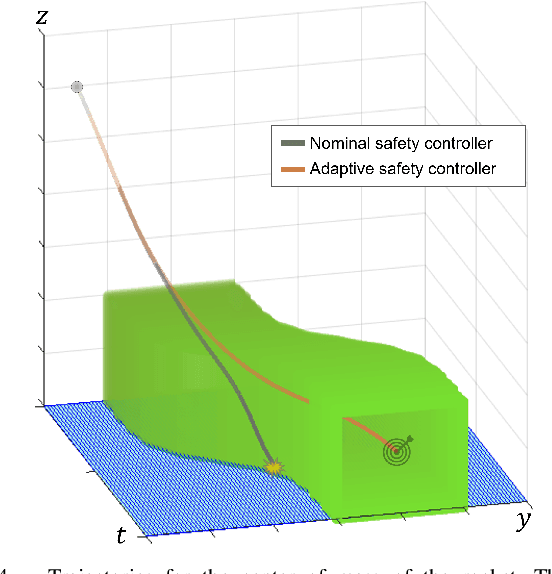

Parameter-Conditioned Reachable Sets for Updating Safety Assurances Online

Sep 29, 2022

Hamilton-Jacobi (HJ) reachability analysis is a powerful tool for analyzing the safety of autonomous systems. However, the provided safety assurances are often predicated on the assumption that once deployed, the system or its environment does not evolve. Online, however, an autonomous system might experience changes in system dynamics, control authority, external disturbances, and/or the surrounding environment, requiring updated safety assurances. Rather than restarting the safety analysis from scratch, which can be time-consuming and often intractable to perform online, we propose to compute parameter-conditioned reachable sets. Assuming expected system and environment changes can be parameterized, we treat these parameters as virtual states in the system and leverage recent advances in high-dimensional reachability analysis to solve the corresponding reachability problem offline. This results in a family of reachable sets that is parameterized by the environment and system factors. Online, as these factors change, the system can simply query the corresponding safety function from this family to ensure system safety, enabling a real-time update of the safety assurances. Through various simulation studies, we demonstrate the capability of our approach in maintaining system safety despite the system and environment evolution.
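
The online step described here can be sketched as a lookup: once a safety value function over the augmented state (x, θ) has been computed offline on a grid, updating the safety assurance reduces to interpolating that table at the current parameter value. The grid, the toy value table V(x, θ) = |x| - θ (a placeholder, not the output of a reachability solver), the sign convention that V > 0 means safe, and the helper name are all assumptions.

```python
import numpy as np


def query_safety_value(value_table, xs, thetas, x, theta):
    """Bilinear lookup of a precomputed safety value V(x, theta).

    value_table: (len(thetas), len(xs)) grid of values computed offline
    xs, thetas: increasing grid coordinates for the state and the parameter
    """
    # Interpolate along the state axis in every parameter slice ...
    per_theta = np.array([np.interp(x, xs, row) for row in value_table])
    # ... then along the parameter axis at the current (online) parameter value.
    return float(np.interp(theta, thetas, per_theta))


# Toy table: the unsafe set is |x| <= theta, so V = |x| - theta and V > 0 means safe.
xs = np.linspace(-2.0, 2.0, 5)
thetas = np.array([0.5, 1.0, 1.5])
table = np.array([[abs(x) - th for x in xs] for th in thetas])

for theta_now in (0.5, 1.5):          # e.g. the unsafe region grows while the system runs
    value = query_safety_value(table, xs, thetas, 1.0, theta_now)
    print(theta_now, value, "safe" if value > 0 else "unsafe")
```

Note that `np.interp` clamps to the edge values outside the grid, so a real deployment would need to flag parameter values that fall outside the range covered offline.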
