"Time": models, code, and papers

Can Ensemble of Classifiers Provide Better Recognition Results in Packaging Activity?

Nov 05, 2022

Skeleton-based Motion Capture (MoCap) systems have long been used in the game and film industries to mimic complex human actions. MoCap data has also proved effective in human activity recognition tasks. However, recognition is quite challenging on smaller datasets, and the lack of such data for industrial activities adds to the difficulty. In this work, we propose an ensemble-based machine learning methodology that is targeted to work better on MoCap datasets. The experiments were performed on the MoCap data given in the Bento Packaging Activity Recognition Challenge 2021. Bento is a Japanese word for a lunch box. After first processing the raw MoCap data, we achieved an accuracy of 98% on 10-fold Cross-Validation and 82% on Leave-One-Out-Cross-Validation using the proposed ensemble model.
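As a rough illustration of the two ingredients named in this abstract, the sketch below shows hard (majority) voting over several classifiers and a leave-one-out split generator. It is not the authors' model: the toy threshold classifiers, the function names, and the choice of hard voting are all assumptions made for illustration.

```python
from collections import Counter


def majority_vote(labels):
    """Return the most common label; ties go to the label seen first."""
    return Counter(labels).most_common(1)[0][0]


def ensemble_predict(classifiers, sample):
    """Hard-voting ensemble: each classifier is a callable sample -> label."""
    return majority_vote([clf(sample) for clf in classifiers])


def leave_one_out_splits(n_samples):
    """Yield (train_indices, held_out_index) for leave-one-out validation."""
    for held_out in range(n_samples):
        train = [i for i in range(n_samples) if i != held_out]
        yield train, held_out


# Hypothetical toy classifiers on a single scalar feature.
toy_classifiers = [
    lambda x: "pack" if x > 0.3 else "idle",
    lambda x: "pack" if x > 0.5 else "idle",
    lambda x: "pack" if x > 0.7 else "idle",
]

prediction = ensemble_predict(toy_classifiers, 0.6)  # two of three vote "pack"
splits = list(leave_one_out_splits(3))
```

In a real evaluation each split would retrain the ensemble on the training indices and score it on the held-out item; the challenge data may also call for holding out a whole subject rather than a single sample.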

Modeling Multi-Dimensional Datasets via a Fast Scale-Free Network Model

Nov 05, 2022

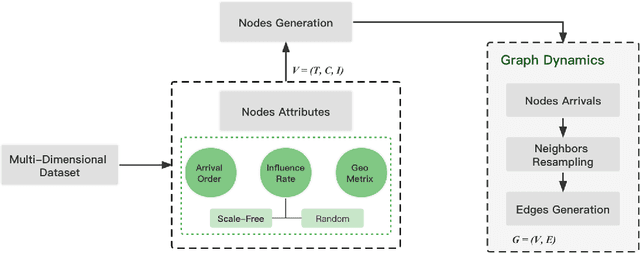

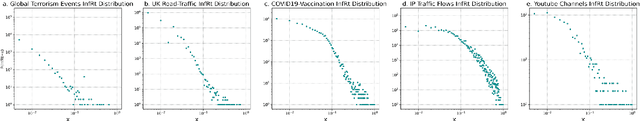

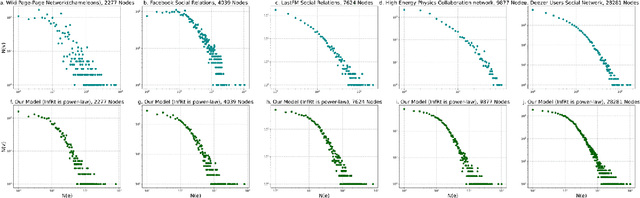

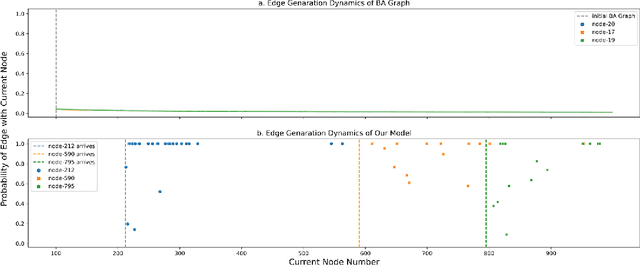

Compared with network datasets, multi-dimensional data are much more common nowadays. If we can model multi-dimensional datasets into networks with accurate network properties, while, in the meantime, preserving the original dataset features, we can not only explore the dataset dynamic but also acquire abundant synthetic network data. This paper proposed a fast scale-free network model for large-scale multi-dimensional data not limited to the network domain. The proposed network model is dynamic and able to generate scale-free graphs within linear time regardless of the scale or field of the modeled dataset. We further argued that in a dynamic network where edge-generation probability represents influence, as the network evolves, that influence also decays. We demonstrated how this influence decay phenomenon is reflected in our model and provided a case study using the Global Terrorism Database.
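For readers unfamiliar with scale-free graph generation, here is a minimal sketch of classic preferential attachment (the Barabási-Albert scheme). It is a stand-in for illustration, not the model proposed in the paper, and it does not implement the influence-decay behaviour described above; the function name and parameters are assumptions.

```python
import random


def preferential_attachment_graph(n_nodes, edges_per_node, seed=0):
    """Grow a graph in which new nodes prefer to link to high-degree nodes."""
    rng = random.Random(seed)
    m = edges_per_node
    # Start from a small fully connected core of m + 1 nodes.
    edges = [(i, j) for i in range(m + 1) for j in range(i)]
    # Every node appears here once per incident edge, so a uniform draw
    # from this list picks a node with probability proportional to degree.
    endpoints = [v for edge in edges for v in edge]
    for new in range(m + 1, n_nodes):
        targets = set()
        while len(targets) < m:
            targets.add(rng.choice(endpoints))
        for target in sorted(targets):
            edges.append((new, target))
            endpoints.extend((new, target))
    return edges


edges = preferential_attachment_graph(n_nodes=100, edges_per_node=2)
```

Because each new node does a small, bounded amount of sampling, the running time grows roughly linearly with the number of edges generated.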

Conversational Pattern Mining using Motif Detection

Nov 13, 2022

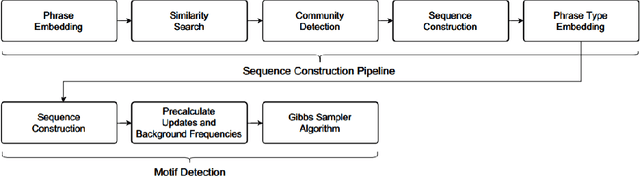

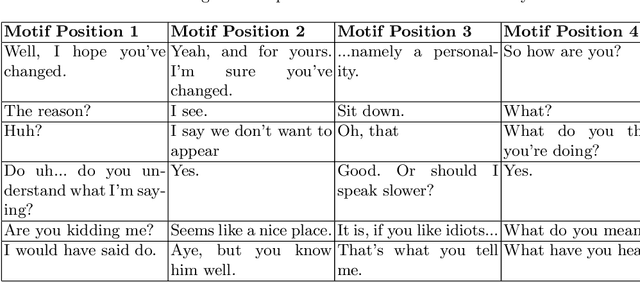

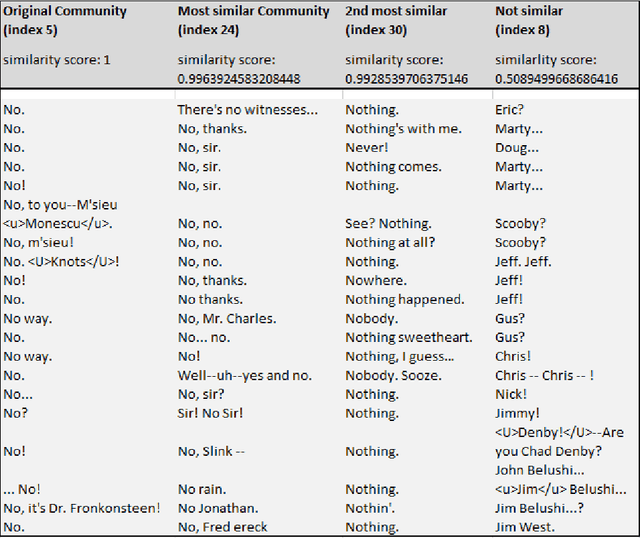

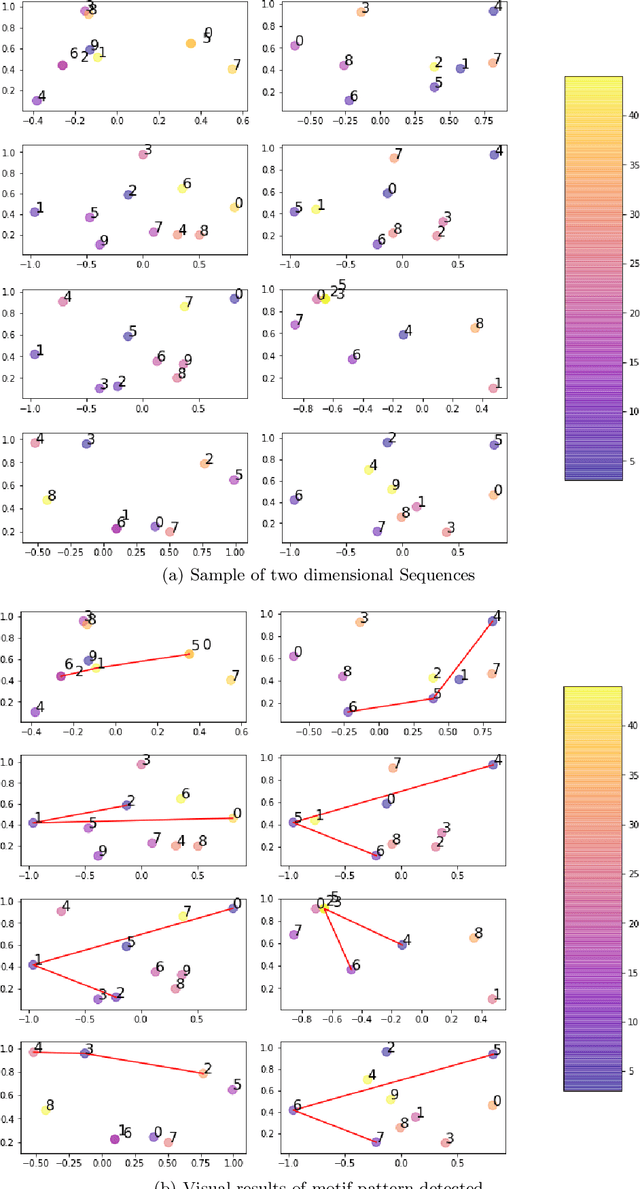

The subject of conversational mining has recently attracted great interest due to the explosion of social and other online media. Supplementing this explosion of text is the advancement in pre-trained language models, which have helped us to leverage these sources of information. Conversations are an interesting domain to analyse in terms of complexity and value. Complexity arises because a conversation can be asynchronous and can involve multiple parties. It is also computationally intensive to process. We use unsupervised methods in our work to develop a conversational pattern mining technique that does not require time-consuming, knowledge-demanding and resource-intensive labelling exercises. The task of identifying repeating patterns in sequences is well researched in the Bioinformatics field. In our work, we adapt this to the field of Natural Language Processing and make several extensions to a motif detection algorithm. To demonstrate the application of the algorithm on a dynamic, real-world data set, we extract motifs from an open-source film script data source. We run an exploratory investigation into the types of motifs we are able to mine.
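The idea of mining repeated patterns from sequences can be sketched in a very simplified form as counting contiguous subsequences that recur across conversations. This is not the motif detection algorithm used in the paper; the utterance labels ("Q" for question, "A" for answer, and so on), the function name, and the support threshold are hypothetical.

```python
from collections import Counter


def frequent_motifs(sequences, length, min_support=2):
    """Return contiguous subsequences of `length` found in at least
    `min_support` different sequences, mapped to their support count."""
    support = Counter()
    for seq in sequences:
        # Use a set so a motif is counted at most once per sequence.
        seen = {tuple(seq[i:i + length]) for i in range(len(seq) - length + 1)}
        support.update(seen)
    return {motif: count for motif, count in support.items() if count >= min_support}


# Hypothetical conversations encoded as sequences of utterance labels.
conversations = [
    ["Q", "A", "Q", "A", "T"],
    ["G", "Q", "A", "T"],
    ["G", "T"],
]

motifs = frequent_motifs(conversations, length=2)
```

Here the pairs question-then-answer and answer-then-thanks each occur in two of the three conversations, so they are the only motifs returned.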

Layerwise Sparsifying Training and Sequential Learning Strategy for Neural Architecture Adaptation

Nov 13, 2022

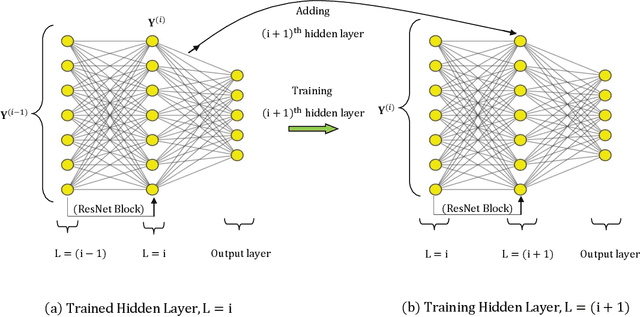

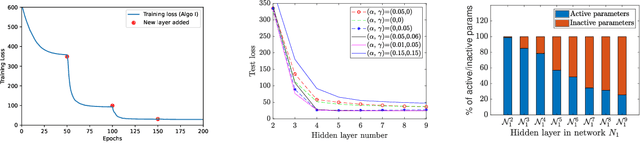

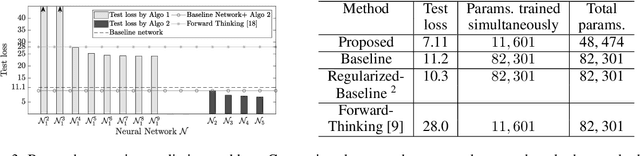

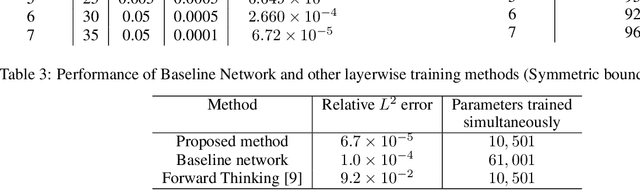

This work presents a two-stage framework for progressively developing neural architectures that adapt and generalize well on a given training data set. In the first stage, a manifold-regularized layerwise sparsifying training approach is adopted, where a new layer is added each time and trained independently by freezing the parameters in the previous layers. To constrain the functions that should be learned by each layer, we employ a sparsity regularization term, a manifold regularization term and a physics-informed term. We derive the necessary conditions for trainability of a newly added layer and analyze the role of manifold regularization. In the second stage of the algorithm, a sequential learning process is adopted, where a sequence of small networks is employed to extract information from the residual produced in the first stage, thereby making more robust and accurate predictions. Numerical investigations with fully connected networks on prototype regression and classification problems demonstrate that the proposed approach can outperform ad hoc baseline networks. Further, application to physics-informed neural network problems suggests that the method could be employed for creating interpretable hidden layers in a deep network while outperforming equivalent baseline networks.

Enhancing Spatiotemporal Prediction Model using Modular Design and Beyond

Oct 04, 2022

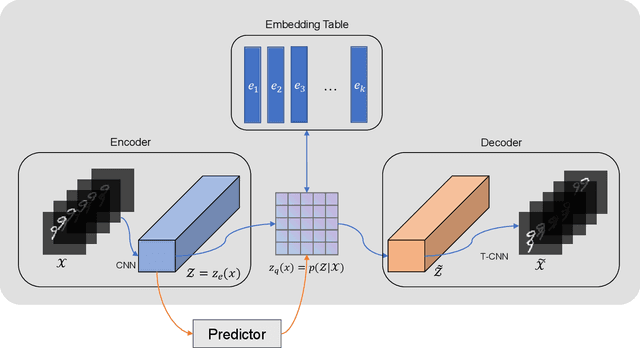

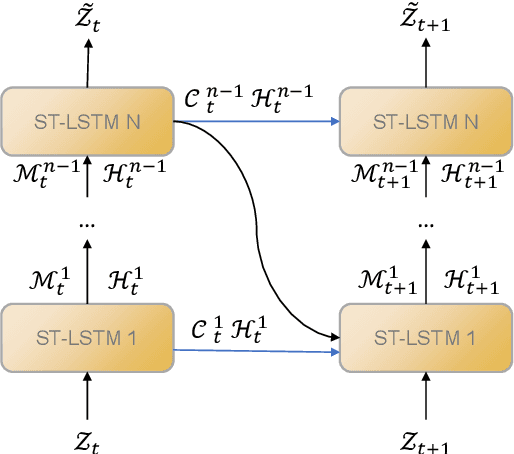

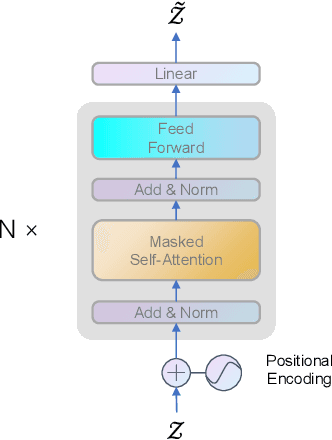

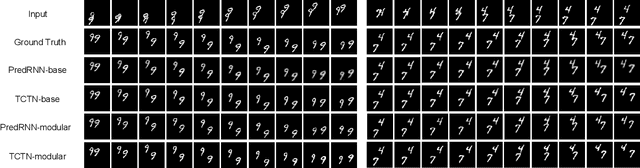

Predictive learning uses a known state to generate a future state over a period of time. Predicting a spatiotemporal sequence is challenging because the sequence varies in both time and space. The mainstream method is to model spatial and temporal structures at the same time using an RNN-based or transformer-based architecture, and then to generate future data auto-regressively from what has been learned. Learning spatial and temporal features simultaneously introduces many parameters, which makes the model difficult to converge. In this paper, a modular design is proposed that decomposes the spatiotemporal sequence model into two modules: a spatial encoder-decoder and a predictor. These two modules extract spatial features and predict future data, respectively. The spatial encoder-decoder maps the data into a latent embedding space and generates data from the latent space, while the predictor forecasts future embeddings from past ones. By applying the design to current research and performing experiments on the KTH-Action and MovingMNIST datasets, we both improve computational performance and obtain state-of-the-art results.

Synthetic Data Supervised Salient Object Detection

Oct 25, 2022

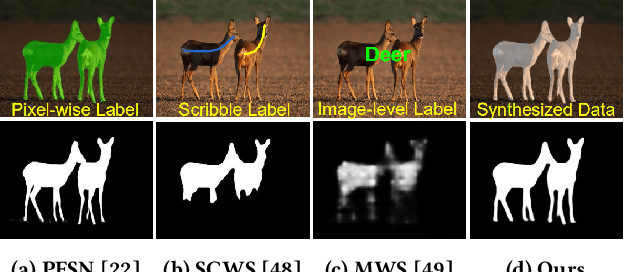

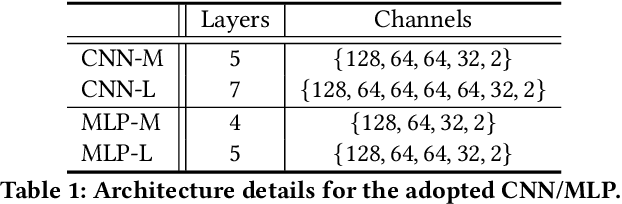

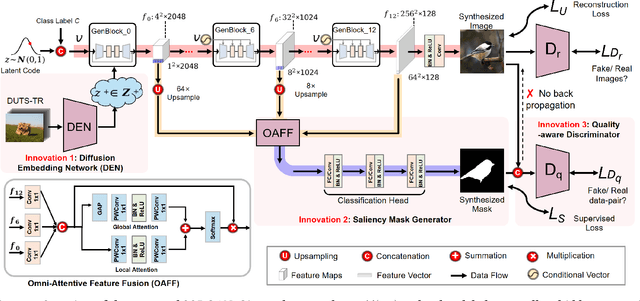

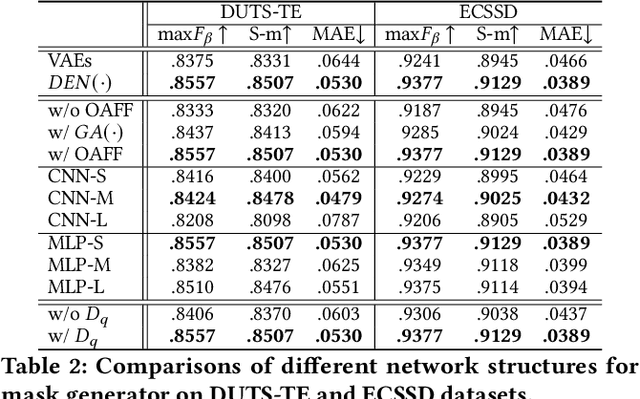

Although deep salient object detection (SOD) has achieved remarkable progress, deep SOD models are extremely data-hungry, requiring large-scale pixel-wise annotations to deliver such promising results. In this paper, we propose a novel yet effective method for SOD, coined SODGAN, which can generate infinite high-quality image-mask pairs from only a few labeled data; these synthesized pairs can replace the human-labeled DUTS-TR to train any off-the-shelf SOD model. Its contribution is three-fold. 1) Our proposed diffusion embedding network can address the manifold mismatch and is tractable for latent code generation, better matching the ImageNet latent space. 2) For the first time, our proposed few-shot saliency mask generator can synthesize infinite accurate image-synchronized saliency masks with a few labeled data. 3) Our proposed quality-aware discriminator can select high-quality synthesized image-mask pairs from a noisy synthetic data pool, improving the quality of the synthetic data. For the first time, our SODGAN tackles SOD with synthetic data directly generated from the generative model, which opens up a new research paradigm for SOD. Extensive experimental results show that the saliency model trained on synthetic data can achieve $98.4\%$ of the F-measure of the saliency model trained on DUTS-TR. Moreover, our approach achieves a new SOTA performance among semi/weakly-supervised methods, and even outperforms several fully-supervised SOTA methods. Code is available at https://github.com/wuzhenyubuaa/SODGAN

* 9 pages, 8 figures

One-Shot Messaging at Any Load Through Random Sub-Channeling in OFDM

Sep 22, 2022

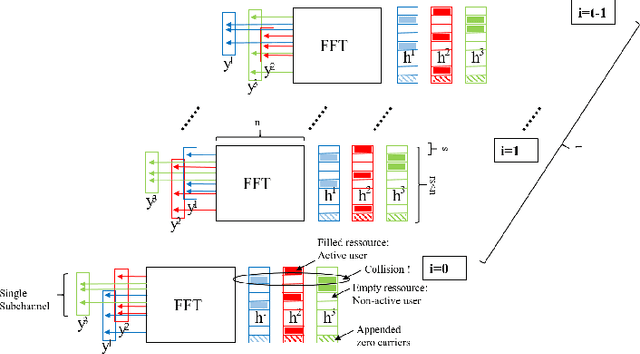

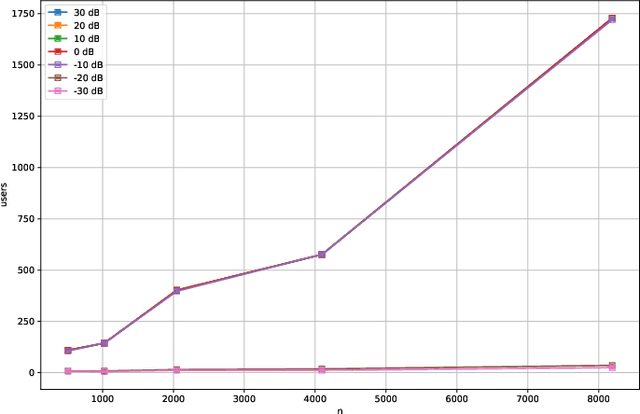

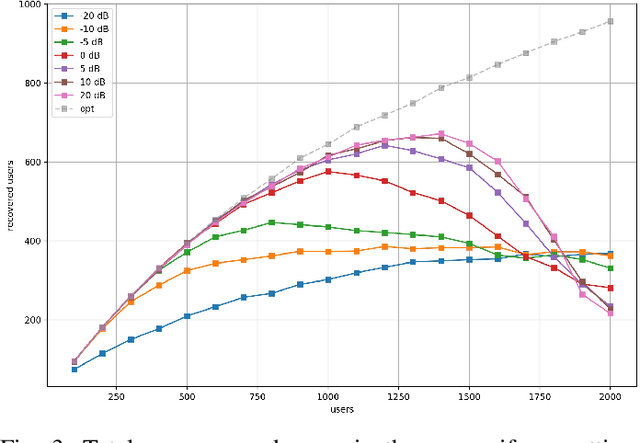

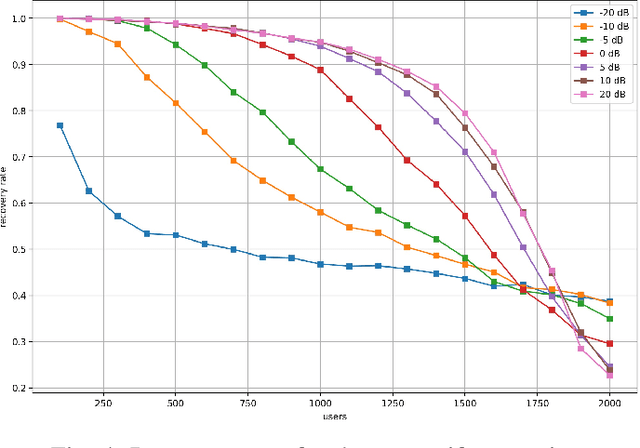

Compressive sensing has substantially boosted massive random access protocols over the last decade. In this paper we apply an orthogonal FFT basis, as used in OFDM, but subdivide its image into so-called sub-channels and let each sub-channel take only a fraction of the load. The subdivision is applied randomly and consecutively over a suitable number of time-slots. Within the time-slots, users do not change their sub-channel assignment and send their data in parallel. Activity detection is carried out jointly across time-slots in each of the sub-channels. For this system design we derive three rather fundamental results: i) First, we prove that the subdivision can be driven to the extent that the activity in each sub-channel is sparse by design, an effect that we call the sparsity capture effect. ii) Second, we prove that the system can effectively sustain any overload situation relative to the FFT dimension, i.e. detection failure of active and non-active users can be kept below any desired threshold regardless of the number of users. The only price to pay is delay, i.e. the number of time-slots over which cross-detection is performed. We achieve this by jointly exploiting the effect of measure concentration in time and frequency together with careful system parameter scaling. iii) Third, we prove that, in parallel to activity detection, active users can carry one symbol per pilot resource and time-slot, so the system supports so-called one-shot messaging. The key to proving these results is a set of new concentration results for sequences of randomly sub-sampled FFTs detecting the sparse vectors "en bloc". Finally, we show by simulations that the system is scalable, resulting in a roughly 30-fold capacity increase compared to standard OFDM.

What is the best RNN-cell structure for forecasting each time series behavior?

Mar 15, 2022

Time series forecasting is of paramount importance in many fields. The most widely used machine learning models for time series forecasting tasks are Recurrent Neural Networks (RNNs). Typically, those models are built using one of the three most popular cells: ELMAN, Long-Short Term Memory (LSTM), or Gated Recurrent Unit (GRU). Each cell has a different structure and implies a different computational cost. However, it is not clear why and when to use each RNN-cell structure. In fact, there is no comprehensive characterization of all the possible time series behaviors and no guidance on which RNN cell structure is the most suitable for each behavior. The objective of this study is two-fold: it presents a comprehensive taxonomy of all time series behaviors (deterministic, random-walk, nonlinear, long-memory, and chaotic), and provides insights into the best RNN cell structure for each time series behavior. We conducted two experiments: (1) The first experiment evaluates and analyzes the role of each component in the LSTM-Vanilla cell by creating 11 variants based on one alteration in its basic architecture (removing, adding, or substituting one cell component). (2) The second experiment evaluates and analyzes the performance of 20 possible RNN-cell structures. Our results showed that the MGU-SLIM3 cell is the most recommended for deterministic and nonlinear behaviors, the MGU-SLIM2 cell is the most suitable for random-walk behavior, the FB1 cell is advocated for long-memory behavior, and the LSTM-SLIM1 cell for chaotic behavior.

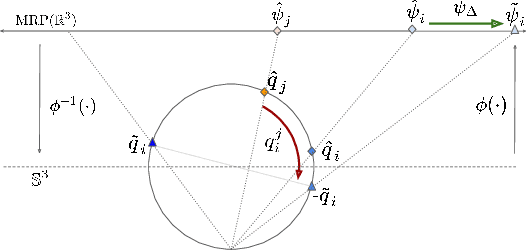

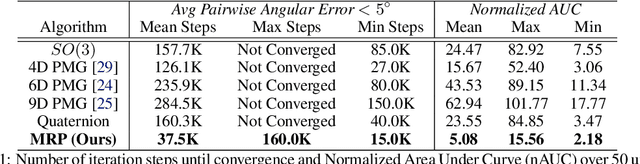

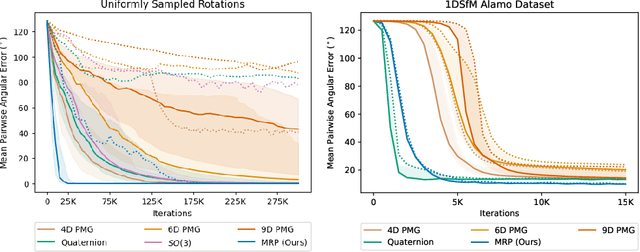

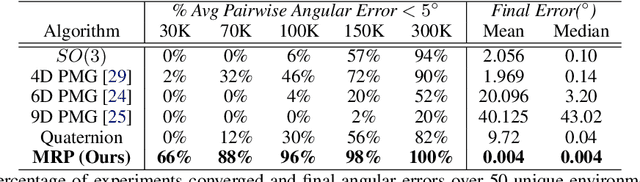

Deep Projective Rotation Estimation through Relative Supervision

Nov 21, 2022

Orientation estimation is core to a variety of vision and robotics tasks such as camera and object pose estimation. Deep learning has offered a way to develop image-based orientation estimators; however, such estimators often require training on a large labeled dataset, which can be time-intensive to collect. In this work, we explore whether self-supervised learning from unlabeled data can be used to alleviate this issue. Specifically, we assume access to estimates of the relative orientation between neighboring poses, such as can be obtained via a local alignment method. While self-supervised learning has been used successfully for translational object keypoints, in this work, we show that naively applying relative supervision to the rotational group $SO(3)$ will often fail to converge due to the non-convexity of the rotational space. To tackle this challenge, we propose a new algorithm for self-supervised orientation estimation which utilizes Modified Rodrigues Parameters to stereographically project the closed manifold of $SO(3)$ to the open manifold of $\mathbb{R}^{3}$, allowing the optimization to be done in an open Euclidean space. We empirically validate the benefits of the proposed algorithm for the rotational averaging problem in two settings: (1) direct optimization on rotation parameters, and (2) optimization of the parameters of a convolutional neural network that predicts object orientations from images. In both settings, we demonstrate that our proposed algorithm is able to converge to a consistent relative orientation frame much faster than algorithms that purely operate in the $SO(3)$ space. Additional information can be found at https://sites.google.com/view/deep-projective-rotation/home .
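The stereographic projection mentioned in this abstract can be made concrete with the standard conversion between unit quaternions and Modified Rodrigues Parameters (MRP). The sketch below shows only that textbook mapping, not the authors' training algorithm; the function names and the scalar-first `(w, x, y, z)` quaternion layout are assumptions.

```python
import math


def quat_to_mrp(q):
    """Map a unit quaternion (w, x, y, z) to MRP: sigma = v / (1 + w)."""
    w, x, y, z = q
    if abs(1.0 + w) < 1e-12:
        raise ValueError("MRP is singular for w = -1 (a 360-degree rotation)")
    scale = 1.0 / (1.0 + w)
    return (x * scale, y * scale, z * scale)


def mrp_to_quat(sigma):
    """Inverse mapping from MRP back to a unit quaternion (w, x, y, z)."""
    norm_sq = sum(c * c for c in sigma)
    w = (1.0 - norm_sq) / (1.0 + norm_sq)
    k = 2.0 / (1.0 + norm_sq)
    return (w, k * sigma[0], k * sigma[1], k * sigma[2])


# A 90-degree rotation about the z axis.
angle = math.pi / 2
q = (math.cos(angle / 2), 0.0, 0.0, math.sin(angle / 2))
sigma = quat_to_mrp(q)      # magnitude equals tan(angle / 4)
q_back = mrp_to_quat(sigma)
```

For a rotation by angle θ about a unit axis, the MRP vector points along that axis with length tan(θ/4), so every rotation short of a full turn maps to a finite point in $\mathbb{R}^{3}$.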

Mixed Reality Interface for Digital Twin of Plant Factory

Oct 29, 2022

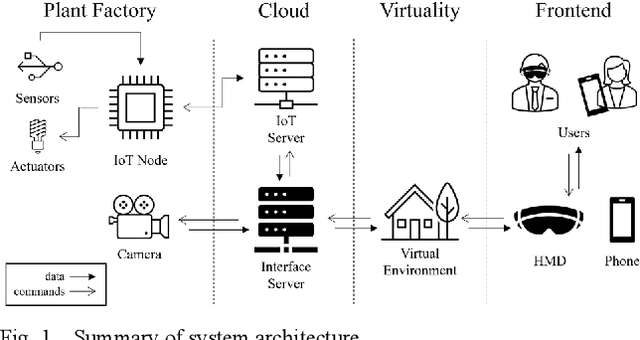

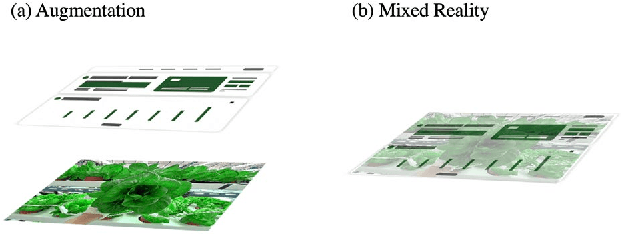

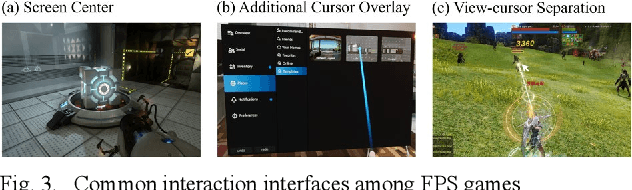

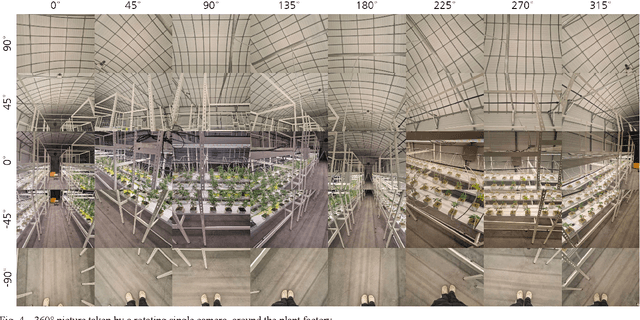

An easier and more intuitive interface architecture is necessary for digital twins of plant factories. I suggest an immersive and interactive mixed reality interface for digital twin models of smart farming, intended for remote work rather than simulation of components. The environment is constructed from a UI display and a streaming background scene, which is a real-time scene captured by a camera device located in the plant factory and processed with deformable neural radiance fields. Users can monitor and control the remote plant factory facilities through an HMD or a 2D-display-based mixed reality environment. This paper also introduces the detailed concept and describes the system architecture needed to implement the suggested mixed reality interface.
