"Time": models, code, and papers

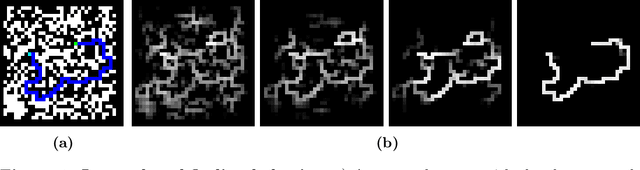

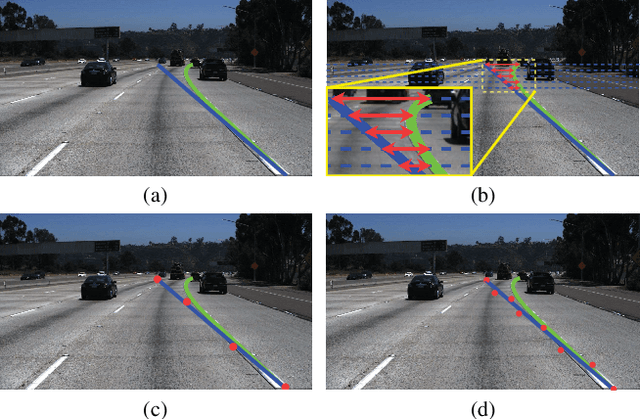

Real-Time Robust Video Object Detection System Against Physical-World Adversarial Attacks

Aug 19, 2022

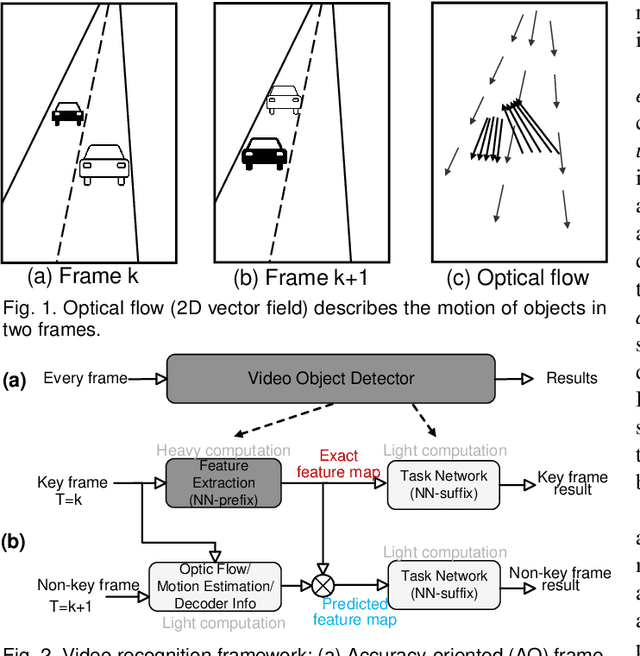

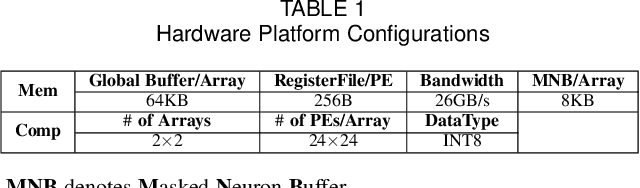

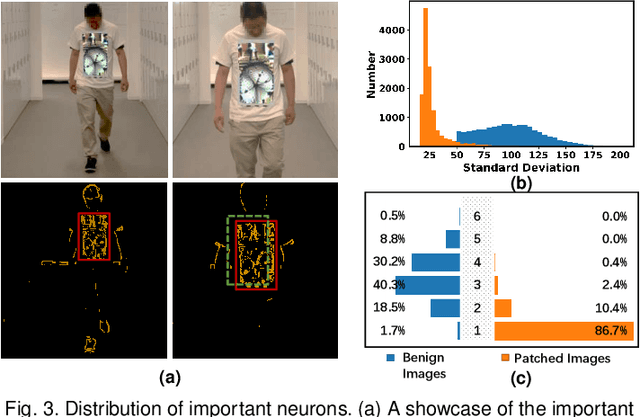

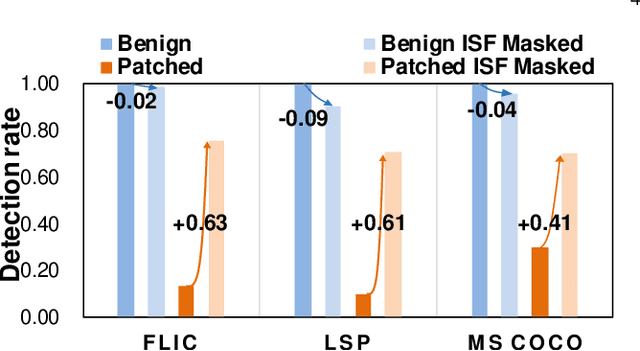

DNN-based video object detection (VOD) powers the autonomous driving and video surveillance industries with rising importance and promising opportunities. However, adversarial patch attacks raise serious concern in live vision tasks because of their practicality, feasibility, and powerful attack effectiveness. This work proposes Themis, a software/hardware system to defend against adversarial patches for real-time robust video object detection. We observe that adversarial patches exhibit extremely localized superficial feature importance in a small region with non-robust predictions, and thus propose the adversarial region detection algorithm for adversarial effect elimination. Themis also proposes a systematic design to efficiently support the algorithm by eliminating redundant computations and memory traffic. Experimental results show that the proposed methodology can effectively recover the system from the adversarial attack with negligible hardware overhead.

Subgraph Centralization: A Necessary Step for Graph Anomaly Detection

Jan 17, 2023

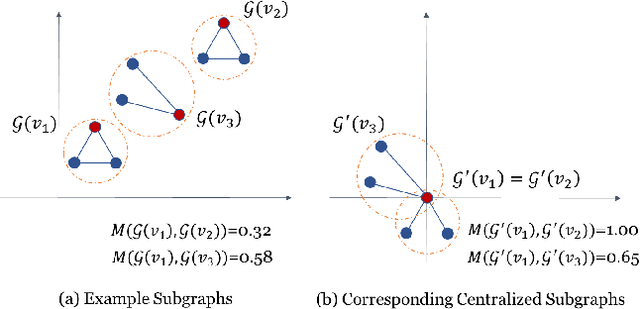

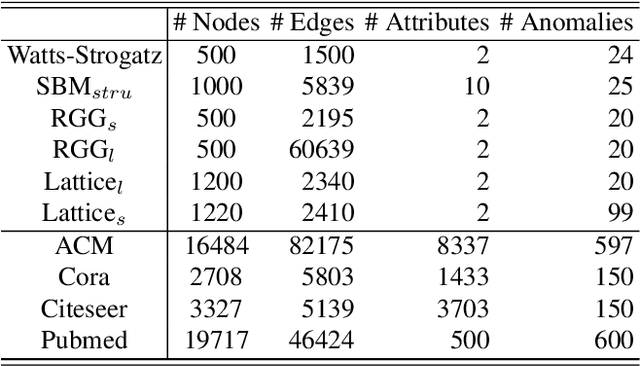

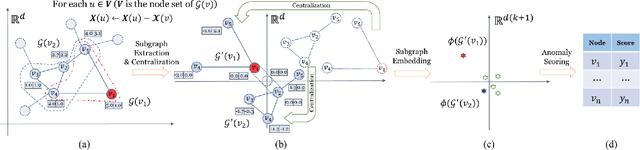

Graph anomaly detection has attracted a lot of interest recently. Despite their successes, existing detectors have at least two of the following three weaknesses: (a) high computational cost, which limits them to small-scale networks only; (b) a treatment of subgraphs that produces suboptimal detection accuracy; and (c) an inability to explain why a node is anomalous once it is identified. We identify that the root cause of these weaknesses is a lack of a proper treatment for subgraphs. A treatment called Subgraph Centralization for graph anomaly detection is proposed to address all the above weaknesses. Its importance is shown in two ways. First, we present a simple yet effective new framework called Graph-Centric Anomaly Detection (GCAD). The key advantages of GCAD over existing detectors, including deep-learning detectors, are: (i) better anomaly detection accuracy; (ii) linear time complexity with respect to the number of nodes; and (iii) it is a generic framework that admits an existing point anomaly detector to be used to detect node anomalies in a network. Second, we show that Subgraph Centralization can be incorporated into two existing detectors to overcome the above-mentioned weaknesses.

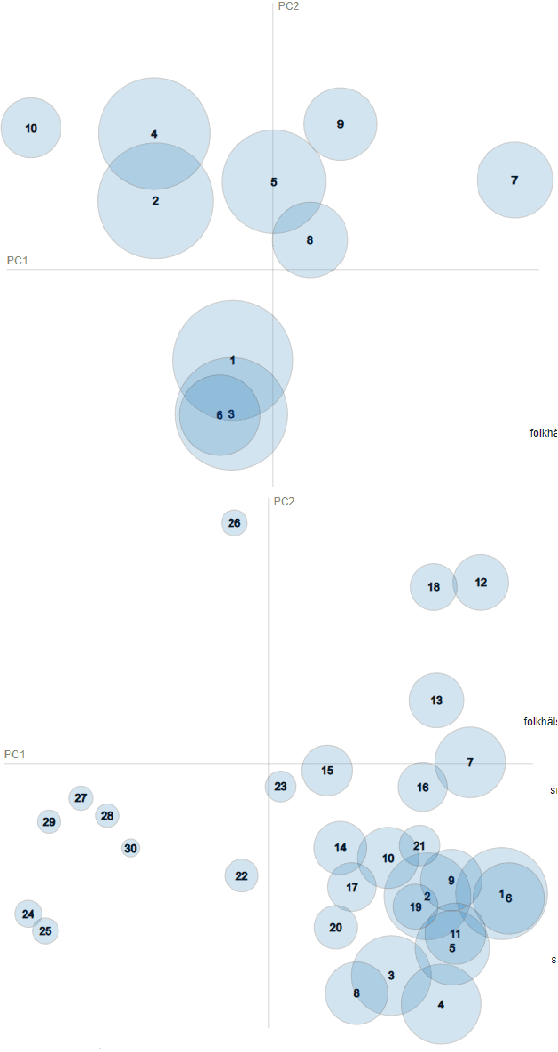

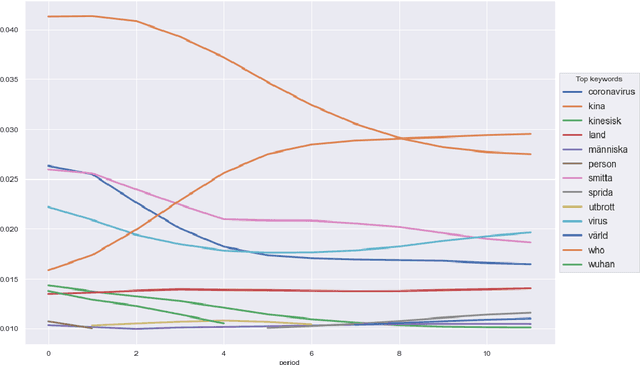

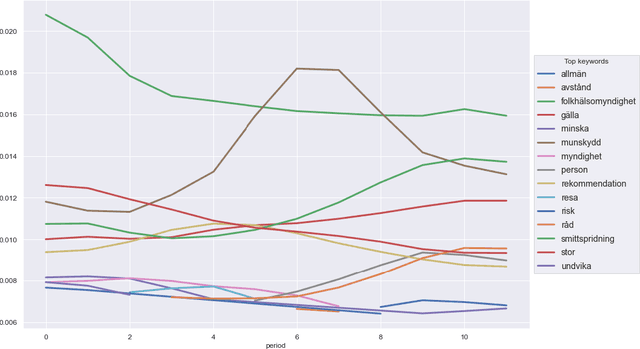

Topic Modelling of Swedish Newspaper Articles about Coronavirus: a Case Study using Latent Dirichlet Allocation Method

Jan 17, 2023

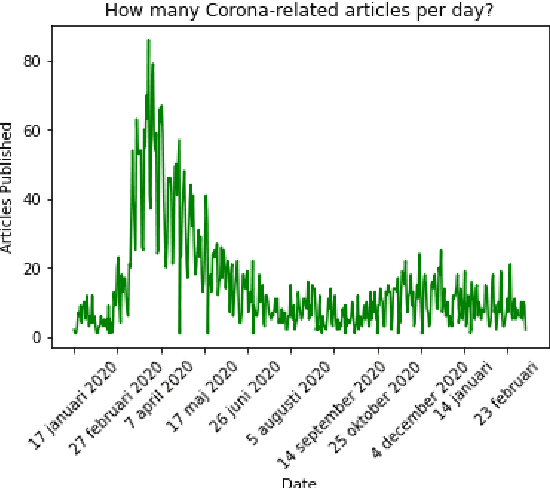

Topic Modelling (TM) is a research branch of natural language understanding (NLU) and natural language processing (NLP) that facilitates insightful analysis of large documents and datasets, such as summarising the main topics and tracking topic changes. This kind of discovery is becoming more popular in real-life applications due to its impact on big data analytics. In this study, from the social-media and healthcare domain, we apply popular Latent Dirichlet Allocation (LDA) methods to model the topic changes in Swedish newspaper articles about Coronavirus. We describe the corpus we created, which includes 6515 articles, the methods applied, and statistics on topic changes over a period of approximately one year and two months, from 17th January 2020 to 13th March 2021. We hope this work can be an asset for grounding applications of topic modelling and can inspire similar case studies in an era of pandemics, to support socio-economic impact research as well as clinical and healthcare analytics. Our data and source code are openly available at https://github.com/poethan/Swed_Covid_TM

Keywords: Latent Dirichlet Allocation (LDA); Topic Modelling; Coronavirus; Pandemics; Natural Language Understanding

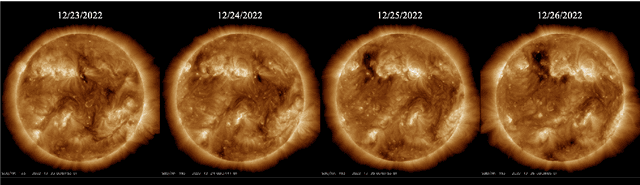

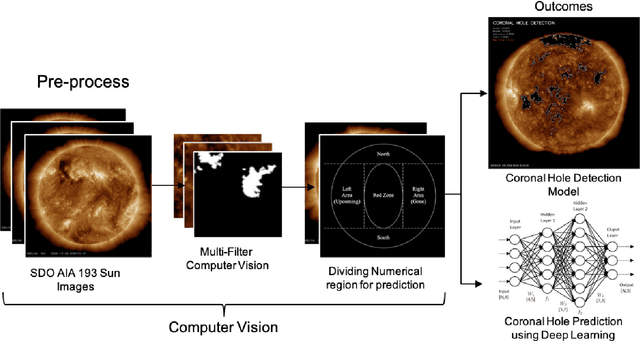

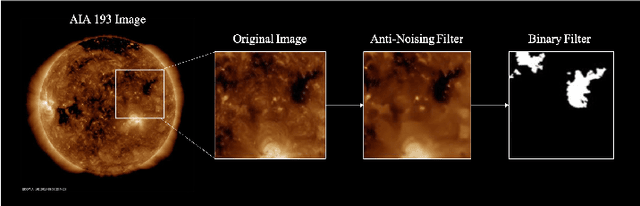

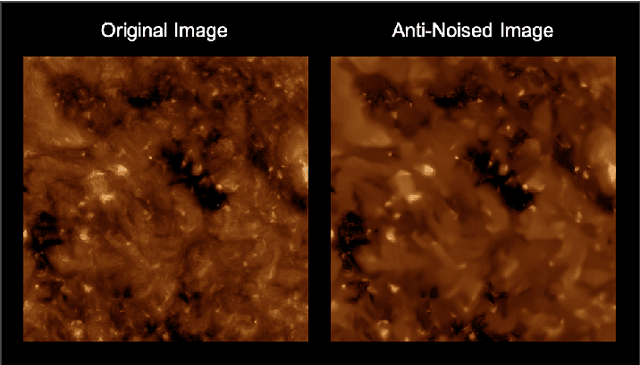

Coronal Hole Analysis and Prediction using Computer Vision and LSTM Neural Network

Jan 17, 2023

As humanity has begun to explore space, the significance of space weather has become apparent. It has been established that coronal holes, a type of space weather phenomenon, can impact the operation of aircraft and satellites. The coronal hole is an area on the sun characterized by open magnetic field lines and relatively low temperatures, which result in the emission of the solar wind at higher than average rates. In this study, to prepare for the impact of coronal holes on the Earth, we use computer vision to detect the coronal hole region and calculate its size based on images from the Solar Dynamics Observatory (SDO). We then implement deep learning techniques, specifically the Long Short-Term Memory (LSTM) method, to analyze trends in the coronal hole area data and predict its size for different sun regions over 7 days. By analyzing time series data on the coronal hole area, this study aims to identify patterns and trends in coronal hole behavior and understand how they may impact space weather events. This research represents an important step towards improving our ability to predict and prepare for space weather events that can affect Earth and technological systems.

Pathfinding Neural Cellular Automata

Jan 17, 2023

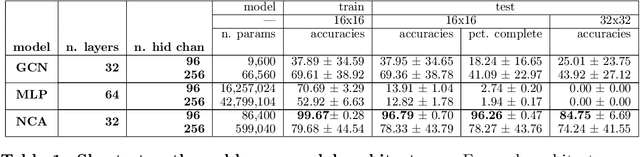

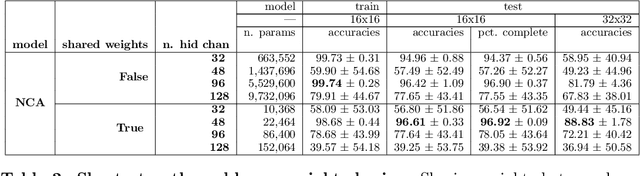

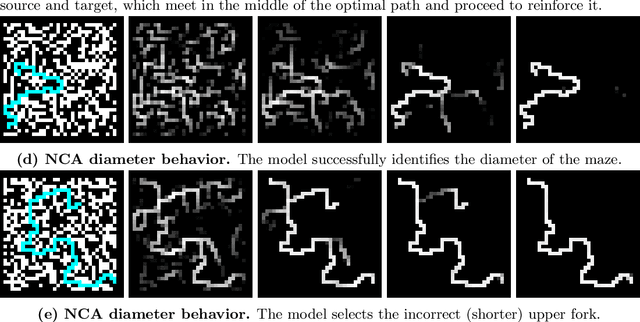

Pathfinding makes up an important sub-component of a broad range of complex tasks in AI, such as robot path planning, transport routing, and game playing. While classical algorithms can efficiently compute shortest paths, neural networks could be better suited to adapting these sub-routines to more complex and intractable tasks. As a step toward developing such networks, we hand-code and learn models for Breadth-First Search (BFS), i.e. shortest path finding, using the unified architectural framework of Neural Cellular Automata, which are iterative neural networks with equal-size inputs and outputs. Similarly, we present a neural implementation of Depth-First Search (DFS), and outline how it can be combined with neural BFS to produce an NCA for computing the diameter of a graph. We experiment with architectural modifications inspired by these hand-coded NCAs, training networks from scratch to solve the diameter problem on grid mazes while exhibiting strong generalization ability. Finally, we introduce a scheme in which data points are mutated adversarially during training. We find that adversarially evolving mazes leads to increased generalization on out-of-distribution examples, while at the same time generating datasets with significantly more complex solutions for reasoning tasks.
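To make the BFS and diameter tasks concrete, here is a minimal classical sketch in Python. It is not the paper's neural model: the function names `flood_bfs` and `grid_diameter` and the `'#'`-for-wall maze encoding are assumptions chosen for illustration. The flood fill is written as a sequence of synchronous neighbour updates, which is the kind of local, iterative rule a cellular automaton would apply.

```python
def flood_bfs(grid, source):
    """Shortest-path distances on a grid maze via repeated local updates.

    grid:   list of equal-length strings; '#' marks a wall (assumed encoding).
    source: (row, col) of the start cell, which must be a free cell.
    Returns a dict mapping every reachable free cell to its BFS distance.
    """
    rows, cols = len(grid), len(grid[0])
    dist = {source: 0}
    frontier = {source}
    step = 0
    while frontier:
        step += 1
        new_frontier = set()
        # One synchronous update: each frontier cell activates its free,
        # not-yet-visited 4-neighbours, like one cellular-automaton tick.
        for r, c in frontier:
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                if (0 <= nr < rows and 0 <= nc < cols
                        and grid[nr][nc] != '#'
                        and (nr, nc) not in dist):
                    dist[(nr, nc)] = step
                    new_frontier.add((nr, nc))
        frontier = new_frontier
    return dist


def grid_diameter(grid):
    """Longest shortest path between mutually reachable free cells.

    Brute force: runs one flood fill from every free cell.
    """
    free = [(r, c) for r, row in enumerate(grid)
            for c, ch in enumerate(row) if ch != '#']
    return max(max(flood_bfs(grid, s).values()) for s in free)


maze = [
    "..#",
    ".##",
    "...",
]
distances = flood_bfs(maze, (0, 0))
diameter = grid_diameter(maze)
```

The brute-force diameter runs one flood fill per free cell, which is fine for small mazes but is only meant as a reference against which a learned model's output could be checked.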

BSNet: Lane Detection via Draw B-spline Curves Nearby

Jan 17, 2023

Curve-based methods are among the classic lane detection methods. They learn the holistic representation of lane lines, which is intuitive and concise. However, their performance lags behind the recent state-of-the-art methods due to the limitation of their lane representation and optimization. In this paper, we revisit the curve-based lane detection methods from the perspectives of the lane representations' globality and locality. The globality of lane representation is the ability to complete invisible parts of lanes with visible parts. The locality of lane representation is the ability to modify lanes locally, which can simplify parameter optimization. Specifically, we first propose to exploit the b-spline curve to fit lane lines since it meets the locality and globality. Second, we design a simple yet efficient network, BSNet, to ensure the acquisition of global and local features. Third, we propose a new curve distance to make the lane detection optimization objective more reasonable and alleviate ill-conditioned problems. The proposed methods achieve state-of-the-art performance on the TuSimple, CULane, and LLAMAS datasets, dramatically improving the accuracy of curve-based methods in the lane detection task while running far beyond real-time (197 FPS).
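The locality property mentioned above can be illustrated with a generic B-spline, independent of BSNet itself. The sketch below assumes a uniform cubic B-spline with integer knots and evaluates it with the standard Cox-de Boor recursion; the helper names `bspline_basis` and `bspline_point` and the sample control points are hypothetical, and the paper's actual lane parameterization may differ.

```python
def bspline_basis(i, k, t, knots):
    """Cox-de Boor recursion: i-th B-spline basis function of degree k at t."""
    if k == 0:
        return 1.0 if knots[i] <= t < knots[i + 1] else 0.0
    left = right = 0.0
    denom_left = knots[i + k] - knots[i]
    if denom_left > 0:
        left = (t - knots[i]) / denom_left * bspline_basis(i, k - 1, t, knots)
    denom_right = knots[i + k + 1] - knots[i + 1]
    if denom_right > 0:
        right = ((knots[i + k + 1] - t) / denom_right
                 * bspline_basis(i + 1, k - 1, t, knots))
    return left + right


def bspline_point(t, control, degree, knots):
    """Curve point at parameter t as a basis-weighted sum of control points."""
    weights = [bspline_basis(i, degree, t, knots) for i in range(len(control))]
    x = sum(w * px for w, (px, _) in zip(weights, control))
    y = sum(w * py for w, (_, py) in zip(weights, control))
    return (x, y)


degree = 3
control = [(float(i), 0.0) for i in range(8)]       # illustrative control points
knots = list(range(len(control) + degree + 1))      # uniform knots 0..11
# With these knots the curve is defined for degree <= t < len(control).

moved = control[:-1] + [(7.0, 5.0)]                 # perturb only the last point

near_start_before = bspline_point(3.5, control, degree, knots)
near_start_after = bspline_point(3.5, moved, degree, knots)
near_end_before = bspline_point(7.5, control, degree, knots)
near_end_after = bspline_point(7.5, moved, degree, knots)
```

Because each cubic basis function is nonzero on only four knot spans, moving the last control point leaves the curve near `t = 3.5` unchanged while shifting it near `t = 7.5`. This is the sense in which a B-spline can be modified locally.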

TempSAL -- Uncovering Temporal Information for Deep Saliency Prediction

Jan 05, 2023

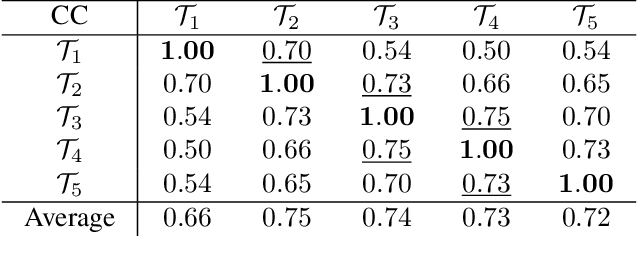

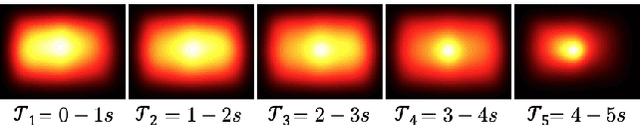

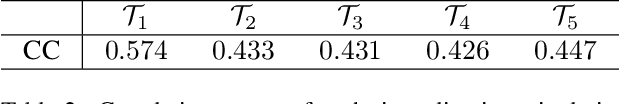

Deep saliency prediction algorithms complement object recognition features; they typically rely on additional information, such as scene context, semantic relationships, gaze direction, and object dissimilarity. However, none of these models consider the temporal nature of gaze shifts during image observation. We introduce a novel saliency prediction model that learns to output saliency maps in sequential time intervals by exploiting human temporal attention patterns. Our approach locally modulates the saliency predictions by combining the learned temporal maps. Our experiments show that our method outperforms the state-of-the-art models, including a multi-duration saliency model, on the SALICON benchmark. Our code will be publicly available on GitHub.

Same Words, Different Meanings: Semantic Polarization in Broadcast Media Language Forecasts Polarization on Social Media Discourse

Jan 25, 2023

With the growth of online news over the past decade, empirical studies on political discourse and news consumption have focused on the phenomenon of filter bubbles and echo chambers. Yet recently, scholars have revealed limited evidence around the impact of such phenomena, leading some to argue that partisan segregation across news audiences cannot be fully explained by online news consumption alone and that the role of traditional legacy media may be as salient in polarizing public discourse around current events. In this work, we expand the scope of analysis to include both online and more traditional media by investigating the relationship between broadcast news media language and social media discourse. By analyzing a decade's worth of closed captions (2 million speaker turns) from CNN and Fox News along with topically corresponding discourse from Twitter, we provide a novel framework for measuring semantic polarization between America's two major broadcast networks to demonstrate how semantic polarization between these outlets has evolved (Study 1), peaked (Study 2) and influenced partisan discussions on Twitter (Study 3) across the last decade. Our results demonstrate a sharp increase in polarization in how topically important keywords are discussed between the two channels, especially after 2016, with overall highest peaks occurring in 2020. The two stations discuss identical topics in drastically distinct contexts in 2020, to the extent that there is barely any linguistic overlap in how identical keywords are contextually discussed. Further, we demonstrate at scale how such partisan division in broadcast media language significantly shapes semantic polarity trends on Twitter (and vice-versa), empirically linking, for the first time, how online discussions are influenced by televised media.

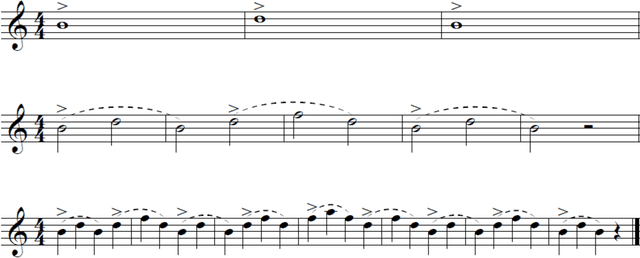

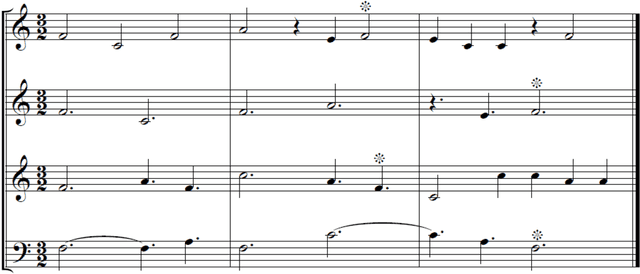

Fractal Patterns in Music

Dec 23, 2022

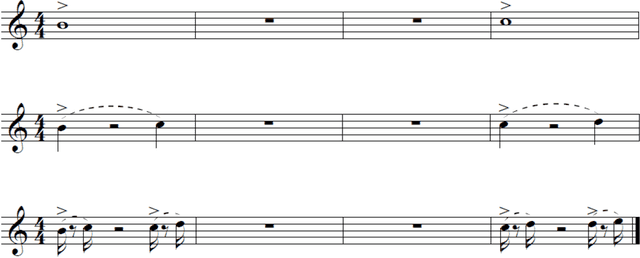

If our aesthetic preferences are affected by fractal geometry of nature, scaling regularities would be expected to appear in all art forms, including music. While a variety of statistical tools have been proposed to analyze time series in sound, no consensus has as yet emerged regarding the most meaningful measure of complexity in music, or how to discern fractal patterns in compositions in the first place. Here we offer a new approach based on self-similarity of the melodic lines recurring at various temporal scales. In contrast to the statistical analyses advanced in recent literature, the proposed method does not depend on averaging within time-windows and is distinctively local. The corresponding definition of the fractal dimension is based on the temporal scaling hierarchy and depends on the tonal contours of the musical motifs. The new concepts are tested on musical 'renditions' of the Cantor Set and Koch Curve, and then applied to a number of carefully selected masterful compositions spanning five centuries of music making.
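For reference, the two test objects named above have well-known classical dimensions. The sketch below computes the standard similarity dimension, D = log N / log(1/r), for a set made of N copies of itself each scaled by r. This is the textbook definition, not the melody-based definition the paper proposes, and the function names are chosen here for illustration.

```python
import math


def similarity_dimension(copies, scale):
    """Classical similarity dimension D solving copies * scale**D == 1."""
    return math.log(copies) / math.log(1.0 / scale)


def cantor_intervals(level):
    """Intervals left after `level` rounds of removing open middle thirds."""
    intervals = [(0.0, 1.0)]
    for _ in range(level):
        refined = []
        for a, b in intervals:
            third = (b - a) / 3.0
            refined.append((a, a + third))   # keep the left third
            refined.append((b - third, b))   # keep the right third
        intervals = refined
    return intervals


# Cantor Set: 2 copies at scale 1/3. Koch Curve: 4 copies at scale 1/3.
cantor_dim = similarity_dimension(2, 1 / 3)   # about 0.631
koch_dim = similarity_dimension(4, 1 / 3)     # about 1.262

# Box-counting check: at level n the set is covered by 2**n boxes of size 3**-n.
level = 6
boxes = len(cantor_intervals(level))
box_estimate = math.log(boxes) / math.log(3 ** level)
```

For the exactly self-similar Cantor Set the box-counting estimate matches the similarity dimension at every level. Real melodies are not exactly self-similar, which is part of why defining a fractal dimension for music is harder.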

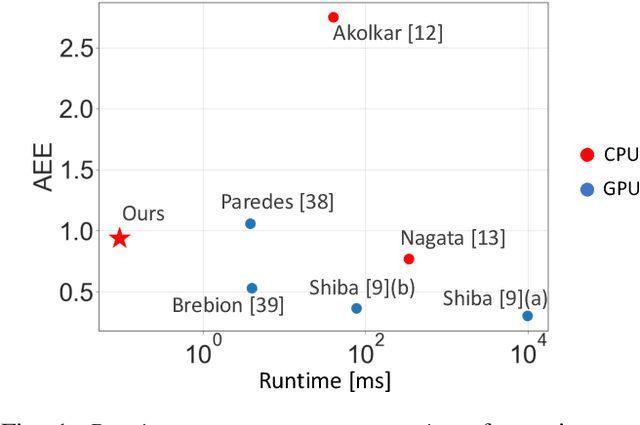

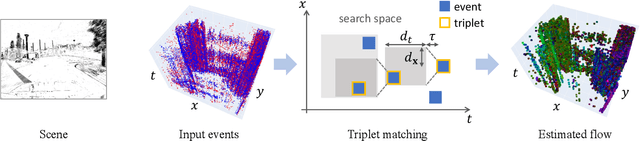

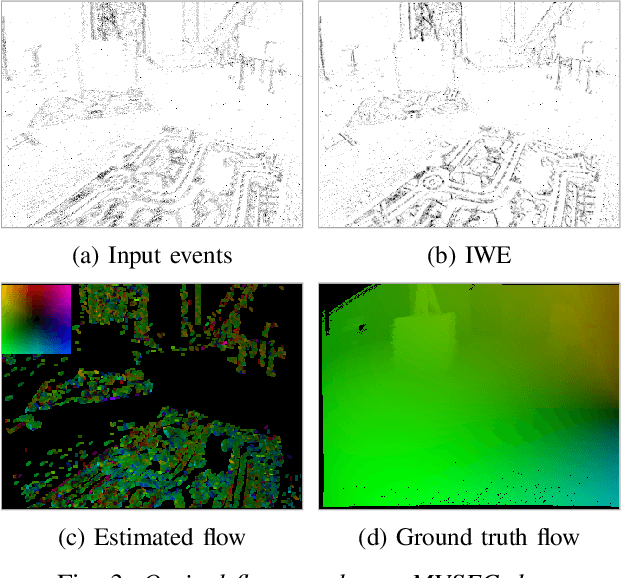

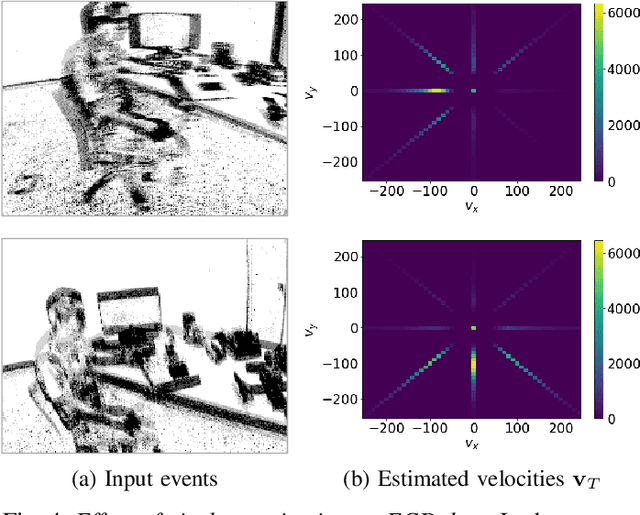

Fast Event-based Optical Flow Estimation by Triplet Matching

Dec 23, 2022

Event cameras are novel bio-inspired sensors that offer advantages over traditional cameras (low latency, high dynamic range, low power, etc.). Optical flow estimation methods that work on packets of events trade off speed for accuracy, while event-by-event (incremental) methods rely on strong assumptions and have not been tested on common benchmarks that quantify progress in the field. Towards applications on resource-constrained devices, it is important to develop optical flow algorithms that are fast, light-weight and accurate. This work leverages insights from neuroscience and proposes a novel optical flow estimation scheme based on triplet matching. The experiments on publicly available benchmarks demonstrate its capability to handle complex scenes with results comparable to prior packet-based algorithms. In addition, the proposed method achieves the fastest execution time (> 10 kHz) on standard CPUs as it requires only three events in estimation. We hope that our research opens the door to real-time, incremental motion estimation methods and applications in real-world scenarios.
