"Time": models, code, and papers

Convolutional Neural Network-Based Automatic Classification of Colorectal and Prostate Tumor Biopsies Using Multispectral Imagery: System Development Study

Jan 30, 2023

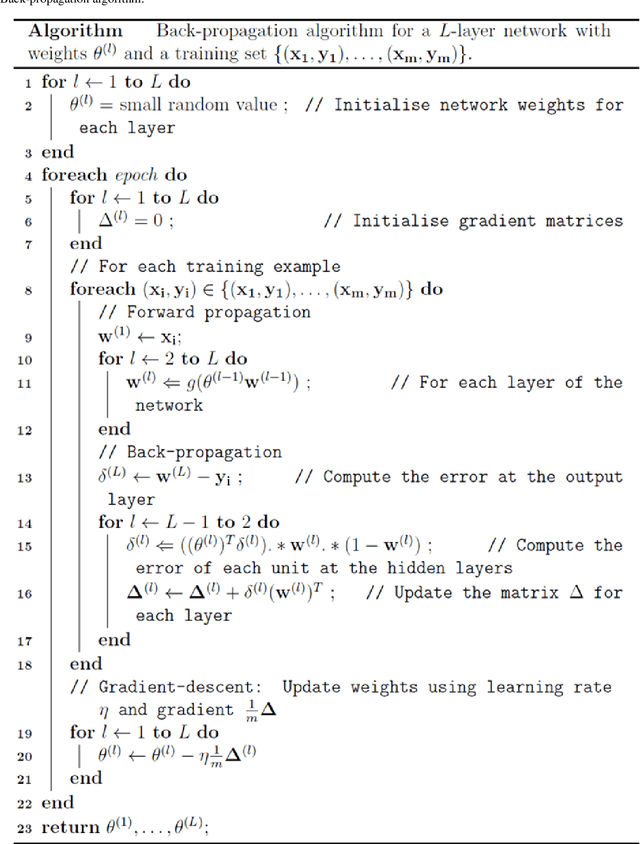

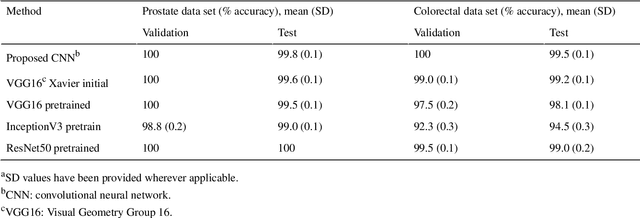

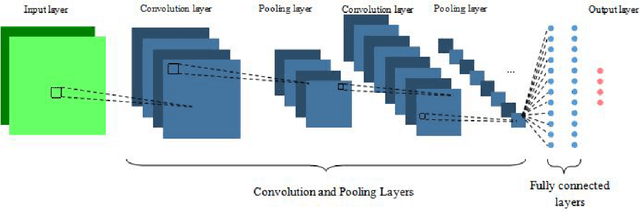

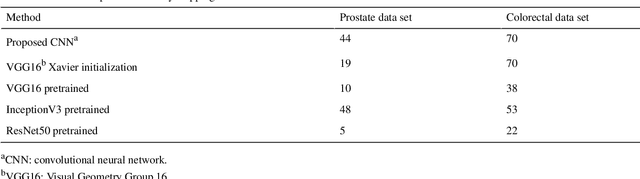

Colorectal and prostate cancers are the most common types of cancer in men worldwide. To diagnose colorectal and prostate cancer, a pathologist performs a histological analysis of needle biopsy samples. This manual process is time-consuming and error-prone, resulting in high intra- and interobserver variability, which affects diagnostic reliability. This study aims to develop an automatic computerized system for diagnosing colorectal and prostate tumors from images of biopsy samples, reducing the time and diagnostic error rates associated with human analysis. We propose a CNN model for classifying colorectal and prostate tumors from multispectral images of biopsy samples. The key idea was to remove the last block of convolutional layers and halve the number of filters per layer. Our results showed excellent performance, with an average test accuracy of 99.8% and 99.5% for the prostate and colorectal data sets, respectively. The proposed CNN was detailed and compared with pretrained network models used as feature extractors, and these CNNs were also compared with other classification techniques. The proposed CNN outperformed both the pretrained CNNs and the other classification approaches while using a single CNN model for classification and avoiding the preprocessing phase. The computational complexity of the CNNs was also investigated; the proposed CNN compares favorably with pretrained networks because it does not require preprocessing. Overall, the proposed CNN architecture was the best-performing system for classifying colorectal and prostate tumor images.
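The architectural change above (drop the last convolutional block, halve the filters per layer) can be sketched as a transformation of a layer configuration. The baseline below is a hypothetical VGG-style configuration, since the abstract does not name the base network; the 3×3 kernels and the number of input spectral bands are also assumptions. The sketch only shows how the two changes shrink the convolutional parameter count.

```python
# Hypothetical VGG-style baseline (not taken from the paper): each inner list is one
# convolutional block, giving the number of filters in each 3x3 conv layer.
BASELINE = [[64, 64], [128, 128], [256, 256, 256], [512, 512, 512], [512, 512, 512]]


def slim(config):
    """Remove the last convolutional block and halve the filters of every remaining layer."""
    return [[filters // 2 for filters in block] for block in config[:-1]]


def conv_params(config, in_channels, kernel=3):
    """Count weights plus biases of the conv layers only (the classifier head is ignored)."""
    total, c_in = 0, in_channels
    for block in config:
        for c_out in block:
            total += kernel * kernel * c_in * c_out + c_out
            c_in = c_out
    return total


IN_CHANNELS = 16  # hypothetical number of spectral bands in a multispectral image
small = slim(BASELINE)
print(small)
print(conv_params(BASELINE, IN_CHANNELS), conv_params(small, IN_CHANNELS))
```

Halving the filters cuts most layers to roughly a quarter of their parameters, and dropping the final block removes the largest layers entirely.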

Real-Time Gesture Recognition with Virtual Glove Markers

Jul 06, 2022

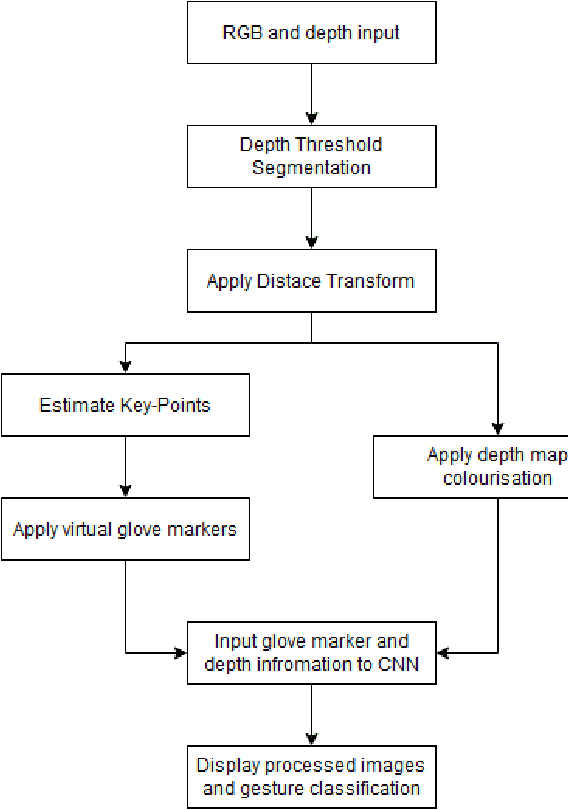

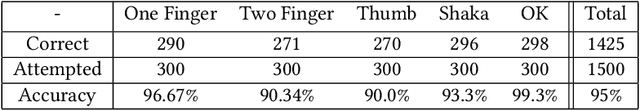

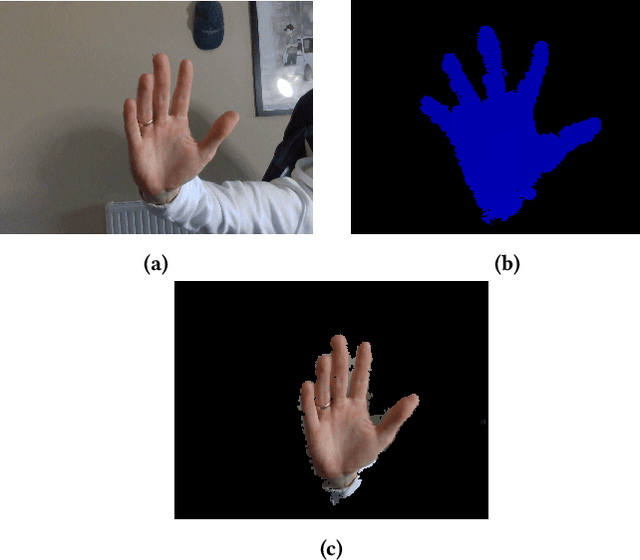

Because gestures are a universal, natural form of non-verbal communication between humans, gesture recognition technology has developed steadily over the past few decades. Research articles on gesture recognition have presented many different strategies, using both physical sensors and computer vision, for building an effective system that conveys non-verbal natural communication to computers. Highly accurate real-time systems, on the other hand, have only recently begun to appear in the field, each adopting a different methodology because of past limitations in usability, cost, speed, and accuracy. We propose a real-time, computer vision-based human-computer interaction tool for gesture recognition applications that acts as a natural user interface. Virtual glove markers are created on the user's hands and used as input to a deep learning model for real-time gesture recognition. The results show that the proposed system would be effective in real-time applications, including social interaction through telepresence and rehabilitation.

BSA-OMP: Beam-Split-Aware Orthogonal Matching Pursuit for THz Channel Estimation

Feb 05, 2023

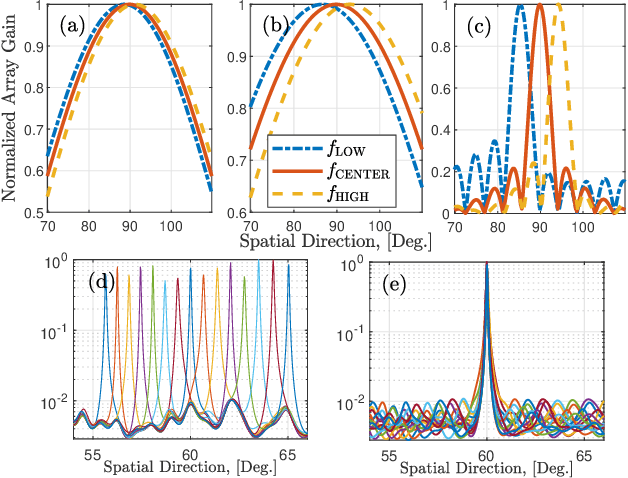

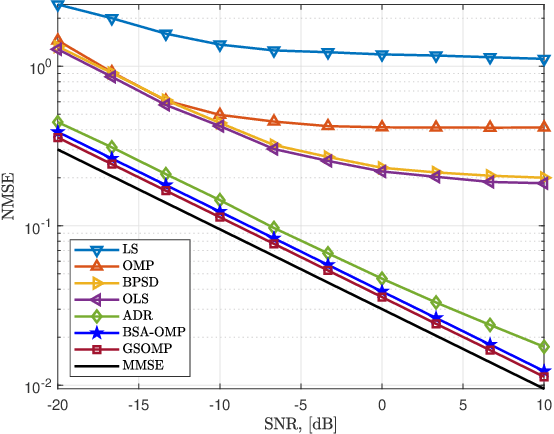

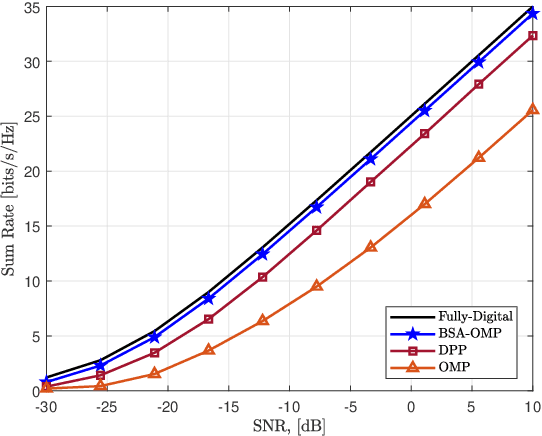

The terahertz (THz) band has been envisioned for sixth-generation wireless networks thanks to its ultra-wide bandwidth and very narrow beamwidth. Nevertheless, THz-band transmission faces several unique challenges. One of them is beam split: because subcarrier-independent analog beamformers are used, the beams generated at different subcarriers split and point in different directions. Unlike prior works, which deal with beam split by adding complex hardware components such as time-delayer networks, we introduce a beam-split-aware orthogonal matching pursuit (BSA-OMP) approach that efficiently estimates the THz channel and designs the beamformers without any additional hardware. Specifically, we design a BSA dictionary composed of beam-split-corrected steering vectors, which inherently include the effect of beam split, so that the BSA-OMP solution automatically yields the beam-split-corrected physical channel directions. Numerical results demonstrate the superior performance of the BSA-OMP approach over existing state-of-the-art techniques.
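A minimal sketch of the dictionary idea, assuming a uniform linear array with half-wavelength spacing at the carrier frequency f_c, a single subcarrier at f_m = ratio · f_c, on-grid path directions and no noise. It runs standard OMP over a dictionary whose columns are indexed by physical direction sin θ but evaluated at the subcarrier frequency, so the selected indices are physical directions rather than split ones. The array model, function names and parameter values are illustrative assumptions, not the authors' formulation.

```python
import numpy as np


def steering(n_ant, phi, ratio):
    """ULA response at subcarrier frequency f_m = ratio * f_c for spatial direction phi = sin(theta).

    Element spacing is assumed to be half a wavelength at f_c, so the per-element
    phase progression scales with f_m / f_c; that scaling is what causes beam split."""
    n = np.arange(n_ant)
    return np.exp(-1j * np.pi * ratio * n * phi) / np.sqrt(n_ant)


def bsa_dictionary(n_ant, grid, ratio):
    """Columns are indexed by *physical* directions but evaluated at the subcarrier frequency."""
    return np.stack([steering(n_ant, phi, ratio) for phi in grid], axis=1)


def omp(y, dictionary, n_paths):
    """Orthogonal matching pursuit: greedy column selection plus least-squares refit."""
    residual = y.copy()
    support = []
    gains = np.zeros(0, dtype=complex)
    for _ in range(n_paths):
        corr = np.abs(dictionary.conj().T @ residual)
        if support:
            corr[support] = 0.0  # never reselect a column
        support.append(int(np.argmax(corr)))
        atoms = dictionary[:, support]
        gains, *_ = np.linalg.lstsq(atoms, y, rcond=None)
        residual = y - atoms @ gains
    return support, gains


# Hypothetical setup: 64 antennas, subcarrier at 1.05 * f_c, two on-grid paths, no noise.
n_ant, ratio = 64, 1.05
grid = np.linspace(-1.0, 1.0, 128, endpoint=False)
D = bsa_dictionary(n_ant, grid, ratio)
y = 1.0 * D[:, 20] + 0.8j * D[:, 90]
support, gains = omp(y, D, n_paths=2)
print("estimated physical directions:", grid[sorted(support)])
```

With a conventional dictionary built at ratio = 1, the same measurement would instead correlate best with directions scaled by f_m / f_c, i.e. the split directions.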

Machine Learning Methods for Evaluating Public Crisis: Meta-Analysis

Feb 05, 2023

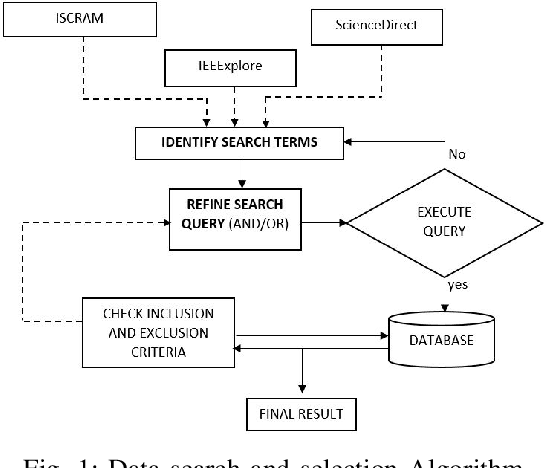

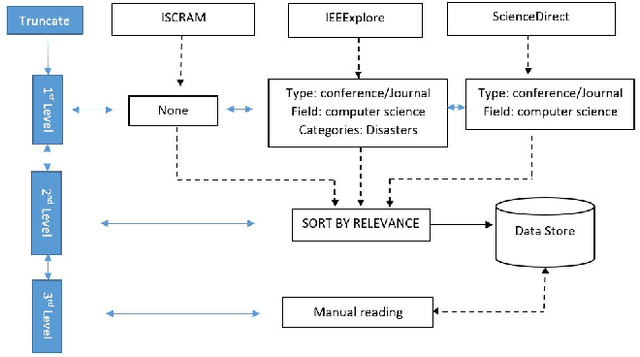

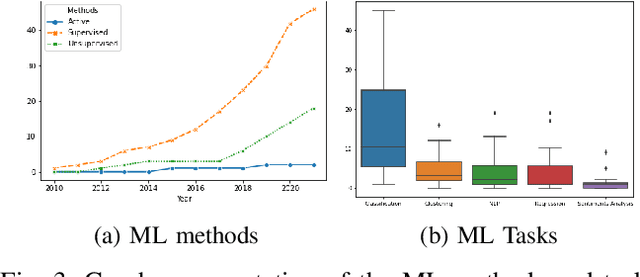

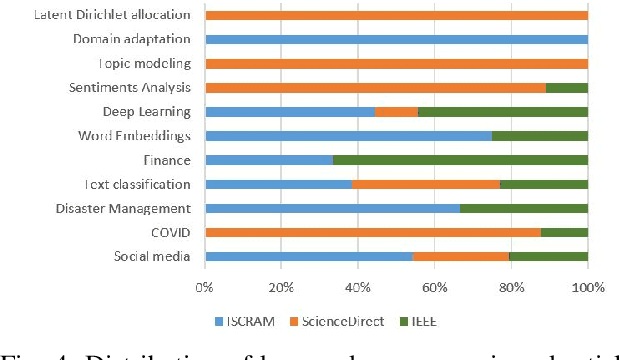

This study examines machine learning methods used in crisis management. Analyzing patterns detected from a crisis involves collecting and evaluating historical or near-real-time datasets through automated means. This paper used the meta-review method to analyze scientific literature that applied machine learning techniques to evaluate human actions during crises. Studies selected from three scholarly databases were condensed into themes and emerging trends through a systematic literature review. Results show that social media data was prominent in the evaluated articles, with 27% usage, followed by disaster management, health (COVID), and crisis informatics, among many other themes. Supervised machine learning was predominant, with 69% usage across the board, and classification stood out among the machine learning tasks with 41% usage. The algorithms that played major roles were Support Vector Machines, Neural Networks, Naive Bayes, and Random Forests, with 23%, 16%, 15%, and 12% contributions, respectively.

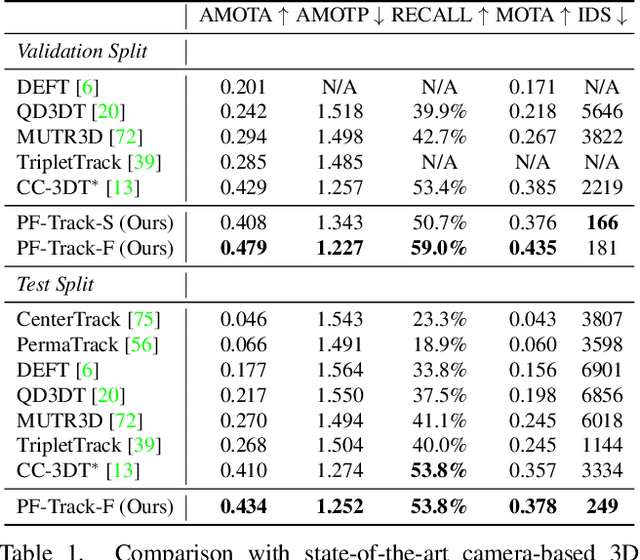

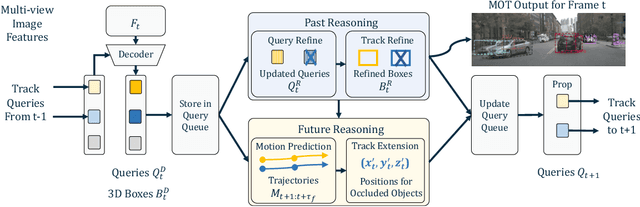

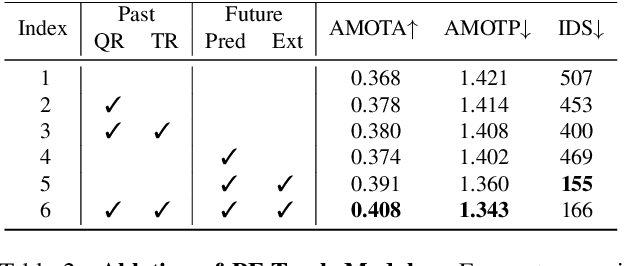

Standing Between Past and Future: Spatio-Temporal Modeling for Multi-Camera 3D Multi-Object Tracking

Feb 07, 2023

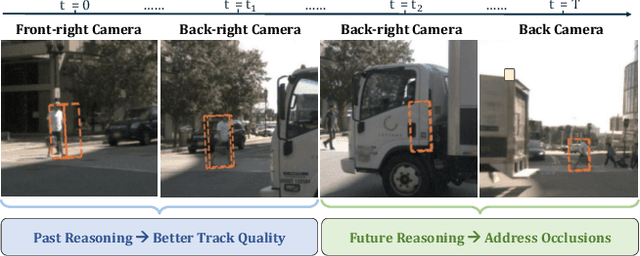

This work proposes an end-to-end multi-camera 3D multi-object tracking (MOT) framework. It emphasizes spatio-temporal continuity and integrates both past and future reasoning for tracked objects, so we name it "Past-and-Future reasoning for Tracking" (PF-Track). Specifically, our method adapts the "tracking by attention" framework and represents tracked instances coherently over time with object queries. To explicitly use historical cues, our "Past Reasoning" module learns to refine the tracks and enhance the object features by cross-attending to queries from previous frames and other objects. The "Future Reasoning" module digests historical information and predicts robust future trajectories. In the case of long-term occlusions, our method maintains the object positions and enables re-association by integrating motion predictions. On the nuScenes dataset, our method improves AMOTA by a large margin and reduces ID switches by 90% compared with prior approaches, an order of magnitude fewer. The code and models are available at https://github.com/TRI-ML/PF-Track.
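The "Past Reasoning" step rests on cross-attention from current object queries to stored queries. Below is a stripped-down, single-head version with no learned projections, assuming queries are plain d-dimensional NumPy arrays and a simple residual update. PF-Track itself uses learned transformer layers, so this only illustrates the attention arithmetic; the function names are hypothetical.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def past_cross_attention(queries, memory):
    """Single-head scaled dot-product cross-attention without learned projections.

    queries: (Q, d) object queries in the current frame.
    memory:  (M, d) queries stored from previous frames (and/or other objects).
    Returns residual-refined queries (Q, d) and attention weights (Q, M)."""
    d = queries.shape[-1]
    weights = softmax(queries @ memory.T / np.sqrt(d), axis=-1)
    refined = queries + weights @ memory
    return refined, weights


# Toy example: each current query should attend mostly to "its own" past query.
queries = 4.0 * np.eye(2, 4)
memory = 4.0 * np.eye(3, 4)
refined, weights = past_cross_attention(queries, memory)
print(weights.round(3))
```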

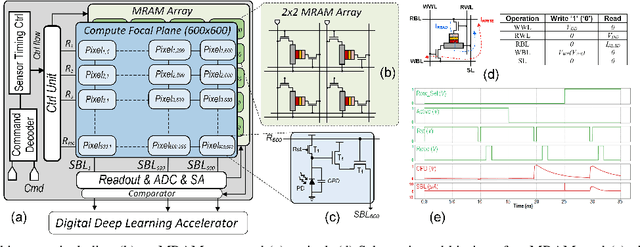

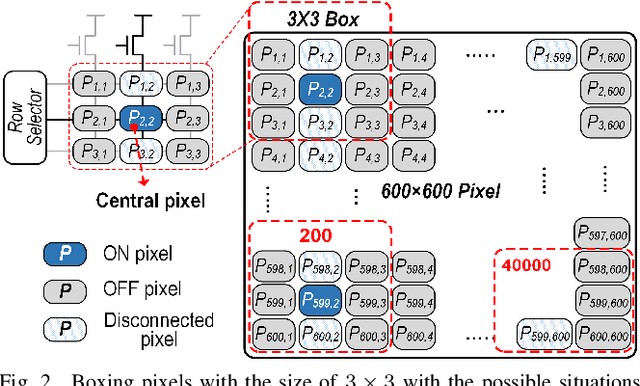

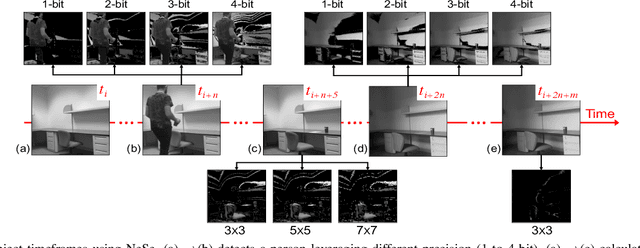

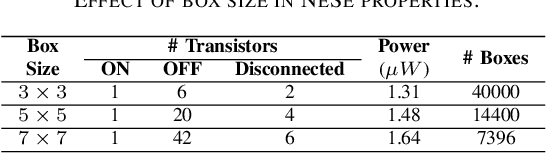

NeSe: Near-Sensor Event-Driven Scheme for Low Power Energy Harvesting Sensors

Feb 07, 2023

Digital technologies have made it possible to deploy visual sensor nodes that cost-effectively detect motion events in their coverage area. However, background subtraction, a widely used approach, remains difficult because it is hard to achieve competitive accuracy and low computation cost simultaneously. In this paper, we propose NeSe, an effective background subtraction approach for tiny energy-harvesting sensors that leverages non-volatile memory (NVM). With the developed software/hardware method, the accuracy and efficiency of event detection can be adjusted at runtime by changing the precision according to the application's needs. Because background subtraction is implemented near the sensor and uses NVM, the proposed design reduces data movement overhead while ensuring resilience to intermittent power. The background is stored in NVM for a specific time interval and compared with the next frame. If the power is cut, the background remains unchanged and is updated after the interval passes. Once a moving object is detected, the device switches to the high-power sensor mode to capture the image.
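The event-detection loop described above can be modeled in a few lines. This is a software-only toy, assuming 8-bit grayscale frames; the parameter names (`bits`, `interval`, `pixel_thresh`, `area_frac`) are hypothetical, a plain attribute stands in for the NVM-resident background, and the actual NeSe design is a software/hardware co-design rather than NumPy code.

```python
import numpy as np


class NearSensorDetector:
    """Toy interval-based background subtraction with a persistent background.

    Hypothetical parameters: `bits` (runtime precision), `interval` (frames between
    background refreshes), `pixel_thresh` and `area_frac` (event criteria)."""

    def __init__(self, bits=4, interval=10, pixel_thresh=1, area_frac=0.05):
        self.bits = bits
        self.interval = interval
        self.pixel_thresh = pixel_thresh
        self.area_frac = area_frac
        # Stands in for the NVM copy of the background: assumed to survive power loss.
        self.nvm_background = None
        self.frames_since_update = 0

    def _quantize(self, frame):
        # Keep only the `bits` most significant bits of each 8-bit pixel:
        # lower precision means cheaper comparisons and less sensitivity to noise.
        return frame.astype(np.uint8) >> (8 - self.bits)

    def step(self, frame):
        """Return True if a motion event is detected (i.e., wake the high-power sensor)."""
        q = self._quantize(frame)
        if self.nvm_background is None:
            self.nvm_background = q
            return False
        diff = np.abs(q.astype(np.int16) - self.nvm_background.astype(np.int16))
        event = (diff >= self.pixel_thresh).mean() > self.area_frac
        self.frames_since_update += 1
        if self.frames_since_update >= self.interval:  # refresh background once per interval
            self.nvm_background = q
            self.frames_since_update = 0
        return bool(event)


bg = np.zeros((8, 8), dtype=np.uint8)
noisy = np.full((8, 8), 10, dtype=np.uint8)  # small uniform brightness change
moving = bg.copy()
moving[:4, :4] = 200  # bright object covering a quarter of the frame

det = NearSensorDetector(bits=4)
print([det.step(f) for f in (bg, bg, noisy, moving)])
```

Raising `bits` makes the detector react to smaller intensity changes, which is one way to read the runtime accuracy/efficiency trade-off.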

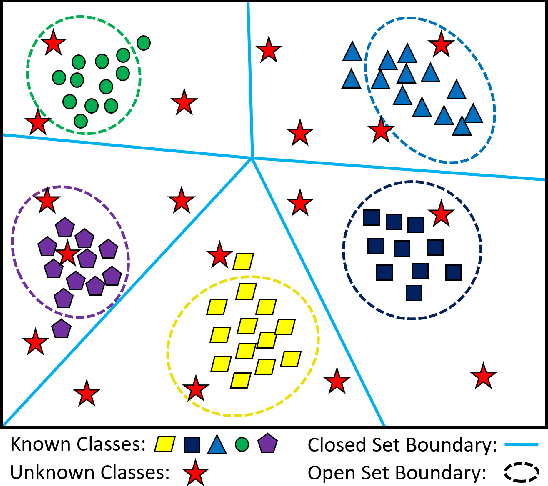

Open Set Wireless Signal Classification: Augmenting Deep Learning with Expert Feature Classifiers

Feb 07, 2023

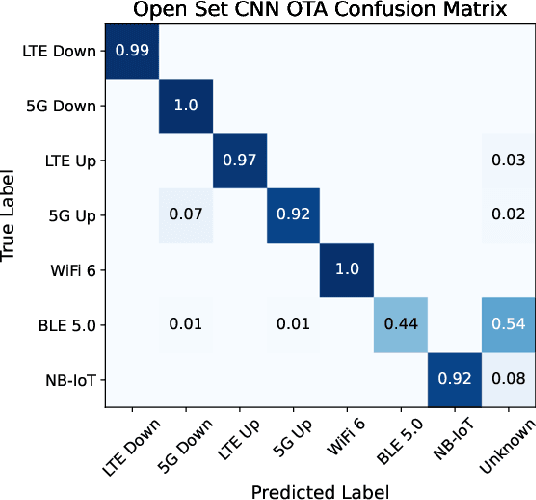

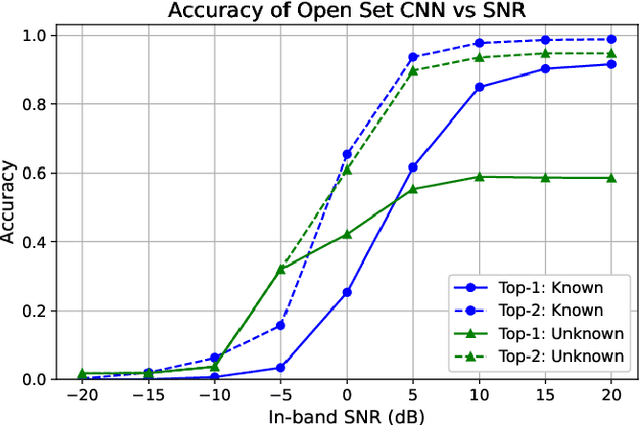

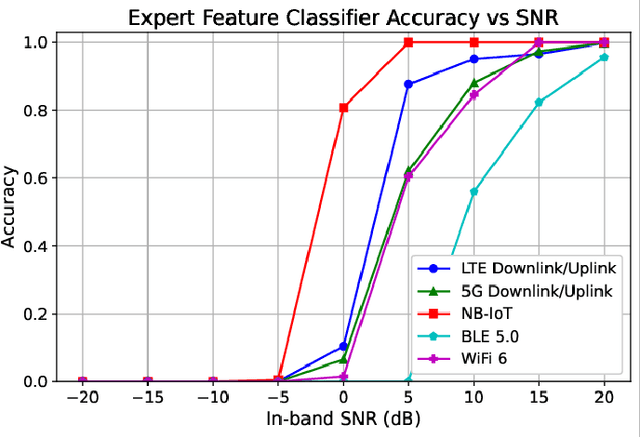

In a shared spectrum with multiple radio access technologies, wireless standard classification is vital for applications such as dynamic spectrum access (DSA) and wideband spectrum monitoring. However, interfering signals and the presence of unknown signal classes can reduce classification accuracy. To reduce interference, signals can be isolated in time, frequency, and space, but the isolation process adds distortion that reduces the accuracy of deep learning classifiers. We find that this distortion can be partially mitigated by augmenting the classifier training data with the signal isolation steps. To address unknown signals, we propose an open set hybrid classifier, which combines deep learning and expert feature classifiers to leverage the reliability and explainability of expert feature classifiers and the lower computational complexity of deep learning classifiers. The hybrid classifier reduces computational complexity by 2 to 7 times on average compared with the expert feature classifiers, while achieving an accuracy of 95% at 15 dB SNR for known signal classes. Owing to the robustness of the expert feature classifiers, the hybrid classifier detects unknown classes with nearly 100% accuracy.
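The abstract does not spell out how the two classifiers are combined. One plausible routing, shown here purely as an assumption, runs the cheap deep learning classifier first and falls back to the expert feature classifier, which may answer "unknown", only when the network's confidence is low. The threshold, the toy models and the function names are hypothetical.

```python
from typing import Callable, Tuple

UNKNOWN = "unknown"


def hybrid_classify(
    x,
    dl_model: Callable[[object], Tuple[str, float]],
    expert_model: Callable[[object], str],
    conf_thresh: float = 0.9,
) -> Tuple[str, str]:
    """Route a signal through the deep learning classifier first; fall back to the
    expert feature classifier (which may return UNKNOWN) when confidence is low.
    Returns (label, which_classifier_decided)."""
    label, confidence = dl_model(x)
    if confidence >= conf_thresh:
        return label, "dl"
    return expert_model(x), "expert"


# Toy stand-ins for trained models (hypothetical).
def toy_dl(x):
    return ("LTE", 0.97) if x == "lte_burst" else ("WiFi", 0.41)


def toy_expert(x):
    return "WiFi" if x == "wifi_frame" else UNKNOWN


results = [hybrid_classify(s, toy_dl, toy_expert) for s in ["lte_burst", "wifi_frame", "mystery"]]
print(results)
```

Under this routing, the computational saving depends on how often the expensive expert path is taken.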

MPC-based Motion Planning for Autonomous Truck-Trailer Maneuvering

Feb 07, 2023

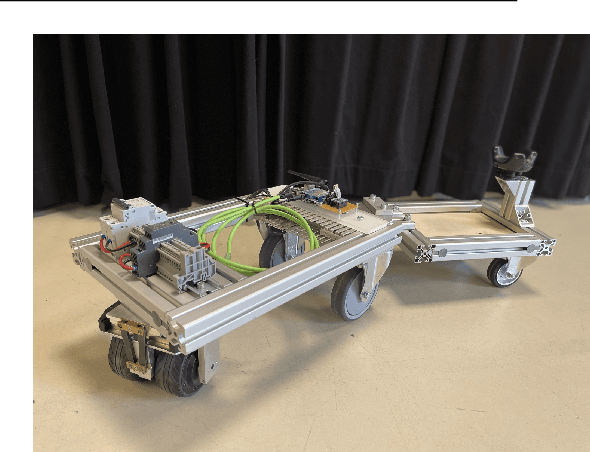

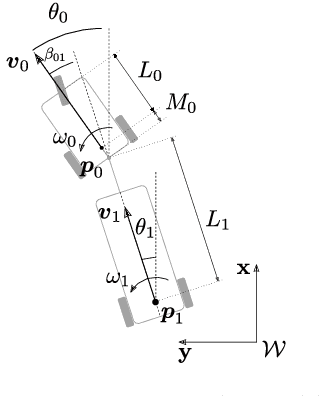

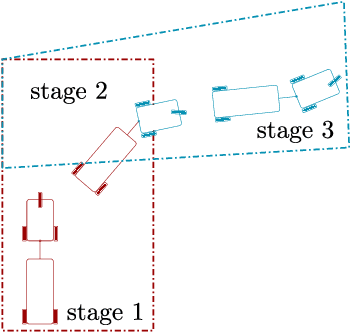

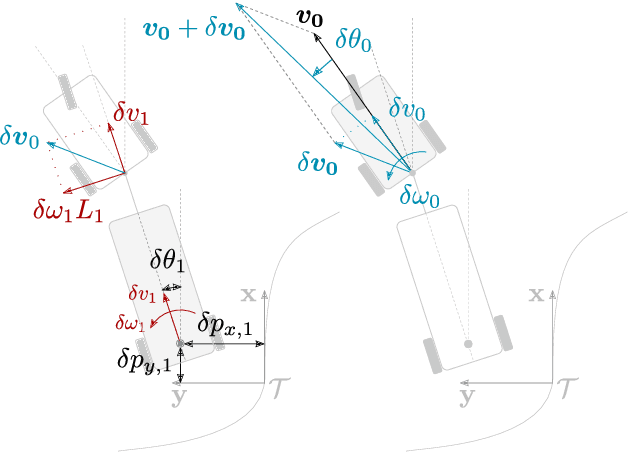

Time-optimal motion planning of autonomous vehicles in complex environments is a highly researched topic. This paper describes a novel approach to optimize and execute locally feasible trajectories for maneuvering a truck-trailer Autonomous Mobile Robot (AMR) by dividing the environment into a sequence, or route, of freely accessible overlapping corridors. Multi-stage optimal control generates local trajectories through advancing subsets of this route. To cope with the advancing subsets and changing environments, the optimal control problem is solved online with a receding horizon in a Model Predictive Control (MPC) fashion, with an improved update strategy. This strategy seamlessly integrates the computationally expensive MPC updates with a low-cost feedback controller for trajectory tracking, disturbance rejection, and stabilization of the unstable kinematics of the reversing truck-trailer AMR. The methodology is implemented in a flexible software framework that allows an effortless transition from offline simulations to experimental deployment. An experimental setup in which the truck-trailer AMR performs two reverse parking maneuvers validates the presented method.
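Why the reversing kinematics need active stabilization can be seen from a standard kinematic model of a truck with one on-axle-hitched trailer. The model, dimensions and time step below are assumptions for illustration, not the paper's vehicle model. With zero steering, the hitch angle φ = θ0 − θ1 obeys φ' = −v sin(φ)/L1, so it decays when driving forward (v > 0) and grows when reversing (v < 0).

```python
import math
from dataclasses import dataclass


@dataclass
class TruckTrailerState:
    x: float       # truck rear-axle position [m]
    y: float
    theta0: float  # truck heading [rad]
    theta1: float  # trailer heading [rad]


def step(s, v, delta, L0=1.0, L1=1.5, dt=0.05):
    """One explicit-Euler step of an assumed on-axle-hitch kinematic model.

    v: truck speed [m/s] (negative = reversing), delta: steering angle [rad],
    L0: truck wheelbase [m], L1: trailer length [m] (hypothetical values)."""
    return TruckTrailerState(
        x=s.x + dt * v * math.cos(s.theta0),
        y=s.y + dt * v * math.sin(s.theta0),
        theta0=s.theta0 + dt * v * math.tan(delta) / L0,
        theta1=s.theta1 + dt * v * math.sin(s.theta0 - s.theta1) / L1,
    )


def hitch_angle_after(v, steps=100, phi0=0.05):
    """Drive straight (delta = 0) from a small initial hitch angle and return the final angle."""
    s = TruckTrailerState(0.0, 0.0, phi0, 0.0)
    for _ in range(steps):
        s = step(s, v, delta=0.0)
    return s.theta0 - s.theta1


print("forward:", hitch_angle_after(0.5), "reverse:", hitch_angle_after(-0.5))
```

The growing hitch angle in reverse is the kind of instability a fast feedback controller has to counteract between the slower MPC updates.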

Identification of Power System Oscillation Modes using Blind Source Separation based on Copula Statistic

Feb 07, 2023

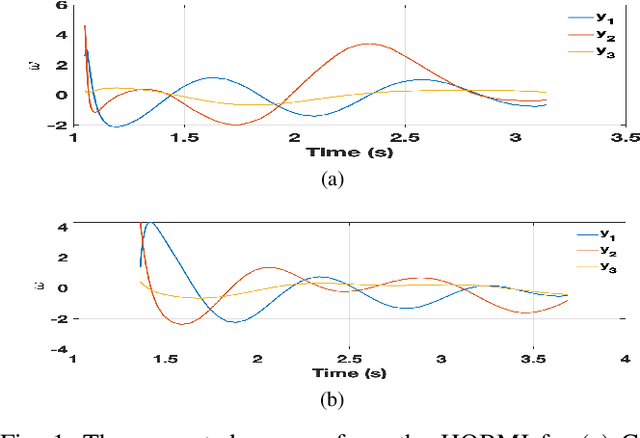

The dynamics of power systems with a large penetration of renewable energy resources are becoming more nonlinear due to the intermittency of these resources and the switching of their power electronic devices. It is therefore crucial to accurately identify the oscillation modes of such a power system when it is subject to disturbances, so that appropriate preventive or corrective control actions can be initiated. In this paper, we propose a high-order blind source identification (HOBI) algorithm based on the copula statistic to address these nonlinear dynamics in modal analysis. Combined with the Hilbert transform (HOBI-HT) and an iteration procedure (HOBMI), the method can identify all the modes, as well as the model order, from observation signals obtained from as few as one channel. We assess the performance of the proposed method on numerical simulation signals and on data recorded from a time-domain simulation of the classical 11-bus 4-machine test system. Our simulation results show that the proposed method outperforms the state-of-the-art method in accuracy and effectiveness.
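Identified oscillation modes are conventionally reported through their eigenvalue λ = σ ± jω: the frequency is f = ω/(2π) and the damping ratio is ζ = −σ/√(σ² + ω²). The snippet below only illustrates these standard relations and the logarithmic-decrement estimate of σ from a sampled mode; it does not implement the copula-based HOBI algorithm, and the function names are hypothetical.

```python
import math


def mode_parameters(sigma, omega):
    """Frequency [Hz] and damping ratio of an oscillation mode with eigenvalue sigma +/- j*omega."""
    freq_hz = omega / (2 * math.pi)
    damping_ratio = -sigma / math.hypot(sigma, omega)
    return freq_hz, damping_ratio


def mode_signal(sigma, omega, t, amplitude=1.0, phase=0.0):
    """Time-domain contribution of a single mode: an exponentially damped sinusoid."""
    return amplitude * math.exp(sigma * t) * math.cos(omega * t + phase)


def damping_from_decrement(x0, x1, period):
    """Estimate sigma from two samples taken one oscillation period apart (log decrement)."""
    return math.log(x1 / x0) / period


# A 0.5 Hz mode with sigma = -0.2 1/s, i.e. a positively damped (stable) mode.
sigma, omega = -0.2, 2 * math.pi * 0.5
f, zeta = mode_parameters(sigma, omega)
period = 2 * math.pi / omega
sigma_est = damping_from_decrement(mode_signal(sigma, omega, 0.0), mode_signal(sigma, omega, period), period)
print(f, zeta, sigma_est)
```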

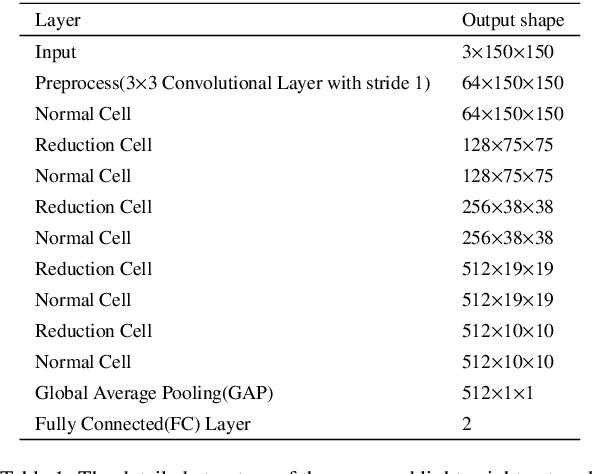

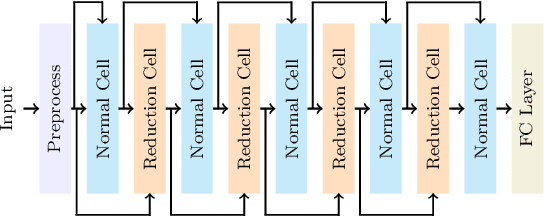

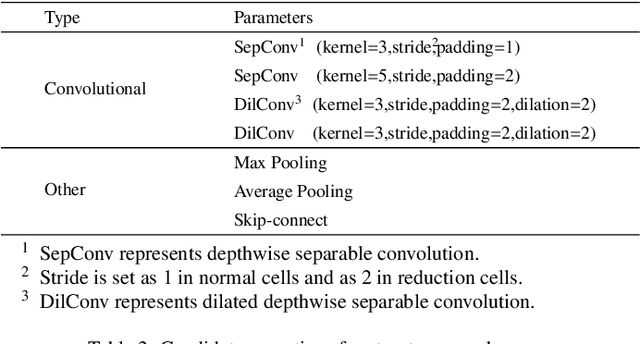

A lightweight network for photovoltaic cell defect detection in electroluminescence images based on neural architecture search and knowledge distillation

Feb 15, 2023

Nowadays, the rapid development of photovoltaic (PV) power stations requires increasingly reliable maintenance and fault diagnosis of PV modules in the field. Owing to its effectiveness, the convolutional neural network (CNN) has been widely used in existing automatic defect detection of PV cells. However, these CNN-based models have very large numbers of parameters, which demand substantial hardware resources and make them difficult to apply in actual industrial projects. To solve these problems, we propose a novel lightweight, high-performance model for automatic defect detection of PV cells in electroluminescence (EL) images based on neural architecture search and knowledge distillation. To design an effective lightweight model automatically, we introduce neural architecture search to the field of PV cell defect classification for the first time. Since defects can be of any size, we design a suitable network search structure to better exploit multi-scale characteristics. To improve the overall performance of the searched lightweight model, we further transfer the knowledge learned by an existing pretrained large-scale model through knowledge distillation. Different kinds of knowledge are exploited and transferred, including attention information, feature information, logit information, and task-oriented information. Experiments demonstrate that the proposed model achieves state-of-the-art performance on the public PV cell dataset of EL images under online data augmentation, with an accuracy of 91.74% and 1.85M parameters. The proposed lightweight high-performance model can be easily deployed to end devices in actual industrial projects while retaining its accuracy.
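Of the kinds of knowledge listed above, logit information is the simplest to illustrate. A common formulation blends a temperature-softened KL divergence between teacher and student outputs (scaled by T²) with ordinary cross-entropy on the hard labels. The sketch below assumes that standard form with hypothetical values of `T` and `alpha`; the paper's exact loss, including its attention, feature and task-oriented terms, is not reproduced here.

```python
import numpy as np


def log_softmax(z, T=1.0):
    z = z / T
    z = z - z.max(axis=-1, keepdims=True)  # numerical stability
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))


def kd_loss(student_logits, teacher_logits, labels, T=4.0, alpha=0.5):
    """Logit distillation: alpha * T^2 * KL(teacher_T || student_T) + (1 - alpha) * CE(student, labels).

    Returns (total, kl_term, ce_term); T and alpha are hypothetical hyperparameters."""
    log_p_s = log_softmax(student_logits, T)
    log_p_t = log_softmax(teacher_logits, T)
    p_t = np.exp(log_p_t)
    # Soft-target term, averaged over the batch and rescaled by T^2.
    kl = (p_t * (log_p_t - log_p_s)).sum(axis=-1).mean() * T**2
    # Hard-label cross-entropy at temperature 1.
    ce = -log_softmax(student_logits)[np.arange(len(labels)), labels].mean()
    return alpha * kl + (1 - alpha) * ce, kl, ce


student = np.array([[2.0, 0.5, -1.0], [0.1, 0.2, 3.0]])
teacher = student[:, ::-1].copy()
labels = np.array([0, 2])
total, kl, ce = kd_loss(student, teacher, labels)
print(total, kl, ce)
```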
