"Time": models, code, and papers

Design of an Autonomous Agriculture Robot for Real Time Weed Detection using CNN

Nov 22, 2022

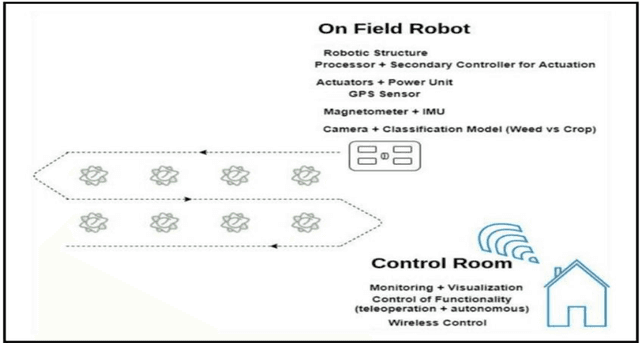

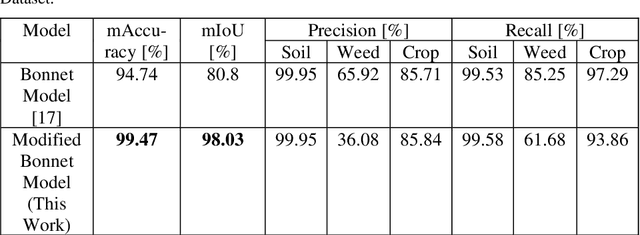

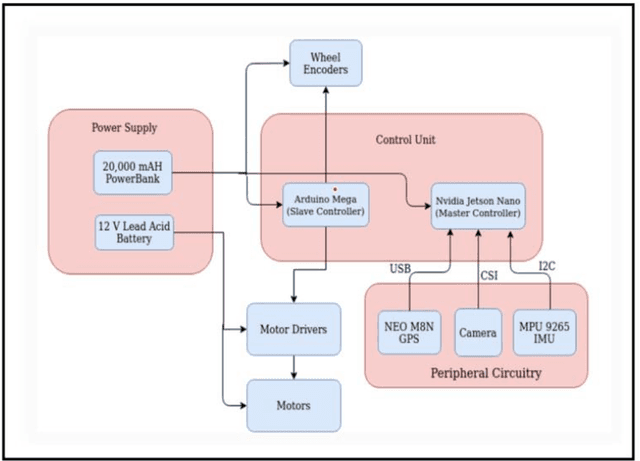

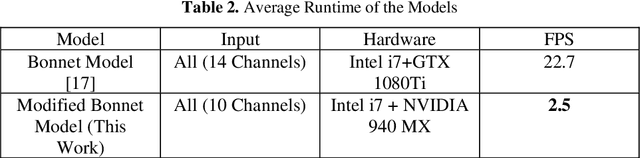

Agriculture has always been an integral part of the world. As the human population keeps rising, the demand for food increases, and so does the dependency on the agriculture industry. But today, because of low yields, reduced rainfall, and similar factors, the agricultural sector faces a dearth of manpower: people are moving to the cities, and villages are becoming more and more urbanized. On the other hand, the field of robotics has seen tremendous development in the past few years. Concepts like Deep Learning (DL), Artificial Intelligence (AI), and Machine Learning (ML) are being incorporated into robotics to create autonomous systems for various sectors like automotive, agriculture, assembly line management, etc. Deploying such autonomous systems in the agricultural sector helps in many respects, such as reducing manpower needs and improving the yield and nutritional quality of crops. So, in this paper, the system design of an autonomous agricultural robot which primarily focuses on weed detection is described. A modified deep-learning model for weed detection is also proposed. The primary objective of this robot is the detection of weeds in real time without any human involvement, but the design can also be extended to robots for other farming applications like weed removal, plowing, harvesting, etc., in turn making the farming industry more efficient. Source code and other details can be found at https://github.com/Dhruv2012/Autonomous-Farm-Robot

Real-Time Dense Field Phase-to-Space Simulation of Imaging through Atmospheric Turbulence

Oct 13, 2022

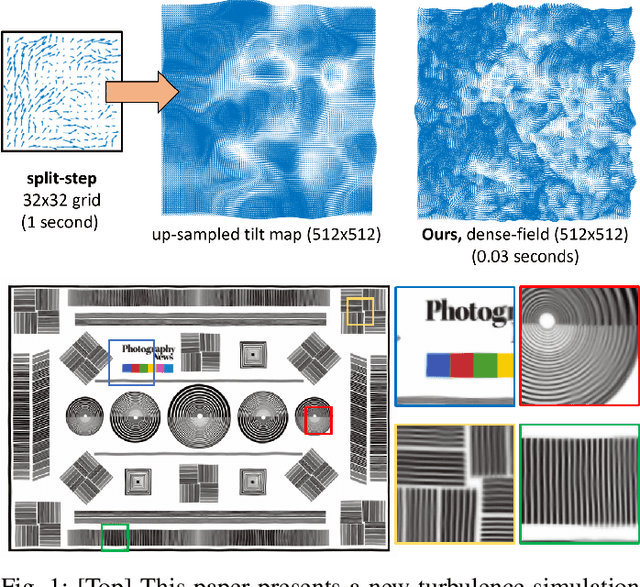

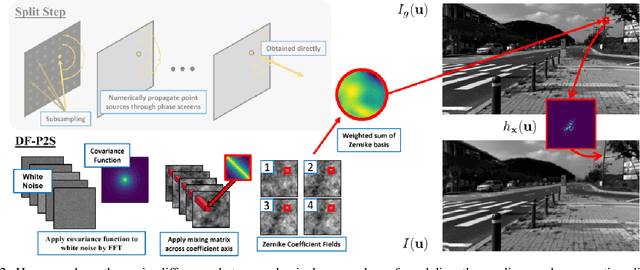

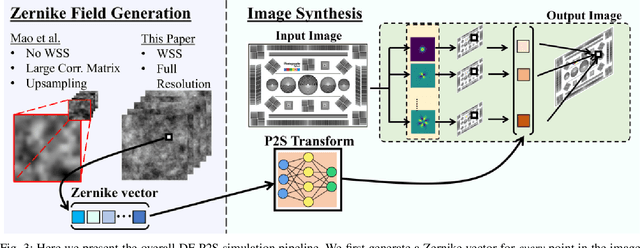

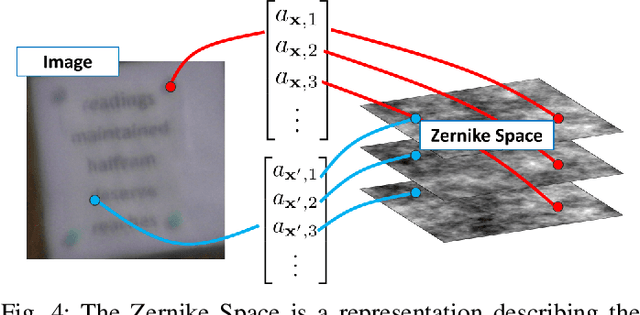

Numerical simulation of atmospheric turbulence is one of the biggest bottlenecks in developing computational techniques for solving the inverse problem in long-range imaging. The classical split-step method is based upon numerical wave propagation which splits the propagation path into many segments and propagates every pixel in each segment individually via the Fresnel integral. This repeated evaluation becomes increasingly time-consuming for larger images. As a result, the split-step simulation is often done only on a sparse grid of points followed by an interpolation to the other pixels. Even so, the computation is expensive for real-time applications. In this paper, we present a new simulation method that enables *real-time* processing over a *dense* grid of points. Building upon the recently developed multi-aperture model and the phase-to-space transform, we overcome the memory bottleneck in drawing random samples from the Zernike correlation tensor. We show that the cross-correlation of the Zernike modes has an insignificant contribution to the statistics of the random samples. By approximating these cross-correlation blocks in the Zernike tensor, we restore the homogeneity of the tensor which then enables Fourier-based random sampling. On a $512 \times 512$ image, the new simulator achieves 0.025 seconds per frame over a dense field. On a $3840 \times 2160$ image which would have taken 13 hours to simulate using the split-step method, the new simulator can run at approximately 60 seconds per frame.
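To illustrate why homogeneity matters for sampling, here is a minimal sketch of generic Fourier-based sampling of a homogeneous (stationary) Gaussian random field. It is not the simulator from the paper: the function name `sample_stationary_field`, the Gaussian-shaped power spectrum, and the grid size are assumptions chosen only for illustration.

```python
import numpy as np

def sample_stationary_field(psd, rng):
    """Draw one sample of a stationary Gaussian field with the given power spectrum.

    White noise is shaped in the Fourier domain by sqrt(psd), so the cost is
    two FFTs instead of factorizing a full covariance matrix.
    """
    noise = rng.standard_normal(psd.shape)
    shaped = np.sqrt(psd) * np.fft.fft2(noise)
    return np.fft.ifft2(shaped)

n = 64
fx = np.fft.fftfreq(n)
fxx, fyy = np.meshgrid(fx, fx, indexing="ij")
# Hypothetical power spectrum (Gaussian-shaped), NOT a turbulence model.
psd = np.exp(-(fxx**2 + fyy**2) / (2 * 0.05**2))

rng = np.random.default_rng(0)
field_complex = sample_stationary_field(psd, rng)
field = field_complex.real  # imaginary part is round-off for a symmetric spectrum
```

Because the spectrum is real and symmetric, the shaped noise stays real up to floating-point error, which is why only the real part is kept.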

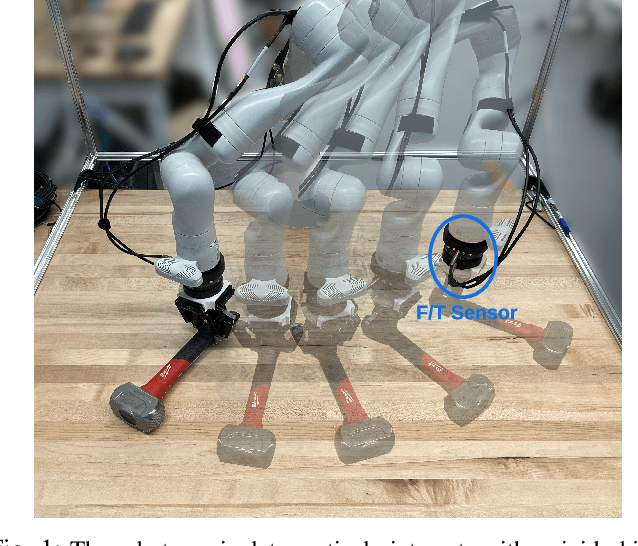

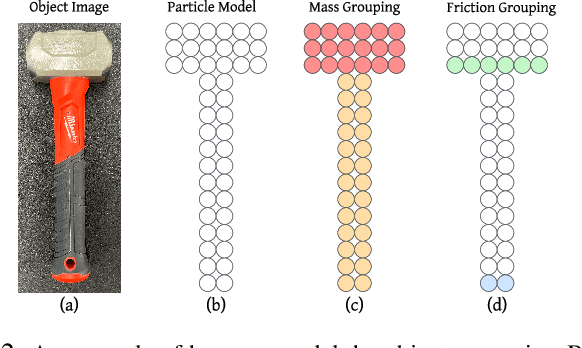

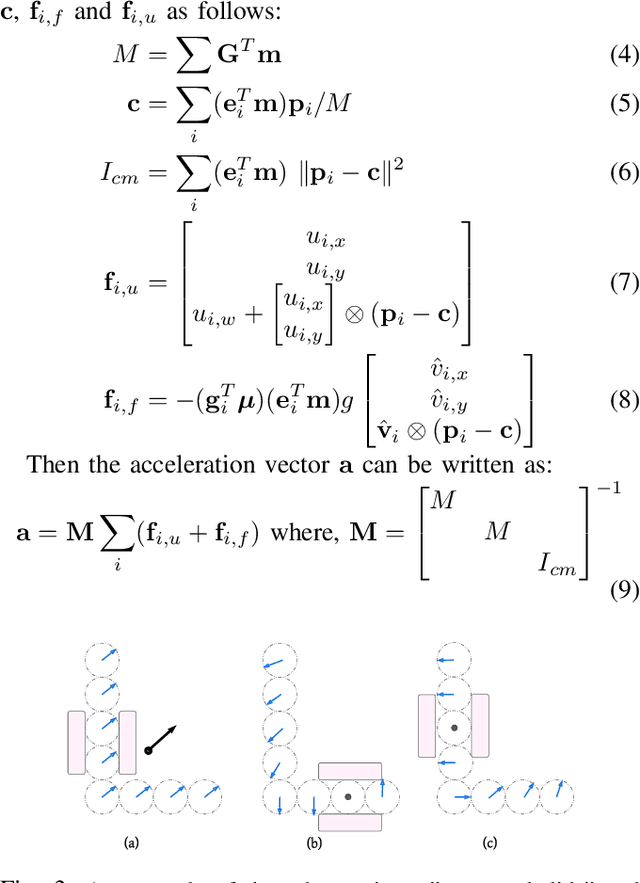

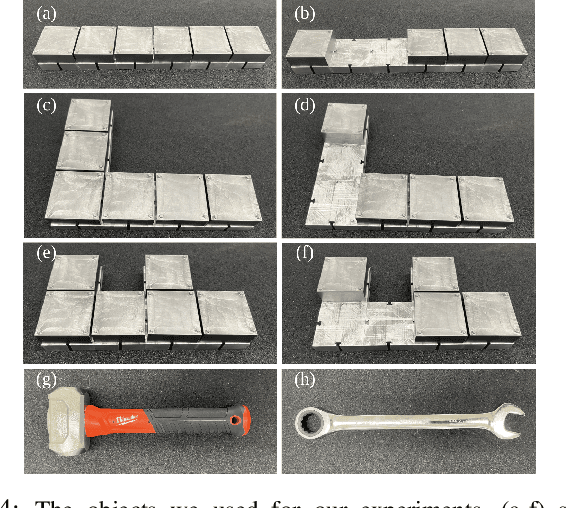

Active Mass Distribution Estimation from Tactile Feedback

Mar 02, 2023

In this work, we present a method to estimate the mass distribution of a rigid object through robotic interactions and tactile feedback. This is a challenging problem because of the complexity of physical dynamics modeling and the action dependencies across the model parameters. We propose a sequential estimation strategy combined with a set of robot action selection rules based on the analytical formulation of a discrete-time dynamics model. To evaluate the performance of our approach, we also manufactured re-configurable block objects that allow us to modify the object mass distribution while having access to the ground truth values. We compare our approach against multiple baselines and show that our approach can estimate the mass distribution with around 10% error, while the baselines have errors ranging from 18% to 68%.

Efficient subtyping of ovarian cancer histopathology whole slide images using active sampling in multiple instance learning

Feb 22, 2023

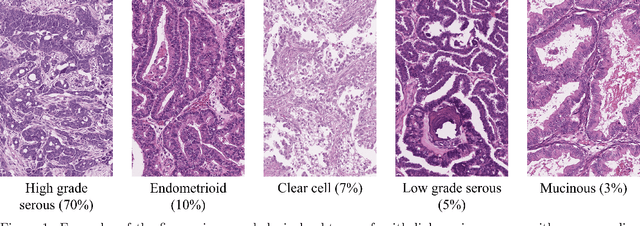

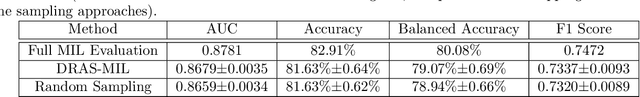

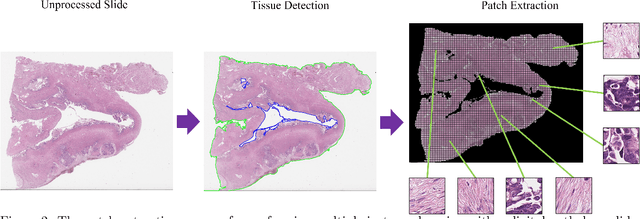

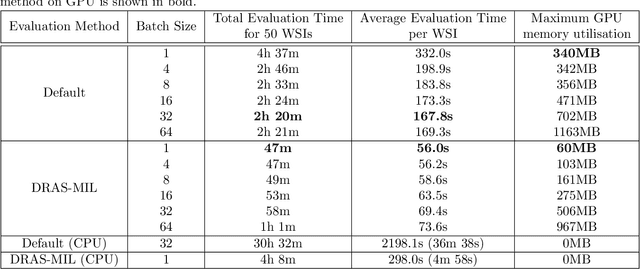

Weakly-supervised classification of histopathology slides is a computationally intensive task, with a typical whole slide image (WSI) containing billions of pixels to process. We propose Discriminative Region Active Sampling for Multiple Instance Learning (DRAS-MIL), a computationally efficient slide classification method using attention scores to focus sampling on highly discriminative regions. We apply this to the diagnosis of ovarian cancer histological subtypes, which is an essential part of the patient care pathway as different subtypes have different genetic and molecular profiles, treatment options, and patient outcomes. We use a dataset of 714 WSIs acquired from 147 epithelial ovarian cancer patients at Leeds Teaching Hospitals NHS Trust to distinguish the most common subtype, high-grade serous carcinoma, from the other four subtypes (low-grade serous, endometrioid, clear cell, and mucinous carcinomas) combined. We demonstrate that DRAS-MIL can achieve similar classification performance to exhaustive slide analysis, with a 3-fold cross-validated AUC of 0.8679 compared to 0.8781 with standard attention-based MIL classification. Our approach uses at most 18% as much memory as the standard approach, while taking 33% of the time when evaluating on a GPU and only 14% on a CPU alone. Reducing prediction time and memory requirements may benefit clinical deployment and the democratisation of AI, reducing the extent to which computational hardware limits end-user adoption.
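As a rough illustration of the attention scores mentioned above, the following sketch implements generic attention-based MIL pooling over patch embeddings and then picks the highest-scoring patches. It is not the DRAS-MIL implementation: the function name `attention_pool`, the random parameters `V` and `w`, and the top-10 selection are assumptions made for the example.

```python
import numpy as np

def attention_pool(H, V, w):
    """Attention-based MIL pooling.

    H: (n_instances, d) patch embeddings; V: (L, d) and w: (L,) are
    attention parameters (learned in practice, random here).
    Returns the bag embedding and the per-instance attention weights.
    """
    scores = np.tanh(H @ V.T) @ w
    scores = scores - scores.max()      # subtract max for a stable softmax
    attn = np.exp(scores)
    attn = attn / attn.sum()
    return attn @ H, attn

rng = np.random.default_rng(0)
H = rng.standard_normal((100, 16))      # hypothetical embeddings of 100 patches
V = rng.standard_normal((8, 16))
w = rng.standard_normal(8)

bag, attn = attention_pool(H, V, w)
# Indices of the 10 patches with the highest attention; a sampling scheme
# could concentrate further patch draws around such regions.
top = np.argsort(attn)[-10:]
```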

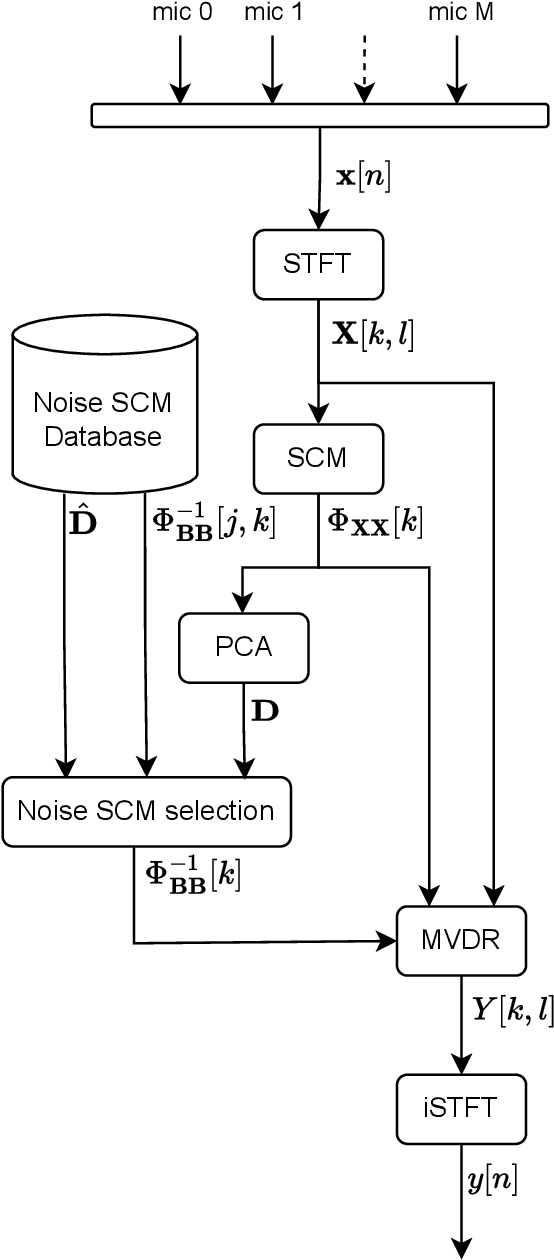

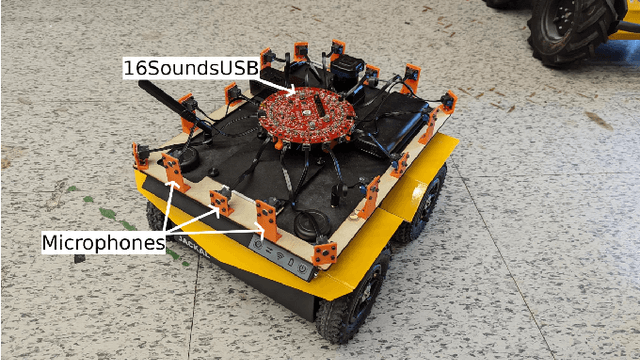

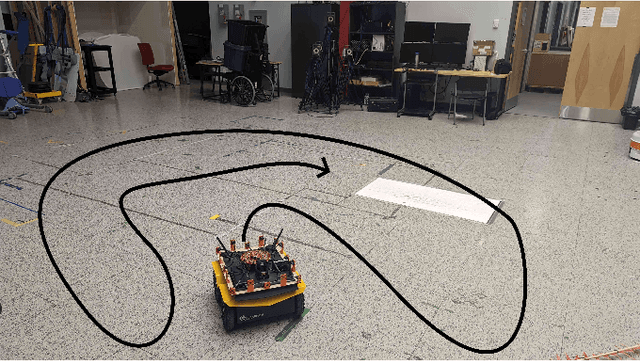

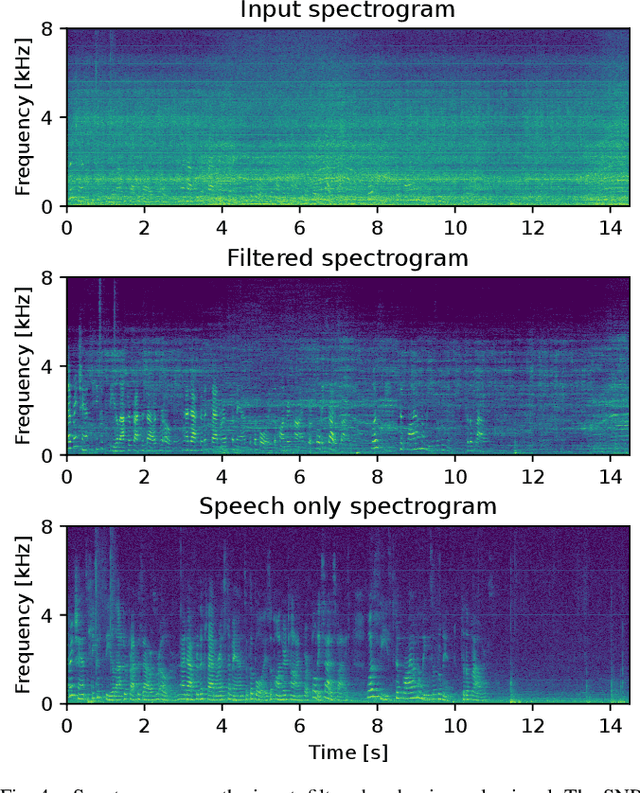

Ego-noise reduction of a mobile robot using noise spatial covariance matrix learning and minimum variance distortionless response

Mar 07, 2023

The performance of speech and event recognition systems has improved significantly in recent years thanks to deep learning methods. However, some of these tasks remain challenging when algorithms are deployed on robots, because the mechanical noise and electrical interference generated by their actuators were not seen while training the neural networks. Ego-noise reduction as a preprocessing step can therefore help solve this issue when using pre-trained speech and event recognition algorithms on robots. In this paper, we propose a new method to reduce ego-noise using only a microphone array and less than two minutes of noise recordings. Using Principal Component Analysis (PCA), the best covariance matrix candidate is selected from a dictionary created online during calibration and used with the Minimum Variance Distortionless Response (MVDR) beamformer. Results show that the proposed method runs in real-time, improves the signal-to-distortion ratio (SDR) by up to 10 dB, decreases the word error rate (WER) by 55% in some cases and increases the Average Precision (AP) of event detection by up to 0.2.
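For readers unfamiliar with MVDR, the sketch below computes the standard MVDR weights $w = R^{-1}d / (d^H R^{-1} d)$ for a single frequency bin from a noise spatial covariance matrix $R$ and a steering vector $d$. It covers only the textbook beamformer, not the PCA-based covariance selection described above. The simulated noise, the steering vector, and the diagonal loading term are assumptions for illustration.

```python
import numpy as np

def mvdr_weights(noise_cov, steering, diag_load=1e-6):
    """MVDR weights w = R^{-1} d / (d^H R^{-1} d) for one frequency bin."""
    n = noise_cov.shape[0]
    # Small diagonal loading for numerical stability (an assumption, not from the paper).
    eps = diag_load * np.trace(noise_cov).real / n
    r = noise_cov + eps * np.eye(n)
    r_inv_d = np.linalg.solve(r, steering)
    return r_inv_d / (steering.conj() @ r_inv_d)

rng = np.random.default_rng(0)
n_mics, n_frames = 4, 500
# Hypothetical noise-only snapshots for one frequency bin, shape (n_mics, n_frames).
noise = rng.standard_normal((n_mics, n_frames)) + 1j * rng.standard_normal((n_mics, n_frames))
noise_cov = noise @ noise.conj().T / n_frames

# Hypothetical steering vector toward the target source.
steering = np.exp(-2j * np.pi * 0.1 * np.arange(n_mics))

w = mvdr_weights(noise_cov, steering)
response = w.conj() @ steering                      # distortionless constraint: w^H d = 1
p_mvdr = (w.conj() @ noise_cov @ w).real            # residual noise power with MVDR
w_das = steering / n_mics                           # delay-and-sum weights for comparison
p_das = (w_das.conj() @ noise_cov @ w_das).real
```

The target direction passes with unit gain while the noise power at the output is no larger than with delay-and-sum weights, up to the small loading term.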

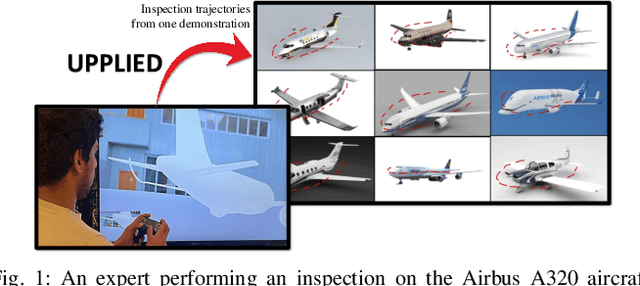

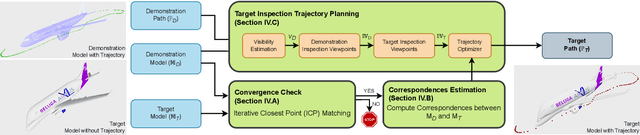

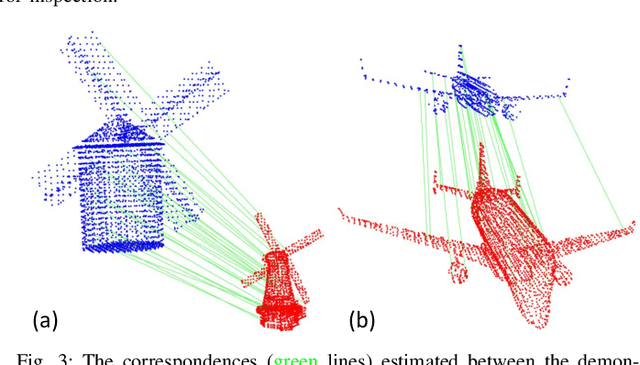

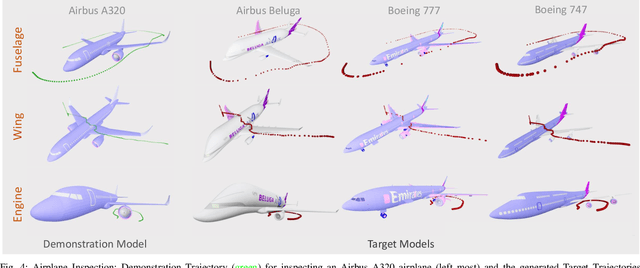

UPPLIED: UAV Path Planning for Inspection through Demonstration

Mar 07, 2023

In this paper, a new demonstration-based path-planning framework for the visual inspection of large structures using UAVs is proposed. We introduce UPPLIED: UAV Path PLanning for InspEction through Demonstration, which utilizes a demonstrated trajectory to generate a new trajectory to inspect other structures of the same kind. The demonstrated trajectory can inspect specific regions of the structure and the new trajectory generated by UPPLIED inspects similar regions in the other structure. The proposed method generates inspection points from the demonstrated trajectory and uses standardization to translate those inspection points to inspect the new structure. Finally, the position of these inspection points is optimized to refine their view. Numerous experiments were conducted with various structures and the proposed framework was able to generate inspection trajectories of various kinds for different structures based on the demonstration. The trajectories generated match with the demonstrated trajectory in geometry and at the same time inspect the regions inspected by the demonstration trajectory with minimum deviation. The experimental video of the work can be found at https://youtu.be/YqPx-cLkv04.

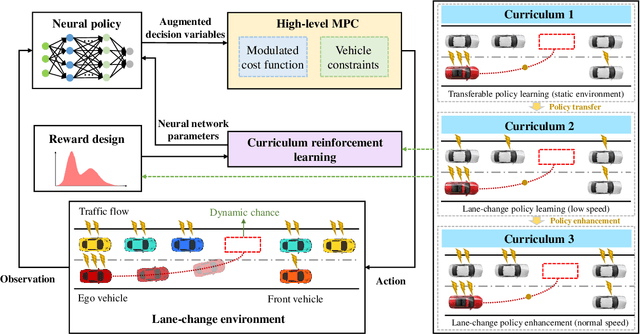

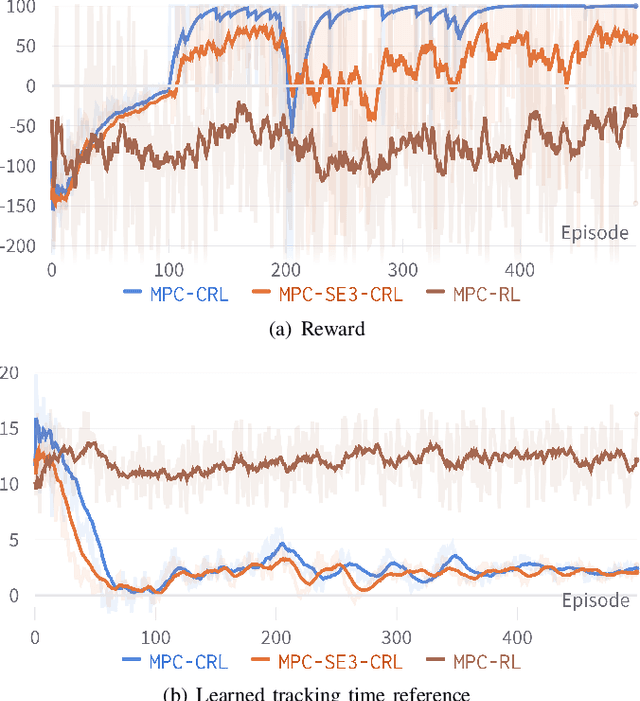

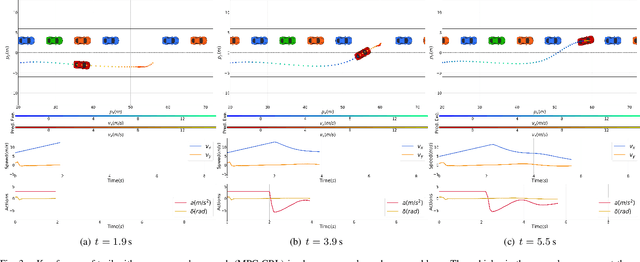

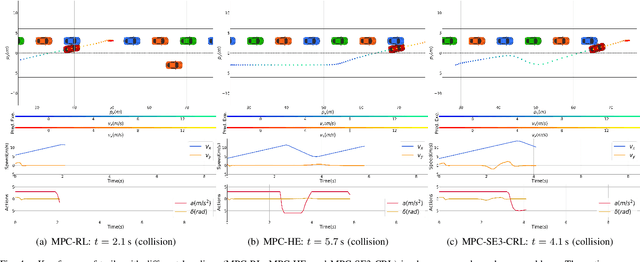

Chance-Aware Lane Change with High-Level Model Predictive Control Through Curriculum Reinforcement Learning

Mar 07, 2023

Lane change in dense traffic is considered a challenging problem that typically requires the recognition of an opportune and appropriate time for maneuvers. In this work, we propose a chance-aware lane-change strategy with high-level model predictive control (MPC) through curriculum reinforcement learning (CRL). The embodied high-level MPC in our proposed framework is parameterized with augmented decision variables, where full-state references and regulatory factors concerning their importance are introduced. In this sense, improved adaptiveness to dense and dynamic environments with high complexity is exhibited. Furthermore, to improve the convergence speed and ensure a high-quality policy, effective curriculum design is integrated into the reinforcement learning (RL) framework with policy transfer and enhancement. With comprehensive experiments towards the chance-aware lane-change scenario, accelerated convergence speed and improved reward performance are demonstrated through comparisons with representative baseline methods. It is noteworthy that, given a narrow chance in the dense and dynamic traffic flow, the proposed approach generates high-quality lane-change maneuvers such that the vehicle merges into the traffic flow with a high success rate.

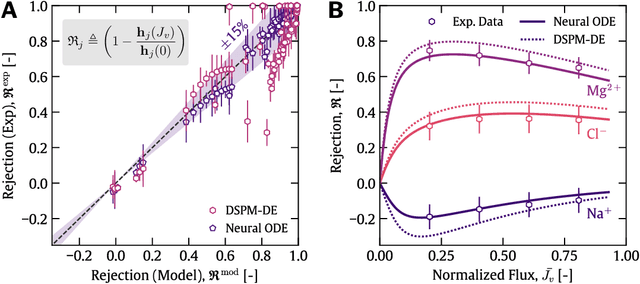

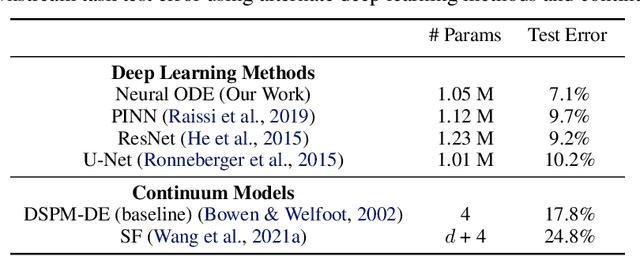

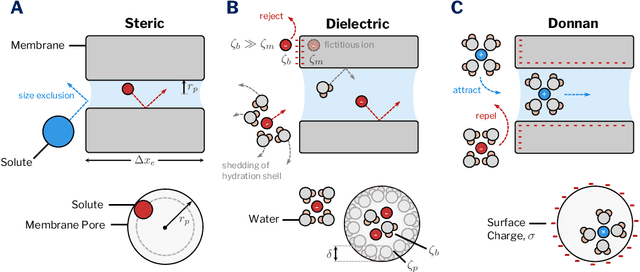

Physics-constrained neural differential equations for learning multi-ionic transport

Mar 07, 2023

Continuum models for ion transport through polyamide nanopores require solving partial differential equations (PDEs) through complex pore geometries. Resolving spatiotemporal features at this length and time-scale can make solving these equations computationally intractable. In addition, mechanistic models frequently require functional relationships between ion interaction parameters under nano-confinement, which are often too challenging to measure experimentally or know a priori. In this work, we develop the first physics-informed deep learning model to learn ion transport behaviour across polyamide nanopores. The proposed architecture leverages neural differential equations in conjunction with classical closure models as inductive biases directly encoded into the neural framework. The neural differential equations are pre-trained on simulated data from continuum models and fine-tuned on independent experimental data to learn ion rejection behaviour. Gaussian noise augmentations from experimental uncertainty estimates are also introduced into the measured data to improve model generalization. Our approach is compared to other physics-informed deep learning models and shows strong agreement with experimental measurements across all studied datasets.

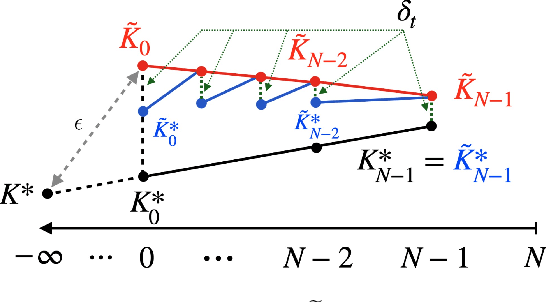

Revisiting LQR Control from the Perspective of Receding-Horizon Policy Gradient

Feb 25, 2023

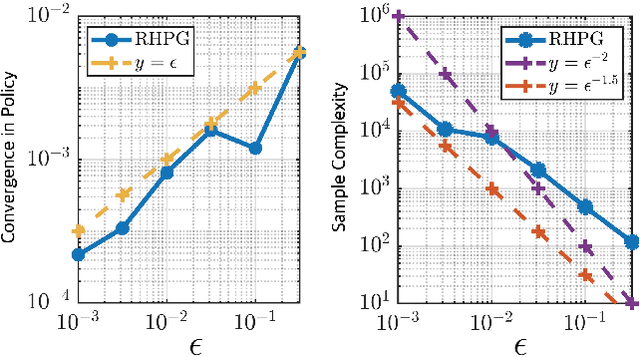

We revisit in this paper the discrete-time linear quadratic regulator (LQR) problem from the perspective of receding-horizon policy gradient (RHPG), a newly developed model-free learning framework for control applications. We provide a fine-grained sample complexity analysis for RHPG to learn a control policy that is both stabilizing and $\epsilon$-close to the optimal LQR solution, and our algorithm does not require knowing a stabilizing control policy for initialization. Combined with the recent application of RHPG in learning the Kalman filter, we demonstrate the general applicability of RHPG in linear control and estimation with streamlined analyses.
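For orientation, the optimal LQR solution that a learned policy is compared against can be computed, when the model is known, by the classical backward Riccati recursion. The sketch below shows that model-based dynamic-programming baseline only. It is not the model-free RHPG algorithm, and the double-integrator system, cost matrices, and horizon are assumptions chosen for illustration.

```python
import numpy as np

def lqr_backward_riccati(A, B, Q, R, horizon):
    """Finite-horizon discrete-time LQR via backward Riccati recursion.

    Returns the time-ordered feedback gains K_0..K_{N-1} (u_t = -K_t x_t)
    and the cost-to-go matrix P at time 0.
    """
    P = Q.copy()                      # terminal cost P_N = Q
    gains = []
    for _ in range(horizon):
        K = np.linalg.solve(R + B.T @ P @ B, B.T @ P @ A)
        P = Q + A.T @ P @ (A - B @ K)
        gains.append(K)
    gains.reverse()                   # computed backward in time, stored forward
    return gains, P

# Hypothetical double-integrator system.
A = np.array([[1.0, 1.0], [0.0, 1.0]])
B = np.array([[0.0], [1.0]])
Q = np.eye(2)
R = np.array([[1.0]])

gains, P = lqr_backward_riccati(A, B, Q, R, horizon=200)
K0 = gains[0]
# Spectral radius of the closed loop under the first-step gain.
rho = np.max(np.abs(np.linalg.eigvals(A - B @ K0)))
```

With a long horizon the first-step gain approaches the stationary infinite-horizon gain, so a spectral radius below one indicates a stabilizing policy even though the open-loop system here is not asymptotically stable.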

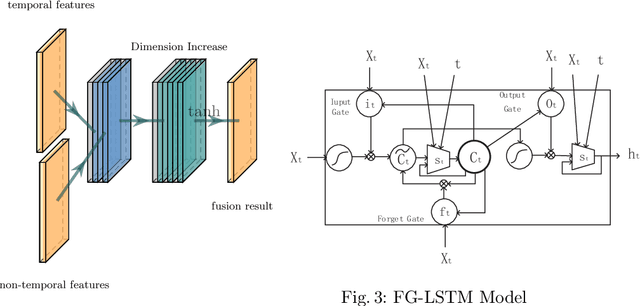

Features Fusion Framework for Multimodal Irregular Time-series Events

Sep 05, 2022

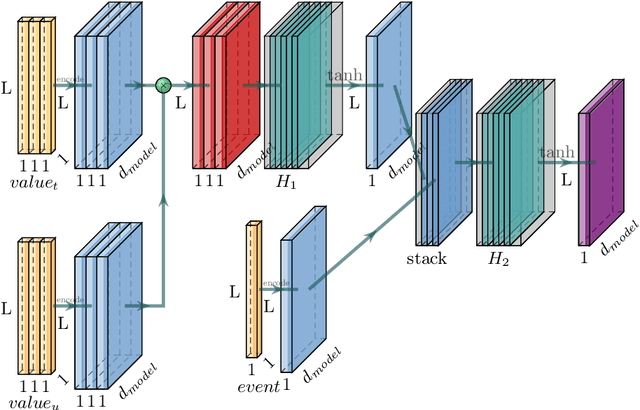

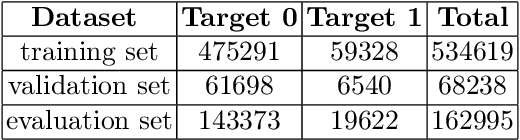

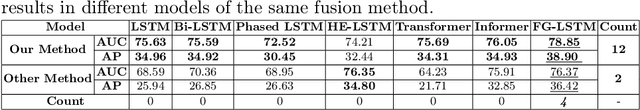

Some data from multiple sources can be modeled as multimodal time-series events which have different sampling frequencies, data compositions, temporal relations and characteristics. Different types of events have complex nonlinear relationships, and the time of each event is irregular. Neither the classical Recurrent Neural Network (RNN) model nor the current state-of-the-art Transformer model can deal with these features well. In this paper, a features fusion framework for multimodal irregular time-series events is proposed based on Long Short-Term Memory (LSTM) networks. Firstly, the complex features are extracted according to the irregular patterns of different events. Secondly, the nonlinear correlations and complex temporal dependencies between these features are captured and fused into a tensor. Finally, a feature gate is used to control the access frequency of different tensors. Extensive experiments on the MIMIC-III dataset demonstrate that the proposed framework significantly outperforms existing methods in terms of AUC (the area under the Receiver Operating Characteristic curve) and AP (Average Precision).
