"Time": models, code, and papers

Image Deblurring by Exploring In-depth Properties of Transformer

Mar 24, 2023

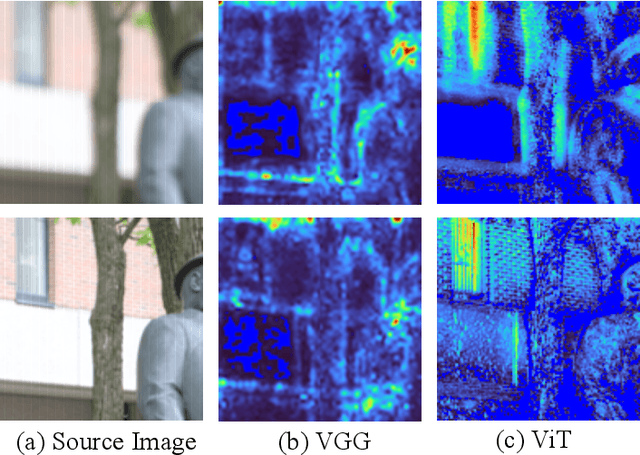

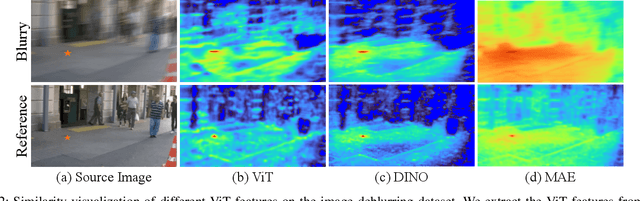

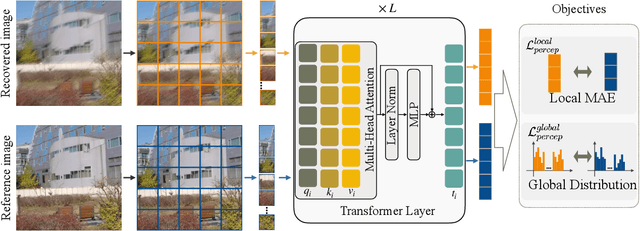

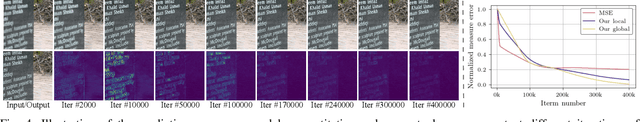

Image deblurring continues to achieve impressive performance with the development of generative models. Nonetheless, a troublesome problem remains if one wants to improve the perceptual quality and the quantitative scores of the recovered image at the same time. In this study, drawing inspiration from research on transformer properties, we introduce pretrained transformers to address this problem. In particular, we leverage deep features extracted from a pretrained vision transformer (ViT) to encourage recovered images to be sharp without sacrificing the performance measured by the quantitative metrics. The pretrained transformer can capture the global topological relations (i.e., self-similarity) of an image, and we observe that the captured topological relations of the sharp image change when blur occurs. By comparing the transformer features between the recovered image and the target one, the pretrained transformer provides high-resolution blur-sensitive semantic information, which is critical in measuring the sharpness of the deblurred image. On the basis of these advantages, we present two types of novel perceptual losses to guide image deblurring. One regards the features as vectors and computes the discrepancy between representations extracted from the recovered image and the target one in Euclidean space. The other considers the features extracted from an image as a distribution and compares the distribution discrepancy between the recovered image and the target one. We demonstrate the effectiveness of transformer properties in improving the perceptual quality while not sacrificing the quantitative scores (PSNR) over the most competitive models, such as Uformer, Restormer, and NAFNet, on defocus deblurring and motion deblurring tasks.

Non-invasive urinary bladder volume estimation with artefact-suppressed bio-impedance measurements

Mar 24, 2023

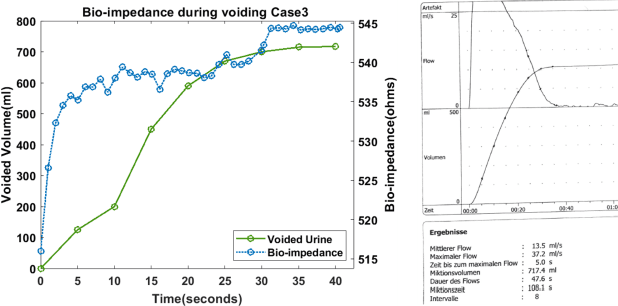

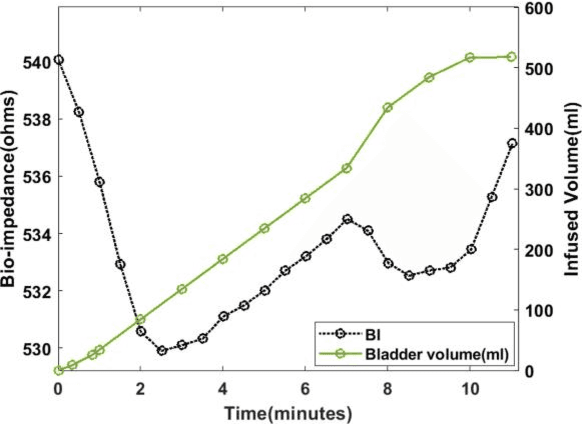

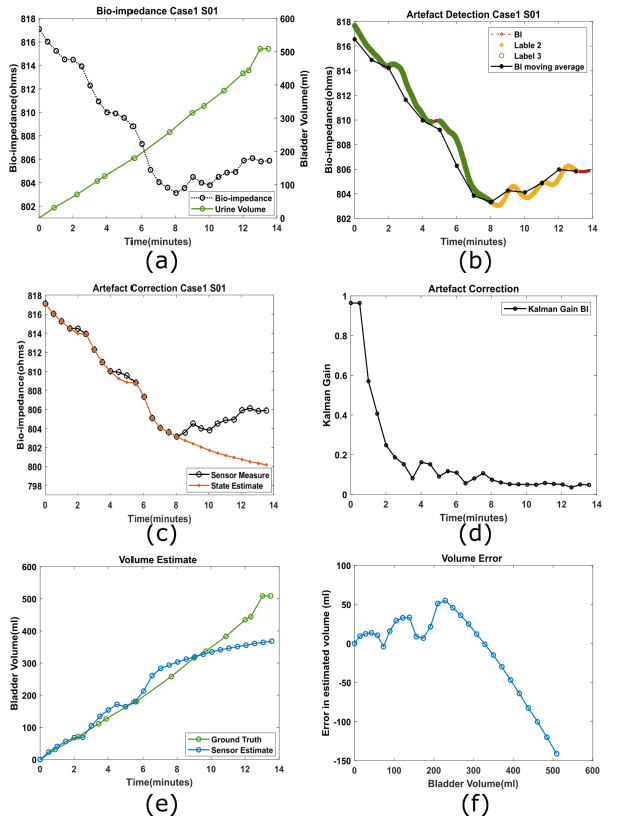

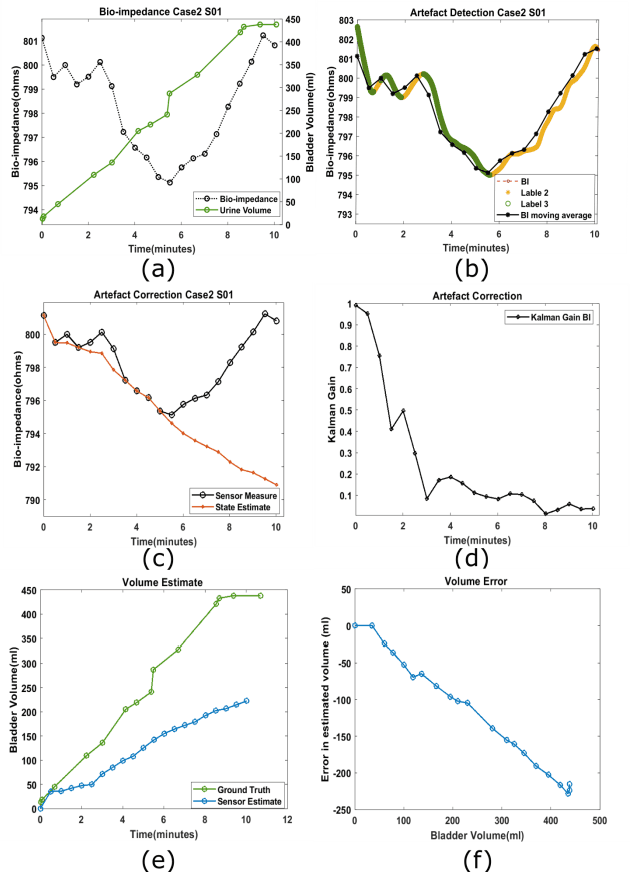

Urine output is a vital parameter to gauge kidney health. Current monitoring methods include manually written records, invasive urinary catheterization or ultrasound measurements performed by highly skilled personnel. Catheterization bears high risks of infection, while intermittent ultrasound measurements and manual recording are time-consuming and might miss early signs of kidney malfunction. Bioimpedance (BI) measurements may serve as a non-invasive alternative for measuring urine volume in vivo. However, limited robustness has prevented its clinical translation. Here, a deep learning-based algorithm is presented that processes the local BI of the lower abdomen and suppresses artefacts to measure the bladder volume quantitatively, non-invasively and without the continuous need for additional personnel. A tetrapolar BI wearable system called ANUVIS was used to collect continuous bladder volume data from three healthy subjects to demonstrate feasibility of operation, while clinical gold standards of urodynamic (n=6) and uroflowmetry tests (n=8) provided the ground truth. An optimized location for electrode placement and a model for the change in BI with changing bladder volume are deduced. The average error for full bladder volume estimation and for residual volume estimation was -29 ± 87.6 ml, and thus comparable to commercial portable ultrasound devices (Bland-Altman analysis showed a bias of -5.2 ml with LoA between -130.1 ml and 119.7 ml), while providing the additional benefit of hands-free, non-invasive, and continuous bladder volume estimation. The combination of the wearable BI sensor node and the presented algorithm provides an attractive alternative to the current standard of care, with potential benefits in providing insights into kidney function.
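
The Bland-Altman figures quoted in this abstract (a bias and limits of agreement, LoA) can be illustrated with a short sketch. It assumes the common convention that the bias is the mean of the paired differences and the LoA are the bias plus or minus 1.96 sample standard deviations; the abstract does not state which convention the authors used, although its reported bias of -5.2 ml is the midpoint of its reported limits. The function name and the volumes below are hypothetical and are not data from the study.

```python
from statistics import mean, stdev


def bland_altman(estimates, references, z=1.96):
    """Return (bias, lower_loa, upper_loa) for paired measurements.

    Assumes the usual convention: bias = mean difference,
    limits of agreement = bias +/- z * sample standard deviation.
    """
    if len(estimates) != len(references):
        raise ValueError("paired measurements must have equal length")
    diffs = [e - r for e, r in zip(estimates, references)]
    bias = mean(diffs)
    spread = z * stdev(diffs)  # stdev() is the sample standard deviation
    return bias, bias - spread, bias + spread


# Hypothetical bladder volumes in ml (illustrative only, not study data).
estimated_ml = [310.0, 95.0, 420.0, 150.0, 260.0]
reference_ml = [330.0, 80.0, 450.0, 170.0, 240.0]

bias, lower, upper = bland_altman(estimated_ml, reference_ml)
print(f"bias={bias:.1f} ml, LoA=[{lower:.1f}, {upper:.1f}] ml")
```

By construction the limits are symmetric about the bias, so the midpoint of the two limits should always reproduce the bias.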

4D iRIOM: 4D Imaging Radar Inertial Odometry and Mapping

Mar 24, 2023

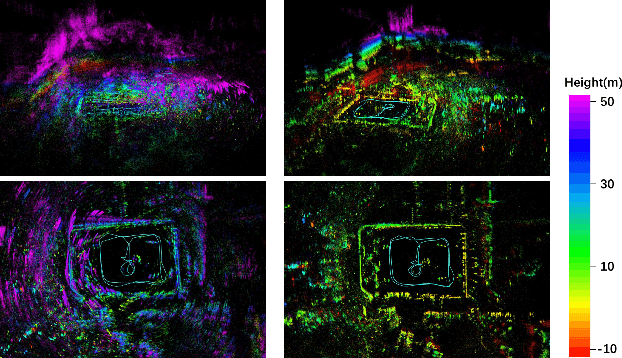

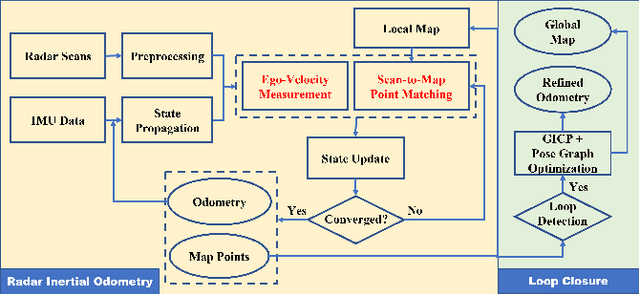

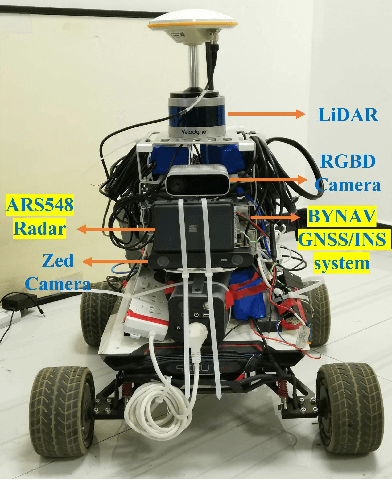

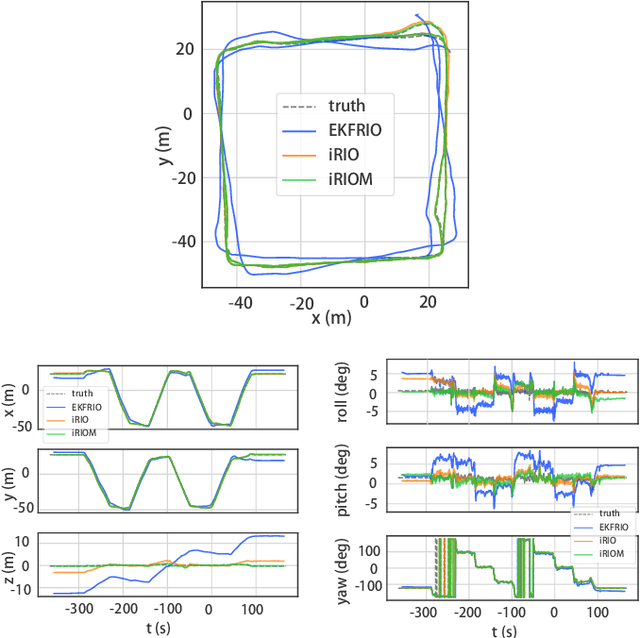

Millimeter wave radar can measure distances, directions, and Doppler velocity for objects in harsh conditions such as fog. The 4D imaging radar, with both vertical and horizontal data resembling an image, can also measure objects' height. Previous studies have used 3D radars for ego-motion estimation. However, few methods have leveraged the rich data of imaging radars, and they usually omitted the mapping aspect, which is affected by radar multipath returns, thus leading to inferior odometry accuracy. This paper presents a real-time imaging radar inertial odometry and mapping method, iRIOM, based on the submap concept. To fend off moving objects and multipath reflections, the iteratively reweighted least squares method is used to obtain the ego-velocity from a single scan. To measure the agreement between sparse non-repetitive radar scan points and submap points, the distribution-to-multi-distribution distance for matches is adopted. The ego-velocity and scan-to-submap matches are fused with the 6D inertial data by an iterative extended Kalman filter to get the platform's 3D position and orientation. A loop closure module is also developed to curb the odometry module's drift. To our knowledge, iRIOM based on the two modules is the first 4D radar inertial SLAM system. On our and third-party data, we show iRIOM's favorable odometry accuracy and mapping consistency against the FastLIO-SLAM and the EKFRIO. Also, the ablation study reveals the benefit of inertial data versus the constant velocity model, the scan-to-submap matching versus the scan-to-scans matching, and loop closure.
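
The idea of estimating ego-velocity from a single scan with iteratively reweighted least squares (IRLS) can be sketched as follows. This is a simplified 2D illustration, not the iRIOM implementation: it assumes that a static target seen along unit direction d returns a Doppler velocity of -d · v_ego, and it uses Cauchy weights with a hand-picked scale, neither of which is specified in the abstract. All function names and data are hypothetical.

```python
import math


def solve_2x2(a11, a12, a22, g1, g2):
    """Solve the symmetric system [[a11, a12], [a12, a22]] x = [g1, g2]."""
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-12:
        raise ValueError("degenerate geometry: directions are nearly collinear")
    return ((a22 * g1 - a12 * g2) / det, (a11 * g2 - a12 * g1) / det)


def ego_velocity_irls(directions, dopplers, scale=0.2, iterations=15):
    """Estimate the 2D ego-velocity from unit directions and Doppler speeds.

    Assumed model for static points: doppler = -(d . v_ego).
    Points violating it (moving objects, multipath) are down-weighted
    with Cauchy weights; `scale` is an assumed tuning constant in m/s.
    """
    weights = [1.0] * len(dopplers)  # first pass is ordinary least squares
    v = (0.0, 0.0)
    for _ in range(iterations):
        a11 = a12 = a22 = g1 = g2 = 0.0
        for (dx, dy), vr, w in zip(directions, dopplers, weights):
            # Accumulate the weighted normal equations for d . v = -vr.
            a11 += w * dx * dx
            a12 += w * dx * dy
            a22 += w * dy * dy
            g1 += w * dx * (-vr)
            g2 += w * dy * (-vr)
        v = solve_2x2(a11, a12, a22, g1, g2)
        # Residual of each point under the current estimate, then reweight.
        weights = [
            1.0 / (1.0 + ((dx * v[0] + dy * v[1] + vr) / scale) ** 2)
            for (dx, dy), vr in zip(directions, dopplers)
        ]
    return v


# Synthetic scan: 20 static points over a 120-degree field of view.
true_v = (2.0, 0.3)
angles = [math.radians(-60 + 120 * i / 19) for i in range(20)]
directions = [(math.cos(a), math.sin(a)) for a in angles]
dopplers = [-(dx * true_v[0] + dy * true_v[1]) for dx, dy in directions]
for i in (3, 10, 16):
    dopplers[i] += 5.0  # simulated outliers, e.g. moving objects

estimate = ego_velocity_irls(directions, dopplers)
print(estimate)
```

In this sketch the first pass is plain least squares, which the outliers bias; later passes shrink their weights so that the estimate is dominated by the points consistent with a single rigid ego-motion.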

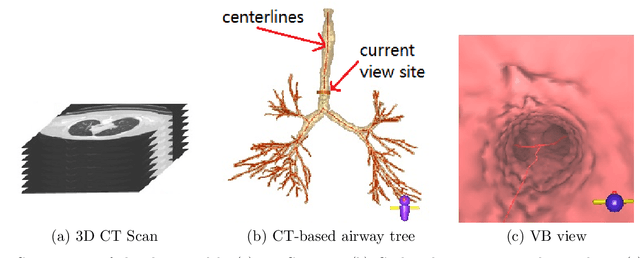

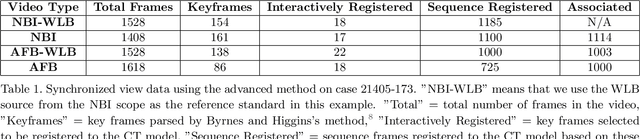

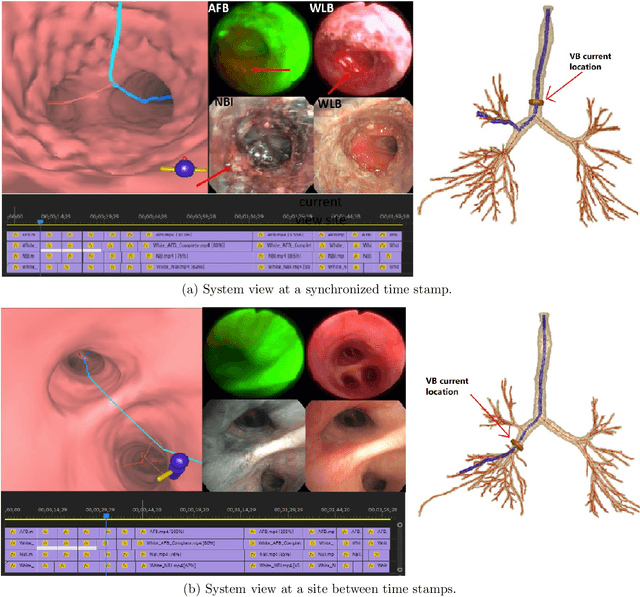

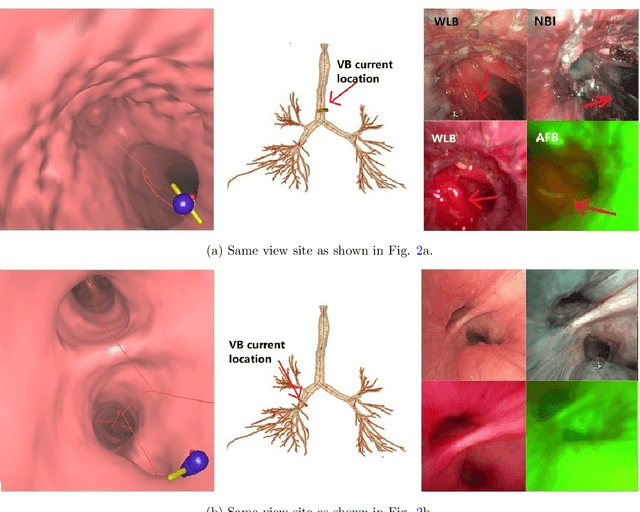

Bronchoscopic video synchronization for interactive multimodal inspection of bronchial lesions

Mar 20, 2023

With lung cancer being the most fatal cancer worldwide, it is important to detect the disease early. A potentially effective way of detecting early cancer lesions developing along the airway walls (epithelium) is bronchoscopy. To this end, developments in bronchoscopy offer three promising noninvasive modalities for imaging bronchial lesions: white-light bronchoscopy (WLB), autofluorescence bronchoscopy (AFB), and narrow-band imaging (NBI). While these modalities give complementary views of the airway epithelium, the physician must manually inspect each video stream produced by a given modality to locate the suspect cancer lesions. Unfortunately, no effort has been made to rectify this situation by providing efficient quantitative and visual tools for analyzing these video streams. This makes the lesion search process extremely time-consuming and error-prone, thereby making it impractical to utilize these rich data sources effectively. We propose a framework for synchronizing multiple bronchoscopic videos to enable an interactive multimodal analysis of bronchial lesions. Our methods first register the video streams to a reference 3D chest computed-tomography (CT) scan to produce multimodal linkages to the airway tree. Our methods then temporally correlate the videos to one another to enable synchronous visualization of the resulting multimodal data set. Pictorial and quantitative results illustrate the potential of the methods.

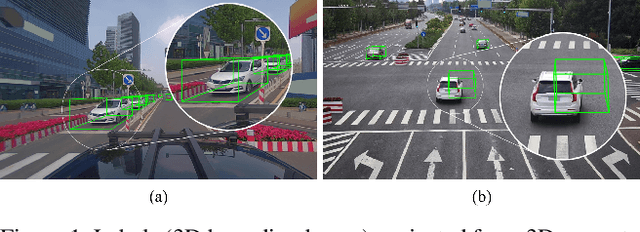

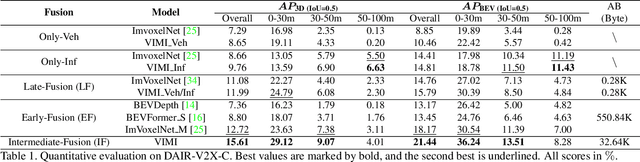

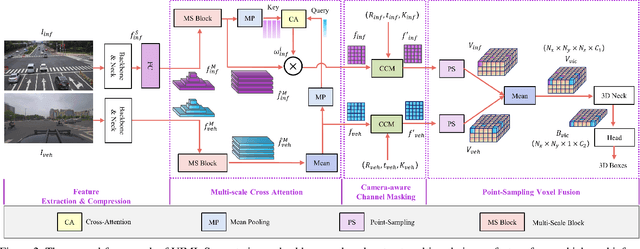

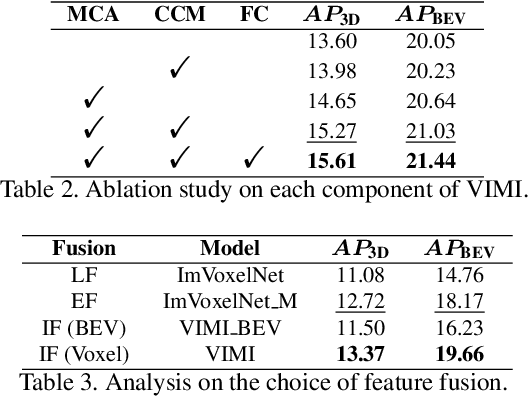

VIMI: Vehicle-Infrastructure Multi-view Intermediate Fusion for Camera-based 3D Object Detection

Mar 20, 2023

In autonomous driving, Vehicle-Infrastructure Cooperative 3D Object Detection (VIC3D) makes use of multi-view cameras from both vehicles and traffic infrastructure, providing a global vantage point with rich semantic context of road conditions beyond a single vehicle viewpoint. Two major challenges prevail in VIC3D: 1) inherent calibration noise when fusing multi-view images, caused by time asynchrony across cameras; 2) information loss when projecting 2D features into 3D space. To address these issues, we propose a novel 3D object detection framework, Vehicles-Infrastructure Multi-view Intermediate fusion (VIMI). First, to fully exploit the holistic perspectives from both vehicles and infrastructure, we propose a Multi-scale Cross Attention (MCA) module that fuses infrastructure and vehicle features on selective multi-scales to correct the calibration noise introduced by camera asynchrony. Then, we design a Camera-aware Channel Masking (CCM) module that uses camera parameters as priors to augment the fused features. We further introduce a Feature Compression (FC) module with channel and spatial compression blocks to reduce the size of transmitted features for enhanced efficiency. Experiments show that VIMI achieves 15.61% overall AP_3D and 21.44% AP_BEV on the new VIC3D dataset, DAIR-V2X-C, significantly outperforming state-of-the-art early fusion and late fusion methods with comparable transmission cost.

Generating Real-Time Strategy Game Units Using Search-Based Procedural Content Generation and Monte Carlo Tree Search

Dec 07, 2022

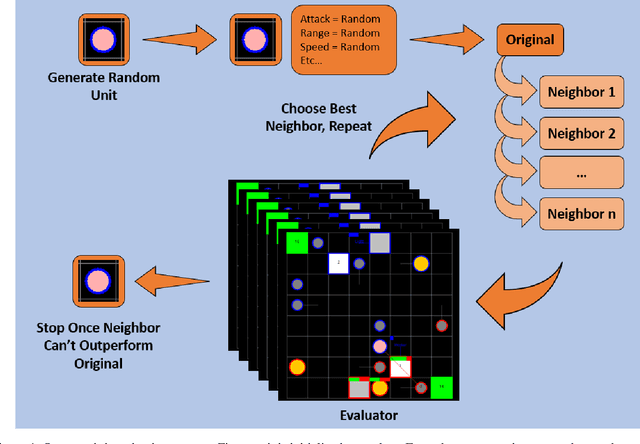

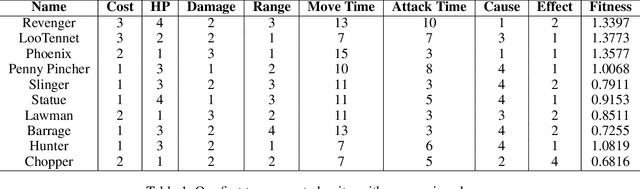

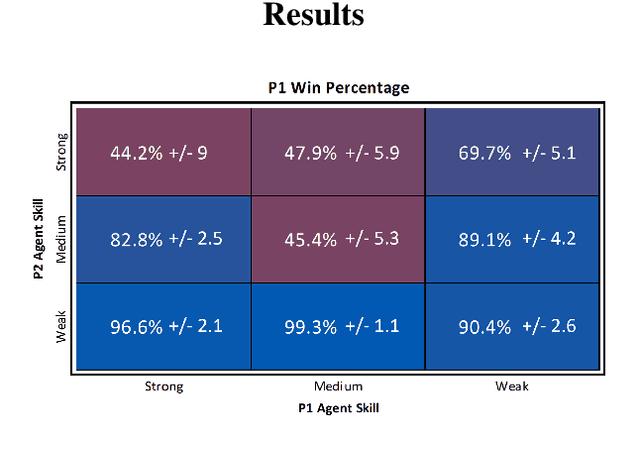

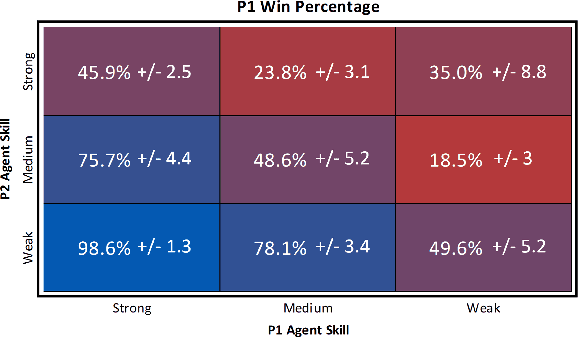

Real-Time Strategy (RTS) game unit generation is an unexplored area of Procedural Content Generation (PCG) research, which leaves the question of how to automatically generate interesting and balanced units unanswered. Creating unique and balanced units can be a difficult task when designing an RTS game, even for humans. Having an automated method of designing units could help developers speed up the creation process as well as find new ideas. In this work we propose a method of generating balanced and useful RTS units. We draw on Search-Based PCG and a fitness function based on Monte Carlo Tree Search (MCTS). We present ten units generated by our system designed to be used in the game microRTS, as well as results demonstrating that these units are unique, useful, and balanced.

ICICLE: Interpretable Class Incremental Continual Learning

Mar 14, 2023

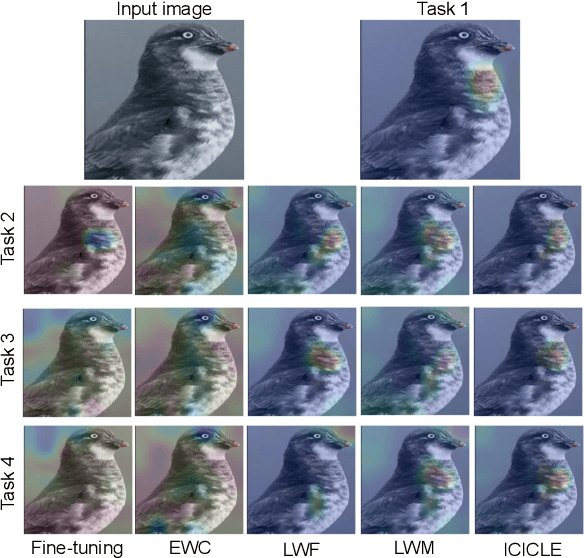

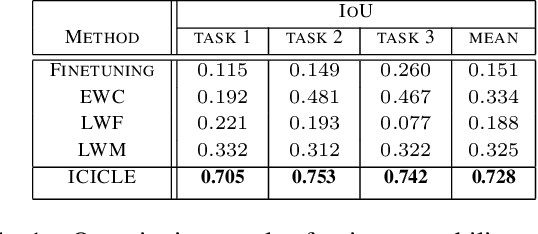

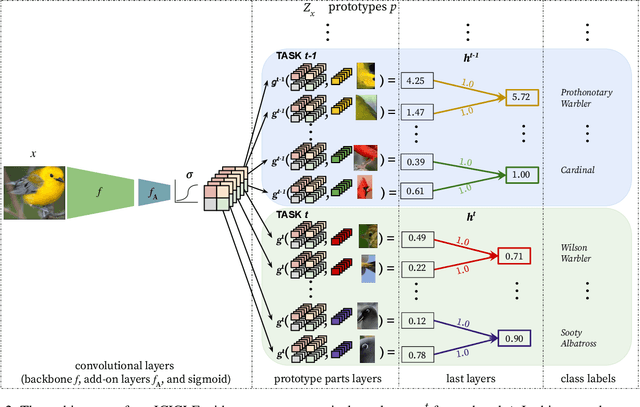

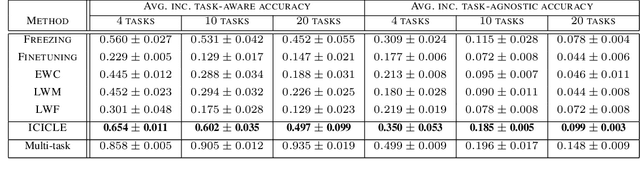

Continual learning enables incremental learning of new tasks without forgetting those previously learned, resulting in positive knowledge transfer that can enhance performance on both new and old tasks. However, continual learning poses new challenges for interpretability, as the rationale behind model predictions may change over time, leading to interpretability concept drift. We address this problem by proposing Interpretable Class-InCremental LEarning (ICICLE), an exemplar-free approach that adopts a prototypical part-based approach. It consists of three crucial novelties: interpretability regularization that distills previously learned concepts while preserving user-friendly positive reasoning; proximity-based prototype initialization strategy dedicated to the fine-grained setting; and task-recency bias compensation devoted to prototypical parts. Our experimental results demonstrate that ICICLE reduces the interpretability concept drift and outperforms the existing exemplar-free methods of common class-incremental learning when applied to concept-based models. We make the code available.

Localizing Spatial Information in Neural Spatiospectral Filters

Mar 14, 2023

Beamforming for multichannel speech enhancement relies on the estimation of spatial characteristics of the acoustic scene. In its simplest form, the delay-and-sum beamformer (DSB) introduces a time delay to all channels to align the desired signal components for constructive superposition. Recent investigations of neural spatiospectral filtering revealed that these filters can be characterized by a beampattern similar to that of traditional beamformers, which shows that artificial neural networks can learn and explicitly represent spatial structure. Using the Complex-valued Spatial Autoencoder (COSPA) as an exemplary neural spatiospectral filter for multichannel speech enhancement, we investigate where and how such networks represent spatial information. We show via clustering that for COSPA the spatial information is represented by the features generated by a gated recurrent unit (GRU) layer that has access to all channels simultaneously, and that these features are not source-dependent but only direction-of-arrival-dependent.
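
The delay-and-sum beamformer mentioned in this abstract can be illustrated with a minimal sketch. It assumes the per-channel delays are already known and are whole numbers of samples; practical beamformers typically need fractional delays and a delay estimator, which are omitted here. The function name and the two-microphone signal are hypothetical.

```python
import math


def delay_and_sum(channels, delays):
    """Align each channel by its known integer sample delay, then average.

    channels: list of equal-rate sample lists, one per microphone.
    delays:   arrival delay of the desired signal in each channel, in samples.
    """
    # Only keep output samples for which every shifted channel has data.
    n = min(len(ch) - d for ch, d in zip(channels, delays))
    return [
        sum(ch[t + d] for ch, d in zip(channels, delays)) / len(channels)
        for t in range(n)
    ]


# Hypothetical setup: the same sinusoid reaches mic 1 three samples after mic 0.
source = [math.sin(2 * math.pi * 0.05 * t) for t in range(200)]
mic0 = source
mic1 = [0.0] * 3 + source[:-3]

aligned = delay_and_sum([mic0, mic1], delays=[0, 3])
print(len(aligned))
```

Once the channels are aligned, the desired component adds coherently, while signals arriving from other directions (with different relative delays) would add with mismatched phase and be attenuated by the averaging.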

Class-level Multiple Distributions Representation are Necessary for Semantic Segmentation

Mar 14, 2023

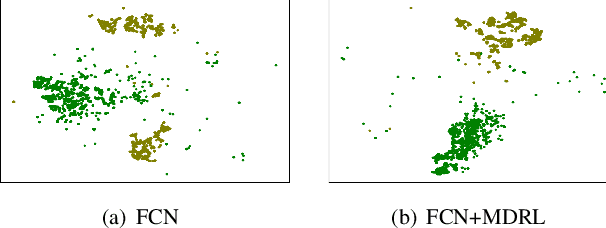

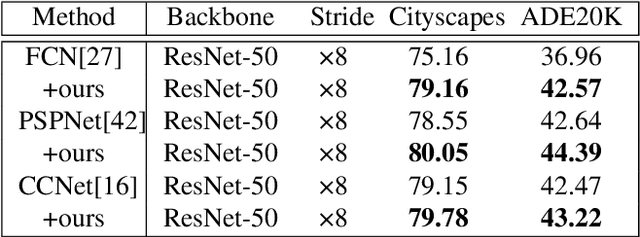

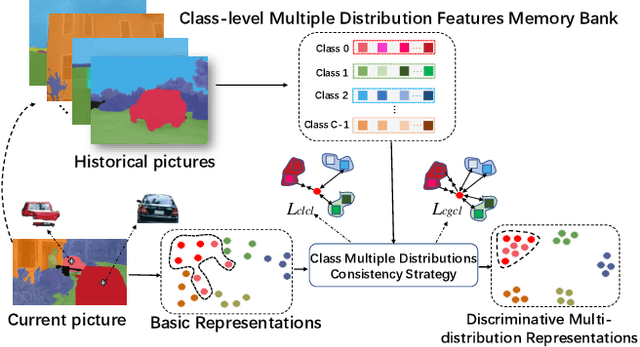

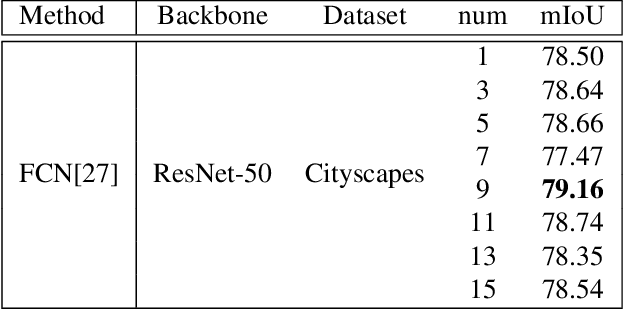

Existing approaches focus on using class-level features to improve semantic segmentation performance. How to characterize the relationships of intra-class pixels and inter-class pixels is the key to extracting discriminative, representative class-level features. In this paper, we describe intra-class variations by multiple distributions for the first time. Then, multiple distributions representation learning (MDRL) is proposed to augment the pixel representations for semantic segmentation. Meanwhile, we design a class multiple distributions consistency strategy to construct discriminative multiple distribution representations of embedded pixels. Moreover, we put forward a multiple distribution semantic aggregation module to aggregate multiple distributions of the corresponding class to enhance pixel semantic information. Our approach can be seamlessly integrated into the popular segmentation frameworks FCN/PSPNet/CCNet and achieves 5.61%/1.75%/0.75% mIoU improvements on ADE20K. Extensive experiments on the Cityscapes and ADE20K datasets show that our method brings significant performance improvement.

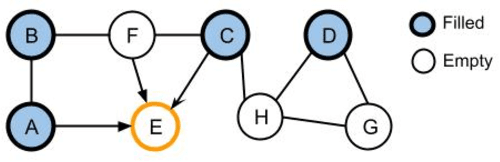

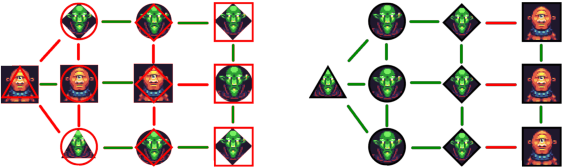

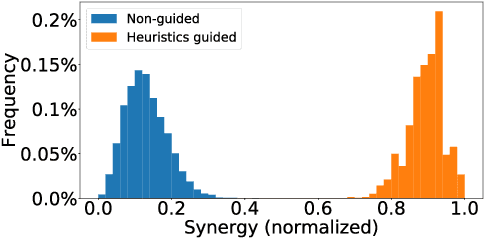

Automated Graph Genetic Algorithm based Puzzle Validation for Faster Game Design

Feb 21, 2023

Many games rely on constantly creating new and engaging content to maintain the interest of their player base. One such example is puzzle games, in which there is a recurrent need to create new puzzles. Creating new puzzles requires guaranteeing that they are solvable and interesting to players, both of which require significant time from the designers. Automatic validation of puzzles provides designers with a significant time saving and a potential boost in quality. Automation allows puzzle designers to estimate different properties, increase the variety of constraints, and even personalize puzzles to specific players. Puzzles often have a large design space, which renders exhaustive search approaches infeasible if they require significant time. Specifically, those puzzles can be formulated as quadratic combinatorial optimization problems. This paper presents an evolutionary algorithm, empowered by expert-knowledge informed heuristics, for solving logical puzzles in video games efficiently, leading to a more efficient design process. We discuss multiple variations of hybrid genetic approaches for constraint satisfaction problems that allow us to find a diverse set of near-optimal solutions for puzzles. We demonstrate our approach on a fantasy Party Building Puzzle game, and discuss how it can be applied more broadly to other puzzles to guide designers in their creative process.
