"Time": models, code, and papers

Automatic Number Plate Recognition using Random Forest Classifier

Mar 26, 2023

Automatic Number Plate Recognition System (ANPRS) is a mass surveillance embedded system that recognizes the number plate of a vehicle. This system is generally used for traffic management applications. It must detect the number plate efficiently in noisy and low-illumination conditions, and within the required time frame. This paper proposes a number plate recognition method that processes an image of the vehicle's rear or front. After the image is captured, processing is divided into four steps: pre-processing, number plate localization, character segmentation, and character recognition. Pre-processing enhances the image for further processing, number plate localization extracts the number plate region from the image, character segmentation separates the individual characters from the extracted number plate, and character recognition identifies the optical characters using a random forest classification algorithm. Experimental results show that the accuracy of this method is 90.9%.
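
The character segmentation step can be illustrated with a vertical projection profile, a common technique for splitting a binarized plate into characters. The abstract does not say which segmentation method the paper uses, so this is a minimal sketch under that assumption: the plate is a binary image given as a list of rows, with 1 marking character pixels, and `segment_columns` and its `min_width` parameter are hypothetical names.

```python
from typing import List, Tuple


def segment_columns(binary: List[List[int]], min_width: int = 1) -> List[Tuple[int, int]]:
    """Return (start, end) column ranges (end exclusive) that contain foreground pixels.

    Assumes a binarized plate image: 1 = character pixel, 0 = background.
    """
    if not binary:
        return []
    width = len(binary[0])
    # Vertical projection: number of foreground pixels in each column.
    profile = [sum(row[c] for row in binary) for c in range(width)]
    segments: List[Tuple[int, int]] = []
    start = None
    for c, count in enumerate(profile):
        if count > 0 and start is None:
            start = c  # entering a character
        elif count == 0 and start is not None:
            if c - start >= min_width:  # drop specks narrower than min_width
                segments.append((start, c))
            start = None
    # A character touching the right border has no trailing empty column.
    if start is not None and width - start >= min_width:
        segments.append((start, width))
    return segments
```

Each returned column range would then be cropped, resized to a fixed size, and flattened into a feature vector for the random forest; scikit-learn's `RandomForestClassifier` is one readily available implementation, though the paper's features and hyperparameters are not given here.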

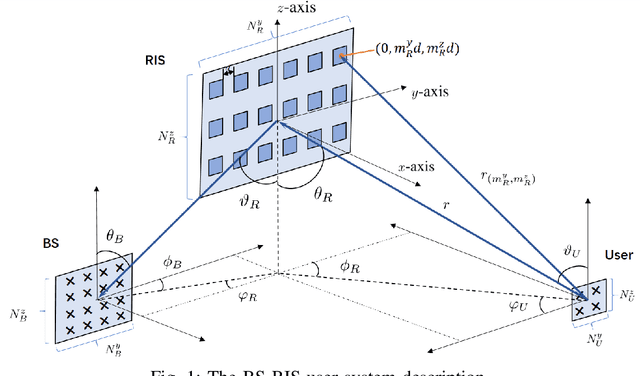

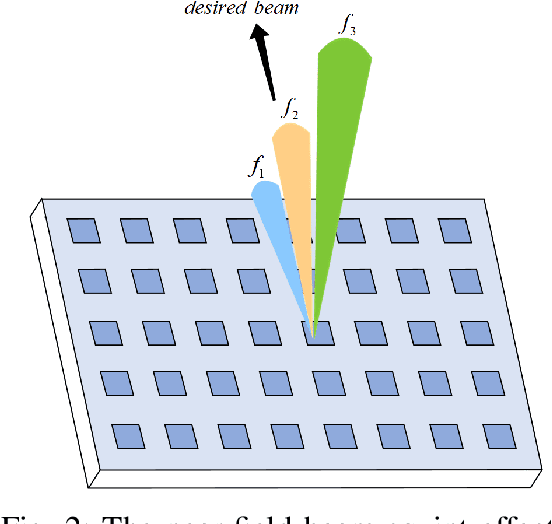

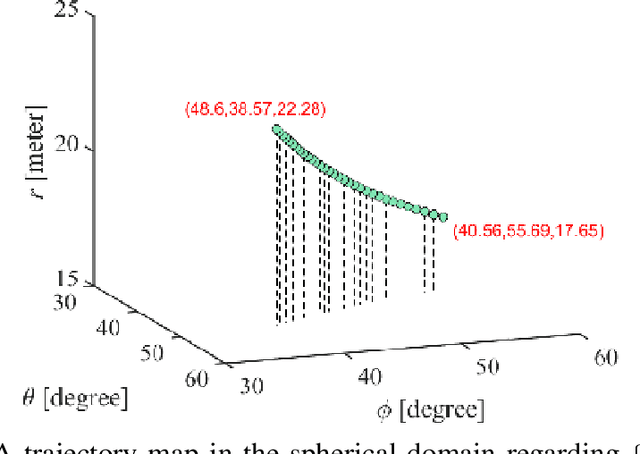

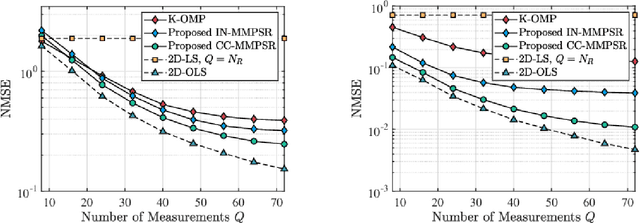

Near-Field Channel Estimation for Extremely Large-Scale Reconfigurable Intelligent Surface (XL-RIS)-Aided Wideband mmWave Systems

Apr 02, 2023

Near-field communications present new opportunities over near-field channels; however, spherical wavefront propagation makes near-field signal processing challenging. In this context, this paper proposes efficient near-field channel estimation methods for wideband MIMO mmWave systems with the aid of extremely large-scale reconfigurable intelligent surfaces (XL-RIS). For the wideband signals reflected by the analog RIS, we characterize their near-field beam squint effect in both the angle and distance domains. Based on the mathematical analysis of the near-field beam patterns over all frequencies, a wideband spherical-domain dictionary is constructed by minimizing the coherence of two arbitrary beams. In light of this, we formulate a two-dimensional compressive sensing problem to recover the channel parameters based on the spherical-domain sparsity of mmWave channels. To this end, we present a correlation coefficient-based atom matching method within our proposed multi-frequency parallelizable subspace recovery framework for efficient solutions. Additionally, we propose a two-dimensional oracle estimator as a benchmark and derive its lower bound across all subcarriers. Our findings emphasize the significance of system hyperparameters and the sensing matrix of each subcarrier in determining the accuracy of the estimation. Finally, numerical results show that our proposed method achieves considerable performance compared with the lower bound and has a time complexity linear in the number of RIS elements.
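
The correlation-based atom matching idea can be illustrated on a single subcarrier with a small polar-domain (angle and distance) dictionary built from the exact spherical-wavefront response of a uniform linear array. This is a simplified sketch, not the paper's method: it assumes a narrowband, noiseless observation, a hypothetical grid of three angles and three distances, and hypothetical helper names `near_field_response` and `match_atom`; the paper's wideband dictionary is instead designed by minimizing beam coherence across subcarriers.

```python
import cmath
import math
from typing import List, Sequence, Tuple


def near_field_response(n_elems: int, spacing: float, wavelength: float,
                        theta: float, r: float) -> List[complex]:
    """Spherical-wavefront response of a uniform linear array to a source at (r, theta)."""
    k = 2 * math.pi / wavelength
    resp = []
    for n in range(n_elems):
        delta = n - (n_elems - 1) / 2  # element index centred on the array
        # Exact distance from the source to element n (no far-field approximation).
        r_n = math.sqrt(r ** 2 + (delta * spacing) ** 2
                        - 2 * r * delta * spacing * math.sin(theta))
        resp.append(cmath.exp(-1j * k * (r_n - r)) / math.sqrt(n_elems))
    return resp


def match_atom(y: Sequence[complex],
               dictionary: Sequence[Sequence[complex]]) -> Tuple[int, float]:
    """Return the index and normalized correlation |<a, y>| / (||a|| ||y||) of the best atom."""
    y_norm = math.sqrt(sum(abs(v) ** 2 for v in y))
    best_idx, best_corr = -1, -1.0
    for idx, atom in enumerate(dictionary):
        inner = sum(a.conjugate() * v for a, v in zip(atom, y))
        a_norm = math.sqrt(sum(abs(a) ** 2 for a in atom))
        corr = abs(inner) / (a_norm * y_norm)
        if corr > best_corr:
            best_idx, best_corr = idx, corr
    return best_idx, best_corr
```

For instance, with 64 half-wavelength-spaced elements at 28 GHz, an observation equal to a scaled copy of the atom for 0° and 5 m is matched back to that atom with a normalized correlation of 1; by the Cauchy-Schwarz inequality, only atoms proportional to the observation reach that value.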

Sequence-aware item recommendations for multiply repeated user-item interactions

Apr 02, 2023

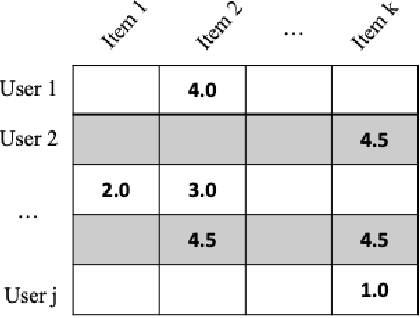

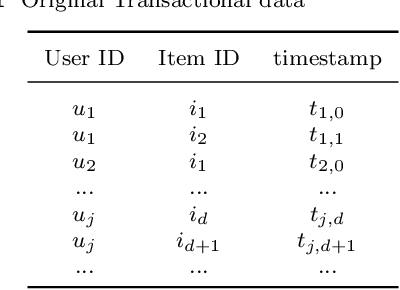

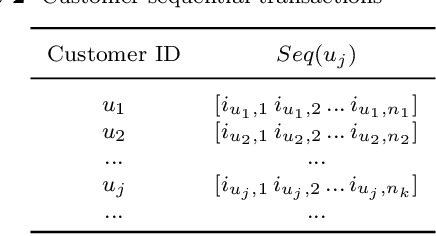

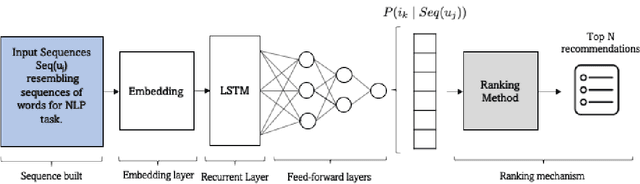

Recommender systems are one of the most successful applications of machine learning and data science. They are successful in a wide variety of application domains, including e-commerce, media streaming content, email marketing, and virtually every industry where personalisation facilitates better user experience or boosts sales and customer engagement. The main goal of these systems is to analyse past user behaviour to predict which items are of most interest to users. They are typically built using matrix-completion techniques such as collaborative filtering or matrix factorisation. However, although these approaches have achieved tremendous success in numerous real-world applications, their effectiveness is still limited when users interact multiple times with the same items, or when user preferences change over time. We were inspired by the approach that Natural Language Processing techniques take to compress, process, and analyse sequences of text. We designed a recommender system that introduces the temporal dimension into the task of item recommendation and considers each user's sequence of item interactions when making recommendations. This method is empirically shown to give highly accurate predictions of user-item interactions for all users in a retail environment without explicit feedback, while also increasing total sales by 5% and individual customer expenditure by over 50% in a live A/B test.
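
The abstract does not describe the model itself, but the data preparation it implies (keeping repeated interactions and ordering them in time per user) can be sketched. The snippet below turns hypothetical `(user, item, timestamp)` events into per-user sequences and, as a deliberately simple stand-in for the paper's NLP-inspired model, counts first-order item-to-next-item transitions to rank candidates. All function names are illustrative.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, List, Tuple


def build_sequences(events: Iterable[Tuple[str, str, int]]) -> Dict[str, List[str]]:
    """Group (user, item, timestamp) events into per-user, time-ordered item sequences.

    Repeated interactions with the same item are kept, not deduplicated.
    """
    per_user: Dict[str, List[Tuple[int, str]]] = defaultdict(list)
    for user, item, ts in events:
        per_user[user].append((ts, item))
    return {u: [item for _, item in sorted(evts)] for u, evts in per_user.items()}


def fit_transitions(sequences: Dict[str, List[str]]) -> Dict[str, Counter]:
    """Count item -> next-item transitions across all users (first-order Markov baseline)."""
    trans: Dict[str, Counter] = defaultdict(Counter)
    for seq in sequences.values():
        for prev, nxt in zip(seq, seq[1:]):
            trans[prev][nxt] += 1
    return trans


def recommend_next(trans: Dict[str, Counter], last_item: str, k: int = 3) -> List[str]:
    """Return up to k items most often observed right after last_item."""
    return [item for item, _ in trans.get(last_item, Counter()).most_common(k)]
```

Because repeated purchases are kept, a user who keeps returning to the same items contributes those transitions every time, which is the kind of signal the abstract says matrix-completion approaches handle poorly.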

Safe Hierarchical Navigation in Crowded Dynamic Uncertain Environments

Mar 24, 2023

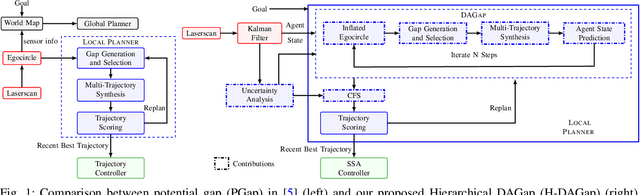

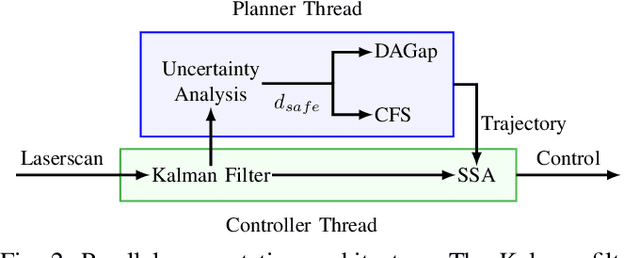

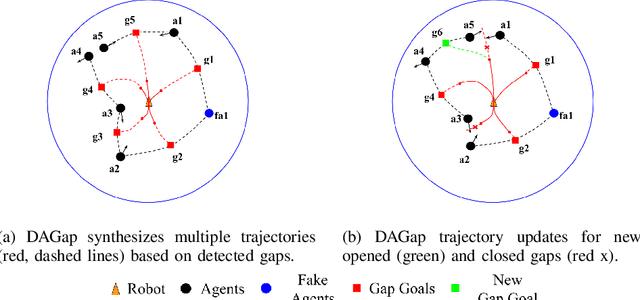

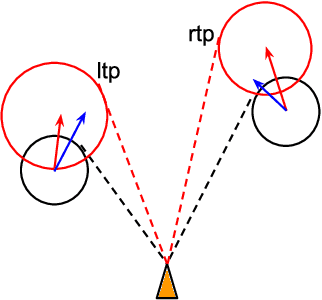

This paper describes a hierarchical solution consisting of a multi-phase planner and a low-level safe controller to jointly solve the safe navigation problem in crowded, dynamic, and uncertain environments. The planner employs dynamic gap analysis and trajectory optimization to achieve collision avoidance with respect to the predicted trajectories of dynamic agents within the sensing and planning horizon and with robustness to agent uncertainty. To address uncertainty over the planning horizon and real-time safety, a fast reactive safe set algorithm (SSA) is adopted, which monitors and modifies the unsafe control during trajectory tracking. Compared to other existing methods, our approach offers theoretical guarantees of safety and achieves collision-free navigation with higher probability in uncertain environments, as demonstrated in scenarios with 20 and 50 dynamic agents. Project website: https://hychen-naza.github.io/projects/HDAGap/.
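
The monitor-and-modify behaviour of a safe set algorithm can be sketched for the simplest case. Assumptions, all beyond the abstract: a point robot with single-integrator dynamics $\dot{x} = u$, one static obstacle, safety index $\phi = d_{\min} - d$ (safe set $\phi \le 0$), and a margin $\eta > 0$. When $\phi \ge 0$, the filter requires $\dot\phi \le -\eta$ and, if the reference control violates it, applies the smallest correction that satisfies it. The name `ssa_filter` is hypothetical, and the paper's SSA handles dynamic agents and richer dynamics.

```python
import math
from typing import Tuple

Vec = Tuple[float, float]


def ssa_filter(x: Vec, obstacle: Vec, u_ref: Vec, d_min: float, eta: float) -> Vec:
    """Minimal safe-set-algorithm-style filter for a single integrator x_dot = u."""
    dx, dy = x[0] - obstacle[0], x[1] - obstacle[1]
    d = math.hypot(dx, dy)
    if d == 0:
        raise ValueError("robot and obstacle positions coincide")
    phi = d_min - d  # safety index: the safe set is phi <= 0
    if phi < 0:
        return u_ref  # strictly inside the safe set: keep the reference control
    # n is the unit vector pointing away from the obstacle; phi_dot = -d_dot = -(n . u).
    nx, ny = dx / d, dy / d
    n_dot_u = nx * u_ref[0] + ny * u_ref[1]
    if n_dot_u >= eta:
        return u_ref  # already satisfies phi_dot <= -eta
    # Minimal-norm correction: project u_ref onto the half-plane n . u >= eta.
    gain = eta - n_dot_u
    return (u_ref[0] + gain * nx, u_ref[1] + gain * ny)
```

With the robot at the origin, an obstacle 0.5 m ahead, $d_{\min} = 1$, and $\eta = 0.1$, a reference command of (1, 0) toward the obstacle is replaced by (-0.1, 0), which backs away at exactly the required rate.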

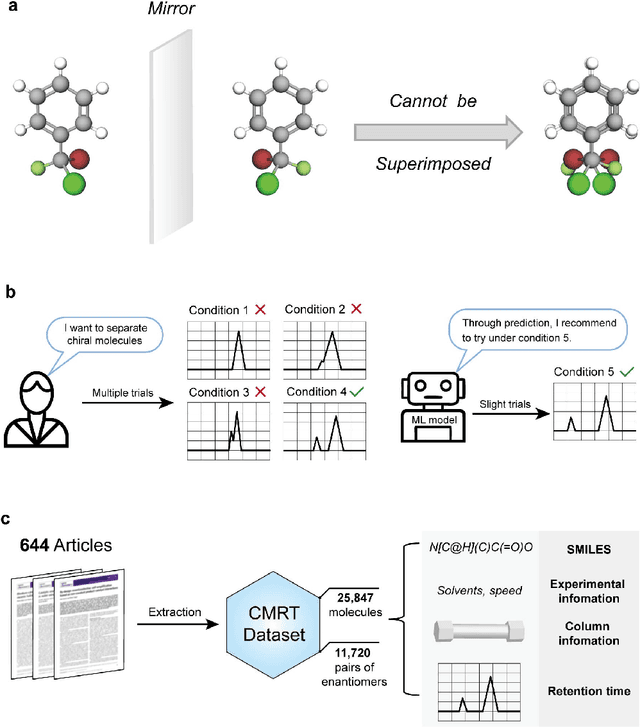

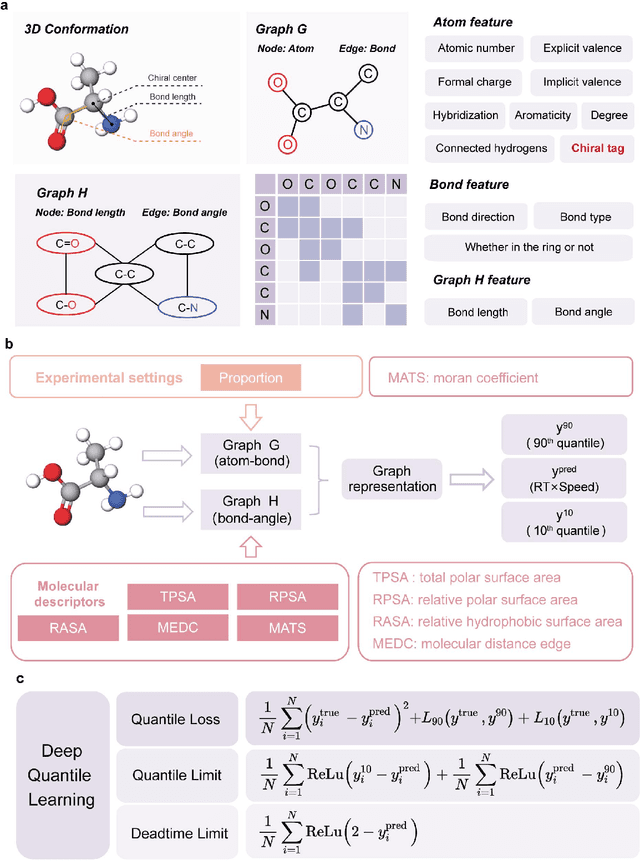

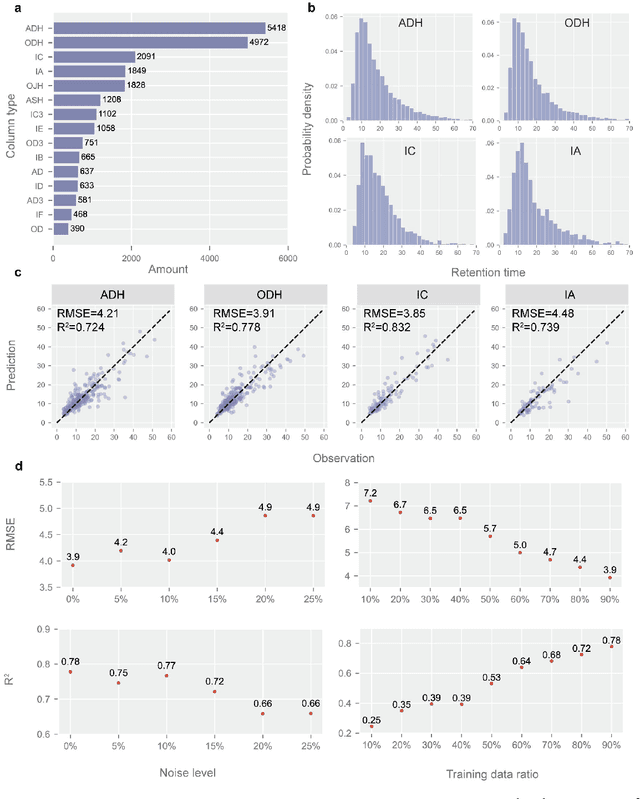

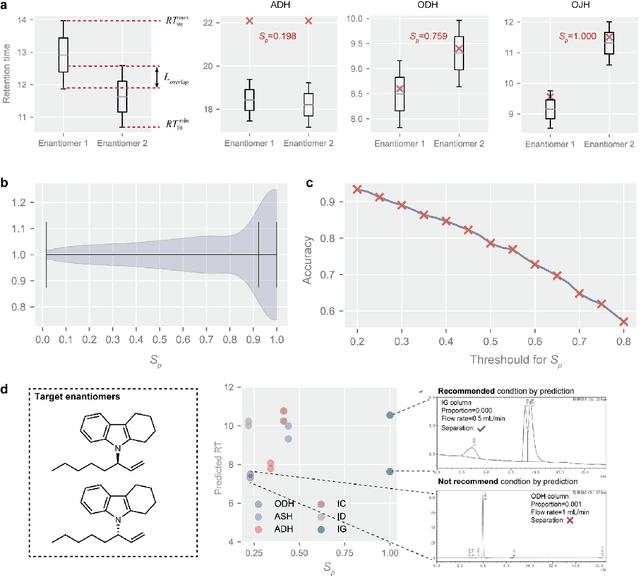

Retention Time Prediction for Chromatographic Enantioseparation by Quantile Geometry-enhanced Graph Neural Network

Nov 19, 2022

A new research framework is proposed to incorporate machine learning techniques into the field of experimental chemistry to facilitate chromatographic enantioseparation. A documentary dataset of chiral molecular retention times (CMRT dataset) in high-performance liquid chromatography is established to address the challenge of data acquisition. Based on the CMRT dataset, a quantile geometry-enhanced graph neural network is proposed to learn the relationship between molecular structure and retention time, and it shows satisfactory predictive ability for enantiomers. Domain knowledge of chromatography is incorporated into the machine learning model to achieve multi-column prediction, which paves the way for predicting chromatographic enantioseparation by calculating the separation probability. Experiments confirm that the proposed research framework works well for retention time prediction and for facilitating chromatographic enantioseparation, which sheds light on the application of machine learning techniques to experimental settings and helps experimenters work more efficiently, speeding up scientific discovery.
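
The abstract does not state how the separation probability is computed. One standard chromatographic quantity that can be derived from predicted retention times is the separation factor $\alpha = k_2 / k_1$, where $k = (t_R - t_0)/t_0$ is the retention factor and $t_0$ is the column dead time. The sketch below computes it for a pair of enantiomers on one column; the function names are hypothetical, and how the paper turns predictions into a probability is not reproduced here.

```python
def retention_factor(t_r: float, t_0: float) -> float:
    """Retention factor k = (t_R - t_0) / t_0, with t_0 the column dead time."""
    return (t_r - t_0) / t_0


def separation_factor(t_r1: float, t_r2: float, t_0: float) -> float:
    """Separation factor alpha = k_later / k_earlier (>= 1) for two enantiomers on one column."""
    k_early, k_late = sorted((retention_factor(t_r1, t_0), retention_factor(t_r2, t_0)))
    if k_early <= 0:
        raise ValueError("retention times must exceed the dead time")
    return k_late / k_early
```

For example, with $t_0 = 2$ min and predicted retention times of 6 and 8 min, $k_1 = 2$, $k_2 = 3$, and $\alpha = 1.5$; a value of $\alpha = 1$ means the two enantiomers co-elute.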

Few-shot Geometry-Aware Keypoint Localization

Mar 30, 2023

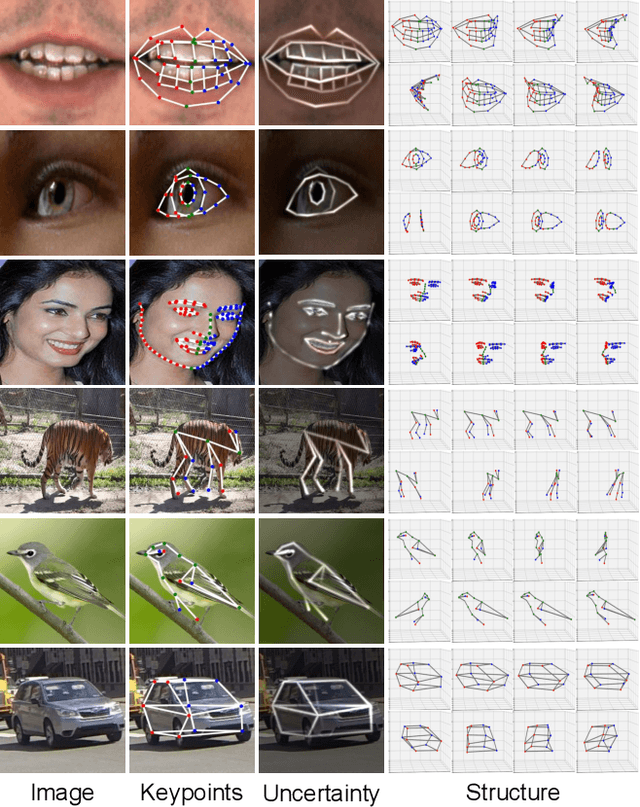

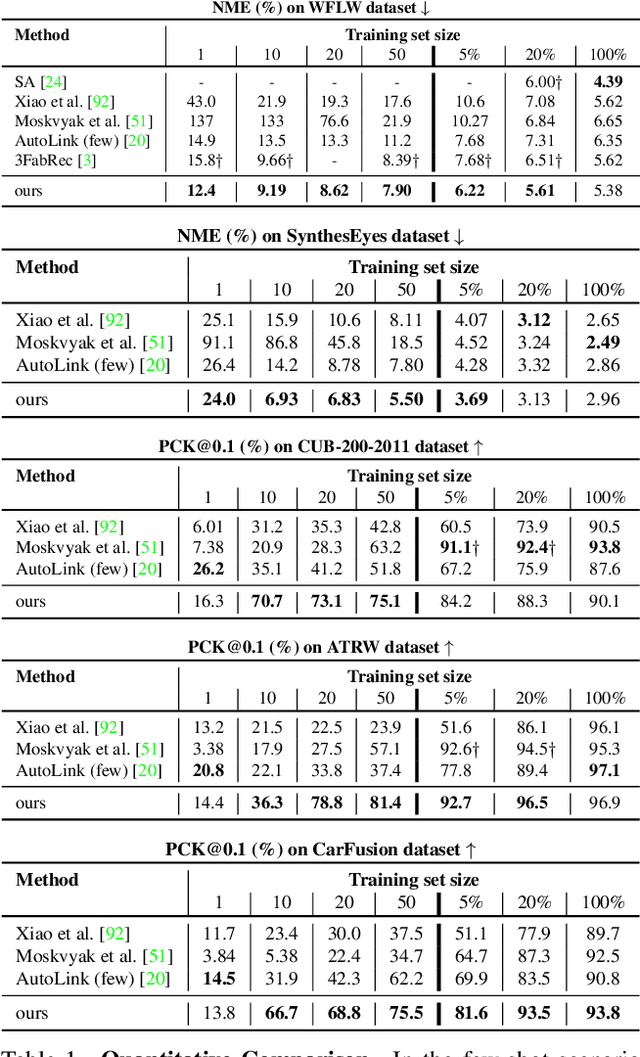

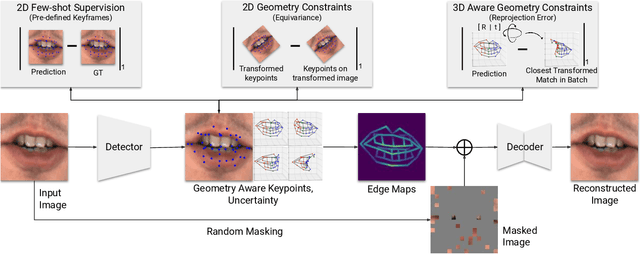

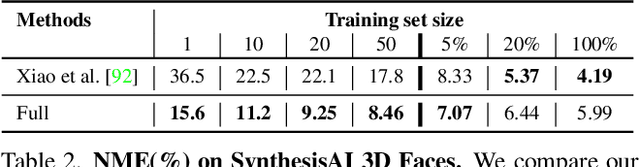

Supervised keypoint localization methods rely on large manually labeled image datasets, where objects can deform, articulate, or occlude. However, creating such large sets of keypoint labels is time-consuming and costly, and is often error-prone due to inconsistent labeling. Thus, we desire an approach that can learn keypoint localization with fewer yet consistently annotated images. To this end, we present a novel formulation that learns to localize semantically consistent keypoint definitions, even for occluded regions, for varying object categories. We use a few user-labeled 2D images as input examples, which are extended via self-supervision using a larger unlabeled dataset. Unlike unsupervised methods, the few-shot images act as semantic shape constraints for object localization. Furthermore, we introduce 3D geometry-aware constraints to uplift keypoints, achieving more accurate 2D localization. Our general-purpose formulation paves the way for semantically conditioned generative modeling and attains competitive or state-of-the-art accuracy on several datasets, including human faces, eyes, animals, cars, and never-before-seen mouth interior (teeth) localization tasks, not attempted by previous few-shot methods. Project page: https://xingzhehe.github.io/FewShot3DKP/

* CVPR 2023
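
The abstract does not detail its 3D geometry-aware constraints. A common way to tie 3D keypoints to 2D localization is a pinhole projection followed by a reprojection error, sketched below under the assumption of a known focal length `f` and principal point (`cx`, `cy`) in pixels; these parameters and the function names are illustrative, not the paper's formulation.

```python
import math
from typing import List, Sequence, Tuple

Point2 = Tuple[float, float]
Point3 = Tuple[float, float, float]


def project(points_3d: Sequence[Point3], f: float, cx: float, cy: float) -> List[Point2]:
    """Pinhole projection of camera-frame 3D points to pixel coordinates."""
    out = []
    for X, Y, Z in points_3d:
        if Z <= 0:
            raise ValueError("point must lie in front of the camera")
        out.append((f * X / Z + cx, f * Y / Z + cy))
    return out


def reprojection_error(points_3d: Sequence[Point3], keypoints_2d: Sequence[Point2],
                       f: float, cx: float, cy: float) -> float:
    """Mean Euclidean distance between projected 3D keypoints and 2D keypoints."""
    proj = project(points_3d, f, cx, cy)
    return sum(math.dist(p, q) for p, q in zip(proj, keypoints_2d)) / len(proj)
```

Minimizing such an error encourages the 2D predictions to be consistent with a single 3D configuration, which is one way a 3D constraint can sharpen 2D localization.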

Event-based Agile Object Catching with a Quadrupedal Robot

Mar 30, 2023

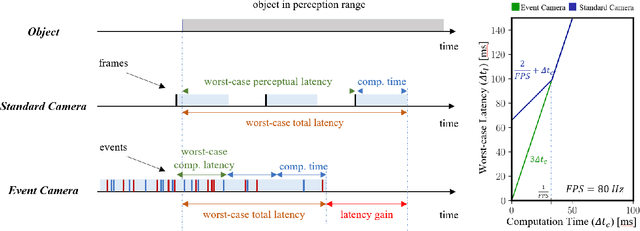

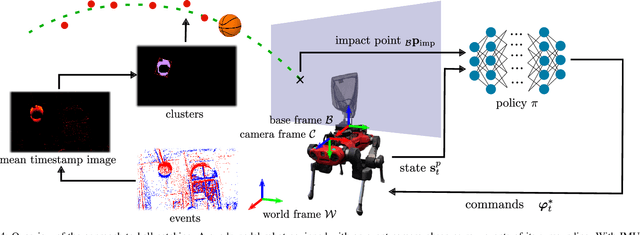

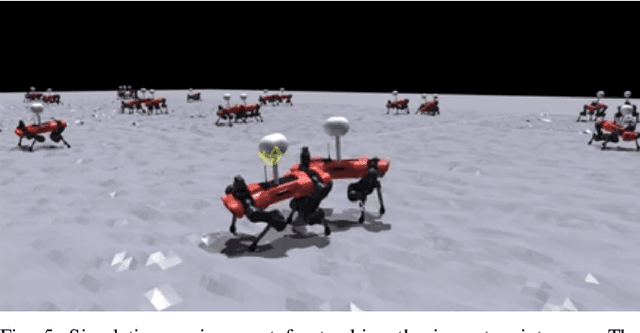

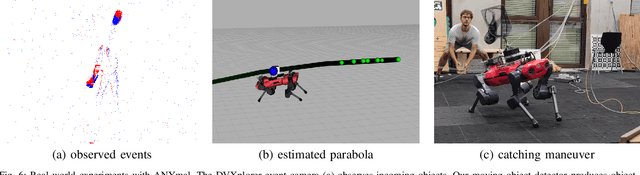

Quadrupedal robots are conquering various indoor and outdoor applications due to their ability to navigate challenging uneven terrains. Exteroceptive information greatly enhances this capability since perceiving their surroundings allows them to adapt their controller and thus achieve higher levels of robustness. However, sensors such as LiDARs and RGB cameras do not provide sufficient information to quickly and precisely react in a highly dynamic environment since they suffer from a bandwidth-latency tradeoff. They require significant bandwidth at high frame rates while featuring significant perceptual latency at lower frame rates, thereby limiting their versatility on resource-constrained platforms. In this work, we tackle this problem by equipping our quadruped with an event camera, which does not suffer from this tradeoff due to its asynchronous and sparse operation. In leveraging the low latency of the events, we push the limits of quadruped agility and demonstrate high-speed ball catching for the first time. We show that our quadruped equipped with an event camera can catch objects with speeds up to 15 m/s from 4 meters, with a success rate of 83%. Using a VGA event camera, our method runs at 100 Hz on an NVIDIA Jetson Orin.
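
A back-of-the-envelope calculation shows why latency matters at these speeds. The sketch assumes a drag-free ballistic ball moving along the x axis toward a catch plane at x = 0, with its height z following gravity; the function name and parameters are hypothetical, and the paper's actual trajectory prediction is not described in the abstract.

```python
G = 9.81  # gravitational acceleration in m/s^2


def intercept(x0: float, z0: float, vx: float, vz: float,
              x_catch: float = 0.0, g: float = G):
    """Time and height at which a drag-free ball reaches the plane x = x_catch."""
    if vx == 0 or (x_catch - x0) / vx < 0:
        raise ValueError("ball does not reach the catch plane")
    t = (x_catch - x0) / vx  # constant horizontal velocity
    z = z0 + vz * t - 0.5 * g * t * t  # vertical motion under gravity
    return t, z
```

A ball thrown at 15 m/s from 4 m reaches the robot in about 0.27 s, so a perception loop running at 100 Hz completes only about 26 updates before the catch.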

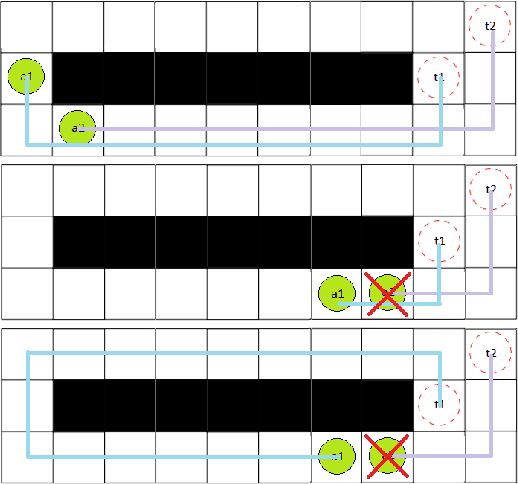

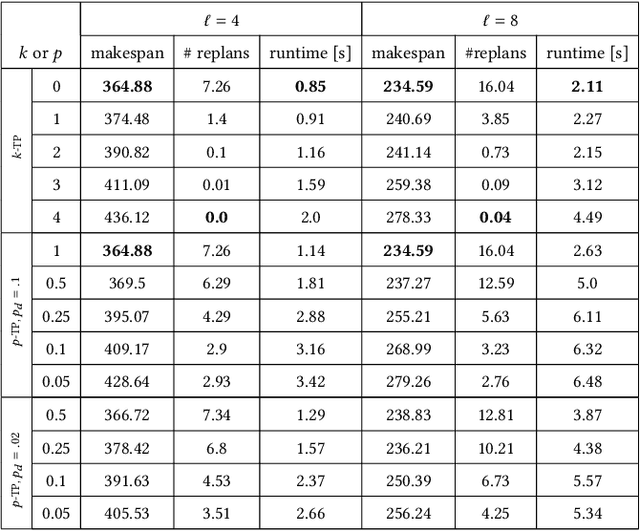

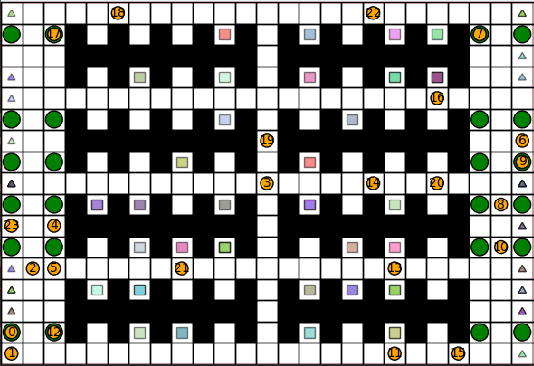

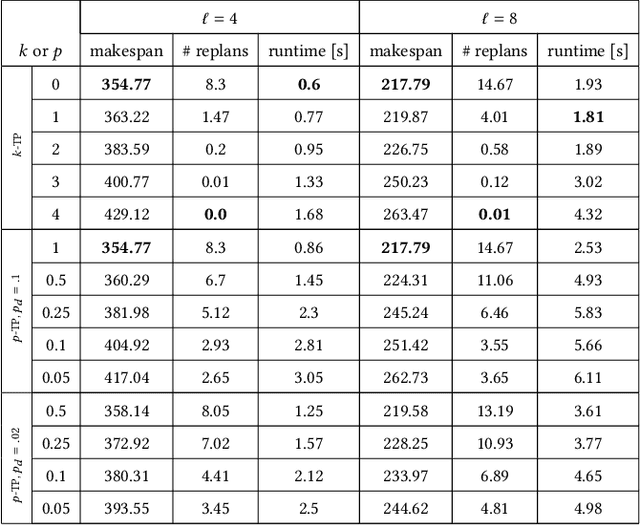

Robust Multi-Agent Pickup and Delivery with Delays

Mar 30, 2023

Multi-Agent Pickup and Delivery (MAPD) is the problem of computing collision-free paths for a group of agents such that they can safely reach delivery locations from pickup ones. These locations are provided at runtime, making MAPD a combination of classical Multi-Agent Path Finding (MAPF) and online task assignment. Current algorithms for MAPD do not consider many of the practical issues encountered in real applications: real agents often do not follow the planned paths perfectly, and may be subject to delays and failures. In this paper, we study the problem of MAPD with delays, and we present two solution approaches that provide robustness guarantees by planning paths that limit the effects of imperfect execution. In particular, we introduce two algorithms, k-TP and p-TP, both based on Token Passing (TP), a decentralized algorithm typically used to solve MAPD, which offer deterministic and probabilistic guarantees, respectively. Experimentally, we compare our algorithms against a version of TP enriched with online replanning. k-TP and p-TP provide robust solutions, significantly reducing the number of replans caused by delays, with little or no increase in solution cost and running time.
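
The deterministic guarantee behind k-TP builds on the notion of k-robustness from the MAPF literature: a plan is k-robust if any agent can be delayed by up to k timesteps without causing a collision. Under this definition, vertex conflicts are ruled out when no vertex is occupied by two different agents within k timesteps of each other, which is the condition checked below. This is only the conflict test, not the k-TP algorithm; the path representation (one vertex label per timestep) and the function name are assumptions, and edge (swap) conflicts and agents waiting at their goals are ignored for brevity.

```python
from typing import Sequence


def is_k_robust(paths: Sequence[Sequence[str]], k: int) -> bool:
    """True if no vertex is used by two different agents within k timesteps of each other."""
    for i in range(len(paths)):
        for j in range(i + 1, len(paths)):  # the condition is symmetric, so check each pair once
            for t, v in enumerate(paths[i]):
                # Timesteps of agent j that lie within k of agent i's timestep t.
                lo, hi = max(0, t - k), min(len(paths[j]), t + k + 1)
                if v in paths[j][lo:hi]:
                    return False
    return True
```

For paths `["A", "B", "C"]` and `["D", "E", "B"]`, the plan has no vertex conflict (k = 0), but it is not 1-robust: the two agents pass through `B` one timestep apart, so a single delay could make them collide.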

Lifting uniform learners via distributional decomposition

Mar 30, 2023

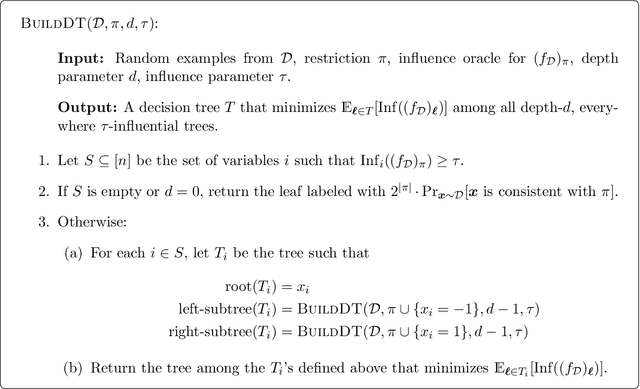

We show how any PAC learning algorithm that works under the uniform distribution can be transformed, in a blackbox fashion, into one that works under an arbitrary and unknown distribution $\mathcal{D}$. The efficiency of our transformation scales with the inherent complexity of $\mathcal{D}$, running in $\mathrm{poly}(n, (md)^d)$ time for distributions over $\{\pm 1\}^n$ whose pmfs are computed by depth-$d$ decision trees, where $m$ is the sample complexity of the original algorithm. For monotone distributions our transformation uses only samples from $\mathcal{D}$, and for general ones it uses subcube conditioning samples. A key technical ingredient is an algorithm which, given the aforementioned access to $\mathcal{D}$, produces an optimal decision tree decomposition of $\mathcal{D}$: an approximation of $\mathcal{D}$ as a mixture of uniform distributions over disjoint subcubes. With this decomposition in hand, we run the uniform-distribution learner on each subcube and combine the hypotheses using the decision tree. This algorithmic decomposition lemma also yields new algorithms for learning decision tree distributions with runtimes that exponentially improve on the prior state of the art -- results of independent interest in distribution learning.
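
The final steps (run the uniform-distribution learner on each subcube, then combine the hypotheses with the decision tree) can be sketched once a decomposition is available. Here a decision tree is represented only by its leaves, each a subcube given as a dict of fixed coordinates over $\{\pm 1\}^n$; the learner interface `uniform_learner(samples) -> hypothesis` and all names are hypothetical, and computing the decomposition itself, the paper's main technical step, is not shown.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Point = Sequence[int]  # a point in {-1, +1}^n
Subcube = Dict[int, int]  # fixed coordinates: index -> value in {-1, +1}
Hypothesis = Callable[[Point], int]


def in_subcube(x: Point, cube: Subcube) -> bool:
    """True if x agrees with every coordinate fixed by the subcube."""
    return all(x[i] == b for i, b in cube.items())


def combine(leaves: List[Tuple[Subcube, Hypothesis]]) -> Hypothesis:
    """Route x to the leaf (disjoint subcube) containing it and apply that leaf's hypothesis."""
    def h(x: Point) -> int:
        for cube, hyp in leaves:
            if in_subcube(x, cube):
                return hyp(x)
        raise ValueError("leaves must cover the whole cube")
    return h


def lift(uniform_learner: Callable[[list], Hypothesis],
         samples: List[Tuple[Point, int]], cubes: List[Subcube]) -> Hypothesis:
    """Train the (hypothetical) uniform learner separately on the samples in each subcube."""
    leaves = []
    for cube in cubes:
        local = [(x, y) for x, y in samples if in_subcube(x, cube)]
        leaves.append((cube, uniform_learner(local)))
    return combine(leaves)
```

The sketch only shows the routing; the guarantee in the abstract relies on each leaf's conditional distribution being close to uniform, which is what the decomposition provides.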

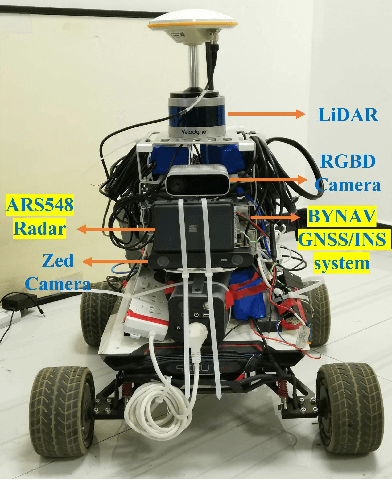

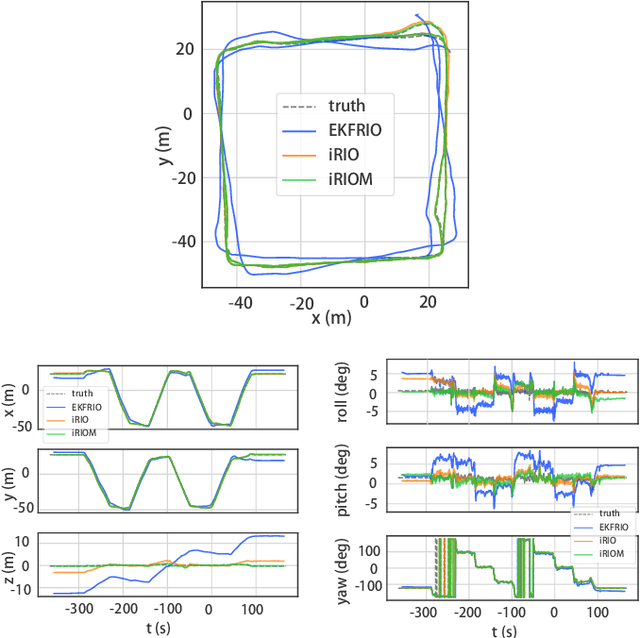

4D iRIOM: 4D Imaging Radar Inertial Odometry and Mapping

Apr 03, 2023

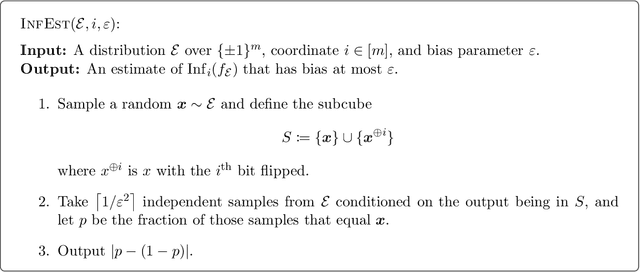

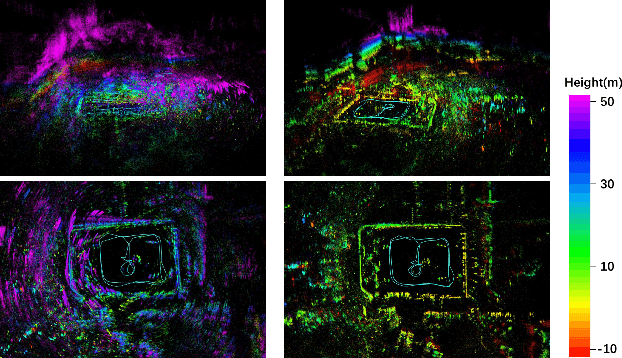

Millimeter wave radar can measure distances, directions, and Doppler velocity for objects in harsh conditions such as fog. A 4D imaging radar, whose vertical and horizontal data resemble an image, can also measure objects' height. Previous studies have used 3D radars for ego-motion estimation, but few methods leveraged the rich data of imaging radars, and they usually omitted the mapping aspect, leading to inferior odometry accuracy. This paper presents iRIOM, a real-time imaging radar inertial odometry and mapping method based on the submap concept. To deal with moving objects and multipath reflections, we use the graduated non-convexity method to robustly and efficiently estimate ego-velocity from a single scan. To measure the agreement between sparse non-repetitive radar scan points and submap points, the distribution-to-multi-distribution distance for matches is adopted. The ego-velocity and scan-to-submap matches are fused with the 6D inertial data by an iterative extended Kalman filter to get the platform's 3D position and orientation. A loop closure module is also developed to curb the odometry module's drift. To our knowledge, iRIOM, built on these two modules, is the first 4D radar inertial SLAM system. On our own and third-party data, we show iRIOM's favorable odometry accuracy and mapping consistency against FastLIO-SLAM and EKFRIO. The ablation study also reveals the benefit of inertial data over the constant velocity model, and of scan-to-submap matching over scan-to-scan matching.
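
Single-scan ego-velocity estimation rests on the Doppler model for static points: with $\mathbf{d}_i$ the unit direction to point $i$ and $\mathbf{v}$ the sensor velocity, the measured radial velocity is $v_{r,i} = -\mathbf{d}_i^\top \mathbf{v}$, so moving objects and multipath returns appear as outliers. The sketch below solves this robustly with graduated non-convexity, assuming the Geman-McClure surrogate with its commonly used schedule ($\mu$ starts at $2 r_{\max}^2 / c^2$ and is divided by 1.4 until it reaches 1) and a hypothetical inlier threshold `c`. The sign convention, loss, and schedule used in iRIOM itself may differ, and all function names are illustrative.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def _solve3(A: List[List[float]], b: List[float]) -> List[float]:
    """Solve a 3x3 linear system by Gaussian elimination with partial pivoting."""
    M = [list(A[i]) + [b[i]] for i in range(3)]
    for col in range(3):
        piv = max(range(col, 3), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, 3):
            f = M[r][col] / M[col][col]
            for c in range(col, 4):
                M[r][c] -= f * M[col][c]
    x = [0.0] * 3
    for r in (2, 1, 0):
        x[r] = (M[r][3] - sum(M[r][c] * x[c] for c in range(r + 1, 3))) / M[r][r]
    return x


def _weighted_ls(dirs: Sequence[Vec3], v_r: Sequence[float], w: Sequence[float]) -> List[float]:
    # Minimize sum_i w_i (d_i . v + v_r_i)^2  =>  (sum_i w_i d_i d_i^T) v = -sum_i w_i v_r_i d_i
    A = [[sum(wi * d[a] * d[c] for wi, d in zip(w, dirs)) for c in range(3)] for a in range(3)]
    b = [-sum(wi * vr * d[a] for wi, vr, d in zip(w, v_r, dirs)) for a in range(3)]
    return _solve3(A, b)


def _residuals(dirs: Sequence[Vec3], v_r: Sequence[float], v: Sequence[float]) -> List[float]:
    return [sum(d[a] * v[a] for a in range(3)) + vr for d, vr in zip(dirs, v_r)]


def ego_velocity_gnc(dirs: Sequence[Vec3], v_r: Sequence[float],
                     c: float = 0.1, factor: float = 1.4):
    """Robust ego-velocity from one scan via GNC with a Geman-McClure surrogate."""
    w = [1.0] * len(dirs)
    v = _weighted_ls(dirs, v_r, w)  # plain least-squares initialization
    r = _residuals(dirs, v_r, v)
    mu = max(2 * max(ri * ri for ri in r) / (c * c), 1.0)
    while True:
        # Large mu behaves like least squares; mu = 1 recovers the robust loss.
        w = [(mu * c * c / (ri * ri + mu * c * c)) ** 2 for ri in r]
        v = _weighted_ls(dirs, v_r, w)
        r = _residuals(dirs, v_r, v)
        if mu == 1.0:
            return v, w
        mu = max(mu / factor, 1.0)
```

With eight consistent static points and one point whose radial velocity is offset by 5 m/s, the final weight of the offending point drops close to zero and the estimate matches the velocity implied by the inliers.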
