"Time": models, code, and papers

Robotic Navigation Autonomy for Subretinal Injection via Intelligent Real-Time Virtual iOCT Volume Slicing

Jan 17, 2023

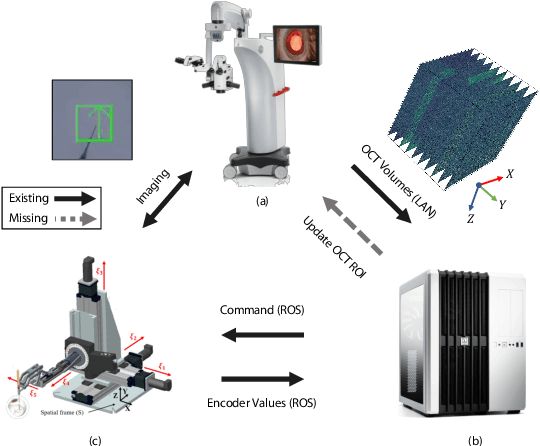

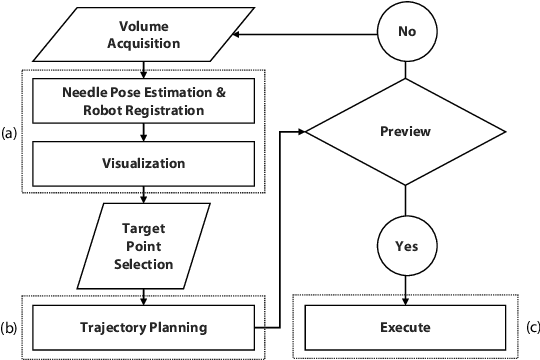

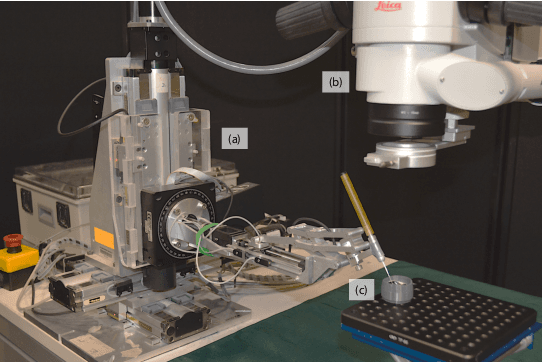

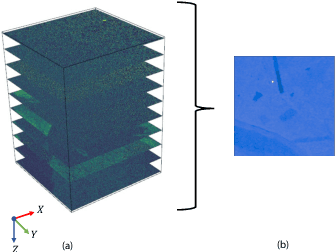

In the last decade, various robotic platforms have been introduced that could support delicate retinal surgeries. Concurrently, to provide semantic understanding of the surgical area, recent advances have enabled microscope-integrated intraoperative Optical Coherence Tomography (iOCT) with high-resolution 3D imaging at near video rate. The combination of robotics and semantic understanding enables task autonomy in robotic retinal surgery, such as for subretinal injection. This procedure requires precise needle insertion for best treatment outcomes. However, merging robotic systems with iOCT introduces new challenges. These include, but are not limited to, high demands on data processing rates and dynamic registration of these systems during the procedure. In this work, we propose a framework for autonomous robotic navigation for subretinal injection, based on intelligent real-time processing of iOCT volumes. Our method consists of an instrument pose estimation method, an online registration between the robotic and the iOCT system, and trajectory planning tailored for navigation to an injection target. We also introduce intelligent virtual B-scans, a volume slicing approach for rapid instrument pose estimation, which is enabled by Convolutional Neural Networks (CNNs). Our experiments on ex-vivo porcine eyes demonstrate the precision and repeatability of the method. Finally, we discuss identified challenges in this work and suggest potential solutions to further the development of such systems.

DynamoPMU: A Physics Informed Anomaly Detection and Prediction Methodology using non-linear dynamics from $\mu$PMU Measurement Data

Mar 31, 2023

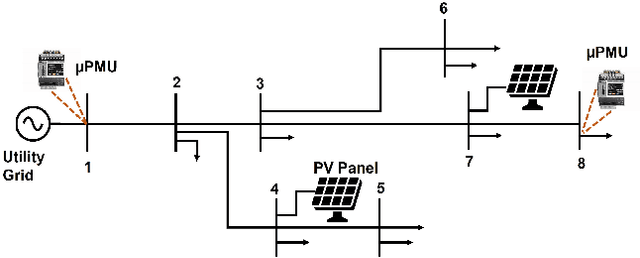

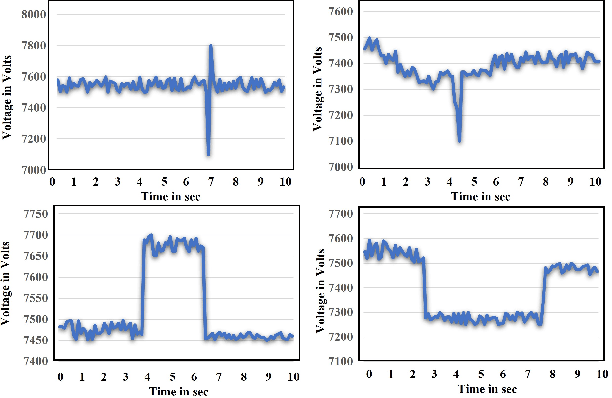

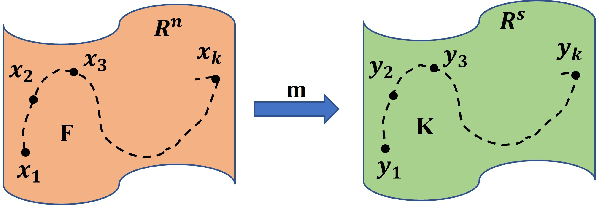

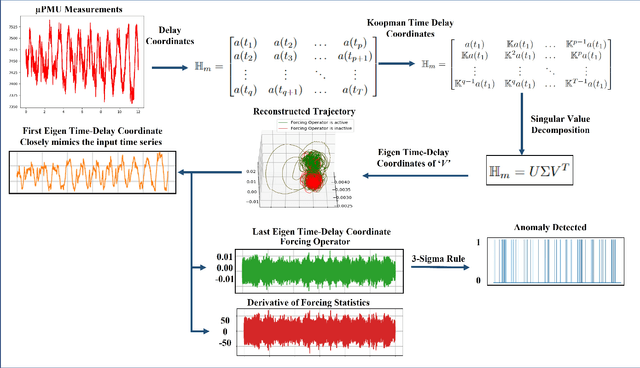

The expansion in technology and attainability of a large number of sensors has led to a huge amount of real-time streaming data. The real-time data in the electrical distribution system is collected through distribution-level phasor measurement units, referred to as $\mu$PMU, which report high-resolution phasor measurements comprising various event signatures. These signatures provide situational awareness and enable a level of visibility into the distribution system. The events are infrequent, unscheduled, and uncertain, so it is a challenge to scrutinize, detect, and predict their occurrence. For electrical distribution systems, it is challenging to explicitly identify evolution functions that describe the complex, non-linear, and non-stationary signature patterns of events. In this paper, we seek to address this problem by developing a physics dynamics-based approach to detect anomalies in the $\mu$PMU streaming data and simultaneously predict the events using governing equations. We propose a data-driven approach based on the Hankel alternative view of the Koopman (HAVOK) operator, called DynamoPMU, to analyze the underlying dynamics of the distribution system by representing them in a linear intrinsic space. The key technical idea is that the proposed method separates the linear dynamical behaviour pattern from the intermittent forcing (anomalous events) in sequential data, which turns out to be very useful for anomaly detection and simultaneous data prediction. We demonstrate the efficacy of our proposed framework through analysis of real $\mu$PMU data taken from the LBNL distribution grid. DynamoPMU is suitable for real-time event detection as well as prediction in an unsupervised way and adapts to varying statistics.
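HAVOK-style analyses start from a time-delay embedding: a scalar measurement stream is stacked into a Hankel matrix whose rows are shifted copies of the signal. The sketch below shows only that first step, in plain Python. The function name `hankel_matrix` and the sample values are hypothetical and not taken from the paper. In the full method, a singular value decomposition of this matrix would supply the delay coordinates, and the last retained coordinate would be treated as the intermittent forcing; those steps are omitted here.

```python
def hankel_matrix(series, rows):
    """Stack time-delayed copies of a scalar series into a Hankel matrix.

    Entry (i, j) equals series[i + j], so every anti-diagonal is constant.
    """
    if rows < 1 or rows > len(series):
        raise ValueError("rows must be between 1 and len(series)")
    cols = len(series) - rows + 1
    return [[series[i + j] for j in range(cols)] for i in range(rows)]


# Hypothetical samples standing in for one measurement channel.
samples = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
H = hankel_matrix(samples, rows=3)
for row in H:
    print(row)
```

The number of rows sets the length of the delay window, a tuning choice that this sketch leaves to the reader.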

Multi model LSTM architecture for Track Association based on Automatic Identification System Data

Apr 04, 2023

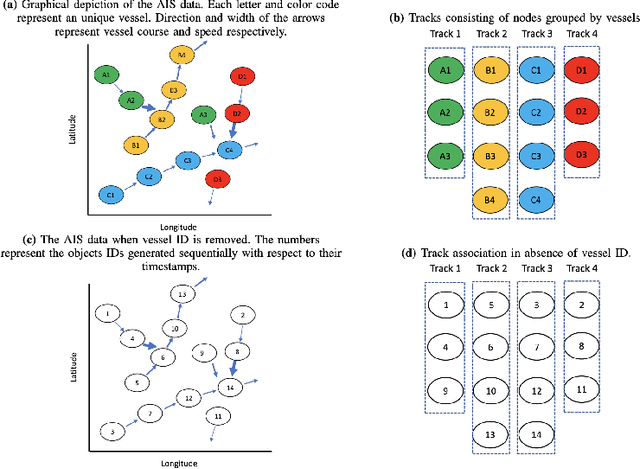

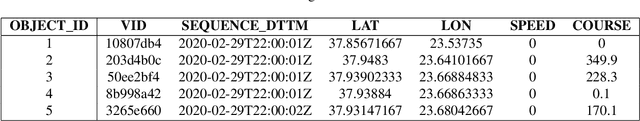

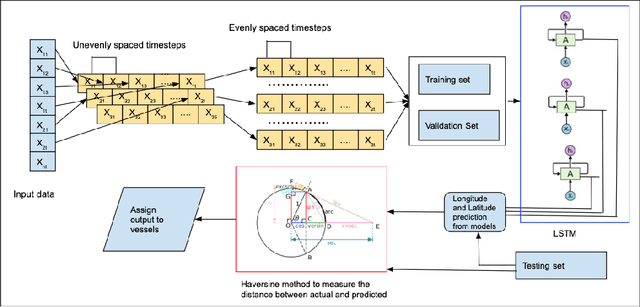

For decades, track association has been a challenging problem in marine surveillance, which involves the identification and association of vessel observations over time. However, the Automatic Identification System (AIS) has provided a new opportunity for researchers to tackle this problem by offering a large database of dynamic and geo-spatial information of marine vessels. With the availability of such large databases, researchers can now develop sophisticated models and algorithms that leverage the increased availability of data to address the track association challenge effectively. Furthermore, with the advent of deep learning, track association can now be approached as a data-intensive problem. In this study, we propose a Long Short-Term Memory (LSTM) based multi-model framework for track association. LSTM is a recurrent neural network architecture that is capable of processing multivariate temporal data collected over time in a sequential manner, enabling it to predict current vessel locations from historical observations. Based on these predictions, a geodesic distance based similarity metric is then utilized to associate the unclassified observations to their true tracks (vessels). We evaluate the performance of our approach using standard performance metrics, such as precision, recall, and F1 score, which provide a comprehensive summary of the accuracy of the proposed framework.
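The association step can be illustrated with a nearest-prediction rule: each unclassified observation is assigned to the track whose predicted position is closest. The sketch below uses the haversine great-circle distance on a spherical Earth as a stand-in for the geodesic metric, which is an assumption since the abstract does not name the exact formula. The track ids, coordinates, and the `associate` helper are hypothetical, and the LSTM predictions are replaced by fixed values.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius; assumes a spherical Earth


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def associate(observation, predictions):
    """Return the track id whose predicted position is nearest to the observation."""
    return min(predictions, key=lambda tid: haversine_km(*observation, *predictions[tid]))


# Hypothetical per-track predictions (in the paper these would come from the LSTMs).
predicted = {"vessel_a": (36.85, -76.30), "vessel_b": (37.00, -76.00)}
assigned = associate((36.86, -76.29), predicted)
print(assigned)
```

A production system would likely add a distance threshold so that an observation far from every prediction can start a new track instead of being forced onto an existing one.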

Side Channel-Assisted Inference Leakage from Machine Learning-based ECG Classification

Apr 04, 2023

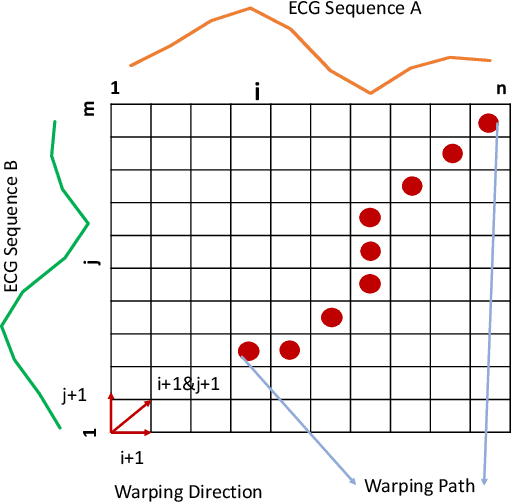

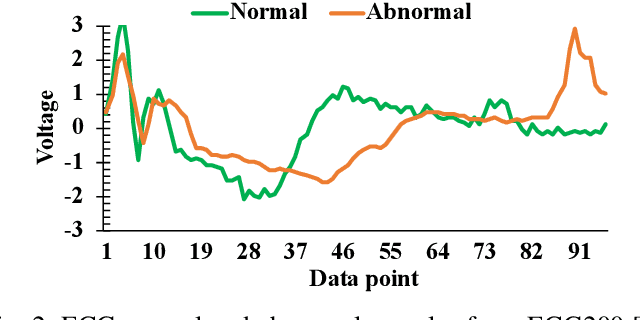

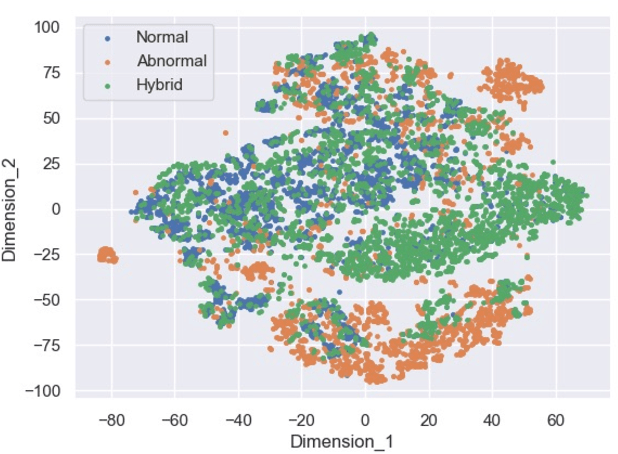

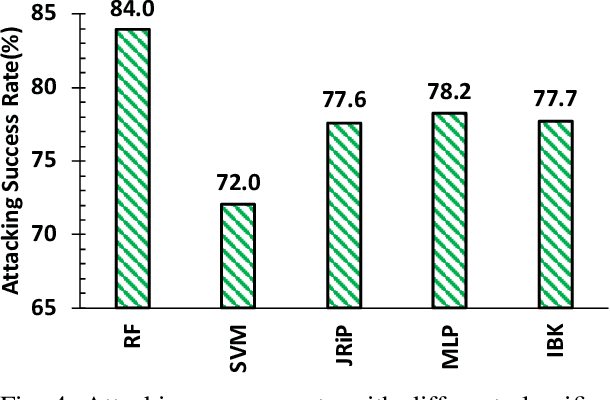

The Electrocardiogram (ECG) measures the electrical activity generated by the heart to detect abnormal heartbeats and heart attacks. However, the irregular occurrence of these abnormalities demands continuous monitoring of heartbeats. Machine learning techniques are leveraged to automate the task and reduce the labor needed during monitoring. In recent years, many companies have launched products with ECG monitoring and irregular heartbeat alerts. Among all classification algorithms, the time series-based algorithm dynamic time warping (DTW) is widely adopted for the ECG classification task. Though progress has been achieved, DTW-based ECG classification also introduces a new attack vector that leaks patients' diagnosis results. This paper shows that the labels of ECG input samples can be stolen via a side-channel attack, Flush+Reload. In particular, we first identify the vulnerability of DTW for ECG classification, i.e., the correlation between warping path choice and prediction results. Then we implement an attack that leverages Flush+Reload to monitor the warping path selection with known ECG data, and we build a predictor that relates warping path selection to the labels of input ECG samples. Based on experiments, we find that the Flush+Reload-based inference leakage can achieve an 84.0% attack success rate in identifying the labels of the two samples in DTW.
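To see why the warping path depends on the input, consider a textbook DTW with backtracking. This is a minimal sketch, not the implementation studied in the paper, and the sequences are hypothetical. At every cell the algorithm chooses among three predecessors (diagonal, up, left), and that sequence of choices is determined by the data, which is the kind of input-dependent control flow a cache side channel could observe.

```python
def dtw(a, b):
    """Return (cost, path) for dynamic time warping between sequences a and b."""
    n, m = len(a), len(b)
    inf = float("inf")
    # D[i][j] holds the minimal cumulative cost of aligning a[:i] with b[:j].
    D = [[inf] * (m + 1) for _ in range(n + 1)]
    D[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = abs(a[i - 1] - b[j - 1])
            D[i][j] = d + min(D[i - 1][j - 1], D[i - 1][j], D[i][j - 1])

    # Backtrack from the end, always stepping to the cheapest predecessor.
    path = []
    i, j = n, m
    while i > 0 and j > 0:
        path.append((i - 1, j - 1))
        candidates = [
            (D[i - 1][j - 1], i - 1, j - 1),  # diagonal step
            (D[i - 1][j], i - 1, j),          # step up
            (D[i][j - 1], i, j - 1),          # step left
        ]
        _, i, j = min(candidates)
    path.reverse()
    return D[n][m], path


# Hypothetical toy sequences; the second one lingers on the value 1.
cost, path = dtw([0, 1, 2], [0, 1, 1, 2])
print(cost, path)
```

Changing a single sample in either sequence can change which branch is taken during backtracking, so two inputs from different classes generally trace different paths.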

Pac-HuBERT: Self-Supervised Music Source Separation via Primitive Auditory Clustering and Hidden-Unit BERT

Apr 04, 2023

In spite of the progress in music source separation research, the small amount of publicly-available clean source data remains a constant limiting factor for performance. Thus, recent advances in self-supervised learning present a largely-unexplored opportunity for improving separation models by leveraging unlabelled music data. In this paper, we propose a self-supervised learning framework for music source separation inspired by the HuBERT speech representation model. We first investigate the potential impact of the original HuBERT model by inserting an adapted version of it into the well-known Demucs V2 time-domain separation model architecture. We then propose a time-frequency-domain self-supervised model, Pac-HuBERT (for primitive auditory clustering HuBERT), that we later use in combination with a Res-U-Net decoder for source separation. Pac-HuBERT uses primitive auditory features of music as unsupervised clustering labels to initialize the self-supervised pretraining process using the Free Music Archive (FMA) dataset. The resulting framework achieves better source-to-distortion ratio (SDR) performance on the MusDB18 test set than the original Demucs V2 and Res-U-Net models. We further demonstrate that it can boost performance with small amounts of supervised data. Ultimately, our proposed framework is an effective solution to the challenge of limited clean source data for music source separation.

OpenDriver: an open-road driver state detection dataset

Apr 09, 2023

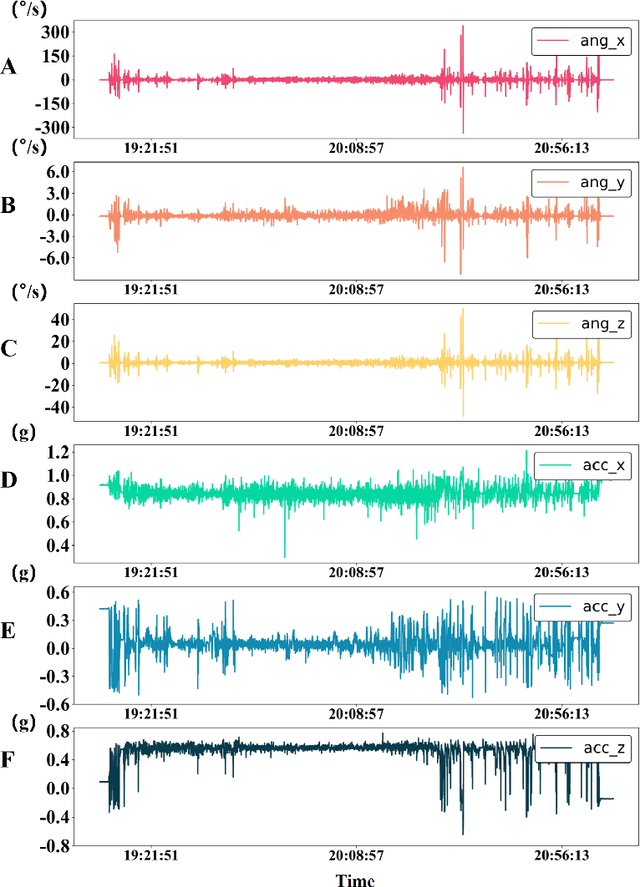

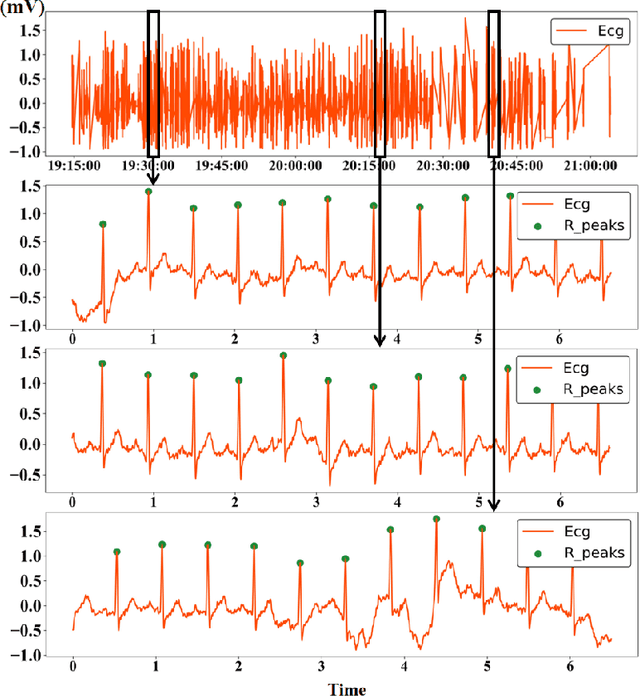

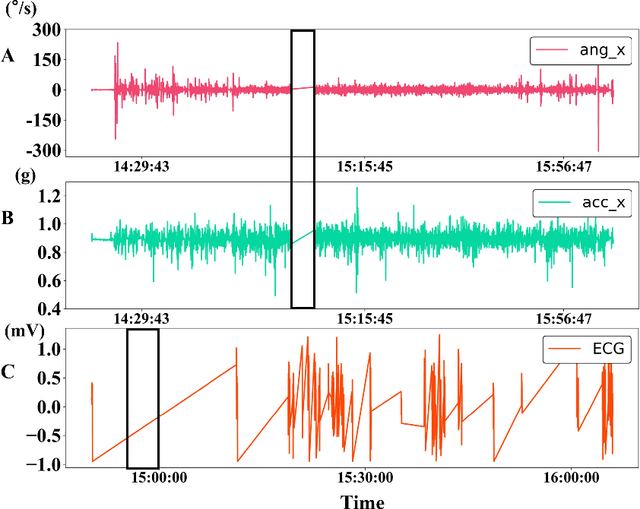

In modern society, road safety relies heavily on the psychological and physiological state of drivers. Negative factors such as fatigue, drowsiness, and stress can impair drivers' reaction time and decision-making abilities, leading to an increased incidence of traffic accidents. Among the numerous studies on impaired driving detection, wearable physiological measurement is a real-time approach to monitoring a driver's state. However, there are currently few driver physiological datasets in open-road scenarios, and the existing datasets suffer from issues such as poor signal quality, small sample sizes, and short data collection periods. Therefore, in this paper, a large-scale multimodal driving dataset for driver impairment detection and biometric data recognition is designed and described. The dataset contains two modalities of driving signals: six-axis inertial signals and electrocardiogram (ECG) signals, which were recorded while over one hundred drivers followed the same route through open roads over several months. Both the ECG signal sensor and the six-axis inertial signal sensor are installed on a specially designed steering wheel cover, allowing for data collection without disturbing the driver. Additionally, electrodermal activity (EDA) signals were recorded during the driving process and will be integrated into the presented dataset soon. Future work can build upon this dataset to advance the field of driver impairment detection. New methods can be explored for integrating other types of biometric signals, such as eye tracking, to further enhance the understanding of driver states. The insights gained from this dataset can also inform the development of new driver assistance systems, promoting safer driving practices and reducing the risk of traffic accidents. The OpenDriver dataset will be publicly available soon.

High-Speed and Energy-Efficient Non-Binary Computing with Polymorphic Electro-Optic Circuits and Architectures

Apr 15, 2023

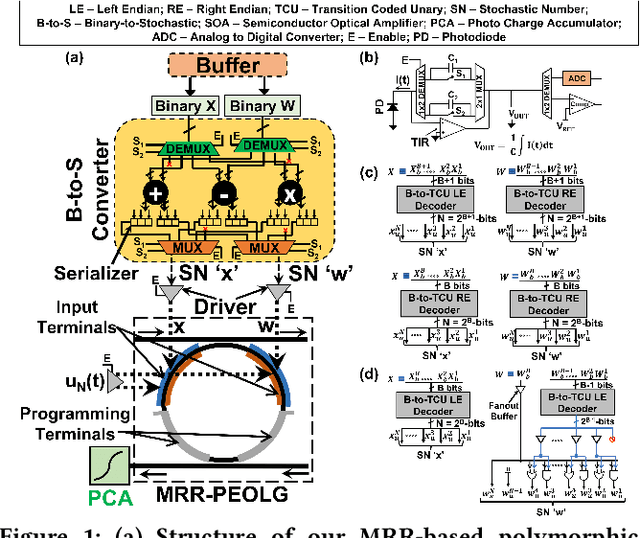

In this paper, we present microring resonator (MRR) based polymorphic E-O circuits and architectures that can be employed for high-speed and energy-efficient non-binary reconfigurable computing. Our polymorphic E-O circuits can be dynamically programmed to implement different logic and arithmetic functions at different times. They can provide compactness and polymorphism to consequently improve operand handling, reduce idle time, and increase amortization of area and static power overheads. When combined with flexible photodetectors with the innate ability to accumulate a high number of optical pulses in situ, our circuits can support energy-efficient processing of data in non-binary formats such as stochastic/unary and high-dimensional reservoir formats. Furthermore, our polymorphic E-O circuits enable configurable E-O computing accelerator architectures for processing binarized and integer quantized convolutional neural networks (CNNs). We compare our designed polymorphic E-O circuits and architectures to several circuits and architectures from prior works in terms of area, latency, and energy consumption.

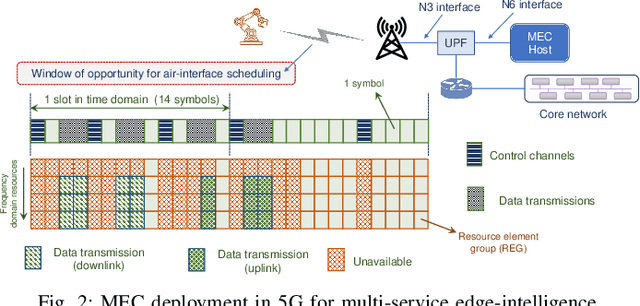

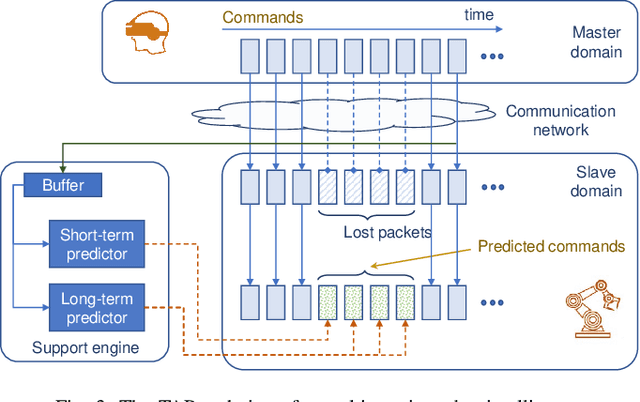

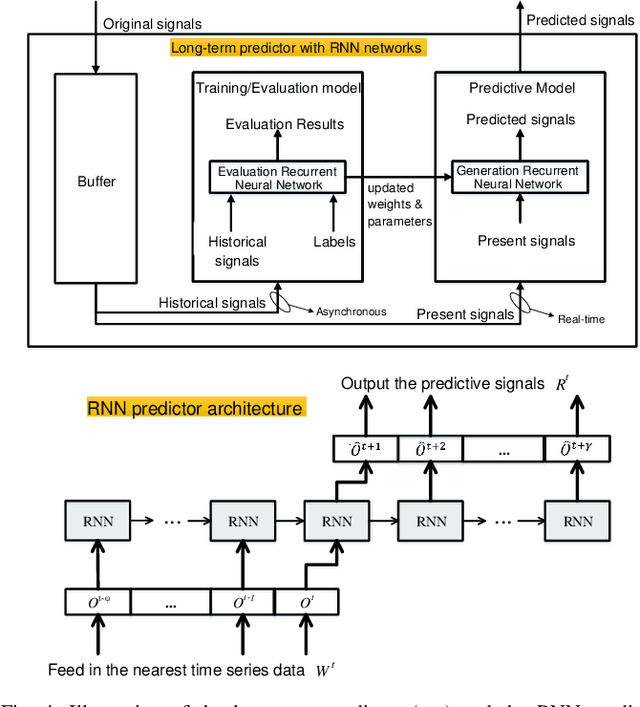

Toward Multi-Service Edge-Intelligence Paradigm: Temporal-Adaptive Prediction for Time-Critical Control over Wireless

Dec 12, 2022

Time-critical control applications typically pose stringent connectivity requirements for communication networks. The imperfections associated with the wireless medium such as packet losses, synchronization errors, and varying delays have a detrimental effect on performance of real-time control, often with safety implications. This paper introduces multi-service edge-intelligence as a new paradigm for realizing time-critical control over wireless. It presents the concept of multi-service edge-intelligence which revolves around tight integration of wireless access, edge-computing and machine learning techniques, in order to provide stability guarantees under wireless imperfections. The paper articulates some of the key system design aspects of multi-service edge-intelligence. It also presents a temporal-adaptive prediction technique to cope with dynamically changing wireless environments. It provides performance results in a robotic teleoperation scenario. Finally, it discusses some open research and design challenges for multi-service edge-intelligence.

Randomized and Deterministic Attention Sparsification Algorithms for Over-parameterized Feature Dimension

Apr 10, 2023

Large language models (LLMs) have shown their power in different areas. Attention computation, as an important subroutine of LLMs, has also attracted theoretical interest. Recently, the static computation and dynamic maintenance of the attention matrix have been studied by [Alman and Song 2023] and [Brand, Song and Zhou 2023] from both the algorithmic and the hardness perspective. In this work, we consider the sparsification of the attention problem. We make one simplification, which is that the logit matrix is symmetric. Let $n$ denote the length of the sentence and let $d$ denote the embedding dimension. Given a matrix $X \in \mathbb{R}^{n \times d}$, suppose $d \gg n$ and $\| X X^\top \|_{\infty} < r$ with $r \in (0,0.1)$. We then aim to find $Y \in \mathbb{R}^{n \times m}$ (where $m\ll d$) such that \begin{align*} \| D(Y)^{-1} \exp( Y Y^\top ) - D(X)^{-1} \exp( X X^\top) \|_{\infty} \leq O(r) \end{align*} We provide two results for this problem. $\bullet$ Our first result is a randomized algorithm. It runs in $\widetilde{O}(\mathrm{nnz}(X) + n^{\omega} ) $ time, succeeds with probability $1-\delta$, and chooses $m = O(n \log(n/\delta))$. Here $\mathrm{nnz}(X)$ denotes the number of non-zero entries in $X$, and $\omega$ denotes the exponent of matrix multiplication. Currently $\omega \approx 2.373$. $\bullet$ Our second result is a deterministic algorithm. It runs in $\widetilde{O}(\min\{\sum_{i\in[d]}\mathrm{nnz}(X_i)^2, dn^{\omega-1}\} + n^{\omega+1})$ time and chooses $m = O(n)$. Here $X_i$ denotes the $i$-th column of the matrix $X$. Our main findings have the following implication for applied LLM tasks: any very large feature dimension can be reduced to a size nearly linear in the length of the sentence.
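The quantity being approximated can be made concrete with a small sketch. It assumes that $D(X)$ is the diagonal matrix of row sums of the entrywise exponential $\exp(XX^\top)$ and that $\|\cdot\|_\infty$ is the largest absolute entry; neither definition is spelled out in the abstract, so both are assumptions here. The helper names and the toy matrices are hypothetical. The example does not implement either of the paper's algorithms. It only evaluates the error for a trivial case in which dropping all-zero feature columns leaves $XX^\top$ unchanged.

```python
import math


def normalized_attention(X):
    """Compute D(X)^{-1} exp(X X^T), with exp applied entrywise.

    D(X) is assumed to be the diagonal matrix of row sums of exp(X X^T).
    """
    n = len(X)
    gram = [[sum(x * y for x, y in zip(X[i], X[j])) for j in range(n)] for i in range(n)]
    A = [[math.exp(v) for v in row] for row in gram]
    return [[v / sum(row) for v in row] for row in A]


def max_abs_diff(P, Q):
    """Largest absolute entrywise difference between two equally sized matrices."""
    return max(abs(p - q) for rp, rq in zip(P, Q) for p, q in zip(rp, rq))


# Toy input with n = 2 and d = 4; two feature columns are identically zero.
X = [[0.1, 0.0, 0.2, 0.0],
     [0.0, 0.0, 0.1, 0.0]]
# Y keeps only the non-zero columns, so m = 2 and Y Y^T equals X X^T.
Y = [[0.1, 0.2],
     [0.0, 0.1]]

err = max_abs_diff(normalized_attention(Y), normalized_attention(X))
print(err)
```

In the general setting the columns of $X$ are not zero, and the interest lies in choosing $Y$ so that this error stays within $O(r)$.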

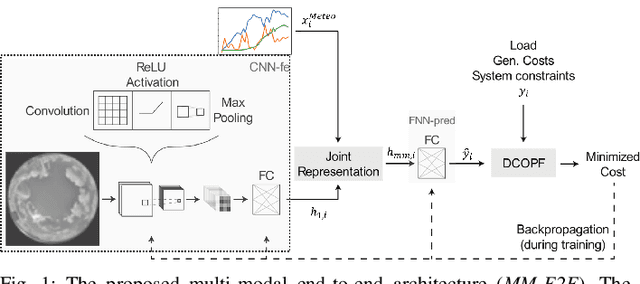

End-to-End Learning with Multiple Modalities for System-Optimised Renewables Nowcasting

Apr 14, 2023

With the increasing penetration of renewable power sources such as wind and solar, accurate short-term prediction (nowcasting) of renewable power is becoming increasingly important. This paper investigates multi-modal (MM) learning and end-to-end (E2E) learning for nowcasting renewable power as an intermediate step for energy management systems. MM combines features from all-sky imagery and meteorological sensor data, two modalities that otherwise could not be combined effectively, to predict renewable power generation. The combined, predicted values are then input to a differentiable optimal power flow (OPF) formulation simulating the energy management. For the first time, MM is combined with E2E training of the model that minimises the expected total system cost. The case study tests the proposed methodology on real sky and meteorological data from the Netherlands. In our study, the proposed MM-E2E model reduced system cost by 30% compared to uni-modal baselines.