"Time": models, code, and papers

Investigating Sindy As a Tool For Causal Discovery In Time Series Signals

Dec 29, 2022

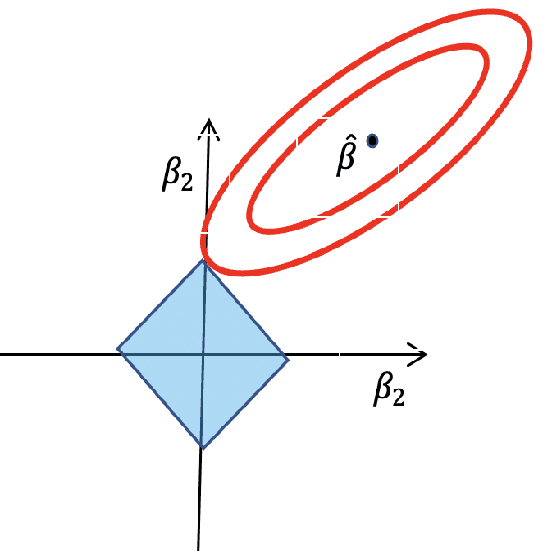

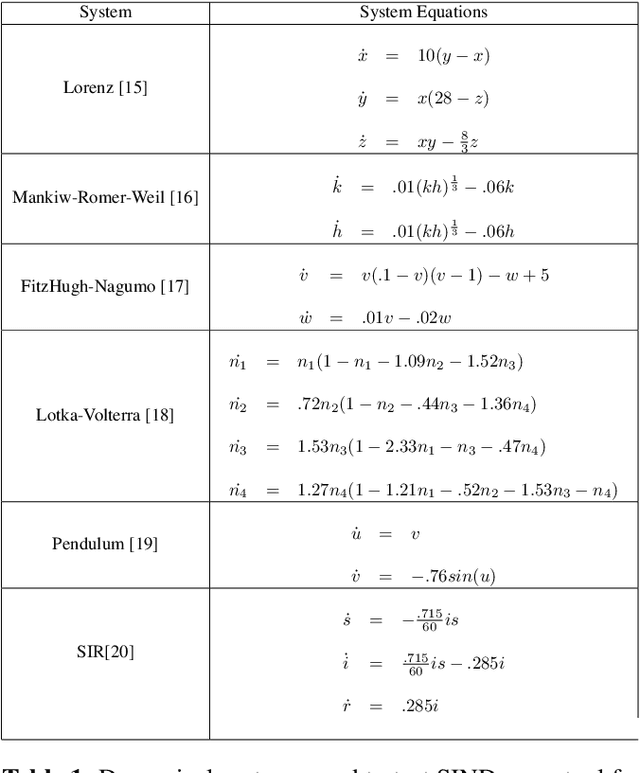

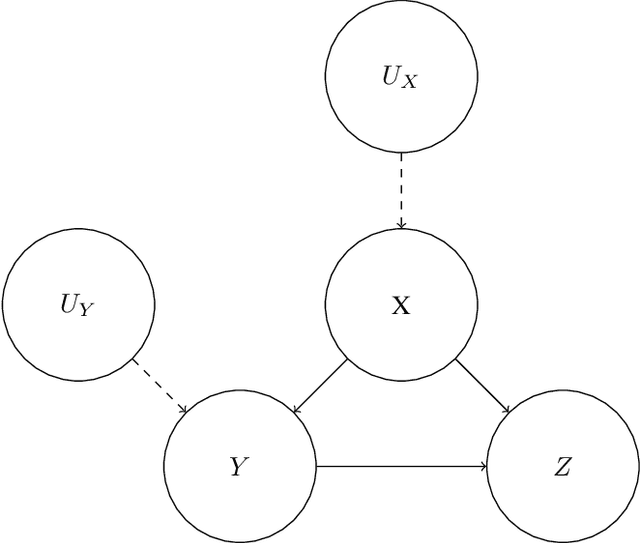

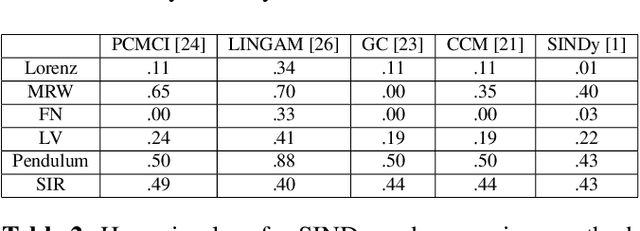

The SINDy algorithm has been successfully used to identify the governing equations of dynamical systems from time series data. In this paper, we argue that this makes SINDy a potentially useful tool for causal discovery and that existing tools for causal discovery can be used to dramatically improve the performance of SINDy as a tool for robust sparse modeling and system identification. We then demonstrate empirically that augmenting the SINDy algorithm with tools from causal discovery can provide engineers with a tool for learning causally robust governing equations.
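As a rough illustration of the sparse regression at the core of SINDy, the sketch below applies sequentially thresholded least squares to a toy system. The toy dynamics (dx/dt = -2x), the candidate library, the threshold, and the function name `stlsq` are assumptions made for this example and are not taken from the paper; the causal-discovery augmentation the paper proposes is not shown.

```python
import numpy as np

def stlsq(theta, dxdt, threshold=0.1, n_iter=10):
    """Sequentially thresholded least squares (hypothetical helper name).

    theta: (n_samples, n_terms) library of candidate functions.
    dxdt:  (n_samples,) estimated time derivative.
    """
    xi = np.linalg.lstsq(theta, dxdt, rcond=None)[0]
    for _ in range(n_iter):
        small = np.abs(xi) < threshold
        xi[small] = 0.0            # prune weak candidate terms
        big = ~small
        if not big.any():
            break
        # refit using only the surviving terms
        xi[big] = np.linalg.lstsq(theta[:, big], dxdt, rcond=None)[0]
    return xi

# Toy data (assumed): samples of x(t) = exp(-2 t), so dx/dt = -2 x.
t = np.linspace(0.0, 2.0, 201)
x = np.exp(-2.0 * t)
dxdt = np.gradient(x, t, edge_order=2)   # finite-difference derivative

# Candidate library: [1, x, x^2, x^3]
theta = np.column_stack([np.ones_like(x), x, x**2, x**3])
xi = stlsq(theta, dxdt)
print(xi)
```

Only the coefficient on `x` should survive the thresholding, with a value close to -2.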

Auxiliary-Adaptive Control Barrier Functions for Safety Critical Systems

Apr 01, 2023

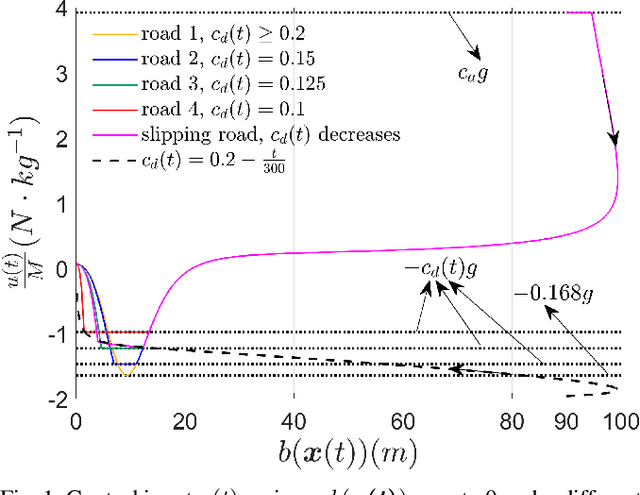

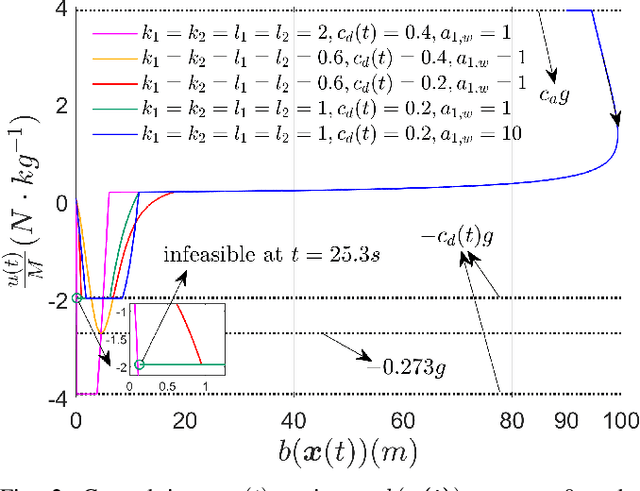

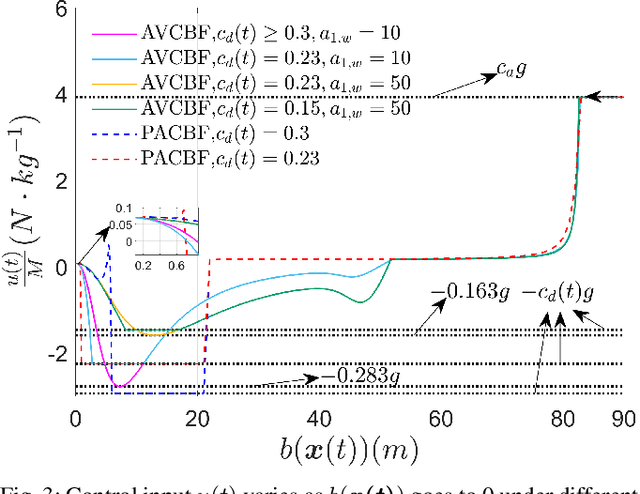

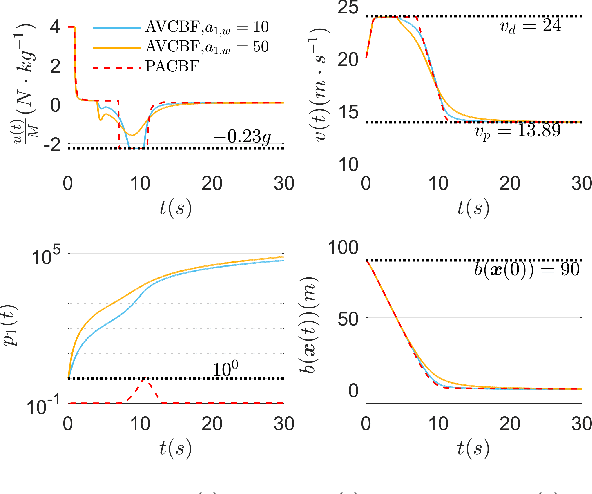

This paper studies safety guarantees for systems with time-varying control bounds. It has been shown that optimizing quadratic costs subject to state and control constraints can be reduced to a sequence of Quadratic Programs (QPs) using Control Barrier Functions (CBFs). One of the main challenges in this method is that the CBF-based QP could easily become infeasible under tight control bounds, especially when the control bounds are time-varying. The recently proposed adaptive CBFs have addressed such infeasibility issues, but require extensive and non-trivial hyperparameter tuning for the CBF-based QP and may introduce overshooting control near the boundaries of safe sets. To address these issues, we propose a new type of adaptive CBF called the Auxiliary Variable CBF (AVCBF). Specifically, we introduce an auxiliary variable that multiplies each CBF itself, and define dynamics for the auxiliary variable to adapt it in constructing the corresponding CBF constraint. In this way, we can improve the feasibility of the CBF-based QP while avoiding extensive parameter tuning with non-overshooting control since the formulation is identical to classical CBF methods. We demonstrate the advantages of using AVCBFs and compare them with existing techniques on an Adaptive Cruise Control (ACC) problem with time-varying control bounds.
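To make the infeasibility problem concrete, here is a minimal sketch of a classical CBF-based QP (not the AVCBF method proposed in the paper). It assumes a one-dimensional single integrator dx/dt = u, the barrier h(x) = x_max - x, and a linear class-K function with gain `alpha`; all names and numbers are illustrative. In this scalar case the QP that minimises (u - u_ref)^2 has a closed-form solution.

```python
def cbf_qp_1d(x, u_ref, x_max, alpha, u_min, u_max):
    """Classical CBF safety filter for dx/dt = u with h(x) = x_max - x.

    The CBF condition dh/dt + alpha * h >= 0 becomes u <= alpha * (x_max - x).
    Returns the control closest to u_ref that satisfies both the CBF
    condition and the control bounds, or None if no such control exists.
    """
    upper = min(u_max, alpha * (x_max - x))
    if upper < u_min:
        return None  # QP infeasible: CBF constraint conflicts with the bounds
    return min(max(u_ref, u_min), upper)


# Far from the boundary the reference control is only clipped.
print(cbf_qp_1d(x=0.0, u_ref=2.0, x_max=1.0, alpha=1.0, u_min=-1.0, u_max=1.5))

# Near the boundary with a tight lower bound the QP has no solution.
print(cbf_qp_1d(x=0.9, u_ref=2.0, x_max=1.0, alpha=1.0, u_min=0.5, u_max=1.5))
```

The second call returns `None` because the CBF constraint demands u <= 0.1 while the control bound demands u >= 0.5, which is the kind of conflict that adaptive CBF formulations aim to avoid.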

Distribution estimation and change-point detection for time series via DNN-based GANs

Nov 26, 2022

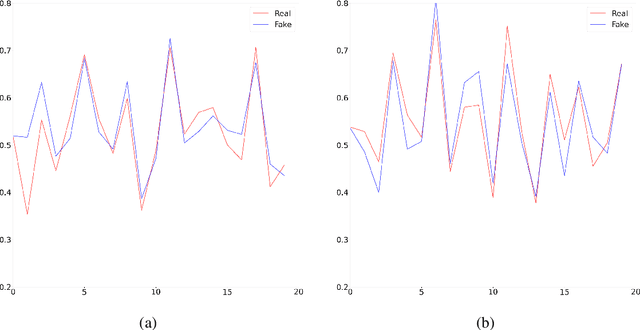

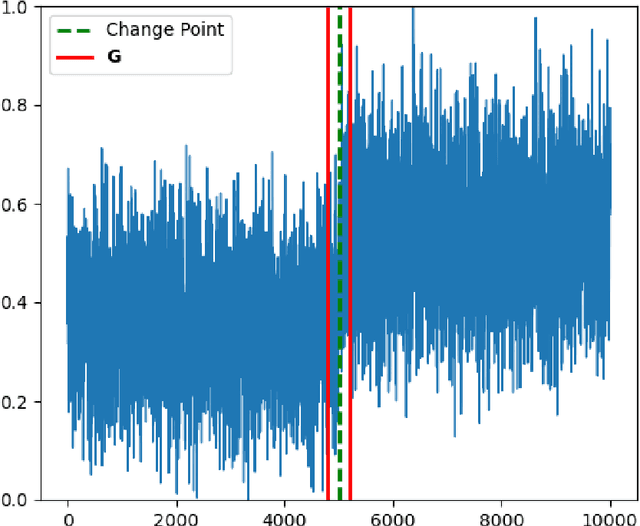

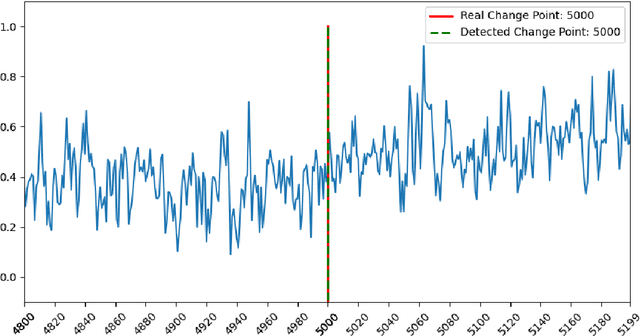

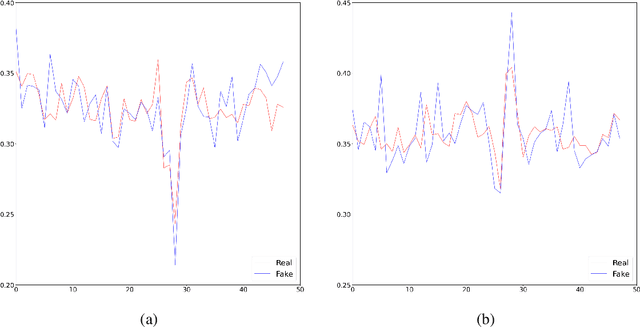

Generative adversarial networks (GANs) have recently been applied to estimating the distribution of independent and identically distributed data, with excellent performance. In this paper, we use the blocking technique to demonstrate the effectiveness of GANs for estimating the distribution of stationary time series. Theoretically, we obtain a non-asymptotic error bound for the Deep Neural Network (DNN)-based GAN estimator for the stationary distribution of the time series. Based on our theoretical analysis, we put forward an algorithm for detecting the change-point in time series. In our first experiment, we simulate a stationary time series with a multivariate autoregressive model to test our GAN estimator, while the second experiment uses our proposed algorithm to detect the change-point in a time series. Both perform very well. The third experiment uses our GAN estimator to learn the distribution of real financial time series data, which is not stationary; the results show that our estimator cannot match the distribution of the time series very well but gives the right changing tendency.

An Edge Assisted Robust Smart Traffic Management and Signalling System for Guiding Emergency Vehicles During Peak Hours

May 02, 2023

Traffic congestion is unavoidable in many cities in India and other countries, and it is an issue of major concern. The steep rise in the number of automobiles on the roads, together with old infrastructure, accidents, pedestrian traffic, and traffic rule violations, all add to challenging traffic conditions. Given these poor traffic conditions, there is a critical need for automatic detection and signaling systems. Various technologies are already used for traffic management and signaling, such as video analysis, infrared sensors, and wireless sensors. The main issue with these methods is that they are very costly and require high maintenance. In this paper, we propose a three-phase system that can guide emergency vehicles and manage traffic based on the degree of congestion. In the first phase, the system processes the captured images and calculates the Index value, which is used to determine the degree of congestion. The Index value of a particular road depends on its width and the length up to which the camera captures images of that road. These parameters (length and width) must be supplied as input when setting up the system. In the second phase, the system checks whether any emergency vehicles are present in any lane. In the third phase, the whole processing and decision-making part is performed at the edge server. The proposed model is robust and takes into consideration adverse weather conditions such as haze, fog, and wind. It also works very efficiently in low-light conditions. The edge server is a strategically placed server that provides low latency and better connectivity. Using edge technology in this traffic management system reduces the strain on cloud servers, and the system becomes more reliable in real time because latency and bandwidth usage are reduced by processing at the intermediate edge server.

ThreatCrawl: A BERT-based Focused Crawler for the Cybersecurity Domain

Apr 26, 2023

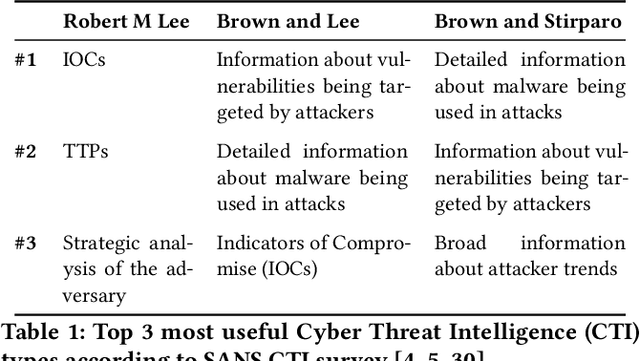

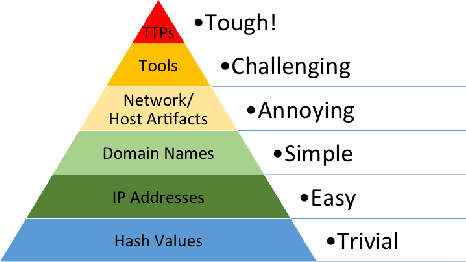

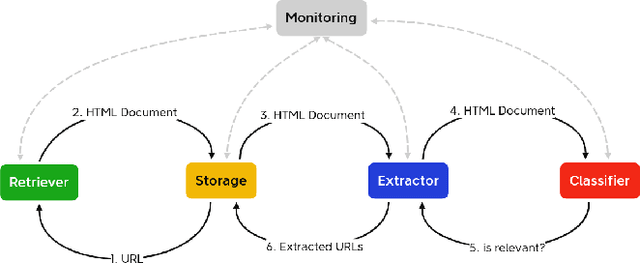

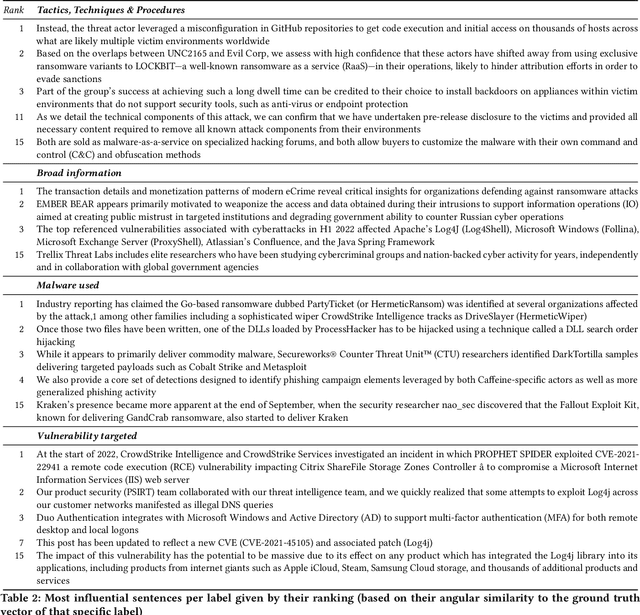

Publicly available information contains valuable information for Cyber Threat Intelligence (CTI). This can be used to prevent attacks that have already taken place on other systems. Ideally, only the initial attack succeeds and all subsequent ones are detected and stopped. But while there are different standards to exchange this information, a lot of it is shared in articles or blog posts in non-standardized ways. Manually scanning through multiple online portals and news pages to discover new threats and extracting them is a time-consuming task. To automate parts of this scanning process, multiple papers propose extractors that use Natural Language Processing (NLP) to extract Indicators of Compromise (IOCs) from documents. However, while this already solves the problem of extracting the information out of documents, the search for these documents is rarely considered. In this paper, a new focused crawler called ThreatCrawl is proposed, which uses Bidirectional Encoder Representations from Transformers (BERT)-based models to classify documents and adapt its crawling path dynamically. While ThreatCrawl has difficulty classifying the specific type of Open Source Intelligence (OSINT) named in texts, e.g., IOC content, it can successfully find relevant documents and modify its path accordingly. It yields harvest rates of up to 52%, which are, to the best of our knowledge, better than the current state of the art.
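The general idea of a focused crawler and its harvest rate (relevant pages divided by pages fetched) can be sketched as a best-first search over a link graph. This is a generic illustration, not ThreatCrawl itself: the toy graph, the `score` function standing in for a BERT-based classifier, and the 0.5 relevance cut-off are all assumptions.

```python
import heapq

def focused_crawl(graph, seeds, score, budget, relevant_at=0.5):
    """Best-first crawl: links found on high-scoring pages are visited first.

    graph: dict mapping a page to the pages it links to (toy stand-in for the web).
    score: callable returning a relevance score in [0, 1] for a page
           (hypothetical stand-in for a learned document classifier).
    Returns the visit order and the harvest rate.
    """
    frontier = [(-1.0, s) for s in seeds]   # negated priority gives a max-heap
    heapq.heapify(frontier)
    seen = set(seeds)
    visited, relevant = [], 0
    while frontier and len(visited) < budget:
        _, page = heapq.heappop(frontier)
        visited.append(page)
        s = score(page)
        if s >= relevant_at:
            relevant += 1
        for link in graph.get(page, []):
            if link not in seen:
                seen.add(link)
                heapq.heappush(frontier, (-s, link))  # inherit the parent's score
    harvest_rate = relevant / len(visited) if visited else 0.0
    return visited, harvest_rate


toy_graph = {
    "threat-blog": ["threat-report", "sports-news"],
    "threat-report": ["threat-iocs"],
    "sports-news": ["recipes"],
}
toy_score = lambda page: 0.9 if "threat" in page else 0.1

visited, harvest_rate = focused_crawl(toy_graph, ["threat-blog"], toy_score, budget=4)
print(visited, harvest_rate)
```

With a budget of four pages the crawler follows the link from the relevant report before the link from the irrelevant news page, so `recipes` is never fetched.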

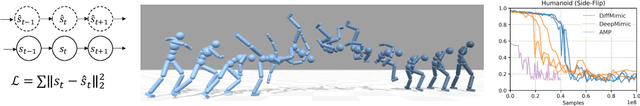

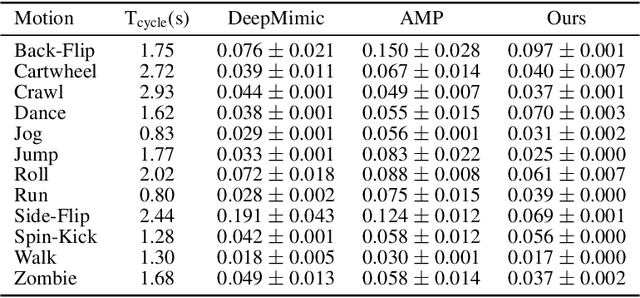

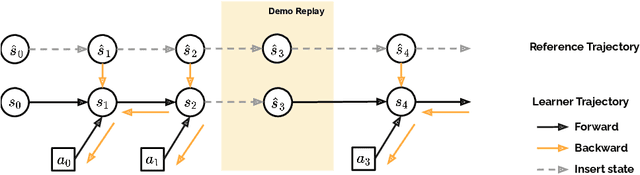

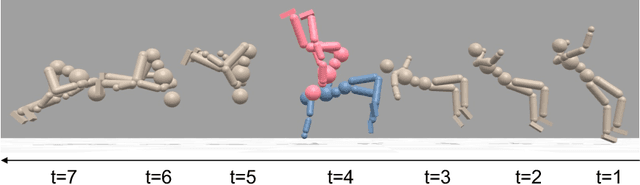

DiffMimic: Efficient Motion Mimicking with Differentiable Physics

Apr 26, 2023

Motion mimicking is a foundational task in physics-based character animation. However, most existing motion mimicking methods are built upon reinforcement learning (RL) and suffer from heavy reward engineering, high variance, and slow convergence with hard explorations. Specifically, they usually take tens of hours or even days of training to mimic a simple motion sequence, resulting in poor scalability. In this work, we leverage differentiable physics simulators (DPS) and propose an efficient motion mimicking method dubbed DiffMimic. Our key insight is that DPS casts a complex policy learning task into a much simpler state matching problem. In particular, DPS learns a stable policy by analytical gradients with ground-truth physical priors, leading to significantly faster and more stable convergence than RL-based methods. Moreover, to escape from local optima, we utilize a Demonstration Replay mechanism to enable stable gradient backpropagation over a long horizon. Extensive experiments on standard benchmarks show that DiffMimic has better sample efficiency and time efficiency than existing methods (e.g., DeepMimic). Notably, DiffMimic allows a physically simulated character to learn Backflip after 10 minutes of training and be able to cycle it after 3 hours of training, while the existing approach may require about a day of training to cycle Backflip. More importantly, we hope DiffMimic can benefit more differentiable animation systems with techniques like differentiable clothes simulation in future research.

MHfit: Mobile Health Data for Predicting Athletics Fitness Using Machine Learning

Apr 26, 2023

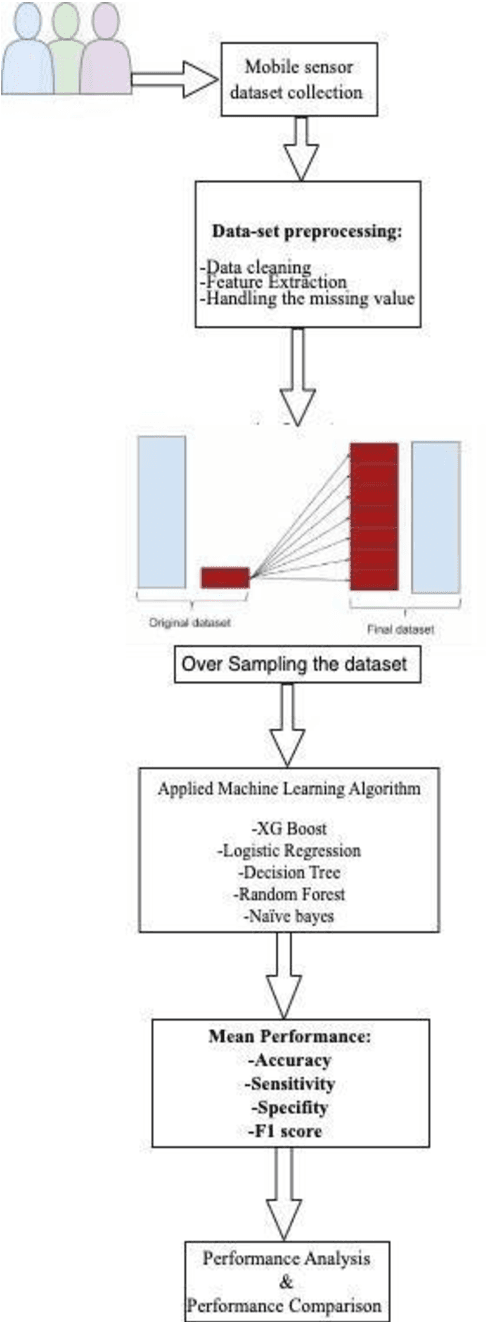

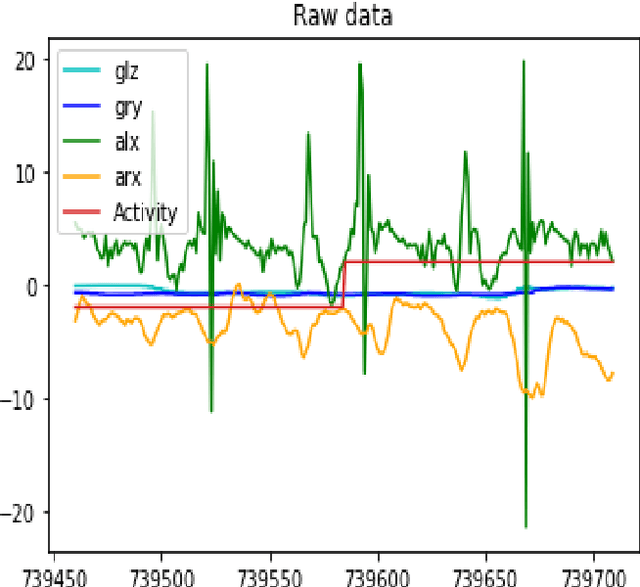

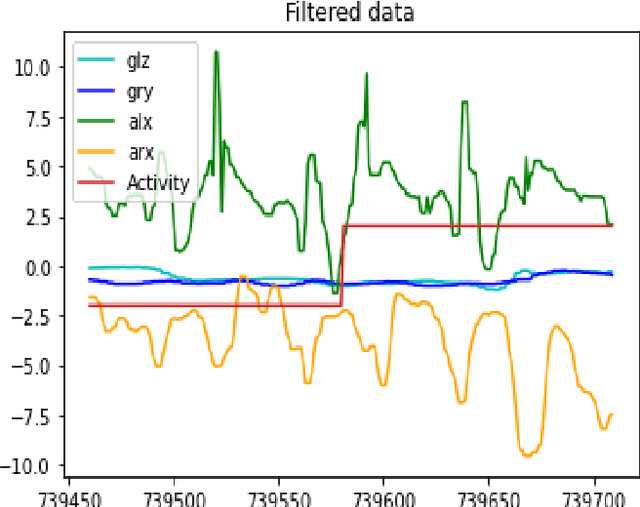

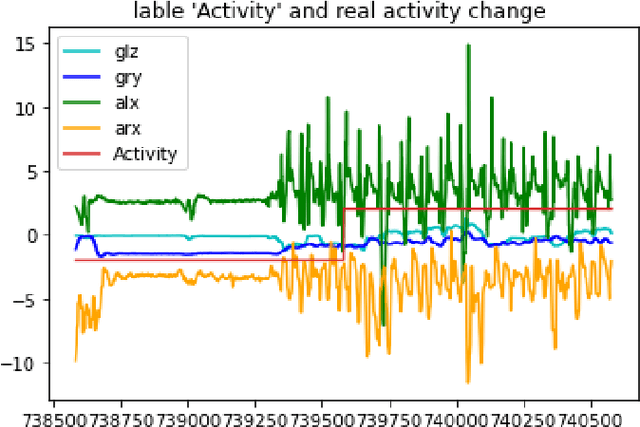

Mobile phones and other electronic gadgets or devices have aided in collecting data without the need for data entry. This paper focuses specifically on mobile health data. Mobile health uses mobile devices to gather clinical health data and track patient vitals in real time. Our study aims to help small and large sports teams decide whether an athlete is a good fit for a particular game by comparing several machine learning algorithms that predict human behavior and health from data collected by mobile devices and sensors placed on patients. In this study, we obtained the dataset from a similar study done on mhealth. The dataset contains vital-sign recordings of ten volunteers from different backgrounds, who performed several physical activities with a sensor placed on their bodies. Our study used five machine learning algorithms (XGBoost, Naive Bayes, Decision Tree, Random Forest, and Logistic Regression) to analyze and predict human health behavior. XGBoost performed better than the other machine learning algorithms and achieved 95.2% accuracy, 99.5% sensitivity, 99.5% specificity, and a 99.66% F1 score. Our research indicates a promising future for mhealth in predicting human behavior; further research and exploration are needed before it can be made available for commercial use, specifically in the sports industry.
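For readers unfamiliar with the reported metrics, the sketch below shows how accuracy, sensitivity, specificity, and F1 score follow from a binary confusion matrix. The counts are made up for illustration and are not the study's data; the study's task may well be multi-class, in which case such metrics are typically computed per class and averaged.

```python
def binary_metrics(tp, fp, fn, tn):
    """Standard metrics from binary confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    sensitivity = tp / (tp + fn)          # recall / true positive rate
    specificity = tn / (tn + fp)          # true negative rate
    f1 = 2 * tp / (2 * tp + fp + fn)      # harmonic mean of precision and recall
    return {
        "accuracy": accuracy,
        "sensitivity": sensitivity,
        "specificity": specificity,
        "f1": f1,
    }


# Illustrative counts only (assumed, not from the paper).
metrics = binary_metrics(tp=90, fp=5, fn=10, tn=95)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```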

Low-Complexity Reliability-Based Equalization and Detection for OTFS-NOMA

Apr 26, 2023

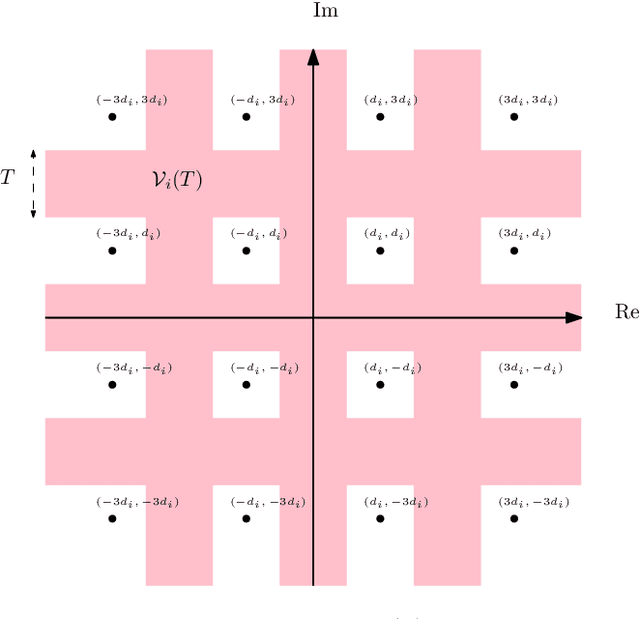

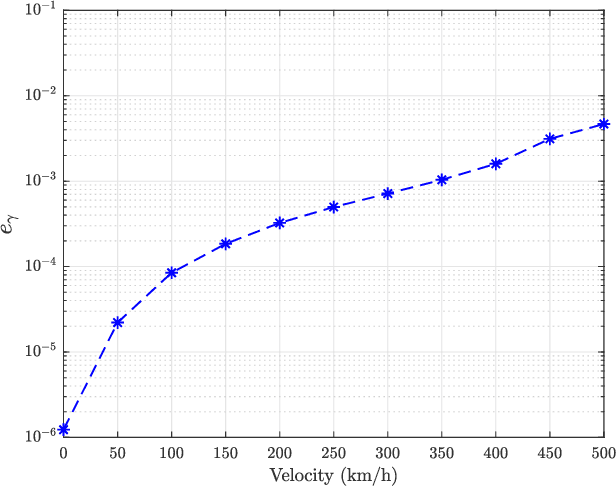

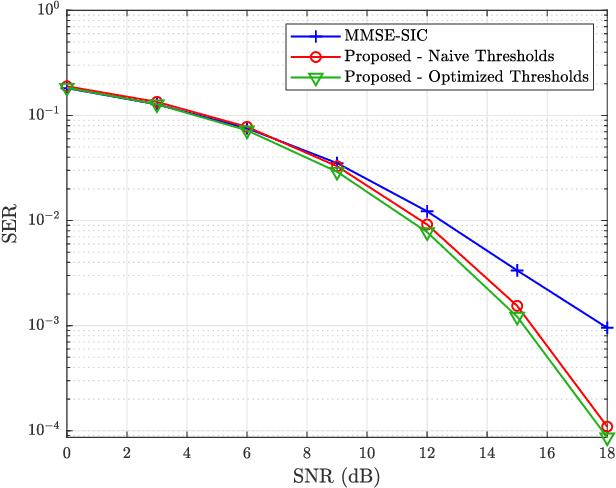

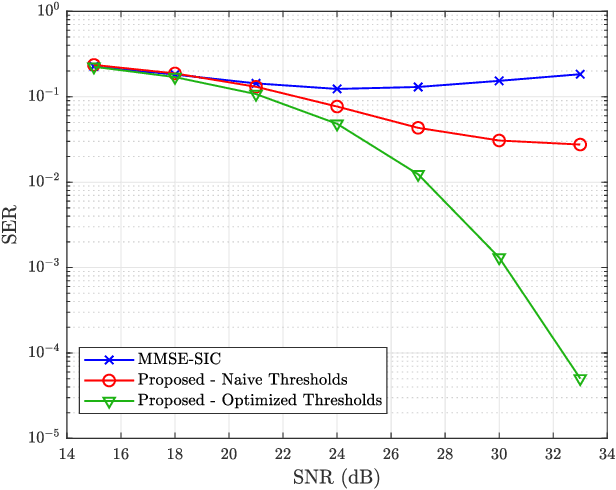

Orthogonal time frequency space (OTFS) modulation has recently emerged as a potential 6G candidate waveform which provides improved performance in high-mobility scenarios. In this paper we investigate the combination of OTFS with non-orthogonal multiple access (NOMA). Existing equalization and detection methods for OTFS-NOMA, such as minimum-mean-squared error with successive interference cancellation (MMSE-SIC), suffer from poor performance. Additionally, existing iterative methods for single-user OTFS based on low-complexity iterative least-squares solvers are not directly applicable to the NOMA scenario due to the presence of multi-user interference (MUI). Motivated by this, in this paper we propose a low-complexity method for equalization and detection for OTFS-NOMA. The proposed method uses a novel reliability zone (RZ) detection scheme which estimates the reliable symbols of the users and then uses interference cancellation to remove MUI. The thresholds for the RZ detector are optimized in a greedy manner to further improve detection performance. In order to optimize these thresholds, we modify the least squares with QR-factorization (LSQR) algorithm used for channel equalization to compute the post-equalization mean-squared error (MSE), and track the evolution of this MSE throughout the iterative detection process. Numerical results demonstrate the superiority of the proposed equalization and detection technique to the existing MMSE-SIC benchmark in terms of symbol error rate (SER).

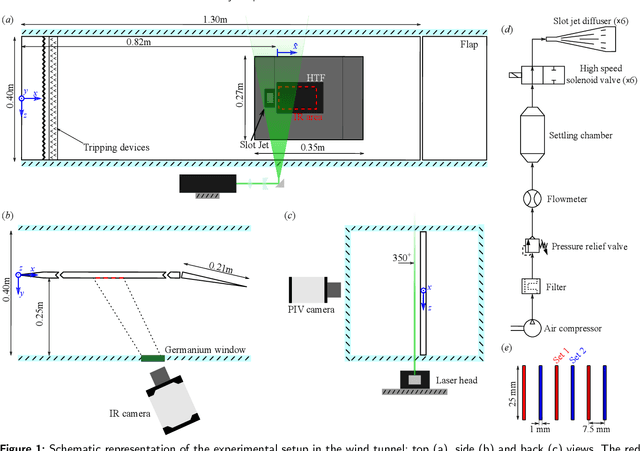

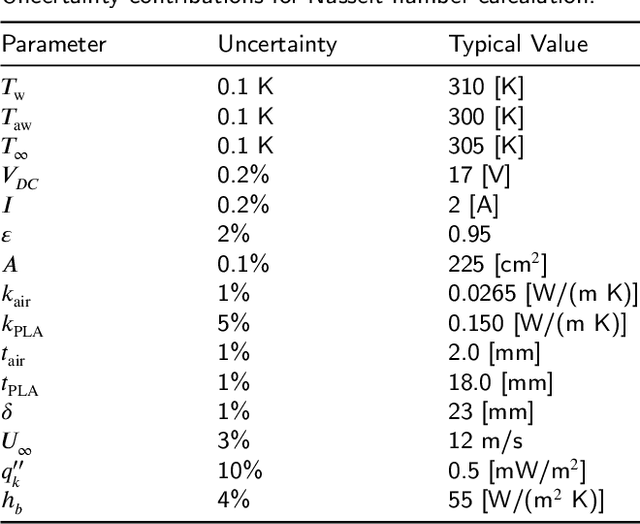

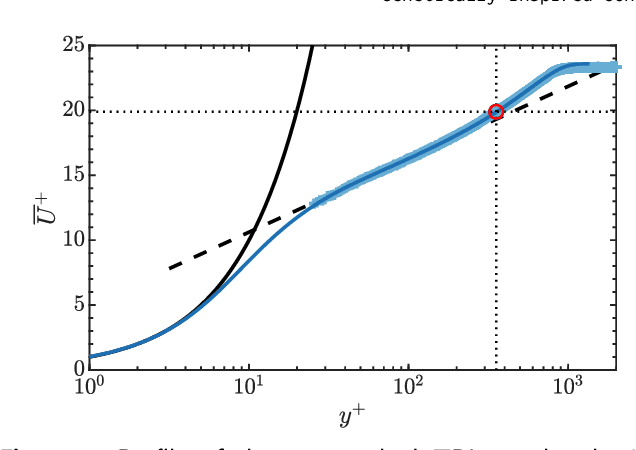

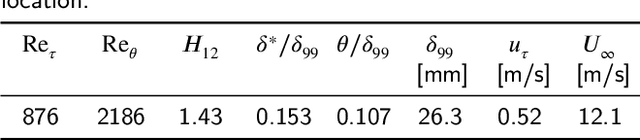

Genetically-inspired convective heat transfer enhancement in a turbulent boundary layer

Apr 26, 2023

The convective heat transfer in a turbulent boundary layer (TBL) on a flat plate is enhanced using an artificial intelligence approach based on linear genetic algorithms control (LGAC). The actuator is a set of six slot jets in crossflow aligned with the freestream. An open-loop optimal periodic forcing is defined by the carrier frequency, the duty cycle and the phase difference between actuators as control parameters. The control laws are optimised with respect to the unperturbed TBL and to the actuation with a steady jet. The cost function includes the wall convective heat transfer rate and the cost of the actuation. The performance of the controller is assessed by infrared thermography and also characterised with particle image velocimetry measurements. The optimal controller yields a slightly asymmetric flow field. The LGAC algorithm converges to the same frequency and duty cycle for all the actuators. It is noted that such frequency is strikingly equal to the inverse of the characteristic travel time of large-scale turbulent structures advected within the near-wall region. The phase difference between multiple jet actuation has been shown to be very relevant and the main driver of flow asymmetry. The results pinpoint the potential of machine learning control in unravelling unexplored controllers within the actuation space. Our study furthermore demonstrates the viability of employing sophisticated measurement techniques together with advanced algorithms in an experimental investigation.
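The three control parameters named above (carrier frequency, duty cycle, and phase difference) are enough to describe an on/off pulsed-jet signal. The sketch below is one plausible parameterisation, with phase expressed as a fraction of the period; the function name, the phase convention, and the numerical values are assumptions, and the genetic optimisation itself is not shown.

```python
def jet_is_on(t, frequency, duty_cycle, phase=0.0):
    """Return True while a pulsed jet is open at time t (seconds).

    frequency:  carrier frequency in Hz.
    duty_cycle: fraction of each period during which the jet is open (0 to 1).
    phase:      delay of this jet as a fraction of the period (assumed convention).
    """
    cycle_position = (t * frequency - phase) % 1.0
    return cycle_position < duty_cycle


# Two jets at the same frequency and duty cycle, half a period apart (illustrative values).
for t in (0.01, 0.06):
    states = [jet_is_on(t, frequency=10.0, duty_cycle=0.25, phase=p) for p in (0.0, 0.5)]
    print(t, states)
```

With these values the two jets fire in alternation: the first is open at t = 0.01 s and the second at t = 0.06 s.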

TranViT: An Integrated Vision Transformer Framework for Discrete Transit Travel Time Range Prediction

Nov 25, 2022

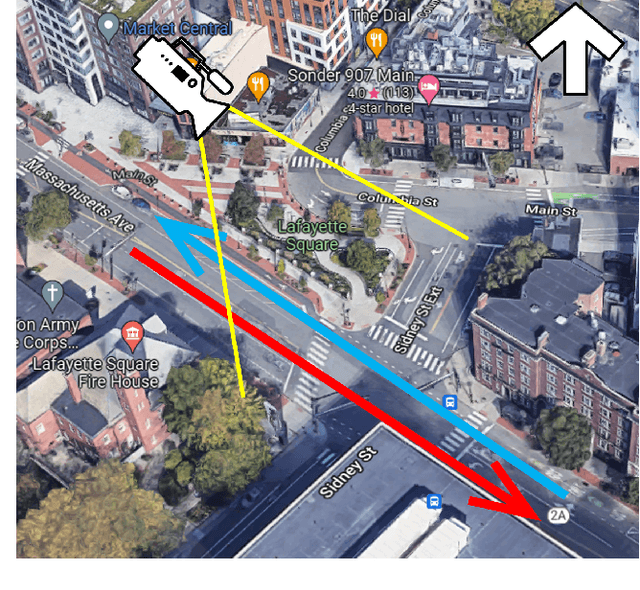

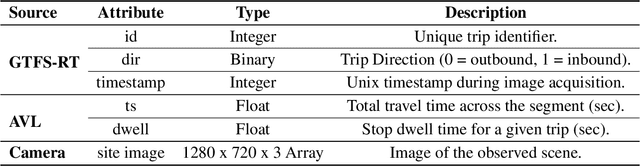

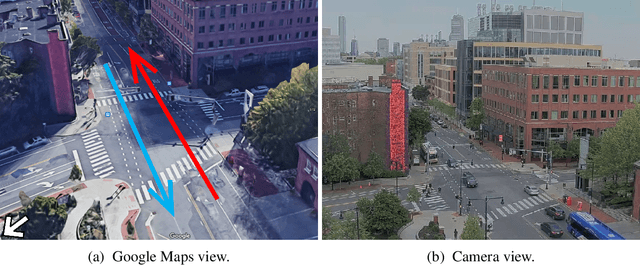

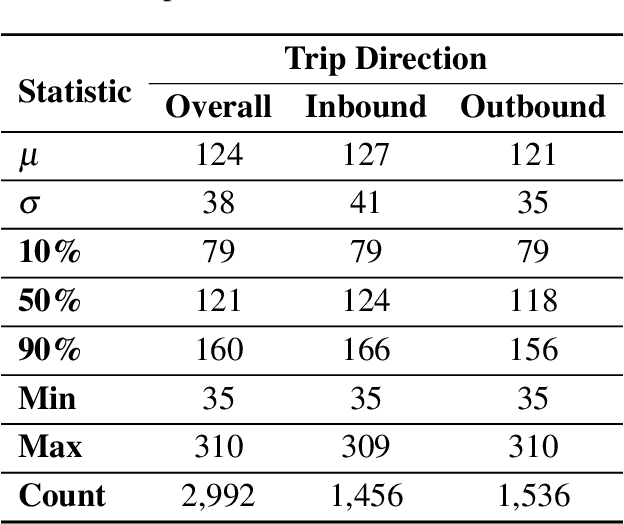

Accurate travel time estimation is paramount for providing transit users with reliable schedules and dependable real-time information. This paper proposes and evaluates a novel end-to-end framework for transit and roadside image data acquisition, labeling, and model training to predict transit travel times across a segment of interest. General Transit Feed Specification (GTFS) real-time data is used as an activation mechanism for a roadside camera unit monitoring a segment of Massachusetts Avenue in Cambridge, MA. Ground truth labels are generated for the acquired images based on the observed travel time percentiles across the monitored segment obtained from Automated Vehicle Location (AVL) data. The generated labeled image dataset is then used to train and evaluate a Vision Transformer (ViT) model to predict a discrete transit travel time range (band). The results of this exploratory study illustrate that the ViT model is able to learn image features and contents that best help it deduce the expected travel time range, with an average validation accuracy between 80% and 85%. We also demonstrate how this discrete travel time band prediction can subsequently be utilized to improve continuous transit travel time estimation. The workflow and results presented in this study provide an end-to-end, scalable, automated, and highly efficient approach for integrating traditional transit data sources and roadside imagery to improve the estimation of transit travel duration. This work also demonstrates the value of incorporating real-time information from computer-vision sources, which are becoming increasingly accessible and can have major implications for improving operations and passenger real-time information.
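The percentile-based labeling step can be pictured as follows: cut points are taken from the distribution of observed travel times, and each new observation is assigned the index of the band it falls into. The number of bands, the sample travel times, and the helper name `make_band_labeler` are assumptions for illustration; the paper's actual percentile choices are not given in the abstract.

```python
import bisect
import statistics

def make_band_labeler(historical_times, n_bands=3):
    """Build a function mapping a travel time to a discrete band index.

    Cut points are the quantiles of the historical travel times, so each
    band covers roughly the same share of past observations.
    """
    cuts = statistics.quantiles(historical_times, n=n_bands)

    def label(travel_time):
        # Band 0 is the fastest range, band n_bands - 1 the slowest.
        return bisect.bisect_right(cuts, travel_time)

    return label


# Illustrative travel times in seconds (assumed, not AVL data from the study).
history = [60, 70, 80, 90, 100, 110, 120, 130, 140]
label = make_band_labeler(history)
print([label(t) for t in (75, 100, 150)])
```

Each image captured during a trip would then inherit the band index of that trip's observed travel time as its training label.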
