"Time": models, code, and papers

Interpreting Vision and Language Generative Models with Semantic Visual Priors

May 04, 2023

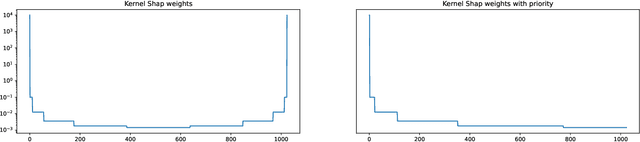

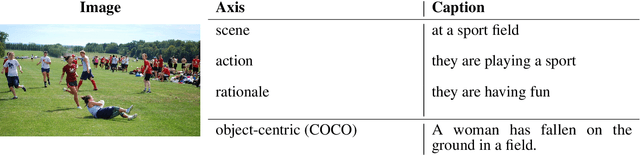

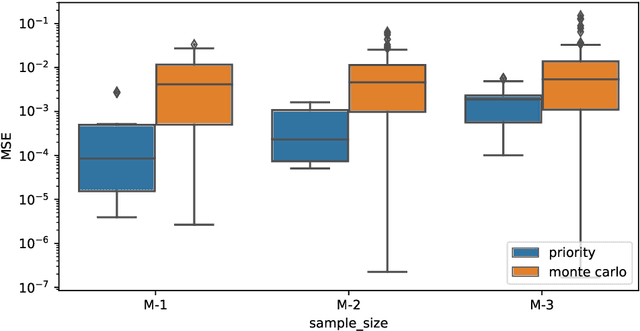

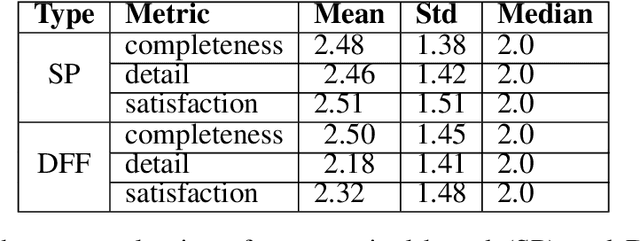

When applied to image-to-text models, interpretability methods often provide token-by-token explanations; namely, they compute a visual explanation for each token of the generated sequence. Those explanations are expensive to compute and unable to comprehensively explain the model's output. Therefore, these models often require some sort of approximation that eventually leads to misleading explanations. We develop a framework based on SHAP that generates comprehensive, meaningful explanations by leveraging the meaning representation of the output sequence as a whole. Moreover, by exploiting semantic priors in the visual backbone, we extract an arbitrary number of features, which allows the efficient computation of Shapley values on large-scale models while generating highly meaningful visual explanations. We demonstrate that our method generates semantically more expressive explanations than traditional methods at a lower compute cost and that it can be generalized to other explainability methods.
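Why the number of features matters for Shapley values can be seen in a minimal sketch. This is not the authors' implementation: the three "image regions" and the `toy_score` function are hypothetical stand-ins for a model's output score, and the exact computation shown here evaluates the score on every subset of features, which is only feasible when the feature count is small.

```python
from itertools import combinations
from math import factorial


def shapley_values(n_features, value_fn):
    """Exact Shapley values for a set function value_fn(frozenset) -> float."""
    players = range(n_features)
    phi = [0.0] * n_features
    for i in players:
        others = [p for p in players if p != i]
        for k in range(len(others) + 1):
            # Shapley weight for coalitions of size k that exclude feature i
            weight = (factorial(k) * factorial(n_features - k - 1)
                      / factorial(n_features))
            for subset in combinations(others, k):
                s = frozenset(subset)
                # Marginal contribution of feature i to coalition s
                phi[i] += weight * (value_fn(s | {i}) - value_fn(s))
    return phi


def toy_score(visible):
    """Hypothetical model score given the set of visible image regions."""
    score = 0.0
    if 0 in visible:
        score += 0.5
    if 1 in visible:
        score += 0.2
    if 1 in visible and 2 in visible:
        score += 0.3  # interaction: region 2 only helps together with region 1
    return score


phi = shapley_values(3, toy_score)
print(phi)
```

The attributions sum to the difference between the score with all regions visible and the score with none, and the interaction term is split evenly between regions 1 and 2. The loop visits all 2^n subsets, so the cost doubles with each added feature.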

Deep Learning Aided Beamforming for Downlink Non Orthogonal Multiple Access Systems

May 04, 2023

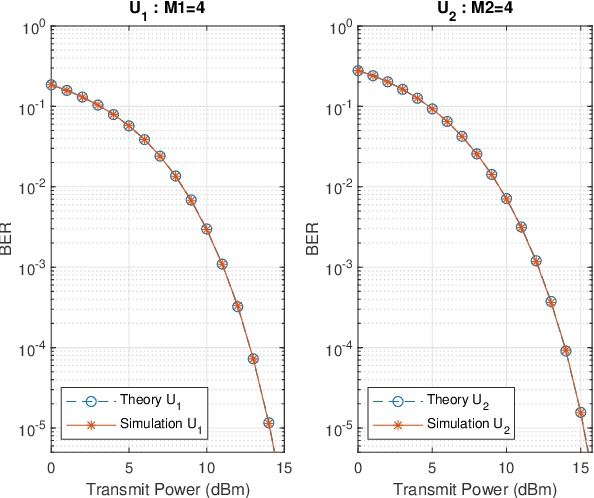

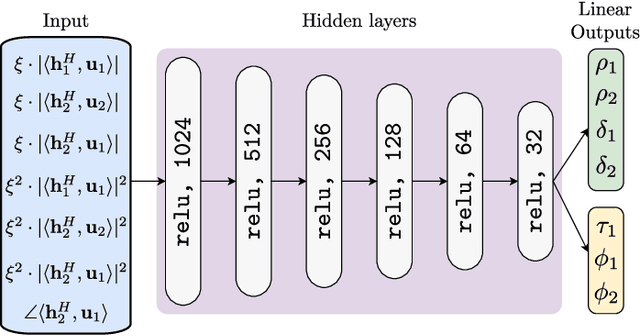

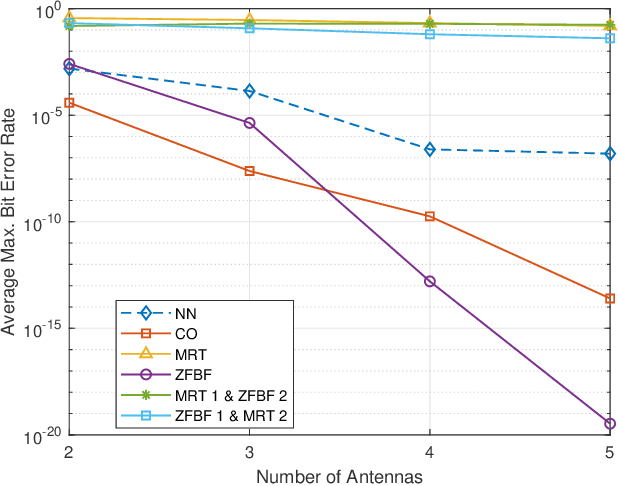

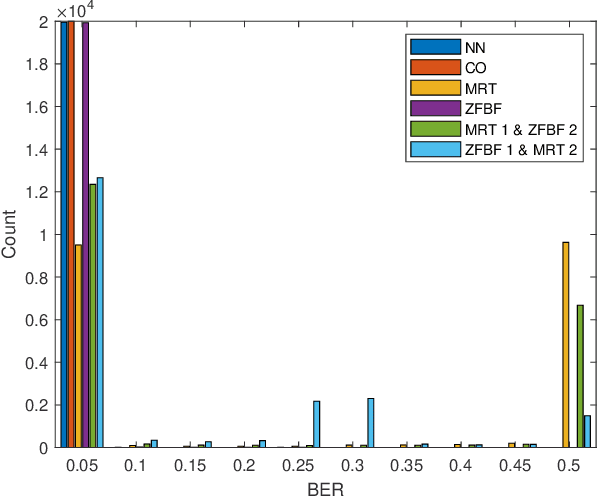

We investigate the problem of optimal beamformer design for the downlink of Multi Input Single Output (MISO) Non-Orthogonal Multiple Access (NOMA). In more detail, focusing on the two-user scenario, we first derive a closed-form expression for the Bit Error Rate (BER) experienced by both users. Using the derived expression, and in an effort to introduce fairness into our system design, we formulate the problem of finding the beamformer that minimizes the maximum of the BER values experienced by the two users, and we propose a Machine Learning (ML) based solution for this problem. Finally, we conduct simulations which verify that our proposed algorithm outperforms other existing benchmarks and that, in a variety of cases, it may result in BER performance close to the one obtained by time-consuming constrained optimization methods for solving the given optimization problem.

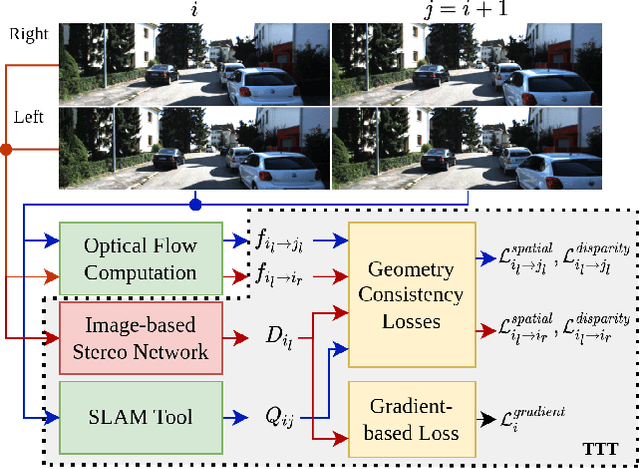

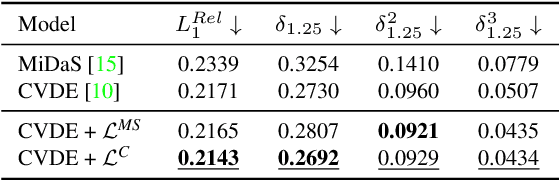

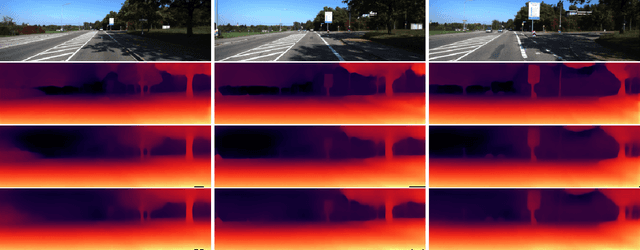

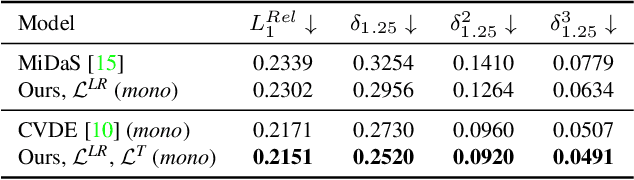

Edge-aware Consistent Stereo Video Depth Estimation

May 04, 2023

Video depth estimation is crucial in various applications, such as scene reconstruction and augmented reality. In contrast to the naive method of estimating depths from images, a more sophisticated approach uses temporal information, thereby eliminating flickering and geometrical inconsistencies. We propose a consistent method for dense video depth estimation; however, unlike the existing monocular methods, ours relates to stereo videos. This technique overcomes the limitations arising from the monocular input. As a benefit of using stereo inputs, a left-right consistency loss is introduced to improve the performance. Besides, we use SLAM-based camera pose estimation in the process. To address the problem of depth blurriness during test-time training (TTT), we present an edge-preserving loss function that improves the visibility of fine details while preserving geometrical consistency. We show that our edge-aware stereo video model can accurately estimate the dense depth maps.

Emulation Learning for Neuromimetic Systems

May 04, 2023

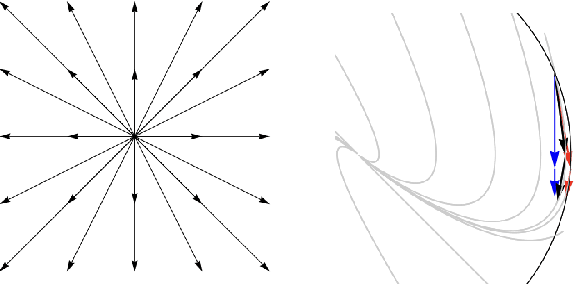

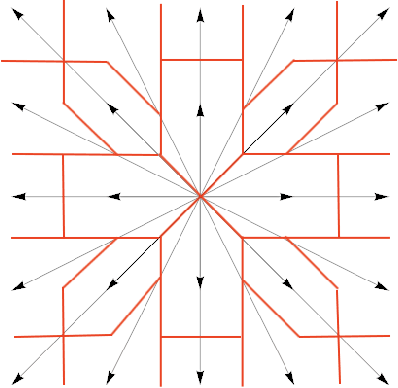

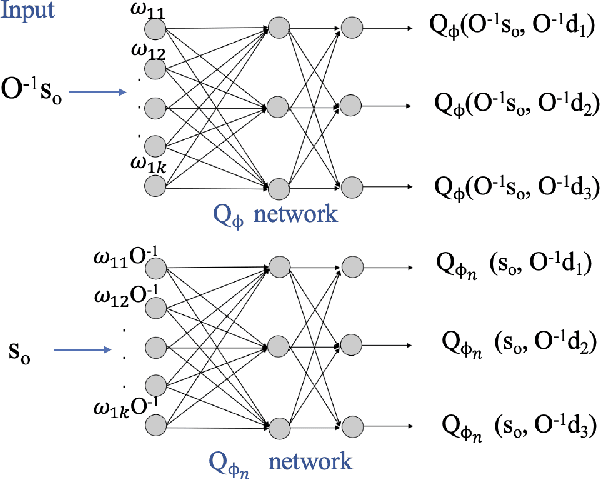

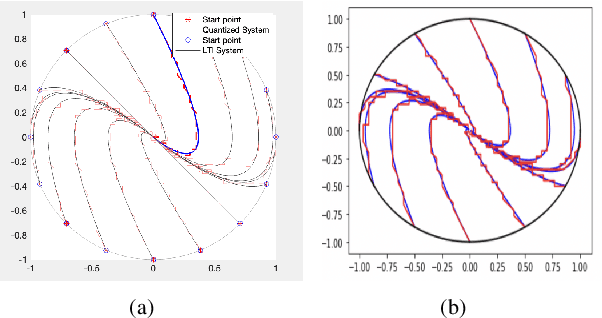

Building on our recent research on neural heuristic quantization systems, results on learning quantized motions and resilience to channel dropouts are reported. We propose a general emulation problem consistent with the neuromimetic paradigm. This optimal quantization problem can be solved by model predictive control (MPC), but because the optimization step involves integer programming, the approach suffers from combinatorial complexity when the number of input channels becomes large. Even if we collect data points to train a neural network simultaneously, collection of training data and the training itself are still time-consuming. Therefore, we propose a general Deep Q Network (DQN) algorithm that can not only learn the trajectory but also exhibit the advantages of resilience to channel dropout. Furthermore, to transfer the model to other emulation problems, a mapping-based transfer learning approach can be used directly on the current model to obtain the optimal direction for the new emulation problems.

Software-defined Optoacoustic Tomography

May 04, 2023

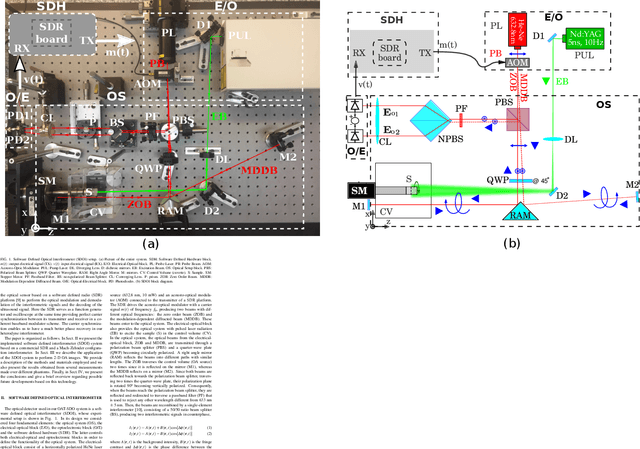

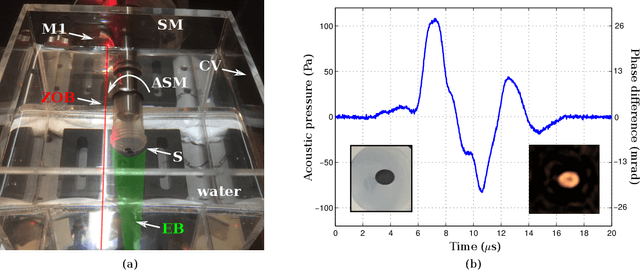

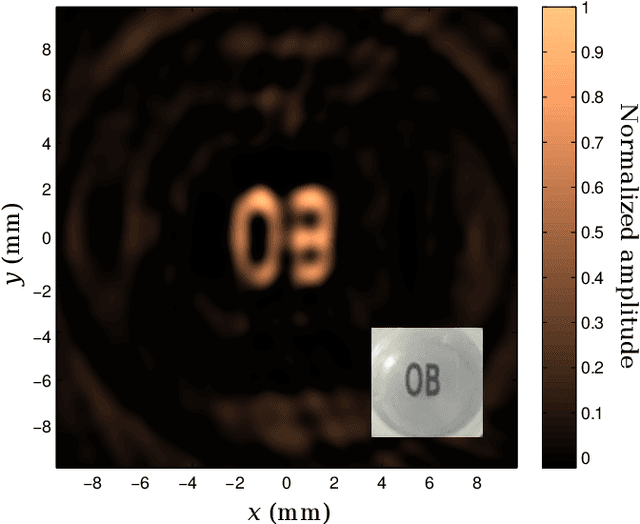

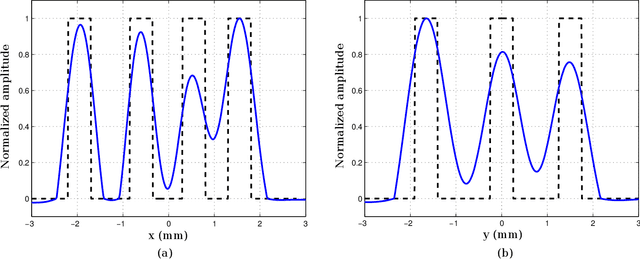

In this work we present the first application of software-defined optoelectronics (SDO) for bidimensional optoacoustic tomography (OAT). The SDO concept refers to optoelectronic systems where the functionality associated with the conditioning and processing of optical and electrical signals is digitally implemented and controlled by software. This paradigm takes advantage of the flexibility of software-defined hardware platforms to develop adaptive instrumentation systems. We implement an OAT system based on a heterodyne interferometer in a Mach-Zehnder configuration and a commercial software-defined radio (SDR) platform. Here the SDR serves as a function generator and oscilloscope at the same time, providing perfect carrier synchronization between its transmitter and receiver in a coherent baseband modulator scheme. This carrier synchronization enables much better phase recovery. We study the performance of the OAT SDO system by means of different bidimensional phantoms and the analysis of the reconstructed images.

* Published in Applied Optics

Towards unraveling calibration biases in medical image analysis

May 09, 2023

In recent years the development of artificial intelligence (AI) systems for automated medical image analysis has gained enormous momentum. At the same time, a large body of work has shown that AI systems can systematically and unfairly discriminate against certain populations in various application scenarios. These two facts have motivated the emergence of algorithmic fairness studies in this field. Most research on healthcare algorithmic fairness to date has focused on the assessment of biases in terms of classical discrimination metrics such as AUC and accuracy. Potential biases in terms of model calibration, however, have only recently begun to be evaluated. This is especially important when working with clinical decision support systems, as predictive uncertainty is key for health professionals to optimally evaluate and combine multiple sources of information. In this work we study discrimination and calibration biases in models trained for automatic detection of malignant dermatological conditions from skin lesion images. Importantly, we show how several typically employed calibration metrics are systematically biased with respect to sample sizes, and how this can lead to erroneous fairness analysis if not taken into consideration. This is of particular relevance to fairness studies, where data imbalance results in drastic sample size differences between demographic sub-groups, which, if not taken into account, can act as confounders.
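The sample-size effect described above can be illustrated with a small simulation. This is a sketch under stated assumptions, not the paper's experimental setup: it uses the common equal-width binned Expected Calibration Error (ECE) for a binary classifier, and `simulate_calibrated` is a hypothetical generator whose labels are drawn so that the predictions are perfectly calibrated by construction. Any non-zero ECE it reports is therefore estimation error.

```python
import random


def expected_calibration_error(probs, labels, n_bins=10):
    """Binned ECE: sample-weighted mean of |positive rate - mean probability|."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 goes in the last bin
        bins[idx].append((p, y))
    n = len(probs)
    ece = 0.0
    for b in bins:
        if not b:
            continue  # empty bins contribute nothing
        mean_prob = sum(p for p, _ in b) / len(b)
        positive_rate = sum(y for _, y in b) / len(b)
        ece += len(b) / n * abs(positive_rate - mean_prob)
    return ece


def simulate_calibrated(n, rng):
    """Hypothetical perfectly calibrated model: P(label = 1) equals the prediction."""
    probs = [rng.random() for _ in range(n)]
    labels = [1 if rng.random() < p else 0 for p in probs]
    return probs, labels


def mean_ece(n, trials, rng):
    """Average the ECE estimate over repeated draws of n samples."""
    total = 0.0
    for _ in range(trials):
        probs, labels = simulate_calibrated(n, rng)
        total += expected_calibration_error(probs, labels)
    return total / trials


rng = random.Random(0)
small_group = mean_ece(50, trials=200, rng=rng)    # e.g. an under-represented sub-group
large_group = mean_ece(5000, trials=20, rng=rng)   # e.g. a well-represented sub-group
print(f"mean ECE, n=50:   {small_group:.3f}")
print(f"mean ECE, n=5000: {large_group:.3f}")
```

Both groups come from the same calibrated model, yet the smaller group receives a noticeably larger ECE estimate, which is the kind of confounding a fairness comparison across unequal sub-groups has to account for.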

T-SciQ: Teaching Multimodal Chain-of-Thought Reasoning via Large Language Model Signals for Science Question Answering

May 09, 2023

Large Language Models (LLMs) have recently demonstrated exceptional performance in various Natural Language Processing (NLP) tasks. They have also shown the ability to perform chain-of-thought (CoT) reasoning to solve complex problems. Recent studies have explored CoT reasoning in complex multimodal scenarios, such as the science question answering task, by fine-tuning multimodal models with high-quality human-annotated CoT rationales. However, collecting high-quality CoT rationales is usually time-consuming and costly. Moreover, the annotated rationales are often inaccurate because they include redundant information or omit essential information. To address these issues, we propose a novel method termed T-SciQ that aims at teaching science question answering with LLM signals. The T-SciQ approach generates high-quality CoT rationales as teaching signals and uses them to train much smaller models to perform CoT reasoning in complex modalities. Additionally, we introduce a novel data mixing strategy to produce more effective teaching data samples for simple and complex science question answering problems. Extensive experimental results show that our T-SciQ method achieves a new state-of-the-art performance on the ScienceQA benchmark, with an accuracy of 96.18%. Moreover, our approach outperforms the most powerful fine-tuned baseline by 4.5%.

Neuralizer: General Neuroimage Analysis without Re-Training

May 09, 2023

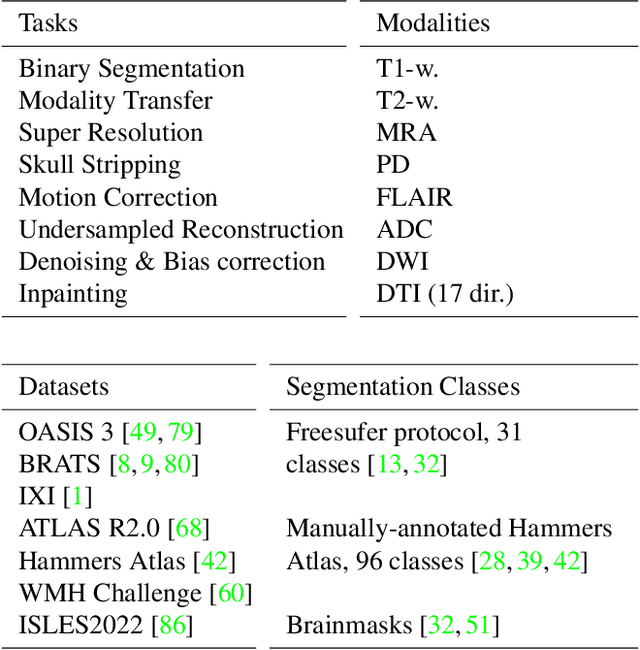

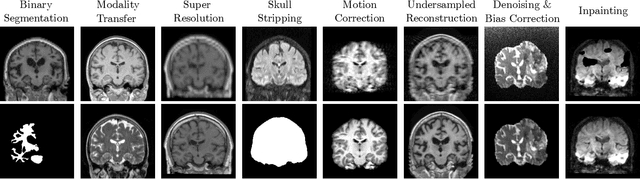

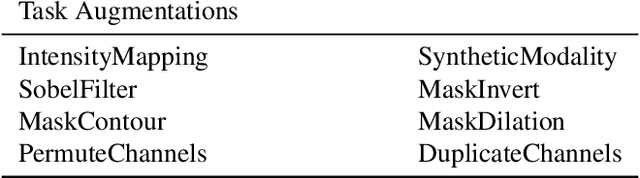

Neuroimage processing tasks like segmentation, reconstruction, and registration are central to the study of neuroscience. Robust deep learning strategies and architectures used to solve these tasks are often similar. Yet, when presented with a new task or a dataset with different visual characteristics, practitioners most often need to train a new model, or fine-tune an existing one. This is a time-consuming process that poses a substantial barrier for the thousands of neuroscientists and clinical researchers who often lack the resources or machine-learning expertise to train deep learning models. In practice, this leads to a lack of adoption of deep learning, and neuroscience tools being dominated by classical frameworks. We introduce Neuralizer, a single model that generalizes to previously unseen neuroimaging tasks and modalities without the need for re-training or fine-tuning. Tasks do not have to be known a priori, and generalization happens in a single forward pass during inference. The model can solve processing tasks across multiple image modalities, acquisition methods, and datasets, and generalize to tasks and modalities it has not been trained on. Our experiments on coronal slices show that when few annotated subjects are available, our multi-task network outperforms task-specific baselines without training on the task.

Optimizing Privacy, Utility and Efficiency in Constrained Multi-Objective Federated Learning

May 09, 2023

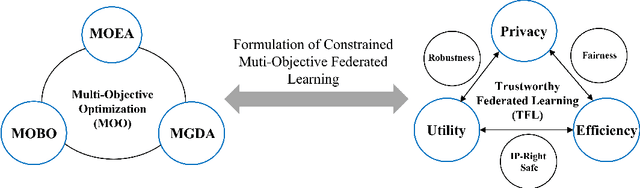

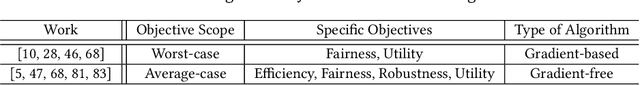

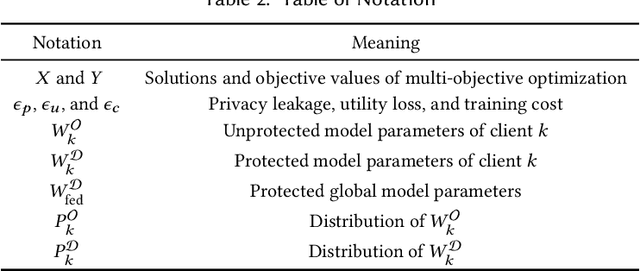

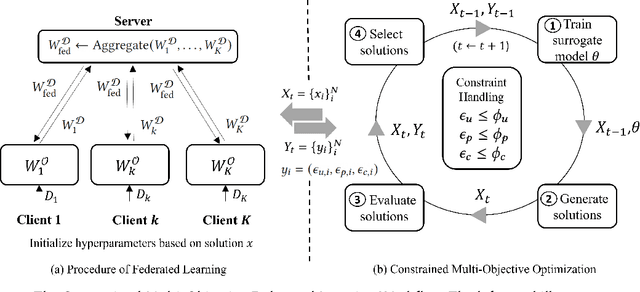

Conventionally, federated learning aims to optimize a single objective, typically the utility. However, for a federated learning system to be trustworthy, it needs to simultaneously satisfy multiple objectives, such as maximizing model performance, minimizing privacy leakage and training cost, and being robust to malicious attacks. Multi-Objective Optimization (MOO), which aims to optimize multiple conflicting objectives at the same time, is well suited to the optimization problem of Trustworthy Federated Learning (TFL). In this paper, we unify MOO and TFL by formulating the problem of constrained multi-objective federated learning (CMOFL). Under this formulation, existing MOO algorithms can be adapted to TFL straightforwardly. Different from existing CMOFL works focusing on utility, efficiency, fairness, and robustness, we consider optimizing privacy leakage along with utility loss and training cost, the three primary objectives of a TFL system. We develop two improved CMOFL algorithms based on NSGA-II and PSL, respectively, for effectively and efficiently finding Pareto optimal solutions, and we provide theoretical analysis on their convergence. We design specific measurements of privacy leakage, utility loss, and training cost for three privacy protection mechanisms: Randomization, BatchCrypt (an efficient version of homomorphic encryption), and Sparsification. Empirical experiments conducted under each of the three protection mechanisms demonstrate the effectiveness of our proposed algorithms.
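What "Pareto optimal" means for the three objectives named above can be shown with a short sketch. It is not the paper's NSGA-II or PSL variant, only the non-dominated filtering that such algorithms build on. The candidate tuples are made-up illustrative values of (privacy leakage, utility loss, training cost), all to be minimized.

```python
def dominates(a, b):
    """True if a is no worse than b on every objective and strictly better on one."""
    return (all(x <= y for x, y in zip(a, b))
            and any(x < y for x, y in zip(a, b)))


def pareto_front(points):
    """Keep the points that no other point dominates (all objectives minimized)."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical configurations: (privacy leakage, utility loss, training cost)
candidates = [
    (0.10, 0.30, 5.0),
    (0.20, 0.10, 4.0),
    (0.25, 0.35, 6.0),  # worse than the first candidate on every objective
    (0.05, 0.50, 9.0),  # lowest leakage, but at high utility loss and cost
]

front = pareto_front(candidates)
print(front)
```

The third configuration is discarded because another candidate beats it on all three objectives. The remaining three each represent a different trade-off, and none can be improved on one objective without giving up another.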

RATs-NAS: Redirection of Adjacent Trails on GCN for Neural Architecture Search

May 09, 2023

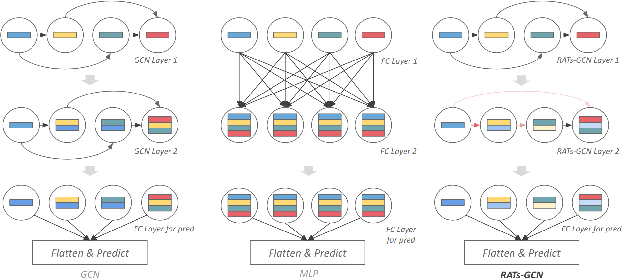

Various hand-designed CNN architectures, such as VGG, ResNet, and DenseNet, have been developed and achieve State-of-the-Art (SoTA) levels on different tasks. Neural Architecture Search (NAS) now focuses on automatically finding the best CNN architecture to handle the above tasks. However, verifying a searched architecture is very time-consuming, which makes predictor-based methods an essential branch of NAS. Two commonly used techniques to build predictors are graph convolutional networks (GCNs) and multilayer perceptrons (MLPs). In this paper, we consider the difference between GCN and MLP on adjacent operation trails and then propose the Redirected Adjacent Trails NAS (RATs-NAS) to quickly search for the desired neural network architecture. The RATs-NAS consists of two components: the Redirected Adjacent Trails GCN (RATs-GCN) and the Predictor-based Search Space Sampling (P3S) module. RATs-GCN can change trails and their strengths to search for a better neural network architecture. P3S can rapidly focus on tighter intervals of FLOPs in the search space. Based on our observations on cell-based NAS, we believe that architectures with similar FLOPs will perform similarly. Finally, the RATs-NAS consisting of RATs-GCN and P3S beats WeakNAS, Arch-Graph, and others by a significant margin on three sub-datasets of NASBench-201.
