"Time": models, code, and papers

Continuous Time-Delay Estimation From Sampled Measurements

Nov 20, 2022

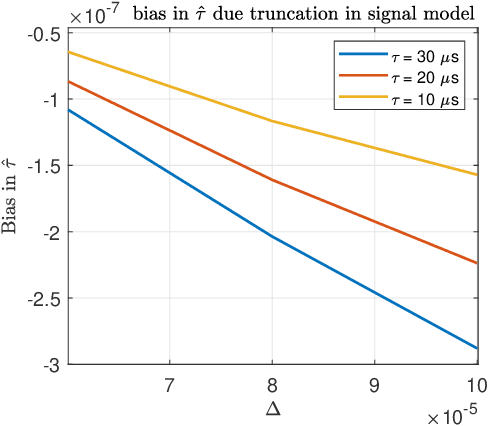

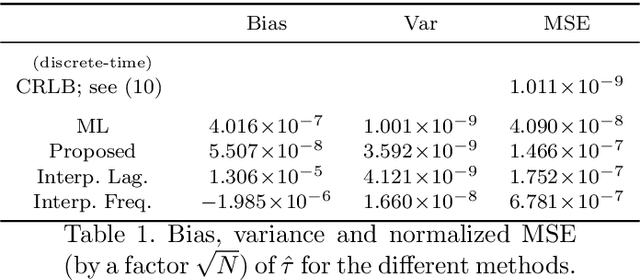

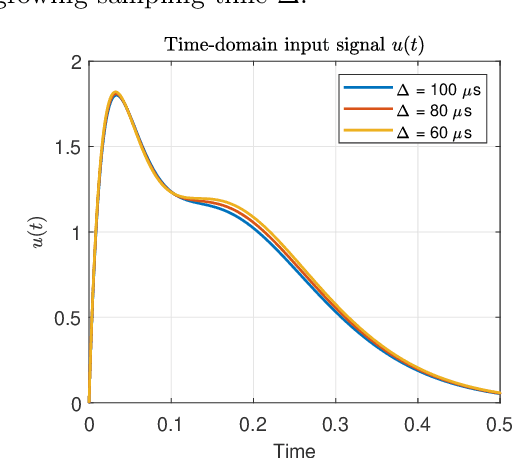

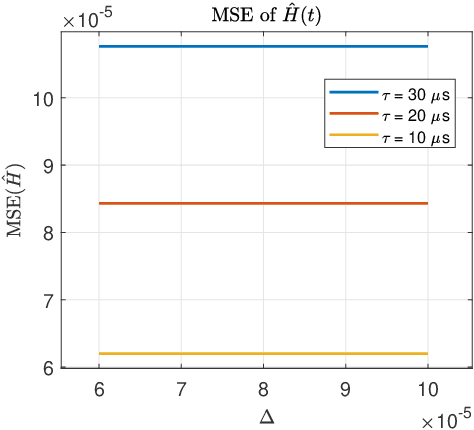

An algorithm for continuous time-delay estimation from sampled output data and known input of finite energy is presented. The continuous time-delay modeling allows for the estimation of subsample delays. The proposed estimation algorithm consists of two steps. First, the continuous Laguerre spectrum of the output signal is estimated from discrete-time (sampled) noisy measurements. Second, an estimate of the delay value is obtained in Laguerre domain given a continuous-time description of the input. The second step of the algorithm is shown to be intrinsically biased, the bias sources are established, and the bias itself is modeled. The proposed delay estimation approach is compared in a Monte-Carlo simulation with state-of-the-art methods implemented in time, frequency, and Laguerre domain demonstrating comparable or higher accuracy for the considered case.

Physics-Informed Localized Learning for Advection-Diffusion-Reaction Systems

May 05, 2023

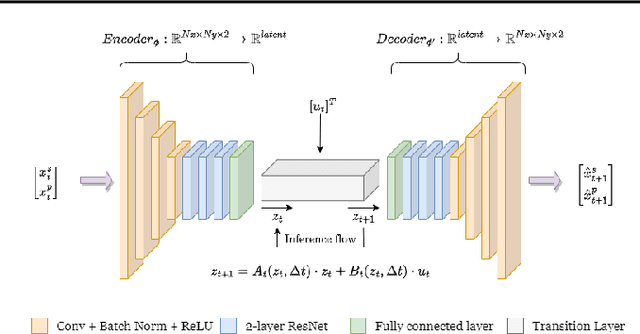

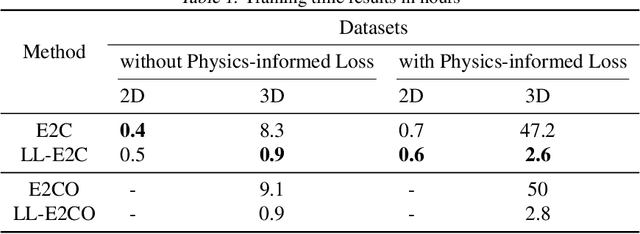

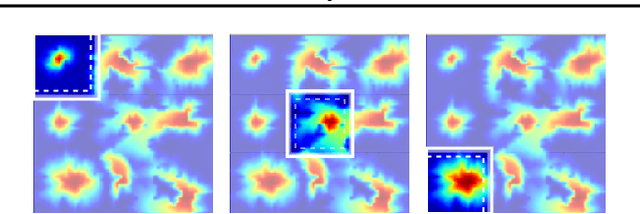

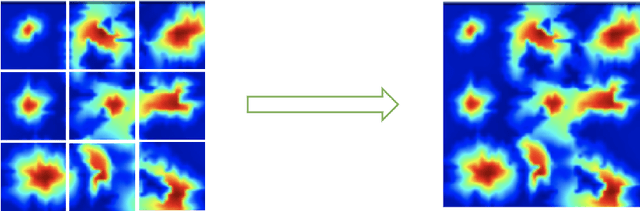

The global push for new energy solutions, such as Geothermal, and Carbon Capture and Sequestration initiatives has thrust new demands upon the current state-of the-art subsurface fluid simulators. The requirement to be able to simulate a large order of reservoir states simultaneously in a short period of time has opened the door of opportunity for the application of machine learning techniques for surrogate modelling. We propose a novel physics-informed and boundary conditions-aware Localized Learning method which extends the Embed-to-Control (E2C) and Embed-to-Control and Observed (E2CO) models to learn local representations of global state variables in an Advection-Diffusion Reaction system. We show that our model trained on reservoir simulation data is able to predict future states of the system, given a set of controls, to a great deal of accuracy with only a fraction of the available information, while also reducing training times significantly compared to the original E2C and E2CO models.

Video alignment using unsupervised learning of local and global features

Apr 13, 2023

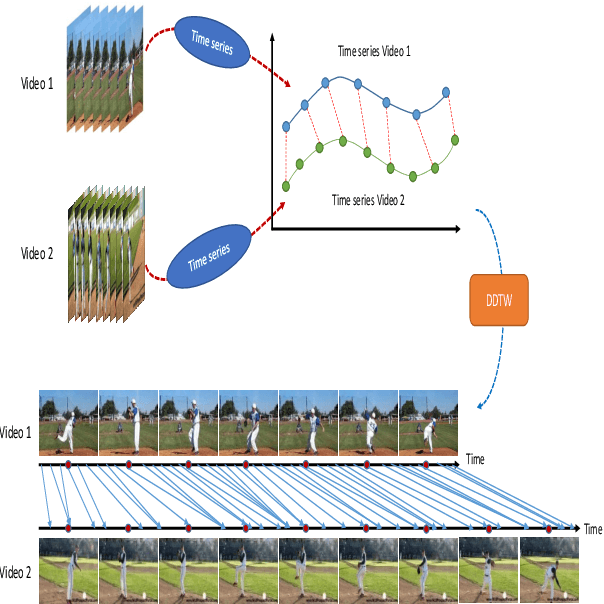

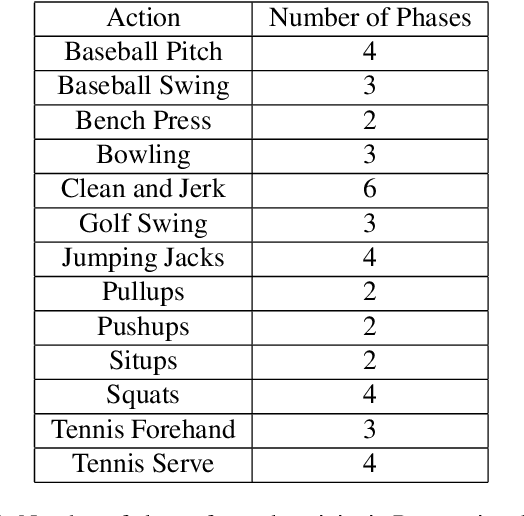

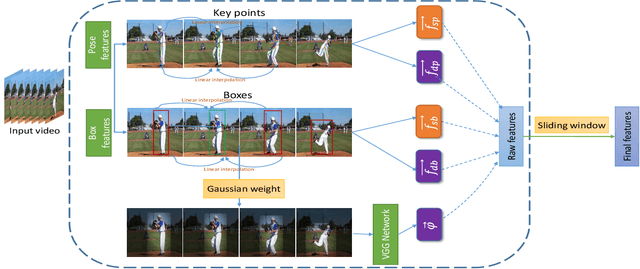

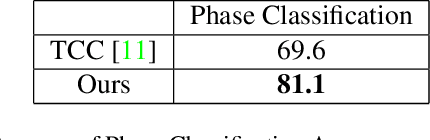

In this paper, we tackle the problem of video alignment, the process of matching the frames of a pair of videos containing similar actions. The main challenge in video alignment is that accurate correspondence should be established despite the differences in the execution processes and appearances between the two videos. We introduce an unsupervised method for alignment that uses global and local features of the frames. In particular, we introduce effective features for each video frame by means of three machine vision tools: person detection, pose estimation, and VGG network. Then the features are processed and combined to construct a multidimensional time series that represent the video. The resulting time series are used to align videos of the same actions using a novel version of dynamic time warping named Diagonalized Dynamic Time Warping(DDTW). The main advantage of our approach is that no training is required, which makes it applicable for any new type of action without any need to collect training samples for it. For evaluation, we considered video synchronization and phase classification tasks on the Penn action dataset. Also, for an effective evaluation of the video synchronization task, we present a new metric called Enclosed Area Error(EAE). The results show that our method outperforms previous state-of-the-art methods, such as TCC and other self-supervised and supervised methods.

Deep reinforcement learning applied to an assembly sequence planning problem with user preferences

Apr 13, 2023Deep reinforcement learning (DRL) has demonstrated its potential in solving complex manufacturing decision-making problems, especially in a context where the system learns over time with actual operation in the absence of training data. One interesting and challenging application for such methods is the assembly sequence planning (ASP) problem. In this paper, we propose an approach to the implementation of DRL methods in ASP. The proposed approach introduces in the RL environment parametric actions to improve training time and sample efficiency and uses two different reward signals: (1) user's preferences and (2) total assembly time duration. The user's preferences signal addresses the difficulties and non-ergonomic properties of the assembly faced by the human and the total assembly time signal enforces the optimization of the assembly. Three of the most powerful deep RL methods were studied, Advantage Actor-Critic (A2C), Deep Q-Learning (DQN), and Rainbow, in two different scenarios: a stochastic and a deterministic one. Finally, the performance of the DRL algorithms was compared to tabular Q-Learnings performance. After 10,000 episodes, the system achieved near optimal behaviour for the algorithms tabular Q-Learning, A2C, and Rainbow. Though, for more complex scenarios, the algorithm tabular Q-Learning is expected to underperform in comparison to the other 2 algorithms. The results support the potential for the application of deep reinforcement learning in assembly sequence planning problems with human interaction.

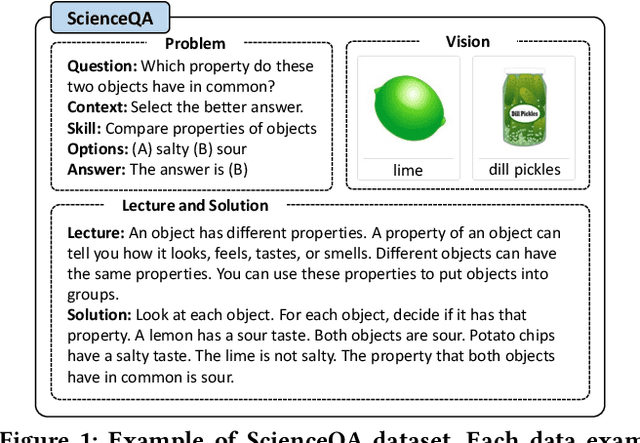

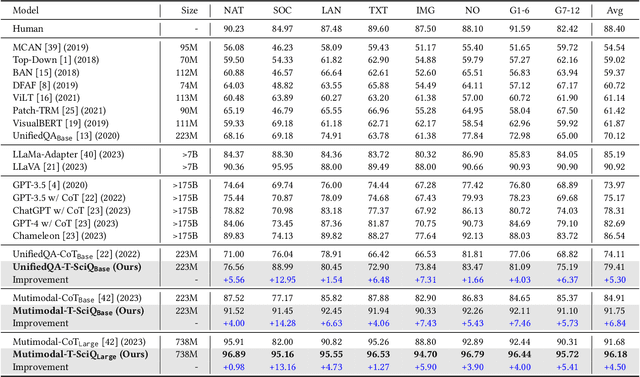

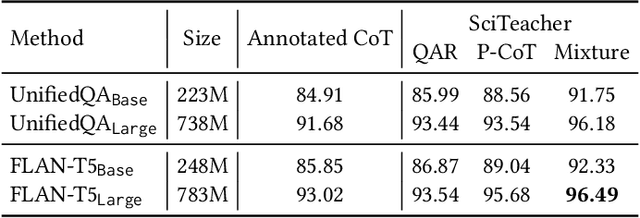

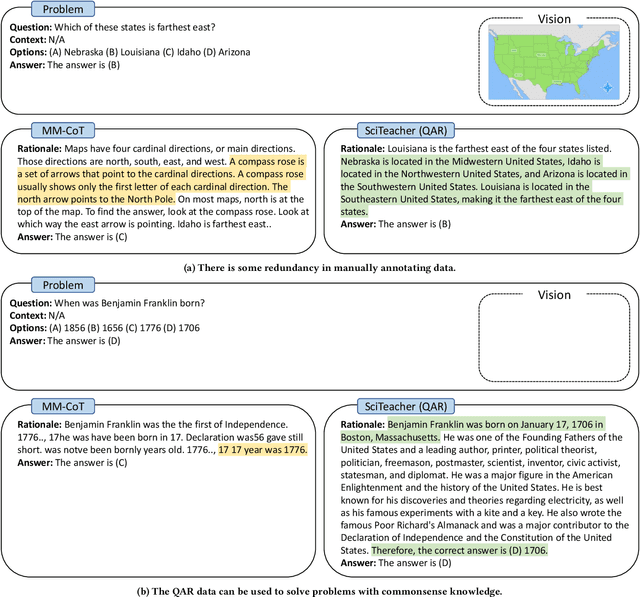

T-SciQ: Teaching Multimodal Chain-of-Thought Reasoning via Large Language Model Signals for Science Question Answering

May 09, 2023

Large Language Models (LLMs) have recently demonstrated exceptional performance in various Natural Language Processing (NLP) tasks. They have also shown the ability to perform chain-of-thought (CoT) reasoning to solve complex problems. Recent studies have explored CoT reasoning in complex multimodal scenarios, such as the science question answering task, by fine-tuning multimodal models with high-quality human-annotated CoT rationales. However, collecting high-quality COT rationales is usually time-consuming and costly. Besides, the annotated rationales are hardly accurate due to the redundant information involved or the essential information missed. To address these issues, we propose a novel method termed \emph{T-SciQ} that aims at teaching science question answering with LLM signals. The T-SciQ approach generates high-quality CoT rationales as teaching signals and is advanced to train much smaller models to perform CoT reasoning in complex modalities. Additionally, we introduce a novel data mixing strategy to produce more effective teaching data samples for simple and complex science question answer problems. Extensive experimental results show that our T-SciQ method achieves a new state-of-the-art performance on the ScienceQA benchmark, with an accuracy of 96.18%. Moreover, our approach outperforms the most powerful fine-tuned baseline by 4.5%.

Optimizing Privacy, Utility and Efficiency in Constrained Multi-Objective Federated Learning

May 09, 2023

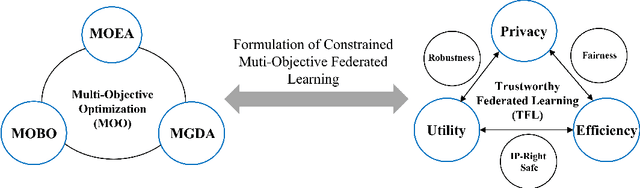

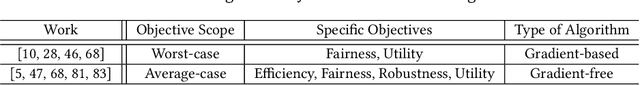

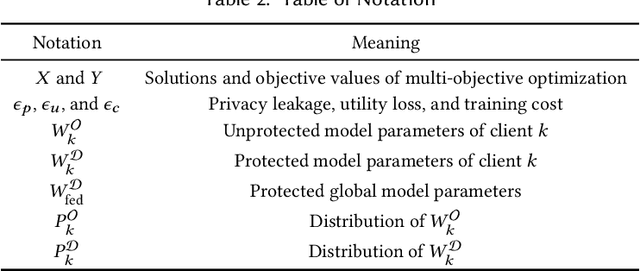

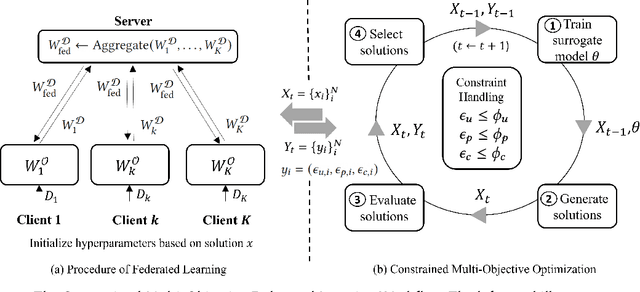

Conventionally, federated learning aims to optimize a single objective, typically the utility. However, for a federated learning system to be trustworthy, it needs to simultaneously satisfy multiple/many objectives, such as maximizing model performance, minimizing privacy leakage and training cost, and being robust to malicious attacks. Multi-Objective Optimization (MOO) aiming to optimize multiple conflicting objectives at the same time is quite suitable for solving the optimization problem of Trustworthy Federated Learning (TFL). In this paper, we unify MOO and TFL by formulating the problem of constrained multi-objective federated learning (CMOFL). Under this formulation, existing MOO algorithms can be adapted to TFL straightforwardly. Different from existing CMOFL works focusing on utility, efficiency, fairness, and robustness, we consider optimizing privacy leakage along with utility loss and training cost, the three primary objectives of a TFL system. We develop two improved CMOFL algorithms based on NSGA-II and PSL, respectively, for effectively and efficiently finding Pareto optimal solutions, and we provide theoretical analysis on their convergence. We design specific measurements of privacy leakage, utility loss, and training cost for three privacy protection mechanisms: Randomization, BatchCrypt (An efficient version of homomorphic encryption), and Sparsification. Empirical experiments conducted under each of the three protection mechanisms demonstrate the effectiveness of our proposed algorithms.

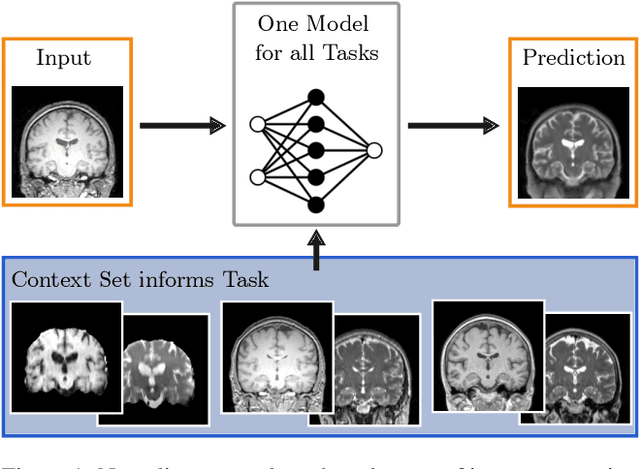

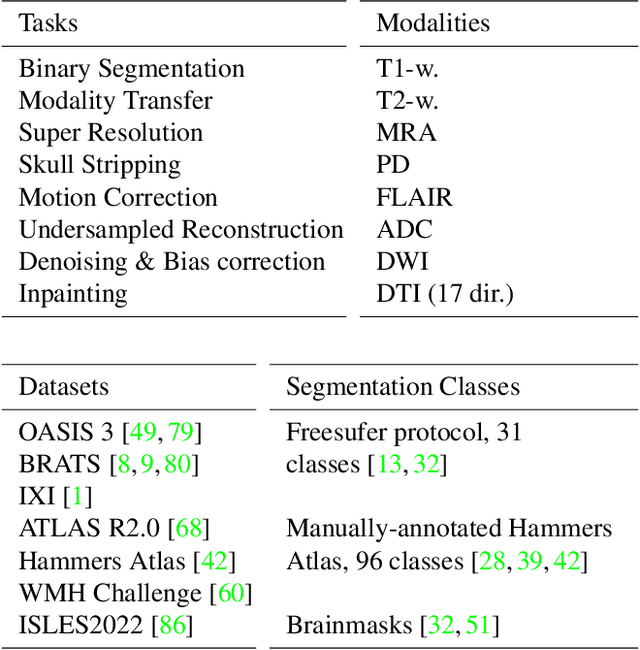

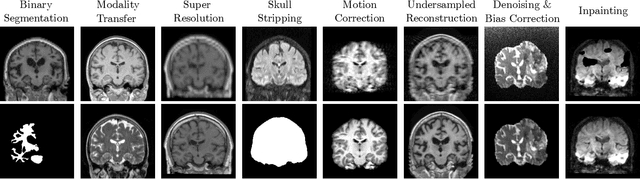

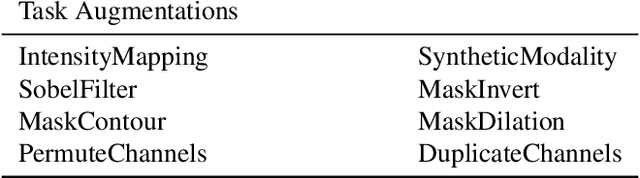

Neuralizer: General Neuroimage Analysis without Re-Training

May 09, 2023

Neuroimage processing tasks like segmentation, reconstruction, and registration are central to the study of neuroscience. Robust deep learning strategies and architectures used to solve these tasks are often similar. Yet, when presented with a new task or a dataset with different visual characteristics, practitioners most often need to train a new model, or fine-tune an existing one. This is a time-consuming process that poses a substantial barrier for the thousands of neuroscientists and clinical researchers who often lack the resources or machine-learning expertise to train deep learning models. In practice, this leads to a lack of adoption of deep learning, and neuroscience tools being dominated by classical frameworks. We introduce Neuralizer, a single model that generalizes to previously unseen neuroimaging tasks and modalities without the need for re-training or fine-tuning. Tasks do not have to be known a priori, and generalization happens in a single forward pass during inference. The model can solve processing tasks across multiple image modalities, acquisition methods, and datasets, and generalize to tasks and modalities it has not been trained on. Our experiments on coronal slices show that when few annotated subjects are available, our multi-task network outperforms task-specific baselines without training on the task.

Towards unraveling calibration biases in medical image analysis

May 09, 2023

In recent years the development of artificial intelligence (AI) systems for automated medical image analysis has gained enormous momentum. At the same time, a large body of work has shown that AI systems can systematically and unfairly discriminate against certain populations in various application scenarios. These two facts have motivated the emergence of algorithmic fairness studies in this field. Most research on healthcare algorithmic fairness to date has focused on the assessment of biases in terms of classical discrimination metrics such as AUC and accuracy. Potential biases in terms of model calibration, however, have only recently begun to be evaluated. This is especially important when working with clinical decision support systems, as predictive uncertainty is key for health professionals to optimally evaluate and combine multiple sources of information. In this work we study discrimination and calibration biases in models trained for automatic detection of malignant dermatological conditions from skin lesions images. Importantly, we show how several typically employed calibration metrics are systematically biased with respect to sample sizes, and how this can lead to erroneous fairness analysis if not taken into consideration. This is of particular relevance to fairness studies, where data imbalance results in drastic sample size differences between demographic sub-groups, which, if not taken into account, can act as confounders.

RATs-NAS: Redirection of Adjacent Trails on GCN for Neural Architecture Search

May 09, 2023

Various hand-designed CNN architectures have been developed, such as VGG, ResNet, DenseNet, etc., and achieve State-of-the-Art (SoTA) levels on different tasks. Neural Architecture Search (NAS) now focuses on automatically finding the best CNN architecture to handle the above tasks. However, the verification of a searched architecture is very time-consuming and makes predictor-based methods become an essential and important branch of NAS. Two commonly used techniques to build predictors are graph-convolution networks (GCN) and multilayer perceptron (MLP). In this paper, we consider the difference between GCN and MLP on adjacent operation trails and then propose the Redirected Adjacent Trails NAS (RATs-NAS) to quickly search for the desired neural network architecture. The RATs-NAS consists of two components: the Redirected Adjacent Trails GCN (RATs-GCN) and the Predictor-based Search Space Sampling (P3S) module. RATs-GCN can change trails and their strengths to search for a better neural network architecture. P3S can rapidly focus on tighter intervals of FLOPs in the search space. Based on our observations on cell-based NAS, we believe that architectures with similar FLOPs will perform similarly. Finally, the RATs-NAS consisting of RATs-GCN and P3S beats WeakNAS, Arch-Graph, and others by a significant margin on three sub-datasets of NASBench-201.

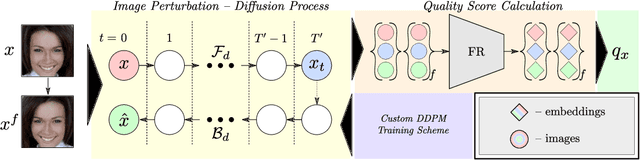

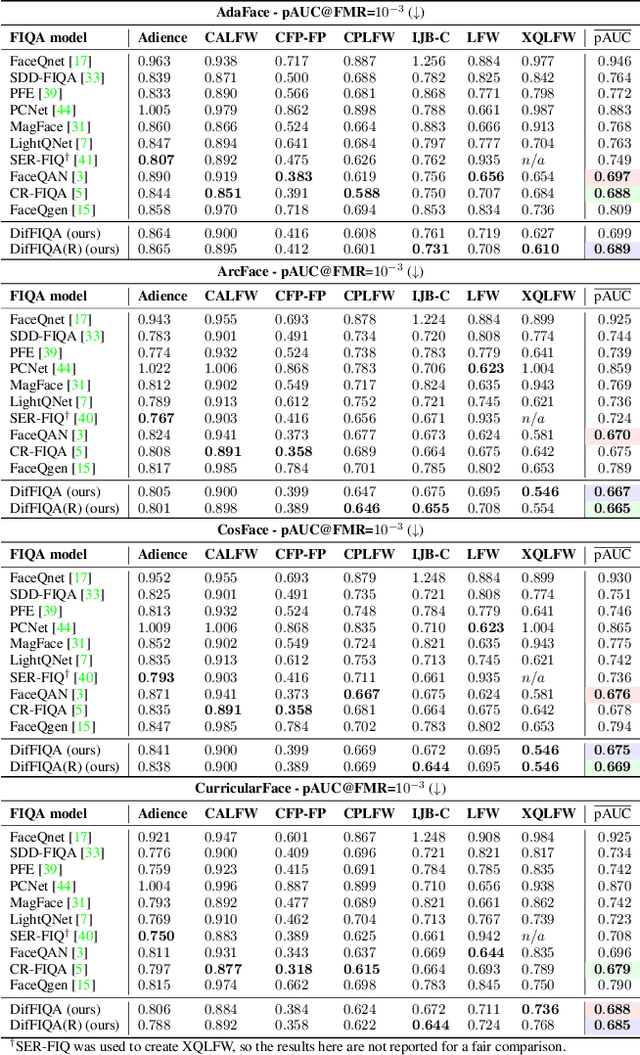

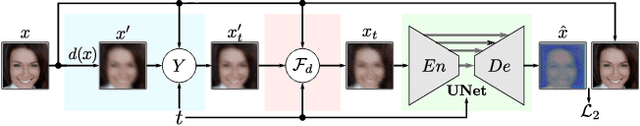

DifFIQA: Face Image Quality Assessment Using Denoising Diffusion Probabilistic Models

May 09, 2023

Modern face recognition (FR) models excel in constrained scenarios, but often suffer from decreased performance when deployed in unconstrained (real-world) environments due to uncertainties surrounding the quality of the captured facial data. Face image quality assessment (FIQA) techniques aim to mitigate these performance degradations by providing FR models with sample-quality predictions that can be used to reject low-quality samples and reduce false match errors. However, despite steady improvements, ensuring reliable quality estimates across facial images with diverse characteristics remains challenging. In this paper, we present a powerful new FIQA approach, named DifFIQA, which relies on denoising diffusion probabilistic models (DDPM) and ensures highly competitive results. The main idea behind the approach is to utilize the forward and backward processes of DDPMs to perturb facial images and quantify the impact of these perturbations on the corresponding image embeddings for quality prediction. Because the diffusion-based perturbations are computationally expensive, we also distill the knowledge encoded in DifFIQA into a regression-based quality predictor, called DifFIQA(R), that balances performance and execution time. We evaluate both models in comprehensive experiments on 7 datasets, with 4 target FR models and against 10 state-of-the-art FIQA techniques with highly encouraging results. The source code will be made publicly available.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge