"Time": models, code, and papers

A Hardware and Software Platform for Aerial Object Localization

May 29, 2023

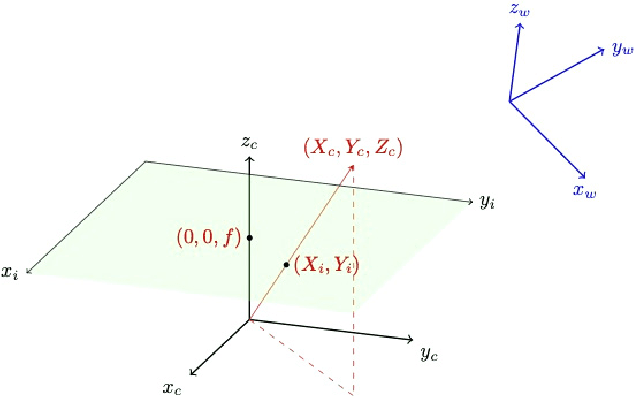

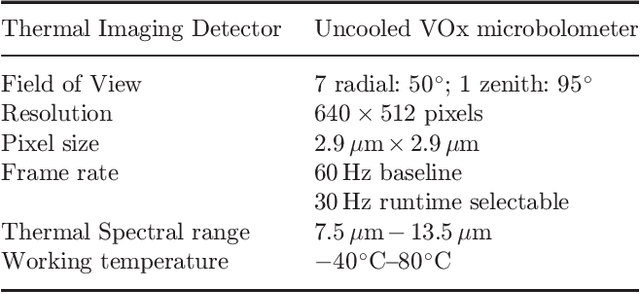

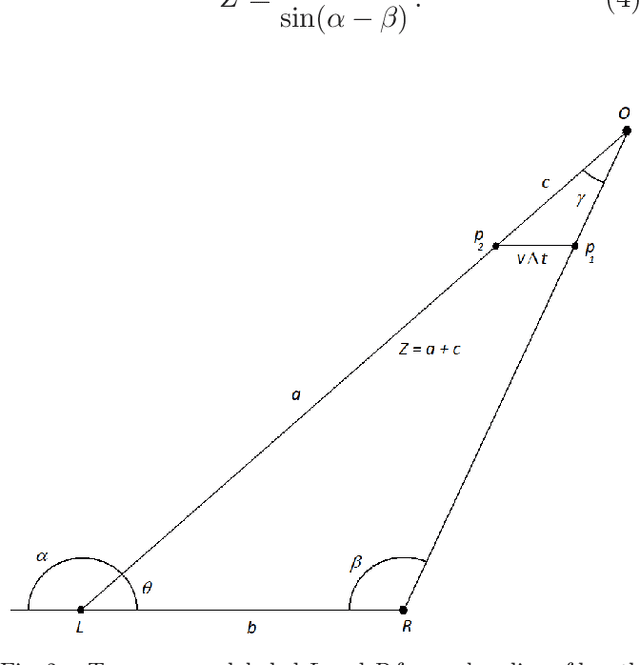

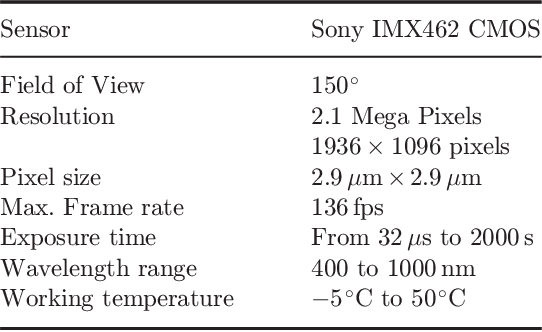

To date, there are few reliable data on the position, velocity and acceleration characteristics of Unidentified Aerial Phenomena (UAP). The dual hardware and software system described in this document provides a means to address this gap. We describe a weatherized multi-camera system which can capture images in the visible, infrared and near-infrared wavelengths. We then describe the software we will use to calibrate the cameras and to robustly localize objects-of-interest in three dimensions. We show how object localizations captured over time will be used to compute the velocity and acceleration of airborne objects.
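As a rough illustration of the last step, velocity and acceleration can be estimated from timestamped 3D localizations by finite differences. This is only a minimal sketch, not the platform's actual software: the function name `finite_differences`, the tuple-based position format, and the assumption of noise-free localizations are all hypothetical.

```python
def finite_differences(times, positions):
    """Estimate velocity and acceleration from timestamped 3D positions.

    times: increasing timestamps in seconds (hypothetical input format).
    positions: (x, y, z) tuples in metres, one per timestamp.
    Returns (velocities, accelerations) as lists of 3-tuples.
    """
    # Velocity over each interval [t[i-1], t[i]]; it is best attributed
    # to the midpoint of that interval.
    velocities = []
    for i in range(1, len(times)):
        dt = times[i] - times[i - 1]
        velocities.append(
            tuple((b - a) / dt for a, b in zip(positions[i - 1], positions[i]))
        )

    # Acceleration from consecutive interval velocities. The two interval
    # midpoints are (t[i+1] - t[i-1]) / 2 apart in time.
    accelerations = []
    for i in range(1, len(velocities)):
        dt = 0.5 * (times[i + 1] - times[i - 1])
        accelerations.append(
            tuple((b - a) / dt for a, b in zip(velocities[i - 1], velocities[i]))
        )
    return velocities, accelerations


# Example: an object moving along x with x(t) = t^2, i.e. constant
# acceleration of 2 m/s^2.
times = [0.0, 1.0, 2.0, 3.0]
positions = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (4.0, 0.0, 0.0), (9.0, 0.0, 0.0)]
velocities, accelerations = finite_differences(times, positions)
```

With real measurements, differencing amplifies localization noise, so some smoothing or filtering would normally precede this step.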

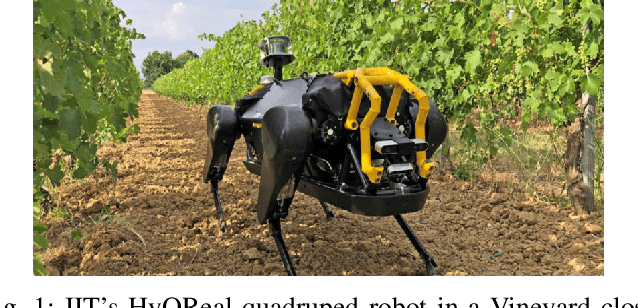

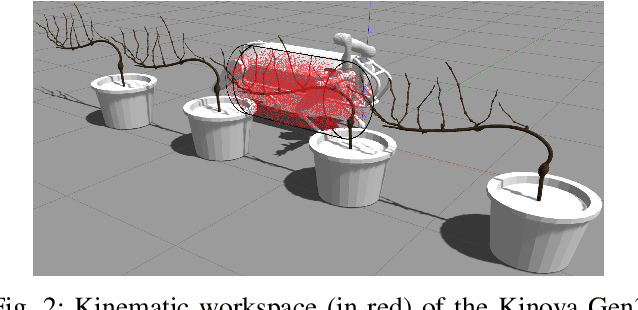

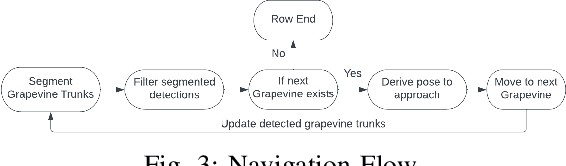

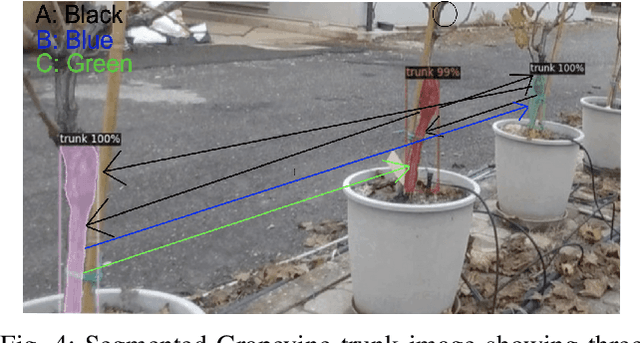

Computer-Vision Based Real Time Waypoint Generation for Autonomous Vineyard Navigation with Quadruped Robots

May 02, 2023

The VINUM project seeks to address the shortage of skilled labor in modern vineyards by introducing a cutting-edge mobile robotic solution. Leveraging the capabilities of the quadruped robot HyQReal, this system, equipped with arm and vision sensors, offers autonomous navigation and winter pruning of grapevines, reducing the need for human intervention. At the heart of this approach lies an architecture that empowers the robot to easily navigate vineyards, identify grapevines with unparalleled accuracy, and approach them for pruning with precision. A state machine drives the process, deftly switching between various stages to ensure seamless and efficient task completion. The system's performance was assessed through experimentation, focusing on waypoint precision and optimizing the robot's workspace for single-plant operations. Results indicate that the architecture is highly reliable, with a mean error of 21.5 cm and a standard deviation of 17.6 cm for HyQReal. However, improvements in grapevine detection accuracy are necessary for optimal performance. This work is based on a computer-vision-based navigation method for quadruped robots in vineyards, opening up new possibilities for selective task automation. The system's architecture works well in ideal weather conditions, generating and arriving at precise waypoints that maximize the attached robotic arm's workspace. This work is an extension of our short paper presented at the Italian Conference on Robotics and Intelligent Machines (I-RIM).

Learning time-scales in two-layers neural networks

Feb 28, 2023

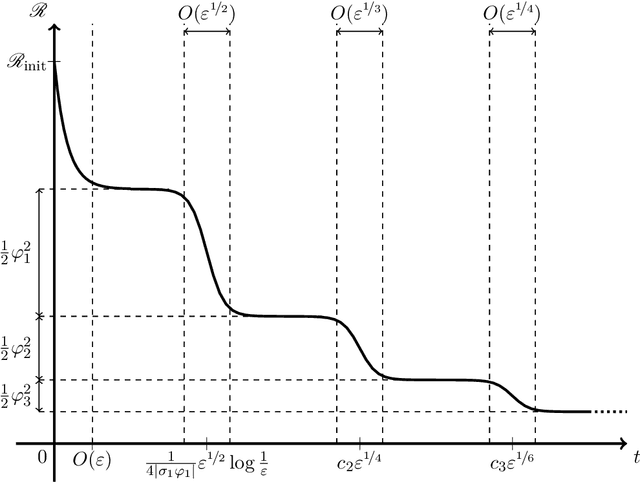

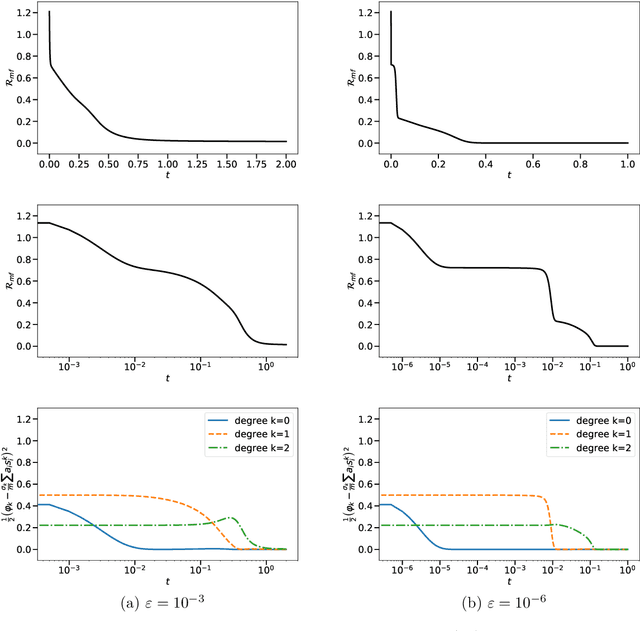

Gradient-based learning in multi-layer neural networks displays a number of striking features. In particular, the decrease rate of empirical risk is non-monotone even after averaging over large batches. Long plateaus in which one observes barely any progress alternate with intervals of rapid decrease. These successive phases of learning often take place on very different time scales. Finally, models learnt in an early phase are typically 'simpler' or 'easier to learn', although in a way that is difficult to formalize. Although theoretical explanations of these phenomena have been put forward, each of them captures at best certain specific regimes. In this paper, we study the gradient flow dynamics of a wide two-layer neural network in high dimension, when data are distributed according to a single-index model (i.e., the target function depends on a one-dimensional projection of the covariates). Based on a mixture of new rigorous results, non-rigorous mathematical derivations, and numerical simulations, we propose a scenario for the learning dynamics in this setting. In particular, the proposed evolution exhibits separation of timescales and intermittency. These behaviors arise naturally because the population gradient flow can be recast as a singularly perturbed dynamical system.

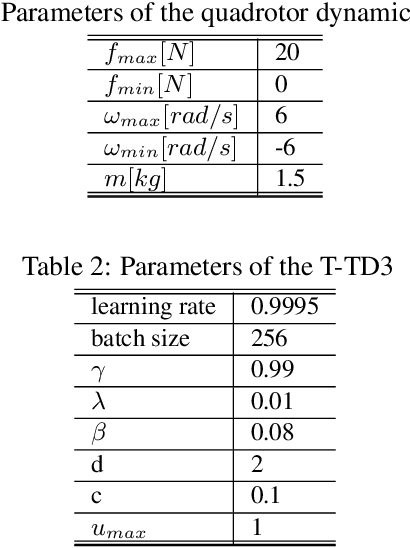

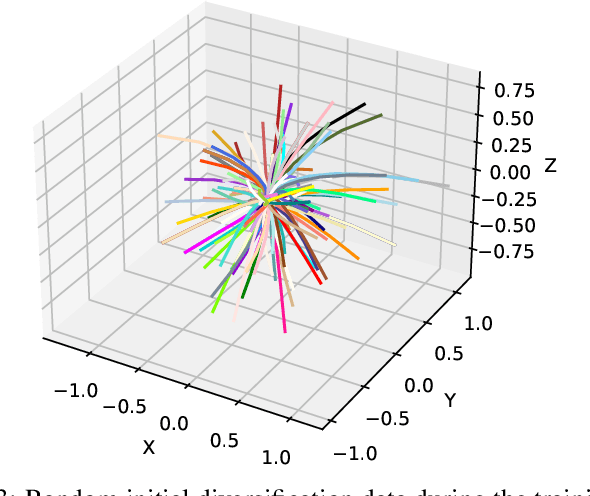

Time-attenuating Twin Delayed DDPG Reinforcement Learning for Trajectory Tracking Control of Quadrotors

Feb 13, 2023

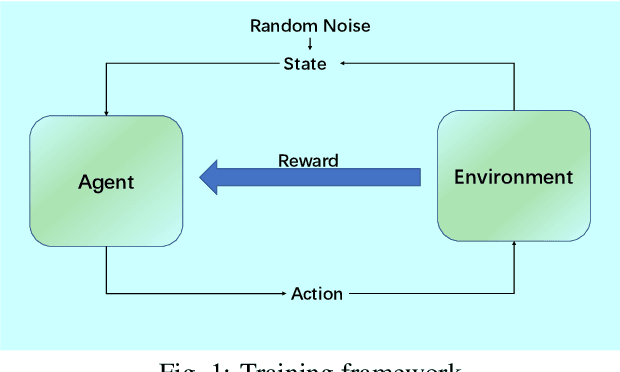

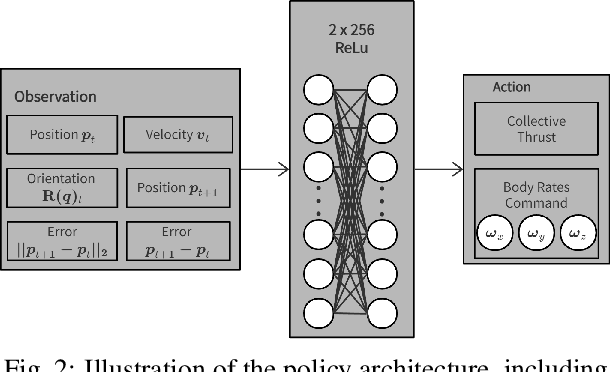

Continuous trajectory tracking control of quadrotors is complicated when considering noise from the environment. Due to the difficulty in modeling the environmental dynamics, tracking methodologies based on conventional control theory, such as model predictive control, have limitations on tracking accuracy and response time. We propose Time-attenuating Twin Delayed DDPG, a model-free algorithm that is robust to noise, to better handle the trajectory tracking task. A deep reinforcement learning framework is constructed, where a time decay strategy is designed to avoid becoming trapped in local optima. The experimental results show that the tracking error is significantly small, and the operation time is one-tenth of that of a traditional algorithm. The OpenAI Mujoco tool is used to verify the proposed algorithm, and the simulation results show that the proposed method can significantly improve the training efficiency and effectively improve the accuracy and convergence stability.

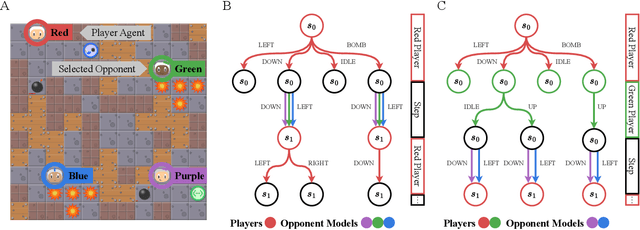

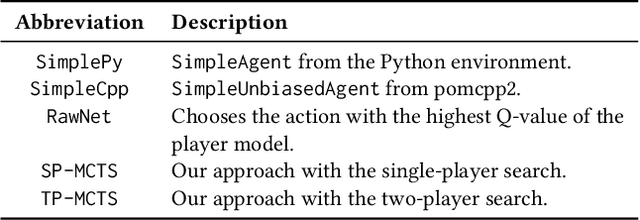

Know your Enemy: Investigating Monte-Carlo Tree Search with Opponent Models in Pommerman

May 22, 2023

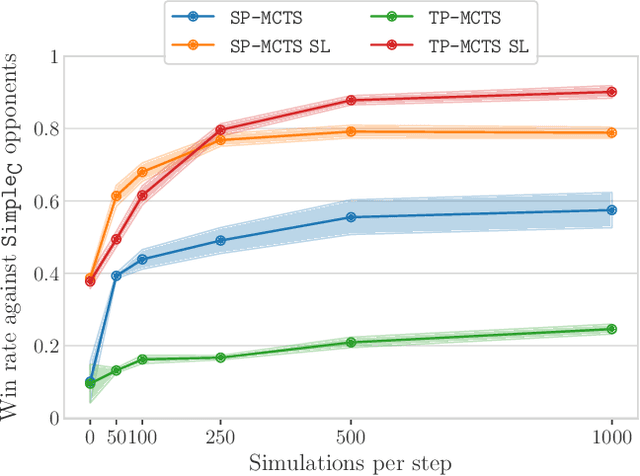

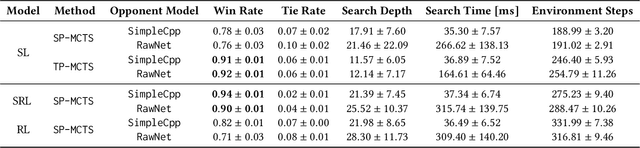

In combination with Reinforcement Learning, Monte-Carlo Tree Search has been shown to outperform human grandmasters in games such as Chess, Shogi and Go with little to no prior domain knowledge. However, most classical use cases only feature up to two players. Scaling the search to an arbitrary number of players presents a computational challenge, especially if decisions have to be planned over a longer time horizon. In this work, we investigate techniques that transform general-sum multiplayer games into single-player and two-player games that consider other agents to act according to given opponent models. For our evaluation, we focus on the challenging Pommerman environment which involves partial observability, a long time horizon and sparse rewards. In combination with our search methods, we investigate the phenomenon of opponent modeling using heuristics and self-play. Overall, we demonstrate the effectiveness of our multiplayer search variants both in a supervised learning and reinforcement learning setting.

A Survey of NOMA: State of the Art, Key Techniques, Open Challenges, Security Issues and Future Trends

Jun 11, 2023

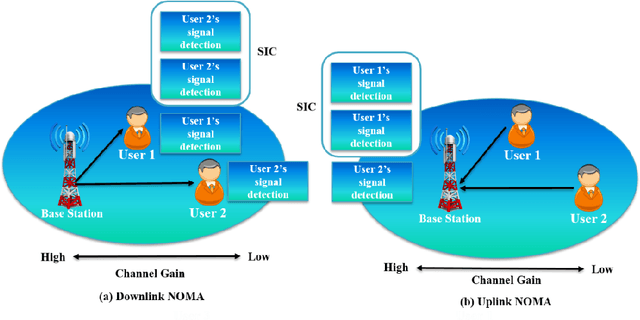

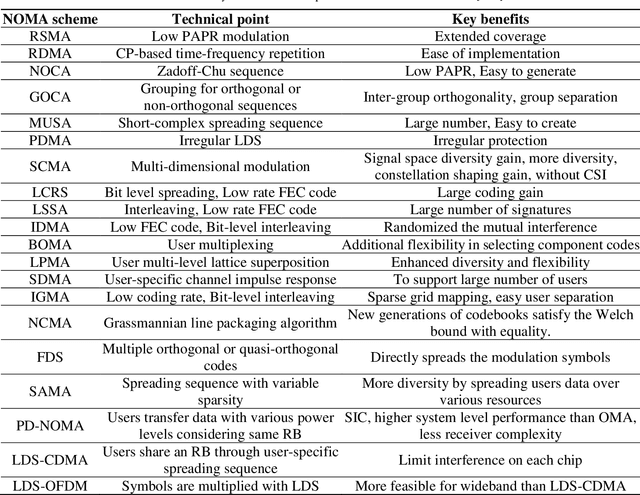

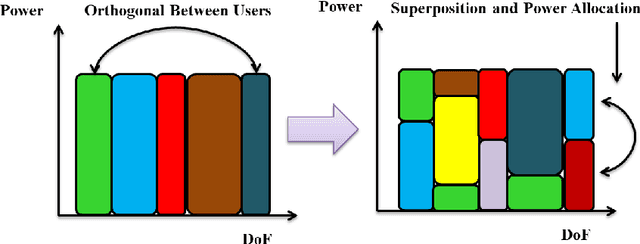

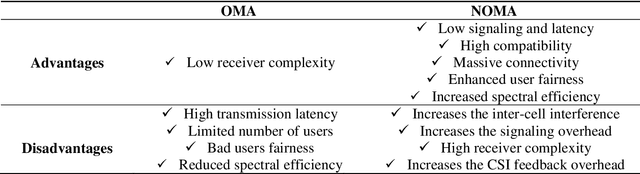

Non-orthogonal multiple access (NOMA) systems can serve multiple users simultaneously, in contrast to orthogonal multiple access (OMA), which divides the limited time or frequency domain resources among users. NOMA can help to meet the demands of the sixth generation (6G) network, which include high spectral efficiency, high flexibility, low transmission latency, massive connectivity, higher cell-edge throughput, and user fairness. NOMA has gained widespread recognition as a viable technology for future wireless networks. The main characteristic that sets NOMA apart from conventional OMA techniques is its ability to handle more users than there are orthogonal resource slots. NOMA techniques can serve multiple users in the same resource block by multiplexing users in the power or code domain. The purpose of this paper is to provide a thorough overview of the promising NOMA systems. Initially, we discuss the state of the art and existing literature on NOMA systems. This study also examines the practical deployment of NOMA implementation and key performance indicators. An overview of the most recent NOMA advancements and applications is also given in this survey. We also briefly discuss how multiple-input multiple-output (MIMO), visible light communications, cognitive and cooperative communications, intelligent reflecting surfaces (IRS), unmanned aerial vehicles (UAV), HetNets, backscatter communication, mobile edge computing (MEC), deep learning (DL), and other emerging and existing wireless technologies can all be flexibly combined with NOMA. This study provides a thorough analysis of the interactions between NOMA and the aforementioned technologies. Lastly, we highlight a number of difficult open problems and security issues that need to be resolved for NOMA, along with pertinent possibilities and potential future research directions.

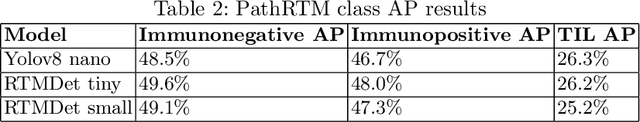

PathRTM: Real-time prediction of KI-67 and tumor-infiltrated lymphocytes

Apr 23, 2023

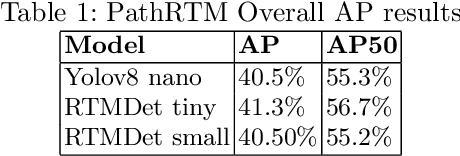

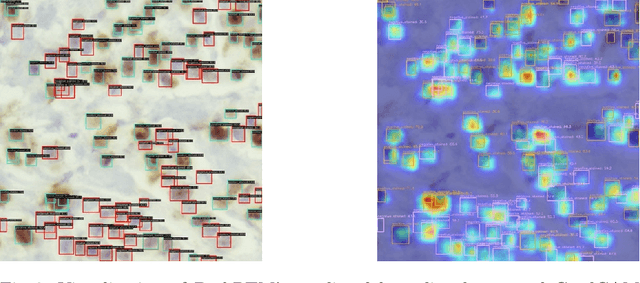

In this paper, we introduce PathRTM, a novel deep neural network detector based on RTMDet, for automated KI-67 proliferation and tumor-infiltrated lymphocyte estimation. KI-67 proliferation and tumor-infiltrated lymphocyte estimation play a crucial role in cancer diagnosis and treatment. PathRTM is an extension of the PathoNet work, which uses single-pixel keypoints within each cell. We demonstrate that PathRTM, with higher-level supervision in the form of bounding box labels generated automatically from the keypoints using NuClick, can significantly improve KI-67 proliferation and tumor-infiltrated lymphocyte estimation. Experiments on our custom dataset show that PathRTM achieves state-of-the-art performance in KI-67 immunopositive, immunonegative, and lymphocyte detection, with an average precision (AP) of 41.3%. Our results suggest that PathRTM is a promising approach for accurate KI-67 proliferation and tumor-infiltrated lymphocyte estimation, offering annotation efficiency, accurate predictive capabilities, and improved runtime. The method also enables estimation of cell sizes of interest, which was previously unavailable, through the bounding box predictions.

Metamathematics of Algorithmic Composition

May 24, 2023

This essay recounts my personal journey towards a deeper understanding of the mathematical foundations of algorithmic music composition. I do not spend much time on specific mathematical algorithms used by composers; rather, I focus on general issues such as fundamental limits and possibilities, by analogy with metalogic, metamathematics, and computability theory. I discuss implications from these foundations for the future of algorithmic composition.

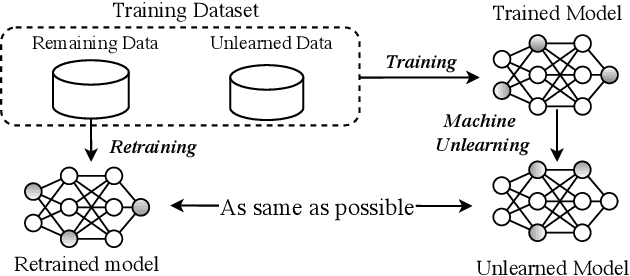

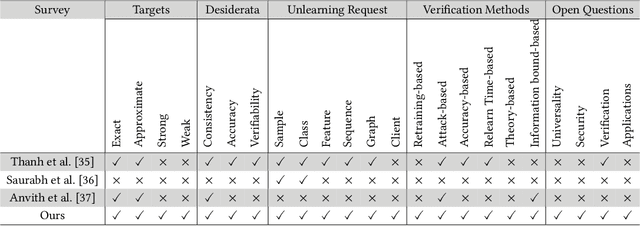

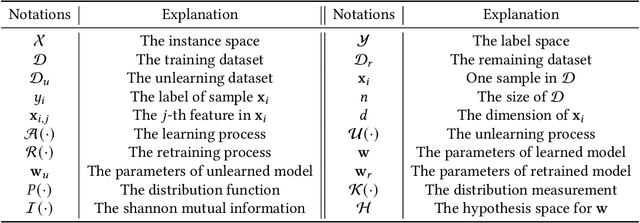

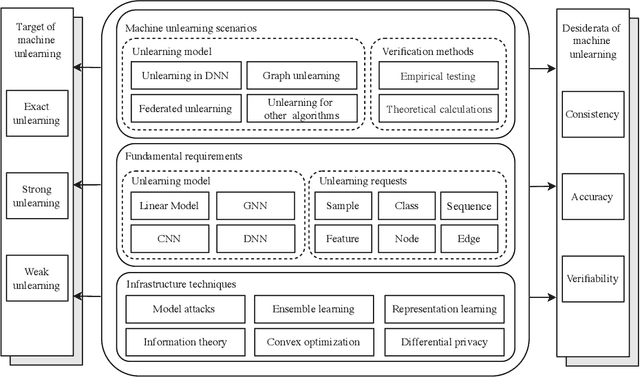

Machine Unlearning: A Survey

Jun 06, 2023

Machine learning has attracted widespread attention and evolved into an enabling technology for a wide range of highly successful applications, such as intelligent computer vision, speech recognition, medical diagnosis, and more. Yet a special need has arisen where, due to privacy, usability, and/or the right to be forgotten, information about some specific samples needs to be removed from a model, a process called machine unlearning. This emerging technology has drawn significant interest from both academia and industry due to its innovation and practicality. At the same time, this ambitious problem has led to numerous research efforts aimed at confronting its challenges. To the best of our knowledge, no study has analyzed this complex topic or compared the feasibility of existing unlearning solutions in different kinds of scenarios. Accordingly, with this survey, we aim to capture the key concepts of unlearning techniques. The existing solutions are classified and summarized based on their characteristics within an up-to-date and comprehensive review of each category's advantages and limitations. The survey concludes by highlighting some of the outstanding issues with unlearning techniques, along with some feasible directions for new research opportunities.

Buying Information for Stochastic Optimization

Jun 06, 2023

Stochastic optimization is one of the central problems in Machine Learning and Theoretical Computer Science. In the standard model, the algorithm is given a fixed distribution known in advance. In practice though, one may acquire at a cost extra information to make better decisions. In this paper, we study how to buy information for stochastic optimization and formulate this question as an online learning problem. Assuming the learner has an oracle for the original optimization problem, we design a $2$-competitive deterministic algorithm and an $e/(e-1)$-competitive randomized algorithm for buying information. We show that this ratio is tight as the problem is equivalent to a robust generalization of the ski-rental problem, which we call super-martingale stopping. We also consider an adaptive setting where the learner can choose to buy information after taking some actions for the underlying optimization problem. We focus on the classic optimization problem, Min-Sum Set Cover, where the goal is to quickly find an action that covers a given request drawn from a known distribution. We provide an $8$-competitive algorithm running in polynomial time that chooses actions and decides when to buy information about the underlying request.
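For readers unfamiliar with the ski-rental problem mentioned above, the sketch below illustrates the classic textbook version only, not the paper's algorithm or its super-martingale stopping generalization. It assumes renting costs 1 per day and buying costs `buy_price`, and evaluates the standard deterministic break-even strategy; all function names are hypothetical.

```python
from fractions import Fraction


def break_even_cost(buy_price, season_length):
    """Cost of the break-even strategy: rent for the first buy_price - 1
    days, then buy on day buy_price if the season is still going."""
    if season_length < buy_price:
        return season_length  # the season ended before we ever bought
    return (buy_price - 1) + buy_price


def offline_optimum(buy_price, season_length):
    """Cost when the season length is known in advance: rent throughout
    or buy on the first day, whichever is cheaper."""
    return min(season_length, buy_price)


def worst_case_ratio(buy_price, max_season):
    """Largest online-to-offline cost ratio over seasons of 1..max_season days."""
    return max(
        Fraction(break_even_cost(buy_price, n), offline_optimum(buy_price, n))
        for n in range(1, max_season + 1)
    )


ratio = worst_case_ratio(buy_price=10, max_season=100)
```

In this classic setting the worst-case ratio is $2 - 1/B$ for buy price $B$, which is the usual reason the break-even strategy is called 2-competitive.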
