"Time": models, code, and papers

Correlated Noise in Epoch-Based Stochastic Gradient Descent: Implications for Weight Variances

Jun 08, 2023

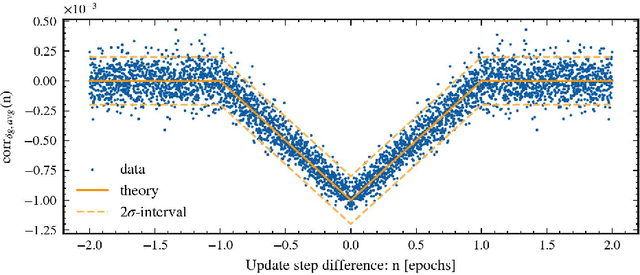

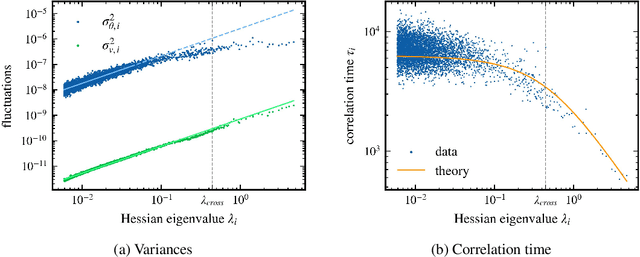

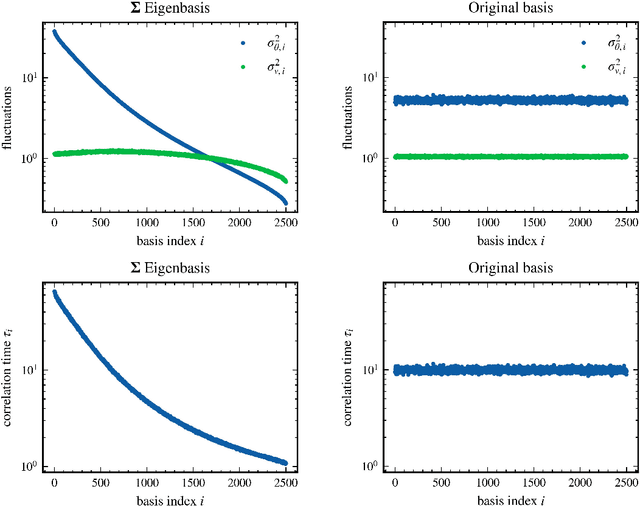

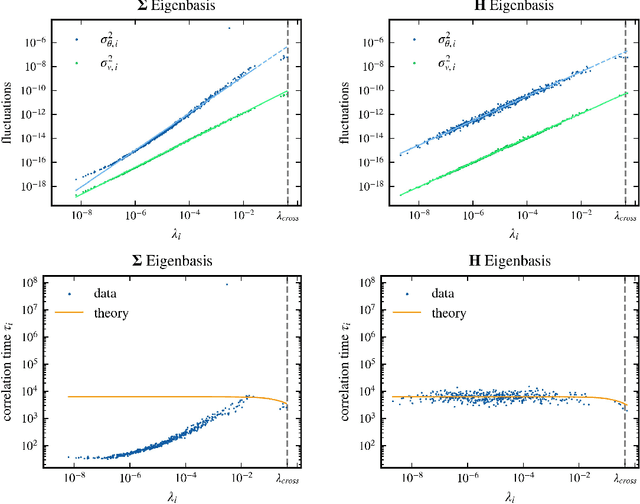

Stochastic gradient descent (SGD) has become a cornerstone of neural network optimization, yet the noise introduced by SGD is often assumed to be uncorrelated over time, despite the ubiquity of epoch-based training. In this work, we challenge this assumption and investigate the effects of epoch-based noise correlations on the stationary distribution of discrete-time SGD with momentum, limited to a quadratic loss. Our main contributions are twofold: first, we calculate the exact autocorrelation of the noise for training in epochs under the assumption that the noise is independent of small fluctuations in the weight vector; second, we explore the influence of correlations introduced by the epoch-based learning scheme on SGD dynamics. We find that for directions with a curvature greater than a hyperparameter-dependent crossover value, the results for uncorrelated noise are recovered. However, for relatively flat directions, the weight variance is significantly reduced. We provide an intuitive explanation for these results based on a crossover between correlation times, contributing to a deeper understanding of the dynamics of SGD in the presence of epoch-based noise correlations.
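The epoch-based correlation described above can be illustrated with a small simulation. This is a minimal sketch and not the authors' code. It assumes batch size 1, a one-dimensional quadratic loss, additive gradient noise that does not depend on the weight (the abstract's stated assumption), and heavy-ball momentum. The function names and hyperparameter values are hypothetical. The key property shown is that noise drawn without replacement sums to zero over every epoch, which makes it anticorrelated in time.

```python
import numpy as np

def epoch_noise(per_example_noise, n_epochs, rng):
    """Noise sequence when each epoch visits every example exactly once."""
    centered = per_example_noise - per_example_noise.mean()
    # A fresh random order per epoch; every epoch contains the same values.
    return np.concatenate([rng.permutation(centered) for _ in range(n_epochs)])

def iid_noise(per_example_noise, n_steps, rng):
    """Noise sequence when examples are drawn independently with replacement."""
    centered = per_example_noise - per_example_noise.mean()
    return rng.choice(centered, size=n_steps, replace=True)

def momentum_sgd_variance(noise, lr, beta, curvature):
    """Run heavy-ball SGD on 0.5 * curvature * w**2 with additive gradient
    noise and return the variance of w over the second half of the run."""
    w, v = 0.0, 0.0
    ws = np.empty(len(noise))
    for k, xi in enumerate(noise):
        v = beta * v - lr * (curvature * w + xi)  # noisy gradient step
        w = w + v
        ws[k] = w
    return ws[len(ws) // 2:].var()

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    examples = rng.normal(size=50)          # hypothetical per-example noise
    n_epochs = 400
    correlated = epoch_noise(examples, n_epochs, rng)
    uncorrelated = iid_noise(examples, len(correlated), rng)
    for h in (1.0, 1e-3):                   # a steep and a flat direction
        print("curvature", h,
              "epoch-based:", momentum_sgd_variance(correlated, 0.1, 0.9, h),
              "i.i.d.:", momentum_sgd_variance(uncorrelated, 0.1, 0.9, h))
```

Running the script prints the empirical weight variance for both sampling schemes in a steep and a flat direction, which can be compared against the qualitative claim in the abstract.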

Ambulance Demand Prediction via Convolutional Neural Networks

Jun 08, 2023

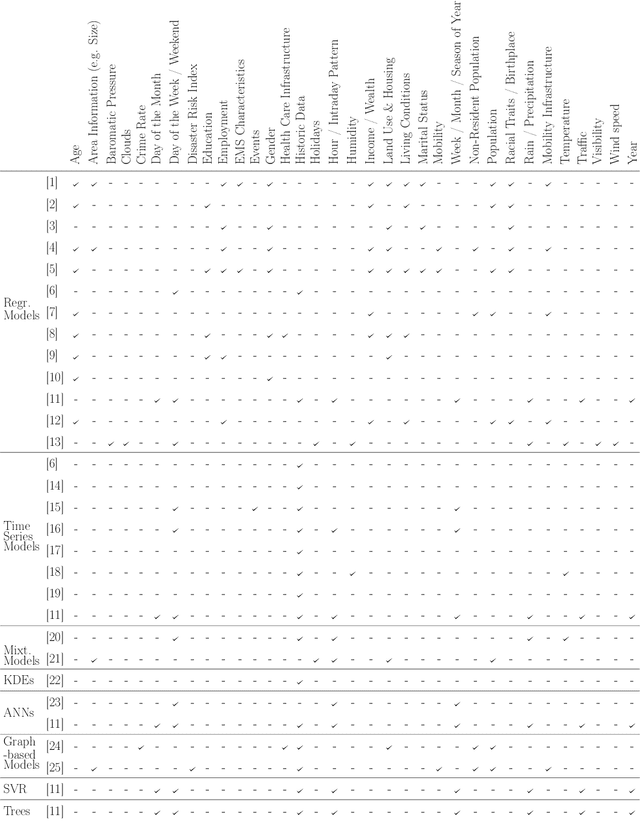

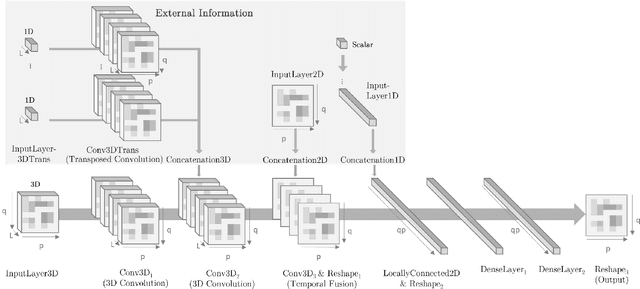

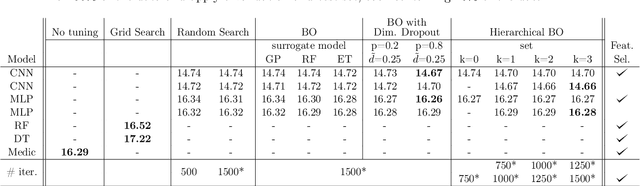

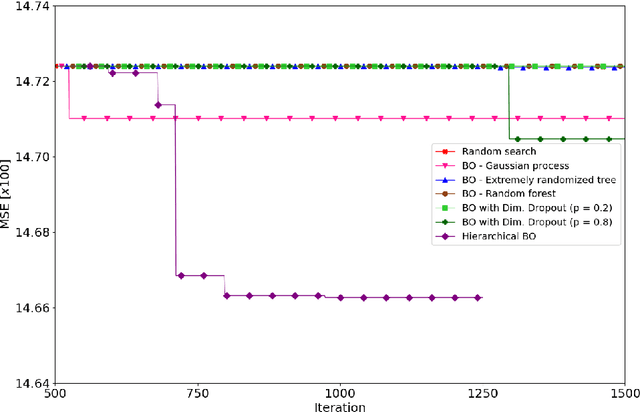

Minimizing response times is crucial for emergency medical services to reduce patients' waiting times and to increase their survival rates. Many models exist to optimize operational tasks such as ambulance allocation and dispatching. Including accurate demand forecasts in such models can improve operational decision-making. Against this background, we present a novel convolutional neural network (CNN) architecture that transforms time series data into heatmaps to predict ambulance demand. Applying such predictions requires incorporating external features that influence ambulance demands. We contribute to the existing literature by providing a flexible, generic CNN architecture, allowing for the inclusion of external features with varying dimensions. Additionally, we provide a feature selection and hyperparameter optimization framework utilizing Bayesian optimization. We integrate historical ambulance demand and external information such as weather, events, holidays, and time. To show the superiority of the developed CNN architecture over existing approaches, we conduct a case study for Seattle's 911 call data and include external information. We show that the developed CNN architecture outperforms existing state-of-the-art methods and industry practice by more than 9%.
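One way to picture the time-series-to-heatmap step is to bin call locations onto a spatial grid for each time period. The sketch below is an assumption about what such a transformation could look like, not the architecture or preprocessing from the paper. The function name `calls_to_heatmaps`, the unit-square coordinates, and the grid size are hypothetical.

```python
import numpy as np

def calls_to_heatmaps(times, xs, ys, n_steps, grid, bounds):
    """Turn a list of calls (time index, x, y) into one count heatmap per step.

    bounds = (x_min, x_max, y_min, y_max) of the service area.
    Returns an array of shape (n_steps, grid, grid).
    """
    x_min, x_max, y_min, y_max = bounds
    heat = np.zeros((n_steps, grid, grid))
    for t in range(n_steps):
        mask = times == t
        # 2D histogram of the calls that fall into time step t.
        heat[t], _, _ = np.histogram2d(
            xs[mask], ys[mask], bins=grid,
            range=[[x_min, x_max], [y_min, y_max]],
        )
    return heat

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    n_calls = 500                             # synthetic data for illustration
    times = rng.integers(0, 4, size=n_calls)
    xs = rng.uniform(0.0, 1.0, size=n_calls)
    ys = rng.uniform(0.0, 1.0, size=n_calls)
    heat = calls_to_heatmaps(times, xs, ys, 4, 8, (0.0, 1.0, 0.0, 1.0))
    print(heat.shape, int(heat.sum()))
```

The resulting stack of grids has the image-like shape a CNN expects; external features such as weather or holidays would have to be supplied through additional inputs.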

QuOTeS: Query-Oriented Technical Summarization

Jun 20, 2023

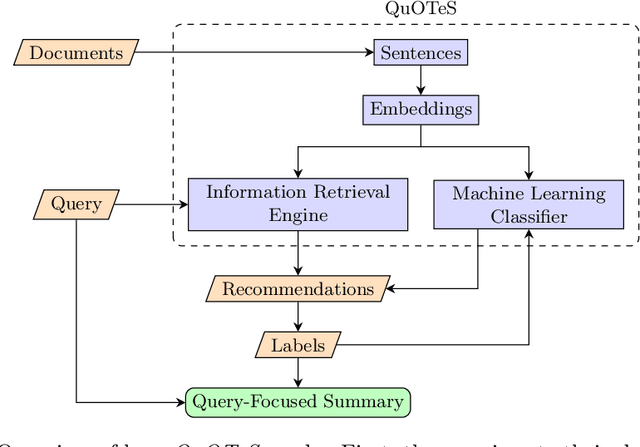

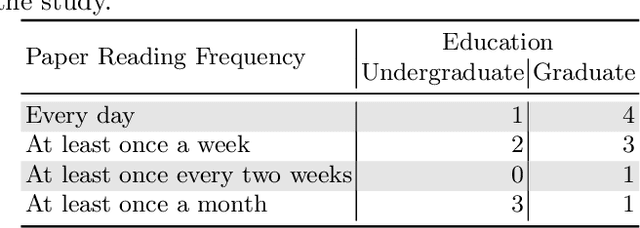

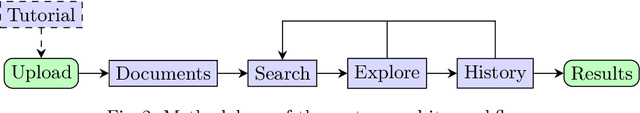

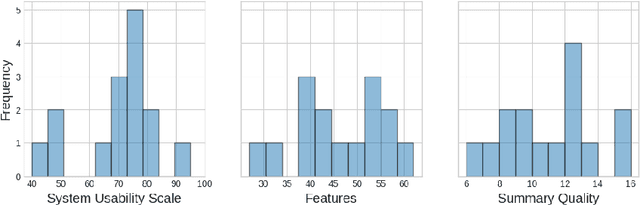

When writing an academic paper, researchers often spend considerable time reviewing and summarizing papers to extract relevant citations and data to compose the Introduction and Related Work sections. To address this problem, we propose QuOTeS, an interactive system designed to retrieve sentences related to a summary of the research from a collection of potential references and hence assist in the composition of new papers. QuOTeS integrates techniques from Query-Focused Extractive Summarization and High-Recall Information Retrieval to provide Interactive Query-Focused Summarization of scientific documents. To measure the performance of our system, we carried out a comprehensive user study where participants uploaded papers related to their research and evaluated the system in terms of its usability and the quality of the summaries it produces. The results show that QuOTeS provides a positive user experience and consistently provides query-focused summaries that are relevant, concise, and complete. We share the code of our system and the novel Query-Focused Summarization dataset collected during our experiments at https://github.com/jarobyte91/quotes.

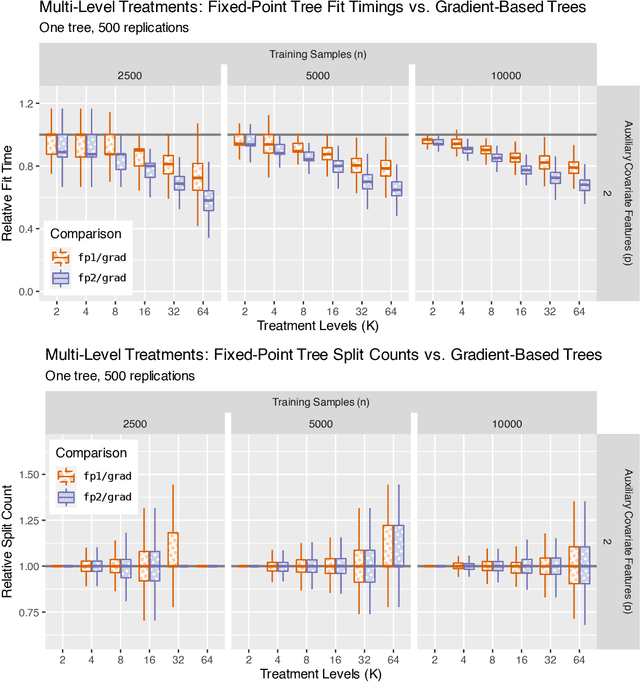

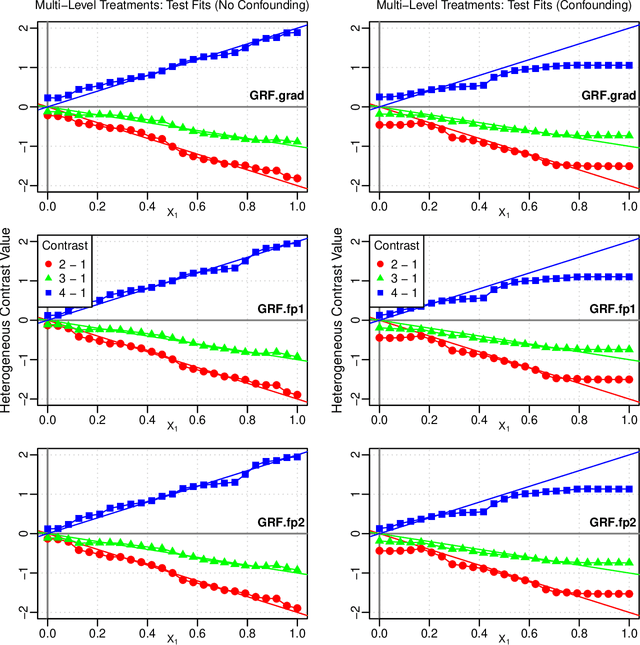

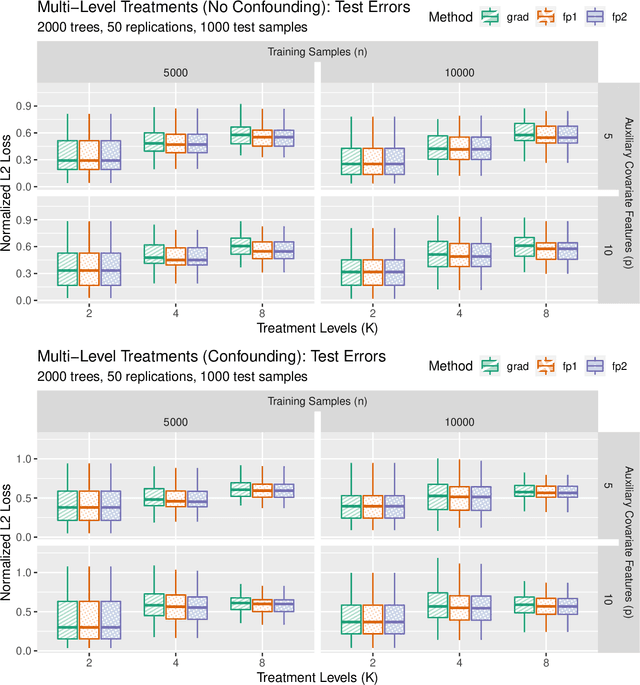

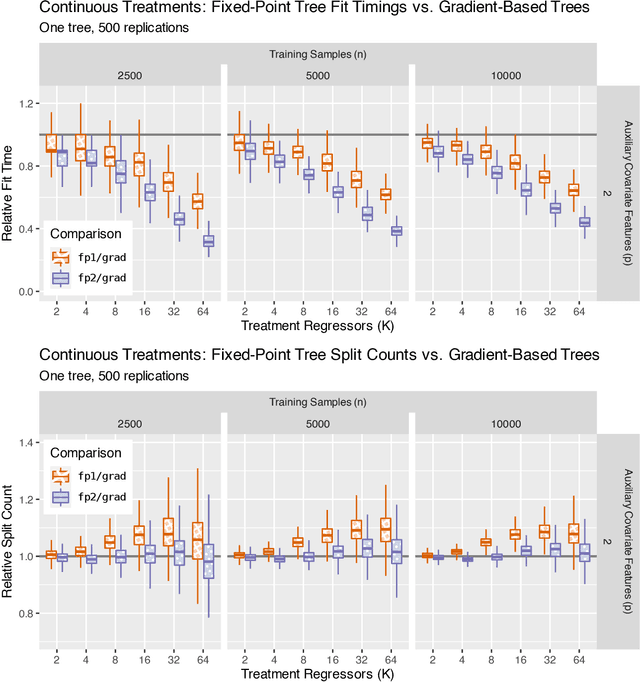

Accelerating Generalized Random Forests with Fixed-Point Trees

Jun 20, 2023

Generalized random forests arXiv:1610.01271 build upon the well-established success of conventional forests (Breiman, 2001) to offer a flexible and powerful non-parametric method for estimating local solutions of heterogeneous estimating equations. Estimators are constructed by leveraging random forests as an adaptive kernel weighting algorithm and implemented through a gradient-based tree-growing procedure. By expressing this gradient-based approximation as being induced from a single Newton-Raphson root-finding iteration, and drawing upon the connection between estimating equations and fixed-point problems arXiv:2110.11074, we propose a new tree-growing rule for generalized random forests induced from a fixed-point iteration type of approximation, enabling gradient-free optimization, and yielding substantial time savings for tasks involving even modest dimensionality of the target quantity (e.g. multiple/multi-level treatment effects). We develop an asymptotic theory for estimators obtained from forests whose trees are grown through the fixed-point splitting rule, and provide numerical simulations demonstrating that the estimators obtained from such forests are comparable to those obtained from the more costly gradient-based rule.

On Frequency-Wise Normalizations for Better Recording Device Generalization in Audio Spectrogram Transformers

Jun 20, 2023

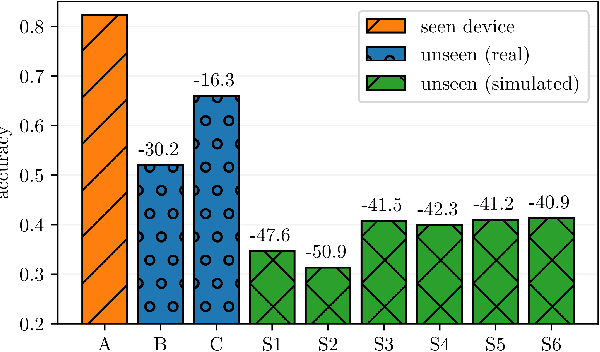

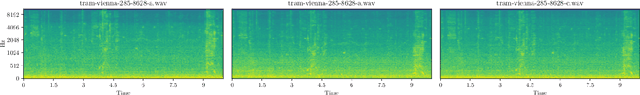

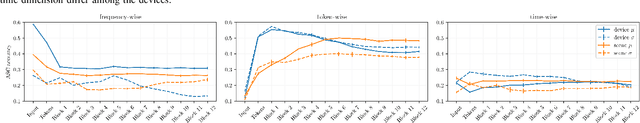

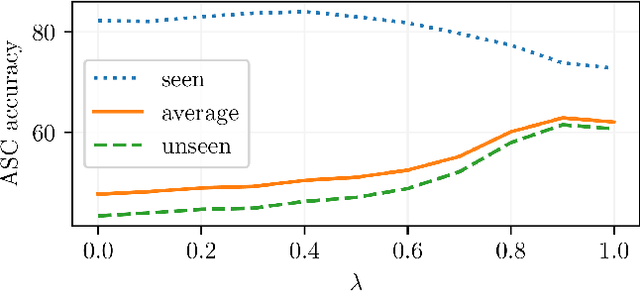

Varying conditions between the data seen at training and at application time remain a major challenge for machine learning. We study this problem in the context of Acoustic Scene Classification (ASC) with mismatching recording devices. Previous works successfully employed frequency-wise normalization of inputs and hidden layer activations in convolutional neural networks to reduce the recording device discrepancy. The main objective of this work was to adopt frequency-wise normalization for Audio Spectrogram Transformers (ASTs), which have recently become the dominant model architecture in ASC. To this end, we first investigate how recording device characteristics are encoded in the hidden layer activations of ASTs. We find that recording device information is initially encoded in the frequency dimension; however, after the first self-attention block, it is largely transformed into the token dimension. Based on this observation, we conjecture that suppressing recording device characteristics in the input spectrogram is the most effective. We propose a frequency-centering operation for spectrograms that improves the ASC performance on unseen recording devices on average by up to 18.2 percentage points.
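The abstract does not define the frequency-centering operation, so the following is only a sketch under a stated assumption: that centering means subtracting, from each frequency bin of a log-magnitude spectrogram, its mean over time. Under the further assumption that a recording device acts as a fixed filter (an additive per-frequency offset in the log domain), this removes the device offset exactly. The function name is hypothetical.

```python
import numpy as np

def center_frequencies(log_spec):
    """Subtract the per-frequency mean over time.

    log_spec: array of shape (..., n_freq, n_time) holding a
    log-magnitude (e.g. log-mel) spectrogram.
    """
    return log_spec - log_spec.mean(axis=-1, keepdims=True)

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    clean = rng.normal(size=(64, 100))        # synthetic spectrogram
    device = rng.normal(size=(64, 1))         # synthetic per-frequency offset
    recorded = clean + device
    # After centering, the device offset no longer affects the input.
    print(np.allclose(center_frequencies(recorded), center_frequencies(clean)))
```

Because the operation is applied to the input spectrogram only, it is consistent with the conjecture that device characteristics are best suppressed before the first self-attention block.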

A Systematic Survey in Geometric Deep Learning for Structure-based Drug Design

Jun 20, 2023

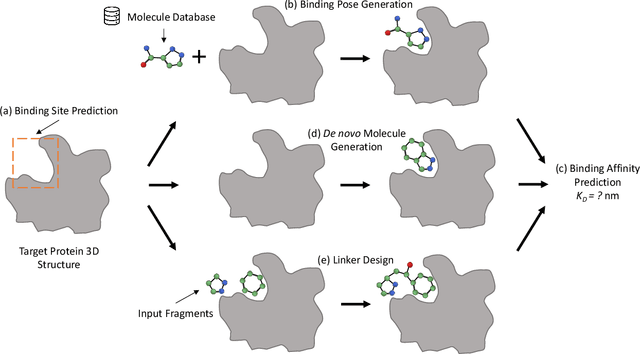

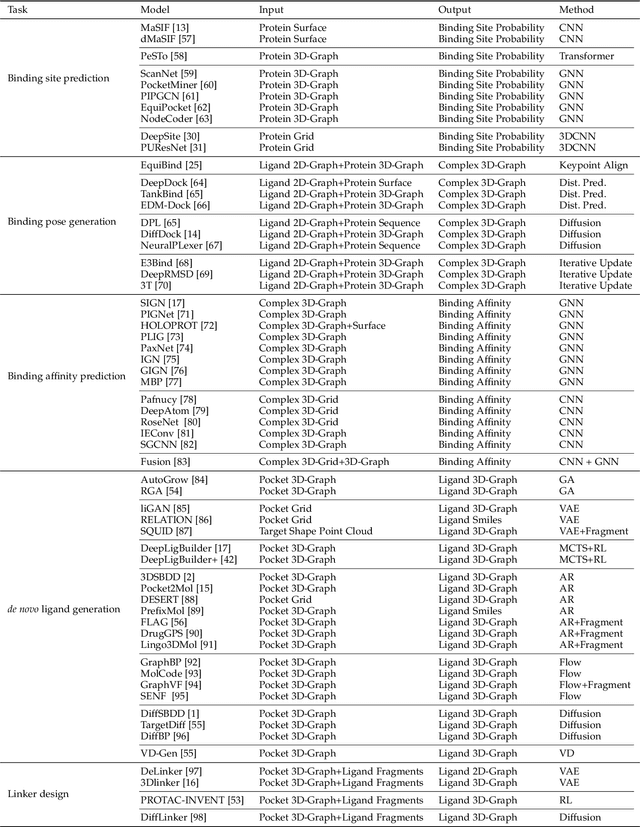

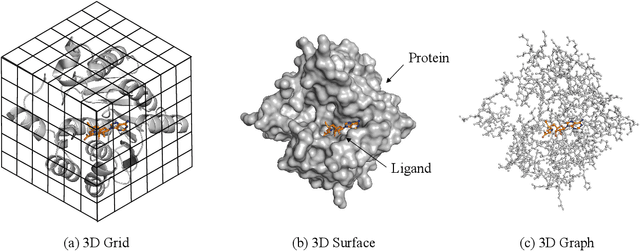

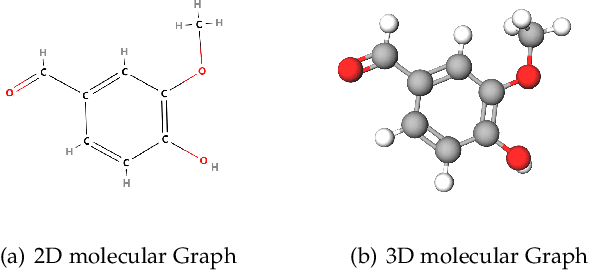

Structure-based drug design (SBDD), which utilizes the three-dimensional geometry of proteins to identify potential drug candidates, is becoming increasingly vital in drug discovery. However, traditional methods based on physicochemical modeling and experts' domain knowledge are time-consuming and laborious. The recent advancements in geometric deep learning, which integrates and processes 3D geometric data, coupled with the availability of accurate protein 3D structure predictions from tools like AlphaFold, have significantly propelled progress in structure-based drug design. In this paper, we systematically review the recent progress of geometric deep learning for structure-based drug design. We start with a brief discussion of the mainstream tasks in structure-based drug design, commonly used 3D protein representations and representative predictive/generative models. Then we delve into detailed reviews for each task (binding site prediction, binding pose generation, *de novo* molecule generation, linker design, and binding affinity prediction), including the problem setup, representative methods, datasets, and evaluation metrics. Finally, we conclude this survey with the current challenges and highlight potential opportunities of geometric deep learning for structure-based drug design.

Improving EEG-based Emotion Recognition by Fusing Time-frequency And Spatial Representations

Mar 14, 2023

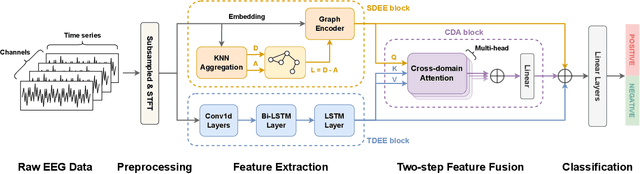

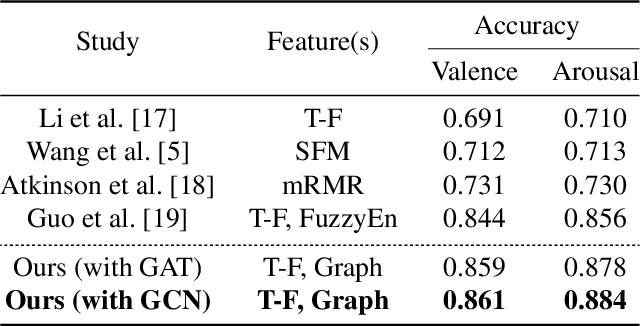

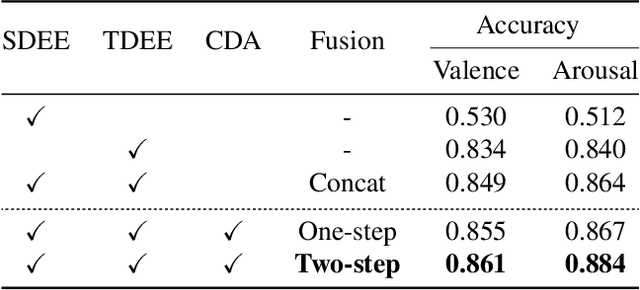

Using deep learning methods to classify EEG signals can accurately identify people's emotions. However, existing studies have rarely considered using information from another domain's representations to guide feature selection in the time-frequency domain. We propose a classification network for EEG signals based on a cross-domain feature fusion method, which uses a multi-domain attention mechanism to focus the network on the features most related to brain activity and changes in thinking. In addition, we propose a two-step fusion method and apply these methods to the EEG emotion recognition network. Experimental results show that our proposed network, which combines multiple representations in the time-frequency domain and spatial domain, outperforms previous methods on public datasets and achieves state-of-the-art results.

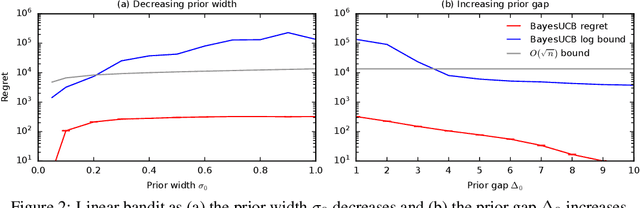

Logarithmic Bayes Regret Bounds

Jun 15, 2023

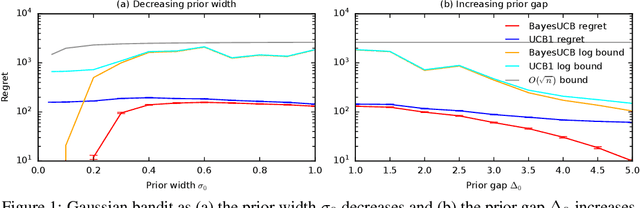

We derive the first finite-time logarithmic regret bounds for Bayesian bandits. For Gaussian bandits, we obtain an $O(c_h \log^2 n)$ bound, where $c_h$ is a prior-dependent constant. This matches the asymptotic lower bound of Lai (1987). Our proofs mark a technical departure from prior works, and are simple and general. To show generality, we apply our technique to linear bandits. Our bounds shed light on the value of the prior in the Bayesian setting, both in the objective and as side information given to the learner. They significantly improve the $\tilde{O}(\sqrt{n})$ bounds that, despite the existing lower bounds, have become standard in the literature.

Fast Algorithms for Directed Graph Partitioning Using Flows and Reweighted Eigenvalues

Jun 15, 2023

We consider a new semidefinite programming relaxation for directed edge expansion, which is obtained by adding triangle inequalities to the reweighted eigenvalue formulation. Applying the matrix multiplicative weight update method to this relaxation, we derive almost linear-time algorithms to achieve $O(\sqrt{\log{n}})$-approximation and Cheeger-type guarantee for directed edge expansion, as well as an improved cut-matching game for directed graphs. This provides a primal-dual flow-based framework to obtain the best known algorithms for directed graph partitioning. The same approach also works for vertex expansion and for hypergraphs, providing a simple and unified approach to achieve the best known results for different expansion problems and different algorithmic techniques.

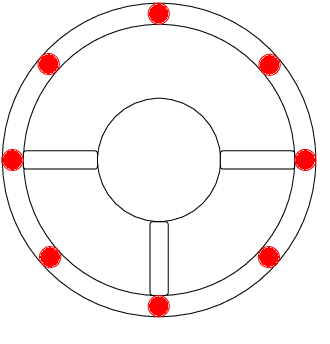

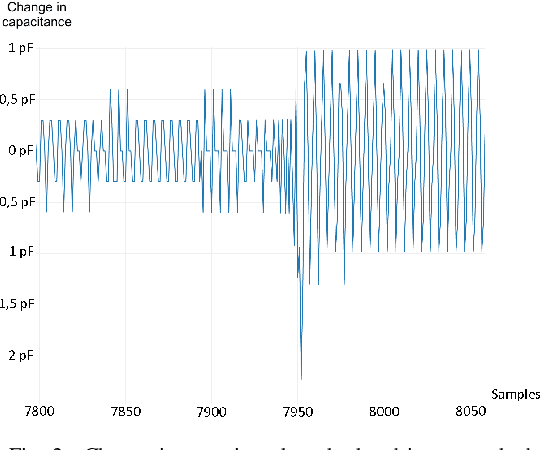

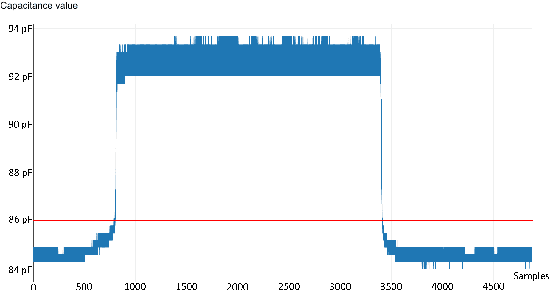

Hands-on detection for steering wheels with neural networks

Jun 15, 2023

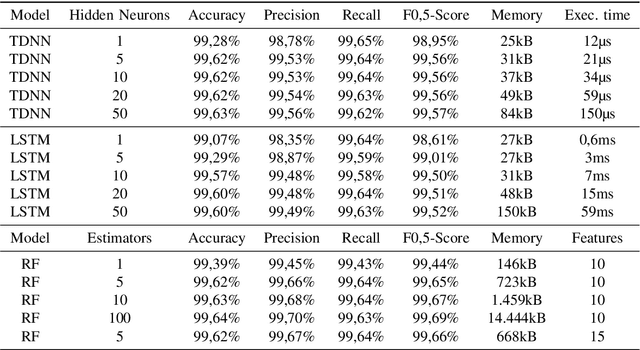

In this paper, the concept of a machine-learning-based hands-on detection algorithm is proposed. The hand detection is implemented on the hardware side using a capacitive method. A sensor mat in the steering wheel detects a change in capacitance as soon as the driver's hands come closer. The evaluation and final decision about hands-on or hands-off situations is done using machine learning. In order to find a suitable machine learning model, different models are implemented and evaluated. Based on accuracy, memory consumption, and computational effort, the most promising one is selected and ported to a microcontroller. The entire system is then evaluated in terms of reliability and response time.
