"Time": models, code, and papers

RO-MAP: Real-Time Multi-Object Mapping with Neural Radiance Fields

Apr 12, 2023

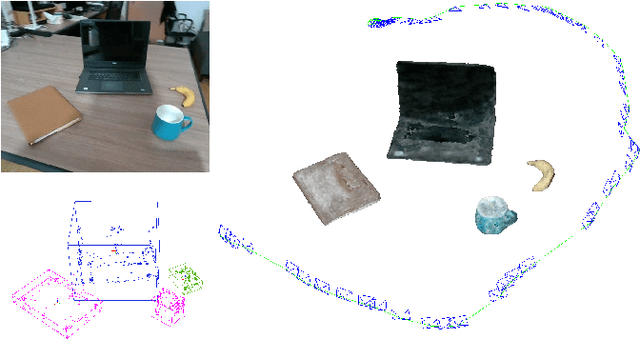

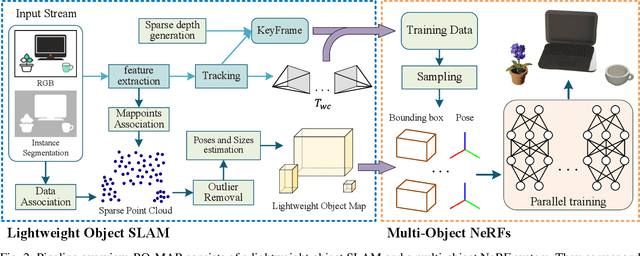

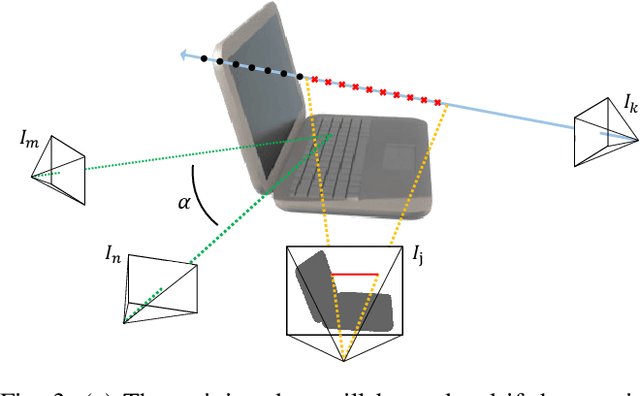

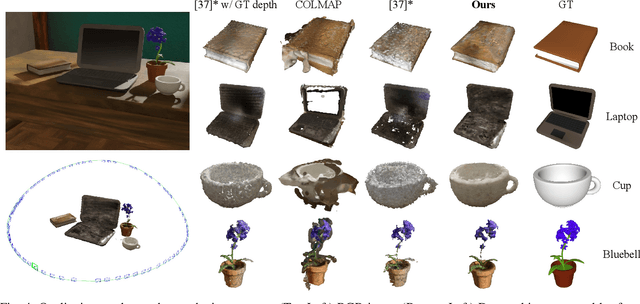

Accurate perception of objects in the environment is important for improving the scene understanding capability of SLAM systems. In robotic and augmented reality applications, object maps with semantic and metric information offer clear advantages. In this paper, we present RO-MAP, a novel multi-object mapping pipeline that does not rely on 3D priors. Given only monocular input, we use neural radiance fields to represent objects and couple them with a lightweight object SLAM based on multi-view geometry, to simultaneously localize objects and implicitly learn their dense geometry. We create separate implicit models for each detected object and train them dynamically and in parallel as new observations are added. Experiments on synthetic and real-world datasets demonstrate that our method can generate semantic object maps with shape reconstruction and is competitive with offline methods while achieving real-time performance (25 Hz). The code and dataset will be available at: https://github.com/XiaoHan-Git/RO-MAP
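RO-MAP represents each object with a neural radiance field. As background, a NeRF renders a pixel by compositing densities sigma_i and colors c_i sampled along the camera ray with weights w_i = T_i * (1 - exp(-sigma_i * delta_i)), where T_i = exp(-sum_{j<i} sigma_j * delta_j) is the transmittance. The sketch below shows only this standard quadrature in plain Python; the per-object networks, ray sampling, and parallel training used by RO-MAP are not reproduced here.

```python
import math


def render_ray(sigmas, colors, deltas):
    """Alpha-composite samples along one ray (standard NeRF quadrature).

    sigmas: non-negative volume densities, one per sample
    colors: (r, g, b) tuples, one per sample
    deltas: distance between each sample and the next
    Returns (rgb, opacity), where opacity is the total weight placed on the ray.
    """
    transmittance = 1.0  # T_i: fraction of light reaching sample i unoccluded
    rgb = [0.0, 0.0, 0.0]
    opacity = 0.0
    for sigma, color, delta in zip(sigmas, colors, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)  # probability the ray terminates in this segment
        weight = transmittance * alpha
        for ch in range(3):
            rgb[ch] += weight * color[ch]
        opacity += weight
        transmittance *= 1.0 - alpha  # equivalent to multiplying by exp(-sigma * delta)
    return rgb, opacity


# Two empty samples followed by a dense red surface.
rgb, opacity = render_ray([0.0, 0.0, 50.0], [(0, 0, 1), (0, 1, 0), (1, 0, 0)], [0.1, 0.1, 0.1])
```

Empty space contributes nothing, so the rendered color is almost pure red; an opacity near 1 is what lets an object-level model separate the object from the background.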

Histopathology Image Classification using Deep Manifold Contrastive Learning

Jun 26, 2023

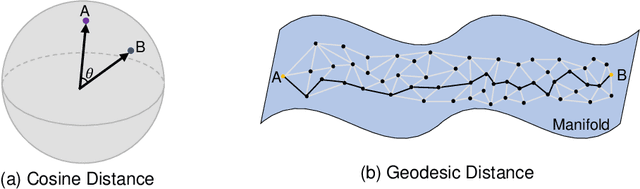

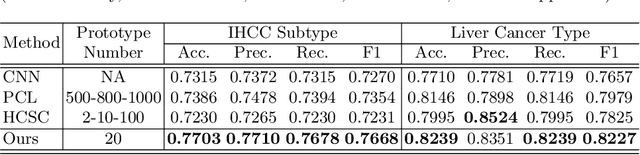

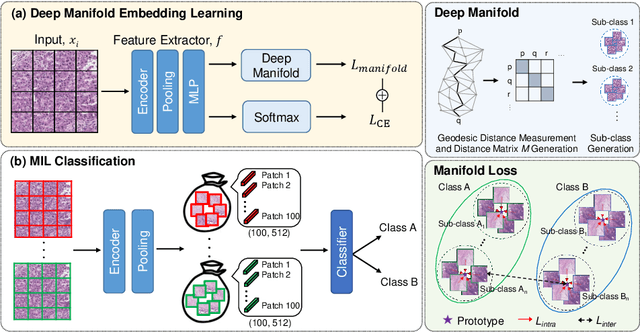

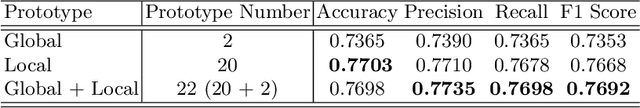

Contrastive learning has gained popularity due to its robustness and good feature representation performance. However, cosine distance, the commonly used similarity metric in contrastive learning, is not well suited to representing the distance between two data points, especially on a nonlinear feature manifold. Inspired by manifold learning, we propose a novel extension of contrastive learning that uses the geodesic distance between features as a similarity metric for histopathology whole slide image classification. To reduce the computational overhead of manifold learning, we propose geodesic-distance-based feature clustering, which enables efficient contrastive loss evaluation using prototypes instead of time-consuming pairwise feature similarity comparisons. The efficacy of the proposed method is evaluated on two real-world histopathology image datasets. Results demonstrate that our method outperforms state-of-the-art cosine-distance-based contrastive learning methods.
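A common way to approximate geodesic distance on a feature manifold (as in Isomap) is to build a k-nearest-neighbour graph and take shortest-path lengths with Dijkstra's algorithm. The sketch below assumes that construction; the paper's exact graph, its prototype clustering, and the contrastive loss itself are not shown.

```python
import heapq
import math


def knn_graph(points, k):
    """Symmetric k-nearest-neighbour graph with Euclidean edge weights."""
    n = len(points)
    adj = [dict() for _ in range(n)]
    for i in range(n):
        dists = sorted((math.dist(points[i], points[j]), j) for j in range(n) if j != i)
        for d, j in dists[:k]:
            adj[i][j] = d
            adj[j][i] = d  # symmetrise so the graph is undirected
    return adj


def geodesic_distances(adj, source):
    """Dijkstra shortest-path lengths from source, approximating geodesic distance."""
    dist = [math.inf] * len(adj)
    dist[source] = 0.0
    heap = [(0.0, source)]
    while heap:
        d, u = heapq.heappop(heap)
        if d > dist[u]:
            continue  # stale queue entry
        for v, w in adj[u].items():
            nd = d + w
            if nd < dist[v]:
                dist[v] = nd
                heapq.heappush(heap, (nd, v))
    return dist


# 21 points on a unit half-circle: the endpoints are 2 apart in a straight line,
# but about pi apart along the curve.
half_circle = [(math.cos(math.pi * i / 20), math.sin(math.pi * i / 20)) for i in range(21)]
geo = geodesic_distances(knn_graph(half_circle, k=2), source=0)
```

The gap between the straight-line distance and the path along the curve is exactly what a cosine or Euclidean metric misses on a curved manifold.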

Factorised Speaker-environment Adaptive Training of Conformer Speech Recognition Systems

Jun 26, 2023

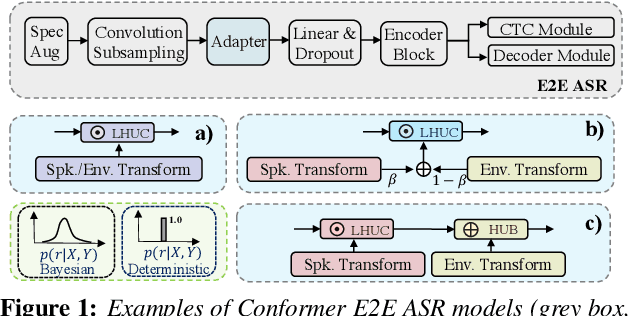

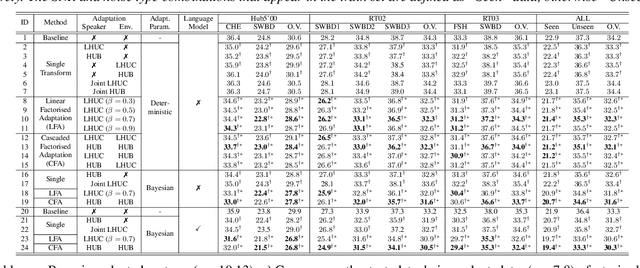

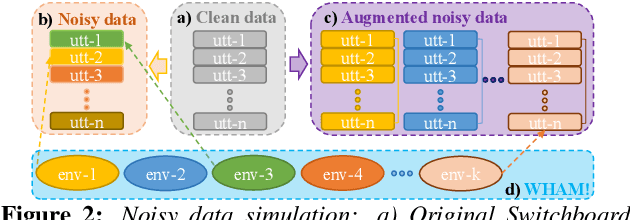

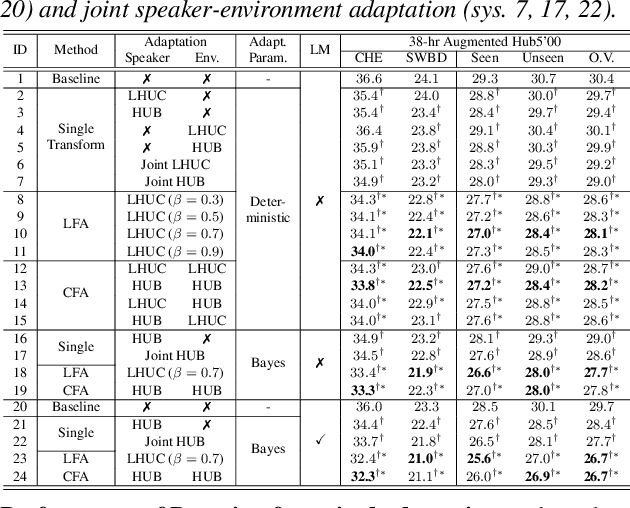

Rich sources of variability in natural speech present significant challenges to current data-intensive speech recognition technologies. To model both speaker- and environment-level diversity, this paper proposes a novel Bayesian factorised speaker-environment adaptive training and test-time adaptation approach for Conformer ASR models. Speaker- and environment-level characteristics are modeled separately using compact hidden output transforms, which are then linearly or hierarchically combined to represent any speaker-environment combination. Bayesian learning is further used to model the uncertainty of the adaptation parameters. Experiments on the 300-hr WHAM noise-corrupted Switchboard data suggest that factorised adaptation consistently outperforms the baseline and the speaker-label-only adapted Conformers, with word error rate reductions of up to 3.1% absolute (10.4% relative). Further analysis shows that the proposed method has potential for rapid adaptation to unseen speaker-environment conditions.
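The abstract does not define the hidden output transform. As an assumption for illustration, the sketch below uses LHUC-style (learning hidden unit contributions) scaling, where each hidden unit is multiplied by 2 * sigmoid(r), and combines the factors linearly by adding a speaker parameter vector and an environment parameter vector. The parameter values are hypothetical, and neither the Bayesian treatment nor the hierarchical combination is shown.

```python
import math


def lhuc_scale(r):
    """LHUC-style amplitudes 2 * sigmoid(r): in (0, 2), and exactly 1 when r == 0."""
    return [2.0 / (1.0 + math.exp(-x)) for x in r]


def factorised_adapt(hidden, r_speaker, r_env):
    """Scale hidden outputs by a transform built from speaker and environment factors.

    Assumption: the factors are combined linearly in parameter space,
    r = r_speaker + r_env, before the LHUC-style squashing.
    """
    r = [s + e for s, e in zip(r_speaker, r_env)]
    return [h * a for h, a in zip(hidden, lhuc_scale(r))]


hidden = [0.5, -1.0, 2.0]
r_spk = [0.3, -0.2, 0.0]   # hypothetical parameters estimated for one speaker
r_env = [-0.1, 0.4, 0.2]   # hypothetical parameters estimated for one noise condition
adapted = factorised_adapt(hidden, r_spk, r_env)
```

Because the speaker and environment factors are estimated separately, a speaker observed only in one condition can be paired with an environment observed only with other speakers, which is the kind of unseen combination the abstract refers to.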

GloptiNets: Scalable Non-Convex Optimization with Certificates

Jun 26, 2023

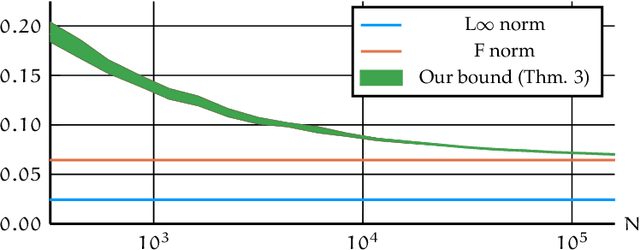

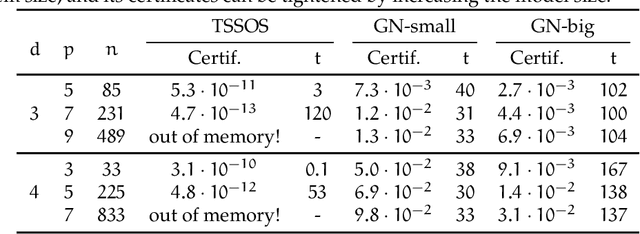

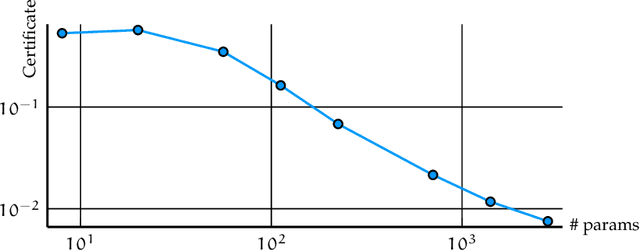

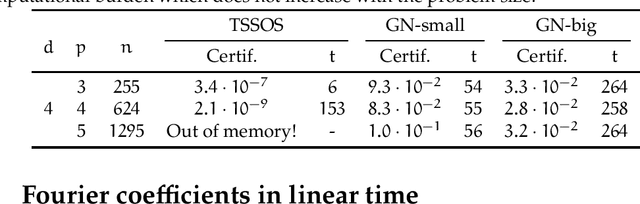

We present a novel approach to non-convex optimization with certificates, which handles smooth functions on the hypercube or on the torus. Unlike traditional methods that rely on algebraic properties, our algorithm exploits the regularity of the target function, as reflected in the decay of its Fourier spectrum. By defining a tractable family of models, we can simultaneously obtain precise certificates and leverage the advanced and powerful computational techniques developed to optimize neural networks. As a result, our approach scales naturally with parallel computing on GPUs. When applied to polynomials of moderate dimension but with thousands of coefficients, our approach outperforms state-of-the-art optimization methods with certificates, such as those based on Lasserre's hierarchy, and addresses problems that are intractable for these competitors.
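To illustrate why Fourier decay yields certificates, here is a deliberately crude bound, not the GloptiNets method: for a real trigonometric polynomial on the 1-D torus, each harmonic satisfies |a_k cos + b_k sin| <= sqrt(a_k^2 + b_k^2), so a_0 minus the sum of those amplitudes is a certified lower bound on the minimum. The faster the spectrum decays, the tighter this bound becomes. GloptiNets' tractable model family and its neural-network-style optimization are not reproduced.

```python
import math


def fourier_lower_bound(a0, cos_coeffs, sin_coeffs):
    """Certified lower bound on f(x) = a0 + sum_k (a_k cos(2 pi k x) + b_k sin(2 pi k x)).

    Uses |a_k cos(.) + b_k sin(.)| <= hypot(a_k, b_k) for every x.
    """
    return a0 - sum(math.hypot(a, b) for a, b in zip(cos_coeffs, sin_coeffs))


def evaluate(a0, cos_coeffs, sin_coeffs, x):
    """Evaluate the trigonometric polynomial at x in [0, 1)."""
    total = a0
    for k, (a, b) in enumerate(zip(cos_coeffs, sin_coeffs), start=1):
        total += a * math.cos(2 * math.pi * k * x) + b * math.sin(2 * math.pi * k * x)
    return total


# Illustrative coefficients with a decaying spectrum.
coeffs = (1.0, [0.5, 0.1, 0.02], [0.0, 0.05, 0.0])
bound = fourier_lower_bound(*coeffs)
grid_min = min(evaluate(*coeffs, x=i / 1000) for i in range(1000))
```

The grid minimum is only an upper estimate of the true minimum, while `bound` is guaranteed to lie below it; the distance between the two is the certificate gap.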

Robust Dominant Periodicity Detection for Time Series with Missing Data

Mar 06, 2023

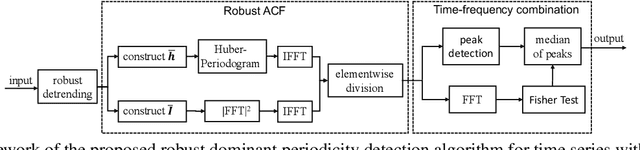

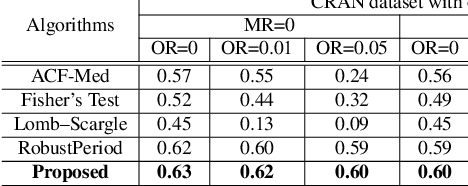

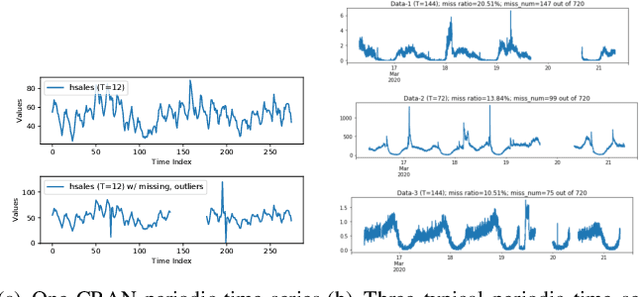

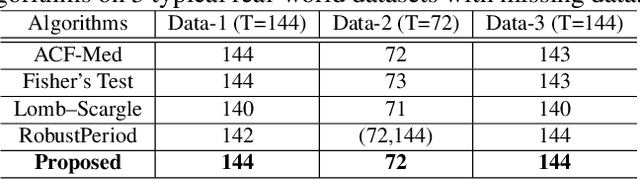

Periodicity detection is an important task in time series analysis, but it remains challenging due to the diverse characteristics of time series data, such as abrupt trend changes, outliers, noise, and especially block missing data. In this paper, we propose a robust and effective periodicity detection algorithm for time series with block missing data. We first design a robust trend filter to remove the interference of complicated trend patterns under missing data. Then, we propose a robust autocorrelation function (ACF) that can handle missing values and outliers effectively. We rigorously prove that the proposed robust ACF still works well when the length of the missing block is less than $1/3$ of the period length. Finally, by combining time-frequency information, our algorithm estimates the period length accurately. The experimental results demonstrate that our algorithm outperforms existing periodicity detection algorithms on real-world time series datasets.

* Accepted by 2023 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2023)
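The core idea of an ACF that tolerates missing data can be sketched by averaging lagged products only over pairs where both samples are observed. This is a simplified stand-in, not the paper's robust ACF: it omits the trend filter, the outlier-robust statistics, and the time-frequency combination.

```python
import math


def masked_acf(x, max_lag):
    """Autocorrelation that skips missing samples (None).

    r(k) averages (x_t - m) * (x_{t+k} - m) over only the pairs where both
    samples are observed, normalised by the variance of the observed values.
    """
    observed = [v for v in x if v is not None]
    mean = sum(observed) / len(observed)
    var = sum((v - mean) ** 2 for v in observed) / len(observed)
    acf = []
    for k in range(max_lag + 1):
        pairs = [(x[t], x[t + k]) for t in range(len(x) - k)
                 if x[t] is not None and x[t + k] is not None]
        if not pairs or var == 0:
            acf.append(float("nan"))  # lag cannot be estimated
            continue
        cov = sum((a - mean) * (b - mean) for a, b in pairs) / len(pairs)
        acf.append(cov / var)
    return acf


period = 10
series = [math.sin(2 * math.pi * t / period) for t in range(100)]
for t in range(30, 33):  # a missing block of 3 samples, under 1/3 of the period
    series[t] = None
acf = masked_acf(series, 20)
best = max(range(5, 16), key=lambda k: acf[k])
```

Searching lags between half and one and a half of the expected period picks out lag 10 despite the gap.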

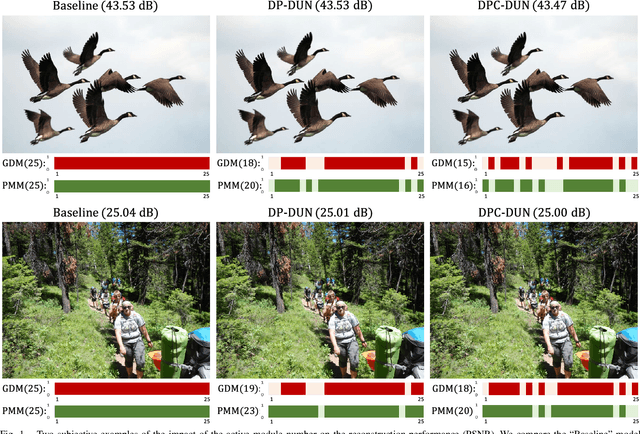

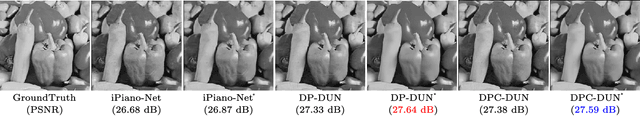

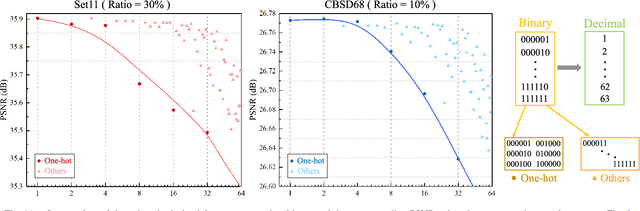

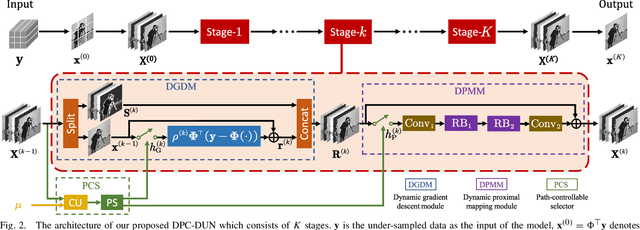

Dynamic Path-Controllable Deep Unfolding Network for Compressive Sensing

Jun 28, 2023

Deep unfolding networks (DUNs), which unfold an optimization algorithm into a deep neural network, have achieved great success in compressive sensing (CS) due to their good interpretability and high performance. Each stage in a DUN corresponds to one iteration of the optimization. At test time, all sampled images generally need to be processed by all stages, which incurs a computational burden and is unnecessary for images whose contents are easier to restore. In this paper, we focus on CS reconstruction and propose a novel Dynamic Path-Controllable Deep Unfolding Network (DPC-DUN). With our designed path-controllable selector, DPC-DUN can dynamically select a rapid and appropriate route for each image, and it is slimmable by regulating different performance-complexity tradeoffs. Extensive experiments show that DPC-DUN is highly flexible and provides excellent performance with dynamic adjustment to a suitable tradeoff, thus addressing the main requirements for practical use. Code is available at https://github.com/songjiechong/DPC-DUN.
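The "one stage per iteration" idea can be sketched with ISTA for sparse recovery, where each stage is a gradient step on 0.5 * ||Ax - y||^2 followed by soft thresholding. DPC-DUN learns its stages and its path-controllable selector; here the stages are plain ISTA iterations, and a hand-written convergence check stands in for the learned selector so that easy inputs exit early. `unfolded_ista` and its parameters are hypothetical names for this sketch.

```python
def soft_threshold(v, tau):
    """Proximal operator of tau * ||x||_1, applied elementwise."""
    return [max(abs(x) - tau, 0.0) * (1 if x > 0 else -1) for x in v]


def matvec(A, x):
    return [sum(a * b for a, b in zip(row, x)) for row in A]


def transpose(A):
    return [list(col) for col in zip(*A)]


def unfolded_ista(A, y, lam, step, max_stages, tol=1e-6):
    """Run up to max_stages ISTA stages, exiting once an update moves x by less than tol.

    Returns (x, stages_used).
    """
    At = transpose(A)
    x = [0.0] * len(At)
    for stage in range(1, max_stages + 1):
        residual = [r - t for r, t in zip(matvec(A, x), y)]
        grad = matvec(At, residual)  # gradient of 0.5 * ||Ax - y||^2
        x_new = soft_threshold([xi - step * g for xi, g in zip(x, grad)], step * lam)
        if max(abs(a - b) for a, b in zip(x_new, x)) < tol:
            return x_new, stage  # early exit: later stages would change little
        x = x_new
    return x, max_stages


A = [[1.0, 0.0, 0.5], [0.0, 1.0, 0.5]]
step = 1.0 / 1.5  # 1 / L, with L = 1.5 the largest eigenvalue of A^T A
x_easy, stages_easy = unfolded_ista(A, [0.0, 0.0], lam=0.01, step=step, max_stages=500)
x_hard, stages_hard = unfolded_ista(A, [1.0, 0.0], lam=0.01, step=step, max_stages=500)
```

The trivial measurement leaves after a single stage, while the non-trivial one uses more, mirroring the performance-complexity tradeoff that DPC-DUN controls with a learned route.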

cuSLINK: Single-linkage Agglomerative Clustering on the GPU

Jun 28, 2023

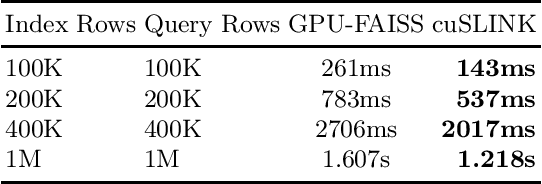

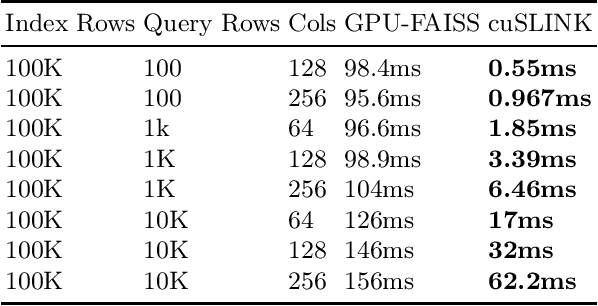

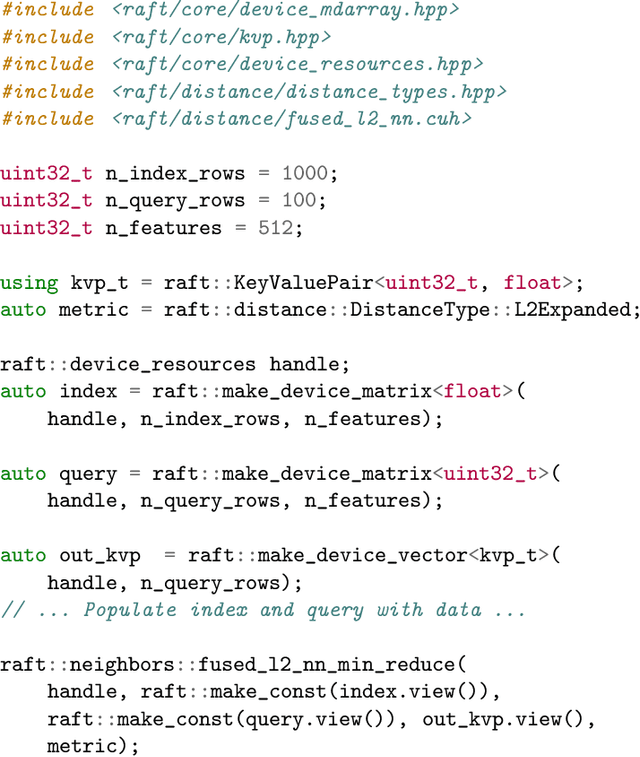

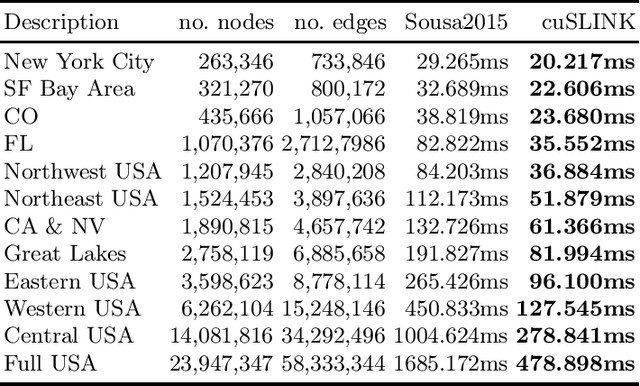

In this paper, we propose cuSLINK, a novel and state-of-the-art reformulation of the SLINK algorithm on the GPU, which requires only $O(Nk)$ space and uses a parameter $k$ to trade off space and time. We also propose a set of novel and reusable building blocks that compose cuSLINK. These building blocks include highly optimized computational patterns for $k$-NN graph construction, spanning trees, and dendrogram cluster extraction. We show how we used our primitives to implement cuSLINK end-to-end on the GPU, enabling a wide range of real-world data mining and machine learning applications that were once intractable. Single-linkage clustering is a primary computational bottleneck in the popular HDBSCAN algorithm, and beyond HDBSCAN, the impact of our end-to-end cuSLINK algorithm spans a large range of important applications, including cluster analysis in social and computer networks, natural language processing, and computer vision. Users can obtain cuSLINK at https://docs.rapids.ai/api/cuml/latest/api/#agglomerative-clustering
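Single-linkage clustering is equivalent to building a minimum spanning tree: the MST edges, taken in increasing weight order, are the dendrogram merges, and cutting edges above a threshold yields flat clusters. The CPU sketch below uses Kruskal's algorithm over all O(N^2) pairs for clarity; cuSLINK instead works from a k-NN graph on the GPU to stay within O(Nk) memory, and none of its primitives are reproduced here.

```python
import math


def _find(parent, i):
    """Union-find root lookup with path halving."""
    while parent[i] != i:
        parent[i] = parent[parent[i]]
        i = parent[i]
    return i


def single_linkage_mst(points):
    """Kruskal's MST over all pairwise Euclidean distances.

    The returned edges, in increasing weight order, are the single-linkage merges.
    """
    n = len(points)
    parent = list(range(n))
    edges = sorted((math.dist(points[i], points[j]), i, j)
                   for i in range(n) for j in range(i + 1, n))
    mst = []
    for d, i, j in edges:
        ri, rj = _find(parent, i), _find(parent, j)
        if ri != rj:
            parent[ri] = rj
            mst.append((d, i, j))
    return mst


def cut_clusters(n, mst, threshold):
    """Flat clusters: connected components after dropping MST edges longer than threshold."""
    parent = list(range(n))
    for d, i, j in mst:
        if d <= threshold:
            ri, rj = _find(parent, i), _find(parent, j)
            parent[ri] = rj
    roots = [_find(parent, i) for i in range(n)]
    label_of = {root: label for label, root in enumerate(dict.fromkeys(roots))}
    return [label_of[r] for r in roots]


points = [(0.0,), (0.1,), (0.2,), (5.0,), (5.1,)]
mst = single_linkage_mst(points)
labels = cut_clusters(len(points), mst, threshold=1.0)
```

Labels are numbered in order of first appearance, so the two well-separated groups come out as 0 and 1.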

What Sentiment and Fun Facts We Learnt Before FIFA World Cup Qatar 2022 Using Twitter and AI

Jun 28, 2023

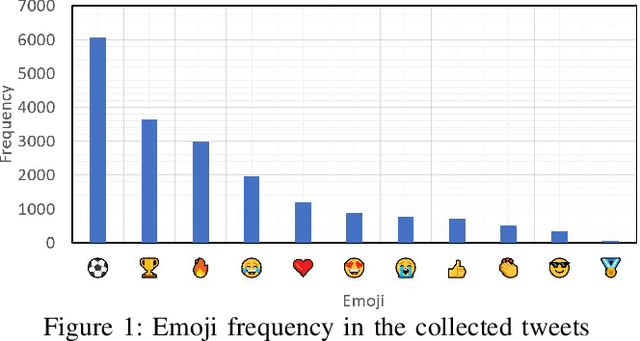

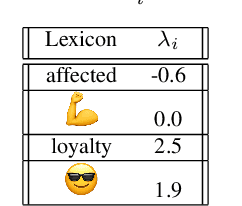

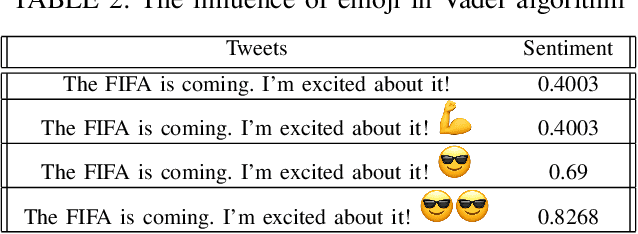

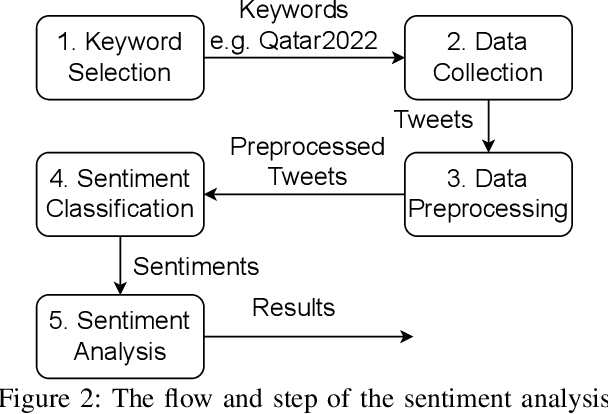

Twitter is a social media platform that connects users in most countries and enables real-time news discovery. Because tweets are usually short and express public feelings, they provide a source for opinion mining and sentiment analysis of global events. This paper proposes an effective solution for analyzing the sentiment of tweets related to the FIFA World Cup. At least 130k tweets, the first such collection in the community, are gathered and used as a dataset to evaluate the performance of the proposed machine learning solution. These tweets are collected using hashtags and keywords related to the Qatar World Cup 2022. The VADER algorithm is used in this paper for sentiment analysis. Through the machine learning method and the collected tweets, we discovered the sentiments and fun facts about several aspects important to the period before the World Cup. The results show that people were positive about the opening of the World Cup.
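VADER assigns each text a compound score in [-1, 1], and its documentation suggests labeling scores >= 0.05 as positive, <= -0.05 as negative, and the rest as neutral. The sketch below shows only that labeling and aggregation step. In practice the scores might come from `SentimentIntensityAnalyzer().polarity_scores(text)["compound"]` in the `vaderSentiment` package, but which VADER implementation and thresholds the paper used is an assumption, and the example scores are made up.

```python
from collections import Counter


def label_from_compound(compound, threshold=0.05):
    """Map a VADER compound score in [-1, 1] to a sentiment label."""
    if compound >= threshold:
        return "positive"
    if compound <= -threshold:
        return "negative"
    return "neutral"


def summarise(compound_scores):
    """Count sentiment labels over a collection of tweets' compound scores."""
    return Counter(label_from_compound(c) for c in compound_scores)


# Illustrative scores only, not data from the paper.
counts = summarise([0.6, 0.04, -0.3, 0.05])
```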

Sequential Attention Source Identification Based on Feature Representation

Jun 28, 2023

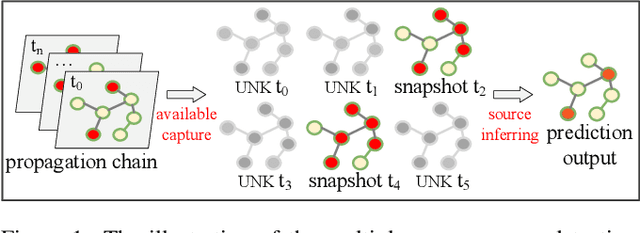

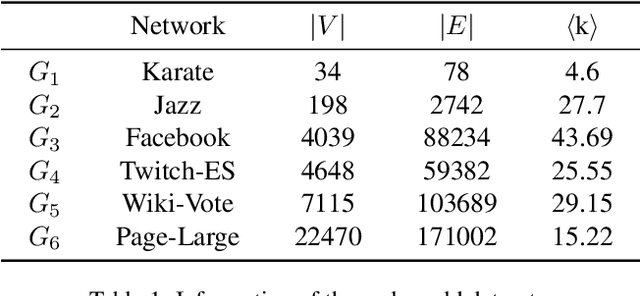

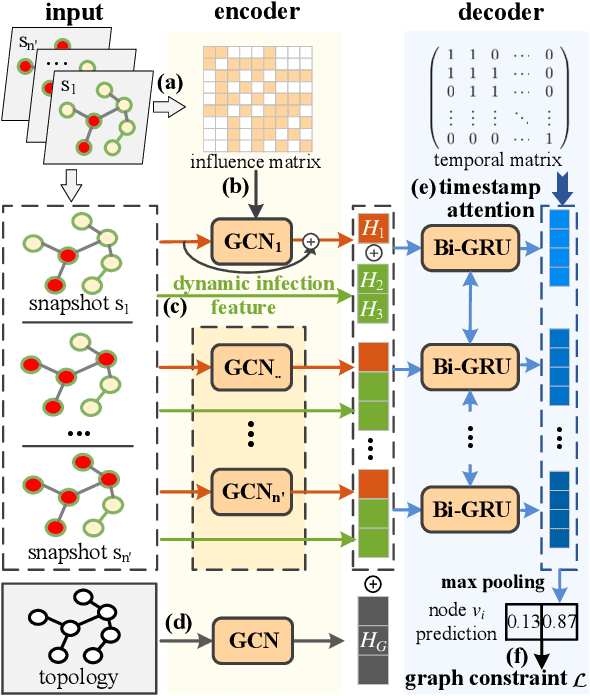

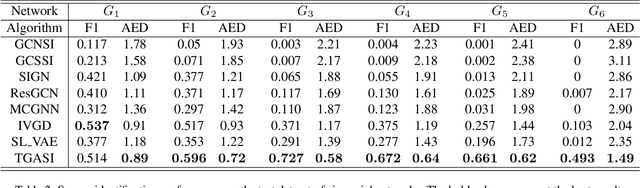

Snapshot-observation-based source localization has been widely studied due to its accessibility and low cost. However, existing methods do not address user interactions in time-varying infection scenarios, so their accuracy decreases in heterogeneous interaction scenarios. To solve this critical issue, this paper proposes a sequence-to-sequence localization framework called Temporal-sequence based Graph Attention Source Identification (TGASI), built on an inductive learning idea. More specifically, the encoder generates multiple features by estimating the influence probability between two users, and the decoder distinguishes the importance of prediction sources at different timestamps through a designed temporal attention mechanism. Notably, the inductive learning idea ensures that TGASI can detect sources in new scenarios without other prior knowledge, which demonstrates the scalability of TGASI. Comprehensive experiments against SOTA methods demonstrate TGASI's higher detection performance and scalability across different scenarios.
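The abstract does not specify the form of TGASI's temporal attention. As an assumption for illustration, the sketch below uses standard scaled dot-product attention to weight per-timestamp representations; the query, keys, and values are hypothetical stand-ins for the encoder outputs, not TGASI's actual features.

```python
import math


def temporal_attention(query, keys, values):
    """Scaled dot-product attention over timestamps.

    query: vector summarising the current decoding context
    keys, values: one vector per timestamp
    Returns (weights, context), where the weights sum to 1.
    """
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    m = max(scores)  # subtract the max before exponentiating for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    context = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(len(values[0]))]
    return weights, context


weights, context = temporal_attention(
    query=[1.0, 0.0],
    keys=[[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]],    # hypothetical per-timestamp encodings
    values=[[0.9, 0.1], [0.2, 0.8], [0.5, 0.5]],   # hypothetical per-timestamp source scores
)
```

Timestamps whose encoding aligns with the query receive larger weights, so their source predictions dominate the combined context.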

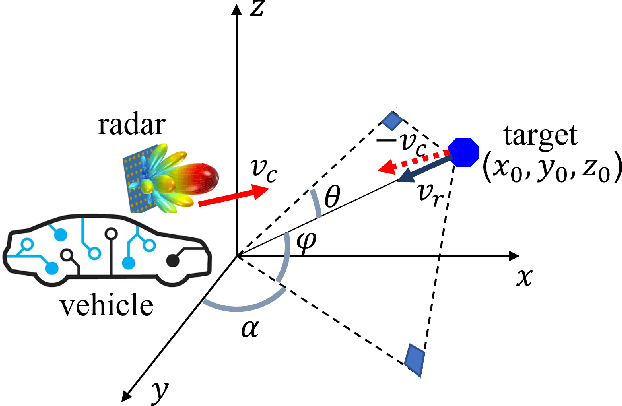

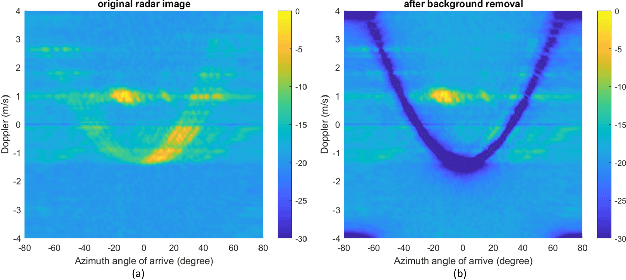

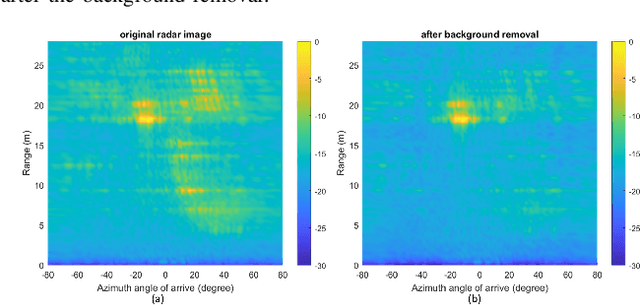

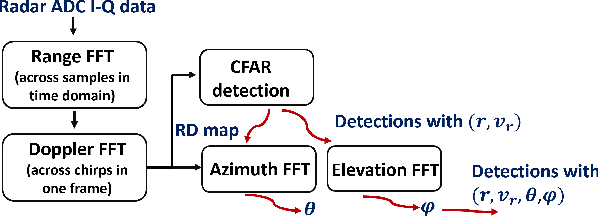

Static Background Removal in Vehicular Radar: Filtering in Azimuth-Elevation-Doppler Domain

Jul 04, 2023

A significant challenge in autonomous driving systems lies in image understanding within complex environments, particularly dense traffic scenarios. An effective solution to this challenge involves removing the background or static objects from the scene, which enhances the detection of moving targets, a key component of improving overall system performance. In this paper, we present an efficient algorithm for background removal in automotive radar applications, specifically using a frequency-modulated continuous wave (FMCW) radar. Our proposed algorithm follows a three-step approach, encompassing radar signal preprocessing, three-dimensional (3D) ego-motion estimation, and notch-filter-based background removal in the azimuth-elevation-Doppler domain. To begin, we model the received signal of the FMCW multiple-input multiple-output (MIMO) radar and develop a signal processing framework for extracting four-dimensional (4D) point clouds. Next, we introduce a robust 3D ego-motion estimation algorithm that accurately estimates the radar ego-motion speed, accounting for Doppler ambiguity, by processing the point clouds. Additionally, our algorithm leverages the relationship between Doppler velocity, azimuth angle, elevation angle, and radar ego-motion speed to identify the spectrum belonging to background clutter. Finally, we employ notch filters to effectively filter out the background clutter. The performance of our algorithm is evaluated using both simulated data and extensive experiments with real-world data. The results demonstrate its effectiveness in efficiently removing background clutter and enhancing perception within complex environments. By offering a fast and computationally efficient solution, our approach addresses the challenges posed by non-homogeneous environments and real-time processing requirements.
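The relationship between Doppler velocity, azimuth, elevation, and ego-motion can be sketched as follows: for a static point with line-of-sight unit vector u, the measured radial velocity is assumed to be -u . v, where v is the radar velocity (sensors differ in sign convention). Stacking many static detections gives a linear least-squares problem for v, and detections that disagree with the prediction are candidate moving targets. This sketch assumes clean, unambiguous Doppler values and omits the paper's handling of Doppler ambiguity, outlier rejection, and notch filtering.

```python
import math


def unit_direction(azimuth, elevation):
    """Line-of-sight unit vector for angles given in radians."""
    return (math.cos(elevation) * math.cos(azimuth),
            math.cos(elevation) * math.sin(azimuth),
            math.sin(elevation))


def _det3(m):
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def estimate_ego_velocity(detections):
    """Least-squares ego-velocity from (azimuth, elevation, doppler) of static points.

    Assumed model: doppler = -u . v. Solves the normal equations
    (U^T U) v = -U^T d with Cramer's rule.
    """
    ata = [[0.0] * 3 for _ in range(3)]
    atb = [0.0] * 3
    for az, el, dop in detections:
        u = unit_direction(az, el)
        for i in range(3):
            atb[i] -= u[i] * dop
            for j in range(3):
                ata[i][j] += u[i] * u[j]
    det = _det3(ata)
    v = []
    for col in range(3):
        m = [row[:] for row in ata]
        for i in range(3):
            m[i][col] = atb[i]  # replace one column by the right-hand side
        v.append(_det3(m) / det)
    return v


def is_static(az, el, dop, v, tol=0.2):
    """Treat a detection as background if its Doppler matches the ego-motion prediction."""
    u = unit_direction(az, el)
    predicted = -sum(ui * vi for ui, vi in zip(u, v))
    return abs(dop - predicted) <= tol


# Synthetic static scene seen from a radar moving at (10, 0.5, 0) m/s.
v_true = (10.0, 0.5, 0.0)
detections = []
for az in (-0.6, -0.3, 0.0, 0.3, 0.6):
    for el in (-0.1, 0.0, 0.1):
        u = unit_direction(az, el)
        detections.append((az, el, -sum(a * b for a, b in zip(u, v_true))))
v_est = estimate_ego_velocity(detections)
```

The elevation spread in the detections is what makes the vertical velocity component observable; with a single elevation the normal equations would be singular.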
