"Time": models, code, and papers

BASEN: Time-Domain Brain-Assisted Speech Enhancement Network with Convolutional Cross Attention in Multi-talker Conditions

May 17, 2023

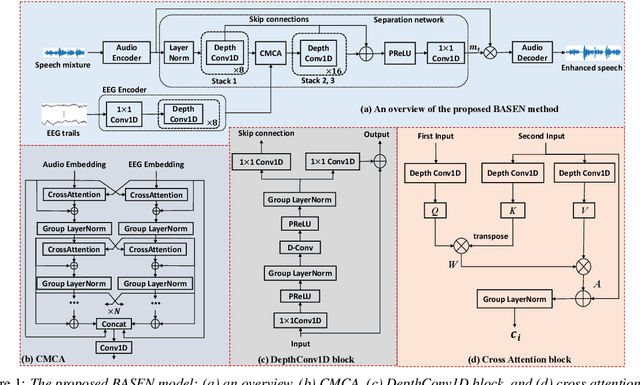

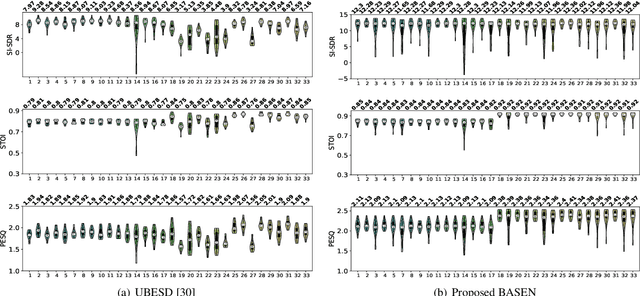

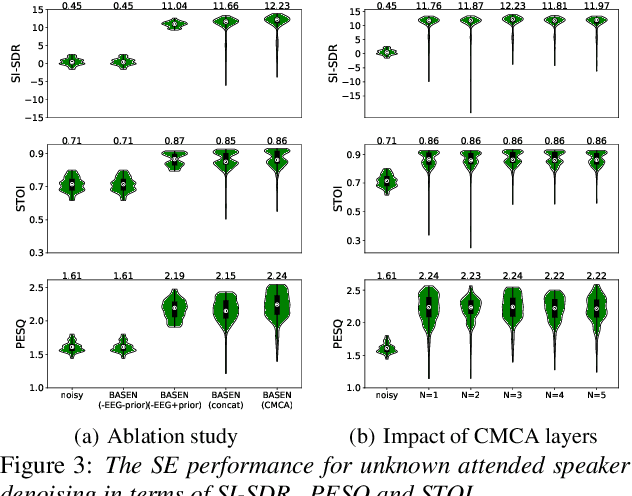

Extracting the target speaker with time-domain single-channel speech enhancement (SE) remains challenging in multi-talker conditions when no prior information is available. It has been shown via auditory attention decoding that the brain activity of the listener contains the auditory information of the attended speaker. In this paper, we thus propose a novel time-domain brain-assisted SE network (BASEN) incorporating electroencephalography (EEG) signals recorded from the listener for extracting the target speaker from monaural speech mixtures. The proposed BASEN is based on the fully-convolutional time-domain audio separation network. In order to fully leverage the complementary information contained in the EEG signals, we further propose a convolutional multi-layer cross attention module to fuse the dual-branch features. Experimental results on a public dataset show that the proposed model outperforms the state-of-the-art method in several evaluation metrics. The reproducible code is available at https://github.com/jzhangU/Basen.git.

Learning Structured Components: Towards Modular and Interpretable Multivariate Time Series Forecasting

May 24, 2023

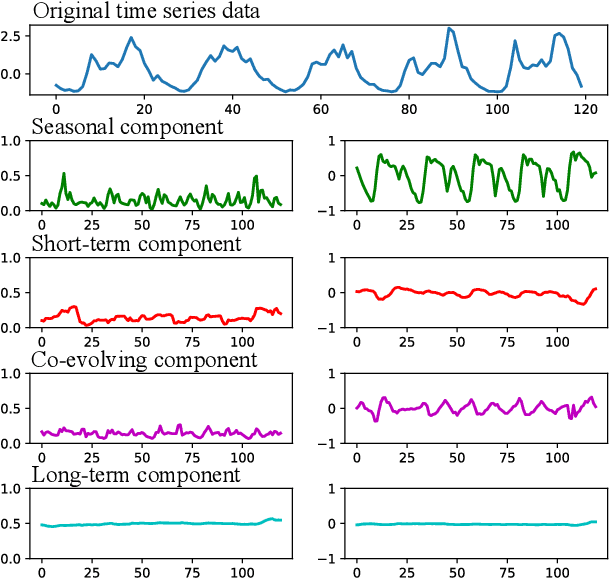

Multivariate time-series (MTS) forecasting is a paramount and fundamental problem in many real-world applications. The core issue in MTS forecasting is how to effectively model complex spatial-temporal patterns. In this paper, we develop a modular and interpretable forecasting framework, which seeks to individually model each component of the spatial-temporal patterns. We name this framework SCNN, short for Structured Component-based Neural Network. SCNN works with a pre-defined generative process of MTS, which arithmetically characterizes the latent structure of the spatial-temporal patterns. In line with its reverse process, SCNN decouples MTS data into structured and heterogeneous components and then extrapolates the evolution of each component separately, the dynamics of which are more traceable and predictable than those of the original MTS. Extensive experiments are conducted to demonstrate that SCNN can achieve superior performance over state-of-the-art models on three real-world datasets. Additionally, we examine SCNN with different configurations and perform in-depth analyses of the properties of SCNN.

Causal Discovery with Stage Variables for Health Time Series

May 05, 2023

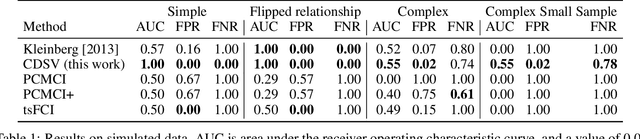

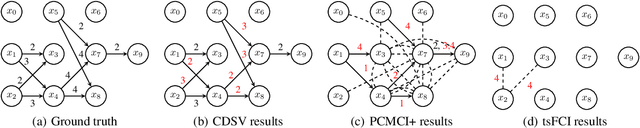

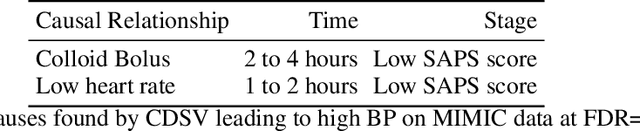

Using observational data to learn causal relationships is essential when randomized experiments are not possible, such as in healthcare. Discovering causal relationships in time-series health data is even more challenging when relationships change over the course of a disease, such as medications that are most effective early on or for individuals with severe disease. Stage variables such as weeks of pregnancy, disease stages, or biomarkers like HbA1c, can influence what causal relationships are true for a patient. However, causal inference within each stage is often not possible due to limited amounts of data, and combining all data risks incorrect or missed inferences. To address this, we propose Causal Discovery with Stage Variables (CDSV), which uses stage variables to reweight data from multiple time-series while accounting for different causal relationships in each stage. In simulated data, CDSV discovers more causes with fewer false discoveries compared to baselines, in eICU it has a lower FDR than baselines, and in MIMIC-III it discovers more clinically relevant causes of high blood pressure.
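The reweighting idea can be illustrated with a minimal sketch. The abstract does not state how CDSV computes its weights, so the Gaussian kernel over stage distance, the `bandwidth` parameter, and the function names below are assumptions made for illustration, not the authors' method.

```python
import math


def stage_weights(stages, target_stage, bandwidth=1.0):
    """Weight each observation by how close its stage is to the target stage.

    Assumption: a Gaussian kernel over stage distance (hypothetical choice).
    """
    if bandwidth <= 0:
        raise ValueError("bandwidth must be positive")
    return [
        math.exp(-((s - target_stage) ** 2) / (2 * bandwidth ** 2))
        for s in stages
    ]


def weighted_mean(values, weights):
    """Weighted average of values; raises if all weights are zero."""
    total = sum(weights)
    if total == 0:
        raise ValueError("weights sum to zero")
    return sum(v * w for v, w in zip(values, weights)) / total


# Made-up observations pooled across time series, each tagged with a stage
# (for example, week of pregnancy).
stages = [10, 11, 12, 30, 31]
outcomes = [1.0, 1.2, 0.8, 5.0, 5.2]

weights = stage_weights(stages, target_stage=11, bandwidth=2.0)
early_estimate = weighted_mean(outcomes, weights)
```

Observations from distant stages still contribute, but with weights close to zero, so the estimate for the target stage is dominated by data from nearby stages rather than computed from a small stage-specific subset alone.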

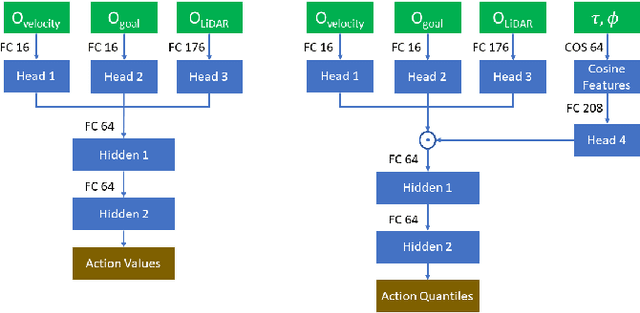

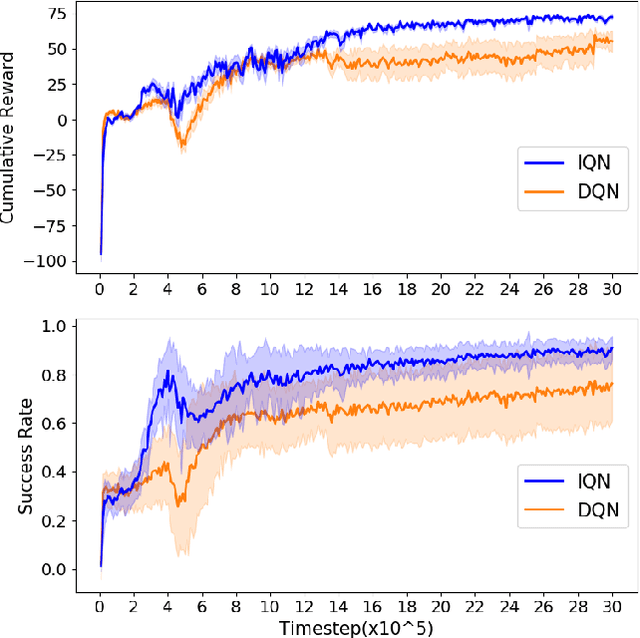

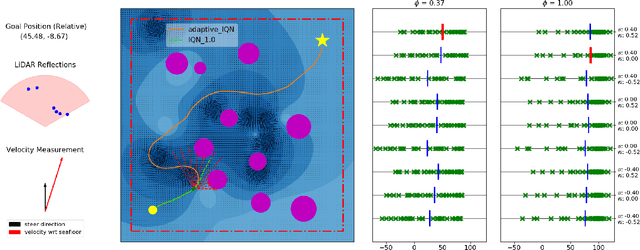

Robust Unmanned Surface Vehicle Navigation with Distributional Reinforcement Learning

Jul 30, 2023

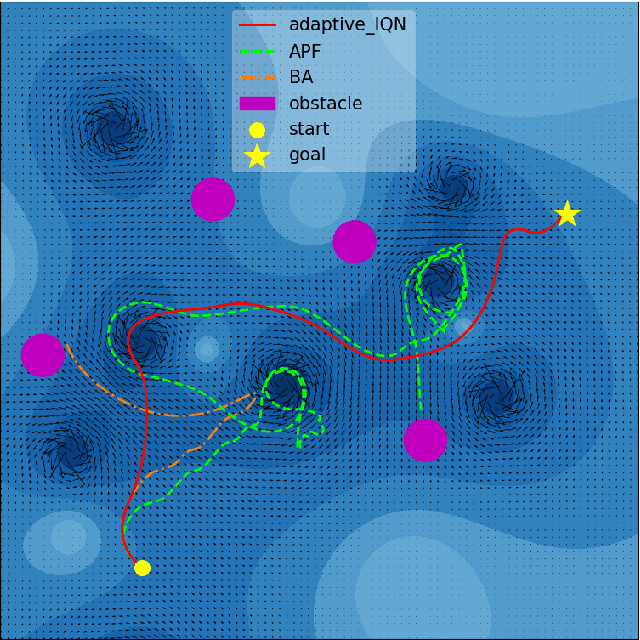

Autonomous navigation of Unmanned Surface Vehicles (USV) in marine environments with current flows is challenging, and few prior works have addressed the sensor-based navigation problem in such environments with no prior knowledge of the current flow and obstacles. We propose a Distributional Reinforcement Learning (RL) based local path planner that learns return distributions which capture the uncertainty of action outcomes, and an adaptive algorithm that automatically tunes the level of sensitivity to the risk in the environment. The proposed planner achieves a more stable learning performance and converges to safer policies than a traditional RL based planner. Computational experiments demonstrate that, compared to a traditional RL based planner and classical local planning methods such as Artificial Potential Fields and the Bug Algorithm, the proposed planner is robust against environmental flows and is able to plan trajectories that are superior in safety, time and energy consumption.
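A minimal sketch of how a learned return distribution can drive risk-sensitive action selection. The abstract does not name the risk measure, so the use of Conditional Value at Risk (CVaR) over per-action quantile estimates, the `alpha` parameter, and the function names are assumptions for illustration.

```python
import math


def cvar(quantiles, alpha):
    """Mean of the lowest ceil(alpha * n) quantile values, alpha in (0, 1].

    alpha = 1.0 gives the plain expected return (risk-neutral);
    smaller alpha focuses on the worst outcomes (risk-averse).
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    ordered = sorted(quantiles)
    k = max(1, math.ceil(alpha * len(ordered)))
    return sum(ordered[:k]) / k


def select_action(quantiles_per_action, alpha):
    """Return the index of the action with the highest CVaR at level alpha."""
    scores = [cvar(q, alpha) for q in quantiles_per_action]
    return max(range(len(scores)), key=scores.__getitem__)


# Made-up quantile estimates of the return for two actions.
risky = [-10.0, 4.0, 5.0, 6.0]   # higher mean, but a bad tail outcome
safe = [0.0, 1.0, 1.0, 1.0]      # lower mean, no bad tail

risk_neutral_choice = select_action([risky, safe], alpha=1.0)
risk_averse_choice = select_action([risky, safe], alpha=0.25)
```

With these numbers the risk-neutral setting picks the first action and the risk-averse setting picks the second. An adaptive scheme of the kind the abstract describes would adjust the sensitivity level (here `alpha`) from observations of the environment instead of fixing it by hand.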

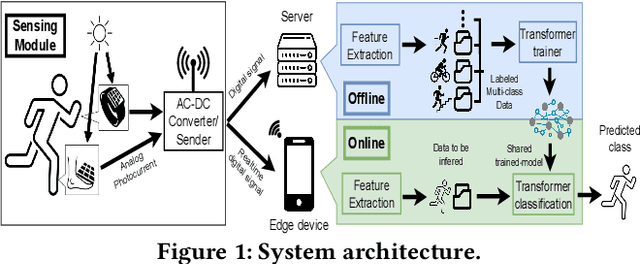

Eco-Friendly Sensing for Human Activity Recognition

Jul 30, 2023

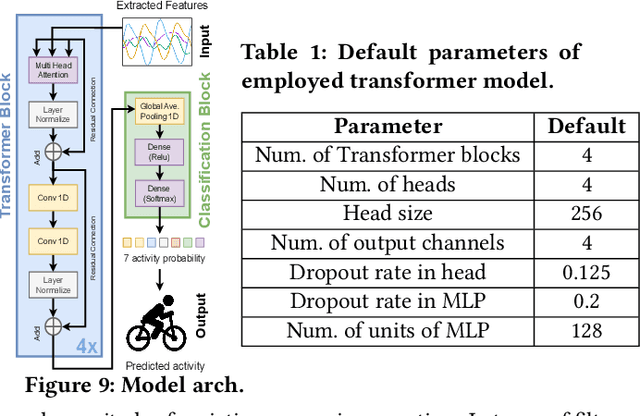

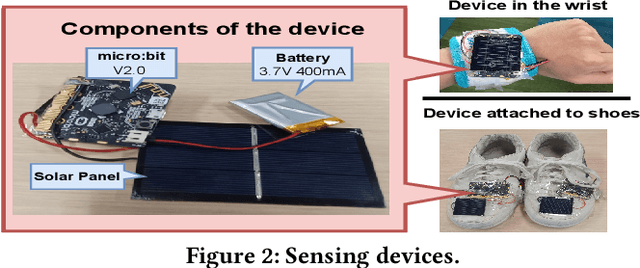

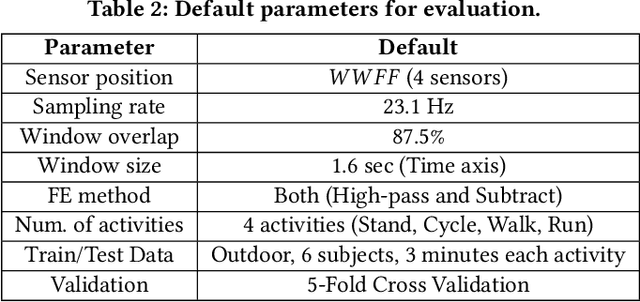

With the increasing number of IoT devices, there is a growing demand for energy-free sensors. Human activity recognition holds immense value in numerous daily healthcare applications. However, the majority of current sensing modalities consume energy, thus limiting their sustainable adoption. In this paper, we present a novel activity recognition system that not only operates without requiring energy for sensing but also harvests energy. Our proposed system utilizes photovoltaic cells, attached to the wrist and shoes, as eco-friendly sensing devices for activity recognition. By capturing photovoltaic readings and employing a deep transformer model with powerful learning capabilities, the system effectively recognizes user activities. To ensure robust performance across various subjects, time periods, and lighting conditions, the system incorporates feature extraction and different processing modules. The evaluation of the proposed system on realistic indoor and outdoor environments demonstrated its ability to recognize activities with an accuracy of 91.7%.

Efficient Q-Learning over Visit Frequency Maps for Multi-agent Exploration of Unknown Environments

Jul 30, 2023

The robot exploration task has been widely studied with applications spanning from novel environment mapping to item delivery. For some time-critical tasks, such as rescue in catastrophes, the agent is required to explore as efficiently as possible. Recently, Visit Frequency-based map representation achieved great success in such scenarios by discouraging repetitive visits with a frequency-based penalty. However, its relatively large size and single-agent settings hinder its further development. In this context, we propose Integrated Visit Frequency Map, which encodes identical information as Visit Frequency Map with a more compact size, and a visit frequency-based multi-agent information exchange and control scheme that is able to accommodate both representations. Through tests in diverse settings, the results indicate our proposed methods can achieve performance comparable to VFM with lower bandwidth requirements and generalize well to different multi-agent setups including real-world environments.
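The frequency-based penalty can be sketched as a grid of visit counts. This is a simplified illustration, not the paper's Integrated Visit Frequency Map: the class name, the linear penalty, and the element-wise sum used to merge maps between agents are all assumptions.

```python
class VisitFrequencyMap:
    """Grid of visit counts used to discourage revisiting cells."""

    def __init__(self, rows, cols):
        self.counts = [[0] * cols for _ in range(rows)]

    def visit(self, row, col):
        """Record one visit to a cell."""
        self.counts[row][col] += 1

    def reward(self, row, col, base_reward=1.0, penalty=0.5):
        """Reward for entering a cell, reduced linearly by its visit count."""
        return base_reward - penalty * self.counts[row][col]

    def merge(self, other):
        """Add another agent's counts (assumed exchange rule: element-wise sum).

        Both maps are assumed to have the same shape.
        """
        for r, other_row in enumerate(other.counts):
            for c, n in enumerate(other_row):
                self.counts[r][c] += n


# Two agents exploring the same 2x2 grid.
agent_a = VisitFrequencyMap(2, 2)
agent_a.visit(0, 0)
agent_a.visit(0, 0)

agent_b = VisitFrequencyMap(2, 2)
agent_b.visit(0, 1)

agent_a.merge(agent_b)  # agent_a now also avoids cells that agent_b has seen
```

Exchanging the full grid is what makes the representation bandwidth-heavy in multi-agent settings, which is the cost a more compact encoding aims to reduce.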

Adaptive Modeling of Satellite-Derived Nighttime Lights Time-Series for Tracking Urban Change Processes Using Machine Learning

Jun 14, 2023

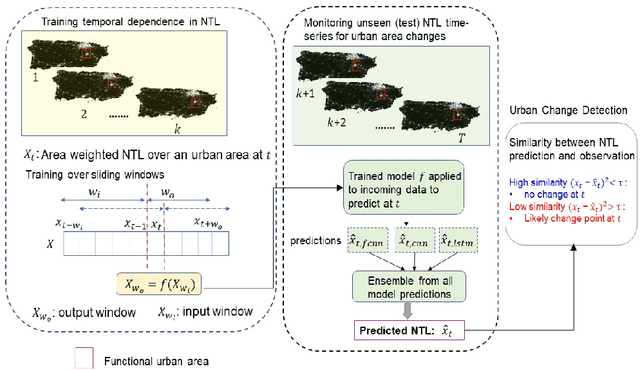

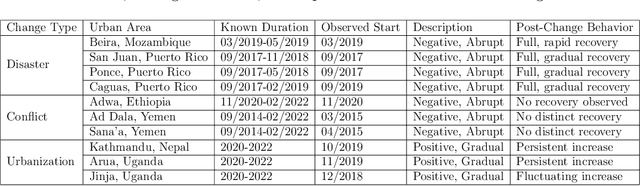

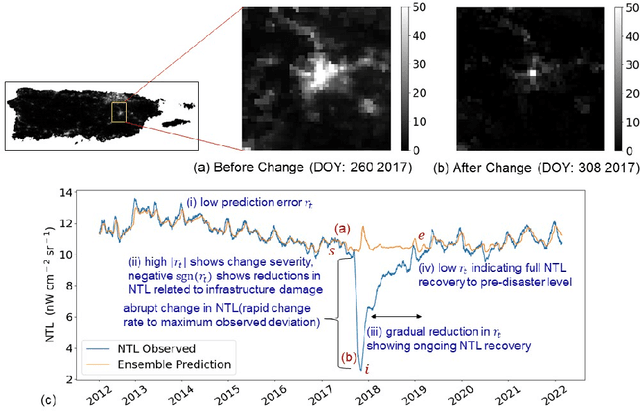

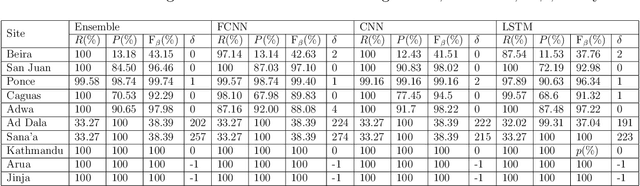

Remotely sensed nighttime lights (NTL) uniquely capture urban change processes that are important to human and ecological well-being, such as urbanization, socio-political conflicts and displacement, impacts from disasters, holidays, and changes in daily human patterns of movement. Though several NTL products are global in extent, intrinsic city-specific factors that affect lighting, such as development levels, and social, economic, and cultural characteristics, are unique to each city, making the urban processes embedded in NTL signatures difficult to characterize, and limiting the scalability of urban change analyses. In this study, we propose a data-driven approach to detect urban changes from daily satellite-derived NTL data records that is adaptive across cities and effective at learning city-specific temporal patterns. The proposed method learns to forecast NTL signatures from past data records using neural networks and allows the use of large volumes of unlabeled data, eliminating annotation effort. Urban changes are detected based on deviations of observed NTL from model forecasts using an anomaly detection approach. Comparing model forecasts with observed NTL also allows identifying the direction of change (positive or negative) and monitoring change severity for tracking recovery. In operationalizing the model, we consider ten urban areas from diverse geographic regions with dynamic NTL time-series and demonstrate the generalizability of the approach for detecting the change processes with different drivers and rates occurring within these urban areas based on NTL deviation. This scalable approach for monitoring changes from daily remote sensing observations efficiently utilizes large data volumes to support continuous monitoring and decision making.
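The detection step, flagging deviations of observed NTL from model forecasts, can be sketched as follows. The forecasting network is out of scope here; the z-score rule, the `threshold` value, and the function name are assumptions for illustration and are not taken from the paper.

```python
from statistics import mean, stdev


def detect_changes(observed, forecast, baseline_residuals, threshold=3.0):
    """Flag time steps where observed values deviate strongly from forecasts.

    baseline_residuals: (observed - forecast) values from a period assumed
    to contain no change, used to estimate normal forecast error.
    Returns a list of (direction, severity) tuples, one per time step.
    """
    mu = mean(baseline_residuals)
    sigma = stdev(baseline_residuals)
    if sigma == 0:
        raise ValueError("baseline residuals have zero variance")

    results = []
    for obs, pred in zip(observed, forecast):
        z = ((obs - pred) - mu) / sigma
        if z > threshold:
            direction = "positive"   # brighter than expected
        elif z < -threshold:
            direction = "negative"   # darker than expected
        else:
            direction = "none"
        results.append((direction, abs(z)))
    return results


# Made-up radiance values for three days.
baseline = [-1.0, 0.0, 1.0, 0.0, -1.0, 1.0]
flags = detect_changes(
    observed=[10.0, 10.0, 2.0],
    forecast=[10.0, 5.0, 10.0],
    baseline_residuals=baseline,
)
```

The sign of the deviation gives the direction of change, and tracking the severity value over successive days gives a simple way to monitor recovery after an event.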

Real-time Evolution of Multicellularity with Artificial Gene Regulation

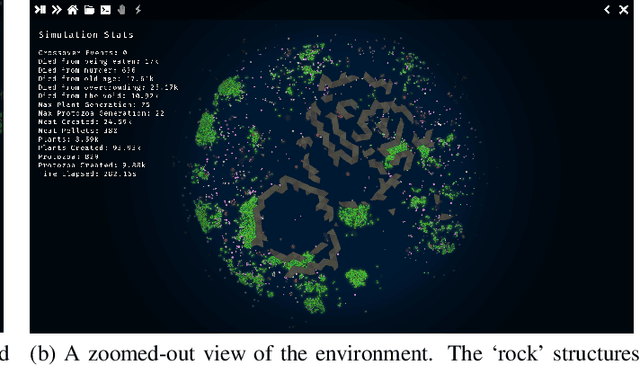

May 20, 2023

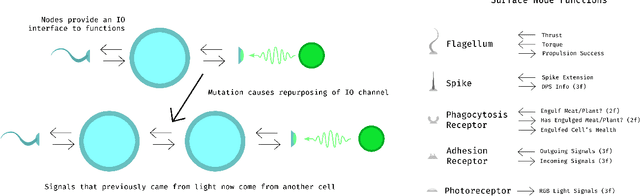

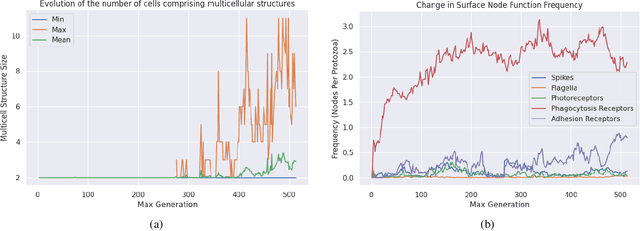

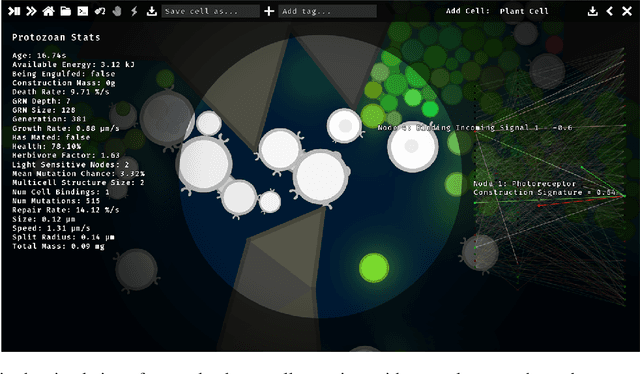

This paper presents a real-time simulation involving "protozoan-like" cells that evolve by natural selection in a physical 2D ecosystem. Selection pressure is exerted via the requirements to collect mass and energy from the surroundings in order to reproduce by cell-division. Cells do not have fixed morphologies from birth; they can use their resources in construction projects that produce functional nodes on their surfaces such as photoreceptors for light sensitivity or flagella for motility. Importantly, these nodes act as modular components that connect to the cell's control system via IO channels, meaning that the evolutionary process can replace one function with another while utilising pre-developed control pathways on the other side of the channel. A notable type of node function is the adhesion receptor, which allows cells to bind together into multicellular structures in which individuals can share resources and signal to one another. The control system itself is modelled as an artificial neural network that doubles as a gene regulatory network, thereby permitting the co-evolution of form and function in a single data structure and allowing cell specialisation within multicellular groups.

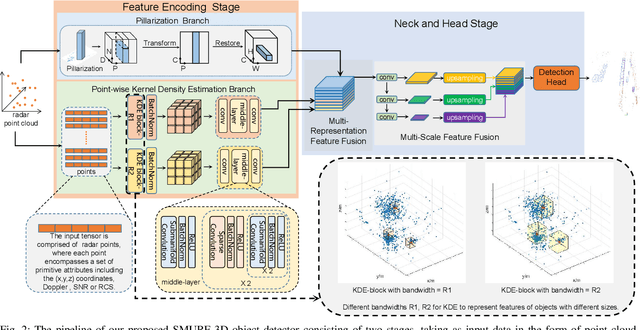

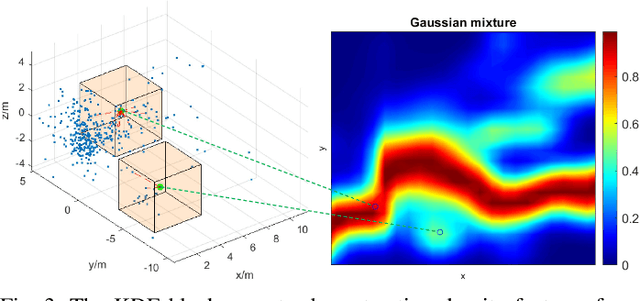

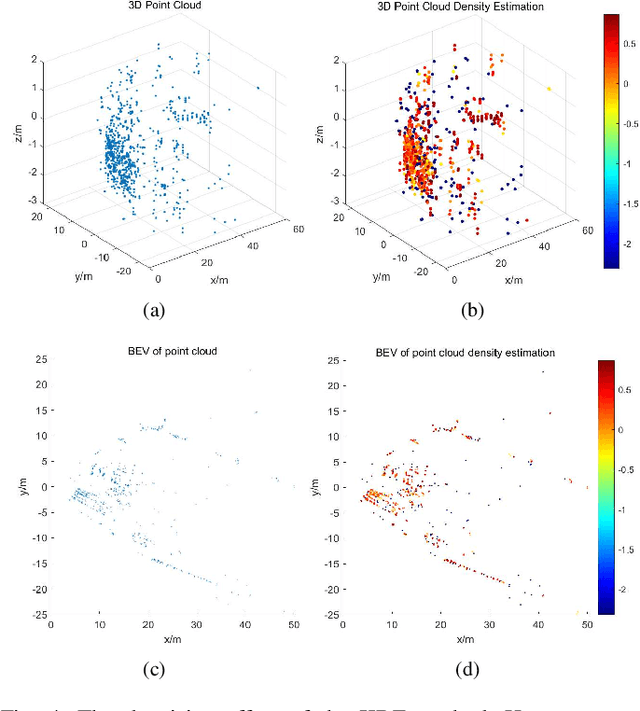

SMURF: Spatial Multi-Representation Fusion for 3D Object Detection with 4D Imaging Radar

Jul 20, 2023

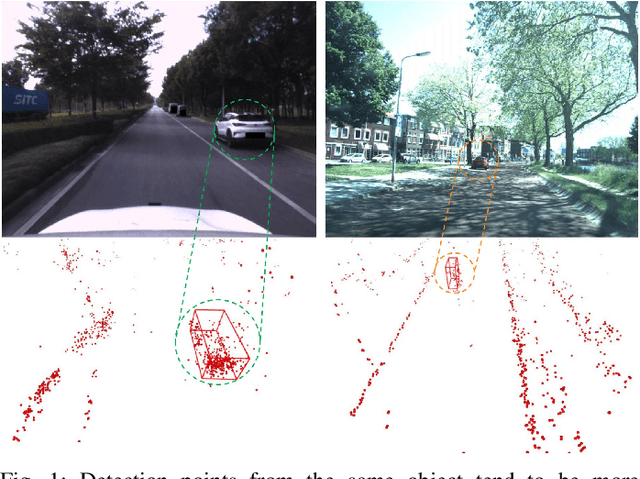

The 4D Millimeter wave (mmWave) radar is a promising technology for vehicle sensing due to its cost-effectiveness and operability in adverse weather conditions. However, the adoption of this technology has been hindered by sparsity and noise issues in radar point cloud data. This paper introduces spatial multi-representation fusion (SMURF), a novel approach to 3D object detection using a single 4D imaging radar. SMURF leverages multiple representations of radar detection points, including pillarization and density features of a multi-dimensional Gaussian mixture distribution through kernel density estimation (KDE). KDE effectively mitigates measurement inaccuracy caused by limited angular resolution and multi-path propagation of radar signals. Additionally, KDE helps alleviate point cloud sparsity by capturing density features. Experimental evaluations on the View-of-Delft (VoD) and TJ4DRadSet datasets demonstrate the effectiveness and generalization ability of SMURF, outperforming recently proposed 4D imaging radar-based single-representation models. Moreover, while using 4D imaging radar only, SMURF still achieves comparable performance to the state-of-the-art 4D imaging radar and camera fusion-based method, with an increase of 1.22% in the mean average precision on the bird's-eye view of the TJ4DRadSet dataset and 1.32% in the 3D mean average precision on the entire annotated area of the VoD dataset. Our proposed method demonstrates impressive inference time and addresses the challenges of real-time detection, with an inference time of no more than 0.05 seconds for most scans on both datasets. This research highlights the benefits of 4D mmWave radar and is a strong benchmark for subsequent works regarding 3D object detection with 4D imaging radar.
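A minimal sketch of a per-point density feature computed with Gaussian kernel density estimation. It illustrates KDE in general, not SMURF's actual feature pipeline: the isotropic kernel, the `bandwidth` value, and the function name are assumptions.

```python
import math


def kde_density(points, bandwidth):
    """Gaussian KDE evaluated at each point of a point cloud.

    points: list of equal-length coordinate tuples, e.g. (x, y, z).
    Returns one density value per point; isolated points get low values.
    """
    if bandwidth <= 0:
        raise ValueError("bandwidth must be positive")
    dim = len(points[0])
    # Normalising constant of an isotropic Gaussian in `dim` dimensions.
    norm = (2 * math.pi * bandwidth ** 2) ** (-dim / 2)

    densities = []
    for p in points:
        total = 0.0
        for q in points:
            sq_dist = sum((a - b) ** 2 for a, b in zip(p, q))
            total += math.exp(-sq_dist / (2 * bandwidth ** 2))
        densities.append(norm * total / len(points))
    return densities


# Three clustered detections and one isolated detection (made-up coordinates).
cloud = [(0.0, 0.0, 0.0), (0.1, 0.0, 0.0), (0.0, 0.1, 0.0), (5.0, 5.0, 5.0)]
density = kde_density(cloud, bandwidth=0.5)
```

The clustered points receive a higher density than the isolated one, which is the kind of signal that can help separate consistent returns from sparse or spurious detections. This double loop is quadratic in the number of points and is meant only to show the computation.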

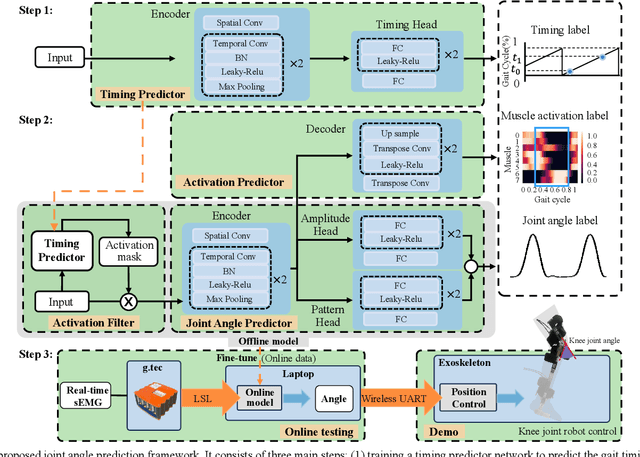

Gait Cycle-Inspired Learning Strategy for Continuous Prediction of Knee Joint Trajectory from sEMG

Jul 25, 2023

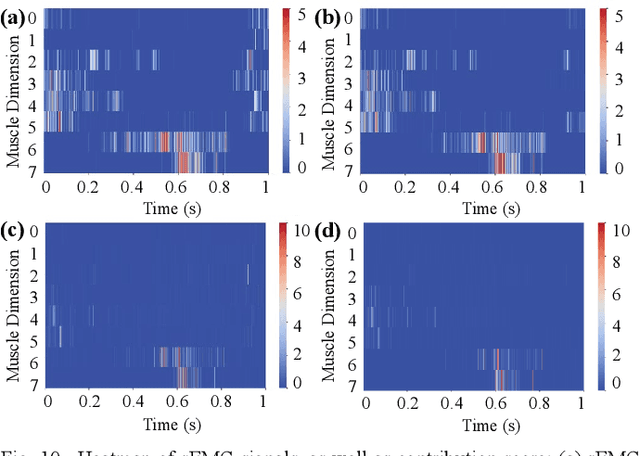

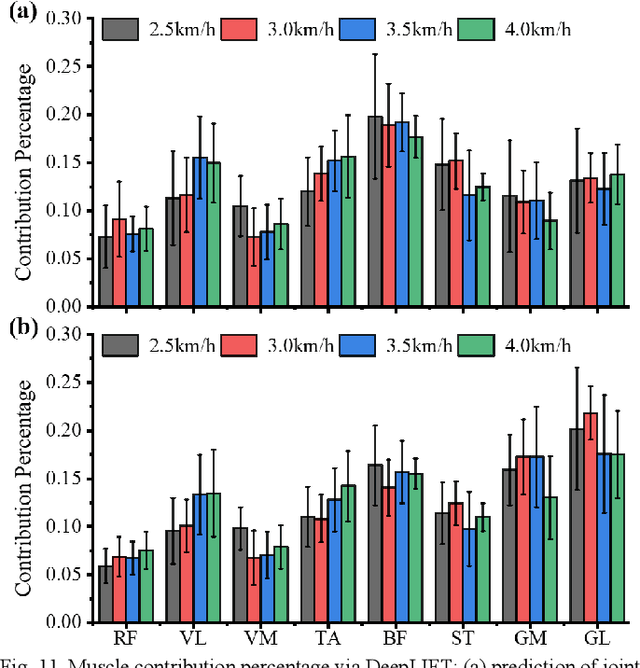

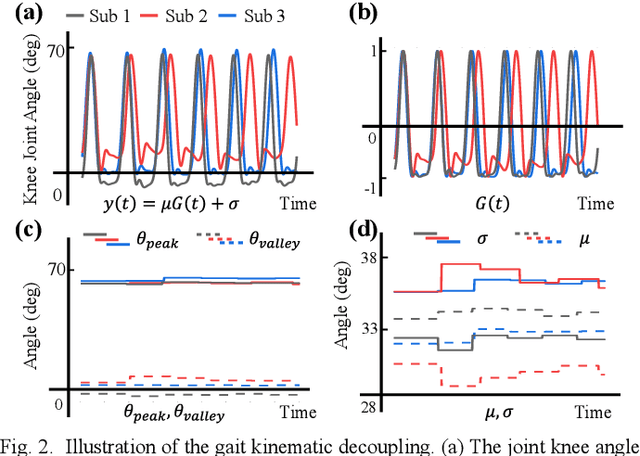

Predicting lower limb motion intent is vital for controlling exoskeleton robots and prosthetic limbs. Surface electromyography (sEMG) has attracted increasing attention in recent years as it enables ahead-of-time prediction of motion intentions before actual movement. However, estimating human joint trajectories remains challenging due to inter- and intra-subject variations. The former is related to physiological differences (such as height and weight) and preferred walking patterns of individuals, while the latter is mainly caused by irregular and gait-irrelevant muscle activity. This paper proposes a model integrating two gait cycle-inspired learning strategies to mitigate the challenge of predicting human knee joint trajectory. The first strategy is to decouple knee joint angles into motion patterns and amplitudes: the former exhibit low variability while the latter show high variability among individuals. By learning through separate network entities, the model manages to capture both the common and personalized gait features. In the second, muscle principal activation masks are extracted from gait cycles in a prolonged walk. These masks are used to filter out components unrelated to walking from raw sEMG and provide auxiliary guidance to capture more gait-related features. Experimental results indicate that our model can predict knee angles with an average root mean square error (RMSE) of 3.03(0.49) degrees, 50 ms ahead of time. To our knowledge, this is the best performance reported in the relevant literature, reducing RMSE by at least 9.5%.
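The pattern/amplitude decoupling and the RMSE metric can be sketched as follows. The abstract does not define how the decoupling is computed, so the min-max normalisation and the function names below are hypothetical; only the RMSE formula is standard.

```python
import math


def decouple(angles):
    """Split a knee-angle trajectory into a normalised pattern and an amplitude.

    Assumption: min-max normalisation (hypothetical choice for illustration).
    Returns (pattern in [0, 1], amplitude, offset).
    """
    offset = min(angles)
    amplitude = max(angles) - offset
    if amplitude == 0:
        raise ValueError("trajectory is constant; pattern is undefined")
    pattern = [(a - offset) / amplitude for a in angles]
    return pattern, amplitude, offset


def recompose(pattern, amplitude, offset):
    """Rebuild the angle trajectory from its pattern, amplitude and offset."""
    return [offset + amplitude * p for p in pattern]


def rmse(predicted, actual):
    """Root mean square error between two equal-length sequences."""
    if len(predicted) != len(actual):
        raise ValueError("sequences must have the same length")
    squared = [(p - a) ** 2 for p, a in zip(predicted, actual)]
    return math.sqrt(sum(squared) / len(squared))


# Made-up knee angles in degrees.
pattern, amplitude, offset = decouple([10.0, 30.0, 50.0])
rebuilt = recompose(pattern, amplitude, offset)
error = rmse([1.0, 2.0, 3.0], [1.0, 2.0, 5.0])
```

Under this normalisation, two subjects with the same gait shape but different ranges of motion share one pattern and differ only in amplitude and offset, which matches the motivation for learning the two parts with separate networks.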
