"Time": models, code, and papers

HMD-NeMo: Online 3D Avatar Motion Generation From Sparse Observations

Aug 22, 2023

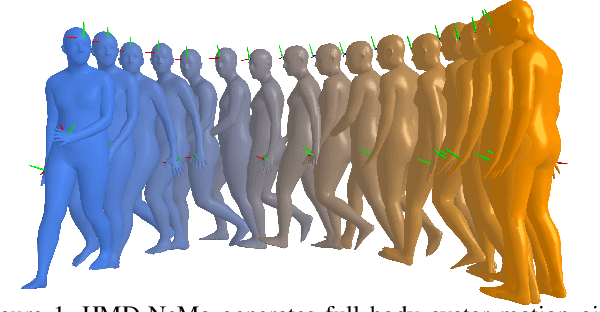

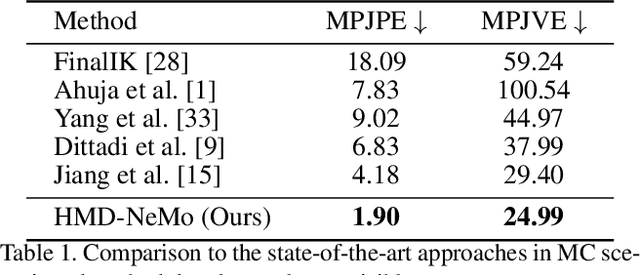

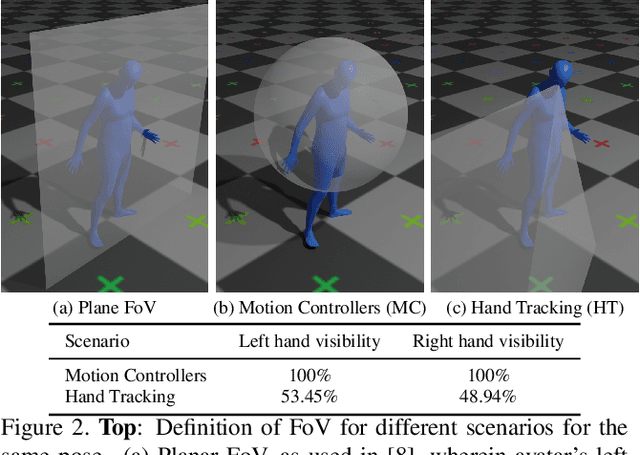

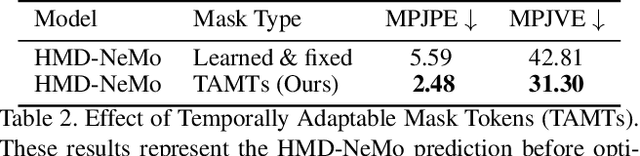

Generating both plausible and accurate full body avatar motion is the key to the quality of immersive experiences in mixed reality scenarios. Head-Mounted Devices (HMDs) typically only provide a few input signals, such as head and hands 6-DoF. Recently, different approaches achieved impressive performance in generating full body motion given only head and hands signal. However, to the best of our knowledge, all existing approaches rely on full hand visibility. While this is the case when, e.g., using motion controllers, a considerable proportion of mixed reality experiences do not involve motion controllers and instead rely on egocentric hand tracking. This introduces the challenge of partial hand visibility owing to the restricted field of view of the HMD. In this paper, we propose the first unified approach, HMD-NeMo, that addresses plausible and accurate full body motion generation even when the hands may be only partially visible. HMD-NeMo is a lightweight neural network that predicts the full body motion in an online and real-time fashion. At the heart of HMD-NeMo is the spatio-temporal encoder with novel temporally adaptable mask tokens that encourage plausible motion in the absence of hand observations. We perform extensive analysis of the impact of different components in HMD-NeMo and introduce a new state-of-the-art on AMASS dataset through our evaluation.

Pose2Gait: Extracting Gait Features from Monocular Video of Individuals with Dementia

Aug 22, 2023

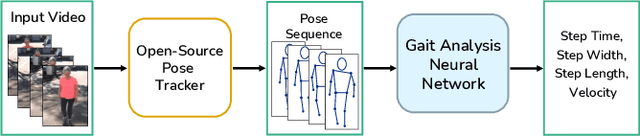

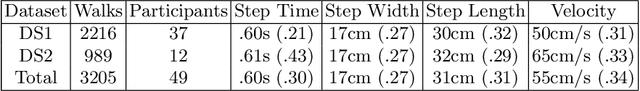

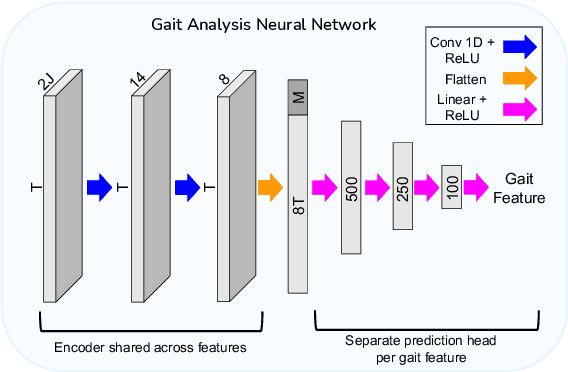

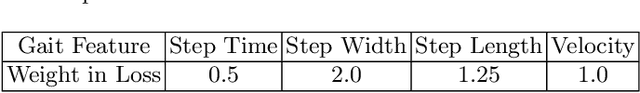

Video-based ambient monitoring of gait for older adults with dementia has the potential to detect negative changes in health and allow clinicians and caregivers to intervene early to prevent falls or hospitalizations. Computer vision-based pose tracking models can process video data automatically and extract joint locations; however, publicly available models are not optimized for gait analysis on older adults or clinical populations. In this work we train a deep neural network to map from a two dimensional pose sequence, extracted from a video of an individual walking down a hallway toward a wall-mounted camera, to a set of three-dimensional spatiotemporal gait features averaged over the walking sequence. The data of individuals with dementia used in this work was captured at two sites using a wall-mounted system to collect the video and depth information used to train and evaluate our model. Our Pose2Gait model is able to extract velocity and step length values from the video that are correlated with the features from the depth camera, with Spearman's correlation coefficients of .83 and .60 respectively, showing that three dimensional spatiotemporal features can be predicted from monocular video. Future work remains to improve the accuracy of other features, such as step time and step width, and test the utility of the predicted values for detecting meaningful changes in gait during longitudinal ambient monitoring.

CNN based Cuneiform Sign Detection Learned from Annotated 3D Renderings and Mapped Photographs with Illumination Augmentation

Aug 22, 2023

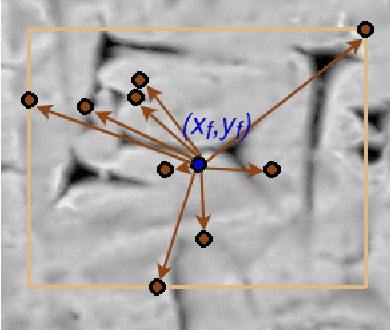

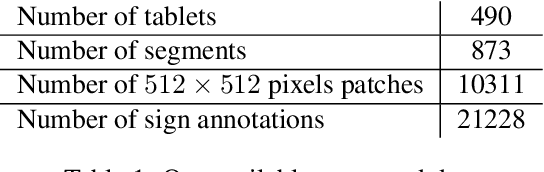

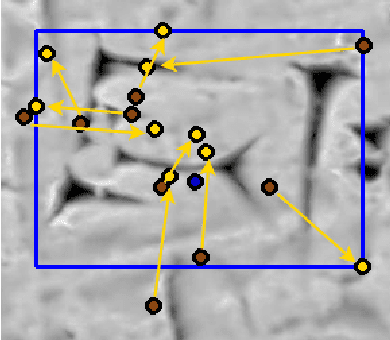

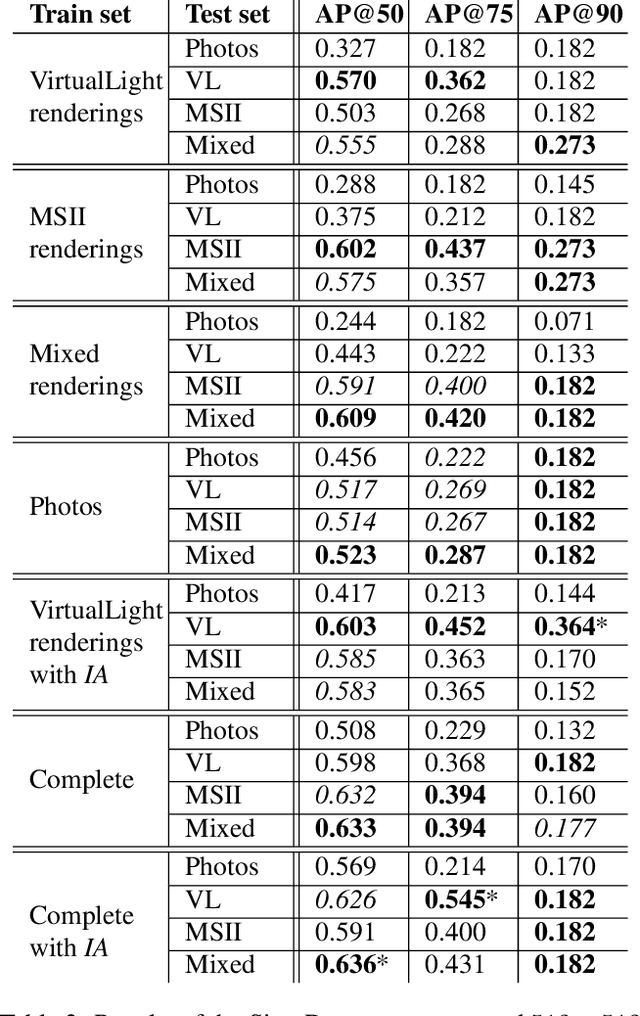

Motivated by the challenges of the Digital Ancient Near Eastern Studies (DANES) community, we develop digital tools for processing cuneiform script being a 3D script imprinted into clay tablets used for more than three millennia and at least eight major languages. It consists of thousands of characters that have changed over time and space. Photographs are the most common representations usable for machine learning, while ink drawings are prone to interpretation. Best suited 3D datasets that are becoming available. We created and used the HeiCuBeDa and MaiCuBeDa datasets, which consist of around 500 annotated tablets. For our novel OCR-like approach to mixed image data, we provide an additional mapping tool for transferring annotations between 3D renderings and photographs. Our sign localization uses a RepPoints detector to predict the locations of characters as bounding boxes. We use image data from GigaMesh's MSII (curvature, see https://gigamesh.eu) based rendering, Phong-shaded 3D models, and photographs as well as illumination augmentation. The results show that using rendered 3D images for sign detection performs better than other work on photographs. In addition, our approach gives reasonably good results for photographs only, while it is best used for mixed datasets. More importantly, the Phong renderings, and especially the MSII renderings, improve the results on photographs, which is the largest dataset on a global scale.

Exemplar-Free Continual Transformer with Convolutions

Aug 22, 2023

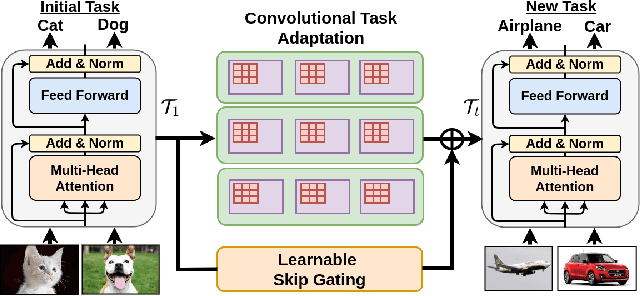

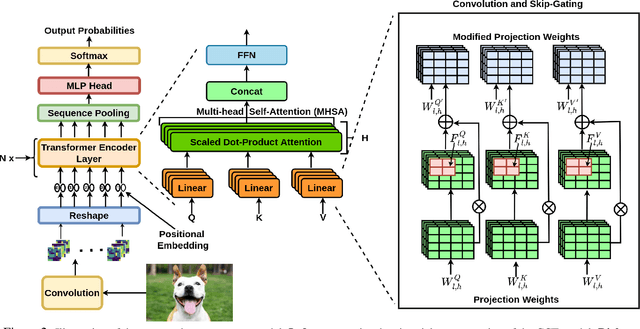

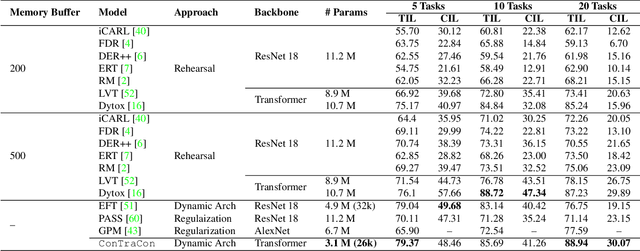

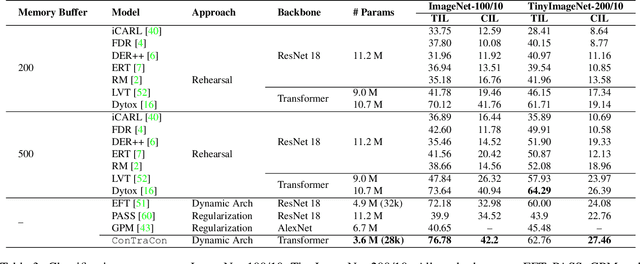

Continual Learning (CL) involves training a machine learning model in a sequential manner to learn new information while retaining previously learned tasks without the presence of previous training data. Although there has been significant interest in CL, most recent CL approaches in computer vision have focused on convolutional architectures only. However, with the recent success of vision transformers, there is a need to explore their potential for CL. Although there have been some recent CL approaches for vision transformers, they either store training instances of previous tasks or require a task identifier during test time, which can be limiting. This paper proposes a new exemplar-free approach for class/task incremental learning called ConTraCon, which does not require task-id to be explicitly present during inference and avoids the need for storing previous training instances. The proposed approach leverages the transformer architecture and involves re-weighting the key, query, and value weights of the multi-head self-attention layers of a transformer trained on a similar task. The re-weighting is done using convolution, which enables the approach to maintain low parameter requirements per task. Additionally, an image augmentation-based entropic task identification approach is used to predict tasks without requiring task-ids during inference. Experiments on four benchmark datasets demonstrate that the proposed approach outperforms several competitive approaches while requiring fewer parameters.

Graph Neural Network-Enhanced Expectation Propagation Algorithm for MIMO Turbo Receivers

Aug 22, 2023

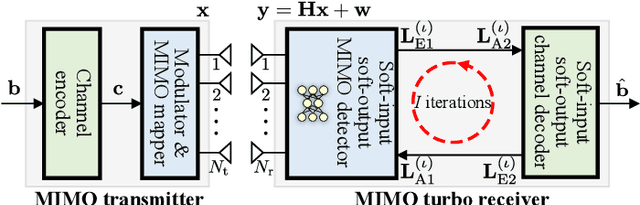

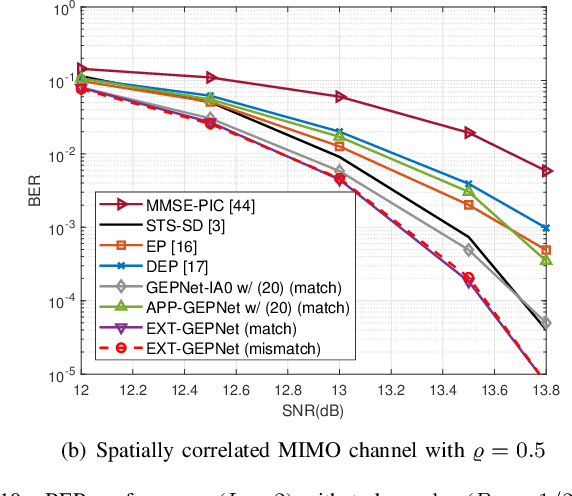

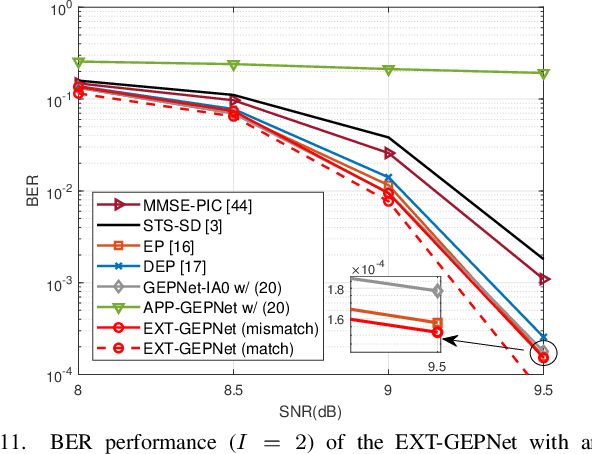

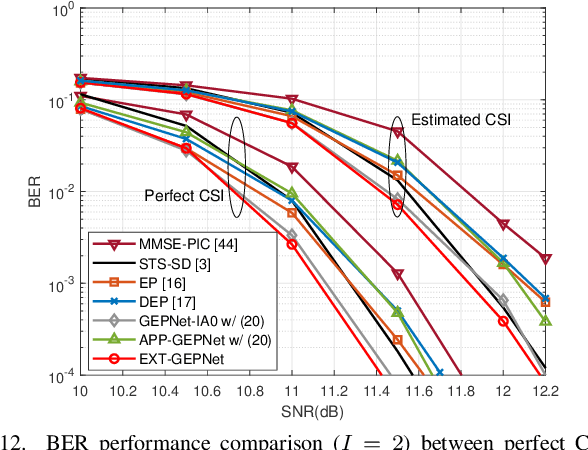

Deep neural networks (NNs) are considered a powerful tool for balancing the performance and complexity of multiple-input multiple-output (MIMO) receivers due to their accurate feature extraction, high parallelism, and excellent inference ability. Graph NNs (GNNs) have recently demonstrated outstanding capability in learning enhanced message passing rules and have shown success in overcoming the drawback of inaccurate Gaussian approximation of expectation propagation (EP)-based MIMO detectors. However, the application of the GNN-enhanced EP detector to MIMO turbo receivers is underexplored and non-trivial due to the requirement of extrinsic information for iterative processing. This paper proposes a GNN-enhanced EP algorithm for MIMO turbo receivers, which realizes the turbo principle of generating extrinsic information from the MIMO detector through a specially designed training procedure. Additionally, an edge pruning strategy is designed to eliminate redundant connections in the original fully connected model of the GNN utilizing the correlation information inherently from the EP algorithm. Edge pruning reduces the computational cost dramatically and enables the network to focus more attention on the weights that are vital for performance. Simulation results and complexity analysis indicate that the proposed MIMO turbo receiver outperforms the EP turbo approaches by over 1 dB at the bit error rate of $10^{-5}$, exhibits performance equivalent to state-of-the-art receivers with 2.5 times shorter running time, and adapts to various scenarios.

A LiDAR-Inertial SLAM Tightly-Coupled with Dropout-Tolerant GNSS Fusion for Autonomous Mine Service Vehicles

Aug 22, 2023

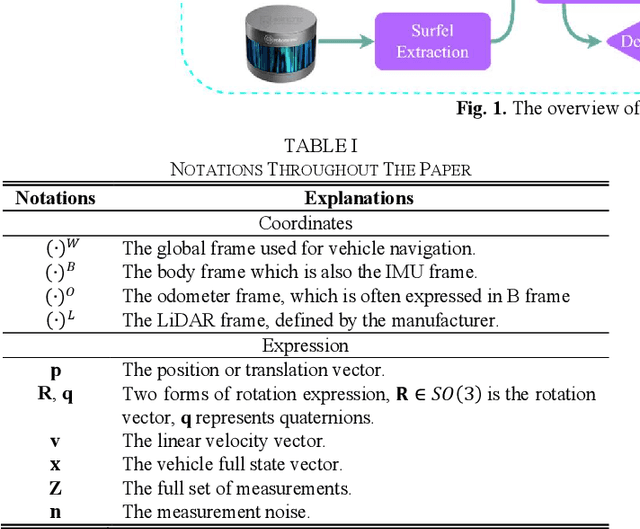

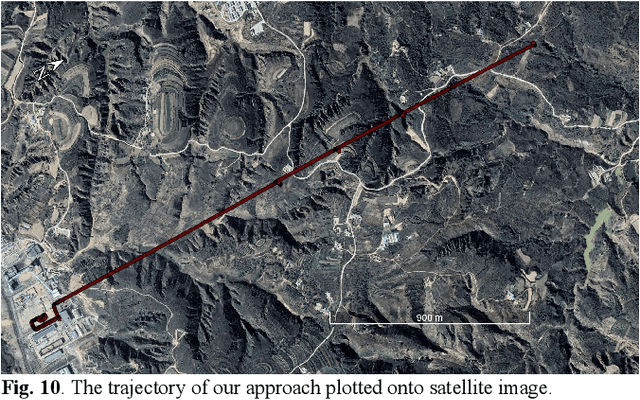

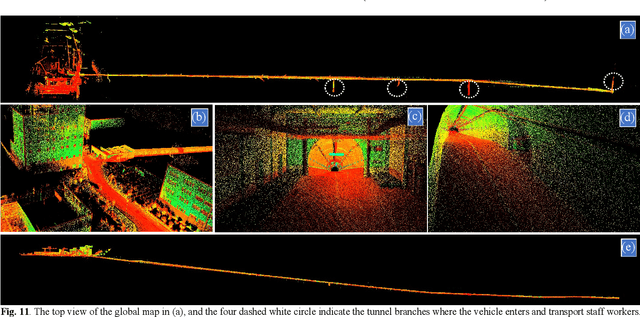

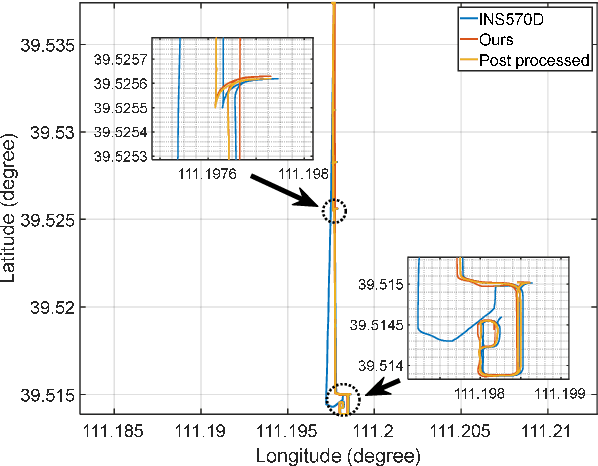

Multi-modal sensor integration has become a crucial prerequisite for the real-world navigation systems. Recent studies have reported successful deployment of such system in many fields. However, it is still challenging for navigation tasks in mine scenes due to satellite signal dropouts, degraded perception, and observation degeneracy. To solve this problem, we propose a LiDAR-inertial odometry method in this paper, utilizing both Kalman filter and graph optimization. The front-end consists of multiple parallel running LiDAR-inertial odometries, where the laser points, IMU, and wheel odometer information are tightly fused in an error-state Kalman filter. Instead of the commonly used feature points, we employ surface elements for registration. The back-end construct a pose graph and jointly optimize the pose estimation results from inertial, LiDAR odometry, and global navigation satellite system (GNSS). Since the vehicle has a long operation time inside the tunnel, the largely accumulated drift may be not fully by the GNSS measurements. We hereby leverage a loop closure based re-initialization process to achieve full alignment. In addition, the system robustness is improved through handling data loss, stream consistency, and estimation error. The experimental results show that our system has a good tolerance to the long-period degeneracy with the cooperation different LiDARs and surfel registration, achieving meter-level accuracy even for tens of minutes running during GNSS dropouts.

Application of Artificial Neural Networks for Investigation of Pressure Filtration Performance, a Zinc Leaching Filter Cake Moisture Modeling

Aug 11, 2023Machine Learning (ML) is a powerful tool for material science applications. Artificial Neural Network (ANN) is a machine learning technique that can provide high prediction accuracy. This study aimed to develop an ANN model to predict the cake moisture of the pressure filtration process of zinc production. The cake moisture was influenced by seven parameters: temperature (35 and 65 Celsius), solid concentration (0.2 and 0.38 g/L), pH (2, 3.5, and 5), air-blow time (2, 10, and 15 min), cake thickness (14, 20, 26, and 34 mm), pressure, and filtration time. The study conducted 288 tests using two types of fabrics: polypropylene (S1) and polyester (S2). The ANN model was evaluated by the Coefficient of determination (R2), the Mean Square Error (MSE), and the Mean Absolute Error (MAE) metrics for both datasets. The results showed R2 values of 0.88 and 0.83, MSE values of 6.243x10-07 and 1.086x10-06, and MAE values of 0.00056 and 0.00088 for S1 and S2, respectively. These results indicated that the ANN model could predict the cake moisture of pressure filtration in the zinc leaching process with high accuracy.

Clustering Method for Time-Series Images Using Quantum-Inspired Computing Technology

May 26, 2023

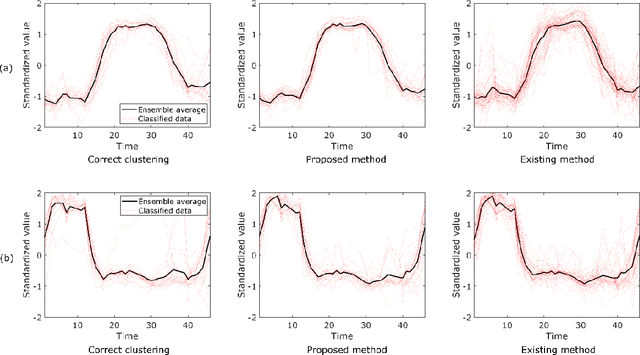

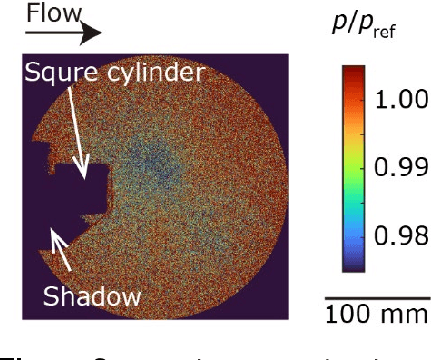

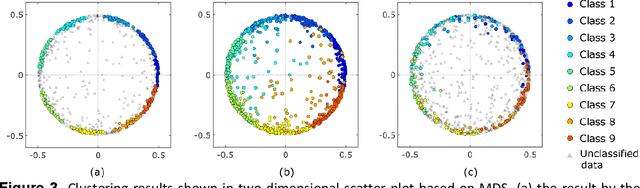

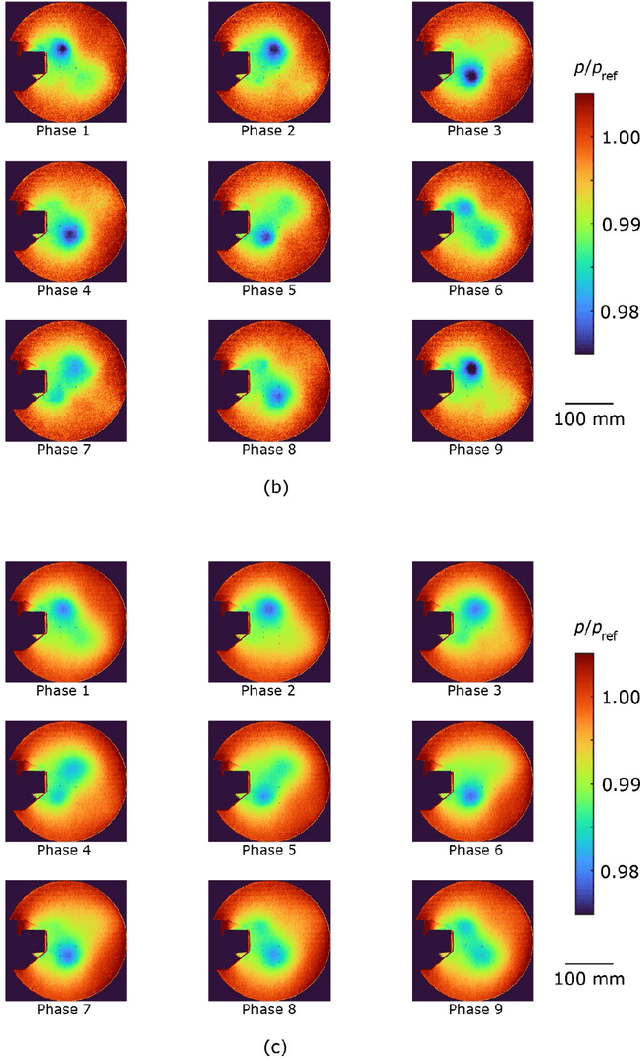

Time-series clustering serves as a powerful data mining technique for time-series data in the absence of prior knowledge about clusters. A large amount of time-series data with large size has been acquired and used in various research fields. Hence, clustering method with low computational cost is required. Given that a quantum-inspired computing technology, such as a simulated annealing machine, surpasses conventional computers in terms of fast and accurately solving combinatorial optimization problems, it holds promise for accomplishing clustering tasks that are challenging to achieve using existing methods. This study proposes a novel time-series clustering method that leverages an annealing machine. The proposed method facilitates an even classification of time-series data into clusters close to each other while maintaining robustness against outliers. Moreover, its applicability extends to time-series images. We compared the proposed method with a standard existing method for clustering an online distributed dataset. In the existing method, the distances between each data are calculated based on the Euclidean distance metric, and the clustering is performed using the k-means++ method. We found that both methods yielded comparable results. Furthermore, the proposed method was applied to a flow measurement image dataset containing noticeable noise with a signal-to-noise ratio of approximately 1. Despite a small signal variation of approximately 2%, the proposed method effectively classified the data without any overlap among the clusters. In contrast, the clustering results by the standard existing method and the conditional image sampling (CIS) method, a specialized technique for flow measurement data, displayed overlapping clusters. Consequently, the proposed method provides better results than the other two methods, demonstrating its potential as a superior clustering method.

Deep Learning Driven Detection of Tsunami Related Internal GravityWaves: a path towards open-ocean natural hazards detection

Aug 08, 2023

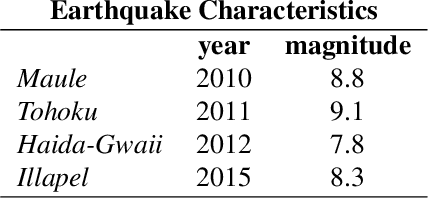

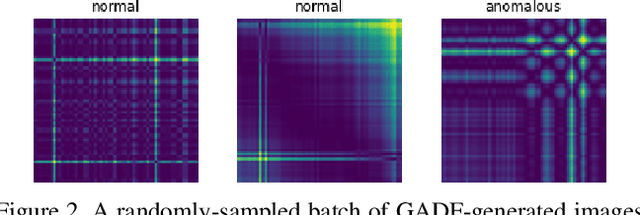

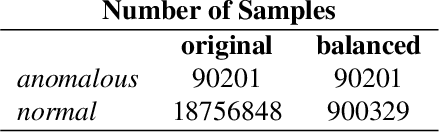

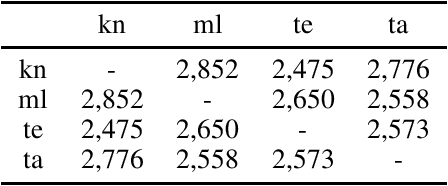

Tsunamis can trigger internal gravity waves (IGWs) in the ionosphere, perturbing the Total Electron Content (TEC) - referred to as Traveling Ionospheric Disturbances (TIDs) that are detectable through the Global Navigation Satellite System (GNSS). The GNSS are constellations of satellites providing signals from Earth orbit - Europe's Galileo, the United States' Global Positioning System (GPS), Russia's Global'naya Navigatsionnaya Sputnikovaya Sistema (GLONASS) and China's BeiDou. The real-time detection of TIDs provides an approach for tsunami detection, enhancing early warning systems by providing open-ocean coverage in geographic areas not serviceable by buoy-based warning systems. Large volumes of the GNSS data is leveraged by deep learning, which effectively handles complex non-linear relationships across thousands of data streams. We describe a framework leveraging slant total electron content (sTEC) from the VARION (Variometric Approach for Real-Time Ionosphere Observation) algorithm by Gramian Angular Difference Fields (from Computer Vision) and Convolutional Neural Networks (CNNs) to detect TIDs in near-real-time. Historical data from the 2010 Maule, 2011 Tohoku and the 2012 Haida-Gwaii earthquakes and tsunamis are used in model training, and the later-occurring 2015 Illapel earthquake and tsunami in Chile for out-of-sample model validation. Using the experimental framework described in the paper, we achieved a 91.7% F1 score. Source code is available at: https://github.com/vc1492a/tidd. Our work represents a new frontier in detecting tsunami-driven IGWs in open-ocean, dramatically improving the potential for natural hazards detection for coastal communities.

Exploring Linguistic Similarity and Zero-Shot Learning for Multilingual Translation of Dravidian Languages

Aug 10, 2023

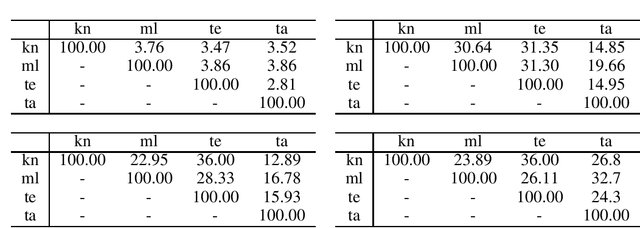

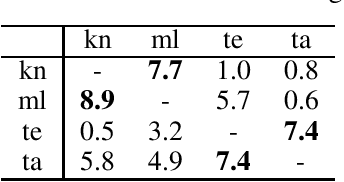

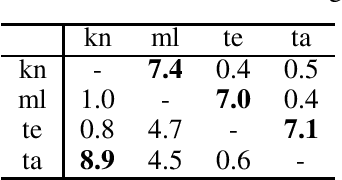

Current research in zero-shot translation is plagued by several issues such as high compute requirements, increased training time and off target translations. Proposed remedies often come at the cost of additional data or compute requirements. Pivot based neural machine translation is preferred over a single-encoder model for most settings despite the increased training and evaluation time. In this work, we overcome the shortcomings of zero-shot translation by taking advantage of transliteration and linguistic similarity. We build a single encoder-decoder neural machine translation system for Dravidian-Dravidian multilingual translation and perform zero-shot translation. We compare the data vs zero-shot accuracy tradeoff and evaluate the performance of our vanilla method against the current state of the art pivot based method. We also test the theory that morphologically rich languages require large vocabularies by restricting the vocabulary using an optimal transport based technique. Our model manages to achieves scores within 3 BLEU of large-scale pivot-based models when it is trained on 50\% of the language directions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge