"Time": models, code, and papers

Effective Real Image Editing with Accelerated Iterative Diffusion Inversion

Sep 10, 2023

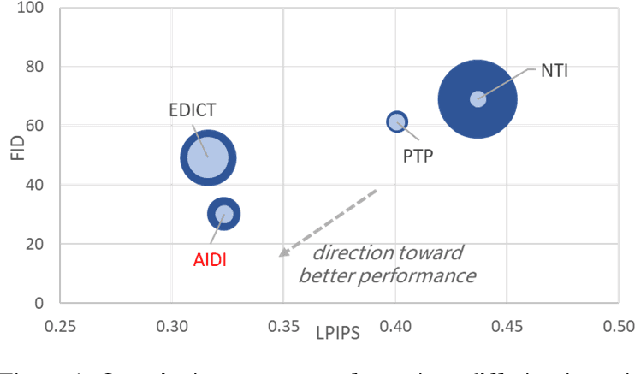

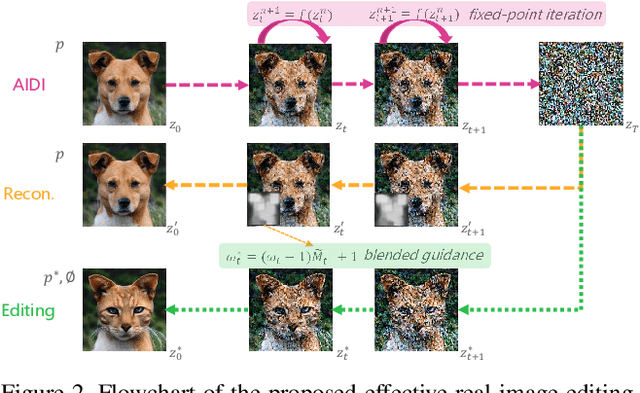

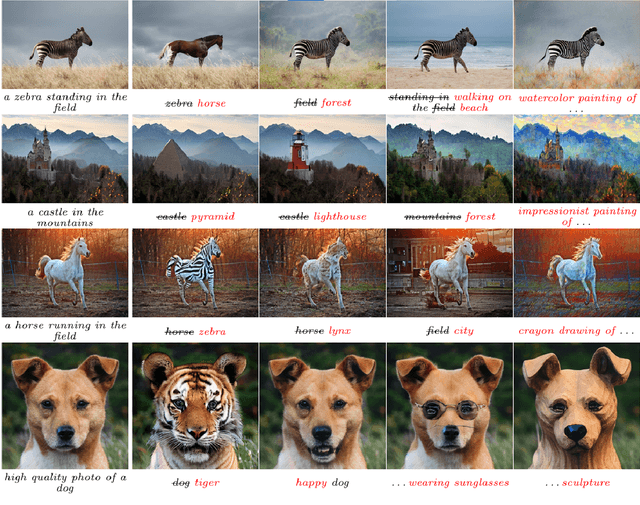

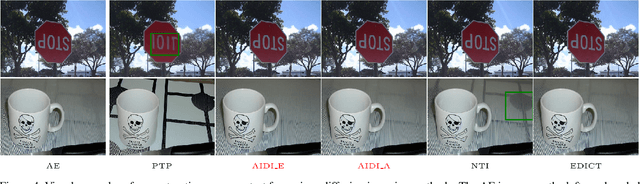

Despite all recent progress, it is still challenging to edit and manipulate natural images with modern generative models. When using a Generative Adversarial Network (GAN), one major hurdle is the inversion process that maps a real image to its corresponding noise vector in the latent space, since it is necessary to be able to reconstruct an image in order to edit its contents. Likewise for Denoising Diffusion Implicit Models (DDIM), the linearization assumption in each inversion step makes the whole deterministic inversion process unreliable. Existing approaches that have tackled the problem of inversion stability often incur significant trade-offs in computational efficiency. In this work we propose an Accelerated Iterative Diffusion Inversion method, dubbed AIDI, that significantly improves reconstruction accuracy with minimal additional overhead in space and time complexity. By using a novel blended guidance technique, we show that effective results can be obtained on a large range of image editing tasks without large classifier-free guidance in inversion. Furthermore, when compared with other diffusion inversion based works, our proposed process is shown to be more robust for fast image editing in the 10- and 20-step diffusion regimes.
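
The linearization issue mentioned in this abstract can be illustrated with a toy sketch. It assumes a hypothetical noise predictor `eps_toy` (a `tanh` stand-in, not a trained model) and hypothetical cumulative alpha values. It compares the usual linearized DDIM inversion with a plain fixed-point refinement of the same step; it is not the paper's accelerated scheme and does not include blended guidance.

```python
import math

def eps_toy(x, t):
    """Hypothetical stand-in for a trained noise predictor (not a real model)."""
    return [math.tanh(v) for v in x]

def _sigma(a):
    # sqrt((1 - alpha) / alpha), the noise-to-signal ratio at a given step
    return math.sqrt((1.0 - a) / a)

def ddim_step(x_t, t, a_t, a_prev, eps=eps_toy):
    """Deterministic DDIM denoising step from x_t to x_{t-1}."""
    e = eps(x_t, t)
    d = _sigma(a_t) - _sigma(a_prev)
    return [math.sqrt(a_prev) * (v / math.sqrt(a_t) - d * ev)
            for v, ev in zip(x_t, e)]

def invert_step(x_prev, t, a_t, a_prev, n_iter=0, eps=eps_toy):
    """Invert one DDIM step, i.e. find x_t such that ddim_step(x_t) = x_prev.

    n_iter=0 gives the linearized inversion (noise evaluated at x_prev).
    n_iter>0 refines the estimate with plain fixed-point iteration.
    """
    scale = math.sqrt(a_t / a_prev)
    coef = math.sqrt(a_t) * (_sigma(a_t) - _sigma(a_prev))

    def update(x_guess):
        e = eps(x_guess, t)
        return [scale * v + coef * ev for v, ev in zip(x_prev, e)]

    x_t = update(x_prev)          # linearized estimate
    for _ in range(n_iter):
        x_t = update(x_t)         # evaluate the noise at the current estimate
    return x_t

def recon_error(x_prev, a_t, a_prev, n_iter):
    """Max absolute error after inverting one step and denoising it again."""
    x_t = invert_step(x_prev, 1, a_t, a_prev, n_iter)
    back = ddim_step(x_t, 1, a_t, a_prev)
    return max(abs(u - v) for u, v in zip(back, x_prev))

# Hypothetical latent and alpha values, chosen only for illustration.
X_PREV = [0.5, -1.0, 2.0]
A_PREV, A_T = 0.9, 0.7
print("linearized inversion error:", recon_error(X_PREV, A_T, A_PREV, 0))
print("fixed-point inversion error:", recon_error(X_PREV, A_T, A_PREV, 20))
```

In this toy setting the iteration is a contraction, so the refined estimate reconstructs the input far more closely than the linearized one. With a real network, convergence is not guaranteed and would need to be checked empirically.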

RDGSL: Dynamic Graph Representation Learning with Structure Learning

Sep 05, 2023

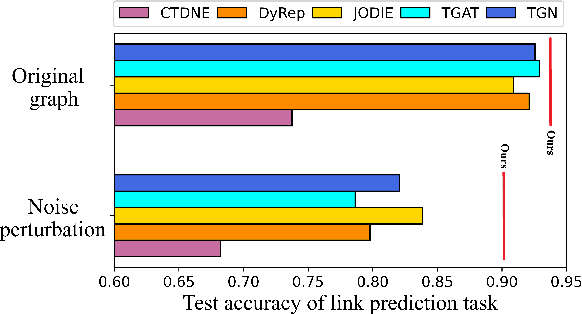

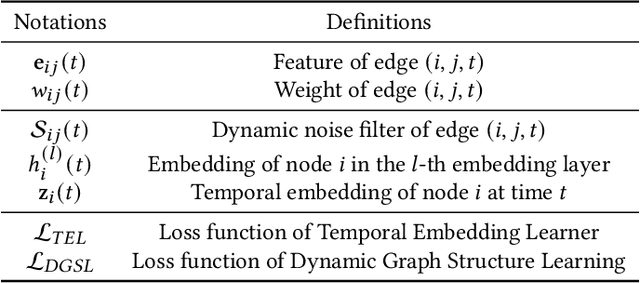

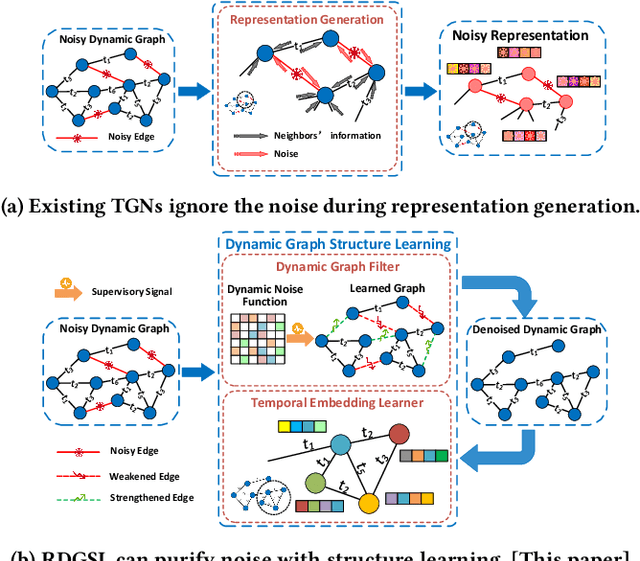

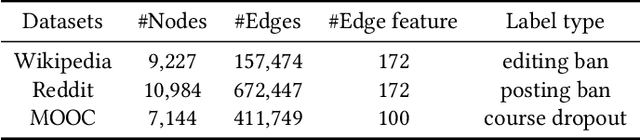

Temporal Graph Networks (TGNs) have shown remarkable performance in learning representations for continuous-time dynamic graphs. However, real-world dynamic graphs typically contain diverse and intricate noise. Noise can significantly degrade the quality of representation generation, impeding the effectiveness of TGNs in downstream tasks. Though structure learning is widely applied to mitigate noise in static graphs, its adaptation to dynamic graph settings poses two significant challenges. i) Noise dynamics. Existing structure learning methods are ill-equipped to address the temporal aspect of noise, hampering their effectiveness in such dynamic and ever-changing noise patterns. ii) More severe noise. Noise may be introduced along with multiple interactions between two nodes, leading to the re-pollution of these nodes and consequently causing more severe noise compared to static graphs. In this paper, we present RDGSL, a representation learning method in continuous-time dynamic graphs. Meanwhile, we propose dynamic graph structure learning, a novel supervisory signal that empowers RDGSL with the ability to effectively combat noise in dynamic graphs. To address the noise dynamics issue, we introduce the Dynamic Graph Filter, where we propose a dynamic noise function that captures both current and historical noise, enabling us to assess the temporal aspect of noise and generate a denoised graph. We further propose the Temporal Embedding Learner to tackle the challenge of more severe noise, which utilizes an attention mechanism to selectively ignore noisy edges and hence focus on normal edges, enhancing the expressiveness for representation generation that remains resilient to noise. Our method demonstrates robustness towards downstream tasks, resulting in up to 5.1% absolute AUC improvement in evolving classification versus the second-best baseline.

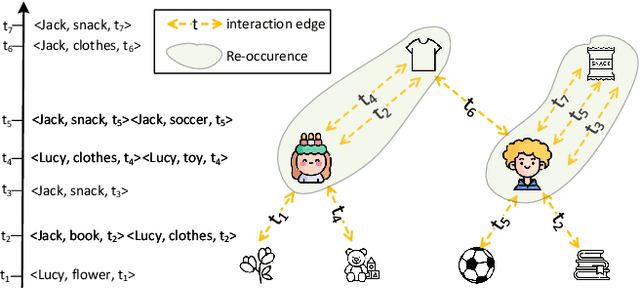

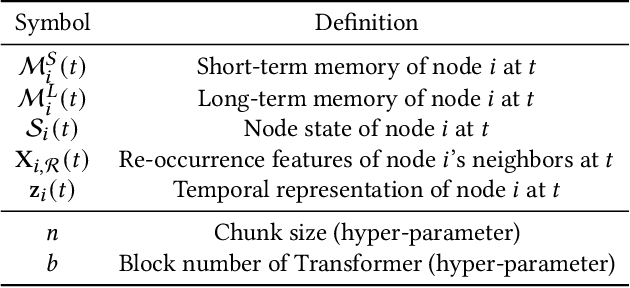

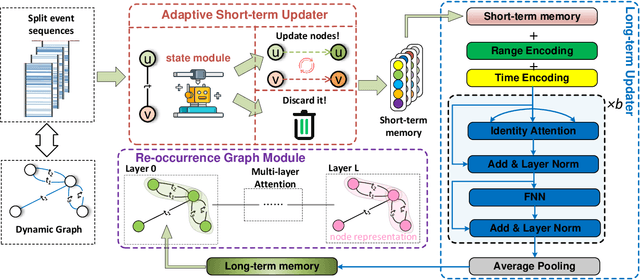

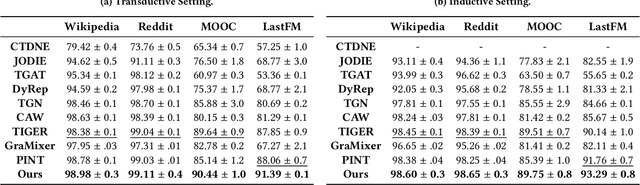

iLoRE: Dynamic Graph Representation with Instant Long-term Modeling and Re-occurrence Preservation

Sep 05, 2023

Continuous-time dynamic graph modeling is a crucial task for many real-world applications, such as financial risk management and fraud detection. Though existing dynamic graph modeling methods have achieved satisfactory results, they still suffer from three key limitations, hindering their scalability and further applicability. i) Indiscriminate updating. Existing methods process incoming edges indiscriminately, which may lead to more time consumption and unexpected noisy information. ii) Ineffective node-wise long-term modeling. They heavily rely on recurrent neural networks (RNNs) as a backbone, which has been demonstrated to be incapable of fully capturing node-wise long-term dependencies in event sequences. iii) Neglect of re-occurrence patterns. Dynamic graphs involve the repeated occurrence of neighbors, which indicates their importance but is regrettably neglected by existing methods. In this paper, we present iLoRE, a novel dynamic graph modeling method with instant node-wise Long-term modeling and Re-occurrence preservation. To overcome the indiscriminate updating issue, we introduce the Adaptive Short-term Updater module that automatically discards useless or noisy edges, ensuring iLoRE's effectiveness and instant ability. We further propose the Long-term Updater to realize more effective node-wise long-term modeling, where we propose the Identity Attention mechanism to empower a Transformer-based updater, bypassing the limited effectiveness of typical RNN-dominated designs. Finally, the crucial re-occurrence patterns are also encoded into a graph module for informative representation learning, which further improves the expressiveness of our method. Our experimental results on real-world datasets demonstrate the effectiveness of iLoRE for dynamic graph modeling.

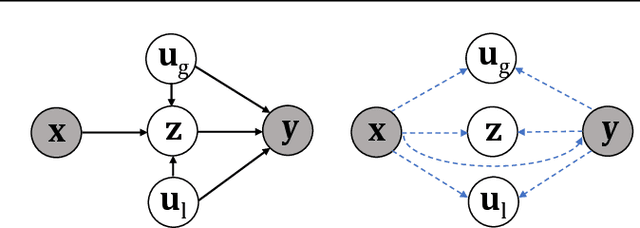

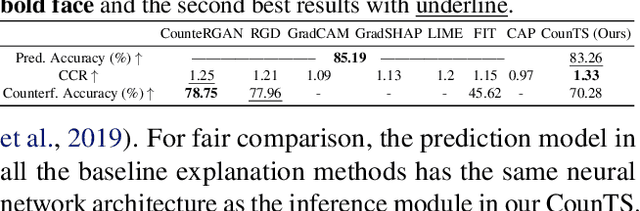

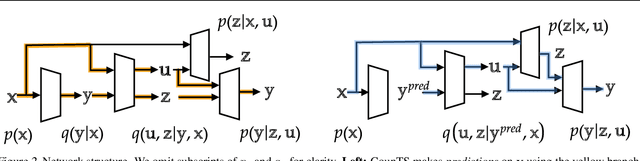

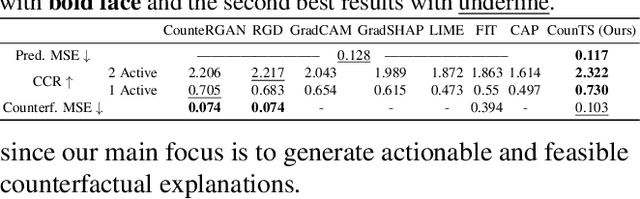

Self-Interpretable Time Series Prediction with Counterfactual Explanations

Jun 09, 2023

Interpretable time series prediction is crucial for safety-critical areas such as healthcare and autonomous driving. Most existing methods focus on interpreting predictions by assigning importance scores to segments of time series. In this paper, we take a different and more challenging route and aim at developing a self-interpretable model, dubbed Counterfactual Time Series (CounTS), which generates counterfactual and actionable explanations for time series predictions. Specifically, we formalize the problem of time series counterfactual explanations, establish associated evaluation protocols, and propose a variational Bayesian deep learning model equipped with counterfactual inference capability of time series abduction, action, and prediction. Compared with state-of-the-art baselines, our self-interpretable model can generate better counterfactual explanations while maintaining comparable prediction accuracy.

Multidomain transformer-based deep learning for early detection of network intrusion

Sep 03, 2023

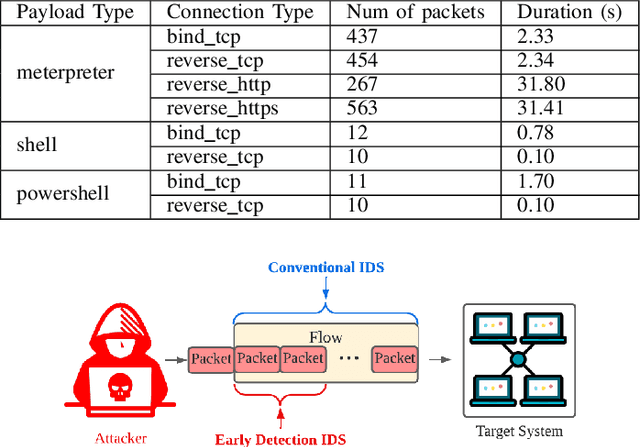

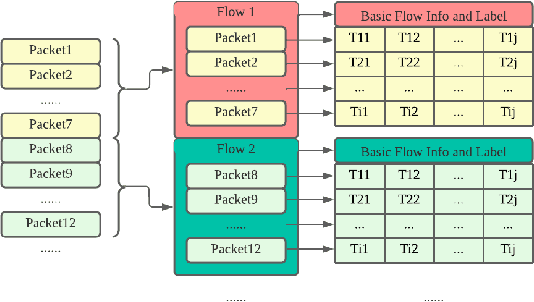

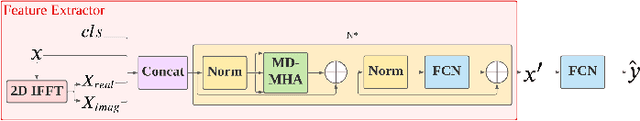

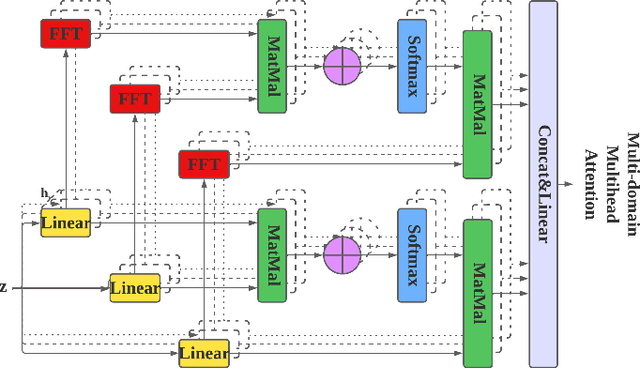

Timely response of Network Intrusion Detection Systems (NIDS) is constrained by the flow generation process, which requires accumulation of network packets. This paper introduces Multivariate Time Series (MTS) early detection into NIDS to identify malicious flows prior to their arrival at target systems. With this in mind, we first propose a novel feature extractor, Time Series Network Flow Meter (TS-NFM), that represents network flow as MTS with explainable features, and a new benchmark dataset is created using TS-NFM and the meta-data of CICIDS2017, called SCVIC-TS-2022. Additionally, a new deep learning-based early detection model called Multi-Domain Transformer (MDT) is proposed, which incorporates the frequency domain into Transformer. This work further proposes a Multi-Domain Multi-Head Attention (MD-MHA) mechanism to improve the ability of MDT to extract better features. Based on the experimental results, the proposed methodology improves the earliness of the conventional NIDS (i.e., percentage of packets that are used for classification) by a factor of $5 \times 10^4$ and duration-based earliness (i.e., percentage of duration of the classified packets of a flow) by a factor of 60, resulting in an 84.1% macro F1 score (31% higher than Transformer) on SCVIC-TS-2022. Additionally, the proposed MDT outperforms the state-of-the-art early detection methods by 5% and 6% on ECG and Wafer datasets, respectively.

Subject-Diffusion: Open Domain Personalized Text-to-Image Generation without Test-time Fine-tuning

Jul 21, 2023

Recent progress in personalized image generation using diffusion models has been significant. However, development in the area of open-domain and non-fine-tuning personalized image generation is proceeding rather slowly. In this paper, we propose Subject-Diffusion, a novel open-domain personalized image generation model that, in addition to not requiring test-time fine-tuning, also only requires a single reference image to support personalized generation of single- or multi-subject in any domain. Firstly, we construct an automatic data labeling tool and use the LAION-Aesthetics dataset to construct a large-scale dataset consisting of 76M images and their corresponding subject detection bounding boxes, segmentation masks and text descriptions. Secondly, we design a new unified framework that combines text and image semantics by incorporating coarse location and fine-grained reference image control to maximize subject fidelity and generalization. Furthermore, we also adopt an attention control mechanism to support multi-subject generation. Extensive qualitative and quantitative results demonstrate that our method outperforms other SOTA frameworks in single, multiple, and human customized image generation. Please refer to our [project page](https://oppo-mente-lab.github.io/subject_diffusion/).

Neural Chronos ODE: Unveiling Temporal Patterns and Forecasting Future and Past Trends in Time Series Data

Jul 03, 2023

This work introduces Neural Chronos Ordinary Differential Equations (Neural CODE), a deep neural network architecture that fits continuous-time ODE dynamics for predicting the chronology of a system both forward and backward in time. To train the model, we solve the ODE as an initial value problem and a final value problem, similar to Neural ODEs. We also explore two approaches to combining Neural CODE with Recurrent Neural Networks: replacing Neural ODE with Neural CODE (CODE-RNN), and incorporating a bidirectional RNN for full information flow in both time directions (CODE-BiRNN). We also consider variants with other update cells, namely GRU and LSTM: CODE-GRU, CODE-BiGRU, CODE-LSTM, CODE-BiLSTM. Experimental results demonstrate that Neural CODE outperforms Neural ODE in learning the dynamics of a spiral forward and backward in time, even with sparser data. We also compare the performance of CODE-RNN/-GRU/-LSTM and CODE-BiRNN/-BiGRU/-BiLSTM against ODE-RNN/-GRU/-LSTM on three real-life time series data tasks: imputation of missing data for lower and higher dimensional data, and forward and backward extrapolation with shorter and longer time horizons. Our findings show that the proposed architectures converge faster, with CODE-BiRNN/-BiGRU/-BiLSTM consistently outperforming the other architectures on all tasks.
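
The idea of solving the same ODE as an initial value problem and as a final value problem can be sketched with a fixed toy vector field. In this sketch the dynamics `f` is a hypothetical stand-in for the learned network, and explicit Euler is used purely for brevity; neither is taken from the paper.

```python
import math

def f(y):
    """Toy dynamics dy/dt = -y, a hypothetical stand-in for a learned network."""
    return -y

def integrate(y_start, t_start, t_end, n_steps=1000):
    """Explicit Euler from t_start to t_end.

    When t_end < t_start the step is negative, so the same routine
    integrates backward in time from a final value.
    """
    h = (t_end - t_start) / n_steps
    y = y_start
    for _ in range(n_steps):
        y += h * f(y)
    return y

# Initial value problem: start from y(0) = 1 and predict y(1).
y_at_1 = integrate(1.0, 0.0, 1.0)
# Final value problem: start from y(1) = exp(-1) and recover y(0).
y_at_0 = integrate(math.exp(-1.0), 1.0, 0.0)

print("forward  y(1):", y_at_1, "exact:", math.exp(-1.0))
print("backward y(0):", y_at_0, "exact:", 1.0)
```

A model trained on both directions would compare each of these trajectories with observed data; here the exact solution `exp(-t)` plays that role.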

Recall-driven Precision Refinement: Unveiling Accurate Fall Detection using LSTM

Sep 09, 2023

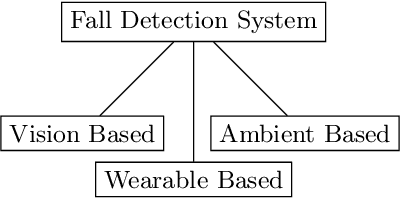

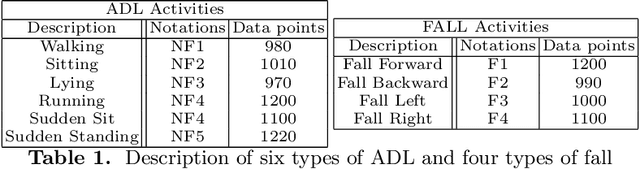

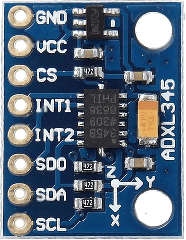

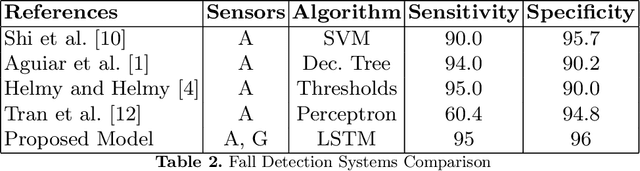

This paper presents an innovative approach to address the pressing concern of fall incidents among the elderly by developing an accurate fall detection system. Our proposed system combines state-of-the-art technologies, including accelerometer and gyroscope sensors, with deep learning models, specifically Long Short-Term Memory (LSTM) networks. Real-time execution capabilities are achieved through the integration of Raspberry Pi hardware. We introduce pruning techniques that strategically fine-tune the LSTM model's architecture and parameters to optimize the system's performance. We prioritize recall over precision, aiming to accurately identify falls and minimize false negatives for timely intervention. Extensive experimentation and meticulous evaluation demonstrate remarkable performance metrics, emphasizing a high recall rate while maintaining a specificity of 96%. Our research culminates in a state-of-the-art fall detection system that promptly sends notifications, ensuring vulnerable individuals receive timely assistance and improve their overall well-being. Applying LSTM models and incorporating pruning techniques represent a significant advancement in fall detection technology, offering an effective and reliable fall prevention and intervention solution.
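
For readers unfamiliar with the metrics named in this abstract, the sketch below shows how recall, precision, and specificity are derived from a confusion matrix. The counts are hypothetical, chosen only so that specificity comes out at 96%; they are not results from the paper.

```python
def classification_rates(tp, fp, tn, fn):
    """Recall, precision, and specificity from confusion-matrix counts."""
    return {
        "recall": tp / (tp + fn),        # share of real falls that were detected
        "precision": tp / (tp + fp),     # share of alarms that were real falls
        "specificity": tn / (tn + fp),   # share of non-falls correctly ignored
    }

# Hypothetical counts for illustration only (not from the paper).
rates = classification_rates(tp=95, fp=8, tn=192, fn=5)
for name, value in rates.items():
    print(f"{name}: {value:.3f}")
```

Prioritizing recall means accepting some extra false alarms (lower precision) in exchange for fewer missed falls (lower `fn`).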

A Fast Algorithm for Moderating Critical Nodes via Edge Removal

Sep 09, 2023

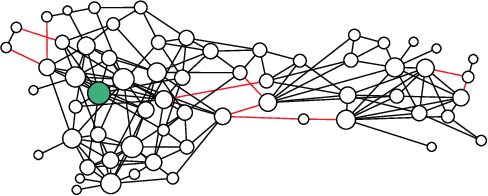

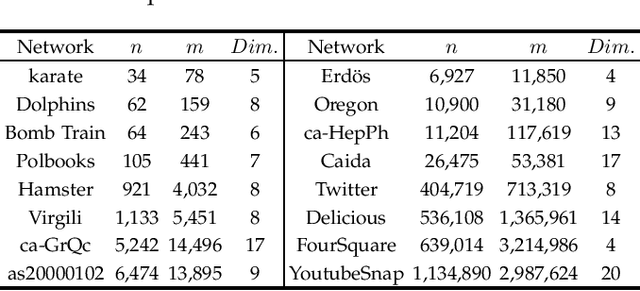

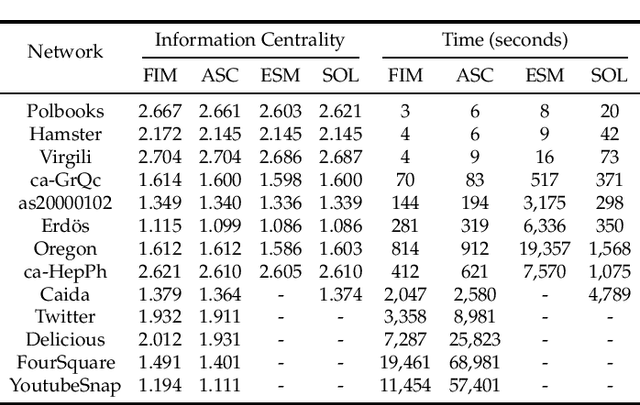

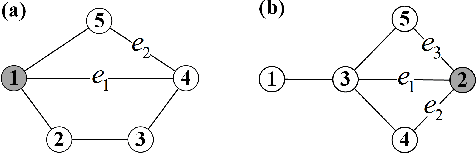

Critical nodes in networks are extremely vulnerable to malicious attacks that trigger negative cascading events such as the spread of misinformation and diseases. Therefore, effective moderation of critical nodes is vital for mitigating the potential damage caused by such malicious diffusions. The current moderation methods are computationally expensive. Furthermore, they disregard the fundamental metric of information centrality, which measures the dissemination power of nodes. We investigate the problem of removing $k$ edges from a network to minimize the information centrality of a target node while preserving the network's connectivity. We prove that this problem is computationally challenging: it is NP-complete and its objective function is not supermodular. However, we propose three greedy approximation algorithms using novel techniques such as random walk-based Schur complement approximation and fast sum estimation. One of our algorithms runs in nearly linear time in the number of edges. To complement our theoretical analysis, we conduct a comprehensive set of experiments on synthetic and real networks with over one million nodes. Across various settings, the experimental results illustrate the effectiveness and efficiency of our proposed algorithms.
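
The objective in this abstract can be made concrete with a small dense computation. The sketch below assumes the standard definition of information centrality for a connected unweighted graph, $I_v = n / (n L^{\dagger}_{vv} + \mathrm{Tr}(L^{\dagger}))$, where $L^{\dagger}$ is the pseudoinverse of the graph Laplacian. It uses exact matrix inversion on tiny graphs and is not the paper's nearly-linear-time algorithm; the function names are illustrative.

```python
def invert(m):
    """Gauss-Jordan inverse with partial pivoting for a small dense matrix."""
    n = len(m)
    aug = [list(row) + [float(i == j) for j in range(n)]
           for i, row in enumerate(m)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        p = aug[col][col]
        aug[col] = [v / p for v in aug[col]]
        for r in range(n):
            if r != col:
                factor = aug[r][col]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]

def information_centrality(n, edges, v):
    """Information centrality of node v in a connected, unweighted graph."""
    lap = [[0.0] * n for _ in range(n)]
    for a, b in edges:
        lap[a][a] += 1.0
        lap[b][b] += 1.0
        lap[a][b] -= 1.0
        lap[b][a] -= 1.0
    # For a connected graph, inv(L + J/n) = pinv(L) + J/n, with J all ones.
    shifted = [[lap[i][j] + 1.0 / n for j in range(n)] for i in range(n)]
    inv = invert(shifted)
    pinv_diag = [inv[i][i] - 1.0 / n for i in range(n)]
    return n / (n * pinv_diag[v] + sum(pinv_diag))

triangle = [(0, 1), (1, 2), (0, 2)]
without_far_edge = [(0, 1), (0, 2)]    # edge (1, 2) removed
without_near_edge = [(1, 2), (0, 2)]   # edge (0, 1) removed

print(information_centrality(3, triangle, 0))
print(information_centrality(3, without_far_edge, 0))
print(information_centrality(3, without_near_edge, 0))
```

In this three-node example both removals keep the graph connected and both lower the centrality of node 0, with the incident edge having the larger effect. The formula does not apply to disconnected graphs, which is why the connectivity constraint matters.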

RoLA: A Real-Time Online Lightweight Anomaly Detection System for Multivariate Time Series

May 25, 2023

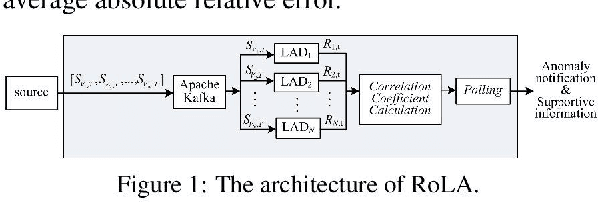

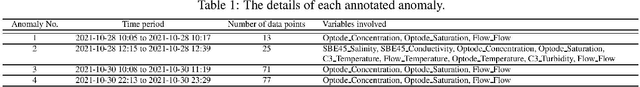

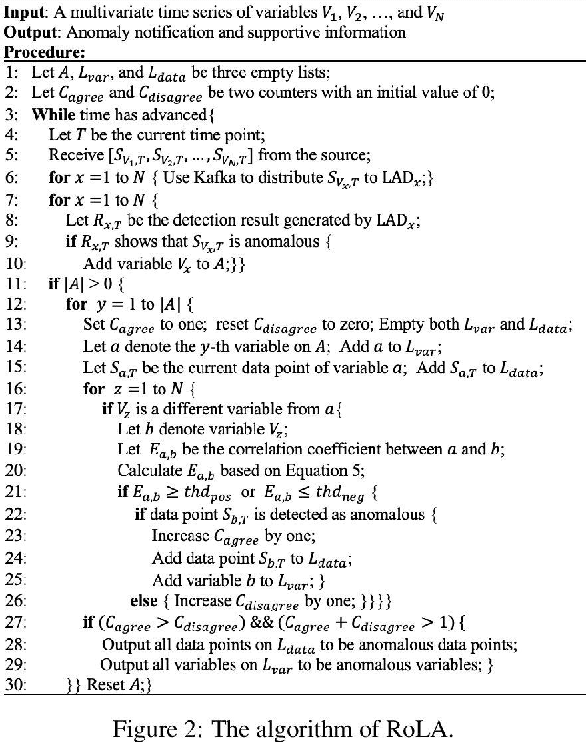

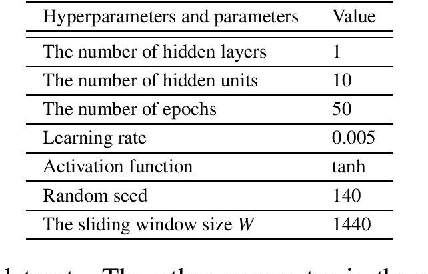

A multivariate time series refers to observations of two or more variables taken from a device or a system simultaneously over time. There is an increasing need to monitor multivariate time series and detect anomalies in real time to ensure proper system operation and good service quality. It is also highly desirable to have a lightweight anomaly detection system that considers correlations between different variables, adapts to changes in the pattern of the multivariate time series, offers immediate responses, and provides supportive information regarding detection results based on unsupervised learning and online model training. In the past decade, many multivariate time series anomaly detection approaches have been introduced. However, they are unable to offer all the above-mentioned features. In this paper, we propose RoLA, a real-time online lightweight anomaly detection system for multivariate time series based on a divide-and-conquer strategy, parallel processing, and the majority rule. RoLA employs multiple lightweight anomaly detectors to monitor multivariate time series in parallel, determine the correlations between variables dynamically on the fly, and then jointly detect anomalies based on the majority rule in real time. To demonstrate the performance of RoLA, we conducted an experiment based on a public dataset provided by the FerryBox of the One Ocean Expedition. The results show that RoLA provides satisfactory detection accuracy and lightweight performance.
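
The majority rule described in this abstract can be sketched as follows. The per-variable detector here is a simple z-score check, used as a hypothetical stand-in for RoLA's lightweight detectors, and the dynamic correlation step is omitted; all names and data values are illustrative.

```python
from statistics import mean, stdev

def is_outlier(history, value, k=3.0):
    """Hypothetical lightweight detector for one variable.

    Flags a value lying more than k standard deviations from the mean
    of that variable's recent history.
    """
    mu, sigma = mean(history), stdev(history)
    return sigma > 0 and abs(value - mu) > k * sigma

def majority_anomaly(histories, new_values, k=3.0):
    """Report an anomaly only if more than half of the detectors agree."""
    votes = [is_outlier(h, x, k) for h, x in zip(histories, new_values)]
    return sum(votes) > len(votes) / 2

# Illustrative recent history for three variables (made-up numbers).
histories = [
    [10, 11, 9, 10, 11, 10],
    [5.0, 5.2, 4.9, 5.1, 5.0, 5.1],
    [100, 101, 99, 100, 102, 100],
]

print(majority_anomaly(histories, [20, 9.0, 101]))   # two detectors fire
print(majority_anomaly(histories, [20, 5.0, 101]))   # only one detector fires
```

Requiring agreement among detectors trades some sensitivity to single-variable glitches for fewer false alarms. In the system described above, only correlated variables would be polled.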
