"Time": models, code, and papers

Object Detection in Aerial Images in Scarce Data Regimes

Oct 16, 2023Most contributions on Few-Shot Object Detection (FSOD) evaluate their methods on natural images only, yet the transferability of the announced performance is not guaranteed for applications on other kinds of images. We demonstrate this with an in-depth analysis of existing FSOD methods on aerial images and observed a large performance gap compared to natural images. Small objects, more numerous in aerial images, are the cause for the apparent performance gap between natural and aerial images. As a consequence, we improve FSOD performance on small objects with a carefully designed attention mechanism. In addition, we also propose a scale-adaptive box similarity criterion, that improves the training and evaluation of FSOD methods, particularly for small objects. We also contribute to generic FSOD with two distinct approaches based on metric learning and fine-tuning. Impressive results are achieved with the fine-tuning method, which encourages tackling more complex scenarios such as Cross-Domain FSOD. We conduct preliminary experiments in this direction and obtain promising results. Finally, we address the deployment of the detection models inside COSE's systems. Detection must be done in real-time in extremely large images (more than 100 megapixels), with limited computation power. Leveraging existing optimization tools such as TensorRT, we successfully tackle this engineering challenge.

Generative Calibration for In-context Learning

Oct 16, 2023As one of the most exciting features of large language models (LLMs), in-context learning is a mixed blessing. While it allows users to fast-prototype a task solver with only a few training examples, the performance is generally sensitive to various configurations of the prompt such as the choice or order of the training examples. In this paper, we for the first time theoretically and empirically identify that such a paradox is mainly due to the label shift of the in-context model to the data distribution, in which LLMs shift the label marginal $p(y)$ while having a good label conditional $p(x|y)$. With this understanding, we can simply calibrate the in-context predictive distribution by adjusting the label marginal, which is estimated via Monte-Carlo sampling over the in-context model, i.e., generation of LLMs. We call our approach as generative calibration. We conduct exhaustive experiments with 12 text classification tasks and 12 LLMs scaling from 774M to 33B, generally find that the proposed method greatly and consistently outperforms the ICL as well as state-of-the-art calibration methods, by up to 27% absolute in macro-F1. Meanwhile, the proposed method is also stable under different prompt configurations.

An Interpretable Deep-Learning Framework for Predicting Hospital Readmissions From Electronic Health Records

Oct 16, 2023

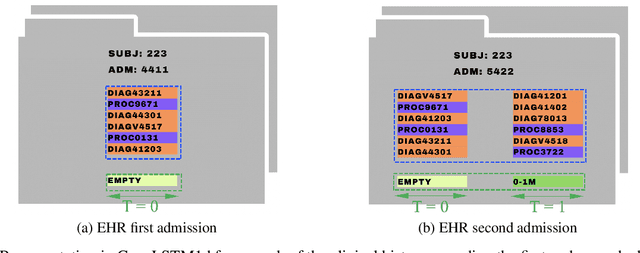

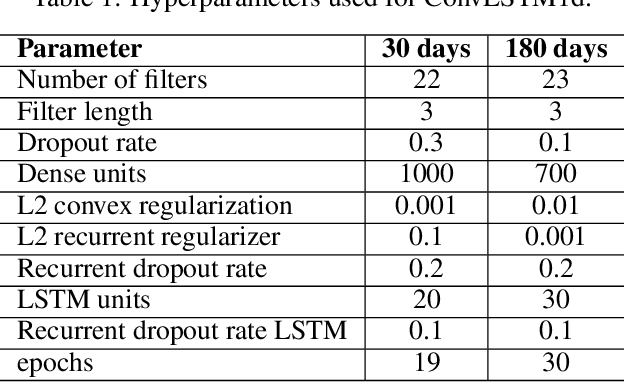

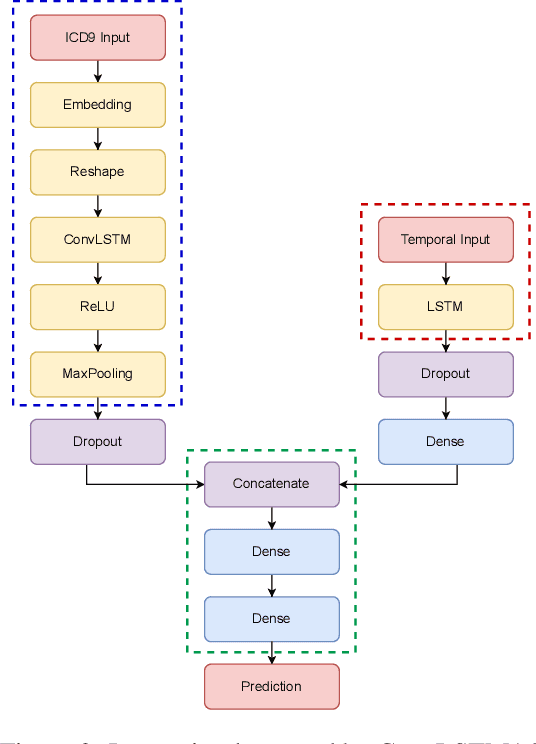

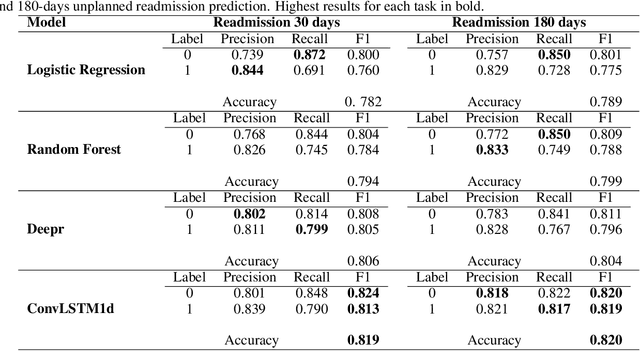

With the increasing availability of patients' data, modern medicine is shifting towards prospective healthcare. Electronic health records contain a variety of information useful for clinical patient description and can be exploited for the construction of predictive models, given that similar medical histories will likely lead to similar progressions. One example is unplanned hospital readmission prediction, an essential task for reducing hospital costs and improving patient health. Despite predictive models showing very good performances especially with deep-learning models, they are often criticized for the poor interpretability of their results, a fundamental characteristic in the medical field, where incorrect predictions might have serious consequences for the patient health. In this paper we propose a novel, interpretable deep-learning framework for predicting unplanned hospital readmissions, supported by NLP findings on word embeddings and by neural-network models (ConvLSTM) for better handling temporal data. We validate our system on the two predictive tasks of hospital readmission within 30 and 180 days, using real-world data. In addition, we introduce and test a model-dependent technique to make the representation of results easily interpretable by the medical staff. Our solution achieves better performances compared to traditional models based on machine learning, while providing at the same time more interpretable results.

Population-based wind farm monitoring based on a spatial autoregressive approach

Oct 16, 2023An important challenge faced by wind farm operators is to reduce operation and maintenance cost. Structural health monitoring provides a means of cost reduction through minimising unnecessary maintenance trips as well as prolonging turbine service life. Population-based structural health monitoring can further reduce the cost of health monitoring systems by implementing one system for multiple structures (i.e.~turbines). At the same time, shared data within a population of structures may improve the predictions of structural behaviour. To monitor turbine performance at a population/farm level, an important initial step is to construct a model that describes the behaviour of all turbines under normal conditions. This paper proposes a population-level model that explicitly captures the spatial and temporal correlations (between turbines) induced by the wake effect. The proposed model is a Gaussian process-based spatial autoregressive model, named here a GP-SPARX model. This approach is developed since (a) it reflects our physical understanding of the wake effect, and (b) it benefits from a stochastic data-based learner. A case study is provided to demonstrate the capability of the GP-SPARX model in capturing spatial and temporal variations as well as its potential applicability in a health monitoring system.

PELA: Learning Parameter-Efficient Models with Low-Rank Approximation

Oct 16, 2023Applying a pre-trained large model to downstream tasks is prohibitive under resource-constrained conditions. Recent dominant approaches for addressing efficiency issues involve adding a few learnable parameters to the fixed backbone model. This strategy, however, leads to more challenges in loading large models for downstream fine-tuning with limited resources. In this paper, we propose a novel method for increasing the parameter efficiency of pre-trained models by introducing an intermediate pre-training stage. To this end, we first employ low-rank approximation to compress the original large model and then devise a feature distillation module and a weight perturbation regularization module. These modules are specifically designed to enhance the low-rank model. Concretely, we update only the low-rank model while freezing the backbone parameters during pre-training. This allows for direct and efficient utilization of the low-rank model for downstream tasks. The proposed method achieves both efficiencies in terms of required parameters and computation time while maintaining comparable results with minimal modifications to the base architecture. Specifically, when applied to three vision-only and one vision-language Transformer models, our approach often demonstrates a $\sim$0.6 point decrease in performance while reducing the original parameter size by 1/3 to 2/3.

Unlocking Metasurface Practicality for B5G Networks: AI-assisted RIS Planning

Oct 16, 2023The advent of reconfigurable intelligent surfaces(RISs) brings along significant improvements for wireless technology on the verge of beyond-fifth-generation networks (B5G).The proven flexibility in influencing the propagation environment opens up the possibility of programmatically altering the wireless channel to the advantage of network designers, enabling the exploitation of higher-frequency bands for superior throughput overcoming the challenging electromagnetic (EM) propagation properties at these frequency bands. However, RISs are not magic bullets. Their employment comes with significant complexity, requiring ad-hoc deployments and management operations to come to fruition. In this paper, we tackle the open problem of bringing RISs to the field, focusing on areas with little or no coverage. In fact, we present a first-of-its-kind deep reinforcement learning (DRL) solution, dubbed as D-RISA, which trains a DRL agent and, in turn, obtain san optimal RIS deployment. We validate our framework in the indoor scenario of the Rennes railway station in France, assessing the performance of our algorithm against state-of-the-art (SOA) approaches. Our benchmarks showcase better coverage, i.e., 10-dB increase in minimum signal-to-noise ratio (SNR), at lower computational time (up to -25 percent) while improving scalability towards denser network deployments.

Test Time Embedding Normalization for Popularity Bias Mitigation

Aug 22, 2023

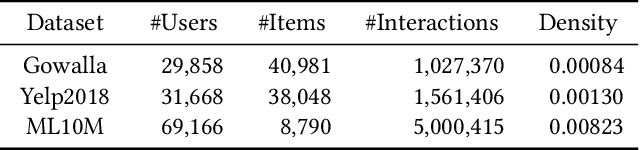

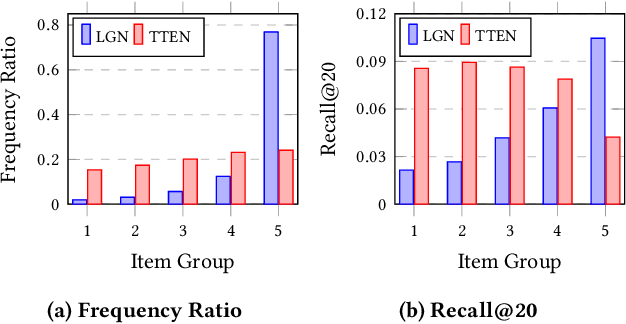

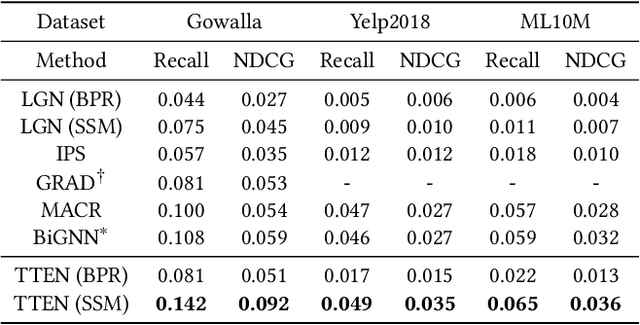

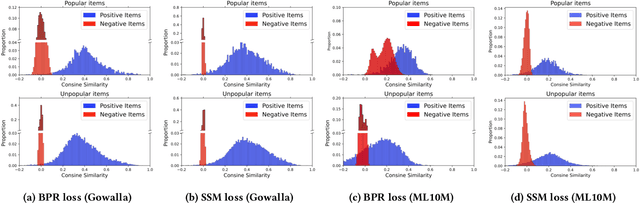

Popularity bias is a widespread problem in the field of recommender systems, where popular items tend to dominate recommendation results. In this work, we propose 'Test Time Embedding Normalization' as a simple yet effective strategy for mitigating popularity bias, which surpasses the performance of the previous mitigation approaches by a significant margin. Our approach utilizes the normalized item embedding during the inference stage to control the influence of embedding magnitude, which is highly correlated with item popularity. Through extensive experiments, we show that our method combined with the sampled softmax loss effectively reduces popularity bias compare to previous approaches for bias mitigation. We further investigate the relationship between user and item embeddings and find that the angular similarity between embeddings distinguishes preferable and non-preferable items regardless of their popularity. The analysis explains the mechanism behind the success of our approach in eliminating the impact of popularity bias. Our code is available at https://github.com/ml-postech/TTEN.

Learning to Generate Parameters of ConvNets for Unseen Image Data

Oct 18, 2023Typical Convolutional Neural Networks (ConvNets) depend heavily on large amounts of image data and resort to an iterative optimization algorithm (e.g., SGD or Adam) to learn network parameters, which makes training very time- and resource-intensive. In this paper, we propose a new training paradigm and formulate the parameter learning of ConvNets into a prediction task: given a ConvNet architecture, we observe there exists correlations between image datasets and their corresponding optimal network parameters, and explore if we can learn a hyper-mapping between them to capture the relations, such that we can directly predict the parameters of the network for an image dataset never seen during the training phase. To do this, we put forward a new hypernetwork based model, called PudNet, which intends to learn a mapping between datasets and their corresponding network parameters, and then predicts parameters for unseen data with only a single forward propagation. Moreover, our model benefits from a series of adaptive hyper recurrent units sharing weights to capture the dependencies of parameters among different network layers. Extensive experiments demonstrate that our proposed method achieves good efficacy for unseen image datasets on two kinds of settings: Intra-dataset prediction and Inter-dataset prediction. Our PudNet can also well scale up to large-scale datasets, e.g., ImageNet-1K. It takes 8967 GPU seconds to train ResNet-18 on the ImageNet-1K using GC from scratch and obtain a top-5 accuracy of 44.65 %. However, our PudNet costs only 3.89 GPU seconds to predict the network parameters of ResNet-18 achieving comparable performance (44.92 %), more than 2,300 times faster than the traditional training paradigm.

Fast Multipole Attention: A Divide-and-Conquer Attention Mechanism for Long Sequences

Oct 18, 2023Transformer-based models have achieved state-of-the-art performance in many areas. However, the quadratic complexity of self-attention with respect to the input length hinders the applicability of Transformer-based models to long sequences. To address this, we present Fast Multipole Attention, a new attention mechanism that uses a divide-and-conquer strategy to reduce the time and memory complexity of attention for sequences of length $n$ from $\mathcal{O}(n^2)$ to $\mathcal{O}(n \log n)$ or $O(n)$, while retaining a global receptive field. The hierarchical approach groups queries, keys, and values into $\mathcal{O}( \log n)$ levels of resolution, where groups at greater distances are increasingly larger in size and the weights to compute group quantities are learned. As such, the interaction between tokens far from each other is considered in lower resolution in an efficient hierarchical manner. The overall complexity of Fast Multipole Attention is $\mathcal{O}(n)$ or $\mathcal{O}(n \log n)$, depending on whether the queries are down-sampled or not. This multi-level divide-and-conquer strategy is inspired by fast summation methods from $n$-body physics and the Fast Multipole Method. We perform evaluation on autoregressive and bidirectional language modeling tasks and compare our Fast Multipole Attention model with other efficient attention variants on medium-size datasets. We find empirically that the Fast Multipole Transformer performs much better than other efficient transformers in terms of memory size and accuracy. The Fast Multipole Attention mechanism has the potential to empower large language models with much greater sequence lengths, taking the full context into account in an efficient, naturally hierarchical manner during training and when generating long sequences.

Developing a Natural Language Understanding Model to Characterize Cable News Bias

Oct 13, 2023Media bias has been extensively studied by both social and computational sciences. However, current work still has a large reliance on human input and subjective assessment to label biases. This is especially true for cable news research. To address these issues, we develop an unsupervised machine learning method to characterize the bias of cable news programs without any human input. This method relies on the analysis of what topics are mentioned through Named Entity Recognition and how those topics are discussed through Stance Analysis in order to cluster programs with similar biases together. Applying our method to 2020 cable news transcripts, we find that program clusters are consistent over time and roughly correspond to the cable news network of the program. This method reveals the potential for future tools to objectively assess media bias and characterize unfamiliar media environments.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge