"Information": models, code, and papers

Finite Mixtures of Multivariate Poisson-Log Normal Factor Analyzers for Clustering Count Data

Nov 13, 2023

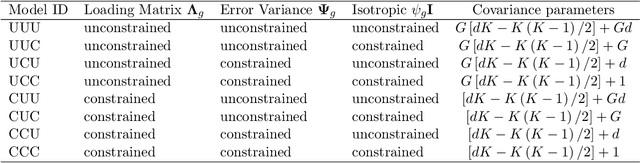

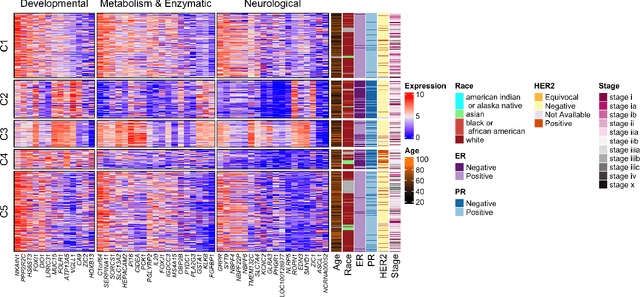

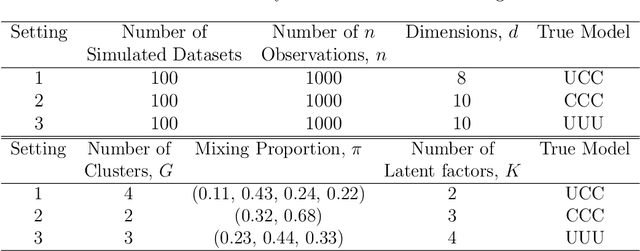

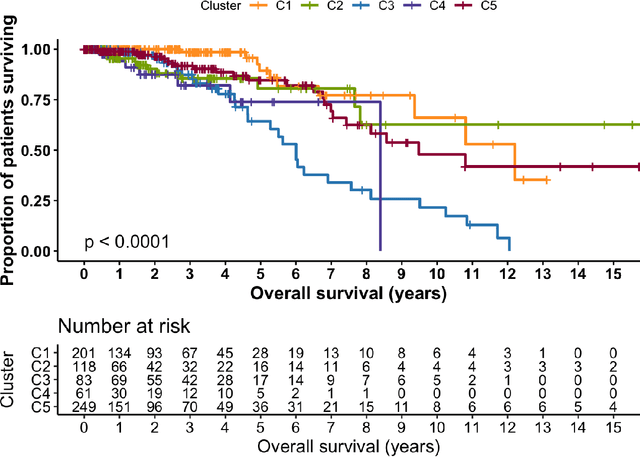

A mixture of multivariate Poisson-log normal factor analyzers is introduced by imposing constraints on the covariance matrix, resulting in flexible models for clustering purposes. In particular, a class of eight parsimonious mixture models based on the mixtures of factor analyzers model is introduced. Variational Gaussian approximation is used for parameter estimation, and information criteria are used for model selection. The proposed models are explored in the context of clustering discrete data arising from RNA sequencing studies. Using real and simulated data, the models are shown to give favourable clustering performance. The GitHub R package for this work is available at https://github.com/anjalisilva/mixMPLNFA and is released under the open-source MIT license.
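As a rough illustration of the covariance constraint mentioned in the abstract, the sketch below assumes the standard factor-analyzer structure, in which a component covariance is a low-rank loading product plus a diagonal matrix, and draws counts from a single Poisson-log normal component. It is written in Python for illustration only and is not the mixMPLNFA package API; the function names, dimensions, and parameter values are all hypothetical.

```python
import numpy as np


def factor_covariance(loadings, uniquenesses):
    """Factor-analyzer covariance: loadings @ loadings.T + diag(uniquenesses)."""
    return loadings @ loadings.T + np.diag(uniquenesses)


def sample_counts(n, mean, loadings, uniquenesses, rng):
    """Draw n count vectors from one Poisson-log normal component.

    A latent Gaussian vector theta is sampled first; each count is then
    Poisson with rate exp(theta). Any normalization offset is omitted here.
    """
    cov = factor_covariance(loadings, uniquenesses)
    theta = rng.multivariate_normal(mean, cov, size=n)
    return rng.poisson(np.exp(theta))


# Hypothetical setup: d = 4 variables, q = 1 latent factor.
rng = np.random.default_rng(0)
loadings = np.array([[0.5], [0.3], [-0.2], [0.4]])
uniquenesses = np.full(4, 0.1)
counts = sample_counts(200, np.full(4, 2.0), loadings, uniquenesses, rng)
```

With d variables and q factors, the loading matrix has d × q entries instead of the d(d + 1)/2 free entries of an unconstrained covariance, which is where the parsimony comes from. Sharing or restricting the loadings and the diagonal term across components is one common way to obtain a family of constrained models.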

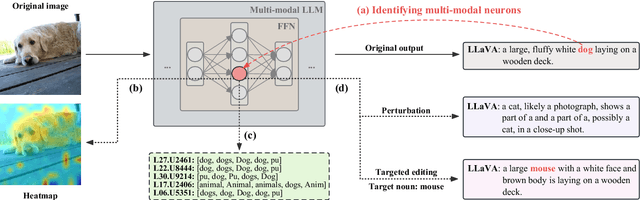

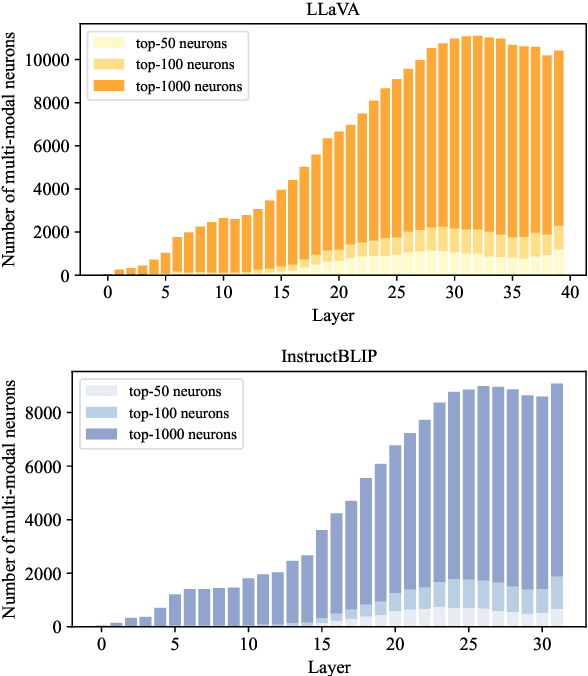

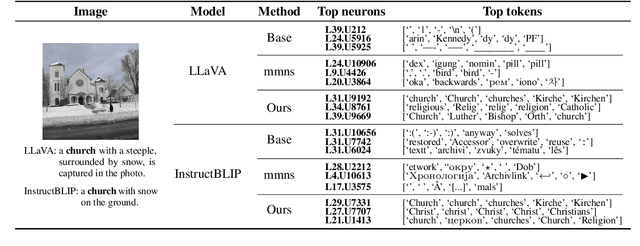

Finding and Editing Multi-Modal Neurons in Pre-Trained Transformer

Nov 13, 2023

Multi-modal large language models (LLMs) have achieved powerful capabilities for visual semantic understanding in recent years. However, little is known about how LLMs comprehend visual information and interpret different modalities of features. In this paper, we propose a new method for identifying multi-modal neurons in transformer-based multi-modal LLMs. Through a series of experiments, we highlight three critical properties of multi-modal neurons using four well-designed quantitative evaluation metrics. Furthermore, we introduce a knowledge editing method based on the identified multi-modal neurons, for modifying a specific token to another designated token. We hope our findings can inspire further explanatory research on understanding the mechanisms of multi-modal LLMs.

TIAGo RL: Simulated Reinforcement Learning Environments with Tactile Data for Mobile Robots

Nov 13, 2023

Tactile information is important for robust performance in robotic tasks that involve physical interaction, such as object manipulation. However, with more data included in the reasoning and control process, modeling behavior becomes increasingly difficult. Deep Reinforcement Learning (DRL) has produced promising results for learning complex behavior in various domains, including tactile-based manipulation in robotics. In this work, we present our open-source reinforcement learning environments for the TIAGo service robot. They produce tactile sensor measurements that resemble those of a real sensorised gripper for TIAGo, encouraging research in transfer learning of DRL policies. Lastly, we show preliminary training results of a learned force control policy and compare it to a classical PI controller.
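For readers unfamiliar with the baseline named above, the following is a minimal sketch of a discrete-time PI controller driving a toy force signal towards a target. The plant model, gains, and time step are assumptions chosen for illustration; they are not taken from the TIAGo environments.

```python
from dataclasses import dataclass


@dataclass
class PIController:
    kp: float  # proportional gain
    ki: float  # integral gain
    dt: float  # control period in seconds
    integral: float = 0.0

    def step(self, target: float, measured: float) -> float:
        """Return the command for one control period."""
        error = target - measured
        self.integral += error * self.dt
        return self.kp * error + self.ki * self.integral


def simulate(controller: PIController, target: float, steps: int) -> list[float]:
    """Toy plant (assumed): the measured force integrates the command."""
    force = 0.0
    history = []
    for _ in range(steps):
        command = controller.step(target, force)
        force += command * controller.dt
        history.append(force)
    return history


# Hypothetical gains; the simulated force settles at the 1.0 target.
forces = simulate(PIController(kp=2.0, ki=1.0, dt=0.01), target=1.0, steps=5000)
```

A learned policy would replace `PIController.step` with a function of the tactile observations, which is the kind of comparison the abstract describes.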

Scaling Up Music Information Retrieval Training with Semi-Supervised Learning

Oct 02, 2023

In the era of data-driven Music Information Retrieval (MIR), the scarcity of labeled data has been one of the major obstacles to the success of MIR tasks. In this work, we leverage the semi-supervised teacher-student training approach to improve MIR tasks. For training, we scale up the unlabeled music data to 240k hours, which is much larger than any public MIR dataset. We iteratively create and refine the pseudo-labels in the noisy teacher-student training process. Knowledge expansion is also explored to iteratively scale up the model sizes from fewer than 3M to almost 100M parameters. We study the performance correlation between data size and model size in the experiments. By scaling up both model size and training data, our models achieve state-of-the-art results on several MIR tasks compared to models that are either trained in a supervised manner or based on a self-supervised pretrained model. To our knowledge, this is the first attempt to study the effects of scaling up both model and training data for a variety of MIR tasks.

u-LLaVA: Unifying Multi-Modal Tasks via Large Language Model

Nov 09, 2023

Recent advances such as LLaVA and Mini-GPT4 have successfully integrated visual information into LLMs, yielding inspiring outcomes and giving rise to a new generation of multi-modal LLMs, or MLLMs. Nevertheless, these methods struggle with hallucinations and mutual interference between tasks. To tackle these problems, we propose u-LLaVA, an efficient and accurate approach to adapting to downstream tasks that uses an LLM as a bridge to connect multiple expert models. First, we incorporate the modality alignment module and multi-task modules into the LLM. Then, we reorganize or rebuild multi-type public datasets to enable efficient modality alignment and instruction following. Finally, task-specific information is extracted from the trained LLM and provided to different modules for solving downstream tasks. The overall framework is simple, effective, and achieves state-of-the-art performance across multiple benchmarks. We also make our model, the generated data, and the code base publicly available.

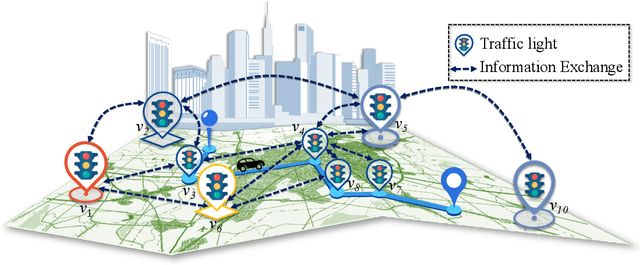

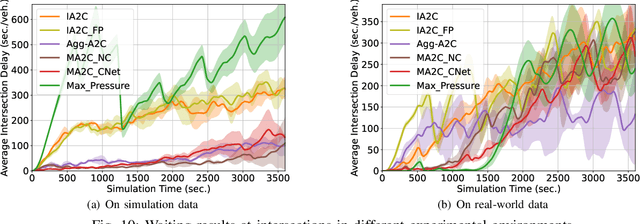

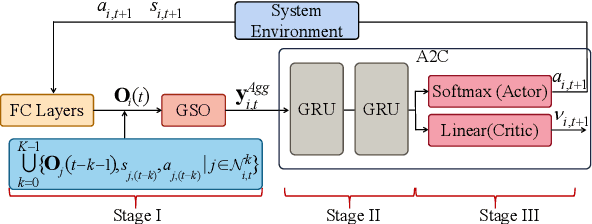

Learning Decentralized Traffic Signal Controllers with Multi-Agent Graph Reinforcement Learning

Nov 07, 2023

This paper considers optimal traffic signal control in smart cities, which is treated as a complex networked system control problem. Given the interacting dynamics among traffic lights and road networks, attaining controller adaptivity and scalability stands out as a primary challenge. Capturing the spatial-temporal correlation among traffic lights under the framework of Multi-Agent Reinforcement Learning (MARL) is a promising solution. Nevertheless, existing MARL algorithms ignore effective information aggregation, which is fundamental for improving the learning capacity of decentralized agents. In this paper, we design a new decentralized control architecture with improved environmental observability to capture the spatial-temporal correlation. Specifically, we first develop a topology-aware information aggregation strategy to extract correlation-related information from unstructured data gathered in the road network. In particular, we transform the road network topology into a graph shift operator by forming a diffusion process on the topology, which subsequently facilitates the construction of graph signals. A diffusion convolution module is developed, forming a new MARL algorithm, which endows agents with the capabilities of graph learning. Extensive experiments based on both synthetic and real-world datasets verify that our proposal outperforms existing decentralized algorithms.
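The idea of turning a road topology into a graph shift operator and applying a diffusion convolution can be sketched as follows. This sketch assumes a random-walk (row-normalized adjacency) operator and a fixed set of per-hop weights; the paper's actual operator and learned parameters may differ, and every name and number here is hypothetical.

```python
import numpy as np


def random_walk_operator(adjacency):
    """Row-normalize the adjacency matrix so that each row sums to 1."""
    adjacency = np.asarray(adjacency, dtype=float)
    degree = adjacency.sum(axis=1, keepdims=True)
    degree[degree == 0] = 1.0  # leave isolated nodes unchanged
    return adjacency / degree


def diffusion_convolution(adjacency, signals, weights):
    """Weighted sum of 0-hop, 1-hop, ..., K-hop diffused node signals."""
    shift = random_walk_operator(adjacency)
    diffused = np.asarray(signals, dtype=float)
    output = weights[0] * diffused
    for weight in weights[1:]:
        diffused = shift @ diffused  # one more diffusion step
        output = output + weight * diffused
    return output


# Three intersections on a line; the signal is a made-up queue length per node.
adjacency = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
queues = [[4.0], [0.0], [2.0]]
smoothed = diffusion_convolution(adjacency, queues, weights=[1.0, 0.5])
```

In a learned module the per-hop weights would be trainable, so each agent could decide how much information from neighbouring intersections to aggregate.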

Image-Pointcloud Fusion based Anomaly Detection using PD-REAL Dataset

Nov 07, 2023

We present PD-REAL, a novel large-scale dataset for unsupervised anomaly detection (AD) in the 3D domain. It is motivated by the fact that 2D-only representations in the AD task may fail to capture the geometric structures of anomalies due to uncertainty in lighting conditions or shooting angles. PD-REAL consists entirely of Play-Doh models for 15 object categories and focuses on the analysis of potential benefits from 3D information in a controlled environment. Specifically, objects are first created with six types of anomalies, such as dent, crack, or perforation, and then photographed under different lighting conditions to mimic real-world inspection scenarios. To demonstrate the usefulness of 3D information, we use a commercially available RealSense camera to capture RGB and depth images. Compared to the existing 3D dataset for AD tasks, data acquisition for PD-REAL is significantly cheaper and more easily scalable, and variables are easier to control. Extensive evaluations with state-of-the-art AD algorithms on our dataset demonstrate the benefits as well as challenges of using 3D information. Our dataset can be downloaded from https://github.com/Andy-cs008/PD-REAL

Information Leakage from Data Updates in Machine Learning Models

Sep 20, 2023

In this paper we consider the setting where machine learning models are retrained on updated datasets in order to incorporate the most up-to-date information or reflect distribution shifts. We investigate whether one can infer information about these updates in the training data (e.g., changes to attribute values of records). Here, the adversary has access to snapshots of the machine learning model before and after the change in the dataset occurs. Contrary to the existing literature, we assume that an attribute of one or more training data points is changed, rather than entire data records being removed or added. We propose attacks based on the difference in the prediction confidence of the original model and the updated model. We evaluate our attack methods on two public datasets along with multi-layer perceptron and logistic regression models. We validate that two snapshots of the model can result in higher information leakage in comparison to having access to only the updated model. Moreover, we observe that data records with rare values are more vulnerable to attacks, which points to the disparate vulnerability of privacy attacks in the update setting. When multiple records with the same original attribute value are updated to the same new value (i.e., repeated changes), the attacker is more likely to correctly guess the updated values since repeated changes leave a larger footprint on the trained model. These observations point to the vulnerability of machine learning models to attribute inference attacks in the update setting.
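One plausible reading of an attack "based on the difference in the prediction confidence" is sketched below: the adversary tries each candidate attribute value and picks the one for which the updated model gains the most confidence over the original model. This is an illustrative assumption, not the paper's exact procedure; the toy logistic models, weights, and record are all made up.

```python
import numpy as np


def make_model(weights):
    """Toy logistic model: returns [P(class 0), P(class 1)] for a record."""
    weights = np.asarray(weights, dtype=float)

    def predict(record):
        p = 1.0 / (1.0 + np.exp(-(weights @ record)))
        return np.array([1.0 - p, p])

    return predict


def infer_updated_value(predict_before, predict_after, record, attr_index,
                        candidates, label):
    """Pick the candidate value with the largest confidence gain on `label`."""
    scores = {}
    for value in candidates:
        guess = np.array(record, dtype=float)
        guess[attr_index] = value
        scores[value] = predict_after(guess)[label] - predict_before(guess)[label]
    best = max(scores, key=scores.get)
    return best, scores


# Hypothetical snapshots: only the updated model reacts to attribute 1.
before = make_model([1.0, 0.0])
after = make_model([1.0, 2.0])
best, scores = infer_updated_value(before, after, record=[0.5, 0.0],
                                   attr_index=1, candidates=[0.0, 1.0], label=1)
```

Having both snapshots lets the adversary cancel out whatever confidence the original model already had, which fits the abstract's observation that two snapshots leak more than the updated model alone.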

Bridging the Gap between Multi-focus and Multi-modal: A Focused Integration Framework for Multi-modal Image Fusion

Nov 03, 2023

Multi-modal image fusion (MMIF) integrates valuable information from different modality images into a fused one. However, the fusion of multiple visible images with different focal regions and infrared images is an unprecedented challenge in real MMIF applications. This is because of the limited depth of focus of visible optical lenses, which impedes the simultaneous capture of the focal information within the same scene. To address this issue, in this paper, we propose an MMIF framework for joint focused integration and modalities information extraction. Specifically, a semi-sparsity-based smoothing filter is introduced to decompose the images into structure and texture components. Subsequently, a novel multi-scale operator is proposed to fuse the texture components, capable of detecting significant information by considering the pixel focus attributes and relevant data from various modal images. Additionally, to achieve an effective capture of scene luminance and reasonable contrast maintenance, we consider the distribution of energy information in the structural components in terms of multi-directional frequency variance and information entropy. Extensive experiments on existing MMIF datasets, as well as the object detection and depth estimation tasks, consistently demonstrate that the proposed algorithm can surpass the state-of-the-art methods in visual perception and quantitative evaluation. The code is available at https://github.com/ixilai/MFIF-MMIF.

Multimodal Machine Unlearning

Nov 18, 2023

Machine Unlearning is the process of removing specific training data samples and their corresponding effects from an already trained model. It has significant practical benefits, such as purging private, inaccurate, or outdated information from trained models without the need for complete re-training. Unlearning within a multimodal setting presents unique challenges due to the intrinsic dependencies between different data modalities and the high cost of training on large multimodal datasets and architectures. Current approaches to machine unlearning have not fully addressed these challenges. To bridge this gap, we introduce MMUL, a machine unlearning approach specifically designed for multimodal data and models. MMUL formulates the multimodal unlearning task by focusing on three key properties: (a) modality decoupling, which effectively decouples the association between individual unimodal data points within multimodal inputs marked for deletion, rendering them as unrelated data points within the model's context; (b) unimodal knowledge retention, which retains the unimodal representation capability of the model post-unlearning; and (c) multimodal knowledge retention, which retains the multimodal representation capability of the model post-unlearning. MMUL is efficient to train and is not constrained by the requirement of using a strongly convex loss. Experiments on two multimodal models and four multimodal benchmark datasets, including vision-language and graph-language datasets, show that MMUL outperforms existing baselines, gaining an average improvement of +17.6 points against the best-performing unimodal baseline in distinguishing between deleted and remaining data. In addition, MMUL can largely maintain pre-existing knowledge of the original model post-unlearning, with a performance gap of only 0.3 points compared to retraining a new model from scratch.
