"Information": models, code, and papers

Trajectory Synthesis for Fisher Information Maximization

Sep 11, 2017

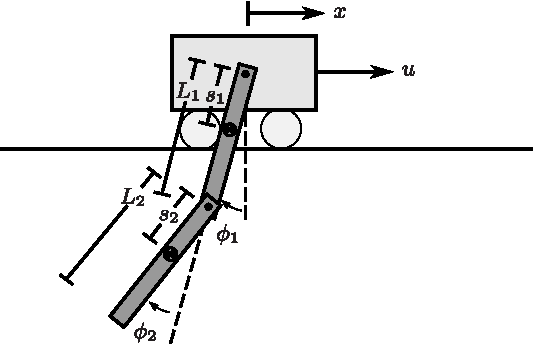

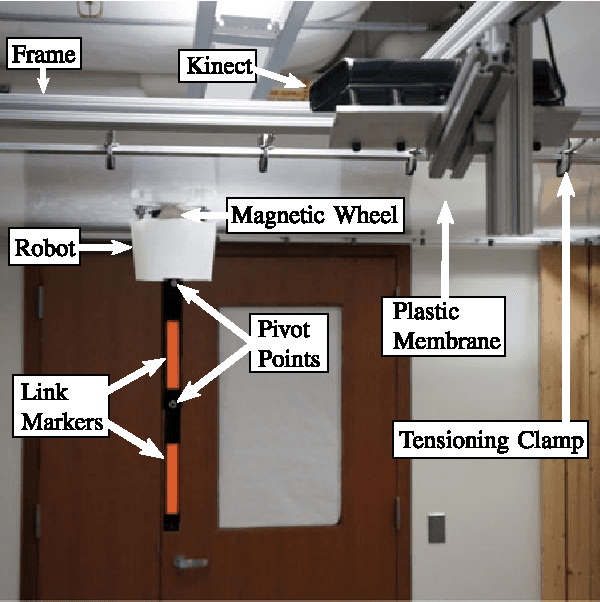

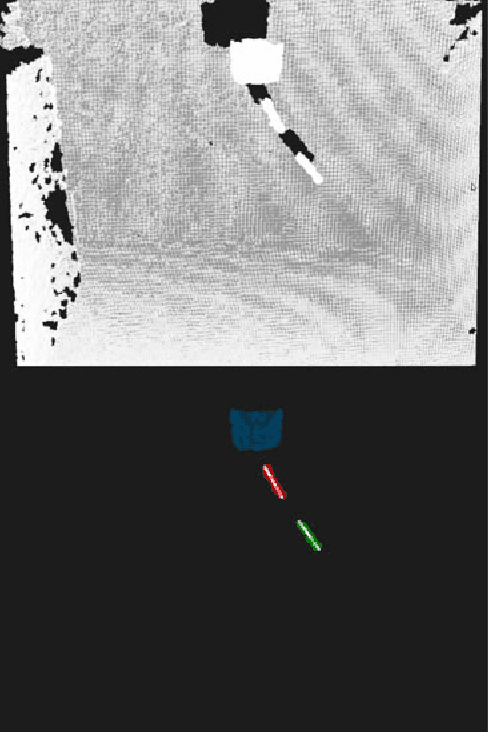

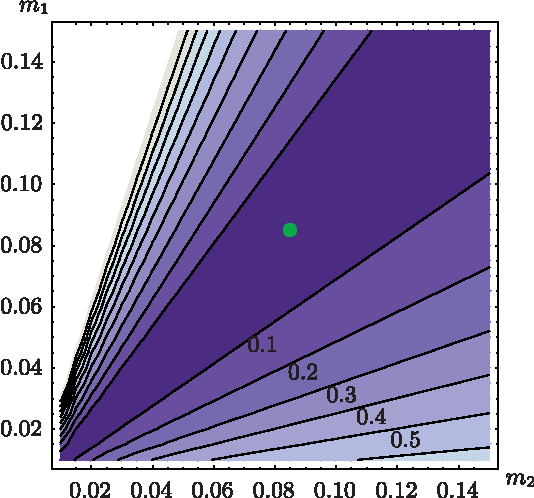

Estimation of model parameters in a dynamic system can be significantly improved with the choice of experimental trajectory. For general, nonlinear dynamic systems, finding globally "best" trajectories is typically not feasible; however, given an initial estimate of the model parameters and an initial trajectory, we present a continuous-time optimization method that produces a locally optimal trajectory for parameter estimation in the presence of measurement noise. The optimization algorithm is formulated to find system trajectories that improve a norm on the Fisher information matrix. A double-pendulum cart apparatus is used to numerically and experimentally validate this technique. In simulation, the optimized trajectory increases the minimum eigenvalue of the Fisher information matrix by three orders of magnitude compared to the initial trajectory. Experimental results show that this optimized trajectory translates to an order of magnitude improvement in the parameter estimate error in practice.

* 12 pages
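To make the idea of "improving a norm on the Fisher information matrix" concrete, the following is a minimal sketch on a toy model, not the paper's double-pendulum cart. It assumes a hypothetical measurement model `y_k = theta1*u_k + theta2*u_k**2` with Gaussian noise of standard deviation `sigma`, where the trajectory `u_k` is the experimenter's choice. The function names are illustrative only.

```python
import math

def fisher_information(trajectory, sigma=1.0):
    """2x2 Fisher information for the assumed toy model
    y_k = theta1*u_k + theta2*u_k**2 + noise, noise ~ N(0, sigma^2)."""
    f11 = f12 = f22 = 0.0
    for u in trajectory:
        s1, s2 = u, u * u  # sensitivities dy/dtheta1 and dy/dtheta2
        f11 += s1 * s1
        f12 += s1 * s2
        f22 += s2 * s2
    scale = 1.0 / sigma ** 2
    return [[f11 * scale, f12 * scale], [f12 * scale, f22 * scale]]

def min_eigenvalue(m):
    """Smallest eigenvalue of a symmetric 2x2 matrix (closed form)."""
    trace = m[0][0] + m[1][1]
    det = m[0][0] * m[1][1] - m[0][1] * m[1][0]
    disc = max(trace * trace - 4.0 * det, 0.0)  # clamp rounding noise
    return 0.5 * (trace - math.sqrt(disc))

# A constant trajectory cannot separate theta1 from theta2;
# a varied trajectory can.
flat = [1.0] * 10
varied = [0.2 * k for k in range(10)]

eig_flat = min_eigenvalue(fisher_information(flat))
eig_varied = min_eigenvalue(fisher_information(varied))
print(eig_flat, eig_varied)
```

In this toy case the constant trajectory gives a singular information matrix, while the varied one gives a strictly positive minimum eigenvalue. That is the kind of quantity the abstract reports improving by three orders of magnitude.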

Electroencephalogram Signal Processing with Independent Component Analysis and Cognitive Stress Classification using Convolutional Neural Networks

Aug 22, 2021

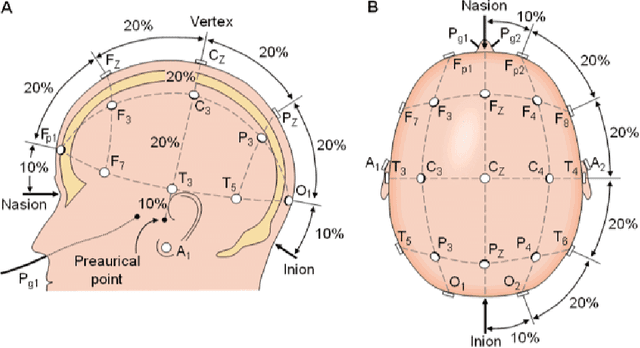

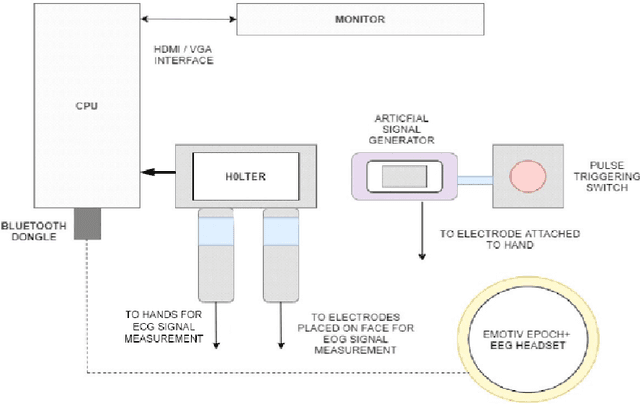

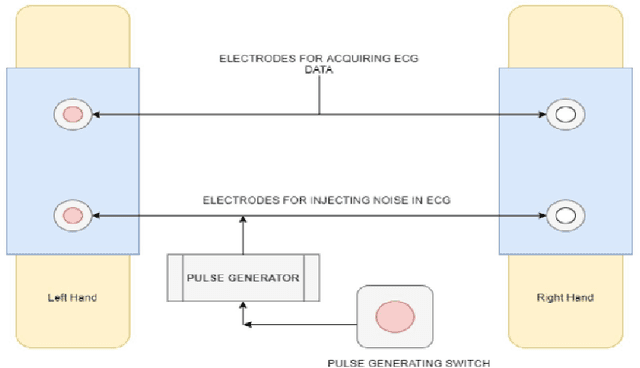

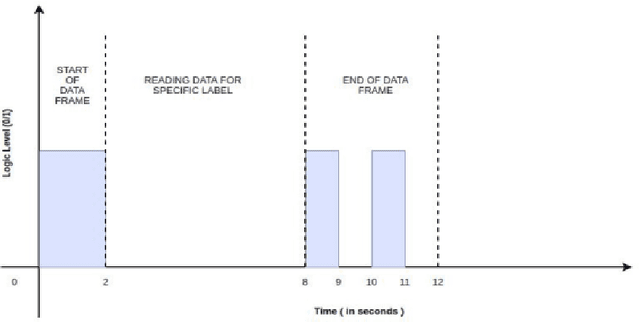

An electroencephalogram (EEG) is a recording of the brain's bio-electrical activity acquired from electrodes placed on the scalp. EEG recordings are contaminated predominantly by the electrooculogram (EOG) signal. Because this artifact has a higher magnitude than the EEG signal itself, it has to be removed to better understand the functioning of the human brain in applications such as medical diagnosis. This paper proposes using Independent Component Analysis (ICA) together with cross-correlation to denoise the EEG signal. A component is selected by comparing its cross-correlation coefficient against a threshold value, and its effect is reduced instead of being zeroed out completely, which reduces the information loss. Results on the recorded data show that this algorithm can eliminate the EOG artifact with little loss of EEG data. The denoising is verified by an increase in SNR and a decrease in the cross-correlation coefficient. The denoised signals are used to train an Artificial Neural Network (ANN) that examines the features of the input EEG signal and predicts the stress level of the individual.
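The selection-and-attenuation step described in the abstract can be sketched as follows. This is a minimal illustration, assuming the independent components have already been estimated by some ICA routine and that an EOG reference channel is available. The `threshold` and `gain` values and the function names are assumptions, not values from the paper.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

def attenuate_artifact_components(components, eog_reference,
                                  threshold=0.7, gain=0.1):
    """Scale down (rather than zero out) components that correlate
    strongly with the EOG reference. threshold and gain are assumed."""
    cleaned = []
    for comp in components:
        r = abs(pearson(comp, eog_reference))
        scale = gain if r >= threshold else 1.0
        cleaned.append([scale * v for v in comp])
    return cleaned

eog = [0, 1, 4, 1, 0, 0, 3, 0]           # blink-like reference
comp_a = [2 * v for v in eog]            # artifact-dominated component
comp_b = [1, -1, 1, -1, 1, -1, 1, -1]    # unrelated oscillation
cleaned = attenuate_artifact_components([comp_a, comp_b], eog)
```

The cleaned components would then be projected back through the ICA mixing matrix to obtain the denoised channels. That step is omitted here.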

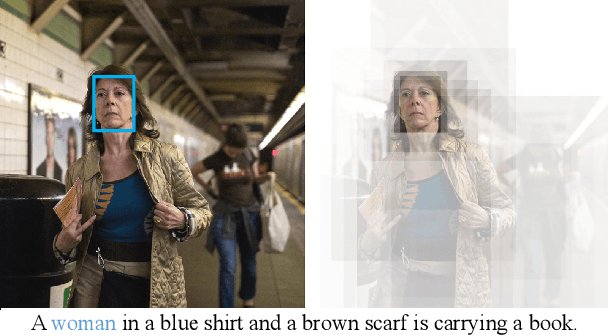

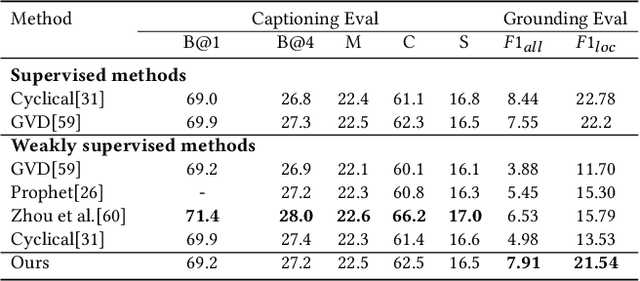

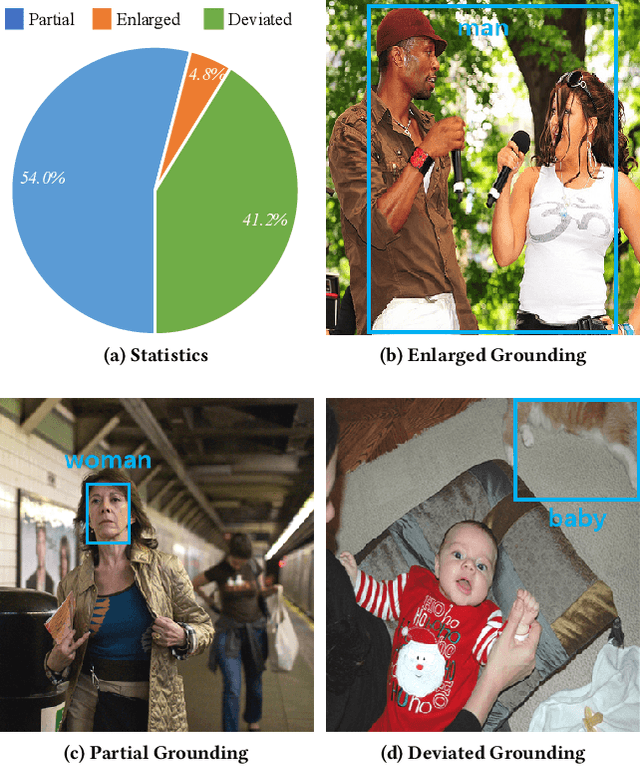

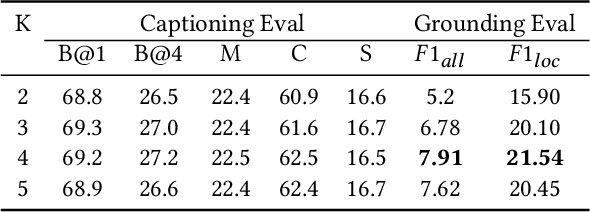

Distributed Attention for Grounded Image Captioning

Aug 22, 2021

We study the problem of weakly supervised grounded image captioning. That is, given an image, the goal is to automatically generate a sentence describing the context of the image with each noun word grounded to the corresponding region in the image. This task is challenging due to the lack of explicit fine-grained region-word alignments as supervision. Previous weakly supervised methods mainly explore various kinds of regularization schemes to improve attention accuracy. However, their performance is still far from that of fully supervised methods. One main issue that has been ignored is that the attention for generating visually groundable words may focus only on the most discriminative parts and cannot cover the whole object. To this end, we propose a simple yet effective method to alleviate this issue, termed the partial grounding problem in our paper. Specifically, we design a distributed attention mechanism that forces the network to aggregate information from multiple spatially different regions with consistent semantics while generating the words. Therefore, the union of the focused region proposals should form a visual region that encloses the object of interest completely. Extensive experiments demonstrate the superiority of our proposed method over the state of the art.

Wind Power Projection using Weather Forecasts by Novel Deep Neural Networks

Aug 22, 2021

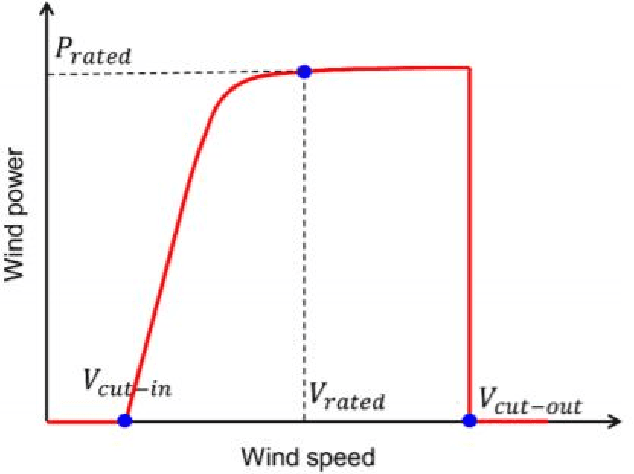

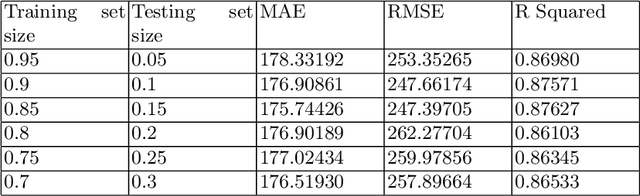

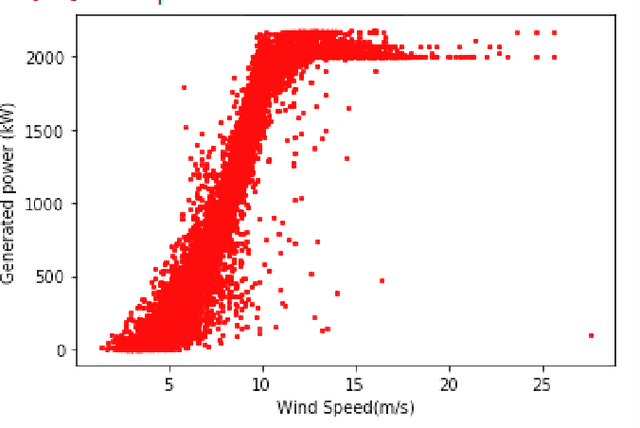

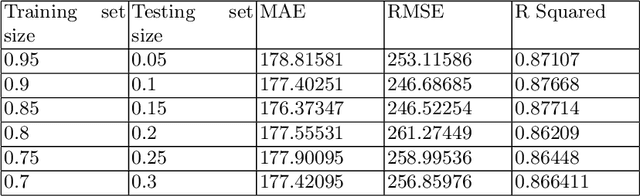

The transition from conventional methods of energy production to renewable energy production necessitates better prediction models of the upcoming supply of renewable energy. In wind power production, forecasting error cannot be eliminated owing to the intermittence of wind. For successful power grid integration, it is crucial to understand the uncertainties that arise in predicting wind power production and use this information to build an accurate and reliable forecast. This can be achieved by observing the fluctuations in wind power production with changes in different parameters such as wind speed, temperature, and wind direction, and deriving functional dependencies for the same. Using optimized machine learning algorithms, it is possible to find obscured patterns in the observations and obtain meaningful data, which can then be used to accurately predict wind power requirements. Using data provided by Gamesa's wind farm at Bableshwar, the paper explores both parametric and non-parametric models for predicting wind power using power curves. The obtained results are compared to better understand the accuracy of the models and to determine the most suitable model for predicting wind power production on the given data set.

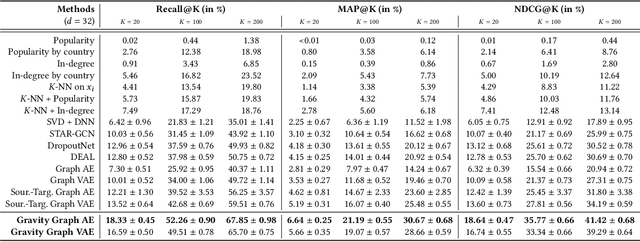

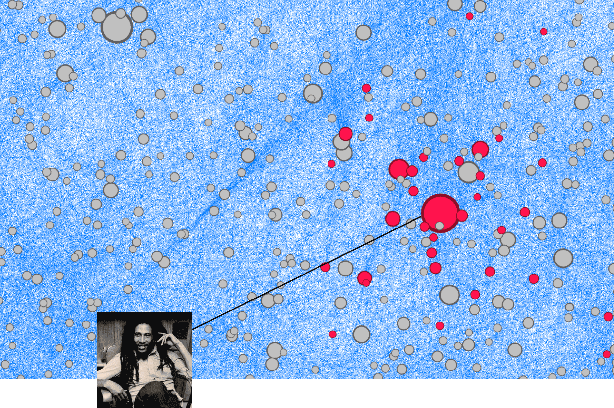

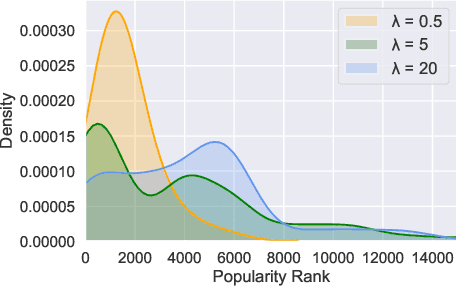

Cold Start Similar Artists Ranking with Gravity-Inspired Graph Autoencoders

Aug 02, 2021

On an artist's profile page, music streaming services frequently recommend a ranked list of "similar artists" that fans also liked. However, implementing such a feature is challenging for new artists, for which usage data on the service (e.g. streams or likes) is not yet available. In this paper, we model this cold start similar artists ranking problem as a link prediction task in a directed and attributed graph, connecting artists to their top-k most similar neighbors and incorporating side musical information. Then, we leverage a graph autoencoder architecture to learn node embedding representations from this graph, and to automatically rank the top-k most similar neighbors of new artists using a gravity-inspired mechanism. We empirically show the flexibility and the effectiveness of our framework, by addressing a real-world cold start similar artists ranking problem on a global music streaming service. Along with this paper, we also publicly release our source code as well as the industrial graph data from our experiments.
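The abstract does not give the scoring formula. As a rough sketch, one common form of a gravity-inspired decoder scores a directed link from node `i` to node `j` as `sigmoid(m_j - lam * log(||z_i - z_j||^2))`, where `z` are node embeddings and `m_j` is a learned "mass" of the target. The code below assumes that form. The embeddings, masses, `lam`, and all names are made-up illustrative values.

```python
import math

def gravity_score(z_i, z_j, mass_j, lam=1.0, eps=1e-9):
    """Assumed gravity-style score for a directed link i -> j."""
    dist_sq = sum((a - b) ** 2 for a, b in zip(z_i, z_j))
    logit = mass_j - lam * math.log(dist_sq + eps)  # eps avoids log(0)
    return 1.0 / (1.0 + math.exp(-logit))

def rank_similar(new_artist_embedding, catalog, k=2):
    """Return the k catalog artists with the highest score.
    catalog maps name -> (embedding, mass)."""
    scored = [
        (name, gravity_score(new_artist_embedding, z, m))
        for name, (z, m) in catalog.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return [name for name, _ in scored[:k]]

catalog = {
    "near_popular": ([0.1, 0.0], 2.0),
    "near_niche": ([0.1, 0.1], 0.0),
    "far_popular": ([3.0, 3.0], 2.0),
}
top = rank_similar([0.0, 0.0], catalog, k=2)
print(top)  # ['near_popular', 'near_niche']
```

Because the score depends on the mass of the target only, the score for `i -> j` generally differs from the score for `j -> i`, which is what makes the formulation suitable for a directed graph.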

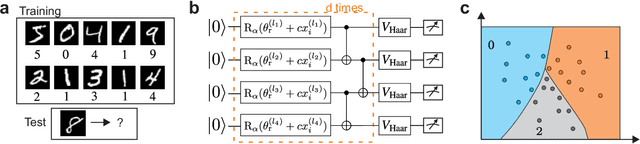

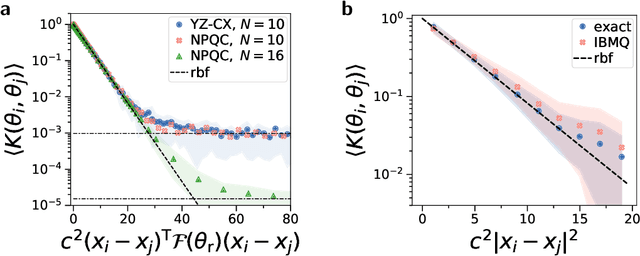

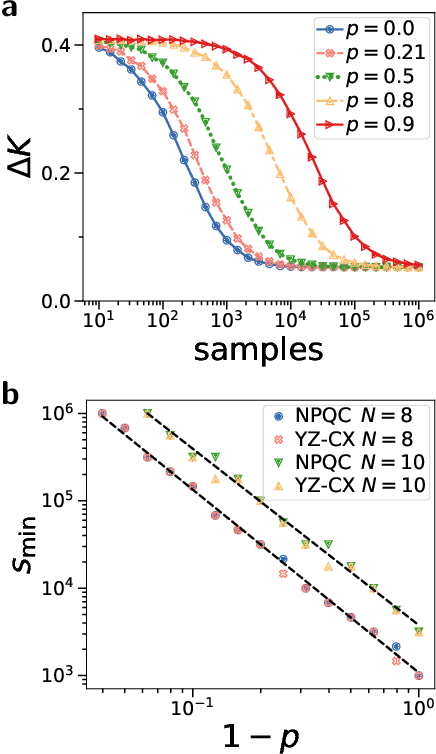

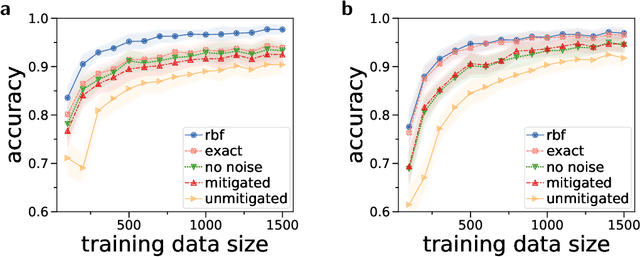

Large-scale quantum machine learning

Aug 02, 2021

Quantum computers promise to enhance machine learning for practical applications. Quantum machine learning for real-world data has to handle extensive amounts of high-dimensional data. However, conventional methods for measuring quantum kernels are impractical for large datasets as they scale with the square of the dataset size. Here, we measure quantum kernels using randomized measurements to gain a quadratic speedup in computation time and quickly process large datasets. Further, we efficiently encode high-dimensional data into quantum computers with the number of features scaling linearly with the circuit depth. The encoding is characterized by the quantum Fisher information metric and is related to the radial basis function kernel. We demonstrate the advantages and speedups of our methods by classifying images with the IBM quantum computer. Our approach is exceptionally robust to noise via a complementary error mitigation scheme. Using currently available quantum computers, the MNIST database can be processed within 220 hours instead of 10 years which opens up industrial applications of quantum machine learning.
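The scaling argument can be illustrated with simple counting. The sketch below assumes that a conventional approach needs one quantum measurement setting per pair of data points, while a randomized-measurement approach needs one per data point, with kernel entries then computed classically. This reading is an interpretation of the abstract, and the function names are hypothetical.

```python
def pairwise_kernel_evaluations(n_samples):
    """Distinct off-diagonal entries of a symmetric n x n kernel matrix."""
    return n_samples * (n_samples - 1) // 2

def per_sample_measurements(n_samples):
    """Assumed cost when each data point is measured once."""
    return n_samples

for n in (100, 1000, 10000):
    print(n, pairwise_kernel_evaluations(n), per_sample_measurements(n))
```

Growing the dataset by a factor of 10 multiplies the pairwise count by roughly 100 but the per-sample count by only 10.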

CodeT5: Identifier-aware Unified Pre-trained Encoder-Decoder Models for Code Understanding and Generation

Sep 02, 2021

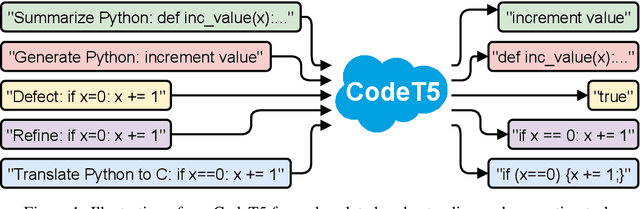

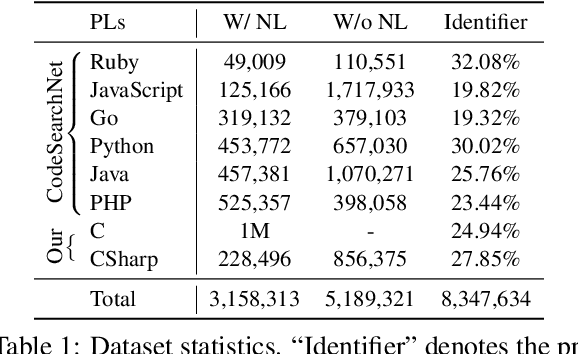

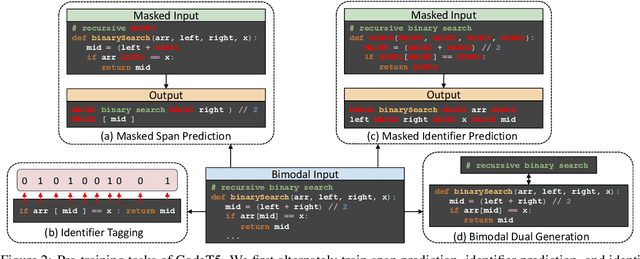

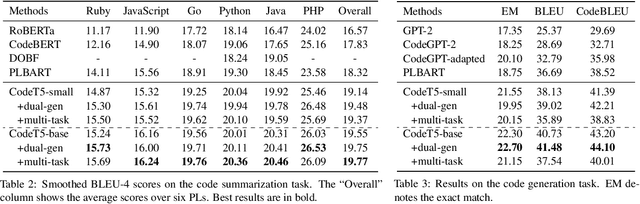

Pre-trained models for Natural Languages (NL) like BERT and GPT have been recently shown to transfer well to Programming Languages (PL) and largely benefit a broad set of code-related tasks. Despite their success, most current methods either rely on an encoder-only (or decoder-only) pre-training that is suboptimal for generation (resp. understanding) tasks or process the code snippet in the same way as NL, neglecting the special characteristics of PL such as token types. We present CodeT5, a unified pre-trained encoder-decoder Transformer model that better leverages the code semantics conveyed from the developer-assigned identifiers. Our model employs a unified framework to seamlessly support both code understanding and generation tasks and allows for multi-task learning. Besides, we propose a novel identifier-aware pre-training task that enables the model to distinguish which code tokens are identifiers and to recover them when they are masked. Furthermore, we propose to exploit the user-written code comments with a bimodal dual generation task for better NL-PL alignment. Comprehensive experiments show that CodeT5 significantly outperforms prior methods on understanding tasks such as code defect detection and clone detection, and generation tasks across various directions including PL-NL, NL-PL, and PL-PL. Further analysis reveals that our model can better capture semantic information from code. Our code and pre-trained models are released at https://github.com/salesforce/CodeT5.
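The two identifier-aware ideas in the abstract (tagging which tokens are identifiers, and masking identifiers so they can be recovered) can be approximated for Python source with the standard library alone. This is only a sketch of the data preparation idea, not CodeT5's actual tokenizer or pre-training pipeline. The `MASK<n>` placeholder format and the function names are assumptions.

```python
import io
import keyword
import tokenize

# Layout-only token types that carry no text worth tagging.
_SKIP = {tokenize.NEWLINE, tokenize.NL, tokenize.INDENT,
         tokenize.DEDENT, tokenize.ENDMARKER}

def tag_identifiers(source):
    """Return (token_text, is_identifier) pairs for Python source."""
    tagged = []
    reader = io.StringIO(source).readline
    for tok in tokenize.generate_tokens(reader):
        if tok.type in _SKIP:
            continue
        is_ident = tok.type == tokenize.NAME and not keyword.iskeyword(tok.string)
        tagged.append((tok.string, int(is_ident)))
    return tagged

def mask_identifiers(source):
    """Replace each distinct identifier with one shared placeholder."""
    mapping, masked = {}, []
    for text, is_ident in tag_identifiers(source):
        if is_ident:
            mapping.setdefault(text, f"MASK{len(mapping)}")
            masked.append(mapping[text])
        else:
            masked.append(text)
    return masked, mapping

snippet = "def add(a, b):\n    return a + b\n"
masked, mapping = mask_identifiers(snippet)
print(masked)
print(mapping)
```

Every occurrence of the same identifier receives the same placeholder, so a model trained to fill in the placeholders has to use the surrounding code to infer each name.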

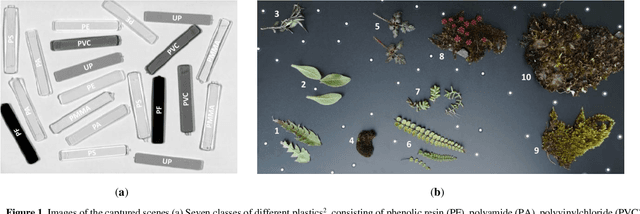

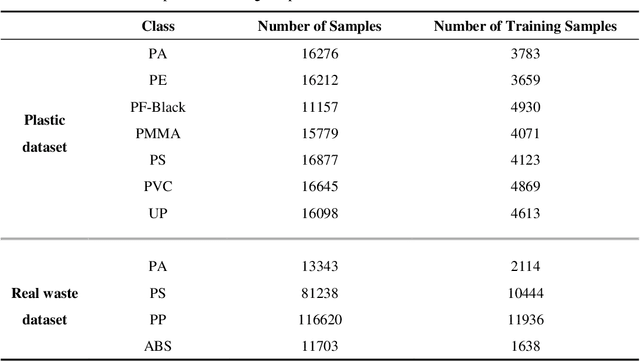

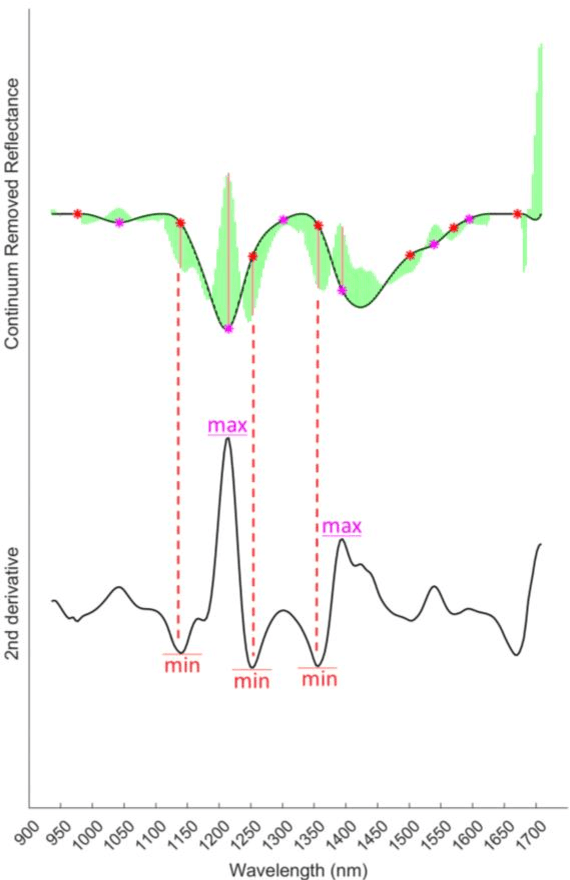

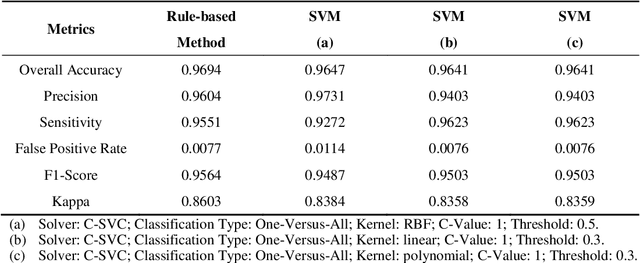

Rule-Based Classification of Hyperspectral Imaging Data

Jul 21, 2021

Due to its high spatial and spectral information content, hyperspectral imaging opens up new possibilities for a better understanding of data and scenes in a wide variety of applications. An essential part of this process of understanding is classification. In this article we present a general classification approach based on the shape of spectral signatures. In contrast to classical classification approaches (e.g. SVM, KNN), not only reflectance values are considered: parameters such as curvature points, curvature values, and the curvature behavior of spectral signatures are also used to develop shape-describing rules, which are then applied in a rule-based classification procedure using IF-THEN queries. The flexibility and efficiency of the methodology are demonstrated using datasets from two different application fields, leading to convincing results with good performance.
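A shape-based IF-THEN rule of the kind described can be sketched as follows. The discrete second difference stands in for "curvature" here. The rules, class labels, and `min_strength` threshold are all invented for illustration and are not taken from the article.

```python
def second_difference(spectrum):
    """Discrete curvature proxy at each interior band."""
    return [
        spectrum[i - 1] - 2 * spectrum[i] + spectrum[i + 1]
        for i in range(1, len(spectrum) - 1)
    ]

def classify(spectrum, min_strength=0.1):
    """Hypothetical rule set based on the strongest curvature point."""
    curv = second_difference(spectrum)
    strongest = max(range(len(curv)), key=lambda i: abs(curv[i]))
    value = curv[strongest]
    band = strongest + 1  # index in the original spectrum

    if abs(value) < min_strength:
        return "flat"
    if value < 0:  # concave down: a reflectance peak
        if band < len(spectrum) / 2:
            return "peak_low_band"
        return "peak_high_band"
    return "absorption_dip"  # concave up: a reflectance dip

print(classify([0.2, 0.2, 0.8, 0.2, 0.2, 0.2, 0.2]))  # peak_low_band
print(classify([0.5, 0.5, 0.5, 0.1, 0.5, 0.5]))       # absorption_dip
print(classify([0.3] * 6))                            # flat
```

Unlike a classifier trained on raw reflectance values, rules like these respond to where and how sharply the signature bends, which is the property the abstract emphasizes.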

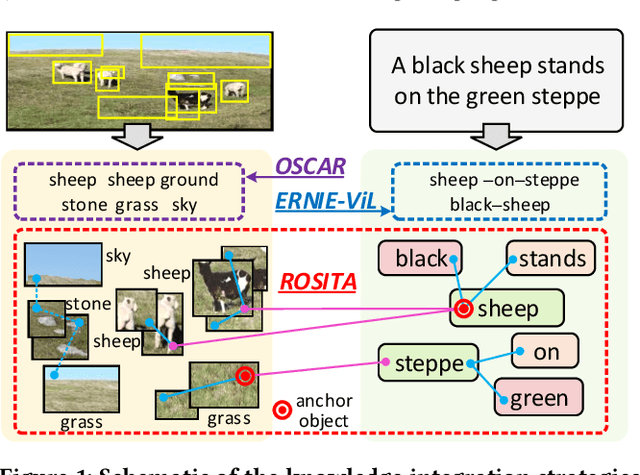

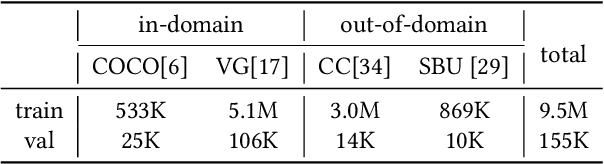

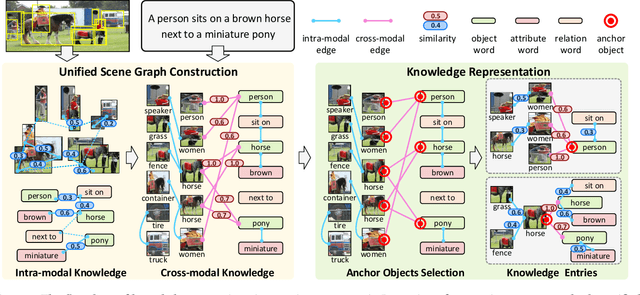

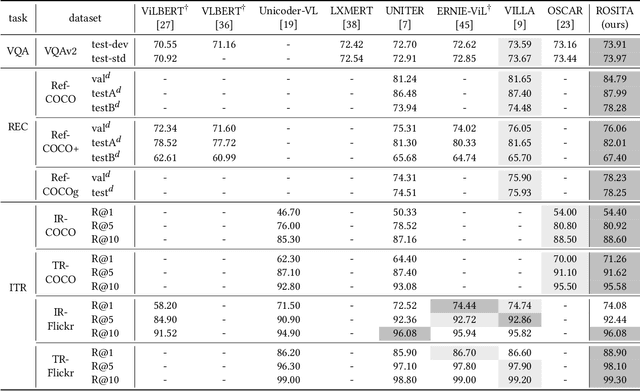

ROSITA: Enhancing Vision-and-Language Semantic Alignments via Cross- and Intra-modal Knowledge Integration

Aug 16, 2021

Vision-and-language pretraining (VLP) aims to learn generic multimodal representations from massive image-text pairs. While various successful attempts have been proposed, learning fine-grained semantic alignments between image-text pairs plays a key role in their approaches. Nevertheless, most existing VLP approaches have not fully utilized the intrinsic knowledge within the image-text pairs, which limits the effectiveness of the learned alignments and further restricts the performance of their models. To this end, we introduce a new VLP method called ROSITA, which integrates the cross- and intra-modal knowledge in a unified scene graph to enhance the semantic alignments. Specifically, we introduce a novel structural knowledge masking (SKM) strategy that uses the scene graph structure as a prior to perform masked language (region) modeling, which enhances the semantic alignments by eliminating the interference information within and across modalities. Extensive ablation studies and comprehensive analysis verify the effectiveness of ROSITA in semantic alignments. Pretrained with both in-domain and out-of-domain datasets, ROSITA significantly outperforms existing state-of-the-art VLP methods on three typical vision-and-language tasks over six benchmark datasets.

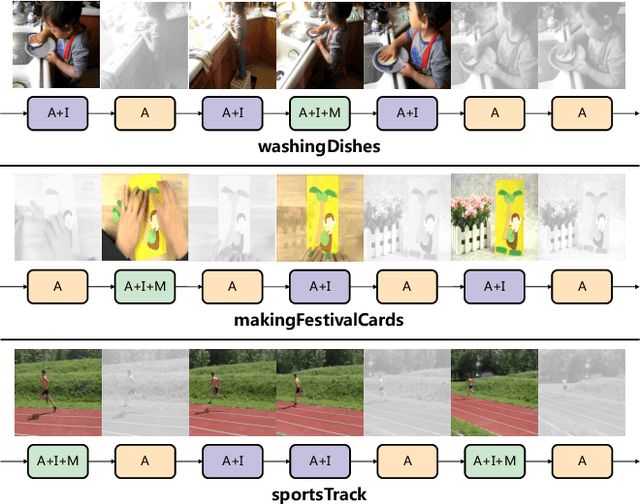

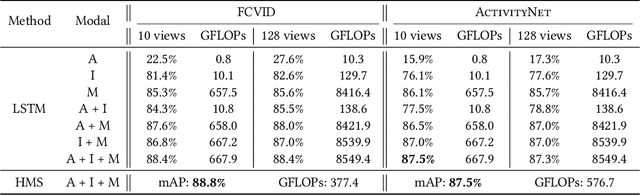

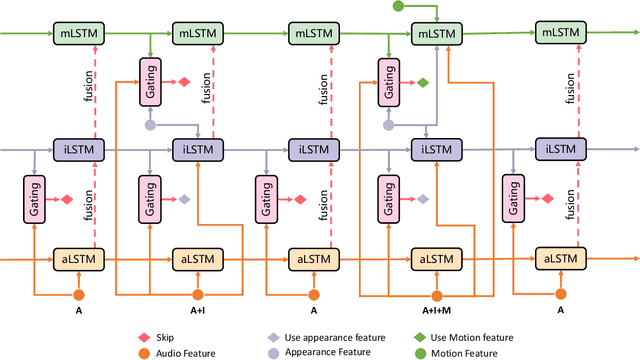

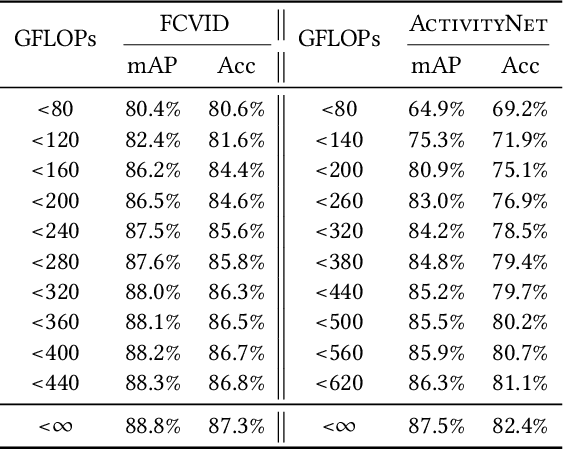

HMS: Hierarchical Modality Selection for Efficient Video Recognition

Apr 20, 2021

Videos are multimodal in nature. Conventional video recognition pipelines typically fuse multimodal features for improved performance. However, this is not only computationally expensive but also neglects the fact that different videos rely on different modalities for predictions. This paper introduces Hierarchical Modality Selection (HMS), a simple yet efficient multimodal learning framework for efficient video recognition. HMS operates on a low-cost modality, i.e., audio clues, by default, and dynamically decides on-the-fly whether to use computationally-expensive modalities, including appearance and motion clues, on a per-input basis. This is achieved by the collaboration of three LSTMs that are organized in a hierarchical manner. In particular, LSTMs that operate on high-cost modalities contain a gating module, which takes as inputs lower-level features and historical information to adaptively determine whether to activate its corresponding modality; otherwise it simply reuses historical information. We conduct extensive experiments on two large-scale video benchmarks, FCVID and ActivityNet, and the results demonstrate the proposed approach can effectively explore multimodal information for improved classification performance while requiring much less computation.
