"Information": models, code, and papers

FINN.no Slates Dataset: A new Sequential Dataset Logging Interactions, all Viewed Items and Click Responses/No-Click for Recommender Systems Research

Nov 05, 2021

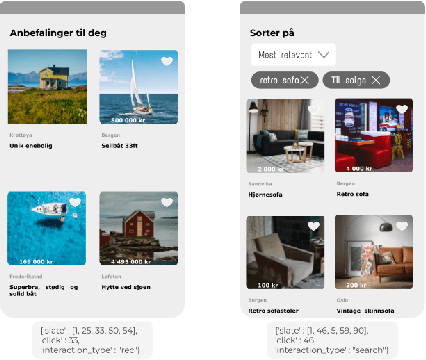

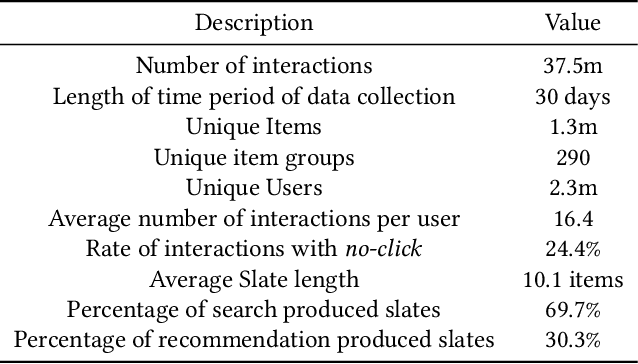

We present a novel recommender systems dataset that records the sequential interactions between users and an online marketplace. The users are sequentially presented with both recommendations and search results in the form of ranked lists of items, called slates, from the marketplace. The dataset includes the presented slates at each round, whether the user clicked on any of these items and which item the user clicked on. Although the usage of exposure data in recommender systems is growing, to our knowledge there is no open large-scale recommender systems dataset that includes the slates of items presented to the users at each interaction. As a result, most articles on recommender systems do not utilize this exposure information. Instead, the proposed models only depend on the user's click responses, and assume that the user is exposed to all the items in the item universe at each step, often called uniform candidate sampling. This is an incomplete assumption, as it treats items the user may never have been exposed to as if they had been seen. As a result, items might be incorrectly considered as not of interest to the user. Taking into account the actually shown slates allows the models to use a more natural likelihood, based on the click probability given the exposure set of items, as is prevalent in the bandit and reinforcement learning literature. \cite{Eide2021DynamicSampling} shows that likelihoods based on uniform candidate sampling (and similar assumptions) implicitly assume that the platform only shows the most relevant items to the user. This causes the recommender system to implicitly reinforce feedback loops and to be biased towards items previously exposed to the user.
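The difference between a slate-conditional likelihood and one that assumes exposure to the whole item universe can be illustrated with a minimal sketch. The softmax click model, the item names and the scores below are assumptions made for illustration; they are not taken from the dataset or from the authors' models.

```python
import math

def log_softmax_over(scores, candidates, clicked):
    """Log-probability of `clicked` under a softmax restricted to `candidates`."""
    m = max(scores[i] for i in candidates)  # subtract the max for numerical stability
    log_z = m + math.log(sum(math.exp(scores[i] - m) for i in candidates))
    return scores[clicked] - log_z

# Hypothetical relevance scores for a five-item universe (illustrative values only).
scores = {"a": 2.0, "b": 1.0, "c": 0.5, "d": 3.0, "e": -1.0}
slate = ["a", "b", "c"]  # items actually shown in this round
clicked = "a"

# Likelihood conditioned on the exposure set (the slate).
ll_slate = log_softmax_over(scores, slate, clicked)

# Likelihood that wrongly assumes the user saw every item in the universe.
ll_universe = log_softmax_over(scores, list(scores), clicked)

print(f"slate-conditional log-likelihood: {ll_slate:.4f}")
print(f"full-universe log-likelihood:     {ll_universe:.4f}")
```

In the full-universe variant, the unseen item `d` counts as a rejected alternative and lowers the log-likelihood of the click, even though the user never had the chance to choose it. A no-click outcome could be modeled as one extra candidate in the slate; that is also an assumption of this sketch.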

A Signal Detection Scheme Based on Deep Learning in OFDM Systems

Jul 24, 2021

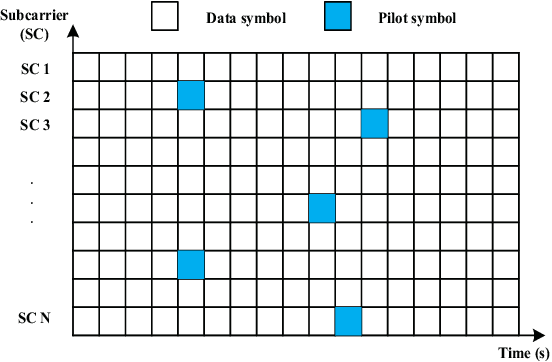

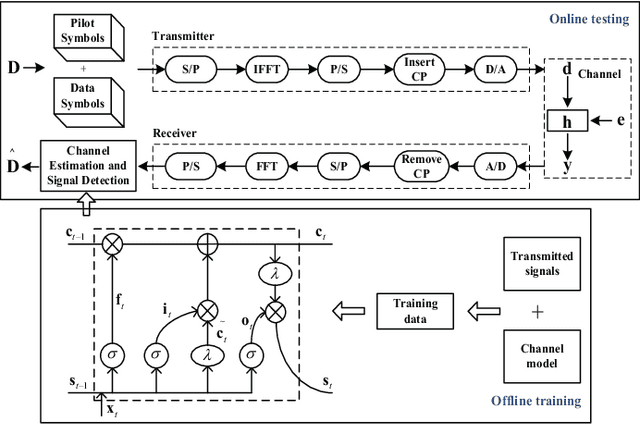

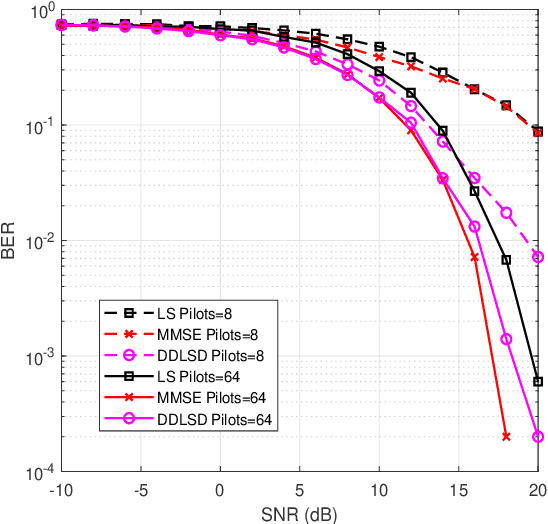

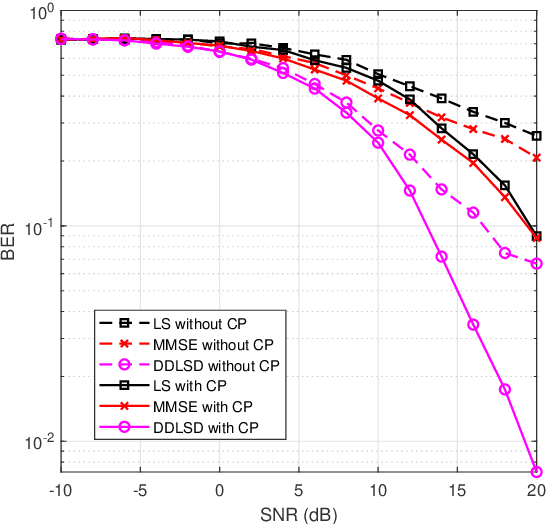

Channel estimation and signal detection are essential steps to ensure the quality of end-to-end communication in orthogonal frequency-division multiplexing (OFDM) systems. In this paper, we develop a DDLSD approach, i.e., Data-driven Deep Learning for Signal Detection in OFDM systems. First, the OFDM system model is established. Then, long short-term memory (LSTM) is introduced into the OFDM system model. Wireless channel data is generated through simulation, and the preprocessed time series feature information is input into the LSTM to complete the offline training. Finally, the trained model is used for online recovery of the transmitted signal. The difference between this scheme and existing OFDM receivers is that explicitly estimated channel state information (CSI) is replaced by implicitly estimated CSI, and the transmitted symbol is restored directly. Simulation results show that the DDLSD scheme outperforms the existing traditional methods in terms of channel estimation and signal detection performance.

Understanding the Logit Distributions of Adversarially-Trained Deep Neural Networks

Aug 26, 2021

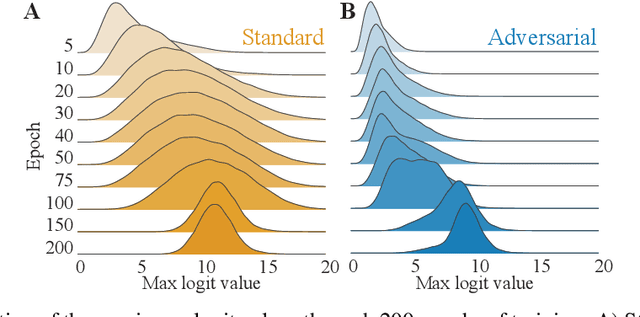

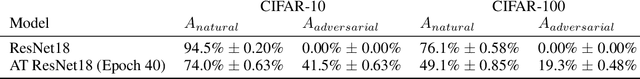

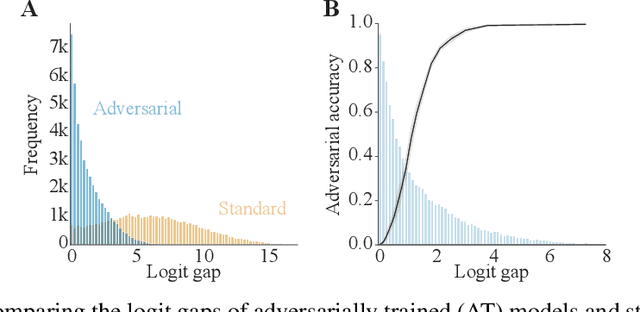

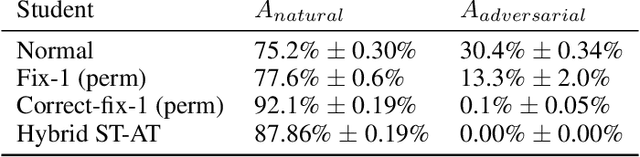

Adversarial defenses train deep neural networks to be invariant to the input perturbations from adversarial attacks. Almost all defense strategies achieve this invariance through adversarial training, i.e., training on inputs with adversarial perturbations. Although adversarial training is successful at mitigating adversarial attacks, the behavioral differences between adversarially-trained (AT) models and standard models are still poorly understood. Motivated by a recent study on learning robustness without input perturbations by distilling an AT model, we explore what is learned during adversarial training by analyzing the distribution of logits in AT models. We identify three logit characteristics essential to learning adversarial robustness. First, we provide a theoretical justification for the finding that adversarial training shrinks two important characteristics of the logit distribution: the max logit values and the "logit gaps" (difference between the max logit and the next largest value) are on average lower for AT models. Second, we show that AT and standard models differ significantly on which samples are high or low confidence, then illustrate clear qualitative differences by visualizing samples with the largest confidence difference. Finally, we find learning information about incorrect classes to be essential to learning robustness by manipulating the non-max logit information during distillation and measuring the impact on the student's robustness. Our results indicate that learning some adversarial robustness without input perturbations requires a model to learn specific sample-wise confidences and incorrect class orderings that follow complex distributions.
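The two logit statistics named in the abstract are easy to compute per sample. The sketch below is only an illustration: the function name and both logit vectors are made up here and do not come from the paper.

```python
def max_logit_and_gap(logits):
    """Return (max logit, gap between the largest and second-largest logit)."""
    if len(logits) < 2:
        raise ValueError("need at least two class logits")
    top, second = sorted(logits, reverse=True)[:2]
    return top, top - second

# Illustrative logit vectors only; not measurements from any model.
standard_logits = [9.1, 2.3, 0.4, -1.2]
at_logits = [3.0, 2.1, 0.9, 0.2]

for name, logits in [("standard", standard_logits), ("AT", at_logits)]:
    top, gap = max_logit_and_gap(logits)
    print(f"{name}: max logit = {top:.2f}, logit gap = {gap:.2f}")
```

Averaging these two quantities over a test set is one simple way to compare an AT model with a standard one along the lines the abstract describes.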

Grounding-aware RRT* for Path Planning and Safe Navigation of Marine Crafts in Confined Waters

Sep 14, 2021

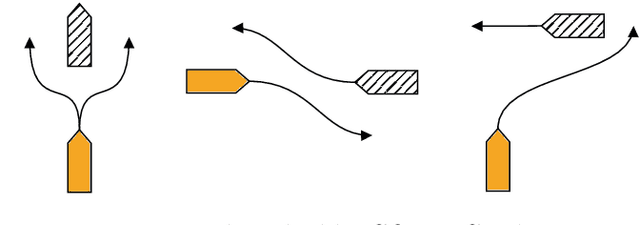

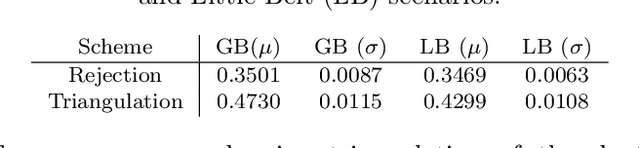

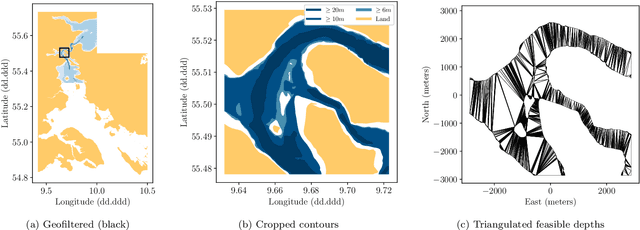

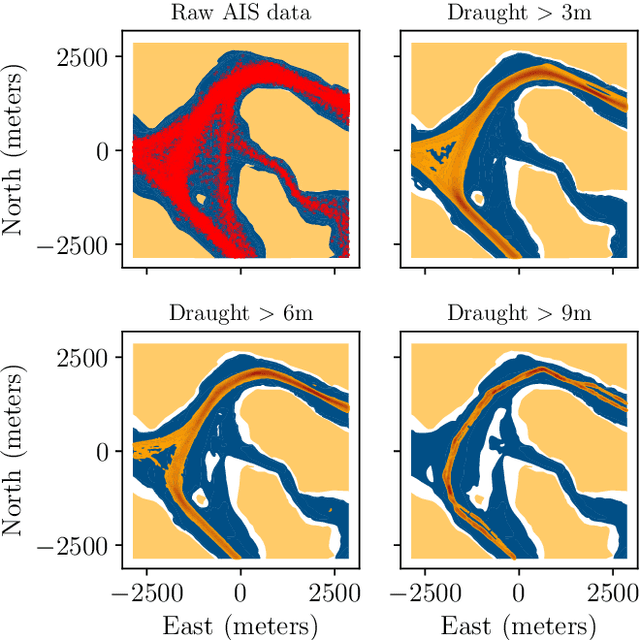

The paper presents a path planning algorithm based on RRT* that addresses the risk of grounding during evasive manoeuvres to avoid collision. The planner achieves this objective by integrating a collective navigation experience with the systematic use of water depth information from the electronic navigational chart. Multivariate kernel density estimation is applied to historical AIS data to generate a probabilistic model describing seafarers' best practices while sailing in confined waters. This knowledge is then encoded into the RRT* cost function to penalize path deviations that would lead own ship to sail in shallow waters. Depth contours satisfying the own ship draught define the actual navigable area, and triangulation of this non-convex region is adopted to enable uniform sampling. This ensures the optimal path deviation.
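Uniform sampling over a triangulated non-convex region can be sketched as follows: pick a triangle with probability proportional to its area, then draw a point uniformly inside it. This is a generic technique and not necessarily the authors' implementation. The L-shaped region and all function names are hypothetical stand-ins for a navigable area.

```python
import random

def triangle_area(a, b, c):
    """Area of the triangle with 2-D vertices a, b, c."""
    return abs((b[0] - a[0]) * (c[1] - a[1]) - (c[0] - a[0]) * (b[1] - a[1])) / 2.0

def sample_in_triangle(a, b, c, rng):
    """Uniform point in a triangle: sample the parallelogram, reflect if outside."""
    r1, r2 = rng.random(), rng.random()
    if r1 + r2 > 1.0:
        r1, r2 = 1.0 - r1, 1.0 - r2
    return (a[0] + r1 * (b[0] - a[0]) + r2 * (c[0] - a[0]),
            a[1] + r1 * (b[1] - a[1]) + r2 * (c[1] - a[1]))

def sample_region(triangles, rng):
    """Uniform point in the union of non-overlapping triangles."""
    areas = [triangle_area(*t) for t in triangles]
    tri = rng.choices(triangles, weights=areas, k=1)[0]  # area-weighted choice
    return sample_in_triangle(*tri, rng)

# Hypothetical L-shaped region: [0,2]x[0,1] joined with [0,1]x[1,2].
triangles = [
    ((0, 0), (2, 0), (2, 1)),
    ((0, 0), (2, 1), (0, 1)),
    ((0, 1), (1, 1), (1, 2)),
    ((0, 1), (1, 2), (0, 2)),
]

rng = random.Random(42)
print(sample_region(triangles, rng))
```

Weighting by area matters: choosing triangles with equal probability would oversample the smaller ones and bias the tree towards those parts of the region.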

Uncertainty-Aware Semi-Supervised Few Shot Segmentation

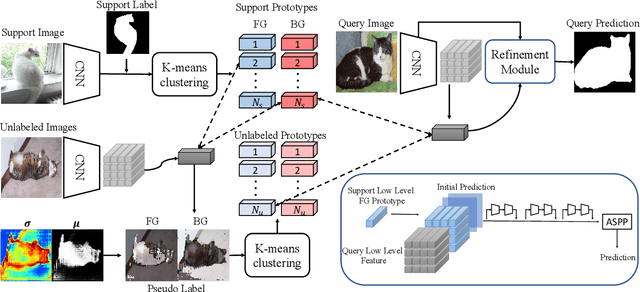

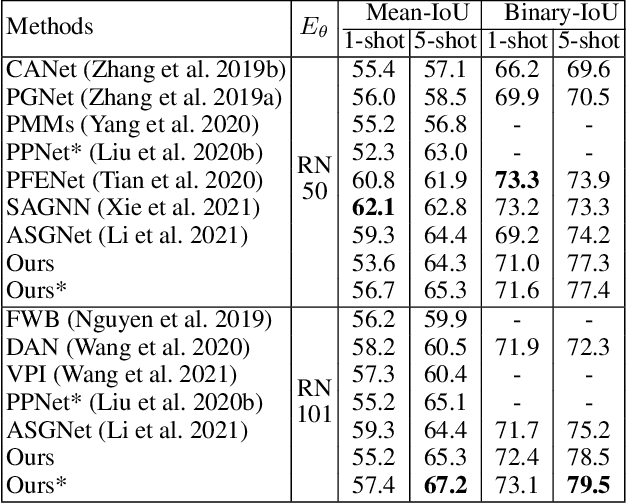

Oct 18, 2021

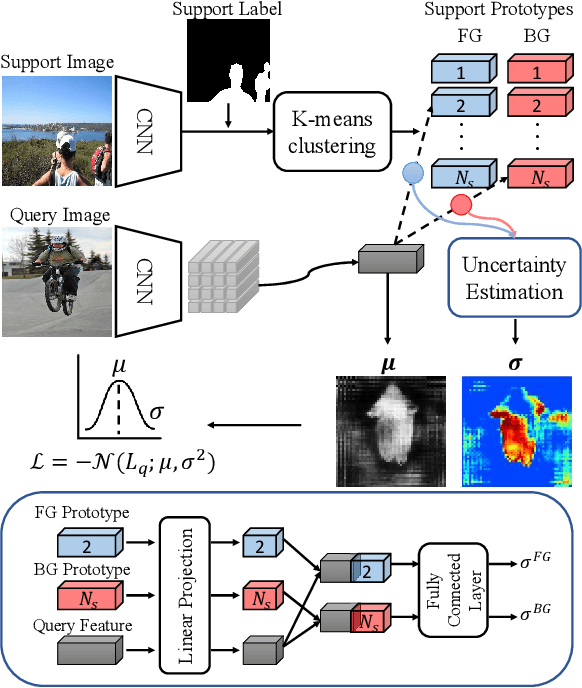

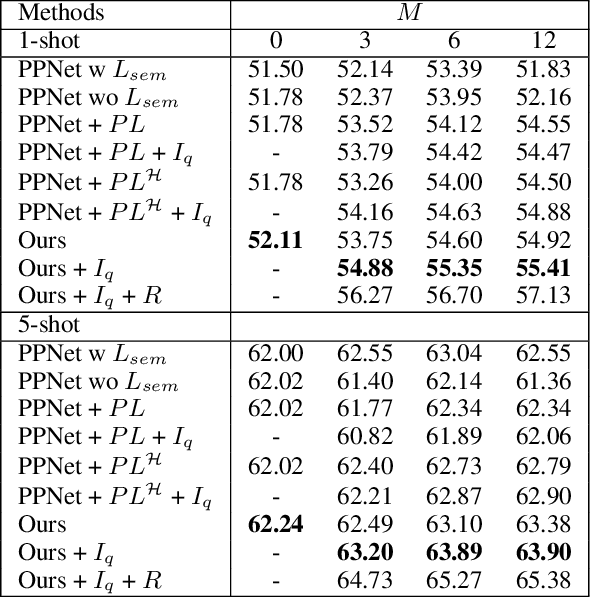

Few-shot segmentation (FSS) aims to learn pixel-level classification of a target object in a query image using only a few annotated support samples. This is challenging as it requires modeling appearance variations of target objects and the diverse visual cues between query and support images with limited information. To address this problem, we propose a semi-supervised FSS strategy that leverages additional prototypes from unlabeled images with uncertainty-guided pseudo label refinement. To obtain reliable prototypes from unlabeled images, we meta-train a neural network to jointly predict segmentation and estimate the uncertainty of predictions. We employ the uncertainty estimates to exclude predictions with high degrees of uncertainty from pseudo label construction, and obtain additional prototypes based on the refined pseudo labels. During inference, query segmentation is predicted using prototypes from both support and unlabeled images, including low-level features of the query images. Our approach is end-to-end and can easily supplement existing approaches without requiring additional training to employ unlabeled samples. Extensive experiments on PASCAL-$5^i$ and COCO-$20^i$ demonstrate that our model can effectively remove unreliable predictions to refine pseudo labels and significantly improve upon state-of-the-art performance.
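The refinement step can be pictured as masked average pooling over only those pixels that are predicted foreground with low uncertainty. The sketch below is a simplified illustration; the thresholds, the function name and the toy values are assumptions and do not reproduce the authors' network or settings.

```python
def masked_average_prototype(features, fg_prob, uncertainty,
                             prob_thresh=0.5, unc_thresh=0.3):
    """Mean feature vector over confident foreground pixels.

    features:    list of per-pixel feature vectors (lists of floats)
    fg_prob:     per-pixel foreground probability
    uncertainty: per-pixel uncertainty estimate
    Returns None when no pixel survives the filtering.
    """
    kept = [f for f, p, u in zip(features, fg_prob, uncertainty)
            if p > prob_thresh and u < unc_thresh]
    if not kept:
        return None
    dim = len(kept[0])
    return [sum(f[d] for f in kept) / len(kept) for d in range(dim)]

# Toy unlabeled image with four pixels and 2-D features (illustrative values).
features = [[1.0, 0.0], [3.0, 2.0], [10.0, 10.0], [0.0, 0.0]]
fg_prob = [0.9, 0.8, 0.95, 0.1]
uncertainty = [0.1, 0.2, 0.8, 0.05]

prototype = masked_average_prototype(features, fg_prob, uncertainty)
print(prototype)  # → [2.0, 1.0]
```

The third pixel has the highest foreground probability but is dropped because of its high uncertainty, so its outlying feature does not distort the prototype.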

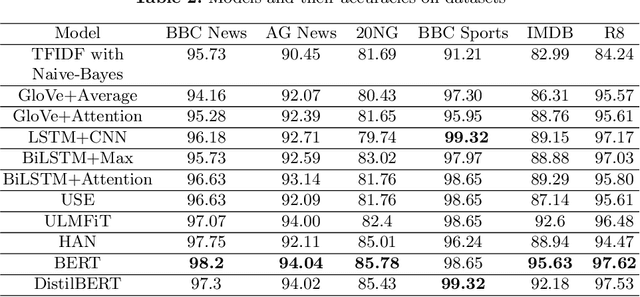

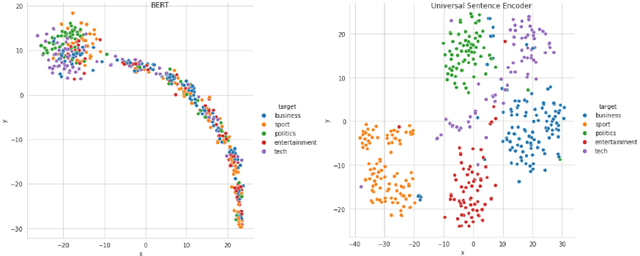

Comparative Study of Long Document Classification

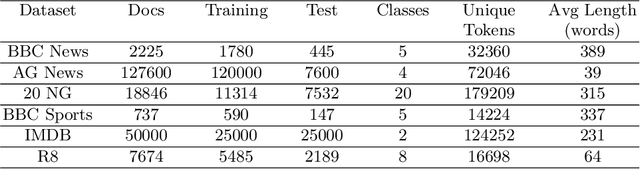

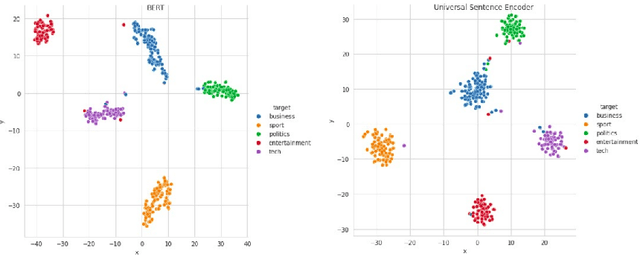

Nov 01, 2021

The amount of information stored in the form of documents on the internet has been increasing rapidly. Thus it has become a necessity to organize and maintain these documents in an optimum manner. Text classification algorithms study the complex relationships between words in a text and try to interpret the semantics of the document. These algorithms have evolved significantly in the past few years. There has been a lot of progress from simple machine learning algorithms to transformer-based architectures. However, existing literature has analyzed different approaches on different data sets, making it difficult to compare the performance of machine learning algorithms. In this work, we revisit long document classification using standard machine learning approaches. We benchmark approaches ranging from simple Naive Bayes to complex BERT on six standard text classification datasets. We present an exhaustive comparison of different algorithms on a range of long document datasets. We reiterate that long document classification is a simpler task and even basic algorithms perform competitively with BERT-based approaches on most of the datasets. The BERT-based models perform consistently well on all the datasets and can be blindly used for the document classification task when the computational cost is not a concern. In the shallow-model category, we suggest the usage of the raw BiLSTM + Max architecture, which performs decently across all the datasets. An even simpler GloVe + Attention bag-of-words model can be utilized for simpler use cases. The importance of using sophisticated models is clearly visible in the IMDB sentiment dataset, which is a comparatively harder task.

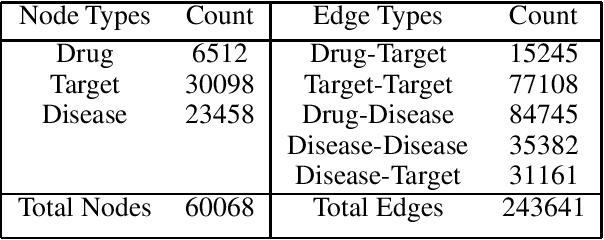

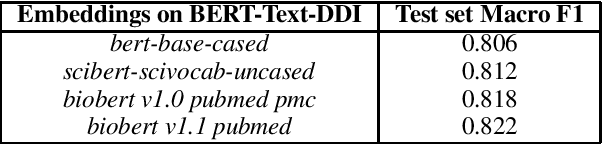

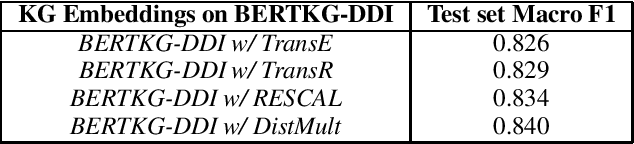

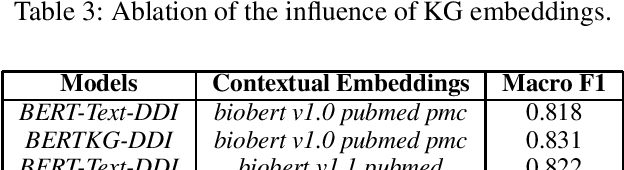

Towards Incorporating Entity-specific Knowledge Graph Information in Predicting Drug-Drug Interactions

Dec 21, 2020

Off-the-shelf biomedical embeddings obtained from various recently released pre-trained language models (such as BERT, XLNet) have demonstrated state-of-the-art results (in terms of accuracy) for various natural language understanding (NLU) tasks in the biomedical domain. Relation Classification (RC) is one of the most critical of these tasks. In this paper, we explore how to incorporate domain knowledge of the biomedical entities (such as drugs, diseases, genes), obtained from Knowledge Graph (KG) Embeddings, for predicting Drug-Drug Interaction from a textual corpus. We propose a new method, BERTKG-DDI, to combine drug embeddings obtained from their interactions with other biomedical entities with a domain-specific BioBERT embedding-based RC architecture. Experiments conducted on the DDIExtraction 2013 corpus clearly indicate that this strategy improves over other baseline architectures by 4.1% macro F1-score.

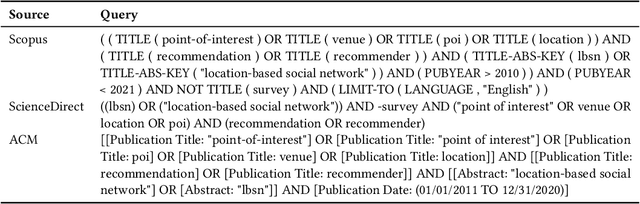

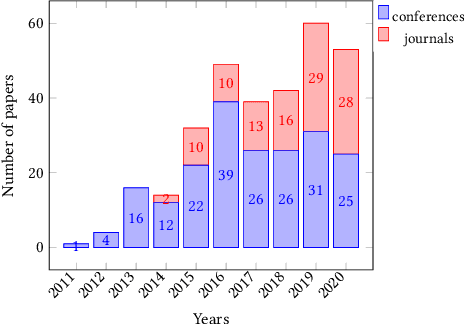

Point-of-Interest Recommender Systems: A Survey from an Experimental Perspective

Jun 18, 2021

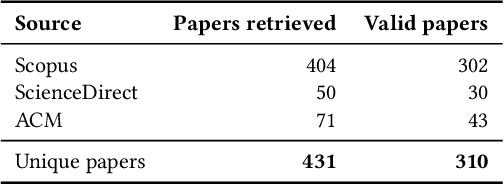

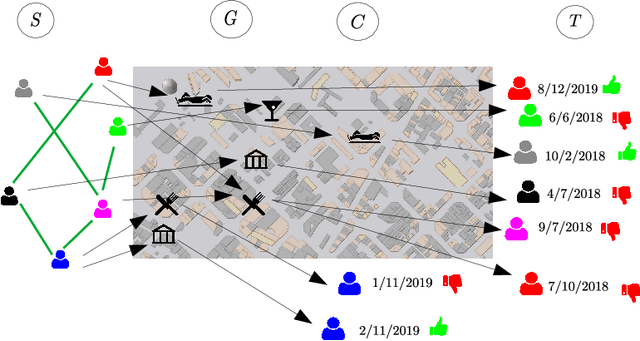

Point-of-Interest recommendation is a growing area of research and development within the widely adopted technologies known as Recommender Systems. Among them, those that exploit information coming from Location-Based Social Networks (LBSNs) are very popular nowadays and can work with different information sources, which poses several challenges and research questions to the community as a whole. We present a systematic review focused on the research done in the last 10 years on this topic. We discuss and categorize the algorithms and evaluation methodologies used in these works and point out the opportunities and challenges that remain open in the field. More specifically, we report the leading recommendation techniques and the information sources that have been exploited most often (such as the geographical signal and deep learning approaches), while we also warn about the lack of reproducibility in the field, which may hinder real performance improvements.

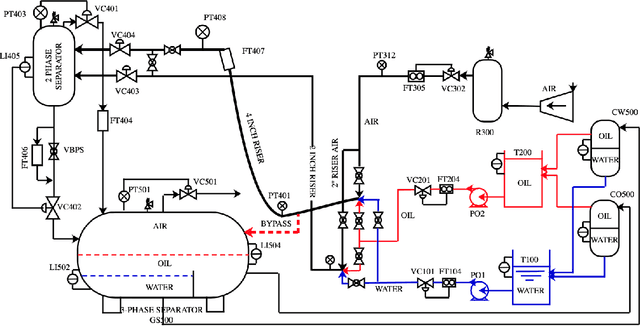

Automatic digital twin data model generation of building energy systems from piping and instrumentation diagrams

Aug 31, 2021

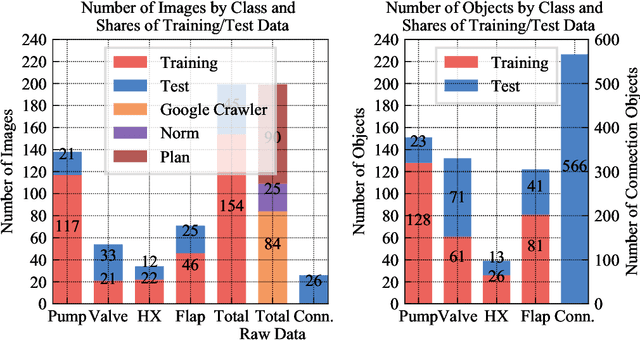

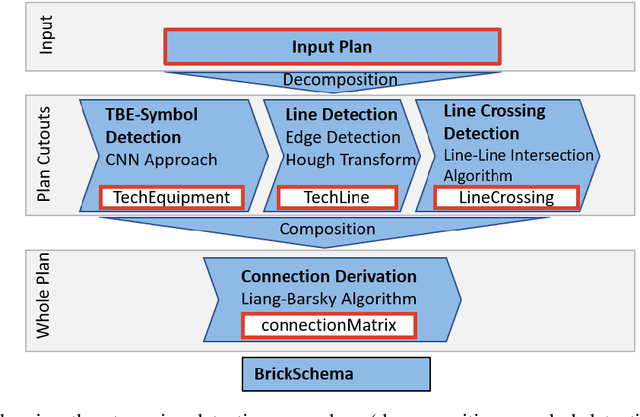

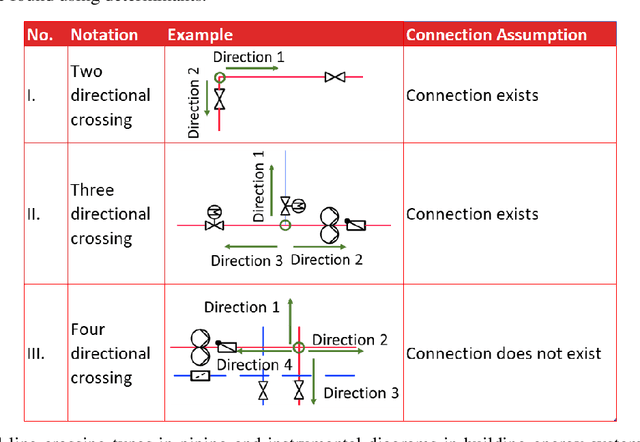

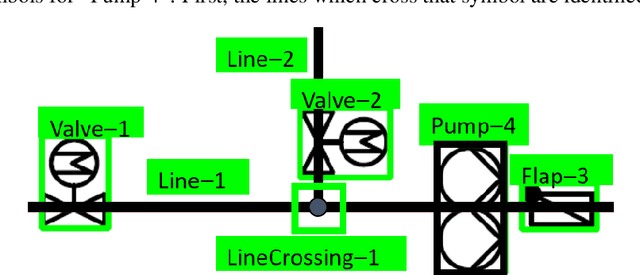

Buildings directly and indirectly emit a large share of current CO2 emissions. There is a high potential for CO2 savings through modern control methods in building automation systems (BAS) like model predictive control (MPC). For proper control, MPC needs mathematical models to predict the future behavior of the controlled system. For this purpose, digital twins of the building can be used. However, with current methods in existing buildings, setting up a digital twin is usually labor-intensive. In particular, connecting the different components of the technical system to an overall digital twin of the building is time-consuming. Piping and instrumentation diagrams (P&ID) can provide the needed information, but it is necessary to extract the information and provide it in a standardized format to process it further. In this work, we present an approach to recognize symbols and connections of P&ID from buildings in a completely automated way. There are various standards for graphical representation of symbols in P&ID of building energy systems. Therefore, we use different data sources and standards to generate a holistic training data set. We apply algorithms for symbol recognition, line recognition and derivation of connections to the data sets. Furthermore, the result is exported to a format that provides semantics of building energy systems. The symbol recognition, line recognition and connection recognition show good results with an average precision of 93.7%, and the output can be used in further processes like control generation, (distributed) model predictive control or fault detection. Nevertheless, the approach needs further research.

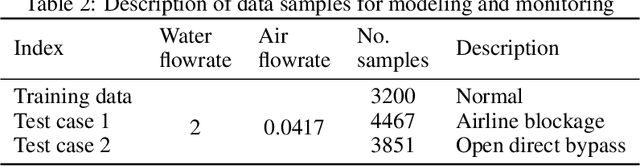

Dynamic probabilistic predictable feature analysis for high dimensional temporal monitoring

Sep 29, 2021

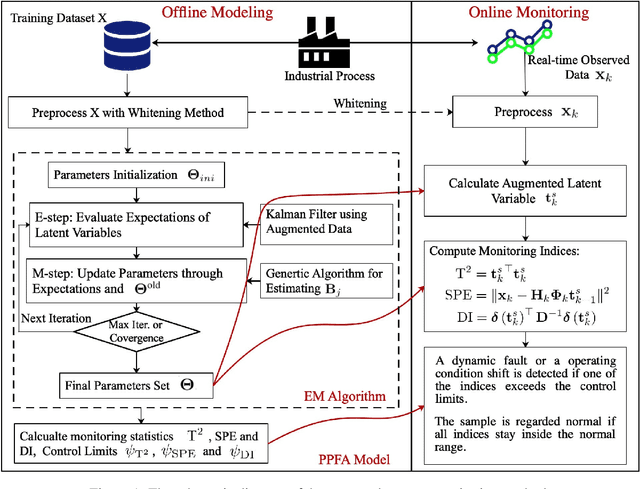

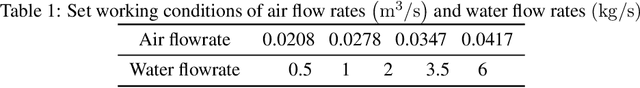

Dynamic statistical process monitoring methods have been widely studied and applied in modern industrial processes. These methods aim to extract the most predictable temporal information and develop the corresponding dynamic monitoring schemes. However, measurement noise is widespread in real-world industrial processes, and ignoring its effect will lead to sub-optimal modeling and monitoring performance. In this article, a probabilistic predictable feature analysis (PPFA) is proposed for high dimensional time series modeling, and a multi-step dynamic predictive monitoring scheme is developed. The model parameters are estimated with an efficient expectation-maximization algorithm, where the genetic algorithm and Kalman filter are designed and incorporated. Further, a novel dynamic statistical monitoring index, Dynamic Index, is proposed as an important supplement to $\text{T}^2$ and $\text{SPE}$ to detect dynamic anomalies. The effectiveness of the proposed algorithm is demonstrated via its application to the three-phase flow facility and a medium speed coal mill.
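For reference, the two classical indices that the Dynamic Index supplements are usually written as follows in PCA-style monitoring. This is the textbook form, stated here as an assumption; the abstract does not give the exact definitions used with PPFA. Here $x$ is a scaled sample, $P$ is a loading matrix with orthonormal columns, $t = P^\top x$ is the score vector, and $\Lambda$ is the diagonal covariance matrix of the scores.

$$
\text{T}^2 = t^\top \Lambda^{-1} t, \qquad \text{SPE} = \lVert x - P t \rVert^2
$$

$\text{T}^2$ measures variation inside the retained subspace, while $\text{SPE}$ measures the squared residual left outside it. Neither index looks at how a sample relates to earlier samples, which is the gap a dynamic index is meant to cover.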
